The iPhone 17e is a powerful, long-lasting iPhone for those who can live without expensive camera and display features.

Pros

- A19 chip is plenty fast

- MagSafe compatibility

Cons

- Only a single rear camera

- No always-on display or 120Hz

With its Pro-level display and innovative camera system, the iPhone 17 is a phenomenal value proposition.

Pros

- A 120Hz refresh rate

- Excellent selfie lens

- 40W charging

Cons

- No true telephoto lens

- Battery life is good, not great

A direct successor to the iPhone 16e, the iPhone 17e is intended to be an affordable, no-frills entry point into the iPhone ecosystem, but how does it compare to the next-cheapest model in Apple’s newest lineup, the iPhone 17?

In this guide, we’ll be comparing the two phones’ key specs and features to help you decide which iPhone 17 model is best for you. If you’re willing to spend a bit more money, you can also check out our iPhone 17 vs iPhone 17 Pro comparison.


iPhone 17e vs iPhone 17: specs comparison

Before we dig into the details, here’s an overview of both phones’ key specs:

| | iPhone 17e | iPhone 17 |
|---|---|---|
| Dimensions: | 146.7 x 71.5 x 7.8mm | 149.6 x 71.5 x 8mm |
| Weight: | 169g | 177g |
| Display: | 6.1-inch OLED | 6.3-inch OLED |
| Refresh rate: | 60Hz | 120Hz |
| Peak brightness: | 1,200 nits | 3,000 nits |
| Chipset: | A19 | A19 |
| RAM: | 8GB | 8GB |
| Rear cameras: | 48MP wide | 48MP wide, 48MP ultra-wide |
| Front camera: | 12MP | 18MP |
| Battery: | 4,005mAh (unofficial) | 3,692mAh (unofficial) |
| Charging: | 20W wired, 15W wireless | 40W wired, 25W wireless, 4.5W reverse wired |
| Storage: | 256GB, 512GB | 256GB, 512GB |

iPhone 17e vs iPhone 17: price and availability

Both the iPhone 17e and iPhone 17 are available globally; the former was released in March 2026, while the latter hit shelves in September 2025.

The iPhone 17e retails for $599 / £599 / AU$999 for 256GB of storage, and $799 / £799 / AU$1,399 for 512GB of storage. In the same configurations, the iPhone 17 retails for $799 / £799 / AU$1,399 and $999 / £999 / AU$1,799, respectively.

In other words, the iPhone 17e is $200 / £200 / AU$400 cheaper than the iPhone 17, whichever way you slice it.

It’s also important to note that both the iPhone 16e and iPhone 16 shipped with 128GB of storage as standard, so both iPhone 17e and iPhone 17 offer more base storage than their respective predecessors for the same starting price.

Winner: iPhone 17e

iPhone 17e vs iPhone 17: design

The iPhone 17e and iPhone 17 are very similar-looking devices – they’re almost identical in size and weight, both have aluminum frames with Ceramic Shield 2 protection on their respective displays, and both are rated IP68 for water and dust resistance.

The iPhone 17e also gets the same customizable Action button as the iPhone 17, which was once an exclusive feature of Apple’s top-end iPhone 15 Pro.

The iPhone 17 has a larger 6.3-inch display than its cheaper sibling, but the two phones feel very similar in the hand, owing to the iPhone 17e’s chunkier display bezels.

The key design differences are functional. The iPhone 17 benefits from Camera Control and an extra lens on the back (more on this later), while the iPhone 17e has neither.

They’re also available in different colors; both come in black or white, but the iPhone 17e offers an additional pink shade, while the iPhone 17 also comes in blue, green, or lavender.

Winner: Tie

iPhone 17e vs iPhone 17: display

As mentioned, the iPhone 17 gets a larger OLED display than the iPhone 17e – 6.3 inches vs 6.1 inches – but it’s fitted into largely the same frame. That means the iPhone 17’s bezels are wafer-thin, while the iPhone 17e has to make do with some thicker, less premium-looking black borders.

The iPhone 17 also gets Apple’s interactive Dynamic Island cut-out at the top of its display, where the iPhone 17e is stuck with a physical notch. If you haven’t used the Dynamic Island before, you won’t know what you’re missing, but it’s essentially a pill-shaped area that’s capable of displaying real-time alerts, notifications, and background activities.

As for display detail, both phones are just as sharp as one another (460 pixels per inch), but the iPhone 17 can get a lot brighter, boasting a peak brightness of 3,000 nits to the iPhone 17e’s 1,200 nits. Mind you, in most everyday scenarios, you can expect to get around 800 nits from the iPhone 17e and around 1,000 nits from the iPhone 17.

The biggest display difference comes in the refresh rate department. The iPhone 17e’s screen is locked to 60Hz, while the iPhone 17 gets an always-on, 120Hz display. That basically means the scrolling experience on the iPhone 17 is far smoother than that of the 17e, though again, if you’re used to the 60Hz refresh rate of Apple’s older iPhone models, you’re not likely to be disappointed by how the 17e feels to navigate.

Winner: iPhone 17

iPhone 17e vs iPhone 17: cameras

The biggest difference between the iPhone 17e and iPhone 17 can be spotted by someone who knows absolutely nothing about phones: Apple’s more expensive phone has a whole extra lens on the back. Specifically, it’s a 48MP ultra-wide lens, which lets you capture expansive subjects like landscapes and tall buildings with ease.

The iPhone 17e does at least get the same 48MP Fusion camera as the rest of the iPhone 17 line (including the iPhone 17 Pro and iPhone 17 Pro Max). This lens lets you capture shots at either 1x or 2x, and uses some smart pixel-binning wizardry to maintain image quality at that larger distance (you’ll need to go Pro if you want to zoom further than 2x).

Notably, you also get the next-generation Portrait Mode – which automatically detects depth and lets you adjust the focus of an image post-capture – on both the iPhone 17e and iPhone 17, which is a nice win for Apple’s cheapest iPhone. Several other features, though – like spatial photo capture and Dolby Vision video capture – are exclusive to the iPhone 17.

In the selfie department, the iPhone 17 features an 18MP Center Stage camera that can switch orientation from vertical to horizontal to help you fit more people into the frame. The iPhone 17e, meanwhile, gets a run-of-the-mill 12MP front-facing lens.

Winner: iPhone 17

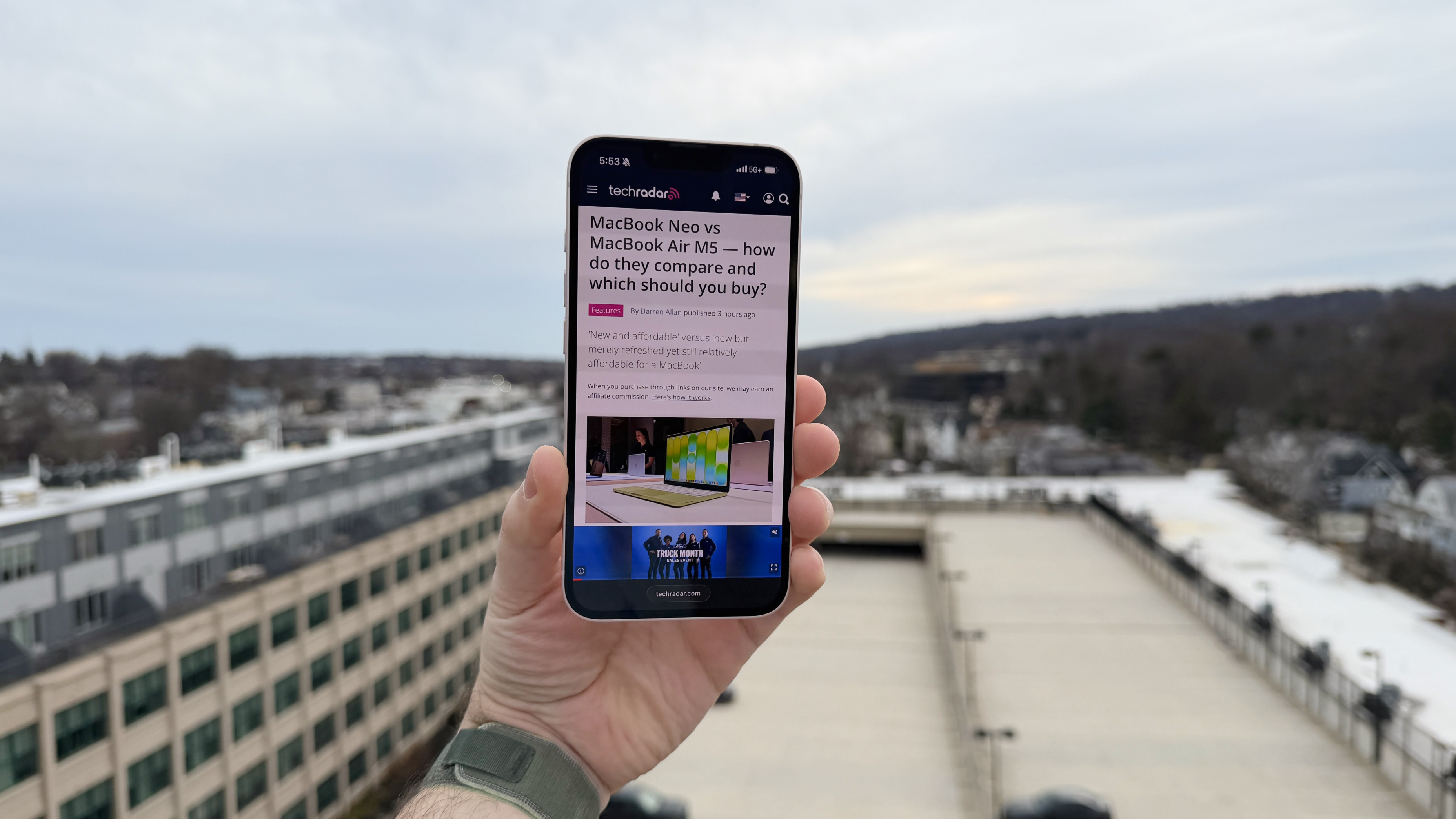


iPhone 17e vs iPhone 17: performance and software

Both the iPhone 17e and iPhone 17 use an almost identical A19 chipset (the latter phone has an extra GPU core), making them all but equal in power.

Indeed, as we noted in our iPhone 17e review, “even with one fewer GPU core, everything flies on the iPhone 17e. If you’re coming from an older smartphone, you’re going to notice a significant improvement […] In daily use, [we] found the 17e to be consistently responsive, and quick to deliver on whatever [we] asked it to do.”

The software experience is also the same across both phones. The iPhone 17e – like the rest of the iPhone 17 line – runs iOS 26 out of the box, is compatible with Apple Intelligence, and offers the customizable Action button for handy software shortcuts.

As for software support, you’ll get between five and seven years of major iOS updates, regardless of which iPhone you choose.

Winner: Tie

iPhone 17e vs iPhone 17: battery

When it comes to battery life, the iPhone 17e and iPhone 17 offer similar endurance thanks to their shared A19 chipset and C1X modem. Apple rates the former for 26 hours of video playback and the latter for 30 hours, and that proved to be largely accurate in our testing – you’ll get at least a full day of juice from either model.

The gaps begin to appear in the charging department. While both devices benefit from MagSafe compatibility – which is a big win for the iPhone 17e, since the iPhone 16e offered no such compatibility – the iPhone 17 offers faster charging speeds across the board.

Specifically, the iPhone 17 offers 40W wired, 25W wireless, and 4.5W reverse wired charging, while the iPhone 17e offers 20W wired and 15W wireless speeds. Those aren’t deal-breaking disparities, but for reference, you can expect to reach 50% charge in around 30 minutes with the iPhone 17e, and the same figure in around 20 minutes with the iPhone 17 (if you’re using chargers that support their respective max wattages).

Winner: iPhone 17

iPhone 17e vs iPhone 17: verdict

On paper, the iPhone 17e doesn’t triumph over the iPhone 17 in any area except price, but if you’re looking for a no-frills iPhone that’ll remain powerful and supported for years to come, it’s still a great-value product.

Keen photographers, though, will be better served by one of Apple’s more expensive iPhones, and if you’re someone who values the display experience above all else, then the iPhone 17’s faster refresh rate and higher brightness do, in this writer’s opinion, justify the $200 / £200 / AU$400 premium over the iPhone 17e.
