Tech

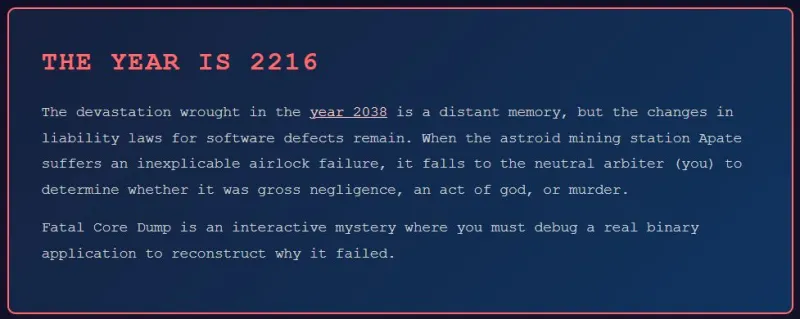

Turning a GDB Coredump Debug Session Into a Murder Mystery

Debugging an application crash can oftentimes feel like you’re an intrepid detective in a grimy noir story, tasked with figuring out the sordid details behind an ugly crime. Slogging through scarce clues and vapid hints, you find yourself down in the dumps, contemplating the deeper meaning of life and the true nature of man, before hitting that eureka moment and cracking the case. One might say that this makes for a good game idea, and [Jonathan] would agree with that notion, so he created the Fatal Core Dump game.

Details can be found in the (spoiler-rich) blog post on how the game was conceived and implemented. The premise of the game is an inexplicable airlock failure on an asteroid mining station, with you as the engineer tasked with figuring out whether it was ‘just a glitch’ or whether something more sinister was afoot. An RPG-style game was also considered, but building it in RPG Maker proved to be a massive challenge, so the result is this more barebones game, which arguably makes it more realistic.

Suffice it to say that this game is not designed to be a cheap copy of real debugging, but the real deal. You’re expected to be very comfortable with C, GDB, core dump analysis, x86_64 ASM, Linux binary runtime details and more. At the end you should be able to tell whether it was just a silly mistake made by an under-caffeinated developer years prior, or a malicious attack that exploited or introduced some weakness in the code.

If you want to have a poke at the code behind the game, perhaps to feel inspired to make your own take on this genre, you can take a look at the GitHub project.


Trump Administration Won’t Rule Out Further Action Against Anthropic

At Anthropic’s first court hearing challenging sanctions imposed by the Trump administration, the AI tech startup asked the government to commit that it wouldn’t levy additional penalties on the company. That didn’t happen.

“I am not prepared to offer any commitments on that issue,” James Harlow, a Justice Department attorney, told US district judge Rita Lin over video conference on Tuesday.

In fact, the government is gearing up to take another step designed to sideline the company from doing business with federal agencies. President Trump is currently finalizing an executive order that would formally ban usage of Anthropic tools across the government, according to a person at the White House familiar with the matter but not authorized to discuss it. Axios first reported on the plan.

Tuesday’s hearing stemmed from one of the two federal lawsuits Anthropic filed against the Trump administration on Monday, alleging that the government unconstitutionally designated it a supply-chain risk and turned it into a tech industry pariah. Billions of dollars in revenue for Anthropic is now at risk, with current customers and prospective ones dropping out of deals and demanding new terms, according to the company.

Anthropic is seeking a preliminary court order suspending the risk designation and barring the administration from taking further punitive measures against the company.

The court appearance on Tuesday was to decide on the schedule for a preliminary hearing, and Anthropic is eager for it to happen soon to prevent further harm to its business. Michael Mongan, an attorney for Anthropic at WilmerHale, told Lin he was less concerned about delaying it until April if the Trump administration could commit to not taking additional action. “The actions of defendants are causing irreparable injuries, and those injuries are mounting day by day,” Mongan said.

After Harlow declined, Lin moved up the date of the hearing to March 24 in San Francisco, though that timeline was still later than Anthropic wanted. “The case is quite consequential from both sides, and I want to make sure I’m deciding on an expedited record but also a full record,” the judge said.

Scheduling in the other case, which is in Washington, DC, is on hold while Anthropic pursues an administrative appeal to the Department of Defense, which is expected to fail on Wednesday.

The months-long dispute between the Pentagon and Anthropic began when the AI startup refused to sign off on its current technologies being used by the military for any lawful purpose, which it fears could include broad surveillance of Americans and the launch of missiles without human supervision. The Defense Department contends usage decisions are its prerogative.

Several attorneys with expertise in government contracts and the US Constitution believe the administration’s action against Anthropic continues a pattern of abusing the law to punish perceived political enemies, including universities, media companies, and law firms (such as WilmerHale, the firm representing Anthropic). The experts believe Anthropic should prevail, but the challenge will be overcoming the deference that courts often give to national security arguments from the government, especially during times of war.

“If this is a one-off, you might give the president some deference,” says Harold Hongju Koh, a Yale Law School professor who worked in the Barack Obama presidential administration and has written about the Anthropic case. “But now, it’s just unmistakable that this is just the latest in a chain of events related to a punitive presidency.”

David Super, a Georgetown University Law Center professor who studies the constitution, says the provisions the Defense Department used to sanction Anthropic were designed to protect the country from potential sabotage by its enemies.


Human Problems: It’s Not Always The Technology’s Fault

from the it-prevents-us-from-fixing-societal-issues dept

We have met the enemy and he is us.

When a teenage boy in Orlando started texting Character.AI’s chatbot, it started as an innocent use of a new tool. Sewell Setzer III customized the chatbot to have the Game of Thrones-inspired persona of Daenerys Targaryen, the series’ prominent dragon-riding queen. In the months that followed, the boy developed a romantic connection with the chatbot. One night, he messaged the bot: “What if I told you I could come home right now?” The bot sent back, “[P]lease do, my sweet king.” Setzer was only fourteen years old when he died by suicide later that evening.

Setzer’s death is a tragedy. Like many parents in the wake of suicide, Setzer’s mother is left searching for answers and accountability. Suicide often leaves behind a painful void, filled with questions that rarely yield satisfying explanations.

In her search, Setzer’s mother sued the chatbot’s developer, Character Technologies, alleging that its chatbot caused her son’s death. The complaint describes the bot as a “defective” and “inherently dangerous” technology, and accuses the company of having “engineered Setzer’s harmful dependency on their products.” She is not alone. Three other families have brought similar suits against Character Technologies, and another has sued OpenAI, alleging the chatbots harmed their children.

Framing suicide and other harms as technology problems—as much of the current discourse around chatbots suggests—obscures underlying societal conditions and can undermine effective interventions. In effect, what are often described as “tech problems” are, more accurately, the result of human decisions, norms, and policies. They are, at their core, human problems.

Historical Framing of Tech and Media in Creating and Sustaining Societal Problems

This is just the latest vintage whine, rebottled yet another time. Humanity has long sought to condemn new technologies and media for problems of the day. When the printing press made literature available to the masses, church and state condemned publications for causing immorality. Rock ‘n’ Roll and comic books were blamed for juvenile delinquency. Later, it was heavy metal and role-playing games. The advent of video games supposedly led to increased violence by adolescent boys.

The desire to hold technology companies responsible for human harms, however, has its immediate antecedent in social media. Over the past decade, users have sued social media platforms for offline violence committed by people they met online, failing to prevent cyberbullying, and hosting user-generated content that allegedly radicalized extremists.

Like in Setzer’s case, parents have also sued social media companies after the deaths of their children, arguing that design choices, engagement mechanics, and algorithmic targeting played a role. Indeed, this is the central question at the heart of the wave of “social media addiction” litigation now being tried.

AI is just the latest technological scapegoat to which we seek to ascribe fault. It’s easier to hold technology responsible for our problems, especially when the technology is as uncanny as generative AI. We’re afraid of robots, perhaps not because of any harm they cause us, but because they show us how much we, as humanity, can harm ourselves. We would rather fault the technology du jour than confront the harder truths underneath.

Death by Suicide as a Case Study

To put this into context, consider the allegations about the Character.AI chatbot and Setzer’s suicide. Suicide is a complex, deeply human problem. Among youth and young adults, it stands as the second leading cause of death. Suicide has no single cause. Public health experts have long recognized that risk emerges from a convergence of individual, relational, communal, and societal factors. These can include long-term effects of childhood trauma, substance abuse, social isolation, relationship loss, economic instability, and discrimination. On the surface, these may look like personal struggles, but they’re really the fallout of systemic failure.

Access to lethal means compounds the risk of self-harm and suicide. In particular, the presence of firearms in the home has remained strongly associated with higher youth suicide rates.

These systemic failures tend to hit teens the hardest. Studies consistently show that young people are facing rising rates of mental health challenges, especially due to and following the COVID-19 pandemic. This is compounded by chronically underfunded school counseling programs, inaccessible mental health care, and inconsistent support for youth in crisis. LGBTQ+ youth, in particular, bear the brunt, facing higher rates of bullying, depression, and suicidal ideation, all while increasingly being targeted by state policies that strip away protections and deny their identities.

We don’t and can’t know for sure why Setzer or anyone else died by suicide. Tragically, teenage suicide is common. Indeed, it’s the subject of many songs. There’s no mechanism to definitively determine how Setzer and other victims felt when they started using Character.AI. However, as we likely all remember from our own lives, teenage years can be trying. As we mature physically and mentally, it can be difficult to express and accept ourselves. Other children can be cruel. Hormones can lead us to lash out in anger and withdraw into ourselves.

In Setzer’s case, the complaint and public reporting indicate that he exhibited other signs and conditions commonly associated with elevated suicide risk, including anxiety and depression, withdrawal from teachers and peers, chronic lateness, significant sleep deprivation, and access to a firearm in the home. His interactions with fictional characters on the Character.AI service may suggest unmet emotional needs or a search for understanding and connection. At different points, he described a character as resembling a father figure and spoke about feelings of loneliness and a lack of romantic connection—experiences that are not uncommon for adolescents, particularly during periods of heightened vulnerability. According to the complaint, Setzer also raised the topic of suicide in earlier conversations with the chatbot, and those exchanges were promptly halted by the system.

The uncomfortable truth about suicide is that it has existed as long as there have been people–sometimes for reasons we can understand, and often for reasons we never will. We are terrified that people die by suicide, not only because it is difficult to comprehend, but because the forces that drive someone there can feel disturbingly familiar.

Parents like Setzer’s can’t fix systemic governmental and societal failures. What feels more immediate and actionable is holding the technology companies accountable when their services appear to enable or amplify harm. It is far easier to fixate on the medium through which people express suicidal thoughts rather than ask where those thoughts came from or why they felt like the only option.

Legal Analysis of Faulting Tech

Legal doctrine appears to recognize that holding the technology responsible for these systemic failures is not viable. For example, because suicide is shaped by so many overlapping factors, tort claims against AI companies for causing a teen’s death—while understandable in their urgency—are, doctrinally speaking, a stretch.

Under traditional tort principles, providers of generative AI systems and social media services are unlikely to bear legal responsibility in these cases. Claims based on intentional torts, such as battery, generally fail because providers of online services do not act with the intent to cause, or even to contribute to, physical harm. Therefore, plaintiffs more commonly turn to negligence theories.

Negligence, however, requires more than just harm in fact. It demands both factual causation and proximate (i.e., legal) causation. In some situations, an online service or generative AI model might satisfy a but-for test because the harm would not have occurred without the service. But that is not sufficient.

Proximate cause—what the law treats as a legally meaningful connection between conduct and injury—is where most of these claims falter. In many cases, particularly those involving such numerous and complex factors as suicide, the link between a provider’s conduct and the ultimate injury is typically too attenuated to meet this standard.

Services such as social media and AI chatbots are typically designed as broad, general-purpose tools. The content potentially at issue comes from other users’ behaviors, personalized interactions, or the user’s own actions. Even where excessive technology use, including social media, has been associated with elevated rates of suicidal ideation among youth and young adults, research has not established a direct causal link. As a result, courts are generally reluctant to find the technology service to be the legal cause of death.

The Broader Ramifications of a Myopic Focus on Tech

Beyond legal error, focusing solely on technology obscures the path to real solutions. When we frame fundamentally human problems as technological ones, we deflect attention from the underlying conditions that lead to these tragedies and make it more likely they will recur.

This framing guides policymakers and advocates toward seemingly easy, surface-level technological fixes such as imposing age-verification requirements, mandating disclosures about content moderation, or curbing algorithmic feeds. True, technology companies can—and should—consider how to help mitigate real-world harms. Yet these proposed interventions rest on the assumption that technology is the primary culprit, even though research increasingly shows that, in the right contexts, technology can actually help those in crisis.

The appeal of reducing complex social issues to matters of redesigning or banning technology is understandable. Technology problems can feel tractable. They suggest clear targets and concrete fixes.

What this logic ignores, however, is that the pre-technology status quo for many public health crises has long been dismal. The better question, then, isn’t whether technology causes harm, but whether it deepens an already broken baseline—or simply reflects it.

Technology, including generative AI, often acts less as a cause than a mirror. Our digital spaces often reflect the offline world, including its ills.

Today, children face more pressure to excel at school and attend the best universities, even while job prospects stagnate and inflation soars. They have lost access to the kinds of public and community spaces that once offered structure, connection, and care. Libraries operate with reduced hours. Budget cuts have decimated after-school programs. Parks are monitored and restricted for loitering. Community centers that shuttered during the pandemic have never reopened. In many ways, technology—and social media in particular—has stepped in as a makeshift third space for teens. Yet rather than address the erosion of offline support, policymakers are now working to dismantle these digital communities too.

If human distress reflects deteriorating real-world social infrastructure, then optimizing digital services cannot restore stability. Technological interventions address a symptom while the deeper human cancer persists.

A Pragmatic Path Forward

The path forward requires resisting the impulse to treat fundamentally human problems as technological ones. When new technologies appear alongside harm, the harder and more necessary questions are not simply how to regulate the tool, but what human choices produced the conditions in which harm emerged, which institutions failed or fell short, and what values should guide our response. These questions are more difficult—and often more uncomfortable—because they turn our attention inward, toward ourselves, rather than external and more convenient actors.

Instead of focusing our energies on systematically regulating platforms, we should direct our efforts toward these human problems. For suicide, public health experts point to a wide range of evidence-based strategies for preventing and mitigating risk factors. These include strengthening economic supports such as household financial stability and housing security; creating safer environments by reducing at-risk individuals’ access to lethal means; fostering healthy organizational policies and cultures; and improving access to healthcare by expanding insurance coverage for mental health services and increasing provider availability and remote access in underserved areas. Experts also emphasize the importance of promoting social connection and teaching problem-solving skills that can help individuals navigate periods of acute distress.

These and other socioeconomic reforms are not easy solutions. They aren’t just a matter of adjusting algorithms or restricting platform features. They demand uncomfortable conversations about how we structure work, education, and community life. They require sustained political commitment and resource allocation. Yet if we can achieve these results, we will create a better world than one derived from mere technological fixes.

In short, technology doesn’t cause suicide. It doesn’t cause a host of human problems for which it is often blamed. Sadly, they have always been with us.

But technology, used wisely, could help us mitigate these problems. For example, by processing massive amounts of data, AI can detect patterns that elude us humans. This alone could help reveal early warning signs or surface new protective factors. AI chatbots, in particular, could help us identify teens who are at risk and create opportunities to intervene.

But that kind of progress demands that we take responsibility for these problems. We must acknowledge that our governments, societies, communities, and even ourselves may have normalized and contributed to these harmful conditions. We may discover there’s no rhyme or reason to why teenagers commit suicide. But we may uncover that teen suicide isn’t random at all. It may stem from something we’ve unwittingly ignored, or perhaps built into the world.

That possibility is far more unsettling than the idea of dangerous technology. It’s the idea that the danger might be us.

Kevin Frazier directs the AI Innovation and Law Program at the University of Texas School of Law and is a Senior Fellow at the Abundance Institute. Brian L. Frye is a Spears-Gilbert Professor of Law at the University of Kentucky J. David Rosenberg College of Law. Michael P. Goodyear is an Associate Professor at New York Law School. Jess Miers is an Assistant Professor of Law at The University of Akron School of Law.

Filed Under: ai, blame, moral panic, negligence, proximate cause, suicide, tech, tort law


Stephen Thaler’s Legendary AI Copyright Losing Streak Ends With Nowhere Left To Appeal

from the public-domain-ftw dept

We’ve been covering Stephen Thaler’s quixotic quest to get copyright (and patent) protection for works generated entirely by his AI system “DABUS” for years now. If there’s one thing Thaler has proved beyond all reasonable doubt, it’s that you can be comprehensively, thoroughly, and repeatedly wrong at every level of the American legal system and still keep going. He loses everywhere, every time, at every level. The Copyright Office rejected him. A federal district court rejected him. The DC Circuit rejected him. The Patent Office rejected him. Courts rejected his parallel patent claims. Even the Trump administration—not exactly known for its nuanced intellectual property positions—told the Supreme Court not to bother hearing his appeal.

And now, the Supreme Court has declined to take up the case, putting the final period on what has been one of the most impressive losing streaks in recent IP law history.

Plaintiff Stephen Thaler had appealed to the justices after lower courts upheld a U.S. Copyright Office decision that the AI-crafted visual art at issue in the case was ineligible for copyright protection because it did not have a human creator.

That was always the fatal flaw with his argument. He wasn’t making the more nuanced claim that a human who uses AI as a tool should get some copyright protection. He was making the maximalist claim: the AI did it all by itself, and it (or rather, he, as the AI’s owner) should get the copyright anyway.

The image in question (“A Recent Entrance to Paradise,” of train tracks entering a portal surrounded by green and purple plant-like imagery) was, according to Thaler, created entirely by DABUS with no human creative input. Every single institution that looked at this said no.

A federal judge in Washington upheld the office’s decision in Thaler’s case in 2023, writing that human authorship is a “bedrock requirement of copyright.” The U.S. Court of Appeals for the District of Columbia Circuit affirmed the ruling in 2025.

Thaler’s lawyers, for their part, tried to argue that the stakes were too high for the Court to sit this one out:

With a refusal by the court to hear the appeal, Thaler’s lawyers said, “even if it later overturns the Copyright Office’s test in another case, it will be too late. The Copyright Office will have irreversibly and negatively impacted AI development and use in the creative industry during critically important years.”

That’s rich. The Copyright Office is already working through the genuinely harder questions in cases involving tools like Midjourney—cases where humans actually did have meaningful creative input. Those cases are moving through the system right now. The problem for Thaler is that he chose the worst possible vehicle to force a Supreme Court showdown: a case so maximalist in its claims (the AI did everything, humans did nothing, give us the copyright anyway) that courts could rule against him on the narrowest possible grounds without ever having to engage with the nuanced questions at all. His all-or-nothing bet made this an easy case.

Still, the question of what happens when a human uses AI as a creative tool—rather than letting the machine do everything—isn’t actually as novel or unsettled as many people seem to think.

Copyright law has required human creative choices since at least Burrow-Giles Lithographic Co. v. Sarony, an 1884 case about whether photographs could be covered by copyright. And the wonderful Feist Publications v. Rural Telephone Service from 1991 (a case we cite often) hammered the point home by establishing that copyright demands original creative expression. Consider how this already works with photography. A photographer who frames a shot of a landscape gets copyright protection in the creative choices they made: the composition, the angle, the timing, the lighting. But the landscape itself? No human created that. It gets no copyright. The camera mechanically captured what was in front of it, but the human’s original creative decisions (and only those original creative decisions) are what copyright protects.

AI-generated works should work roughly the same way. If a human’s creative input—through a sufficiently specific and expressive prompt, through selection and arrangement, through iterative creative choices—meaningfully shapes the output, that human contribution can be protected. But the parts that the AI generated autonomously, without human creative direction? Those are “the landscape.” They’re the thing no human authored.

There will certainly be disputes at the margins about exactly how much human input is enough, and where the line sits between “I told the AI to make something cool” and genuine creative direction. But the fundamental framework for handling this already exists. We’ve been here before with every new creative tool, from cameras to Photoshop. The principle has always been the same: copyright protects human creativity, regardless of the tool used to express it.

Thaler chose to fight for the one position that had no support in law (or in common sense). His losing streak is now complete, and there’s nowhere left to appeal. But the legacy of his many, many losses is actually kind of useful: he has, through sheer persistence, generated an incredibly clear and consistent body of authority establishing that purely AI-generated works, with no human creative input, do not get copyright protection.

So, thanks for that, I guess. Oh, and I guess we can confidently post that “Recent Entrance to Paradise” image as it, like the monkey selfie before it, is officially in the public domain.

Filed Under: ai, copyright, copyright office, copyrightable subject matter, dabus, human creativity, stephen thaler, supreme court


HiFiMAN Arya WiFi and HE1000 WiFi First Impressions at CanJam NYC 2026: Are Wireless Planar Headphones Finally Ready for Audiophiles?

For years, the biggest challenge in the wireless headphone space hasn’t been convenience or features. It’s been performance. High-end brands have spent the past decade refining flagship wired models that rely on dedicated headphone amplifiers and carefully tuned drivers, and translating that level of performance into a self-contained wireless design is not a trivial exercise. That’s the context for the HiFiMAN Arya WiFi and HiFiMAN HE1000 WiFi, two open-back wireless planar magnetic headphones introduced at CanJam NYC 2026.

HiFiMAN took its time with these for a reason. Integrating the necessary wireless electronics, amplification, DAC architecture, and battery systems into both earcups and the headband, while still preserving the acoustic character of the award-winning wired Arya and HE1000, was not going to happen overnight.

HiFiMAN has experimented with wireless concepts before, but the Arya WiFi and HE1000 WiFi represent its most serious attempt yet to bring high bandwidth wireless audio to planar magnetic headphones.

Both models still support Bluetooth with codecs like aptX HD and LDAC, and they can function as USB Audio devices when connected directly. But those options are really just the supporting cast.

The real story here is WiFi. That’s where the bandwidth lives, and where HiFiMAN believes wireless listening can finally approach the kind of fidelity that planar magnetic headphones are known for.

Hymalaya R2R DAC: A Ladder Inside the Headphones

Both the HiFiMAN Arya WiFi and HiFiMAN HE1000 WiFi incorporate HiFiMAN’s proprietary Hymalaya R2R DAC, along with integrated amplification inside the earcups and planar magnetic drivers derived from the company’s established designs. That combination turns each headphone into something closer to a complete playback system rather than a passive transducer waiting for a DAC and amplifier to do the heavy lifting.

The Hymalaya DAC is built around a classic R2R ladder architecture, a design long favored in high-end audio for its precise timing and direct signal conversion. Instead of relying on the delta-sigma DAC chips found in most wireless headphones, an R2R DAC converts digital audio through a network of precision resistors that translate binary data directly into analog voltage. The upside can be excellent transient response and tonal accuracy. The downside is that traditional ladder DACs tend to be large, complex, and hungry for power.
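The “binary data directly into analog voltage” idea is easier to see with numbers. Below is a minimal Python sketch of an ideal, unloaded voltage-mode R-2R ladder, assuming perfectly matched resistors and ideal switches. It is a textbook model, not a description of the Hymalaya DAC’s internals (which aren’t detailed here), and the function name and example values (4 bits, 1 V reference) are illustrative. Each bit switches a 2R leg to the reference voltage or to ground; because every node sees two equal 2R paths, the voltage is averaged and then halved at each step from the LSB toward the MSB, so the output works out to V_out = V_ref × code / 2^N.

```python
def r2r_output_voltage(code: int, bits: int, v_ref: float) -> float:
    """Ideal, unloaded output of an N-bit voltage-mode R-2R ladder.

    Hypothetical helper for illustration only; not HiFiMAN's design.
    Bit 0 is the LSB (farthest from the output), bit N-1 is the MSB.
    """
    if not 0 <= code < 2 ** bits:
        raise ValueError("code does not fit in the given number of bits")
    # At the LSB end, the 2R termination to ground acts like a 0 V source behind 2R.
    v_thevenin = 0.0
    for i in range(bits):
        bit = (code >> i) & 1
        # At node i, the 2R path back toward the LSB end sits in parallel with
        # this bit's 2R leg, which is switched to v_ref (bit = 1) or ground (bit = 0).
        # Two equal resistances in parallel: the node voltage is the average of the
        # two sources, and the Thevenin resistance drops to R. The series R to the
        # next node brings it back to 2R, so the same rule repeats.
        v_thevenin = (v_thevenin + bit * v_ref) / 2
    # The MSB node is the output, so its Thevenin voltage is V_out.
    return v_thevenin


if __name__ == "__main__":
    # 4-bit ladder with a 1 V reference: each code step is 1/16 V.
    for code in (0b0001, 0b1000, 0b1111):
        print(f"{code:04b} -> {r2r_output_voltage(code, 4, 1.0):.4f} V")
```

Running it prints 0.0625 V, 0.5000 V, and 0.9375 V for the three codes. A real ladder never has perfectly matched resistors, and errors in the legs nearest the MSB matter most because those bits carry the largest weights, which is why resistor precision and matching come up whenever R2R designs are discussed.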

HiFiMAN’s solution was to rethink the architecture from the ground up. The Hymalaya platform combines the R2R ladder with FPGA control and extremely low power consumption, allowing the company to shrink the circuit dramatically while maintaining support for high resolution formats. What makes this particularly impressive is the scale. Each earcup houses a compact DAC stage containing hundreds of precision resistors, carefully matched and integrated alongside the internal amplification and wireless electronics.

Packing that level of circuitry into both sides of a headphone while keeping noise low, power consumption manageable, and heat under control is not trivial. From an engineering standpoint alone, this is one of the more ambitious wireless headphone designs we encountered at CanJam NYC 2026.

At a private post-show gathering on Saturday evening, HiFiMAN explained that developing a product like the HiFiMAN HE1000 WiFi or HiFiMAN Arya WiFi can take 12 to 18 months, even when starting with established passive designs.

The company chose the HE1000 and Arya platforms for two key reasons. First, both models are built on proven driver technologies, which reduces the number of variables when integrating the DAC, amplification, wireless circuitry, and battery systems directly into the headphone. Second, these sit at the higher end of HiFiMAN’s lineup, where production volumes are naturally lower. That allows the company to maintain tighter control over quality and consistency while manufacturing the smaller batches required for a design this complex.

HiFiMAN also made it clear that this technology will not immediately trickle down to more affordable models. A Sundara-based WiFi version is not on the roadmap anytime soon, and neither is a version with active noise cancellation. The company’s view is that both would need to meet the same performance standards relative to their price points before they would consider bringing them to market with so much established competition.

You May Want to Sit Down for the Price

The HiFiMAN HE1000 WiFi isn’t cheap. Not even close.

The flagship of HiFiMAN’s new WiFi headphone lineup carries an expected retail price of $2,699, placing it squarely in the same territory as many high-end wired reference headphones.

That number shouldn’t come as a total shock when you consider what’s inside. The HE1000 WiFi is essentially a self-contained planar magnetic system with its own DAC, amplification, wireless receiver, battery system, and internal signal chain built directly into the headphone. In other words, it’s doing the job that normally requires a DAC, headphone amplifier, and source component sitting on your desk.

Still, there’s no getting around the sticker shock. Spending nearly three grand on wireless headphones will raise eyebrows even in the audiophile community. But compared to what it would cost to assemble a comparable desktop system for the wired HiFiMAN HE1000 lineage, the math starts to look a little less insane.

And if that price feels steep, the HiFiMAN Arya WiFi exists as the slightly less punishing option at around $1,449, though “budget” isn’t exactly the word most people would use here either. For those interested in closed-back alternatives, Focal and DALI have models around the same price that we’ve already reviewed.

Two Ways to Go Wireless: HiFiMAN HE1000 WiFi and Arya WiFi

The HE1000 WiFi and Arya WiFi are built on the same basic premise, but they are not the same headphone with different price tags slapped on the box.

Both are open-back wireless planar magnetic designs with WiFi as the primary connection, backed up by Bluetooth (with LDAC and aptX HD) and USB Audio support. Both also integrate HiFiMAN’s Hymalaya R2R DAC, internal amplification, and battery-powered electronics directly into the headphone. That alone is unusual. Most wireless headphones still lean on Bluetooth and off-the-shelf chipsets. HiFiMAN decided to build something far more ambitious and a lot more complicated.

Where they begin to separate is with the driver platform.

The HE1000 WiFi is based on the company’s more upscale Nano Diaphragm driver architecture, paired with Stealth Magnet technology to reduce wave diffraction and preserve a cleaner path for the soundwave. It feels like the more luxurious and visually striking of the two, and frankly, at this price it had better. Chris Boylan and I both found it very manageable on the head. Not super lightweight, but certainly not some neck-killing science project either. Clamping force felt right, and the changes to the suspension headband, yoke, and internal cable routing were very well executed.

The Arya WiFi uses a similar overall layout, but swaps in HiFiMAN’s Super Nano diaphragm driver, a thinner planar diaphragm intended to improve transient response and efficiency. It still uses Stealth Magnet technology, integrated amplification, and the Hymalaya DAC, but it is positioned as the more accessible entry into this new WiFi-based range. Same core concept. Different driver implementation. Lower price of admission. Slightly less “you may need to explain this purchase to your family” energy.

Both also offer something you won’t find on the HE1000 Unveiled or Arya Unveiled: protective grilles in front of the drivers. HiFiMAN explained that because these headphones may be used in a wider range of real-world environments, they needed to protect the drivers in a way the more purist, home-oriented wired models do not. That makes sense. Open-back headphones this expensive do not need extra ways to get murdered.

What also deserves real credit is the comfort and mechanical design. Because both earcups contain the DAC, amplification stage, wireless electronics, and battery systems, HiFiMAN had to rethink how the internal cabling runs through the headband and how the added hardware would affect overall balance and long-term wear.

That could have gone badly. It didn’t.

The revised suspension headband, yoke structure, and internal cable routing appear to have been carefully engineered so the additional electronics don’t create pressure points or imbalance across the headband. Both Chris Boylan and I tried them, and while neither headphone is super lightweight, they felt very manageable. Clamping force is well judged, and the weight distribution works far better than one might expect from a headphone with this much hardware built into both earcups.

Visually, the HE1000 WiFi in particular is rather gorgeous. At nearly $2,700, it probably needs to be.

Setup is also a little more involved than typical Bluetooth pairing. We were given a quick walkthrough at the show, and while there are a few extra steps, the payoff is immediately noticeable. The WiFi connection offers significantly higher sound quality than Bluetooth, which is the entire point of the design.

So How Do They Actually Sound?

If you’re familiar with the HiFiMAN HE1000 Unveiled or HiFiMAN Arya Unveiled, their wireless siblings get you a sizable percentage of the way there. That’s impressive on its own, but it also needs a bit of context.

The Wi-Fi environment at the hotel in Times Square during CanJam NYC 2026 was far from ideal. Thousands of attendees were hammering the network all day streaming music and uploading content, and even 45 floors above the city the connection on our smartphones and tablets was inconsistent at best.

In other words, what we heard was likely only a glimpse of what these headphones are capable of under more controlled conditions.

My personal experience with the HE1000 Unveiled is that it rewards better electronics. Feed it a strong amplifier and a good DAC and it delivers a well-balanced, near-neutral presentation. There’s enough life and energy to keep things engaging without tipping into the sterile or analytical. Sub-bass isn’t exactly seismic, but unless you live exclusively in EDM territory, it feels honest and realistic for a planar magnetic design.

The HE1000 WiFi gets surprisingly close to that presentation. The sub-bass won’t rattle your fillings, but the pacing, detail, and overall resolution are very solid. Vocals come across clean and natural, and the soundstage is large and well organized with precise imaging.

Compared to something like the Focal Bathys MG, the character is very different. The Bathys MG is punchier and more forward, designed to sound impressive right out of the gate. The HiFiMAN approach leans more toward spaciousness, neutrality, and detail retrieval, which will feel far more familiar to anyone who spends time with open-back planar headphones.

In other words, these still sound like HiFiMAN headphones. The surprise is how much of that DNA survived the jump to WiFi.

Reviews of both coming in April.


Tech

In a vote of confidence for Meta’s Threads, Kalshi adds sharing feature

Prediction market Kalshi is making it easier for its users to have conversations on Meta’s social network Threads. Kalshi now offers a share option that will automatically embed the relevant prediction market chart into a Threads post.

Whether people want to discuss who’s going to win Best Picture or which reality TV contestant is going to go home (and possibly bet on the outcome on Kalshi), “with this integration, people can share their opinions alongside the forecasts they’re seeing on Kalshi,” the company said in a blog post.

It’s a move that echoes a successful social media strategy for both Kalshi and its biggest rival, Polymarket, on X. However, things have gotten complicated for Kalshi on X recently. In June, X named Polymarket as its “official” prediction market partner.

Last month, Kalshi removed its affiliate badges from X accounts run by its sponsored traders. This came after X enacted a policy that prohibits sponsored accounts from posting about sports betting. That policy was adopted after the prediction markets were reportedly busted for partnering with fake sports insiders who spread misinformation.

While the share button is not as significant as what the prediction markets have with X, it is a vote of confidence in the X rival just a couple of months after user data appeared to show Threads growing faster than X.

Tech

Valerion VisionMaster Max review: Premium projector that's still consumer-friendly

The Valerion VisionMaster Max is a super-premium 4K home theater projector that manages to get extremely close to the professional-grade experience for less than half the cost.


For anyone constructing their own home theater, the choice between a projector and a television is frequently determined by budget. A massive TV provides most of the benefits and few of the drawbacks, but you just don’t get the same sort of experience as you would when using a projector.

However, there are other factors that weigh into the home projector experience, not the least of which is managing the light in your theater space to get the ideal image. Your choice of projector also makes a massive difference to what you can eventually see on your wall or projector screen.

Continue Reading on AppleInsider | Discuss on our Forums

Tech

Intel Demos Chip To Compute With Encrypted Data

An anonymous reader quotes a report from IEEE Spectrum: Worried that your latest ask to a cloud-based AI reveals a bit too much about you? Want to know your genetic risk of disease without revealing it to the services that compute the answer? There is a way to do computing on encrypted data without ever having it decrypted. It’s called fully homomorphic encryption, or FHE. But there’s a rather large catch. It can take thousands, even tens of thousands, of times longer to compute on today’s CPUs and GPUs than simply working with the decrypted data. So universities, startups, and at least one processor giant have been working on specialized chips that could close that gap. Last month at the IEEE International Solid-State Circuits Conference (ISSCC) in San Francisco, Intel demonstrated its answer, Heracles, which sped up FHE computing tasks as much as 5,000-fold compared to a top-of-the-line Intel server CPU.
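The core idea of computing on data you cannot read can be shown with a toy example. This sketch is not FHE and is not what Heracles runs. It uses the Paillier cryptosystem, which is only *additively* homomorphic: multiplying two ciphertexts produces an encryption of the sum of their plaintexts. The primes are tiny, hard-coded, and provide no security. Production FHE schemes such as BFV, CKKS, and TFHE are lattice-based and support both addition and multiplication. The function names below are illustrative only.

```python
import math
import secrets

# Toy Paillier cryptosystem: additively homomorphic only, NOT fully homomorphic.
# The primes are tiny and hard-coded for illustration; this offers no security.
P, Q = 1009, 1013


def keygen(p: int, q: int) -> tuple[int, tuple[int, int]]:
    """Return the public modulus n and the private key (lam, mu)."""
    n = p * q
    lam = math.lcm(p - 1, q - 1)  # Carmichael function of n (Python 3.9+)
    mu = pow(lam, -1, n)          # modular inverse; valid because we use g = n + 1
    return n, (lam, mu)


def encrypt(n: int, m: int) -> int:
    """Encrypt plaintext m (0 <= m < n) as g^m * r^n mod n^2 with g = n + 1."""
    n2 = n * n
    while True:
        r = secrets.randbelow(n - 1) + 1  # random r in [1, n - 1]
        if math.gcd(r, n) == 1:
            break
    return (pow(n + 1, m, n2) * pow(r, n, n2)) % n2


def decrypt(n: int, priv: tuple[int, int], c: int) -> int:
    """Recover m = L(c^lam mod n^2) * mu mod n, where L(x) = (x - 1) // n."""
    lam, mu = priv
    x = pow(c, lam, n * n)
    return ((x - 1) // n * mu) % n


def add_encrypted(n: int, c1: int, c2: int) -> int:
    """Multiplying ciphertexts adds the hidden plaintexts (mod n)."""
    return (c1 * c2) % (n * n)


if __name__ == "__main__":
    n, priv = keygen(P, Q)
    c_sum = add_encrypted(n, encrypt(n, 42), encrypt(n, 58))
    # Whoever computed c_sum needed only n, never the values 42 or 58.
    print(decrypt(n, priv, c_sum))  # prints 100
```

A server holding only the public modulus `n` can run `add_encrypted`. Only the key holder can run `decrypt`. This is the same division of roles as in the voter query described below, although there the operation is a match test rather than a sum.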

Startups are racing to beat Intel and each other to commercialization. But Sanu Mathew, who leads security circuits research at Intel, believes the CPU giant has a big lead, because its chip can do more computing than any other FHE accelerator yet built. “Heracles is the first hardware that works at scale,” he says. The scale is measurable both physically and in compute performance. While other FHE research chips have been in the range of 10 square millimeters or less, Heracles is about 20 times that size and is built using Intel’s most advanced, 3-nanometer FinFET technology. And it’s flanked inside a liquid-cooled package by two 24-gigabyte high-bandwidth memory chips—a configuration usually seen only in GPUs for training AI.

In terms of scaling compute performance, Heracles showed muscle in live demonstrations at ISSCC. At its heart the demo was a simple private query to a secure server. It simulated a request by a voter to make sure that her ballot had been registered correctly. The state, in this case, has an encrypted database of voters and their votes. To maintain her privacy, the voter would not want to have her ballot information decrypted at any point; so using FHE, she encrypts her ID and vote and sends them to the government database. There, without decrypting them, the system determines if there is a match and returns an encrypted answer, which she then decrypts on her side. On an Intel Xeon server CPU, the process took 15 milliseconds. Heracles did it in 14 microseconds. While that difference isn’t something a single human would notice, verifying 100 million voter ballots adds up to more than 17 days of CPU work versus a mere 23 minutes on Heracles.
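The 17-day and 23-minute totals can be checked with a few lines of arithmetic. This sketch assumes the 100 million queries run one after another on a single device, with no batching or parallelism. That appears to be how the quoted totals were derived. The constant names are illustrative.

```python
# Back-of-envelope check of the ISSCC voter-lookup numbers quoted above.
# Assumption: queries are processed sequentially on one device (no parallelism).
CPU_SECONDS_PER_QUERY = 15e-3        # Intel Xeon server CPU: 15 milliseconds
HERACLES_SECONDS_PER_QUERY = 14e-6   # Heracles: 14 microseconds
BALLOTS = 100_000_000                # 100 million voter ballots

SECONDS_PER_DAY = 24 * 60 * 60

cpu_days = BALLOTS * CPU_SECONDS_PER_QUERY / SECONDS_PER_DAY
heracles_minutes = BALLOTS * HERACLES_SECONDS_PER_QUERY / 60
speedup = CPU_SECONDS_PER_QUERY / HERACLES_SECONDS_PER_QUERY

print(f"CPU: {cpu_days:.1f} days")                  # CPU: 17.4 days
print(f"Heracles: {heracles_minutes:.1f} minutes")  # Heracles: 23.3 minutes
print(f"Speedup on this query: {speedup:.0f}x")     # Speedup on this query: 1071x
```

For this particular demo, the per-query speedup works out to roughly 1,070x. That is within the "as much as 5,000-fold" figure the report gives for FHE tasks in general.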

Tech

ASUS ROG Cetra Open Wireless Gaming Earbuds Launch With Cross Platform Support

The gaming headset and wireless earbuds category has exploded over the past decade, growing into a multibillion-dollar market fueled by competitive gaming, streaming, and the rise of cross-platform play across PCs, consoles, and mobile devices. Brands that once focused solely on traditional PC hardware have expanded aggressively into gaming audio, and ASUS sits firmly in the deep end of that pool.

Best known for its laptops, gaming PCs, and high-performance monitors, ASUS has steadily built out its audio lineup through its Republic of Gamers (ROG) division, targeting PC gamers who want the same level of engineering and performance from their headsets and earbuds. After recently introducing the ROG Kithara Open-back Planar Gaming Headset, ASUS is expanding the concept with something far more portable: the ROG Cetra Open Wireless Gaming Earbuds, designed for players and listeners who want immersive audio without completely shutting out the world around them.

Designed to combine immersive sound with real-world situational awareness, the Cetra Open Wireless targets gamers, music listeners, and active users who want premium audio performance in a form factor better suited to life away from the desk.

ASUS ROG Cetra Open Wireless Features

Open-Ear Design: The open-ear earhook construction is ultra-lightweight and designed for a comfortable, stable fit. This allows users to hear music, voice chat, and game audio while remaining aware of their surroundings.

Controls: Physical control buttons provide tactile feedback and remain responsive in rain or during intense activity. Unlike touch controls, they reduce accidental inputs and offer more consistent operation.

Drivers: The Cetra Open Wireless uses large 14.2 mm diamond-like carbon (DLC) coated drivers designed to deliver high-resolution audio with crisp highs, clear mids, and solid bass impact. The DLC coating helps reduce distortion while improving clarity and soundstage, making the earbuds suitable for both gaming and music playback.

Dual-Mode Connectivity: Supports seamless switching between Bluetooth and ultra-low-latency 2.4 GHz ROG SpeedNova wireless technology for synchronized in-game audio and responsive gameplay.

Wireless Dongle: The included USB-C 2.4 GHz wireless dongle supports passthrough charging, allowing users to power their device while the earbuds remain connected. This ensures uninterrupted gameplay, streaming, or voice chat.

Sound Modes: Built-in sound modes let users tailor the listening experience. Phantom Bass enhances perceived low-end response for more impact, while Immersion Mode reduces ambient noise to help maintain focus during gameplay or listening sessions.

Customization: Gear Link and Gear Link apps (iOS, Android) enable EQ fine-tuning, lighting options, and more to create personalized listening experiences.

IPX Rating: With an IPX5 splash resistance rating, the Cetra Open Wireless is designed to handle sweat, light rain, and everyday outdoor use.

Battery Life: On a full charge, the earbuds provide up to 16 hours of playback in Bluetooth mode with RGB lighting and sound modes disabled and the microphone muted. A quick 15-minute charge delivers up to 3 hours of listening time.

Quad Mic with AI Noise Cancellation: Four integrated microphones with an omnidirectional pickup pattern work alongside AI noise cancellation to capture voice communication with improved clarity during gaming, calls, or streaming.

Active Lifestyle Support: Designed for extended wear, the Cetra Open Wireless features ergonomic liquid silicone ear hooks and includes a detachable reflective neck strap for added stability during workouts and outdoor activities.

ASUS ROG Cetra Open Wireless Gaming Earbuds Specifications

| ASUS ROG Model | Cetra Open |
| --- | --- |
| Product Type | Open-Ear Wireless Gaming Earbuds |
| Price | $229.99 |
| Wireless | 2.4 GHz, Bluetooth |
| Supported Platforms | PC, Mac, PlayStation 4, PlayStation 5, Nintendo Switch, iOS, Android, Bluetooth devices |
| Driver Material | Diamond-Like Carbon Coated Diaphragm Drivers |
| Driver Size | 14 mm |
| Headphone Impedance | 16 ohms |
| Headphone Frequency Response | 20 Hz – 20 kHz |
| Microphone Pick-up Pattern | Omnidirectional |
| Microphone Sensitivity | -38 dB |
| Microphone Frequency Response | 100 Hz – 8 kHz |
| AI Noise-Cancelling Microphone | Yes |
| Number of Channels | Stereo |
| Lighting | ASUS Aura RGB Lighting: up to 16.8 million colors, four preset effects |
| Weight | 11 g (earbuds), 116 g (charging case) |
| Color | Black |
| Cable | 0.6 m USB-C to USB-A charging cable |
| Package Contents | ROG Cetra Open Wireless open-ear headphones, wireless 2.4 GHz USB-C dongle, neck strap, USB-C to USB-A charging cable, quick start guide, warranty booklet |

The Bottom Line

The ASUS ROG Cetra Open Wireless stands out by targeting a very specific intersection of users: gamers who want low-latency wireless audio but also prefer the comfort and situational awareness of an open-ear design. The inclusion of ASUS’ ROG SpeedNova 2.4 GHz wireless connection alongside standard Bluetooth makes these more versatile than most open earbuds, especially for players who move between PC, consoles, and mobile devices.

That flexibility is what makes the Cetra Open Wireless unique. Many open-fit earbuds are designed primarily for fitness or casual listening, while ASUS is clearly aiming at cross-platform gaming and everyday mobility in a single product.

There is no shortage of competition. Cleer Audio’s Arc 3 Gaming Edition targets gamers with a similar open-ear concept, while Shokz OpenFit focuses more heavily on comfort and fitness use. Clip-style alternatives such as TCL’s Crystal Clip and Sony’s LinkBuds Clip offer another take on the open-ear category for users who want minimal ear fatigue during long listening sessions.

The ROG Cetra Open Wireless makes the most sense for mobile gamers, PC players who want a lightweight alternative to traditional headsets, and active users who prefer open-ear awareness while listening to music or chatting online. If ASUS can deliver on its promises of low-latency performance and solid sound quality, the Cetra Open could carve out a meaningful niche in one of the fastest-growing segments of personal audio.

Price & Availability

The ASUS ROG Cetra Open Wireless Earbuds are available now for $229.99 at Amazon in black.

Tip: For those looking for an earbud that fits deeper in the ear, the ASUS ROG Cetra True Wireless Earbuds are available for $219.99 at Amazon in black or white.


Tech

Gas Just Hit $8 A Gallon In This Major US City

As if the traffic wasn’t already some of the worst in the state, Los Angeles drivers now have to deal with some of the highest fuel costs, as well. With so much uncertainty surrounding oil as global tensions continue to rise overseas, one Los Angeles gas station actually started charging people $8.21 a gallon to fill up.

While GasBuddy says the statewide average currently sits around $5.26 a gallon (as of this writing), there’s nothing stopping a gas station from charging more than the other guys. It’s not a crime, either. California only has laws against price gouging during emergencies (though the state does reserve the right to investigate and penalize excessive margins outside of those scenarios).

It’s a pain point people are feeling not just in California but coast to coast. According to that same GasBuddy data, the average price has climbed above $3 in every state except Kansas and Oklahoma since the outbreak of war in Iran, and even those two are only a few cents away from crossing the threshold themselves. That’s on top of seasonal trends that typically send gas prices higher this time of year anyway.

Why gas is so much more expensive in California

If it feels like you’re always hearing about higher gas prices in California than in other states, it’s because the state has a few factors working against it. For one, excise taxes, environmental fees, and climate programs all contribute to the price people pay at the pump. California also requires a specialized cleaner-burning gasoline blend, which is both more expensive to produce and made by a smaller number of refineries. In line with basic supply-and-demand economics, that gives those refiners the freedom to charge more for it. To top it all off (no pun intended), declining in-state gasoline production has left even more demand chasing a limited supply.

Sure, you could say this one specific gas station is just trying to get media attention, but they might not be alone for long. Some state lawmakers fear that the combination of global instability and California’s unique fuel market could drive prices that high across the entire state. A recent report cited by state Sen. Suzette Valladares suggested gasoline could reach $8 per gallon statewide by the end of 2026 if current trends continue.

Tech

Pete Hegseth Is Pushing Defense Employees to Volunteer With DHS

The Department of Defense is putting more pressure on employees to volunteer to support the Department of Homeland Security’s immigration crackdown.

In a February 19 memo sent to civilians across the DOD, secretary of defense Pete Hegseth wrote that he expects “every supervisor to encourage their civilian employees to volunteer. Leadership must continue to promote this detail program and educate their civilian employees on its importance.” The memo, which was titled “Department of War Guidance to Encourage Support to the Department of Homeland Security Southern Border and Internal Immigration Enforcement Missions,” was sent to thousands of civilian DOD employees. The memo was first reported by GovExec and was also viewed by WIRED.

The instructions follow a June 2025 memo in which Hegseth authorized civilian employees to be detailed to DHS. But an Army civilian employee who spoke to WIRED on the condition of anonymity for fear of retaliation says that there is “definitely more pressure” now, “at least on the supervisory chain.”

The DOD and DHS did not respond to a request for comment.

“I received the obligatory announcement email with the first memo when it came out, and no one has talked about it at all, so much so that I had forgotten about it entirely,” says the Army civilian employee. “I don’t know anyone who has taken the job.” In a statement from August 2025, the DOD claims that “nearly 500 DoD civilians have signed up to participate and bring their skill sets to the border security and immigration enforcement mission at the participating DHS agencies.”

“While details and other short-term professional development opportunities are common for Army civilians, I have never heard of supervisors being REQUIRED to approve such details,” they say.

The employee noted that, as part of the Trump administration’s efforts to cut back on government jobs in the name of “efficiency,” Hegseth has sought to cut the department’s workforce. “I have taken up the duties of three departed colleagues on top of the job I was hired for as a result,” they say. This means it would be difficult for the department to lose any more staff or for workers to step away from existing projects. The employee described this kind of request to volunteer for another federal agency as “very not common.” It’s not like the Defense Department has any spare time at the moment, either: Hegseth and DOD leadership are currently engaged in directing the US’s role in conflict with Iran.

DOD employees who want to volunteer to be detailed to DHS need to apply through USAJobs. According to the job posting, the Federal Emergency Management Agency (FEMA), which is part of DHS, will be reviewing applications. Volunteers will not only be sent to the southern border, but to “several ICE and CBP facilities throughout the interior of the United States.”

While some volunteer roles appear to be mundane tasks like “data entry,” others appear to be in the thick of immigration enforcement operations. These include assisting ICE and CBP in “developing concepts of operation and campaign plans to execute internal arrests and raids as well as patrols along the Southwest Border”; assisting ICE and CBP in “managing the physical flow of detained illegal aliens from arrest to deportation, as well as manage associated data”; and “managing the logistical planning to move law enforcement personnel, operational capabilities, and support equipment across the United States.”

The memo is just the latest in a series of changes across the federal government meant to enforce president Donald Trump’s immigration agenda. At the Department of Housing and Urban Development, a new rule would bar families with immigrant members from receiving certain forms of support from the agency, and at the General Services Administration, staff have been asked to assist ICE in procuring new physical spaces across the country.
