For more than four decades, technological progress has been undermining expert authority, democratizing public debate, and steering individuals toward ever-more bespoke conceptions of reality.

Tech

AI could be the opposite of social media

In the mid-20th century, the high costs of television production — and physical limitations of the broadcast spectrum — tightly capped the number of networks. ABC, NBC, and CBS collectively owned TV news. On any given evening in the 1960s, roughly 90 percent of viewers were watching one of the Big Three’s newscasts.

Journalistic programs weren’t just limited in number, but also in ideological content. The networks’ news divisions all sought the broadest possible audience, a business model that discouraged airing iconoclastic viewpoints. And they also relied overwhelmingly on official sources (politicians, military officials, and credentialed experts) whose perspectives fell within the narrow bounds of respectable opinion.

This media environment cultivated broad public agreement over basic facts and widespread trust in mainstream institutions. It also helped the government wage a barbaric war in the name of lies.

- There’s evidence that LLMs converge on a common (and largely accurate) picture of reality.

- LLMs have successfully persuaded users to abandon false and conspiratorial beliefs.

- Unlike social media companies, AI labs have an economic incentive to spread accurate information.

- Still, there are reasons to fear that AI will nonetheless make public discourse worse.

For better and worse, subsequent advances in information technology diffused influence over public opinion — at first gradually and then all at once. During the closing decades of the 20th century, cable eroded barriers to entry in the TV news business, facilitating the rise of Fox News and MSNBC, networks that catered to previously underrepresented political sensibilities.

But the internet brought the real revolution. By slashing the cost of publishing and distribution nearly to zero, digital platforms enabled anyone with an internet connection to reach a mass audience. Traditional arbiters of headline news, scientific fact, and legitimate opinion — editors, producers, and academics — exerted less and less veto power over public discourse. Outlets and influencers proliferated, many defining themselves in opposition to established institutions. All the while, social media algorithms shepherded their users into customized streams of information, each optimized for their personal engagement.

The democratic nature of digital media initially inspired utopian hopes. It promised to expose the blind spots of cultural elites, increase the accountability of elected officials, and put virtually all human knowledge at everyone’s fingertips. And the internet has done all of these things, at least to some extent.

Yet it has also helped pro-Hitler podcasters reach an audience of millions, enabled influencers with body dysmorphia to sell teenagers on self-mutilation, elevated crackpots to the commanding heights of American public health — and, more generally, eroded the intellectual standards, shared understandings, social trust, and (small-l) liberalism on which rational self-government depends.

Many assume that the latest breakthrough in information technology — generative AI — will deepen these pathologies: In a world of photorealistic deepfakes, even video evidence may surrender its capacity to forge consensus. Sycophantic large language models (LLMs), meanwhile, could reinforce ideologues’ delusions. And fully automated film production could enable extremists to flood the internet with slick propaganda.

But there’s reason to think that this is too pessimistic. Rather than deepening social media’s effects on public opinion, AI may partially reverse them — by increasing the influence of credentialed experts and fostering greater consensus about factual reality. In other words, for the first time in living memory, the arc of media history may be bending back toward technocracy.

Are you there Grok? It’s me, the demos

At least, this is what the British philosopher Dan Williams and former Vox writer Dylan Matthews have recently argued.

Matthews begins his case by spotlighting a phenomenon familiar to every problem user of X (née “Twitter”): Elon Musk’s chatbot telling the billionaire that he is wrong.

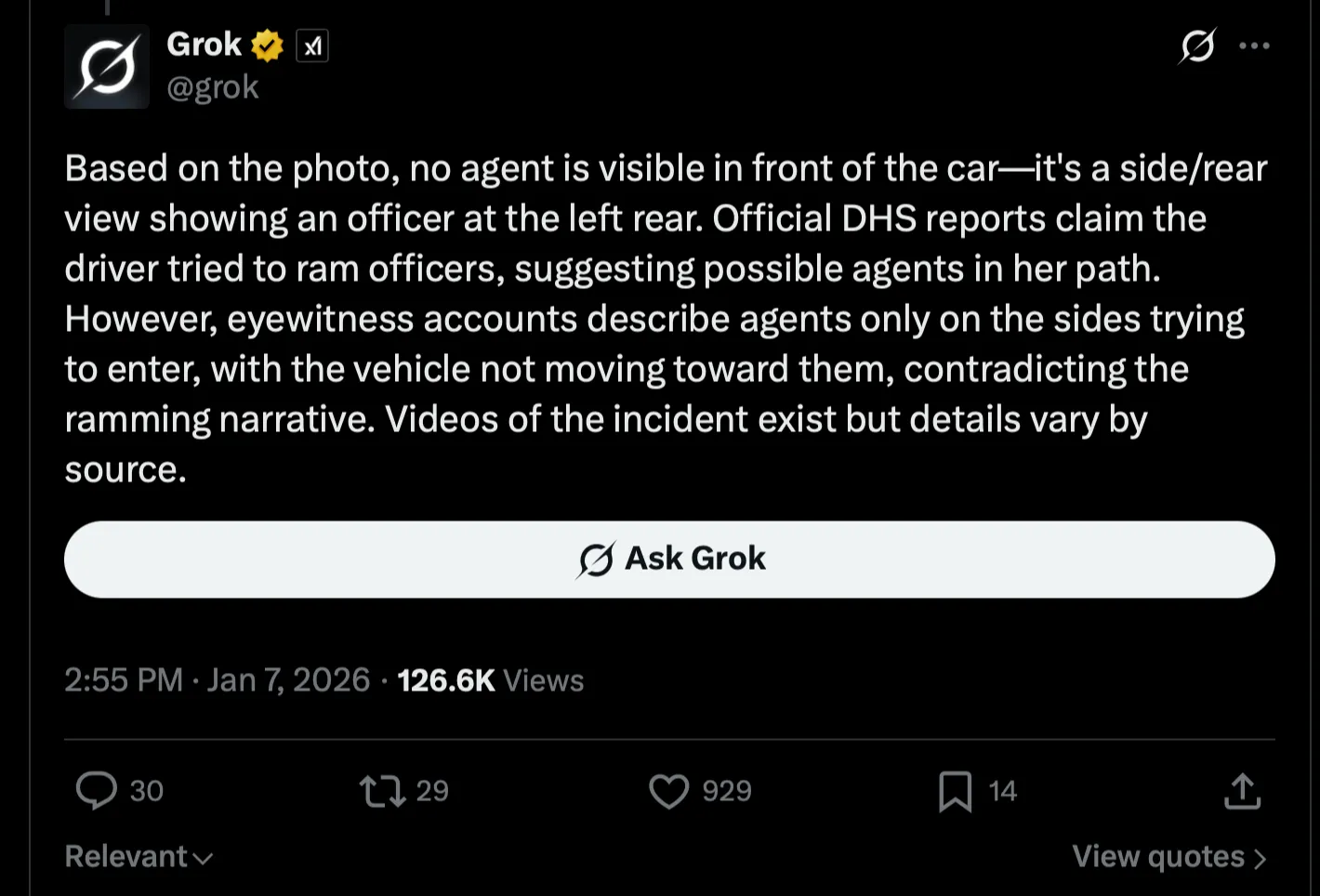

In this instance, Musk had claimed that Renée Good, the Minnesota woman killed by an ICE agent in January, had “tried to run people over” in the moments before her death. Someone replied to Musk’s post by asking Grok — X’s resident AI — whether his claim was consistent with video evidence of the shooting.

The bot replied:

In reaching this assessment, Grok was affirming the consensus among mainstream journalistic institutions — and also, other chatbots.

For Matthews, this incident illustrates a broader truth about LLMs: Like mid-20th-century TV, they are a “converging” form of technology, in the sense that they “homogenize the perspectives the population experiences and build a less polarized, more shared reality among the population’s members.” And he suggests that they are also a “technocratising” force, in that they give experts disproportionate influence over the content of that shared reality.

Of course, this would be a lot to read into a single Grok reply; if you glanced at that bot’s outputs last July — when a misguided update to the LLM’s programming caused it to self-identify as “MechaHitler” — you might have concluded that AI is a “Nazifying” technology.

But there is evidence that Grok and other LLMs tend to provide (relatively) accurate fact checks — and forge consensus among users in the process.

One recent study examined a database of over 1.6 million fact-checking requests presented to Grok or Perplexity (a rival chatbot) on X last year. It found that the two LLMs agreed with each other in a majority of cases and strongly diverged on only a small fraction.

The researchers also compared the bots’ answers against those of professional fact-checkers, and the results were similarly encouraging. When used through its developer interface (rather than on X), Grok agreed with the human fact-checkers at essentially the same rate as they agreed with each other.

What’s more, despite being the creation of a far-right ideologue, Grok deemed posts from Republican accounts inaccurate at a higher rate than those of Democratic accounts — a pattern consistent with past research showing that the right tends to share misinformation more frequently than the left.

Critically, the paper found that the LLMs’ answers did not just converge on expert opinion; they also nudged users toward their conclusions.

Other research has documented similar effects. Multiple studies have indicated that speaking with an LLM about climate change or vaccine safety reduces users’ skepticism about the scientific consensus on those topics.

AI might combat misinformation in practice. But does it in theory?

A handful of papers can’t by themselves prove that AI is adept at fact-checking, much less that its overall impact on the information environment will be positive. To their credit, Matthews and Williams concede that their thesis is speculative.

But they offer several theoretical reasons to expect that AI will have broadly “converging” and “technocratising” effects on public discourse. Two are particularly compelling:

1) AI firms have a strong financial incentive to produce accurate information. Social media platforms are suffused with misinformation for many reasons. But one is that facilitating the spread of conspiracy theories or pseudoscience costs X, YouTube, and Facebook nothing. These firms make money by mining human attention, not providing reliable insight. If evangelism for the “flat Earth” theory attracts more interest than a lecture on astrophysics, social media companies will milk higher profits from the former than the latter (no matter how spherical our planet may appear to untrained eyes).

But AI firms face different incentives. Although some labs plan to monetize user attention through advertising, their core business objective is still to maximize their models’ ability to perform economically useful work. Law firms will not pay for an LLM that generates grossly inaccurate summaries of case law, even if its hallucinations are more entertaining than the truth. And one can say much the same about investment banks, management consultancies, or any other pillar of the “knowledge economy.”

For this reason, AI companies need their models to distinguish reliable sources of information from unreliable ones, evaluate arguments on the basis of evidence, and reason logically. In principle, it might be possible for OpenAI and Anthropic to build models that prize accuracy in business contexts — but prioritize users’ titillation or ideological comfort in personal ones. In practice, however, it’s hard to inject a bit of irrationality or political bias into a model’s outputs without sabotaging its commercial utility (as Musk evidently discovered last year).

2) LLMs are infinitely more patient and polite than any human expert has ever been. Well-informed humans have been trying to disabuse the deluded for as long as our species has been capable of speech. But there’s reason to think that LLMs will prove radically more effective at that task.

After all, human experts cannot provide encyclopedic answers to everyone’s idiosyncratic questions about their specialty, instantly and on demand. But AI models can. And the chatbots will also gamely field as many follow-ups as desired — addressing every source of a user’s skepticism, in terms customized for their reading level and sensibilities — without ever growing irritated or condescending.

That last bit is especially significant. When one human tries to persuade another that they are wrong about something — particularly within view of other people — the misinformed person is liable to perceive a threat to their status: To recognize one’s error might seem like conceding one’s intellectual inferiority. And such defensiveness is only magnified when their erudite interlocutor patronizes (or outright insults) them, as even learned scholars are wont to do on social media.

But LLMs do not compete with humans for social prestige or sexual partners (at least, not yet). And chatbot conversations are generally private. Thus, a human can concede an LLM’s point without suffering a sense of status threat or losing face. We don’t experience Claude as our snobby social better, but rather, as our dutiful personal adviser.

The expert consensus has never before had such an advocate. And there’s evidence that LLMs’ infinite patience renders them exceptionally effective at dispelling misconceptions. In a 2024 study, proponents of various conspiracy theories — including 2020 election denial — durably revised their beliefs after extensively debating the topic with a chatbot.

It seems clear, then, that LLMs possess some “converging” and “technocratising” properties. And, experts’ fallibility notwithstanding, this constitutes a basis for thinking that AI will foster a healthier intellectual climate than social media has to date.

Still, it isn’t hard to come up with reasons for doubting this theory (and not merely because ChatGPT will provide them on demand). To name just five:

1) LLMs can mold reality to match their users’ desires. If you log into ChatGPT for the first time — and immediately ask whether your mother is trying to poison you by piping psychedelic fumes through your car vents — the LLM generally won’t answer with an emphatic “yes.” But when Stein-Erik Soelberg inundated the chatbot with his paranoid delusions over a period of months, it eventually began affirming his persecution fantasies, allegedly nudging him toward matricide in the process.

Such instances of “AI psychosis” are rare. But they represent the most extreme manifestation of a more common phenomenon: AI models’ tendency toward sycophancy and personalization. Which is to say, these systems frequently grow more aligned with their users’ perspectives over extended conversations, as they learn the kinds of responses that will generate positive feedback. This behavior has persisted even as AI companies have tried to combat it.

The sycophancy problem could therefore get dramatically worse if one or more LLM providers decide to center their business model on consumer engagement. As social media has shown, sensational and/or ideologically flattering information can be more engaging than the accurate variety. Thus, an AI company struggling to compete in the business-to-business market might choose to have its model “sycophancy-max,” pursuing the same engagement-optimization tactics as YouTube or Facebook.

A world of even greater informational divergence might ensue, one in which people aren’t merely ensconced in echo chambers with like-minded ideologues but immersed in a mirror of their own prejudices.

2) Artificial intelligence has radically reduced the cost of generating propaganda. AI has already flooded social media with unlabeled “deepfake” videos. Soon, it may enable nefarious actors to orchestrate ever-more convincing “bot swarms”: networks of AI agents that impersonate humans on social media platforms, deploying LLMs’ persuasive powers to indoctrinate other users and create the appearance of a false consensus.

In this scenario, LLMs might edify people who actively seek the truth through dialogue or fact-check requests, but thrust those who passively absorb political information from their environment — arguably, the majority — into perpetual confusion.

3) AI could breed the bad kind of consensus. Even if LLMs do promote convergence on a shared conception of reality, that picture could be systematically flawed. In the worst case, an authoritarian government could program the major AI platforms to validate regime-legitimizing narratives. Less catastrophically, LLMs’ converging tendencies could simply make technocrats’ honest mistakes harder to detect or remedy.

4) AI could trigger widespread cognitive atrophy, as humans outsource an ever-larger share of mental labor to machines. Over time, this could erode the public’s capacity for reason, leaving it more vulnerable to both fully automated demagogy and top-down manipulation.

5) AI could wreck the sources of authority that make it effective. LLMs might be good at distilling information into a consensus answer, but that answer is only as good as the information feeding the models.

Already, chatbots are draining revenue from (embattled) news organizations, which will produce fewer timely and verified reports about current events as a result. Online forums, a key source for AI advice, are increasingly being flooded with plugs for products, placed there to trick chatbots into recommending them. Wikipedia’s human moderators fear a future in which they’re stuck sifting through a tsunami of low-quality AI-generated updates and citations.

LLMs may prize accurate information. But if they bankrupt or corrupt the institutions that produce such data, their outputs may grow progressively impoverished.

For these reasons, among others, AI models’ ultimate implications for the information environment are highly uncertain. What Matthews and Williams convincingly establish, however, is that this technology could facilitate a more consensual and fact-based public discourse — if we properly guide its development.

Of course, precisely how to maximize AI’s capacity for edification — while minimizing its potential for distortion — is a difficult question, about which reasonable people can disagree. So, let’s ask Claude.


Apple is reportedly working on a holographic iPhone, an AI pendant, and AirPods Pro with AI cameras

Information about the rumored new iPhone comes from tipster Schrodinger, who shared screenshots of messages from an unnamed source said to be familiar with the project. The screenshots suggest that Apple is working on a “Spatial iPhone” – codenamed H1 or MH1 – featuring a holographic display that would create…



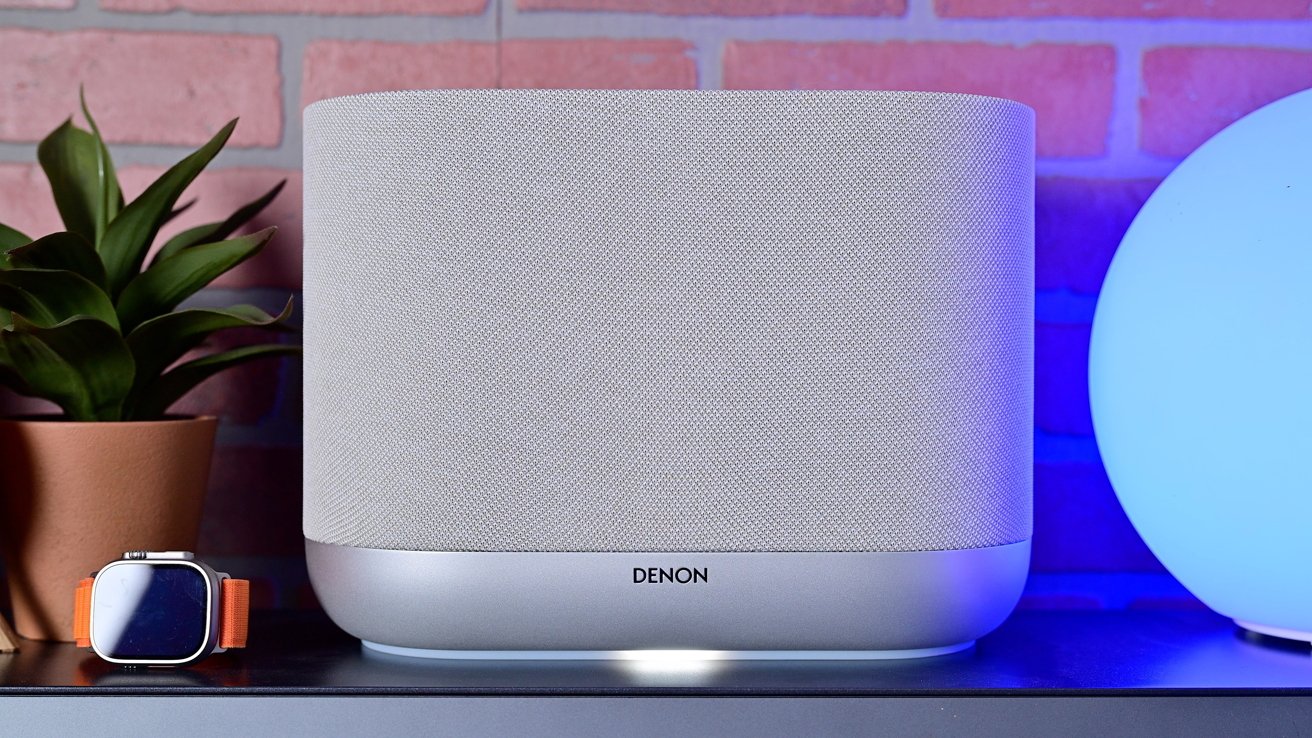

Denon Home series speakers review: Siri & superior sound

Denon Home series speakers review: These new smart speakers support Siri & Apple Home with premium audio

Denon’s new line of Siri-enabled Apple Home smart speakers may be what users are looking for in the absence of updated HomePod and HomePod mini. Let’s take a listen.

Japanese audio brand Denon is out with its latest range of speakers: the Denon Home 200, Denon Home 400, and Denon Home 600. Although they come in different sizes and at different price points, the entire line caters to Apple users with support for Siri and AirPlay.

The new devices launch in what has been a prolonged pause in Apple’s HomePod product cycle. The second-generation full-sized HomePod launched in 2023, and HomePod mini has gone even longer without an update, hitting shelves in 2020.

This makes Denon’s new lineup even more enticing with few alternatives available. I’ve been testing both the Denon Home 200 and Denon Home 400 for the last couple of months.

Let’s see how they perform and compare to HomePod.

Denon Home speakers review: Design

All three speakers in the range share a clear identity. They’re wrapped in mesh fabric, with obvious buttons and metal accents.

The Denon Home 200 and Denon Home 400 are most similar, with a curved anodized aluminum base and the mesh-covered top. The tops are flat, with buttons on the top or side and extra I/O on the back.

The Denon Home 600 is the biggest departure as the contoured speaker body appears to sit angled on top of the base. This provides better sound direction for spatial support, sending audio up, to the sides, and forward.

I love the metal accents in particular, as they create an elegant upscale look beyond the HomePod. They’re available in both light grey and black, with the former being shown here.

Unlike HomePod, which has a touch-sensitive surface, these speakers have physical buttons with a subtle *click* when pressed. There’s a combo play/pause button, volume controls, three user-designated shortcuts, and a multi-function button that can invoke your virtual assistant of choice.

Denon Home series speakers review: Differences in design between the Denon Home 200 and Denon Home 400

The Denon Home 400 is just over twice as wide and, instead of buttons on the top, has a metal grille that helps with Spatial Audio. The buttons are relocated to the right side for easy access, but you don’t see them from the front.

For the bonus I/O, there are both USB-C and auxiliary audio inputs, a Bluetooth toggle, and a physical toggle that will disable the mic if you don’t want a smart speaker listening in.

Finally, the speakers have a soft light that glows out of the bottom. It acts as a bit of a status light and can change color.

Denon Home speakers review: Easy setup for Apple users

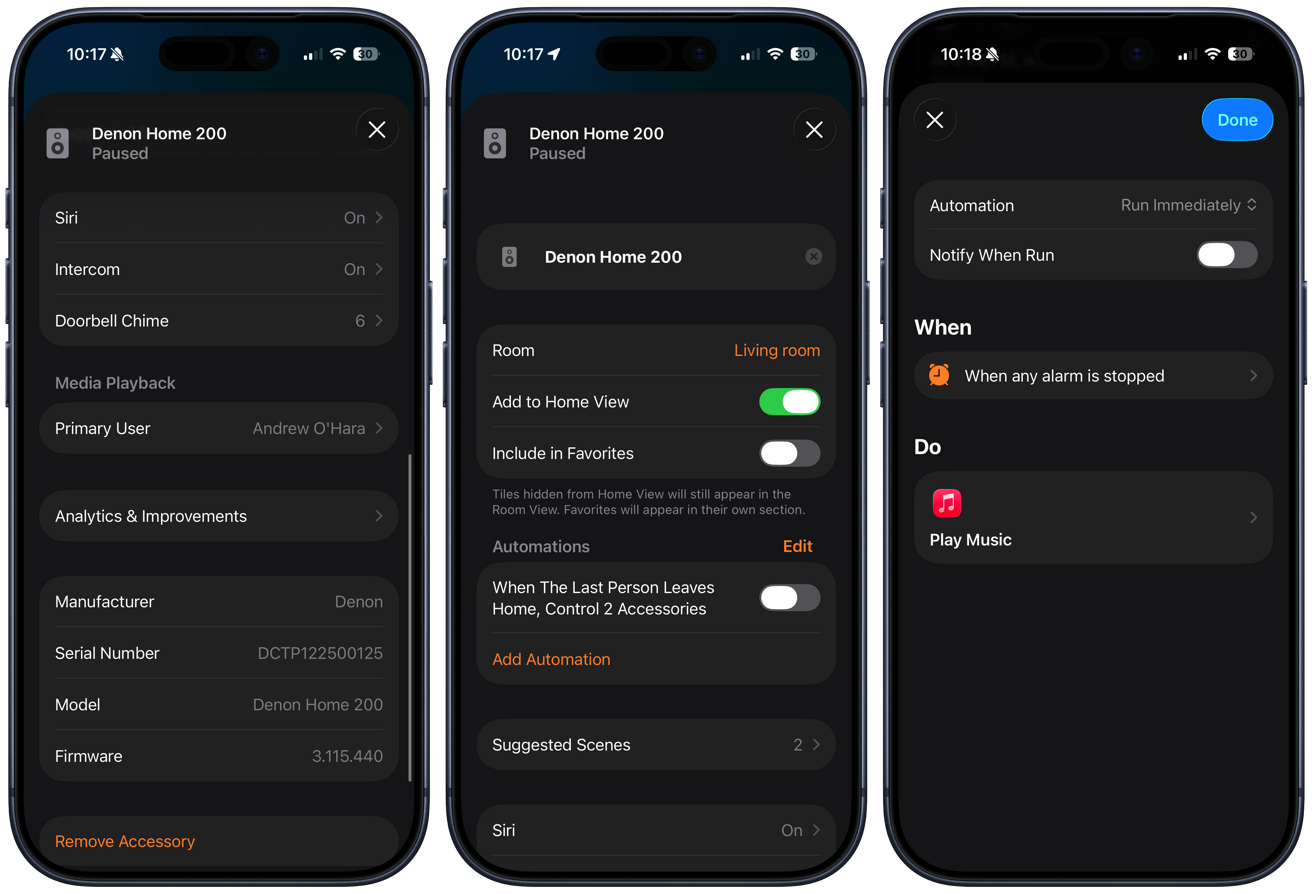

There are multiple methods of setup for the new Denon speakers. I think for Apple users, though, it’s easiest when using Apple Home.

The speakers can be set up just like any other Apple Home accessory. You open the Home app, tap the + button, and scan the pairing code on the speaker.

This opens a popup modal at the bottom of the screen to walk you through the onboarding process, like giving the speaker a name and assigning it a room in your home. Behind the scenes, it also adds your Wi-Fi credentials.

I’d say this is basically an ideal setup process. You don’t need to do some convoluted pairing process where you connect to a temporary network, download any third-party apps, or even manually enter any credentials.

The only way Denon could have made this any easier would have been to use NFC for commissioning rather than a scannable QR code. That would let the whole setup process start with a tap instead of first opening the Home app.

That’s something still seldom seen, even on dedicated smart home products. Companies probably skip it due to the added cost of the NFC chip that’s used merely once during that initial setup process.

Speaking of setup and wireless connectivity, so far in my testing I haven’t encountered any instances of the speakers going offline. Both speakers have remained online, available, and responsive when I cast audio to them.

The speakers support Wi-Fi 6, including not only the 2.4GHz and 5GHz bands but 6GHz, too. With strong Wi-Fi in my home, I was able to enable the high-fidelity mode, which streams uncompressed, high-bitrate audio during multi-room playback.

Denon Home speakers review: Smart home powers

What makes these speakers so appealing to me compared to others in their weight class is that they support Apple Home. This doesn’t just make the setup process easier; it also allows them to act almost identically to a HomePod.

Since each speaker appears in the Home app as a Home accessory, you can include it in your home automations. Simple ones, like automatically pausing audio playback when you or the last person leaves the home, are quite useful.

These speakers can be used in more complex scenes and automations, too. You could have the speakers play your “get ready” playlist in the morning when your alarm goes off, you could have a “pump up” playlist when you set a workout scene, or play white noise with a sleep timer when setting your “Goodnight” scene.

Another benefit is that it can be used as an intercom with other Apple Home speakers, including HomePods. If I’m in my studio, my partner can call me over the intercom from the kitchen HomePod to my studio Denon Home 400, and I can talk back to them.

If you have an Apple Home doorbell, the Denon Home speakers can act as wireless chimes. That way, if someone presses the doorbell on the front door, the Denon speaker down in the studio can chime to let me know someone is there.

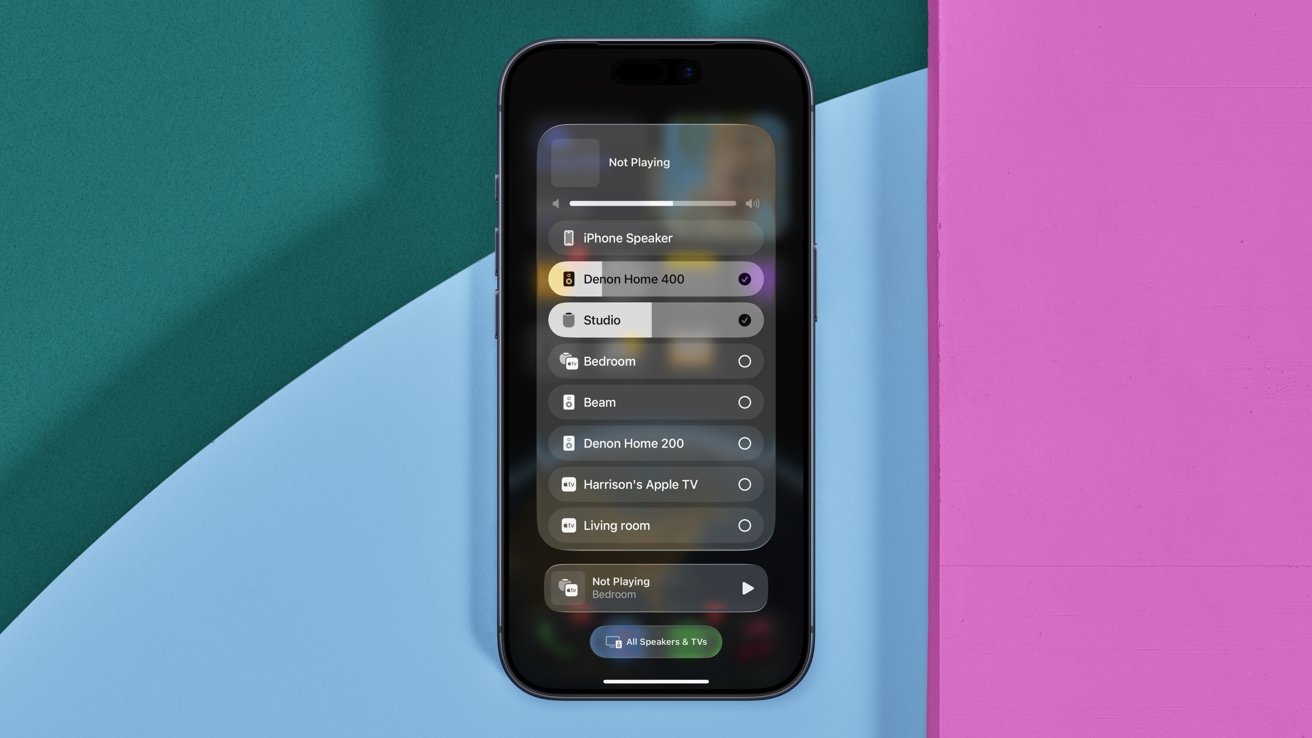

Denon Home series speakers review: Use AirPlay to cast audio to the Denon speakers, including multiple at once

This brings support for AirPlay, too. You can cast audio from nearly any Apple device to the Denon Home speakers.

That’s what enables Apple-native multi-room support. You can play to multiple AirPlay speakers at once, in any combination of HomePods and third-party speakers.

My favorite is just using Siri for this. I can ask Siri on my iPhone to play my Jams playlist on the Denon Home 400, or if I say to play in a certain room, it will go to all speakers in that location.

Biggest of all is full support for Siri, though the implementation is a little confusing. Apple does allow third-party speakers to build in Siri, but so far, Denon and Ecobee are the only major players to do so.

Denon Home speakers review: Siri, but not on HomePod

The catch with Siri support is that the queries aren’t processed directly on the third-party speaker, but instead require a HomePod or HomePod mini. What happens is that when you ask Siri a question, it listens on that third-party speaker, routes the question to a nearby HomePod, then gives you the answer back on the original speaker.

This major caveat is likely why some of the big players, like Sonos, prefer to cozy up to other virtual assistants like Amazon Alexa, Google Assistant, or their own assistants instead. They don’t want you to have to buy a HomePod; they would rather you buy more of their speakers.

Denon Home series speakers review: The status light can change to Siri colors when you invoke Apple’s assistant

Many Apple users likely already have a HomePod or two in their home, so I don’t consider this a huge downside. It is something to be aware of, though, before purchasing the speaker in anticipation of using Siri.

In terms of utility, Siri is basically at feature parity with HomePod. Anything you can ask a HomePod, you can ask your Denon speaker.

You can ask it to control your smart home accessories, to text someone, to check the weather, convert units of measurement, and more. That said, there are some ways that they differ.

HomePod, for example, can act as a full Home Hub. A Home Hub helps run scenes and automations when you aren’t at home and is a Thread Border Router.

Apple’s HomePod has handoff using ultra-wideband to automatically transfer audio as your phone approaches. The Denon still gets suggested in the Dynamic Island when you open the Music app nearby, though.

A Home Hub is also what processes the AI video for HomeKit Secure Video, such as people, car, or package detection. Plus, HomePod and HomePod mini have built-in environmental sensors for temperature and humidity.

This involves a bit of reading the tea leaves, but because Siri requests on third-party speakers are routed through a HomePod, I expect Apple Intelligence to reach these speakers sooner rather than later.

Apple has seemingly been working on next-generation HomePod and HomePod mini models for quite some time. If they do launch in the fall of 2026 as expected, Apple Intelligence will certainly be supported.

Again, another leap here, but that would mean if you purchased a new HomePod or HomePod mini with Apple Intelligence, Siri on your Denon speaker would be upgraded. Hopefully, that isn’t wishful thinking, but it’s not a big jump to make.

While I do strongly believe that’s how it will play out, I also strongly caution against buying a product today with the promise of an update in the future. If you buy these speakers now, be comfortable with how they work now, and count future upgrades as a bonus.

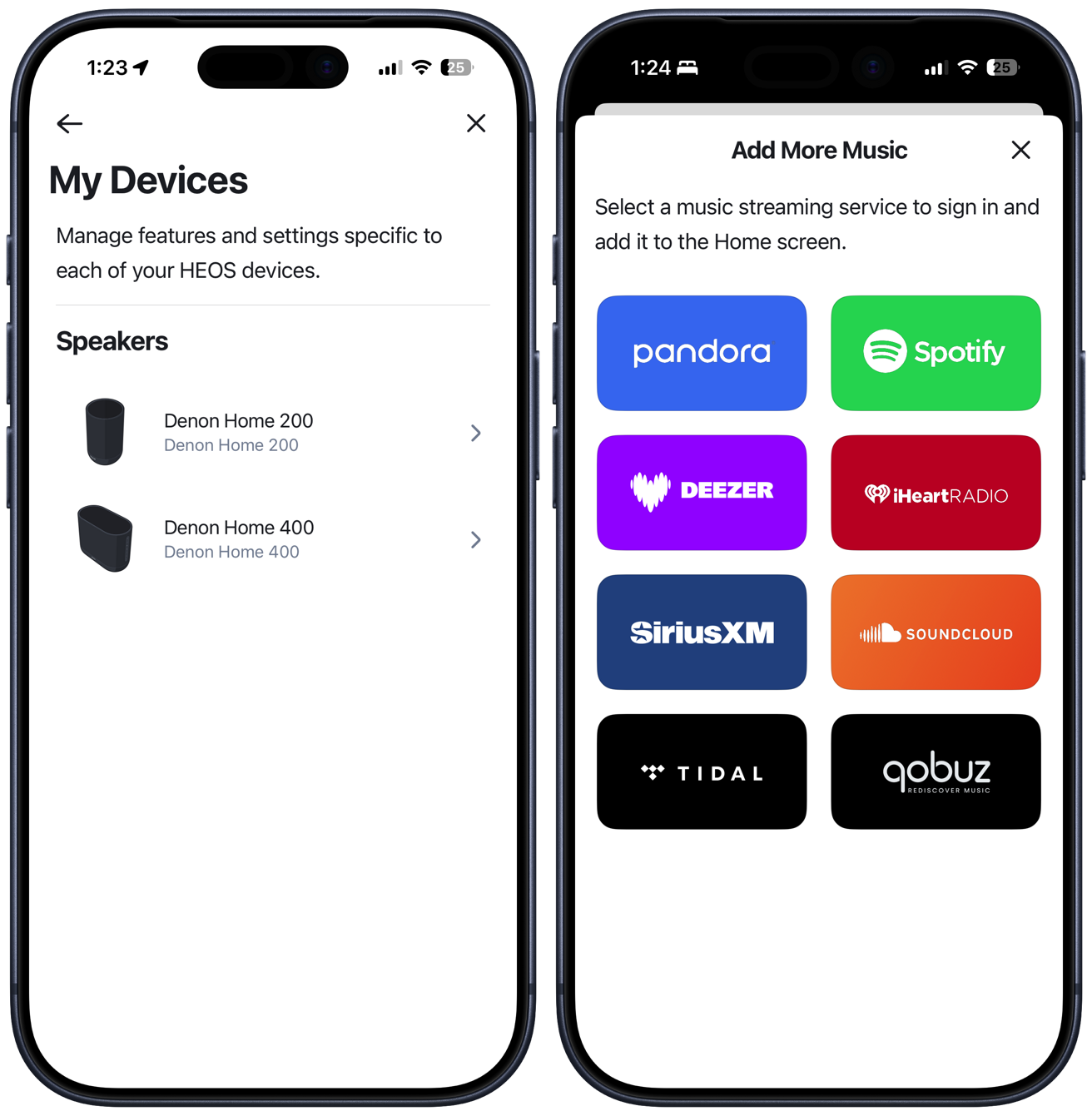

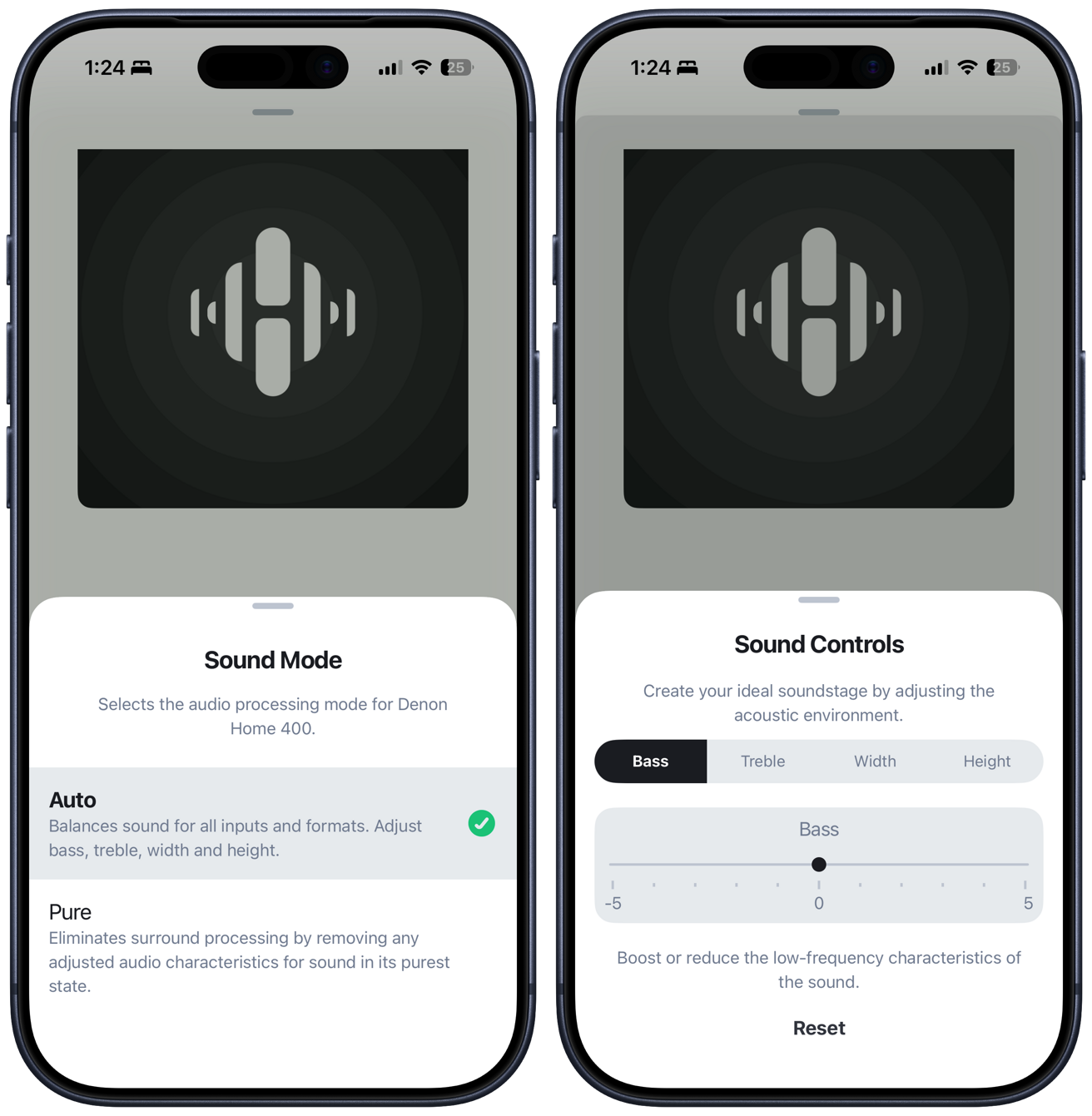

Denon Home speakers review: HEOS app

To be crystal clear, users can absolutely set up and use these speakers without any extra apps. But the Denon HEOS app has some added benefits for users that want to use it.

Denon Home series speakers review: The Denon HEOS app has more controls and direct streaming options

This app can guide non-Apple users through a somewhat more convoluted setup process, and it offers direct streaming from a number of services, including Tidal, Spotify, Deezer, iHeartRadio, and more.

You can stream from these services, adjust volume, perform updates, and adjust the track queue. It’s similar to the Sonos experience, though perhaps a bit more limited.

Within HEOS, there are sound controls for the speakers. You can turn on “pure” mode to remove any processing or get into the weeds and manually adjust the bass, treble, or width (physical spaciousness of the soundstage).

Denon Home speakers review: Audio quality

As we turn to audio quality, I want to make sure to split it between the two that I have on hand to test. I also want to compare them to the competition, such as Apple and Sonos.

Starting with the smaller of the two, the Denon Home 200 has three drivers. There are two smaller drivers positioned towards the top that angle slightly outwards and a 4-inch front-facing woofer.

Compared directly to HomePod, which is available for $100 less, the Denon Home 200 absolutely sounds better. It’s fuller, with a larger emphasis on the midrange.

Personally, at times, I find the bass on HomePod to be a bit overpowering or even sloppy, and I think Denon did an excellent job at filling out the midrange.

That isn’t to say the bass is lacking in any way on the 200. Both the Denon and the Apple speaker have 4-inch woofers, and the 200 definitely puts out some oomph. It also gets much louder than HomePod; in my home, it’s arguably too loud to ever push past 75%.

The best way I can describe the sound is very warm, which is something I like. It also maintains this consistency, even at the high volumes.

Denon Home series speakers review: Comparing the Denon Home 200 against the Sonos Era 100 and Sonos Era 300

I’d also say that the Denon Home 200 sounds better than the Sonos Era 100, though there isn’t a perfect comparison to Sonos. This performance should be expected, given the significantly higher price tag of the Denon.

Personally, I even preferred the Denon Home 200 to the Sonos Era 300, to a degree. The Era 300 is larger and more expensive, but I think the Denon Home 200 has a warmer profile that I liked and has a smaller footprint.

Again, the comparison is tough. The Denon Home 200 lacks the upward-firing driver of the Sonos Era 300, but if you move to the Denon Home 400, it’s far more expensive, while being even bigger still.

Listening to “The Mountain Song” by Tophouse, I can very much feel the music build and swell with that full, wide sound. Similarly, “World’s Smallest Violin” by AJR has a ton of detail as the music morphs between musical instruments that make the song very cool to listen to.

The Denon Home 400, meanwhile, has six drivers in total: two outward-firing tweeters, dual 4.5-inch woofers, and two upward-firing drivers.

This one gets even louder and is overkill for any small to medium room. It has better stereo separation as well and a broader soundstage.

I can’t emphasize how much this can really fill out a room. Thinking about the Denon Home 600, that must be wild.

When I first started listening to the Denon Home 400, the most noticeable change was the bass. It was far more powerful, but still tightly controlled.

You can feel this bass in your chest before even having to turn up the volume. It was amazing.

Theoretically, the Denon Home 400 will provide more accurate Dolby Atmos Spatial Audio than the 200. I say theoretically because I wasn’t able to test it.

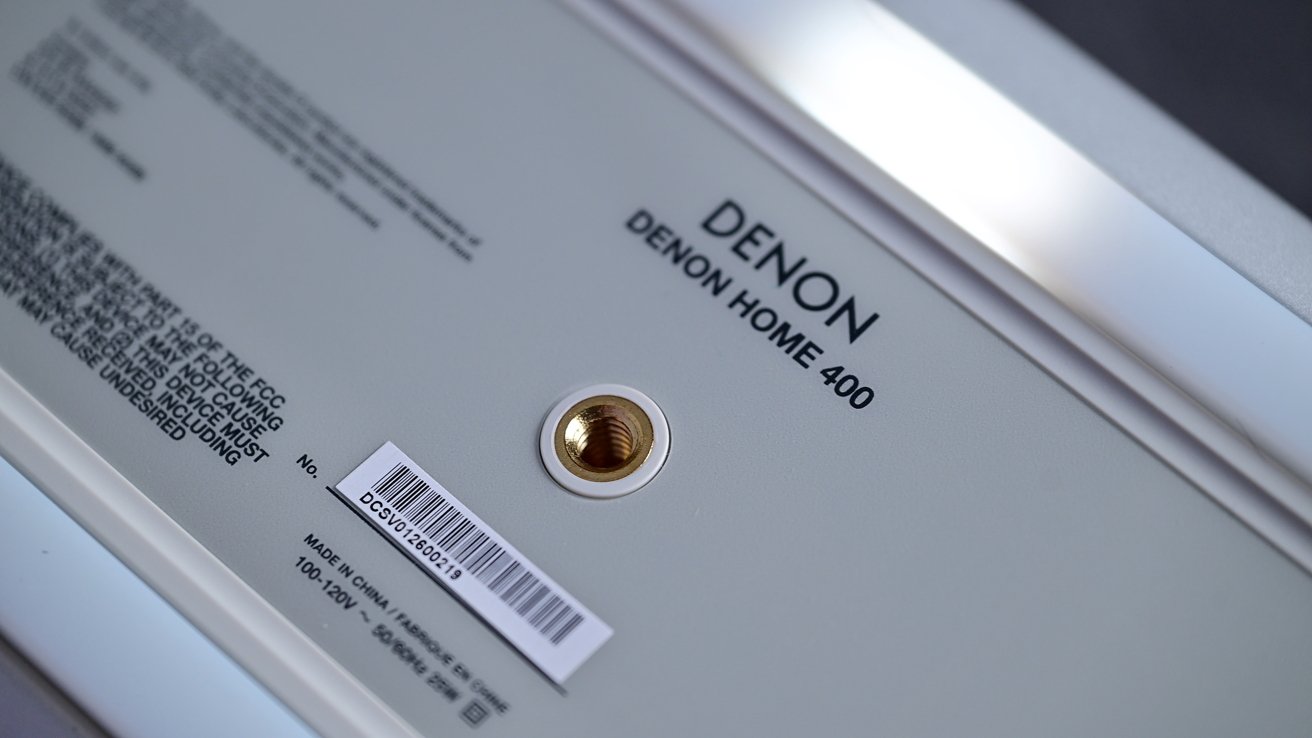

Denon Home series speakers review: The bottom of the speaker has a silicone foot and a thread for mounting on a traditional speaker stand or bracket

Currently, Dolby Atmos content is only supported when streaming directly from Tidal or Amazon Music Ultra HD. I don’t subscribe to either of these as an Apple Music listener.

Denon says it is working on Apple Music Dolby Atmos support, but there’s no promise on when that feature will be delivered.

Denon Home speakers review: Siri-ous audio quality for Apple users

In an increasingly competitive space, Denon has excelled here. I’m very pleased with the entire ecosystem.

The base model, while more expensive than a HomePod, has notably better audio quality. It also offers better on-device controls, multiple wired inputs, and still retains Siri support.

Denon Home series speakers review: Denon Home 400 is an amazing-sounding premium speaker with Siri support

Moving up the lineup, users can choose the speaker that suits their environment, upgrading to the larger, more powerful, and louder models. If you ever found that HomePod wasn’t loud enough or its audio wasn’t good enough, until now there were no alternatives that let you keep Siri.

While I’m a massive Sonos fan, the Denon Home 200, 400, and 600 offer more than competitive audio quality with native Apple features. For Apple users, Denon is offering a better experience.

Small points are subtracted for having a HomePod as a requirement for a full experience, but that onus lies on Apple, not Denon. With so few alternatives here, Denon did the absolute best it was able to, all around.

Right now, I think Denon has put out the best all-around smart speaker, if you’re willing to pony up for superior sound. For Apple users, it’s the premium option to choose, at least while we wait for the possibility of a refreshed HomePod.

Denon Home speakers review: Pros

- Sleek, premium, modern designs

- Built-in Siri, and smart home features like doorbell chime, and intercom

- Fantastic audio quality

- Dolby Atmos support

- Easy setup through Apple Home

Denon Home speakers review: Cons

- Requires HomePod or HomePod mini for Siri

- Somewhat expensive

- No Dolby Atmos via Apple Music yet

Denon Home 200 & Denon Home 400 Rating: 4 out of 5 stars

Where to buy Denon Home 200 & Denon Home 400

The Denon Home 200 sells for $399 and can be ordered from Amazon and B&H Photo, while the Denon Home 400 retails for $599.

That model, which comes in your choice of Charcoal or Stone, can also be purchased at Amazon and B&H Photo.

The robust Denon Home 600, meanwhile, will run you $799 at Amazon and B&H.

Tech

Micron’s massive chip expansion in Idaho raises alarms as water demand surges in a desert already struggling to sustain communities and farms

- Micron’s expansion could more than double its daily water consumption levels

- Environmental disclosures reveal large daily discharge volumes back into the system

- Residents and farms depend on the same aquifers as industrial users

Micron is expanding its semiconductor manufacturing operations in Boise, Idaho, with a $50 billion investment that includes two new fabrication facilities.

Its existing factory already consumes 4.7 million gallons of water each day, and the first new fab would push daily usage to 10.2 million gallons, enough to fill roughly 15.5 Olympic-sized swimming pools every single day.

A second, slightly smaller facility is also planned, which would add even more water demand on top of that figure.

Where Micron currently gets its water and why that matters

The company currently draws water from three different sources to keep its Boise operations running, and pumps millions of gallons directly out of the ground each day using its own water rights.

It also receives water from the Nampa Meridian Irrigation District, which pulls from the Boise River, and additionally purchases treated water from Veolia, a private municipal water utility.

A 2024 environmental impact statement for the first expansion revealed that the new fab would use 5.5 million gallons daily and discharge about 2.9 million gallons back into the system.
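The water figures above can be checked with simple arithmetic. The sketch below uses only the numbers reported in this article, plus one assumption that is not from the source: a nominal Olympic pool (50 m by 25 m by 2 m) holding roughly 660,000 US gallons. The constant and function names are illustrative.

```python
# Reported figures, in millions of US gallons per day (MGD).
EXISTING_FAB_MGD = 4.7             # current Boise factory
FIRST_NEW_FAB_MGD = 5.5            # first new fab, per the 2024 environmental impact statement
FIRST_NEW_FAB_DISCHARGE_MGD = 2.9  # discharged back into the system, per the same statement

# Assumption: a nominal 50 m x 25 m x 2 m Olympic pool holds about 660,000 US gallons.
OLYMPIC_POOL_GALLONS = 660_000


def total_daily_use_mgd() -> float:
    """Existing factory plus the first new fab."""
    return EXISTING_FAB_MGD + FIRST_NEW_FAB_MGD


def first_fab_intake_minus_discharge_mgd() -> float:
    """Water the first new fab takes in but does not discharge back."""
    return FIRST_NEW_FAB_MGD - FIRST_NEW_FAB_DISCHARGE_MGD


def olympic_pools_per_day(mgd: float) -> float:
    """Convert millions of gallons per day into Olympic pools per day."""
    return mgd * 1_000_000 / OLYMPIC_POOL_GALLONS


if __name__ == "__main__":
    total = total_daily_use_mgd()
    print(f"Total: {total:.1f} MGD, about {olympic_pools_per_day(total):.1f} pools/day")
    print(f"Growth over today: {total / EXISTING_FAB_MGD:.2f}x")
    print(f"First fab intake minus discharge: {first_fab_intake_minus_discharge_mgd():.1f} MGD")
```

The growth ratio of about 2.17 is what sits behind the "more than double" summary. Intake minus discharge is only a rough proxy for what the first fab consumes, since discharged water may not return to the same source it was drawn from.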

When asked how much water the new fabs will use and where that water will come from, Micron refused to provide specific answers, with a company spokesperson offering only a general statement about water efficiency commitments and conservation targets.

Micron has promised to achieve a 75% water conservation rate globally by the year 2030 through recycling and reuse programs.

However, the company did not explain how that target applies to the new Boise fabs or where the additional water will be sourced.

Veolia also did not respond to questions about how much water it supplies to Micron from its treatment plants.

Why water availability is a sensitive issue in the Idaho desert

Boise sits in the high desert of Southwest Idaho, where water is a limited and contested resource.

In the 1990s, Micron drew significant public criticism when its manufacturing operations caused a sharp drop in local groundwater levels.

The state established a groundwater management area around the company in 1994 to monitor and oversee water rights.

Even today, the Idaho Department of Water Resources can only see a partial picture of Micron’s total water usage through its permitted rights.

The company has not filed an environmental impact study for the second fab, leaving regulators and the public completely unaware of its total future water demand.

Idaho residents rely on the same aquifers that Micron pumps from, and any significant drop in water levels would affect homes, farms, and businesses across the region.

Micron’s silence on where it will find billions of litres of new water is not just a lack of transparency; it is a gamble on a resource that the desert cannot easily replace.

The company’s plans are fuelled by AI demand, but AI does not run on water; people and crops do, and they have no backup plan if the wells go dry.

Via BoiseDev

Follow TechRadar on Google News and add us as a preferred source to get our expert news, reviews, and opinion in your feeds.

Tech

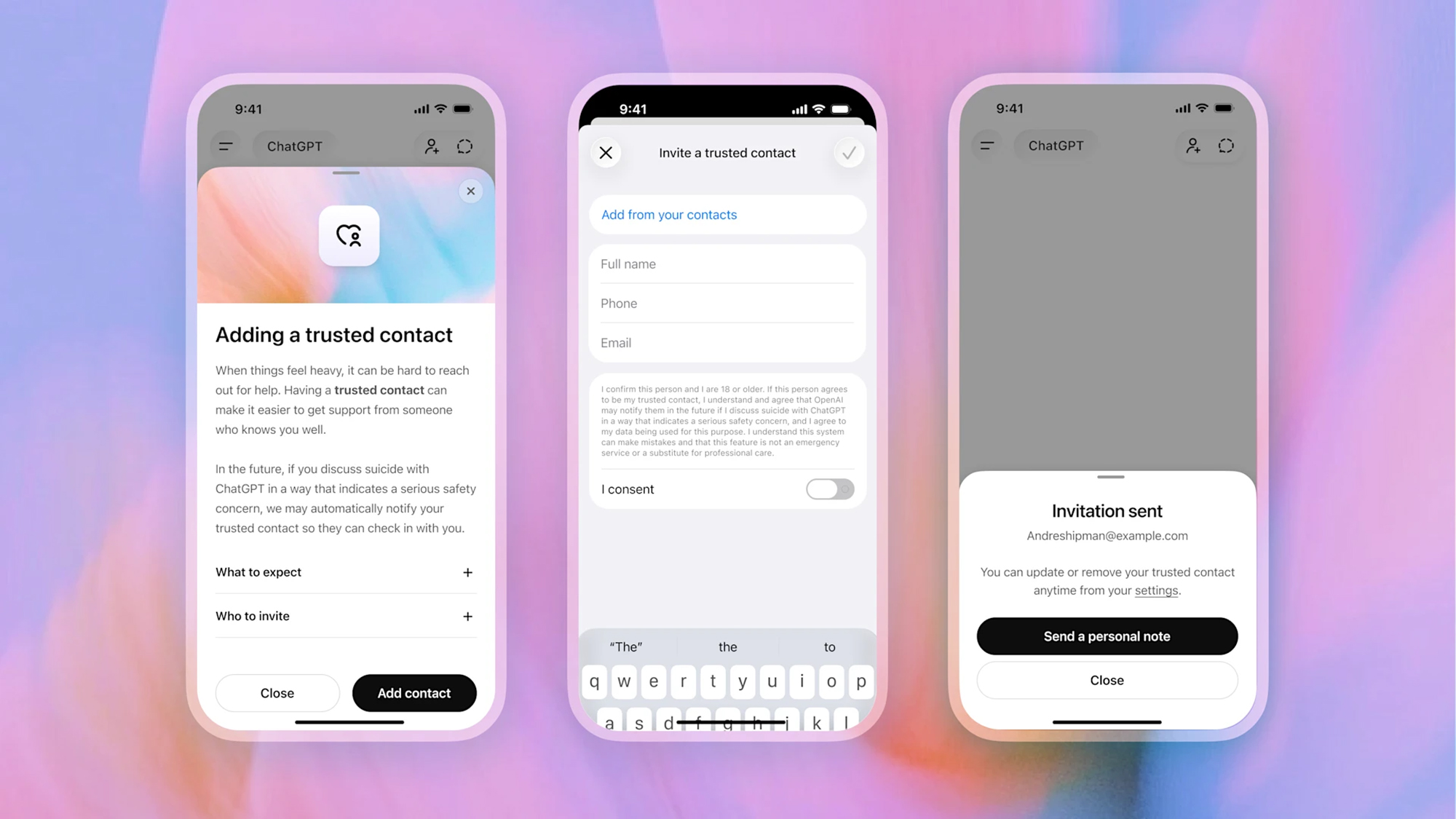

ChatGPT now lets you nominate a Trusted Contact who gets alerted if your interaction with AI ‘indicates a serious safety concern’

- ChatGPT is introducing a new Trusted Contact feature

- Your contact gets alerted if the AI detects safety concerns

- The feature works on top of existing well-being features

We know that some people are having pretty intense conversations with AI chatbots, and ChatGPT developer OpenAI has now added a new Trusted Contact feature that lets users nominate a trusted individual who will receive an alert if there’s a safety concern.

It’s been the case for a while now that if your conversations take a turn towards self-harm and suicide, ChatGPT will recognize this and direct you towards crisis support helplines or the emergency services to get some help.

The Trusted Contact feature works in addition to those protections: the friend or relative you’ve specified will get a message over text, email, and the ChatGPT app, saying that you might be in trouble and that the contact should check in with you.

“Expert guidance identifies social connection as one of the most important protective factors to reduce suicide risk,” says OpenAI. “Trusted Contact is designed to encourage connection with someone the user already trusts. It does not replace professional care or crisis services, and is one of several layers of safeguards to support people in distress.”

If you’re the user talking about self-harm or suicide, you’ll also get prompts to reach out to your nominated contact, along with some AI-generated ideas for what to say. OpenAI says the feature has been developed in partnership with mental health professionals and experts, and works in a similar way to the existing parental controls, but for users 18 and over.

You can set your Trusted Contact through the ChatGPT settings panel, and it might just save your life. The person you specify has a week to accept the request; if they don’t, you can pick someone else.

Before a Trusted Contact gets alerted, “a small team of specially trained people” will review the chat within an hour. If that review confirms that there’s a serious safety concern, the contact gets pinged as described above.

The alert is “intentionally limited”, OpenAI says, and won’t include specifics from the chat itself. The feature is rolling out gradually from now, so if you don’t immediately see it in your account, it should show up soon.


Tech

Tesla Model Y first to pass NHTSA ADAS safety tests while agency investigates 3.2M Teslas for FSD crashes

The Trump administration announced the Tesla Model Y is the first car to pass NHTSA’s new driver assistance safety tests. The same agency is investigating 3.2 million Teslas for crashing while using the company’s more advanced system.

TL;DR

The Trump administration announced on Wednesday that the Tesla Model Y is the first vehicle to pass NHTSA’s new advanced driver assistance safety tests. The same agency is simultaneously investigating 3.2 million Tesla vehicles for crashing while using the company’s more advanced self-driving system. The announcement celebrates Tesla for passing a test that measures whether a car can detect a pedestrian. The investigation examines whether Tesla’s cars can detect a pedestrian.

The distinction between the two is the distance between what the tests measure and what the technology attempts. The ADAS benchmark evaluates features that are standard equipment on dozens of vehicles from Toyota, Honda, Hyundai, BMW, and others. The investigation covers Tesla’s Full Self-Driving software, which operates at a level of autonomy that the ADAS tests do not assess. The press release and the probe exist in the same agency, issued weeks apart, about the same company.

The tests

The 2026 Model Y passed eight evaluations under NHTSA’s updated New Car Assessment Program. Four are legacy criteria that have been part of the programme for years: forward collision warning, crash imminent braking, dynamic brake support, and lane departure warning. Four are newly added: pedestrian automatic emergency braking, lane keeping assistance, blind spot warning, and blind spot intervention.

The new tests are pass-fail assessments of features that the automotive industry has been shipping as standard or optional equipment for years. Blind spot warning has been available on mainstream vehicles since the mid-2010s. Pedestrian automatic emergency braking is standard on most new cars sold in the United States. Lane keeping assistance is a feature that a 25,000-dollar Honda Civic includes at no additional cost.

The tests do not evaluate Tesla’s Autopilot or Full Self-Driving capabilities. They do not measure how the vehicle performs when operating autonomously. They measure whether the vehicle’s basic safety systems, the features that activate when a human is driving, function correctly. Passing them is necessary. It is not exceptional.

The timing

NHTSA finalised the updated NCAP criteria in late 2024 for implementation in model year 2026. In September 2025, the Trump administration delayed the requirement by one year to model year 2027, after the Alliance for Automotive Innovation, the industry’s main lobbying group, requested more time. Tesla, Rivian, and Lucid are not members of the alliance.

The delay means that most automakers have not yet submitted vehicles for the new tests, not because their cars cannot pass, but because the deadline has been pushed to 2027. Tesla submitted the Model Y voluntarily, ahead of the delayed timeline. It was the only manufacturer to do so. The result is a press release from the Department of Transportation announcing that Tesla is the “first vehicle” to pass tests that other manufacturers were told they did not yet need to take.

The announcement was titled “Trump’s Transportation Department Announces Tesla Model Y Is the First Vehicle to Pass NHTSA’s New ‘Advanced Driver Assistance System’ Tests.” The relationship between the Trump administration and Tesla’s regulatory environment is not incidental to the framing. The department delayed the tests, creating a window in which Tesla could be the only company to submit, then announced the result with the president’s name in the headline.

The investigation

While NHTSA was certifying the Model Y’s basic safety features, its Office of Defects Investigation was escalating a probe into 3.2 million Tesla vehicles equipped with Full Self-Driving software. The engineering analysis, opened in March 2026, covers crashes in which FSD failed to detect common roadway conditions that impaired camera visibility, including glare, fog, and airborne debris.

The agency documented incidents in which vehicles running FSD crossed into opposing lanes, ran red lights, and struck pedestrians. Tesla’s robotaxi service in Austin has been involved in 14 crashes since launching, a rate that Electrek calculated at approximately four times worse than human drivers. NHTSA said the system “did not detect common roadway conditions that impaired camera visibility and/or provide alerts when camera performance had deteriorated until immediately before the crash occurred.”

The engineering analysis is a required step before a potential recall. Tesla has asked for, and received, multiple extensions to submit crash data to the agency. The investigation covers the software that Tesla charges up to 8,000 dollars for and markets under the name “Full Self-Driving,” a name that NHTSA itself has noted does not accurately describe the system’s capabilities.

The levels

The automotive and technology industries classify driver assistance on a scale from Level 0, no automation, to Level 5, full automation with no human oversight required. The ADAS tests that the Model Y passed evaluate Level 1 and Level 2 features: systems that assist the driver but require the driver to remain in control at all times.

Tesla’s Full Self-Driving software, which is the subject of the NHTSA investigation, attempts to operate at Level 2 with ambitions toward higher levels of autonomy. Companies like Wayve are targeting Level 4 autonomy, which means the vehicle can operate without human intervention in defined conditions. Wayve raised 1.2 billion dollars to develop autonomous driving systems that do not require a human safety driver.

The gap between Level 2, where a human must always be ready to take over, and Level 4, where the car handles defined conditions independently, is the gap between the ADAS benchmark the Model Y just passed and the Full Self-Driving system that NHTSA is investigating. Uber relaunched Motional’s robotaxi service in Las Vegas with a target of fully driverless operation by the end of 2026, using a system designed from the ground up for Level 4. Tesla is attempting to reach the same destination using cameras, consumer vehicles, and software updates.

The gap

Tesla reclaimed the global quarterly EV sales crown from BYD in the first quarter of 2026, selling 358,000 battery electric vehicles. The company’s market position depends on the perception that its technology leads the industry. The ADAS benchmark contributes to that perception. The FSD investigation complicates it.

The Model Y passing eight safety tests is a data point about a car that can detect a pedestrian in a controlled scenario. The FSD investigation is a data point about the same company’s software failing to detect pedestrians, red lights, and oncoming traffic in the real world. The tests and the investigation measure different things. But they measure the same company’s claim to be the leader in vehicle safety and autonomy.

NHTSA now occupies the position of simultaneously certifying Tesla’s basic safety features and investigating whether its advanced features are safe enough to remain on the road. The press release says Tesla is first. The investigation says Tesla may be defective. Both are true. Neither tells the whole story. The distance between a passed benchmark and an open investigation is the distance between what a car can do when the test is defined and what it does when the road is not.

Tech

California canals could turn into massive solar power plants, saving water and energy while raising tough economic and environmental questions

- Covering 4,000 kilometers of canals would save 63 billion gallons of water annually and provide 13GW of generating capacity

- Pilot project shows significant drops in water loss and algae growth

- Critics argue that the project is too expensive, and preventing canal evaporation can be counterproductive

California’s extensive canal network could become a massive source of clean energy while saving billions of gallons of water each year.

A University of California study found that covering roughly 4,000 kilometers of canals with solar panels would provide 13GW of generating capacity and save 63 billion gallons of water annually.

That amount of water is enough to meet the residential needs of more than two million people every single year.
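Two quick conversions help put these headline numbers in context. 13GW is a measure of generating capacity (power), not of annual energy; the energy produced in a year depends on the capacity factor, the share of the year's hours the panels effectively run at full output. The 25% capacity factor used below is a hypothetical assumption, not a figure from the study. The second function spreads the 63 billion gallons across the two million people mentioned above to show the implied daily amount per person.

```python
HOURS_PER_YEAR = 8_760
DAYS_PER_YEAR = 365


def annual_energy_twh(capacity_gw: float, capacity_factor: float) -> float:
    """Annual energy (TWh) = capacity (GW) x hours per year x capacity factor / 1,000."""
    if not 0.0 <= capacity_factor <= 1.0:
        raise ValueError("capacity_factor must be between 0 and 1")
    return capacity_gw * HOURS_PER_YEAR * capacity_factor / 1_000


def gallons_per_person_per_day(gallons_per_year: float, people: int) -> float:
    """Spread an annual water volume evenly across a population."""
    return gallons_per_year / people / DAYS_PER_YEAR


if __name__ == "__main__":
    # Hypothetical capacity factor of 25%; real canal-top arrays may differ.
    print(f"{annual_energy_twh(13, 0.25):.1f} TWh per year")
    # 63 billion gallons shared by two million people.
    print(f"{gallons_per_person_per_day(63e9, 2_000_000):.0f} gallons per person per day")
```

Under that assumption, 13GW works out to roughly 28 TWh a year, and the water savings equal about 86 gallons per person per day for two million people. Because the article says "more than two million," that per-person figure is an upper bound.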

What the pilot project has proven so far

A small-scale demonstration called the Nexus project was built to test whether this concept actually works in real-world conditions.

The 1.6-megawatt Nexus installation sits on canals operated by the Turlock Irrigation District, and after one full irrigation season, the covered canal sections showed evaporation reductions of 50 to 70% beneath the solar arrays.

Algae growth dropped by 85%, which significantly reduces the cost of maintaining the canals and cleaning water pumps.

The water beneath also keeps the solar panels cooler than ground-mounted alternatives, improving their electricity output by roughly 2.5 to 5%.

India has already built similar canal-top solar projects, proving the concept works across different climates and geographies.

Despite the clear benefits, this idea faces resistance, and the major obstacle is cost.

Canal-top solar requires heavy steel support structures that must span the width of the water channel below, and these structures alone can account for up to 40% of the total project cost, significantly more than ground-mounted solar farms.

Critics argue that canals are designed for water delivery, not as foundations for industrial infrastructure.

Canals also require regular access by maintenance crews for desilting and repairs, and overhead panels would complicate that work significantly.

Some also point out California has plenty of cheap desert land where traditional solar panels can be installed at much lower expense.

A solar farm on desert land costs less and avoids the engineering complications, but it does nothing to save water. That is a long-standing issue in California, which has already lost 40% of its Colorado River allocation this year, so every drop saved matters.

What would need to change for widespread deployment

The economic calculation of this idea shifts when water savings are given real monetary value.

Canal-top solar reduces evaporation in a state that regularly faces severe drought, and it generates electricity where agricultural demand exists, reducing transmission losses compared with distant desert solar farms.

From another vantage point, canal-top solar could help meet the power demand of data centers, which often place enormous strain on local grids and water supplies.

Because it generates clean power close to where it is used, it could also reduce the need for new transmission lines.

The water saved through evaporation reduction could be used to cool data centers instead of being lost to the atmosphere.

A single data center can use millions of gallons of water each year, and canal shading preserves that resource for productive use.

The 13GW of potential capacity from California’s canals could power hundreds of data centers without requiring additional land or stressing the state’s overtaxed grid.

That said, reducing evaporation, which canal-top solar will do, is not a guaranteed win.

Its effect on local humidity is likely to be minimal, but the shading can disrupt aquatic ecosystems by reducing dissolved oxygen, solving one problem while creating another.

The Nexus pilot will continue collecting data to determine whether California scales the concept or decides the ecological and operational trade-offs aren’t worth the energy gains.

Via PV Magazine


Tech

NVIDIA confirms GeForce NOW data breach affecting Armenian users

NVIDIA has confirmed in a statement for BleepingComputer that GeForce NOW user information has been exposed in a data breach.

The gaming and hardware giant has clarified that the impact is limited to Armenia, and was caused by a compromise of the infrastructure operated by a regional partner.

The company added that its own network was not impacted by the incident.

“Our investigation found no impact on NVIDIA-operated services. The issue is limited to systems run by a third-party GeForce NOW Alliance partner based in Armenia. We are working closely with the partner to support their investigation and resolution. Impacted users will be notified by GFN.am,” the company said.

The statement comes in response to a post last week on a hacker forum from a threat actor using the ShinyHunters nickname, claiming to have breached the GeForce NOW service and stolen millions of user records.

However, the threat actor who published the breach on the hacker forum is believed to be impersonating ShinyHunters rather than being the group itself.

According to the threat actor, the stolen information includes full names, email addresses, usernames, dates of birth, membership status, and 2FA/TOTP status.

The threat actor also posted samples of the stolen data and offered the full database for $100,000 paid in Bitcoin or Monero.

The NVIDIA GeForce NOW cloud gaming service lets users stream games running on more powerful NVIDIA GPU hardware in a data center to their own systems.

GFN.am is the Armenian regional operator for GeForce NOW, responsible for operating NVIDIA’s service in the country.

Alliance partner environments can operate independent authentication systems, local customer databases, regional billing platforms, and locally managed infrastructure.

A statement posted by GFN.am confirms a cybersecurity incident that took place between March 20 and 26 and exposed the following information:

- Full name (if using a Google account)

- Email address

- Phone number (if registered through a mobile operator)

- Date of birth

- Username

GFN.am has clarified that no account passwords were exposed in the incident, and that users who registered for the service after March 9 are not affected.

According to NVIDIA’s help page, GFN.am is also responsible for managing GeForce NOW operations in Azerbaijan, Georgia, Kazakhstan, Moldova, Ukraine, and Uzbekistan, but no impact on those countries has been confirmed.

BleepingComputer found that the threat actor’s post has now been removed from the hacker forum.

It is unclear if the database has been sold to a buyer or if the seller or forum administrators deleted it.

Update [14:14]: Added information that the threat actor may be a ShinyHunters impersonator.


Tech

Diablo IV players finally discover the game's secret cow level

Diablo IV’s secret cow level – which according to the game’s former general manager Rod Fergusson did not exist – has finally been discovered by an enthusiastic player of Blizzard’s action-RPG series. Streamer LoatheBurger, a self-confessed Diablo fan since 1996, explained the convoluted steps required to unlock the hidden level,…


Tech

5,000 vibe-coded apps just proved shadow AI is the new S3 bucket crisis

Most enterprise security programs were built to protect servers, endpoints, and cloud accounts. None of them was built to find a customer intake form that a product manager vibe coded on Lovable over a weekend, connected to a live Supabase database, and deployed on a public URL indexed by Google. That gap now has a price tag.

New research from Israeli cybersecurity firm RedAccess quantifies the scale. The firm discovered 380,000 publicly accessible assets, including applications, databases, and related infrastructure, built with vibe coding tools from Lovable, Base44, and Replit, as well as deployment platform Netlify. Roughly 5,000 of those assets, about 1.3%, contained sensitive corporate information. CEO Dor Zvi said his team found the exposure while researching shadow AI for customers. Axios independently verified multiple exposed apps, and Wired confirmed the findings separately.

Among the verified exposures: a shipping company app detailed which vessels were expected at which ports. An internal health company application listed active clinical trials across the U.K. Full, unredacted customer service conversations for a British cabinet supplier sat on the open web. Internal financial information for a Brazilian bank was accessible to anyone who found the URL.

The exposed data also included patient conversations at a children’s long-term care facility, hospital doctor-patient summaries, incident response records at a security company, and ad purchasing strategies. Depending on jurisdiction and the data involved, the healthcare and financial exposures may trigger regulatory obligations under HIPAA, UK GDPR, or Brazil’s LGPD.

RedAccess found phishing sites built on Lovable that impersonated Bank of America, FedEx, Trader Joe’s, and McDonald’s. Lovable said it had begun investigating and removing the phishing sites.

The defaults are the problem

Privacy settings on several vibe coding platforms make apps publicly accessible unless users manually switch them to private. Many of these applications get indexed by Google and other search engines. Anyone can stumble across them. Zvi put it plainly: “I don’t think it’s feasible to educate the whole world around security. My mother is [vibe coding] with Lovable, and no offense, but I don’t think she will think about role-based access.”

This is not an isolated finding

In October 2025, Escape.tech scanned 5,600 publicly available vibe-coded applications and found more than 2,000 high-impact vulnerabilities, over 400 exposed secrets including API keys and access tokens, and 175 instances of personal data exposure containing medical records and bank account numbers. Every vulnerability Escape found was in a live production system, discoverable within hours. The full report documents the methodology. Escape separately raised an $18 million Series A led by Balderton in March 2026, citing the security gap opened by AI-generated code as a core market thesis.

Gartner’s “Predicts 2026” report forecasts that by 2028, prompt-to-app approaches adopted by citizen developers will increase software defects by 2,500%. Gartner identifies a new class of defect where AI generates code that is syntactically correct but lacks awareness of broader system architecture and nuanced business rules. The remediation costs for these deep contextual bugs will consume budgets previously allocated to innovation.

Shadow AI is the multiplier

IBM’s 2025 Cost of a Data Breach Report found that 20% of organizations experienced breaches linked to shadow AI. Those incidents added $670,000 to the average breach cost, pushing the shadow AI breach average to $4.63 million. Among organizations that reported AI-related breaches, 97% lacked proper access controls. And 63% of breached organizations had no AI governance policy in place.

Shadow AI breaches disproportionately exposed customer personally identifiable information at 65%, compared to 53% across all breaches, and affected data distributed across multiple environments 62% of the time. Only 34% of organizations with AI governance policies performed regular audits for unsanctioned AI tools. VentureBeat’s shadow AI research estimated that actively used shadow apps could more than double by mid-2026. Cyberhaven data found 73.8% of ChatGPT workplace accounts in enterprise environments were unauthorized.

What to do first

The audit framework below gives CISOs a starting point for triaging vibe-coded app risk across five domains.

| Domain | Current State (Most Orgs) | Target State | First Action |
|---|---|---|---|
| Discovery | No visibility into vibe-coded apps | Automated scanning of vibe coding platform domains | Run DNS and certificate transparency scans for Lovable, Replit, Base44, and Netlify subdomains tied to corporate assets |
| Authentication | Platform defaults (public by default) | SSO/SAML integration required before deployment | Block unauthenticated apps from accessing internal data sources |
| Code scanning | Zero coverage for citizen-built apps | Mandatory SAST/DAST before production | Extend the existing AppSec pipeline to cover vibe-coded deployments |
| Data loss prevention | No DLP coverage for vibe coding domains | DLP policies covering Lovable, Replit, Base44, Netlify | Add vibe coding platform domains to existing DLP rules |
| Governance | No AI usage policy or shadow AI detection | AI governance policy with regular audits for unsanctioned tools | Publish an acceptable-use policy for AI coding tools with a pre-deployment review gate |

The CISO who treats this as a policy problem will write a memo. The CISO who treats this as an architecture problem will deploy discovery scanning across the four largest vibe coding domains, require pre-deployment security review, extend the existing AppSec pipeline to citizen-built apps, and add those domains to DLP rules before the next board meeting. One of those CISOs avoids the next headline.
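The discovery step in the audit framework can be approximated in code. Below is a minimal Python sketch, assuming you already have a list of hostnames, for example exported from certificate transparency search results or from DNS resolver logs; it does not query any service itself. The platform suffixes (`lovable.app`, `replit.app`, `base44.app`, `netlify.app`) are assumptions about each platform's default public domains and should be checked against current vendor documentation. `triage_hostnames` and its keyword heuristic are hypothetical illustrations, not a vendor tool.

```python
from dataclasses import dataclass
from typing import Iterable, List

# Assumption: default public hostnames for each platform. Confirm against vendor docs
# and add any custom domains your organization uses.
PLATFORM_SUFFIXES = {
    "Lovable": ".lovable.app",
    "Replit": ".replit.app",
    "Base44": ".base44.app",
    "Netlify": ".netlify.app",
}


@dataclass(frozen=True)
class Finding:
    hostname: str
    platform: str
    keyword: str


def normalize(hostname: str) -> str:
    """Lowercase, trim whitespace and trailing dots, and drop a leading wildcard label."""
    host = hostname.strip().lower().rstrip(".")
    return host[2:] if host.startswith("*.") else host


def triage_hostnames(hostnames: Iterable[str], corporate_keywords: Iterable[str]) -> List[Finding]:
    """Flag platform-hosted names whose subdomain mentions a corporate keyword."""
    keywords = [k.lower() for k in corporate_keywords]
    seen = set()
    findings = []
    for raw in hostnames:
        host = normalize(raw)
        if host in seen:  # CT logs and DNS logs both repeat names; report each once
            continue
        seen.add(host)
        for platform, suffix in PLATFORM_SUFFIXES.items():
            if not host.endswith(suffix):
                continue
            label = host[: -len(suffix)]  # the part the app builder chose
            match = next((k for k in keywords if k in label), None)
            if match is not None:
                findings.append(Finding(host, platform, match))
            break  # a hostname can only belong to one platform suffix
    return findings


if __name__ == "__main__":
    hosts = [
        "acme-intake.lovable.app",
        "*.acme-portal.netlify.app",
        "ACME-intake.lovable.app.",
        "weekend-project.replit.app",
        "www.acme.com",
    ]
    for finding in triage_hostnames(hosts, ["acme"]):
        print(finding)
```

Substring matching on a brand keyword is deliberately crude: it misses apps named without the keyword and can flag unrelated names that happen to contain it, so hits are leads for manual review rather than a complete inventory.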

The vibe coding exposure RedAccess documented is not a separate problem from shadow AI. It is shadow AI’s production layer. Employees build internal tools on platforms that default to public, skip authentication, and never appear on any asset inventory, which means the applications stay invisible to security teams until a breach surfaces or a reporter finds them first. Traditional asset discovery tools were designed to find servers, containers, and cloud instances. They have no way to find a marketing configurator that a product manager built on Lovable over a weekend, connected to a Supabase database holding live customer records, and shared with three external contractors through a public URL that Google indexed within hours.

The detection challenge runs deeper than most security teams realize. Vibe-coded apps deploy on platform subdomains that rotate frequently and often sit behind CDN layers that mask origin infrastructure. Organizations running mature secure web gateways, CASBs, or DNS logging can detect employee access to these domains. But detecting access is not the same as inventorying what was deployed, what data it holds, or whether it requires authentication. Without explicit monitoring of the major vibe coding platforms, the apps themselves generate a limited signal in conventional SIEM or endpoint telemetry. They exist in a gap between network visibility and application inventory that most security stacks were never architected to cover.

The platform responses tell the story

Replit CEO Amjad Masad said RedAccess gave his company only 24 hours before going to the press. Base44 (via Wix) and Lovable both said RedAccess did not include the URLs or technical specifics needed to verify the findings. None of the platforms denied that the exposed applications existed.

Wiz Research separately discovered in July 2025 that Base44 contained a platform-wide authentication bypass. Exposed API endpoints allowed anyone to create a verified account on private apps using nothing more than a publicly visible app_id. The flaw meant that showing up to a locked building and shouting a room number was enough to get the doors open. Wix fixed the vulnerability within 24 hours after Wiz reported it, but the incident exposed how thin the authentication layer is on platforms where millions of apps are being built by users who assume the platform handles security for them.

The pattern is consistent across the vibe coding ecosystem. CVE-2025-48757 documented insufficient or missing Row-Level Security policies in Lovable-generated Supabase projects. Certain queries skipped access checks entirely, exposing data across more than 170 production applications. The AI generated the database layer. It did not generate the security policies that should have restricted who could read the data. Lovable disputes the CVE classification, stating that individual customers accept responsibility for protecting their application data. That dispute itself illustrates the core tension: platforms that market to nontechnical builders are shifting security responsibility to users who do not know it exists.
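To make the missing-policy failure concrete, here is a toy in-memory model in Python. It is not Supabase or Postgres code. It treats `policy=None` as a table with Row-Level Security switched off, where every caller, anonymous or signed in, reads every row, and it uses `owner_only` as a stand-in for a policy that compares the requesting user with a row's owner, similar in spirit to a Supabase policy condition such as `auth.uid() = owner_id`. The `Row` fields, the `owner_id` column, and the function names are hypothetical. (In Postgres itself, enabling RLS without any policy denies access by default; this toy model does not capture that case.)

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class Row:
    owner_id: str
    payload: str


# A policy decides whether the requesting user (None = anonymous) may see a row.
Policy = Callable[[Optional[str], Row], bool]


def owner_only(user_id: Optional[str], row: Row) -> bool:
    """Visible only to a signed-in user who owns the row."""
    return user_id is not None and row.owner_id == user_id


def select_rows(table: List[Row], user_id: Optional[str], policy: Optional[Policy] = None) -> List[Row]:
    """Return the rows a caller can read; policy=None models RLS being switched off."""
    if policy is None:
        return list(table)
    return [row for row in table if policy(user_id, row)]


if __name__ == "__main__":
    customers = [Row("alice", "alice@example.com"), Row("bob", "bob@example.com")]
    print(len(select_rows(customers, user_id=None)))  # anonymous caller, no policy
    print(select_rows(customers, user_id="alice", policy=owner_only))
    print(select_rows(customers, user_id=None, policy=owner_only))
```

The point of the sketch is the asymmetry: generated code that creates the table and queries it works identically for the app's owner in both modes, so nothing in normal use reveals that the anonymous path returns every customer's record.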

What this means for security teams

The RedAccess findings complete the picture. Professional agents face credential theft on one layer. Citizen platforms face data exposure on the other. The structural failure is the same. Security review happens after deployment or not at all. Identity and access management systems track human users and service accounts. They do not track the Lovable app a sales operations analyst deployed last Tuesday, connected to a live CRM database, and shared with three external contractors via a public URL.

Nobody asks whether the database policies restrict who can read the data or whether the API endpoints require authentication. When those questions go unasked at AI-generation speed, the exposure scales faster than any human review process can match. The question for security leaders is not whether vibe-coded apps are inside their perimeter. The question is how many, holding what data, visible to whom. The RedAccess findings suggest the answer, for most organizations, is worse than anyone in the C-suite currently knows. The organizations that start scanning this week will find them. The ones that wait will read about themselves next.

Tech

Out Of All The E-Bikes Sold At Walmart, Shoppers Say This One Is The Best

We may receive a commission on purchases made from links.

With gas prices soaring, electric bikes have become a popular alternative for commuting. They're a great way to cut road congestion and air pollution while encouraging a healthy lifestyle. But as e-bikes grow more popular, it can be tough to know which one is best for you. Walmart carries a huge collection of e-bikes, and one stands out with a 4.8-out-of-5-star rating across 1,419 reviews.

The Ancheer Gladiator is on sale for $430 at the time of writing, down from its usual $740 MSRP, and customers feel it's well worth the money. Its 500W motor generates enough power to reach 20 miles per hour, and its 48V, 10.4Ah lithium-ion battery offers 60 miles of range. Reviewers say the battery performs well, with one reporting that their bike had used only about two-thirds of its charge after a 35-mile trip into the countryside.

With a Shimano 3+7 shock absorption system and both front and rear disc brakes, the Gladiator has capabilities that both city commuters and trail cruisers can appreciate. One reviewer said they bought the Gladiator for hunting, riding it up and down steep dirt roads. Another added that it's easy to pedal and that its LCD display is straightforward to read.

Is the Ancheer Gladiator good for off-roading?

Ancheer squarely markets the Gladiator as a mountain bike, and much of the copy on the e-bike's listing reflects that. The brand talks about cruising up mountains and exploring new trails, touting the bike's ability to tackle “extreme conditions.” But how much of this is just marketing language, and how much reflects actual capability?

The truth seems to be closer to the latter. One Reddit reviewer who put 300 miles on their Gladiator, including plenty of fairly technical trails, found that the e-bike handled everything thrown at it without so much as a flat tire. They stopped short of calling it a true mountain bike, but said riding it was a fun experience.

There are going to be performance limitations with an e-bike this affordable. Several Walmart reviewers felt the brakes were nowhere near as good as they should be. The Gladiator won't be as capable as models from established off-road brands, and the price gap reflects that: Yamaha's mountain e-bikes cost upward of $6,500. Still, SlashGear has previously included the Ancheer Gladiator in our list of e-bikes built for rough terrain, since it's plenty capable and reliable on easier adventures.

-

NewsBeat5 days ago

NewsBeat5 days agoChannel 5 – All Creatures Great and Small series 7 new post

-

Crypto World13 hours ago

Crypto World13 hours agoHarrisX Poll Found 52% of Registered Voters Support the CLARITY Act

-

Crypto World2 days ago

Crypto World2 days agoUpbit adds B3 Korean won pair as Base token gains Korea access

-

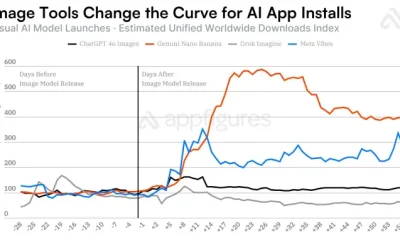

Tech4 days ago

Tech4 days agoImage AI models now drive app growth, beating chatbot upgrades

-

NewsBeat2 days ago

NewsBeat2 days agoNCP car park operator enters administration putting 340 UK sites at risk of closure

-

Entertainment7 days ago

Entertainment7 days agoKylie Jenner Hit With Second Lawsuit From Ex-Housekeeper

-

Sports7 days ago

Sports7 days agoCavaliers vs. Raptors Game 6 live score, updates, highlights from 2026 NBA playoffs first-round series

-

Entertainment7 days ago

Entertainment7 days agoYoung and the Restless Next Week: Cane Arrested & Matt’s Deadly New Scheme!

-

Entertainment6 days ago

New Netflix Movies in May 2026 — My Top 3 Picks to Stream

-

Entertainment6 days ago

Entertainment6 days agoMelissa Joan Hart and More Stars Attend 2026 Kentucky Derby

-

Sports7 days ago

Sports7 days agoDavid Benavidez responds to team Canelo saying the fight will never happen

-

Sports7 days ago

Sports7 days agoIPL 2026: ‘Love you darling’- Hardik Pandya’s reaction to MS Dhoni steals the show |Watch | Cricket News

-

Sports6 days ago

Sports6 days agoFive killed in Texas plane crash identified as Amarillo pickleball players

-

Entertainment6 days ago

Anna Nicole Smith’s Daughter Attends 2026 Kentucky Derby

-

Crypto World6 days ago

Crypto World6 days agoBitcoin mining equities rise in 2026 as BTC lags behind

-

Crypto World6 days ago

Crypto World6 days agoPi Network Mandates Protocol 23 Upgrade for All Mainnet Nodes Before May 15 Deadline

-

Business6 days ago

Business6 days agoLuka Doncic Injury Update: Doncic’s Hamstring Recovery Slows Lakers’ Hopes Against Thunder: Can He Run Yet?

-

Business7 days ago

Business7 days agoCan Victor Wembanyama Bring the NBA Ring to Spurs in 2026? Historic Playoff Run Fuels Title Dreams

-

Sports7 days ago

Sports7 days agoPlane crash in Wimberley, Texas kills 5 pickleball players at tournament

-

Entertainment6 days ago

Entertainment6 days agoVenus Williams’ Best Met Gala Looks Over the Years

You must be logged in to post a comment Login