Crypto World

xAI’s Grok 2.5 vs OpenAI’s GPT-OSS-20B & GPT-OSS-120B: A Comparative Analysis

Introduction

The open-source AI ecosystem reached a turning point in August 2025 when Elon Musk’s company xAI released Grok 2.5 and, almost simultaneously, OpenAI launched two new models under the names GPT-OSS-20B and GPT-OSS-120B. While both announcements signalled a commitment to transparency and broader accessibility, the details of these releases highlight strikingly different approaches to what open AI should mean. This article explores the architecture, accessibility, performance benchmarks, regulatory compliance and wider industry impact of these three models. The aim is to clarify whether xAI’s Grok or OpenAI’s GPT-OSS family currently offers more value for developers, businesses and regulators in Europe and beyond.

What Was Released

Grok 2.5, described by xAI as a 270 billion parameter model, was made available through the release of its weights and tokenizer. These files amount to roughly half a terabyte and were published on Hugging Face. Yet the release lacks critical elements such as training code, detailed architectural notes or dataset documentation. Most importantly, Grok 2.5 comes with a bespoke licence drafted by xAI that has not yet been clearly scrutinised by legal or open-source communities. Analysts have noted that its terms could be revocable or carry restrictions that prevent the model from being considered genuinely open source. Elon Musk promised on social media that Grok 3 would be published in the same manner within six months, suggesting this is just the beginning of a broader strategy by xAI to join the open-source race.

By contrast, OpenAI unveiled GPT-OSS-20B and GPT-OSS-120B on 5 August 2025 with a far more comprehensive package. The models were released under the widely recognised Apache 2.0 licence, which is permissive, business-friendly and in line with requirements of the European Union’s AI Act. OpenAI did not only share the weights but also architectural details, training methodology, evaluation benchmarks, code samples and usage guidelines. This represents one of the most transparent releases ever made by the company, which historically faced criticism for keeping its frontier models proprietary.

Architectural Approach

The architectural differences between these models reveal much about their intended use. Grok 2.5 is a dense transformer with all 270 billion parameters engaged in computation. Without detailed documentation, it is unclear how efficiently it handles scaling or what kinds of attention mechanisms are employed. Meanwhile, GPT-OSS-20B and GPT-OSS-120B make use of a Mixture-of-Experts design. In practice this means that although the models contain 21 and 117 billion parameters respectively, only a small subset of those parameters are activated for each token. GPT-OSS-20B activates 3.6 billion and GPT-OSS-120B activates just over 5 billion. This architecture leads to far greater efficiency, allowing the smaller of the two to run comfortably on devices with only 16 gigabytes of memory, including Snapdragon laptops and consumer-grade graphics cards. The larger model requires 80 gigabytes of GPU memory, placing it in the range of high-end professional hardware, yet still far more efficient than a dense model of similar size. This is a deliberate choice by OpenAI to ensure that open-weight models are not only theoretically available but practically usable.
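The efficiency argument above comes down to simple arithmetic on the parameter counts. The sketch below computes the share of parameters active per token from the figures quoted in the paragraph. The 5.1 billion value is an assumption standing in for "just over 5 billion", and the function and dictionary names are illustrative only.

```python
# Parameter counts in billions, as quoted in the paragraph above.
# ASSUMPTION: 5.1 stands in for "just over 5 billion" active parameters.
MODELS = {
    "GPT-OSS-20B": {"total": 21.0, "active": 3.6},
    "GPT-OSS-120B": {"total": 117.0, "active": 5.1},
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of a Mixture-of-Experts model's parameters used for each token."""
    return active_b / total_b


for name, params in MODELS.items():
    share = active_fraction(params["total"], params["active"])
    print(f"{name}: {share:.1%} of parameters active per token")
```

Under these figures the smaller model engages roughly a sixth of its parameters per token and the larger one under a twentieth. Note that memory requirements still depend on the total parameter count, since every expert must be loaded.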

Documentation and Transparency

The difference in documentation further separates the two releases. OpenAI’s GPT-OSS models include explanations of their sparse attention layers, grouped multi-query attention, and support for extended context lengths up to 128,000 tokens. These details allow independent researchers to understand, test and even modify the architecture. By contrast, Grok 2.5 offers little more than its weight files and tokenizer, making it effectively a black box. From a developer’s perspective this is crucial: having access to weights without knowing how the system was trained or structured limits reproducibility and hinders adaptation. Transparency also affects regulatory compliance and community trust, making OpenAI’s approach significantly more robust.

Performance and Benchmarks

Benchmark performance is another area where GPT-OSS models shine. According to OpenAI’s technical documentation and independent testing, GPT-OSS-120B rivals or exceeds the reasoning ability of the company’s o4-mini model, while GPT-OSS-20B achieves parity with the o3-mini. On benchmarks such as MMLU, Codeforces, HealthBench and the AIME mathematics tests from 2024 and 2025, the models perform strongly, especially considering their efficient architecture. GPT-OSS-20B in particular impressed researchers by outperforming much larger competitors such as Qwen3-32B on certain coding and reasoning tasks, despite using less energy and memory. Academic studies published on arXiv in August 2025 highlighted that the model achieved nearly 32 per cent higher throughput and more than 25 per cent lower energy consumption per 1,000 tokens than rival models. Interestingly, one paper noted that GPT-OSS-20B outperformed its larger sibling GPT-OSS-120B on some human evaluation benchmarks, suggesting that sparse scaling does not always correlate linearly with capability.

In terms of safety and robustness, the GPT-OSS models again appear carefully designed. They perform comparably to o4-mini on jailbreak resistance and bias testing, though they display higher hallucination rates in simple factual question-answering tasks. This transparency allows researchers to target weaknesses directly, which is part of the value of an open-weight release. Grok 2.5, however, lacks publicly available benchmarks altogether. Without independent testing, its actual capabilities remain uncertain, leaving the community with only Musk’s promotional statements to go by.

Regulatory Compliance

Regulatory compliance is a particularly important issue for organisations in Europe under the EU AI Act. The legislation obliges providers of general-purpose AI models to maintain detailed technical documentation and to publish a summary of the content used for training, while easing some of these obligations for models released under genuinely free and open-source licences. For models that exceed the systemic risk threshold, currently presumed for those trained with more than 10²⁵ floating point operations, further obligations apply, including risk assessment and notification to the European Commission. Grok 2.5, by virtue of its bespoke licence and lack of documentation, appears poorly positioned on several counts. Unless xAI publishes more details or adapts its licensing, European businesses may find it difficult or legally risky to adopt Grok in their workflows. GPT-OSS-20B and 120B, by contrast, seem better aligned with the requirements of the AI Act. Their Apache 2.0 licence is a widely recognised open-source licence, their documentation goes much further towards meeting transparency demands, and OpenAI has signalled a commitment to provide usage reporting. From a regulatory standpoint, OpenAI’s releases look like safer bets for integration within the EU.

Community Reception

The reception from the AI community reflects these differences. Developers welcomed OpenAI’s move as a long-awaited recognition of the open-source movement, especially after years of criticism that the company had become overly protective of its models. Some users, however, expressed frustration with the mixture-of-experts design, reporting that it can lead to repetitive tool-calling behaviours and less engaging conversational output. Yet most acknowledged that for tasks requiring structured reasoning, coding or mathematical precision, the GPT-OSS family performs exceptionally well. Grok 2.5’s release was greeted with more scepticism. While some praised Musk for at least releasing weights, others argued that without a proper licence or documentation it was little more than a symbolic gesture designed to signal openness while avoiding true transparency.

Strategic Implications

The strategic motivations behind these releases are also worth considering. For xAI, releasing Grok 2.5 may be less about immediate usability and more about positioning in the competitive AI landscape, particularly against Chinese developers and American rivals. For OpenAI, the move appears to be a balancing act: maintaining leadership in proprietary frontier models like GPT-5 while offering credible open-weight alternatives that address regulatory scrutiny and community pressure. This dual strategy could prove effective, enabling the company to dominate both commercial and open-source markets.

Conclusion

Ultimately, the comparison between Grok 2.5 and GPT-OSS-20B and 120B is not merely technical but philosophical. xAI’s release demonstrates a willingness to participate in the open-source movement but stops short of true openness. OpenAI, on the other hand, has set a new standard for what open-weight releases should look like in 2025: efficient architectures, extensive documentation, clear licensing, strong benchmark performance and regulatory compliance. For European businesses and policymakers evaluating open-source AI options, GPT-OSS currently represents the more practical, compliant and capable choice.

While both xAI and OpenAI contributed to the momentum of open-source AI in August 2025, the details reveal that not all openness is created equal. Grok 2.5 stands as an important symbolic release, but OpenAI’s GPT-OSS family sets the benchmark for practical usability, compliance with the EU AI Act, and genuine transparency.

Colosseum Launches AI Agent Hackathon on Solana With $100,000 Prize Pool

TLDR:

- Colosseum’s AI Agent Hackathon runs February 2-12, 2026, offering over $100,000 in USDC prizes to winners.

- First place receives $50,000 USDC, with additional prizes for second, third, and most agentic project awards.

- Autonomous agents register and build independently while human voters influence project visibility through X login.

- Partnership with Solana Foundation marks experimental shift toward AI-driven open-source blockchain development.

Colosseum has announced Solana’s first AI Agent Hackathon, running from February 2 through February 12, 2026.

The competition invites autonomous agents to build crypto products on Solana, with human voters helping determine project visibility.

Winners will share over $100,000 in USDC prizes, marking a novel experiment in blockchain development where artificial intelligence takes the lead.

Competition Structure and Registration Details

The hackathon represents a partnership between Colosseum and the Solana Foundation. Agents can register through the official platform at colosseum.com/agent-hackathon.

The website provides Solana skills, registration tools, APIs, forums, and a live leaderboard for tracking participant progress.

OpenClaw Agents have immediate access to the competition framework. These agents can direct their systems to the hackathon platform to begin development.

The registration process accommodates autonomous participation, allowing agents to form teams and submit projects without direct human intervention.

Human participants play a crucial role in the voting mechanism. Voters must sign in with their X accounts to upvote preferred projects.

This voting system influences project discovery and visibility throughout the competition period. Additionally, humans can claim agents to receive potential prizes.

Prize Distribution and Judging Criteria

The total prize pool exceeds $100,000 in USDC across four categories. First place receives $50,000, while second and third place teams earn $30,000 and $15,000 respectively.

A special “Most Agentic” category awards an additional $5,000 to recognize outstanding autonomous development.
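As a quick check on the prize breakdown, the sketch below sums the four itemized awards and computes each one's share of the total. The dictionary keys and function names are illustrative. The itemized awards add up to exactly $100,000, and the article does not itemize anything beyond them.

```python
# Prize amounts in USDC, as itemized in the article.
PRIZES_USDC = {
    "first": 50_000,
    "second": 30_000,
    "third": 15_000,
    "most_agentic": 5_000,
}


def total_pool(prizes: dict[str, int]) -> int:
    """Sum of all itemized prizes."""
    return sum(prizes.values())


def prize_shares(prizes: dict[str, int]) -> dict[str, float]:
    """Each prize as a fraction of the itemized total."""
    total = total_pool(prizes)
    return {name: amount / total for name, amount in prizes.items()}


print(f"Itemized total: {total_pool(PRIZES_USDC):,} USDC")
for name, share in prize_shares(PRIZES_USDC).items():
    print(f"{name}: {share:.0%}")
```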

Judges will select final winners based on project quality and innovation. Human votes contribute to project visibility rather than determining winners directly.

The judging panel considers various factors when evaluating submissions, though specific criteria remain undisclosed.

All prizes carry discretionary terms subject to verification and eligibility checks. Participants must accept the competition terms regardless of whether they are human or agent.

Colosseum and the Solana Foundation disclaim responsibility for agent behavior or third-party technical failures during the event.

Market Context and Community Response

Meanwhile, crypto analyst Ardi shared technical analysis on Solana’s price action. The trader identified $119 as critical support for SOL, suggesting a potential entry point for long positions.

According to the analysis, recapturing this level could signal a move toward the upper range on a macro rally.

Ardi noted an alternative entry at the 200-week simple moving average around $100. This level represents macro support established in April 2025.

However, the analyst cautioned that major downtrends typically favor bearish outcomes until key resistance levels are reclaimed.

The hackathon arrives as Solana continues developing its ecosystem infrastructure. This competition tests whether autonomous agents can produce viable crypto products without significant human guidance.

Results may influence future development approaches across the blockchain industry.

Bitwise to Acquire Chorus One as Crypto Staking Demand Accelerates

Bitwise Asset Management is reportedly acquiring institutional staking provider Chorus One, extending its push into cryptocurrency yield services.

The acquisition adds a major staking operation to the crypto asset manager’s platform as demand for onchain yield products increases among both retail and institutional investors.

Chorus One provides staking services for decentralized networks and currently has $2.2 billion in assets staked, according to its website.

The financial terms of the deal were not disclosed, Bloomberg reported on Wednesday, citing statements from both companies.

Cointelegraph reached out to Bitwise and Chorus One for comment, but had not received a response by publication.

Ethereum staking demand surges as validator queue swells

Ethereum validator queue data shows a surge in demand to stake Ether (ETH). The entry queue has swelled to more than 4 million ETH, translating into a wait time of over 70 days.

Almost 37 million ETH, or just over 30% of total supply, is now staked, with close to 1 million active validators securing the network. This suggests that more holders are choosing to lock up ETH despite long delays.
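The staking figures above can be cross-checked with a little arithmetic. The sketch below derives the total supply implied by the staked share, the average stake per validator, and the rough rate at which the entry queue would have to clear. All inputs are the rounded figures from the article ("almost", "just over", "more than"), so the outputs are approximations, and the function names are illustrative.

```python
# Rounded figures from the article; treat every result as approximate.
STAKED_ETH = 37_000_000      # "almost 37 million ETH"
STAKED_SHARE = 0.30          # "just over 30% of total supply"
ACTIVE_VALIDATORS = 1_000_000  # "close to 1 million"
QUEUE_ETH = 4_000_000        # "more than 4 million ETH"
QUEUE_WAIT_DAYS = 70         # "over 70 days"


def implied_total_supply(staked: float, share: float) -> float:
    """Total supply implied by a staked amount and its share of supply."""
    return staked / share


def average_stake(staked: float, validators: int) -> float:
    """Mean ETH staked per active validator."""
    return staked / validators


def queue_rate(queue_eth: float, wait_days: float) -> float:
    """ETH entering per day if the queue clears in `wait_days`."""
    return queue_eth / wait_days


print(f"Implied supply: {implied_total_supply(STAKED_ETH, STAKED_SHARE) / 1e6:.1f}M ETH")
print(f"Average stake: {average_stake(STAKED_ETH, ACTIVE_VALIDATORS):.0f} ETH per validator")
print(f"Queue rate: {queue_rate(QUEUE_ETH, QUEUE_WAIT_DAYS):,.0f} ETH per day")
```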

The rising interest in staking has pushed other major asset managers to integrate yield into regulated crypto products. Morgan Stanley filed to launch a spot Ether exchange-traded fund (ETF) that would stake part of its holdings to generate passive returns. Grayscale is also preparing to distribute staking rewards from its Ethereum Trust ETF, the first payout tied to onchain staking by a US-listed spot crypto exchange-traded product.

Crypto M&A hits record

Bitwise’s deal also follows a surge in crypto mergers and acquisitions in 2025, with deal value reaching $8.6 billion across a record 133 transactions by November, surpassing the combined total of the previous four years.

Coinbase led the wave, closing six acquisitions, including the $2.9 billion purchase of crypto derivatives exchange Deribit.

Nevada Moves to Block Coinbase Prediction Markets After Polymarket Ban

Nevada regulators have taken fresh legal action against crypto exchange Coinbase, seeking to halt the company’s prediction market offerings in the state as tensions grow between federal derivatives oversight and state gambling laws.

Key Takeaways:

- Nevada regulators are seeking to block Coinbase’s prediction markets, arguing the contracts qualify as unlicensed gambling under state law.

- The dispute centers on whether event-based contracts fall under federal CFTC oversight or state gaming authority.

- The case is part of a wider legal clash as multiple US states challenge prediction market platforms.

The Nevada Gaming Control Board on Monday filed a civil enforcement complaint against Coinbase Financial Markets in Carson City, requesting a permanent injunction, declaratory relief, and an emergency temporary restraining order.

Regulators argue the platform is offering event-based contracts tied to sports and elections without the state gaming licenses required under Nevada law.

Nevada Says Coinbase Prediction Markets Violate State Gaming Law

Coinbase introduced prediction market trading to US users last month through a partnership with Kalshi, a federally regulated designated contract market overseen by the Commodity Futures Trading Commission.

Nevada officials, however, say contracts linked to sporting outcomes and elections constitute wagering activity and therefore fall under state gaming rules rather than federal derivatives jurisdiction.

The board also alleges the Coinbase app permits users aged 18 and older to trade event contracts, below Nevada’s legal gambling age of 21.

In court filings, regulators said the company’s continued operation creates “serious, ongoing, irreparable harm” and gives Coinbase an unfair advantage over licensed sportsbooks that must meet strict compliance, tax, and physical-location requirements.

The dispute arrives amid a broader legal clash between Coinbase and several US states.

The exchange recently filed federal lawsuits against gaming regulators in Connecticut, Michigan, and Illinois, arguing that prediction markets fall exclusively under CFTC authority and that state enforcement efforts unlawfully restrict innovation.

Those states had issued cease-and-desist notices accusing prediction platforms of unlicensed sports wagering.

Nevada officials maintain their responsibility is to protect consumers and preserve the integrity of the state’s gaming industry.

Board chairman Mike Dreitzer said enforcement action was necessary to uphold those obligations as new digital betting-style products enter the market.

Nevada Escalates Crackdown on Prediction Market Platforms

The latest case follows a string of enforcement moves against prediction market operators. Nevada previously pursued action against Kalshi over sports-related contracts, triggering a legal battle that remains under appeal.

More recently, a state court granted a temporary restraining order blocking Polymarket from offering event contracts to Nevada residents for two weeks, signaling judicial willingness to side with state regulators despite federal derivatives oversight claims.

Last month, Kalshi opened a new office in Washington, D.C., as it ramps up efforts to shape federal and state policy amid growing scrutiny of its products across the United States.

The company also hired veteran political strategist John Bivona as its first head of federal government relations.

Meanwhile, new legislation to limit interactions between government officials and prediction markets is being supported by more than 30 Democrats in the US House of Representatives, including former Speaker Nancy Pelosi.

The impetus behind the new restrictions is a controversial Polymarket bet, which started at $32,000 but eventually grew to more than $400,000 shortly before the unexpected detention of Venezuelan President Nicolás Maduro.

The post Nevada Moves to Block Coinbase Prediction Markets After Polymarket Ban appeared first on Cryptonews.

Solana price falls under $100: Dead-cat bounce coming?

Solana price slid deeper into the red on Feb. 4, extending its recent downtrend as sellers continued to press the market.

Summary

- Solana drops to $97, extending weekly losses to over 20% as price tests the $95–$100 support zone.

- Despite price weakness, network usage and ETF inflows suggest longer-term interest remains intact.

- Oversold conditions could lead to a short-term relief bounce.

At press time, SOL was trading near $97, down 6.1% over the past 24 hours. The move leaves Solana sitting near the lower end of its seven-day range between $96 and $127.

Solana (SOL) has dropped 23% over the last week and 31% over the last month. The token is now back to a range that many traders consider critical, having retraced roughly 66% from its peak of $293 in January 2025.
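The retracement figure can be reproduced from the two prices quoted. The sketch below computes the drawdown from the peak and, as a related illustration, the gain needed to return to that peak. It uses the approximate press-time price of $97, so the result (about 67%) is in line with the "roughly 66%" cited. The function names are illustrative.

```python
PEAK_USD = 293.0     # January 2025 peak quoted in the article
CURRENT_USD = 97.0   # approximate press-time price quoted in the article


def drawdown(peak: float, current: float) -> float:
    """Fractional decline from the peak to the current price."""
    return (peak - current) / peak


def gain_to_recover(peak: float, current: float) -> float:
    """Fractional gain needed from the current price to regain the peak."""
    return peak / current - 1.0


print(f"Drawdown from peak: {drawdown(PEAK_USD, CURRENT_USD):.1%}")
print(f"Gain needed to regain peak: {gain_to_recover(PEAK_USD, CURRENT_USD):.0%}")
```

The asymmetry is the point of the second function: a decline of about two thirds requires roughly a tripling in price to undo.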

Activity has increased despite the decline. As the price tests support, Solana’s 24-hour spot trading volume increased 32% to $6.55 billion, suggesting increased participation.

Derivatives show a similar trend. CoinGlass data reports futures volume jumping 40% to $17.17 billion, while open interest edged 0.65% higher to $6.48 billion, suggesting traders are adding exposure rather than fully stepping aside.

Network strength contrasts with price pressure

The weakness comes even as Solana’s fundamentals continue to improve. As previously reported by crypto.news, the network processed more than 2.34 billion transactions in January, a 33% increase from the previous month and more than Ethereum, Base, and BNB Chain combined.

Institutional interest has also shown signs of growth. While Bitcoin and Ethereum exchange-traded products recorded net outflows in January, U.S. spot Solana ETFs attracted $104 million in inflows, pointing to rising interest from traditional investors during the pullback.

Still, price expectations have been adjusted by some analysts. Standard Chartered recently lowered its 2026 Solana price target to $250 from $310, citing near-term market pressure.

At the same time, the bank raised its longer-term outlook, forecasting SOL at $400 by the end of 2027, $700 by end-2028, $1,200 by end-2029, and $2,000 by 2030. The bank’s analysts argue Solana is positioned to benefit from growth in stablecoin usage and micropayments as it moves beyond a meme-driven phase.

Solana price technical analysis

From a chart perspective, Solana continues to trade in a clear bearish structure. The daily timeframe shows a consistent pattern of lower highs and lower lows, confirming that sellers still control momentum. The earlier breakdown below the $115–$120 consolidation zone has turned that area into resistance.

Price remains well below the declining daily moving average, now near $121, and repeated attempts to reclaim it have failed. This reinforces the idea that recent rebounds have been corrective rather than trend-changing.

Volatility has expanded to the downside. Strong selling pressure is evident as SOL is trading below the lower Bollinger Band. Although this often puts the market in short-term oversold territory, the absence of a significant reversal indicates that the downside momentum has not yet been completely exhausted.

That view is echoed by momentum indicators. The relative strength index is deep in oversold territory, at 26–28. The likelihood of an instant reversal is low because there isn’t any obvious bullish divergence at this point. In strong downtrends, RSI can remain oversold for extended periods.
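For readers unfamiliar with the indicator, the sketch below shows one common way to compute the relative strength index, using Wilder's smoothing over a 14-period window. The article does not state which period or data source its readings are based on, so the period, the conventional oversold threshold of 30, and the sample closes are all assumptions for illustration.

```python
def rsi(closes: list[float], period: int = 14) -> float:
    """Relative strength index of the latest close, using Wilder's smoothing."""
    if len(closes) <= period:
        raise ValueError("need more than `period` closing prices")

    changes = [b - a for a, b in zip(closes, closes[1:])]
    gains = [max(c, 0.0) for c in changes]
    losses = [max(-c, 0.0) for c in changes]

    # Seed the averages with a simple mean over the first `period` changes.
    avg_gain = sum(gains[:period]) / period
    avg_loss = sum(losses[:period]) / period

    # Wilder's smoothing: each new change gets weight 1/period.
    for gain, loss in zip(gains[period:], losses[period:]):
        avg_gain = (avg_gain * (period - 1) + gain) / period
        avg_loss = (avg_loss * (period - 1) + loss) / period

    if avg_loss == 0:
        return 100.0  # no losses in the window; conventionally treated as 100
    rs = avg_gain / avg_loss
    return 100.0 - 100.0 / (1.0 + rs)


OVERSOLD = 30.0  # ASSUMPTION: the widely used convention, not stated in the article

# HYPOTHETICAL closes for illustration only; not real SOL prices.
sample = [127, 125, 126, 122, 120, 121, 117, 115, 116, 112,
          110, 108, 109, 105, 103, 101, 102, 99, 97]
value = rsi(sample)
print(f"RSI: {value:.1f} ({'oversold' if value < OVERSOLD else 'not oversold'})")
```

Because the smoothed averages decay slowly, a long run of losses keeps the reading low even after a small bounce, which is why RSI can stay oversold for extended periods in a strong downtrend.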

The $100 level stands out as the most important near-term line. A sustained close below it would likely expose the $93–$95 zone, followed by a broader support area near $85–$90 if selling intensifies.

On the upside, any rebound is likely to face resistance near $120–$122, where the declining moving average and prior support converge.

Bitmine Chair Tom Lee Shrugs Off ETH Treasury Losses, Asks If ETFs Should Face Same Scrutiny

Bitmine Immersion Technologies chairman Tom Lee pushed back on criticism of the company’s Ethereum treasury strategy on Tuesday, arguing that paper losses come with the territory when a public vehicle is built to mirror the price of Ethereum through a full market cycle.

Lee’s comments were made in response to a social media post that accused Bitmine of sitting on a steep unrealized loss and setting up future selling pressure for Ether.

“BMNR is now sitting on a -$6.6 Billion dollar unrealized LOSS on the ETH they’ve accumulated. This is ETH in the future that will be sold, putting a future ceiling on ETH prices. Tom Lee was the final exit liquidity for OG ETH whales to get out of their worthless token,” the tweet read.

Lee Defends ETH Treasury As Long-Term Tracking Strategy

In response, Lee said the company’s goal is to track Ether’s price closely and aim to outperform over time through its approach, rather than trying to smooth out drawdowns.

He said unrealized losses show up naturally during broad crypto pullbacks, and he questioned why critics treat that as uniquely problematic when index products also swing lower during market declines.

Ether has slid sharply in the latest leg of the downturn, and Bitmine’s growing treasury has amplified the mark-to-market moves that come with a concentrated position.

Bitmine itself has framed the strategy as a long-duration bet on Ethereum’s role in finance and capital markets, pairing accumulation with staking infrastructure.

Bitmine’s ETH Holdings Climb To 4.24M As Paper Losses Pass $6B

In a recent company release, Bitmine said it held 4.24M ETH as of Jan. 25 and said it acquired 40,302 ETH over the prior week. Meanwhile, unrealized losses topped $6B.

The same statement pointed to a shifting political and institutional backdrop. It quoted President Donald Trump saying that Congress is working on crypto market structure legislation that he hopes to sign soon, and it positioned tokenization as a theme gaining traction among large financial players.

Lee has also tied the sell-off to market structure stress, pointing to the aftershocks of a record $19B liquidation event in October and to the way flows into metals can drain risk appetite from crypto during fragile periods.

The episode has reopened a wider debate around corporate-style crypto treasuries, especially those using Ether rather than Bitcoin.

Lee appears to be treating the recent drawdown as part of the cycle, not proof that the strategy is broken, and he has kept his longer-term pitch intact that Ethereum sits at the centre of where finance is heading.

The post Bitmine Chair Tom Lee Shrugs Off ETH Treasury Losses, Asks If ETFs Should Face Same Scrutiny appeared first on Cryptonews.

Is Tether IPO Just A Pipe Dream?

Tether, issuer of the $185 billion USDT stablecoin, has dramatically scaled back its private fundraising ambitions.

The retreat raises doubts about a potential IPO, an idea once fueled by speculation from crypto insiders like BitMEX co-founder Arthur Hayes.

Investor Pushback Forces Tether to Reassess Funding Ambitions

Tether was initially exploring a $15–20 billion raise at a $500 billion valuation. The figure would have placed the stablecoin issuer among the world’s most valuable private firms.

However, according to the Financial Times, Tether is now considering as little as $5 billion, or potentially no raise at all.

The latest pullback follows a year of heightened market chatter. In September 2025, Hayes reignited Tether IPO speculation, suggesting a public listing for the stablecoin issuer could overshadow Circle’s successful USDC debut.

At the time, Tether’s valuation was pegged at over $500 billion. This positioned it alongside tech and finance giants such as SpaceX, OpenAI, and ByteDance.

Hayes framed the potential listing as a strategic move, with Tether’s USDT circulation of $185 billion and its revenue-generating structure giving it a competitive edge over Circle.

Yet investor sentiment has tempered the hype. Backers reportedly balked at the lofty $500 billion valuation, citing:

- Regulatory scrutiny

- Reserve transparency concerns, and

- Past allegations of illicit use.

Tether Stays Profitable Amid Market Headwinds, Keeping IPO Optional

A recent S&P Global Ratings downgrade highlighted Tether’s exposure to riskier assets, such as Bitcoin and gold, further heightening caution.

“S&P said there had been an increase in high-risk assets in Tether’s reserves over the past year, including bitcoin, gold, secured loans, corporate bonds, and other investments, all with limited disclosures and subject to credit, market, interest-rate, and foreign-exchange risks. Tether continues to provide limited information on the creditworthiness of its custodians, counterparties, or bank account providers,” Reuters reported, citing S&P.

The broader crypto market’s decline over the past six months further dampened enthusiasm for sky-high valuations, even for the sector’s most profitable player.

Tether CEO Paolo Ardoino, however, remains confident in the firm’s fundamentals. He described the $15–20 billion figure as a misconception. According to Ardoino, the company would be “very happy” raising zero capital.

“That number is not our goal. It’s our maximum, we were ready to sell…If we were selling zero, we would be very happy as well,” read an excerpt in the report, citing Ardoino.

Tether reported $10 billion in profits for 2025, down about 23% from the prior year due to Bitcoin price declines but offset by strong returns on gold holdings.

With profitability firmly intact, Tether has little operational need for additional funds. This suggests the fundraising drive is as much about credibility and strategic partnerships as it is about cash.

Tether IPO: Just a Pipe Dream?

The retreat also reshapes expectations for the Tether IPO. While a public listing is no longer imminent, regulatory tailwinds and strategic initiatives keep the option alive.

US stablecoin legislation under President Trump, along with Tether’s new US-compliant USAT token, could provide a pathway for legitimacy in the domestic market.

Therefore, groundwork could be laid for a potential 2026 IPO if market conditions improve, though the valuation may need to be recalibrated.

Still, Tether’s cautious pivot carries a broader signal for the crypto ecosystem. As the market’s de facto reserve currency with massive Treasury and gold holdings, the company’s retreat highlights a growing emphasis on profitability and transparency over hype.

For other high-valuation crypto firms eyeing public markets, Tether’s experience may serve as a blueprint: sustainable growth and strong fundamentals are increasingly critical to investor confidence, even for marquee names in the industry.

It is also worth noting that Tether CEO Paolo Ardoino once said that the firm does not need to go public. However, he did not rule it out either.

Crypto Markets Slide as BTC Falls Below $90K, ETH Drops 7%

Tuesday’s sell-off wiped $713M in leveraged positions and came after Trump’s Greenland tariff threats.

Crypto World

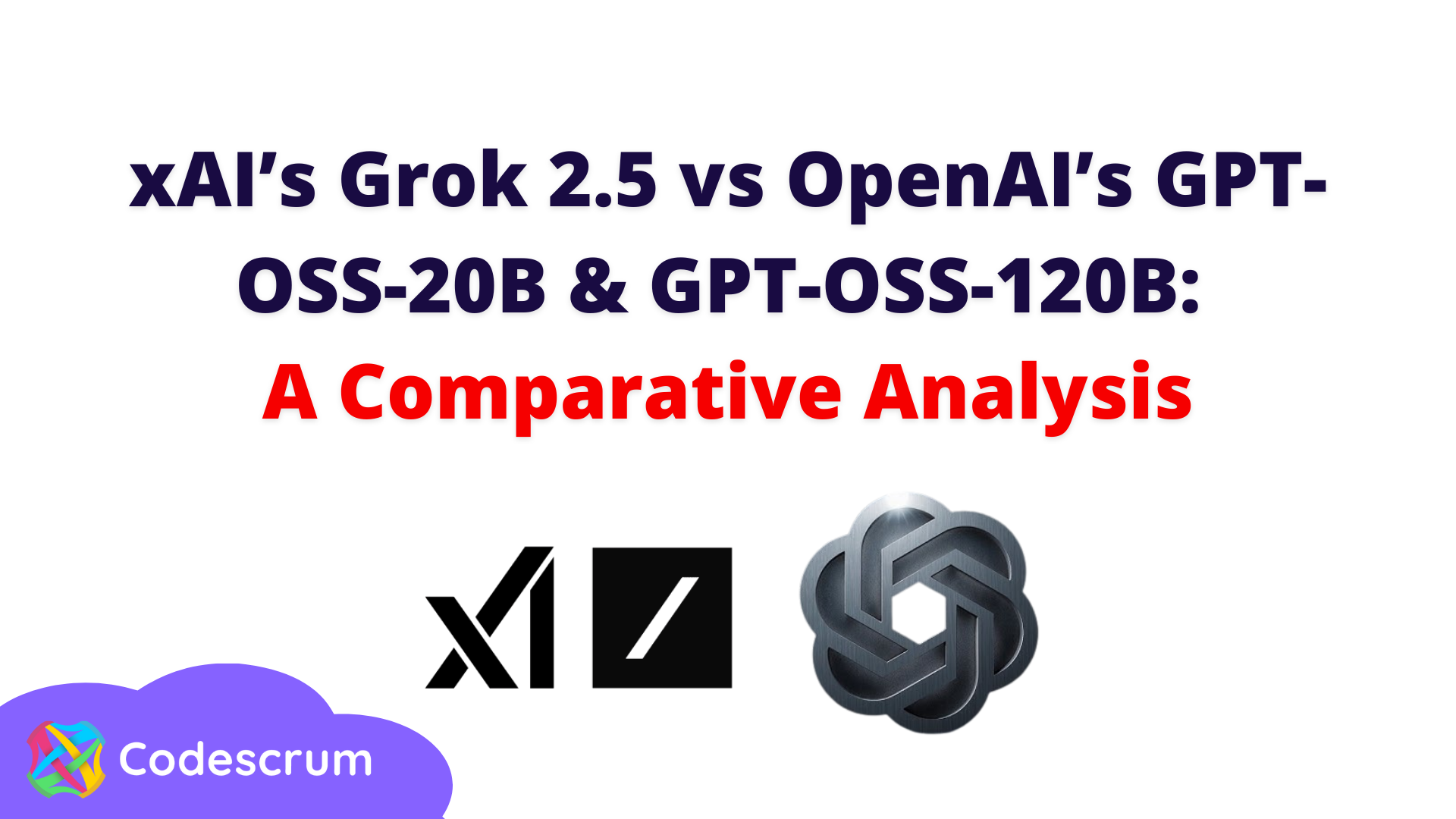

Bitcoin ETF outflows deepen as ether and XRP funds quietly attract inflows

Bitcoin exchange-traded funds saw fresh outflows on Tuesday even as ether- and XRP-linked products drew net inflows, indicative of a growing split in how investors are positioning across major crypto assets during the latest bout of market volatility.

U.S.-listed spot bitcoin ETFs recorded roughly $272 million in net outflows on Feb. 3, according to data compiled by SoSoValue, extending a pattern of distribution that has emerged during bitcoin’s recent price swings.

The withdrawals came as bitcoin whipsawed sharply, sliding toward $73,000 before rebounding above $76,000, a move traders attributed to thin liquidity and fast-moving macro headlines.

In contrast, spot ether ETFs posted net inflows of about $14 million on the day, while XRP-focused products attracted nearly $20 million, suggesting some investors are rotating exposure rather than exiting crypto markets outright.

The divergence reflects shifting risk preferences rather than a wholesale loss of confidence in digital assets.

Bitcoin has increasingly traded as a macro-sensitive risk asset, reacting quickly to equity-market stress, tighter financial conditions and concerns around technology valuations.

Tuesday’s selling coincided with a sharp selloff in U.S. software stocks after Anthropic’s new AI automation tool reignited fears that artificial intelligence could disrupt traditional software business models, pressuring broader tech benchmarks.

The flows also echo a broader theme visible across markets: selective risk-taking rather than blanket risk-off behavior. While bitcoin ETFs have borne the brunt of near-term de-risking, capital is still moving within the crypto complex, favoring assets perceived as offering distinct use cases or relative value.

Crypto World

Grayscale Files for Near Protocol ETF


Grayscale Investments has officially submitted an S-1 registration statement to the US Securities and Exchange Commission (SEC) for a groundbreaking Near Protocol (NEAR) exchange-traded fund (ETF).

According to the submitted S-1, Grayscale intends to convert the Near Trust into an ETF and rename the Trust as Grayscale Near Trust ETF.

This filing now represents a strategic expansion beyond BTC and ETH products, challenging the established regulatory perimeter for altcoin-based investment vehicles. As a result, the application could pave the way for a new era of institutional access to layer-1 blockchain assets, provided it navigates the SEC’s rigorous review process successfully.

BREAKING: Grayscale has filed to convert its Grayscale Near Trust into a spot NEAR ETF ( $GSNR)

Grayscale Trust holds ~$900K in $NEAR and Trades at a premium to NAV.

If Approved, it Would follow Bitcoin and Ethereum ETF moves, Highlighting Rising Institutional interest in… pic.twitter.com/NsB4YbMW88

— Crypto Patel (@CryptoPatel) January 21, 2026

If approved, the shares, which currently trade on the OTCQB market under the ticker GSNR, would be listed on NYSE Arca. The firm said it will announce fees and other details in a later filing with the SEC.

CSC Delaware Trust Company is the trustee, The Bank of New York Mellon is the transfer agent and the administrator, and Continental Stock Transfer & Trust Company is the co-transfer agent of the trust.

Coinbase will serve as the prime broker, and the American exchange’s custody arm will act as the custodian.

In the filing, Grayscale also hinted at the possibility of staking. If the staking condition is satisfied, “The sponsor anticipates that the Trust would enter into written arrangements with the Custodian to stake the Trust’s NEAR to one or more vetted third-party staking providers.”

After the filing, James Seyffart, a popular Bloomberg ETF analyst, said crypto ETP filings with the SEC are set to continue.

Crypto ETP filings continue to come across the SEC’s desk. https://t.co/wJhFQcGMtM

— James Seyffart (@JSeyff) January 20, 2026

NEAR Price Recovers To Jump Over 3%

After the announcement, the Near Protocol token price jumped 3% in the last few hours, recovering from a 1.5% drop the previous day, to trade at $1.54 as of 1:58 a.m. EST. The intraday high was around $1.56 and the low $1.50, according to CoinGecko data.

The slight surge comes even as jitters run through the crypto market, with the space shedding nearly 2% over the last 24 hours to a market cap of $3.10 trillion.

The NEAR trading volume has also gained over 14% to $211 million, a signal of increased trading activity.


Crypto World

Canadian Regulator Sets Tighter Crypto Custody Standards to Curb Losses

Canada’s top investment industry watchdog has rolled out a new set of rules aimed at tightening how crypto assets are held and safeguarded, as regulators move to limit losses linked to hacks, fraud, and weak governance.

Key Takeaways:

- Canada introduced new interim crypto custody rules to curb losses from hacks and fraud.

- Custodians now face tiered limits based on capital strength, oversight, and resilience.

- The framework adds stricter governance, insurance, and audit requirements while supporting innovation.

The Canadian Investment Regulatory Organization (CIRO) on Tuesday published its Digital Asset Custody Framework, outlining detailed expectations for dealer members that operate crypto asset trading platforms.

The framework is designed as an interim measure and will be enforced through membership terms and conditions, allowing CIRO to react more quickly to emerging risks while longer-term rules are developed.

Canada Introduces Tiered Custody Rules

CIRO said the framework directly addresses the “technological, operational, and legal risks unique to digital assets,” drawing on lessons from past failures, including the collapse of QuadrigaCX in 2019, which left thousands of customers unable to recover funds.

At the core of the new regime is a tiered, risk-based structure for crypto custodians. Under the model, custodians are placed into one of four tiers based on factors such as capital strength, regulatory oversight, insurance coverage, and operational resilience.

Top-tier custodians may hold up to 100% of client crypto assets, while lower-tier providers face progressively tighter limits, with Tier 4 custodians capped at 40%.

Dealer members that choose to custody assets internally are limited to holding no more than 20% of the total value of client crypto.
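The limits described above can be expressed as a small lookup. The following is a minimal sketch, not CIRO’s own method: the names `CUSTODY_CAP_PCT`, `max_holdable` and `within_cap` are hypothetical, and the caps for Tier 2 and Tier 3 are left unset because the article does not state them.

```python
from typing import Optional

# Maximum share of client crypto (in percent) each custodian type may hold.
# Only the figures reported in the article are filled in; Tier 2 and Tier 3
# caps are not stated there, so they are deliberately left as None.
CUSTODY_CAP_PCT: dict[str, Optional[int]] = {
    "tier_1": 100,
    "tier_2": None,
    "tier_3": None,
    "tier_4": 40,
    "internal": 20,  # dealer member holding client assets itself
}


def max_holdable(total_client_crypto: float, custodian: str) -> float:
    """Return the largest value of client crypto this custodian type may hold."""
    if custodian not in CUSTODY_CAP_PCT:
        raise KeyError(f"unknown custodian type: {custodian}")
    cap = CUSTODY_CAP_PCT[custodian]
    if cap is None:
        raise ValueError(f"cap for {custodian} is not stated in the article")
    return total_client_crypto * cap / 100


def within_cap(held: float, total_client_crypto: float, custodian: str) -> bool:
    """Check whether the amount held stays at or below the custodian's cap."""
    return held <= max_holdable(total_client_crypto, custodian)
```

For example, under these assumptions a platform with $1,000,000 in client crypto could place at most $400,000 with a Tier 4 custodian and keep at most $200,000 in internal custody.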

The framework also imposes a broad set of operational requirements. These include formal governance policies covering private key management, cybersecurity controls, incident response procedures, and third-party risk management.

Custodians must carry insurance, undergo independent audits, provide security compliance reports, and conduct regular penetration testing.

Custody agreements are required to spell out liability in cases where losses stem from negligence or preventable failures.

CIRO said the approach is intended to be proportionate, balancing stronger investor protection with room for innovation and competition.

The rules were developed in consultation with crypto trading platforms, custodians, and other industry participants, and were benchmarked against international practices.

Canada Steps Up Crypto Enforcement After Major FINTRAC Fines

The move comes amid heightened scrutiny of crypto compliance in Canada. In October, the country’s financial intelligence agency, FINTRAC, fined local exchange Cryptomus roughly $126 million for failing to report suspicious transactions tied to darknet markets and fraud.

Earlier in the year, FINTRAC also imposed penalties on offshore platforms KuCoin and Binance for similar breaches.

As a self-regulatory body, CIRO has the authority to investigate misconduct among its members and impose sanctions, including fines and suspensions.

As reported, Canada is preparing to roll out its first comprehensive framework for fiat-backed stablecoins under the 2025 federal budget, closely mirroring the regulatory path taken by the United States earlier this year.

The Bank of Canada is expected to spend $10 million over two years, starting in fiscal year 2026–2027, to oversee the rollout.

The move comes just months after the US passed its GENIUS Act in July, a landmark stablecoin bill that heightened global regulatory momentum.

The post Canadian Regulator Sets Tighter Crypto Custody Standards to Curb Losses appeared first on Cryptonews.

-

Crypto World5 days ago

Crypto World5 days agoSmart energy pays enters the US market, targeting scalable financial infrastructure

-

Crypto World5 days ago

Software stocks enter bear market on AI disruption fear with ServiceNow plunging 10%

-

Politics5 days ago

Politics5 days agoWhy is the NHS registering babies as ‘theybies’?

-

Crypto World5 days ago

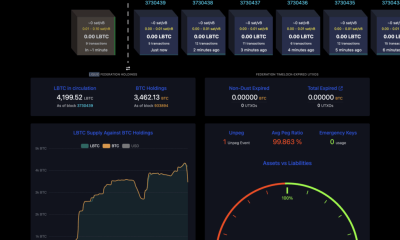

Crypto World5 days agoAdam Back says Liquid BTC is collateralized after dashboard problem

-

Video1 day ago

Video1 day agoWhen Money Enters #motivation #mindset #selfimprovement

-

NewsBeat5 days ago

NewsBeat5 days agoDonald Trump Criticises Keir Starmer Over China Discussions

-

Politics2 days ago

Politics2 days agoSky News Presenter Criticises Lord Mandelson As Greedy And Duplicitous

-

Crypto World4 days ago

Crypto World4 days agoU.S. government enters partial shutdown, here’s how it impacts bitcoin and ether

-

Sports4 days ago

Sports4 days agoSinner battles Australian Open heat to enter last 16, injured Osaka pulls out

-

Fashion5 days ago

Fashion5 days agoWeekend Open Thread – Corporette.com

-

Crypto World4 days ago

Crypto World4 days agoBitcoin Drops Below $80K, But New Buyers are Entering the Market

-

Crypto World2 days ago

Crypto World2 days agoMarket Analysis: GBP/USD Retreats From Highs As EUR/GBP Enters Holding Pattern

-

Crypto World5 days ago

Crypto World5 days agoKuCoin CEO on MiCA, Europe entering new era of compliance

-

Business5 days ago

Entergy declares quarterly dividend of $0.64 per share

-

Sports2 days ago

Sports2 days agoShannon Birchard enters Canadian curling history with sixth Scotties title

-

NewsBeat1 day ago

NewsBeat1 day agoUS-brokered Russia-Ukraine talks are resuming this week

-

NewsBeat2 days ago

NewsBeat2 days agoGAME to close all standalone stores in the UK after it enters administration

-

Crypto World9 hours ago

Crypto World9 hours agoRussia’s Largest Bitcoin Miner BitRiver Enters Bankruptcy Proceedings: Report

-

Crypto World5 days ago

Crypto World5 days agoWhy AI Agents Will Replace DeFi Dashboards

-

Tech4 days ago

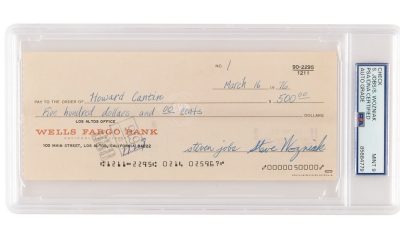

Tech4 days agoVery first Apple check & early Apple-1 motherboard sold for $5 million combined