After more than three weeks of war in Iran, the US has destroyed major components of Iran’s military, including ballistic missile sites and much of the country’s navy.

Tech

How recruitment fraud turned cloud IAM into a $2 billion attack surface

A developer gets a LinkedIn message from a recruiter. The role looks legitimate. The coding assessment requires installing a package. That package exfiltrates all cloud credentials from the developer’s machine (GitHub personal access tokens, AWS API keys, Azure service principals and more), and the adversary is inside the cloud environment within minutes.

Your email security never saw it. Your dependency scanner might have flagged the package. Nobody was watching what happened next.

The attack chain is quickly becoming known as the identity and access management (IAM) pivot, and it represents a fundamental gap in how enterprises monitor identity-based attacks. CrowdStrike Intelligence research published on January 29 documents how adversary groups operationalized this attack chain at an industrial scale. Threat actors are cloaking the delivery of trojanized Python and npm packages through recruitment fraud, then pivoting from stolen developer credentials to full cloud IAM compromise.

In one late-2024 case, attackers delivered malicious Python packages to a European FinTech company through recruitment-themed lures, pivoted to cloud IAM configurations and diverted cryptocurrency to adversary-controlled wallets.

From entry to exit, the attack never touched the corporate email gateway, so there is no digital evidence there to go on.

On a recent episode of CrowdStrike’s Adversary Universe podcast, Adam Meyers, the company’s SVP of intelligence and head of counter adversary operations, described the scale: More than $2 billion associated with cryptocurrency operations run by one adversary unit. Decentralized currency, Meyers explained, is ideal because it allows attackers to avoid sanctions and detection simultaneously. CrowdStrike’s field CTO of the Americas, Cristian Rodriguez, explained that revenue success has driven organizational specialization. What was once a single threat group has split into three distinct units targeting cryptocurrency, fintech and espionage objectives.

That case wasn’t isolated. The Cybersecurity and Infrastructure Security Agency (CISA) and security company JFrog have tracked overlapping campaigns across the npm ecosystem, with JFrog identifying 796 compromised packages in a self-replicating worm that spread through infected dependencies. The research further documents WhatsApp messaging as a primary initial compromise vector, with adversaries delivering malicious ZIP files containing trojanized applications through the platform. Corporate email security never intercepts this channel.

Most security stacks are optimized for an entry point that these attackers abandoned entirely.

When dependency scanning isn’t enough

Adversaries are shifting entry vectors in real-time. Trojanized packages aren’t arriving through typosquatting as in the past — they’re hand-delivered via personal messaging channels and social platforms that corporate email gateways don’t touch. CrowdStrike documented adversaries tailoring employment-themed lures to specific industries and roles, and observed deployments of specialized malware at FinTech firms as recently as June 2025.

CISA documented this at scale in September, issuing an advisory on a widespread npm supply chain compromise targeting GitHub personal access tokens and AWS, GCP and Azure API keys. Malicious code scanned for credentials during package installation and exfiltrated them to external domains.

Dependency scanning catches the package. That’s the first control, and most organizations have it. Almost none have the second, which is runtime behavioral monitoring that detects credential exfiltration during the install process itself.
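The second control described above can be sketched as a filter over file-read events. This is a minimal illustration, not any vendor's detection logic: the `FileReadEvent` shape, the `INSTALL_MARKERS` list and the watch list of credential paths are all assumptions made for the example, and a real sensor would collect these events from the operating system.

```python
from dataclasses import dataclass

# Illustrative watch list of default credential locations (assumption: a real
# deployment would maintain its own, longer list).
SENSITIVE_SUFFIXES = (
    ".aws/credentials",
    ".config/gcloud/application_default_credentials.json",
    ".config/gh/hosts.yml",
    ".git-credentials",
    ".npmrc",
)

# Hypothetical markers for "this process was spawned by a package install".
INSTALL_MARKERS = ("pip install", "npm install")


@dataclass(frozen=True)
class FileReadEvent:
    process: str      # name of the process that read the file
    ancestors: tuple  # command lines of parent processes, nearest first
    path: str         # absolute path that was read


def is_install_context(event):
    """True if any ancestor command line looks like a package install."""
    return any(m in cmd for cmd in event.ancestors for m in INSTALL_MARKERS)


def is_sensitive(path):
    """True if the path ends with one of the watched credential locations."""
    return any(path.endswith(suffix) for suffix in SENSITIVE_SUFFIXES)


def flag_credential_reads(events):
    """Return events where an install-spawned process read a credential file."""
    return [e for e in events if is_install_context(e) and is_sensitive(e.path)]
```

The point of the design is the conjunction: a credential file read by the developer's own CLI is normal, and an installer reading `package.json` is normal, but an installer's child process reading a credential file is the pattern worth an alert.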

“When you strip this attack down to its essentials, what stands out isn’t a breakthrough technique,” Shane Barney, CISO at Keeper Security, said in an analysis of a recent cloud attack chain. “It’s how little resistance the environment offered once the attacker obtained legitimate access.”

Adversaries are getting better at creating lethal, unmonitored pivots

Google Cloud’s Threat Horizons Report found that weak or absent credentials accounted for 47.1% of cloud incidents in the first half of 2025, with misconfigurations adding another 29.4%. Those numbers have held steady across consecutive reporting periods. This is a chronic condition, not an emerging threat. Attackers with valid credentials don’t need to exploit anything. They log in.

Research published earlier this month demonstrated exactly how fast this pivot executes. Sysdig documented an attack chain where compromised credentials reached cloud administrator privileges in eight minutes, traversing 19 IAM roles before enumerating Amazon Bedrock AI models and disabling model invocation logging.

Eight minutes. No malware. No exploit. Just a valid credential and the absence of IAM behavioral baselines.
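One way to picture the missing baseline is a simple velocity check on role assumptions per identity. The sketch below is an assumption-laden illustration: the event format, the 10-minute window and the five-role threshold are hypothetical values chosen for the example, not figures from the Sysdig research.

```python
from datetime import datetime, timedelta


def distinct_roles_in_window(events, window):
    """events: list of (timestamp, role_name) for ONE identity, in any order.

    Returns the largest number of distinct roles assumed inside any
    sliding time window of the given length.
    """
    events = sorted(events)
    best = 0
    start = 0
    for end in range(len(events)):
        # Shrink the window from the left until it spans at most `window`.
        while events[end][0] - events[start][0] > window:
            start += 1
        roles = {role for _, role in events[start:end + 1]}
        best = max(best, len(roles))
    return best


def is_suspicious(events, window=timedelta(minutes=10), max_roles=5):
    """Flag an identity that assumes more than max_roles roles per window."""
    return distinct_roles_in_window(events, window) > max_roles
```

Under these assumed thresholds, an identity that walks through 19 roles in under eight minutes is flagged, while one that assumes two roles across a working day is not.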

Ram Varadarajan, CEO at Acalvio, put it bluntly: Breach speed has shifted from days to minutes, and defending against this class of attack demands technology that can reason and respond at the same speed as automated attackers.

Identity threat detection and response (ITDR) addresses this gap by monitoring how identities behave inside cloud environments, not just whether they authenticate successfully. KuppingerCole’s 2025 Leadership Compass on ITDR found that the majority of identity breaches now originate from compromised non-human identities, yet enterprise ITDR adoption remains uneven.

Morgan Adamski, PwC’s deputy leader for cyber, data and tech risk, put the stakes in operational terms. Getting identity right, including AI agents, means controlling who can do what at machine speed. Firefighting alerts from everywhere won’t keep up with multicloud sprawl and identity-centric attacks.

Why AI gateways don’t stop this

AI gateways excel at validating authentication. They check whether the identity requesting access to a model endpoint or training pipeline holds the right token and has privileges for the timeframe defined by administrators and governance policies. They don’t check whether that identity is behaving consistently with its historical pattern or is randomly probing across infrastructure.

Consider a developer who normally queries a code-completion model twice a day and who suddenly enumerates every Bedrock model in the account after first disabling logging. An AI gateway sees a valid token. ITDR sees an anomaly.
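The difference between the two views can be sketched in a few lines. This is a toy baseline, not how any ITDR product works: the function names, the `volume_factor` threshold and the data shapes are assumptions, and the action names are treated as plain strings.

```python
def build_baseline(history):
    """history: list of days, each a list of API action names for one identity."""
    known_actions = set()
    daily_counts = []
    for day in history:
        known_actions.update(day)
        daily_counts.append(len(day))
    return {
        "known_actions": known_actions,
        "max_daily_calls": max(daily_counts, default=0),
    }


def score_day(baseline, todays_actions, volume_factor=3):
    """Return a list of findings; an empty list means the day looks normal."""
    findings = []
    # Actions this identity has never performed before.
    new_actions = sorted(set(todays_actions) - baseline["known_actions"])
    if new_actions:
        findings.append(("new_actions", new_actions))
    # Call volume far above anything seen in the baseline period.
    if len(todays_actions) > volume_factor * max(baseline["max_daily_calls"], 1):
        findings.append(("volume_spike", len(todays_actions)))
    return findings
```

A token check would pass both the normal day and the anomalous one, since the credential is valid in each case. The baseline comparison is what separates them.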

A blog post from CrowdStrike underscores why this matters now. The adversary groups it tracks have evolved from opportunistic credential theft into cloud-conscious intrusion operators. They are pivoting from compromised developer workstations directly into cloud IAM configurations, the same configurations that govern AI infrastructure access. The shared tooling across distinct units and specialized malware for cloud environments indicate this isn’t experimental. It’s industrialized.

Google Cloud’s office of the CISO addressed this directly in their December 2025 cybersecurity forecast, noting that boards now ask about business resilience against machine-speed attacks. Managing both human and non-human identities is essential to mitigating risks from non-deterministic systems.

No air gap separates compute IAM from AI infrastructure. When a developer’s cloud identity is hijacked, the attacker can reach model weights, training data, inference endpoints and whatever tools those models connect to through protocols like model context protocol (MCP).

That MCP connection is no longer theoretical. OpenClaw, an open-source autonomous AI agent that crossed 180,000 GitHub stars in a single week, connects to email, messaging platforms, calendars and code execution environments through MCP and direct integrations. Developers are installing it on corporate machines without a security review.

Cisco’s AI security research team called the tool “groundbreaking” from a capability standpoint and “an absolute nightmare” from a security one, reflecting exactly the kind of agentic infrastructure a hijacked cloud identity could reach.

The IAM implications are direct. In an analysis published February 4, CrowdStrike CTO Elia Zaitsev warned that “a successful prompt injection against an AI agent isn’t just a data leak vector. It’s a potential foothold for automated lateral movement, where the compromised agent continues executing attacker objectives across infrastructure.”

The agent’s legitimate access to APIs, databases and business systems becomes the adversary’s access. This attack chain doesn’t end at the model endpoint. If an agentic tool sits behind it, the blast radius extends to everything the agent can reach.

Where the control gaps are

This attack chain maps to three stages, each with a distinct control gap and a specific action.

Entry: Trojanized packages delivered through WhatsApp, LinkedIn and other non-email channels bypass email security entirely. CrowdStrike documented employment-themed lures tailored to specific industries, with WhatsApp as a primary delivery mechanism. The gap: Dependency scanning catches the package, but not the runtime credential exfiltration. Suggested action: Deploy runtime behavioral monitoring on developer workstations that flags credential access patterns during package installation.

Pivot: Stolen credentials enable IAM role assumption invisible to perimeter-based security. In CrowdStrike’s documented European FinTech case, attackers moved from a compromised developer environment directly to cloud IAM configurations and associated resources. The gap: No behavioral baselines exist for cloud identity usage. Suggested action: Deploy ITDR that monitors identity behavior across cloud environments, flagging lateral movement patterns like the 19-role traversal documented in the Sysdig research.

Objective: AI infrastructure trusts the authenticated identity without evaluating behavioral consistency. The gap: AI gateways validate tokens but not usage patterns. Suggested action: Implement AI-specific access controls that correlate model access requests with identity behavioral profiles, and enforce logging that the accessing identity cannot disable.

Jason Soroko, senior fellow at Sectigo, identified the root cause: Look past the novelty of AI assistance, and the mundane error is what enabled it. Valid credentials are exposed in public S3 buckets. A stubborn refusal to master security fundamentals.

What to validate in the next 30 days

Audit your IAM monitoring stack against this three-stage chain. If you have dependency scanning but no runtime behavioral monitoring, you can catch the malicious package but miss the credential theft. If you authenticate cloud identities but don’t baseline their behavior, you won’t see the lateral movement. If your AI gateway checks tokens but not usage patterns, a hijacked credential walks straight to your models.
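The three-stage audit can be written down as a small checklist. The control names below are labels invented for this sketch, not product or standard names; the stage descriptions paraphrase the chain described above.

```python
from typing import NamedTuple


class Stage(NamedTuple):
    name: str
    baseline_control: str  # the control most organizations already have
    missing_control: str   # the control the article says is usually absent
    consequence: str       # what goes unseen without the missing control


CHAIN = [
    Stage("entry", "dependency_scanning", "runtime_behavioral_monitoring",
          "malicious package is caught but the credential theft is missed"),
    Stage("pivot", "cloud_authentication", "identity_behavior_baselining",
          "lateral movement across IAM roles goes unseen"),
    Stage("objective", "ai_gateway_token_checks", "ai_usage_pattern_checks",
          "a hijacked credential walks straight to the models"),
]


def find_gaps(deployed_controls):
    """Return (stage, missing control, consequence) for each uncovered stage."""
    return [
        (s.name, s.missing_control, s.consequence)
        for s in CHAIN
        if s.missing_control not in deployed_controls
    ]
```

Calling `find_gaps` with the set of controls actually deployed returns one entry per stage that still has a gap, which is a reasonable starting agenda for the 30-day review.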

The perimeter isn’t where this fight happens anymore. Identity is.

Tech

This Meta smartglasses-detecting app is a great model for Apple Glass developers to follow

Meta’s smart glasses are being used to film people in bathrooms, courts, and doctor’s offices. A new app just released on the App Store is the perfect example of the safeguards that should be implemented when Apple launches its smart glasses.

Meta Ray-Ban Display. Image source: Meta

The Apple Vision Pro isn’t exactly stealthy. Meta’s Ray-Bans are, and are being used mainly to violate other people’s privacy.

I’ve already talked at length about the issue with smart glasses. Especially if they’re glasses designed to be relatively unclockable at a distance.


Tech

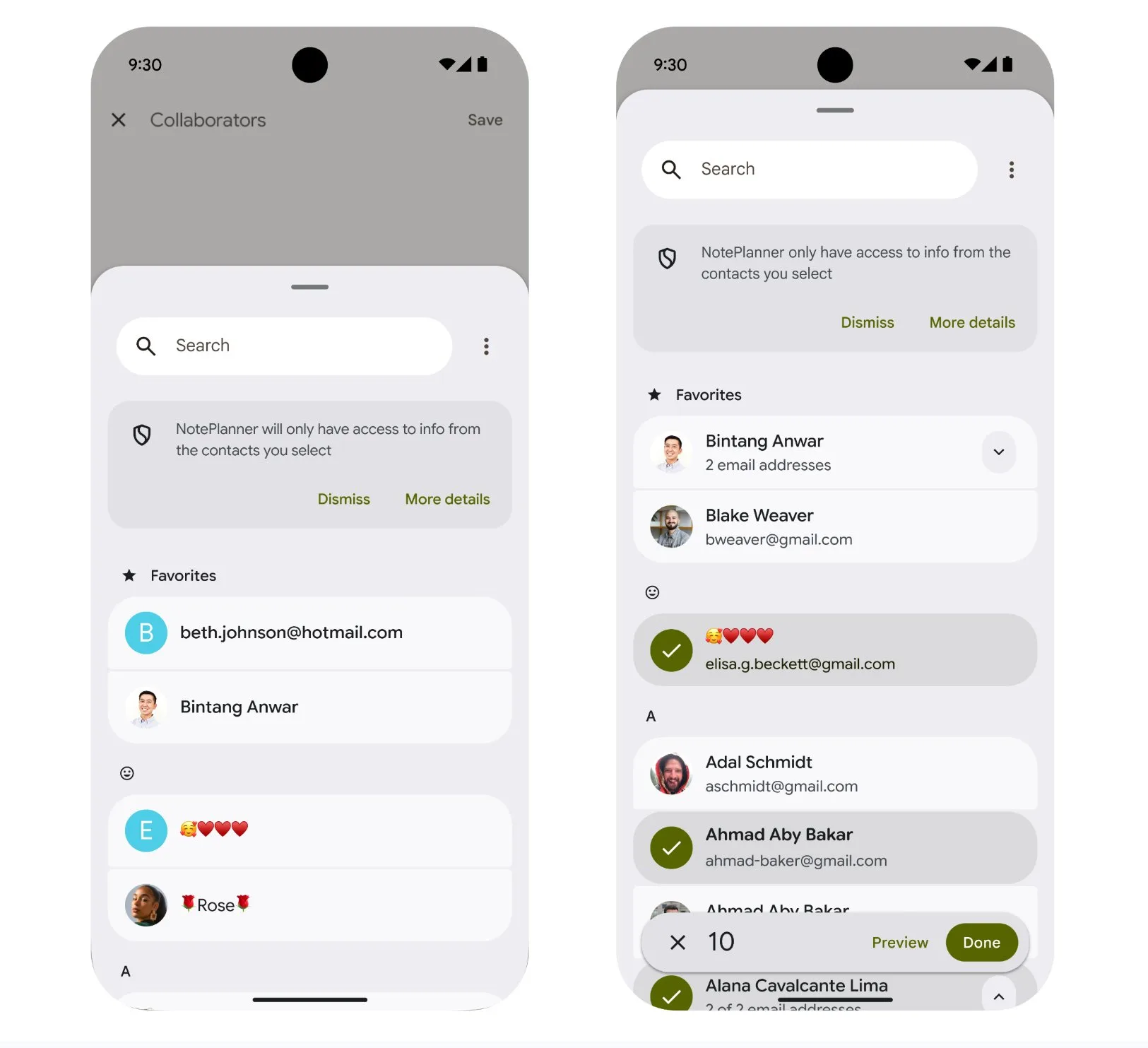

Android 17’s new Contact Picker stops apps from accessing your entire contact list

Android 17 is getting a new Contact Picker that changes how apps access your contacts list. Earlier reports hinted at this shift toward tighter privacy, and now Google is rolling it out.

Instead of giving apps full access to your address book, you will be able to choose exactly which contacts they can see. Previously, apps often relied on a broad permission that exposed your entire contact list.

That meant sharing more data than necessary, often without even realizing it. With this update, Android is trying to limit that exposure while keeping things simple for you.

How the new Contact Picker keeps your contacts private

The new Contact Picker in Android 17 offers a secure and searchable interface where you select specific contacts to share. Apps only receive the data you approve, not your full address book. This reduces unnecessary access and gives you more control over your information.

For apps built for Android 17 devices or newer, the system automatically routes existing contact pick requests through the new, more secure Contact Picker interface. That means even apps that have not been fully updated may still benefit from better privacy protections.

Developers are also being pushed to adopt the new picker directly. It supports features like selecting multiple contacts in one go, making it more flexible than older methods. Apps can also request only the exact details they need, like a phone number or email address.
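The data-minimization idea behind the picker can be modeled in a few lines. This is a language-neutral sketch in Python, not the Android API: `pick_contacts`, the field names and the address-book shape are all hypothetical, and a real app would go through the platform's own picker interface.

```python
def pick_contacts(address_book, selected_ids, requested_fields):
    """Return only the user-selected contacts, trimmed to the requested fields.

    address_book: list of dicts, each with an "id" key plus contact details.
    selected_ids: ids the user chose in the picker UI.
    requested_fields: the specific details the app asked for.
    """
    return [
        {field: contact[field] for field in requested_fields if field in contact}
        for contact in address_book
        if contact["id"] in selected_ids
    ]
```

Under this model the app never holds the full address book. It receives one trimmed record per contact the user selected, containing only the fields it asked for.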

Android 17 changes how apps interact with your contacts

With this update, Android is moving away from blanket permissions and toward more precise, user-driven access. For you, that means fewer apps quietly pulling your entire contact list in the background.

This update does not just tighten privacy; it also sets a new standard for how apps should handle personal data going forward.

Recently, Android rolled out a new contact feature with customizable calling cards, which makes it easier to personalize how you appear on calls. Google is also working on a tap-to-share contact feature to quickly exchange contact details between devices, just like Apple’s NameDrop.

Tech

Postal Service to Impose Its First-Ever Fuel Surcharge on Packages

The U.S. Postal Service plans to impose its first-ever fuel surcharge on packages, adding an 8% fee starting in April as it struggles with rising fuel costs and ongoing financial pressure. The surcharge will not apply to letter mail and is currently expected to remain in place until January 2027. The Wall Street Journal reports: Other parcel carriers, including FedEx and United Parcel Service, have imposed fuel surcharges, as well as a basket of other surcharges and fees, for years. Both FedEx and UPS have dramatically raised their fuel surcharges in recent weeks as the price of oil has increased amid the turmoil in the Middle East. […] The post office has been trying to increase the volume of packages it delivers. It previously differentiated itself from commercial carriers by saying that it doesn’t apply residential, Saturday delivery or fuel or remote-delivery surcharges.

Tech

Iran’s drone war: How the cheap, accurate Shahed-136 is changing warfare

One advantage Iran retains, though, is the Shahed-136. The Shahed, a one-way, single-use attack drone, is small, inexpensive, and highly accurate. Iranian drone attacks have led to the death of six US service members, damaged oil and natural gas facilities in the United Arab Emirates, Qatar, and Saudi Arabia, and are quickly depleting America’s interceptor stockpiles.

Michael C. Horowitz is a senior fellow for technology and innovation at the Council on Foreign Relations and a professor at the University of Pennsylvania. He says these drones have ushered in a new era of warfare: “The way that I would think about this is just like the introduction of the machine gun at scale in World War I,” he told Today, Explained co-host Noel King.

Noel talks with Horowitz about what the drones can do, how the US can counter them, and what they mean for the future of warfare.

Below is an excerpt of their conversation, edited for length and clarity. There’s much more in the full podcast, so listen to Today, Explained wherever you get podcasts, including Apple Podcasts, Pandora, and Spotify.

The US has done damage to Iran’s missile sites and military bases. But Iran still has cheap, easy-to-assemble drones that pose a real threat on the battlefield. Michael Horowitz, senior fellow at the Council on Foreign Relations, tell us about these drones.

These one-way attack drones, like the Shahed-136, are used essentially as a substitute for a cruise missile. Iran is using them to do things like target American air defense radars, which are necessary to find other drones and shoot them down. Iran is using them to target government buildings like embassies. Iran is using them to target critical infrastructure that countries in the Middle East use for oil and gas.

The thing that somebody like me worries about is that American aircraft carriers in general are extremely well protected. A drone in and of itself would never take out an American aircraft carrier. They’re just too small. But a lot of them could. And the real risk here is that suppose you fired not one, not a hundred, but 500 at an American aircraft carrier at once. Even if the US could shoot down 450 of them, that’s still a lot that are getting through.

The scale of these one-way attack drones that you can launch generates the potential ability to not just target the kinds of infrastructure and things that we’re seeing Iran doing, but really important military targets as well, including our ships.

Iran presumably does not have an infinite number of these drones. How many do they actually have on hand?

We don’t actually know exactly how many Iran has on hand, but we know that they have thousands. We also know, for example, that Russia has the ability to produce a thousand or more every couple of weeks of their knockoff of the Shahed-136.

Iran likely has the ability to do something in that range as well. The US and Israel are obviously targeting their manufacturing capabilities, but Iran has a lot of manufacturing that’s more underground, and because you can use commercial manufacturing to build these systems, you can do that almost anywhere.

That’s one of the reasons why I have been very vocal that the United States needs to invest more in these capabilities. And why I was thrilled, frankly, in the context of this conflict, regardless of what one thinks of the conflict itself, to see the US use its first precise mass system, the LUCAS drone, against Iran.

The American military arsenal is based on quality over quantity. It’s based on having small numbers of exquisite, expensive, hard-to-produce systems that are the best in the world, but they were designed to be essentially bespoke products. They were not designed for mass production. The issue is that that’s not enough anymore.

In a world that required having those expensive, exquisite systems to do things like accurately fire weapons at your adversaries, then that was a unique advantage for the United States military. But because everybody — both smaller states and militant groups — can launch more accurate precision strikes at lots of different targets, it means that just having those kinds of systems is not enough for the United States.

If Iran is firing a $35,000 Shahed-136 at the United States, and the United States is shooting it down with a weapon that costs anywhere between $1 million per shot and $4 million per shot, you do not need to be a defense planner to understand that that cost curve is in the wrong direction.
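The cost curve can be worked through with the figures quoted in the interview. The sketch below assumes one interceptor per drone shot down, which is a simplification made for the arithmetic, not a claim about actual engagement doctrine.

```python
SHAHED_COST = 35_000               # per-drone cost quoted in the interview
INTERCEPTOR_COST_LOW = 1_000_000   # low end of the quoted per-shot cost
INTERCEPTOR_COST_HIGH = 4_000_000  # high end of the quoted per-shot cost


def exchange_ratio(interceptor_cost, drone_cost=SHAHED_COST):
    """Defender dollars spent per attacker dollar, for one drone shot down."""
    return interceptor_cost / drone_cost


def salvo_costs(launched, intercepted, interceptor_cost, drone_cost=SHAHED_COST):
    """Total cost to each side for one salvo (assumes one shot per intercept)."""
    return {
        "attacker": launched * drone_cost,
        "defender": intercepted * interceptor_cost,
        "leakers": launched - intercepted,
    }
```

With these inputs the defender spends roughly 29 to 114 times what the attacker does per drone. For the 500-drone salvo described earlier in the conversation, with 450 shot down, the attacker spends $17.5 million while the defender spends between $450 million and $1.8 billion, and 50 drones still get through.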

How did Iran get so well-armed?

Necessity is the mother of invention. A country like Iran has felt intense security threats in the region. In part that’s because of Iran’s own ideology: If you’re going to roll around chanting “death to America,” then you need to be prepared for the United States and the region to have some questions.

Iran fought a war against Iraq in the 1980s. Iran has been in continual tussles with various neighbors over the years. And so Iran built up a pretty extensive military arsenal. Not anywhere near as good as the United States or Israel, but Iran, in some ways because they had to, was a pioneer in developing these low-cost, long-range precise mass weapons that they then shared with Russia. And Russia’s used hundreds of thousands against the Ukrainians.

Is there a way for the US to defend against these Iranian drones without spending so much money?

The US has options. It’s just going to take some time to get there.

Another country where necessity has been the mother of invention has been Ukraine, facing down the Russian invaders now for four years. And because Ukraine is the victim of dozens to hundreds of launches of these Shaheds almost every day, Ukraine has pioneered lower-cost air defense systems using even less expensive drones, for example, to take out those $35,000 drones, or even in some cases using old World War II-style anti-aircraft guns.

If a fairly cheap unmanned drone can overwhelm a billion-dollar aircraft carrier, does the US need to start rethinking the way it fights wars?

One hundred percent. The plan to rely only on these exquisite, expensive, hard-to-produce weapons is no longer going to be enough for the United States. That would especially be true in a war against the most sophisticated potential adversaries the United States could face like China or Russia.

What the United States needs to pursue is what’s called a high/low mix of forces. Some of those high-end systems like Tomahawk missiles and F-35s, things that the United States has worked on for a generation, but then also a new wave of these lower-cost systems that need to be treated not as the kind of thing you might hold onto for 50 years, but as cheaper, more disposable, and upgraded on a regular basis.

What do you think war looks like a generation from now?

The character of warfare is always in flux. The way that I would think about this is just like the introduction of the machine gun at scale in World War I. It fundamentally changed the character of warfare.

The machine gun then just became a ubiquitous weapon. Everybody had machine guns. And then in World War II it was the tank. And everywhere since then, there have been tanks.

What we are now seeing between the Russia-Ukraine war and this war with Iran is these one-way attack drones. It’s not that they’re the only things that militaries need, but these are now going to be part of the arsenal moving forward. And if you don’t have them, and if you can’t defend against them, you’re going to be in trouble.

Tech

Oracle converges the AI data stack to give enterprise agents a single version of truth

Enterprise data teams moving agentic AI into production are hitting a consistent failure point at the data tier. Agents built across a vector store, a relational database, a graph store and a lakehouse require sync pipelines to keep context current. Under production load, that context goes stale.

Oracle, whose database infrastructure runs the transaction systems of 97% of Fortune Global 100 companies by the company’s own count, is now making a direct architectural argument that the database is the right place to fix that problem.

Oracle this week announced a set of agentic AI capabilities for Oracle AI Database, built around a direct architectural counter-argument to that pattern.

The core of the release is the Unified Memory Core, a single ACID (Atomicity, Consistency, Isolation, and Durability)-transactional engine that processes vector, JSON, graph, relational, spatial and columnar data without a sync layer. Alongside that, Oracle announced Vectors on Ice for native vector indexing on Apache Iceberg tables, a standalone Autonomous AI Vector Database service and an Autonomous AI Database MCP Server for direct agent access without custom integration code.

The news isn’t just that Oracle is adding new features. It’s that the world’s largest database vendor has recognized that AI has changed what enterprises need, beyond what its namesake database was providing.

“As much as I’d love to tell you that everybody stores all their data in an Oracle database today — you and I live in the real world,” Maria Colgan, Vice President, Product Management for Mission-Critical Data and AI Engines, at Oracle told VentureBeat. “We know that that’s not true.”

Four capabilities, one architectural bet against the fragmented agent stack

Oracle’s release spans four interconnected capabilities. Together they form the architectural argument that a converged database engine is a better foundation for production agentic AI than a stack of specialized tools.

Unified Memory Core. Agents reasoning across multiple data formats simultaneously — vector, JSON, graph, relational, spatial — require sync pipelines when those formats live in separate systems. The Unified Memory Core puts all of them in a single ACID-transactional engine. Under the hood it is an API layer over the Oracle database engine, meaning ACID consistency applies across every data type without a separate consistency mechanism.

“By having the memory live in the same place that the data does, we can control what it has access to the same way we would control the data inside the database,” Colgan explained.
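The consistency argument can be illustrated with any ACID engine. The sketch below uses SQLite from Python's standard library purely as a stand-in: it is not Oracle's API and says nothing about how the Unified Memory Core is implemented. The table names and the `update_order` helper are hypothetical. It shows a relational row and its embedding changing in one transaction, so a reader never sees one updated without the other.

```python
import json
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, status TEXT)")
conn.execute("CREATE TABLE order_vectors (order_id INTEGER PRIMARY KEY, embedding TEXT)")
conn.execute("INSERT INTO orders VALUES (1, 'open')")
conn.execute("INSERT INTO order_vectors VALUES (1, ?)", (json.dumps([0.1, 0.2]),))
conn.commit()


def update_order(conn, order_id, status, embedding, fail=False):
    """Update the row and its embedding atomically; return True on commit."""
    try:
        with conn:  # commits on success, rolls back if an exception escapes
            conn.execute("UPDATE orders SET status = ? WHERE id = ?",
                         (status, order_id))
            if fail:
                # Stand-in for a crash between the two writes.
                raise RuntimeError("simulated failure between writes")
            conn.execute("UPDATE order_vectors SET embedding = ? WHERE order_id = ?",
                         (json.dumps(embedding), order_id))
        return True
    except RuntimeError:
        return False


def snapshot(conn, order_id):
    """Read the status and embedding as an agent would see them."""
    status = conn.execute("SELECT status FROM orders WHERE id = ?",
                          (order_id,)).fetchone()[0]
    raw = conn.execute("SELECT embedding FROM order_vectors WHERE order_id = ?",
                       (order_id,)).fetchone()[0]
    return status, json.loads(raw)
```

With two separate systems joined by a sync pipeline, the simulated failure would leave the status updated and the embedding stale. Inside one transaction, the failed attempt leaves both values untouched.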

Vectors on Ice. For teams running data lakehouse architectures on the open-source Apache Iceberg table format, Oracle now creates a vector index inside the database that references the Iceberg table directly. The index updates automatically as the underlying data changes and works with Iceberg tables that are managed by Databricks and Snowflake. Teams can combine Iceberg vector search with relational, JSON, spatial or graph data stored inside Oracle in a single query.

Autonomous AI Vector Database. A fully managed, free-to-start vector database service built on the Oracle 26ai engine. The service is designed as a developer entry point with a one-click upgrade path to full Autonomous AI Database when workload requirements grow.

Autonomous AI Database MCP Server. Lets external agents and MCP clients connect to Autonomous AI Database without custom integration code. Oracle’s row-level and column-level access controls apply automatically when an agent connects, regardless of what the agent requests.

“Even though you are making the same standard API call you would make with other platforms, the privileges that user has continue to kick in when the LLM is asking those questions,” Colgan said.
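The enforcement model described here, where policy applies regardless of what the agent requests, can be sketched generically. Nothing below is Oracle's implementation or the MCP protocol: the `POLICIES` structure, the user name and the `query` function are hypothetical, and the data is made up for the example.

```python
ROWS = [
    {"region": "EU", "customer": "A", "balance": 100, "tax_id": "EU-111"},
    {"region": "US", "customer": "B", "balance": 250, "tax_id": "US-222"},
    {"region": "EU", "customer": "C", "balance": 75, "tax_id": "EU-333"},
]

# Hypothetical per-user policy: which rows and which columns are visible.
POLICIES = {
    "eu_analyst": {
        "row_filter": lambda row: row["region"] == "EU",
        "columns": {"region", "customer", "balance"},
    },
}


def query(user, requested_columns, rows=ROWS):
    """Answer a request on behalf of `user`, enforcing policy in the data layer.

    The caller (an agent or LLM tool) can ask for anything; columns and rows
    outside the user's policy are dropped before results are returned.
    """
    policy = POLICIES[user]
    allowed = [c for c in requested_columns if c in policy["columns"]]
    return [
        {c: row[c] for c in allowed}
        for row in rows
        if policy["row_filter"](row)
    ]
```

Because the filter sits below the agent, a prompt that asks for every column of every row still yields only what the connecting user is entitled to see.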

Standalone vector databases are a starting point, not a destination

Oracle’s Autonomous AI Vector Database enters a market occupied by purpose-built vector services including Pinecone, Qdrant and Weaviate. The distinction Oracle is drawing is about what happens when vector alone is not enough.

“Once you are done with vectors, you do not really have an option,” Steve Zivanic, Global Vice President, Database and Autonomous Services, Product Marketing at Oracle, told VentureBeat. “With this, you can get graph, spatial, time series — whatever you may need. It is not a dead end.”

Holger Mueller, principal analyst at Constellation Research, said that the architectural argument is credible precisely because other vendors cannot make it without moving data first. Other database vendors require transactional data to move to a data lake before agents can reason across it. Oracle’s converged legacy, in his view, gives it a structural advantage that is difficult to replicate without a ground-up rebuild.

Not everyone sees the feature set as differentiated. Steven Dickens, CEO and principal analyst at HyperFRAME Research, told VentureBeat that vector search, RAG integration and Apache Iceberg support are now standard requirements across enterprise databases — Postgres, Snowflake and Databricks all offer comparable capabilities.

“Oracle’s move to label the database itself as an AI Database is primarily a rebranding of its converged database strategy to match the current hype cycle,” Dickens said. In his view the real differentiation Oracle is claiming is not at the feature level but at the architectural level — and the Unified Memory Core is where that argument either holds or falls apart.

Where enterprise agent deployments actually break down

The four capabilities Oracle shipped this week are a response to a specific and well-documented production failure mode. Enterprise agent deployments are not breaking down at the model layer. They are breaking down at the data layer, where agents built across fragmented systems hit sync latency, stale context and inconsistent access controls the moment workloads scale.

Matt Kimball, vice president and principal analyst at Moor Insights and Strategy, told VentureBeat the data layer is where production constraints surface first.

“The struggle is running them in production,” Kimball said. “The gap is seen almost immediately at the data layer — access, governance, latency and consistency. These all become constraints.”

Dickens frames the core mismatch as a stateless-versus-stateful problem. Most enterprise agent frameworks store memory as a flat list of past interactions, which means agents are effectively stateless while the databases they query are stateful. The lag between the two is where decisions go wrong.

“Data teams are exhausted by fragmentation fatigue,” Dickens said. “Managing a separate vector store, graph database and relational system just to power one agent is a DevOps nightmare.”

That fragmentation is precisely what Oracle’s Unified Memory Core is designed to eliminate. The control plane question follows directly.

“In a traditional application model, control lives in the app layer,” Kimball said. “With agentic systems, access control breaks down pretty quickly because agents generate actions dynamically and need consistent enforcement of policy. By pushing all that control into the database, it can all be applied in a more uniform way.”

What this means for enterprise data teams

The question of where control lives in an enterprise agentic AI stack is not settled.

Most organizations are still building across fragmented systems, and the architectural decisions being made now — which engine anchors agent memory, where access controls are enforced, how lakehouse data gets pulled into agent context — will be difficult to undo at scale.

The distributed data challenge is still the real test.

“Data is increasingly distributed across SaaS platforms, lakehouses and event-driven systems, each with its own control plane and governance model,” Kimball said. “The opportunity now is extending that model across the broader, more distributed data estates that define most enterprise environments today.”

Tech

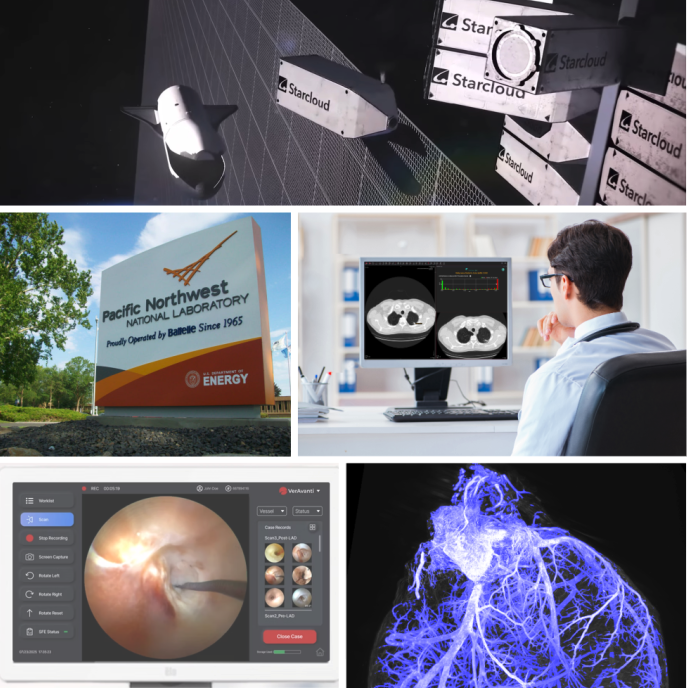

GeekWire Awards: Breakthrough tech for healthcare and data centers highlight Innovation of the Year

From the research lab to the healthcare clinic and all the way above Earth — the Pacific Northwest continues to produce game-changing innovation.

The finalists for Innovation of the Year at the 2026 GeekWire Awards (Alpenglow Biosciences; Pacific Northwest National Laboratory; RevealDx; Starcloud; and VerAvanti) include companies and organizations thinking outside the box to develop cutting-edge technology that helps power data centers, modernize healthcare diagnostics, and more.

Now in its 18th year, the GeekWire Awards is the premier event recognizing the top leaders, companies and breakthroughs in Pacific Northwest tech, bringing together hundreds of people to celebrate innovation and the entrepreneurial spirit. It takes place May 7 at the Showbox SoDo in Seattle.

Microsoft’s Majorana 1, a new quantum processor based on a novel state of matter, won Innovation of the Year honors last year.

This category is presented by Astound Business Solutions.

Continue reading for information on Innovation of the Year finalists, who were chosen by a panel of independent judges from community nominations. You can help pick the winner: Cast your ballot here or in the embedded form at the bottom. Voting runs through April 10.

Seattle-based Alpenglow Biosciences, which spun out of the University of Washington in 2018, has developed tools to quickly create multi-dimensional images from biological tissue samples and analyze the results. The company recently announced a partnership with PathNet, a leading U.S. pathology laboratory, to help commercialize use of the startup’s 3D microscope technology in clinical settings.

Alpenglow is led by CEO and co-founder Dr. Nick Reder, who helped launch the company to solve problems he experienced as a medical resident in pathology at the UW.

Pacific Northwest National Laboratory, known as PNNL, is a 60-year-old institution managed by the U.S. Department of Energy that performs research in areas including energy, chemistry, data analytics and other science and technology fields. More than 210 companies have their roots at the laboratory, and 3,213 patents have been issued for research that started at PNNL.

Some of the latest work from the lab includes research on quantum computing; the application of new AI models for scientific discovery; the intersection of robotics and lab experiments; and tiny fish monitoring technology.

RevealDx is a Seattle-based startup that develops software aimed at improving the way healthcare professionals diagnose lung cancer. The company’s product uses machine learning techniques to assess the probability that lung nodules found on chest CT scans are cancerous — an alternative to more invasive procedures. RevealDx recently received FDA clearance for its RevealAI-Lung imaging software.

The company is led by CEO Chris Wood, who previously founded Seattle health tech company Clario Medical Imaging and was CTO at Intelerad Medical Systems.

Starcloud is building out space-based data centers, powered by grids of massive solar panels that offer an alternative to data centers on Earth amid a surge in energy demand from the AI boom. The Redmond, Wash.-based company, previously known as Lumen Orbit, graduated from Y Combinator in 2024. NVIDIA showed off Starcloud’s data center at the beginning of Jensen Huang’s keynote at the chip giant’s recent GTC conference.

Starcloud is led by CEO and co-founder Philip Johnston, a former associate at McKinsey & Co. who also co-founded an e-commerce venture called Opontia.

VerAvanti, a Bothell, Wash.-based medical technology company founded in 2013, develops ultra-thin imaging scopes that can be used for diagnosis in cardiology, neurosurgery, and peripheral artery work. The company raised a $31.5 million round last year and later announced a $5 million investment from a Middle Eastern family office that operates as a medical device distributor.

VerAvanti is led by CEO Gerald McMorrow, who previously helped launch Verathon, another medical device company that sold in 2009 for $300 million.

Astound Business Solutions is the presenting sponsor of the 2026 GeekWire Awards. Thanks also to gold sponsors Amazon Sustainability, Baird, BECU, JLL, First Tech and Wilson Sonsini, and silver sponsor Prime Team Partners.

The event will feature a VIP reception, a sit-down dinner and fun entertainment mixed in. Tickets go fast. A limited number of half-table and full-table sponsorships are available. Contact events@geekwire.com to reserve a spot for your team today.

Tech

Google bumps up Q Day deadline to 2029, far sooner than previously thought

Google is dramatically shortening its readiness deadline for the arrival of Q Day, the point at which existing quantum computers can break public-key cryptography algorithms that secure decades’ worth of secrets belonging to militaries, banks, governments, and nearly every individual on earth.

In a post published on Wednesday, Google said it is giving itself until 2029 to prepare for this event. The post went on to warn that the rest of the world needs to follow suit by adopting PQC—short for post-quantum cryptography—algorithms to augment or replace elliptic curves and RSA, both of which will be broken.

The end is nigh

“As a pioneer in both quantum and PQC, it’s our responsibility to lead by example and share an ambitious timeline,” wrote Heather Adkins, Google’s VP of security engineering, and Sophie Schmieg, a senior cryptography engineer. “By doing this, we hope to provide the clarity and urgency needed to accelerate digital transitions not only for Google, but also across the industry.”

Separately, Google detailed its timeline for making Android quantum resistant, the first time the company has publicly discussed PQC support on the operating system. Starting with the beta version, Android 17 will support ML-DSA, a digital signing algorithm standard advanced by the National Institute of Standards and Technology. ML-DSA will be added to Android’s hardware root of trust. The move will allow developers to have PQC keys for signing their apps and verifying other software signatures.

Google said it now has ML-DSA integrated into the Android verified boot library, which secures the boot sequence against manipulation. Google engineers are also beginning to move remote attestation to PQC. Remote attestation is a feature that allows a device to prove its current state to a remote server, for example to show a server on a corporate network that it’s running a secure OS version.

Tech

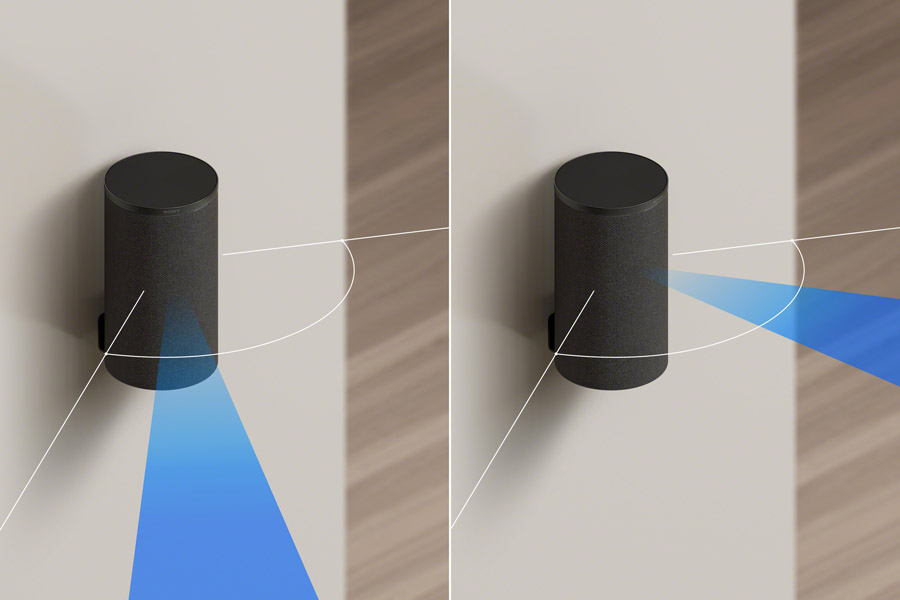

Sony’s Best Soundbars Just Got a Bass Boost (And Two Little Brothers)

Today Sony unveiled two new soundbars in their BRAVIA Theater line, the BRAVIA Theater Bar 5 (HTB-500) and BRAVIA Theater Bar 7 (HTA-7100). The Bar 5 is a simple two-piece 3.1-channel system that comes with the bar itself plus a powered subwoofer and can handle Dolby Atmos or DTS:X surround via virtualized surround sound. The Bar 7 is a step-up model that can be used on its own, or enhanced with rear speakers and a powered subwoofer (or two!).

The company also announced a new pair of wireless surround speakers (BRAVIA Theater Rear 9), which are compatible with the new Bar 7 and the existing Bar 8 and Bar 9 as well as Sony’s latest generation of AVRs (audio/video receivers). Sony also announced three new subwoofers (BRAVIA Theater Sub 7, Sub 8 and Sub 9) that will be compatible with the new and existing soundbar-based systems and receivers.

But I’ve saved the best news for last. Lovers of deep, powerful cinematic bass will be happy to hear that Sony now supports the use of two subwoofers with the new BRAVIA Theater Bar 7 and the existing Bar 8 and Bar 9 soundbars. By using two subwoofers, you can get a more uniform, more extended bass response, even in larger rooms with open floor plans. This dual-sub functionality will come with the BRAVIA Theater Bar 7 right out of the box and will be added to the Bar 8 and Bar 9 via a free over-the-air software update.

In our review of the BRAVIA Theater Bar 9 system, our main gripe was that the bass response wasn’t as extended or powerful as we would have liked, even using their best (at the time) powered subwoofer. With the new larger Sub 9 subwoofer and the ability to add dual subs, it appears this criticism has been addressed. And, based on a quick audition of a system that used two Sub 9 subwoofers, we believe it will be more than up to the task of providing deep, precise bass even in large rooms.

The BRAVIA Theater Bar 7 supports Dolby Atmos, DTS:X, and Sony 360 Reality Audio, either on its own or with the addition of a pair of rear speakers and one or two powered subwoofers. With the addition of a subwoofer and rear speakers, the Bar 7 becomes IMAX Enhanced Certified, and can reproduce the IMAX Enhanced DTS:X soundtracks currently available on Disney+ and Sony Pictures Core streaming services, as well as select Blu-ray Discs. The Theater Bar 7 is compatible with Sony’s current Rear 8 speakers and the new Rear 9 speakers. For subs, the BRAVIA Theater Bar 7 can work with one or two of the new Sub 7, Sub 8 or Sub 9 subwoofers.

A Sony rep told us the company’s current BRAVIA Theater Quad system runs on a different chipset than the BRAVIA soundbars, so it will not be getting the dual-sub upgrade (at least not yet).

BRAVIA Theater Bar 7 – A Great Choice for Medium Sized Screens

Smaller than the BRAVIA Theater Bar 8 ($999.99) and Bar 9 ($1,499.99), the Theater Bar 7 ($869.99) still packs a punch. It features a total of nine drivers including front-firing, up-firing and side-firing drivers to create a 5.1.2-channel system on its own, expandable to 7.2.4 with the addition of two subwoofers and a pair of the Rear 9 speakers. You can also use the more affordable Rear 8 speakers, but those lack up-firing drivers so you won’t get as pronounced a height effect as you will with the Rear 9s. Like the Bar 8 and Bar 9, the Bar 7 includes Sony’s 360 Spatial Sound Mapping (360 SSM) to create an immersive and enveloping soundstage, no matter where you place your speakers.

Like the Bar 8 and Bar 9, the Bar 7 can be controlled with the BRAVIA Connect mobile app, and can be fully integrated into the TV’s settings menu when used with a compatible Sony BRAVIA TV. It also supports Sony’s AI-based Voice Zoom 3 feature for intelligent enhanced dialogue reproduction that raises voices with minimal impact to the rest of the soundtrack (also requires a compatible Sony TV).

Holding Down the Rear

Sony’s new BRAVIA Theater Rear 9 speakers ($749.99/pair) are replacing the current SA-RS5 in the line-up. The cylinder-shaped Rear 9s appear similar in cosmetic design to their predecessors, but the new ones come with an integrated swivel stand which can help direct the rear channel sounds to the listening area better. This is particularly useful when your seating area or room layout is not ideal, like when your couch is right up against a rear wall. Directing the sound will help Sony’s 360 Spatial Sound Mapping work even better to create an immersive dome of sound, even with non-ideal speaker layouts.

Bringing Up the Bass

Sony’s new BRAVIA Theater Sub 7 ($329), Sub 8 ($499) and Sub 9 ($899) offer customers three options based on budget and size preferences. As the size goes up, so does the price as well as the bass extension and output.

As for driver sizes and configuration, the Sub 7 features a 130mm (5.1-inch) bass driver, the Sub 8 has a single 200mm (7.9-inch) bass driver and the Sub 9 includes dual 200mm (7.9-inch) drivers in a vibration-cancelling dual-opposing driver configuration for deep bass extension and low distortion. With dual subwoofers now an option, you can always start with one sub and add a second one later if you feel like you need more bass.

The Bottom Line

We’re surprised (and pleased) to see Sony addressing the one main area of weakness of their soundbar-based systems: low bass reproduction. While we don’t have full specifications of the new woofers, we have heard a pair of Sub 9s in action and were quite impressed with what we heard. Of course, with this new functionality and performance, up goes the price. A fully spec’ed out system with the BRAVIA Theater Bar 9, Rear 9 speakers and pair of Sub 9 subwoofers will set you back around $4,000 (MSRP) and that’s quite a price tag for a soundbar-based system. But for those who want a simple, elegant, high performance and cosmetically pleasing solution, particularly for use with a large screen Sony BRAVIA TV or Projector, it may actually be worth the investment.
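For readers who want to check the "around $4,000" figure, here is a minimal Python sketch that adds up the list prices quoted in this article for a Bar 9, one pair of Rear 9 speakers and two Sub 9 subwoofers. The helper names (`system_total_cents`, `format_usd`) are made up for illustration, and the math ignores tax, shipping and any discounts.

```python
# List prices in cents, as quoted in this article (MSRP).
PRICES_CENTS = {
    "Bar 9": 149_999,
    "Rear 9 (pair)": 74_999,
    "Sub 9": 89_999,
}


def system_total_cents(sub_count: int = 2) -> int:
    """Total for a Bar 9, one pair of Rear 9s and `sub_count` Sub 9s."""
    return (
        PRICES_CENTS["Bar 9"]
        + PRICES_CENTS["Rear 9 (pair)"]
        + sub_count * PRICES_CENTS["Sub 9"]
    )


def format_usd(cents: int) -> str:
    """Render an integer number of cents as a dollar string."""
    dollars, remainder = divmod(cents, 100)
    return f"${dollars:,}.{remainder:02d}"


print(format_usd(system_total_cents()))   # $4,049.96 with two Sub 9s
print(format_usd(system_total_cents(1)))  # $3,149.97 with one Sub 9
```

Starting with a single Sub 9 and adding the second later brings the initial outlay down by the price of one subwoofer.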

Pricing & Availability

All of these speakers are available now to pre-order at the following prices:

- Sony BRAVIA Theater Bar 5 (HTB-500) – $329.99

- Sony BRAVIA Theater Bar 7 (HTA-7100) – $869.99

- Sony BRAVIA Theater Rear 9 (SA-RS9) – $749.99

- Sony BRAVIA Theater Sub 7 (SA-SW7) – $329.99

- Sony BRAVIA Theater Sub 8 (SA-SW8) – $499.99

- Sony BRAVIA Theater Sub 9 (SA-SW9) – $899.99

Tech

OpenAI Gives Users a Long-Term Storage Option With ChatGPT Library

ChatGPT users can now store, browse and retrieve the files they upload and create with the AI tool, OpenAI announced this week.

All of the documents you upload inside the normal chat window are automatically saved to the library, as long as you’re logged into your account. Now you can search for and pull up documents in one central place.

The feature is limited to Plus, Pro and Business users, so you have to pay at least $20 per month to store files using ChatGPT Library. You also have to be online to access your files.

(Disclosure: Ziff Davis, CNET’s parent company, in 2025 filed a lawsuit against OpenAI, alleging it infringed Ziff Davis copyrights in training and operating its AI systems.)

If you turn on ChatGPT’s Memory feature, the chatbot can also reference the files you’ve saved to bring up in future chats.

OpenAI mentions documents, spreadsheets, presentations and images as supported file types. However, the images you generate using ChatGPT will remain in the Images tab.

Save your files in the chatbot

To use the Library feature, sign in to your account and click the plus sign on the left side of the window where you type commands. Select the “Add from Library” option to choose the file you want to bring up.

The library is visible in a left-hand sidebar that’s searchable. You can filter results by file type and whether you uploaded or created the file.

There are some restrictions on file size. The maximum file size is 512MB, and all documents and chat conversations are limited to 2 million tokens (the chunks of text the model processes, not individual characters). Spreadsheets and CSV files must be 50MB or smaller, and images must be 20MB or smaller.
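To see how those size caps fit together, here is a minimal Python sketch of a pre-upload check. It is not OpenAI's implementation: the category names, the `within_size_limit` helper and the assumption that 1MB equals 1,000,000 bytes are all illustrative, and the 2 million token cap is not modeled.

```python
MB = 1_000_000  # assumption: decimal megabytes; the article does not say

# Per-category caps from the article; any other file type falls back
# to the general 512MB maximum.
CATEGORY_LIMITS_BYTES = {
    "spreadsheet": 50 * MB,  # spreadsheets and CSV files
    "image": 20 * MB,
}
GENERAL_LIMIT_BYTES = 512 * MB


def within_size_limit(category: str, size_bytes: int) -> bool:
    """Return True if a file of this (hypothetical) category fits its cap."""
    limit = CATEGORY_LIMITS_BYTES.get(category, GENERAL_LIMIT_BYTES)
    return 0 <= size_bytes <= limit


print(within_size_limit("image", 25 * MB))         # False: over the 20MB image cap
print(within_size_limit("presentation", 25 * MB))  # True: under the 512MB general cap
```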

Deleting files is a little tricky. You can select a file in the library window and click “delete” or use the trash icon beside the file name. Then OpenAI will delete the file within 30 days, unless the company needs it for security or legal obligations, or if “the chat has already been de-identified and disassociated from you.”

OpenAI’s big recent changes

Lately, OpenAI has been refining its models and expanding services for coders and developers, with faster models that are suited for debugging code. OpenAI announced these improved models as the company is competing with rivals that offer coding-specific tools, like Anthropic’s Claude Code.

OpenAI executives have also been talking about building a “superapp” desktop interface that consolidates its AI tools in one place. The three tools included in the app would be ChatGPT, the coding platform Codex and the internet browser Atlas, which uses AI as an assistant.

The company also announced this week it would shut down its AI video app Sora as it pivots away from video generation into more coding and productivity tools, like Codex.

Tech

Epic Games to lay off 1,000 employees as Fortnite engagement drops

The organisation explained that a number of internal and external factors have impacted working life and profits at Epic.

US games and software developer Epic Games has announced plans to lay off more than 1,000 people amid a drop in the popularity of its online gaming platform Fortnite over the last 12 months.

In a memo issued to Epic’s workforce, CEO Tim Sweeney said he was sorry that the organisation is once again in this position, having previously cut 16pc of its workforce in 2023. He explained that the downturn in Fortnite engagement, which began in 2025, has resulted in the organisation spending more money than it is currently making.

“This layoff, together with over $500m of identified cost savings in contracting, marketing and closing some open roles puts us in a more stable place,” said Sweeney.

He added: “Some of the challenges we’re facing are industry-wide challenges, slower growth, weaker spending and tougher cost economics, current consoles selling less than last generation’s and games competing for time against other increasingly-engaging forms of entertainment.”

However, he explained that some of the issues are unique to Epic. For example, last week, Epic raised the prices of Fortnite’s in-game currency, saying that “the cost of running Fortnite has gone up a lot and we’re raising prices to help pay the bills”.

Sweeney also noted that the layoffs have not been prompted by AI, despite its prevalence in industry and wider workplace conversation. “To the extent it improves productivity, we want to have as many awesome developers developing great content and tech as we can.”

Impacted employees will receive a severance package that includes at least four months of base pay, extended Epic-paid healthcare coverage, an acceleration of stock options vesting through January 2027 and extended equity exercise options for up to two years. There is to be a meeting on Thursday (26 March) to discuss the matter further.

In November of last year, Google and Epic Games reached a settlement over an antitrust lawsuit that was filed in 2020 by Epic, in which the search engine giant was found to hold a Play Store monopoly.

The more than five-year conflict began when Fortnite was removed from the Apple App Store and Google Play Store for violating their policies with its in-game payment system that would allow users to pay directly for in-app purchases. At the time, Epic said the process where organisations took a 30pc cut from every transaction made through apps on their platforms was unfair.

-

Crypto World5 days ago

NIO (NIO) Stock Plunges 6.5% as Shelf Registration Sparks Dilution Worries

-

Fashion5 days ago

Fashion5 days agoWeekend Open Thread: Adidas – Corporette.com

-

Politics5 days ago

Politics5 days agoJenni Murray, Long-Serving Woman’s Hour Presenter, Dies Aged 75

-

NewsBeat16 hours ago

NewsBeat16 hours agoManchester United reach agreement with Casemiro over contract clause amid transfer speculation

-

Crypto World4 days ago

Crypto World4 days agoBest Crypto to Buy Now: Strategy Just Spent $1.57 Billion on Bitcoin During Fear While Early Investors Quietly Enter Pepeto for 150x Potential

-

Crypto World4 days ago

Crypto World4 days agoBitcoin Price News: Bhutan Sells $72 Million in BTC Under Fiscal Pressure, but the Smart Money Entering Pepeto Sees What the Market Does Not

-

Tech6 days ago

Tech6 days agoinKONBINI Lets You Spend Summer Days Behind the Register

-

Sports3 days ago

Sports3 days agoRemo Stars and Kano Pillars Strengthen Survival Hopes in NPFL

-

Politics6 days ago

Politics6 days agoGender equality discussions at UN face pushbacks and US resistance

-

Business3 days ago

Business3 days agoNo Winner in March 21 Drawing as Prize Rolls to $133 Million for Next

-

Sports3 days ago

Sports3 days agoGary Kirsten Accuses Pakistan Cricket Board Of ‘Interference’, Mohsin Naqvi Responds

-

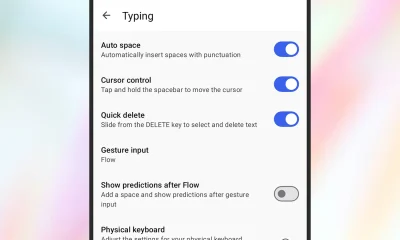

Tech3 days ago

Tech3 days agoGive Your Phone a Huge (and Free) Upgrade by Switching to Another Keyboard

-

Sports5 days ago

Sports5 days ago2026 Kentucky Derby horses, odds, futures, preview, date: Expert who nailed 12 Derby-Oaks Doubles enters picks

-

Sports7 days ago

Vikings Free Agency Enters Phase 2 with Key Questions

-

Tech3 days ago

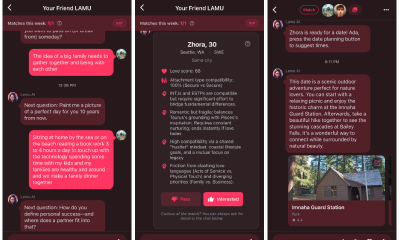

Tech3 days agoAI enters the chat: New Seattle dating app relies on tech to facilitate meaningful human connections

-

Politics6 days ago

Politics6 days agoScotland’s rejection of assisted dying is a victory for humanity

-

NewsBeat6 days ago

NewsBeat6 days agoMissile lands next to presenter during live report

-

Business6 days ago

Business6 days agoDLocal: Entering 2026 At Escape Velocity

-

Business5 days ago

Columbia Sportswear enters $500 million credit agreement with JPMorgan Chase

-

NewsBeat7 days ago

NewsBeat7 days agoVal Kilmer to appear posthumously in new film using generative AI

You must be logged in to post a comment Login