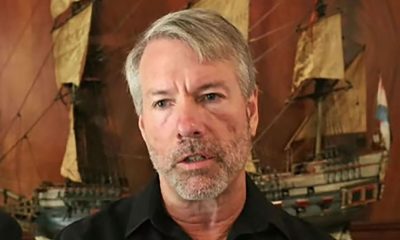

UL’s Dr Muzaffar Rao discusses the professional diploma in OT security programme, and what motivates his research in OT and ICS cybersecurity.

For Dr Muzaffar Rao, University of Limerick (UL) has been a research base for more than a decade.

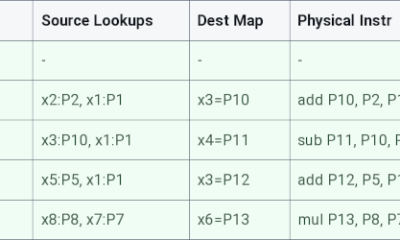

When Rao first joined UL in 2013, he was a PhD student conducting research on reconfigurable hardware for security, specifically field-programmable gate array (FPGA)-based cryptographic systems.

After his PhD, Rao began working at the university as a postdoctoral researcher with the Centre for Robotics and Intelligent Systems, a role that Rao says allowed him to further develop “expertise in hardware‑based cryptographic systems”.

Fast-forward to the current day, and Rao is now an associate professor in the Department of Electronic and Computer Engineering at UL, as well as an associate investigator with Lero, the Research Ireland Centre for Software.

Rao is also the course director of the professional diploma in operational technology (OT) security programme – a specialised Level 9 programme that Rao says is a “unique offering in Ireland”, as it’s dedicated specifically to OT and industrial control systems (ICS) security.

The primary objective of the programme, according to Rao, is to equip professionals with the practical knowledge and specialised skills required to “securely integrate IT and OT systems while effectively managing associated cyber risks”.

“Developed in close collaboration with industry partners, the course focuses on real-world operational challenges, OT-specific threats, relevant legal and regulatory frameworks, and risk mitigation strategies,” he explains.

“A strong emphasis is placed on bridging workforce skills gaps to ensure graduates can protect and secure complex operational environments.”

Rao tells SiliconRepublic.com that the course recently gained access to the Airbus CyberRange, a simulation and training platform that provides “immersive, hands-on learning through realistic, scenario-based exercises that reflect real-world critical infrastructure and smart manufacturing systems”.

Securing OT and ICS

While his role has expanded to include teaching and curriculum development, cybersecurity research remains a major part of his post at UL.

Rao’s current research focuses on strengthening the security and resilience of OT and ICS, particularly in critical infrastructure environments that rely on legacy systems.

“These systems,” he tells us, “are often difficult or impossible to patch, replace or take offline, which makes conventional security approaches impractical.”

He says a “central strand” of his work involves developing lightweight cryptographic mechanisms specifically tailored for ageing industrial hardware with limited processing power, constrained bandwidth and long operational life cycles – with the goal of introducing strong security controls without disrupting industrial operations.

He also researches early‑warning and intrusion‑detection frameworks for “advanced, including nation‑state-level, threats in OT and ICS environments”.

“This includes addressing situations where monitoring is minimal or absent, with particular attention to unmonitored industrial sensors and peripheral devices that create blind spots attackers can exploit.”

But why is this research important?

Rao explains that much of Ireland’s critical infrastructure – including energy, water, healthcare and manufacturing – still depends on “ageing operational technology that cannot be easily upgraded or taken offline”.

“These constraints create significant security gaps and make essential services especially vulnerable to sophisticated cyberthreats, including those from nation‑state actors targeting industrial systems across Europe,” says Rao.

“By developing lightweight cryptographic solutions suitable for legacy devices, improving early‑warning intrusion detection and securing the increasingly interconnected IT/OT environment, this research directly addresses these risks.

“It enhances system visibility, limits lateral movement by attackers, and strengthens Ireland’s ability to prevent and respond to cyber‑physical attacks. Ultimately, this work contributes to national resilience, the continuity of essential services and public safety at a time when cyberattacks are becoming more frequent, targeted and complex.”

Misconceptions and motivation

Rao says he was drawn to this specific area of research because it lies at “the intersection of fields that have consistently shaped my academic path”.

In fact, he says his PhD research on FPGA‑based cryptographic designs naturally exposed him to the “unique and under‑addressed security challenges” of OT and ICS.

“These environments depend heavily on legacy hardware that underpins critical infrastructure yet lacks the protections expected in modern IT systems.”

One misconception about his research that Rao often encounters is the belief that improving security in OT and ICS environments is “simply a matter of applying traditional IT security controls or waiting for outdated systems to be replaced”.

“In reality, critical infrastructure rarely has the option of downtime, frequent patching or uniform visibility, and many industrial systems were never designed with security in mind,” he explains.

He adds that there’s also a belief that effective security requires heavy monitoring, expensive hardware or “intrusive changes that risk disrupting operations”. Rao says his research directly challenges this assumption by “demonstrating that strong security and early intrusion detection can be achieved using lightweight, domain-aware techniques that respect operational constraints”.

“These methods address blind spots such as unmonitored sensors and can detect sophisticated attacks well before they escalate into physical or safety incidents, without disrupting essential services.”

After a number of years in this research area, what keeps drawing Rao back to the OT and ICS domain?

As Rao explains to us, he continues to find motivation in “the combination of intellectual challenge and real‑world impact”.

“Unlike conventional IT systems, OT environments cannot simply be patched, replaced or taken offline, even as they face increasingly sophisticated nation‑state threats and growing IT/OT convergence,” he says.

“Developing lightweight cryptography, early‑stage intrusion detection and secure architectures under strict resource and operational constraints is both technically demanding and societally important.

“The opportunity to produce research that has practical relevance and contributes directly to the resilience of essential services is what keeps this work compelling for me.”

