Last year, Spotify removed more than 75m spam tracks from its platform.

Spotify, the world’s biggest music streaming platform, does not ban AI-generated music, but admits that it finds such music hard to detect.

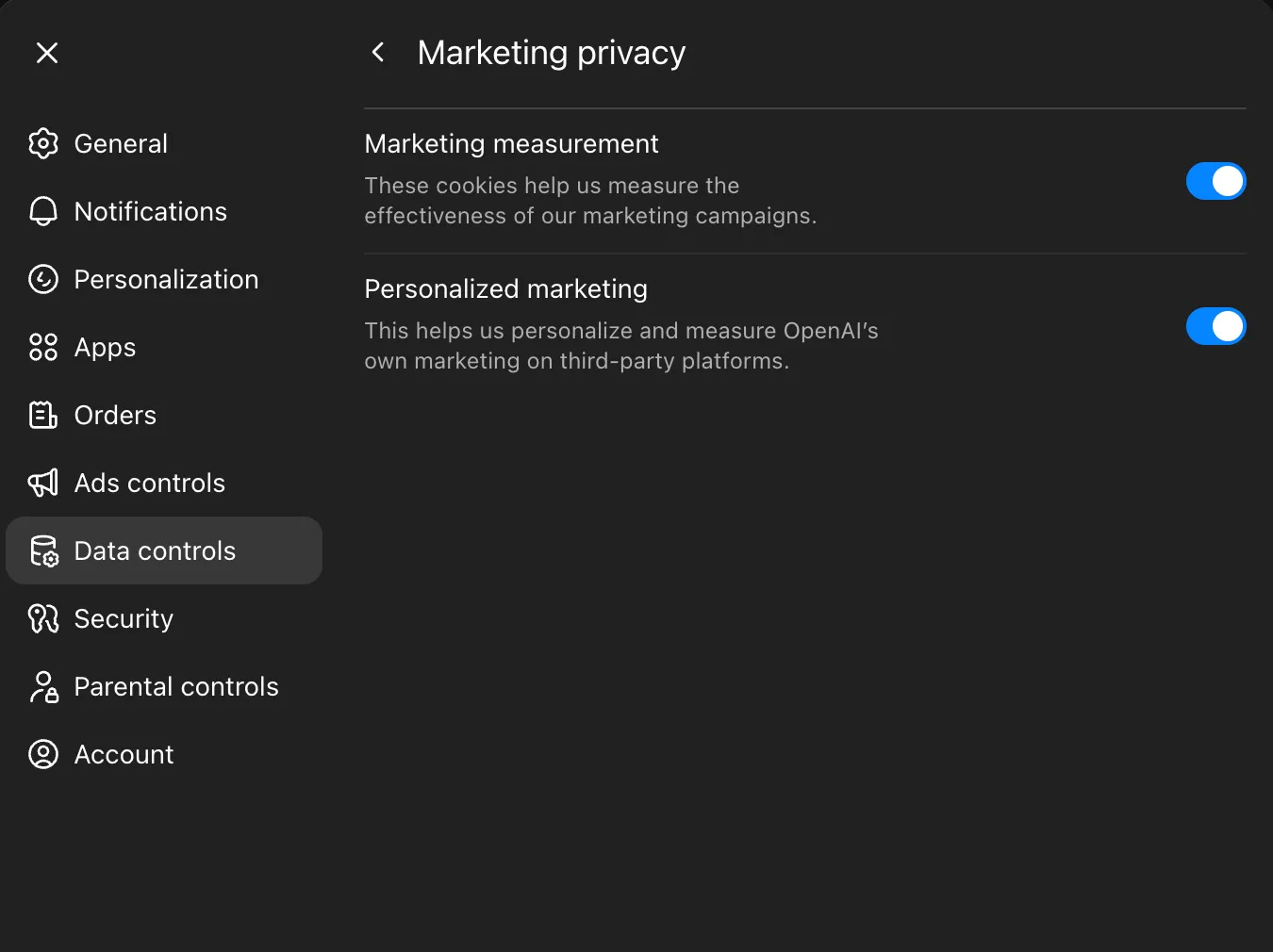

In its latest attempt to tackle growing AI spam on the platform, Spotify is introducing a vetted artist verification badge to help users distinguish human-made music from AI-generated tracks.

Fraudulent music distribution is a particular issue for the platform, whose total artist payouts have grown from $1bn in 2014 to $10bn in 2024. However, the average artist payout per stream has fallen since 2021, further incentivising spam uploads as a way to increase earnings.

Artists who receive this verification badge are understood to show consistent listener activity and engagement, comply with Spotify’s policies and show signs of a human artist behind the account. The company said that it would also look for off-platform presence such as concert dates and social media accounts.

It added that at launch, “profiles that appear to primarily represent AI-generated or AI-persona artists are not eligible for verification”.

The new badges will begin rolling out in the coming weeks, Spotify said. These will appear next to artist names in search, represented with a light green checkmark icon.

“In today’s music landscape, the concept of artist authenticity is complex and quickly evolving, and we’ll continue to develop our approach over time,” Spotify said in a blogpost on 30 April.

“At launch, we have ensured that more than 99pc of the artists Spotify listeners actively search for will be verified, representing hundreds of thousands of artists – the majority independent – spanning genres, career stages and geographies.”

The company already has a number of other features, including ‘expanded song credits’, ‘about the song’ sections and AI credits, which help listeners find more information about the artists they listen to.

The new verification standards would be paired with human reviews to identify “real artists” behaving in good faith, Spotify said. The company also has an AI impersonation policy, as well as mechanisms to “better” stop fraudulent music distribution.

Last year, Spotify said that it removed more than 75m spam tracks from its platform. It acknowledged that AI is used by bad actors and content farms to create deepfakes and spam to deceive artists, pushing “slop” into the ecosystem. Spam tactics also include mass uploads, duplicates, SEO hacks and artificially short track abuse.

The verification badge comes after publisher Sony Music requested the removal of 135,000 songs by fraudsters impersonating its artists on streaming services.

Meanwhile, direct-to-fan music platform Bandcamp took a more aggressive approach in January by outright banning songs “generated wholly or in substantial part by AI”.

“Any use of AI tools to impersonate other artists or styles is strictly prohibited,” the company said in a post on Reddit. Spotify, however, allows artist impersonations as long as consent is provided.

Last October, Spotify’s founder and CEO Daniel Ek stepped down from his role and became the company’s executive chair in January.
