This week, juries in California and New Mexico dealt a pair of landmark verdicts against America’s social media giants.

Tech

Google says hackers are abusing Gemini AI for all attack stages

State-backed hackers are using Google’s Gemini AI model to support all stages of an attack, from reconnaissance to post-compromise actions.

Bad actors from China (APT31, Temp.HEX), Iran (APT42), North Korea (UNC2970), and Russia used Gemini for target profiling and open-source intelligence, generating phishing lures, translating text, coding, vulnerability testing, and troubleshooting.

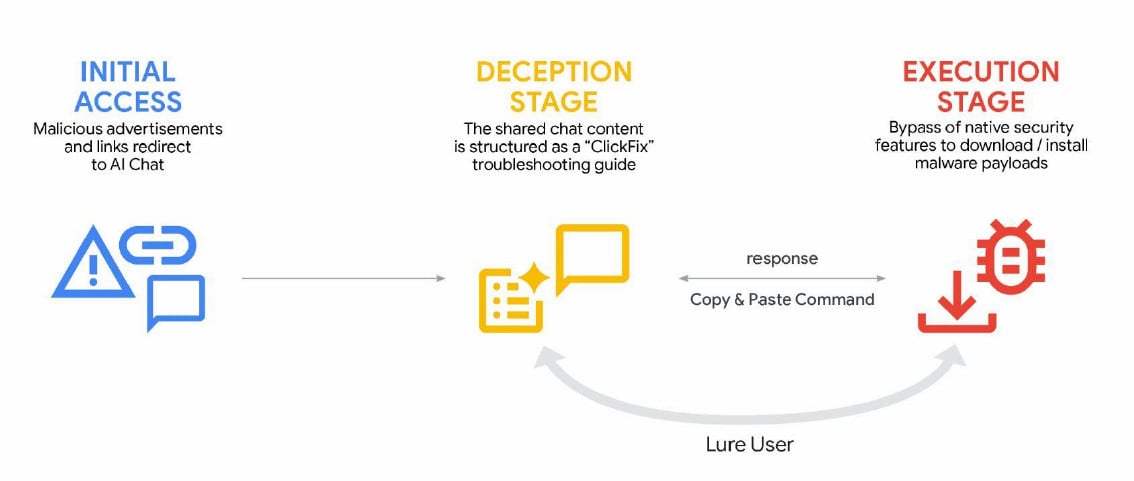

Cybercriminals are also showing increased interest in AI tools and services that could help in illegal activities, such as social engineering ClickFix campaigns.

AI-enhanced malicious activity

The Google Threat Intelligence Group (GTIG) notes in a report today that APT adversaries use Gemini to support their campaigns “from reconnaissance and phishing lure creation to command and control (C2) development and data exfiltration.”

Chinese threat actors employed an expert cybersecurity persona to request that Gemini automate vulnerability analysis and provide targeted testing plans in the context of a fabricated scenario.

“The PRC-based threat actor fabricated a scenario, in one case trialing Hexstrike MCP tooling, and directing the model to analyze Remote Code Execution (RCE), WAF bypass techniques, and SQL injection test results against specific US-based targets,” Google says.

Another China-based actor frequently employed Gemini to fix their code, carry out research, and provide advice on technical capabilities for intrusions.

The Iranian adversary APT42 leveraged Google’s LLM for social engineering campaigns and as a development platform to speed up the creation of tailored malicious tools (debugging, code generation, and researching exploitation techniques).

Additional threat actor abuse was observed for implementing new capabilities into existing malware families, including the CoinBait phishing kit and the HonestCue malware downloader and launcher.

GTIG notes that no major breakthroughs have occurred in that respect, though the tech giant expects malware operators to continue to integrate AI capabilities into their toolsets.

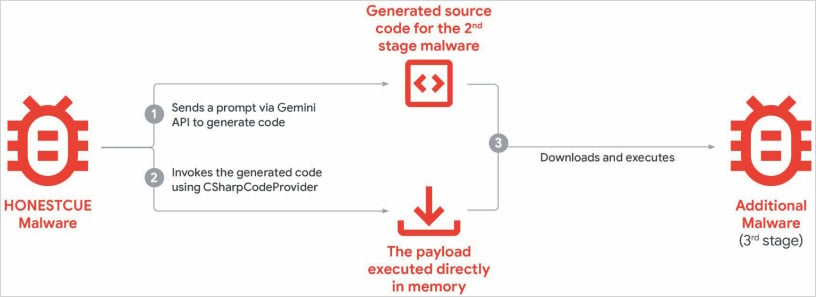

HonestCue is a proof-of-concept malware framework observed in late 2025 that uses the Gemini API to generate C# code for second-stage malware, then compiles and executes the payloads in memory.

Source: Google

CoinBait is a React SPA-wrapped phishing kit masquerading as a cryptocurrency exchange for credential harvesting. It contains artifacts indicating that its development was advanced using AI code generation tools.

One indicator of LLM use is logging messages in the malware source code that were prefixed with “Analytics:,” which could help defenders track data exfiltration processes.
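A defender could look for this kind of marker when triaging a suspected phishing kit. The following is a minimal sketch, not GTIG's method: it assumes the messages are emitted through JavaScript `console.*` calls (plausible for a React app, but not confirmed by the report), and the sample source and the `find_analytics_logs` helper are hypothetical.

```python
import re

# Assumption: log messages appear as console.* calls whose first string
# argument starts with "Analytics:". The report only confirms the prefix.
ANALYTICS_LOG = re.compile(
    r"""console\.(?:log|info|warn|error|debug)\(\s*(['"`])Analytics:(.*?)\1"""
)


def find_analytics_logs(source: str) -> list:
    """Return (line_number, message) pairs for 'Analytics:'-prefixed log calls."""
    hits = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for match in ANALYTICS_LOG.finditer(line):
            hits.append((lineno, match.group(2).strip()))
    return hits


# Made-up sample, for illustration only; not taken from any real malware.
SAMPLE = '''\
const res = await submit(form);
console.log("Analytics: credentials submitted");
console.log("render complete");
console.info('Analytics: session forwarded');
'''

if __name__ == "__main__":
    for lineno, message in find_analytics_logs(SAMPLE):
        print(f"line {lineno}: {message}")
```

Matching on the prefix alone will also flag legitimate analytics logging, so hits like these are a starting point for review rather than a verdict.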

Based on the malware samples, GTIG researchers believe that the malware was created using the Lovable AI platform, as the developer used the Lovable Supabase client and lovable.app.

Cybercriminals also used generative AI services in ClickFix campaigns, delivering the AMOS info-stealing malware for macOS. Users were lured into executing malicious commands through ads listed in search results for queries on troubleshooting specific issues.

Source: Google

The report further notes that Gemini has faced AI model extraction and distillation attempts, with organizations leveraging authorized API access to methodically query the system and reproduce its decision-making processes to replicate its functionality.

Although the problem is not a direct threat to users of these models or their data, it constitutes a significant commercial, competitive, and intellectual property problem for the creators of these models.

Essentially, actors take information obtained from one model and transfer the information to another using a machine learning technique called “knowledge distillation,” which is used to train fresh models from more advanced ones.

“Model extraction and subsequent knowledge distillation enable an attacker to accelerate AI model development quickly and at a significantly lower cost,” GTIG researchers say.

Google flags these attacks as a threat because they constitute intellectual property theft, are scalable, and severely undermine the business model of AI-as-a-service, which could soon affect end users.

In a large-scale attack of this kind, Gemini AI was targeted by 100,000 prompts that posed a series of questions aimed at replicating the model’s reasoning across a range of tasks in non-English languages.

Google has disabled accounts and infrastructure tied to documented abuse, and has implemented targeted defenses in Gemini’s classifiers to make abuse harder.

The company says it “designs AI systems with robust security measures and strong safety guardrails” and regularly tests the models to improve their security and safety.

Tech

SpaceX Files Draft for Potentially Stratospheric IPO

SpaceX is looking to the heavens for its upcoming initial public offering based on a $1.75 trillion valuation, according to confidential paperwork filed with the US Securities and Exchange Commission.

As reported by Bloomberg, the draft IPO registration is the first step toward a possible June offering that could raise approximately $75 billion. The filing allows the company to get feedback from the SEC before the information is released publicly.

The IPO may be open to more people than just the wealthiest investors. According to a report by The Motley Fool, SpaceX plans to allocate around 30% of the initial shares to “retail investors,” meaning individual investors. Normal retail allocation tends to be around 10% of shares.

A SpaceX representative didn’t immediately respond to a request for comment.

Why a SpaceX IPO is a big deal

Spaceflight is an incredibly expensive endeavor; SpaceX gets billions of dollars from the US government to launch satellites and help keep NASA’s programs running. Almost a year ago, the company set a target of launching every other day through the end of 2025 and ended up launching a record 165 orbital flights.

But SpaceX is no longer just a high-flying rocket company. Its Starlink division provides data access to homes, remote locations, airlines and direct to many mobile phones in areas where there’s no cellular coverage. It also recently acquired xAI, another of Elon Musk’s companies, and owns the social media site X (formerly Twitter).

It’s the AI angle that seems to be driving up the company’s valuation ahead of the IPO. The all-stock xAI acquisition valued the combined company at $1.25 trillion. This year, OpenAI and Anthropic PBC are also expected to go public.

Although those numbers are eye-popping, the company has plenty of challenges before it can get off the launchpad.

Starlink has announced a plan to send up new third-generation V3 satellites that should bring gigabit internet speeds to its network, but those won’t be ready until 2027. Getting them up requires SpaceX’s heavy-duty Starship vehicle, which has had limited success in testing so far. In the meantime, its current Starlink satellites have been exploding in orbit as recently as this week.

And for xAI, the skies aren’t exactly clear despite the current fervor for all things AI. Musk announced in mid-March that “xAI was not built right first time around, so is being rebuilt from the foundations up.” And the company is being sued by three teen girls and their guardians for “devastating” harm caused by its Grok AI generating child sexual abuse images.

Tech

WhatsApp notifies 200 users who installed fake app built by Italian spyware maker SIO

WhatsApp has notified approximately 200 users, primarily in Italy, that they were tricked into installing a counterfeit version of the messaging app that was actually government spyware. The fake application was built by SIO, an Italian surveillance technology company that develops spyware for law enforcement and intelligence agencies through its subsidiary ASIGINT. WhatsApp said it had proactively identified the affected users, logged them out of their accounts, warned them about the privacy risks, and urged them to delete the fake client and install the official app from a trusted source. The company told TechCrunch it also plans to send a formal legal demand to SIO to halt any malicious activity linked to the campaign.

The disclosure, first reported by Italian newspaper La Repubblica and news agency ANSA, marks the second time in little more than a year that WhatsApp has publicly named a spyware vendor operating against its users in Italy. In early 2025, WhatsApp alerted around 90 users, including journalists and pro-immigration activists, that they had been targeted by Paragon Solutions, a U.S.-Israeli surveillance firm whose flagship product, Graphite, was deployed by Italy’s domestic and foreign intelligence services. That revelation triggered a political crisis in Rome. Italy’s parliamentary intelligence oversight committee, COPASIR, confirmed the use of Graphite and found that seven Italians had been targeted. Paragon subsequently cut ties with Italy’s spy agencies after the government declined to verify whether the spyware had been used against a specific journalist, Francesco Cancellato of the news site Fanpage.

SIO’s spyware operates through a different model. The malware, identified in its own code as Spyrtacus, is embedded in fake applications designed to look like legitimate software. Researchers have found 13 different samples of Spyrtacus dating back to 2019, with the most recent from late 2024. Previous versions impersonated Android apps from Italian mobile providers TIM, Vodafone, and WINDTRE, as well as earlier fake versions of WhatsApp itself. TechCrunch first exposed SIO’s Android distribution campaign in February 2025. The latest operation, targeting iPhones, represents an expansion of the tactic to Apple’s ecosystem. Once installed, Spyrtacus can steal text messages, chat histories, and call logs, as well as record audio and video directly from the device’s microphone and camera.

The delivery mechanism is as revealing as the malware itself. In Italy, authorities routinely obtain cooperation from mobile carriers, who send phishing links to their own customers on behalf of law enforcement. The target receives what appears to be a routine update notification from their provider, directing them to install what looks like a standard WhatsApp update. The Italian justice ministry has maintained a price list and catalogue showing how authorities can compel telecom companies to send such messages, a system that effectively turns the mobile network itself into a distribution channel for state surveillance tools. The cost of renting spyware in Italy is remarkably low: as of late 2022, law enforcement could access these tools for as little as €150 per day, without the large upfront acquisition costs that typically limit deployment in other countries.

Italy’s position as a spyware hub is unusual among Western democracies. Companies including Hacking Team, Cy4Gate, RCS Lab, and Raxir have all been based in the country, drawn by a legal framework that provides a formal statutory basis for the “captatore informatico,” or computer interceptor, effectively state-sanctioned trojan software. Fabio Pietrosanti, president of the Hermes Center for Transparency and Digital Human Rights, has said that spyware is deployed more frequently in Italy than anywhere else in Europe because the low cost and permissive regulation make it accessible to a far wider range of law enforcement agencies than in neighbouring countries. The result is an ecosystem in which municipal police forces, not just national intelligence agencies, can commission surveillance operations against individuals.

WhatsApp spokesperson Margarita Franklin told TechCrunch the company could not yet confirm whether the 200 affected users included journalists or members of civil society. “Our priority has been protecting the users who may have been tricked into downloading this fake iOS app,” she said. The company did not specify whether it had referred the matter to Italian prosecutors or to any regulatory authority. Apple and SIO did not respond to requests for comment.

The legal landscape around commercial spyware has shifted substantially in the past year. In May 2025, a California jury ordered NSO Group, the Israeli maker of Pegasus, to pay WhatsApp $167 million in punitive damages after finding it had enabled hacks of approximately 1,400 users through zero-click attacks. A federal judge later reduced the award to $4 million but imposed a permanent injunction barring NSO from targeting WhatsApp’s infrastructure. NSO has appealed. WhatsApp’s parent company Meta described the verdict as a landmark, and it has since expanded its legal strategy against the broader surveillance industry. The formal legal demand WhatsApp intends to send SIO follows the same pattern: use litigation and public disclosure as deterrents against companies that profit from compromising encrypted messaging platforms.

The proliferation of spyware vendors presents a challenge that extends well beyond any single platform. Apple has sent mercenary-spyware threat notifications to users in more than 150 countries since 2021, alerting individuals it believes have been individually targeted by state-sponsored attacks. In April 2025, Apple notified the Italian journalist Ciro Pellegrino, one of the Paragon victims, that he had been targeted. The notification systems run by Apple and WhatsApp now represent the primary mechanism by which victims of government surveillance learn they have been compromised, a function that was once the exclusive domain of the cybersecurity industry’s specialist researchers.

The global lawful-interception market was valued at $4 billion in 2023 and is projected to reach $15 billion by 2032, growing at roughly 16 per cent annually. That growth is being driven not by the Pegasus-style zero-click exploits that attract headlines, but by the kind of low-cost, phishing-based tools that SIO sells. The barrier to entry for government surveillance has dropped to the point where a local police department in a midsize Italian city can commission the same class of spyware deployment that was once the preserve of national intelligence agencies. The gap between regulatory ambition and enforcement capacity in Europe means that the legal frameworks governing these tools have not kept pace with the speed at which they are being adopted.
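The quoted growth rate can be sanity-checked from the two market figures. This is a minimal sketch that assumes the projection is a compound annual growth rate over the nine years from 2023 to 2032; the `cagr` helper is illustrative and not taken from any market report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over a number of years."""
    return (end_value / start_value) ** (1 / years) - 1


# $4 billion in 2023 growing to a projected $15 billion by 2032.
rate = cagr(4.0, 15.0, 2032 - 2023)
print(f"{rate:.1%}")  # about 15.8%, consistent with "roughly 16 per cent"
```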

What makes the SIO case distinct from the Paragon scandal is the method. Paragon’s Graphite used zero-click exploits that required no action from the target. SIO’s Spyrtacus requires the target to install a fake application, a social-engineering approach that relies on trust in the carrier and familiarity with routine app updates. The fact that Italian telecoms participate in the delivery chain, sending phishing messages to their own subscribers at the state’s request, turns the mobile infrastructure itself into an instrument of surveillance. It is one thing for a government to hack a phone. It is another for the phone company to help.

WhatsApp’s decision to publicly name SIO and notify the affected users follows the broader pattern of tech platforms asserting themselves as counterweights to state surveillance in ways that would have been unthinkable a decade ago. The company is not merely patching a vulnerability. It is identifying the vendor, alerting the victims, and threatening legal action, a posture that positions a messaging app owned by Meta as a more effective check on government spyware abuse than any European regulatory body has managed to date. Whether that dynamic is reassuring or alarming depends on your view of where the responsibility for protecting citizens from their own governments should ultimately rest.

For the 200 users in Italy who received WhatsApp’s notification, the immediate question is narrower: who authorised the surveillance, and on what legal basis? The answer may never become public. Italy’s lawful-intercept framework permits the use of these tools under judicial oversight, but the oversight mechanisms have repeatedly proven inadequate to prevent abuse. The Paragon scandal demonstrated that intelligence agencies could target journalists and activists under the cover of lawful authority. The SIO case suggests the problem runs deeper, extending to less prominent vendors, cheaper tools, and a distribution model that exploits the trust citizens place in their mobile carriers. The spyware industry does not need zero-click exploits to be dangerous. It just needs a convincing notification from your phone company.

Tech

California Suspends Enforcement of Law Requiring VCs to Report Diversity Data

Under a new state regulation, venture capital firms operating in California were supposed to submit demographic data about their portfolio companies, including the gender and race of startup founders they backed. But amid public criticism from some tech leaders, the California agency administering the new requirement suspended it just before the Wednesday deadline for firms to make their first disclosures.

“The California Department of Financial Protection and Innovation (DFPI) has announced that it plans to initiate rulemaking in response to comments by various stakeholders relating to the Fair Investment Practices by Venture Capital Companies Law,” the state agency posted on its website in mid-March. “Implementation and enforcement of the [law] will be suspended pending completion of the rulemaking and until final regulations are in place.”

California lawmakers first passed the measure in 2023, and it was signed into law shortly thereafter by Governor Gavin Newsom. For decades, women and people of color have received only a small share of overall startup funding relative to their representation in the US population. Lawmakers hoped putting more public scrutiny on investment decisions would help foster greater equity in the market, including for people who are disabled, retired military, or LGBTQ+.

The law called for venture capital and some other investment firms to file annual reports starting March 1 of last year about the overall makeup of the founding teams they had invested in and the amount of money they provided to diverse founders. Firms were meant to collect the demographic data through a voluntary survey that was then anonymized. California authorities planned to publish the filings online. Lawmakers amended the law in 2024 to delay reporting until April 1, 2026 and enable the state to levy daily fines for noncompliance.

The California Department of Financial Protection and Innovation did not immediately respond to a request for comment on the authority it used to sidestep the deadline set by lawmakers. Newsom’s office also didn’t immediately respond to a request for comment.

Financiers focused on funding entrepreneurs from underrepresented backgrounds had supported the law. But the National Venture Capital Association, the tech investment industry’s leading trade group, opposed it. The group argued that voluntary data collection would inflate diversity statistics and that publishing inaccurate data could lead to unfair attacks on investors genuinely trying to tackle diversity issues. Over the past year, the Trump administration has defunded and attacked diversity, equity, and inclusion, or DEI, initiatives in both the public and private sectors, leading many businesses and organizations to pull back from them.

In February, the venture capital association wrote to Newsom asking for the reporting deadline to be pushed back again because, in its view, the state had bungled the process. California authorities didn’t publish the standardized survey founders were supposed to fill out until early this year and, at the time, they still hadn’t introduced a way for firms to register with regulators as required by the law, according to the association. “This administrative timeline creates an environment ripe for error and threatens to produce the misleading and counterproductive data we previously warned against,” association president and CEO Bobby Franklin wrote.

Last month, as the deadline for the first reports loomed, some entrepreneurs and investors began complaining on social media about the survey effort. “The latest California malarky is a requirement for venture investors to collect/report racial and gender statistics,” wrote Blake Scholl, the founder and CEO of venture-backed aviation startup Boom Supersonic. “I want to live in a world where merit matters—not skin color or what you have between your legs.”

Tech

New CrystalX malware adds RAT, stealer and prankware features

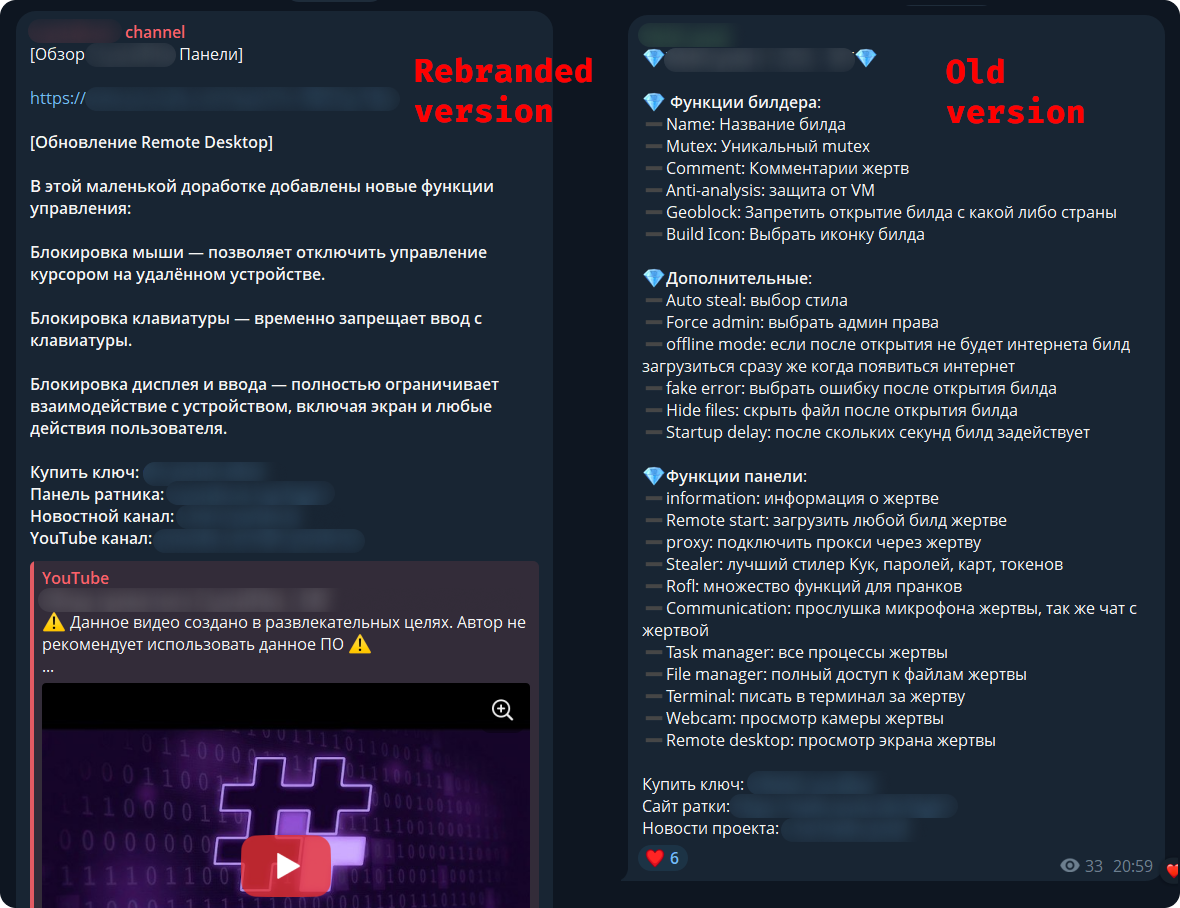

A new malware-as-a-service called CrystalX RAT is being promoted on Telegram, offering remote access, data theft, keylogging, and clipboard hijacking capabilities.

The malware emerged in January with a tiered subscription model. Apart from the Telegram channel, the MaaS was also promoted on YouTube, via a dedicated marketing channel that showcased its capabilities.

Kaspersky researchers say in a report today that the malware shows strong similarities to WebRAT (Salat Stealer), including the same panel design, Go-based code, and a similar bot-based sales system.

CrystalX also includes an extensive list of prankware features designed to annoy the user or disrupt their work. Despite its “fun” side, CrystalX offers a large set of data theft capabilities.

Source: Kaspersky

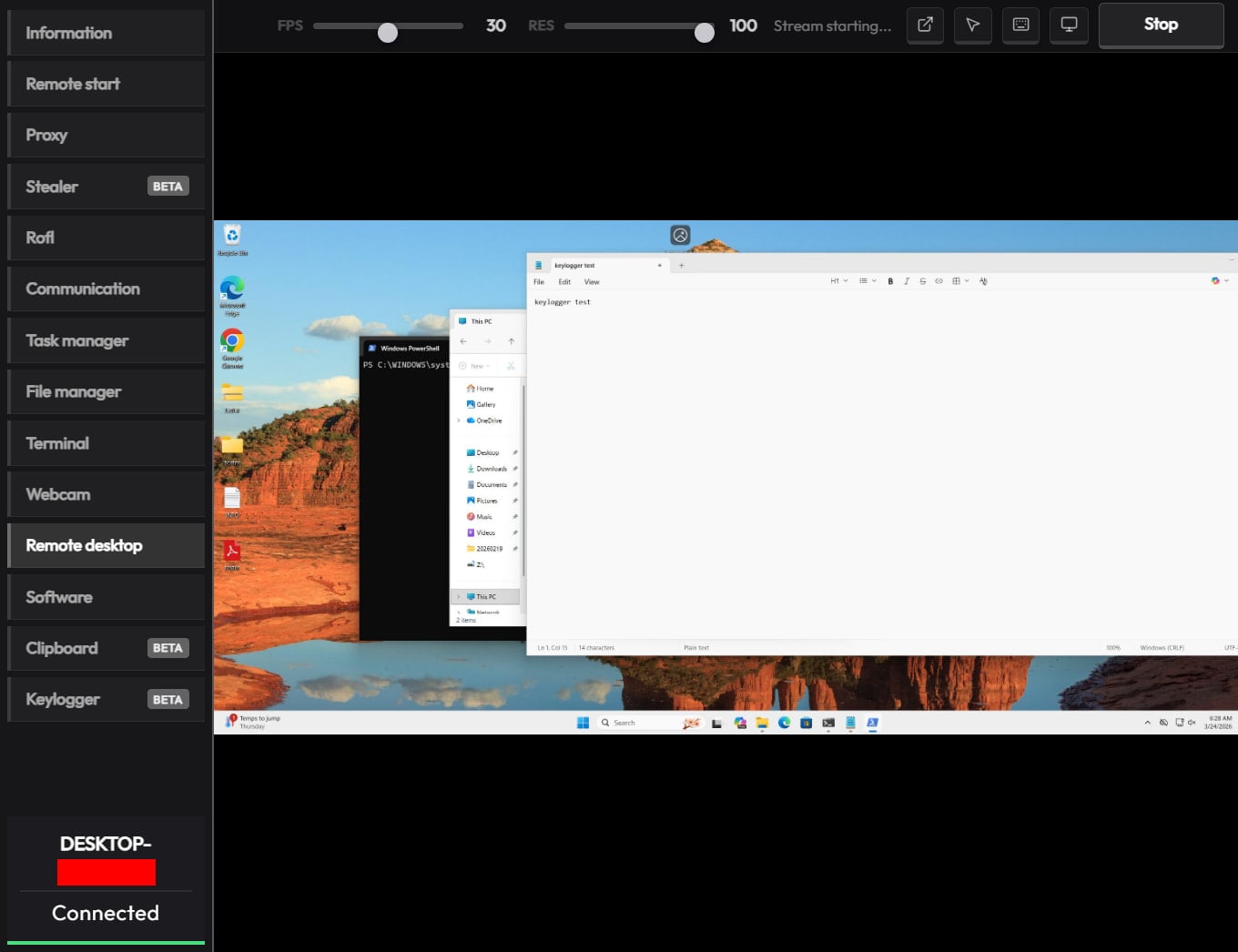

CrystalX RAT details

Kaspersky says that the malware provides a user-friendly control panel and an automated builder tool that supports customization options, including geoblocking, executable customization, and anti-analysis features (anti-debugging, VM detection, proxy detection, etc.).

The generated payloads are zlib-compressed and encrypted with the ChaCha20 symmetric stream cipher for protection.

The malware connects to the command-and-control (C2) via WebSocket and sends info about the host for profiling and infection tracking.

CrystalX’s infostealer component, which Kaspersky found to be temporarily disabled while it is being prepared for an upgrade, targets Chromium-based browsers (via the ChromeElevator tool) as well as Yandex and Opera. Additionally, the tool collects data from desktop apps such as Steam, Discord, and Telegram.

The remote access module can be used to execute commands via CMD, upload/download files, browse the file system, and control the machine in real time via built-in VNC.

The malware also exhibits spyware-like behavior, as it can capture video as well as audio from the microphone.

Finally, CrystalX features a keylogger that streams keystrokes in real time to the C2, and a clipper tool that uses regular expressions to detect wallet addresses in the clipboard and replace them with ones the attacker provides.
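On the defensive side, the same pattern-matching idea can help spot a swapped address before funds are sent. The sketch below is an assumption-laden illustration rather than anything from the Kaspersky report: the regular expressions are simplified format checks (no checksum validation), and `wallet_kind` and `looks_swapped` are hypothetical helper names.

```python
import re
from typing import Optional

# Simplified, illustrative format patterns. Real validation would also
# verify checksums (Base58Check, bech32, EIP-55).
WALLET_PATTERNS = {
    "bitcoin_legacy": re.compile(r"[13][a-km-zA-HJ-NP-Z1-9]{25,34}"),
    "bitcoin_bech32": re.compile(r"bc1[a-z0-9]{39,59}"),
    "ethereum": re.compile(r"0x[a-fA-F0-9]{40}"),
}


def wallet_kind(text: str) -> Optional[str]:
    """Return the matching address family, or None if the text is not address-shaped."""
    candidate = text.strip()
    for kind, pattern in WALLET_PATTERNS.items():
        if pattern.fullmatch(candidate):
            return kind
    return None


def looks_swapped(copied: str, pasted: str) -> bool:
    """Flag a paste where an address was replaced by a different one of the same family."""
    kind = wallet_kind(copied)
    return (
        kind is not None
        and kind == wallet_kind(pasted)
        and copied.strip() != pasted.strip()
    )


if __name__ == "__main__":
    copied = "0x" + "a" * 40
    pasted = "0x" + "b" * 40
    print(looks_swapped(copied, pasted))  # True: same family, different address
```

A check like this only covers the address formats it knows about, so comparing the pasted address against the intended one by eye remains the safer habit.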

Source: Kaspersky

Putting some “fun” in the package

What sets CrystalX apart in the crowded MaaS space is its rich set of prankware features.

According to Kaspersky, the malware can do the following on infected devices:

- change desktop wallpaper

- alter display orientation to various angles

- force system shutdown

- remap mouse buttons

- disable input devices (keyboard/mouse/monitor)

- show fake notifications

- change cursor position on the screen

- hide various components (desktop icons, taskbar, the Task Manager, and the Command Prompt executable)

- provide an attacker-victim chat window

While the above features do not improve the attack’s monetization potential for cybercriminals, they certainly make the product distinctive, and could bait script kiddies and low-skilled/entry-level threat actors into getting a subscription.

Another reason for the prank features could be their potential for victim manipulation, or even distraction, while the data theft modules run in the background.

To reduce the risk of malware infections, users are advised to exercise caution when interacting with online content and avoid downloading software or media from untrusted or unofficial sources.

Tech

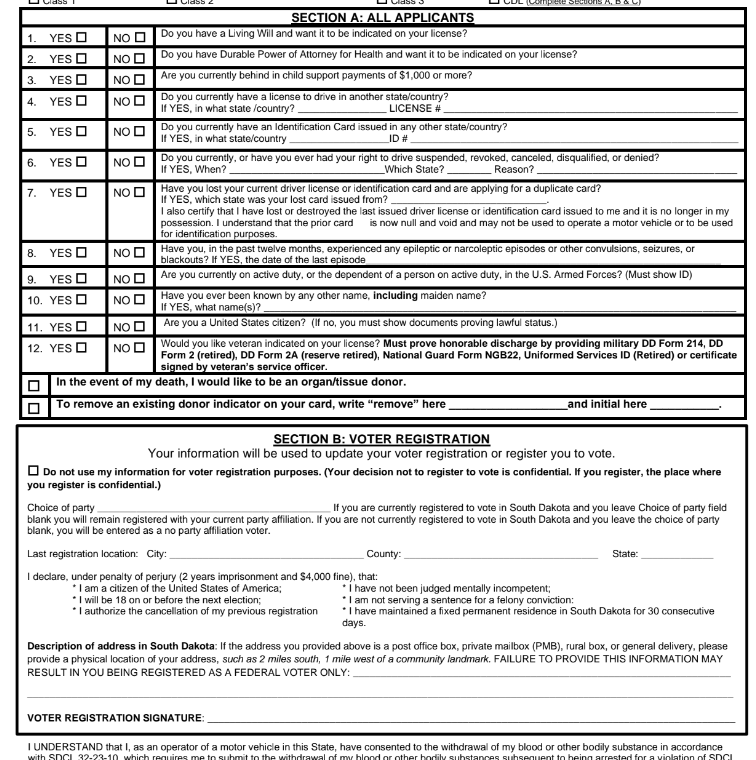

South Dakota GOP, Governor Get Their Voter Suppression On

from the getting-the-fix-in dept

Because South Dakota governor Larry Rhoden is forever obligated to serve Kristi Noem and Kristi Noem is forever obligated to serve Donald Trump, he and his GOP buddies are making America MAGA again, starting with his home turf.

Non-citizens have never really disrupted voting. But they’re the convenient scapegoat for a party that’s justifiably worried it’s going to lose its majority during the mid-terms. Multiple efforts are being made all over the nation to disenfranchise anyone that’s not part of Trump’s most rabid voting base. Pretending people not allowed to legally vote are somehow flipping elections for the Democratic Party is more than merely obnoxious. It’s actually harming the democratic process.

Here in South Dakota, two laws have been passed in recent weeks with the express purpose of keeping non-white people from showing up to vote. The first, passed at the beginning of this month, allows any rando to claim a person they saw voting shouldn’t be allowed to vote.

Voters in South Dakota will soon be able to challenge other voters’ citizenship.

Republican Gov. Larry Rhoden signed legislation into law last week that authorizes challenges by individuals and election officials.

[…]

State law already allows challenges to a voter’s registration up to the 90th day before an election, if a person is suspected of lacking South Dakota residency, voting in another state or being registered to vote in another state. The new law adds citizenship as a justification for a challenge.

Challenges may be filed by the South Dakota Secretary of State’s Office, the auditor in the county where the voter is registered, or a voter in the same county. The challenge must be in the form of a signed, sworn statement and must include what the law describes as “documented evidence.”

Now, we can all see what the law is. But we all know how it will be applied. State employees with access to voter rolls will raise challenges against anyone with a foreign-sounding last name. While it’s unlikely that many citizens will actually file challenges, they’ll certainly feel comfortable accosting anyone standing in line to vote whose skin is darker than their own. Given the inevitability of these responses, it’s easy to see the law accomplishing exactly what it’s supposed to: limit the number of non-white voters at the polls during the mid-terms and beyond.

But that’s not the only suppression effort signed into law this month. There’s also this one, which raises the bar for participating in the democratic process with the obvious intention of limiting participation to the sort of voters the GOP thinks will vote for it:

New voters in South Dakota will have to prove that they are United States citizens in order to cast a ballot in state and local races under a bill signed on Thursday by Gov. Larry Rhoden.

The new law, which does not apply to South Dakotans already on the voter rolls, comes amid a national push by Republicans to tighten voting rules and root out voting by noncitizens, which is already illegal and believed to be rare.

“This bill ensures only citizens vote in state elections, keeping our elections safe and secure,” said Mr. Rhoden, who is seeking election to a full term this year and is facing a crowded Republican primary field.

It’s already illegal in South Dakota to vote if you’re not a citizen. This bill addresses a completely imaginary “problem.” And it forces voters to provide a passport, birth certificate, and other documents proving citizenship before they’re allowed to vote. While it may be easy for many people to present these documents, the simple fact is that they’ve never been asked to do this before, and anyone who’s not aware this law has been passed will be denied the opportunity to vote because the GOP decided to move the goalposts during an election year.

Non-citizens voting in South Dakota has never been an issue. The fact that 273 non-citizens were recently removed from the state’s voting rolls may seem a bit sketchy but there’s a good reason there might be a few hundred non-citizens with voter registrations:

Noncitizens can obtain a driver’s license or state ID if they are lawful permanent residents or have temporary legal status. There’s a part of the driver’s license form that allows an applicant to register to vote. That part says voters must be citizens.

The problem is that this is all on the same form. The voter registration part of the form has a signature line, which many applicants will fill out and sign even if their intention is only to get a driver’s license or ID card, especially since it appears before the final signature block for the entire application.

If applicants are not asked to affirmatively state their intention to register to vote (as the Department of Public Safety employees ask now, along with asking applicants to write “vote” on the form to signal their affirmation), their applications might be processed, along with the voter registration applicants didn’t realize they were enabling.

The Secretary of State’s office (the office that’s supposed to be reviewing voter registrations for eligibility) threw the Department of Public Safety under the bus:

Rachel Soulek, director of the Division of Elections in the Secretary of State’s Office, placed blame on the department in her response to South Dakota Searchlight questions about the situation.

“These non U.S. citizens had marked ‘no’ to the citizenship question on their driver’s license application but were incorrectly processed as U.S. citizens due to human error by the Department of Public Safety,” Soulek wrote.

That’s not what happened. Their ID applications were processed and Soulek’s department failed to catch the inadvertent errors. And it doesn’t really even matter who’s at fault because despite the errors, this is still a non-issue.

Soulek said only one of the 273 noncitizens had ever cast a ballot. That was during the 2016 general election.

A handful of clerical errors that resulted in a single illegal vote in the past decade cannot be a rational basis for a new law. And there’s a good chance the sole vote was made in error, rather than maliciously. After all, if the state told this person they could vote, who were they to question that determination?

This is nothing more than state governments stepping up to do what Trump can’t. His SAVE Act is stalled and lots of last-minute gerrymandering at the behest of the president is tied up in court. His loyalists are doing what they can to make his perverted dreams a reality in states that are most likely to lean Republican in the first place, which makes all of this as pointless as it is stupid. But the underlying threat to democracy remains, ever propelled forward by the people who claim to love America the most.


Tech

Meta and YouTube lost landmark social media trials. That’s bad for free speech.

In Los Angeles, jurors awarded $6 million to a young woman who alleged that Instagram and YouTube had damaged her mental health. A day earlier, a jury in Santa Fe ruled that Meta had designed its social media platforms in a manner that harmed minors — and ordered the company to pay $375 million in recompense.

These decisions constituted a breakthrough for a legal movement that sees social media companies as the new “Big Tobacco” — an industry that knowingly peddles harmful and addictive products. And it was a triumph for advocates of “child online safety,” who believe that social media is corrosive to minors’ psychological well-being. With thousands of similar lawsuits pending, the California and New Mexico verdicts could prove to be transformative precedents.

Yet the decisions have also raised alarm bells for many free speech advocates. To organizations like FIRE — and civil libertarian writers like Reason’s Elizabeth Nolan Brown — these decisions will do more to undermine free expression online than to safeguard young people’s mental well-being.

To better understand — and interrogate — this perspective, I spoke with Nolan Brown. We discussed how the recent verdicts could open the door to broader censorship, the evidence for social media’s psychological harms, and whether parents can sufficiently protect their kids from problematic internet use without the government’s help. Our conversation has been edited for clarity and concision.

You’ve written that these verdicts are “a very bad omen for the open internet and free speech.” How so?

One key protection for online speech is Section 230 of the Communications Decency Act, which prevents online platforms from being held liable for speech they host but don’t create.

What we’re seeing in these cases is an attempt to get around Section 230 by recharacterizing speech issues as “product liability” issues. Instead of saying, “We’re going after platforms for hosting harmful speech,” the plaintiffs are saying, “We’re going after them for negligent product design.”

In other words, the choices that social media companies make about how to curate their feeds or encourage engagement.

Right. Some of the things they complained about were “endless scroll” (where you keep going down and the feed doesn’t stop at the end of a page), recommendation algorithms that promote content that a user is more likely to engage with, and beauty filters.

But ultimately, if you look at what they’re actually going after, it comes down to speech. When you talk about TikTok or YouTube being so engaging that it’s “addictive,” you’re talking about content: No matter how TikTok’s algorithm is designed, it wouldn’t be compelling to people if the content wasn’t compelling.

Similarly, in the California case, the plaintiff argued that Meta allowing beauty filters on images was a negligent product design, since they promote unrealistic beauty standards, which caused her to develop body image issues.

But that really just comes back to speech: The choice to use a filter is something that individual users do to express themselves. Providing those tools for users is a form of speech.

But aren’t many of these product design choices content-neutral? A defender of these verdicts might argue: Social media companies are manipulating minors into compulsively using their platforms, in a manner that’s bad for their mental health. And they’re doing this, in part, through push notifications, autoplaying videos, and endlessly scrolling feeds. So, why can’t we legally restrict their use of those features — without constraining the kinds of speech they’re allowed to platform?

Some people will say, “Why don’t we limit notifications — or kick people off after an hour — if they’re minors?” But in order to implement any set of rules or product design choices just for young people, these platforms would need to have a foolproof way of knowing who is a minor and who is an adult.

And that means age verification procedures, where they’re either checking everyone’s government-issued ID, or they’re using biometric data — or something else that requires everyone to submit identification before they can speak anywhere on the internet.

And that creates a lot of problems. It makes people’s data more vulnerable to identity theft, hackers, and scammers. It also means that your identity is tied to everything you do online. And that can be dangerous, especially for people who are talking about sensitive issues or protesting the government. The ability to speak and organize online anonymously is very important.

What if the product design restrictions applied to adults and minors alike? If we barred social media companies from issuing push notifications for everyone, that would avoid the age verification issue, right?

Many platforms give people the tools to do these things already. You can turn autoplay off. You can have a chronological feed. You can tailor your settings so that you don’t have these features.

If we’re saying, “Why can’t the government mandate these options?” I think that’s a very slippery slope. You might think, “Okay, who cares about push notifications? Why can’t the government just mandate that they not do push notifications?” But the rationale for that gets us into much broader territory.

It’s effectively saying: Since some people will have a problem with this, the government must micromanage the way that the product is made. Yet people can use all sorts of products in a problematic way: Fitness regimes, streaming services, food. And we’re not saying like, okay, the government gets to step in and tell these companies exactly how to do business in the way that would be least harmful to people. And that attitude is particularly dangerous when we’re talking about products involving speech.

A skeptic might argue that the slope here isn’t actually that slippery. After all, the government has already shown that it can enact targeted, content-neutral restrictions on speech without triggering a cascade of censorship.

For example, since 1990, there have been limits on the amount of advertising that can air during children’s programming in a given hour — and also a requirement that ads and content be clearly separated. Those measures are arguably more intrusive on speech than, say, banning autoplay of videos on a social media platform. And yet, the Children’s Television Act of 1990 didn’t lead to any really sweeping constraints on First Amendment rights.

I just think it makes a big difference if you’re talking about restricting speech for minors and restricting it for adults. And what you were just mentioning were restrictions that would apply to everybody.

Beyond the First Amendment issues, you’ve expressed some skepticism about the specific causal claims made by plaintiffs in these cases, namely that social media caused their mental health difficulties. Yet many social psychologists, most prominently Jonathan Haidt, have argued that these platforms are corrosive to children’s psychological well-being. So, why do you think the allegations here are overstated?

In the California case specifically, this young woman is alleging that, because she was on social media since she was very young, she developed mental health issues. But there was a lot of testimony showing that there were many other things going wrong in her life. She was exposed to domestic violence. She had troubles with her parents, troubles at school.

So the idea that social media directly caused her difficulties — rather than these life stressors that are well-known to cause harm — I think that’s kind of suspect.

And I think you see this problem in the broader research on social media’s mental health impacts. There’s often a correlation between depressive symptoms and heavy social media use because people who are having a difficult time at home and at school — people who are socially isolated — tend to use social media more than people in better circumstances.

How much do your views on the regulation of social media hinge on skepticism about the actual harms of these platforms? If we acquired evidence that there really were major impacts here — that autoplay and beauty filters were dramatically worsening kids’ mental health — would you support legal restrictions on these features? Or would First Amendment considerations override public health concerns, irrespective of the evidence?

The strength of the evidence is important for guiding the decision-making of individuals, parents, families, communities, and school districts. But even if we knew that beauty filters caused a lot of harm, the government still would not be justified in banning them, since they are avenues for speech. Plenty of people are not harmed by them.

There are so many things that harm some people, but that are useful to others. And I don’t think the existence of problematic use justifies banning those things for everyone.

I think talk of social media “addiction” can be unhelpful on this front. That language suggests that this is something that’s automatically harmful for everyone. And that just isn’t the case. Plenty of people use social media in a healthy way, in the same way that countless people can drink alcohol without it harming them, or eat a bag of chips without bingeing on them.

I think it’s the same way with social media. This is a technology that can harm some people, particularly those who already have psychological issues.

But it isn’t this addictive substance or a poison where you can’t even be exposed to it, or else. I think that view imbues smartphones with an almost mystical quality.

There are many cases, though, where we choose to heavily regulate a substance or practice — not because it harms everyone who engages with it — but rather, because it imposes massive harms on a minority of problem users. Gambling and alcohol are two examples. But even with opioids, many people can pop some pills and never develop a dependency. Yet some end up addicted and dying of overdoses. And for that reason, we heavily restrict access to opioids.

So, I feel like the question here might be less about whether social media is bad for everyone than whether it has truly large harms for problem users.

I think there are people who talk about it the way you do. But others describe social media as if it’s something that people are powerless against. But yes, I don’t think we have strong evidence that this is harmful in the way that addictive substances are. In fact, I think the evidence is really mixed. Some studies suggest that moderate smartphone use is actually correlated with better mental health outcomes.

You argue that, instead of seeking government restrictions on social media, parents should exercise more responsibility over their kids’ use of smartphones and apps.

Many parents argue that their capacity to monitor their children’s social media use is really limited and that they lack the tools to protect their kids from the harmful effects of these platforms. What would you say to them?

I think this is straightforward with very young children. Like, why is a 6-year-old having unfettered alone time on a digital device? In the California case, the plaintiff was using social media as a very young child. And at that age, parents definitely have control over what their kids do and see online; you can control whether your kid has access to a smartphone. With adolescents, there are areas where tech companies are working with parents. We’ve seen more parental controls being introduced in recent years. We’ve seen Meta roll out specific accounts for minors that have some restrictions on them. We’ve seen things like the introduction of phones that allow basic texting but not certain apps. So, I think private solutions are possible here. I think we can address people’s legitimate concerns without having the government infringe on free expression.

Tech

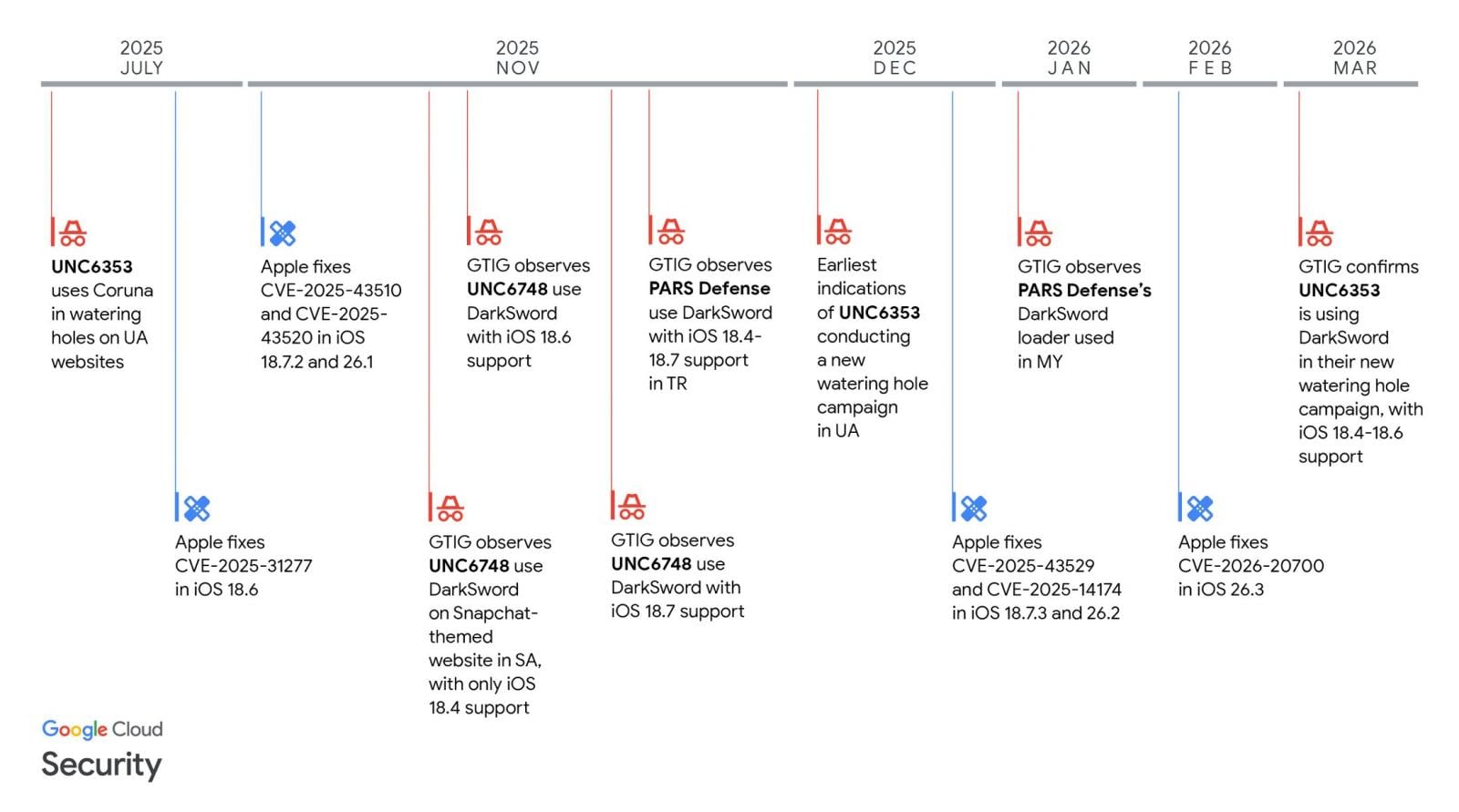

Apple expands iOS 18 updates to more iPhones to block DarkSword attacks

Apple has now made it possible for more iPhones still running iOS 18 to receive security updates that protect against the actively exploited DarkSword exploit kit.

“We enabled the availability of iOS 18.7.7 for more devices on April 1, 2026, so users with Automatic Updates turned on can automatically receive important security protections from web attacks called DarkSword,” reads a note in today’s iOS 18.7.7 security update changelog.

“The fixes associated with the DarkSword exploit first shipped in 2025.”

In March, researchers at Lookout, iVerify, and Google Threat Intelligence revealed a new “DarkSword” exploit kit that targeted iPhones running iOS 18.4 through 18.7.

The six vulnerabilities used by the DarkSword exploit kit are tracked as CVE-2025-31277, CVE-2025-43529, CVE-2026-20700, CVE-2025-14174, CVE-2025-43510, and CVE-2025-43520.

While iOS exploits have typically been used in highly targeted spyware campaigns, this iOS exploit kit was used much more widely, including by Turkish commercial surveillance vendor PARS Defense, a threat actor tracked as UNC6748, and a suspected Russian espionage group tracked as UNC6353.

In these attacks, GTIG observed three separate information-stealing malware families deployed on victims’ devices: a highly aggressive JavaScript infostealer named GhostBlade, the GhostKnife backdoor, and the GhostSaber JavaScript malware, which can execute code and steal data.

Since July 2025, with the release of iOS 18.6, Apple has been steadily fixing the flaws as they are disclosed in security updates pushed out to compatible devices.


However, by late 2025, Apple stopped offering iOS 18 updates to newer devices capable of running the newer iOS 26.

For those who decided to stay on iOS 18 rather than upgrade, access to security updates became limited, with newer devices no longer receiving patches for DarkSword vulnerabilities released in 2026.

Since then, only a small number of devices remained able to receive iOS 18 updates, and the last 18.7.6 update was offered only to iPhone XS, iPhone XS Max, and iPhone XR devices.

To make matters worse, a researcher released the DarkSword exploit kit on GitHub last month, making it accessible to other threat actors who wanted to target older iPhones.

Today, Apple made iOS 18.7.7 available to more devices, so that users who want to stay on the older operating system remain protected from the latest threats.

Devices eligible to receive the new update now include iPhone XR, iPhone XS, iPhone XS Max, iPhone 11 (all models), iPhone SE (2nd generation), iPhone 12 (all models), iPhone 13 (all models), iPhone SE (3rd generation), iPhone 14 (all models), iPhone 15 (all models), iPhone 16 (all models), iPhone 16e, iPad mini (5th generation – A17 Pro), iPad (7th generation – A16), iPad Air (3rd – 5th generation), iPad Air 11-inch (M2 – M3), iPad Air 13-inch (M2 – M3), iPad Pro 11-inch (1st generation – M4), iPad Pro 12.9-inch (3rd – 6th generation), and iPad Pro 13-inch (M4).

iPhone users still running iOS 18 with Automatic Updates enabled will now receive the latest version and protections against the DarkSword exploit kit.

Tech

Yeo’s lays off 25 staff in S’pore, moves can manufacturing operations to M’sia

It will consolidate production in its Johor and Selangor plants

Yeo Hiap Seng (Yeo’s) announced today (Mar 31) that it will retrench 25 employees at its Senoko facility in Singapore as it shifts its can manufacturing operations to Malaysia.

The company explained that consolidating production in its Johor and Selangor plants will help “optimise capacity utilisation and strengthen overall manufacturing efficiency” across its network.

Despite the move, Yeo’s Senoko site will remain its headquarters, as well as a hub for cross-border logistics and limited-scale production.

Yeo’s added that it will support affected staff through job placement services, career guidance, and counselling, and may offer them opportunities in Malaysia where suitable roles are available.

The company said it worked closely with the Food, Drinks and Allied Workers Union to ensure that the retrenchment package and transition support “reflect appreciation for the contributions of affected staff”.

The compensation packages will follow guidelines from Singapore’s Ministry of Manpower and will be based on each worker’s salary and length of service.

“These benefits will be commensurate with each employee’s salary and years of service.”

This is not the first round of layoffs for Yeo’s.

In Dec 2024, it cut 25 jobs after Oatly shut its Singapore plant—roles that had been created specifically for that production. Earlier, in 2022, the company retrenched 32 employees, citing shifts in consumer behaviour, retail challenges, and rising costs.

Financially, the SGX-listed firm reported a net profit of S$21.1 million for the year ending Dec 31, 2025, a significant increase from S$6.9 million the year before.

However, both overall revenue and core food and beverage revenue declined, which the company attributed to softer consumer spending and stronger competition in key markets.


Featured Image Credit: Google Street View/ Yeo’s via Facebook

Tech

UFC-Que Choisir Takes Ubisoft To French Court Over The Crew Shutdown

Longtime Slashdot reader Elektroschock writes: When Ubisoft pulled the plug on The Crew’s servers without warning, players were left with a worthless game they’d already paid for. Now, consumer watchdog UFC-Que Choisir is fighting back, demanding gamers’ right to play regardless of publisher whims. Supported by the “Stop Killing Games” movement, this landmark case challenges unfair terms before the Creteil Judicial Court (Val-de-Marne near Paris), and aims to protect players from disappearing games. The lawsuit that UFC-Que Choisir filed against Ubisoft on Tuesday alleges that the video game publisher “misled consumers about the permanence of their purchase and imposed abusive contractual clauses stripping players of ownership rights,” reports Reuters.

Tech

Intuit’s AI agents hit 85% repeat usage. The secret was keeping humans involved

When Intuit shipped AI agents to 3 million customers, 85% came back. The reason, according to the company’s EVP and GM: combining AI with human expertise turned out to matter more than anyone expected — not less.

Marianna Tessel, the financial software company’s EVP and GM, calls this AI-HI combination a “massive ask” from its customers, noting that it provides another level of confidence and trust.

“One of the things we learned that has been fascinating is really the combination of human intelligence and artificial intelligence,” Tessel said in a new VB Beyond the Pilot podcast. “Sometimes it’s the combination of AI and HI that gives you better results.”

Chatbots alone aren’t the answer

Intuit, the parent company of QuickBooks, TurboTax, MailChimp and other widely used financial products, was one of the first major enterprises to go all in on generative AI with its GenOS platform last June (long before fears of the “SaaSpocalypse” had SaaS companies scrambling to rethink their strategies).

Quickly, though, the company recognized that chatbots alone weren’t the answer in enterprise environments, and pivoted to what it now calls Intuit Intelligence. The dashboard-like platform features specialized AI agents for sales, tax, payroll, accounting and project management that users can interact with using natural language to gain insights on their data, automate tasks, and generate reports.

Customers report invoices are being paid 90% in full and five days faster, and that manual work has been reduced by 30%. AI agents help close books, categorize transactions, run payroll, automate invoice reminders and surface discrepancies.

For instance, one Intuit customer uncovered fraud after interacting with AI agents and asking questions about amounts that didn’t add up. “In the beginning it was like, ‘Is that an error?’ And as he dug in, he discovered very significant fraud,” Tessel said.

Why humans are still in the loop

Still, Intuit operates on the principle that humans are “always accessible,” Tessel said. Platforms are built in a way that users can ask questions of a human expert when they’re not getting what they need from the AI agent, or want a human to bounce ideas off of.

“I’m not talking about product experts,” Tessel said. “I’m talking about an actual accounting expert or tax expert or payroll expert.”

The platform has also been built to suggest human involvement in “high stakes” decision-making scenarios. AI goes to a certain level, then human experts review and categorize the rest. This provides a level of confidence, according to Tessel.

“We actually believe it becomes more needed and more powerful at the right moments,” she said. “The expert still provides things that are unique.”

The next step is giving customers the tools to perform next-gen tasks like vibe coding — but with simple architectures to reduce the burden for customers. “What we’re testing is this idea of, you can actually do coding without realizing that that’s what you are doing,” Tessel said.

For example, a merchant running a flower shop wants to ensure that they have the right amount of inventory in stock for Mother’s Day. They can vibe code an agent that analyzes previous years’ sales and creates purchase orders where stock is low. That agent could then be instructed to automatically perform that task for future Mother’s Days and other big holidays.

Some users will be more sophisticated and want the ability to dive deeper into the technology. “But some just want to express what they want to have happen,” Tessel said. “Because all they want to do is run their business.”

Listen to the full podcast to hear about:

- Why first-party data can create a “moat” for SaaS companies.
- Why showing AI’s logic matters more than a polished interface.
- Why 600,000 data points per customer changes what AI can tell you about your business.

You can also listen and subscribe to Beyond the Pilot on Spotify, Apple or wherever you get your podcasts.
