News Beat

Trump says new Epstein photos are ‘no big deal’ but claims he hasn’t seen them: Live updates

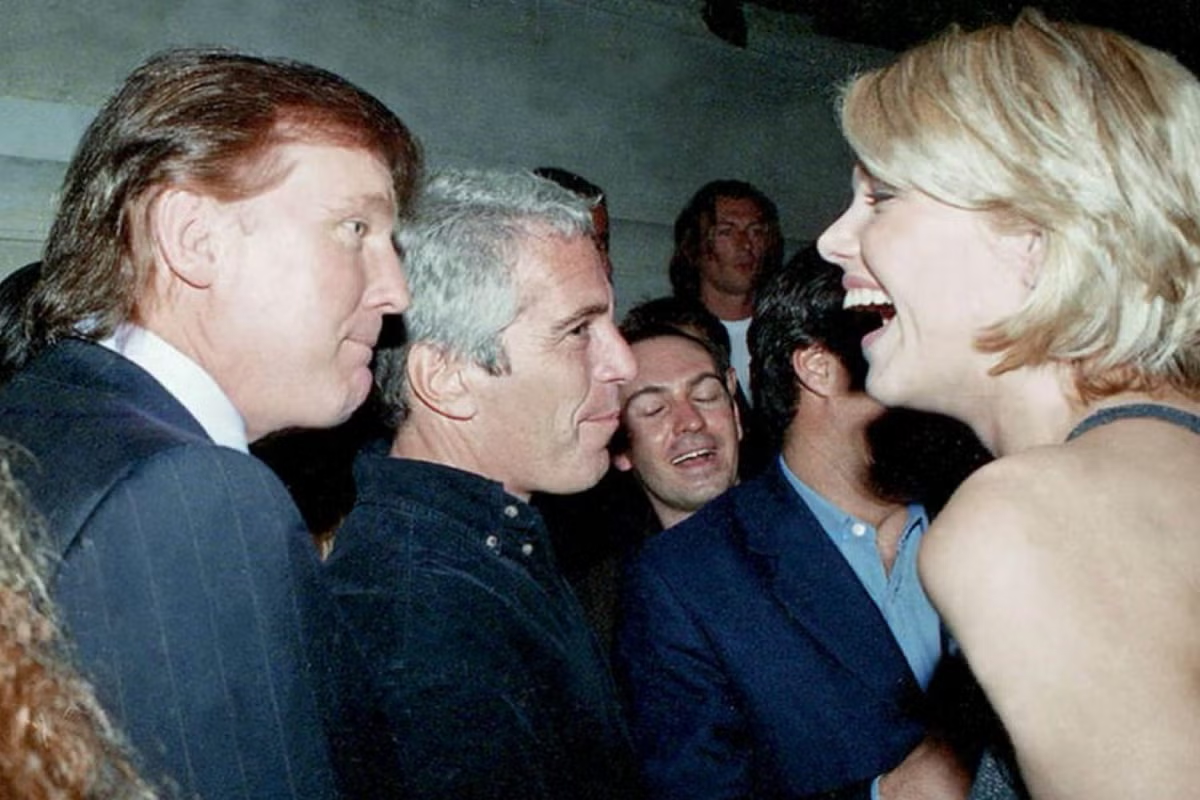

President Donald Trump said newly released photos showing he and Jeffrey Epstein mingling with several women were “no big deal,” but later claimed he had not seen them.

“Everybody knew this man [Epstein] – he was all over Palm Beach,” he told reporters Friday evening. “He has photos with everybody… there are hundreds and hundreds of people that have them.

“That’s no big deal… I know nothing about them.”

Trump has not been criminally charged or accused of wrongdoing and has consistently denied close involvement with Epstein in the later years of the convicted sex offender’s life.

It comes after House Democrats shared two tranches of photos from Epstein’s estate, which include numerous famous faces such as the president, former president Bill Clinton, former Treasury Secretary Larry Summers, Microsoft founder Bill Gates, and Britain’s former Prince Andrew.

The faces of some of the women who feature in the photos have been redacted by the Democrats, who have vowed to continue releasing photos.

“Once again, House Democrats are selectively releasing cherry-picked photos with random redactions to try and create a false narrative,” Abigail Jackson, White House Deputy Press Secretary, told The Independent.

The release of the photos comes ahead of a deadline set for next Friday December 19 for the Department of Justice to release the full files.

Epstein files: Full list of names in disgraced financier’s contact book

Jeffrey Epstein has garnered international attention for his crimes and alleged connection to powerful people while abusing girls for years.

The disgraced financier died by suicide in a New York prison cell in 2019 while awaiting trial on federal sex-trafficking charges. His case continues to be in the public eye for his alleged ties to the famous – and names that are found in his alleged contact book.

Those names include Donald Trump, Bill Clinton, Michael Jackson and former Prince Andrew.

Graig Graziosi13 December 2025 04:25

More than half of Americans think the government is hiding Epstein secrets

More than half of Americans disapprove of how President Donald Trump has been handling demands for transparency concerning the Jeffrey Epstein investigation.

A Reuters/Ipsos poll found that 70 percent of respondents said they believed the government was hiding information about Epstein, his alleged “clients,” and how those people might be tied to the victimization of young women in Epstein’s employ.

On Friday, Democrats from the House Oversight Committee shared a trove of photos from the Epstein estate, re-igniting calls from Epstein survivors, lawmakers, and many in the public for the full release of the Epstein investigation files by the Trump administration. Trump approved legislation that forces the information to be released, but Attorney General Pam Bondi has yet to actually release the files.

Graig Graziosi13 December 2025 03:59

Dem Congressman says unreleased Epstein estate photos are “disturbing” but won’t get specific

Congressman Robert Garcia, the top Democrat on the House Oversight Committee, said that some of the photos the panel received from the Epstein estate are “quite disturbing” and have to go through further review before they can be released to the public.

He made the comments on Friday night to CNN’s Kaitlan Collins on The Source. The segment aired hours after the House Oversight Democrats released a trove of photos from the Epstein estate, some of which include President Donald Trump and former President Bill Clinton.

Garcia said the photos contain “many, many” images of women, and that the oversight panel is ensuring that the individuals featured in the photos are protected.

He said some of the photos are “very disturbing” and feature “women, and their conditions,” though he did not elaborate on that comment.

Collins pressed him, telling Garcia he made it sound like there were images of women being “assaulted or in sexual acts.”

Garcia did not confirm or deny what was in the photos, only that they were “disturbing.”

“I think what I would say is that there are very disturbing photos, and many of those photos we have are not just of the island… but also of women,” he said. “And I think it’s something that is concerning to us because at the end of the day we’re going to protect the identities of the survivors and we’re working with the survivors very closely to ensure that we don’t reveal information that can be damaging to any of them.”

Graig Graziosi13 December 2025 03:20

Conservative pundit Scott Jennings complains that Democrats are trying to unfairly link Trump to Epstein

Conservative pundit and radio host Scott Jennings claimed that Democrats, in trying to link President Donald Trump to Jeffrey Epstein, only succeeded in linking fellow Democrats to the alleged sex trafficker.

“They have tried to create a narrative that Trump had something to do with Epstein, and all we now know is, is that a lot of Democrats have something to do with Epstein,” he said on CNN’s The Source on Friday night.

His comments come after the House Oversight Democrats released a trove of photos from Epstein’s estate, some of which include Trump, former President Bill Clinton, and Trump’s former advisor Steve Bannon, among others.

CNN’s Kaitlan Collins pushed back on Jennings’ comments, noting that Democrats were knowingly releasing photos that included members of their own party. She asked why they would do that if all they wanted to do was weave a narrative about Trump and Epstein.

“Well look, those guys [Clinton, etc] are already roadkill… we already know about that,” Jennings said.

He then insisted there was not a “single shred of evidence or any credible allegations that Trump did anything wrong at all or had anything to do with this, period.”

Jennings added that the president signed a law forcing the release of the Epstein files as a point proving he did nothing wrong, but CNN’s Van Jones questioned that argument, noting Trump only signed the law “under massive pressure.”

Graig Graziosi13 December 2025 02:54

WATCH: House Democrats release new photos from estate

Graig Graziosi13 December 2025 02:45

Republican House Oversight chair threatens contempt charges if Clintons don’t sit for Epstein depositions

Congressman James Comer, who chairs the House Oversight Committee, said on Friday that he would pursue contempt charges against former President Bill Clinton and former Secretary of State Hillary Clinton if they do not cooperate with Congress’s examination of the Epstein investigation, according to a Politico report.

He made the comments on Friday after the House Oversight Democrats released a trove of photos from the Epstein estate, some of which feature political figures including President Donald Trump and former President Bill Clinton.

Neither Trump nor Clinton have been accused of wrongdoing and both have said they distanced themselves from Epstein before he was convicted for sex crimes.

It has been well established that Clinton knew Epstein personally, though the former president has maintained he ended his associations with the disgraced financier before he was convicted for child sex trafficking.

Graig Graziosi13 December 2025 02:24

Congressman Suhas Subramanyam says Friday photo dump is just the “tip of the iceberg”

Congressman Suhas Subramanyam told MS NOW on Friday night that the Epstein estate photos released on Friday were just the “tip of the iceberg.”

He noted that the released photos are just items controlled by Epstein’s estate — they are not part of the investigative evidence held by the federal government that has come to be known as the “Epstein files.”

Graig Graziosi13 December 2025 02:15

Epstein survivor says that while some photos released Friday are “triggering,” it is worth the discomfort to see the information made public

Liz Stein, a Jeffrey Epstein survivor, told CNN that seeing the photos released today of the convicted sex trafficker, his home, and his friends can be “really difficult” for those who suffered abuse at his hands.

“When we’re seeing these photos, things that might seem like they don’t matter to the general public can really be meaningful to us. I was talking to a survivor earlier who said, ‘to the rest of the world that just looks like a room, but to me, that’s the phone that I picked up to call for help,’” she said.

“So these things can be really, incredibly triggering for us. And at the same time we realize how important it is for this to all come out.”

Stein said some of the images released Friday were “particularly difficult for me.”

Despite the potentially traumatic memories the images might bring back, Stein said the survivors are going to “stand united” and “sit in that discomfort” as more information becomes public about Epstein and the individuals in his orbit.

Graig Graziosi13 December 2025 02:12

House Oversight Republicans blast their Democratic colleagues after Epstein photo dump

A spokesperson for the House Oversight Republicans criticized House Oversight Democrats after the body released trove of photos from Jeffrey Epstein’s estate, including some that featured President Donald Trump.

“Once again, Ranking Member Robert Garcia and Oversight Committee Democrats are cherry-picking photos and making targeted redactions to create a false narrative about President Trump. We received over 95,000 photos and Democrats released just a handful,” a spokesperson said in a statement.

“Democrats’ hoax against President Trump has been completely debunked. Nothing in the documents we’ve received shows any wrongdoing. It is shameful Rep. Garcia and Democrats continue to put politics above justice for the survivors.”

Graig Graziosi13 December 2025 02:00