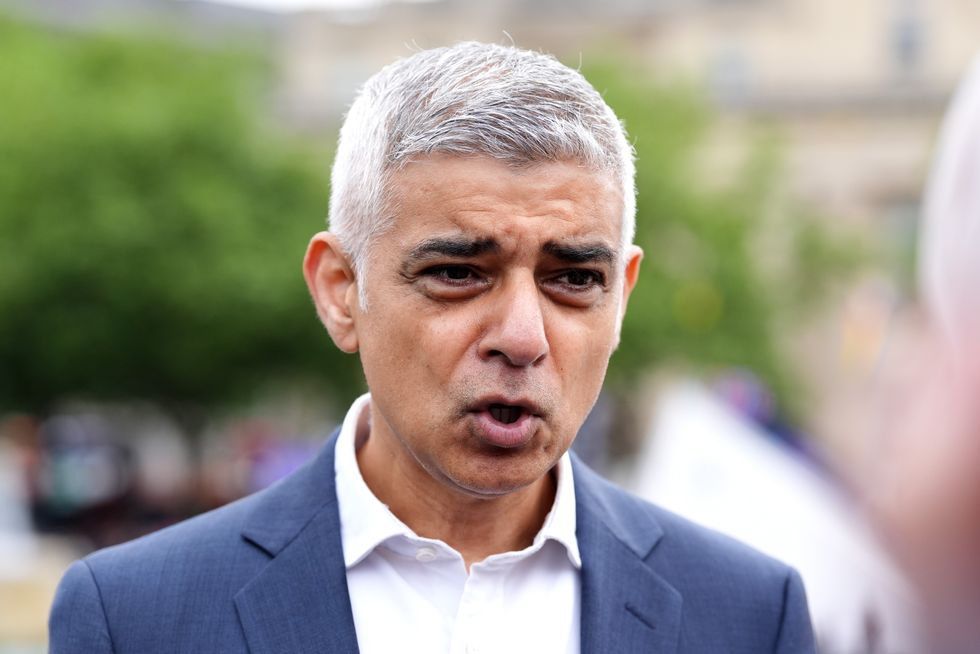

Sadiq Khan has been slammed after refusing to back a knife crime taskforce tabled by Reform UK.

The London Mayor was responding to a question from Reform’s London Assembly member Alex Wilson.

Wilson, the assembly member for Londonwide, was speaking in City Hall, listing incidents of knife crime.

He outlined a plan to recruit around 1,000 new police officers for a specialist knife crime taskforce, which was slapped down by the Labour mayor.

Wilson called on other groups from across the political spectrum to support the party’s amendment to the Labour mayor’s budget, demanding the recruitment of nearly 1,000 police officers to join a knife crime taskforce.

Reform claims the plan would be funded by cutting Khan’s staffing budget back to 2016 levels, which the party says would save over £75 million.

When asking Khan about the proposals during Mayor’s Question Time, Wilson listed out a series of knife crime incidents in the city, asking: “Would you encourage members of other groups from your own group in the Labour Party, from the Conservatives perhaps anybody, to second that amendment so that it can be discussed and debated in full?”

Khan responded saying: “As a rule of thumb, I never encourage anyone to work with Reform.”

Alex Wilson, Reform UK’s London Assembly member, told GB News: “Today, Reform proposed a cross party resolution to London’s knife crime crisis.

“Our funded plan seeks to hire 1,000 new specialist police officers to create a new knife crime taskforce.

“Sadiq’s arrogant dismissal of our suggestion shows that tackling London’s knife crime emergency is clearly not a priority of his.

“Let’s be clear – The London Mayor is putting politics over people’s lives. Tackling knife crime is, and always will be, Reform’s number one priority in London.”

It comes after Reform’s Deputy Leader Richard Tice, alongside Wilson, called in a letter last week for a ramp-up of stop and search, as well as a boosted police presence.

The letter said: “In your London, we’re now at a point where parents have the constant worry they may receive a call from school telling them their child is not returning from school.

“Not because they’re at an after-school club. Not because they’ve got a detention. Because they’ve been stabbed to death.”

In 2024, there were more than 15,000 knife-related offences in London, 2,200 up on the year before.

Meanwhile, the number of teenagers stabbed to death last year rose to 18, five times more than the number of youngsters killed in 2022.
