Crypto World

How the 2026 U.S. Midterm Elections Could Reshape Crypto Markets

TLDR:

- Prediction markets show a 60% Republican Senate and 83% Democratic House probability in 2026 elections.

- The GENIUS Act, enacted in 2025, awaits full implementation within 12 to 24 months after the midterms.

- ERC20 stablecoin supply surpassed $150 billion in 2024, approaching highs last seen during the 2021 cycle.

- A divided Congress points to gradual regulatory clarity, favoring steady capital inflows over sudden market shifts.

The 2026 U.S. midterm elections are drawing close attention from crypto markets worldwide. At the center of that attention is the GENIUS Act, a landmark stablecoin law enacted in 2025.

Prediction markets currently show a 60% probability of Republican Senate control and an 83% probability of Democratic House control.

That split points to a divided Congress as the most likely outcome. For crypto markets, this political structure could determine how quickly regulatory clarity translates into capital movement.
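As a rough check on that base case, the two quoted probabilities can be combined into joint outcomes. The sketch below assumes the Senate and House results are statistically independent, which is a simplification the prediction markets themselves do not state (the two races are likely correlated). The variable names are illustrative only.

```python
# Probabilities quoted from prediction markets in the article.
p_senate_rep = 0.60
p_house_dem = 0.83

# ASSUMPTION (not from the source): the two chamber outcomes are independent.
outcomes = {
    ("R Senate", "D House"): p_senate_rep * p_house_dem,
    ("R Senate", "R House"): p_senate_rep * (1 - p_house_dem),
    ("D Senate", "D House"): (1 - p_senate_rep) * p_house_dem,
    ("D Senate", "R House"): (1 - p_senate_rep) * (1 - p_house_dem),
}

# Single most likely pairing, and total probability that the chambers split.
most_likely = max(outcomes, key=outcomes.get)
p_divided = outcomes[("R Senate", "D House")] + outcomes[("D Senate", "R House")]

for combo, p in sorted(outcomes.items(), key=lambda kv: -kv[1]):
    print(f"{combo[0]} + {combo[1]}: {p:.1%}")
print(f"Chambers held by different parties: {p_divided:.1%}")
```

Under that independence assumption, a Republican Senate paired with a Democratic House is the single most likely combination at roughly 50%, and some form of split control comes to about 57%.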

Why the Midterm Election Outcome Matters for Stablecoin Regulation

The 2026 midterms carry direct consequences for how the GENIUS Act moves toward full implementation. Enacted in 2025, the law established the first federal framework governing stablecoins in the United States.

Full implementation is expected to arrive within 12 to 24 months following the November 2026 elections. The political composition of Congress after that vote will influence how smoothly that process unfolds.

A divided Congress, the current base case, reduces the probability of sudden or sweeping regulatory reversals. Instead, markets can expect incremental policy progress as implementation details surface over time.

This gradual approach allows institutions and traders to adjust their positioning steadily. It also lowers the risk of abrupt disruption to existing market structures built around stablecoin liquidity.

“Regulation does not follow price—it reshapes the conditions under which price forms.” — XWIN Research Japan

Broader legislative efforts, such as the CLARITY Act, face a harder path under split congressional control. Without a unified legislative majority, comprehensive digital asset market reform may move slowly.

Crypto participants should therefore expect a multi-year regulatory window rather than a single decisive moment. Each phase of implementation will carry its own market repricing effect.

The midterms will not produce an overnight transformation in crypto markets. However, they will set the regulatory tempo for the following two years.

That tempo matters enormously for institutional capital planning cycles. A stable, predictable regulatory environment consistently attracts longer-term capital commitments into digital asset markets.

Stablecoin Supply Data Points to a Liquidity Cycle Already in Motion

On-chain data from CryptoQuant shows that the ERC20-based stablecoin supply has exceeded $150 billion as of 2024. That level approaches the historical highs last recorded during the 2021 market cycle.

Stablecoin supply functions as the most direct available measure of crypto market liquidity. When supply expands at this scale, it signals that capital is being staged ahead of broader risk allocation.

📊 CryptoQuant data confirms total ERC20 stablecoin supply surpassed $150 billion in 2024, nearing all-time highs.

Historical market patterns show that stablecoin supply growth has consistently preceded major bull cycles. The current supply level suggests that liquidity is already structurally present across the market.

This condition holds even as short-term volatility continues to affect crypto asset prices. Markets have historically used such periods of elevated liquidity to absorb risk before moving higher.

The combination of the GENIUS Act’s regulatory timeline and current supply data creates a specific market setup. Liquidity appears to be accumulating well ahead of the formal regulatory catalyst the midterms may deliver.

If divided government produces gradual clarity as expected, markets could reprice steadily throughout the implementation window.

That measured repricing environment tends to support sustained capital inflows rather than short-lived speculative spikes.

Ultimately, the 2026 midterms may not reshape crypto markets through legislation alone. Their larger role may be confirming the regulatory environment under which the next liquidity cycle accelerates.

The stablecoin supply structure already suggests that a foundation is forming. The election outcome will determine how quickly that foundation translates into the next market phase.

Crypto World

F1’s Multi-Million Crypto Sponsorships at Risk as Middle East Conflict Forces Race Cancellations: FIA

Two Formula One races in the Middle East face cancellation due to ongoing regional conflict, threatening major cryptocurrency sponsorship deals with F1 teams.

Two Formula One races in the Middle East are set to be canceled because of ongoing war in the region, according to multiple reports. The FIA is maintaining contact with local authorities as it evaluates the situation regarding upcoming F1 races in Bahrain and Saudi Arabia.

The cancellations threaten crypto’s multi-million dollar F1 sponsorship investments. Other major business events across the UAE, including Middle East Energy Dubai and the Dubai International Boat Show, have also been postponed or delayed. This comes as crypto brands already face headwinds on F1 vehicles, with the sector reeling from high-profile collapses like FTX, which sponsored Mercedes AMG F1.

Sources: CoinDesk | Yahoo Sports | Road and Track

This article was generated automatically by The Defiant’s AI news system from publicly available sources.

Crypto World

Hoskinson might be wrong about the future of decentralized compute

The blockchain trilemma reared its head once more at Consensus in Hong Kong in February, putting Charles Hoskinson, the founder of Cardano, somewhat on the back foot: he had to reassure attendees that hyperscalers like Google Cloud and Microsoft Azure are not a risk to decentralisation.

The point was made that major blockchain projects need hyperscalers, and that one shouldn’t be concerned about a single point of failure because:

- Advanced cryptography neutralizes the risk

- Multi-party computation distributes key material

- Confidential computing shields data in use

The argument rested on the idea that ‘if the cloud cannot see the data, the cloud cannot control the system,’ and it was left there due to time constraints.

But there’s an alternative to Hoskinson’s argument in favor of hyperscalers that deserves more attention.

MPC and Confidential Computing Reduce Exposure

This was something of a strategic bastion in Hoskinson’s argument: that technologies like multi-party computation (MPC) and confidential computing ensure that hardware providers cannot access the underlying data.

They are powerful tools. But they do not dissolve the underlying risk.

MPC distributes key material across multiple parties so that no single participant can reconstruct a secret. That meaningfully reduces the risk of a single compromised node. However, the security surface expands in other directions. The coordination layer, the communication channels and the governance of participating nodes all become critical.

Instead of trusting a single key holder, the system now depends on a distributed set of actors behaving correctly and on the protocol being implemented correctly. The single point of failure does not disappear. In fact, it simply becomes a distributed trust surface.
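To make the idea of distributing key material concrete, here is a toy sketch of additive secret sharing, one of the simplest building blocks behind MPC. It is an illustration under stated assumptions, not the scheme used by Cardano or any production protocol: the modulus, function names and three-party setup are all hypothetical choices.

```python
import secrets

# Toy field modulus (2**127 - 1 is a Mersenne prime); an illustrative choice.
PRIME = 2**127 - 1

def split_secret(secret: int, n_parties: int) -> list[int]:
    """Split `secret` into n additive shares that sum to it modulo PRIME."""
    # The first n-1 shares are uniformly random; the last one is fixed
    # so that the total equals the secret.
    shares = [secrets.randbelow(PRIME) for _ in range(n_parties - 1)]
    last = (secret - sum(shares)) % PRIME
    return shares + [last]

def reconstruct(shares: list[int]) -> int:
    """Recombine shares; only the full set yields the original secret."""
    return sum(shares) % PRIME

key = secrets.randbelow(PRIME)          # hypothetical signing key
shares = split_secret(key, n_parties=3)
assert reconstruct(shares) == key       # all three parties together recover it
```

Any single share is a uniformly random number and reveals nothing about the key, but reconstruction requires every party to be available and to behave correctly, which is the distributed trust surface described above.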

Confidential computing, particularly trusted execution environments, introduces a different trade-off. Data is encrypted during execution, which limits exposure to the hosting provider.

But Trusted Execution Environments (TEEs) rely on hardware assumptions. They depend on microarchitectural isolation, firmware integrity and correct implementation. Academic literature has repeatedly demonstrated that side-channel and architectural vulnerabilities continue to emerge across enclave technologies. The security boundary is narrower than traditional cloud, but it is not absolute.

More importantly, both MPC and TEEs often operate on top of hyperscaler infrastructure. The physical hardware, virtualization layer and supply chain remain concentrated. If an infrastructure provider controls access to machines, bandwidth or geographic regions, it retains operational leverage. Cryptography may prevent data inspection, but it does not prevent throughput restrictions, shutdowns, or policy interventions.

Advanced cryptographic tools make specific attacks harder, but they still do not remove infrastructure-level failure risk. They simply replace a visible concentration with a more complex one.

The ‘No L1 Can Handle Global Compute’ Argument

Hoskinson made the point that hyperscalers are necessary because no single Layer 1 can handle the computational demands of global systems, referencing the trillions of dollars that have helped to build such data centres.

Of course, Layer 1 networks were not built to run AI training loops, high-frequency trading engines, or enterprise analytics pipelines. They exist to maintain consensus, verify state transitions and provide durable data availability.

He is correct on what Layer 1 is for. But global systems mainly need results that anyone can verify, even if the computation happens elsewhere.

In modern crypto infrastructure, heavy computation increasingly happens off-chain. What matters is that results can be proven and verified onchain. This is the foundation of rollups, zero-knowledge systems and verifiable compute networks.
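The asymmetry behind this model, where producing a result is expensive but checking it is cheap, can be shown with a deliberately simple toy. The sketch below is not a zero-knowledge proof or a rollup; it uses integer factorization as a stand-in workload, and the function names are hypothetical.

```python
def factor_offchain(n: int) -> tuple[int, int]:
    """Expensive step: trial division to find a nontrivial factor pair."""
    d = 2
    while d * d <= n:
        if n % d == 0:
            return d, n // d
        d += 1
    raise ValueError("n has no nontrivial factors")

def verify_onchain(n: int, claim: tuple[int, int]) -> bool:
    """Cheap step: two range checks and one multiplication."""
    p, q = claim
    return 1 < p < n and 1 < q < n and p * q == n

n = 1_000_003 * 1_000_033          # arbitrary example input
claim = factor_offchain(n)         # heavy work, done wherever compute is available
print(verify_onchain(n, claim))    # light check, suitable for a verifier
```

The verifier never repeats the search; it only checks the claimed answer. The point of the surrounding argument is that whoever runs `factor_offchain` in this picture still depends on some physical infrastructure.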

Focusing on whether an L1 can run global compute misses the core issue of who controls the execution and storage infrastructure behind verification.

If computation happens offchain but relies on centralized infrastructure, the system inherits centralized failure modes. Settlement remains decentralized in theory, but the pathway to producing valid state transitions is concentrated in practice.

The issue should be about dependency at the infrastructure layer, not computational capacity inside Layer 1.

Cryptographic Neutrality Is Not the Same as Participation Neutrality

Cryptographic neutrality is a powerful idea and something Hoskinson used in his argument. It means rules cannot be arbitrarily changed, hidden backdoors cannot be introduced and the protocol remains fair.

But cryptography runs on hardware.

That physical layer determines who can participate, who can afford to do so and who ends up excluded, because throughput and latency are ultimately constrained by real machines and the infrastructure they run on. If hardware production, distribution, and hosting remain centralized, participation becomes economically gated even when the protocol itself is mathematically neutral.

In high-compute systems, hardware is the game-changer. It determines cost structure, who can scale, and resilience under censorship pressure. A neutral protocol running on concentrated infrastructure is neutral in theory but constrained in practice.

The priority should shift toward cryptography combined with diversified hardware ownership.

Without infrastructure diversity, neutrality becomes fragile under stress. If a small set of providers can rate-limit workloads, restrict regions, or impose compliance gates, the system inherits their leverage. Rule fairness alone does not guarantee participation fairness.

Specialization Beats Generalization in Compute Markets

Competing with AWS is often framed as a question of scale, but this too is misleading.

Hyperscalers optimize for flexibility. Their infrastructure is designed to serve thousands of workloads simultaneously. Virtualization layers, orchestration systems, enterprise compliance tooling and elasticity guarantees – these features are strengths for general-purpose compute, but they are also cost layers.

Zero-knowledge proving and verifiable compute are deterministic, compute-dense, memory-bandwidth constrained, and pipeline-sensitive. In other words, they reward specialization.

A purpose-built proving network competes on proof per dollar, proof per watt and proof per latency. When hardware, prover software, circuit design, and aggregation logic are vertically integrated, efficiency compounds. Removing unnecessary abstraction layers reduces overhead. Sustained throughput on persistent clusters outperforms elastic scaling for narrow, constant workloads.

In compute markets, specialization consistently outperforms generalization for steady, high-volume tasks. AWS optimizes for optionality. A dedicated proving network optimizes for one class of work.

The economic structure differs as well. Hyperscalers price for enterprise margins and broad demand variability. A network aligned around protocol incentives can amortize hardware differently and tune performance around sustained utilization rather than short-term rental models.

The competition becomes about structural efficiency for a defined workload.

Use Hyperscalers, But Do Not Be Dependent on Them

Hyperscalers are not the enemy. They are efficient, reliable, and globally distributed infrastructure providers. The problem is dependence.

A resilient architecture uses major vendors for burst capacity, geographic redundancy, and edge distribution, but it does not anchor core functions to a single provider or a small cluster of providers.

Settlement, final verification and the availability of critical artifacts should remain intact even if a cloud region fails, a vendor exits a market, or policy constraints tighten.

This is where decentralized storage and compute infrastructure become a viable alternative. Proof artifacts, historical records and verification inputs should not be withdrawable at a provider’s discretion. Instead, they should live on infrastructure that is economically aligned with the protocol and structurally difficult to turn off.

Hyperscalers should be used as an optional accelerator rather than something foundational to the product. Cloud can still be useful for reach and bursts, but the system’s ability to produce proofs and persist what verification depends on should not be gated by a single vendor.

In such a system, if a hyperscaler disappears tomorrow, the network would only slow down, because the parts that matter most are owned and operated by a broader network rather than rented from a big-brand chokepoint.

This is how to fortify crypto’s ethos of decentralization.

Crypto World

IOTA Tests Securitization Infrastructure That Could Reshape Real-World Asset Finance on Blockchain

TLDR:

- IOTA’s code reveals a three-tier securitization model mirroring traditional structured finance architecture.

- The infrastructure could support invoice factoring, SME lending, and energy project financing on-chain.

- Analysts link the testing to SALUS and ADAPT platforms operating within the AfCFTA trade framework.

- No IOTA Foundation statement confirms the purpose, but the architecture suits digital capital markets.

IOTA is currently testing a full securitization infrastructure on its blockchain, based on early code analysis. The architecture mirrors traditional structured finance models, dividing pooled assets into senior, mezzanine, and junior tranches.

This points toward a broader financial layer being constructed on the IOTA network. Community observers are connecting this work to platforms like SALUS, ADAPT, and TWIN. All three platforms operate within the African Continental Free Trade Area framework.

IOTA Code Points to a Foundational Structured Finance Layer

Securitization involves pooling real assets, like loans or invoices, and converting them into tradeable instruments. On IOTA, the code being tested applies this same principle across the network.

This structure points to a foundational layer for managing and structuring real-world assets on-chain.

The architecture reflects the three-tier model widely used in traditional structured finance. Senior tranches carry the lowest risk and hold first priority on repayment.

Mezzanine tranches occupy the middle ground, balancing risk and return. Junior tranches carry the highest risk but offer the greatest potential return.
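The repayment priority described above is often called a payment waterfall. The following is a minimal sketch of that logic, not a reconstruction of the code being tested on IOTA; the tranche sizes and the function name are hypothetical.

```python
def run_waterfall(cash: float, owed: dict[str, float]) -> dict[str, float]:
    """Pay tranches in priority order; each is paid in full before the next."""
    paid = {}
    for tranche in ("senior", "mezzanine", "junior"):
        payment = min(cash, owed[tranche])   # cannot pay more than is left
        paid[tranche] = payment
        cash -= payment
    return paid

# Hypothetical amounts owed to each tranche from a pool of assets.
owed = {"senior": 70.0, "mezzanine": 20.0, "junior": 10.0}

# If the pool collects only 85 of the 100 owed, losses hit the junior
# tranche first, then the mezzanine; the senior tranche is paid in full.
print(run_waterfall(85.0, owed))
```

With a shortfall of 15, the junior tranche receives nothing and the mezzanine tranche absorbs the remaining loss, which is why junior investors demand the highest potential return.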

Community analyst Salima flagged this on X, noting the architecture fits platforms like SALUS and ADAPT. She pointed out that the code does not appear to be a standalone product.

Rather, it resembles the base layer for managing digital real-world assets at scale. Any direct link to AfCFTA trade platforms remains unconfirmed at this stage.

What stands out is that this process could run entirely on IOTA without external financial rails. No third-party intermediaries or legacy systems would be required.

Portfolios of real-world assets could become programmable digital financial structures on-chain. Investors could then participate based on their individual risk profiles.

Trade Finance to Capital Markets: IOTA’s Potential Use Cases

The infrastructure on IOTA could support several practical financial applications. Invoice factoring and trade finance are among the most immediate potential use cases.

SME lending and productive financing also fit within this securitization model. Equipment leasing and energy projects are additional sectors where this architecture could apply.

Digital capital markets for real-world assets represent a wider area of interest. Tokenized portfolios could open participation to a broader global investor base.

This removes the geographic barriers that traditionally limit access to structured finance. IOTA’s feeless and scalable design makes it technically suited for this type of infrastructure.

The timing of these tests aligns with growing global interest in real-world asset tokenization. Traditional finance is increasingly exploring blockchain alternatives to legacy securitization models.

If IOTA’s architecture develops further, it could serve as a foundational layer for this shift. No official statement has come from the IOTA Foundation as of this writing.

As the code evolves, observers are watching for further technical developments and announcements. The current architecture does not confirm any specific platform or official partnership.

What is clear is that IOTA is building technical groundwork for real-world asset finance. The full scope and intent of this infrastructure is yet to be publicly confirmed.

Crypto World

Ethereum Foundation sells 5,000 ether to BitMine in $10.2 million OTC deal

The Ethereum Foundation (EF) said it finalized the sale of 5,000 ether (ETH) in an over-the-counter transaction with Bitmine Immersion Technologies, one of the top crypto treasury firms.

The sale cleared at an average price of $2,042.96 per ETH, the Foundation said, placing the transaction’s value at roughly $10.2 million.

The non-profit organization, established in 2014 to support the Ethereum blockchain and its ecosystem, said the funds will support its core operations, including protocol research and development, ecosystem growth, and community grants.

The transaction, it said, is in line with the policy that governs its reserve management. The framework aims to strike a balance between holding ETH and maintaining sufficient fiat or fiat-like assets to cover operating costs. EF currently aims to keep annual operating expenses near 15% of treasury value with a 2.5-year operating buffer, a strategy that determines how often it sells ETH.
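The figures above can be checked with simple arithmetic. The sketch below reproduces the deal value from the quoted price and, as an assumption not spelled out in the article, treats the operating buffer as annual expenses multiplied by the number of buffer years. The treasury value used in the example is hypothetical.

```python
# Figures quoted in the article.
eth_sold = 5_000
avg_price_usd = 2_042.96
deal_value_usd = eth_sold * avg_price_usd

opex_rate = 0.15      # annual operating expenses as a share of treasury value
buffer_years = 2.5    # length of the operating buffer

def buffer_target(treasury_value_usd: float) -> float:
    """ASSUMPTION: buffer = annual opex x buffer years (not stated in the source)."""
    return treasury_value_usd * opex_rate * buffer_years

print(f"Deal value: ${deal_value_usd:,.0f}")
print(f"Buffer as share of treasury: {opex_rate * buffer_years:.1%}")
# Hypothetical $1 billion treasury, used only to show the calculation.
print(f"Buffer on a $1bn treasury: ${buffer_target(1_000_000_000):,.0f}")
```

On those assumptions the sale comes to about $10.2 million, matching the reported figure, and the buffer would equal 37.5% of treasury value.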

The sale comes less than a month after the Ethereum Foundation began staking up to 70,000 ETH to support its operations and deepen its role in the Ethereum ecosystem.

Bitmine, helmed by Fundstrat’s Tom Lee, was the counterparty in the deal and is the largest publicly traded ether treasury firm, currently holding around 4.53 million ETH, worth more than $9.4 billion.

The firm’s portfolio is almost entirely ether. The company also holds around 195 BTC and more than $1 billion in cash, along with equity stakes. These stakes include a share of Beast Industries, the company behind YouTube creator MrBeast, after a $200 million investment in it, along with a 7% stake in the Worldcoin treasury firm Eightco.

Crypto World

Former UK PM Johnson Calls BTC a Scam, Draws Criticism From Bitcoiners

Boris Johnson, the former prime minister of the United Kingdom, called Bitcoin (BTC) a “Ponzi Scheme” that has less value than Pokémon cards, collectibles he said had a wide appeal and a multi-decade history.

Johnson wrote an opinion article published in the Daily Mail on Friday that began with a story about a friend who had given 500 British pounds, or about $661, to a man who promised to “double his money” by investing it in BTC.

The friend continued to pay additional “fees” to the scheme’s promoter over the next three and a half years, but was never able to retrieve his funds, despite sinking 20,000 British pounds, or about $26,474, which led to financial hardship, Johnson said.

“He was struggling to pay his bills. He wasn’t the only one, said my friend. Other people in the neighborhood were going through the same nightmare,” Johnson added. Johnson then argued that collectible Pokémon cards are a more tradable asset than BTC:

“These curious little Japanese cartoon beasties seem to exercise the same fascination over the five-year-old mind as they did 30 years ago. The kids drool over them. They boast and squabble about them.

Even if you remain pretty impervious to the charm of Pikachu, you can just about see why a decades-old Pikachu card is still a tradeable asset,” he added.

The opinion article drew a wave of online criticism from the Bitcoin community and crypto industry executives, who refuted it by explaining Bitcoin’s fundamental properties and arguing that debt-based fiat currency systems are Ponzi schemes.

Bitcoiners educate and ridicule Johnson for his take

“Bitcoin is not a Ponzi scheme. A Ponzi requires a central operator promising returns and paying early investors with funds from later ones,” Strategy co-founder Michael Saylor said in response.

“Bitcoin has no issuer, no promoter, and no guaranteed return, just an open, decentralized monetary network driven by code and market demand,” Saylor continued.

Pierre Rochard, CEO of The Bitcoin Bond Company, a BTC-backed financial product issuer, said that the UK is a “giant Ponzi scheme” financed by debt.

Crypto World

BTC Wobbles at $70K as France Deploys Ships to Hormuz and Trump Rejects Peace Deal Attempt (Report)

Meanwhile, Russia reportedly became the first country to send aid to Iran since the war began.

Bitcoin’s price moves remain muted despite the latest escalation in Middle East tensions. After today’s major strikes on a key Iranian island, Trump urged numerous countries to send military ships to protect oil exports through the Strait of Hormuz, and France was among the first to respond positively.

At the same time, Oman officials said they tried to broker a peace deal between the US and Iran, but to no avail.

France Sends Ships

CryptoPotato reported earlier on Saturday that the US military carried out a targeted operation against Iran’s Kharg Island, which President Trump described as “the most powerful bombing raids in Middle East history.” However, he added that the US intentionally did not attack any oil infrastructure but threatened to do so if Iran interferes in any way with the free and safe passage of ships through the Strait of Hormuz.

Hours later, Trump urged other countries, including China, France, Japan, South Korea, and the UK, to send “Warships” to the region to ensure the Strait remains open and safe. Subsequent reports suggested that France agreed to the US President’s request and is sending 10 warships to the region. However, the UK has refused to deploy any military aircraft carriers as of press time.

In a separate development, The Kobeissi Letter reported that Russia has become the first nation to officially aid Iran since the war began, sending 13 tons of medical aid.

No Peace Deal Yet

Another report that just came out indicated that officials from Oman have “reached out to the US in an attempt to broker a peace deal with Iran,” but the US President declined.

Some of the details on the matter suggest that Oman has tried “multiple times” to open a line of communication, but the White House was “not interested.” According to a cited senior official from the Trump administration, the President is “focused on pressing ahead with the war.”

BREAKING: Oman has reached out to the US in an attempt to broker a peace deal with Iran, but President Trump declined, per Reuters.

Details include:

1. Oman has tried “multiple times” to open a line of communication, but the White House is “not interested”

2. A senior White…

— The Kobeissi Letter (@KobeissiLetter) March 14, 2026

Bitcoin’s price continues to be unaffected by these developments, trading above $70,000 as of press time. However, the asset has historically sold off once most financial markets open late on Sunday and early on Monday.

Crypto World

Iran war cancels crypto events and hits multi-million dollar Formula 1 partnerships

The ongoing war in the Middle East has not only disrupted flows through the Strait of Hormuz; it has also hit a number of high-profile business events in the region, including major crypto conferences.

TOKEN2049 Dubai, one of the largest crypto conferences in the world, will not take place this year. Organizers said the event, originally scheduled for late April, has been postponed to April 21–22, 2027, due to ongoing uncertainty in the region.

The conference typically attracts more than 15,000 attendees, including founders, venture investors, developers and exchange executives.

Organizers said concerns around safety, international travel and logistics played a central role in the decision. Tickets and registrations will remain valid for next year’s event.

And this is just one of the crypto events.

TON Gateway Dubai, another crypto gathering, has been canceled outright. The event focused on The Open Network ecosystem and was expected to bring developers and partners working on the TON blockchain together in early May. The team behind the event said it scrapped the in-person conference due to heightened security risks in the region, and that those who purchased tickets received full refunds.

The impact has also reached global sports. The Bahrain Grand Prix scheduled for April 12 and the Saudi Arabian Grand Prix on April 19 are set to be canceled due to safety risks tied to the conflict, including nearby military strikes, disrupted airspace and travel complications for teams and staff.

Formula 1 and the FIA are expected to formally confirm the decision over the weekend.

Later Middle East races are still scheduled for now, including the Qatar Grand Prix and the season-ending Abu Dhabi Grand Prix in December. However, organizers are closely monitoring the regional security situation as travel and logistics remain uncertain across the Gulf.

The disruptions extend beyond crypto and motorsport. Several major business events in the UAE have also shifted dates. Middle East Energy Dubai, a large trade show that usually draws tens of thousands of attendees, has been moved to September. Affiliate World Global postponed its Dubai edition to 2027, while the Dubai International Boat Show has delayed its next event without announcing new dates.

Some sporting events across the region have also been postponed, including tennis tournaments in the UAE and football matches tied to Asian competitions.

Crypto industry impact

The Formula 1 cancellations carry additional implications for the cryptocurrency industry, which has become one of the sport’s largest sponsor categories.

Exchanges and blockchain companies have spent tens to hundreds of millions of dollars on F1 partnerships to reach a global audience and target fast-growing markets in the Middle East.

Cryptocurrency exchange OKX, which was recently valued at $25 billion, has been a primary partner of McLaren since 2022. It maintains prominent branding across the team’s cars, driver suits and trackside activations.

Crypto.com serves as a global Formula 1 partner through 2030, while exchanges such as Bybit have previously signed deals worth up to $150 million with top teams like Red Bull Racing. Kraken, Coinbase and Binance are also motorsport sponsors that may be affected.

OKX and Crypto.com did not immediately respond to requests for comment.

When a sponsored team reaches the podium, logos appear during televised ceremonies, interviews and trophy presentations, moments watched by a global audience of more than a billion viewers each year.

For Dubai-based and regional exchanges, the Bahrain and Saudi races were especially valuable because they connect global broadcasts with a local audience in the Gulf, one of the world’s most active crypto markets.

The hit carries weight because of Dubai’s role in the global crypto industry. Over the past few years, the emirate has positioned itself as one of the world’s most active crypto hubs.

A tax-friendly environment and the creation of the Virtual Assets Regulatory Authority, an independent regulator for the sector, helped attract exchanges, venture funds and startup teams seeking clearer rules than those found in many other jurisdictions.

Companies, including Binance, have built large operational footprints in the city, turning Dubai into a central meeting point for the global Web3 sector.

Crypto World

Coinbase and Bybit in Investment Talks: Could Bybit Finally Enter the US Crypto Market?

TLDR:

- Coinbase and Bybit are in early-stage investment talks, with no official confirmation from either party yet.

- Bybit’s valuation is estimated at around $25 billion, mirroring ICE’s recent investment deal with OKX.

- The partnership could give Dubai-based Bybit a fully regulated entry point into the US crypto market.

- COIN stock rose 1.18% on the news, gaining nearly 20% over the past month amid rising investor confidence.

Coinbase and Bybit are reportedly in discussions for a major investment deal. The potential partnership could give Dubai-based Bybit a regulated path into the US crypto market.

Three sources confirmed the talks to WuBlockchain, although neither party has officially commented. No final outcome has been reached as of now.

Reports indicate Bybit’s valuation could reach around $25 billion, based on a comparable deal involving OKX and ICE. The deal could also expand Coinbase’s global reach if confirmed.

Coinbase-Bybit Deal Could Open US Market to Offshore Exchange

The current discussions between Coinbase and Bybit cover a broad range of possible cooperation. This includes potential investment and other forms of formal collaboration between the two exchanges. However, the talks remain exploratory, and no binding agreement has been reached yet.

Bybit is the world’s second-largest offshore crypto exchange, headquartered in Dubai. The platform serves a large global user base and offers a wide range of trading products. Gaining a regulated foothold in the US market has been a strategic priority for the exchange.

Coinbase, as the largest US-based crypto exchange, brings deep regulatory experience to the table. Experts say its background in licensing, reporting, and customer protection could benefit Bybit considerably.

Through this partnership, Bybit could navigate US compliance requirements far more effectively. Coinbase’s strong track record with US regulators adds credibility that Bybit would need in the market.

The deal also mirrors other recent strategic moves in the crypto sector. ICE recently invested in offshore exchange OKX at a valuation of $25 billion.

Additionally, Coinbase acquired Deribit last year in a $2.9 billion transaction, reflecting a pattern of expansion through strategic deals.

Industry Response and Market Reaction to the Coinbase-Bybit Reports

Social media has seen a wave of speculation since WuBlockchain first reported the story. On X, OKX founder Star Xu shared his reaction to the reports, stating: “If it’s true, good for the industry. Higher standards, less regulatory arbitrage.” His comment reflects a broader positive sentiment toward regulated collaboration in the crypto space.

Experts note that a successful Coinbase-Bybit deal could benefit both parties in distinct ways. For Coinbase, it offers an expanded global reach and a stronger international presence.

For Bybit, the deal opens a structured and compliant entry into the US market. The partnership could also position both companies more competitively in an evolving global landscape.

The COIN stock price responded positively to the emerging reports. Shares closed at $195.53, recording a 1.18% gain in a single trading day. Over the past month, the stock has risen nearly 20%, pointing to growing investor confidence around Coinbase’s strategic direction.
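As a rough sanity check on those figures, the Python sketch below backs out the prices implied by the quoted gains. It assumes both percentages are simple returns measured against the earlier close, and it treats "nearly 20%" as exactly 20%, so the month-ago figure is only an approximate lower bound. The function and variable names are illustrative.

```python
def implied_earlier_price(latest_close: float, pct_gain: float) -> float:
    """Back out the earlier price from the latest close and a percentage gain."""
    return latest_close / (1 + pct_gain / 100)


close = 195.53           # closing price quoted in the article
daily_gain_pct = 1.18    # one-day gain quoted in the article
monthly_gain_pct = 20.0  # assumption: "nearly 20%" rounded up to 20%

prev_close = implied_earlier_price(close, daily_gain_pct)
month_ago = implied_earlier_price(close, monthly_gain_pct)

print(f"Implied previous close: ${prev_close:.2f}")
print(f"Implied price one month ago (approx.): ${month_ago:.2f}")
```

Under these assumptions, the previous close comes out near $193 and the month-ago price near $163.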

The talks remain in their early stages, and no official timeline has been confirmed. Both Coinbase and Bybit have yet to release any public statement on the matter. The industry continues to watch closely for further developments as speculation around the deal grows.

Can ETH Launch a Strong Rebound After Reclaiming $2K?

Ethereum is still in recovery mode, but the rebound is starting to look more organized than before. The asset continues to hold above the February base and is pressing closer to a key breakout area, which suggests buyers are gradually gaining confidence even if the larger trend has not fully turned yet.

Ethereum Price Analysis: The Daily Chart

The daily chart still carries the scars of the broader downtrend. ETH remains below the 100-day and 200-day moving averages, and both are still sloping in a way that favors sellers on the higher timeframe. The descending structure from the prior months also remains intact, so the market is not out of danger yet.

Even so, the picture has improved at the margin. Ethereum has spent several weeks defending the $1,800 zone and has now pushed back toward the $2,150 short-term resistance area. If that ceiling breaks, the next upside region to watch sits around $2,300 to $2,400, while the much larger barrier remains near $2,800. On the downside, losing the $1,800 support cluster would weaken the recovery thesis considerably and would likely lead to another round of capitulation.

ETH/USDT 4-Hour Chart

On the 4-hour chart, ETH looks more constructive than it does on the daily. The market has been printing a sequence of higher lows from the February bottom, and the rising trendline underneath the price shows that dip buyers are still active. That does not guarantee a breakout, but it does show that the short-term structure is leaning upward rather than flat or weak.

What matters now is the repeated test of $2,143. The asset has reached that level several times, which usually makes the next reaction important. A decisive move through it could trigger a fast push into the next supply zone around $2,400 and possibly higher. Another rejection, however, would likely keep ETH rotating sideways and send it back toward the trendline and the $1,800 support area.

Sentiment Analysis

Funding data shows that sentiment is no longer fearful, but it is not overheated either. Rates are mostly positive, which means long positioning is present and traders are generally leaning bullish. The readings are still moderate, however, compared to the stronger speculative phases seen in the past.

That is usually a healthier backdrop than an aggressively crowded long market. In other words, sentiment is supportive, but not euphoric. This gives ETH room to extend higher if price confirms with a breakout, though it also means the market still needs spot follow-through rather than relying purely on leveraged optimism.

Disclaimer: The views found on CryptoPotato are those of the writers quoted. They do not represent the opinions of CryptoPotato on whether to buy, sell, or hold any investments. You are advised to conduct your own research before making any investment decisions. Use the provided information at your own risk. See Disclaimer for more information.

Bitcoin Beats US Stocks as Strategy’s STRC Hints at a $776M BTC Purchase

Bitcoin (BTC) is on track for its strongest weekly gain since September 2025, defying a broader risk-off backdrop driven by the escalating US and Israel-Iran war.

Key takeaways:

- Strategy raised $776 million this week, which could lead to the purchase of over 11,000 BTC.

- US Bitcoin ETFs had $767 million in inflows in the same period.

STRC hints at $776 million in Bitcoin buying power

As of Saturday, BTC/USD had risen more than 7% over the past week to around $70,625. Over the same period, the benchmark S&P 500 (SPX) was down 1.60%.

The divergence came as STRC.LIVE estimates indicated that Strategy may have raised enough cash through at-the-market sales of its STRC instrument this week to buy more than 11,000 BTC.

At current prices, that would amount to roughly $776 million in Bitcoin.

STRC is Strategy’s exchange-traded income-paying instrument that helps it raise investor cash for Bitcoin buys. When it trades at or above its $100 par value, Strategy can issue more shares and turn that demand into fresh BTC-buying capital.

Last week, Strategy purchased 17,994 BTC, worth about $1.28 billion at the time. About 30% of that purchase was funded by STRC sale proceeds.
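The arithmetic behind these figures can be reproduced with a short Python sketch. It assumes the STRC.LIVE estimate was valued at the roughly $70,625 BTC/USD level quoted above, which the article does not state explicitly, and it uses the 11,000 BTC lower bound. The constant and function names are illustrative.

```python
BTC_PRICE_USD = 70_625   # assumption: the weekend BTC/USD level quoted in the article
ESTIMATED_BTC = 11_000   # lower bound of the STRC.LIVE estimate


def notional_usd(btc_amount: float, price_usd: float) -> float:
    """Dollar value of a BTC amount at a given price."""
    return btc_amount * price_usd


# This week's potential purchase, valued at the assumed price.
this_week_usd = notional_usd(ESTIMATED_BTC, BTC_PRICE_USD)

# Last week's purchase, using the figures quoted in the article.
LAST_WEEK_BTC = 17_994
LAST_WEEK_COST_USD = 1.28e9
STRC_FUNDED_SHARE = 0.30  # "about 30%" per the article

implied_avg_price = LAST_WEEK_COST_USD / LAST_WEEK_BTC
strc_funded_usd = LAST_WEEK_COST_USD * STRC_FUNDED_SHARE

print(f"This week's potential purchase: ${this_week_usd / 1e6:,.1f}M")
print(f"Implied average price last week: ${implied_avg_price:,.0f}")
print(f"STRC-funded portion last week: ${strc_funded_usd / 1e6:,.0f}M")
```

On these inputs, 11,000 BTC is worth just under $777 million, in line with the roughly $776 million figure, and about $384 million of last week's purchase would have come from STRC proceeds.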

Bitcoin’s price was also boosted by US spot Bitcoin ETFs, which attracted $767 million in net inflows across five straight trading days, reflecting growing demand for BTC despite the Middle East crisis.

Bitcoin gains during geopolitical crises

In the past, Bitcoin has experienced selloffs at the start of major geopolitical conflicts, only to recover and deliver larger gains.

In February 2022, Russia’s invasion of Ukraine triggered an initial sell-off, which was followed by a 40% BTC price rally.

A similar sequence played out after Israel’s June 2025 strikes on Iran. Bitcoin dipped in the immediate aftermath, then flipped higher, gaining about 25% over the next two months.

During the January 2020 US–Iran flare-up after General Qasem Soleimani’s killing, Bitcoin rose more than 50% overall, even though the first reaction included a brief price drop.

If history is any indication, Bitcoin’s price may rise further, with macro models hinting at a move toward $100,000 in the coming months.

Bear flag keeps BTC’s downside risks intact

Conversely, a bear flag formation on the Bitcoin chart increases the likelihood of a bull trap.

Bear flags form when the price rises inside an ascending, parallel channel after a strong downtrend. They usually resolve when the price breaks below the lower boundary and falls by as much as the previous downtrend’s height.

As of Saturday, Bitcoin showed signs of upside exhaustion near the flag’s upper boundary, which also aligns with the 50-day exponential moving average (EMA) at around $72,750.

Applying the bear flag principle to Bitcoin’s chart places the measured downside target at around $51,000.
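The measured-move rule described above can be sketched in a few lines of Python. The target is the breakdown level minus the height of the preceding downtrend (the "flagpole"). The input levels here are unit-less placeholders chosen only to show the arithmetic. They are not read from the article's Bitcoin chart, and the function name is hypothetical.

```python
def bear_flag_target(pole_high: float, pole_low: float, breakdown: float) -> float:
    """Measured-move target: breakdown level minus the flagpole height."""
    if pole_high <= pole_low:
        raise ValueError("pole_high must be above pole_low")
    flagpole_height = pole_high - pole_low
    return breakdown - flagpole_height


# Placeholder levels for illustration only (NOT taken from the BTC chart):
# a downtrend from 100 to 70, then a breakdown below the flag at 80.
example_target = bear_flag_target(pole_high=100.0, pole_low=70.0, breakdown=80.0)
print(example_target)
```

Because the rule describes how far price can fall "by as much as" the flagpole height, the result is best read as a maximum projected decline rather than a forecast.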

This article does not contain investment advice or recommendations. Every investment and trading move involves risk, and readers should conduct their own research when making a decision. While we strive to provide accurate and timely information, Cointelegraph does not guarantee the accuracy, completeness, or reliability of any information in this article. This article may contain forward-looking statements that are subject to risks and uncertainties. Cointelegraph will not be liable for any loss or damage arising from your reliance on this information.

-

Tech3 days ago

Tech3 days agoA 1,300-Pound NASA Spacecraft To Re-Enter Earth’s Atmosphere

-

News Videos5 days ago

News Videos5 days ago10th Algebra | Financial Planning | Question Bank Solution | Board Exam 2026

-

Crypto World14 hours ago

Crypto World14 hours agoHYPE Token Enters Net Deflation as HyperCore Buybacks Outpace Staking Rewards

-

Business4 days ago

Business4 days agoExxonMobil seeks to move corporate registration from New Jersey to Texas

-

Crypto World5 days ago

Crypto World5 days agoParadigm, a16z, Winklevoss Capital, Balaji Srinivasan among investors in ZODL

-

Fashion1 day ago

Fashion1 day agoWeekend Open Thread: Addict Lip Glow

-

Tech4 days ago

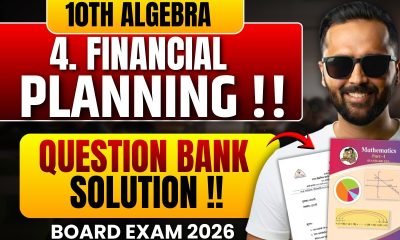

Tech4 days agoChatGPT will now generate interactive visuals to help you with math and science concepts

-

Sports4 hours ago

Why Duke and Michigan Are Dead Even Entering Selection Sunday

-

Sports7 days ago

Sports7 days agoThree share 2-shot lead entering final round in Hong Kong

-

Sports7 days ago

Sports7 days agoBraveheart Lakshya downs Lai in epic battle to enter All England Open final | Other Sports News

-

NewsBeat3 days ago

NewsBeat3 days agoResidents reaction as Shildon murder probe enters second day

-

Business6 days ago

Business6 days agoSearch for Nancy Guthrie Enters 37th Day as FBI Probes Wi-Fi Jammer Theory

-

Business3 days ago

Business3 days agoSearch Enters Sixth Week With New Leads in Tucson Abduction Case

-

NewsBeat5 days ago

NewsBeat5 days agoPagazzi Lighting enters administration as 70 jobs lost and 11 stores close across Scotland

-

Tech5 days ago

Tech5 days agoDespite challenges, Ireland sixth in EU for board gender diversity

-

Business6 hours ago

Business6 hours agoUS Airports Launch Donation Drives for Unpaid TSA Workers as Partial Government Shutdown Enters Fifth Week

-

NewsBeat3 days ago

NewsBeat3 days agoI Entered The Manosphere. Nothing Could Prepare Me For What I Found.

-

Business5 days ago

Business5 days agoSearch Enters 39th Day with FBI Tip Line Developments and No Major Breakthroughs

-

Crypto World57 minutes ago

Crypto World57 minutes agoCoinbase and Bybit in Investment Talks: Could Bybit Finally Enter the US Crypto Market?

-

Sports5 days ago

Sports5 days agoSkateboarding World Championships: Britain’s Sky Brown wins park gold