Social media is going the way of alcohol, gambling, and other social sins: Societies are deciding it’s no longer kid stuff. Lawmakers point to compulsive use, exposure to harmful content, and mounting concerns about adolescent mental health. So, many propose to set a minimum age, usually 13 or 16.

When regulators demand real enforcement rather than symbolic rules, platforms run into a basic technical problem. The only way to prove that someone is old enough to use a site is to collect personal data about who they are. And the only way to prove that you checked is to keep the data indefinitely. Age-restriction laws push platforms toward intrusive verification systems that often directly conflict with modern data-privacy law.

This is the age-verification trap. Strong enforcement of age rules undermines data privacy.

How Does Age Enforcement Actually Work?

Most age-restriction laws follow a familiar pattern. They set a minimum age and require platforms to take “reasonable steps” or “effective measures” to prevent underage access. What these laws rarely spell out is how platforms are supposed to tell who is actually over the line. At the technical level, companies have only two tools.

The first is identity-based verification. Companies ask users to upload a government ID, link a digital identity, or provide documents that prove their age. Yet in many jurisdictions, 16-year-olds do not have IDs. In others, IDs exist but are not digital, not widely held, or not trustworthy. Storing copies of identity documents also creates security and misuse risks.

The second option is inference. Platforms try to guess age based on behavior, device signals, or biometric analysis, most commonly facial age estimation from selfies or videos. This avoids formal ID collection, but it replaces certainty with probability and error.

In practice, companies combine both. Self-declared ages are backed by inference systems. When confidence drops, or regulators ask for proof of effort, inference escalates to ID checks. What starts as a light-touch checkpoint turns into layered verification that follows users over time.
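The escalation described above can be sketched as a small decision function. This is an illustration only, not any platform's actual logic: the `AgeSignal` fields, the `MIN_AGE` and `CONFIDENCE_FLOOR` values, and the step names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

MIN_AGE = 16             # hypothetical legal minimum age
CONFIDENCE_FLOOR = 0.9   # hypothetical: below this, inference is not trusted


@dataclass
class AgeSignal:
    declared_age: int                       # what the user typed at sign-up
    estimated_age: Optional[float] = None   # output of an inference model, if run
    confidence: Optional[float] = None      # model confidence in [0, 1], if run
    id_verified_age: Optional[int] = None   # age from a document check, if done


def next_step(sig: AgeSignal) -> str:
    """Return the next action in a layered age check (illustrative only)."""
    # A completed ID check overrides every weaker signal.
    if sig.id_verified_age is not None:
        return "allow" if sig.id_verified_age >= MIN_AGE else "block"
    # Self-declaration is the first, lightest layer.
    if sig.declared_age < MIN_AGE:
        return "block"
    # Declared ages are backed by inference.
    if sig.estimated_age is None or sig.confidence is None:
        return "run_inference"
    # Low confidence, or an estimate that contradicts the declaration,
    # escalates to an ID check.
    if sig.confidence < CONFIDENCE_FLOOR or sig.estimated_age < MIN_AGE:
        return "request_id"
    return "allow"
```

Note that nothing in this flow is terminal for a user who passes: any new signal that lowers confidence sends the same account back through `request_id`.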

What Are Platforms Doing Now?

This pattern is already visible on major platforms.

Meta has deployed facial age estimation on Instagram in multiple markets, using video-selfie checks through third-party partners. When the system flags users as possibly underage, it prompts them to record a short selfie video. An AI system estimates their age and, if it decides they are under the threshold, restricts or locks the account. Appeals often trigger additional checks, and misclassifications are common.

TikTok has confirmed that it also scans public videos to infer users’ ages. Google and YouTube rely heavily on behavioral signals tied to viewing history and account activity to infer age, then ask for government ID or a credit card when the system is unsure. A credit card functions as a proxy for adulthood, even though it says nothing about who is actually using the account. The Roblox games site, which recently launched a new age-estimate system, is already suffering from users selling child-aged accounts to adult predators seeking entry to age-restricted areas, Wired reports.

For a typical user, age is no longer a one-time declaration. It becomes a recurring test. A new phone, a change in behavior, or a false signal can trigger another check. Passing once does not end the process.

How Do Age-Verification Systems Fail?

These systems fail in predictable ways.

False positives are common. Platforms misidentify as minors adults who have youthful faces, share family devices, or show otherwise unusual usage patterns. Platforms then lock those accounts, sometimes for days. False negatives also persist. Teenagers learn quickly how to evade checks by borrowing IDs, cycling accounts, or using VPNs.

The appeal process itself creates new privacy risks. Platforms must store biometric data, ID images, and verification logs long enough to defend their decisions to regulators. So if an adult who is tired of submitting selfies to verify their age finally uploads an ID, the system must now secure that stored ID. Each retained record becomes a potential breach target.

Scale that experience across millions of users, and you bake the privacy risk into how platforms work.

Is Age Verification Compatible With Privacy Law?

This is where emerging age-restriction policy collides with existing privacy law.

Modern data-protection regimes all rest on similar ideas: Collect only what you need, use it only for a defined purpose, and keep it only as long as necessary.
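As a rough illustration of what those three principles would look like in a verification log, consider the sketch below. It assumes a hypothetical record that stores only the outcome of a check (not the selfie or ID image), tags it with a single purpose, and is purged after a fixed window. The `VerificationRecord` type and the 30-day `RETENTION` value are invented for this example and do not come from any statute or platform.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List

RETENTION = timedelta(days=30)  # hypothetical retention window


@dataclass(frozen=True)
class VerificationRecord:
    user_id: str
    passed: bool                       # collect only what you need: the outcome
    checked_at: datetime               # needed to enforce the retention window
    purpose: str = "age_verification"  # use only for a defined purpose


def purge_expired(records: List[VerificationRecord],
                  now: datetime) -> List[VerificationRecord]:
    """Keep only records still inside the retention window."""
    return [r for r in records if now - r.checked_at <= RETENTION]


now = datetime(2025, 1, 31, tzinfo=timezone.utc)
records = [
    VerificationRecord("u1", True, datetime(2024, 12, 1, tzinfo=timezone.utc)),
    VerificationRecord("u2", True, datetime(2025, 1, 15, tzinfo=timezone.utc)),
]
kept = purge_expired(records, now)  # only the record for "u2" remains
```

A log this thin is easy to secure, but it is also hard to use as evidence that a specific check was done properly.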

Age enforcement undermines all three.

To prove they are following age-verification rules, platforms must log verification attempts, retain evidence, and monitor users over time. When regulators or courts ask whether a platform took reasonable steps, “We collected less data” is rarely persuasive. For companies, defending themselves against accusations of neglecting to properly verify age supersedes defending themselves against accusations of inappropriate data collection.

This ordering of priorities is not an explicit choice by voters or policymakers, but a reaction to enforcement pressure and to how companies perceive their litigation risk.

Less Developed Countries, Deeper Surveillance

Outside wealthy democracies, the trade-off is even starker.

Brazil’s Statute of the Child and Adolescent (ECA, from its Portuguese name) imposes strong child-protection duties online, while its data-protection law restricts data collection and processing. Now providers operating in Brazil must adopt effective age-verification mechanisms and can no longer rely on self-declaration alone for high-risk services. Yet they also face uneven identity infrastructure and widespread device sharing. To compensate, they rely more heavily on facial estimation and third-party verification vendors.

In Nigeria, many users lack formal IDs. Digital service providers fill the gap with behavioral analysis, biometric inference, and offshore verification services, often with limited oversight. Audit logs grow, data flows expand, and the practical ability of users to understand or contest how companies infer their age shrinks accordingly. Where identity systems are weak, companies do not protect privacy. They bypass it.

The paradox is clear. In countries with less administrative capacity, age enforcement often produces more surveillance, not less, because inference fills the void of missing documents.

How Do Enforcement Priorities Change Expectations?

Some policymakers assume that vague standards preserve flexibility. In the U.K., then–Digital Secretary Michelle Donelan argued in 2023 that requiring certain online safety outcomes without specifying the means would avoid mandating particular technologies. Experience suggests the opposite.

When disputes reach regulators or courts, the question is simple: Can minors still access the platform easily? If the answer is yes, authorities tell companies to do more. Over time, “reasonable steps” become more invasive.

Repeated facial scans, escalating ID checks, and long-term logging become the norm. Platforms that collect less data start to look reckless by comparison. Privacy-preserving designs lose out to defensible ones.

This pattern is familiar from other domains, including online sales-tax enforcement. After courts settled that large platforms had an obligation to collect and remit sales taxes, companies began continuous tracking and storage of transaction destinations and customer location signals. That tracking is not abusive, but once enforcement requires proof over time, companies build systems to log, retain, and correlate more data. Age verification is moving the same way. What begins as a one-time check becomes an ongoing evidentiary system, with pressure to monitor, retain, and justify user-level data.

The Choice We Are Avoiding

None of this is an argument against protecting children online. It is an argument against pretending there is no trade-off.

Some observers present privacy-preserving age proofs involving a third party, such as the government, as a solution, but such proofs inherit the same structural flaw: Many users who are legally old enough to use a platform do not have government ID. In countries where the minimum age for social media is lower than the age at which ID is issued, platforms face a choice between excluding lawful users and monitoring everyone. Right now, companies are making that choice quietly, after building systems and normalizing behavior that protect them from the greater legal risks. Age-restriction laws are not just about kids and screens. They are reshaping how identity, privacy, and access work on the Internet for everyone.

The age-verification trap is not a glitch. It is what you get when regulators treat age enforcement as mandatory and privacy as optional.

From Your Site Articles

Related Articles Around the Web

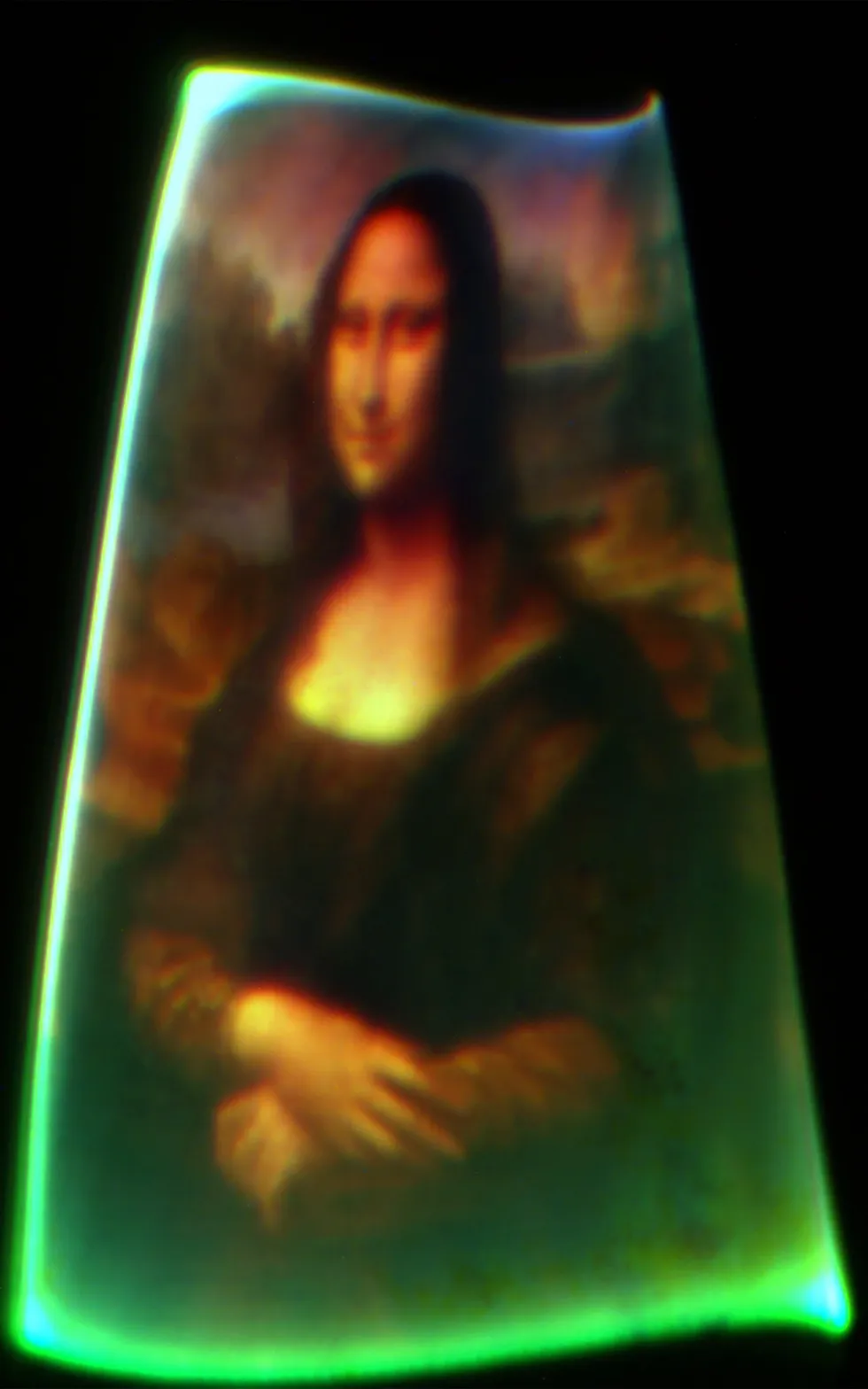

The chip projected a roughly 125-micrometer image of the Mona Lisa.

The chip projected a roughly 125-micrometer image of the Mona Lisa.

You must be logged in to post a comment Login