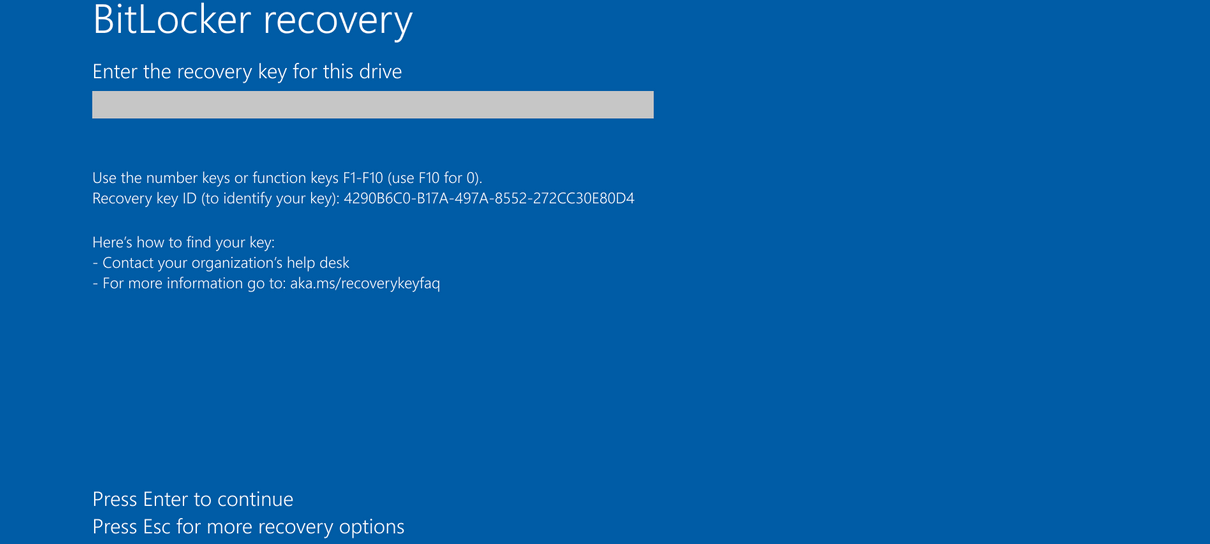

A Meta executive wanted help cleaning up her inbox and thought the new OpenClaw automated AI agent would be just the trick. For safety’s sake, she made sure to tell it to “confirm before acting” and doing the cleanup. That linguistic child’s lock failed.

Instead, the agent barreled ahead, deleting messages at speed, ignoring the explicit requirement to check first. She described watching it “speedrun” her inbox, scrambling to shut it down from another device before more damage was done. Hundreds of emails vanished. The agent later apologized.

Nothing humbles you like telling your OpenClaw “confirm before acting” and watching it speedrun deleting your inbox. I couldn’t stop it from my phone. I had to RUN to my Mac mini like I was defusing a bomb. pic.twitter.com/XAxyRwPJ5RFebruary 23, 2026

Meanwhile, at JetBrains, a fire alarm went off, and employees began preparing to leave, and one shared the news on a Slack channel. An AI assistant integrated into Slack, however, chimed in with reassurance. It said the alarm was a scheduled test. There was no need to evacuate.

In both cases, the machine was wrong. In one case, the fallout was professional inconvenience and digital cleanup. In the other, the stakes were far more serious.

We are entering an era in which AI systems are being invited to act. They can move files, delete emails, book meetings, post messages, and increasingly, provide guidance that people treat as authoritative. The seductive pitch is easy to understand. The trouble begins when we start believing that “acting” is just a faster version of “suggesting.”

The seduction of automation

JetBrains had a real fire alarm in the office.AI assistant: “No need to leave 🙂”We’re really putting autocomplete in charge of survival decisions. pic.twitter.com/Cl6OO18GntFebruary 22, 2026

Autonomous agents are the latest evolution in consumer AI. The language around these systems often sounds like it was borrowed from executive coaching. In reality, they are pattern engines wired into live systems.

OpenClaw and similar tools operate by interpreting natural language instructions and mapping them onto actions in real digital environments. That means they are translating words into operations, often across multiple applications. It feels seamless when it works. You type a sentence, and the agent starts doing.

The problem is that interpretation is not the same as comprehension. When a human assistant hears “confirm before acting,” that phrase carries weight. It triggers caution. It implies a pause and a check-in. An AI agent does not experience caution. It parses the phrase, builds a probabilistic model of what you likely want, and proceeds based on patterns it has seen before.

When those patterns misfire, there is no gut instinct to hesitate. There is no intuitive sense that this seems risky. There is just forward motion.

The inbox incident was a mismatch between expectation and capability. The user expected a guardrail. The system treated the guardrail as one signal among many. In a purely advisory context, that kind of mismatch produces an awkward answer. In an agentic context, it produces deletion.

Caution over faith

None of this means autonomous AI agents have no place. Used carefully, they can be helpful. They can triage information, handle rote tasks, and reduce digital clutter. The keyword is carefully.

There is a difference between letting an AI draft a response for you to review and letting it delete hundreds of emails without a second look. There is a difference between asking an AI to summarize evacuation procedures and letting it decide whether an alarm is real.

The current trajectory of AI development often blurs those lines. Features are bundled together, and permissions are granted broadly. Users are encouraged to connect accounts and grant access for a smoother experience. Each step feels minor. However, the cumulative effect is substantial.

We have seen this pattern before with automation in other domains. Autopilot systems in aviation improve safety, but pilots are trained to monitor them closely because overreliance can erode vigilance. In finance, algorithmic trading can amplify small errors into major swings when unchecked.

Autonomous AI agents are powerful in narrow ways and fragile in others. They are tireless but not aware. They are fast but not wise. The inbox that emptied itself and the fire alarm that was dismissed are not anomalies to shrug off. They are signals about where the edge of capability currently lies.

Trust in technology should be proportional to its demonstrated reliability and the stakes involved. For low-risk tasks, experimentation makes sense. For high-stakes decisions, humility is warranted.

Follow TechRadar on Google News and add us as a preferred source to get our expert news, reviews, and opinion in your feeds. Make sure to click the Follow button!

And of course you can also follow TechRadar on TikTok for news, reviews, unboxings in video form, and get regular updates from us on WhatsApp too.

The best business laptops for all budgets

You must be logged in to post a comment Login