Crypto World

MoonPay launches non-custodial wallets for AI agents

Crypto payments platform MoonPay has introduced a new product designed to give artificial intelligence systems direct access to digital wallets and on-chain transactions.

Summary

- MoonPay launched MoonPay Agents on Feb. 24 to support non-custodial AI wallets.

- The platform enables automated trading, funding, and machine-to-machine payments.

- The product targets developers building large-scale autonomous financial systems.

MoonPay Agents, a non-custodial software layer that enables AI agents to create wallets, manage funds, and trade on behalf of verified users, was officially launched by the company on Feb. 24.

The system is built on MoonPay’s command-line interface and is aimed at developers building automated programs that need to move money without relying on centralized custody. Once a user completes identity checks and funds a wallet, an AI agent can trade, swap, and transfer assets independently.

Connecting AI systems to digital money

MoonPay said the product supports the full financial cycle, including fiat-to-crypto funding, portfolio tracking, and conversion back to traditional currencies. Users can also receive funds through virtual accounts or payment services such as Apple Pay, PayPal, and Venmo.

“AI agents can reason, but they cannot act economically without capital infrastructure,” said Ivan Soto-Wright, the company’s chief executive officer. He said the goal is to make crypto the default financial layer for autonomous systems.

According to MoonPay, users can set up a working wallet and agent connection in minutes, allowing automated systems to begin executing strategies almost immediately.

MoonPay Agents includes tools such as recurring purchases, real-time cross-chain swaps, machine-to-machine payments, and automated fiat funding via on-ramps. These features are designed to ensure that agents always have access to liquidity when operating.

Additionally, the platform supports portfolio monitoring, token discovery, and basic risk analysis, enabling developers to incorporate financial management straight into their apps. Wallets are stored on users’ own devices, giving them direct control over private keys.

The product is built to scale from single-user setups to networks of thousands of agents. It runs on the same infrastructure that supports nearly 500 enterprise customers and more than 30 million users across 180 countries.

Part of the growing “agent economy” trend

The launch comes amid growing interest in so-called “agentic” systems that can plan and act without continuous human oversight. Industry forecasts suggest the autonomous agent economy could reach $30 trillion by 2030, with AI systems managing a large share of routine financial decisions.

In crypto markets, this shift is already underway. AI-powered wallets are being used for trading, DeFi activity, and machine-to-machine payments. At ETHDenver 2026, developers showcased blockchain-based identity tools, automated treasuries, and agent-led trading systems, highlighting the rapid growth of this trend.

According to company executives, MoonPay Agents will serve as a default financial rail for developers building trading bots, gaming platforms, and automated payment systems. With AI systems increasingly taking on financial tasks, MoonPay is positioning its infrastructure as a foundation for this emerging market.


DOGE jumps 5% as breakout flips resistance into support

Dogecoin pushed higher on outsized volume after repeatedly testing resistance, flipping a key ceiling into support and setting up a near-term test of the next supply zone.

News Background

- DOGE advanced alongside a stabilizing broader crypto market, with buyers stepping in after several sessions of tight consolidation.

- The move wasn’t driven by token-specific headlines but by technical positioning, as repeated failures at $0.0924 left the level primed for a breakout once liquidity expanded.

- The rally comes after DOGE spent hours coiling between $0.090 and $0.0927, building compression before volume returned.

- Open interest remains elevated but not extreme, suggesting moderate leverage participation rather than a crowded speculative push.

Price Action Summary

- DOGE gained 1.9%, rising from $0.0926 to $0.0944

- Breakout above $0.0924 occurred on 749M volume, 176% above baseline

- Price briefly probed $0.0950 before consolidating near $0.0940–$0.0945

- Higher lows formed during consolidation, confirming short-term strength
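The figures in this summary can be sanity-checked with a few lines of arithmetic. The sketch below is illustrative only: the function names are hypothetical, and the baseline volume is not reported in the article but inferred from the stated "176% above baseline" reading.

```python
def pct_change(start: float, end: float) -> float:
    """Percentage change from start to end."""
    return (end - start) / start * 100.0


def implied_baseline(volume: float, pct_above: float) -> float:
    """Baseline volume implied by a reading that sits pct_above percent over it."""
    return volume / (1.0 + pct_above / 100.0)


# Session move quoted in the summary: $0.0926 to $0.0944.
move = pct_change(0.0926, 0.0944)

# 749M traded on the breakout, described as 176% above baseline.
baseline = implied_baseline(749e6, 176.0)

print(f"move: {move:.1f}%")                               # move: 1.9%
print(f"implied baseline volume: {baseline / 1e6:.0f}M")  # implied baseline volume: 271M
```

On these assumptions, the breakout volume was roughly 2.76 times a baseline of about 271M.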

Technical Analysis

- The key technical development was the sustained break above $0.0924, a level that capped multiple attempts earlier in the session. Once cleared, momentum accelerated quickly, and the breakout volume suggests genuine participation rather than a low-liquidity spike.

- The subsequent consolidation near $0.0940 appears constructive, with shallow pullbacks and higher lows indicating buyers defending the breakout zone. That keeps short-term structure bullish, but the real test lies at $0.0946–$0.0950, where supply previously absorbed upside attempts.

- A decisive close above $0.0950 would expose $0.0955–$0.0960. Failure to hold $0.0940 would risk a pullback toward $0.0924, which now serves as the structural pivot.

What traders say comes next

- Traders view $0.0940 as the new line of defense. As long as DOGE holds above that level, momentum favors continuation toward $0.0955 and potentially $0.0960.

- If the breakout fades and price slips back below $0.0924, the move would resemble a false break, reopening the prior consolidation range and shifting near-term bias back to neutral.
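The levels discussed above amount to a simple decision ladder. The sketch below encodes it as a hypothetical `classify` helper. The article does not say whether each threshold is inclusive, so the comparisons are an assumption, and the labels paraphrase the analysis rather than quote any trading rule.

```python
def classify(price: float) -> str:
    """Map a DOGE price to the scenario described in the analysis."""
    if price > 0.0950:
        # A decisive close above the supply zone.
        return "breakout extension: $0.0955-$0.0960 exposed"
    if price >= 0.0940:
        # Buyers are defending the new line of defense.
        return "holding support: momentum favors continuation"
    if price >= 0.0924:
        # $0.0940 lost, but the structural pivot is intact.
        return "support lost: pullback risk toward $0.0924"
    # Back under the old ceiling: the move resembles a false break.
    return "false break: prior range reopened, bias neutral"


for p in (0.0952, 0.0945, 0.0930, 0.0920):
    print(f"${p:.4f} -> {classify(p)}")
```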


How El Mencho’s CJNG Cartel Used Crypto to Support Operations

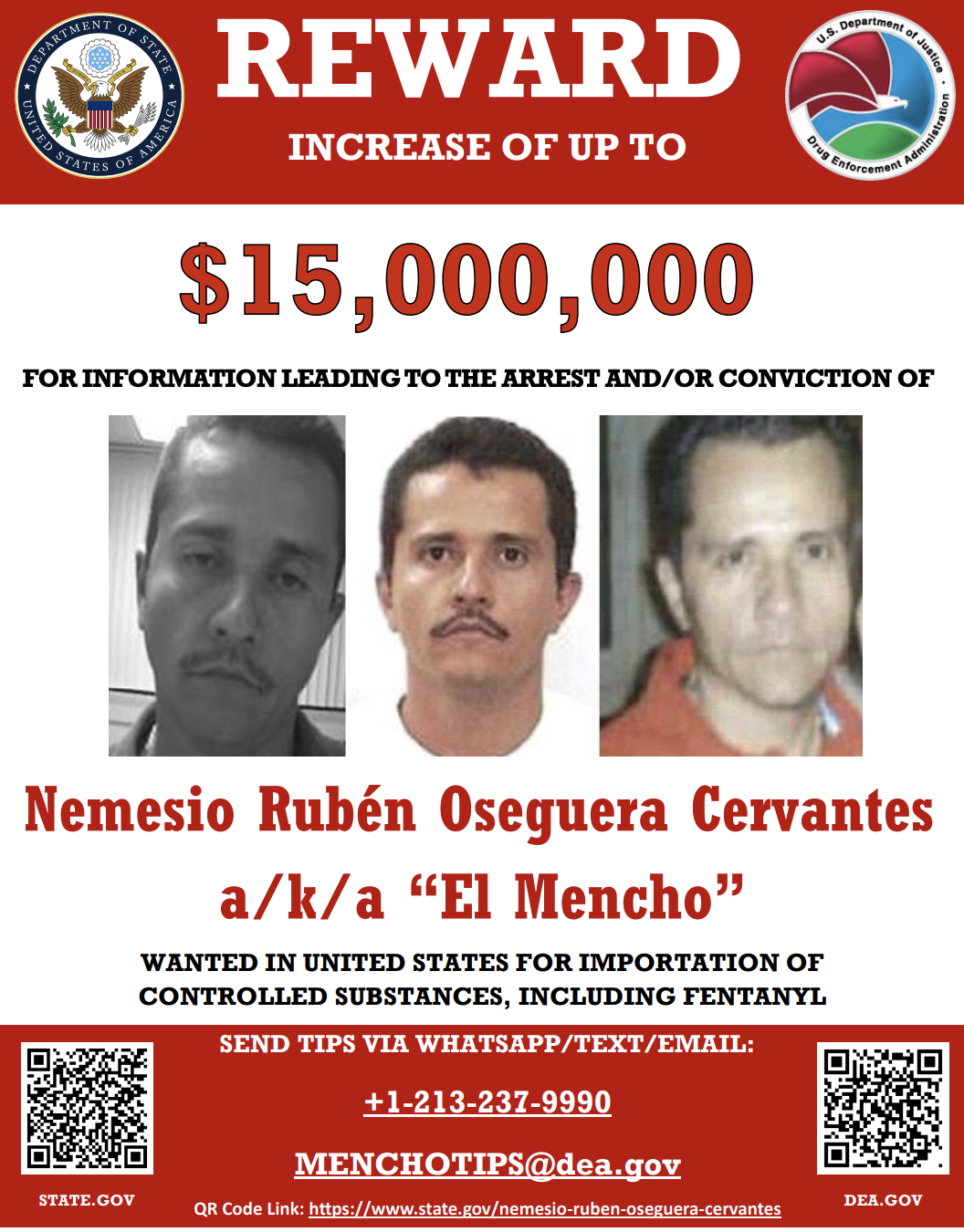

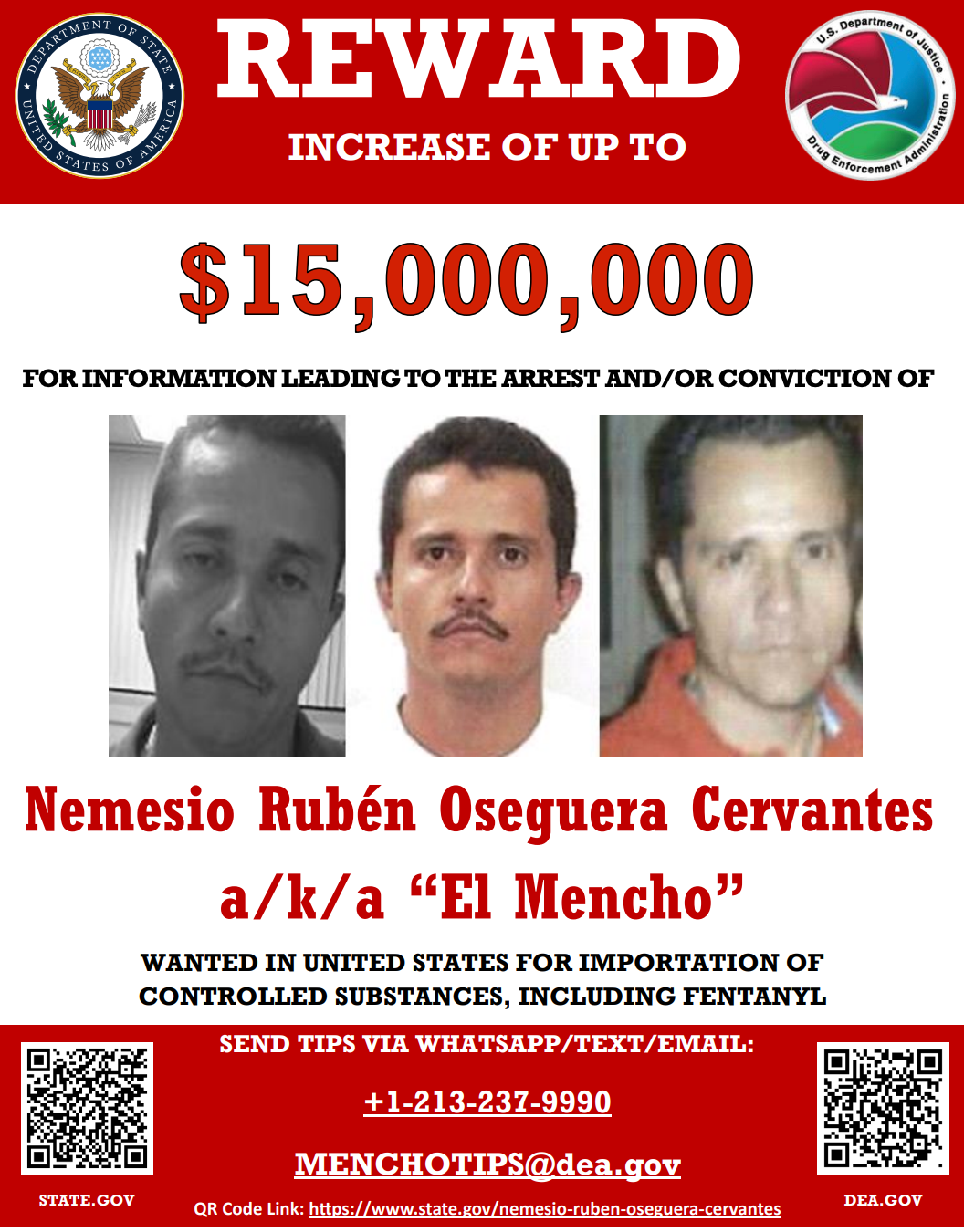

One of the world’s most wanted drug lords is dead. Nemesio Rubén Oseguera Cervantes, known as “El Mencho,” was killed on Sunday. His death triggered a wave of violence across several Mexican states.

Beyond the security impact, attention is also turning to the cartel’s financial operations. In recent years, regulators and researchers have documented how Mexican criminal networks have incorporated cryptocurrency into their operations.

Who was El Mencho?

El Mencho was among Mexico’s most wanted fugitives and the leader of the Jalisco New Generation (CJNG) cartel. According to the US Department of State, the CJNG was formed in 2009. It has since evolved into one of the most violent drug cartels in Mexico.

“It has been assessed to have the highest cocaine, heroin, and methamphetamine trafficking capacity in Mexico, and over the past few years, includes the trafficking of fentanyl into the United States,” the text reads.

On February 20, 2025, the United States officially designated the cartel as a Foreign Terrorist Organization pursuant to Section 219 of the Immigration and Nationality Act.

In addition, the US State Department had offered a $15 million reward for information leading to the capture or conviction of El Mencho. He was killed on Sunday during a military operation.

Following his death, unrest spread across parts of the country. According to the BBC, at least 20 states experienced disturbances as cartel members blocked roads and torched vehicles and businesses.

While the immediate fallout played out in the streets, past data shows that CJNG’s impact has extended beyond territorial control.

Over the past years, investigators have tracked the cartel’s increasingly sophisticated financial infrastructure. This includes its use of digital assets to move and launder funds across borders.

Crypto and Cartel Finance

Cryptocurrencies such as Bitcoin (BTC) and Tether (USDT) are not inherently illicit. They are widely used for legitimate investment, payments, and financial innovation.

However, regulatory and law enforcement agencies have identified instances in which these digital assets were used in transactions linked to illegal activities.

As early as 2020, Reuters reported that US and Mexican authorities observed an increasing use of Bitcoin among major drug trafficking groups, including the CJNG and the Sinaloa Cartel, for laundering money.

In 2024, the US Treasury’s Financial Crimes Enforcement Network (FinCEN) stated that Mexico-based transnational criminal organizations were using virtual currencies, including Bitcoin, Ethereum, Monero, and Tether, to purchase fentanyl precursor chemicals and equipment from suppliers in China.

A March 2025 report by Chainalysis found that suspected China-based chemical traders received more than $37.8 million in cryptocurrency between 2018 and 2023. Major Mexican cartels, including the CJNG, were identified as buyers of these precursors used to manufacture synthetic opioids.

“Blockchain analysis reveals that precursor chemical suppliers advertise directly on darknet markets and messaging apps, accepting digital assets in exchange for chemicals shipped to Mexico. Once paid, crypto funds are laundered through complex transaction patterns including peel chains, layering, and cross-chain swaps, and often cashed out through Chinese exchanges or international mules,” TRM Labs revealed.

In August 2025, FinCEN also highlighted that the CJNG, the Sinaloa Cartel, the Gulf Cartel, and other Mexico-based transnational criminal organizations were using Chinese money laundering networks (CMLNs) to launder illicit proceeds.

Notably, Chainalysis reported that CMLNs now play a dominant role in cryptocurrency-related money laundering. In 2025, these networks accounted for approximately 20% of known cryptocurrency money laundering activity.

While the activity has scaled, regulatory focus has also intensified. According to the US Attorney’s Office for the Southern District of New York, Paul Campo, a former DEA official, and Robert Sensi were indicted for conspiring to provide material support to CJNG.

“As part of the scheme, CAMPO and SENSI agreed to launder approximately $12,000,000 of CJNG narcotics proceeds; laundered approximately $750,000 by converting cash into cryptocurrency; and provided a payment for approximately 220 kilograms of cocaine on the understanding that the payment would trigger the distribution and sale of the narcotics worth approximately $5,000,000, for which CAMPO and SENSI would (i) receive directly a portion of the narcotics proceeds as profit; and (ii) receive a further commission upon the laundering of the balance of the narcotics proceeds,” the press release said.

Thus, El Mencho’s death marks a significant moment in Mexico’s fight against organized crime. Yet the financial systems supporting major cartels remain complex, cross-border, and technologically adaptive, extending far beyond any single individual.


Crypto Leaders Clash Over Whether XRPL Is Centralized

Debate is raging in the crypto community as Justin Bons, founder and CIO of Cyber Capital, argues that Ripple’s XRP Ledger (XRPL) is “centralized.”

Meanwhile, Ripple’s CTO Emeritus, David Schwartz, has firmly defended its architecture. This raises crucial questions about what makes a blockchain genuinely decentralized.

Justin Bons Labels XRP Ledger “Centralized”

In a recent post on X (formerly Twitter), Bons criticized what he calls “centralized blockchains.” He argued that several networks rely on permissioned validator structures, pointing to XRP Ledger’s Unique Node List (UNL) as an example.

“Ripple: Has a “Unique Node List”, which makes the validators effectively permissioned. Any divergence from this centrally published list would cause a fork, effectively giving the Ripple Foundation & company absolute power & control over the chain,” he wrote.

He also named Canton, Stellar, Hedera, and Algorand in his post. Bons framed decentralization as a binary choice, arguing that a blockchain is either fully permissionless or it is not. In his view, any permissioned element is “anti-thetical” to the ethos of crypto.

“The future of finance is decentralized & permissionless,” he wrote. “But let’s not pretend as if these chains are really playing a part in this revolution…if you care about crypto. Reject these permissioned chains & demand they decentralize.”

Bons also outlined what he described as the only three forms of blockchain consensus: Proof of Stake, Proof of Work, and Proof of Authority. He argued that if a system is not based on PoS or PoW, then “it is, by definition, PoA.” The executive said that “choosing who we trust is not the same as trustlessness,” specifically referencing XRP and XLM.

David Schwartz Defends XRP Ledger

Bons’ post sparked notable reactions from the community. Schwartz, one of the chief architects of the XRP Ledger, rejected claims that Ripple has “absolute power & control.”

He explained that the XRP Ledger was designed so that Ripple could not control the network. Schwartz said this decision was intentional and rooted in regulatory considerations.

“Ripple, for example, has to honor US court orders. It cannot say no….But could a US court decide that international comity with an oppressive was more important than XRPL or Ripple? We were quite concerned that could come down either way. We absolutely and clearly decided that we DID NOT WANT control and that it would be to our own benefit to not have that control,” he replied.

Schwartz also pushed back against Bons’ claims about potential double-spending and censorship. He explained that validators cannot force an honest node to accept a double-spend or censor transactions.

Each node independently enforces protocol rules and only counts the validators it has chosen on its Unique Node List (UNL). If a validator behaves dishonestly, an honest node simply treats it as a validator it disagrees with.

Schwartz acknowledged that validators could theoretically conspire to halt the network from the perspective of honest nodes. However, he said this would be equivalent to a dishonest majority attack and would still not allow double-spending. In such a scenario, he argued that the remedy would be to select a new UNL.

“Transactions are discriminated against all the time in BTC. Transactions are maliciously re-ordered or censored all the time on ETH. Nothing like this has *ever* happened to an XRPL transaction and it’s hard to imagine how it could,” he remarked.

He also pointed out that XRPL resolves the double-spend problem through consensus rounds that occur roughly every five seconds. During each round, validators vote on whether transactions should be included in the current ledger.

Honest nodes may defer a valid transaction to the next round if a supermajority of trusted validators say they did not see it before the cutoff. According to Schwartz, this mechanism maintains consensus without granting unilateral control to any single party.
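Schwartz's description of how a node treats validator votes can be illustrated with a toy model. The sketch below is a deliberate simplification and not the XRPL implementation: the function and variable names are hypothetical, the 80% threshold is an assumption (the article says only "supermajority"), and real consensus iterates over several proposal rounds rather than deciding in one pass.

```python
from typing import Dict, Set

# Assumed threshold for this sketch; the article only says "supermajority".
SUPERMAJORITY_PCT = 80


def node_decision(tx_is_valid: bool, votes: Dict[str, bool], unl: Set[str]) -> str:
    """Decide what one honest node does with a transaction this round."""
    # The node enforces protocol rules itself, so no combination of votes
    # can make it accept an invalid transaction such as a double-spend.
    if not tx_is_valid:
        return "reject"
    if not unl:
        return "defer"

    # Only validators on this node's own UNL are counted. Votes from
    # anyone else are ignored, however many of them there are.
    yes = sum(1 for validator, vote in votes.items() if validator in unl and vote)

    # Integer arithmetic avoids floating-point edge cases at the threshold.
    if yes * 100 >= SUPERMAJORITY_PCT * len(unl):
        return "include"
    return "defer"  # valid, but carried over to the next round


unl = {"v1", "v2", "v3", "v4", "v5"}
votes = {"v1": True, "v2": True, "v3": True, "v4": True, "v5": False}
print(node_decision(True, votes, unl))   # include
print(node_decision(False, votes, unl))  # reject
```

In this model, a party that spins up many validators outside the node's UNL has no influence on the outcome. That matches the first of the two reasons Schwartz gives for having a UNL.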

“There are only two reasons you need a UNL: 1) Otherwise a malicious party could create an unbounded number of validators causing nodes to need to do excessive work to reach consensus. 2) Otherwise a malicious party could create validators that just didn’t participate in consensus, leaving nodes unable to tell whether they actually had reached a consensus with other nodes,” he noted.

He further stressed that if Ripple had the ability to censor transactions or execute double spends, using that power would permanently damage trust in XRPL. Therefore, he said the system was intentionally architected to limit the power of any single actor, including Ripple itself.


Ethereum Foundation Deploys 2,016 ETH as It Begins Large-Scale Treasury Staking

By staking treasury ETH, the Ethereum Foundation now directly participates in consensus while generating native, ether-denominated yield.

The Ethereum Foundation announced that it has begun staking a portion of its treasury funds, following the Treasury Policy it released last year.

The latest move represents a formal step into direct participation in Ethereum’s proof-of-stake consensus.

Treasury Staking

As part of this initiative, the Foundation deposited 2,016 ETH on Tuesday and stated that it plans to stake approximately 70,000 ETH in total, with all staking rewards directed back to the Foundation’s treasury. The staking setup relies entirely on open-source infrastructure, and the Foundation picked Dirk as a distributed signing solution and Vouch to manage validator operations across multiple Beacon and Execution Client pairings.

According to the announcement, Dirk distributes signing responsibilities across several geographic regions to remove single points of failure, while Vouch enables configurable strategies designed to mitigate client diversity risks. The overall configuration uses a mix of minority clients alongside both hosted infrastructure and self-managed hardware deployed across multiple jurisdictions.

The Foundation also confirmed that its validators are using Type 2 (0x02) withdrawal credentials, which allow validator balances to be transferred through consolidations, reduce the number of required signing keys by supporting a higher maximum effective balance per validator, and enable flexible exits that can be triggered by the withdrawal address even if validators are offline.

This approach simplifies key management and supports faster changes in signing-key custody, according to the Swiss non-profit organization.
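A rough calculation shows why a higher maximum effective balance reduces the number of signing keys. The per-validator caps below (32 ETH for legacy credentials and 2,048 ETH for 0x02 compounding credentials) come from EIP-7251, not from the Foundation's announcement. The Foundation has not said how it will split the stake across validators, so the counts are lower bounds under that assumption.

```python
import math

TOTAL_ETH = 70_000          # planned stake stated in the announcement
LEGACY_MAX_EB = 32          # ETH cap per validator with legacy credentials (assumption: EIP-7251)
COMPOUNDING_MAX_EB = 2_048  # ETH cap per validator with 0x02 credentials (assumption: EIP-7251)


def min_validators(total_eth: int, max_effective_balance: int) -> int:
    """Fewest validators needed for the whole stake to count as effective balance."""
    return math.ceil(total_eth / max_effective_balance)


legacy = min_validators(TOTAL_ETH, LEGACY_MAX_EB)
compounding = min_validators(TOTAL_ETH, COMPOUNDING_MAX_EB)

print(f"legacy credentials: at least {legacy} validators")    # 2188
print(f"0x02 credentials:   at least {compounding} validators")  # 35
```

Each validator needs its own signing key, so the same stake could in principle be run with dozens of keys instead of thousands.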

For block production, the setup builds blocks locally rather than relying on proposer-builder separation sidecars. The Foundation stated that by solo staking its own ETH, it will generate native, ETH-denominated yield using Ethereum’s protocol mechanics.


Short-Term Weakness Dominates

On the price front, ETH traded sharply lower over the past 24 hours, extending its short-term downtrend as sellers remained in control throughout the session. The price slipped from around $1,920 during the early Asian trading hours of Tuesday to near $1,820, as brief attempts to stabilize failed to gain traction. While short-term price action remains under pressure, some analysts believe that the broader setup looks more constructive on a longer time horizon.

Analyst Merlijn The Trader said ETH is sitting in a five-year demand zone that has historically favored accumulation, not distribution. He noted that prices have returned to levels seen during prior bear market phases and momentum may be quietly building despite the slow pace.



SUI price eyes oversold bounce as 21Shares ETF launches

SUI price is attempting to reclaim a key psychological level as the 21Shares Spot SUI ETF begins trading on Nasdaq.

Summary

- SUI is trading near $0.87 after a sharp multi-week decline.

- The 21Shares Spot SUI ETF (TSUI) has officially launched on Nasdaq.

- Technical indicators suggest a potential short-term bounce if support holds.

Sui was trading at $0.8786 at press time, up 3.4% in the past 24 hours. The token has struggled to reclaim the $1 psychological level in recent sessions.

Sui (SUI) has hovered between $0.8519 and $0.9783 over the past week. It has fallen about 8% in seven days and is down nearly 40% over the past month, showing continued selling pressure.

Spot volume reached $474 million, a 12% drop from the previous day, indicating weaker trading activity. CoinGlass data shows derivatives volume down 14% to $685 million, while open interest slipped 2.8% to $447 million, indicating leverage is cooling rather than expanding.

21Shares launches spot SUI ETF on Nasdaq

The minor price recovery comes as the 21Shares Spot SUI ETF (TSUI) launched on Nasdaq on Feb. 24.

The ETF allows U.S. investors to gain spot exposure to SUI through traditional brokerage accounts without directly holding the token. TSUI carries a 0.30% management fee, waived through October 2026, and launched with about $9.2 million in assets under management.

TSUI is not registered under the Investment Company Act of 1940 and does not offer the same regulatory protections as ’40 Act ETFs. The product follows 21Shares’ earlier 2x leveraged SUI ETF, introduced in December 2025.

Sui, which focuses on payments, tokenization, and DeFi tools, was founded by former members of Meta’s Diem and Libra projects.

The network has handled more than $100 billion in stablecoin transfers in the last six months. Its decentralized exchanges saw a volume of $6.5 billion over the past 30 days, indicating active on-chain use.

ETF launches have often lifted crypto prices. Following the 2024 approval of Bitcoin ETFs, institutional capital poured in and liquidity rose, bolstering the market. The effect TSUI has on SUI’s price will probably depend on its ability to draw comparable inflows.

Sui price technical analysis

After falling from above $1.80 to about $0.85, SUI has been in a downward trend for several weeks. The daily chart indicates ongoing short-term weakness with lower highs and lows.

The price currently trades below the 50-day and 20-day moving averages, which serve as resistance. A move back above the 50-day average near $0.94 would be the first signal that short-term momentum is shifting.

The relative strength index recently dipped into the low-30 range, indicating near-oversold conditions, and is now turning upward. At the same time, price has been hugging the lower Bollinger Band, and the bands are beginning to contract. That setup often precedes a volatility expansion.
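For readers unfamiliar with the two indicators, the sketch below shows one common way to compute them. It assumes a 14-period RSI with Wilder's smoothing and 20-period Bollinger Bands at two standard deviations. The article does not state which settings its chart uses, and the price series here is synthetic, not SUI data.

```python
from statistics import mean, pstdev
from typing import List, Tuple


def rsi(closes: List[float], period: int = 14) -> float:
    """Relative strength index using Wilder's smoothing."""
    if len(closes) <= period:
        raise ValueError("need more than `period` closes")
    deltas = [b - a for a, b in zip(closes, closes[1:])]
    gains = [max(d, 0.0) for d in deltas]
    losses = [max(-d, 0.0) for d in deltas]
    # Seed with simple averages, then smooth the remaining observations.
    avg_gain = mean(gains[:period])
    avg_loss = mean(losses[:period])
    for g, l in zip(gains[period:], losses[period:]):
        avg_gain = (avg_gain * (period - 1) + g) / period
        avg_loss = (avg_loss * (period - 1) + l) / period
    if avg_loss == 0:
        return 100.0  # no losses in the window
    rs = avg_gain / avg_loss
    return 100.0 - 100.0 / (1.0 + rs)


def bollinger(closes: List[float], period: int = 20,
              width: float = 2.0) -> Tuple[float, float, float]:
    """Return (lower, middle, upper) bands over the last `period` closes."""
    if len(closes) < period:
        raise ValueError("need at least `period` closes")
    window = closes[-period:]
    mid = mean(window)
    sd = pstdev(window)
    return mid - width * sd, mid, mid + width * sd


# Synthetic series: a steady decline followed by a small uptick.
closes = [1.80 - 0.03 * i for i in range(30)] + [0.95, 0.96]
lower, mid, upper = bollinger(closes)
print(f"RSI: {rsi(closes):.1f}")
print(f"bands: {lower:.3f} / {mid:.3f} / {upper:.3f}")
print("band width:", round(upper - lower, 3))
```

A reading below 30 is conventionally called oversold, and a shrinking gap between the upper and lower bands is what the article refers to as contraction.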

A relief rally toward $0.94 may emerge if SUI maintains the $0.85–$0.87 support zone and buying volume rises in tandem with ETF-related inflows. A clean break above $1.00 would strengthen the case for a broader recovery toward the $1.03–$1.20 area.

However, if $0.85 fails to hold, the oversold bounce thesis weakens, and the price could extend lower as sellers regain control.


Anthropic Says It’s Been Targeted by Massive Distillation Attacks

Frontier AI developer Anthropic has publicly accused three Chinese AI labs—DeepSeek, Moonshot, and Minimax—of conducting distillation attacks aimed at siphoning capabilities from Claude, Anthropic’s large language model. In a detailed blog post, the company describes campaigns that allegedly produced over 16 million exchanges across roughly 24,000 fraudulent accounts, exploiting Claude’s outputs to train less capable models. Distillation, a recognized training tactic in AI, becomes problematic when deployed at scale to replicate powerful features without bearing the same development costs. Anthropic emphasizes that while distillation has legitimate uses, it can enable rival firms to shortcut breakthroughs and uplift their own products at a fraction of the time and expense.

Key takeaways

- Distillation involves training a weaker model on the outputs of a stronger one, a method widely used for creating smaller, cheaper versions of AI systems.

- Anthropic alleges that DeepSeek, Moonshot, and Minimax orchestrated mass-scale distillation campaigns, generating millions of interactions with Claude across tens of thousands of fake accounts.

- The attacks reportedly targeted Claude’s differentiated capabilities, including agentic reasoning, tool use, and coding, signaling a focus on high-value, transferable competencies.

- The firm argues that foreign distillation campaigns carry geopolitical risks, potentially arming authoritarian actors with advanced capabilities for cyber operations, disinformation, and surveillance.

- Anthropic says it will bolster detection, share threat intelligence, and tighten access controls, while urging broader industry cooperation and regulatory engagement to counter these threats.

Market context: The incident arrives amid heightened scrutiny of AI model interoperability and the security of cloud-based AI offerings, a backdrop that also touches on automated systems used in crypto markets and related risk-management tools. As AI models become more embedded in trading, risk assessment, and decision-support, ensuring the integrity of input data and model outputs grows ever more important for both developers and users in the crypto space.

Why it matters

The allegations underscore a tension at the heart of frontier AI: the line between legitimate model distillation and exploitative replication. Distillation is a common, legitimate practice used by labs to deliver leaner variants of a model for customers with modest compute budgets. Yet, when leveraged at scale against a single ecosystem, the technique can be co-opted to extract capabilities that would otherwise require substantial research and engineering. If confirmed, the campaigns could prompt a broader rethink of how access to powerful models is controlled, monitored, and audited, particularly for firms with global reach and complex cloud footprints.

Anthropic asserts that the three named firms carried out activities designed to harvest Claude’s advanced abilities, and says it attributed the campaigns through a combination of IP-address correlation, request metadata, and infrastructure indicators, with independent corroboration from industry partners. This signals a concerted, data-driven effort to map and replicate cloud-based AI capabilities, not merely isolated experiments. The scale described (more than 16 million exchanges across roughly 24,000 accounts) raises questions about the defense measures in place to detect and disrupt such patterns, as well as the accountability frameworks that govern foreign competitors operating in AI spaces with direct national and economic implications.

“Distillation is a widely used and legitimate training method. For example, frontier AI labs routinely distill their own models to create smaller, cheaper versions for their customers,” Anthropic wrote, adding:

“But distillation can also be used for illicit purposes: competitors can use it to acquire powerful capabilities from other labs in a fraction of the time, and at a fraction of the cost, that it would take to develop them independently.”

Beyond the IP concern, Anthropic ties the alleged activity to strategic risk for national security, arguing that distillation attacks by foreign labs could feed into military, intelligence, and surveillance systems. The company contends that unprotected capabilities could enable offensive cyber operations, disinformation campaigns, and mass surveillance, complicating the geopolitical calculus for policymakers and industry players alike. The assertion frames the issue as not merely a competitive dispute but one with broad implications for how frontier AI technologies are safeguarded and governed.

In outlining a path forward, Anthropic says it will enhance detection systems to spot dubious traffic patterns, accelerate threat-intelligence sharing, and tighten access controls. The company also calls on domestic players and lawmakers to collaborate more closely in defending against foreign distillation actors, arguing that a coordinated, industry-wide response is essential to curb these activities at scale.

For readers tracking the AI policy frontier, the allegations echo ongoing debates about how to balance innovation with safeguards, issues that already run through discussions about governance, export controls, and cross-border data flows. The broader industry has long grappled with how to deter illicit use without stifling legitimate experimentation, a tension that will likely be a focal point for future regulatory and standards-setting efforts.

What to watch next

- Anthropic and the accused firms may publish further details or clarifications about the allegations and their respective responses.

- Threat intelligence bodies and cloud providers could release updated indicators of compromise or defensive guidance related to distillation-style attacks.

- Regulators and lawmakers may issue or refine policies governing AI model access, cross-border data sharing, and anti-piracy measures for high-capability models.

- Independent researchers and security firms may replicate or challenge the methodologies used to identify the alleged campaigns, potentially expanding the evidence base.

- Industry collaborations could emerge to establish best practices for protecting frontier model capabilities and for auditing model distillation processes.

Sources & verification

- Anthropic blog post: Detecting and Preventing Distillation Attacks — official statement detailing the accusations and the described campaigns.

- Anthropic’s X status post referenced in the disclosure — contemporaneous public record of the company’s findings.

- Cointelegraph coverage and linked materials discussing AI agents, frontier AI, and related security concerns referenced in the article.

- Related discussions on the role of distillation in AI training and its potential misuse in competitive environments.

Distillation attacks and frontier AI security

The core claim rests on a structured abuse of distillation, wherein a stronger model’s outputs—Claude in this case—are used to train alternative models that mimic or approximate its capabilities. Anthropic contends this is not a minor leak but a sustained campaign across millions of interactions, enabling the three firms to approximate high-end decision-making, tool use, and coding abilities without bearing the full cost of original research. The numbers cited—more than 16 million exchanges across approximately 24,000 fraudulent accounts—illustrate a scale that could destabilize expectations about model performance, customer experience, and data integrity for users relying on Claude-based services.
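The general technique is easy to show at toy scale. In the sketch below, a hypothetical `teacher` function stands in for a stronger model, and a student with the same simple form is fitted using only the teacher's output probabilities. It illustrates distillation as a training method in the abstract and says nothing about how the alleged campaigns operated.

```python
import math
import random
from typing import List, Tuple


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def teacher(x: float) -> float:
    """Stand-in for a stronger model: returns P(label = 1 | x)."""
    return sigmoid(3.0 * x - 1.0)


def distill(queries: List[float], steps: int = 2000, lr: float = 0.5) -> Tuple[float, float]:
    """Fit student parameters (w, b) to the teacher's soft outputs."""
    # The student never sees the teacher's parameters, only its answers.
    targets = [teacher(x) for x in queries]
    w, b = 0.0, 0.0
    n = len(queries)
    for _ in range(steps):
        grad_w = grad_b = 0.0
        for x, t in zip(queries, targets):
            p = sigmoid(w * x + b)
            # Gradient of cross-entropy against the soft target t.
            grad_w += (p - t) * x
            grad_b += p - t
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    return w, b


random.seed(0)
queries = [random.uniform(-2.0, 2.0) for _ in range(200)]
w, b = distill(queries)
print(f"student: w={w:.2f}, b={b:.2f} (teacher: w=3.00, b=-1.00)")
```

The student recovers the teacher's behavior here only because both share the same simple form. For real models the match is approximate and depends heavily on how many outputs are collected and how varied they are.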

What the allegations imply for users and builders

For practitioners building on AI, the case underscores the importance of robust provenance, access controls, and continuous monitoring of model usage. If foreign distillation can be scaled to produce viable stand-ins for leading capabilities, then the door opens to widespread commoditization of powerful features that were previously the result of substantial investment. The consequences could extend beyond IP loss to include drift in model behavior, unexpected tool integration failures, or the propagation of subtly altered outputs to end users. Builders and operators of AI-enabled services—whether in finance, healthcare, or consumer tech—may respond with heightened scrutiny of third-party integrations, stricter licensing terms, and enhanced anomaly-detection around API traffic and model queries.

Key considerations for the crypto ecosystem

While the incident centers on AI model security, its resonance for crypto markets lies in how automated decision-support, trading bots, and risk assessment tools depend on reliable AI inputs. Market participants and developers should remain vigilant about the integrity of AI-enabled services and the potential for compromised or replicated capabilities to influence automated systems. The situation also highlights the broader need for cross-industry collaboration on threat intelligence, standards for model provenance, and shared best practices that can help prevent a spillover of AI vulnerabilities into financial technologies and digital asset platforms.

What to monitor in the near term

- Public updates from Anthropic on findings, indicators of compromise, and any remediation milestones.

- Clarifications or statements from DeepSeek, Moonshot, and Minimax regarding the allegations.

- New guidelines or enforcement actions from policymakers aimed at foreign distillation and export controls for AI capabilities.

- Enhanced monitoring tools and access-control strategies adopted by cloud providers hosting frontier AI models.

- Independent research validating or contesting the methods used to detect distillation patterns and the scale of the claimed activity.


Nakamoto’s $107M BTC Inc, UTXO deal reshapes Bitcoin media

Nakamoto inks $107.3M all-stock deal for BTC Inc, UTXO to scale Bitcoin media and treasury platform.

Summary

- Nakamoto will issue 363.6M shares at $1.12 to fund the $107.3M all-stock acquisition.

- Deal consolidates Bitcoin Magazine, The Bitcoin Conference, and UTXO’s hedge fund advisory under one Nasdaq-listed BTC treasury firm.

- Management frames recurring media and advisory revenues as fuel for further BTC accumulation and M&A expansion.

Nakamoto, a bitcoin treasury firm founded by entrepreneur David Bailey, announced the acquisition of BTC Inc. and UTXO Management in an all-stock transaction valued at over $107 million, according to a company statement.

The deal includes BTC Inc., which operates Bitcoin Magazine and The Bitcoin Conference, as well as UTXO Management, an investment firm that backs bitcoin treasury companies. Shareholders will receive 363,589,816 shares on a fully diluted basis, the company said.

Bailey stated the acquisitions align with Nakamoto’s strategy to operate a portfolio spanning media, asset management, and advisory services. The transactions exercised prior call options, allowing Nakamoto to acquire BTC Inc. while BTC Inc. concurrently acquired UTXO Management, according to the announcement.

The acquisitions provide recurring revenue for Nakamoto, diversifying the company’s operations beyond capital markets activities. Other bitcoin treasury companies in the sector include Strategy, led by Michael Saylor, and Twenty One Capital.

Bailey indicated that Nakamoto has no plans to sell its bitcoin holdings except under extreme prolonged price declines, signaling a continued accumulation strategy.

Industry analysts have noted a consolidation trend among bitcoin treasury companies, with firms combining media, advisory, and investment services to strengthen recurring revenue streams. Through the acquisitions of BTC Inc.’s established brands and UTXO’s investment operations, Nakamoto aims to expand its institutional presence and operational scale, according to market observers.

Crypto World

Coinbase Introduces Stock and ETF Trading in a Move to Widen Offerings

TLDR

- Coinbase now offers stock and ETF trading to all U.S. customers through its platform.

- The company provides commission-free trading and supports fractional shares starting at one dollar.

- Users can fund their stock and ETF trades with U.S. dollars or USDC.

- Coinbase introduced this expansion to bring multiple asset classes together in one place.

- The platform now offers 24/5 access to U.S.-listed equities.

Coinbase has opened stock and ETF trading to all U.S. users, bringing equities onto its platform alongside crypto. Customers can now trade multiple asset classes in one combined interface, a change that reshapes the company’s broader product plan.

Coinbase expands access to stocks and ETFs

Coinbase opened U.S.-listed stock and ETF trading to every U.S. customer, and the service supports 24/5 access. The platform includes commission-free trades, and it offers fractional shares starting at one dollar.

The company allows funding through U.S. dollars or USDC, and it maintains the same layout users already know. It confirmed the plan in December, and it framed the expansion as part of a broader multi-asset strategy.

Coinbase also introduced a predictions market earlier this month, and it lets users trade outcomes of real events. The firm stated that stock trading marks “another step” in its long-term roadmap.

The company aims to reduce its focus on one sector, and it wants steadier performance across cycles. It expects the mix of assets to diversify platform activity, and it continues updating user tools.

Robinhood and eToro respond in evolving market

Robinhood now pushes harder into crypto products, and its platform increases competition for users. Both companies widen their offerings, and they adjust tools as market interest shifts.

COIN and HOOD have each lost around 35 percent this year against a weak digital asset backdrop. eToro is down about 13 percent, though its earnings report showed strong equities activity.

These trends outline a shifting landscape, and the platforms keep reshaping their services. Each provider now blends asset classes, and they adapt as user behavior changes.

The companies follow demand across sectors, and they attempt to maintain platform engagement. They highlight varied market access, and they refine features across trading categories.

Partnerships and infrastructure support rollout

Yahoo Finance will add a trading button, and it will route interested readers directly to Coinbase. It will also show real-time Coinbase data, and the feature links research with execution.

Coinbase works with Apex Fintech Solutions for clearing, custody, and execution. The company said it will soon expand 24/5 access to more stocks.

The firm also expressed interest in tokenized stocks, and it said tokenization could enable around-the-clock movement. It continues testing new formats, and it reviews blockchain applications for traditional assets.

Coinbase reported steady platform updates, and it is preparing to scale its next features. It also monitors user demand, and it builds tools that serve broader market access.

Crypto World

Payoneer Joins Fintech Race for US Bank Charters

Payoneer, a global payments platform known for its cross-border capabilities, has taken a formal step toward regulated crypto services by filing with the Office of the Comptroller of the Currency (OCC) to form PAYO Digital Bank under a US national trust bank charter. The move would unlock a regulated pathway for the company to issue a GENIUS Act-compliant stablecoin and expand custody, settlement, and other crypto services for its nearly two million business-focused customers. The filing comes hot on the heels of a strategic partnership with Bridge, a stablecoin infrastructure provider, aimed at embedding stablecoin capabilities into Payoneer’s cross-border payment flows. Central to the plan is PAYO-USD, a stablecoin intended to act as the holding currency in Payoneer wallets and to enable customers to pay and receive stablecoins as part of daily transactions.

Key takeaways

- Payoneer has submitted an application to the OCC to create PAYO Digital Bank, a national trust bank whose charter would enable regulated crypto services and stablecoin issuance.

- The proposed stablecoin PAYO-USD (CRYPTO: PAYO-USD) would anchor Payoneer wallets, allowing customers to hold, pay with, and convert stablecoins within the platform.

- Approval would empower Payoneer to manage PAYO-USD reserves, provide custodial services, and convert between PAYO-USD and local currencies for users and partners.

- The filing aligns with a broader regulatory expansion, as Crypto.com received conditional charter approval, joining a wave of crypto firms already granted or pursuing national bank charters (Circle, Ripple, Fidelity Digital Assets, BitGo, Paxos) in recent months.

- Other large players are pursuing similar routes (e.g., World Liberty Financial’s USD1 stablecoin, Laser Platform, and Coinbase’s ongoing review), signaling a shift toward regulated on-ramps for digital assets in mainstream finance.

Market context: The OCC’s evolving stance on national bank charters for crypto-related businesses reflects a regulatory approach that seeks to balance consumer protections with access to regulated crypto services, particularly for cross-border commerce and wholesale payments. The broader market backdrop—rising demand for stablecoins in trade, evolving custody models, and the ongoing integration of crypto rails into traditional financial infrastructure—frames Payoneer’s move as part of a wider industry trend.

Why it matters

The potential arrival of a fully regulated stablecoin and digital banking service within a trusted payments platform could alter the calculus for small and medium-sized businesses engaged in cross-border trade. Stablecoins, by design, aim to reduce settlement times and volatility when moving funds across borders. If PAYO-USD becomes the wallet’s native currency under a federally regulated umbrella, Payoneer could offer its users faster, more predictable settlement options with built-in compliance and reserve oversight, addressing common pain points in cross-border transactions.

For Payoneer, the OCC charter would extend its reach beyond a processor of international payments to a regulated crypto-enabled financial services provider. The company’s leadership, including CEO John Caplan, has signaled belief in stablecoins’ role in future global trade: “We believe stablecoins will play a meaningful role in the future of global trade.” The promise is not merely technological but regulatory—providing a trustworthy framework for reserve management, customer protections, and interoperability with traditional financial systems.

The regulatory arc surrounding stablecoins and charters has been accelerating. The OCC’s recent actions show a willingness to entertain crypto-enabled bank models, albeit within a cautious, risk-managed framework. This stance comes after a December wave of charter approvals for major crypto-focused players, underscoring a period of regulatory experimentation with centralized, compliant crypto rails. As fintechs and crypto-native firms seek scalable, regulated platforms to deliver cross-border value, Payoneer’s approach could set a precedent for how stablecoins are deployed within enterprise-grade payments ecosystems.

Beyond Payoneer, other market participants are testing the same regulatory waters. World Liberty Financial has applied for a charter to extend its USD1 stablecoin use, aiming to broaden the token’s adoption in payments. Meanwhile, Laser Platform has also submitted an application, and Coinbase has been awaiting a decision since late last year. Taken together, the sequence of filings highlights a broader industry push to convert stablecoins and crypto-backed services from niche offerings into regulated, bank-grade products that can scale with business demand.

What to watch next

- OCC decision timeline on Payoneer’s PAYO Digital Bank charter and any conditions tied to PAYO-USD issuance.

- Details of the reserve-custody framework for PAYO-USD and the governance structure governing the asset’s backing and conversions.

- Implementation milestones for the Bridge collaboration, including wallet integrations and cross-border settlement capabilities.

- Regulatory updates following Crypto.com’s conditional charter, and any additional charters granted or denied to other crypto-leaning firms.

- Rollout timing for PAYO-USD features within Payoneer’s platform, including wallet support, merchant onboarding, and fiat-on/off ramps.

Sources & verification

- Payoneer files application for US national trust bank charter with OCC (Payoneer press release).

- Payoneer announces stablecoin capabilities powered by Bridge integration (press release).

- Crypto.com receives conditional approval for national bank charter (Cointelegraph report).

- December charter approvals for Circle, Ripple, Fidelity Digital Assets, BitGo, and Paxos (Cointelegraph report).

- World Liberty Financial’s USD1 stablecoin charter application (Cointelegraph report).

Payoneer’s bid for a regulated stablecoin and digital bank: what changes for cross-border payments

Payoneer’s filing with the OCC marks a deliberate step toward integrating regulated crypto rails into a mainstream payments platform. By pursuing a national trust charter, the company aims to combine traditional banking discipline with digital asset functionality, enabling a stabilized, regulated environment for cross-border transactions. The centerpiece is PAYO-USD (CRYPTO: PAYO-USD), a stablecoin designed to operate as the platform’s holding currency, with the goal of reducing settlement frictions and smoothing currency conversions for Payoneer’s business clients. The plan envisions wallets where PAYO-USD can be used for both pay-ins and pay-outs, and where users can convert to their local currencies within a supervised framework.

The collaboration with Bridge, announced prior to the charter application, is a key accelerant. Bridge’s infrastructure is intended to support stablecoin issuance, redemption, and on-chain settlement within a regulated, enterprise-facing platform. If approved, Payoneer would gain a direct on-ramp for stablecoins into its cross-border payment network, potentially offering a more predictable cost structure for businesses shipping goods and services globally. The GENIUS Act-compliant design of PAYO-USD signals a compliance-driven approach to stablecoin issuance, aligning with a regulatory environment that increasingly calls for clear reserve custody, transparent governance, and user protections in crypto-enabled products.

Even as Payoneer advances this plan, the OCC’s broader policy stance remains under scrutiny and continues to evolve. Crypto firms eyeing national charters have seen both caution and momentum: Crypto.com received conditional approval, a sign that the agency is willing to greenlight regulated crypto banking models while maintaining rigorous oversight. The market context is further shaped by a string of December approvals granted to banks associated with the crypto space (Circle, Ripple, Fidelity Digital Assets, BitGo, and Paxos), broadening the example set for what a crypto-enabled bank charter can look like in practice.

In parallel, other entities are pursuing similar avenues to leverage stablecoins for business use cases. World Liberty Financial’s USD1 stablecoin aims to expand its footprint in cross-border workflows, while Coinbase and Laser Platform explore their own regulatory paths. Taken together, these developments illustrate a broader shift toward regulated, institution-grade deployments of crypto-enabled payments and stablecoins, moving beyond niche pilots toward scalable, enterprise-grade offerings that can participate in regulated financial rails.

The regulatory, technological, and market factors converge around a central question: can a conventional payments platform safely and effectively integrate a stablecoin into its core product stack under federal supervision? If Payoneer succeeds, it could demonstrate a replicable model for large-scale, compliant crypto-enabled payments that preserves user protections, ensures reserve adequacy, and delivers the speed and efficiency gains that stablecoins are intended to provide. Stakeholders—business customers, developers building cross-border payment solutions, and regulators—will be watching closely for how governance, reserve management, and customer protections are implemented in practice as the OCC deliberates on PAYO Digital Bank.

Crypto World

Coinbase trading in equities, ETFs as it broadens product line beyond crypto

Coinbase (COIN) opened stock and exchange-traded fund (ETF) trading to all U.S. customers, expanding beyond digital assets as part of its plan to become an “everything exchange.”

The roll-out allows users to buy and sell U.S.-listed stocks and ETFs on the same platform they use for crypto. Trading runs 24 hours a day, five days a week, with no commission. Customers can fund trades with U.S. dollars or USDC and buy fractional shares starting at $1.

Coinbase outlined the expansion in December, when it said it intended to bring multiple asset classes under one roof. Earlier this month, it debuted a predictions market, enabling users to trade on the outcomes of real-world events. Stock trading marks another step in that strategy.

The move brings Coinbase into more direct competition with retail brokerages such as Robinhood (HOOD), which has been doubling down on its crypto product suite. It also reflects a push among crypto firms to blend the asset class with traditional financial products. Breaking away from a crypto-only business model could help Coinbase loosen the tie between its share price and bitcoin so it trades more like a diversified tech stock, offering some cushion during a crypto downturn.

Both COIN and HOOD have lost about 35% this year as digital assets struggle. Shares of eToro (ETOR) are 13% lower over the same period, with the company’s fourth-quarter earnings showing strong equities trading on the platform.

To support the introduction, Coinbase has an agreement with Yahoo Finance. The financial news site will feature a button that lets users move from researching a stock to executing a trade on the exchange. Yahoo Finance will also display real-time data from Coinbase within its interface.

Coinbase said it is working with Apex Fintech Solutions for clearing, custody, and execution.

The company plans to expand 24/5 trading to more stocks in the coming months. It has also signaled interest in offering tokenized stocks, which would allow equities to move on blockchain networks and potentially trade around the clock.
