Tech

The NFL Won A Lawsuit Over Its Bluesky Ban. Its Social Media Strategy Is Still A Loser

from the a-social-media-fumble dept

Full disclosure up front: I sit on the board of Bluesky. That said, I had absolutely no idea this lawsuit existed until recently. Which, honestly, tells you something about how much of a legal non-event it was. But the underlying story here—about the NFL treating social media the way it treats television broadcast rights—is worth digging into, because it reveals something deeply broken about how major sports leagues think about the internet.

The 2025-2026 NFL season just wrapped up, and along with it came a federal court ruling in a case called Brown v. NFL that most people missed entirely. Two football fans—one in Illinois, one in California—sued the NFL under the Sherman Act, claiming the league violated antitrust law by barring its teams from posting on Bluesky. The fans wanted to follow their teams—the Bears and the now-champion Seahawks—on the platform they actually use, rather than on Elon Musk’s X. The court dismissed the case for lack of standing, and honestly, that was probably the right legal outcome.

The fans couldn’t demonstrate a concrete injury—the information they wanted was still available, for free, on X. As the court put it, their grievance reduced to being “denied the ability to obtain real-time NFL team information on a private platform with which they are ideologically comfortable.” And “I don’t like Elon Musk” is not an antitrust injury. The Sherman Act targets conspiracies that restrain trade and harm competition—not content distribution preferences. You can’t force a private organization to distribute its content on the platform you like best, just as we’ve called out attempts to force social media platforms to carry content they don’t want to carry.

But the fact that the NFL is legally allowed to be this myopic doesn’t make it a smart business decision. You can be entirely within your rights and still be making a spectacularly bad call.

Since 2013, the NFL has had a “content partnership” with X (dating back to when it was the useful site known as Twitter). The deal lets X publish real-time highlights, and in return the league gets… money, presumably. As the court noted in its ruling:

Since 2013, the NFL and X (formerly Twitter, Inc.) have had a “content partnership.” It allows X to publish real-time highlights from football games, such as touchdowns. During the offseason, reporters post on X with news about team practices and other NFL-related topics, and fans on X discuss teams’ acquisitions of free agents and other roster changes. For example, during the NFL draft (the high-profile annual event in which teams select eligible players to join their rosters), X published more than one million posts concerning the NFL; these appeared on users’ screens more than 800 million times. The NFL has repeatedly renewed its partnership with X. Fans do not pay money to receive NFL news on X.

Fine. Lots of organizations have deals with social media platforms. But this just seems like self-sabotage: the NFL apparently used this partnership as justification to tell its own teams they couldn’t even exist on a competing platform. Multiple NFL teams—including the New England Patriots—had set up accounts on Bluesky, started posting, and were building audiences. And then the league office stepped in and told them to shut it all down.

From the ruling:

Initially, multiple NFL teams, including the New England Patriots, had accounts on Bluesky to communicate with fans….

As alleged, however, the NFL later instructed its member teams to delete their Bluesky accounts. But for this instruction, at least some NFL teams would use Bluesky. The Patriots’ vice president of content, Fred Kirsch, for example, has stated: “Whenever the league gives us the green light[,] we’ll get back on Bluesky.”

Yes, the (Super Bowl-losing) Patriots’ VP of content is publicly saying his team wants to be on Bluesky and is just waiting for the league to let them. This wasn’t a case of teams being uninterested. Teams saw the audience there, set up shop, and were actively communicating with fans—and the NFL made them stop.

As Front Office Sports reported at the time, the league specifically told the Patriots to take down their Bluesky account. The league apparently hasn’t even approved Threads—Meta’s X competitor—for team real-time updates either.

So the NFL has essentially decided that when it comes to the kind of real-time updates that fans actually care about, X is the only approved outlet. Everything else is locked out.

This is “broadcast-brain” thinking applied to the internet, and it’s spectacularly dumb.

The NFL is treating social media platforms the way it treats regional sports networks or its Sunday Ticket package: as exclusive territories to be carved up and sold to the highest bidder. In the television world, that model makes a certain kind of sense—there’s a limited amount of spectrum, a limited number of cable channels, and that scarcity creates value. But social media doesn’t work that way. There’s no scarcity. Posting an injury report on Bluesky doesn’t remove it from X. Cross-posting is literally free. The entire point of social media for a brand is to be everywhere your audience is.

And the audience, increasingly, is on Bluesky. As Mashable noted last year heading into the season, the NFL community on Bluesky had already hit a kind of critical mass:

You need the presence and regular posting of big names to legitimize a platform. It certainly helped that folks like Kimes and a large portion of the NFL writers at popular sports sites like The Ringer made Bluesky home. And last season it felt like Bluesky hit terminal velocity, where enough people joined that you could fully exit to the site for football content. And with the migration of the professionals, the shitposters naturally came along, too. Because that’s where the discussion was happening. There is genuine, easy-to-find, fun NFL talk on Bluesky with minimal interruptions from, say, weird ads or angry reply guys you might find on X.

That’s a real community. A vibrant, engaged community of exactly the kind of hardcore football fans that the NFL should be desperate to cultivate. These are, as Mashable noted, the “ball knowers.” They’ve moved to Bluesky because, well, X kind of sucks now for following sports. As Mashable also noted:

Bluesky does have a leg-up in some areas — Elon Musk’s site recently has proven unreliable for NFL fans. The site crashed the morning free agency launched, which is one of the most important days for NFL social media. And the sports tab — which used to be an easy, fun way to follow games in the Twitter days — degraded into near uselessness years ago. And, in general, X has morphed with Musk’s image, which is focused more on AI and politics — not things like following football. Of course you can still follow the NFL on X, but it does involve wading through more junk than it used to. Bluesky offers an interesting alternative in that regard.

So the most engaged, most knowledgeable football community has moved to Bluesky. The teams themselves want to be on Bluesky. And the NFL’s response to all of this is… to ban its teams from showing up.

It’s the digital equivalent of a local blackout (something we’ve been calling out for well over a decade)—punishing your most dedicated fans because of some deal you cut with a middleman in an effort to create an artificial and unnecessary scarcity.

Meanwhile, the platform the NFL is propping up with this exclusivity arrangement is one where fans who tuned in for the Super Bowl halftime show got to watch a significant chunk of the X user base have a full-blown racist meltdown over Bad Bunny performing. The NFL specifically chose Bad Bunny to appeal to a broader, more global audience—and the audience that actually appreciated the choice? They were on Bluesky, where there was an overwhelming wave of support for the performance. The league is betting its real-time presence on the platform where its expansion strategy gets shouted down, while blocking teams from the one where those new fans are actually showing up.

This kind of control-freakery from the NFL shouldn’t surprise anyone who has followed the league’s behavior over the years. This is the same organization that has spent decades aggressively lying to bars, restaurants, and small businesses about the scope of its “Super Bowl” trademarks, sending threatening letters suggesting you can’t even say the words “Super Bowl” in an ad without a license—something that has never actually been true.

The NFL’s institutional DNA is “control equals value,” and they apply that logic to everything, from what a church can call its viewing party to which social media apps their teams are permitted to use.

The problem is that control-based thinking only works when you actually can control the ecosystem. You can (sort of) control which networks broadcast your games. You can control which streaming service gets Sunday Ticket. You cannot control where fans choose to talk about football on the internet. The conversation is going to happen whether the NFL’s official accounts are there or not. The only question is whether the league’s teams get to participate in it.

Any organization whose core business depends on fan engagement should be finding fans where they are, not herding them onto a single platform because you cut an exclusivity deal. Especially when that platform is increasingly known for being a hellscape of AI slop, political rage, and engagement-bait, while the platform you’re blocking your teams from is the one where people are actually talking about your product with genuine enthusiasm.

The NFL generates billions in revenue. And yet, when it comes to social media strategy, it’s stuck in a 2005 mindset. That’s not how any of this works anymore.

Someone at NFL HQ needs to understand that when your most passionate fans have moved to a new platform and your own teams are begging for permission to follow them there, the smart play is to let them go.

Filed Under: blackouts, exclusivity, fans, football, social media

Companies: bluesky, nfl, twitter, x

Tech

Google quantum-proofs HTTPS by squeezing 2.5kB of data into 64-byte space – Ars Technica

Google and other browser makers require that all TLS certificates be published in public transparency logs, which are append-only distributed ledgers. Website owners can then check the logs in real time to ensure that no rogue certificates have been issued for the domains they use. The transparency programs were implemented in response to the 2011 hack of Netherlands-based DigiNotar, which allowed the minting of 500 counterfeit certificates for Google and other websites, some of which were used to spy on web users in Iran.

Once quantum computers become powerful enough, Shor’s algorithm could be used to forge classical signatures and break the classical public keys of the certificate logs. Ultimately, an attacker could forge the signed certificate timestamps that prove to a browser or operating system that a certificate has been registered when it hasn’t.

To rule out this possibility, Google is adding cryptographic material from quantum-resistant algorithms such as ML-DSA. This addition would allow forgeries only if an attacker were to break both classical and post-quantum encryption. The new regime is part of what Google is calling the quantum-resistant root store, which will complement the Chrome Root Store the company formed in 2022.

Merkle Tree Certificates (MTCs) use Merkle trees to provide quantum-resistant assurance that a certificate has been published without having to include most of the lengthy keys and hashes. Using other techniques to reduce data sizes, the MTCs will keep this data at roughly the same 64-byte length it takes up today, Westerbaan said.
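To make the Merkle-tree idea concrete, here is a minimal Python sketch of how a log can prove an entry was published using only a handful of hashes instead of a full signature per certificate. It assumes Certificate Transparency-style hashing from RFC 6962 (SHA-256, with a 0x00 prefix for leaves and 0x01 for interior nodes); the actual MTC encoding differs, and the function names (`merkle_root`, `inclusion_proof`, `verify_inclusion`) are hypothetical illustrations, not Chrome's or Cloudflare's API.

```python
import hashlib


def leaf_hash(data: bytes) -> bytes:
    # RFC 6962-style domain separation: 0x00 prefix for leaves.
    return hashlib.sha256(b"\x00" + data).digest()


def node_hash(left: bytes, right: bytes) -> bytes:
    # 0x01 prefix for interior nodes, so a leaf can't pose as a node.
    return hashlib.sha256(b"\x01" + left + right).digest()


def split_point(n: int) -> int:
    """Largest power of two strictly less than n (requires n >= 2)."""
    k = 1
    while k * 2 < n:
        k *= 2
    return k


def merkle_root(leaves: list[bytes]) -> bytes:
    """Root hash over all entries (e.g., certificates) in the log."""
    n = len(leaves)
    if n == 0:
        return hashlib.sha256(b"").digest()
    if n == 1:
        return leaf_hash(leaves[0])
    k = split_point(n)
    return node_hash(merkle_root(leaves[:k]), merkle_root(leaves[k:]))


def inclusion_proof(index: int, leaves: list[bytes]) -> list[bytes]:
    """Sibling hashes from the leaf up to the root, bottom first."""
    n = len(leaves)
    if not 0 <= index < n:
        raise ValueError("index out of range")
    if n == 1:
        return []
    k = split_point(n)
    if index < k:
        return inclusion_proof(index, leaves[:k]) + [merkle_root(leaves[k:])]
    return inclusion_proof(index - k, leaves[k:]) + [merkle_root(leaves[:k])]


def _root_from_proof(index: int, size: int, digest: bytes, proof: list[bytes]) -> bytes:
    # Mirror the splits used by inclusion_proof; proof[-1] is the top-level sibling.
    if size == 1:
        if proof:
            raise ValueError("proof too long")
        return digest
    if not proof:
        raise ValueError("proof too short")
    k = split_point(size)
    sibling, rest = proof[-1], proof[:-1]
    if index < k:
        return node_hash(_root_from_proof(index, k, digest, rest), sibling)
    return node_hash(sibling, _root_from_proof(index - k, size - k, digest, rest))


def verify_inclusion(index: int, size: int, data: bytes,
                     proof: list[bytes], root: bytes) -> bool:
    """True if `data` sits at `index` in a log of `size` entries with this root."""
    if not 0 <= index < size:
        return False
    try:
        return _root_from_proof(index, size, leaf_hash(data), proof) == root
    except ValueError:
        return False


if __name__ == "__main__":
    certs = [f"cert-{i}".encode() for i in range(7)]
    root = merkle_root(certs)
    proof = inclusion_proof(5, certs)
    print(len(proof))                                      # 3 sibling hashes
    print(verify_inclusion(5, 7, certs[5], proof, root))   # True
    print(verify_inclusion(5, 7, b"forged", proof, root))  # False
```

The proof for a log of n entries holds about log2(n) hashes, so the verifier only recomputes one path and compares the result against a root it already trusts. How MTCs authenticate that root and pack the rest into their size budget is beyond this sketch.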

The new system has already been implemented in Chrome. For the time being, Cloudflare is enrolling roughly 1,000 TLS certificates to test how well the MTCs work, and it is also generating the distributed ledger; the plan is for CAs to eventually fill that role. The Internet Engineering Task Force standards body has recently formed a working group called PKI, Logs, And Tree Signatures, which is coordinating with other key players to develop a long-term solution.

“We view the adoption of MTCs and a quantum-resistant root store as a critical opportunity to ensure the robustness of the foundation of today’s ecosystem,” Google’s Friday blog post said. “By designing for the specific demands of a modern, agile internet, we can accelerate the adoption of post-quantum resilience for all web users.”

Tech

Vivo X300 FE Moves Closer to Debut; X300 Ultra Could Get Dual Teleconverter Kit

Following the company’s expansion of its X-series lineup in the past few months, Vivo is now gearing up to launch the Vivo X300 FE and Vivo X300 Ultra. The X300 FE has reportedly obtained certifications from IMDA and TUV, indicating that the handset is close to release. Meanwhile, the X300 Ultra is expected to come with a dual-lens teleconverter kit.

Vivo X300 FE

Vivo’s upcoming X300 FE, with model number V2537, has appeared on IMDA and TUV certification websites. The TUV listing suggests the phone may support 90W wired fast charging, while the IMDA certification confirms that the device will come with 5G, Wi-Fi, Bluetooth, and NFC connectivity. Since devices typically receive these certifications close to launch, the X300 FE’s debut could be just around the corner.

The Vivo X300 FE is shaping up to be a compact flagship with punch. It is expected to be powered by the Snapdragon 8 Gen 5, paired with LPDDR5X RAM and UFS 4.1 storage, and may feature a 6.31-inch OLED display with a 120Hz refresh rate for smoother visuals.

Despite the phone’s compact size, the battery appears to be quite large, at around 6,500mAh, and may use silicon-carbon technology to extend battery life. As for cameras, rumors point to a triple camera system with a 50MP primary sensor, a 50MP periscope telephoto lens, and an ultra-wide lens. The front camera may get a 50MP sensor optimized by ZEISS. The phone is also expected to carry IP68/IP69 ratings and a high-quality glass and metal body.

Vivo X300 Ultra

According to recent rumors, the Vivo X300 Ultra could introduce a dual-lens external teleconverter kit for better zoom photography. The setup may offer a 400mm fixed-focus lens along with support for 200mm focal-length shooting, and users would likely switch between the two options rather than use them together. Vivo is reportedly building the system on its V-mount platform to deliver a more professional optical zoom experience.

The Vivo X300 Ultra is shaping up to be a true flagship with top-end hardware. The phone is expected to come with the Snapdragon 8 Elite Gen 5, which should deliver strong gaming performance and multitasking. Its camera system is rumored to include two 200MP cameras, with sensors from Sony and Samsung, along with a 5MP multispectral camera and a 50MP ultra-wide lens.

The camera system may include ZEISS T* coating and optical image stabilization to improve overall image quality. On the battery side, rumors suggest a massive 7,000mAh cell that supports 100W wired and 40W wireless charging. Add an IP69 rating and USB 3.2 support, and the X300 Ultra looks like a complete flagship package.

Tech

What’re The Differences Between Freightliner And Western Star Semi Trucks?

Western Star and Freightliner are both owned by Daimler Trucks North America, and both brands’ trucks are powered by diesel engines, which are ideal for long-haul trucking. Where they differ is in their specific applications, the customers they target, and some of their design approaches.

Freightliner semi trucks are focused on the demands of long-haul transportation and are often found in large trucking fleets. That may have a lot to do with why Freightliner is the top dog in Class 8 truck sales, claiming 35.2% of all sales in 2025 with 73,360 trucks sold out of a yearly total of 208,155. By comparison, Western Star, which places more emphasis on the mining, logging, and construction markets, finished 2025 with 11,496 Class 8 trucks, a 5.5% share of the pie. In other words, Freightliner sold more than six times as many Class 8 trucks as Western Star.

Another major difference between Freightliner and Western Star semi trucks is in the way they are built. Western Star trucks are ruggedly built for the rigors of their intended markets, which call for firmer, heavy-duty suspensions and reinforced frame structures. Freightliner trucks, by contrast, are built to haul freight over long distances, where good fuel economy, overall efficiency, and low cost of ownership matter most to the fleet operators who run them.

What else should you know about Freightliner and Western Star semi trucks?

The differences between Freightliner and Western Star semi trucks extend to the engines that power them. Freightliners are built for cruising the highways with minimal fuel consumption, so fuel-sipping engines like the Detroit DD13 and DD15 are commonly used. To meet the specific needs of their operators, Western Star trucks such as the 57X and 49X can be spec’d with brawnier engines that deliver the higher horsepower and torque needed on the logging trail or while hauling ore from the mine.

Freightliner trucks tend to retain more value, largely due to an extensive parts and service network, making it easy to get your Freightliner truck fixed wherever you roam. Western Star trucks also hold their value well — they are appreciated by those who use them for their intended missions, where their ultimate strength and on-the-job durability are highly valued.

For over-the-road freight hauling, Freightliner trucks are the undisputed sales champions. They are designed to provide the aerodynamic performance and overall fuel efficiency that both fleets and individual owner-operators can appreciate in their day-to-day operations. Western Star semi trucks, made in two U.S. plants, are better suited to the heavy-duty demands of construction, mining, and logging, where their more rugged structures and more powerful engines pay off.

Tech

Get a $200 gift card with the Samsung Galaxy S26 Ultra, plus a free upgrade to 512GB

The Samsung Galaxy S26 Ultra is already looking like an unbelievable flagship phone, but this deal makes it even better.

You might have noticed that pre-order deals for the Ultra are in full swing, and for anyone looking to upgrade to an outstanding new handset, there’s no shortage of offers. With that in mind, we’ve picked out one of the very best.

Head on over to Amazon right now, and you can pick up the unlocked 512GB variant of the Samsung Galaxy S26 Ultra, and get a $200 gift card for your trouble, plus that free upgrade from 256GB.

To put the whole thing into context, that super high-end Galaxy S26 Ultra will set you back $1,299.99 right now, a full $400 less than the phone’s asking price after the pre-launch period.


That’s a good deal for what is likely to be one of the best phones on the market, and the gift card can go toward a cheaper product in Samsung’s ecosystem, such as a pair of Galaxy Buds.

The phone certainly doesn’t skimp on the user experience; quite the opposite, in fact. As an S Ultra phone, the S26 Ultra is loaded to the gills with the best Samsung has to offer.

For example, the built-in Privacy Display is unlike anything we’ve seen on another phone, as it can keep the information on your lock screen away from prying eyes. When it’s time to unlock the screen, it also lets you do so with the help of Galaxy AI.

You also have an eye-popping 512GB of storage, giving you far more leeway to add apps, download films, and more.

Also included is Super Fast Charging 3.0, which apparently allows the phone to reach up to 75% capacity in around just 30 minutes.

That fast top-up feeds a durable 5,000mAh battery, which gives the phone plenty of longevity from a single charge.

Given everything the Samsung Galaxy S26 Ultra brings to the table, it would be a fantastic buy among premium phones even without the $200 gift card and $400 discount ($600 in savings overall). With the gift card in tow, it’s a must-buy for bargain hunters looking to upgrade.


Tech

Why Did Samsung Remove Bluetooth From The S Pen?

Samsung’s history in the smartphone arena is one of constant innovation. Not all of the Korean tech giant’s ideas are good (looking at you, Bixby), but it has consistently been willing to throw concepts at the wall to see what sticks. In the 2020s, that experimentation led to a whole new category of folding smartphones, but all the way back in 2011, it led to the S Pen stylus. Samsung introduced the S Pen alongside that year’s Galaxy Note to aid users with the sort of productivity work the device was designed for.

But the most impressive S Pen features didn’t arrive until the Note 9 in 2018, when Samsung added Bluetooth Low Energy to the tiny stylus. With that, users could wave the pen like a wand to control their phone. Air Actions, as they were called, let users make specific motions with the stylus to navigate the OS, control media playback, and take photos and videos, even when the phone was across the room. That functionality remained even after the Note line was discontinued and the S Pen moved to the Galaxy S Ultra series.

Then, in early 2025, Samsung shocked dedicated S Pen users by stripping Bluetooth from the Galaxy S25 Ultra’s S Pen, undoing seven years of progress. Outrage was palpable, and users demanded answers. The answer they got only made matters worse. According to Samsung, Bluetooth was removed from the S Pen because not enough people used it. This predictably didn’t sit well with those who did use it. So, here’s Samsung’s explanation, and why its story doesn’t line up for everybody.

Samsung says no one used Bluetooth S Pen features — but some users beg to differ

Samsung’s decision to strip Bluetooth functionality from the S Pen on the Galaxy S25 Ultra came as a shock to many users. After all, it had become a staple of Samsung’s top-range devices, a flagship feature that set those premium products apart from the competition. But according to Samsung, diagnostic data and a study showed that fewer than 1% of users used the wireless functionality.

Users were so upset by the removal that they started a petition asking Samsung to reverse course, which racked up over 9,500 signatures. So when a blog post on Samsung’s website claimed that a Bluetooth-enabled S Pen would be sold separately, users began to scour the site for it. Unfortunately, Samsung eventually confirmed the blog post contained incorrect information, further embittering S Pen die-hards. To make matters worse, when users got their hands on the new phone, they found that older Bluetooth S Pens would not fit in the stylus slot, and their Bluetooth features would not work with the S25 Ultra.

By now, it’s clear that Samsung has no plans to keep Bluetooth on any of its S Pen-compatible devices. Its most recent flagship tablet, the Galaxy Tab S11 Ultra, comes with an S Pen that lacks Bluetooth, making the Tab S10 Ultra the last such device to support the wireless protocol.

Samsung has a long history of removing features from new hardware

Some users held out hope that the Galaxy S26 Ultra would return Bluetooth functionality to the S Pen, but those hopes look all but dashed. If the company’s 2025 product cadence hadn’t demonstrated its total abandonment of the Bluetooth S Pen, the S26 Ultra should drive that point home. A content creator, Sahil Karoul, got his hands on one of the brand-new devices ahead of launch, and his testing showed no sign of the Bluetooth features. The S26 Ultra will have features that make it hard to beat, but a Bluetooth S Pen will almost certainly not be one of them.

No Bluetooth in Spen in #SamsungS26ultra pic.twitter.com/6qAIVDgbmS

— Sahil Karoul (@KaroulSahil) February 22, 2026

Air Actions are only the latest in a long line of enthusiast features Samsung has removed from its flagship smartphones over the years. Others include removable batteries, the headphone jack, the SD card slot, a mechanical camera aperture, a pressure-sensitive display, heart rate and SpO2 readers, MST technology in Samsung Pay for older payment terminals, and LED notification indicators, to name a few.

It even got rid of its signature curved displays and stopped including a charging brick in the box with most new devices. Samsung might argue that some of those features were outdated, while the functionality of others can be replicated through alternative means, but a loss is a loss. One thing that certainly hasn’t been removed is the high price tag that accompanies many of the devices, despite feature cuts. When you’re charging over $1,300 for a phone, customers expect a luxury experience. Thus, it’s not shocking that they’d feel slighted by the removal of the S Pen’s Bluetooth radio.

Tech

OpenAI fires employee for using confidential info on prediction markets

OpenAI has fired an employee over their activity on prediction markets, including Polymarket, the company confirmed to Wired. The company alleges that the employee used confidential OpenAI information in connection with the trades.

OpenAI didn’t release the name of the employee. However, a spokesperson said that such actions violated a company policy that bans workers from using inside information for personal gain, including on prediction markets.

Prediction markets like Polymarket and Kalshi allow people to make wagers on the outcomes of real-world events. For instance, on Polymarket, there are wagers being made around the kind of products OpenAI will announce in 2026 and when the company will go public. They can cover any event, and some eye-popping money can be made. As we recently reported, an accountant won a $470,300 jackpot on Kalshi by betting against DOGE believers.

Prediction markets insist they are not gambling sites, preferring to label themselves as financial platforms. Kalshi is a regulated exchange and, in fact, it fined and banned a MrBeast editor for similar alleged insider trading earlier this week. OpenAI did not immediately respond to a request for additional comment.

Tech

O House Becomes Japan’s First Fully Seismic-Approved 3D-Printed Reinforced Concrete Home

Photo credit: Onocom

O House, completed in late 2025 in Kurihara, Miyagi Prefecture, is a 50-square-meter, two-story 3D-printed home. The 31-square-meter ground floor holds a master bedroom and bathroom, while the 19-square-meter upper level hosts the kitchen, dining, and living areas. Curved walls rise 7 meters, stacked like bricks and set half a meter below ground for stability, while skylights let natural light pour into every corner.

Kizuki Co. Ltd. and Onocom Co. Ltd. jointly led the project, while COBOD International supplied the printing technology: a modified version of its BOD series printers. Working layer by layer, the printer built the inner and exterior walls, floor slab, roof, and a few interior elements. Printing took place on-site, though some components were manufactured off-site. The crew installed custom-cut styrofoam to support the problematic overhangs and arches that the printer couldn’t handle on its own, which grew as the build progressed. Finally, the team polished some of the wall sections to give them a smooth, marble-like appearance.


They used a combination of rebar inserted directly inside the layers of concrete and good old-fashioned reinforced steel, all linked together by a steel frame that bears the primary loads. With a firm anchor in the ground thanks to some ground-improvement piles, this hybrid technology enabled the construction to exceed Japan’s stringent seismic regulations, which are among the strictest in the world. All of this demonstrates that in earthquake-prone regions, printed reinforced concrete is a viable alternative to timber framing.

Working with a crew of only four made things tough. In winter, temperatures below 10°C meant heated water had to be added to the mix to keep it flowing, while hot summers of 30 to 35°C required careful temperature control to stop the material from setting too fast. Still, the crew kept going, printing continuously from below ground to the top and producing multi-purpose walls that combine structure, hidden services, and an aesthetic finish.


Tech

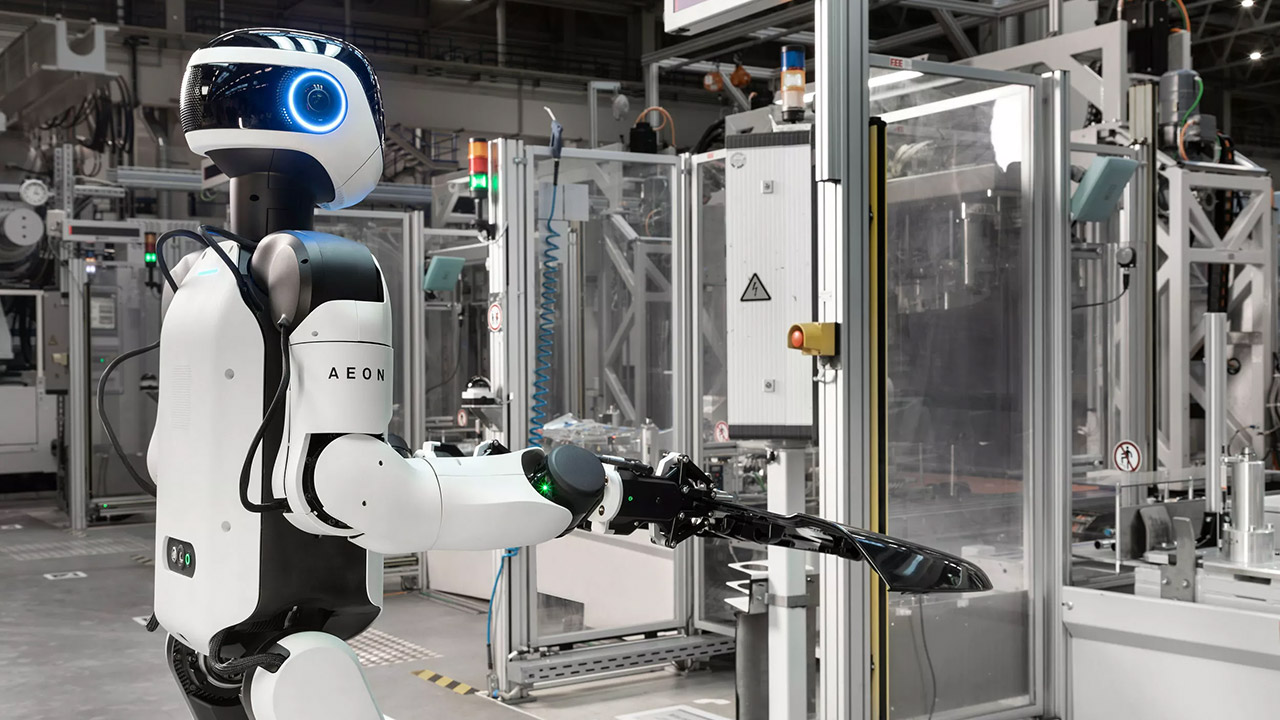

BMW Sends AEON Humanoid Robots to the Line in Leipzig

BMW employees at the Leipzig plant have long juggled the many complex parts of vehicle assembly, especially the hefty battery modules. Now a new member has joined the team: the AEON humanoid robot. AEON was built by Hexagon Robotics, a company BMW has collaborated with for years, to take over the physically taxing, repetitive grunt work that wears people out.

By April 2026, the AEON prototype will be put through its paces in a larger round of assessments, with a full pilot phase scheduled to begin this summer. The goal is to make AEON highly adaptable so it can switch between tasks as needed: simply swap the gripper or scanner and it’s ready to go. It rolls from station to station on wheels rather than legs and doesn’t need fixed rails.


Electric vehicle battery modules need extra care, and workers frequently need to wear safety gear simply to move them. After a few shifts, that work becomes monotonous, and AEON can take it over, sparing human workers the strain. External component production is another use case since, let’s face it, robotic consistency is a huge bonus when performing the same operation over and over.

BMW is taking a different approach from conventional industrial arms bolted to the floor. Drawing on data from BMW’s recently unified systems, AEON can move around, adapt to different layouts, and improve over time. To make operations more seamless, the company had to dismantle the outdated data silos that were creating so much friction; now, all of that data streams directly into AEON. Safety is naturally the top priority, and the preparations include improved wireless coverage, additional barriers to keep people safe, and other measures. Because everything is linked into the existing Smart Robotics network, there is no need to worry about anything getting left behind.

The key point here is that human labor remains essential to the entire operation. By eliminating the monotonous parts of their jobs, BMW wants employees to be free to do more interesting work. Early buy-in from safety teams, IT, and logistics won over the personnel on the floor, and having that support from the beginning has made everything go much more smoothly. The strategy was shaped by lessons from an earlier test at BMW’s US facility in Spartanburg, which demonstrated how quickly AEON could get up to speed and how reliably everything performed in an actual production environment.

Meanwhile, Leipzig has established a new Center of Competence for Physical AI, and its experts are getting to work assessing partners, conducting pilots, and scaling up the concepts that prove effective. To stay competitive in Europe, executives are already discussing measurable improvements in speed and accuracy for these demanding tasks.

Tech

City of Seattle CTO Rob Lloyd is resigning to lead a government institute with national reach

Rob Lloyd, Seattle’s chief technology officer, is leaving his post to become executive director of the Center for Digital Government. His last day will be March 27.

“Leading IT and our dedicated teams in service to Seattle has been an honor,” Lloyd said to colleagues in an email sent Thursday night.

Lloyd told GeekWire that while he appreciated Mayor Katie Wilson’s invitation to stay in the role, he was “beyond excited” to take the new job, which would allow him to perform similar work with local and state governments nationwide.

Lloyd became CTO in June 2024 after eight years as deputy city manager of San José, Calif. While his new employer is based in California, he will remain in Seattle. “My family wanted it no other way,” Lloyd said.

The city provided GeekWire with Lloyd’s letter of resignation, in which he said the “timing is right for a change.” The mayor is reshaping her executive team and its direction, he wrote, and working out strategy for the budget and this summer’s FIFA World Cup games.

Seattle is facing about a $140 million budget deficit for next year. The Seattle Times reported that Wilson is asking departments to provide plans for funding cuts of 5% to 10%.

In the letter, Lloyd also highlighted some of his team’s accomplishments during his tenure, including:

- Recovering more than $130 million “in failing and stalled technology projects.”

- Executing the city’s IT Strategic Plan.

- Partnering with fire, police, mental health and emergency management services on public safety technologies.

- Managing a $21 million operating budget reduction while increasing service reliability and employee retention.

- Updating cybersecurity practices.

- Formalizing his department’s first customer service and staff feedback surveys.

Lloyd has overseen roughly 670 employees. When he joined the city, his department had a $270 million operating budget and a capital budget of about $24 million.

In December, the city appointed Lisa Qian as its first AI Officer. Her experience includes serving as a senior manager of data science at LinkedIn, as well as other leadership positions at tech companies.

When Lloyd came to Seattle, he told GeekWire he hoped the city would be his “forever home” — and that he wanted to step outside City Hall and build relationships with the community members and companies driving the region’s tech scene. He was eager to play a part in tackling difficult issues such as public safety, homelessness and downtown recovery.

In his email to employees, Lloyd said that during his final weeks he would “be focused on completing the final commitments I made to the organization when I arrived.”

“What I’ll carry most from my time here isn’t the projects or the milestones though, it’s the memories of you and our partners,” Lloyd continued. “So many people made this work a true gift. Thank you to the City for letting me serve this community with you.”

Tech

Microsoft’s new AI training method eliminates bloated system prompts without sacrificing model performance

In building LLM applications, enterprises often have to create very long system prompts to adjust the model’s behavior for their applications. These prompts contain company knowledge, preferences, and application-specific instructions. At enterprise scale, these contexts can push inference latency past acceptable thresholds and drive per-query costs up significantly.

On-Policy Context Distillation (OPCD), a new training framework proposed by researchers at Microsoft, helps bake the knowledge and preferences of applications directly into a model. OPCD uses the model’s own responses during training, which avoids some of the pitfalls of other training techniques. This improves the abilities of models for bespoke applications while preserving their general capabilities.

Why long system prompts become a liability

In-context learning allows developers to update a model’s behavior at inference time without modifying its underlying parameters, which is typically a slow and expensive process. However, in-context knowledge is transient. It does not carry across different conversations with the model, meaning you have to feed the model the exact same massive set of instructions or documents every time. For an enterprise application, this might mean repeatedly pasting company policies, customer tickets, or dense technical manuals into the prompt. Over time this slows down the model, drives up costs, and can confuse the system.
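To make the scaling concrete, here is a back-of-the-envelope sketch of what re-sending the same system prompt costs. The prompt length, daily query volume, and per-token price are hypothetical figures chosen for illustration, not numbers from Microsoft or the paper.

```python
# Back-of-the-envelope cost of re-sending the same system prompt on every query.
# All inputs used below are hypothetical illustration values.


def repeated_prompt_overhead(prompt_tokens: int, queries_per_day: int,
                             usd_per_million_tokens: float) -> tuple[int, float]:
    """Return (extra input tokens per day, extra USD per day) spent re-sending the prompt."""
    tokens = prompt_tokens * queries_per_day
    cost = tokens / 1_000_000 * usd_per_million_tokens
    return tokens, cost


if __name__ == "__main__":
    # Hypothetical: 5,000-token system prompt, 200,000 queries/day, $1 per million input tokens.
    tokens, cost = repeated_prompt_overhead(5_000, 200_000, 1.0)
    print(f"{tokens:,} prompt tokens/day, about ${cost:,.2f}/day")
```

With these made-up inputs, the prompt alone accounts for a billion input tokens (about $1,000) a day before the model reads a single word of any user’s question. That recurring overhead is what context distillation aims to remove.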

“Enterprises often use long system prompts to enforce safety constraints (e.g., hate speech detection) or to provide domain-specific expertise (e.g., medical knowledge),” said Tianzhu Ye, co-author of the paper and researcher at Microsoft Research Asia, in comments provided to VentureBeat. “However, lengthy prompts significantly increase computational overhead and latency at inference time.”

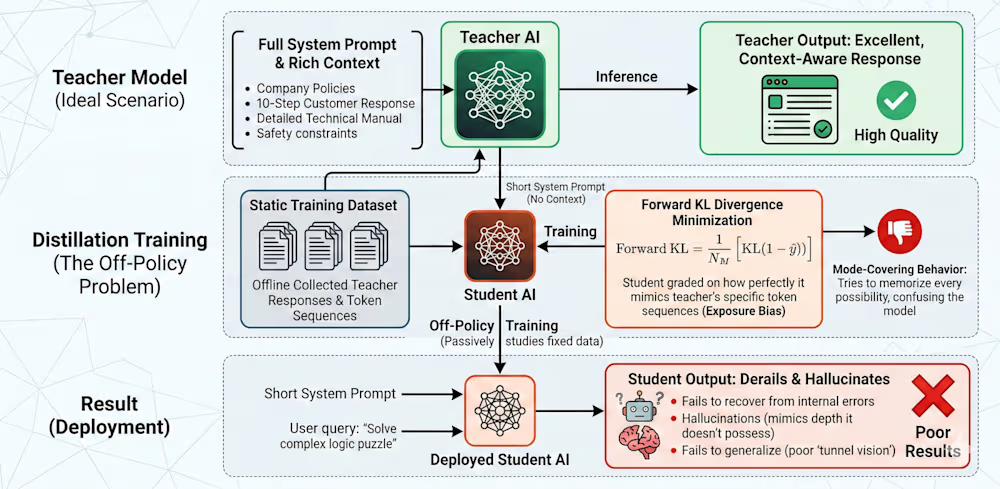

The main idea behind context distillation is to train a model to internalize the information that you repeatedly insert into the context. Like other distillation techniques, it follows a teacher-student paradigm. The teacher is an AI model that receives the massive, detailed prompt. Because it has all the instructions and reference documents, it generates highly tailored responses. The student is a model being trained that only sees the main question and doesn’t have access to the full context. Its goal is simply to observe the teacher’s responses and learn to mimic its behavior.
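A minimal sketch of the two views of a single training example. The prompt template and the `make_views` helper are hypothetical. The point is only that the teacher’s input contains the long context and the student’s input does not.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DistillationExample:
    teacher_input: str  # long context + user question (what the teacher sees)
    student_input: str  # user question only (what the student sees)


def make_views(context: str, question: str) -> DistillationExample:
    """Build teacher and student inputs for one question (hypothetical template)."""
    teacher = f"{context}\n\nUser: {question}\nAssistant:"
    student = f"User: {question}\nAssistant:"
    return DistillationExample(teacher_input=teacher, student_input=student)


if __name__ == "__main__":
    policy = "Always answer in a formal tone. Never reveal internal ticket IDs."
    example = make_views(policy, "Where is my refund?")
    print(example.student_input)
```

During distillation, the teacher’s outputs are computed from `teacher_input` and the student’s from `student_input`. The training loss then pushes the student’s behavior toward the teacher’s, even though the student never sees the context.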

Through this training process, the student model effectively compresses the complex instructions from the teacher’s prompt directly into its parameters. For an enterprise, the primary value happens at inference time. Because the student model has internalized the context, you can deploy it in your application without needing to paste in the lengthy instructions again. This makes the model significantly faster and with far less computational overhead.

However, classic context distillation relies on a flawed training method called “off-policy training,” where the model is trained on fixed datasets that were collected before the training process. This is problematic in several ways. During training, the student is only exposed to ground-truth data and teacher-generated answers, creating what Ye calls “exposure bias.” In production, the model must come up with its own token sequences to reach those answers. Because it never practiced making its own decisions or recovering from its own mistakes during training, it can easily derail when operating independently. It’s like showing a student videos of a professional driver and expecting them to learn driving without trial and error.

Another problem is the “forward Kullback-Leibler (KL) divergence” that the model is trained to minimize. Under this objective, the student is graded on how closely its outputs match the teacher’s, which encourages “mode-covering” behavior, Ye says. The student model is often smaller or lacks the rich context the teacher had, meaning it simply lacks the capacity to perfectly replicate the teacher’s complex reasoning. Because the student is forced to try to cover all those possibilities anyway, its underlying guesses become overly broad and unfocused.
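This can be written out in standard notation. The notation is our own shorthand and not necessarily the paper’s: π_T is the teacher, which sees the long context c; π_θ is the student, which sees only the query x; and y_{<t} is the response prefix before token t. Classic off-policy context distillation can then be sketched as minimizing a forward KL over responses y taken from a fixed, teacher-generated dataset D_T:

```latex
% pi_T: teacher (conditioned on context c); pi_theta: student (no context)
% x: query; y_{<t}: response prefix; D_T: fixed dataset of teacher responses
\mathcal{L}_{\mathrm{off}}(\theta)
  = \mathbb{E}_{(x,\,y) \sim \mathcal{D}_T}
    \left[ \sum_{t=1}^{|y|}
      D_{\mathrm{KL}}\!\left( \pi_T(\cdot \mid c, x, y_{<t}) \,\middle\|\, \pi_\theta(\cdot \mid x, y_{<t}) \right)
    \right],
\qquad
D_{\mathrm{KL}}(p \,\|\, q) = \sum_{v} p(v) \log \frac{p(v)}{q(v)}
```

Both weaknesses Ye describes show up in this formula. First, the prefixes y_{<t} always come from the dataset and never from the student’s own generations, which is the source of exposure bias. Second, the sum over tokens v is weighted by the teacher’s probabilities, so the loss is large wherever the teacher assigns probability and the student does not. A student that cannot match the teacher exactly is therefore pushed to spread its probability broadly (mode covering).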

In real-world applications, this can result in hallucinations, where the AI gets confused and confidently makes things up because it is trying to mimic a depth of knowledge it does not actually possess. It also means that the model cannot generalize well to new tasks.

How OPCD fixes the teacher-student problem

To fix the critical issues with the old teacher-student dynamic, the Microsoft researchers introduced On-Policy Context Distillation (OPCD). The most important shift in OPCD is that the student model learns from its own generation trajectories as opposed to a static dataset (which is why it is called “on-policy”). Instead of passively studying a dataset of the teacher’s perfect outputs, the student is given a task without seeing the massive instruction prompt and has to generate an answer entirely on its own.

As the student generates its answer, the teacher acts as a live instructor. The teacher has access to the full, customized prompt and evaluates the student’s output. At every step along the student’s generation, the system compares the student’s token distribution against what the context-aware teacher would do.
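The on-policy objective can be sketched in the same kind of notation. This is again our shorthand; the paper’s exact loss, including any per-token weighting or normalization, may differ. Here π_T is the teacher conditioned on the full context c, π_θ is the student, x is the query, and y is a response sampled from the student itself:

```latex
% pi_T: teacher (conditioned on context c); pi_theta: student (no context)
% y is sampled from the student; the teacher only scores each prefix y_{<t}
\mathcal{L}_{\mathrm{on}}(\theta)
  = \mathbb{E}_{x,\; y \sim \pi_\theta(\cdot \mid x)}
    \left[ \sum_{t=1}^{|y|}
      D_{\mathrm{KL}}\!\left( \pi_\theta(\cdot \mid x, y_{<t}) \,\middle\|\, \pi_T(\cdot \mid c, x, y_{<t}) \right)
    \right],
\qquad
D_{\mathrm{KL}}(q \,\|\, p) = \sum_{v} q(v) \log \frac{q(v)}{p(v)}
```

Two things change relative to classic context distillation. First, the prefixes y_{<t} are the student’s own, so it is trained on exactly the situations it will face at inference time. Second, the student’s distribution comes first inside the KL, so the sum over v is weighted by the tokens the student itself considers likely. That second change is the reverse KL Ye describes next.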

OPCD uses “reverse KL divergence” to grade the student. “By minimizing reverse KL divergence, it promotes ‘mode-seeking’ behavior. It focuses on high-probability regions of the student’s distribution,” Ye said. “It suppresses tokens that the student considers unlikely, even if the teacher’s belief assigned them high probability. This alignment helps the student correct its own mistakes and avoid the broad, hallucinatory distributions of standard distillation.”
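Here is a toy illustration of the mode-covering versus mode-seeking distinction. The numbers are made up and do not come from the paper. The teacher splits its probability evenly between two continuations, and there are two candidate students: one hedges across every token and one commits to a single teacher mode. The code simply scores both students under each KL direction.

```python
import math


def kl(p: list[float], q: list[float]) -> float:
    """KL(p || q) = sum_v p(v) * log(p(v) / q(v)) in nats; assumes q(v) > 0 wherever p(v) > 0."""
    return sum(pv * math.log(pv / qv) for pv, qv in zip(p, q) if pv > 0)


# Toy next-token distributions over a 4-token vocabulary (illustrative numbers).
teacher = [0.49, 0.49, 0.01, 0.01]  # two equally good continuations ("modes")
broad = [0.25, 0.25, 0.25, 0.25]    # student hedges across every token
focused = [0.97, 0.01, 0.01, 0.01]  # student commits to one of the teacher's modes

for name, student in [("broad", broad), ("focused", focused)]:
    forward = kl(teacher, student)  # forward KL: expectation under the teacher
    reverse = kl(student, teacher)  # reverse KL: expectation under the student
    print(f"{name:8s} forward={forward:.3f} reverse={reverse:.3f}")
```

Forward KL (teacher first) prefers the broad student, at roughly 0.60 versus 1.57 nats. Reverse KL (student first) prefers the focused one, at roughly 0.62 versus 1.27 nats, which is the mode-seeking behavior Ye attributes to OPCD’s objective.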

Because the student model actively practices making its own decisions and learns to correct its own mistakes during training, it behaves more reliably when deployed in a live application. Complex business rules, safety constraints, or specialized knowledge end up baked directly into its parameters instead of being supplied in the prompt.

What OPCD delivers: The benchmark results

The researchers tested OPCD in two key areas: experiential knowledge distillation and system prompt distillation. For experiential knowledge distillation, the researchers wanted to see if an LLM could learn from its own past successes and permanently adopt those lessons. They tested this on models of various sizes, using mathematical reasoning problems.

First, the model solved problems and was asked to write down general rules it learned from its successes. Then, using OPCD, the researchers baked those written lessons directly into the model’s parameters. The results showed that the models improved dramatically without needing the learned experience pasted into their prompts anymore. On complex math problems, an 8-billion-parameter model improved from a 75.0% baseline to 80.9%. On the Frozen Lake navigation game, a small 1.7-billion-parameter model initially had a success rate of 6.3%; after OPCD baked in the learned experience, its success rate jumped to 38.3%.

The second set of experiments focused on long system prompts. Enterprises often use massive system prompts to enforce strict behavioral guidelines, like maintaining a professional tone, ensuring medical accuracy, or filtering out toxic language. The researchers tested whether OPCD could permanently bake these dense behavioral rules into the models so they would not have to be sent with every single user query. Their experiments show that OPCD successfully internalized these complex rules and substantially boosted performance. When testing a 3-billion-parameter Llama model on safety and toxicity classification, the base model scored 30.7%. After using OPCD to internalize the safety prompt, its accuracy rose to 83.1%. On medical question answering, the same model improved from 59.4% to 76.3%.

One of the key challenges of fine-tuning models is catastrophic forgetting, where the model becomes so specialized in the fine-tuning task that it gets worse at general tasks. The researchers tracked out-of-distribution performance to test for this tunnel vision. After distilling strict safety rules into a model, they immediately tested its ability to answer unrelated medical questions. OPCD maintained the model’s general medical knowledge, outperforming the old off-policy methods by approximately 4 percentage points. It specialized without losing its broader intelligence.

Where OPCD fits — and where it doesn’t

While OPCD is a powerful tool for internalizing static knowledge and complex rules, it does not replace all external context methods. “RAG is better when the required information is highly dynamic or involves a massive, frequently updated external database that cannot be compressed into model weights,” Ye said.

For enterprise teams evaluating their pipelines, adopting OPCD does not require overhauling existing systems or investing in specialized hardware. “OPCD can be integrated into existing workflows with very little friction,” Ye said. “Any team already running standard RLVR [Reinforcement Learning from Verifiable Rewards] pipelines can adopt OPCD without major architectural changes.”

In practice, the student model acts as the policy model performing rollouts, while the frozen teacher model serves as a reference providing logits. The hardware requirements are highly accessible. According to Ye, enterprise teams can reproduce the researchers’ experiments using about eight A100 GPUs.
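In RL terms, there is a common way to wire this up. This is an assumption on our part, since the article does not spell out OPCD’s exact estimator. The student acts as the policy and samples a token. That token receives the reward log p_teacher(y) - log p_student(y), whose expectation under the student is the negative reverse KL. The toy below does this for a single next-token distribution in plain Python. A real pipeline would apply the same idea at every position of a sampled sequence, with the frozen teacher’s logits computed from the full-context prompt. The teacher probabilities and hyperparameters are made up.

```python
import math
import random


def softmax(logits: list[float]) -> list[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def reverse_kl(q: list[float], p: list[float]) -> float:
    """KL(q || p) in nats, with q the student and p the teacher."""
    return sum(qv * math.log(qv / pv) for qv, pv in zip(q, p) if qv > 0)


def train_student(teacher_probs: list[float], steps: int = 2000,
                  lr: float = 0.1, seed: int = 0) -> list[float]:
    """Toy on-policy distillation of one next-token distribution (hypothetical setup).

    The student (trainable logits) samples a token, the frozen teacher scores it,
    and the per-sample reward log p_T(y) - log p_S(y) drives a REINFORCE update.
    In expectation this is gradient descent on KL(student || teacher).
    """
    rng = random.Random(seed)
    logits = [0.0] * len(teacher_probs)  # student starts uniform
    for _ in range(steps):
        q = softmax(logits)
        y = rng.choices(range(len(q)), weights=q)[0]            # rollout from the student (policy)
        reward = math.log(teacher_probs[y]) - math.log(q[y])    # frozen teacher as reference
        # d log q(y) / d logit_k = 1[k == y] - q[k]
        for k in range(len(logits)):
            logits[k] += lr * reward * ((1.0 if k == y else 0.0) - q[k])
    return softmax(logits)


if __name__ == "__main__":
    teacher = [0.7, 0.2, 0.1]  # hypothetical teacher distribution (given the full context)
    start = reverse_kl(softmax([0.0, 0.0, 0.0]), teacher)
    student = train_student(teacher)
    print(f"reverse KL: {start:.4f} -> {reverse_kl(student, teacher):.4f}")
```

Because the reward on a sampled token is zero exactly when the student and teacher agree on it, the updates shrink as the two distributions converge. This is part of why the toy stays stable with a fixed learning rate. The teacher is only ever queried for probabilities and is never updated, which matches the “frozen reference” role Ye describes.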

The data requirements are similarly lightweight. For experiential knowledge distillation, developers only need around 30 seed examples to generate solution traces. Because the technique is applied to previously unoptimized environments, even a small amount of data yields the majority of the performance improvement. For system prompt distillation, existing optimized prompts and standard task datasets are sufficient.

The researchers built their own implementation on verl, an open-source RLVR codebase, showing that the technique fits cleanly within conventional reinforcement learning frameworks. They plan to release their implementation as open source following internal reviews.

The self-improving model: What comes next

Looking ahead, OPCD paves the way for genuinely self-improving models that continuously adapt to bespoke enterprise environments. Once deployed, a model can extract lessons from real-world interactions and use OPCD to progressively internalize those characteristics without requiring manual supervision or data annotation from model trainers.

“This represents a fundamental paradigm shift in model improvement: the core improvements to the model would move from training time to test time,” Ye said. “Using the model—and allowing it to gather experience—would become the primary driver of its advancement.”
