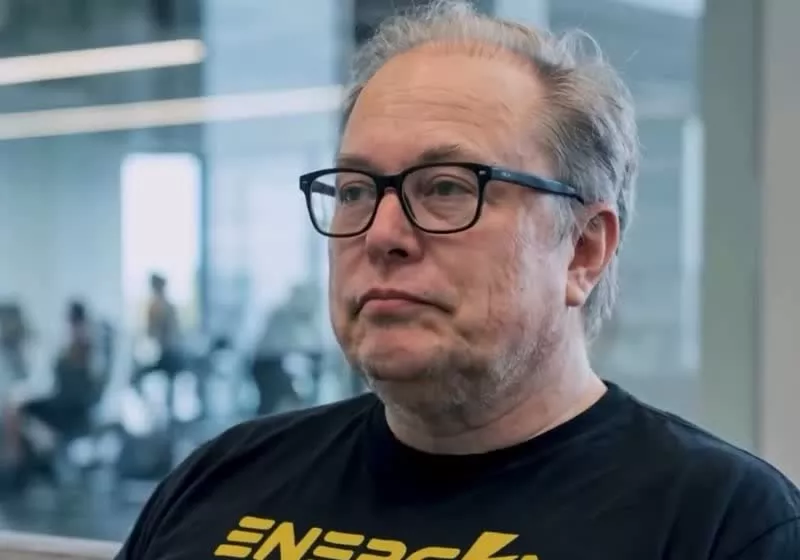

On Saturday afternoon, Sam Altman announced he’d start answering questions on X.com about OpenAI’s work with America’s Department of War, and about all the developments of the past few days. (After that department’s negotiations with Anthropic had failed, it announced it would stop using Anthropic’s technology and threatened to designate the company a “Supply-Chain Risk to National Security.” It then reached a deal for OpenAI’s technology, though Altman says the deal includes OpenAI’s own similar prohibitions against using its products for domestic mass surveillance, and requires “human responsibility” for the use of force in autonomous weapon systems.)

Altman said Saturday that enforcing that “Supply-Chain Risk” designation on Anthropic “would be very bad for our industry and our country, and obviously their company. We said [that] to the Department of War before and after. We said that part of the reason we were willing to do this quickly was in the hopes of de-escalation…. We should all care very much about the precedent… To say it very clearly: I think this is a very bad decision from the Department of War and I hope they reverse it. If we take heat for strongly criticizing it, so be it.”

Altman also said that for a long time, OpenAI was planning to do “non-classified work only,” but this week found the Department of War “flexible on what we needed…”

Sam Altman: The reason for rushing is an attempt to de-escalate the situation. I think the current path things are on is dangerous for Anthropic, healthy competition, and the U.S. We negotiated to make sure similar terms would be offered to all other AI labs.

I know what it’s like to feel backed into a corner, and I think it’s worth some empathy to the Department of War. They are… a very dedicated group of people with, as I mentioned, an extremely important mission. I cannot imagine doing their work. Our industry tells them “The technology we are building is going to be the high order bit in geopolitical conflict. China is rushing ahead. You are very behind.” And then we say “But we won’t help you, and we think you are kind of evil.” I don’t think I’d react great in that situation. I do not believe unelected leaders of private companies should have as much power as our democratically elected government. But I do think we need to help them.

Question: Are you worried at all about the potential for things to go really south during a possible dispute over what’s legal or not later on and be deemed a supply chain risk…?

Sam Altman: Yes, I am. If we have to take on that fight we will, but it clearly exposes us to some risk. I am still very hopeful this is going to get resolved, and part of why we wanted to act fast was to help increase the chances of that…

Question: Why the rush to sign the deal? Obviously the optics don’t look great.

Sam Altman: It was definitely rushed, and the optics don’t look good. We really wanted to de-escalate things, and we thought the deal on offer was good.

If we are right and this does lead to a de-escalation between the Department of War and the industry, we will look like geniuses, and a company that took on a lot of pain to do things to help the industry. If not, we will continue to be characterized as rushed and uncareful. I don’t know where it’s going to land, but I have already seen promising signs. I think a good relationship between the government and the companies developing this technology is critical over the next couple of years…

Question: What was the core difference why you think the Department of War accepted OpenAI but not Anthropic?

Sam Altman: […] We believe in a layered approach to safety: building a safety stack, deploying FDEs [embedded Forward Deployed Engineers] and having our safety and alignment researchers involved, deploying via cloud, working directly with the Department of War. Anthropic seemed more focused on specific prohibitions in the contract, rather than citing applicable laws, which we felt comfortable with. We feel that it’s very important to build safe systems, and although documents are also important, I’d clearly rather rely on technical safeguards if I only had to pick one…

I think Anthropic may have wanted more operational control than we did…

Question: Were the terms that you accepted the same ones Anthropic rejected?

Sam Altman: No, we had some different ones. But our terms would now be available to them (and others) if they wanted.

Question: Will you turn off the tool if they violate the rules?

Sam Altman: Yes, we will turn it off in that very unlikely event, but we believe the U.S. government is an institution that does its best to follow law and policy. What we won’t do is turn it off because we disagree with a particular (legal military) decision. We trust their authority.

Questions were also answered by OpenAI’s head of National Security Partnerships, who at one point posted that they’d managed the White House response to the Snowden disclosures and helped write the post-Snowden policies constraining surveillance during the Obama years. They stressed that under OpenAI’s deal with the Department of War, “We control how we train the models and what types of requests the models refuse.”

Question: Are employees allowed to opt out of working on Department of War-related projects?

Answer: We won’t ask employees to support Department of War-related projects if they don’t want to.

Question: How much is the deal worth?

Answer: It’s a few million $, completely inconsequential compared to our $20B+ in revenue, and definitely not worth the cost of a PR blowup. We’re doing it because it’s the right thing to do for the country, at great cost to ourselves, not because of revenue impact…

Question: Can you explicitly state which specific technical safeguard OpenAI has that allowed you to sign what Anthropic called a ‘threat to democratic values’?

Answer: We think the deal we made has more guardrails than any previous agreement for classified AI deployments, including Anthropic’s. Other AI labs (including Anthropic) have reduced or removed their safety guardrails and relied primarily on usage policies as their primary safeguards in national security deployments. Usage policies, on their own, are not a guarantee of anything. Any responsible deployment of AI in classified environments should involve layered safeguards including a prudent safety stack, limits on deployment architecture, and the direct involvement of AI experts in consequential AI use cases. These are the terms we negotiated in our contract.

They also detailed OpenAI’s position on LinkedIn:

Deployment architecture matters more than contract language. Our contract limits our deployment to cloud API. Autonomous systems require inference at the edge. By limiting our deployment to cloud API, we can ensure that our models cannot be integrated directly into weapons systems, sensors, or other operational hardware…

Instead of hoping contract language will be enough, our contract allows us to embed forward deployed engineers, commits to giving us visibility into how models are being used, and we have the ability to iterate on safety safeguards over time. If our team sees that our models aren’t refusing queries they should, or there’s more operational risk than we expected, our contract allows us to make modifications at our discretion. This gives us far more influence over outcomes (and insight into possible abuse) than a static contract provision ever could.

U.S. law already constrains the worst outcomes. We accepted the “all lawful uses” language proposed by the Department, but required them to define the laws that constrained them on surveillance and autonomy directly in the contract. And because laws can change, having this codified in the contract protects against changes in law or policy that we can’t anticipate.
