Crypto World

How to Start with Elixir? Introduction, Installation, and Practice

by Gonzalo Wangüemert Villalba


4 September 2025

Introduction

The open-source AI ecosystem reached a turning point in August 2025 when Elon Musk’s company xAI released Grok 2.5 and, almost simultaneously, OpenAI launched two new models under the names GPT-OSS-20B and GPT-OSS-120B. While both announcements signalled a commitment to transparency and broader accessibility, the details of these releases highlight strikingly different approaches to what open AI should mean. This article explores the architecture, accessibility, performance benchmarks, regulatory compliance and wider industry impact of these three models. The aim is to clarify whether xAI’s Grok or OpenAI’s GPT-OSS family currently offers more value for developers, businesses and regulators in Europe and beyond.

What Was Released

Grok 2.5, described by xAI as a 270 billion parameter model, was made available through the release of its weights and tokenizer. These files amount to roughly half a terabyte and were published on Hugging Face. Yet the release lacks critical elements such as training code, detailed architectural notes or dataset documentation. Most importantly, Grok 2.5 comes with a bespoke licence drafted by xAI that has not yet been clearly scrutinised by legal or open-source communities. Analysts have noted that its terms could be revocable or carry restrictions that prevent the model from being considered genuinely open source. Elon Musk promised on social media that Grok 3 would be published in the same manner within six months, suggesting this is just the beginning of a broader strategy by xAI to join the open-source race.

By contrast, OpenAI unveiled GPT-OSS-20B and GPT-OSS-120B on 5 August 2025 with a far more comprehensive package. The models were released under the widely recognised Apache 2.0 licence, which is permissive, business-friendly and in line with requirements of the European Union’s AI Act. OpenAI did not only share the weights but also architectural details, training methodology, evaluation benchmarks, code samples and usage guidelines. This represents one of the most transparent releases ever made by the company, which historically faced criticism for keeping its frontier models proprietary.

Architectural Approach

The architectural differences between these models reveal much about their intended use. Grok 2.5 is a dense transformer with all 270 billion parameters engaged in computation. Without detailed documentation, it is unclear how efficiently it handles scaling or what kinds of attention mechanisms are employed.

Meanwhile, GPT-OSS-20B and GPT-OSS-120B make use of a Mixture-of-Experts design. In practice this means that although the models contain 21 and 117 billion parameters respectively, only a small subset of those parameters are activated for each token. GPT-OSS-20B activates 3.6 billion and GPT-OSS-120B activates just over 5 billion. This architecture leads to far greater efficiency, allowing the smaller of the two to run comfortably on devices with only 16 gigabytes of memory, including Snapdragon laptops and consumer-grade graphics cards. The larger model requires 80 gigabytes of GPU memory, placing it in the range of high-end professional hardware, yet still far more efficient than a dense model of similar size. This is a deliberate choice by OpenAI to ensure that open-weight models are not only theoretically available but practically usable.

Documentation and Transparency

The difference in documentation further separates the two releases. OpenAI’s GPT-OSS models include explanations of their sparse attention layers, grouped multi-query attention, and support for extended context lengths up to 128,000 tokens. These details allow independent researchers to understand, test and even modify the architecture. By contrast, Grok 2.5 offers little more than its weight files and tokenizer, making it effectively a black box. From a developer’s perspective this is crucial: having access to weights without knowing how the system was trained or structured limits reproducibility and hinders adaptation. Transparency also affects regulatory compliance and community trust, making OpenAI’s approach significantly more robust.

Performance and Benchmarks

Benchmark performance is another area where GPT-OSS models shine. According to OpenAI’s technical documentation and independent testing, GPT-OSS-120B rivals or exceeds the reasoning ability of the company’s o4-mini model, while GPT-OSS-20B achieves parity with the o3-mini. On benchmarks such as MMLU, Codeforces, HealthBench and the AIME mathematics tests from 2024 and 2025, the models perform strongly, especially considering their efficient architecture. GPT-OSS-20B in particular impressed researchers by outperforming much larger competitors such as Qwen3-32B on certain coding and reasoning tasks, despite using less energy and memory. Academic studies published on arXiv in August 2025 highlighted that the model achieved nearly 32 per cent higher throughput and more than 25 per cent lower energy consumption per 1,000 tokens than rival models. Interestingly, one paper noted that GPT-OSS-20B outperformed its larger sibling GPT-OSS-120B on some human evaluation benchmarks, suggesting that sparse scaling does not always correlate linearly with capability.

In terms of safety and robustness, the GPT-OSS models again appear carefully designed. They perform comparably to o4-mini on jailbreak resistance and bias testing, though they display higher hallucination rates in simple factual question-answering tasks. This transparency allows researchers to target weaknesses directly, which is part of the value of an open-weight release. Grok 2.5, however, lacks publicly available benchmarks altogether. Without independent testing, its actual capabilities remain uncertain, leaving the community with only Musk’s promotional statements to go by.

Regulatory Compliance

Regulatory compliance is a particularly important issue for organisations in Europe under the EU AI Act. The legislation requires general-purpose AI models to be released under genuinely open licences, accompanied by detailed technical documentation, information on training and testing datasets, and usage reporting. For models that exceed systemic risk thresholds, such as those trained with more than 10²⁵ floating point operations, further obligations apply, including risk assessment and registration.

Grok 2.5, by virtue of its vague licence and lack of documentation, appears non-compliant on several counts. Unless xAI publishes more details or adapts its licensing, European businesses may find it difficult or legally risky to adopt Grok in their workflows. GPT-OSS-20B and 120B, by contrast, seem carefully aligned with the requirements of the AI Act. Their Apache 2.0 licence is recognised under the Act, their documentation meets transparency demands, and OpenAI has signalled a commitment to provide usage reporting. From a regulatory standpoint, OpenAI’s releases are safer bets for integration within the UK and EU.

Community Reception

The reception from the AI community reflects these differences. Developers welcomed OpenAI’s move as a long-awaited recognition of the open-source movement, especially after years of criticism that the company had become overly protective of its models. Some users, however, expressed frustration with the mixture-of-experts design, reporting that it can lead to repetitive tool-calling behaviours and less engaging conversational output. Yet most acknowledged that for tasks requiring structured reasoning, coding or mathematical precision, the GPT-OSS family performs exceptionally well.

Grok 2.5’s release was greeted with more scepticism. While some praised Musk for at least releasing weights, others argued that without a proper licence or documentation it was little more than a symbolic gesture designed to signal openness while avoiding true transparency.

Strategic Implications

The strategic motivations behind these releases are also worth considering. For xAI, releasing Grok 2.5 may be less about immediate usability and more about positioning in the competitive AI landscape, particularly against Chinese developers and American rivals. For OpenAI, the move appears to be a balancing act: maintaining leadership in proprietary frontier models like GPT-5 while offering credible open-weight alternatives that address regulatory scrutiny and community pressure. This dual strategy could prove effective, enabling the company to dominate both commercial and open-source markets.

Conclusion

Ultimately, the comparison between Grok 2.5 and GPT-OSS-20B and 120B is not merely technical but philosophical. xAI’s release demonstrates a willingness to participate in the open-source movement but stops short of true openness. OpenAI, on the other hand, has set a new standard for what open-weight releases should look like in 2025: efficient architectures, extensive documentation, clear licensing, strong benchmark performance and regulatory compliance. For European businesses and policymakers evaluating open-source AI options, GPT-OSS currently represents the more practical, compliant and capable choice.

In conclusion, while both xAI and OpenAI contributed to the momentum of open-source AI in August 2025, the details reveal that not all openness is created equal. Grok 2.5 stands as an important symbolic release, but OpenAI’s GPT-OSS family sets the benchmark for practical usability, compliance with the EU AI Act, and genuine transparency.


Ethereum Price Corrects but 4 Metrics Are Quietly Building a Bounce Case

Ethereum (ETH) price trades at $2,108 on the 12-hour chart on April 7, down approximately 1% over the past 24 hours. The headline move looks unremarkable. However, four separate metrics across the technical, derivatives, and on-chain layers are converging toward the same conclusion, and none of them are pointing down.

The last time something similar happened, at least on the technical front, Ethereum price rallied 16%. Whether history repeats depends on a handful of levels that are now within striking distance.

Two Technical Triggers Are Converging on the 12-Hour Chart

The first metric is the Exponential Moving Average (EMA) structure, a trend indicator that gives greater weight to recent price action. On the 12-hour chart, the 20-period EMA at $2,083 is closing in on the 50-period EMA at $2,086. When the faster EMA crosses above the slower one, it forms a bullish crossover that typically signals a shift in short-term momentum.

This exact setup last appeared in mid-March. The crossover began forming then, and Ethereum price subsequently rallied 15.63%, reclaiming the 100-period EMA in the process. The same structure is forming again. Since April 5, prices have already moved up 7.59%, and the 20 and 50 EMAs are now within $3 of each other. The 100-period EMA sits at $2,144, and a confirmed crossover would bring that level into immediate focus.
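For readers who want to see the mechanics, the crossover logic described above can be sketched in a few lines of Python. This is a minimal illustration, not the charting platform's implementation: the function names are hypothetical, the EMA is seeded with the first close (one common convention among several), and the price series is synthetic rather than real ETH data.

```python
def ema(prices, period):
    """Exponential moving average with the standard smoothing factor 2 / (period + 1)."""
    alpha = 2 / (period + 1)
    values = [prices[0]]  # assumption: seed the EMA with the first price
    for price in prices[1:]:
        values.append(alpha * price + (1 - alpha) * values[-1])
    return values


def bullish_crossovers(prices, fast=20, slow=50):
    """Return indices where the fast EMA moves from at-or-below to above the slow EMA."""
    fast_ema, slow_ema = ema(prices, fast), ema(prices, slow)
    return [
        i
        for i in range(1, len(prices))
        if fast_ema[i - 1] <= slow_ema[i - 1] and fast_ema[i] > slow_ema[i]
    ]


# Synthetic 12-hour closes (NOT real ETH data): a decline followed by a recovery.
closes = [2200 - 5 * i for i in range(40)] + [2005 + 8 * i for i in range(40)]
crossover_bars = bullish_crossovers(closes)
print(crossover_bars)
```

On this synthetic series the fast EMA stays below the slow EMA throughout the decline and crosses above it only once the recovery is under way, which is the pattern the 20/50 setup is watching for.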


The second metric is the Relative Strength Index (RSI), a momentum oscillator. Between March 19 and April 6, price made a lower low on the 12-hour chart while RSI made a higher low.

That standard bullish divergence suggests selling momentum is fading even as price tested lower levels. The divergence remains intact as long as Ethereum price holds above $2,086. A break below that level would not destroy the broader lower low structure but would invalidate the most recent swing as a confirmed low until it resets.
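The divergence test itself is simple to state in code. The sketch below assumes two swing lows have already been identified; the `SwingLow` type, the function names and the sample readings are hypothetical placeholders, since the article does not give the exact price or RSI values at either low. Only the $2,086 invalidation level comes from the text.

```python
from dataclasses import dataclass


@dataclass
class SwingLow:
    price: float
    rsi: float


def is_bullish_divergence(earlier: SwingLow, later: SwingLow) -> bool:
    """Price prints a lower low while RSI prints a higher low."""
    return later.price < earlier.price and later.rsi > earlier.rsi


def divergence_intact(current_price: float, floor: float = 2086.0) -> bool:
    """The article treats $2,086 as the level that keeps the setup valid."""
    return current_price > floor


# Illustrative readings only (assumed, not taken from the chart).
march_low = SwingLow(price=2050.0, rsi=34.0)
april_low = SwingLow(price=2010.0, rsi=39.0)

diverging = is_bullish_divergence(march_low, april_low)
still_valid = divergence_intact(2108.0)
print(diverging, still_valid)
```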

Together, the EMA convergence and RSI divergence form the technical foundation for a potential bounce. However, technical patterns alone do not move prices. The derivatives and on-chain data reveal whether the fuel exists to power the move.

Shorts Are Piling In and Whales Are Not Selling

The third metric comes from the derivatives market. On April 4, total open interest for Ethereum stood at $10.49 billion with a funding rate of approximately -0.0015%. By April 7, open interest had risen to $10.77 billion while the funding rate dropped further to -0.007%.

Rising open interest combined with an increasingly negative funding rate means one thing. Traders are opening new short positions. That buildup of short exposure creates contrarian fuel because if price moves against them, the shorts must buy to close their positions, accelerating the rally through a short squeeze.
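The reading above can be expressed as a small rule of thumb. The following sketch uses the open interest and funding figures quoted in the article; the function name and the labels it returns are illustrative assumptions, and real positioning analysis would look at more than two snapshots.

```python
def positioning_signal(oi_before, oi_after, funding_before, funding_after):
    """Classify a change in open interest and funding rate (simplified heuristic)."""
    oi_rising = oi_after > oi_before
    funding_more_negative = funding_after < 0 and funding_after < funding_before
    if oi_rising and funding_more_negative:
        return "new shorts building"
    if oi_rising and funding_after > 0 and funding_after > funding_before:
        return "new longs building"
    return "inconclusive"


# Figures from the article: April 4 versus April 7 (funding rates in percent).
signal = positioning_signal(10.49e9, 10.77e9, -0.0015, -0.007)
oi_change_pct = (10.77e9 - 10.49e9) / 10.49e9 * 100

print(signal, f"open interest change: {oi_change_pct:.2f}%")
```

With these inputs, open interest rose by a little under 3% while funding moved deeper into negative territory, which is the combination the article interprets as fresh short exposure.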

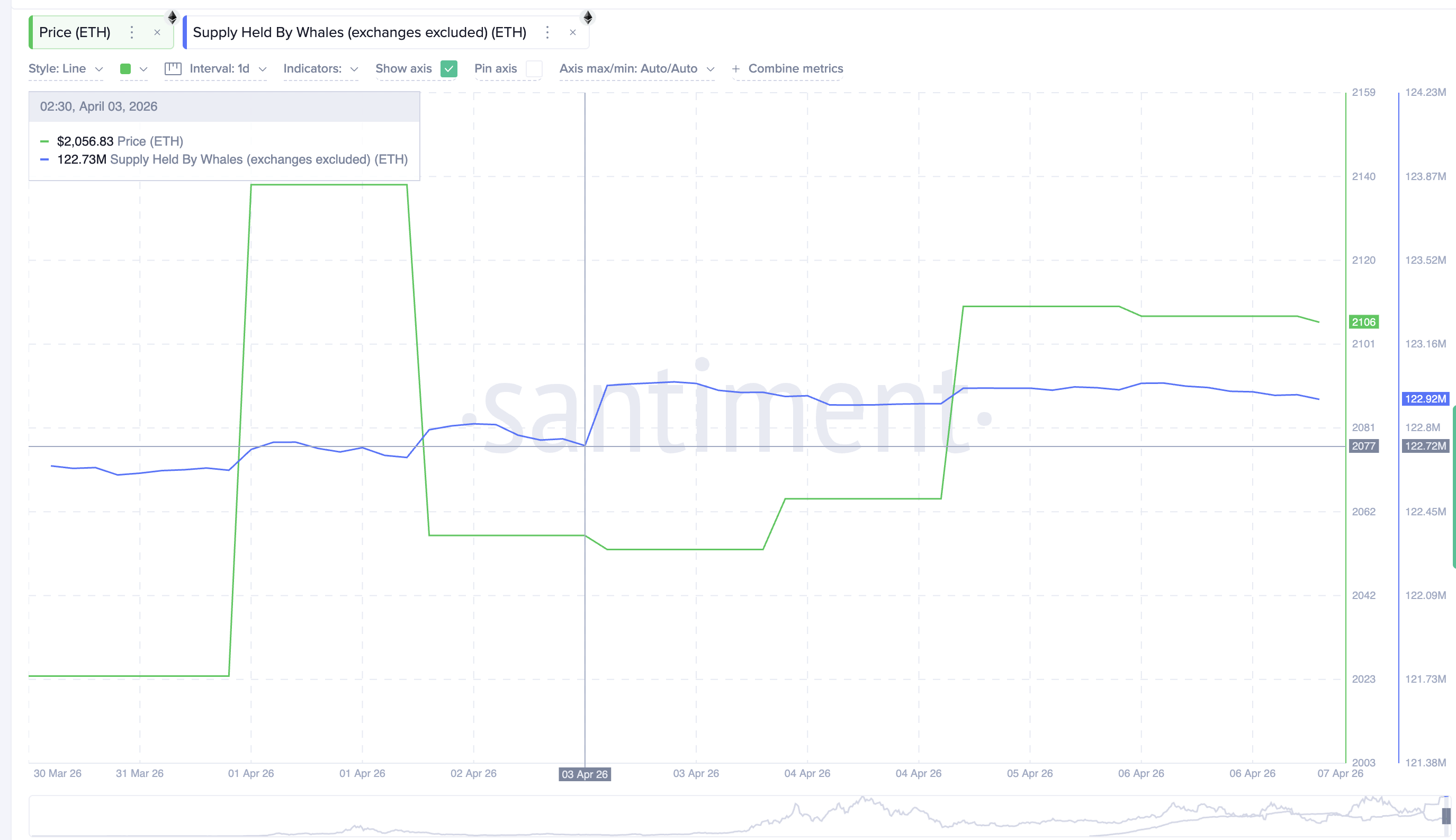

The fourth metric is whale behavior. Since April 3, whale wallets (excluding exchanges) have increased their holdings from 122.73 million to 122.92 million ETH. That addition of approximately 190,000 ETH or roughly $400 million represents steady accumulation rather than aggressive buying.

But the key point is that whales have not reduced their positions during the recent weakness. They are holding through the dip and adding incrementally, providing spot support that sits beneath the derivatives-driven short squeeze potential.

The technical setup provides the direction. The derivatives market provides the contrarian fuel. The whale accumulation provides the spot floor. All four metrics are aligning toward the same outcome, which makes the price levels the final arbiter.

Ethereum Price Levels That Decide If the Bounce Delivers

Fibonacci retracement levels drawn from the completed swing on the 12-hour chart frame every critical level.

The first hurdle is $2,116 at the 0.382 level. A 12-hour close above this would place Ethereum price back above the zone where the EMA crossover would likely confirm, adding momentum to the move. Above that, $2,172 is the most important resistance. This level has rejected price repeatedly since mid-March, and a clean break above it would represent the first meaningful shift in the short-term structure.

For the bounce to show genuine strength, Ethereum needs to reach $2,228 at the 0.618 level, a 5.77% move from current prices. A close above $2,228 would confirm that the four metrics translated into a real trend shift rather than another failed bounce.

On the downside, $2,086 is the level that keeps the RSI divergence intact. Below that, $2,047 at the 0.236 level becomes the immediate floor. A break below $2,047 would expose $1,935 and suggest that the four converging metrics were not enough to overcome the broader bearish pressure.

A 12-hour close above $2,172 would confirm the bounce thesis that all four metrics are building toward. Conversely, a failure to hold $2,086 would delay the setup and leave Ethereum price vulnerable to a retest of $1,935.
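The 0.236, 0.382 and 0.618 levels quoted in this section are consistent with a standard retracement grid, where each level equals the swing low plus the ratio times the swing range. The sketch below is a reconstruction under two assumptions: that $1,935 is the swing low, and that the swing high is roughly $2,409. The high is not stated in the article; it is back-solved here so that the grid reproduces the quoted levels.

```python
def retracement_levels(swing_low, swing_high, ratios=(0.236, 0.382, 0.618)):
    """Level = low + ratio * (high - low) for each retracement ratio."""
    span = swing_high - swing_low
    return {ratio: swing_low + ratio * span for ratio in ratios}


SWING_LOW = 1935.0   # assumption: the article's downside target treated as the swing low
SWING_HIGH = 2409.0  # assumption: inferred, not stated in the article

levels = retracement_levels(SWING_LOW, SWING_HIGH)
for ratio, level in levels.items():
    print(f"{ratio}: ${level:,.0f}")
```

If the chart's actual swing points differ, the same function applies with the corrected inputs.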

The post Ethereum Price Corrects but 4 Metrics Are Quietly Building a Bounce Case appeared first on BeInCrypto.


JPMorgan CEO says AI will transform banking faster than the internet era

Artificial intelligence is set to reshape banking, according to Jamie Dimon, who used his latest shareholder letter to outline how deeply the technology is expected to embed itself across JPMorgan Chase.

Summary

- AI is expected to reshape nearly every function at JPMorgan, with adoption likely to move faster than past technological shifts.

- The bank plans to increase technology spending to about $19.8 billion in 2026, with a significant share directed toward AI and supporting infrastructure.

“The importance of AI is real, and while I hesitate to use the word transformational—it is,” Dimon wrote, adding that adoption could move far faster than past innovations such as electricity or the internet.

Unlike those technologies, which took decades to scale, AI deployment “looks likely to accelerate over the next few years.”

Across JPMorgan, the integration effort is already underway, supported by rising technology investment. The bank expects to spend roughly $19.8 billion on technology in 2026, including artificial intelligence, data systems, and cloud infrastructure, according to a report by Business Insider. This figure builds on earlier commitments, with Dimon noting the firm had been allocating about $2 billion annually to AI initiatives as of late 2025.

“AI will affect virtually every function, application, and process in the company,” Dimon said, pointing to long-term gains in productivity.

He also tied the technology’s reach to broader economic and scientific progress, writing that it could help “cure some cancers, create new composites, and reduce accidental deaths,” alongside other improvements in quality of life.

“We will not put our heads in the sand,” Dimon wrote. “We will deploy AI, as we deploy all technology, to do a better job for our customers (and employees).”

Dimon also flagged threats tied to deepfakes, misinformation, and cybersecurity vulnerabilities, warning that missteps in handling the technology could carry lasting consequences.

“These risks are real, but they are manageable if companies, regulators, and governments prepare,” he wrote, cautioning against both overregulation after early failures and complacency in the face of emerging threats.

“The worst mistakes we can make are predictable: overreact at the first serious incident and regulate out important innovation, or underreact and fail to learn from what went wrong.”

He added that effective oversight would require preparation ahead of time and “discipline to fix what’s broken without destroying what works.”

AI could take away jobs

Besides the operational gains, AI’s effect on employment remains a central concern.

“AI will definitely eliminate some jobs, while it enhances others,” he wrote, adding that JPMorgan plans to redeploy affected workers where possible.

Demand for skilled labor, particularly in areas such as cybersecurity and AI development, remains strong, even as routine tasks become more automated.

Concerns about job displacement have grown across the industry. Anthropic CEO Dario Amodei warned earlier this year that advances in AI could remove up to half of entry-level professional roles within five years.

“I have engineers within Anthropic who say, ‘I don’t write any code anymore. I just let the model write the code, I edit it,’” he said at the time. “We might be six to 12 months away from when the model is doing most, maybe all, of what [software engineers] do end-to-end.”

Meanwhile, OpenAI recently called on governments to prepare for economic disruption tied to automation, urging new approaches to taxation, worker protections, and social support systems as AI adoption expands.


6 Crises Threaten to Cripple the Global Economy Amid Iran War

The US-Iran war has evolved beyond an energy crisis into a multi-front economic shock, with at least six simultaneous crises potentially threatening global financial stability.

Analyst Crypto Rover flagged the convergence of threats, arguing that the market is “heading towards an everything crisis.”

1. Food Crisis Brewing

The analyst noted that hedge funds have turned net bullish on wheat for the first time since June 2022. The Strait of Hormuz blockade has disrupted roughly 30% of the global seaborne fertilizer trade, sending urea prices up by about 50% since the war began.

With the planting season underway, AI analytics firm Helios warned that global food prices could rise 12% to 18% by the end of 2026.

2. Japanese Bond Market Stress

Meanwhile, Japanese bond yields continue hitting multi-decade highs, a pattern that the analyst says has historically preceded broader market crashes.

3. Private Credit Market Warning

Stress is also compounding in the private credit sector. BeInCrypto reported that many firms, including Blue Owl, BlackRock, and Apollo, have capped withdrawals amid rising redemption requests.

JPMorgan CEO Jamie Dimon has also warned that “losses on all leveraged lending in general will be higher than expected, relative to the environment.”

4. Subprime Loan Delinquencies Rising

Subprime loan delinquency rates have climbed to 10% of total outstanding debt, the highest level in 11 years, according to the Kobeissi Letter.

The rate has more than tripled since 2021, drawing comparisons to the Global Financial Crisis.

“The delinquency rate peaked at ~19% during the 2008 Financial Crisis, when subprime debt was $3.5 trillion and made up ~30% of total household debt. Today, subprime debt stands at $2.7 trillion, or ~15% of the total, still a significant proportion. An increasing number of Americans are falling behind on their debt,” the post read.

5. Growing Stagflation Signals

The surging oil prices have sparked concerns about inflation and even a potential recession. US consumer inflation expectations surged to 6.2% in March. This marked the highest reading since August 2025.

In addition, Saudi Arabia’s Aramco will raise its Arab Light crude price for May sales to Asia to a premium of $19.50 per barrel over benchmarks, according to Bloomberg.

“The expectations for inflation are going up globally. Today, Saudi Arabia sets record-high oil prices for Asia. This is a classic Stagflation case, and it ends up very badly for the economy,” Crypto Rover added.

6. Aluminum Crisis From Iran Strikes

Lastly, an industrial crisis is also shaping up. Iranian strikes on Gulf aluminum plants have pushed prices higher since the conflict began.

Emirates Global Aluminum (EGA) warned that full recovery at its Al Taweelah facility could take up to 12 months.

“Al Taweelah is one of the largest smelters in the world, producing 1.6 million tons of cast metal in 2025, or ~2.3% of global output. The Middle East now represents ~9% of global aluminum production, but the impact is amplified because constraints elsewhere have already eroded inventories, leaving the market with little buffer. Aluminum is used in everything from airplanes to food packaging and solar panels, meaning disruptions ripple far beyond the metals market,” Global Markets Investor reported.

Whether a ceasefire materializes may determine if these parallel crises remain contained or converge into something far larger.


The post 6 Crises Threaten to Cripple the Global Economy Amid Iran War appeared first on BeInCrypto.


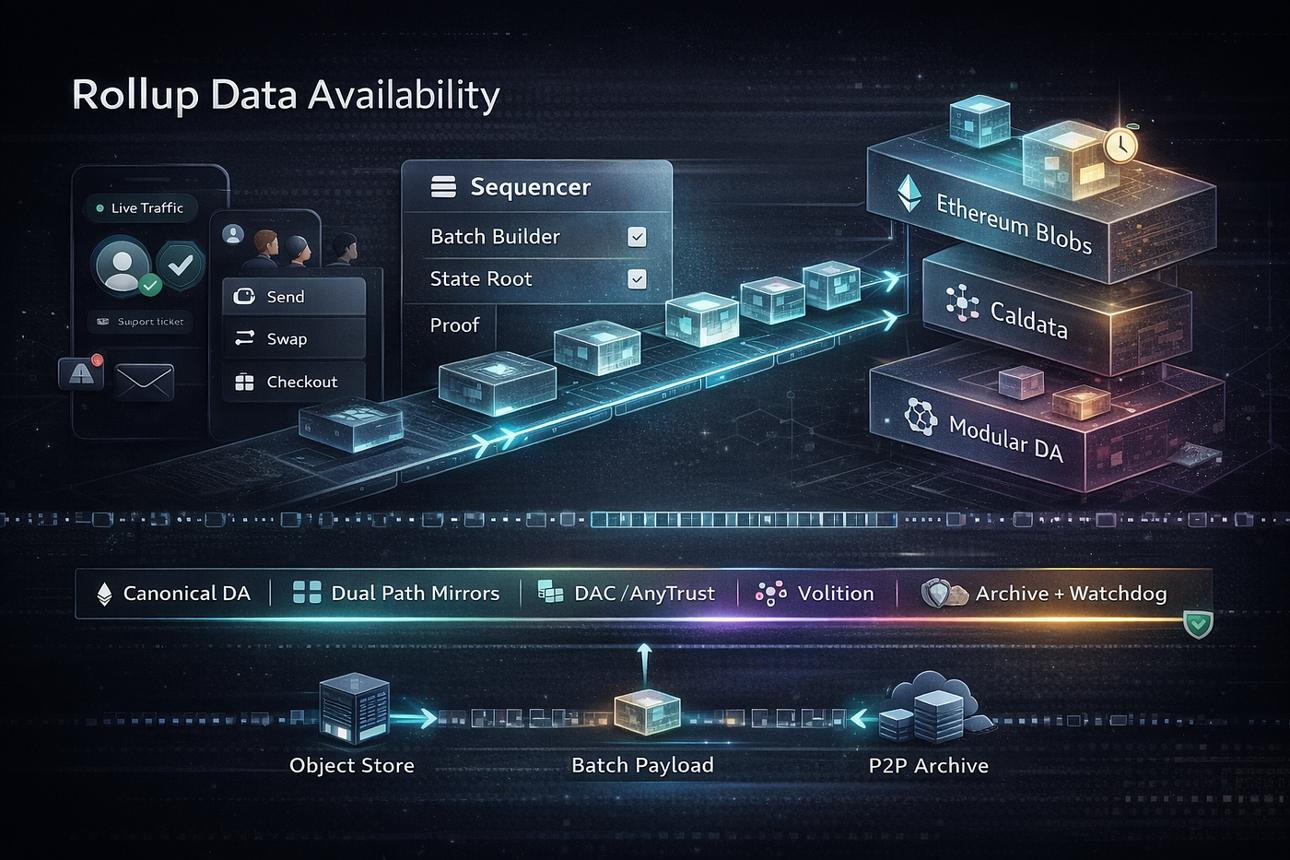

Modular Blockchains + AI: The Rise of the Plug-and-Play Economy

There was a time when blockchains acted like isolated kingdoms—each with its own rules, fees, and limitations. If you wanted to build or transact, you had to pick a side.

That era is quietly ending.

We’re entering a new phase where blockchains are no longer monolithic systems, but modular, interchangeable components—and AI is the operator pulling the strings.

From Monoliths to Modular Systems

Traditional chains like Ethereum historically tried to do everything:

- Execute transactions

- Store data

- Reach consensus

- Settle finality

All in one place.

That’s like asking one machine to be a factory, warehouse, and logistics network at the same time. It works… until it doesn’t scale.

Modular blockchain design flips this model:

- Execution layers handle smart contracts (e.g., rollups)

- Data availability layers store and verify data (e.g., Celestia)

- Settlement layers finalize transactions (often still Ethereum)

Each layer specializes. Each layer competes.

And most importantly, they can be swapped.

Enter AI: The Ultimate Chain Router

Now plug AI into this modular stack—and things get interesting.

Instead of you deciding which chain to use, AI agents will:

- Scan multiple chains in real time

- Compare gas fees, latency, and liquidity

- Route transactions to the most efficient path

Think of it like Google Maps—but for value transfer.

You don’t ask:

“Should I use Arbitrum or Optimism?”

Your AI agent already decided—based on cost, speed, and success probability.
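To make the routing idea concrete, here is a minimal sketch of how an agent might score candidate chains on cost, speed and success probability. Everything in it is hypothetical: the `Route` type, the penalty weights and the fee, latency and success figures are invented for illustration and do not describe any real network or product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    chain: str
    fee_usd: float       # quoted fee for the transaction
    latency_s: float     # expected time to confirmation
    success_prob: float  # estimated probability the transaction lands


def expected_cost(route, latency_penalty_usd_per_s=0.01, failure_cost_usd=1.0):
    """Fee plus a time penalty plus the expected cost of a failed attempt."""
    return (
        route.fee_usd
        + latency_penalty_usd_per_s * route.latency_s
        + (1 - route.success_prob) * failure_cost_usd
    )


def pick_route(routes):
    """Choose the route with the lowest expected cost."""
    return min(routes, key=expected_cost)


# Made-up quotes, for illustration only.
quotes = [
    Route("Arbitrum", fee_usd=0.12, latency_s=2.0, success_prob=0.995),
    Route("Optimism", fee_usd=0.10, latency_s=4.0, success_prob=0.990),
]
best = pick_route(quotes)
print(best.chain, round(expected_cost(best), 3))
```

In this toy example the cheaper headline fee does not win: once latency and failure risk are priced in, the other route has the lower expected cost. That is the sense in which the choice becomes an optimization problem and not a matter of user preference.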

Gas Fees Become a Solved Problem

For years, gas fees have been one of crypto’s biggest friction points.

But in a modular + AI world:

- Fees are no longer static

- Networks become interchangeable

- Optimization becomes automatic

Gas stops being a user problem

…and becomes an AI optimization problem

Bots will:

- Batch transactions

- Time execution windows

- Arbitrage fee differences across chains

The cheapest route wins—every time.

Blockchains Won’t Compete—They’ll Be Selected

Here’s the uncomfortable truth for chain maximalists:

Users won’t be loyal. AI won’t be emotional.

In a plug-and-play economy:

- Blockchains are just infrastructure

- Liquidity flows where conditions are best

- AI chooses the “best chain” per transaction

This flips the competitive landscape:

From:

- Ecosystems fighting for users

To:

- Protocols competing for AI preference

If your chain is slower or more expensive, AI simply routes around you.

The Plug-and-Play Economy

This is where everything converges.

We’re moving toward a world where:

- Developers assemble blockchain stacks like APIs

- AI agents orchestrate execution behind the scenes

- Users interact with simple interfaces, unaware of the complexity underneath

It’s not “multi-chain.”

It’s a chain-abstracted reality.

What This Means Going Forward

- User experience becomes invisible: you won’t think about chains, just outcomes

- AI agents become economic actors: they don’t just assist, they decide

- Efficiency becomes the ultimate moat: chains win by being optimal, not popular

- Liquidity becomes fluid and dynamic: capital moves at machine speed

Final Opinion

“Blockchains won’t compete. AI will choose between them.”

And when that happens, the winners won’t be the loudest ecosystems—

They’ll be the ones that machines quietly prefer.

Welcome to the plug-and-play economy.



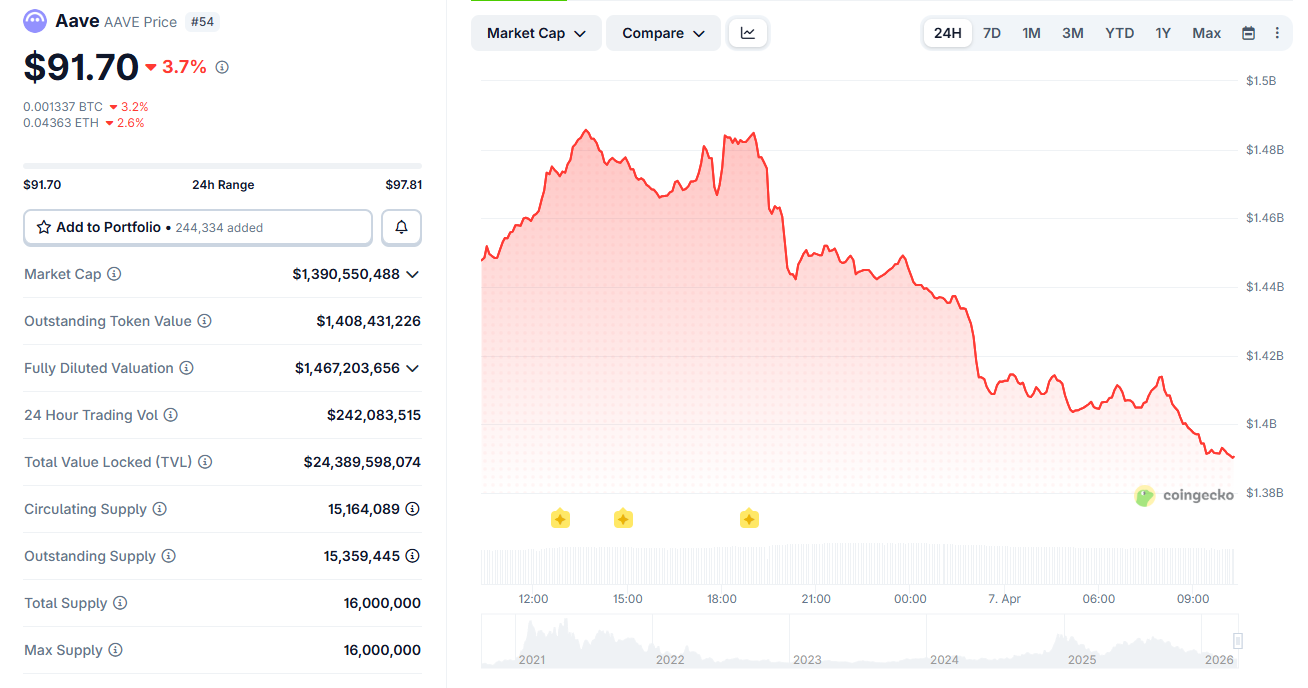

Chaos Labs Wanted to Replace Chainlink on Aave: Why Stani Kulechov Said No

Chaos Labs has terminated its risk management engagement with Aave (AAVE) after three years, citing unsustainable economics and disagreements over how V4 should be managed.

The departure marks the latest in a string of core contributor exits from Decentralized Finance’s (DeFi) largest lending protocol, which holds over $24 billion in total value locked.

Chaos Labs Walks Away From Aave After 3 Years of Risk Management

Chaos Labs founder Omer Goldberg outlined three factors behind the decision.

- Key V3 contributors had already departed, doubling the workload.

- Aave V4 introduced an entirely new architecture that expanded operational and legal burdens.

- Despite a proposed $5 million budget, the firm said it would still operate at a loss.

“The engagement no longer reflects how we believe risk should be managed,” Goldberg explained.

Goldberg compared Aave’s risk spending to banking benchmarks. He noted Aave generated $142 million in revenue in 2025.

The firm’s $3 million budget represented roughly 2% of that figure, well below the 6% to 10% banks typically allocate to compliance and risk.
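The comparison is straightforward arithmetic on the figures quoted above. The short sketch below reproduces it and shows what the 6% to 10% banking range would imply in dollar terms for the same revenue base; the variable names are illustrative, and applying bank ratios to a DeFi protocol is Goldberg's framing, not an established standard.

```python
revenue_usd = 142_000_000     # Aave 2025 revenue, as quoted by Goldberg
risk_budget_usd = 3_000_000   # Chaos Labs budget, as quoted

# Share of revenue spent on risk management.
budget_share_pct = risk_budget_usd / revenue_usd * 100

# Dollar budgets implied by the 6% to 10% range attributed to banks.
bank_low_usd = 0.06 * revenue_usd
bank_high_usd = 0.10 * revenue_usd

print(f"risk budget share: {budget_share_pct:.1f}% of revenue")
print(f"bank-style range: ${bank_low_usd:,.0f} to ${bank_high_usd:,.0f}")
```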

Aave Responds and LlamaRisk Steps In

Aave founder Stani Kulechov acknowledged the departure but pushed back on parts of the narrative.

He revealed Chaos Labs had sought to become the sole risk manager and replace Chainlink price oracles with its own product across new deployments.

Aave Labs rejected both proposals to avoid vendor lock-in.

DeFi risk management firm LlamaRisk, which works with Aave, among other major protocols like Curve and Ethena, pledged full operational continuity. The firm said it would present a detailed transition proposal within the week.

Meanwhile, analyst Duo Nine questioned Aave’s priorities, pointing out that V3 still holds over $24 billion while leadership focused discussions on $10 million in V4 deposits.

AAVE traded near $92 at the time of writing, down by almost 4% on the day. The token faces sustained selling pressure amid governance tensions and contributor departures, weighing on market sentiment.

The post Chaos Labs Wanted to Replace Chainlink on Aave: Why Stani Kulechov Said No appeared first on BeInCrypto.


Trump’s Iran Ultimatum Sends Bitcoin, Oil, and Stock Markets into Uncertainty

Key Highlights

- Bitcoin retreated to $68,589 after a brief rally evaporated following a diplomatic setback, remaining confined within the $65K–$73K trading corridor it has held for six weeks

- A fleeting Monday surge resulted in $196.7 million worth of forced short position closures before Iran’s rejection of the ceasefire terms

- President Trump issued a Tuesday midnight ultimatum to Iran, warning of infrastructure destruction should negotiations collapse

- Crude oil prices jumped beyond $112 per barrel amid heightening geopolitical tensions, while Brent approached $115.66

- Equity index futures declined Tuesday morning despite Monday’s modest gains across the S&P 500, Nasdaq, and Dow Jones Industrial Average

The leading cryptocurrency fell back to $68,589 during Tuesday’s Asian session after optimism surrounding a potential diplomatic breakthrough quickly dissipated. The reversal coincided with President Trump’s establishment of a Tuesday evening cutoff time for Iran to agree to peace terms or confront military action.

A wave of optimism swept through markets Monday following an Axios report detailing a prospective 45-day ceasefire arrangement. The news momentarily propelled Bitcoin beyond the $69,000 threshold and forced the closure of $196.7 million in bearish positions. However, the positive momentum evaporated within approximately half a day.

Tehran subsequently declined the ceasefire framework communicated through Pakistani intermediaries. Iran’s counteroffer included demands for a complete cessation of hostilities, sanctions relief, financial assistance for rebuilding efforts, and guaranteed maritime security through the Strait of Hormuz.

Ether experienced a 1% decline to $2,104. Solana registered a 2.7% decrease to $79.75. XRP fell 1.6% to $1.32. Dogecoin declined 2.2% to $0.09. BNB remained relatively stable at $598.

“This move looks less like a shift in fundamentals and more like positioning getting caught offsides,” said Diana Pires, chief business officer at sFOX. Bearish sentiment had been building before the ceasefire headlines forced traders to unwind short positions quickly.

Energy Markets Spike Following Presidential Warning

The President issued stark warnings to Tehran, threatening to eliminate “every bridge in Iran” and render each power facility inoperable should negotiations fail by the Tuesday midnight threshold. Paradoxically, he simultaneously characterized discussions as progressing favorably.

🚨 President Trump: “Tuesday will be power plant day”

US futures -0.54% $SPY $QQQ #Iran pic.twitter.com/ylWFfvAp6c

— Crypto Seth (@seth_fin) April 5, 2026

US crude prices escalated above $112 per barrel. Brent futures approached $115.66, representing a 2.9% session increase. Accelerating energy costs compound existing macroeconomic uncertainties.

Equity futures contracts weakened Tuesday morning in anticipation of the diplomatic deadline. S&P 500-linked contracts and Nasdaq 100 futures retreated 0.4% and 0.5% respectively. Dow futures registered approximately a 0.2% pullback.

Equity Markets and Economic Indicators Under Scrutiny

Notwithstanding Tuesday’s futures weakness, the previous session concluded on a positive trajectory. The S&P 500 climbed nearly 0.5%. The Nasdaq delivered comparable performance. The Dow Jones added over 160 points.

Maritime traffic through the strategic Strait of Hormuz demonstrated improvement this week, offering marginal relief. Chinese and Japanese ports welcomed the highest concentration of tanker arrivals, alleviating some supply chain concerns.

March services sector data for the United States revealed decelerating economic growth. Labor market indicators contracted at the most severe pace observed since 2023. Cost pressures intensified. The mixed signals provide limited guidance for Federal Reserve monetary policy deliberations.

Critical inflation metrics are scheduled for Friday release. Market participants are simultaneously monitoring preliminary February durable goods figures, expected Tuesday morning. Delta’s quarterly earnings announcement is anticipated Wednesday.

Trump posted on Truth Social urging Iran to reopen the Strait, and separately stated that “the American people would like to see us come home,” signaling possible pressure to wind down the conflict.

Bitcoin has held within the $65,000 to $73,000 range throughout the geopolitical tensions. Trump’s Tuesday midnight ultimatum will likely determine whether the upper or lower boundary comes under near-term pressure.

Crypto World

Milei call logs raise new questions over Libra token promotion

Recently uncovered phone records point to multiple calls between Argentine President Javier Milei and a Libra-associated entrepreneur.

Summary

- Call records show Javier Milei spoke seven times with a Libra-linked entrepreneur around the timing of his promotional post on X.

- The Libra token surged after the endorsement before losing over 96% of its value.

Phone logs reviewed by prosecutors, and reported by The New York Times, indicate that Milei exchanged seven calls with an entrepreneur linked to the Libra token on the same night he posted about the cryptocurrency on X.

The calls reportedly took place both before and after the post went live, though investigators have not disclosed what was discussed.

Those findings appear to challenge Milei’s earlier insistence that he had no ties to the initiative.

At the time, he framed his involvement as limited to amplifying what he described as a private venture that would support Argentina’s economy.

“A few hours ago, I posted a tweet, like so many infinite other times, supporting an alleged private venture with which I obviously have no connection whatsoever,” Milei said in a statement on X following the fallout.

“I wasn’t aware of the details of the project, and after becoming aware of them, I decided not to keep promoting it,” he said at the time.

The Libra token briefly surged after Milei’s endorsement in February 2025, as he presented it as a tool to fund small businesses and startups. Momentum quickly reversed, with the token losing more than 96% of its value from peak levels.

As it fell, it wiped out roughly $251 million in investor funds and triggered accusations that the episode resembled a rug pull.

After the fallout, Argentine lawyers filed fraud complaints against Milei, while some political figures called for impeachment proceedings. Under Argentine law, fraud convictions can carry prison terms ranging from one month to six years.

Federal prosecutors opened a formal investigation into the matter, naming Milei as a person of interest. The probe remains active, with authorities continuing to examine financial links, communications, and the role of individuals connected to the token’s launch.

Argentina’s Anti-Corruption Office concluded in June that Milei had not breached public ethics rules, determining that his post was made in a personal capacity rather than as head of state.

New details put Milei under scrutiny

More recent findings, however, have added another layer to the case.

A judicial update in March revealed that investigators had uncovered a draft document on the phone of crypto lobbyist Mauricio Novelli.

The note referenced a potential $5 million arrangement tied to the Libra promotion, drafted just three days before Milei’s post. The document did not specify who would receive the funds, leaving its purpose and beneficiaries unclear.

Crypto World

Solana Foundation unveils STRIDE framework to strengthen DeFi security

A new security framework has been unveiled by the Solana Foundation to audit Solana-based protocols and strengthen risk monitoring.

Summary

- Solana Foundation introduced the STRIDE security framework to assess and monitor risks across DeFi protocols.

- A new incident response network has been set up to coordinate real-time threat intelligence and response efforts.

- The move follows recent exploits, including a $280 million loss at Drift Protocol.

According to the official announcement, the initiative was developed with Asymmetric Research and is called STRIDE. It is designed to assess and track the security of projects on Solana. The program sets a standard process to identify risks, monitor vulnerabilities, and escalate threats across the ecosystem.

Under STRIDE, protocols are evaluated across eight areas, including program integrity, governance controls, oracle dependencies, infrastructure setup, and operational practices. It also covers supply chain exposure, incident response readiness, and forensic capabilities tied to log management. Each participating protocol undergoes an independent review, with results disclosed publicly.

“This gives users, investors, and the broader ecosystem real transparency into the security posture of the protocols they interact with,” Asymmetric Research said.

Alongside STRIDE, the foundation unveiled the Solana Incident Response Network (SIRN), a coalition of security firms designed to coordinate real-time responses to active threats.

“Members will share threat intelligence, coordinate responses to active incidents, and contribute to the ongoing evolution of the STRIDE framework,” the foundation said in its statement.

Just days earlier, Drift Protocol suffered a $280 million exploit, which investigators linked to social engineering tactics tied to North Korean-affiliated actors.

Data from DefiLlama shows that over $168 million was stolen from 34 DeFi protocols in Q1 2026. While that figure is sharply lower than the $1.58 billion recorded during the same period in 2025, the persistence of attacks continues to highlight structural risks in decentralized finance.

While not explicitly referenced in the announcement, recent cases point to increasingly complex tactics and the use of AI-driven tools to execute exploits. In January, Step Finance lost roughly $40 million after attackers used automated agents to execute rapid transfers, amplifying the scale of the breach, according to reporting from KuCoin.

Crypto World

Spot Bitcoin ETFs Post Strongest Day Since Late February as $471 Million Pours In

US-listed Bitcoin (BTC) exchange-traded funds (ETFs) recorded $471.32 million in net inflows on April 6, their strongest single day since February 25.

The surge lifted total cumulative net inflows to $56.43 billion. Not a single ETF posted negative flows on the day, with six registering zero and six finishing in positive territory.

Bitcoin ETF Inflows Clash With Weakening On-Chain Demand

According to data from SoSoValue, BlackRock’s iShares Bitcoin Trust (IBIT) led with $181.89 million, followed by Fidelity’s Wise Origin Bitcoin Fund (FBTC) at $147.32 million and Ark & 21Shares’ ARKB at $118.76 million. Together, the three funds accounted for roughly 95% of the inflows on April 6.

Grayscale’s mini BTC trust added $17.59 million, Bitwise’s BITB contributed $3.79 million, and VanEck’s HODL recorded $1.97 million.
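As a quick sanity check on the figures above, the per-fund inflows can be summed and the top-three share recomputed. This is a minimal sketch using only the numbers quoted in this article; the dictionary labels and the `inflow_summary` helper are illustrative names, not part of any SoSoValue data feed or API.

```python
# Net inflows on April 6, in millions of USD, as quoted in the article.
# The keys are illustrative labels, not official identifiers.
inflows = {
    "IBIT": 181.89,
    "FBTC": 147.32,
    "ARKB": 118.76,
    "Grayscale mini BTC trust": 17.59,
    "BITB": 3.79,
    "HODL": 1.97,
}


def inflow_summary(flows):
    """Return (total inflow, percentage share of the three largest funds)."""
    total = sum(flows.values())
    top_three = sum(sorted(flows.values(), reverse=True)[:3])
    return round(total, 2), round(100 * top_three / total, 1)


total, top_three_share = inflow_summary(inflows)
print(f"Total: ${total}M, top-three share: {top_three_share}%")
```

The six reported figures add up to the $471.32 million headline total, and the three largest funds account for roughly 95% of it, consistent with the share stated above.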

The strong ETF day arrived against a deteriorating on-chain backdrop. CryptoQuant data shows 30-day apparent demand fell to approximately -87,600 BTC by April 5.

“The situation continues to deteriorate, even though Bitcoin is still managing to remain within its current range. As long as this dynamic does not improve, Bitcoin will likely struggle to break out of this rather negative environment,” analyst Darkfost noted.

Wallets holding 1,000–10,000 BTC have flipped to net distribution, with 1-year holdings swinging from roughly +200,000 BTC at the 2024 peak to about -188,000 BTC. The shift represents one of the most aggressive distribution cycles on record, according to the analytics firm.

Meanwhile, spot Ethereum (ETH) ETFs also saw renewed interest. The funds attracted $120.24 million in net inflows on April 6, the highest single-day total since March 17’s $138.25 million. The inflow snapped a short red stretch in which ETH products posted outflows in the two previous trading sessions.

The post Spot Bitcoin ETFs Post Strongest Day Since Late February as $471 Million Pours In appeared first on BeInCrypto.

Crypto World

Cardano (ADA) Price Analysis: Whale Activity Surges as Token Struggles Below $0.25

Key Takeaways

- Cardano is struggling to maintain levels above $0.25 following an unsuccessful rebound effort, with selling pressure persisting throughout the week.

- Derivatives market open interest contracted by approximately 8% over a 24-hour period, accompanied by $701,830 in long position liquidations.

- The open interest-weighted funding rate shifted into negative territory at -0.0132%, indicating short position preference among traders.

- Major holder wallets containing over 10 million ADA tokens climbed to 424, marking a four-month peak and representing a 5% increase over nine weeks.

- Critical price support level identified at $0.2328, while the 50-day exponential moving average presents resistance at $0.2681.

Cardano (ADA) faces continued selling pressure this week, remaining stuck below the $0.25 threshold amid widespread cryptocurrency market turbulence. An early-week attempt at recovery quickly faded, pushing the token back into negative territory.

Early in the week, ADA pushed toward $0.2546, a 5.42% intraday gain, while trading volume more than doubled to $515.84 million. The move proved short-lived.

Market observer Alpha Crypto Signal identified a falling wedge breakout on the 4-hour timeframe, with ADA successfully reclaiming its descending resistance line and near-term moving averages. According to the analyst, sustained momentum could drive prices toward the $0.27–$0.29 range, though inability to defend the breakout zone risks invalidating the bullish pattern.

Futures Market Data Reflects Near-Term Pessimism

Derivatives metrics from CoinGlass reveal that ADA futures open interest declined by roughly 8% to reach $401.35 million during the past day. Combined liquidations totaled $1.10 million, with long traders absorbing the majority at $701,830.

The funding rate metric weighted by open interest has fallen to -0.0132%, indicating that market participants are willing to pay for maintaining short exposure. This dynamic reflects prevailing bearish sentiment among derivatives traders.

Market analyst UniChartz emphasized the $0.23–$0.24 price range as a critical demand zone, observing that this region has previously catalyzed significant rallies. Should buyers successfully protect this floor, the initial resistance target stands at $0.45.

Major Holders Increase Positions to Multi-Month Peak

Blockchain analytics from Santiment demonstrate that addresses holding more than 10 million Cardano tokens have expanded to 424, representing the highest count in four months. This reflects growth exceeding 5% throughout the previous nine-week period.

Such accumulation activity during price weakness typically suggests institutional or high-net-worth investors anticipate future appreciation over extended timeframes.

The Relative Strength Index currently hovers near 44, while the Moving Average Convergence Divergence indicator has edged into positive territory close to the baseline. These technical indicators point toward tentative stabilization without confirming a definitive trend change.

Near-term price support rests at $0.2328, corresponding to the March 29 bottom. A violation of this floor could expose the February 5 low at $0.2205. Conversely, reclaiming the 50-day exponential moving average positioned at $0.2681 would open the path toward $0.2992.
