Crypto World

Revolutionising AI Application Development with Language Models

by Gonzalo Wangüemert Villalba

4 September 2025

Introduction

The open-source AI ecosystem reached a turning point in August 2025 when Elon Musk’s company xAI released Grok 2.5 and, almost simultaneously, OpenAI launched two new models under the names GPT-OSS-20B and GPT-OSS-120B. While both announcements signalled a commitment to transparency and broader accessibility, the details of these releases highlight strikingly different approaches to what open AI should mean. This article explores the architecture, accessibility, performance benchmarks, regulatory compliance and wider industry impact of these three models. The aim is to clarify whether xAI’s Grok or OpenAI’s GPT-OSS family currently offers more value for developers, businesses and regulators in Europe and beyond.

What Was Released

Grok 2.5, described by xAI as a 270 billion parameter model, was made available through the release of its weights and tokenizer. These files amount to roughly half a terabyte and were published on Hugging Face. Yet the release lacks critical elements such as training code, detailed architectural notes or dataset documentation. Most importantly, Grok 2.5 comes with a bespoke licence drafted by xAI that has not yet been clearly scrutinised by legal or open-source communities. Analysts have noted that its terms could be revocable or carry restrictions that prevent the model from being considered genuinely open source. Elon Musk promised on social media that Grok 3 would be published in the same manner within six months, suggesting this is just the beginning of a broader strategy by xAI to join the open-source race.

By contrast, OpenAI unveiled GPT-OSS-20B and GPT-OSS-120B on 5 August 2025 with a far more comprehensive package. The models were released under the widely recognised Apache 2.0 licence, which is permissive, business-friendly and in line with requirements of the European Union’s AI Act. OpenAI shared not only the weights but also architectural details, training methodology, evaluation benchmarks, code samples and usage guidelines. This represents one of the most transparent releases ever made by the company, which historically faced criticism for keeping its frontier models proprietary.

Architectural Approach

The architectural differences between these models reveal much about their intended use. Grok 2.5 is a dense transformer with all 270 billion parameters engaged in computation. Without detailed documentation, it is unclear how efficiently it handles scaling or what kinds of attention mechanisms are employed. Meanwhile, GPT-OSS-20B and GPT-OSS-120B make use of a Mixture-of-Experts design. In practice this means that although the models contain 21 and 117 billion parameters respectively, only a small subset of those parameters is activated for each token. GPT-OSS-20B activates 3.6 billion and GPT-OSS-120B activates just over 5 billion. This architecture leads to far greater efficiency, allowing the smaller of the two to run comfortably on devices with only 16 gigabytes of memory, including Snapdragon laptops and consumer-grade graphics cards. The larger model requires 80 gigabytes of GPU memory, placing it in the range of high-end professional hardware, yet still far more efficient than a dense model of similar size. This is a deliberate choice by OpenAI to ensure that open-weight models are not only theoretically available but practically usable.

Documentation and Transparency

The difference in documentation further separates the two releases. OpenAI’s GPT-OSS models include explanations of their sparse attention layers, grouped multi-query attention, and support for extended context lengths up to 128,000 tokens. These details allow independent researchers to understand, test and even modify the architecture. By contrast, Grok 2.5 offers little more than its weight files and tokenizer, making it effectively a black box. From a developer’s perspective this is crucial: having access to weights without knowing how the system was trained or structured limits reproducibility and hinders adaptation. Transparency also affects regulatory compliance and community trust, making OpenAI’s approach significantly more robust.

Performance and Benchmarks

Benchmark performance is another area where GPT-OSS models shine. According to OpenAI’s technical documentation and independent testing, GPT-OSS-120B rivals or exceeds the reasoning ability of the company’s o4-mini model, while GPT-OSS-20B achieves parity with the o3-mini. On benchmarks such as MMLU, Codeforces, HealthBench and the AIME mathematics tests from 2024 and 2025, the models perform strongly, especially considering their efficient architecture. GPT-OSS-20B in particular impressed researchers by outperforming much larger competitors such as Qwen3-32B on certain coding and reasoning tasks, despite using less energy and memory. Academic studies published on arXiv in August 2025 highlighted that the model achieved nearly 32 per cent higher throughput and more than 25 per cent lower energy consumption per 1,000 tokens than rival models. Interestingly, one paper noted that GPT-OSS-20B outperformed its larger sibling GPT-OSS-120B on some human evaluation benchmarks, suggesting that sparse scaling does not always correlate linearly with capability.

In terms of safety and robustness, the GPT-OSS models again appear carefully designed. They perform comparably to o4-mini on jailbreak resistance and bias testing, though they display higher hallucination rates in simple factual question-answering tasks. This transparency allows researchers to target weaknesses directly, which is part of the value of an open-weight release. Grok 2.5, however, lacks publicly available benchmarks altogether. Without independent testing, its actual capabilities remain uncertain, leaving the community with only Musk’s promotional statements to go by.
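To make the Mixture-of-Experts point from the Architectural Approach section concrete, here is a minimal sketch in Python. It shows generic top-k expert routing and the share of parameters active per token, using the parameter counts quoted in this article. The router logits, the value of k and the 5.1 billion stand-in for "just over 5 billion" are illustrative assumptions, not details taken from OpenAI's documentation.

```python
import math


def top_k_route(router_logits, k):
    """Pick the k highest-scoring experts and softmax-normalise their weights.

    Generic top-k gating: only the selected experts run for this token,
    which is why most of the model's parameters stay idle.
    """
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    peak = max(router_logits[i] for i in chosen)
    exps = [math.exp(router_logits[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]


def active_fraction(total_params_b, active_params_b):
    """Share of parameters used per token (both arguments in billions)."""
    return active_params_b / total_params_b


# Hypothetical router scores for four experts; two experts are kept.
routes = top_k_route([0.1, 2.0, -1.0, 1.5], k=2)
print("experts used:", [index for index, _ in routes])

# Parameter counts as quoted in the article (5.1 is an assumed stand-in).
for name, total_b, active_b in [("GPT-OSS-20B", 21.0, 3.6),
                                ("GPT-OSS-120B", 117.0, 5.1)]:
    print(f"{name}: {active_fraction(total_b, active_b):.1%} of parameters active per token")
```

On these figures, roughly a sixth of the smaller model and under a twentieth of the larger one is active for any given token, which is what lets them run on far less memory than a dense model would need.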
Regulatory Compliance

Regulatory compliance is a particularly important issue for organisations in Europe under the EU AI Act. The legislation requires general-purpose AI models to be released under genuinely open licences, accompanied by detailed technical documentation, information on training and testing datasets, and usage reporting. For models that exceed systemic risk thresholds, such as those trained with more than 10²⁵ floating point operations, further obligations apply, including risk assessment and registration. Grok 2.5, by virtue of its vague licence and lack of documentation, appears non-compliant on several counts. Unless xAI publishes more details or adapts its licensing, European businesses may find it difficult or legally risky to adopt Grok in their workflows. GPT-OSS-20B and 120B, by contrast, seem carefully aligned with the requirements of the AI Act. Their Apache 2.0 licence is recognised under the Act, their documentation meets transparency demands, and OpenAI has signalled a commitment to provide usage reporting. From a regulatory standpoint, OpenAI’s releases are safer bets for integration within the UK and EU.

Community Reception

The reception from the AI community reflects these differences. Developers welcomed OpenAI’s move as a long-awaited recognition of the open-source movement, especially after years of criticism that the company had become overly protective of its models. Some users, however, expressed frustration with the mixture-of-experts design, reporting that it can lead to repetitive tool-calling behaviours and less engaging conversational output. Yet most acknowledged that for tasks requiring structured reasoning, coding or mathematical precision, the GPT-OSS family performs exceptionally well. Grok 2.5’s release was greeted with more scepticism. While some praised Musk for at least releasing weights, others argued that without a proper licence or documentation it was little more than a symbolic gesture designed to signal openness while avoiding true transparency.

Strategic Implications

The strategic motivations behind these releases are also worth considering. For xAI, releasing Grok 2.5 may be less about immediate usability and more about positioning in the competitive AI landscape, particularly against Chinese developers and American rivals. For OpenAI, the move appears to be a balancing act: maintaining leadership in proprietary frontier models like GPT-5 while offering credible open-weight alternatives that address regulatory scrutiny and community pressure. This dual strategy could prove effective, enabling the company to dominate both commercial and open-source markets.

Conclusion

Ultimately, the comparison between Grok 2.5 and GPT-OSS-20B and 120B is not merely technical but philosophical. xAI’s release demonstrates a willingness to participate in the open-source movement but stops short of true openness. OpenAI, on the other hand, has set a new standard for what open-weight releases should look like in 2025: efficient architectures, extensive documentation, clear licensing, strong benchmark performance and regulatory compliance. For European businesses and policymakers evaluating open-source AI options, GPT-OSS currently represents the more practical, compliant and capable choice. In conclusion, while both xAI and OpenAI contributed to the momentum of open-source AI in August 2025, the details reveal that not all openness is created equal. Grok 2.5 stands as an important symbolic release, but OpenAI’s GPT-OSS family sets the benchmark for practical usability, compliance with the EU AI Act, and genuine transparency.

Why Everyone’s Wrong About the AI Services Market

The opportunity isn’t that AI is new. It’s that most businesses still don’t understand it.

Everyone says the same thing: Build an AI agency. The market is wide open. They’re half right. The market is open, but not for the reasons people think.

The real opportunity isn’t that AI is new. It’s the intelligence gap—the distance between what’s possible and what businesses actually understand. And almost nobody is positioning themselves to profit from it.

The Numbers Are Misleading

1.3 billion people use free ChatGPT. Sounds massive until you realize 15-25 million pay for any AI tool, and only 2.5 million actively use AI for coding. These numbers collapse when you compare them to 400+ million businesses worldwide.

Most businesses haven’t touched AI in any meaningful way. They heard the hype. Maybe they tried ChatGPT once to write an email. Then they forgot about it. The technology exists in their world as an abstract concept, not as a solution to their specific problems.

Here’s Where Most People Go Wrong

They chase tech companies. Startup founders. People who already understand AI. Why? Psychologically, it’s comfortable. These prospects get it. Conversations move faster. You don’t have to explain automation basics.

But strategically? It’s the worst market you could choose. You’re competing against thousands of other people with the same idea. Pricing is brutal. Margins evaporate. These companies shop aggressively because they understand your value.

The Smart Move: Chase “Boring” Industries

Dentists. Contractors. Accountants. Real estate brokers. Insurance agents. These industries have three things in common:

- They make real money. An HVAC contractor who closes one extra job monthly from faster lead response doesn’t blink at a $500 retainer. That’s a 10-20x ROI.

- Zero AI competition. Nobody is systematically selling automation to dental offices. The market is massive and completely unsaturated.

- They refer constantly. These industries are tight-knit networks. One successful implementation leads to introductions to three more. Build once, sell six times.

The Framework That Changes Everything

Everyone knows they should chase boring industries. Almost nobody does. The gap between knowing and executing is where the real competitive advantage lives.

Here’s How to Position Correctly

- Identify their specific expensive problem. Not that they need AI. Something concrete. Leads going cold. Proposals taking three hours. Data scattered across systems.

- Quantify the cost. You’re losing 15 leads monthly because nobody answers the phone. That’s $75,000 in lost annual revenue.

- Show them a solution that costs a small fraction of that impact. A $400/month system that prevents 10% of those losses recovers about $625 a month, more than it costs.
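The three steps above boil down to a few lines of arithmetic, sketched here in Python. The function name and its outputs are hypothetical helpers for illustration; the inputs are the example figures from this list. On those figures, recovering 10% of a $75,000 annual loss is worth about $625 a month against a $400 fee, and the fee comes to about 6.4% of the quantified loss, so it is worth running the numbers with a prospect's real data before quoting a payback period.

```python
def lead_loss_roi(annual_loss, share_recovered, monthly_fee):
    """Compare what a system recovers each month with what it costs.

    annual_loss      yearly revenue lost to the problem, in dollars
    share_recovered  fraction of that loss the system prevents (0 to 1)
    monthly_fee      price of the system per month, in dollars
    """
    recovered_per_month = annual_loss * share_recovered / 12
    return {
        "recovered_per_month": recovered_per_month,
        "net_per_month": recovered_per_month - monthly_fee,
        "fee_share_of_loss": monthly_fee * 12 / annual_loss,
        "return_multiple": recovered_per_month / monthly_fee,
    }


# Example figures from the list above.
result = lead_loss_roi(annual_loss=75_000, share_recovered=0.10, monthly_fee=400)
for label, value in result.items():
    print(f"{label}: {value:,.3f}")
```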

Suddenly you’re not expensive. You’re obviously cheap. This is how you close deals.

What This Means for You

Stop chasing prestige prospects. Stop trying to impress people who understand AI. Pick one unsexy industry—dentists, contractors, accountants. Go deep on understanding their specific problems. Learn their language. Build solutions to their expensive bottlenecks.

These business owners are hungry. They see the opportunity but don’t know how to implement it. They have money and they’re willing to spend it. And they’re desperately underserved by specialists who actually understand their business.

That’s the intelligence gap. And if you’re the one filling it, you win.

Polymarket to rebuild engine, launch native dollar stablecoin

Polymarket will rebuild its core engine, introduce a hybrid CLOB, and launch Polymarket USD, a USDC‑backed stablecoin on Polygon aimed at cheaper, more institution‑friendly trading.

Summary

- Prediction market Polymarket plans its “largest infrastructure upgrade” in the next 2–3 weeks, overhauling its matching engine and smart contracts.

- The upgrade will introduce a new hybrid CLOB model and a native stablecoin, Polymarket USD, pegged 1:1 to USDC on Polygon.

- The changes aim to cut gas costs, boost efficiency, and make the platform friendlier to institutions via EIP‑1271 and multi‑sig support.

On‑chain prediction market Polymarket will roll out what it calls “the largest infrastructure upgrade since its launch” in the coming 2–3 weeks, rebuilding its core trading engine and debuting a native dollar stablecoin, Polymarket USD, according to plans shared with The Block. The company said the overhaul will “completely reconstruct” its matching engine via a new CTF Exchange V2 smart‑contract system, while introducing a native stablecoin pegged 1:1 to USDC to replace the current bridged USDC.e on Polygon. Existing order books will be cleared during the migration, with Polymarket promising to give users at least one week’s notice before maintenance begins.

At the heart of the upgrade is a redesigned Central Limit Order Book that uses a hybrid model of off‑chain order matching combined with on‑chain, non‑custodial settlement. In technical documentation for its CTF Exchange, Polymarket describes the architecture as a “hybrid‑decentralized model” where an operator handles off‑chain matching while settlement remains on‑chain, a setup it says optimizes “performance and security” for high‑volume event markets. The Block reports that CTF Exchange V2 will introduce new matching logic and order‑data structures intended to improve matching efficiency and reduce gas costs for traders.
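For readers unfamiliar with central limit order books, the off-chain half of such a hybrid model can be sketched in Python as a price-time priority matcher. This is a generic illustration, not Polymarket's engine: the class names, the use of integer cents for prices, and the shape of the fill records are all assumptions. In a hybrid design, the list of fills returned here is the kind of data an operator would then submit for on-chain settlement.

```python
import heapq
import itertools
from dataclasses import dataclass, field

_arrival = itertools.count()  # tie-breaker so earlier orders match first


@dataclass(order=True)
class RestingOrder:
    sort_key: tuple                       # (price priority, arrival number)
    trader: str = field(compare=False)
    price: int = field(compare=False)     # price in cents (assumed unit)
    size: int = field(compare=False)


class OrderBook:
    """Minimal price-time priority limit order book (illustrative only)."""

    def __init__(self):
        self.bids = []  # best (highest) bid first, via negated price
        self.asks = []  # best (lowest) ask first

    def submit(self, trader, side, price, size):
        """Match an incoming limit order, rest any remainder, return fills."""
        own, opposite = (self.bids, self.asks) if side == "buy" else (self.asks, self.bids)
        fills = []
        while size > 0 and opposite:
            best = opposite[0]
            crosses = price >= best.price if side == "buy" else price <= best.price
            if not crosses:
                break
            traded = min(size, best.size)
            # Fill record: (maker, taker, execution price, size).
            fills.append((best.trader, trader, best.price, traded))
            size -= traded
            best.size -= traded
            if best.size == 0:
                heapq.heappop(opposite)
        if size > 0:
            key = (-price if side == "buy" else price, next(_arrival))
            heapq.heappush(own, RestingOrder(key, trader, price, size))
        return fills


book = OrderBook()
book.submit("alice", "sell", 55, 100)
book.submit("bob", "sell", 54, 50)
print(book.submit("carol", "buy", 55, 120))
```

Matching off-chain keeps order placement and cancellation free of gas costs, while settling the resulting fills on-chain means the operator never takes custody of user funds.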

Polymarket has grown into one of the largest fully on‑chain prediction venues, recently drawing hundreds of millions of dollars in liquidity and a $600 million strategic investment from Intercontinental Exchange (ICE) as part of a broader bet on decentralized betting markets. ICE said its combined $1.6 billion of direct and secondary investment is not expected to be material to its financial results but positions the exchange operator as a key backer in what it calls a “David and Goliath battle” to bring prediction markets into the financial mainstream.

On the asset side, Polymarket USD formalizes a shift already underway in partnership with Circle to move from bridged USDC.e to native USDC on Polygon for all trading, order placement, and settlement. Circle has said native USDC, redeemable 1:1 for US dollars through its regulated entities, offers a “capital‑efficient” and more secure alternative to bridged tokens by eliminating cross‑chain bridge risk and tying collateral directly to its reserves. In line with that, Polymarket USD will be pegged 1:1 to USDC and used as the core collateral across the platform, with deposits from networks such as Ethereum, Solana, Arbitrum, and Base automatically converted into the new stablecoin on Polygon.

Polymarket will also add support for the EIP‑1271 (ERC‑1271) standard, allowing smart‑contract wallets such as Safe to validate signatures and trade directly, a move aimed at “expanding use cases for institutions and advanced users.” EIP‑1271 lets contracts define an isValidSignature method with arbitrary logic, making it easier for DAOs, funds, and multi‑sig setups to participate in non‑custodial markets without relying on externally owned accounts. The upgrade comes as competition in prediction markets intensifies, with Polymarket using performance, native dollar liquidity, and institutional‑grade wallet support to defend its lead in what it brands “The World’s Largest Prediction Market.”
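The idea behind EIP-1271 can be sketched in Python as a toy simulation. The standard specifies that a contract's isValidSignature(bytes32, bytes) returns the four-byte value 0x1626ba7e when it accepts a signature; everything else below is an assumption made for illustration. In particular, the 2-of-3 wallet class is hypothetical, HMAC tags stand in for real ECDSA signatures, and the failure value is an arbitrary choice, since any non-magic return simply means "invalid".

```python
import hashlib
import hmac

MAGIC_VALUE = bytes.fromhex("1626ba7e")  # success value defined by EIP-1271
INVALID = bytes.fromhex("ffffffff")      # illustrative failure value (assumption)


def toy_sign(owner_key: bytes, digest: bytes) -> bytes:
    """Stand-in for an owner's signature; real wallets use ECDSA, not HMAC."""
    return hmac.new(owner_key, digest, hashlib.sha256).digest()


class ToyMultisigWallet:
    """Simulates a contract wallet that applies its own signature logic."""

    def __init__(self, owner_keys: dict[str, bytes], threshold: int):
        self.owner_keys = owner_keys
        self.threshold = threshold

    def is_valid_signature(self, digest: bytes, approvals: dict[str, bytes]) -> bytes:
        """Return the magic value if enough distinct owners approved the digest."""
        valid = sum(
            1
            for owner, tag in approvals.items()
            if owner in self.owner_keys
            and hmac.compare_digest(tag, toy_sign(self.owner_keys[owner], digest))
        )
        return MAGIC_VALUE if valid >= self.threshold else INVALID


keys = {"ops": b"key-ops", "risk": b"key-risk", "cfo": b"key-cfo"}
wallet = ToyMultisigWallet(keys, threshold=2)
order_digest = hashlib.sha256(b"hypothetical order payload").digest()

two_of_three = {name: toy_sign(keys[name], order_digest) for name in ("ops", "risk")}
print(wallet.is_valid_signature(order_digest, two_of_three).hex())
```

Because the validation logic lives in the wallet itself, an exchange only needs to call the one method and compare the result with the magic value, whatever approval policy the fund or DAO has chosen.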

Bitcoin Profit Takers Keep BTC Price Action Away From $70,000 Reclaim

Bitcoin found familiar resistance as it crossed the $70,000 mark to hit new April highs, with analysis blaming “profit-taking pressure.”

Bitcoin (BTC) coiled below $70,000 at Monday’s Wall Street open as analysis blamed profit taking for price inertia.

Key points:

- Bitcoin and stocks wobble as the US trading session begins amid nerves over the US-Iran war outcome.

- Profit-taking activity is keeping BTC price action away from a $70,000 reclaim, says research.

- A trader says $71,000 will act as fuel for a surge $10,000 higher.

BTC price meets “profit-taking pressure”

Data from TradingView showed BTC price action consolidating after hitting new April highs of $70,275 on Bitstamp.

Market nerves over the US-Iran war resulted in uncertain trading, with US stocks treading water at the open.

Speaking to the media at a military event, US President Donald Trump reiterated earlier comments that Iran would “have no bridges” and “no power plants” unless a deal was reached.

“I won’t go further because there are other things that are worse than those two,” he told reporters.

Trump previously stated that the deadline for a deal was 8pm Eastern time on Tuesday.

With price pinned below the $70,000 mark, onchain analytics platform Glassnode pointed to internal market forces as the reason for the lack of continuation higher.

“As price probed the $70K region, Realized Profit/hour spiked above $20M, signalling a local exhaustion,” it noted in a post on X.

“A pattern consistent since February 2026: Every approach to the $70k–$80K band meets thin liquidity and profit-taking pressure, capping the bounce.”

Pseudonymous trader LP added that Mondays and Thursdays had seen the upper and lower end of the week’s trading range throughout 2026.

“Price pushed higher into Monday, increasing the probability of this pivot forming a weekly high. If the correlation continues to play out, this would suggest Thursday forms the low of the week,” they told X followers.

“Watch price action closely today and tomorrow, it will confirm whether this intra-week pivot resolved as a high or a low.”

Bitcoin trader eyes $71,000 springboard

Continuing, crypto trader Michaël Van de Poppe said the line in the sand for bears lay slightly higher than Monday’s current peak.

“Pretty strong momentum on the markets of Bitcoin,” he wrote on X about the initial move to $70,000.

“Volatility picking up, and I think it’s fireworks during this week as we might be getting to the end stage of the entire situation in the Strait of Hormuz. If Bitcoin breaks $71K, then markets are in for a test at $80K.”

Van de Poppe further cautioned against following the blanket market consensus that new lows are coming next.

“Given that all the markets are so oversold at this point, all on-chain indicators are looking overextended and are at similar levels to the bottom areas in 2018, 2020 and 2022, I wouldn’t be surprised that we’re getting a relief run that’s going to turn the sentiment quickly,” he concluded.

This article is produced in accordance with Cointelegraph’s Editorial Policy and is intended for informational purposes only. It does not constitute investment advice or recommendations. All investments and trades carry risk; readers are encouraged to conduct independent research before making any decisions. Cointelegraph makes no guarantees regarding the accuracy or completeness of the information presented, including forward-looking statements, and will not be liable for any loss or damage arising from reliance on this content.

BTC USD Price Finally Moving Up: Saylor Strategy Bought More Before The Rally

The BTC USD price is moving again. At $69,000, it is up 4% in a day, bouncing hard off the long-term trendline that has defined every major cycle low since 2017. Strategy’s latest filing reveals that the firm was loading up just before this leg higher, spending $329.9 million in a single week at prices below current levels.

Michael Saylor’s Strategy added 4,871 BTC to its treasury between late March and early April at an average cost of $67,718 per coin, bringing total holdings to 766,970 BTC acquired for $58.02 billion. The purchase was funded primarily through $227.3 million in STRC preferred stock sales, supplemented by $72 million in common stock proceeds.

At current prices, the full position sits roughly 8% underwater, about $5 billion in unrealized losses, yet the buying continued without hesitation. This conviction, right at a trendline support test, tends to matter.
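The figures in the two paragraphs above can be checked with a short Python sketch. The function name is a hypothetical helper; the inputs are the holdings, cost and $69,000 spot price quoted in this article. On those inputs the position's average cost is about $75,648 per coin, the unrealized loss is about $5.1 billion (roughly 8.8%), and the latest purchase of 4,871 BTC at $67,718 works out to about $329.9 million.

```python
def position_summary(coins, total_cost, spot_price):
    """Return average cost per coin, unrealized P&L and P&L as a share of cost."""
    average_cost = total_cost / coins
    market_value = coins * spot_price
    unrealized = market_value - total_cost
    return average_cost, unrealized, unrealized / total_cost


# Figures quoted in the article.
average_cost, unrealized, unrealized_pct = position_summary(
    coins=766_970, total_cost=58.02e9, spot_price=69_000
)
latest_purchase = 4_871 * 67_718  # coins bought times average price paid

print(f"average cost per coin: ${average_cost:,.0f}")
print(f"unrealized P&L: ${unrealized / 1e9:,.2f}B ({unrealized_pct:.1%})")
print(f"latest purchase: ${latest_purchase / 1e6:,.1f}M")
```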

The broader context makes this accumulation harder to dismiss. Strategy and spot ETFs are now the two dominant institutional absorption channels in a thinning market, with Strategy alone accumulating roughly 44,000 BTC over 30 days through late March.

Can BTC USD Price Break $72,000 This Week?

BTC USD is consolidating just below the $72,000 price resistance zone after reclaiming the 100-hour simple moving average. Volume confirmation arrived Monday evening and has held, which is a structurally positive development.

Daily RSI reads 53, MACD(12,26) at 499.5, and ADX(14) at 37.847, all of which point to sustained bullish momentum, though STOCH indicators are flashing overbought.
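For context on what a MACD(12,26) reading measures, here is a minimal sketch in Python: the indicator is the 12-period exponential moving average of closing prices minus the 26-period one, so a positive value means recent prices are running above the longer-term average. The price series is hypothetical, and seeding each average with the first close is one common convention, so the output will not match any charting platform's reading exactly.

```python
def ema(prices, span):
    """Exponential moving average with smoothing 2 / (span + 1).

    Seeded with the first price, which is an assumed convention.
    """
    alpha = 2 / (span + 1)
    value = prices[0]
    for price in prices[1:]:
        value = alpha * price + (1 - alpha) * value
    return value


def macd(prices, fast=12, slow=26):
    """MACD line: fast EMA minus slow EMA of the same closing prices."""
    return ema(prices, fast) - ema(prices, slow)


# Hypothetical daily closes rising by $100 a day, for illustration only.
closes = [66_000 + 100 * day for day in range(40)]
print(f"MACD(12,26) on the sample series: {macd(closes):.1f}")
```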

A daily close above $69,500 opens the path to $72,000 and potentially the $74,000 area that briefly traded in mid-March. Catalyst would be a softer-than-expected US jobs or inflation print, shifting Fed rate expectations.

Alternatively, the market could consolidate between $67,500 and $69,500 for several sessions as it digests the bounce. Analysts forecast $67,000 by quarter-end, suggesting a range-bound grind before the next directional move.

However, a close below $66,000 and the long-term trendline would invalidate the current setup and expose the $64,000 range.

TradingView analysts noted this week: “A lot of people are turning very bearish on Bitcoin, but I don’t think it’s time to be bearish; the bearish trend is not confirmed.”

Price movement from here will largely depend on macro data and whether ETF inflows accelerate alongside Strategy’s continued accumulation.

Bitcoin Hyper Targets Early-Mover Upside While BTC Rallies

Bitcoin rebounding toward $70,000 is undeniably bullish, but at a $1.4 trillion market cap, the asymmetric upside that characterized 2020 and 2021 is simply getting slimmer. The ship has sailed somewhere under $50,000.

Traders looking for leverage on a Bitcoin bull cycle without the ceiling constraints are increasingly scanning the infrastructure layer, specifically projects that extend Bitcoin’s utility rather than just price-follow it.

Bitcoin Hyper ($HYPER) is one presale generating real traction in that context. Positioned as the first-ever Bitcoin Layer 2 with Solana Virtual Machine (SVM) integration, it targets Bitcoin’s three structural weaknesses directly: slow transactions, high fees, and absent programmability.

The SVM integration is the differentiator: the project claims faster performance than Solana itself through extremely low-latency Layer 2 processing, combined with a Decentralized Canonical Bridge for native BTC transfers.

The presale has raised more than $32 million at a current token price of about $0.013, with staking advertised at 36% APY for early participants.

Research the Bitcoin Hyper presale thoroughly and join the army.

The post BTC USD Price Finally Moving Up: Saylor Strategy Bought More Before The Rally appeared first on Cryptonews.

Polymarket Launches Stablecoin, Overhauls Trading System

Polymarket is rolling out its biggest platform upgrade to date, introducing a new stablecoin and rebuilding its trading system.

The changes will take place over the next few weeks and aim to make the platform faster, simpler, and more reliable for users.

At the center of the update is a new collateral token called “Polymarket USD.” It will replace USDC.e and is backed 1:1 by USDC.

For most users, the switch will happen automatically with a one-time approval. However, advanced users and bot traders will need to manually convert their funds.

At the same time, Polymarket is upgrading how trades are placed and matched. The platform is introducing a new order book system and updated smart contracts.

These changes are designed to improve speed, reduce costs, and support more advanced trading activity.

As part of the transition, all existing order books will be cleared, and trading will pause briefly during a scheduled maintenance window. Polymarket said it will announce the exact timing in advance.

For everyday users, the impact will be minimal. The interface will handle most changes in the background. However, traders may notice smoother performance and quicker order execution after the upgrade.

Overall, the update signals a shift in how Polymarket operates. The platform is moving toward a more structured, exchange-like system built for higher trading volume and broader use.

The post Polymarket Launches Stablecoin, Overhauls Trading System appeared first on BeInCrypto.

Crypto World

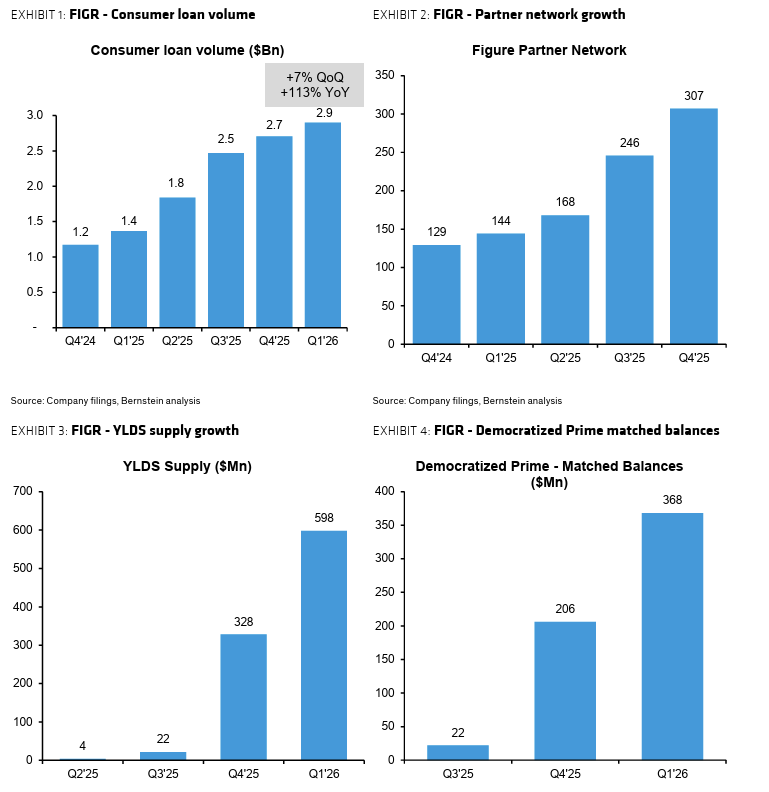

Bernstein Sees Upside from Loan Growth, Tokenization

Figure Technology Solutions, a blockchain-based lending platform that went public last year, may be undervalued at current levels as loan originations accelerate and its tokenized credit marketplace scales, according to Bernstein analysts.

In a report published Monday, Bernstein assigned Figure an “Outperform” rating and a $67 price target, roughly double the stock’s recent trading level of around $32.

The bullish call follows a surge in lending activity. Figure originated $1.2 billion in loans in March, up 33% from the previous month and marking the first time monthly volumes exceeded $1 billion.

The company primarily originates home equity lines of credit (HELOCs), which allow homeowners to borrow against their equity in the property, typically at lower interest rates than unsecured loans.

It uses the Provenance blockchain to reduce friction in the loan process, which it claims makes it more efficient than traditional lenders. According to Provenance, Figure is able to shave 117 basis points per loan by transacting on the blockchain.

First-quarter originations reached $2.9 billion, more than doubling from a year earlier and defying the usual seasonal slowdown in HELOC demand. The company is now tracking roughly $12 billion in annualized loan volume.
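The origination figures above can be cross-checked with a short Python sketch. The helper names are hypothetical, and annualizing by multiplying the quarter by four is an assumption about how the run rate was derived; the article does not say. On that basis, a 33% rise to $1.2 billion implies roughly $0.9 billion in February, and $2.9 billion for the quarter annualizes to $11.6 billion, consistent with "roughly $12 billion".

```python
def implied_prior(current, growth_rate):
    """Back out the previous period's value from the current one and its growth."""
    return current / (1 + growth_rate)


def annualize_quarter(quarterly_total):
    """Simple run rate: four times the quarterly total (assumed method)."""
    return quarterly_total * 4


# Figures quoted in the article, in billions of dollars.
february = implied_prior(current=1.2, growth_rate=0.33)
run_rate = annualize_quarter(2.9)

print(f"implied February originations: ${february:.2f}B")
print(f"annualized from Q1: ${run_rate:.1f}B")
```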

Figure’s strong start to the year follows a largely positive fourth quarter, where earnings and revenue increased, though profits fell short of expectations.

Figure stock struggles despite strong fundamentals

Despite improving operating performance, Figure shares have fallen more than 20% this year, reflecting broader volatility across digital asset–linked stocks and sector-specific pressures.

The stock has also struggled to regain momentum following its high-profile Nasdaq market debut last September. That closely watched initial public offering raised nearly $800 million.

Still, Bernstein’s analysis valued the company at roughly 25 times its projected 2027 EBITDA — meaning the stock trades at a multiple of its expected earnings before interest, taxes, depreciation and amortization.

This valuation sits above existing digital asset companies, reflecting what analysts describe as Figure’s “structural prospects” as both a tokenization platform and a profitable lending business.

However, risks remain. According to Bernstein, HELOC demand can be sensitive to mortgage refinancing trends, while the broader private credit market — a key pillar of Figure’s growth strategy — has shown signs of increasing pressure.

Bitcoin climbs above $70,000 as more contrarian bottoming signs emerge

Crypto has added to a Sunday rally, with bitcoin rising above $70,000 in quiet post-Easter U.S. trading hours.

The gains come alongside a modest advance in the major stock market averages ahead of President Trump’s Tuesday ultimatum for Iran to open the Strait of Hormuz. Just past the noon hour on the East Coast, the Nasdaq is higher by 0.45% and the S&P 500 by 0.3%.

Bitcoin is now higher by nearly 4% over the past 24 hours, with ether, XRP and solana posting similar gains.

Contrarian bitcoin bulls first took hope that a bottom was forming in early February, when bitcoin crashed to $60,000 and the staunchly no-coiner Financial Times took a victory lap.

The bulls may have been even more pleased over this past weekend by a couple of other bottoming signals. First was the late Friday news that Jeff Park was exiting his role as chief investment officer at ProCap Financial (BRR). Led by Anthony Pompliano, ProCap was among 2025’s hastily formed bitcoin treasury companies aiming to hitch their wagon to the BTC bull market and replicate the success of Michael Saylor’s Strategy.

As with others of the 2025 crop — David Bailey’s Nakamoto (NAKA) and Jack Mallers’ Twenty One Capital (XXI) among them — ProCap stock has struggled mightily, performing far worse for shareholders than bitcoin itself.

Second was well-followed longtime bull Willy Woo suggesting that bitcoin could trade sideways for 8 to 12 years from here before finally entering a major bull market.

Other signals of the past couple of weeks: bitcoin miner MARA Holdings unloading more than 15,000 coins from its bitcoin stack, peer Riot Platforms selling off its entire March BTC production of 3,778 coins, and the aforementioned Nakamoto parting with some of its holdings.

Whether the true bottom is in remains to be seen, but the bottoming signs continue to grow.

Federal Court Backs Kalshi in Historic Prediction Market Ruling

Key Takeaways

- Appeals court rules federal oversight supersedes state jurisdiction for Kalshi

- CFTC authority confirmed over prediction market contracts

- State gambling regulations blocked from interfering with Kalshi operations

- Decision establishes crucial precedent for U.S. prediction market industry

- Kalshi’s federally regulated status validated by Third Circuit judges

A federal appeals court delivered a decisive victory for Kalshi, establishing that state regulators cannot enforce gambling restrictions against the prediction market platform. The Third Circuit Court of Appeals determined that Kalshi operates exclusively under Commodity Futures Trading Commission supervision, creating a protective federal shield against conflicting state laws. This watershed moment significantly expands Kalshi’s operational certainty across the nation.

Court Establishes Federal Supremacy Over Prediction Markets

A majority of the Third Circuit panel determined that Kalshi’s event-based contracts constitute federally regulated commodities rather than state-controlled gambling activities. The panel recognized Kalshi’s designation as a contract market under direct CFTC jurisdiction. This classification prevents individual states from applying their gambling statutes to the platform’s offerings.

The court’s analysis centered on the Commodity Exchange Act’s framework, which assigns comprehensive regulatory authority to the CFTC for swap agreements and related financial instruments. Judges found that sports outcome contracts traded on Kalshi qualify as swaps under federal commodity law. This interpretation grants Kalshi immunity from state-level enforcement measures.

The legal challenge originated when multiple state authorities, notably New Jersey, issued cease-and-desist directives targeting Kalshi’s operations. Kalshi contested these actions by asserting that federal regulatory approval preempts state-level prohibitions. The appellate court validated this argument, reinforcing the primacy of federal oversight.

State Regulators Face Jurisdictional Setback

New Jersey’s attorney general contended that Kalshi’s contract offerings violated state gambling prohibitions and operated illegally within state borders. The court dismissed this position, determining that the Commodity Exchange Act explicitly reserves regulatory power for federal authorities. Kalshi successfully defended against potential operational restrictions that threatened its business model.

The majority opinion stressed that Congressional intent clearly established the CFTC as the sole regulator for designated contract markets and swap transactions. According to the ruling, states retain enforcement capabilities only over activities falling outside federal regulatory frameworks. Kalshi’s compliance with CFTC requirements places it firmly within protected federal territory.

A lone dissenting judge raised concerns that Kalshi’s products functionally mirror conventional sports wagering activities. This dissent advocated for preserving state regulatory rights over betting-like offerings. Despite this objection, the prevailing judicial opinion affirmed Kalshi’s status as a legitimate commodities exchange platform.

Implications for the Prediction Market Industry

This judicial determination establishes a critical legal foundation for prediction market growth throughout American financial markets. Kalshi now enjoys enhanced regulatory clarity, enabling nationwide service expansion without confronting conflicting state requirements. The decision eliminates substantial legal ambiguity that previously clouded federal-state jurisdictional boundaries.

The Commodity Futures Trading Commission has consistently backed Kalshi and comparable platforms when facing state regulatory challenges. Federal regulators have actively opposed state attempts to impose restrictions on CFTC-approved exchanges. This appellate victory provides additional confirmation of Kalshi’s regulatory compliance and legitimacy.

The precedent established by this case will likely shape how emerging prediction market ventures structure their platforms under federal commodity regulations. Kalshi emerges with a strengthened competitive position and a validated legal framework for continued growth. This ruling represents a defining moment for prediction markets’ integration into mainstream financial infrastructure.

Crypto World

Kalshi wins key court ruling as U.S. judges curb state power over prediction markets

A US appeals court sided with Kalshi, ruling that CFTC‑regulated event contracts fall under federal law, not New Jersey gambling rules, reshaping prediction market oversight.

Summary

- U.S. appeals court says New Jersey cannot regulate Kalshi’s CFTC‑supervised sports contracts.

- Ruling strengthens federal preemption and could reshape how prediction markets compete with sportsbooks.

- Decision lands amid a broader legal war between states, Kalshi, and the CFTC over who controls event‑based trading.

A federal appeals court has ruled that New Jersey cannot bar Kalshi from offering sports‑related event contracts in the state, declaring that the Commodity Exchange Act and the Commodity Futures Trading Commission (CFTC) hold exclusive authority over those markets. In a 2‑1 decision, the 3rd U.S. Circuit Court of Appeals in Philadelphia held that trading on Kalshi’s designated contract market is governed by federal derivatives law, not state gambling codes, effectively blocking New Jersey regulators from enforcing their cease‑and‑desist order. The ruling cements a major legal win for Kalshi, which has argued for years that its contracts are swaps and hedging tools rather than traditional sports bets.

The case stems from a series of cease‑and‑desist letters sent by New Jersey in 2025, accusing Kalshi’s sports markets of violating the state’s Sports Wagering Act and constitution and threatening fines of up to $100,000 per violation. Kalshi sued in federal court, claiming that, as a CFTC‑regulated designated contract market, its event contracts sit squarely within federal jurisdiction and are “a type of ‘swap’ regulated by the Commodity Exchange Act.” A New Jersey federal judge had already granted Kalshi a preliminary injunction in 2025, writing that he was “persuaded that Kalshi’s sports‑related event contracts fall within the CFTC’s exclusive jurisdiction,” a view the 3rd Circuit has now largely endorsed.

The appeals court’s opinion aligns with Kalshi’s broader strategy as it fights regulators in multiple states, including Nevada, Maryland, and Tennessee, over whether its markets are illegal gambling or federally protected derivatives. In Tennessee, for example, U.S. District Judge Aleta Trauger recently granted a temporary restraining order halting enforcement of that state’s cease‑and‑desist order, finding that Kalshi is likely to succeed on its argument that federal law preempts state gambling statutes. More broadly, the CFTC and U.S. Department of Justice have escalated the fight by suing Arizona, Connecticut, and Illinois over what CFTC Chair Mike Selig called “aggressive and overzealous attempts to overstep the CFTC” in their efforts to police prediction markets.

Responding to the New Jersey decision, Kalshi co‑founder and CEO Tarek Mansour called the appeals ruling a “significant victory” and argued that regulated prediction markets “offer greater transparency and fairness” than opaque traditional betting channels. In earlier commentary, Mansour has said that prediction markets can outperform conventional financial instruments by delivering “clean, crowd‑driven probabilities instead of noisy headlines,” framing platforms like Kalshi as information infrastructure rather than casinos. The decision also lands as rivals such as Polymarket secure their own CFTC approvals, with the agency “effectively welcoming” Polymarket into the club of fully regulated U.S. exchanges and binding it to full designated‑contract‑market‑style surveillance and self‑regulatory duties.

Despite the 3rd Circuit win, Kalshi’s regulatory risk is far from over. A Nevada judge recently extended a ban preventing the company from offering event‑based contracts in that state, underscoring the fragmented legal landscape facing prediction platforms. At the federal level, a bipartisan group of U.S. senators has floated legislation to ban sports‑bet and casino‑style contracts on CFTC‑regulated prediction markets altogether, raising the prospect that Congress, not just courts, will decide how far companies like Kalshi can push into sports.

Crypto World

Tom Lee’s BitMine Storms the NYSE With $11 Billion in Crypto

Bitmine Immersion Technologies (BMNR) announced $11.4 billion in total crypto and cash holdings alongside approval to uplist to the New York Stock Exchange (NYSE).

The company will begin trading on the NYSE on April 9, 2026, after its stock ceases trading on the NYSE American following market close on April 8. BMNR will retain its ticker symbol.

BitMine’s ETH Treasury Grows to Nearly 4% of Total Supply

BitMine’s holdings as of this writing include 4,803,334 Ethereum (ETH) tokens valued at $2,146 per coin, 198 Bitcoin (BTC), a $200 million position in Beast Industries, a $92 million stake in Eightco Holdings (ORBS), and $864 million in cash.

The company now controls 3.98% of all ETH in circulation, placing it over 79% toward its stated goal of accumulating 5% of the total supply.

That target has been central to BitMine’s strategy since its pivot from Bitcoin mining to Ethereum accumulation in mid-2025.
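For readers who want to check the arithmetic behind these holdings figures, here is a minimal Python sketch. It uses only the numbers quoted above; the variable names are illustrative, and the implied circulating supply is a back-of-the-envelope derivation from the 3.98% share, not a figure reported by the company.

```python
# Figures quoted in the article; variable names are illustrative only.
eth_held = 4_803_334          # ETH tokens held
eth_price_usd = 2_146         # price per ETH used in the announcement
share_of_supply_pct = 3.98    # reported share of circulating ETH
target_pct = 5.0              # stated accumulation goal

# Dollar value of the ETH position alone (excludes BTC, equity stakes and cash).
eth_value_usd = eth_held * eth_price_usd

# Progress toward the 5% goal, as a fraction.
progress = share_of_supply_pct / target_pct

# Circulating supply implied by the reported share (an assumption-based estimate).
implied_supply = eth_held / (share_of_supply_pct / 100)

print(f"ETH position: ${eth_value_usd / 1e9:.2f}B")
print(f"Progress toward goal: {progress:.1%}")
print(f"Implied circulating supply: {implied_supply / 1e6:.1f}M ETH")
```

On these inputs the ETH position alone comes to roughly $10.3 billion, which, together with the cash and equity stakes listed above, is broadly in line with the $11.4 billion headline total.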

BitMine acquired 71,252 ETH in the week ending April 5, its highest weekly purchase since late December 2025.

The company has steadily increased its weekly buying pace throughout 2026, rising from roughly 33,000 tokens per week in early January to above 70,000.

Tom Lee Frames ETH as a Wartime Safe Haven

Chairman Thomas “Tom” Lee, also known for his role at Fundstrat, positioned Ethereum’s performance against the backdrop of the ongoing Iran conflict, which began on February 28 with joint US-Israeli strikes.

“ETH remains the second best performing asset since the start of the war, with a 6.8% gain and outperforming the S&P 500 by 1,130bp. And ETH beating gold by 1,840bp demonstrates ETH is the wartime store of value,” read an excerpt in the announcement, citing Tom Lee.
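The basis-point figures in that quote can be turned back into implied returns for the S&P 500 and gold. The sketch below assumes the standard convention that 100 basis points equal one percentage point and that "outperforming by N bp" means a simple difference in returns over the same period; the helper name is hypothetical.

```python
def implied_return_pct(asset_return_pct: float, outperformance_bp: float) -> float:
    """Benchmark return implied by an asset's return and its outperformance in basis points."""
    # 100 basis points = 1 percentage point
    return asset_return_pct - outperformance_bp / 100


eth_return_pct = 6.8  # ETH gain since the start of the war, per the quote

sp500_return_pct = implied_return_pct(eth_return_pct, 1_130)
gold_return_pct = implied_return_pct(eth_return_pct, 1_840)

print(f"Implied S&P 500 return: {sp500_return_pct:.1f}%")
print(f"Implied gold return: {gold_return_pct:.1f}%")
```

Under those assumptions, the quote implies the S&P 500 fell about 4.5% and gold about 11.6% over the same window.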

Lee added that Ethereum benefits from Wall Street’s shift toward blockchain tokenization and growing demand from agentic AI systems for public, neutral networks.

The Iran war has triggered what the International Energy Agency called the largest supply disruption in oil market history, sending shockwaves through equities and commodities globally.

Against that backdrop, Lee argued that ETH’s absolute gains signal investor confidence that could eventually pull sidelined capital back into risk assets.

These remarks align with sentiment from Geoff Kendrick, Global Head of Digital Asset Research at Standard Chartered, during a recent BeInCrypto Experts Council.

“I think Ethereum probably wins for the next little while on the back of TradFi getting involved. As banks and other build stuff on the blockchain space, it’s almost all going to happen on Ethereum for the next couple of years, I think,” Kendrick told BeInCrypto.

Staking and Institutional Backing

BitMine has 3,334,637 ETH staked, generating an annualized yield of 2.78% and annualized staking revenues of $196 million. The company also launched MAVAN, its institutional-grade Ethereum staking platform built to serve custodians and ecosystem partners.

The firm ranks as the 96th most traded stock in the US by daily dollar volume at $987 million, placing it between Schlumberger and Adobe.

Its institutional investor base includes ARK Invest’s Cathie Wood, Founders Fund, Pantera, Kraken, Galaxy Digital, and personal investor Tom Lee.

BitMine now trails only Strategy Inc. (MSTR), making it the second-largest crypto treasury company globally. Strategy holds 766,970 BTC valued at approximately $53.5 billion.

The NYSE uplisting, increasing weekly ETH accumulation, and growing staking revenue suggest BitMine’s next phase will test whether institutional appetite for an Ethereum-focused treasury model can rival the attention that Strategy has drawn with Bitcoin.

The post Tom Lee’s BitMine Storms the NYSE With $11 Billion in Crypto appeared first on BeInCrypto.
