Crypto World

Sui Foundation Unveils Infrastructure Framework for Autonomous AI Agent Execution

TLDR:

- Sui Foundation released framework addressing infrastructure gaps for autonomous AI agents executing workflows.

- Platform provides shared verifiable state, atomic execution, and proof mechanisms for AI-driven operations.

- Traditional internet architecture lacks coordination needed for autonomous software operating at machine speed.

- Sui’s execution layer enables multi-step workflows to complete fully or fail cleanly without partial states.

Sui Foundation released a comprehensive framework on January 30, 2026, addressing infrastructure requirements for autonomous AI agents.

The platform enables AI systems to execute multi-step workflows with verifiable outcomes and shared state management.

Sui’s execution layer treats autonomous software actions as core functionality rather than supplementary features, addressing limitations in current internet architecture designed for human-driven interactions.

Infrastructure Gaps in Current Web Architecture

Traditional internet infrastructure rests on assumptions that do not hold for autonomous AI agents.

Session timeouts, manual retries, and human intervention patterns create friction when software executes independently. APIs function as isolated endpoints without shared state coordination across different services and platforms.

Current web systems fragment authoritative information across multiple applications that lack common truth sources. Partial successes and ambiguous failures become problematic when AI agents operate without human oversight.

Reconciling outcomes across disparate systems introduces risks of duplication and inconsistency that humans can manage but autonomous software cannot.

Agentic workflows spanning multiple platforms compound these architectural weaknesses, as execution becomes a chain of assumptions rather than a coordinated process.

Logs record events but require interpretation to determine authoritative outcomes. The shift from AI recommendations to actual execution introduces irreversible consequences requiring different trust mechanisms.

Actions trigger permanent changes, including bookings, resource allocations, and financial transactions that cannot be reversed like advisory outputs.

Authorization, intent alignment, and auditable outcomes become mandatory rather than optional features. The fundamental question evolves from whether systems produce plausible answers to whether they execute correct actions under proper constraints.

Sui’s Execution Layer Capabilities

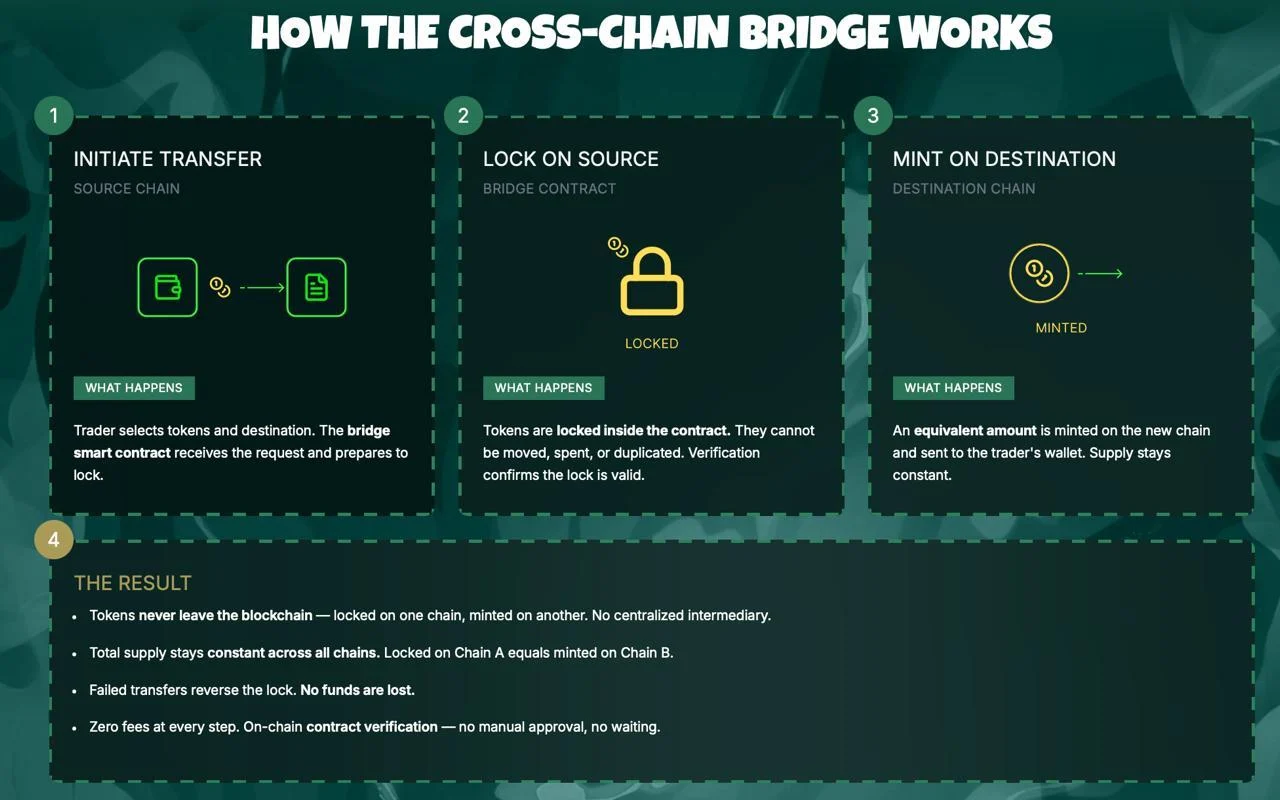

Sui Foundation designed its platform with four foundational capabilities addressing autonomous agent requirements.

The network provides shared verifiable state allowing systems to determine current conditions, changes, and outcomes directly.

Rules and permissions travel with governed data and actions rather than requiring redefinition at system boundaries.

Atomic execution across workflows ensures multi-step processes complete fully or fail cleanly without partial states.

An agent booking travel can reserve flights, confirm hotels, and process payments as a single operation. Either the entire workflow succeeds or nothing commits, eliminating reconciliation needs and ambiguity.
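The all-or-nothing behavior described above can be illustrated with a small sketch. This is a toy model in Python, not Sui's API: the `Ledger` class, the step functions, and the balance figures are all hypothetical and exist only to show staged changes being committed together or not at all.

```python
from dataclasses import dataclass, field


class StepFailed(Exception):
    """Raised when any step in the workflow cannot complete."""


@dataclass
class Ledger:
    """Toy shared state (hypothetical, not a Sui data structure)."""
    committed: dict = field(default_factory=dict)

    def run_atomically(self, steps):
        # Work on a copy so nothing is visible until every step succeeds.
        staged = dict(self.committed)
        for step in steps:
            step(staged)  # any step may raise StepFailed
        # All steps passed: publish the staged state in one assignment.
        self.committed = staged


def reserve_flight(state):
    state["flight"] = "reserved"


def confirm_hotel(state):
    state["hotel"] = "confirmed"


def pay(amount, balance):
    def step(state):
        if amount > balance:
            raise StepFailed("insufficient funds")
        state["payment"] = amount
    return step


ledger = Ledger()

# Payment fails, so the flight and hotel steps must not commit either.
try:
    ledger.run_atomically([reserve_flight, confirm_hotel, pay(900, balance=500)])
except StepFailed:
    pass
failed_state = dict(ledger.committed)

# Every step succeeds, so the whole workflow commits at once.
ledger.run_atomically([reserve_flight, confirm_hotel, pay(400, balance=500)])
ok_state = dict(ledger.committed)
```

In the failed run the ledger stays empty even though the first two steps ran, which is the "fail cleanly without partial states" property the framework describes.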

The platform generates proof of execution establishing how actions occurred, under which permissions, and whether intended rules were followed.

Verifiable evidence replaces after-the-fact reconstruction and interpretation. Outcomes settle as definitive results rather than being pieced together from multiple log sources.

Sui groups data, permissions, and history together within the network for clarity on action scope and authorization. Complex tasks execute directly and settle as single outcomes instead of coordinating intent across applications afterward.

The execution layer coordinates intent, enforces rules, and settles outcomes by default without constant human oversight.

The foundation published detailed technical documentation covering verifiable inputs, execution accountability, value exchange mechanisms, and end-to-end system integration.

These components address data provenance, integrity, policy-aware access, licensing, payments, and agentic commerce handled safely through programmatic methods.

The framework positions execution infrastructure as the differentiator as AI agents assume greater operational responsibility beyond intelligence capabilities alone.

Nakamoto (NAKA), SharpLink Gaming (SBET), and Strive (ASST) viewed positively at Cowen

After declines of 90% or more in digital asset treasury companies Nakamoto (NAKA), Sharplink Gaming (SBET) and Strive (ASST), TD Cowen’s Lance Vitanza is spotting value.

He argued that each could outperform spot crypto exchange-traded products if crypto prices recover and the firms keep expanding token holdings on a per-share basis.

Nakamoto Holdings

Vitanza initiated coverage of Nakamoto (NAKA) with a Buy rating and a $1.00 price target, suggesting nearly a five-fold increase from today’s close of $0.21. He based that target on estimated bitcoin dollar gains of $394 million for fiscal 2027, a 2x multiple and a bitcoin price of about $140,000 at the end of 2026.

He said Nakamoto stands out among public bitcoin treasury companies because it combines direct bitcoin accumulation with minority stakes in overseas treasury firms such as Metaplanet and Treasury BV. He also pointed to operating businesses in media, bitcoin advocacy and digital asset management, saying those assets create “distinct synergy potential.”

SharpLink Gaming

Starting SharpLink Gaming (SBET) with a buy rating and a $16 price target, Vitanza sees dollar gains of $93 million for fiscal 2026, a 2x multiple and an ether price of about $3,650 by December 2026. SBET closed Thursday at $6.42.

He described SharpLink, which is led by Joseph Chalom, ex-BlackRock head of digital assets, and Ethereum co-founder Joseph Lubin, as an Ethereum treasury company that aims to grow ether per share through treasury operations and staking. Vitanza said the company may deliver better staking yield than spot ether ETPs because fund investors absorb fees, and many products cannot stake a large share of holdings.

He also argued that even if ether stays weak, staking income should more than cover operating costs. That, he said, could help SharpLink continue to produce positive ETH yield while it waits for capital markets to reopen.

Strive

Vitanza initiated Strive (ASST) with a buy rating and a $26 price target, or nearly triple today’s closing price of $9.64. He tied that target to estimated bitcoin dollar gains of $142 million for fiscal 2026, a 2x multiple and bitcoin at about $140,000 by year-end 2026.

He said Strive is the first public bitcoin treasury company to acquire another one, citing its January 2026 purchase of Semler Scientific. Vitanza called it a “watershed event” and said it supports the view that Strive could become a logical consolidator if more treasury companies trade at a discount to the value of their bitcoin.

He also highlighted Strive’s mix of asset management, social media marketing and bitcoin education businesses. In TD Cowen’s view, those units could support treasury operations and help the company outperform spot bitcoin funds in a favorable market.
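As a quick check on the upside figures quoted in this note, the multiples implied by each price target can be computed directly from the targets and closing prices given above. This sketch uses only those quoted numbers. It does not attempt to reproduce TD Cowen's valuation model, which depends on inputs not given here.

```python
# (price target, quoted closing price) in USD, as stated in the article
coverage = {
    "NAKA": (1.00, 0.21),
    "SBET": (16.00, 6.42),
    "ASST": (26.00, 9.64),
}

# Implied multiple = target / close
implied_multiple = {
    ticker: target / close for ticker, (target, close) in coverage.items()
}

for ticker, multiple in implied_multiple.items():
    print(f"{ticker}: {multiple:.2f}x the quoted close")
```

The results line up with the article's wording: just under five times for Nakamoto and just under three times for Strive.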

Distributed Tokenized RWA Market to Hit $400B by 2030: Keyrock, Securitize

RWA perpetuals tied to assets like gold, silver and oil grew 40x in six months, the new report shows.

Market maker Keyrock and tokenization platform Securitize published a new report on the future of real-world asset (RWA) tokenization today, April 9. According to the research, the distributed RWA market — meaning tokenized assets that are freely transferable on-chain — is projected to grow from around $29 billion today to $400 billion by 2030 as a base case, an over 1,000% increase.
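To put the base case in perspective, the sketch below works out the total percentage increase and the annual growth rate it implies. The start and end values come from the report as quoted. The four-year horizon is an assumption made here (roughly 2026 to 2030), since the report's exact start and end dates are not given.

```python
start_usd_bn = 29.0    # distributed RWA market today, per the report
target_usd_bn = 400.0  # base-case projection for 2030, per the report
years = 4              # assumption: roughly 2026 through 2030

growth_multiple = target_usd_bn / start_usd_bn
pct_increase = (growth_multiple - 1) * 100

# Compound annual growth rate implied by the projection
implied_cagr = growth_multiple ** (1 / years) - 1

print(f"Total increase: {pct_increase:.0f}%")
print(f"Implied annual growth: {implied_cagr:.1%}")
```

Under that assumption the market would need to nearly double every year to reach the base case.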

The joint report also flags perpetual futures as the fastest-growing on-chain channel for RWA exposure, already on track to dominate derivatives by 2028.

The report, titled “The $400T Future of Tokenised Assets,” covers five RWA classes — Treasuries, private credit, equities, commodities, and alternative funds — and maps the regulatory, liquidity, and infrastructure conditions needed for each to scale.

Today, tokenized RWAs represent less than 0.1% of the $400 trillion global market that is eligible for tokenization, per the report. In the base case, Keyrock and Securitize project the broader market of blockchain-tracked RWAs, often referred to as represented RWAs, hitting $5 trillion by 2030.

Equities represent the largest notional upside, while Treasuries are positioned to lead in the near term, scoring highest in the report’s “readiness framework,” which grades asset classes across standardization, liquidity, valuation frequency, redemption speed, regulatory clarity, and on-chain demand.

Demand for RWA Perps

RWA perps, namely perpetual futures tied to commodities like oil, gold and silver, have surged in popularity in recent months, driven by broader adoption of on-chain derivatives and demand for 24/7 macro exposure. Geopolitical tensions and, more recently, an escalating war in the Middle East, have likely contributed to short-term spikes in trading activity.

The new report found that RWA perpetual volumes grew 40x in six months to $67 billion in monthly volume, even as volumes across the broader on-chain derivatives market fell by half.

Specifically, RWA perps jumped from 0.1% to 10.1% of all on-chain derivatives volume since October 2025, the report states. At the current pace, the report projects RWA perps could account for 50% of all on-chain derivatives volume by 2028.

The engine behind that growth is largely Hyperliquid’s HIP-3 upgrade, which launched in October 2025 and enables permissionless deployment of perpetual futures markets.

Monthly equity perp volume on HIP-3 grew from $760 million in October 2025 to $20 billion by last month, per the report. Commodity perps — spanning gold, silver, copper, oil, and others — hit $40 billion in March alone. The report frames perps not as a workaround but as a crypto-native evolution of tokenization: synthetic exposure to real-world assets without the compliance overhead of direct ownership.

Treasuries vs DeFi Yield

The report also highlights yield on tokenized Treasuries, especially against the backdrop of waning DeFi yields. Per the report, tokenized T-bills have paid more than DeFi’s benchmark stablecoin lending rate on 64% of all days since mid-2024. In Q1 2026 alone, that figure reached 98% — with 3.6x lower yield volatility than DeFi lending rates over the same period.

Keyrock and Securitize identify 2027 as the first year where regulation, market depth, liquidity infrastructure, and distribution are likely to mature simultaneously — a “convergence window” they say will concentrate growth in whichever asset classes hit all four milestones first.

The findings arrive as institutional pressure on tokenization intensifies. The IMF recently argued that tokenization represents a “structural shift in financial architecture,” while The Defiant has previously reported on how RWAs became Wall Street’s gateway to crypto in 2025 and tokenized assets’ shift from wrappers to DeFi building blocks.

This article was written with the assistance of AI workflows. All our stories are curated, edited and fact-checked by a human.

Kamino Introduces Contract-Level Security Controls for Lending Vaults

The feature prevents compromised curator keys from redirecting depositor funds to unvetted reserves.

Kamino, the largest lending protocol on Solana, has rolled out a new security feature called Whitelisted Reserves that enforces allocation controls at the smart contract level across its lending vaults.

The move comes just over a week after the Drift Protocol exploit, in which attackers drained roughly $270M from the Solana-based perpetual futures exchange using social engineering and compromised admin keys. The attack, which security firms have since attributed to DPRK-linked threat actors, rattled the broader Solana ecosystem and prompted the Solana Foundation to launch a new tiered security program for decentralized finance (DeFi) protocols.

Kamino’s Whitelisted Reserves mechanism ensures that vault funds can be deployed only to reserves explicitly approved by a protocol-level multisig. If a vault curator’s keys are compromised, an attacker would be unable to redirect depositor funds into a malicious or unvetted market, a scenario that could otherwise drain a vault’s liquidity.

“With Whitelisted Reserves, that attack path is closed,” Kamino said. “The smart contract rejects any allocation or investment into a reserve that Kamino has not explicitly whitelisted, regardless of who signs the transaction.”

The feature enforces two onchain restrictions: curators cannot create or increase allocations outside the whitelist, and depositor funds cannot flow into any unvetted reserves via the vaults. Both restrictions are irreversible once activated by a curator.
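The enforcement logic described above can be modeled in a few lines. The following is a toy Python model, not Kamino's program code (which runs on Solana): the `Vault` class, method names, and reserve labels are hypothetical. It shows the two properties the article highlights: the check ignores who signed, and enabling it is a one-way switch.

```python
class AllocationRejected(Exception):
    """Raised when funds are directed at a reserve outside the whitelist."""


class Vault:
    """Hypothetical vault with a protocol-approved reserve whitelist."""

    def __init__(self, approved_reserves):
        # Set by the protocol-level multisig in the article's description.
        self._whitelist = frozenset(approved_reserves)
        self.whitelist_enforced = False
        self.allocations = {}

    def enable_whitelist(self):
        # One-way switch: there is deliberately no way to turn it off.
        self.whitelist_enforced = True

    def allocate(self, signer, reserve, amount):
        # The signer is intentionally not consulted: a valid curator
        # signature cannot override the whitelist.
        if self.whitelist_enforced and reserve not in self._whitelist:
            raise AllocationRejected(f"{reserve} is not whitelisted")
        self.allocations[reserve] = self.allocations.get(reserve, 0) + amount


vault = Vault(approved_reserves={"vetted-usdc-reserve"})
vault.enable_whitelist()

# A legitimate allocation goes through.
vault.allocate("curator-key", "vetted-usdc-reserve", 1_000)

# An attacker holding the same curator key is still rejected.
try:
    vault.allocate("curator-key", "attacker-reserve", 1_000)
    attack_blocked = False
except AllocationRejected:
    attack_blocked = True
```

Withdrawals are not modeled here because, per the article, they are unaffected by the whitelist.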

All vaults currently displayed on Kamino’s frontend — including those managed by Sentora, Gauntlet, Steakhouse, Allez Labs, and RockawayX — now have Whitelisted Reserves enabled. Going forward, the feature will be a requirement for any vault to appear on the Kamino interface.

Withdrawals remain unaffected by the whitelist; depositors can exit vaults at any time, subject to available liquidity.

Kamino is the largest DeFi protocol on Solana and ranks among the top lending platforms across all chains. Earlier this year, the protocol launched Lend V2, introducing modular markets, automated lending vaults, margin leverage, and RWA integration.

This article was written with the assistance of AI workflows. All our stories are curated, edited and fact-checked by a human.

Ex-SEC Official Lands Securitize Presidency Just Before Its IPO

Blockchain infrastructure company Securitize has appointed Brett Redfearn, a former US Securities and Exchange Commission (SEC) official, as president.

The move comes amid a broader wave of former regulators moving into executive roles as crypto seeks greater credibility.

Securitize Scales Up Ahead of Public Debut

As president, Redfearn will work with Securitize’s leadership team to scale the company’s platform across issuance, trading, and fund administration, while driving engagement with regulators, exchanges, and institutional partners.

Redfearn is not new to Securitize. He has served as chairman of the company’s advisory board for the past four years, giving him direct familiarity with the business ahead of his expanded role.

“Securitize is perfectly positioned to lead the implementation of the tokenized financial infrastructure of the future,” Redfearn said in a statement. “The company has taken a compliance-first approach to tokenization from the beginning, without cutting corners.”

Beyond the SEC, Redfearn spent 14 years at JP Morgan and served as head of capital markets at Coinbase.

Carlos Domingo, co-founder and CEO of Securitize, said Redfearn had been “instrumental in how modern markets are structured and regulated,” adding that his experience would help ensure the transition to tokenized infrastructure is built with the “protections and integrity investors expect.”

The appointment comes as Securitize prepares to go public. The company has announced a proposed business combination with Cantor Equity Partners II, listed on Nasdaq.

From Agency Chairs to Industry Insiders

Securitize’s recent hire is the latest in a string of senior regulatory appointments across the crypto industry.

Last month, crypto exchange Backpack named Mark Wetjen, a former acting chairman of the Commodity Futures Trading Commission (CFTC), as president of its US entity.

Before that, former CFTC Acting Chair Caroline Pham departed the agency to become chief legal officer at crypto finance company MoonPay.

The appointments reflect a fundamental shift in the US regulatory scene, making former officials newly valuable to the industry.

Under Trump, the SEC and CFTC moved from adversaries locked in a jurisdictional turf war to active co-regulators. In March, the two agencies signed a memorandum of understanding and later jointly issued landmark guidance on crypto asset classification.

That shift has made former senior officials from both agencies among the most sought-after hires in the industry. They bring institutional knowledge, existing relationships, and credibility with the very regulators their new employers now need to court.

Critics, however, warn that the trend carries risks.

In May 2025, the Revolving Door Project argued the Blockchain Association’s hire of former CFTC Commissioner Summer Mersinger went beyond rewarding a friendly regulator. It was, the group warned, potentially a way of acquiring control over the agency itself.

As crypto enters its most consequential regulatory phase yet, the line between those who write the rules and those who profit from them remains an open question.

The post Ex-SEC Official Lands Securitize Presidency Just Before Its IPO appeared first on BeInCrypto.

AI news: Perplexity jumps 50% after one big change

The AI news out of Perplexity this week confirmed what many had been watching build since February: the company’s annual recurring revenue hit $450 million in March, a 50 percent jump in a single month, after it launched an AI agents product called Computer and shifted to usage-based pricing.

Summary

- The Financial Times reported the $450 million ARR milestone, citing figures seen by the publication; the jump is the fastest monthly revenue increase in Perplexity’s history since its 2022 founding, bringing ARR from $305 million to $450 million in approximately 30 days

- The revenue acceleration was driven by two changes made on February 25: the launch of Computer, an autonomous agent platform that orchestrates 19 specialized AI models to complete complex tasks, and a credits-based pricing model that charges users beyond a set monthly allocation

- Perplexity now has over 100 million monthly active users including tens of thousands of enterprise clients, with subscription tiers ranging from $20 to $200 per month; the company was valued at $20 billion in September 2025 and had set an internal target of $656 million in ARR by end of 2026

As PYMNTS reported, the revenue surge tracked closely with Perplexity’s pivot from AI-powered search toward autonomous agents that execute tasks rather than answer questions. Computer, the flagship agentic product, functions as an orchestration layer coordinating up to 19 specialized AI models from providers including OpenAI, Anthropic, and Google to execute multi-step workflows. CEO Aravind Srinivas described the system as one where “one reasons, another codes, another writes.” Perplexity also dropped advertising entirely in February, citing concerns that ads would erode trust in AI-generated outputs, concentrating its revenue entirely on subscriptions and usage fees tied to performance.

The revenue trajectory tells the story. Perplexity grew ARR from $16 million to $305 million over two years, which was already fast. Then in a single month it added $145 million in annualized revenue. That acceleration reflects something becoming a core thesis across the AI industry: users will pay significantly more to have AI do things than to have AI say things. The usage-based pricing model reinforces this because revenue now scales with actual compute consumed by agent workflows, aligning monetization directly with value delivered. The company still faces lawsuits from publishers including The New York Times and Britannica alleging copyright infringement, as well as a separate privacy suit it has denied.

What the $450 Million Figure Means for Enterprise AI Broadly

The competitive landscape has shifted. Perplexity is no longer positioned against search engines but against enterprise automation platforms, where execution and measurable outcomes define success. Gartner projects that 40 percent of enterprise applications will include task-specific agents by end of 2026. As crypto.news has reported, AI integration is now reshaping headcount and spending patterns across industries as companies shift budgets toward tools that produce outputs rather than answers.

What Perplexity Needs to Sustain This Pace

The internal target of $656 million in ARR by end of 2026 once looked aggressive. At the current monthly pace it is within reach. As crypto.news has noted, monetization signals from mid-size AI companies are closely tracked by investors evaluating whether the broader AI infrastructure buildout produces durable revenue or speculative valuations. Perplexity’s next test is whether enterprise retention holds as the novelty of agents matures and competitors deploy similar orchestration layers at scale.

Circle (CRCL) and Bullish (BLSH) fail to participate in Thursday rally

Crypto prices and U.S. stocks rallied Thursday on diminishing Middle East worries, but Circle (CRCL), Bullish (BLSH) and Coinbase (COIN) all posted sizable declines.

Circle tumbled 9.9% to $85.10 after Compass Point downgraded the stock to Sell from Neutral and cut its price target by $2 to $77. The brokerage said USDC has held up better than in prior down cycles, but argued that supply growth is moving into lower-margin areas. It also said Circle now trades at 40 times what it called optimistic 2027 adjusted EBITDA estimates, and warned that consensus forecasts for 2026 and 2027 may have to come down as first-half 2026 gross margins contract.

The firm said more USDC is now sitting on platforms such as Sky, Binance and Ethena, where revenue-sharing agreements reduce Circle’s economics. In bear markets, that can matter. A stablecoin may keep its supply, but the profit pool can shrink if more of that supply sits in lower-yield channels.

Bullish also faced sell-side pressure, declining 6.5% to $36.12 after Rosenblatt downgraded the stock to Neutral from Buy while keeping its $39 price target. Rosenblatt said Bullish now trades at 28 times consensus adjusted EBITDA, a premium to peers, including Coinbase and Robinhood (HOOD), and added that estimates are becoming more vulnerable as crypto activity weakens and IPO-related boosts to non-trading revenue fade.

Bitcoin, meanwhile, climbed above the $72,000 mark and is trading at its highest level in more than three weeks. The move appeared tied to what markets read as positive news around the U.S.-Iran conflict. Israeli Prime Minister Benjamin Netanyahu said Thursday that he had instructed his cabinet to launch direct negotiations with Lebanon.

The development drew attention because senior U.S. officials said envoy Steve Witkoff had asked Netanyahu to scale back strikes in Lebanon and open talks. It also marked a shift from President Donald Trump’s earlier stance, after he gave Netanyahu room to continue the war in Lebanon shortly before announcing a ceasefire with Iran on Tuesday.

The Nasdaq climbed 0.8% and the S&P 500 rose 0.6%.

Everything About the Ethereum Price Prediction and Whether $5,000 Is Possible While Pepeto Attracts Whale Capital

The ethereum price prediction just got a major signal. BlackRock dropped $60.8 million on ETH on April 7 according to Watcher Guru, the largest single-day ETH ETF buy in months. That kind of size does not show up unless the smart money sees something the crowd has not priced in.

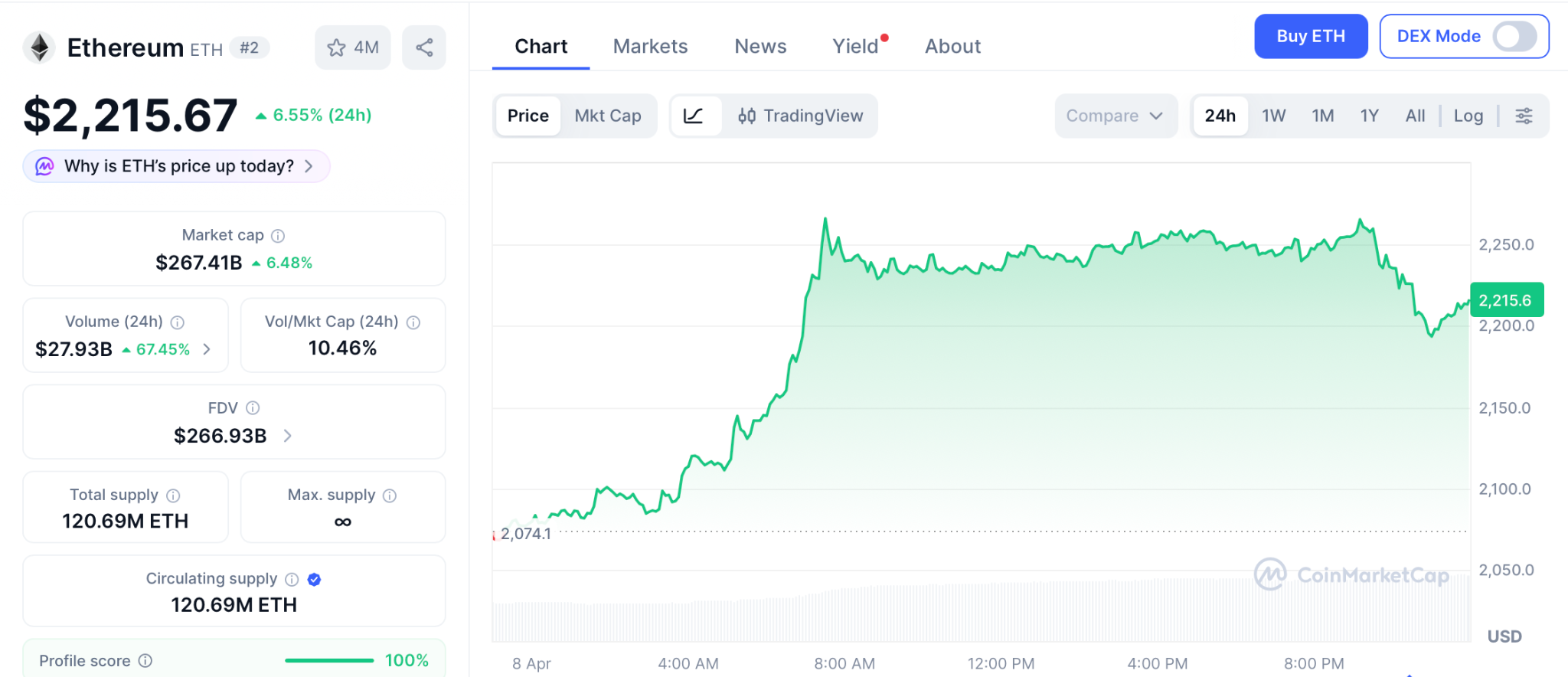

The ethereum price prediction rides on whether institutions keep buying at this pace. While ETH climbed 6.55% to $2,215 on the ceasefire rally, whale capital chasing faster returns is stacking into Pepeto, where the cofounder who built Pepe to $11 billion runs a presale with live exchange tools and a Binance listing confirmed.

Ethereum Price Prediction Gets a Boost as BlackRock and Central Banks Move In

BlackRock’s ETH ETF bought $60.8 million on April 7 according to Watcher Guru, while central banks including Banque de France, UBS, and Societe Generale started moving parts of the $12.5 trillion repo market onto Ethereum according to CoinMarketCap.

ETH also broke out of the same chart pattern that kicked off a 250% rally in April 2025 according to Blockchain News. The Glamsterdam upgrade is scheduled for June 2026, and Standard Chartered raised its ethereum price prediction target to $7,500 for this cycle.

When BlackRock buys $60.8 million in a day and central banks start settling trillions on your chain, the ethereum price prediction stops being a guess and starts being a timeline.

The Ethereum Price Prediction, Pepeto Presale, and What This Bull Run Changes

Pepeto Combines Meme Energy With Exchange Tools No Other Presale Has Built

Beyond the ethereum price prediction, Pepeto is not another meme token riding a trend. It is a presale powered by live exchange products that generate value in any direction, built at a stage where hype and real tools almost never exist together. The cofounder who launched Pepe to $11 billion now runs a project where every product already works.

The bridge connects ETH, BNB, and Solana at zero cost, letting holders on any chain move liquidity without losing a cent. Over $8.84 million raised while the Fear Index sat at 9 shows serious capital entering when the rest of the market could barely move.

The token scanner rates every contract before your wallet touches it, flagging traps that wiped out portfolios in past crashes. PepetoSwap handles every trade with no fees. At $0.0000001863 with the Binance listing approaching, 186% APY staking grows balances daily. SolidProof audited the entire codebase before the first round opened.

Whale wallets that sat quiet through the fear cycle are now increasing their Pepeto holdings round after round. These are the same addresses that loaded early positions in past presales and rode them to listing day. They know a bull run is forming, they know how to pick the entry that prints the hardest, and Pepeto clearly shows that the historical pattern that formed every crypto millionaire is repeating here, with only the investors entering now set to be part of it.

Ethereum Forecast: Can ETH Actually Reach $5,000?

ETH trades at $2,215 after bouncing 6.55% on the ceasefire rally, still about 55% below its all-time high of $4,953 according to CoinMarketCap.

The ethereum price prediction crowd keeps asking about $5,000, and the honest take is simple. ETH already came within 1% of that number when it hit $4,953 in August 2025. Reaching $5,000 needs a market cap around $600 billion, a level this market has supported before. With BlackRock accumulating, central banks building on the chain, the Glamsterdam upgrade in June, and Standard Chartered targeting $7,500, the road to $5,000 is the base case for most institutional models this cycle.

Near-term, the ethereum price prediction lands between $3,000 and $6,000 depending on ETF inflows and the broader bull run. Support sits at $2,050 and resistance at $2,450. If BlackRock keeps buying at this pace and Glamsterdam ships clean, $5,000 could land before year end.
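The distances quoted in this section can be checked with simple arithmetic. The sketch below uses only the current price, all-time high, and $5,000 target stated above, and makes no forecast of its own.

```python
price = 2215.0   # current ETH price quoted in the article
ath = 4953.0     # all-time high quoted in the article
target = 5000.0  # level under discussion

# Fraction by which the current price sits below the all-time high
drawdown_from_ath = 1 - price / ath

# How many times the current price the target represents
multiple_to_target = target / price

# How far the all-time high itself fell short of the target
ath_gap_to_target = (target - ath) / target

print(f"Below all-time high: {drawdown_from_ath:.1%}")
print(f"Multiple needed to reach target: {multiple_to_target:.2f}x")
print(f"All-time high fell short of target by: {ath_gap_to_target:.2%}")
```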

Conclusion

The ethereum price prediction toward $5,000 looks like a question of timing, not possibility, given institutional flows and upgrades landing this year. Meanwhile, Pepeto offers the kind of presale entry that large caps at $2,215 need cycles to match.

Right now the market is splitting into two groups. One entered Pepeto before the Binance listing and watched live tools plus viral momentum turn early pricing into the biggest gains of the cycle. The other sat on the ethereum price prediction waiting for confirmation and paid listing prices for what the presale sold at a sliver. The Pepeto official website is where whale wallets are investing heavily, and following them is the smartest move before the official launch on Binance.

FAQs

What makes Pepeto the top entry alongside the ethereum price prediction?

Pepeto ships a live exchange with real trading tools and a confirmed Binance listing. The bull cycle now forming is set to push it toward 100x from presale to listing.

How does the ethereum price prediction compare to what Pepeto offers?

Ethereum targets $5,000 for roughly 2.2x from current levels if institutional buying holds. Pepeto targets 100x from presale to Binance listing at $0.0000001863 with 186% APY compounding daily.

Disclaimer: This is a Press Release provided by a third party who is responsible for the content. Please conduct your own research before taking any action based on the content.

StarkWare Researcher Publishes Quantum-Safe Bitcoin Transaction Scheme

The QSB scheme uses only existing Bitcoin consensus rules, sidestepping the network’s contentious upgrade process.

A researcher at StarkWare has published an open-source scheme for making Bitcoin transactions resistant to quantum computing attacks using only the network’s existing consensus rules — requiring no softfork, no protocol upgrade, and no community-wide coordination.

The project, called Quantum Safe Bitcoin (QSB), was released on GitHub by Avihu Levy, StarkWare’s chief product officer and a leading Bitcoin researcher at the firm who previously co-authored ColliderScript, a protocol for enabling stateful computation on Bitcoin without consensus changes. Levy also co-authored BIP-360, the quantum-resistant address proposal that was merged into Bitcoin’s official BIP repository in February — a proposal that, unlike QSB, would require a softfork.

“StarkWare has some of the best hackers on the planet,” Eric Wall, co-founder of Taproot Wizards and board member of the Starknet Foundation, wrote on X. “It is beautiful to see when hackers use their powers for good.”

QSB builds on Binohash, a transaction introspection technique developed by BitVM creator Robin Linus of ZeroSync and Stanford University that was demonstrated on Bitcoin mainnet in February.

No Softfork Required

The no-softfork distinction is what sets QSB apart. Most paths to hardening Bitcoin against quantum attacks, including BIP-360 and hash-based signature schemes like SPHINCS+, require protocol-level changes that must navigate Bitcoin’s notoriously slow and contentious governance process.

That governance bottleneck is increasingly seen as the real vulnerability. A Google Quantum AI paper published March 30 concluded that breaking Bitcoin’s elliptic-curve cryptography could require fewer than 500,000 physical qubits — a roughly 20-fold reduction from prior estimates. The paper warned that a sufficiently advanced machine could derive a private key from an exposed public key in about nine minutes, narrowly inside Bitcoin’s 10-minute block window. Google itself has set a 2029 deadline to migrate its own authentication services to post-quantum cryptography.

QSB sidesteps the governance question entirely. The scheme operates within Bitcoin’s tightest legacy script constraints — 201 opcodes and a 10,000-byte script limit — and can be used by anyone willing to pay roughly $75 to $150 in cloud GPU compute and submit their transaction directly to a miner via a service like MARA’s Slipstream.

StarkWare has been at the center of Bitcoin’s quantum-defense efforts. Co-founder Eli Ben-Sasson has argued that Bitcoin must begin responding to the quantum threat now.

How It Works

Standard Bitcoin transactions use a digital signature scheme called ECDSA to prove ownership of funds. A quantum computer running Shor’s algorithm could reverse-engineer that signature process, deriving private keys from public keys and stealing coins.

QSB swaps out the security model. Instead of relying on the mathematical hardness of elliptic curves — which quantum computers can break — it relies on the hardness of reversing hash functions, which they cannot. The scheme forces a would-be spender to solve a computationally expensive hash puzzle that binds the transaction to a specific set of parameters. Any attempt to alter the transaction invalidates the puzzle solution, requiring the attacker to redo the work from scratch.
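The idea of a hash puzzle bound to a transaction can be illustrated with a generic proof-of-work style sketch. This is not the QSB or Binohash construction, which is implemented in Bitcoin Script under the constraints described earlier. The function names, the toy transaction bytes, and the 16-bit difficulty are assumptions chosen so the example runs in well under a second.

```python
import hashlib

DIFFICULTY_BITS = 16  # assumption: tiny toy difficulty, far below real-world cost


def puzzle_hash(tx: bytes, nonce: int) -> int:
    """SHA-256 over the transaction bytes plus a nonce, as an integer."""
    digest = hashlib.sha256(tx + nonce.to_bytes(8, "big")).digest()
    return int.from_bytes(digest, "big")


def is_valid(tx: bytes, nonce: int, bits: int = DIFFICULTY_BITS) -> bool:
    """A solution is valid if the hash has `bits` leading zero bits."""
    return puzzle_hash(tx, nonce) >> (256 - bits) == 0


def solve(tx: bytes, bits: int = DIFFICULTY_BITS) -> int:
    """Brute-force search for the smallest nonce that solves the puzzle."""
    nonce = 0
    while not is_valid(tx, nonce, bits):
        nonce += 1
    return nonce


original_tx = b"send 1 BTC to alice"   # hypothetical transaction bytes
tampered_tx = b"send 1 BTC to mallory"

nonce = solve(original_tx)
original_ok = is_valid(original_tx, nonce)

# The same nonce is checked against altered bytes. Because the hash
# covers the transaction, it is almost certainly no longer valid, and
# the attacker has to search again from scratch.
tampered_ok = is_valid(tampered_tx, nonce)
attacker_nonce = solve(tampered_tx)
```

Because the security rests on hash preimage hardness rather than elliptic-curve math, Shor's algorithm offers no shortcut to finding a solution, which is the property the article attributes to QSB.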

The result is roughly 118 bits of security against Shor’s algorithm, compared to effectively zero for standard Bitcoin transactions in a post-quantum world.

Early Stage

The project remains a work in progress. The GPU pinning search — the first of three phases required to construct a quantum-safe transaction — has been successfully tested, finding a valid result after roughly six hours across eight Nvidia RTX PRO 6000 GPUs. But the digest search and on-chain broadcast have not yet been completed end-to-end.

There are practical constraints as well. The transactions exceed default relay policy limits and must be submitted directly to miners. The locking script must be placed as a bare output because it exceeds P2SH’s 520-byte redeem script limit.
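The size constraints quoted in the article can be collected into a small check. This is a simplified sketch: the three limits come from the text above, while the function name, the return labels and the sample sizes are hypothetical, and real Bitcoin nodes count opcodes and bytes with more nuance than this.

```python
# Limits quoted in the article.
MAX_SCRIPT_BYTES = 10_000   # legacy script size limit
MAX_OPCODES = 201           # legacy opcode limit
P2SH_REDEEM_LIMIT = 520     # P2SH redeem script size limit


def placement(script_size_bytes: int, opcode_count: int) -> str:
    """Classify where a locking script of this size could be placed."""
    if script_size_bytes > MAX_SCRIPT_BYTES or opcode_count > MAX_OPCODES:
        return "exceeds legacy script limits"
    if script_size_bytes > P2SH_REDEEM_LIMIT:
        return "bare output only"
    return "fits in P2SH"


# Hypothetical sizes, for illustration only.
print(placement(9_000, 200))  # bare output only
print(placement(400, 50))     # fits in P2SH
```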

Still, the release demonstrates that a degree of quantum resistance is achievable on Bitcoin today — for anyone willing to bear the cost — without waiting for the community to agree on a softfork.

This article was written with the assistance of AI workflows. All our stories are curated, edited and fact-checked by a human.

Crypto World

ETH Price Eyes $2.5K As Data Points To Undervalued Conditions

Ether (ETH) may be on track to retest $2,500 if the rally above $2,150 holds and the bullish spot and futures volumes behind it are sustained.

Ether is also supported by a key macro indicator that places the altcoin in a rare undervaluation zone not seen since 2022. The data points to fading selling pressure and the early stages of an accumulation process for Ether.

ETH price structure strengthens above $2,150

Ether’s daily chart shows bulls leading the charge after a 6.33% rally pushed the price above the $2,150 resistance. ETH now eyes a retest of its March highs near $2,385, with further upside toward the $2,475–$2,635 fair-value gap acting as a price magnet for bulls.

Repeated retests of $2,150 over the past two months suggest weakening resistance, as buyers continue stepping in at higher levels.

Charts show ETH’s market structure improving, with current volume driven largely by the spot market. On the four-hour chart, ETH maintains higher lows while attempting to break into the $2,250–$2,300 range.

The aggregated spot cumulative volume delta (CVD) has remained elevated in April at 184,500 ETH, reflecting sustained spot demand.

The futures CVD has also trended gradually upward to 4.36 million ETH, suggesting that derivatives traders are beginning to support, rather than lead, the move.
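For readers unfamiliar with the metric, cumulative volume delta is commonly computed as a running total of taker buy volume minus taker sell volume. The sketch below assumes that common definition; the function name and the per-interval volumes are hypothetical and are not the data behind the figures above.

```python
from itertools import accumulate


def cumulative_volume_delta(buys, sells):
    """Running sum of (taker buy volume - taker sell volume) per interval."""
    return list(accumulate(b - s for b, s in zip(buys, sells)))


# Hypothetical per-interval volumes in ETH.
buy_volume = [120, 90, 150]
sell_volume = [100, 110, 90]
print(cumulative_volume_delta(buy_volume, sell_volume))  # [20, 0, 60]
```

A rising series means buyers have been the more aggressive side over the window, which is how the article reads the April figures.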

The funding rate remains positive at 0.0052, indicating a long bias, and the open interest near 4.75 million ETH is still range-bound, signaling limited leverage.

Data shows ETH is in a controlled accumulation phase, marginally led by spot demand, though a stronger breakout would likely require an expansion in futures positioning.


Macro index shows ETH in a “rare” undervalued zone

Ether may be nearing a macro bottom, according to the Capriole Macro Index Oscillator, which currently reads -2.42. This puts Ether in a rare undervalued zone historically linked with capitulation and trend reversals.

The indicator tracks investment behavior, cycle positioning, and onchain data, with deeply negative values often signaling seller exhaustion.

Previous signals highlight the metric’s reliability. In June to July 2022, ETH bottomed near $1,000–$1,200 when the indicator fell to -2.2. In October to November 2023, a drop to -1 aligned with ETH’s price breaking out after a drop to $1,500.

In April 2025, another negative reading marked a local bottom near $1,500, setting the stage for a rally above $4,000.

The current setup mirrors prior capitulation phases. ETH has fallen from highs near $4,800 to $2,100, while the oscillator sits near cycle lows.
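The size of that decline can be worked out directly from the two prices quoted. A minimal sketch, with hypothetical function names:

```python
def drawdown(peak: float, current: float) -> float:
    """Fractional decline from the peak to the current price."""
    return (peak - current) / peak


def gain_to_recover(peak: float, current: float) -> float:
    """Fractional gain needed from the current price to regain the peak."""
    return peak / current - 1


# Prices quoted in the article.
print(f"{drawdown(4800, 2100):.2%}")         # 56.25%
print(f"{gain_to_recover(4800, 2100):.1%}")  # 128.6%
```

The asymmetry is worth noting: a drop of about 56% requires a gain of roughly 129% to return to the prior high.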

With ETH now in a rare undervalued zone, the downside risk appears limited relative to the upside potential. However, confirmation would require a reclaim of the $2,400–$2,500 level and a move back toward zero for the macro indicator.

Analyst crypto sunmoon noted that the ETH taker buy/sell ratio has been trending upward for four to five months.

Combined with the current drawdown, the structure resembles the setup preceding the April to May 2025 rally, suggesting a similar recovery phase may be forming.


This article is produced in accordance with Cointelegraph’s Editorial Policy and is intended for informational purposes only. It does not constitute investment advice or recommendations. All investments and trades carry risk; readers are encouraged to conduct independent research before making any decisions. Cointelegraph makes no guarantees regarding the accuracy or completeness of the information presented, including forward-looking statements, and will not be liable for any loss or damage arising from reliance on this content.

Crypto World

Polygon, Frax and Curve Launch Onchain Forex Liquidity Pools

Curve’s FXSwap pools use frxUSD as the base dollar pairing for cross-currency swaps spanning the Brazilian real, Indonesian rupiah, British pound, Australian dollar, Korean won and USDT.

Polygon Labs, Frax, Curve Finance and DFB Network have launched a suite of foreign exchange liquidity pools on the Polygon blockchain, enabling onchain swaps between fiat-pegged stablecoins using Frax’s frxUSD as the base dollar pairing.

The pools are live on Curve’s Polygon deployment and pair frxUSD against BRZ (Brazilian real), IDRX (Indonesian rupiah), tGBP (British pound), AUDF (Australian dollar), KRWQ (Korean won) and USDT, with additional currency pairs in development. The four partners have also collaborated on an incentive program to bootstrap liquidity across the pools, with gauges live for reward distribution.

$6 Trillion Market

The launch targets the $6.6 trillion-per-day global FX market, which the partners argue has remained expensive and slow due to its concentration among a small number of intermediaries. Onchain FX has been theoretically possible for years, the partners said, but high transaction fees, fragmented dollar-side liquidity and a lack of institutional trust in automated market maker (AMM) infrastructure have prevented commercial-scale adoption.

“When you pair sub-cent transaction fees with a stable dollar base like frxUSD and Curve’s liquidity infrastructure, you get something the traditional FX market has never offered: transparent pricing, instant settlement, and access for any company,” Polygon Labs CEO Marc Boiron said in a blog post.

How the Stack Works

Each layer of the stack handles a different function. Frax’s frxUSD serves as the dollar anchor for every pool. The stablecoin is fully backed by tokenized U.S. Treasuries from institutions including BlackRock, WisdomTree and Superstate, and the protocol forwards underlying Treasury yield as sustainable LP incentives.

Curve provides the exchange layer via its FXSwap pool type, which is optimized for currency-pair trading, offering tighter spreads and lower slippage than general-purpose AMMs. Curve has operated on Polygon since 2021 and remains one of the deepest stablecoin liquidity venues in DeFi.

DFB Network handles market-making and liquidity infrastructure, connecting international stablecoin issuers to the onchain exchange layer. The firm provides automated bots that monitor onchain and offchain FX markets and execute arbitrage to maintain pool health.

Polygon itself functions as the settlement layer. A typical token transfer on the network costs roughly $0.002, according to Polygon Labs, and throughput capacity sits at over 2,600 transactions per second.

Commercial FX

The pools are being pitched as practical infrastructure for cross-border business payments. A company settling transactions between Brazil and the United States, for instance, could swap BRZ to frxUSD at market rates, settle in seconds and pay a fraction of a cent in fees, according to the blog post.

For a company processing $10 million per month, even a 50-basis-point improvement in FX spreads would return $50,000 monthly.
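The arithmetic behind that figure is straightforward, since one basis point is one hundredth of a percent. A minimal sketch, with a hypothetical function name:

```python
def spread_savings(monthly_volume_usd: float, improvement_bps: float) -> float:
    """Monthly savings from a spread improvement given in basis points."""
    return monthly_volume_usd * improvement_bps / 10_000


# Figures from the article: $10 million per month, 50 basis points.
print(spread_savings(10_000_000, 50))  # 50000.0
```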

Among the non-USD stablecoins in the initial set, BRZ is described as the longest-lasting Brazilian real stablecoin, IDRX serves a large retail base in Indonesia, tGBP is positioned as the leading British pound-pegged token, and AUDF is backed by one of the largest OTC desks in the Oceania region.

This article was written with the assistance of AI workflows. All our stories are curated, edited and fact-checked by a human.

-

Business7 days ago

Business7 days agoNo Jackpot Winner and $194 Million Prize Rolls Over

-

Fashion6 days ago

Fashion6 days agoWeekend Open Thread: Spanx – Corporette.com

-

Business4 days ago

Business4 days agoThree Gulf funds agree to back Paramount’s $81 billion takeover of Warner, WSJ reports

-

Business6 days ago

Business6 days agoExpert Picks for Every Need

-

Sports5 days ago

Sports5 days agoIndia men’s 4x400m and mixed 4x100m relay teams register big progress | Other Sports News

-

Tech2 days ago

Tech2 days agoHow Long Can You Drive With Expired Registration? What Florida Law Says

-

Business4 days ago

Business4 days agoNo Jackpot Winner, Prize to Climb to $231 Million

-

Fashion3 days ago

Fashion3 days agoMassimo Dutti Offers Inspiration for Your Summer Mood Board

-

Politics6 days ago

Wings Over Scotland | The quality of mercy

-

Fashion2 days ago

Fashion2 days agoLet’s Discuss: DEI in 2026

-

Business5 days ago

Business5 days agoAkebia Therapeutics, Inc. (AKBA) Discusses Pipeline Progress and Strategic Focus on Kidney Disease Treatments at R&D Day – Slideshow

-

Fashion7 days ago

Fashion7 days agoStatement Sunglasses: The Accessory Shaping Modern Fashion

-

Crypto World1 day ago

Crypto World1 day agoBitcoin recovers as US and Iran Agree a Ceasefire Deal

-

Politics7 days ago

Politics7 days agoEast Jerusalem Palestinian families eviction orders

-

Politics7 days ago

Politics7 days agoWhy so many children are now classified as ‘disabled’

-

Fashion7 days ago

Fashion7 days agoFor Love & Lemons’ Spring 2026 Line is for the Romantics

-

Politics7 days ago

Politics7 days agoNuclear rockets, moon bases and NASA’s Mars plan

-

Tech7 days ago

Tech7 days agoThe Threadless Ball Screw Never Took Off, But Don’t Write It Off

-

Business7 days ago

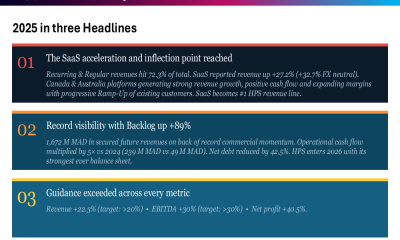

Business7 days agoHPS FY 2025 slides: SaaS inflection drives 22% revenue growth

-

Fashion6 days ago

Fashion6 days agoTory Burch’s Spring 2026 Campaign Goes on a Getaway

You must be logged in to post a comment Login