Prof Nir Eisikovits and Jacob Burley of the University of Massachusetts Boston discuss the ethics of AI in higher education and the technology’s role in ‘cognitive offloading’.

A version of this article was originally published by The Conversation (CC BY-ND 4.0).

Public debate about artificial intelligence in higher education has largely orbited a familiar worry: cheating. Will students use chatbots to write essays? Can instructors tell? Should universities ban the tech? Embrace it?

These concerns are understandable. But focusing so much on cheating misses the larger transformation already underway, one that extends far beyond student misconduct and even the classroom.

Universities are adopting AI across many areas of institutional life. Some uses are largely invisible, like systems that help allocate resources, flag ‘at-risk’ students, optimise course scheduling or automate routine administrative decisions. Other uses are more noticeable. Students use AI tools to summarise and study, instructors use them to build assignments and syllabuses, and researchers use them to write code, scan literature and compress hours of tedious work into minutes.

People may use AI to cheat or skip out on work assignments. But the many uses of AI in higher education, and the changes they portend, raise a much deeper question: As machines become more capable of doing the labour of research and learning, what happens to higher education? What purpose does the university serve?

Over the past eight years, we’ve been studying the moral implications of pervasive engagement with AI as part of a joint research project between the Applied Ethics Center at UMass Boston and the Institute for Ethics and Emerging Technologies. In a recent white paper, we argue that as AI systems become more autonomous, the ethical stakes of AI use in higher ed rise, as do its potential consequences.

As these technologies become better at producing knowledge work – designing classes, writing papers, suggesting experiments and summarising difficult texts – they don’t just make universities more productive. They risk hollowing out the ecosystem of learning and mentorship upon which these institutions are built, and on which they depend.

Nonautonomous AI

Consider three kinds of AI systems and their respective impacts on university life.

AI-powered software is already being used throughout higher education in admissions review, purchasing, academic advising and institutional risk assessment. These are considered ‘nonautonomous’ systems because they automate tasks, but a person is ‘in the loop’ and using these systems as tools.

These technologies can pose a risk to students’ privacy and data security. They also can be biased. And they often lack sufficient transparency to determine the sources of these problems. Who has access to student data? How are ‘risk scores’ generated? How do we prevent systems from reproducing inequities or treating certain students as problems to be managed?

These questions are serious, but they are not conceptually new, at least within the field of computer science. Universities typically have compliance offices, institutional review boards and governance mechanisms that are designed to help address or mitigate these risks, even if they sometimes fall short of these objectives.

Hybrid AI

Hybrid systems encompass a range of tools, including AI-assisted tutoring chatbots, personalised feedback tools and automated writing support. They often rely on generative AI technologies, especially large language models. While human users set the overall goals, the intermediate steps the system takes to meet them are often not specified.

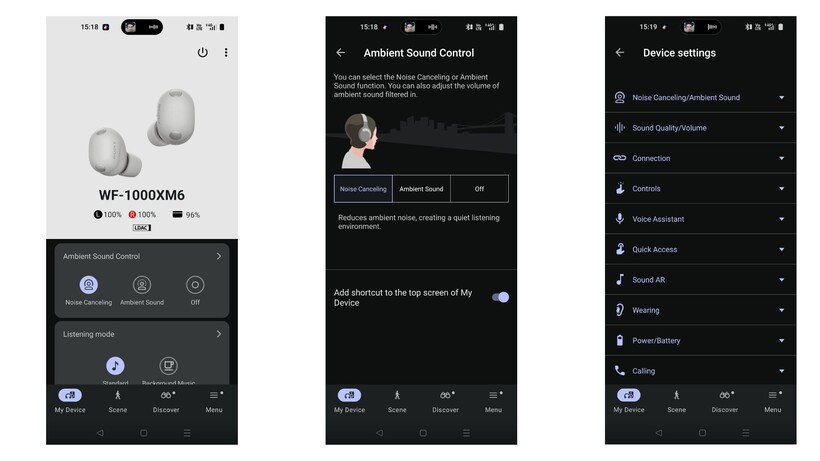

Hybrid systems are increasingly shaping day-to-day academic work. Students use them as writing companions, tutors, brainstorming partners and on-demand explainers. Faculty use them to generate rubrics, draft lectures and design syllabuses. Researchers use them to summarise papers, comment on drafts, design experiments and generate code.

This is where the ‘cheating’ conversation belongs. With students and faculty alike increasingly leaning on technology for help, it is reasonable to wonder what kinds of learning might get lost along the way. But hybrid systems also raise more complex ethical questions.

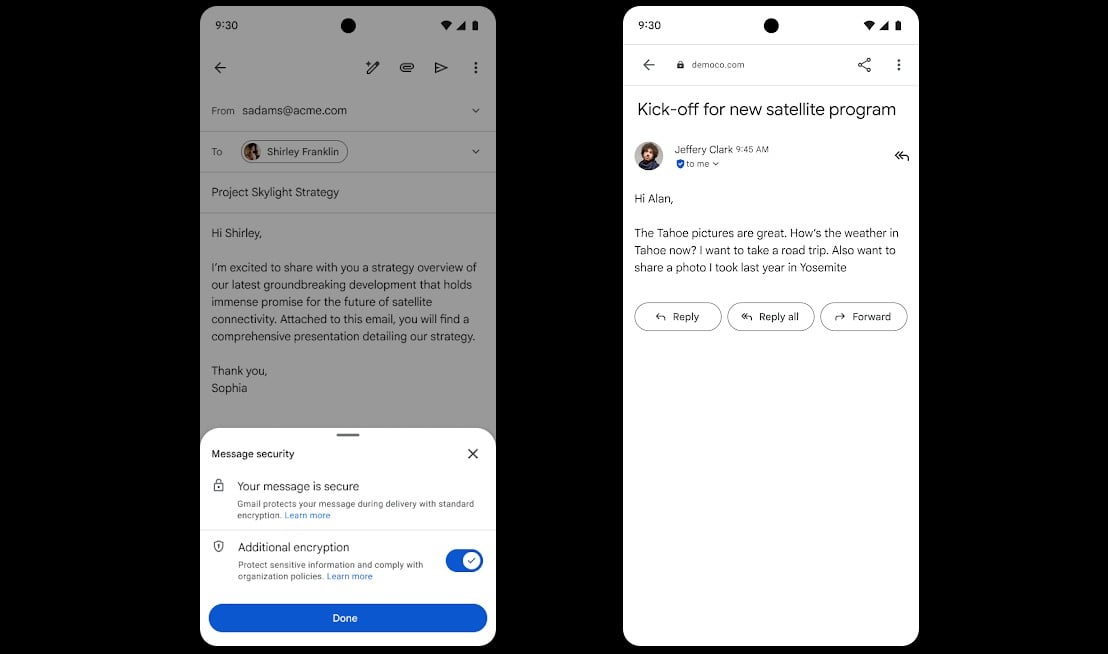

One has to do with transparency. AI chatbots offer natural-language interfaces that make it hard to tell when you’re interacting with a human and when you’re interacting with an automated agent. That can be alienating and distracting for those who interact with them. A student reviewing material for a test should be able to tell if they are talking with their teaching assistant or with a robot.

A student reading feedback on a term paper needs to know whether it was written by their instructor. Anything less than complete transparency in such cases will be alienating to everyone involved and will shift the focus of academic interactions from learning to the means or the technology of learning. University of Pittsburgh researchers have shown that these dynamics bring forth feelings of uncertainty, anxiety and distrust for students. These are problematic outcomes.

A second ethical question relates to accountability and intellectual credit. If an instructor uses AI to draft an assignment and a student uses AI to draft a response, who is doing the evaluating, and what exactly is being evaluated? If feedback is partly machine-generated, who is responsible when it misleads, discourages or embeds hidden assumptions? And when AI contributes substantially to research synthesis or writing, universities will need clearer norms around authorship and responsibility – not only for students, but also for faculty.

Finally, there is the critical question of cognitive offloading. AI can reduce drudgery, and that’s not inherently bad. But it can also shift users away from the parts of learning that build competence, such as generating ideas, struggling through confusion, revising a clumsy draft and learning to spot one’s own mistakes.

Autonomous agents

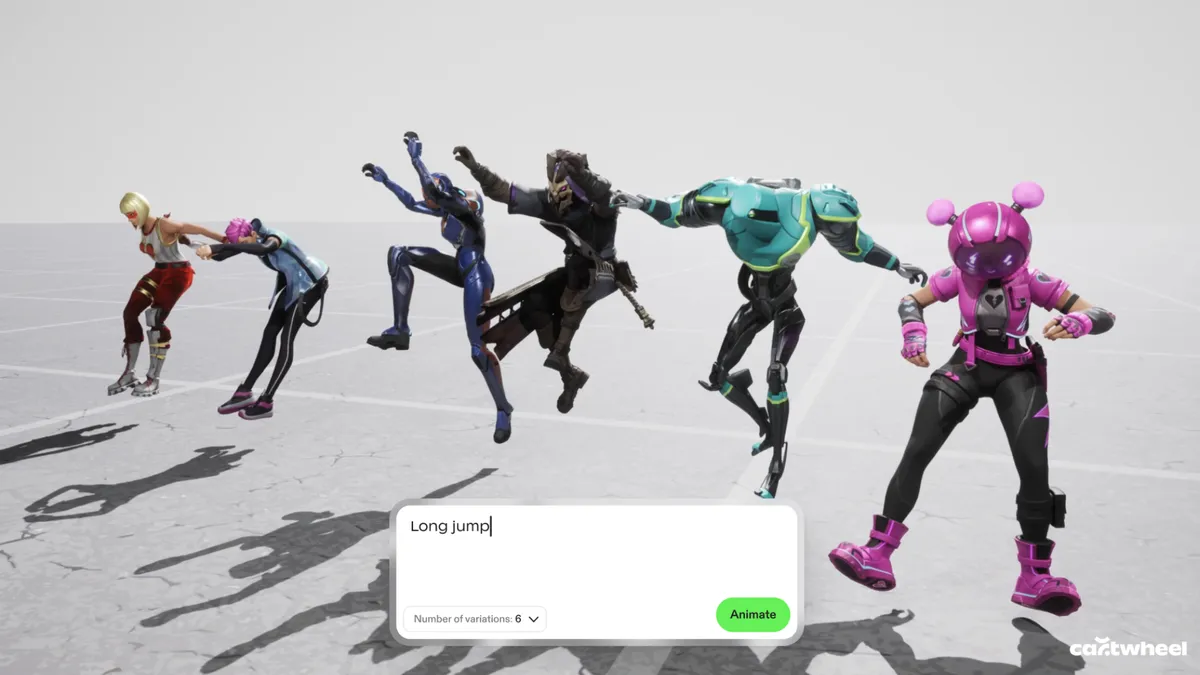

The most consequential changes may come with systems that look less like assistants and more like agents. While truly autonomous technologies remain aspirational, the dream of a researcher ‘in a box’ – an agentic AI system that can perform studies on its own – is becoming increasingly realistic.

Agentic tools are anticipated to ‘free up time’ for work that focuses on more human capacities like empathy and problem-solving. In teaching, this may mean that faculty still teach in the headline sense, while more of the day-to-day labour of instruction is handed off to systems optimised for efficiency and scale. Similarly, in research, the trajectory points toward systems that can increasingly automate the research cycle. In some domains, that already looks like robotic laboratories that run continuously, automate large portions of experimentation and even select new tests based on prior results.

At first glance, this may sound like a welcome boost to productivity. But universities are not information factories; they are systems of practice. They rely on a pipeline of graduate students and early-career academics who learn to teach and research by participating in that same work. If autonomous agents absorb more of the ‘routine’ responsibilities that historically served as on-ramps into academic life, the university may keep producing courses and publications while quietly thinning the opportunity structures that sustain expertise over time.

The same dynamic applies to undergraduates, albeit in a different register. When AI systems can supply explanations, drafts, solutions and study plans on demand, the temptation is to offload the most challenging parts of learning. To the industry that is pushing AI into universities, it may seem as if this type of work is ‘inefficient’ and that students will be better off letting a machine handle it. But it is the very nature of that struggle that builds durable understanding. Cognitive psychology has shown that students grow intellectually through doing the work of drafting, failing, trying again, grappling with confusion and revising weak arguments. This is the work of learning how to learn.

Taken together, these developments suggest that the greatest risk posed by automation in higher education is not simply the replacement of particular tasks by machines, but the erosion of the broader ecosystem of practice that has long sustained teaching, research and learning.

An uncomfortable inflection point

So what purpose do universities serve in a world in which knowledge work is increasingly automated?

One possible answer treats the university primarily as an engine for producing credentials and knowledge. There, the core question is output: Are students graduating with degrees? Are papers and discoveries being generated? If autonomous systems can deliver those outputs more efficiently, then the institution has every reason to adopt them.

But another answer treats the university as something more than an output machine, acknowledging that the value of higher education lies partly in the ecosystem itself. This model assigns intrinsic value to the pipeline of opportunities through which novices become experts, the mentorship structures through which judgement and responsibility are cultivated, and the educational design that encourages productive struggle rather than optimising it away. Here, what matters is not only whether knowledge and degrees are produced, but how they are produced and what kinds of people, capacities and communities are formed in the process. In this version, the university is meant to serve as no less than an ecosystem that reliably forms human expertise and judgement.

In a world where knowledge work itself is increasingly automated, we think universities must ask what higher education owes its students, its early-career scholars and the society it serves. The answers will determine not only how AI is adopted, but also what the modern university becomes.


By Prof Nir Eisikovits and Jacob Burley

Nir Eisikovits is a professor of philosophy and founding director of the Applied Ethics Center at the University of Massachusetts Boston. Eisikovits’s research focuses on the ethics of war and the ethics of technology, and he has written many books and articles on these topics.

Jacob Burley is a junior research fellow at the University of Massachusetts Boston, specialising in the ethics of emerging technologies. His work explores how artificial intelligence reshapes human decision-making, responsibility and knowledge practices, with particular attention to the normative and epistemic challenges posed by increasingly autonomous systems.
