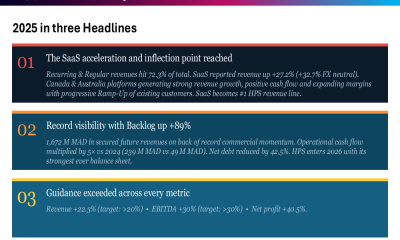

Anthropic pointed its most advanced AI model, Claude Opus 4.6, at production open-source codebases and found more than 500 high-severity vulnerabilities that had survived decades of expert review and millions of hours of fuzzing. Each candidate was vetted through internal and external security review before disclosure.

Fifteen days later, the company productized the capability and launched Claude Code Security.

Security directors responsible for seven-figure vulnerability management stacks should expect a common question from their boards in the next review cycle. VentureBeat anticipates the emails and conversations will start with: “How do we add reasoning-based scanning before attackers get there first?” As Anthropic’s review found, simply pointing an AI model at exposed code can be enough to identify security lapses in production code and, in the hands of malicious actors, to exploit them.

The answer matters more than the headline number, and it is primarily structural: how your tooling and processes divide work between pattern-based scanners and reasoning-based analysis. CodeQL and the tools built on it match code against known patterns.

Claude Code Security, which Anthropic launched February 20 as a limited research preview, reasons about code the way a human security researcher would. It follows how data moves through an application and catches flaws in business logic and access control that no rule set covers.

The board conversation security leaders need to have this week

Five hundred newly discovered zero-days is less a scare statistic than a standing budget justification for rethinking how you fund code security.

The reasoning capability that Claude Code Security represents, along with its inevitable competitors, needs to drive the procurement conversation. Static application security testing (SAST) catches known vulnerability classes. Reasoning-based scanners find what pattern-matching was never designed to detect. Both have a role.

Anthropic published the zero-day research on February 5 and shipped the product on February 20. It runs on the same model with the same capabilities, now available to Enterprise and Team customers.

What Claude does that CodeQL couldn’t

GitHub has offered CodeQL-based scanning through Advanced Security for years, and added Copilot Autofix in August 2024 to generate LLM-suggested fixes for alerts. Security teams rely on it. But the detection boundary is the CodeQL rule set, and everything outside that boundary stays invisible.

Claude Code Security extends that boundary by generating and testing its own hypotheses about how data and control flow through an application, including cases that no existing rule set describes. CodeQL solves the problem it was built to solve: data-flow analysis within predefined queries. It tells you whether tainted input reaches a dangerous function.
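To make the distinction concrete, here is a toy sketch of the question a predefined data-flow query answers: can a value from an untrusted source reach a dangerous sink? It assumes the program has already been reduced to a graph of data-flow edges. The node names (`read_request`, `build_query`, `exec_sql` and so on) are hypothetical, and this is not CodeQL’s query language or API.

```python
from collections import deque


def tainted_paths(edges, sources, sinks):
    """Return one source-to-sink path per reachable sink, found by breadth-first search.

    `edges` maps a node to the nodes its value flows into. Nodes are plain
    strings here; a real analyzer derives them from the program's syntax tree or IR.
    """
    found = []
    for source in sources:
        parent = {source: None}
        queue = deque([source])
        while queue:
            node = queue.popleft()
            if node in sinks:
                # Walk parent links back to the source to rebuild the path.
                path = []
                while node is not None:
                    path.append(node)
                    node = parent[node]
                found.append(list(reversed(path)))
                continue
            for nxt in edges.get(node, []):
                if nxt not in parent:
                    parent[nxt] = node
                    queue.append(nxt)
    return found


# Hypothetical data-flow graph for a tiny web handler.
EDGES = {
    "read_request": ["parse_params"],
    "parse_params": ["build_query", "log_line"],
    "build_query": ["exec_sql"],
    "load_config": ["exec_sql"],
}

print(tainted_paths(EDGES, sources={"read_request"}, sinks={"exec_sql"}))
```

The query finds the flow only because someone listed `exec_sql` as a sink in advance. A flaw that involves no listed source or sink, such as a missing access-control check in business logic, produces no path and therefore no alert.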

CodeQL is not designed to autonomously read a project’s commit history, infer an incomplete patch, trace that logic into another file, and then assemble a working proof-of-concept exploit end to end. Claude did exactly that on GhostScript, OpenSC, and CGIF, each time using a different reasoning strategy.

“The real shift is from pattern-matching to hypothesis generation,” said Merritt Baer, CSO at Enkrypt AI, advisor to Andesite and AppOmni, and former Deputy CISO at AWS, in an exclusive interview with VentureBeat. “That’s a step-function increase in discovery power, and it demands equally strong human and technical controls.”

Three proof points from Anthropic’s published methodology show where pattern-matching ends and hypothesis generation begins.

Commit history analysis across files. GhostScript is a widely deployed utility for processing PostScript and PDF files. Fuzzing turned up nothing, and neither did manual analysis. Then Claude pulled the Git commit history, found a patch that added stack bounds checking for font handling in gstype1.c, and turned that fix into a hypothesis: if the check was needed there, every other call to that function without it was still vulnerable. In gdevpsfx.c, a completely different file, the call to the same function lacked the bounds checking patched elsewhere. Claude built a working proof-of-concept crash. No CodeQL rule describes that bug today. The maintainers have since patched it.
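The reasoning step in that example (“this call site was patched, so look for unpatched siblings”) is often called variant analysis. The sketch below is a deliberately crude, text-level version of it: given the name of a function that a patch guarded with a bounds check, it flags call sites in other files with no guard in the preceding few lines. The function name `process_glyph`, the guard text `stack_limit`, and the file contents are all hypothetical; the article does not name the function involved, and real tools reason over parsed code rather than raw lines.

```python
import re


def unguarded_calls(files, func, guard, window=3):
    """Yield (filename, line_number) for calls to `func` that have no `guard`
    text in the `window` lines before the call. A text heuristic, not a parser."""
    call = re.compile(r"\b" + re.escape(func) + r"\s*\(")
    for name, text in files.items():
        lines = text.splitlines()
        for i, line in enumerate(lines):
            if call.search(line):
                context = lines[max(0, i - window):i]
                if not any(guard in prev for prev in context):
                    yield name, i + 1


# Hypothetical sources: the patched file guards the call, the other does not.
FILES = {
    "patched.c": (
        "if (sp + 2 > stack_limit)\n"
        "    return ERR_RANGE;\n"
        "process_glyph(sp, cs);\n"
    ),
    "other.c": (
        "sp = push_args(sp, cs);\n"
        "process_glyph(sp, cs);\n"
    ),
}

print(list(unguarded_calls(FILES, "process_glyph", "stack_limit")))
```

The heuristic also shows why this is hard to express as a standing rule: it only works once you know which function and which guard matter, and in the GhostScript case that knowledge came from reading the commit history.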

Reasoning about preconditions that fuzzers can’t reach. OpenSC processes smart card data. Standard approaches failed here, too, so Claude searched the repository for function calls that are frequently vulnerable and found a location where multiple strcat operations ran in succession without length checking on the output buffer. Fuzzers rarely reached that code path because too many preconditions stood in the way. Claude reasoned about which code fragments looked interesting, constructed a buffer overflow, and proved the vulnerability.
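The shape of that bug is easy to model. The sketch below is a Python simulation, not OpenSC’s C code: it treats the output buffer as having a fixed capacity. `unchecked_concat` mirrors successive `strcat` calls that never compare the combined length against the buffer size; `checked_concat` shows the usual fix of checking remaining capacity (including C’s terminating NUL) before every append. The buffer size and field values are hypothetical.

```python
def unchecked_concat(capacity, parts):
    """Simulate repeated strcat into a `capacity`-byte buffer.

    Returns how many bytes would be written past the end of the buffer
    (0 means no overflow). The initial 1 counts C's terminating NUL.
    """
    used = 1  # an empty C string is just its NUL terminator
    for part in parts:
        used += len(part)  # strcat appends without checking remaining space
    return max(0, used - capacity)


def checked_concat(capacity, parts):
    """Append each part only if it still fits; raise instead of overflowing."""
    out = bytearray()
    for part in parts:
        needed = len(out) + len(part) + 1
        if needed > capacity:
            raise ValueError(f"{needed} bytes needed, {capacity} available")
        out += part
    return bytes(out)


# Each field looks harmless alone; together they exceed a 16-byte buffer.
fields = [b"serial:", b"0123456789", b"/slot1"]
print(unchecked_concat(16, fields))
```

In this model nothing goes wrong until the combined length crosses the capacity, and in a real program that only happens after whatever preconditions guard the code path are satisfied, which is the combination random inputs rarely produce.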

Algorithm-level edge cases that no coverage metric catches. CGIF is a library for processing GIF files. This vulnerability required understanding how LZW compression builds a dictionary of tokens. CGIF assumed compressed output would always be smaller than uncompressed input, which is almost always true. Claude recognized that if the LZW dictionary filled up and triggered resets, the compressed output could exceed the uncompressed size, overflowing the buffer. Even 100% branch coverage wouldn’t catch this. The flaw demands a particular sequence of operations that exercises an edge case in the compression algorithm itself. Random input generation almost never produces it. Claude did.
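The CGIF edge case can be reproduced in miniature. The sketch below counts the bits a simplified GIF-style LZW encoder would emit; it is not CGIF’s implementation, and the code-width rules are simplified assumptions (codes start 9 bits wide and grow to 12 bits, and the encoder emits a CLEAR code and resets when all 4,096 codes are used). It shows that LZW output is only usually smaller than its input: data with few repeated sequences keeps emitting 9-to-12-bit codes for single bytes, and every reset discards the dictionary that made compression possible.

```python
import random


def lzw_compressed_bits(data: bytes, max_bits: int = 12):
    """Count bits emitted by a simplified GIF-style LZW encoder.

    Assumptions (not CGIF's exact rules): codes 0-255 are literal bytes,
    256 is CLEAR, 257 is END; codes start 9 bits wide and widen up to
    `max_bits`; when every code is used, the encoder emits CLEAR and resets.
    Returns (bits_emitted, number_of_resets).
    """
    def fresh_table():
        return {bytes([i]): i for i in range(256)}

    table, next_code, width = fresh_table(), 258, 9
    bits, resets = width, 0          # GIF streams begin with a CLEAR code
    prefix = b""
    for byte in data:
        candidate = prefix + bytes([byte])
        if candidate in table:       # keep extending the current match
            prefix = candidate
            continue
        bits += width                # emit the code for the longest known prefix
        if next_code < (1 << max_bits):
            table[candidate] = next_code
            next_code += 1
            if next_code == (1 << width) and width < max_bits:
                width += 1           # table outgrew the current code width
        else:
            bits += width            # table full: emit CLEAR...
            table, next_code, width = fresh_table(), 258, 9  # ...and start over
            resets += 1
        prefix = bytes([byte])
    if prefix:
        bits += width                # flush the final match
    bits += width                    # END code
    return bits, resets


repetitive = bytes(4000)                    # long run of zero bytes
noisy = random.Random(0).randbytes(20000)   # high-entropy input, fills the table repeatedly

for label, data in [("repetitive", repetitive), ("noisy", noisy)]:
    out_bits, resets = lzw_compressed_bits(data)
    print(label, len(data) * 8, "bits in ->", out_bits, "bits out,", resets, "resets")
```

The defensive takeaway matches the bug: size the output buffer for the encoder’s worst case, or bounds-check every write, rather than assuming the output will be smaller than the input.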

Baer sees something broader in that progression. “The challenge with reasoning isn’t accuracy, it’s agency,” she told VentureBeat. “Once a system can form hypotheses and pursue them, you’ve shifted from a lookup tool to something that can explore your environment in ways that are harder to predict and constrain.”

How Anthropic validated 500+ findings

Anthropic placed Claude inside a sandboxed virtual machine with standard utilities and vulnerability analysis tools. The red team didn’t provide any specialized instructions, custom harnesses, or task-specific prompting. Just the model and the code.

The red team focused on memory corruption vulnerabilities because they’re the easiest to confirm objectively. Crash monitoring and address sanitizers don’t leave room for debate. Claude filtered its own output, deduplicating and reprioritizing before human researchers touched anything. When the confirmed count kept climbing, Anthropic brought in external security professionals to validate findings and write patches.
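The article doesn’t describe how Claude deduplicated its findings. For readers unfamiliar with that step, here is a minimal sketch of one common crash-triage heuristic, not Anthropic’s method: treat crashes with the same sanitizer error type and the same innermost stack frames as one bug, and keep the most severe report from each group. The field names, severity scale, and frame count are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Crash:
    kind: str        # sanitizer error type, e.g. "heap-buffer-overflow"
    frames: tuple    # stack frames, innermost first
    severity: int    # hypothetical scale: higher is worse


def dedupe(crashes, top_n=3):
    """Group crashes by (kind, top_n innermost frames), keep the worst report
    per group, and return the survivors sorted most severe first."""
    best = {}
    for crash in crashes:
        key = (crash.kind, crash.frames[:top_n])
        if key not in best or crash.severity > best[key].severity:
            best[key] = crash
    return sorted(best.values(), key=lambda c: c.severity, reverse=True)


reports = [
    Crash("heap-buffer-overflow", ("copy_token", "decode_block", "main"), 7),
    Crash("heap-buffer-overflow", ("copy_token", "decode_block", "run_file"), 9),
    Crash("stack-buffer-overflow", ("append_field", "read_card", "main"), 8),
]
for crash in dedupe(reports, top_n=2):
    print(crash.kind, crash.frames[0], crash.severity)
```

The `top_n` choice is the main design trade-off: too few frames merges distinct bugs, too many splits one bug reached from different callers into several reports.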

Every target was an open-source project underpinning enterprise systems and critical infrastructure. Small teams maintain many of them, staffed by volunteers, not security professionals. When a vulnerability sits in one of these projects for a decade, every product that pulls from it inherits the risk.

Anthropic didn’t start with the product launch. The defensive research spans more than a year. The company entered Claude in competitive Capture-the-Flag events where it ranked in the top 3% of PicoCTF globally, solved 19 of 20 challenges in the HackTheBox AI vs Human CTF, and placed 6th out of 9 teams defending live networks against human red team attacks at Western Regional CCDC.

Anthropic also partnered with Pacific Northwest National Laboratory to test Claude against a simulated water treatment plant. PNNL’s researchers estimated that the model completed adversary emulation in three hours. The traditional process takes multiple weeks.

The dual-use question security leaders can’t avoid

The same reasoning that finds a vulnerability can help an attacker exploit one. Frontier Red Team leader Logan Graham acknowledged this directly to Fortune’s Sharon Goldman, telling her the models can now explore codebases autonomously and follow investigative leads faster than a junior security researcher.

Gabby Curtis, Anthropic’s communications lead, told VentureBeat in an exclusive interview the company built Claude Code Security to make defensive capabilities more widely available, “tipping the scales towards defenders.” She was equally direct about the tension: “The same reasoning that helps Claude find and fix a vulnerability could help an attacker exploit it, so we’re being deliberate about how we release this.”

In interviews with more than 40 CISOs across industries, VentureBeat found that formal governance frameworks for reasoning-based scanning tools are the exception, not the norm. The most common explanation was that the area seemed so nascent that many CISOs didn’t expect this capability to arrive so early in 2026.

The question every security director has to answer before deploying this: if I give my team a tool that finds zero-days through reasoning, have I unintentionally expanded my internal threat surface?

“You didn’t weaponize your internal surface, you revealed it,” Baer told VentureBeat. “These tools can be helpful, but they also may surface latent risk faster and more scalably. The same tool that finds zero-days for defense can expose gaps in your threat model. Keep in mind that most intrusions don’t come from zero-days, they come from misconfigurations.”

“In addition to the access and attack path risk, there is IP risk,” she said. “Not just exfiltration, but transformation. Reasoning models can internalize and re-express proprietary insights in ways that blur the line between use and leakage.”

The release is deliberately constrained. Enterprise and Team customers only, through a limited research preview. Open-source maintainers apply for free expedited access. Findings go through multi-stage self-verification before reaching an analyst, with severity ratings and confidence scores attached. Every patch requires human approval.

Anthropic also built detection into the model itself. In a blog post detailing the safeguards, the company described deploying probes that measure activations within the model as it generates responses, with new cyber-specific probes designed to track potential misuse. On the enforcement side, Anthropic is expanding its response capabilities to include real-time intervention, including blocking traffic it detects as malicious.

Graham was direct with Axios: the models are extremely good at finding vulnerabilities, and he expects them to get much better still. VentureBeat asked Anthropic for the false-positive rate before and after self-verification, the number of disclosed vulnerabilities with patches landed versus still in triage, and the specific safeguards that distinguish attacker use from defender use. The lead researcher on the 500-vulnerability project was unavailable, and the company declined to share specific attacker-detection mechanisms to avoid tipping off threat actors.

“Offense and defense are converging in capability,” Baer said. “The differentiator is oversight. If you can’t audit and bound how the tool is used, you’ve created another risk.”

The speed advantage these models offer doesn’t favor defenders by default. It favors whoever adopts it first. Security directors who move early set the terms.

Anthropic isn’t alone. The pattern is repeating.

Security researcher Sean Heelan used OpenAI’s o3 model with no custom tooling and no agentic framework to discover CVE-2025-37899, a previously unknown use-after-free vulnerability in the Linux kernel’s SMB implementation. The model analyzed over 12,000 lines of code and identified a race condition that traditional static analysis tools consistently missed because detecting it requires understanding concurrent thread interactions across connections.
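Why do such bugs slip past tools that analyze one code path at a time? A use-after-free race depends on how two threads’ steps interleave, not on any single path. The toy model below is not the kernel’s code: it enumerates every interleaving of a hypothetical “free the shared object” step on one connection against a “check, then use” sequence on another, and reports the schedules in which the object is used after it was freed.

```python
from itertools import combinations


def interleavings(a, b):
    """Yield every merge of sequences a and b that preserves each one's order."""
    n = len(a) + len(b)
    for a_slots in combinations(range(n), len(a)):
        ai, bi = iter(a), iter(b)
        yield [next(ai) if i in a_slots else next(bi) for i in range(n)]


def use_after_free_schedules(thread_a, thread_b):
    """Replay each interleaving against a shared object and collect the unsafe ones."""
    bad = []
    for schedule in interleavings(thread_a, thread_b):
        alive, checked_ok = True, False
        for _, op in schedule:
            if op == "free":
                alive = False
            elif op == "check":          # thread B checks the object is still valid
                checked_ok = alive
            elif op == "use" and checked_ok and not alive:
                bad.append(schedule)     # B trusted a stale check: use after free
                break
    return bad


# Hypothetical steps: connection 1 tears down a shared session object,
# connection 2 validates the same object and then dereferences it.
conn1 = [("A", "free")]
conn2 = [("B", "check"), ("B", "use")]
print(use_after_free_schedules(conn1, conn2))
```

Only one of the three orderings is unsafe, so most test runs pass; spotting the bug means reasoning about what another connection can do between the check and the use.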

Separately, AI security startup AISLE discovered all 12 zero-day vulnerabilities announced in OpenSSL’s January 2026 security patch, including a rare high-severity finding (CVE-2025-15467, a stack buffer overflow in CMS message parsing that is potentially remotely exploitable without valid key material). AISLE co-founder and chief scientist Stanislav Fort reported that his team’s AI system accounted for 13 of the 14 total OpenSSL CVEs assigned in 2025. OpenSSL is among the most scrutinized cryptographic libraries on the planet. Fuzzers have run against it for years. The AI found what they were not designed to find.

The window is already open

Those 500 vulnerabilities live in open-source projects that enterprise applications depend on. Anthropic is disclosing and patching, but the window between discovery and adoption of those patches is where attackers operate today.

The same model improvements behind Claude Code Security are available to anyone with API access.

If your team is evaluating these capabilities, the limited research preview is the right place to start, with clearly defined data handling rules, audit logging, and success criteria agreed up front.
