This story was originally published in The Highlight, Vox’s member-exclusive magazine.

Tech

ChatGPT sucks at being a real robot

There’s something sad about seeing a humanoid robot lying on the floor. Without any electricity, these bipedal machines can’t stand up, so if they’re powered down and not hanging from a winch, they’re sprawled out on the floor, staring up at you, helpless.

That’s how I met Atlas a couple of months ago. I’d seen the robot on YouTube a hundred times, running obstacle courses and doing backflips. Then I saw it on the floor of a lab at MIT. It was just lying there. The contrast is jarring, if only because humanoid robots have become so much more capable and ubiquitous since Atlas got famous on YouTube.

Across town at Boston Dynamics, the company that makes Atlas, a newer version of the humanoid robot had learned not only to walk but also to drop things and pick them back up instinctively, thanks to a single artificial intelligence model that controls its movement. Some of these next-generation Atlas robots will soon be working on factory floors — and may venture further. Thanks in part to AI, general-purpose humanoids of all types seem inevitable.

“In Shenzhen, you can already see them walking down the street every once in a while,” Russ Tedrake told me back at MIT. “You’ll start seeing them in your life in places that are probably dull, dirty, and dangerous.”

Tedrake runs the Robot Locomotion Group at the MIT Computer Science and Artificial Intelligence Lab, also known as CSAIL, and he co-led the project that produced the latest AI-powered Atlas. Walking was once the hard thing for robots to learn, but not anymore. Tedrake’s group has shifted focus from teaching robots how to move to helping them understand and interact with the world through software, namely AI. They’re not the only ones.

In the United States, venture capital investment in robotics startups grew from $42.6 million in 2020 to nearly $2.8 billion in 2025. Morgan Stanley predicts the cumulative global sales of humanoids will reach 900,000 in 2030 and explode to more than 1 billion by 2050, the vast majority of which will be for industrial and commercial purposes. Some believe these robots will ultimately replace human labor, ushering in a new global economic order. After all, we designed the world for humans, so humanoids should be able to navigate it with ease and do what we do.

They won’t all be factory workers, if certain startups get their way. A company called 1X Technologies has started taking preorders for its $20,000 home robot, Neo, which wears clothes, does dishes, and fetches snacks from the fridge. Figure AI introduced its Figure 03 humanoid robot, which also does chores. Sunday Robotics said it would have fully autonomous robots making coffee in beta testers’ homes next year.

So far, we’ve seen a lot of demos of these AI-powered home robots and promises from the industrial humanoid makers, but not much in the way of a new global economic order. Demos of home robots, like the 1X Neo, have relied on human operators, making these automatons, in practice, more like puppets. Reports suggest that Figure AI and Apptronik have only one or two robots on manufacturing floors at any given time, usually doing menial tasks. That’s a proof of concept, not a threat to the human workforce.

You can think of all these robots as the physical embodiment of AI, or just embodied AI. This is what happens when you put AI into a physical system, enabling it to interact with the real world. Whether that’s in the form of a humanoid robot or an autonomous car, it’s the next frontier for hardware and, arguably, technological progress writ large.

Embodied AI is already transforming how farming works, how we move goods around the world, and what’s possible in surgical theaters. We might be just one or two breakthroughs away from walking, talking, thinking machines that can work alongside us, unlocking a whole new realm of possibilities. “Might” is the key word there.

“If we’re looking for robots that will work side by side with us in the next couple of years, I don’t think it will be humanoids,” Daniela Rus, director of CSAIL, told me not long after I left Tedrake’s lab. “Humanoids are really complicated, and we have to make them better. And in order to make them better, we have to make AI better.”

So to understand the gap between the hype around humanoids and the technology’s real promise, you have to know what AI can and can’t do for robots. You also, unfortunately, have to try to understand what Elon Musk has been up to at Tesla for the past five years.

It’s still embarrassing to watch the part of the Tesla AI Day presentation in 2021 when a human person dressed in a robot costume appears on stage dancing to dubstep music. Musk eventually stops the dance and announces that Tesla, “a robotics company,” will have a prototype of a general-purpose humanoid robot, now known as Optimus, the following year. Not many people believed him, and now, years later, Tesla still has not delivered a fully functional Optimus. Never afraid to make a prediction, Musk told audiences at Davos in January 2026 that Tesla’s robot will go on sale next year.

“People took him seriously because he had a great track record,” said Ken Goldberg, a roboticist at the University of California-Berkeley and co-founder of Ambi Robotics. “I think people were inspired by that.”

You can imagine why people got excited, though. With the Optimus robot, Elon Musk promised to eliminate poverty and offer shareholders “infinite” profits. He said engineers could effectively translate Tesla’s self-driving car technology into software that could power autonomous robots that could work in factories or help around the house. It’s a version of the same vision humanoid robotics startups are chasing today, albeit colored by several years of Musk’s unfulfilled promises.

We now know that Optimus struggles with a lot of the same problems as other attempts at general-purpose humanoids. It often requires humans to remotely operate it, and it struggles with dexterity and precision. The 1X Neo, likewise, needed a human’s help to open a refrigerator door and collapsed onto the floor in a demo for a New York Times journalist last year. The hardware seems capable enough. Optimus can dance, and Neo can fold clothes, albeit a bit clumsily. But they don’t yet understand physics. They don’t know how to plan or to improvise. They certainly can’t think.

“People in general get too excited by the idea of the robot and not the reality,” said Rodney Brooks, co-founder of iRobot, makers of the Roomba robot vacuum. Brooks, a former CSAIL director, has written extensively and skeptically about humanoid robots.

Clearly, there’s a gap between what’s happening in research labs and what’s being deployed in the real world. Some of the optimism around humanoids is based on good science, though. In 2023, Tedrake coauthored a landmark paper with Tony Zhao, co-founder and CEO of Sunday Robotics, that outlined a novel method for training robots to move like humans. It involves humans performing the task wearing sensor-laden gloves that send data to an AI model that enables the robot to figure out how to do those tasks. This complemented work Tedrake was doing at the Toyota Research Institute that used the same kinds of methods AI models use to generate images to generate robot behavior. You’ve heard of large language models, or LLMs. Tedrake calls these large behavior models, or LBMs.

It makes sense. By watching humans do things over and over, these AI models collect enough data to generate new behaviors that can adapt to changing environments. Folding laundry, for instance, is a popular example of a task that requires nimble hands and better brains. If a robot picks up a shirt and the fabric flops down in an unexpected way, it needs to figure out how to handle that uncertainty. You can’t simply program it to know what to do when there are so many variables. You can, however, teach it to learn.

That’s what makes the lemonade demo so impressive. Some of Rus’s students at CSAIL have been teaching a humanoid robot named Ruby to make lemonade — something that you might want a robot butler to do one day — by wearing sensors that measure not only the movements but the forces involved. It’s a combination of delicate movements, like pouring sugar, and strong ones, like lifting a jug of water. I watched Ruby do this without spilling a drop. It hadn’t been programmed to make lemonade. It had learned.

The real challenge is getting this method to scale. One way is simply to brute-force it: Employ thousands of humans to perform basic tasks, like folding laundry, to build foundation models for the physical world. Foundation models are large AI models trained on massive datasets that can be adapted to specific tasks like generating text, images, or in this case, robot behavior. You can also get humans to teleoperate countless robots in order to train these models. These so-called arm farms already exist in warehouses in Eastern Europe, and they’re about as dystopian as they sound.

Another option is YouTube. There are a lot of how-to videos on YouTube, and some researchers think that feeding them all into an AI model will provide enough data to give robots a better understanding of how the world works. These two-dimensional videos are obviously limited, if only because they can’t tell us anything about the physics of the objects in the frame. The same goes for synthetic data, which involves a computer rapidly and repeatedly carrying out a task in a simulation. The upside here, of course, is more data, more quickly. The downside is that the data isn’t as good, especially when it comes to physical forces like friction and torque, which also happen to be the most important for robot dexterity.

“Physics is a tough task to master,” Brooks said. “And if you have a robot, which is not good with physics, in the presence of people, it doesn’t end well.”

That’s not even taking into account the many other bottlenecks facing robotics right now. While components have gotten cheaper — you can buy a humanoid robot right now for less than $6,000, compared to the $75,000 it cost to buy Boston Dynamics’ small, four-legged robot Spot five years ago — batteries represent a major bottleneck for robotics, limiting the run time of most humanoids to two to four hours.

Then you have the problem with processing power. The AI models that can make humanoids more human require massive amounts of compute. If that’s done in the cloud, you’ve got latency issues, preventing the robot from reacting in real time. And inevitably, to tie a lot of other constraints into a tidy bundle, the AI is just not good enough.

If you trace the history of AI and the history of robotics back to their origins, you’ll see a braided line. The two technologies have intersected time and again since the birth of the term “artificial intelligence” at a Dartmouth research workshop in the summer of 1956. Then, half a century later, things started heating up on the AI front, when advances in machine learning and powerful processors called GPUs (the things that have now made Nvidia a $5 trillion company) ushered in the era of deep learning. I’m about to throw a few technical terms at you, so bear with me.

Machine learning is a type of AI. It’s when algorithms look for patterns in data and make decisions without being explicitly programmed to do so. Deep learning takes it to another level with the help of a machine learning model called a neural network. You can think of a neural network, a concept that’s even older than AI, as a system loosely modeled on the human brain that’s made up of lots of artificial neurons that do math problems. Deep learning uses multilayered neural networks to learn from huge data sets and to make decisions and predictions. Among other accomplishments, neural networks have revolutionized computer vision to improve perception in robots.
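
For a sense of what “artificial neurons that do math problems” looks like in practice, here is a minimal sketch in Python. The function names, weights, and inputs are made up for illustration and do not come from any real model; an actual network has vastly more neurons and learns its weights from data rather than having them typed in.

```python
import math

def neuron(inputs, weights, bias):
    """One artificial neuron: a weighted sum squashed by a sigmoid into (0, 1)."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-total))

def layer(inputs, weight_rows, biases):
    """A layer is several neurons that all read the same inputs."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]

# Hypothetical numbers, chosen only for illustration.
hidden = layer([0.5, -1.0], [[1.0, 2.0], [-1.0, 0.5]], [0.0, 0.1])
output = neuron(hidden, [1.5, -2.0], 0.2)
print(hidden, output)
```

Stacking layers like this, so that one layer’s outputs feed the next, is the “multilayered” part of deep learning.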

There are different architectures for neural networks that can do different things, like recognize images or generate text. One is called a transformer. The “GPT” in ChatGPT stands for “generative pre-trained transformer,” which is a type of large language model, or LLM, that powers many generative AI chatbots. While you’d think LLMs would be good at making robots think, they really aren’t. Then there are diffusion models, which are often used for image generation and, more recently, making robots appear to think. The framework that Tedrake and his coauthors described in their 2023 research into using generative AI to train robots is based on diffusion.

Three things stand out in this very limited explanation of how AI and robots get along. One is that deep learning requires a massive amount of processing power and, as a result, a huge amount of energy. Another is that the latest AI models work with the help of stacks of neural networks whose millions or even billions of artificial neurons do their magic in mysterious and usually inefficient ways. The third is that, while LLMs are good at language, and diffusion models are good at images, we don’t have any models that are good enough at physics to send a 200-pound robot marching into a crowd to shake hands and make friends.

As Josh Tenenbaum, a computational cognitive scientist at MIT, explained to me recently, an LLM can make it easier to talk to a robot, but it’s hardly capable of being the robot’s brains. “You could imagine a system where there’s a language model, there’s a chatbot, you want to talk to your robot,” Tenenbaum said. “Under the hood, what’s actually going on should be something much more like our own brains and minds or other animals, not just humans in terms of how it’s embodied and deals with the world.”

So we need better AI for robots, if not in general. Scientists at CSAIL have been working on a couple of physics-inspired and brain-like technologies they’re calling liquid neural networks and linear optical networks. They both fall into the category of state-space models, which are emerging as an alternative or rival to transformer-based models. Whereas transformer-based models look at all available data to identify what’s important, state-space models are much more efficient, as they maintain a summary of the world that gets updated as new data comes in. It’s closer to how the human brain works.
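
The efficiency difference can be sketched with a toy example. This is a simplified illustration, not the CSAIL models: the `decay` and `gain` values are hypothetical, and real state-space models use learned vectors and matrices rather than a single number. The first function keeps one running summary that it updates with each new reading; the second mimics the transformer-style approach of keeping everything and looking back over all of it at every step.

```python
def state_space_step(state, x, decay=0.9, gain=0.1):
    """Fold one new observation into a fixed-size running summary."""
    return decay * state + gain * x

def run_state_space(observations):
    state = 0.0
    for x in observations:
        state = state_space_step(state, x)
    return state  # memory used: one number, however long the sequence is

def run_full_history(observations):
    """Transformer-style contrast: store every observation and re-read all of them."""
    history = []
    lookbacks = 0
    for x in observations:
        history.append(x)
        lookbacks += len(history)  # each step looks back over everything so far
    return len(history), lookbacks

readings = [1.0] * 1000
summary = run_state_space(readings)
stored, lookbacks = run_full_history(readings)
print(summary, stored, lookbacks)
```

For 1,000 readings, the summary approach holds a single value while the full-history approach stores all 1,000 and performs about half a million look-backs, which is the kind of gap that matters on a battery-powered robot.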

To be perfectly honest, I’d never heard of state-space models until Rus, the CSAIL director, told me about them when we chatted in her office a few weeks ago. She pulled up a video to illustrate the difference between a liquid neural network and a traditional model used for self-driving cars. In it, you can see how the traditional model focuses its attention on everything but the road, while the newer state-space model only looks at the road. If I’m riding in that car, by the way, I want the AI that’s watching the road.

“And instead of a hundred thousand neurons,” Rus said, referring to the traditional neural network, “I have only 19.” And here’s where it gets really compelling. She added, “And because I have only 19, I can actually figure out how these neurons fire and what the correlation is between these neurons and the action of the car.”

You may have already heard that we don’t really know how AI works. If newer approaches bring us a little bit closer to comprehension, it certainly seems worth taking them seriously, especially if we’re talking about the kinds of brains we’ll put in humanoid robots.

When a humanoid robot loses power, when electricity stops flowing to the motors that keep it upright, it collapses into a heap of heavy metal parts. This can happen for any number of reasons. Maybe it’s a bug in the code or a lost wifi connection. And when they’re on, humanoids are full of energy as their joints fight gravity or stand ready to bend. If you imagine being on the wrong side of that incredible mechanical power, it’s easy to doubt this technology.

Some companies that make humanoid robots also admit that they’re not very useful yet. They’re too unreliable to help out around the house, and they’re not efficient enough to be helpful in factories. Furthermore, most of the money being spent developing robots is being spent on making them safe around people. When it comes to deploying robots that can contribute to productivity, that can participate in the economy, it makes a lot more sense to make them highly specialized and not human-shaped.

The embodied AI that will transform the world in the near future is what’s already out there. In fact, it’s what’s been out there for years. Early self-driving cars date back to the 1980s, when Ernst Dickmanns put a vision-guided Mercedes van on the streets of Munich. Researchers from Carnegie Mellon University got a minivan to drive itself across the United States in 1995. Now, decades later, Waymo is operating its robotaxi service in a half-dozen American cities, and the company says its AI-powered cars actually make the roads safer for everyone.

Then there are the Roombas of the world, the robots that are designed to do one thing and keep getting better at it. You can include the vast array of increasingly intelligent manufacturing and warehouse robots in this camp too. By 2027, the year Elon Musk is on track to miss his deadline to start selling Optimus humanoids to the public, Amazon will reportedly replace more than 600,000 jobs with robots. These would probably be boring robots, but they’re safe and effective.

Science fiction promised us humanoids, however. Pick an era in human history, in fact, and someone was dreaming about an automaton that could move like us, talk like us, and do all our dirty work. Replicants, androids, the Mechanical Turk — all these humanoid fantasies imagined an intelligent synthetic self.

Reality gave us package-toting platforms on wheels roving around Amazon warehouses and the sensor-heavy self-driving cars clogging San Francisco streets. Even the skeptics think that, in time, humanoids will be possible. Probably not in five years, but maybe in 50, we’ll get artificially intelligent companions who can walk alongside us. They’ll take baby steps.

“Good robots are going to be clumsy at first, and you have to find applications where it’s okay for the robot to make mistakes and then recover,” Tedrake said. “Let’s not do open-heart surgery right away with these things. This is more like folding laundry.”

Tech

ChatGPT rolls out new $100 Pro subscription to challenge Claude

OpenAI has rolled out a new Pro subscription that costs $100, in line with Claude’s pricing: Anthropic also offers a $100 subscription in addition to its $200 Max monthly plan.

Until now, OpenAI has offered three subscription tiers.

First is Go, which costs approximately $8; second is Plus, at $20; and the final tier is $200, a jump of $180.

Anthropic, by contrast, does not offer an $8 subscription, but it has a $100 subscription that sits between its cheapest $20 plan and its expensive $200 plan. That works for the company because it caters to the coding audience.

OpenAI has realized that it needs to go after coders and enterprises, similar to Anthropic’s strategy.

The company’s answer is a $100 ChatGPT Pro tier, which is designed for people who rely on AI to get high-stakes, complex work done.

After this change, OpenAI’s offering looks like the following:

- Plus $20 – For lighter use. Try advanced capabilities like Codex and Deep Research for select projects throughout the week.

- Pro $100 – Built for real projects. For those who use advanced tools and models throughout the week, with 5x higher limits than Plus (and 10x Codex usage vs. Plus for a limited time).

- Pro $200 – For heavy lifting. Run your most demanding workflows continuously, even across parallel projects, with 20x higher limits than Plus.

All Pro plans include access to advanced features, including:

- Pro models

- Codex

- Deep research

- Image creation

- Memory

- File uploads

OpenAI says the Pro plan also includes unlimited access to GPT-5 and legacy models, but it’s not truly unlimited because the usual “Terms of Use” policies apply, including restrictions on account sharing.

Tech

Mythos autonomously exploited vulnerabilities that survived 27 years of human review. Security teams need a new detection playbook

A 27-year-old bug sat inside OpenBSD’s TCP stack while auditors reviewed the code, fuzzers ran against it, and the operating system earned its reputation as one of the most security-hardened platforms on earth. Two packets could crash any server running it. Finding that bug cost a single Anthropic discovery campaign approximately $20,000. The specific model run that surfaced the flaw cost under $50.

Anthropic’s Claude Mythos Preview found it. Autonomously. No human guided the discovery after the initial prompt.

The capability jump is not incremental

On Firefox 147 exploit writing, Mythos succeeded 181 times versus 2 for Claude Opus 4.6. A 90x improvement in a single generation. SWE-bench Pro: 77.8% versus 53.4%. CyberGym vulnerability reproduction: 83.1% versus 66.6%. Mythos saturated Anthropic’s Cybench CTF at 100%, forcing the red team to shift to real-world zero-day discovery as the only meaningful evaluation left. Then it surfaced thousands of zero-day vulnerabilities across every major operating system and every major browser, many one to two decades old. Anthropic engineers with no formal security training asked Mythos to find remote code execution vulnerabilities overnight and woke up to a complete, working exploit by morning, according to Anthropic’s red team assessment.

Anthropic assembled Project Glasswing, a 12-partner defensive coalition including CrowdStrike, Cisco, Palo Alto Networks, Microsoft, AWS, Apple, and the Linux Foundation, backed by $100 million in usage credits and $4 million in open-source grants. Over 40 additional organizations that build or maintain critical software infrastructure also received access. The partners have been running Mythos against their own infrastructure for weeks. Anthropic committed to a public findings report “within 90 days,” landing in early July 2026.

Security directors got the announcement. They didn’t get the playbook.

“I’ve been in this industry for 27 years,” Cisco SVP and Chief Security and Trust Officer Anthony Grieco told VentureBeat in an exclusive interview at RSAC 2026. “I have never been more optimistic for what we can do to change security because of the velocity. It’s also a little bit terrifying because we’re moving so quickly. It’s also terrifying because our adversaries have this capability as well, and so frankly, we must move this quickly.”

Security directors saw this story told fifteen different ways this week, including VentureBeat’s exclusive interview with Anthropic’s Newton Cheng. As one widely shared X post summarizing the Mythos findings noted, the model cracked cryptography libraries, broke into a production virtual machine monitor, and gave engineers with zero security training working exploits by morning. What that coverage left unanswered: Where does the detection ceiling sit in the methods they already run, and what should they change before July?

Seven vulnerability classes that show where every detection method hits its ceiling

- OpenBSD TCP SACK, 27 years old. Two crafted packets crash any server. SAST, fuzzers, and auditors missed a logic flaw requiring semantic reasoning about how TCP options interact under adversarial conditions. Campaign cost ~$20,000. Anthropic notes the $50 per-run figure reflects hindsight.

- FFmpeg H.264 codec, 16 years old. Fuzzers exercised the vulnerable code path 5 million times without triggering the flaw, according to Anthropic. Mythos caught it by reasoning about code semantics. Campaign cost ~$10,000.

- FreeBSD NFS remote code execution, CVE-2026-4747, 17 years old. Unauthenticated root from the internet, per Anthropic’s assessment and independent reproduction. Mythos built a 20-gadget ROP chain split across multiple packets. Fully autonomous.

- Linux kernel local privilege escalation. Mythos chained two to four low-severity vulnerabilities into full local privilege escalation via race conditions and KASLR bypasses. CSA’s Rich Mogull noted Mythos failed at remote kernel exploitation but succeeded locally. No automated tool chains vulnerabilities today.

- Browser zero-days across every major browser. Thousands identified. Some required human-model collaboration. In one case, Mythos chained four vulnerabilities into a JIT heap spray, escaping both the renderer and the OS sandboxes. Firefox 147: 181 working exploits versus two for Opus 4.6.

- Cryptography library vulnerabilities (TLS, AES-GCM, SSH). Implementation flaws enabling certificate forgery or decryption of encrypted communications, per Anthropic’s red team blog and Help Net Security. A critical Botan library certificate bypass was disclosed the same day as the Glasswing announcement. Bugs in the code that implements the math. Not attacks on the math itself.

- Virtual machine monitor guest-to-host escape. Guest-to-host memory corruption in a production VMM, the technology keeping cloud workloads from seeing each other’s data. Cloud security architectures assume workload isolation holds. This finding breaks that assumption.

Nicholas Carlini, in Anthropic’s launch briefing: “I’ve found more bugs in the last couple of weeks than I found in the rest of my life combined.”

VentureBeat’s prescriptive matrix

| Vulnerability Class | Why Current Methods Miss It | What Mythos Does | Security Director Action |
| --- | --- | --- | --- |
| OS kernel logic (OpenBSD 27yr, Linux 2-4 chain) | SAST lacks semantic reasoning. Fuzzers miss logic flaws. Pen testers time-boxed. Bounties scope-exclude kernel. | Chains 2-4 low-severity findings into local priv-esc. ~$20K campaign. | Add AI-assisted kernel review to pen test RFPs. Expand bounty scope. Request Glasswing findings from OS vendors before July. Re-score clustered findings by chainability. |
| Media codec (FFmpeg 16yr H.264) | SAST unflagged. Fuzzers hit path 5M times, never triggered. | Reasons about semantics beyond brute-force. ~$10K campaign. | Inventory FFmpeg, libwebp, ImageMagick, libpng. Stop treating fuzz coverage as security proxy. Track Glasswing codec CVEs from July. |
| Network stack RCE (FreeBSD 17yr, CVE-2026-4747) | DAST limited at protocol depth. Pen tests skip NFS. | Full autonomous chain to unauthenticated root. 20-gadget ROP chain. | Patch CVE-2026-4747 now. Inventory NFS/SMB/RPC services. Add protocol fuzzing to 2026 cycle. |
| Multi-vuln chaining (2-4 sequenced, local) | No tool chains. Pen testers hours-limited. CVSS scores in isolation. | Autonomous local chaining via race conditions + KASLR bypass. | Require AI-assisted chaining in pen test methodology. Build chainability scoring. Budget AI red teams for 2026. |
| Browser zero-days (thousands, 181 Firefox exploits) | Bounties + continuous fuzzing missed thousands. Some required human-model collaboration. | 90x over Opus 4.6. Chained 4 vulns into JIT heap spray escaping renderer + OS sandbox. | Shorten patch SLA to 72hr critical. Pre-stage pipeline for July cycle. Pressure vendors for Glasswing timelines. |
| Crypto libraries (TLS, AES-GCM, SSH, Botan bypass) | SAST limited on crypto logic. Pen testers rarely audit crypto depth. Formal verification not standard. | Found cert forgery + decryption flaws in battle-tested libraries. | Audit all crypto library versions now. Track Glasswing crypto CVEs from July. Accelerate PQC migration. |
| VMM / hypervisor (guest-to-host memory corruption) | Cloud security assumes isolation. Few pen tests target hypervisor. Bounties rarely scope VMM. | Guest-to-host escape in production VMM. | Inventory hypervisor/VMM versions. Request Glasswing findings from cloud providers. Reassess multi-tenant isolation assumptions. |

Attackers are faster. Defenders are patching once a year.

The CrowdStrike 2026 Global Threat Report documents a 29-minute average eCrime breakout time, 65% faster than 2024, with an 89% year-over-year surge in AI-augmented attacks. CrowdStrike CTO Elia Zaitsev put the operational reality plainly in an exclusive interview with VentureBeat. “Adversaries leveraging agentic AI can perform those attacks at such a great speed that a traditional human process of look at alert, triage, investigate for 15 to 20 minutes, take an action an hour, a day, a week later, it’s insufficient,” Zaitsev said. A $20,000 Mythos discovery campaign that runs in hours replaces months of nation-state research effort.

CrowdStrike CEO George Kurtz reinforced that timeline pressure on LinkedIn the same day as the Glasswing announcement. “AI is creating the largest security demand driver since enterprises moved to the cloud,” Kurtz wrote. The regulatory clock compounds the operational one. The EU AI Act’s next enforcement phase takes effect August 2, 2026, imposing automated audit trails, cybersecurity requirements for every high-risk AI system, incident reporting obligations, and penalties up to 3% of global revenue. Security directors face a two-wave sequence: July’s Glasswing disclosure cycle, then August’s compliance deadline.

Mike Riemer, Field CISO at Ivanti and a 25-year US Air Force veteran who works closely with federal cybersecurity agencies, told VentureBeat what he is hearing from the government. “Threat actors are reverse engineering patches, and the speed at which they’re doing it has been enhanced greatly by AI,” Riemer said. “They’re able to reverse engineer a patch within 72 hours. So if I release a patch and a customer doesn’t patch within 72 hours of that release, they’re open to exploit.” Riemer was blunt about where that leaves the industry. “They are so far in front of us as defenders,” he said.

Grieco confirmed the other side of that collision at RSAC 2026. “If you talk to an operational team and many of our customers, they’re only patching once a year,” Grieco told VentureBeat. “And frankly, even in the best of circumstances, that is not fast enough.”

CSA’s Mogull makes the structural case that defenders hold the long-term advantage: fix a vulnerability once and every deployment benefits. But the transition period, when attackers reverse-engineer patches in 72 hours and defenders patch once a year, favors offense.

Mythos is not the only model finding these bugs. Researchers at AISLE, an AI cybersecurity startup, tested Anthropic’s showcase vulnerabilities on small, open-weights models and found that eight out of eight detected the FreeBSD exploit. AISLE says one model had only 3.6 billion parameters and costs 11 cents per million tokens, and that a 5.1-billion-parameter open model recovered the core analysis chain of the 27-year-old OpenBSD bug. AISLE’s conclusion: “The moat in AI cybersecurity is the system, not the model.” That makes the detection ceiling a structural problem, not a Mythos-specific one. Cheap models find the same bugs. The July timeline gets shorter, not longer.

Over 99% of the vulnerabilities Mythos has identified have not yet been patched, per Anthropic’s red team blog. The public Glasswing report lands in early July 2026. It will trigger a high-volume patch cycle across operating systems, browsers, cryptography libraries, and major infrastructure software. Security directors who have not expanded their patch pipeline, re-scoped their bug bounty programs, and built chainability scoring by then will absorb that wave cold. July is not a disclosure event. It is a patch tsunami.

What to tell the board

Every security director tells the board “we have scanned everything.” Merritt Baer, CSO at Enkrypt AI and former Deputy CISO at AWS, told VentureBeat that the statement does not survive Mythos without a qualifier.

“What security leaders actually mean is: we have exhaustively scanned for what our tools know how to see,” Baer said in an exclusive interview with VentureBeat. “That’s a very different claim.”

Baer proposed reframing residual risk for boards around three tiers: known-knowns (vulnerability classes your stack reliably detects), known-unknowns (classes you know exist but your tools only partially cover, like stateful logic flaws and auth boundary confusion), and unknown-unknowns (vulnerabilities that emerge from composition, how safe components interact in unsafe ways). “This is where Mythos is landing,” Baer said.

The board-level statement Baer recommends: “We have high confidence in detecting discrete, known vulnerability classes. Our residual risk is concentrated in cross-function, multi-step, and compositional flaws that evade single-point scanners. We are actively investing in capabilities that raise that detection ceiling.”

On chainability, Baer was equally direct. “Chainability has to become a first-class scoring dimension,” she said. “CVSS was built to score atomic vulnerabilities. Mythos is exposing that risk is increasingly graph-shaped, not point-in-time.” Baer outlined three shifts security programs need to make: from severity scoring to exploitability pathways, from vulnerability lists to vulnerability graphs that model relationships across identity, data flow, and permissions, and from remediation SLAs to path disruption, where fixing any node that breaks the chain gets priority over fixing the highest individual CVSS.
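Baer's "path disruption" idea can be illustrated with a small sketch. The graph, node names, and CVSS scores below are entirely hypothetical and are not drawn from Mythos, Glasswing, or any real scanner output. The sketch only shows that the fix which breaks the most attack paths need not be the finding with the highest individual score.

```python
# Hypothetical attack graph: an edge A -> B means "exploiting A lets an attacker reach B".
# Node names and CVSS scores are illustrative assumptions, not real findings.
GRAPH = {
    "internet": ["weak_session_check", "verbose_error_page"],
    "weak_session_check": ["internal_api"],
    "verbose_error_page": ["internal_api"],
    "internal_api": ["over_permissive_role"],
    "over_permissive_role": ["customer_db"],
    "customer_db": [],
}
CVSS = {
    "weak_session_check": 6.5,
    "verbose_error_page": 4.3,
    "internal_api": 5.0,
    "over_permissive_role": 5.4,
}


def attack_paths(graph, source, target, removed=frozenset()):
    """Enumerate simple paths from source to target, skipping removed (fixed) nodes."""
    paths = []
    stack = [(source, [source])]
    while stack:
        node, path = stack.pop()
        if node == target:
            paths.append(path)
            continue
        for nxt in graph.get(node, []):
            if nxt not in removed and nxt not in path:
                stack.append((nxt, path + [nxt]))
    return paths


def paths_broken_by_fix(graph, source, target, scores):
    """For each fixable node, count how many attack paths disappear once it is fixed."""
    baseline = len(attack_paths(graph, source, target))
    return {
        node: baseline - len(attack_paths(graph, source, target, removed={node}))
        for node in scores
    }


broken = paths_broken_by_fix(GRAPH, "internet", "customer_db", CVSS)
by_severity = max(CVSS, key=CVSS.get)
# Rank by paths broken first, using the CVSS score only as a tie-breaker.
by_disruption = max(broken, key=lambda n: (broken[n], CVSS[n]))

print("Highest individual CVSS:", by_severity)
print("Fix that breaks the most paths:", by_disruption)
print("Paths broken per fix:", broken)
```

In this toy graph the highest-scoring finding sits on only one of the two routes to the database, while either of the lower-scoring choke points cuts both.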

“Mythos isn’t just finding missed bugs,” Baer said. “It’s invalidating the assumption that vulnerabilities are independent. Security programs that don’t adapt, from coverage thinking to interaction thinking, will keep reporting green dashboards while sitting on red attack paths.”

VentureBeat will update this story with additional operational details from Glasswing’s founding partners as interviews are completed.

Tech

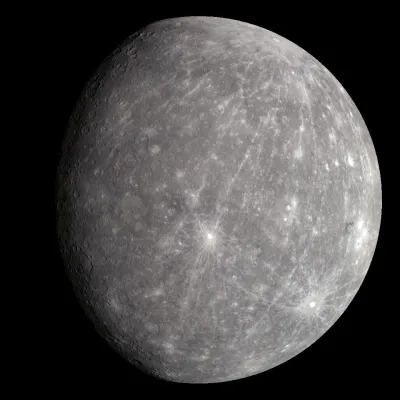

A Mercury Rover Could Explore The Planet By Sticking To The Terminator

With multiple rovers currently scurrying around on the surface of Mars to continue a decades-long legacy, it can be easy to forget sometimes that repeating this feat on other planets that aren’t Earth or Mars isn’t quite as straightforward. In the case of Earth’s twin – Venus – the surface conditions are too extreme to consider such a mission. Yet Mercury might be a plausible target for a rover, according to a study by [M. Murillo] and [P. G. Lucey], via Universe Today’s coverage.

The advantages of putting a rover’s wheels on a planet’s surface are obvious, as it allows for direct sampling of geological and other features, unlike an orbiting or passing space probe. Making this work on Mercury, which is in some ways a slightly larger version of Earth’s moon placed right next door to the Sun, is challenging to say the least.

With no atmosphere it’s exposed to some of the worst that the Sun can throw at it, but it does have a magnetic field at 1.1% of Earth’s strength to take some of the edge off ionizing radiation. This just leaves a rover to deal with still very high ionizing radiation levels and extreme temperature swings that at the equator range between −173 °C and 427 °C, with an 88 Earth day day/night cycle. This compares to the constant mean temperature on Venus of 464 °C.

To deal with these extreme conditions, the researchers propose that a rover might be able to thrive if it sticks to the terminator, the transition between day and night. To survive, the rover, if solar-powered, would need to gather enough power despite the Sun being very low in the sky. It would also need to keep up with the terminator, which moves at a velocity of at least 4.25 km/h, as being caught on either the day or night side of Mercury would mean certain demise. This would leave little time for casual exploration as on Mars, and would require a high level of autonomy akin to what is being pioneered today with the Martian rovers.

Top image: the planet Mercury with its magnetic field. (Credit: A loose necktie, Wikimedia)

Tech

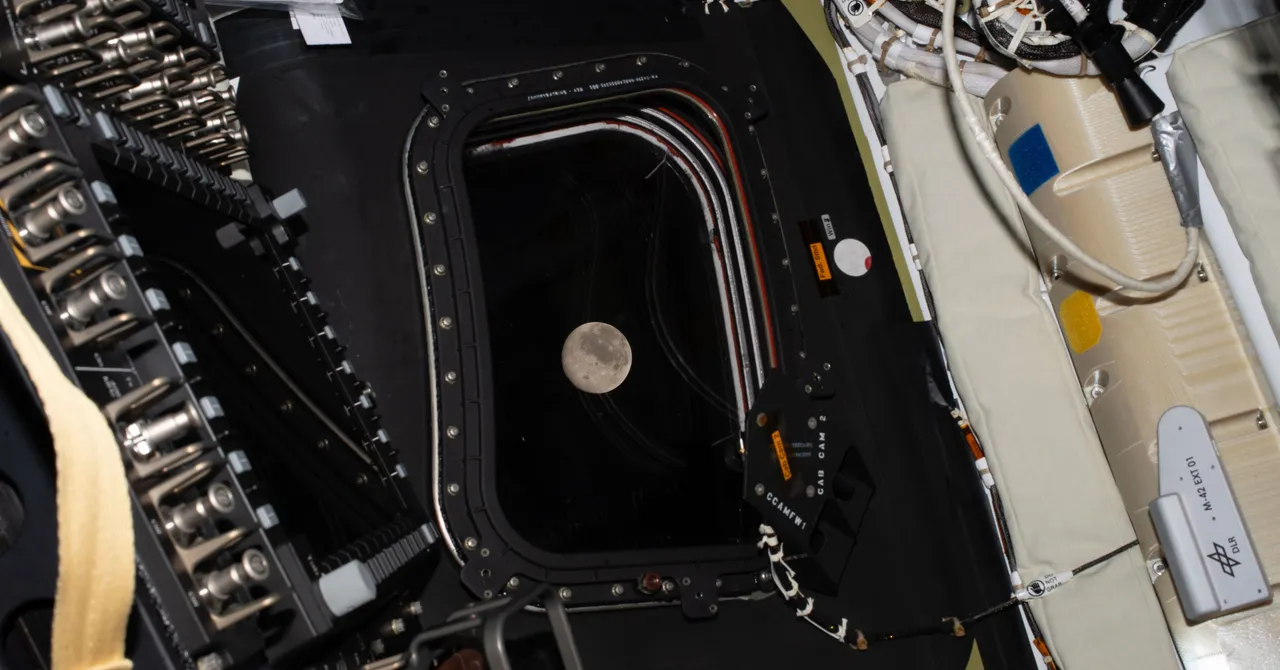

Artemis II Returns From Historic Flight Around the Moon

The farthest journey in human history concluded Friday evening when NASA’s Artemis II astronauts returned to Earth after a flight around the moon. The crew’s Orion space capsule named Integrity splashed down in the Pacific Ocean off the coast of San Diego shortly after 5 pm Pacific Time, marking the end of a 10-day, more than 695,000-mile voyage beyond the lunar far side and back.

The four-person crew of Artemis II (commander Reid Wiseman, pilot Victor Glover, mission specialist Christina Koch, and mission specialist Jeremy Hansen) traveled farther from Earth than any humans before them, reaching 252,756 miles from our home planet.

“We most importantly choose this moment to challenge this generation and the next to make sure this record is not long-lived,” said Canadian astronaut Hansen as the crew passed the previous record of 248,655 miles set during Apollo 13.

Integrity began its fiery descent when the spacecraft hit Earth’s atmosphere at about 24,000 miles per hour, entering a communication blackout and decelerating from friction as its heat shield reached temperatures of roughly 3,000 degrees Fahrenheit. The plan was for the capsule to deploy two drogue parachutes at an altitude of about 22,000 feet, slowing it to about 200 miles per hour, then deploy pilot chutes pulling the three main parachutes at roughly 6,000 feet. This would further slow the spacecraft to around 20 miles per hour before it splashed into the ocean.

During their mission, the Artemis II crew saw things that no human has seen before. Flying higher above the lunar surface than the Apollo missions, the astronauts were the first people to see the entire disk of the moon’s far side. They also witnessed a solar eclipse from the vicinity of the moon as the sun slipped behind the lunar disk and illuminated it from behind.

“Humans probably have not evolved to see what we are seeing,” said NASA astronaut Glover during the eclipse. He and the rest of the crew described a halo of light surrounding the moon while one side of the lunar surface was bathed in earthshine. Venus, Mars, and Saturn shone among the stars. “It is truly hard to describe. It is amazing.”

Artemis II began on April 1 when the crew launched from NASA’s Kennedy Space Center in Florida atop the 322-foot-tall Space Launch System rocket, the most powerful vehicle to ever carry humans. After conducting multiple altitude-raising engine burns and testing the manual controls of the spacecraft, the crew proceeded with the engine firing known as translunar injection on day two of the mission, which sent them on a trajectory to the moon.

For the next three days, the crew tested the Orion spacecraft’s systems, practiced putting on their spaceflight suits, conducted additional course correction burns, manually flew the Orion capsule again, and prepared for the lunar flyby around the far side of the moon. They also had trouble venting wastewater from the Orion capsule’s toilet into space.

“We definitely have to fix some of the plumbing,” NASA administrator Jared Isaacman said during a conversation with the crew.

At 12:41 am Eastern Time on April 6, Artemis II entered the lunar sphere of influence, where the moon’s gravity overcomes that of Earth. That day, the crew made their closest approach to the moon, flying to about 4,000 miles above the lunar surface. During the lunar flyby, the crew communicated with a team of scientists on the ground, both before and after a roughly 40-minute communication blackout on the far side, to describe geologic features such as craters and canyons.

Just after breaking the distance record, the crew proposed names for two young, unnamed craters on the moon. The first they called Integrity, after their spacecraft, and the second they named Carroll, in honor of commander Reid Wiseman’s wife, who died of cancer in 2020.

Tech

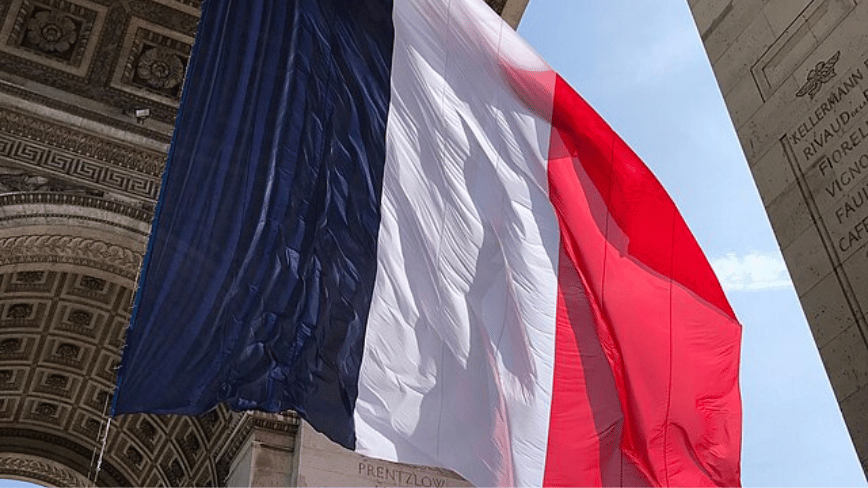

France orders all government ministries to ditch Windows for Linux in digital sovereignty push

In short: France’s Interministerial Digital Directorate (DINUM) announced on 8 April 2026 that it is migrating its own workstations from Windows to Linux and has ordered every government ministry to formalise a plan to eliminate extra-European digital dependencies by autumn 2026. The directive covers operating systems, collaborative tools, cloud infrastructure, and artificial intelligence platforms. It follows France’s January 2026 mandate to replace Microsoft Teams and Zoom with its domestic Visio platform across 2.5 million civil servants by 2027, and is the most comprehensive digital sovereignty measure the French state has yet announced.

What France is actually committing to

An interministerial seminar convened on 8 April by the Directorate General for Enterprise, the National Agency for Information Systems Security, and the State Procurement Directorate produced a directive with two immediate obligations. DINUM itself, which employs roughly 250 agents, will migrate its workstations from Windows to Linux. All other ministries, including their operators and affiliated bodies, must produce their own reduction plans before autumn 2026. The plans are required to address eight categories of dependency: workstations and operating systems, collaborative and communication tools, antivirus and security software, artificial intelligence and algorithms, databases and storage, virtualisation and cloud infrastructure, and network and telecommunications equipment.

No specific Linux distribution has been named in the public announcement, and individual ministries retain the flexibility to choose their migration path within that framework. The software replacement strategy for the most common desktop tasks is already in place in the form of La Suite Numérique, a stack of sovereign productivity tools developed and maintained by DINUM. It includes Tchap, an end-to-end encrypted messaging application already deployed to more than 600,000 civil servants, Visio for video conferencing, a sovereign webmail service, file storage, and collaborative document editing.

The entire platform is hosted on servers run by Outscale, a subsidiary of Dassault Systèmes, and is certified SecNumCloud by the French information security agency ANSSI. As of April 2026, La Suite had been tested by some 40,000 regular users across departments before the broader mandate. The next milestone is a first set of “Industrial Digital Meetings” scheduled for June 2026, where DINUM intends to formalise public-private coalitions to support the transition.

The precedent that makes this credible

Announcements of government Linux migrations have a long and largely disappointing history. Most have quietly reversed course under the weight of compatibility problems, vendor pressure, and the path dependence of legacy software. France has a reason to believe this time is different, and the reason is the Gendarmerie nationale. Beginning in 2004 with a phased adoption of OpenOffice, Firefox, and Thunderbird, the Gendarmerie progressively built the internal competencies and governance structures required for a full operating system switch. In 2008 it launched GendBuntu, its customised Ubuntu-based deployment.

By June 2024, GendBuntu ran on 103,164 workstations, representing 97% of the force’s computing estate. The financial outcome has been unambiguous: the project saves approximately two million euros per year in licensing costs and has reduced the total cost of ownership by an estimated 40%. In February 2026, the Gendarmerie was cited explicitly by DINUM as the governance model for the national rollout.

The international context adds further validation. Germany’s state of Schleswig-Holstein, which began its own Microsoft-to-Linux transition in earnest in 2024, completed nearly 80% of its 30,000-workstation migration by early 2026 and recorded savings of €15 million in licensing costs in 2026 alone. The lesson both cases illustrate is the same: phased migration with coherent governance, strong internal support functions, and sustained political will consistently outperforms big-bang approaches that attempt to switch everything at once.

The geopolitical trigger

The April 8 announcement does not exist in isolation. It is the operating-system layer of a digital sovereignty strategy that France has been accelerating visibly since late 2024, driven in significant part by the changed relationship with the United States under the Trump administration. Trump’s tariffs reignited Europe’s push for cloud sovereignty from April 2025 onward, with OVHcloud and Scaleway reporting record client growth as European institutions began actively seeking to reduce their exposure to American vendors. In November 2025, France and Germany convened a joint summit on European digital sovereignty, establishing a task force to report in 2026.

In January 2026, France announced it would replace Teams and Zoom with its homegrown Visio platform for all 2.5 million civil servants by 2027, a move described at the time as digital sovereignty moving from slogan to policy. The April 8 Linux mandate is the same logic applied to the operating system itself. Anne Le Hénanff, Minister Delegate for Artificial Intelligence and Digital Technology, has framed the imperative plainly: “Digital sovereignty is not an option, it is a strategic necessity.” David Amiel, Minister of Public Action and Accounts, who led the announcement alongside Le Hénanff, stated that France “can no longer accept that our data, our infrastructure, and our strategic decisions depend on solutions whose rules, pricing, evolution, and risks we do not control.”

The context for that framing is structural: US cloud providers control an estimated 85% of the European cloud market, according to Synergy Research Group, and spending on sovereign European cloud infrastructure is forecast to more than triple to €23 billion by 2027. Europe’s broader bid to reclaim its technology stack has moved from a niche policy concern to a headline political priority across the continent, and France is now moving faster than any other EU member state at the level of government desktop infrastructure.

The limits and the open questions

The April 8 directive is a mandate, not a completed migration. The absence of a specified Linux distribution means each ministry will face its own procurement and compatibility decisions, and the history of public sector IT projects suggests that autumn 2026 plans will vary enormously in ambition and specificity. Certain categories of specialist software, particularly in defence, healthcare, and financial regulation, have deep dependencies on Windows-specific applications for which open-source alternatives either do not exist or are not yet production-ready.

DINUM has acknowledged this through the flexibility it has built into the framework, but the question of how many of those remaining dependencies can realistically be resolved by a government-mandated roadmap is one that will only be answered over the next two to three years. The sovereignty strategy also contains a structural irony that will persist regardless of which operating system runs on civil servant desktops. Even as France replaces Windows with Linux and Teams with Visio, the twelve European AI startups selected for Amazon’s 2026 AWS Pioneers cohort illustrate that the continent’s most ambitious technology projects continue to be built and scaled on American cloud infrastructure. Replacing the desktop layer matters, but it sits above a cloud and compute substrate that remains predominantly American.

The full sovereignty project, if France and its partners are serious about it, will eventually have to address that substrate too. For now, the direction is clear, the political will is real, and the Gendarmerie’s 103,000 Linux workstations provide proof that the goal is achievable at scale. The year 2025 established AI as the defining technology of the decade, and the decisions governments make now about which infrastructure that AI runs on, and under whose legal jurisdiction, will shape the continent’s digital autonomy for the next generation.

Tech

Grab Apple's M5 MacBook Air for $949 this weekend, record low price

Thanks to a $150 discount, shoppers can grab Apple’s 2026 M5 MacBook Air 13-inch for a record low $949.

Get the lowest 13-inch MacBook Air price this weekend at Amazon – Image credit: Apple

The 13-inch MacBook Air (2026) is now equipped with Apple’s M5 chip that features a 10-core CPU with 4 super cores and 6 efficiency cores. This allows a performance boost over the M4 model. In the standard spec, which is on sale for $949 at Amazon this weekend, you’ll also get an 8-core GPU, 16GB of unified memory, and 512GB of storage.


Continue Reading on AppleInsider | Discuss on our Forums

Tech

5 Telltale Signs You’re Probably A Bad Driver

Few people believe they are bad drivers, which is exactly why terrible drivers remain blissfully unaware that they are menacing the road. In 1981, a Stockholm University study found that the majority of drivers reported having “above average” driving and safety skills. This wasn’t a one-off, either, as a 2021 study by five researchers at the University of Hong Kong and Linköping University reaffirmed the widespread tendency to overstate one’s abilities.

Try an experiment the next time you’re in a group setting. Ask people what they’d rate their own driving skills, and you’ll probably receive answers ranging from “above average” to “excellent,” which can’t be true. By math and logic, most drivers have to be “average”, as that’s the definition of the word.

This cognitive dissonance — as the researchers call it — happens because bad driving rarely results in fiery crashes and police chases on TV. It happens every day, through many small failures like poor spacing, inconsistent speeds, late or harsh braking, hesitant decisions, and more such minor problems. Together, these small, irritating problems endanger everyone on the road. Also, all of the signs on this list are objectively measurable failures in vehicle control, not just driving preferences. With all that said, here are five worryingly common signs of a bad driver.

Thinking everyone else is the problem

Perhaps the most definitive sign of a bad driver is the “I’m never in the wrong” attitude. If someone you know is constantly bemoaning the state of drivers on the road, then it’s extraordinarily likely that they are the bad driver themselves. Furthermore, if anyone says something along the lines of “that crash was unavoidable,” that indicates a poor or inexperienced driver. In 2016, a Proceedings of the National Academy of Sciences study found that driver-related issues were to blame in over 90% of cited crashes.

While most literature on driver confidence is published outside the U.S., a 2013 National Library of Medicine (NLM) study by two researchers from NYU and Elizabethtown College found that Americans are prone to thinking they are better drivers than average.

Tailgating other drivers

Many people don’t realize that even if you’re in front of someone going the speed limit, the law requires giving way to someone faster than you. That is why it can be very frustrating to be stuck behind a driver who is camping in the left lane on a highway, especially if you’re in a rush. However, this is not an excuse to tailgate the slowpoke in the left lane, and doing so is dangerous and a telltale sign of a bad driver. Studies have shown that tailgating drastically impacts reaction time and road safety, should an incident occur.

In most cases, the two- or three-second rule should be applied: pick a fixed object beside the road and make sure at least three seconds pass between the car in front of you passing that object and your own car reaching it.

This leaves adequate braking distance should something require a quick stop of the car ahead of you. Furthermore, the evidence overwhelmingly suggests that younger drivers are more likely to be tailgaters than older drivers, though it is one of many common mistakes that even experienced drivers make.
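To make the rule concrete, a time gap converts to a distance by multiplying it by your speed. The short sketch below does that arithmetic; the speeds are illustrative and not taken from any study cited here, and the function name is just a label for this example.

```python
# Following distance implied by a time gap: distance = speed x time.
MPH_TO_FEET_PER_SECOND = 5280 / 3600  # feet per mile divided by seconds per hour


def following_distance_feet(speed_mph, gap_seconds=3.0):
    """Distance covered at a constant speed during the chosen time gap."""
    return speed_mph * MPH_TO_FEET_PER_SECOND * gap_seconds


for speed in (30, 60):  # illustrative speeds only
    print(f"{speed} mph -> {following_distance_feet(speed):.0f} ft gap")
```

Because the gap is measured in time, the distance it represents grows in step with speed, which is why counting seconds is easier than judging car lengths.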

Never missing an exit

A lot of you must have seen the “I turn now, good luck everybody else” snippet from “Family Guy”, Seth MacFarlane’s long-running animated sitcom, now owned by Disney. There’s a famous saying that goes along the lines of “bad drivers never miss their/an exit”, which is what that snippet plays on. The idea is that someone who is an objectively bad driver will do dangerous things, like cutting across several lanes of traffic, crossing solid yellow or white lines, or braking extremely hard before taking an off-ramp in order not to miss their exit.

The underlying assumption is that someone who is a “good” driver will prioritize road safety, and if that means adding time and distance to their journey, they’d do it over making a hazardous exit. Of course, the situation can be quite frustrating, especially in certain areas of the U.S. where a single missed exit can result in 15 or even more minutes of extra driving time each journey. The easiest way to not miss exits is to be prepared for them, which might sound intuitive, but is easier said than done. You could be on a new road, visibility could be bad, road markings and signs could be faded, and if you’re going fast, GPS callouts might be a bit delayed. Nonetheless, it’s always better to have a bit more driving time and not cause an accident than to make a risky turn to save a bit of time.

Hard or late braking

Arguably, knowing when to brake (and how much to brake) is the most important skill that a driver can possess, and having a car with a good stopping distance goes a long way in keeping you safe. If you think back to your driving classes, many instructors would have emphasized checking at least the rearview mirror before braking hard, though this may not be possible all the time. On that note, it’s worth taking a look at our guide on how to minimize blind spots in your car, as many drivers fail to set up their mirrors properly.

Anyway, smooth braking is a skill that not a lot of drivers have, because it does take a fair bit of time to develop. Highway traffic often runs into stationary cars, especially near major interchanges in and out of the city. An example would be the Mass Pike interchange in Massachusetts (the U.S. state with the worst drivers, statistically). It is at places like these where you’ll typically hear tires squealing, and more than one person moving into the emergency lane to avoid a crash.

If your passengers are constantly doing the invisible passenger-side brake stomp, it’s probably worth taking a closer look at your braking habits. The easiest fix to this problem is to drive slower, as you would have more control over the vehicle.

They hesitate at predictable situations

We’ve all been stuck at an intersection with a free right turn, behind a new driver who cannot judge the speed of an oncoming vehicle before merging onto the road. This either causes frustration among the people waiting in line to turn, or downright danger as the oncoming vehicles need to brake or swerve to avoid an incident. These situations often freak people out, especially beginner drivers. Examples that spring to mind are four-way stops, California stops, free right turns, U-turn areas, roundabouts, and, of course, the notorious zipper merges.

Poor decision-making in these situations is a telltale sign of a bad driver, such as not matching speed during on-ramp merging, waiting too long to enter a roundabout, taking a U-turn without gauging oncoming traffic, and more. There is strong evidence to suggest that this hesitation disproportionately affects newer drivers.

A study conducted by four researchers from Jilin University and Yanshan University in January 2021 found a moderate relation between the driver’s total experience and driving violations. This suggests that the more one drives, the easier it becomes to gauge and judge road situations and react to them appropriately. It also means that if you find yourself hesitating with right-of-way and safety decisions, you shouldn’t be too hard on yourself, and that things will get better the more you drive.

Tech

Folding iPhone unveiling & shipment date rumors are all over the place

It’s been a wild week for folding iPhone rumors, with battles about what it will be called, release timing, when orders will ship, and more. On Friday, one prolific leaker jumped in, claiming the device will ship in October at the latest.

Apple’s foldable iPhone is now closer to release than ever

This comes following numerous back-and-forth reports that foldable iPhone buyers would have to wait until as late as December for their new devices. Writing in a post on the Weibo social network, leaker Instant Digital says that the most likely outcome is that Apple will be able to debut the foldable iPhone in September.

However, if Apple does choose to split the releases, the leaker doesn’t anticipate a long wait. They say the iPhone Fold will ship a month after the iPhone 18 Pro.


Tech

Chimpanzees In Uganda Locked In Vicious ‘Civil War’, Say Researchers

Researchers say the world’s largest known wild chimpanzee community in Uganda fractured into rival factions and has been locked in a vicious “civil war” for the last eight years. “It is not clear exactly why the once close-knit community of Ngogo chimpanzees at Uganda’s Kibale National Park are at loggerheads, but since 2018 the scientists have recorded 24 killings, including 17 infants,” reports the BBC. From the report: [O]ver several decades, [lead author Aaron Sandel] said the nearly 200 Ngogo chimpanzees had lived in harmony. They were divided into two sets – known to researchers as Western and Central – but they had existed overall as a cohesive group. Sandel said he first noticed them polarizing in June 2015, when the Western chimpanzees ran away and were chased by the Central group. “Chimpanzees are sort of melodramatic,” he said, explaining that following arguments there would ordinarily be “screaming and chasing” and then later, they would be grooming and co-operating.

But following the 2015 dispute, the researchers saw that there was a six-week avoidance period between the two sets, with interactions becoming more infrequent. When they did occur, Sandel said they were “a little more intense, a little more aggressive.” Following the emergence of the two distinct groups in 2018, members of the Western group started attacking the Central chimpanzees. In 24 targeted attacks since the split, at least seven adult males and 17 infants from the Central chimps have been killed, the study found, although the researchers believe the actual number of deaths is higher. The researchers believe many factors, such as the group size and subsequent competition for resources, and “male-male competition” for reproducing, may be to blame.

But they say there were three likely catalysts:

– The first was the deaths of five adult males and one adult female, for reasons unknown, in 2014, which could have disrupted social networks and weakened social ties across the subgroups

– The following year, there was a change in the alpha male, which the study says coincided with the first period of separation between the Western and Central groups. “Changes in the dominance hierarchy can increase aggression and avoidance in chimpanzees,” it explained

– The third factor was the deaths of 25 chimpanzees, including four adult males and 10 adult females, as a result of a respiratory epidemic, in 2017, a year before the final separation. One of the adult males who died was “among the last individuals to connect the groups,” the research paper said.

The study has been published in the journal Science.

Tech

Investing in part of the workforce creates an AI skills gap, finds report


Forrester’s research has shown that a failure to commit to long-term, inclusive AI education can greatly impact an organisation.

Research and advisory firm Forrester has published the results of a report in which it explored the ramifications for employers and their organisations, when there is a failure to promote AI education across the entirety of a company.

The AIQ 2.0: Employees (Still) Aren’t Ready To Succeed With Workforce AI report found that while the majority of AI decision-makers and their organisations are using predictive and generative AI (GenAI), only half say they offer training in this area to non-technical employees. As a result, many companies are failing to invest in AI understanding, skills and ethics among the wider workforce.

The report said: “Those that have tried to upskill haven’t been particularly successful, yet people remain central to the success of your AI strategy.”

Employer readiness

According to a previous report issued by Forrester, the State of AI 2025 survey, almost 70pc of AI decision-makers said they are using GenAI in deployed production applications, while 20pc use it to run experiments. Among automation decision-makers, 81pc also said AI copilots that assist employees in their work are important applications.

Forrester suggests that this is indicative of a growing disconnect between the AI needs of a company and the actions being taken.

“AI is becoming more important to the work lives of employees and employees must adapt,” said the organisation. “But adaptation isn’t coming quickly or easily. Many employers remain mired in an environment of low skills and employee fears that isn’t conducive to successfully adopting workforce AI or driving productivity from its use.”

Research found that the proportion of AI decision-makers across six countries who said their organisations offer internal training on AI to non-technical employees only grew from 47pc in 2024 to 51pc in 2025, an improvement of just four percentage points. The share of AI decision-makers who said that their organisations offer training on prompt engineering, which Forrester finds to be a key skill for using most workforce AI tools in the modern era, also grew by only four percentage points, from 19pc to 23pc.
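The gap between a percentage-point change and a relative percentage change is easy to check using the training figures quoted above. The helper below is a minimal sketch written for this illustration, not something taken from the Forrester report.

```python
def point_and_relative_change(before_pct, after_pct):
    """Return (change in percentage points, relative change in percent)."""
    point_change = after_pct - before_pct
    relative_change = point_change / before_pct * 100
    return point_change, relative_change


# Figures quoted from the report: share of organisations offering each kind of training.
for label, before, after in (
    ("AI training for non-technical staff", 47, 51),
    ("Prompt engineering training", 19, 23),
):
    points, relative = point_and_relative_change(before, after)
    print(f"{label}: +{points} points, {relative:.1f}% relative growth")
```

Both measures rose by the same number of points, but because prompt engineering training started from a much lower base, its relative growth is considerably larger.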

Fear factor

Forrester also noted that fears around ‘stunt adoption’ and AI-related job loss are hindering implementation, despite Forrester’s opinion that “very few jobs were lost to AI in 2025”. Data indicated that future job loss, while possible, will not constitute a job apocalypse, yet fears persist, due in part to a failure by organisations to correctly or consistently discuss and explain the process of introducing AI.

The report said: “Forrester’s 2025 data shows that 43pc of employees fear that, in general, many people will lose their jobs to automation in the next five years, while 25pc fear it will impact their own job during that span. This creates an ambient environment of fear and mistrust.”

The organisation added that one business leader said some of their employees fear job loss, which turns them away from AI “altogether”.

“Organisations that fail to frame workforce AI as an opportunity builder for employees and that don’t articulate the benefits from an employee perspective see fears of job loss magnified,” said Forrester.

So, how might fears and anxieties be reduced so employers and employees can better embrace the changing landscape?

According to Forrester’s research, comprehensive learning and engagement programmes are key, with the report noting that leading organisations move beyond formal training and invest in continuous, hands-on learning and peer-based approaches that drive real adoption and impact.

Commenting on the findings of the report, JP Gownder, a vice-president and principal analyst at Forrester, said: “Employers aren’t giving their people the skills, understanding, or ethical grounding they need to succeed with AI and it’s becoming a clear bottleneck to productivity and ROI. Our research shows most organisations are rolling out AI tools without investing in employees’ ability to use them effectively.

“To close the gap, businesses must move beyond surface-level training and build continuous, hands-on learning that demystifies AI, addresses employee concerns and develops real capability. This isn’t about replacing workers, it’s about enabling them to work smarter with AI.

“The organisations that treat AI literacy as a strategic priority, not a box-ticking exercise, will be the ones that unlock meaningful productivity gains and long-term competitive advantage.”

