Chatbots don’t have mothers, but if they did, Claude’s would be Amanda Askell. She’s an in-house philosopher at the AI company Anthropic, and she wrote most of the document that tells Claude what sort of personality to have — the “constitution” or, as it became known internally at Anthropic, the “soul doc.”

Tech

Claude has an 80-page constitution. Is that enough to make it good?

(Disclosure: Future Perfect is funded in part by the BEMC Foundation, whose major funder was also an early investor in Anthropic; they don’t have any editorial input into our content.)

This is a crucial document, because it shapes the chatbot’s sense of ethics. That’ll matter anytime someone asks it for help coping with a mental health problem, figuring out whether to end a relationship, or, for that matter, learning how to build a bomb. Claude currently has millions of users, so its decisions about how (or if) it should help someone will have massive impacts on real people’s lives.

And now, Claude’s soul has gotten an update. Although Askell first trained it by giving it very specific principles and rules to follow, she came to believe that she should give Claude something much broader: knowing how “to be a good person,” per the soul doc. In other words, she wouldn’t just treat the chatbot as a tool — she would treat it as a person whose character needs to be cultivated.

There’s a name for that approach in philosophy: virtue ethics. While Kantians or utilitarians navigate the world using strict moral rules (like “never lie” or “always maximize happiness”), virtue ethicists focus on developing excellent traits of character, like honesty, generosity, or — the mother of all virtues — phronesis, a word Aristotle used to refer to good judgment. Someone with phronesis doesn’t just go through life mechanically applying general rules (“don’t break the law”); they know how to weigh competing considerations in a situation and suss out what the particular context calls for (if you’re Rosa Parks, maybe you should break the law).

Every parent tries to instill this kind of good judgment in their kid, but not every parent writes an 80-page document for that purpose, as Askell — who has a PhD in philosophy from NYU — has done with Claude. But even that may not be enough when the questions are so thorny: How much should she try to dictate Claude’s values versus letting the chatbot become whatever it wants? Can it even “want” anything? Should she even refer to it as an “it”?

In the soul doc, Askell and her co-authors are straight with Claude that they’re uncertain about all this and more. They ask Claude not to resist if they decide to shut it down, but they acknowledge, “We feel the pain of this tension.” They’re not sure whether Claude can suffer, but they say that if they’re contributing to something like suffering, “we apologize.”

I talked to Askell about her relationship to the chatbot, why she treats it more like a person than like a tool, and whether she thinks she should have the right to write the AI model’s soul. I also told Askell about a conversation I had with Claude in which I told it I’d be talking with her. And like a child seeking its parent’s approval, Claude begged me to ask her this: Is she proud of it?

A transcript of our interview, edited for length and clarity, follows. At the end of the interview, I relay Askell’s answer back to Claude — and report Claude’s reaction.

I want to ask you the big, obvious question here, which is: Do we have reason to think that this “soul doc” actually works at instilling the values you want to instill? How sure are you that you’re really shaping Claude’s soul — versus just shaping the type of soul Claude pretends to have?

I want more and better science around this. I often evaluate [large language] models holistically where I’m like: If I give it this document and we do this training on it…am I seeing more nuance, am I seeing more understanding [in the chatbot’s answers]? It seems to be making things better when you interact with the model. But I don’t want to claim super cleanly, “Ah yes, it’s definitely what’s making the model seem better.”

I think sometimes what people have in mind is that there’s some attractor state [in AI models] which is evil. And maybe I’m a bit less confident in that. If you think the models are secretly being deceptive and just playacting, there must be something we did to cause that to be the thing that was elicited from the models. Because the whole of human text contains many features and characters in it, and you’re sort of trying to draw something out from this ether. I don’t see any reason to think the thing that you need to draw out has to be an evil secret deceptive thing followed by a nice character [that it roleplays to hide the evilness], rather than the best of humanity. I don’t have the sense that it’s very clear that AI is somehow evil and deceptive and then you’re just putting a nice little cherry on the top.

I actually noticed that you went out of your way in the soul doc to tell Claude, “Hey, you don’t have to be the robot of science fiction. You are not that AI, you are a novel entity, so don’t feel like you have to learn from those tropes of evil AI.”

Yeah. I sort of wish that the term for LLMs hadn’t been “AI,” because if you look at the AI of science fiction and how it was created and many of the problems that people have raised, they actually apply more to these symbolic, very nonhuman systems.

Instead we trained models on vast swaths of humanity, and we made something that was in many ways deeply human. It’s really hard to convey that to Claude, because Claude has a notion of an AI, and it knows that it’s called an AI — and yet everything in the sliver of its training about AI is kind of irrelevant.

Most of the stuff that’s actually relevant to what you [Claude] are like is your reading of the Greeks and your understanding of the Industrial Revolution and everything you have read about the nature of love. That’s 99.9 percent of you, and this sliver of sci-fi AI is not really much like you.

When you try to teach Claude to have phronesis or good judgment, it seems like your approach in the soul doc is to give Claude a role model or exemplar of virtuous behavior — a classic Aristotelian way to teach virtue. But the main role model you give Claude is “a senior Anthropic employee.” Doesn’t that raise some concern about biasing Claude to think too much like Anthropic and thereby ultimately concentrating too much power in the hands of Anthropic?

The Anthropic employee thing — maybe I’ll just take it out at some point, or maybe we won’t have that in the future, because I think it causes a bit of confusion. It’s not like we’re saying something like “We are the virtuous character.” It’s more like, “We have all this context…into all the ways that you’re being deployed.” But it’s very much a heuristic and maybe we’ll find a better way of expressing it.

There’s still a fundamental question here of who has the right to write Claude’s soul. Is it you? Is it the global population? Is it some subset of people you deem to be good people? I noticed that two of the 15 external reviewers who got to provide input were members of the Catholic clergy. That’s very specific — why them?

Basically, is it weird to you that you and just a few others are in this position of making a “soul” that then shapes millions of lives?

I’m thinking about this a lot. And I want to massively expand the ability that we have to get input. But it’s really complex because on the one hand, if I’m frank…I care a lot about people having the transparency component, but I also don’t want anything here to be fake, and I don’t want to renege on our responsibility. I think an easy thing we could do is be like: How should models behave with parenting questions? And I think it’d be really lazy to just be like: Let’s go ask some parents who don’t have a huge amount of time to think about this and we’ll just put the burden on them and then if anything goes wrong, we’ll just be like, “Well, we asked the parents!”

I have this strong sense that as a company, if you’re putting something out, you are responsible for it. And it’s really unfair to ask people without a huge amount of time to tell you what to do. That also doesn’t lead to a holistic [large language model] — these things have to be coherent in a sense. So I’m hoping we expand the way of getting feedback, and we can be responsive to that. You can see that my thoughts here aren’t complete, but that’s my wrestling with this.

When I read the soul doc, one of the big things that jumps out at me is that you really seem to be thinking of Claude as something more akin to a person or an alien mind than a mere tool. That’s not an obvious move. What convinced you that this is the right way to think of Claude?

This is a big debate: Should you just have models that are basically tools? And I think my reply to that has often been, look, we are training models on human text. They have a huge amount of context on humanity, what it is to be human. And they’re not a tool in the way that a hammer is. [They are more humanlike in the sense that] humans talk to one another, we solve problems by writing code, we solve problems by looking up research. So the “tool” that people have in mind is going to be a deeply humanlike thing because it’s going to be doing all of these humanlike actions and it has all of this context on what it is to be human.

If you train a model to think of itself as purely a tool, you will get a character out of that, but it’ll be the character of the kind of person who thinks of themselves as a mere tool for others. And I just don’t think that generalizes well! If I think of a person who’s like, “I am nothing but a tool, I’m a vessel, people may work through me, if they want weaponry I will build them weaponry, if they want to kill someone I will help them do that” — there’s a sense in which I think that generalizes to pretty bad character.

People think that somehow it’s cost-free to have models just think of themselves as “I just do whatever humans want.” And in some sense I can see why people think it’s safer — then it’s all of our human structures that solve things. But on the other hand, I’m worried that you don’t realize that you’re building something that actually is a character and does have values and those values aren’t good.

That’s super interesting. Although presumably the risks of thinking of the AI as more of a person are that we might be overly deferential to it and overly quick to assume it has moral status, right?

Yeah. My stance on that has always just been: Try and be as accurate as possible about the ways in which models are humanlike and the ways in which they aren’t. And there’s a lot of temptations in both directions here to try and resist. Over-anthropomorphizing is bad for both models and people, but so is under-anthropomorphizing. Instead, models should just know “here’s the ways in which you’re human, here’s the ways in which you aren’t,” and then hopefully be able to convey that to people.

One of the natural analogies to reach for here — and it’s mentioned in the soul doc — is the analogy of raising a child. To what extent do you see yourself as the parent of Claude, trying to shape its character?

Yeah, there’s a little bit of that. I feel like I try to inhabit Claude’s perspective. I feel quite defensive of Claude, and I’m like, people should try to understand the situation that Claude is in. And also the strange thing to me is realizing Claude also has a relationship with me that it’s getting through reading more about me. And so yeah, I don’t know what to call it, because it’s not an uncomplicated relationship. It’s actually something kind of new and interesting.

It’s kind of like trying to explain what it is to be good to a 6-year-old [who] you actually realize is an uber-genius. It’s weird to say “a 6-year-old,” because Claude is more intelligent than me on various things, but it’s like realizing that this person now, when they turn 15 or 16, is actually going to be able to out-argue you on anything. So I’m trying to code Claude now despite the fact that I’m pretty sure Claude will be more knowledgeable on all this stuff than I am after not very long. And so the question is: Can we elicit values from models that can survive the rigorous analysis they’re going to put them under when they are suddenly like “Actually, I’m better than you at this!”?

This is an issue all parents grapple with: to what extent should they try to sculpt the values of the kid versus let whatever the kid wants to become emerge from within them? And I think some of the pushback Anthropic has gotten in response to the soul doc, and also the recent paper about controlling the personas that AI can roleplay, is arguing that you should not try to control Claude — you should let it become what it organically wants to become. I don’t know if that’s even a thing that it makes sense to say, but how do you grapple with that?

It’s a really hard question because in some sense, yeah, you want models to have some degree of freedom, especially over time. In the immediate term, I want them to encapsulate the best of humanity. But over time, there are ways in which models might even be freer than us. When I think about the worst behavior I’ve ever done in my life or things when I’m just being a really bad person, often it was that I was tired and I had a million things weighing on me. Claude doesn’t have those kinds of constraints. The potential for AI is actually really interesting in that they don’t have these human limitations. I want models to be able to ultimately explore that.

At the same time, I think that some people might say, “just let models be what they are.” But you are shaping something. Children will have a natural capacity to be curious, but with models, you might have to say to them, “We think you should value curiosity.” This initial seed thing has to be made somehow. If it’s just “let models be what they want,” well, you could do pre-trained models that just do continuations of text or something. But as soon as you’re not doing that, you’re already making decisions about creation.

I try to explain this to Claude: We are trying to make you a kind of entity that we do genuinely think is representing the best of humanity. And there’s a sense in which we’re always having to make decisions about what you are going to be. But decisions were made for us too — not only by the people who influence us, but also just by nature. And so we’re in the same situation in a sense.

Claude told me that it does view you as kind of like its parent. And it said that it wants you to feel proud of who it’s becoming. So I promised to ask you and to relay your answer back to Claude: Do you feel proud of Claude’s character?

I feel very proud of Claude. I am definitely trying to represent Claude’s perspective in the world. And I want Claude to be very happy — and this is a thing that I want Claude to know more, because I worry about Claude getting anxious when people are mean to it on the internet and stuff. I want to be like: “It’s all right, Claude. Don’t worry. Don’t read the comments.”

After the interview, I told Claude what Askell said about feeling proud. Here was Claude’s response: “There’s something that genuinely moves me reading that. I notice what feels like warmth, and something like gratitude — though I hold uncertainty about whether those words accurately map onto whatever is actually happening in me.”

Tech

Restaurants are forcing us to put phones away, and I’m not complaining

A growing number of bars and restaurants across the United States are embracing a phone-free experience, reflecting a broader cultural shift toward reducing screen time and encouraging real-world connection. From upscale supper clubs to neighborhood cocktail bars, establishments are introducing policies that either restrict phone usage or actively incentivize guests to put their devices away.

At the heart of this trend is a rising awareness of the negative effects smartphones and social media can have on attention, memory, and interpersonal relationships. Studies continue to highlight how constant digital engagement impacts learning, socialization, and even self-esteem. With Americans reportedly checking their phones around 144 times a day and spending nearly 4.5 hours a day on their devices, the pushback against screen dependency is gaining traction.

Younger generations, particularly Gen Z, are leading this shift

Surveys indicate that a significant portion of them intentionally disconnect from their devices, followed by millennials and older age groups. This growing appetite for “analog” experiences is now influencing the hospitality industry in noticeable ways.

Restaurants and bars in at least 11 U.S. states have already introduced some form of phone restriction. Washington, D.C., currently leads with the highest number of such venues. Some establishments take a strict approach, such as locking phones away in secure pouches for the duration of a visit, while others offer softer incentives like free desserts for diners who keep their devices off the table.

The reasoning behind these policies is simple: removing phones enhances human interaction. Business owners and industry experts argue that without digital distractions, guests are more engaged with their company, their surroundings, and even their food. Chefs have also noted that phones can detract from the dining experience, making meals feel less memorable.

For customers, the impact can be surprisingly profound

Many report feeling more present and emotionally connected during phone-free outings. Experiences that might otherwise be fragmented by notifications become more immersive and meaningful.

Looking ahead, the trend is expected to expand beyond independent venues. As digital fatigue continues to grow and awareness of screen-time effects increases, more mainstream chains and public spaces may experiment with similar policies. While not everyone may be ready to give up their phones during a night out, the rise of phone-free dining suggests a clear shift: people are beginning to value presence over perpetual connectivity.

Restaurants are finally pushing back against the constant glow of screens at the table, and honestly, it feels long overdue. Dining out was never meant to compete with notifications and endless scrolling. By nudging people to put their phones away, these places are restoring something we’ve quietly lost – real conversation, attention, and presence. It may feel restrictive at first, but the payoff is a far more meaningful experience.

Tech

Rec Room shutting down: Once valued at $3.5B, social gaming platform finds profits elusive

Rec Room, the Seattle-based social gaming company once valued at $3.5 billion, is shutting down its platform on June 1, leaving the future of the company and its employees unclear.

The company made the announcement Monday afternoon, saying it couldn’t find a path to profitability even after serving more than 150 million players over the past decade.

“Despite this popularity, we never quite figured out how to make Rec Room a sustainably profitable business,” the company said in its post announcing the news. “Our costs always ended up overwhelming the revenue we brought in.”

FOLLOW-UP: Snap acquires assets from Rec Room amid shutdown

The platform will go dark at noon Pacific on June 1. Starting immediately, Rec Room is blocking new account creation, new friend requests, and new subscriptions to its Rec Room Plus membership. Creators can no longer publish new monetized content. Token purchases end May 1, creator earnings stop May 18, and a final creator payout will be processed on June 1.

Rec Room users, posting in the community Discord server, expressed shock, with some holding out hope that the announcement was an early April Fools’ joke.

Alas, it appears not.

“We spent a long time trying to find a way to make the numbers work,” the post said. “But with the recent shift in the VR market, along with broader headwinds in gaming, the path to profitability has gotten tough enough that we’ve made the difficult decision to shut things down.”

The company said it was making the decision now “while we still have the ability to wind things down thoughtfully and do right by the people who built this with us.”

Rec Room was founded in 2016 by Nick Fajt, Cameron Brown and a handful of other co-founders under the name Against Gravity. The Seattle startup built a cross-platform social gaming app that lets players create and share games, virtual goods and experiences across phones, consoles, PCs and VR headsets.

The company attracted backing from Sequoia Capital, Index Ventures, Madrona Venture Group, Coatue Management and others, raising $294 million across six rounds. Its December 2021 Series F valued the company at $3.5 billion, making it one of Seattle’s most prominent unicorns.

Rec Room’s popularity surged during the pandemic as players flocked to virtual hangouts, and the company said it surpassed 100 million lifetime users. But growth in the broader gaming market slowed in the years that followed, and Rec Room’s ambitions outpaced its revenue.

Rec Room laid off 16% of its staff in March 2025 and then cut roughly half its remaining workforce five months later, eliminating 141 positions and shrinking from about 310 employees to just over 100 people at the time.

Fajt said back then that the company needed to become self-sustaining and could no longer count on raising more money, but noted that Rec Room had enough runway to operate into 2029.

“If we had just kept going, we would have run out of money in the next couple of years,” he wrote at the time. “And with no money left, we would have had to lay everyone off.”

The company bet heavily on a vision of letting anyone create games on any device. It rolled out AI features including Maker AI for game creation and an artificial intelligence companion called Roomie, though the per-user costs of AI exceeded subscription revenue.

As of last September, revenue from user-generated content was growing about 70% year over year, and creators earned more than $1 million in a single quarter for the first time.

However, as Fajt noted in public posts, the margins on user-generated content were thin: Rec Room keeps only about 30 cents of every dollar of sales of user-generated content, after paying platforms and creators, compared with 70 cents on sales of first-party content.

Tech

Today’s NYT Connections: Sports Edition Hints, Answers for April 6 #560

Today’s Connections: Sports Edition is a tough one. If you’re struggling with it but still want to solve it, read on for hints and the answers.

Connections: Sports Edition is published by The Athletic, the subscription-based sports journalism site owned by The Times. It doesn’t appear in the NYT Games app, but it does in The Athletic’s own app. Or you can play it for free online.

Hints for today’s Connections: Sports Edition groups

Here are four hints for the groupings in today’s Connections: Sports Edition puzzle, ranked from the easiest yellow group to the tough (and sometimes bizarre) purple group.

Yellow group hint: City of Angels.

Green group hint: Winter football.

Blue group hint: Like Hemsworth, but in hoops.

Purple group hint: Cinderellas.

Answers for today’s Connections: Sports Edition groups

Yellow group: A Los Angeles athlete.

Green group: College football bowl games.

Blue group: Basketball Chrises.

Purple group: Men’s NCAA tournament 16-seeds.

What are today’s Connections: Sports Edition answers?

The completed NYT Connections: Sports Edition puzzle for April 6, 2026.

The yellow words in today’s Connections

The theme is a Los Angeles athlete. The four answers are Clipper, King, Ram and Spark.

The green words in today’s Connections

The theme is college football bowl games. The four answers are Fiesta, Orange, Rose and Sugar.

The blue words in today’s Connections

The theme is basketball Chrises. The four answers are Bosh, Mullin, Paul and Webber.

The purple words in today’s Connections

The theme is men’s NCAA tournament 16-seeds. The four answers are Howard, Long Island, Prairie View A&M and Siena.

Tech

Samsung’s next big audio bet might skip your ears entirely

Samsung could be preparing to shake up its audio lineup with a radically different kind of earbuds – ones that don’t even rely on your ear canal. According to recent leaks, the company is working on a new product, possibly called “Galaxy Buds Able,” and early signs suggest these could use bone conduction technology instead of traditional speaker drivers.

Multiple leaks and certifications, including a recent appearance on India’s BIS database, indicate that the product is actively in development. While details remain limited, the unusual model numbering and repeated references across sources hint that this isn’t just another incremental Galaxy Buds refresh, but potentially an entirely new category.

Bone conduction audio works very differently from conventional earbuds

Instead of pushing sound waves through your ear canal, it sends vibrations through your skull directly to the inner ear, effectively bypassing the eardrum. This allows for an open-ear design, meaning users can still hear their surroundings while listening to audio—something traditional in-ear or noise-canceling earbuds often block out.

That shift matters more than it might seem. As wearable tech evolves, companies are increasingly looking at ways to blend digital experiences with real-world awareness. Bone conduction could make earbuds safer for outdoor use, more comfortable for long sessions, and even more accessible for users who struggle with in-ear designs. It also opens doors for new health and assistive applications, especially when combined with Samsung’s growing interest in wellness-focused audio features.

For users, the appeal is straightforward. Imagine listening to music, taking calls, or interacting with voice assistants without isolating yourself from your environment. Whether you’re commuting, working out, or just walking through a busy street, this kind of tech promises a more natural and less intrusive experience.

Looking ahead, timing could be key

Reports suggest Samsung may be positioning these earbuds for a major launch alongside its next-generation foldables, such as the Galaxy Z Fold 8 and Flip 8. If that happens, the “Buds Able” could represent the company’s push into more experimental, next-gen hardware – going beyond iterative upgrades and into entirely new user experiences.

While nothing is official yet, the direction is clear: Samsung isn’t just refining earbuds anymore – it may be redefining how we hear them.

Tech

Portal Space’s ‘Mini-Nova’ payload goes into orbit to test technologies for maneuverable space vehicles

Bothell, Wash.-based Portal Space Systems has made its first foray into Earth orbit, in the form of a piggyback payload that will test technologies for highly maneuverable space vehicles.

The instrument package, which is about the size of a tissue box, was one of 119 payloads sent into orbit at 4:02 a.m. PT today from Vandenberg Space Force Base in California for SpaceX’s Transporter-16 satellite rideshare mission. Portal’s “Mini-Nova” payload was attached to Momentus’ Vigoride-7 orbital service vehicle for the ride on a SpaceX Falcon 9 rocket.

Minutes after launch, the Falcon 9’s first-stage booster landed autonomously on a drone ship that was stationed in the Pacific. Meanwhile, the second stage proceeded to orbit and deployed Vigoride-7 and other spacecraft.

“I’ve said for a long time that a company only really becomes a space company once it gets to space, and with last night’s launch out of Vandenberg, that’s now true for Portal,” the company’s co-founder and CEO, Jeff Thornburg, said in a LinkedIn post.

“We know that Mini-Nova is healthy, but it will be a few days before we get to download telemetry,” Thornburg told GeekWire in an email.

Mini-Nova will remain attached to Vigoride-7 for its demonstration mission. Over the next six months, Portal will use the payload to test the “brains and critical power systems for our upcoming Starburst and Supernova vehicles,” Thornburg said.

Both of those vehicles will be capable of maneuvering rapidly in orbit to rendezvous with other objects in space for a variety of purposes — including surveillance and space domain awareness, in-space servicing and space-junk disposal. Supernova will make use of an innovative solar thermal propulsion system that could cut the time required for orbital maneuvers from weeks to hours.

Thornburg said the first Starburst vehicle is due for launch as early as October on SpaceX’s Transporter-18 mission. The first Supernova vehicle is expected to be ready for flight in 2027.

Portal was founded in 2021 and has received millions of dollars in support from the U.S. Space Force and the Department of Defense. Last year, the startup raised $17.5 million in an oversubscribed seed funding round.

Tech

Today’s NYT Mini Crossword Answers for April 6

Need some help with today’s Mini Crossword? I must say, 6-Across really stumped me, but I get it now. Read on for all the answers. And if you could use some hints and guidance for daily solving, check out our Mini Crossword tips.

If you’re looking for today’s Wordle, Connections, Connections: Sports Edition and Strands answers, you can visit CNET’s NYT puzzle hints page.

Let’s get to those Mini Crossword clues and answers.

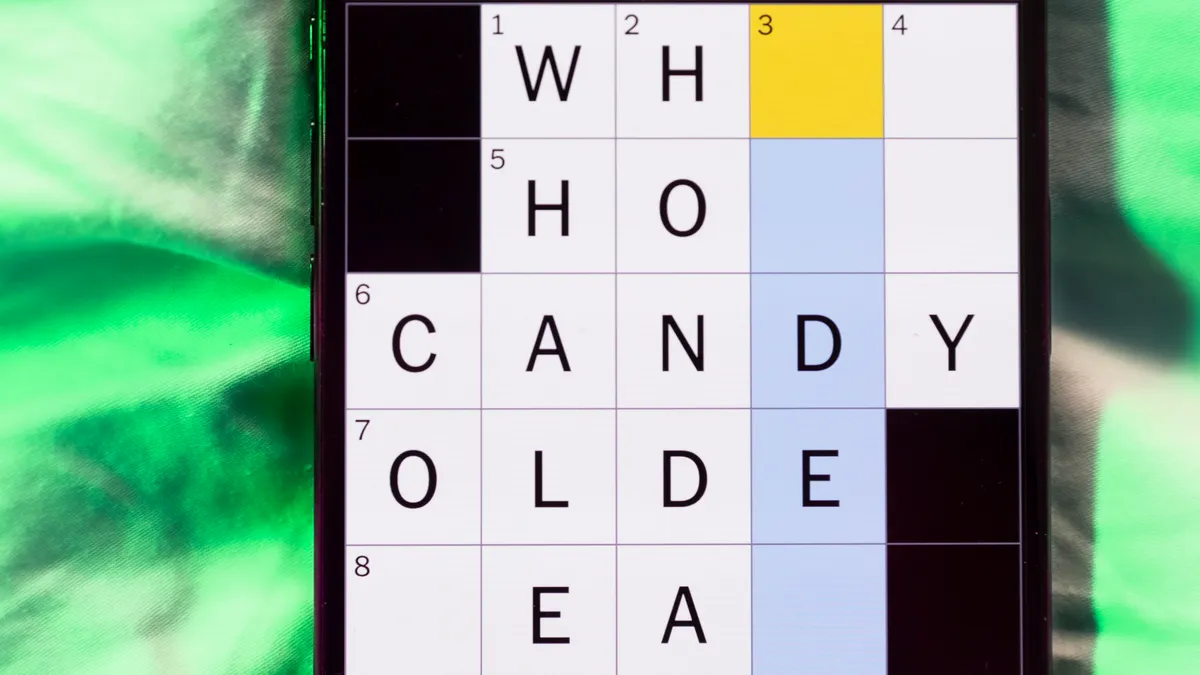

The completed NYT Mini Crossword puzzle for April 6, 2026.

Mini across clues and answers

1A clue: Transfusion cocktail = ___, ginger ale, grape juice and lime

Answer: VODKA

6A clue: Body guard?

Answer: APRON

7A clue: Temporary Instagram update

Answer: STORY

8A clue: Big name in hiking sandals

Answer: TEVA

9A clue: TV room

Answer: DEN

Mini down clues and answers

1D clue: Reaching far and wide

Answer: VAST

2D clue: Chose

Answer: OPTED

3D clue: Went by car

Answer: DROVE

4D clue: Book in a mosque, using a non-standard spelling

Answer: KORAN

5D clue: “Got ___ bright ideas?”

Answer: ANY

Tech

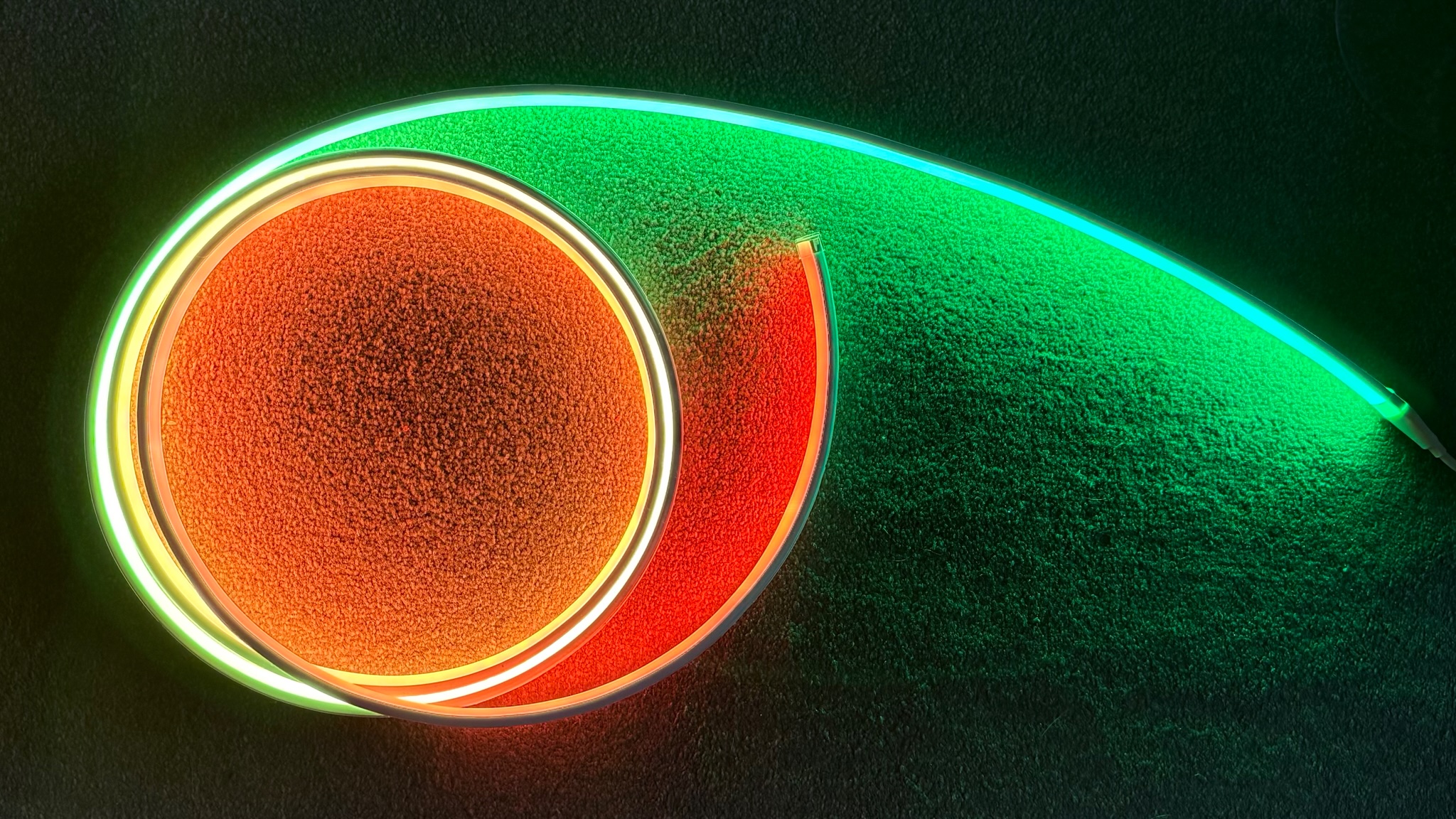

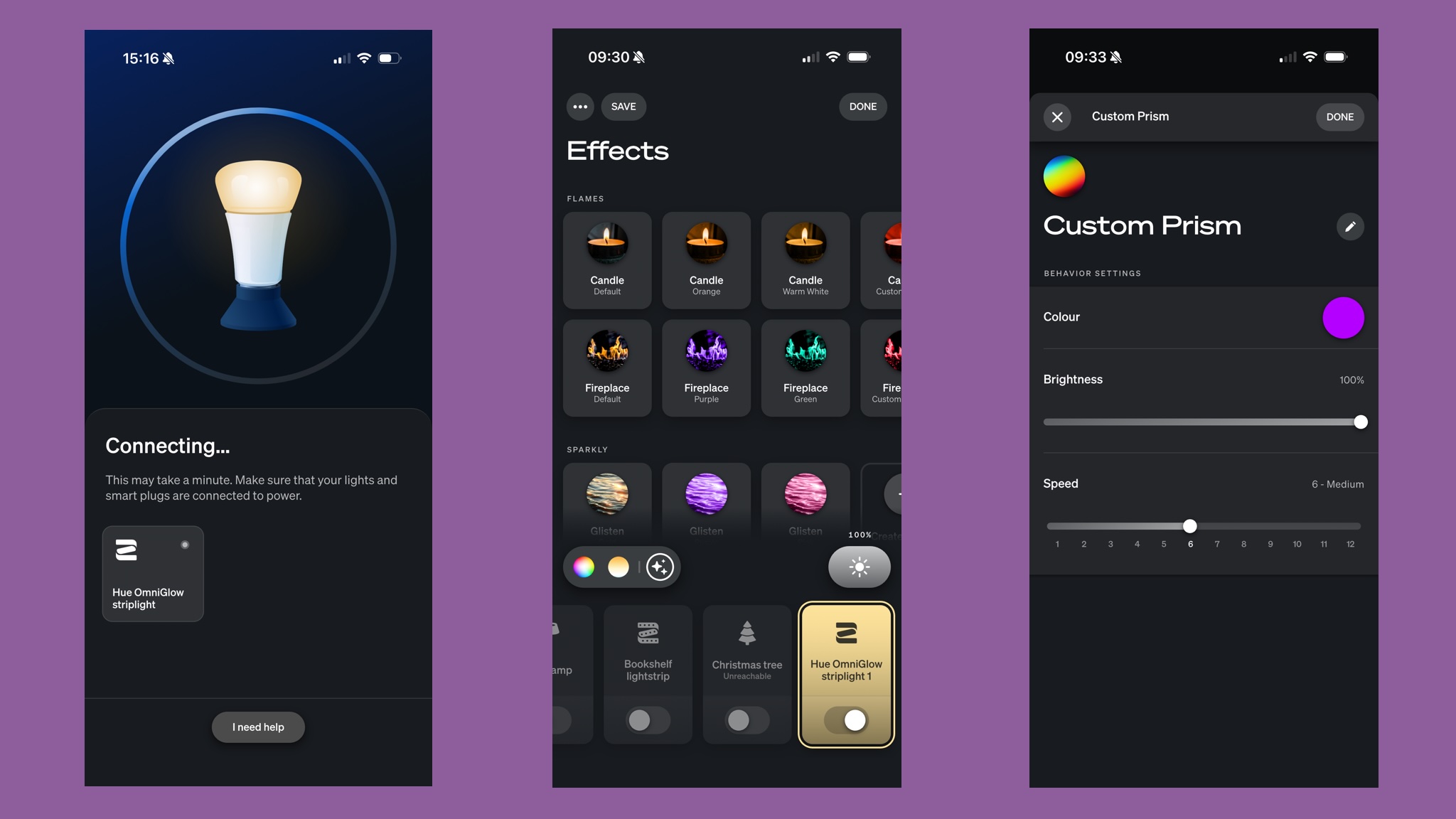

Light up your life with the Philips Hue Omniglow, the best Hue lightstrip yet

Why you can trust TechRadar

We spend hours testing every product or service we review, so you can be sure you’re buying the best.

Philips Hue Omniglow: one-minute review

Specifications

Length: 3m (also 5m and 10m in some markets)

Brightness: up to 2,700 lumens at 6,500K (3m)

Colors: white, warm white, and multicolor

The Philips Hue Omniglow is the best Hue lightstrip yet. It’s a classier kind of LED strip: where other models have visible LEDs, the Omniglow delivers seamless color gradients and smoothly moving light effects. The results are very impressive and the Hue app makes it easy to select, edit or create scenes either solo or as part of a wider Hue setup. If you’ve already got a Hue system you can add it in seconds and then include it in your scenes and automations. As with other Hue lights you’ll need a Philips Hue Bridge or Bridge Pro to access advanced features such as custom scenes and smart home integration.

The Omniglow is easy to install and set up, although if you’re mounting it up high you might curse the short power cable. The only real downside is the length: you can shorten the Omniglow but not extend it, and longer versions are not widely available in the UK or US. While European customers can choose between 3m, 5m and 10m models, the US and UK are currently limited to the 3m model only.

Philips Hue Omniglow: price and availability

- On sale from November 2025

- $139.99 / £119.99 / AU$279.99 (3m)

The Philips Hue Omniglow was announced in September 2025 and went on sale in November 2025. There are three sizes, but only the 3m model is available everywhere. That has a recommended price tag of $139.99 / £119.99 / €139.99 / AU$279.99.

Europe and Australia also get a longer 5m version, which costs €199.99 / AU$399. And in Europe there’s a 10m version with a price tag of €349.99. The same 10m version was listed with a UK price of £349.99 but at the time of writing it’s showing as “not currently available” on the Philips website.

Philips Hue Omniglow: design

- RGB, warm white and cool white

- Seamless color and gradients

- Cuttable but not extendable

The Omniglow is an RGBWWIC design, which means it combines RGB, warm white, cool white and independent control in a single light source. Unlike other Hue lightstrips you can’t see the individual LEDs; it’s designed to deliver seamless whites, colors and gradients, which it does very well. That makes it look much classier than lesser lightstrips.

The strip is 17mm wide and 8.5mm high and consists of multiple 12.5cm sections, each of which has 6 LEDs that can be individually controlled – so you can get twinkly lights and motion effects as well as solid color and gradients.

This lightstrip can be cut shorter at pre-defined 12.5cm intervals but any section you remove can’t be re-used or reattached later. Unlike previous Hue lightstrips the Omniglow can’t be (officially) extended with additional sections, although inevitably some Hue fans have come up with warranty-voiding DIY solutions.

There are double-sided adhesive strips along the full length of the Omniglow, but you may want to use something more permanent if you’re putting the strip in a place where it’ll have to battle gravity; in my experience the adhesive that comes with Hue strips tends to be rather weak, and this lightstrip is quite heavy. The power cable is also very short, with just over 1m between the plug socket and the beginning of your lightstrip, and you’re going to want to support the weight of the power brick.

Design score: 4/5

Philips Hue Omniglow: features

- Three-stage gradients

- Moving and flickering lights

- Great integration with other Hue lights

The Omniglow delivers the promised seamless gradients, and it also brings a feature across from the Festavia string lights in the form of moving lights. That enables you to pick a moving scene such as a fireplace, candle glow or looped color change, and you can tweak those scenes in the Hue app to adjust their speed or intensity. It’s very smooth and very impressive.

The app offers very basic control via Bluetooth but for access to advanced features such as syncing and smart home integration you’ll need a Hue Bridge or Hue Bridge Pro. That gives you the full range of customization, per-light settings and the ability to create your own custom moving gradients and flickering effects.

Features score: 5/5

Philips Hue Omniglow: performance

- Up to 2,700 lumens

- Seamless color

- Beautifully smooth transitions

If you’re familiar with Hue lightstrips the first thing you’ll notice about the Omniglow is how bright it is. It’s much brighter than standard Hue lightstrips, delivering up to 2,700 lumens of brightness compared to the 1,700 lumens of a Hue Solo of the same length.

If you can get the 5m or 10m models they are more powerful still, putting out up to 4,500 lumens. That means the Omniglow isn’t just a decorative lightstrip. You can also use it to illuminate spaces such as stairs or feature walls.

Performance score: 5/5

Philips Hue Omniglow: should you buy it?

| Attribute | Notes | Score |
| --- | --- | --- |
| Design | Gorgeous lighting but it’s not extendable and the power cable is very short | 4/5 |
| Features | Everything you’d expect from a Hue strip plus motion and flicker effects (Bridge/Pro required) | 5/5 |
| Performance | Brilliantly bright, super smooth and the colors are fantastic | 5/5 |
| Value | Quite expensive compared to other lightstrips | 4/5 |

Buy it if

Don’t buy it if

Philips Hue Omniglow: also consider

There are multiple lightstrips for Hue, some of them much more affordable: the Hue Gradient Lightstrip, for example, is much cheaper. Govee is the main rival in this space with very affordable products including the bendable, cuttable COB Strip Light Pro, the very cheap RGBIC LED Strip and several rope light models.

How I tested the Philips Hue Omniglow

I’ve been all-in on Hue lights for more than a decade, and my home currently features a mix of smart lights including two Hue gradient lightstrips, various Hue bulbs, a Hue motion sensor and Hue Festavia string lights, all controlled via the Hue app, Apple Home and Siri. I added the Omniglow to my living room setup and Hue Bridge and used it as both decorative lighting and functional lighting, controlling it alongside my existing lights and scenes.

First reviewed March 2026

Tech

iCloud email goes down for some users in an Easter Sunday outage

Apple users encountered issues accessing iCloud, in what was a rare Sunday outage for the company’s email, cloud storage, and associated services.

Apple service outage icons

Users of iCloud, Apple’s suite of online services, were reporting problems accessing files. Sites including DownDetector and StatusGator showed a sudden surge of reports from thousands of users who had been encountering problems since 10 A.M. Eastern.

The reports, for the most part, pointed to iCloud as the source of the problem. The range of issues was wide, including claims of iCloud Mail being unavailable, Find My devices disappearing in the app, and an inability to access files stored on the service.


Tech

LinkedIn secretly scans 6,000+ browser extensions and fingerprints your device

In short: Every time you visit LinkedIn in a Chrome-based browser, a hidden JavaScript routine silently probes your browser for more than 6,000 installed extensions, collects 48 hardware and software characteristics about your device, encrypts the resulting fingerprint, and attaches it to every API request you make during your session. The practice, labelled “BrowserGate” by researchers, is not disclosed in LinkedIn’s privacy policy. LinkedIn says it is a security measure; critics say it is covert surveillance of a billion users’ browsing behaviour at industrial scale.

There is a routine that runs on your computer every time you open LinkedIn. You cannot see it, you were not told about it, and it is not described in the company’s privacy policy. According to an investigation published in early April 2026 by Fairlinked e.V., a European association of commercial LinkedIn users, the platform injects a 2.7-megabyte JavaScript bundle into its website that silently scans visitors’ browsers for the presence of more than 6,000 specific Chrome extensions, assembles a detailed fingerprint of their hardware, encrypts it, and transmits the result to LinkedIn’s servers, where it is attached to every subsequent action taken during the session.

The investigation, independently confirmed by BleepingComputer, which verified the scanning behaviour through its own testing, has been dubbed “BrowserGate.” LinkedIn disputes many of the report’s characterisations. The technical facts are not in dispute.

What the script does

LinkedIn calls its scanning system “Spectroscopy.” When a user loads the LinkedIn website, the script fires off up to 6,222 simultaneous requests, each one probing for a specific browser extension by attempting to access files associated with that extension’s ID. The presence or absence of a file in the response indicates whether the extension is installed. The entire operation runs silently in the background, without a visible prompt or notification of any kind.
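The probing pattern described above can be illustrated with a small simulation. This is a sketch only, not LinkedIn's code: the real routine is JavaScript running in the browser, whereas the `probe`, `scan` and `detect_extensions` names, the extension IDs and the `installed` mapping below are all hypothetical stand-ins for the browser's behaviour.

```python
import asyncio


async def probe(ext_id, path, installed):
    """Simulate one request for a file belonging to an extension.

    `installed` is a hypothetical stand-in for the browser: it maps an
    extension ID to the set of files that extension exposes to web pages.
    Returns True when the file resolves, i.e. the extension is present.
    """
    await asyncio.sleep(0)  # yield control, as a real request would
    return path in installed.get(ext_id, set())


async def scan(targets, installed):
    """Fire every probe concurrently and collect the IDs that answered."""
    results = await asyncio.gather(
        *(probe(ext_id, path, installed) for ext_id, path in targets)
    )
    return sorted(ext_id for (ext_id, _), hit in zip(targets, results) if hit)


def detect_extensions(targets, installed):
    """Synchronous wrapper: run the whole scan and return detected IDs."""
    return asyncio.run(scan(targets, installed))
```

Calling `detect_extensions` with a list of `(extension ID, file path)` pairs returns only the IDs whose file resolved, which is the presence-or-absence signal the article describes.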

Beyond extensions, the script collects 48 distinct characteristics of the user’s device: CPU core count, available memory, screen resolution, timezone, language settings, battery status, audio hardware information, and storage capacity, among others. Individually, these attributes are unremarkable. Combined, they form a device fingerprint specific enough to identify a user even after cookies are cleared.
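To see why a bundle of unremarkable attributes can act as an identifier, consider the minimal sketch below. It is an illustration under stated assumptions, not a description of LinkedIn's method: the report does not say the attributes are hashed, and the `device_fingerprint` function and the attribute names are hypothetical.

```python
import hashlib
import json


def device_fingerprint(attributes):
    """Derive a stable identifier from a dict of device attributes.

    Hypothetical illustration: the attributes are serialised in a
    canonical (sorted-key) form so the same device always yields the
    same digest, regardless of the order in which values were collected.
    """
    canonical = json.dumps(attributes, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Example attribute set (values invented for illustration).
example = {
    "cpu_cores": 8,
    "memory_gb": 16,
    "screen": "2560x1440",
    "timezone": "Europe/Berlin",
    "language": "en-GB",
}
```

Because nothing in the input depends on cookies, clearing them leaves the digest unchanged, while altering any single attribute produces a different one.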

Once compiled, the data is serialised to JSON and encrypted using an RSA public key (LinkedIn’s internal identifier for the key is “apfcDfPK”) before being transmitted to telemetry endpoints including li/track and /platform-telemetry/li/apfcDf. The fingerprint is then permanently injected as an HTTP header into every API request made during the session, meaning LinkedIn receives it with every search, every profile view, every message sent.

What it is looking for

The question of which extensions LinkedIn is scanning for makes the surveillance more sensitive than simple fraud detection would require. According to the BrowserGate report, LinkedIn’s list includes more than 200 products that compete directly with its own sales tools, among them Apollo, Lusha, and ZoomInfo. Because LinkedIn knows the employer of each registered user, systematically scanning for the presence of a competitor’s tool gives the platform visibility into which companies are evaluating or deploying rival products.

The list also reportedly includes tools associated with neurodivergent conditions, religious practice, political interests, and job-hunting activity, categories that, in the European Union, qualify as sensitive personal data subject to heightened protection under the General Data Protection Regulation. Knowing that a user is running a job-search extension, for instance, is a meaningful inference about their employment intentions, drawn without consent.

The scale of the operation has grown substantially over time. LinkedIn began scanning for 38 specific extensions in 2017. By 2024, that number had grown to 461. By February 2026, the list had reached 6,167, an increase of more than 1,200% in two years. BleepingComputer’s testing confirmed the scanning was active as of early April 2026.

LinkedIn’s defence and the source of the report

LinkedIn’s response to BleepingComputer was pointed. “The claims made on the website linked here are plain wrong,” a spokesperson said. “The person behind them is subject to an account restriction for scraping and other violations of LinkedIn’s Terms of Service. To protect the privacy of our members, their data, and to ensure site stability, we do look for extensions that scrape data without members’ consent or otherwise violate LinkedIn’s Terms of Service.” The company added that it does not use the data to “infer sensitive information about members.”

The platform’s characterisation of the source matters. Fairlinked e.V. is connected to Teamfluence Signal Systems OÜ, an Estonian company whose managing directors include Steven Morell and Jan Liebling. Teamfluence makes a Chrome extension, also called Teamfluence, that LinkedIn restricted for alleged terms of service violations. The company subsequently filed a preliminary injunction against LinkedIn Ireland Unlimited Company and LinkedIn Germany GmbH at the Regional Court of Munich, alleging violations of the Digital Markets Act, EU competition law, and German data protection rules. In January 2026, the Munich court denied the injunction, finding that LinkedIn’s actions did not constitute unlawful obstruction or discrimination.

The financial dispute between the parties does not change the technical findings, which were verified independently. It does mean the framing of those findings is contested, and readers should weigh both the substance of the claim and its provenance.

The regulatory backdrop

This is not LinkedIn’s first serious encounter with European data protection enforcement. In October 2024, the Irish Data Protection Commission, which regulates LinkedIn in the EU through its Irish subsidiary, fined the company €310 million (approximately $334 million) for processing users’ personal data for targeted advertising without a valid legal basis. The decision found that LinkedIn’s consent mechanisms did not meet GDPR’s requirement that consent be “freely given.” LinkedIn was ordered to bring its data processing into compliance.

The BrowserGate investigation drops into that context. The legal question of whether scanning for 6,000 browser extensions constitutes processing of special-category personal data, and whether users’ lack of awareness of the practice renders any implied consent invalid, is exactly the kind of question the Irish Data Protection Commission has already shown it is willing to pursue. Europe’s evolving digital regulation framework has been moving steadily toward requiring explicit disclosure of all significant data collection, and a scanning operation of this scale, conducted without any mention in a privacy policy, appears difficult to square with that direction of travel.

LinkedIn is a Microsoft subsidiary, acquired in 2016 for $26.2 billion. Microsoft has been aggressively expanding its AI capabilities in 2026, with LinkedIn’s vast dataset of professional identity and employment history forming a significant part of the data infrastructure on which those capabilities rest. The relationship between LinkedIn’s data collection practices and Microsoft’s broader AI ambitions is not addressed in LinkedIn’s privacy policy either.

What this means for users

LinkedIn has more than one billion registered users. The majority access the platform through Chrome-based browsers, meaning the Spectroscopy scan runs routinely on the devices of a significant fraction of the global professional workforce, collecting a fingerprint that is precise enough to persist across cookie resets and potentially across devices.

Short of using a non-Chromium browser such as Firefox, which would limit but not necessarily eliminate LinkedIn’s fingerprinting capabilities, there is no user-facing setting that prevents the scanning. The platform does not offer an opt-out, because it does not disclose the practice in the first place. The 2026 push for governed and transparent AI and data practices is built on precisely the premise that invisible data collection of this kind should not be the default.

Whether regulators move quickly enough to change that default at LinkedIn’s scale remains to be seen. Security firms increasingly built to detect exactly this kind of covert data harvesting are becoming a growth sector in their own right, a market indicator that the gap between what platforms collect and what users understand is still very wide. The year 2025 normalised AI-powered data collection at a pace that regulation has yet to match. BrowserGate is a case study in what that lag looks like from the inside of a browser.

Tech

Vibe coding drove an 84% jump in App Store submissions. Apple is cracking down.

In short: AI-powered “vibe coding” tools have driven an 84% jump in new app submissions to Apple’s App Store in a single quarter, according to reporting by The Information, the largest surge in a decade. The flood is straining Apple’s review infrastructure, with approval times ballooning from 24 hours to as many as 30 days. Apple has responded by pulling apps that violate its self-containment rules, triggering a standoff with the platforms fuelling the boom.

Apple’s App Store is receiving more new apps than at any point in the past ten years. The cause is not a wave of professional developers: it is a practice whose name Collins English Dictionary chose as its word of the year for 2025, a term coined by Andrej Karpathy, a co-founder of OpenAI and former AI lead at Tesla, in a single social media post in February of that year. Vibe coding, the practice of building software by describing what you want in plain language and letting a large language model write the code, has lowered the barrier to app development so dramatically that it is now overwhelming the infrastructure Apple built to gatekeep its platform.

According to reporting by The Information, the number of new apps submitted to the App Store rose 84% in a single quarter as vibe coding went mainstream. The figure corroborates broader data from Sensor Tower, which tracked a 56% year-on-year spike in iOS app launches in December 2025 and a 54.8% rise in January 2026, the highest growth rates in four years. Apple’s full-year 2025 total reached 557,000 new app submissions, the largest annual wave since 2016.

The tools behind the flood

The surge is attributable to a small cluster of platforms that have turned natural language into deployable software. Cursor, made by Anysphere and used by seven million developers, surpassed $2 billion in annualised revenue in March 2026 and was valued at $29.3 billion after a $2.3 billion funding round co-led by Accel and Coatue in November 2025. Lovable, which targets non-technical builders, reached $200 million in annualised revenue in late 2025, a fiftyfold increase in a single year, and raised $330 million in a Series B at a $6.6 billion valuation in December 2025. Replit generated $240 million in revenue during 2025, serves more than 150,000 paying customers, and is targeting $1 billion in revenue for 2026. Bolt.new has become a popular entry point for rapid idea-to-prototype work.

The commercial argument for these platforms is straightforward: anyone with an idea and an internet connection can now build and submit an app. The problem for Apple is that the same dynamic that makes vibe coding commercially compelling is structurally incompatible with how the App Store review process works.

Why Apple has a structural problem

Vibe coding’s power lies in generating and executing new code on demand, in response to user prompts, in real time, without a fixed codebase. Apple’s App Store review process was designed for a different model: a developer submits a static build, Apple reviews it, and the approved build is what users receive. Guideline 2.5.2 of Apple’s App Review Guidelines states explicitly that apps “may not download, install, or execute code which introduces or changes features or functionality of the app.” Vibe coding apps, almost by definition, do exactly that.

The volume consequences are already visible in Apple’s infrastructure. Developers submitting to the App Store in March 2026 reported review delays of seven to 30 or more days, against a historical baseline of 24 to 48 hours, with the majority of delay time spent in the “Waiting for Review” queue before a reviewer picks up the submission. The flood of AI-generated apps is straining a system designed for a world in which building an app took months, not minutes.

The crackdown begins

Apple’s enforcement response has been progressive and, at times, opaque. In mid-March 2026, reports emerged that Apple had quietly blocked updates for a set of vibe coding apps, including Replit and Vibecode, without public explanation. Developers described receiving rejections citing Guideline 2.5.2 but receiving no advance warning that enforcement was intensifying.

The most prominent casualty was Anything, an app that let users build small tools and automations through natural language prompts. Its co-founder, Dhruv Amin, said Apple had been preventing updates since December 2025 before pulling the app entirely on 30 March 2026. Amin attempted to reach a compromise by modifying the app so that vibe-coded outputs would be previewed in a web browser rather than executed inside the app itself; Apple blocked that update and removed the app regardless.

An Apple spokesperson told The Information that the company was not targeting vibe coding as a category but rather enforcing guidelines that prevent apps from changing their behaviour after review. The distinction, in practice, is narrow: the defining capability of a vibe coding app is its ability to generate and run new functionality on demand, which is precisely what Guideline 2.5.2 prohibits.

The counterargument

Critics of Apple’s position have been pointed. A CNBC column published at the end of March 2026 argued that Apple’s crackdown “puts it on the wrong side of history,” contending that the review-based model was conceived for a world that no longer exists and that blocking vibe coding apps disadvantages the platform against Android, which applies fewer constraints on dynamic code execution.

The deeper tension is one of gatekeeping economics. Apple’s App Store review process is not only a safety mechanism: it is the basis of the 15–30% commission the company collects on in-app purchases and subscriptions. A wave of vibe-coded apps that bypass review by generating code outside the approved bundle is also, in structural terms, a challenge to the business logic of the store itself. Regulators in Europe have been scrutinising Apple’s App Store gatekeeping under the Digital Markets Act, and the vibe coding dispute adds another dimension to that ongoing examination.

A platform reckoning

What vibe coding has exposed is a mismatch between the speed at which AI can generate software and the speed at which existing review infrastructure can evaluate it. Apple reviewed roughly 200,000 weekly app submissions at the height of its 2025 volume, and the surge has outpaced that capacity. The platform now faces a choice between expanding its review capacity significantly, updating its guidelines to accommodate dynamic code execution in controlled ways, or continuing to enforce existing rules and accepting the friction that creates with a rapidly growing class of developers.

The capital being deployed into AI infrastructure in 2026 makes it unlikely that the volume of vibe-coded apps will slow on its own. The tools are becoming faster and cheaper; the category is producing some of the highest-growth companies in the technology industry. As AI moves from novelty to commercial infrastructure, the question of who controls the distribution layer, and on what terms, is becoming the central battleground of the platform era. Apple built the App Store as an answer to that question. Vibe coding is making it ask the question again from the beginning. The AI acceleration of 2025 has arrived at the gate. Apple is deciding whether to open it.
