Editor’s note, April 7, 2026, 5:10 pm ET: The Artemis II mission is conducting experiments that may radically advance our understanding of space medicine. The findings of A Virtual Astronaut Tissue Analog Response (AVATAR) experiment could help us create personalized medical kits for astronauts, and the Artemis Research for Crew Health & Readiness (ARCHeR) study will monitor the astronauts’ health as they go further into space than any human beings have gone before. As we await the findings of those experiments, Vox is republishing this article, which originally launched September 24, 2025.

One day, Mars might become a home to humans. But first, there’s the cinematic, sci-fi challenge of making the Red Planet suitable for life. There’s a problem, though: The typical person can’t get to space safely. That throws a wrench into the whole “let’s move to Mars” plan in the face of extreme climate change and other existential risks on Earth.

Today, the path to becoming an astronaut is “littered with the hopes and dreams of medically disqualified candidates,” said Shawna Pandya, a research astronaut with the International Institute for Astronautical Sciences (IIAS) and the director of its Space Medicine Group. “Once upon a time, kids being diagnosed with Type 1 diabetes in the doctor’s office would be told, ‘Well, you could still be anything, except an astronaut.’”

Here are some of the common reasons why you might be medically disqualified from becoming an astronaut:

- Tobacco use

- Autoimmune disorders

- Temporomandibular joint (TMJ) disorders

- Sleep apnea

- Asthma

- Hypertension

- Migraines

- Anxiety and depression

Astronauts inherently aren’t representative of the broader population — they’re selected for being in very good health. The stress of existing in essentially weightless microgravity conditions, like those on the International Space Station (ISS), can be incredibly tough on the human body. Astronauts face heightened risks of early-onset osteoporosis, insulin resistance, and significant muscle mass loss. Naturally, government space agencies want people whose bodies are more resilient to such pressures, and who can perform necessary duties without a ton of medical intervention.

According to Haig Aintablian, director of the UCLA Space Medicine Program, “just as pregnancy causes the body to undergo complex and unique changes, spaceflight also produces distinct and significant physiological changes.” It also requires its own medical specialty to manage (aptly called space medicine).

There’s a lot scientists don’t know, from the physical to the psychological. That’s a problem — for the future of science, space travel, and maybe even human existence at large.

NASA wants to go to Mars for research, and aims to send humans there as early as the 2030s. As the most similar planet to Earth in our solar system, Mars may have once harbored life, and may even harbor it today. And in the future, we may even need it to support us.

Decades ago, seriously engaging with the idea of moving to Mars was extremely fringe for a multitude of reasons, ranging from a lack of technical feasibility to the desire to put scientific resources toward solving problems on Earth. Elon Musk — founder of the spaceflight company SpaceX — became a famed advocate for colonizing Mars in the early 2000s. He still is. Musk, who is currently worth around $410 billion, claims that he is only accumulating assets for the purpose of Martian space settlement. Last year, he said that he wants 1 million human settlers on the Red Planet in a self-sustaining city by 2050.

Now Musk isn’t alone. NASA experts, biologists, academics, futurists, disaster resilience researchers, and physicians are seriously considering the possibility of making humanity an interplanetary species.

“The biggest problem for humanity to solve is the guaranteed survival of our species — which the logical answer is to become multiplanetary,” Aintablian said. “I don’t think there’s a better solution than Mars.”

While we know some of the health effects of being on the ISS, we can’t really replicate the effects of Martian radiation exposure. Kelly Weinersmith — a biologist and co-author of A City on Mars: Can We Settle Space, Should We Settle Space, and Have We Really Thought This Through? — thinks that settling Mars on Musk’s timescale will be catastrophic. She argues that we shouldn’t rush to set up shop before understanding — and mitigating — the risks, even if this takes centuries rather than decades.

But many advocates for settling Mars are much more impatient. The only way to get there safely would be to unlock significant advances in space medicine, a nascent field that has just barely scratched the surface in its approximately 75-year history.

“Nothing that humanity has done that has been worthwhile has been easy,” Aintablian told me. “So much in our development as a civilization has been difficult, and the reason why we’re able to live such comfortable lives now is because of the extremely difficult challenges that humans have had to solve in the past.”

What we know — and don’t — about human health on Mars

Since extremely few people end up in space right now, the researchers trying to understand how to improve human health there have a limited sample size to work with. Yuri Gagarin became the first human in space in 1961, and more than 600 astronauts have followed him. Only about a sixth of them are women.

NASA researchers have identified some key ways that time in space can impact human health — radiation exposures, isolation, distance from Earth, altered gravity, and environmental consequences like an altered immune system. But we’re still lacking many specific examples of how these different dynamics play out in real life.

Former astronaut Scott Kelly, right, who commanded a one-year mission aboard the International Space Station, along with his twin brother, former astronaut Mark Kelly. NASA/AFP via Getty Images

One of the best studies we have is NASA’s famous 2019 twins study. Twin studies allow researchers to separate the effects of genetic predispositions from environmental influences on health outcomes. NASA compared the health of identical twin brothers Scott and Mark Kelly over the course of a year. Scott went into orbit on the ISS while Mark remained on Earth. Both underwent the same battery of physiological tests, and the results indicated some surprising new differences between the two men.

Scott’s telomeres — the bits of DNA at the end of our chromosomes — lengthened while he was in space and (mostly) reverted to normal once he returned to Earth, possibly indicating radiation-induced DNA damage and potential increased cancer risk. Scott also lost body mass, developed signs of cardiovascular damage that were not present in Mark, and experienced some short-term cognitive changes after returning to Earth.

Spaceflight is survivable with the right training, equipment, and precautions, but the twins study demonstrated how space’s unique environment can have significant consequences for an astronaut’s gene expression and overall health while in orbit.

If the best of the best struggle, what about the rest of us? We’re getting some insights here now, too.

Since space tourism has literally taken off, astronauts aren’t the only ones going to space now: Wealthy non-astronauts, like Jeff Bezos, Gayle King, and Katy Perry, have recently taken short, recreational jaunts into outer space through Bezos’s space tech company, Blue Origin.

“Teenage Dream” singer Katy Perry kisses the ground after returning to Earth from her short spaceflight earlier this year. Cover Images via AP Images

Aintablian is very excited about the prospect of civilian access to space increasing, which will inherently mean people with medical issues are also flying. This represents a huge opportunity for scientists to study the medical management of a much wider range of conditions.

That said, 10 or 15 minutes in space is hardly comparable to the conditions on the ISS. And Mars poses even greater challenges, both in how hostile its environment is and in how long travelers would spend away from Earth. Mars has toxic dust, lacks plant life and a breathable atmosphere, and has only about 40 percent of Earth’s gravity. Earth’s global magnetic field protects our planet from harmful radiation, while Mars has only localized magnetic fields, not a planet-wide one.

The longest time someone has been in space consecutively is 438 days aboard a space station. But crewed missions to Mars would probably take at least nine months just to get there, let alone stay or travel back (which could take up to three years). Mars is usually around 140 million miles from Earth based on its orbital path around the sun, with up to a 20-minute communication delay one way. If they experienced a medical emergency, astronauts likely wouldn’t be able to access telemedicine instructions in time, and they couldn’t turn back around for treatment.
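To put the communication delay in concrete terms, here is a minimal sketch of the arithmetic, assuming a radio signal travels in a straight line at the speed of light. The function name is illustrative, and the 140-million-mile figure is the typical distance cited above, not a fixed value.

```python
# Rough one-way signal delay between Earth and Mars.
# Assumption: straight-line travel at the speed of light, no relay overhead.
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by definition of the meter
METERS_PER_MILE = 1609.344            # exact, international mile


def one_way_delay_minutes(distance_miles: float) -> float:
    """Minutes for a radio signal to cover the given distance."""
    distance_m = distance_miles * METERS_PER_MILE
    return distance_m / SPEED_OF_LIGHT_M_PER_S / 60


typical_delay = one_way_delay_minutes(140e6)  # typical distance from the article
print(f"{typical_delay:.1f} minutes one way")
```

At the typical distance, that works out to roughly 12 and a half minutes each way, so a question and its answer take about 25 minutes. The delay approaches 20 minutes only when the two planets are much farther apart.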

A crew bound for Mars would have to pack all of its supplies before leaving our planet. And when the first people heading to Mars set foot on the planet, they won’t have access to the intense support astronauts receive when landing back on Earth.

Getting to Mars is only part of the challenge. We’ve been to space, but so far, humans have only ever sent robots to the Red Planet. We are making educated guesses at what Mars is like for living things. Earth analogues aren’t able to truly replicate the closed, hostile conditions of the space environment, which can wreak havoc on astronauts’ mental health. Desert research stations have an atmosphere, while the moon barely has one — and setting up that modest base was a huge mission in its own right. Weinersmith told me that scientists at polar research stations are isolated in remote, inhospitable environments, but they can “still open the door, take a deep breath, and not die.”

We’re still pretty far from being able to breathe in Mars’ atmosphere — but it would be nice to get there one day and simply not die.

Programs dedicated to figuring out how to get humans safely into space for long periods of time are popping up, and non-physician health care providers are getting in on the action too. UCLA is planning to launch a space nursing program and possibly space paramedic training. SpaceMed is a European master’s program focused on human health in spaceflight and other extreme conditions.

Today, astronauts receive most of their care from Earth-based aerospace medicine physicians called flight surgeons through telemedicine. Aintablian envisions a future where health care providers directly accompany astronauts on their expedition-class missions, like to the moon or Mars. Artificial intelligence can act as a resource for the on-board flight surgeon, he predicted, and aid in the development of other technologies that will bring us closer to Mars.

Such technology is already in the works. Google recently collaborated with NASA to develop an AI system that could guide astronauts in diagnosing and treating medical conditions that arise in-flight when they lack access to telemedicine.

But the devil is in the details, Pandya told me. AI can help with just-in-time training for medical emergencies and diagnostics, but the data requirements would be massive. Since extremely few people end up in space — and the ones who do are overwhelmingly male — models might be trained on an unrepresentative dataset that could lead to inaccurate predictions of physiological changes in space. These kinks need to be worked out first.

Right now, there’s a gendered gap in the research, so much so that Weinersmith told me there’s never a line to the women’s restroom at space settlement conferences. Human reproduction and development in space, as a result, are wildly understudied.

As far as we know, no human being has ever been to space while pregnant, and we don’t know of any humans who have been conceived in space. We’re going to learn a lot about reproduction on Earth from the first human space pregnancy and space birth, a prerequisite for a self-sustaining settlement on Mars. (Plus, space tourism companies are talking about hotels in space, and we know what people do in hotels.) Ideally, you want to have an idea of what will happen to someone giving birth in space before they actually go through it.

“What we’re arguing is that we should do the research to understand those risks before we go out there because if there are massive risks, there usually are technological solutions for some of these,” Weinersmith said.

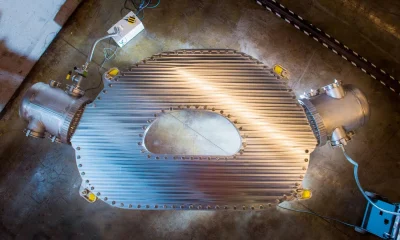

NASA will begin its second Crew Health and Performance Exploration Analog this October, a year-long “mission” to Mars in a 3D-printed habitat at Johnson Space Center in Houston, where it will collect behavioral health data on the effects of isolation and confinement. Scientists are conducting bed rest studies, which simulate the physiological effects of altered gravity and weightlessness. And as funding cuts transform the future of scientific research on Earth and beyond, space medicine researchers are among those advocating for continued investment in space and biomedical science.

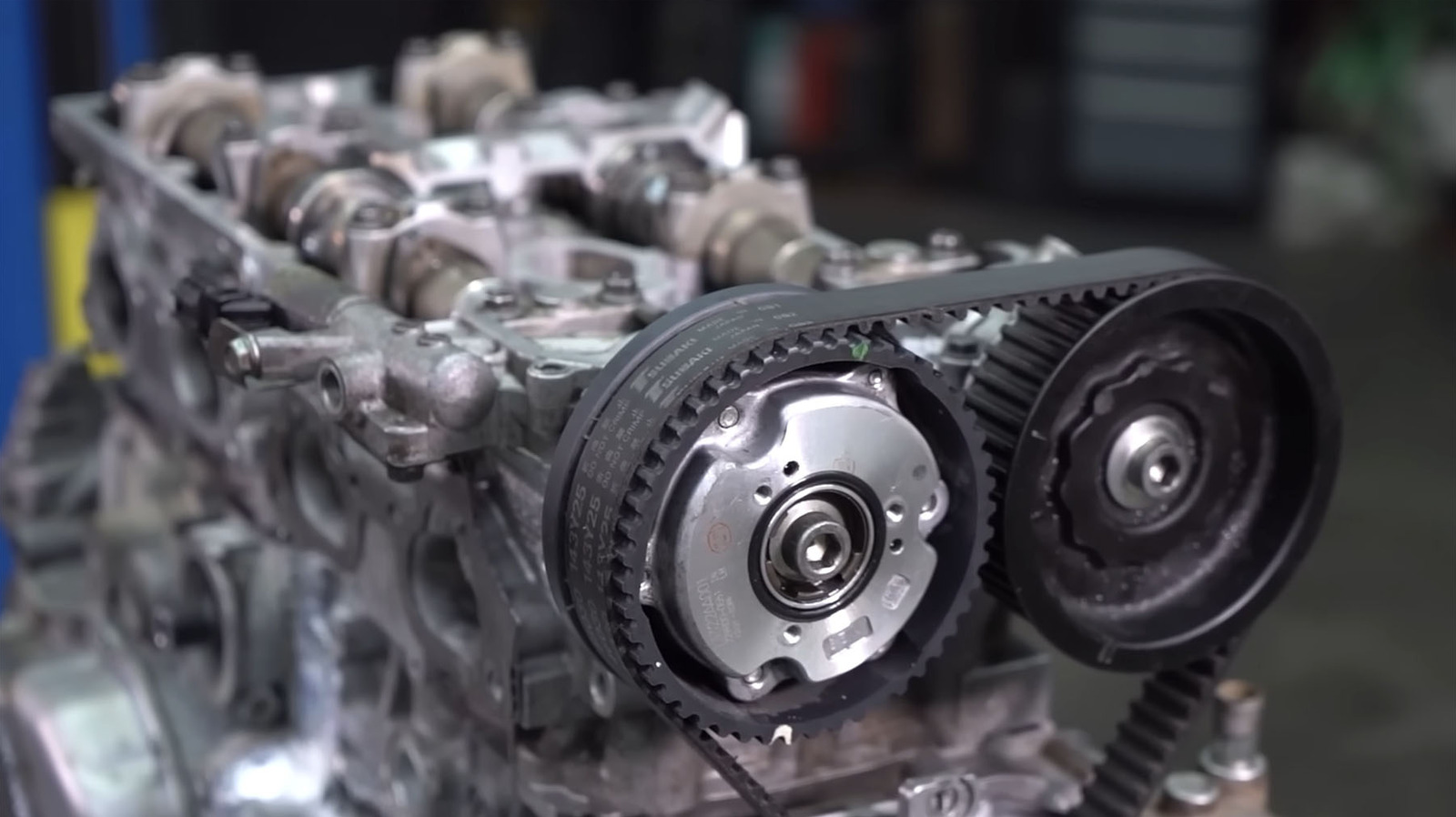

Maedeh Mozneb, a biomedical engineer and project scientist in the Sharma Lab at Cedars-Sinai Medical Center, told me that the ultimate goal is to send “avatars” of astronauts to space by taking their stem cells and creating 3D tissue cultures called organoids that represent different parts of their body — yes, miniature hearts, kidneys, and even brains made from Earth-dwelling humans. From there, scientists can determine personalized countermeasures such as workout plans or supplements tailored to each astronaut’s needs, before they actually end up in space.

The hope, for space medicine physicians like Pandya, is that in a spacefaring future, all medical disciplines, from neurology to radiology, will be represented in space medicine.

Space medicine research and practice isn’t cheap. “I often get asked,” said Pandya, “‘Why are you spending money on space health when we have all of these problems on Earth?’” But that’s the wrong way to think about it, she said.

Research conducted in space has already improved health on this planet. Advances in digital imaging for moon photography during the 1972 Apollo 17 mission later played a crucial role in CT scans and MRIs. Remote health monitoring tools designed for astronauts in space are now widely used in hospitals.

Space medicine research will also allow more people to go to space. In 2023, Pandya’s team demonstrated the safety and functionality of a continuous glucose monitor in the spaceflight environment. This could eventually allow diabetics to check their blood sugar in space. It has implications for current astronauts, who can develop insulin resistance and pre-diabetes symptoms in longer-duration spaceflights. The child diagnosed with Type 1 diabetes who wants to be an astronaut may actually have the chance to live out their dream now, and studying how the body metabolizes glucose in space helps us better understand health on Earth.

Then there are the diseases that take decades to unfold. Muscle loss in space can help scientists better understand how to treat conditions like Duchenne muscular dystrophy. On Earth, neurodegenerative diseases like Alzheimer’s often aren’t apparent until a person is in their late 60s.

In microgravity, said Shelby Giza, the director of business development at Space Tango, a company that facilitates automated research and development in microgravity conditions, “you can see that kind of disease output in a matter of weeks.” Research on these conditions can be conducted much faster — and hopefully accelerate the pace of medical breakthroughs.

The same can be said for cancer. Not all radiation exposures are created equal, and susceptibility to the harmful effects of radiation varies between individuals. Since the ISS is within the protection of Earth’s magnetosphere, it’s not the best comparison to the elevated radiation levels astronauts would face on Mars.

According to former NASA astronaut and biologist Kate Rubins, most astronauts are healthy people in their 30s and 40s, an age when cancer typically doesn’t develop. Scientists must track astronauts for decades after their last spaceflight to see if cancer or other adverse health conditions occur. NASA’s Lifetime Surveillance of Astronaut Health program, which is voluntary for former astronauts and not specific to cancer alone, monitors the health status of people like Kelly and Rubins for the rest of their lives.

Exposure to space radiation is linked to developing cancer and degenerative diseases. To mitigate the risk of developing fatal cancers, NASA currently limits astronauts’ spaceflight radiation exposure to 600 millisieverts (mSv) — roughly the equivalent of 60 CT scans of the torso and pelvis — over the course of their entire career. A 2023 NASA white paper estimates that a healthy astronaut will have a 33 percent increased risk of dying from cancer in their lifetime after a 1,000-day mission to Mars.
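The CT scan comparison can be checked with simple division. The sketch below uses only the two figures cited above. The per-scan dose is what that comparison implies, not an independent measurement, and the helper function is a hypothetical name used for illustration.

```python
# Back-of-the-envelope check of the career radiation limit comparison.
# Both constants come from the article; nothing here is an official NASA model.
CAREER_LIMIT_MSV = 600      # career exposure limit cited above
CT_SCAN_EQUIVALENTS = 60    # the article's comparison: ~60 torso-and-pelvis CT scans

# Dose per scan implied by the comparison (an inference, not a measured value).
implied_msv_per_scan = CAREER_LIMIT_MSV / CT_SCAN_EQUIVALENTS


def fraction_of_career_limit(dose_msv: float) -> float:
    """Share of the career limit consumed by a given cumulative dose."""
    return dose_msv / CAREER_LIMIT_MSV


print(f"Implied dose per scan: {implied_msv_per_scan:.0f} mSv")
print(f"A 300 mSv dose uses {fraction_of_career_limit(300):.0%} of the limit")
```

In other words, the comparison assumes about 10 mSv per scan, and any mission's cumulative dose can be read as a fraction of the 600 mSv career budget.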

One of the next big things in space medicine “is probably going to be the development of radiation protection mechanisms,” Aintablian told me. “I do believe that with the amount of emphasis being placed on radiation protection, we’re going to figure out ways to actually protect against significant amounts of radiation for the general public for multiple uses.”

While it’s still relatively early days for the space pharma industry, life science companies are taking note, seeing microgravity as a platform for better drug discovery.

Like fiber optic cables used for telecommunications, some pharmaceuticals are better synthesized in microgravity conditions. Scientists can produce more uniform protein crystals in microgravity, which can improve drug injectability and reduce the need for refrigeration.

Raphael Roettgen, an entrepreneur and the co-founder of space biotech startup Prometheus Life Technologies, told me that organoids — those 3D cell models replicating human organs — grow more cleanly in space without Earth’s gravity weighing them down. Derived from non-embryonic stem cells, these miniature organ models have tremendous potential for personalized medicine.

Roettgen hopes that human space organoids could reduce the need for animal testing in the near term. Eventually, he hopes that new organs could be regenerated for patients needing transplants. Since the new tissue would be derived from the patient’s own stem cells, there would not be a risk of immune rejection, saving transplant patients astronomical costs and immense suffering. He estimates that liver regeneration and transplants from these organoids could become a reality in patients within the next 20 years.

Microgravity is an “expensive tool,” but an important one nonetheless, said Mozneb, who studies the effects of low Earth orbit on stem cell differentiation. She hopes increasing commercialization and new technologies will significantly decrease the cost of launching experiments into orbit over the next 10 years.

What we already know about space medicine is a drop in the ocean of what we will discover as more people — astronauts and otherwise — venture into space.

“It’s like if you were studying genetics back in the ’90s,” Mozneb said. “Everything is a discovery.”
