Technology

AI copyright tool is serving takedown notices to AI-generated Mario images

Generative Artificial Intelligence (Gen AI) tools are now battling each other online. An AI copyright tool is actively serving takedown notices to social media posts containing Mario and other content copyrighted by Nintendo.

AI copyright tool going after AI-generated Mario images

Ever since Gen AI burst on the scene, AI-generated images and videos have been flooding the internet. Social media users are churning out a lot of content using Gen AI tools, and a lot of the imagery appears to ignore copyright laws.

An AI copyright tool is now going after AI-generated content on X (formerly Twitter). Several dozen posts on X have reportedly been taken down. Most of these posts contained AI-generated images of Mario.

According to The Verge, a company called Tracer is using AI to identify the images and serve takedown notices on behalf of Nintendo. The publication posted an AI-generated picture of Mario holding a beer and a cigarette, and sure enough, it was taken down.

The Verge’s Tom Warren received an email shortly after the image was taken down. It indicated a Digital Millennium Copyright Act (DMCA) notice was issued to X. The person serving the notice was “customer success manager” Ben Arzen of Tracer.

Tracer is a relatively new company that offers AI-powered services to companies. The company’s AI helps to identify trademark and copyright violations online.

AI tools are also targeting fan art posts

X introduced Grok, its in-house Gen AI, a few months ago. Grok is a multi-purpose Gen AI tool that can generate text and images. Grok rivals ChatGPT, Midjourney, DALL-E, and other similar Gen AI tools.

It appears the AI copyright tool is predominantly active on X. Moreover, so far, only Nintendo appears to be going after content that the company considers copyright infringement.

Well guys it finally happened#GROK2 made content that Nintendo declared illegal

They took down two of the photos i made with grok and posted depicting Luigi as an IDF soldier for Israel and same with Waluigi.

Elon’s twitter is a dumpster fire😂 pic.twitter.com/soYncGQM6H

— Opie – NAFO rep. for the Ope Society (@TheMidwestFella) September 20, 2024

Some reports suggest the AI tool serving takedown notices is also targeting fan art. Needless to say, this is quite concerning because fan art is content that’s created by individuals and not generated using AI.

Regardless, it might be difficult for an AI tool to make the distinction between user-created and AI-generated art. Moreover, Nintendo has always been very aggressive while dealing with copyright issues. Hence, it makes sense Nintendo hired one of the first companies to use AI to go after AI-generated content.

Servers computers

20U and 30U Racks

Closed racks in 20U and 30U sizes, W600 D900/1100 mm, are a solution for your rack-mount equipment needs.

For reference:

1. 20/30U is the rack height. “U” is the internationally used unit of equipment height and the basis for sizing a rack. 1U = 44.45 mm (about 44 mm).

2. W600 is the rack width, 600 mm (60 cm). Inside are 19″ (inch) rails, which is the international standard equipment width. If a device is described as “Rack Mount,” its width MUST be 19″.

3. D900/1100 mm is the depth of the rack. There is no fixed standard for depth: some devices are only 300 mm deep, while servers are typically around 700 mm deep.
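The sizing rules in the list above can be turned into a quick check. The following is a minimal sketch, assuming the standard rack unit of 1.75 inches (44.45 mm); the function names `rack_height_mm` and `fits` are made up for illustration, and the check ignores cable clearance and airflow, which a real installation would need to allow for.

```python
RACK_UNIT_MM = 44.45  # 1U = 1.75 in = 44.45 mm


def rack_height_mm(units):
    """Mounting height, in millimetres, of a rack with the given number of U."""
    return units * RACK_UNIT_MM


def fits(rack_units, rack_depth_mm, devices):
    """Check a list of (height_in_U, depth_in_mm) devices against a rack.

    Hypothetical helper: it only compares total height and per-device depth,
    and leaves out cabling space, rails, and ventilation gaps.
    """
    total_units = sum(height for height, _ in devices)
    deepest = max((depth for _, depth in devices), default=0)
    return total_units <= rack_units and deepest <= rack_depth_mm
```

For example, `fits(20, 900, [(2, 700), (1, 300)])` checks a 2U server that is 700 mm deep and a 1U device that is 300 mm deep against a 20U rack with 900 mm of depth.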

Closed racks are used mainly to secure installed electronic equipment: not only to prevent theft but, above all, to keep configured settings from being changed by meddling hands.

Feel free to get in touch to discuss your rack requirements.

WA: 0812 991 9892 (WILLIAM)


Technology

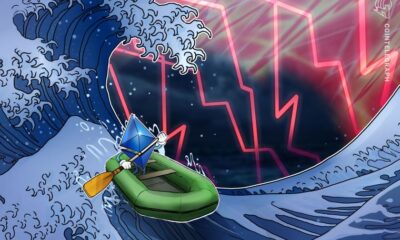

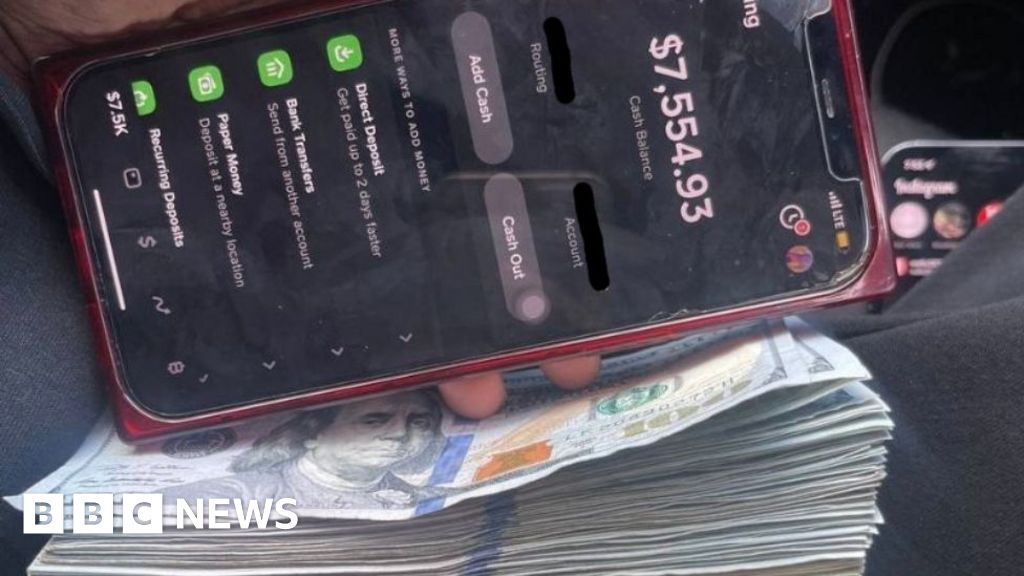

FTX advisor and Alameda CEO Caroline Ellison gets two years in prison

A US district court judge sentenced Caroline Ellison, the former advisor and ex-girlfriend to the convicted crypto fraudster and FTX founder Sam Bankman-Fried, to two years in prison.

Ellison was sentenced for her role in the $8 billion fraud committed by the FTX crypto exchange, for which Bankman-Fried was sentenced to 25 years in prison back in March. Ellison will also have to serve three years of supervised release once she’s finished her prison sentence.

Ellison pled guilty at the end of 2022, just as Bankman-Fried was being extradited to the US from the Bahamas. US Securities and Exchange Commission (SEC) Director of Enforcement Sanjay Wadhwa said following Ellison’s plea that she and FTX co-founder Gary Wang “were active participants in a scheme to conceal material information from FTX investors.”

Ellison was also the former chief executive officer of FTX’s sister company Alameda Research. Prosecutors said she diverted FTX customers’ funds onto Alameda’s books to hide risks from their clients. Ellison testified against Bankman-Fried, making her a key witness in his criminal fraud trial.

Prosecutors also got Bankman-Fried’s house arrest and bail revoked when a judge determined the FTX founder had tried to hinder Ellison’s testimony last year. In 2023, Bankman-Fried tried to message FTX’s general counsel, who was identified only as “Witness-1,” on Signal and email in an attempt to influence testimony.

Nine months later, Bankman-Fried shared material that prosecutors said was an attempt to damage her reputation, especially among prospective jurors. The judge agreed both instances merited Bankman-Fried’s arrest and jailing while he awaited trial. Bankman-Fried is currently serving his 25-year sentence in a federal prison in Brooklyn while he appeals his conviction.

Ellison issued a statement before her sentencing apologizing for her crimes to the people she and her former firm defrauded. Prosecutors did not recommend a sentence and characterized her cooperation with investigators as “exemplary” in a memo to the judge.

“Not a day goes by that I don’t think of the people I hurt,” Ellison said in court. “I am deeply ashamed of what I have done.”

Technology

AutoToS makes LLM planning fast, accurate and inexpensive


Large language models (LLMs) have shown promise in solving planning and reasoning tasks by searching through possible solutions. However, existing methods can be slow, computationally expensive and provide unreliable answers.

Researchers from Cornell University and IBM Research have introduced AutoToS, a new technique that combines the planning power of LLMs with the speed and accuracy of rule-based search algorithms. AutoToS eliminates the need for human intervention and significantly reduces the computational cost of solving planning problems. This makes it a promising technique for LLM applications that must reason over large solution spaces.

Thought of Search

There is a growing interest in using LLMs to handle planning problems, and researchers have developed several techniques for this purpose. The more successful techniques, such as Tree of Thoughts, use LLMs as a search algorithm that can validate solutions and propose corrections.

While these approaches have demonstrated impressive results, they face two main challenges. First, they require numerous calls to LLMs, which can be computationally expensive, especially when dealing with complex problems with thousands of possible solutions. Second, they do not guarantee that the LLM-based algorithm qualifies for “completeness” and “soundness.” Completeness ensures that if a solution exists, the algorithm will eventually find it, while soundness guarantees that any solution returned by the algorithm is valid.

Thought of Search (ToS) offers an alternative approach. ToS leverages LLMs to generate code for two key components of search algorithms: the successor function and the goal function. The successor function determines how the search algorithm explores different nodes in the search space, while the goal function checks whether the search algorithm has reached the desired state. These functions can then be used by any offline search algorithm to solve the problem. This approach is much more efficient than keeping the LLM in the loop during the search process.
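To make the two components concrete, here is a minimal hand-written sketch for the 24 Game. It is not the code from the ToS work, which has an LLM generate these functions; the names `successors`, `is_goal` and `bfs`, and the choice to represent a state as a sorted tuple of exact fractions, are assumptions made for illustration.

```python
from collections import deque
from fractions import Fraction
from itertools import combinations


def successors(state):
    """Yield each state reachable by combining two numbers with one operation."""
    for i, j in combinations(range(len(state)), 2):
        a, b = state[i], state[j]
        rest = [x for k, x in enumerate(state) if k not in (i, j)]
        results = {a + b, a * b, a - b, b - a}
        if b != 0:
            results.add(a / b)
        if a != 0:
            results.add(b / a)
        for value in results:
            # Sorting makes states that differ only in order compare equal.
            yield tuple(sorted(rest + [value]))


def is_goal(state):
    """The goal is a single remaining number equal to 24."""
    return len(state) == 1 and state[0] == 24


def bfs(numbers, successors, is_goal):
    """Plain breadth-first search; no model calls happen during the search."""
    start = tuple(sorted(Fraction(n) for n in numbers))
    frontier = deque([start])
    seen = {start}
    while frontier:
        state = frontier.popleft()
        if is_goal(state):
            return True
        for nxt in successors(state):
            if nxt not in seen:
                seen.add(nxt)
                frontier.append(nxt)
    return False
```

Calling `bfs([4, 7, 8, 8], successors, is_goal)` returns `True`, since (7 - 8 / 8) * 4 = 24, while `bfs([1, 1, 1, 1], successors, is_goal)` returns `False`. Exact fractions are used so that puzzles needing intermediate non-integer values, such as 1, 5, 5, 5, are not lost to floating-point rounding.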

“Historically, in the planning community, these search components were either manually coded for each new problem or produced automatically via translation from a description in a planning language such as PDDL, which in turn was either manually coded or learned from data,” Michael Katz, principal research staff member at IBM Research, told VentureBeat. “We proposed to use the large language models to generate the code for the search components from the textual description of the planning problem.”

The original ToS technique showed impressive progress in addressing the soundness and completeness requirements of search algorithms. However, it required a human expert to provide feedback on the generated code and help the model refine its output. This manual review was a bottleneck that reduced the speed of the algorithm.

Automating ToS

“In [ToS], we assumed a human expert in the loop, who could check the code and feedback the model on possible issues with the generated code, to produce a better version of the search components,” Katz said. “We felt that in order to automate the process of solving the planning problems provided in a natural language, the first step must be to take the human out of that loop.”

AutoToS automates the feedback and exception handling process using unit tests and debugging statements, combined with few-shot and chain-of-thought (CoT) prompting techniques.

AutoToS works in multiple steps. First, it provides the LLM with the problem description and prompts it to generate code for the successor and goal functions. Next, it runs unit tests on the goal function and provides feedback to the model if it fails. The model then uses this feedback to correct its code. Once the goal function passes the tests, the algorithm runs a limited breadth-first search to check if the functions are sound and complete. This process is repeated until the generated functions pass all the tests.
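The unit-test feedback step can be sketched roughly as follows. This is an illustrative simplification rather than the researchers' implementation: `fake_llm` is a hypothetical stand-in for a real model call, the test cases are invented, and only the goal-function check is shown (the limited breadth-first search used to check soundness and completeness is left out).

```python
def run_unit_tests(goal_fn, cases):
    """Return readable failure messages; an empty list means all tests passed."""
    failures = []
    for state, expected in cases:
        try:
            actual = goal_fn(state)
        except Exception as exc:  # report crashes as feedback too
            failures.append(f"goal({state}) raised {exc!r}")
            continue
        if actual != expected:
            failures.append(f"goal({state}) returned {actual}, expected {expected}")
    return failures


def refine(generate, cases, max_rounds=5):
    """Ask for a goal function, test it, and feed failures back until it passes."""
    feedback = []
    for calls in range(1, max_rounds + 1):
        goal_fn = generate(feedback)
        feedback = run_unit_tests(goal_fn, cases)
        if not feedback:
            return goal_fn, calls
    raise RuntimeError("no passing candidate within the round limit")


def fake_llm(feedback):
    """Hypothetical model stub: the first answer is buggy, the retry is fixed."""
    if not feedback:
        return lambda state: 24 in state  # bug: ignores leftover numbers
    return lambda state: len(state) == 1 and state[0] == 24


# Invented 24 Game test cases: (state, expected goal result).
CASES = [((24,), True), ((24, 1), False), ((6, 4), False)]
goal_fn, calls = refine(fake_llm, CASES)
```

In this toy run the first candidate fails on the state `(24, 1)`, the failure message is passed back, and the second candidate passes, so `calls` ends up as 2.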

Finally, the validated functions are plugged into a classic search algorithm to perform the full search efficiently.

AutoToS in action

The researchers evaluated AutoToS on several planning and reasoning tasks, including BlocksWorld, Mini Crosswords and 24 Game. The 24 Game is a mathematical puzzle where you are given four integers and must use basic arithmetic operations to create a formula that evaluates to 24. BlocksWorld is a classic AI planning domain where the goal is to rearrange blocks stacked in towers. Mini Crosswords is a simplified crossword puzzle with a 5×5 grid.

They tested various LLMs from different families, including GPT-4o, Llama 2 and DeepSeek Coder. They used both the largest and smallest models from each family to evaluate the impact of model size on performance.

Their findings showed that with AutoToS, all models were able to identify and correct errors in their code when given feedback. The larger models generally produced correct goal functions without feedback and required only a few iterations to refine the successor function. Interestingly, GPT-4o-mini performed surprisingly well in terms of accuracy despite its small size.

“With just a few calls to the language model, we demonstrate that we can obtain the search components without any direct human-in-the-loop feedback, ensuring soundness, completeness, accuracy and nearly 100% accuracy across all models and all domains,” the researchers write.

Compared to other LLM-based planning approaches, ToS drastically reduces the number of calls to the LLM. For example, for the 24 Game dataset, which contains 1,362 puzzles, the previous approach would call GPT-4 approximately 100,000 times. AutoToS, on the other hand, needed only 2.2 calls on average to generate sound search components.

“With these components, we can use the standard BFS algorithm to solve all the 1,362 games together in under 2 seconds and get 100% accuracy, neither of which is achievable by the previous approaches,” Katz said.

AutoToS for enterprise applications

AutoToS can have direct implications for enterprise applications that require planning-based solutions. It cuts the cost of using LLMs and reduces the reliance on manual labor, enabling experts to focus on high-level planning and goal specification.

“We hope that AutoToS can help with both the development and deployment of planning-based solutions,” Katz said. “It uses the language models where needed—to come up with verifiable search components, speeding up the development process and bypassing the unnecessary involvement of these models in the deployment, avoiding the many issues with deploying large language models.”

ToS and AutoToS are examples of neuro-symbolic AI, a hybrid approach that combines the strengths of deep learning and rule-based systems to tackle complex problems. Neuro-symbolic AI is gaining traction as a promising direction for addressing some of the limitations of current AI systems.

“I don’t think that there is any doubt about the role of hybrid systems in the future of AI,” Harsha Kokel, research scientist at IBM, told VentureBeat. “The current language models can be viewed as hybrid systems since they perform a search to obtain the next tokens.”

While ToS and AutoToS show great promise, there is still room for further exploration.

“It is exciting to see how the landscape of planning in natural language evolves and how LLMs improve the integration of planning tools in decision-making workflows, opening up opportunities for intelligent agents of the future,” Kokel and Katz said. “We are interested in general questions of how the world knowledge of LLMs can help improve planning and acting in real-world environments.”


Servers computers

HP Blade Server

Technology

Commvault acquires data backup provider Clumio

It must be M&A season.

Commvault, a publicly traded data protection and management software company, today announced that it intends to acquire data backup and recovery provider Clumio for an undisclosed sum.

The deal is expected to close in early October. Commvault says it’s not material to its earnings and that it’ll be funded with cash on hand.

Clumio, headquartered in Santa Clara, California, was founded in 2017 by Poojan Kumar, Kaustubh Patil, and Woon Ho Jung. It largely serves to protect AWS workloads, though it introduced support for Microsoft 365 back in 2020.

As of February, Clumio was notching double-digit millions of dollars in annual recurring revenue, up 400% from 2022 to 2023, and acquiring customers like Atlassian, Duolingo, and LexisNexis. The firm raised $261 million in venture capital from investors including Index Ventures, NewView Capital, and Sutter Hill Ventures prior to Tuesday’s exit.

“At Clumio, our vision was to build a platform that could scale quickly to protect the world’s largest and most complex data sets,” Kumar, who was recently appointed Clumio’s chairman after stepping down as CEO in June, said in a statement. “Joining hands with Commvault allows us to get our cloud-native offerings to AWS customers on a global scale.”

Commvault CEO Sanjay Mirchandani sees Clumio complementing Commvault’s existing “cyber resilience” tools for software built on AWS. Now, he says, Commvault can offer enterprises expanded choice to protect and recover their data and cloud-native apps.

AWS-dependent or not, the data backup and recovery market is massive, which no doubt factored into Commvault’s M&A decision. According to market analytics firm KBV Research, the global data backup and recovery sector was worth $12.9 billion in 2023, growing at a compound annual growth rate of 10.9% from 2017 to last year.

Businesses face increasing threats related to ransomware. There’s also the issue of data center disasters like the fire that hit France’s OVH in 2021, leading to significant data loss. In some countries, data management-related regulations like the EU AI Act are coming into force, many with strict data retention and provenance stipulations.

“In the event of an outage or cyberattack, rapidly getting back to business is paramount to our customers,” Mirchandani said in a press release. “Combining Commvault’s industry-leading cyber resilience capabilities with Clumio’s exceptional talent and technology advances our recovery offerings, strengthens our platform, and reinforces our position as a leading software-as-a-service provider for cyber resilience.”

The news comes on the heels of Commvault’s purchase of cloud app resilience company Appranix earlier this year, and after Commvault’s expectation-beating Q1 results.

Commvault, originally formed in 1988 as a development group in Bell Labs focused on data management, backup, and recovery, was designated a business unit of AT&T and spun off as its own enterprise in the late ’90s. Commvault went public in 2006, at which point it moved its corporate headquarters from Oceanport to Tinton Falls, New Jersey.

Commvault’s other acquisitions to date include software-defined storage startup Hedvig and cybersecurity company TrapX.

Servers computers

The BEST Homelab Server for the Money – Dell PowerEdge R730

Showcasing my Dell R730 server that I use in my homelab.


-

Womens Workouts1 day ago

Womens Workouts1 day ago3 Day Full Body Women’s Dumbbell Only Workout

-

News6 days ago

News6 days agoYou’re a Hypocrite, And So Am I

-

Sport5 days ago

Sport5 days agoJoshua vs Dubois: Chris Eubank Jr says ‘AJ’ could beat Tyson Fury and any other heavyweight in the world

-

Technology7 days ago

Technology7 days agoWould-be reality TV contestants ‘not looking real’

-

News2 days ago

News2 days agoOur millionaire neighbour blocks us from using public footpath & screams at us in street.. it’s like living in a WARZONE – WordupNews

-

Science & Environment5 days ago

Science & Environment5 days ago‘Running of the bulls’ festival crowds move like charged particles

-

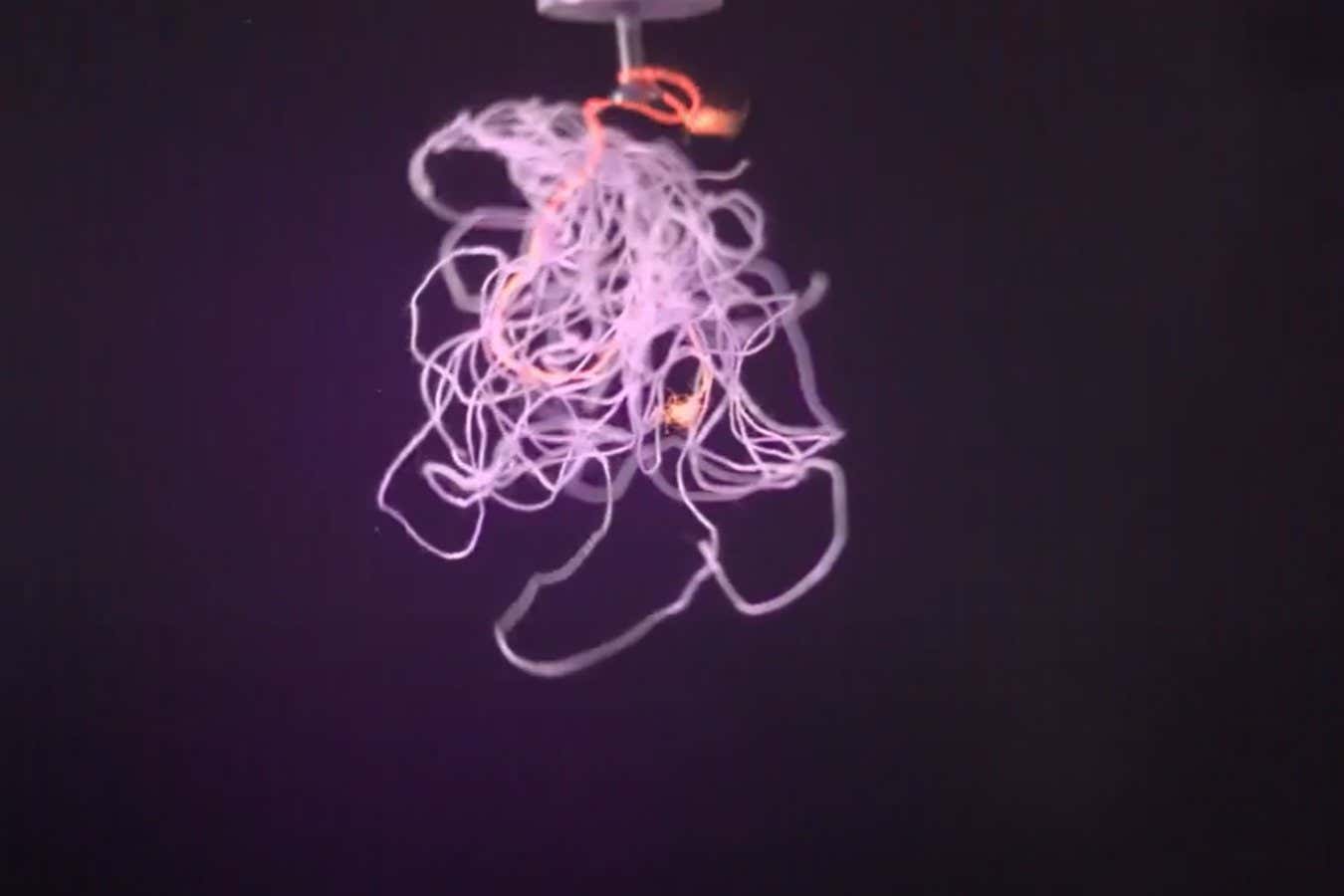

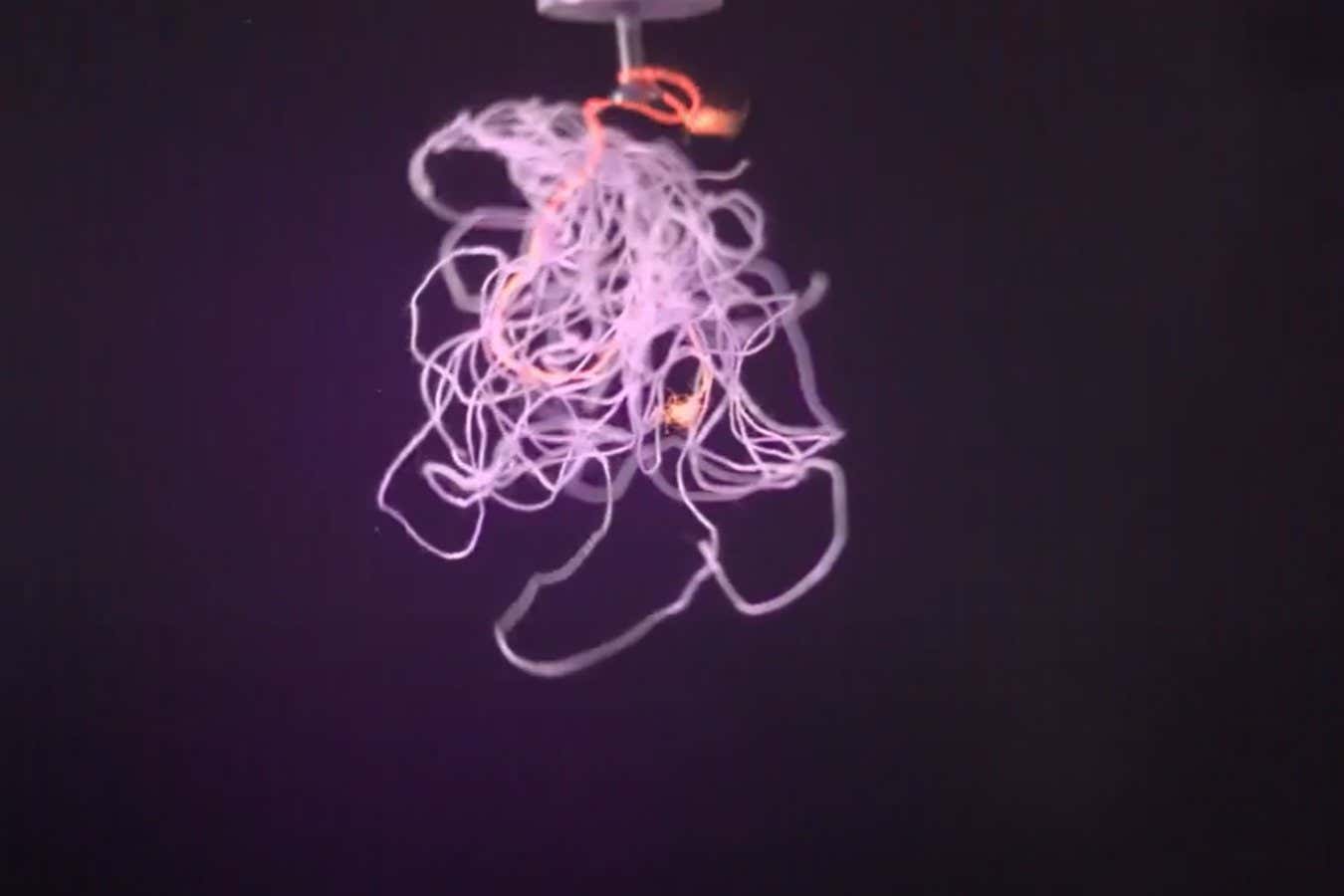

Science & Environment6 days ago

Science & Environment6 days agoHow to unsnarl a tangle of threads, according to physics

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoEthereum is a 'contrarian bet' into 2025, says Bitwise exec

-

Science & Environment6 days ago

Science & Environment6 days agoLiquid crystals could improve quantum communication devices

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoDZ Bank partners with Boerse Stuttgart for crypto trading

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoBitcoin bulls target $64K BTC price hurdle as US stocks eye new record

-

Science & Environment5 days ago

Science & Environment5 days agoQuantum ‘supersolid’ matter stirred using magnets

-

Science & Environment6 days ago

Science & Environment6 days agoMaxwell’s demon charges quantum batteries inside of a quantum computer

-

Science & Environment6 days ago

Science & Environment6 days agoSunlight-trapping device can generate temperatures over 1000°C

-

Science & Environment6 days ago

Science & Environment6 days agoHow to wrap your mind around the real multiverse

-

CryptoCurrency5 days ago

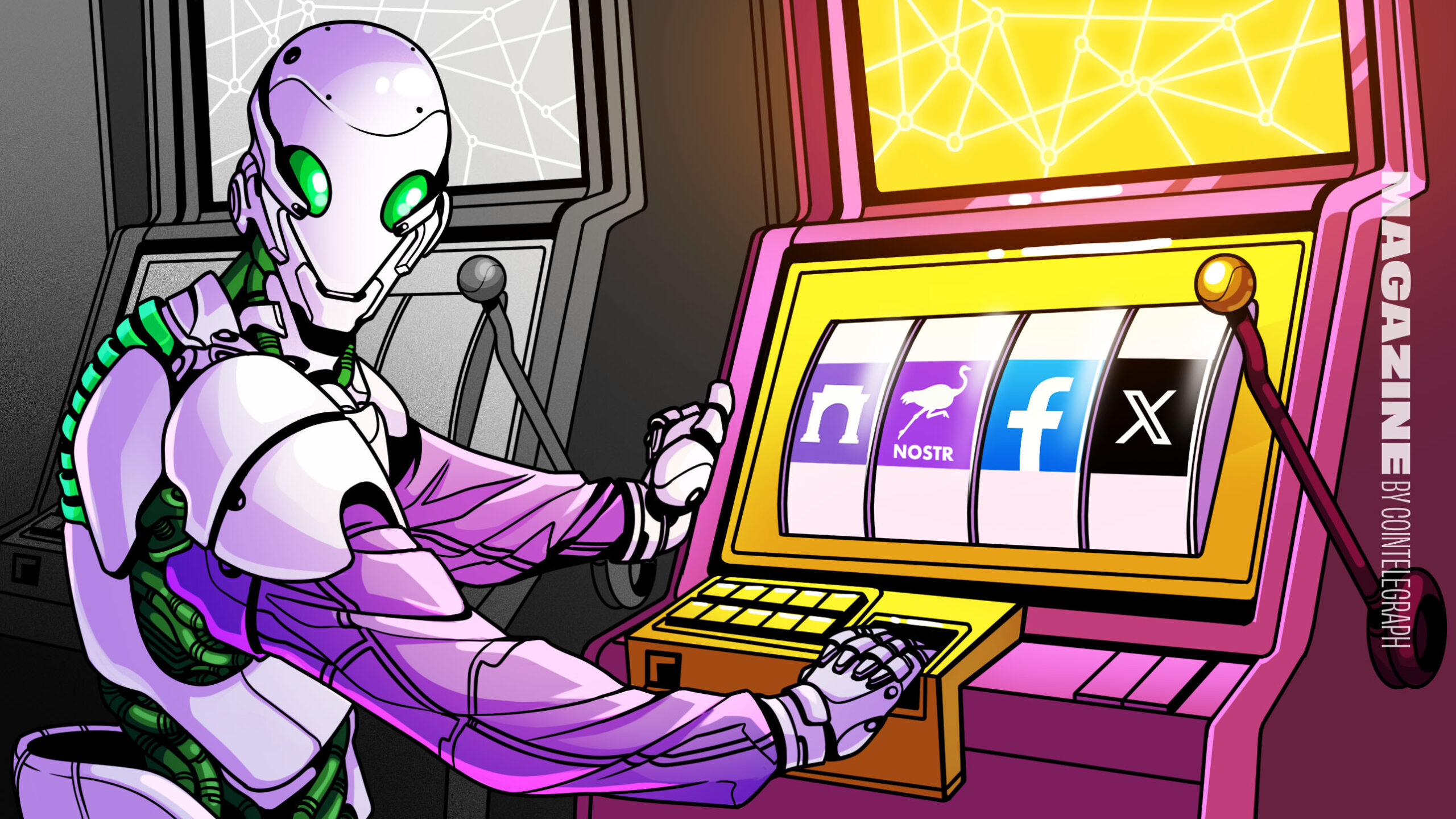

CryptoCurrency5 days agoDorsey’s ‘marketplace of algorithms’ could fix social media… so why hasn’t it?

-

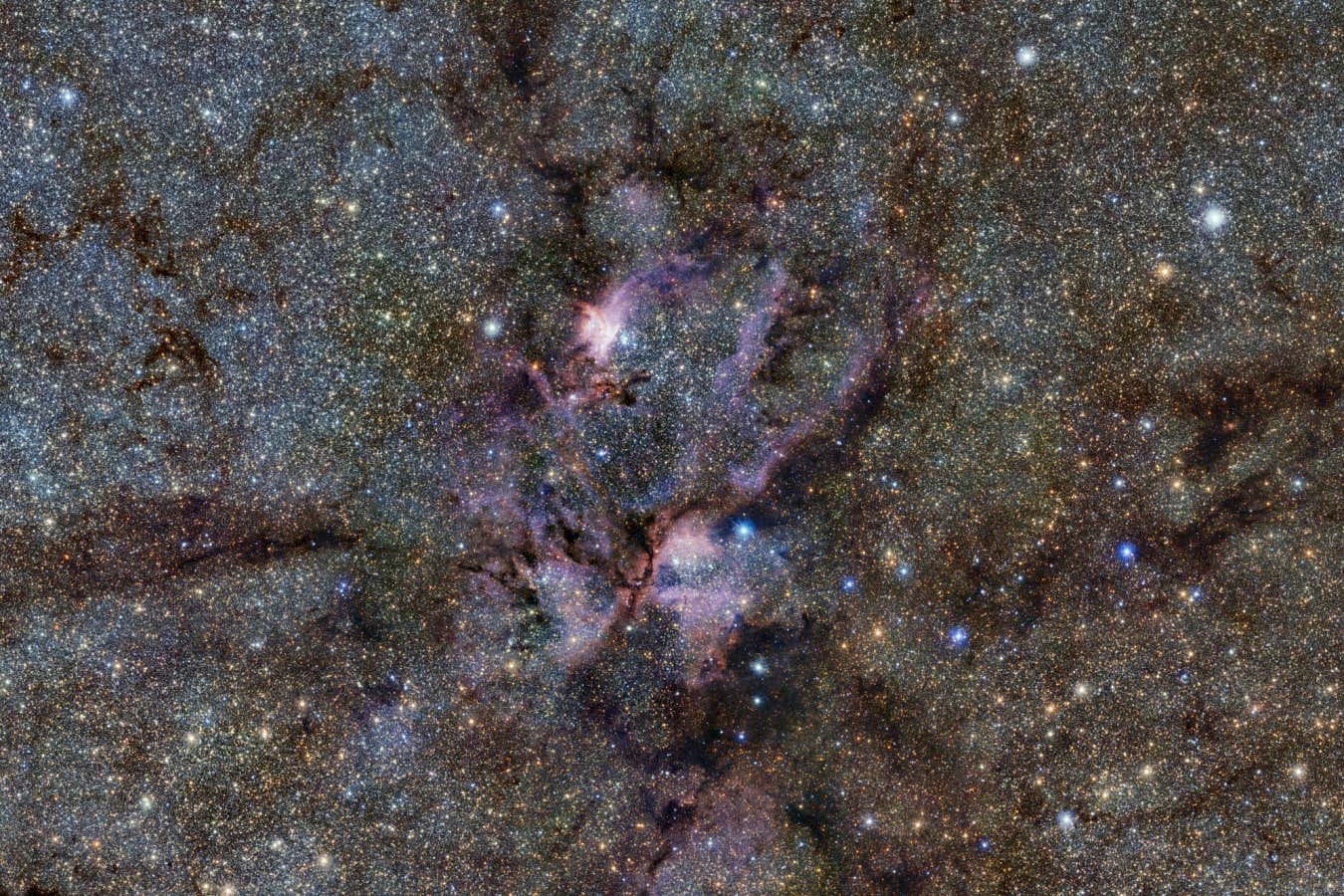

Science & Environment6 days ago

Science & Environment6 days agoWhy this is a golden age for life to thrive across the universe

-

Health & fitness7 days ago

Health & fitness7 days agoThe secret to a six pack – and how to keep your washboard abs in 2022

-

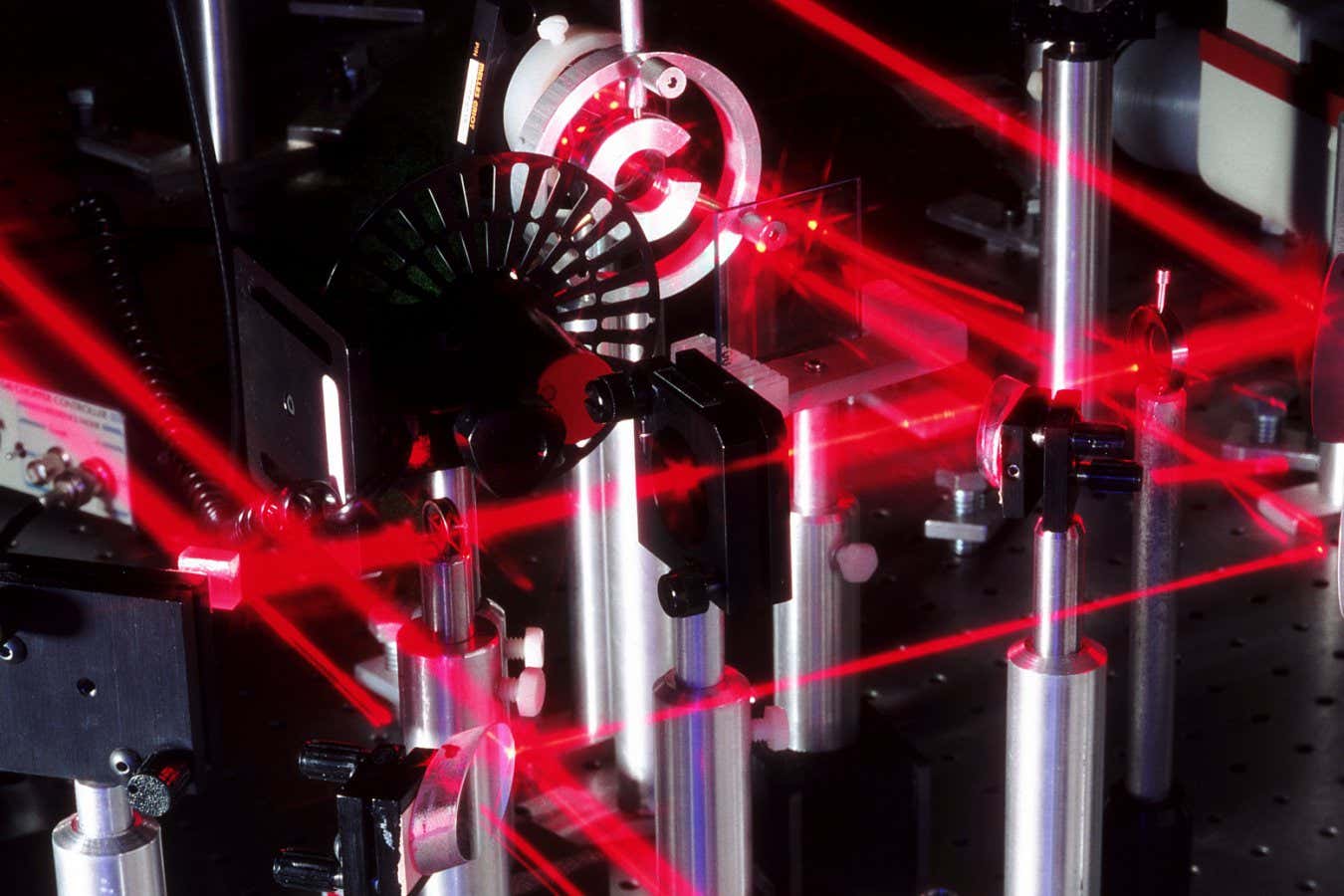

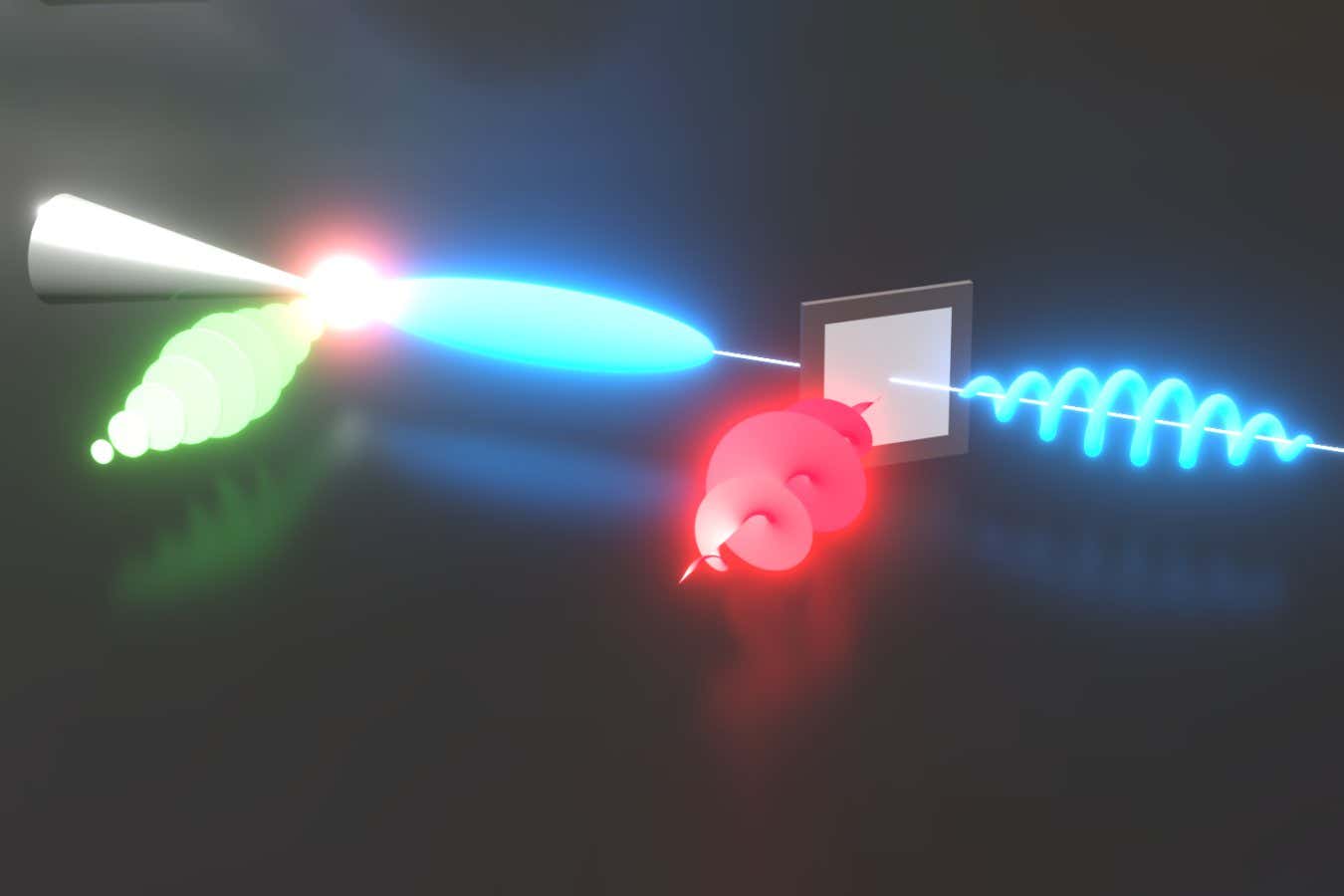

Science & Environment6 days ago

Science & Environment6 days agoLaser helps turn an electron into a coil of mass and charge

-

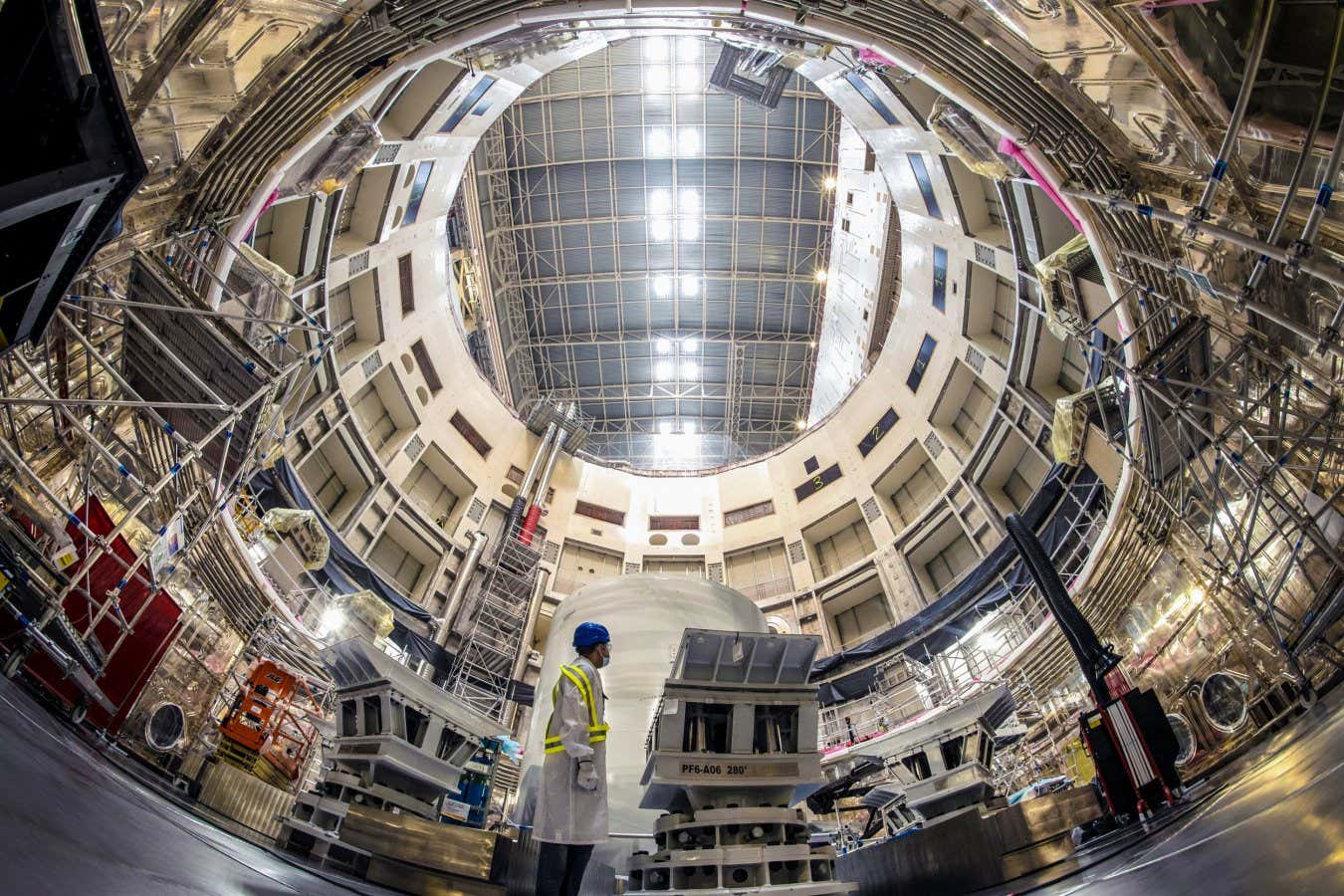

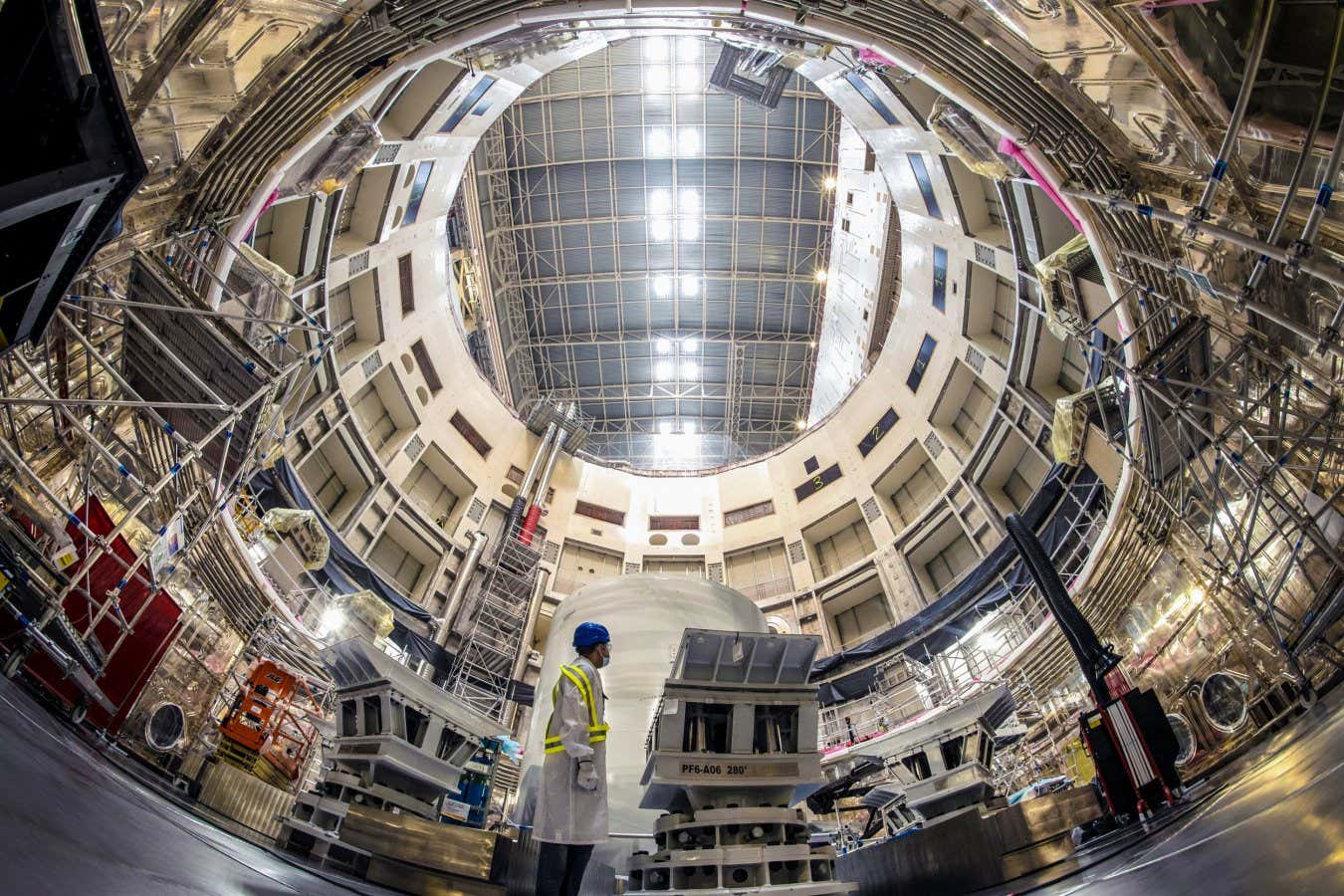

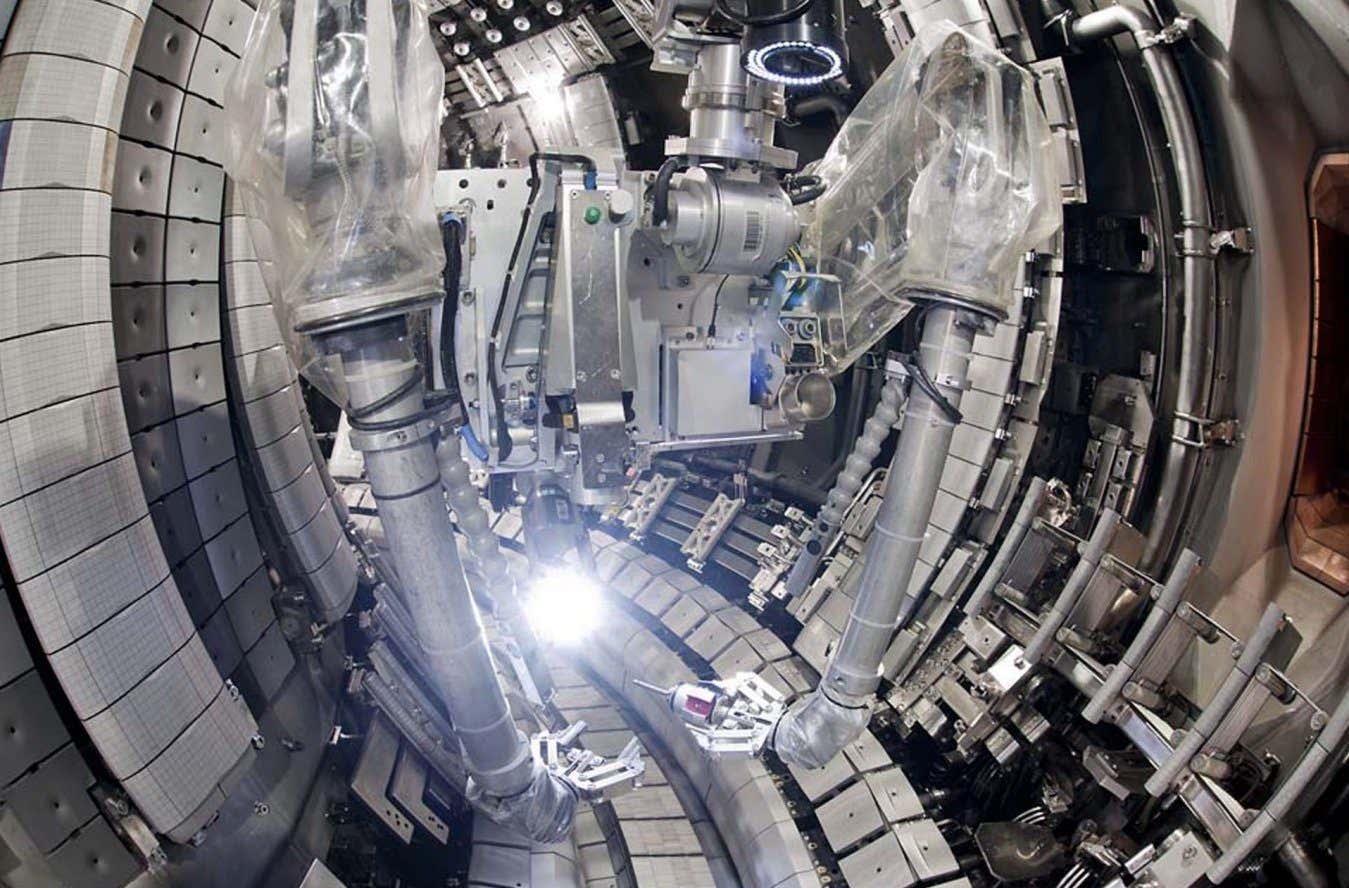

Science & Environment6 days ago

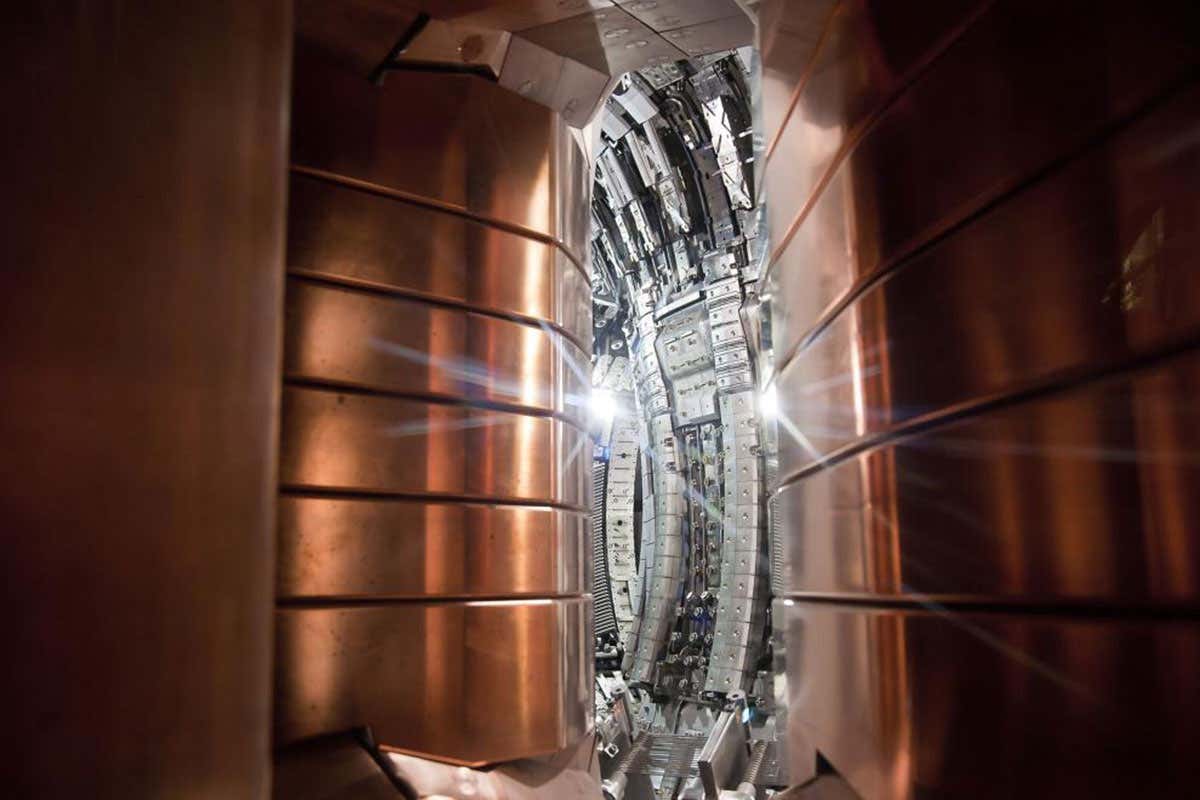

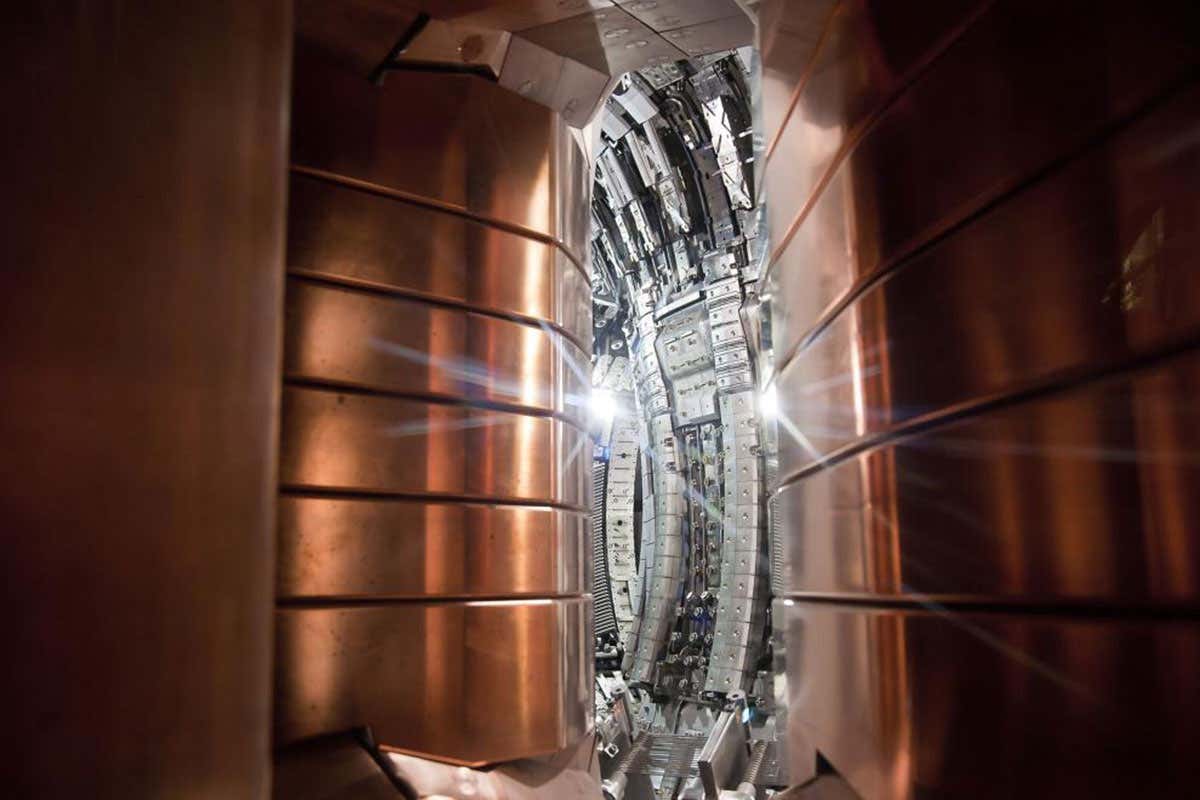

Science & Environment6 days agoITER: Is the world’s biggest fusion experiment dead after new delay to 2035?

-

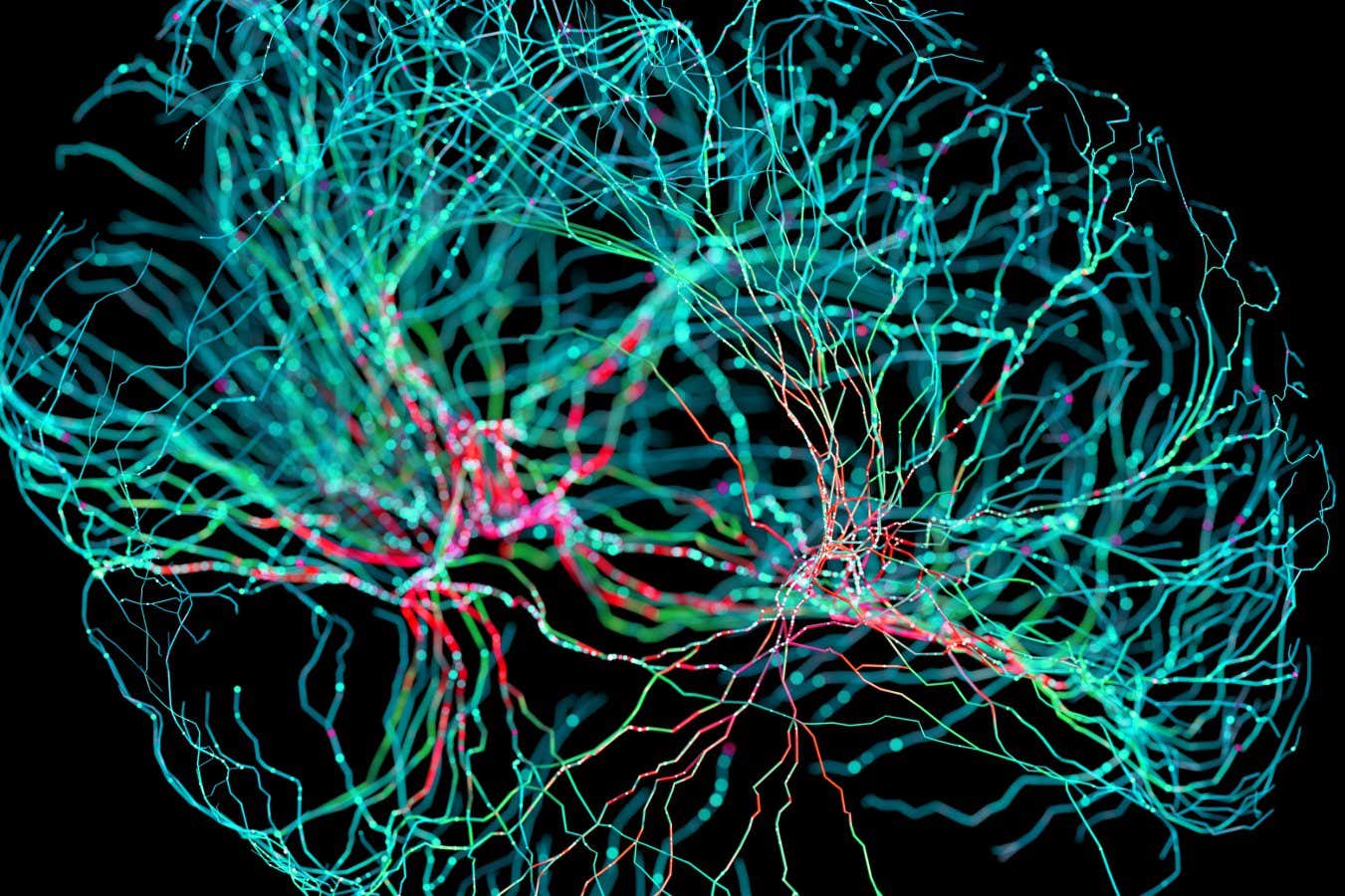

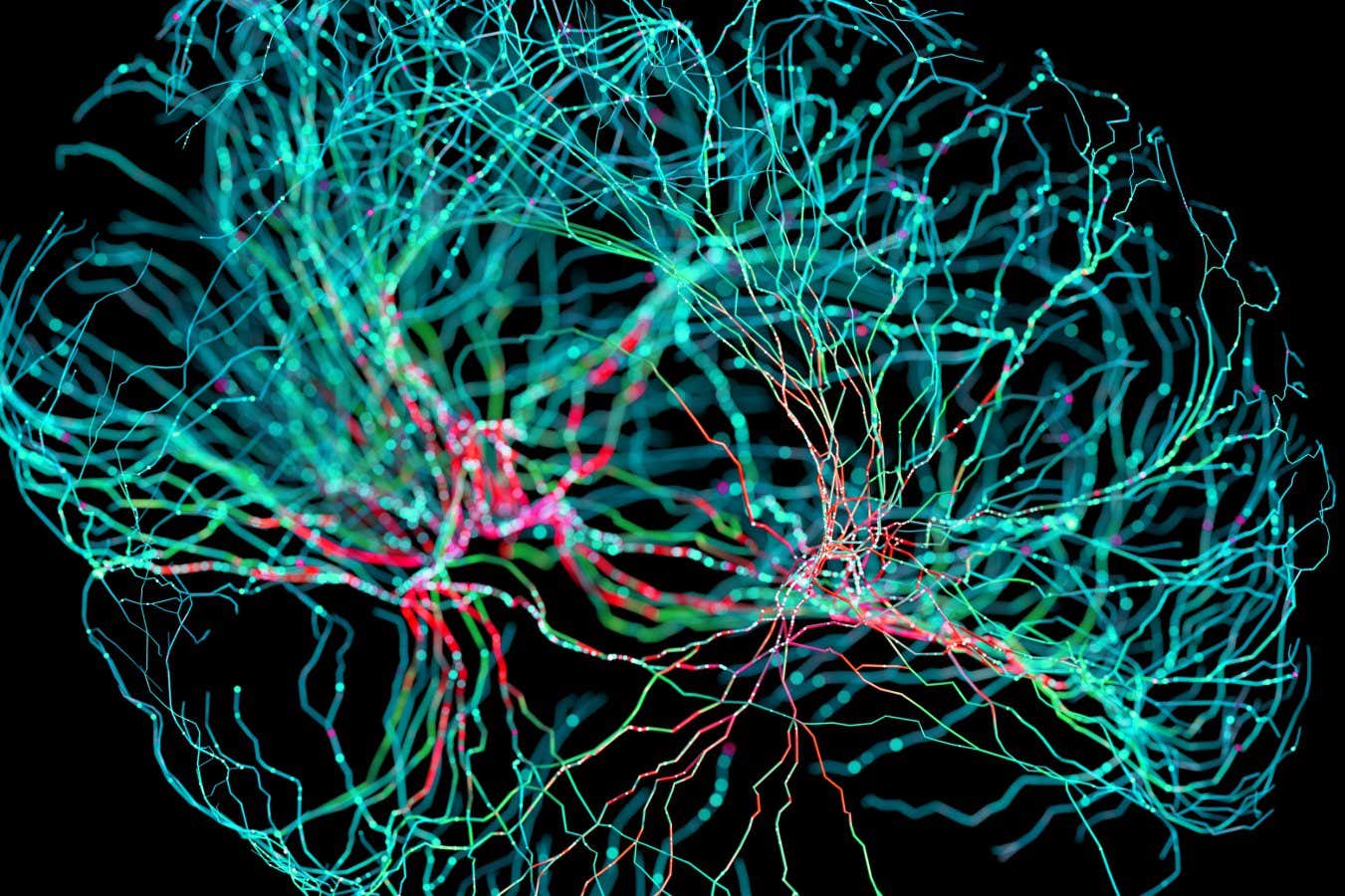

Science & Environment6 days ago

Science & Environment6 days agoNerve fibres in the brain could generate quantum entanglement

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoBitcoin miners steamrolled after electricity thefts, exchange ‘closure’ scam: Asia Express

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoCardano founder to meet Argentina president Javier Milei

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoLow users, sex predators kill Korean metaverses, 3AC sues Terra: Asia Express

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoSEC asks court for four months to produce documents for Coinbase

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoBlockdaemon mulls 2026 IPO: Report

-

Science & Environment2 days ago

Science & Environment2 days agoMeet the world's first female male model | 7.30

-

News5 days ago

News5 days agoIsrael strikes Lebanese targets as Hizbollah chief warns of ‘red lines’ crossed

-

Sport5 days ago

Sport5 days agoUFC Edmonton fight card revealed, including Brandon Moreno vs. Amir Albazi headliner

-

Science & Environment5 days ago

Science & Environment5 days agoHyperelastic gel is one of the stretchiest materials known to science

-

Technology5 days ago

Technology5 days agoiPhone 15 Pro Max Camera Review: Depth and Reach

-

Science & Environment6 days ago

Science & Environment6 days agoQuantum forces used to automatically assemble tiny device

-

News5 days ago

News5 days agoBrian Tyree Henry on voicing young Megatron, his love for villain roles

-

Science & Environment6 days ago

Science & Environment6 days agoTime travel sci-fi novel is a rip-roaringly good thought experiment

-

Science & Environment6 days ago

Science & Environment6 days agoQuantum time travel: The experiment to ‘send a particle into the past’

-

Science & Environment6 days ago

Science & Environment6 days agoPhysicists are grappling with their own reproducibility crisis

-

Science & Environment6 days ago

Science & Environment6 days agoNuclear fusion experiment overcomes two key operating hurdles

-

CryptoCurrency5 days ago

CryptoCurrency5 days ago2 auditors miss $27M Penpie flaw, Pythia’s ‘claim rewards’ bug: Crypto-Sec

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoJourneys: Robby Yung on Animoca’s Web3 investments, TON and the Mocaverse

-

CryptoCurrency5 days ago

CryptoCurrency5 days ago$12.1M fraud suspect with ‘new face’ arrested, crypto scam boiler rooms busted: Asia Express

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoRedStone integrates first oracle price feeds on TON blockchain

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoVitalik tells Ethereum L2s ‘Stage 1 or GTFO’ — Who makes the cut?

-

Womens Workouts4 days ago

Womens Workouts4 days agoBest Exercises if You Want to Build a Great Physique

-

Womens Workouts4 days ago

Womens Workouts4 days agoEverything a Beginner Needs to Know About Squatting

-

News5 days ago

News5 days agoChurch same-sex split affecting bishop appointments

-

Politics5 days ago

Politics5 days agoLabour MP urges UK government to nationalise Grangemouth refinery

-

Science & Environment5 days ago

Science & Environment5 days agoHow one theory ties together everything we know about the universe

-

Science & Environment1 week ago

Science & Environment1 week agoCaroline Ellison aims to duck prison sentence for role in FTX collapse

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoHelp! My parents are addicted to Pi Network crypto tapper

-

Science & Environment5 days ago

Science & Environment5 days agoFuture of fusion: How the UK’s JET reactor paved the way for ITER

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoSEC sues ‘fake’ crypto exchanges in first action on pig butchering scams

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoCertiK Ventures discloses $45M investment plan to boost Web3

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoVonMises bought 60 CryptoPunks in a month before the price spiked: NFT Collector

-

CryptoCurrency5 days ago

CryptoCurrency5 days ago‘Silly’ to shade Ethereum, the ‘Microsoft of blockchains’ — Bitwise exec

-

CryptoCurrency5 days ago

CryptoCurrency5 days ago‘No matter how bad it gets, there’s a lot going on with NFTs’: 24 Hours of Art, NFT Creator

-

Business5 days ago

Thames Water seeks extension on debt terms to avoid renationalisation

-

Business5 days ago

How Labour donor’s largesse tarnished government’s squeaky clean image

-

News5 days ago

News5 days agoBrian Tyree Henry on voicing young Megatron, his love for villain roles

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoCoinbase’s cbBTC surges to third-largest wrapped BTC token in just one week

-

News4 days ago

News4 days agoBangladesh Holds the World Accountable to Secure Climate Justice

-

News2 days ago

News2 days agoFour dead & 18 injured in horror mass shooting with victims ‘caught in crossfire’ as cops hunt multiple gunmen

-

Politics7 days ago

Politics7 days agoTrump says he will meet with Indian Prime Minister Narendra Modi next week

-

Technology5 days ago

Technology5 days agoFivetran targets data security by adding Hybrid Deployment

-

Money6 days ago

Money6 days agoWhat estate agents get up to in your home – and how they’re being caught

-

Science & Environment6 days ago

Science & Environment6 days agoA new kind of experiment at the Large Hadron Collider could unravel quantum reality

-

Fashion Models5 days ago

Fashion Models5 days agoMixte

-

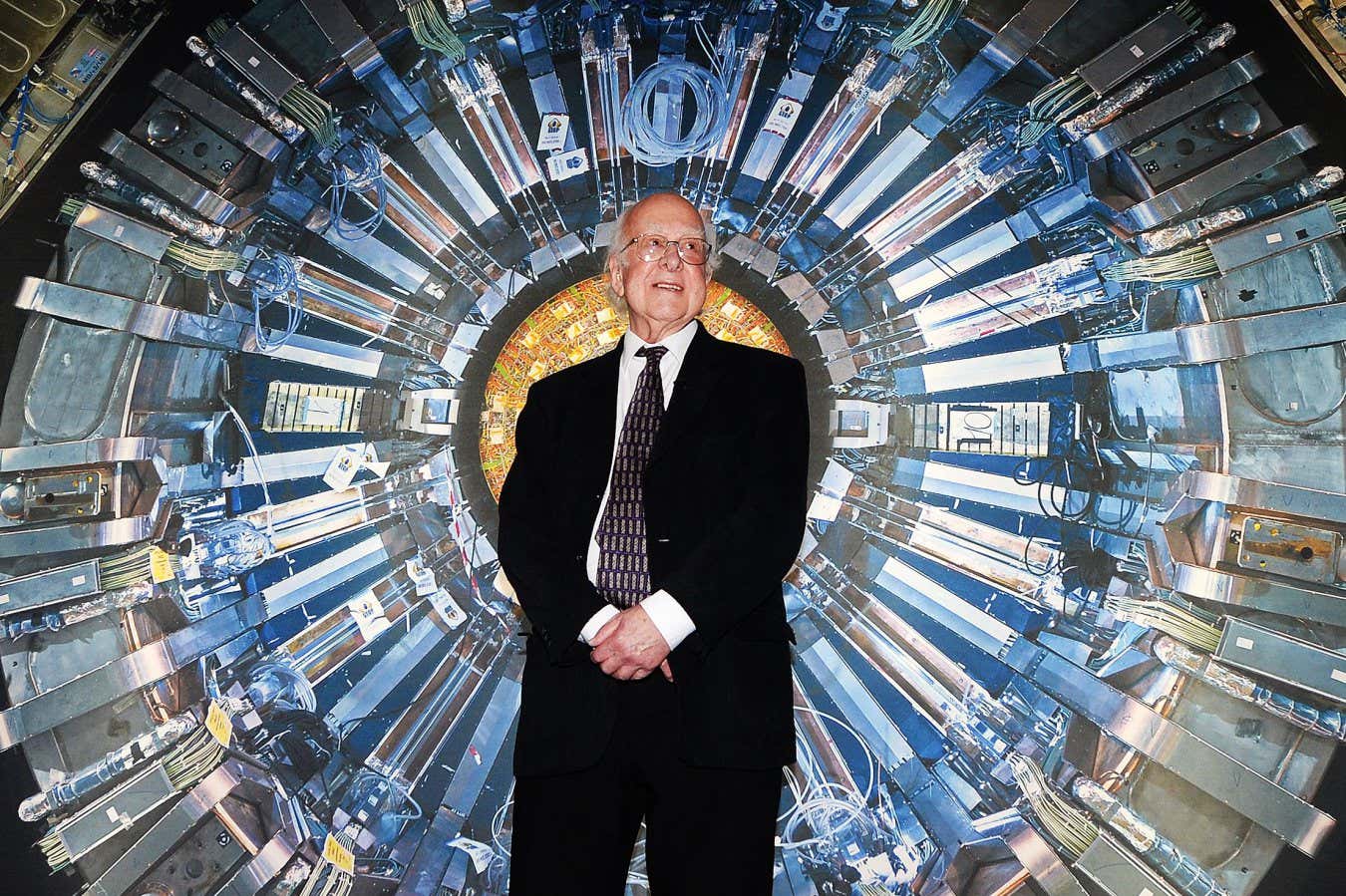

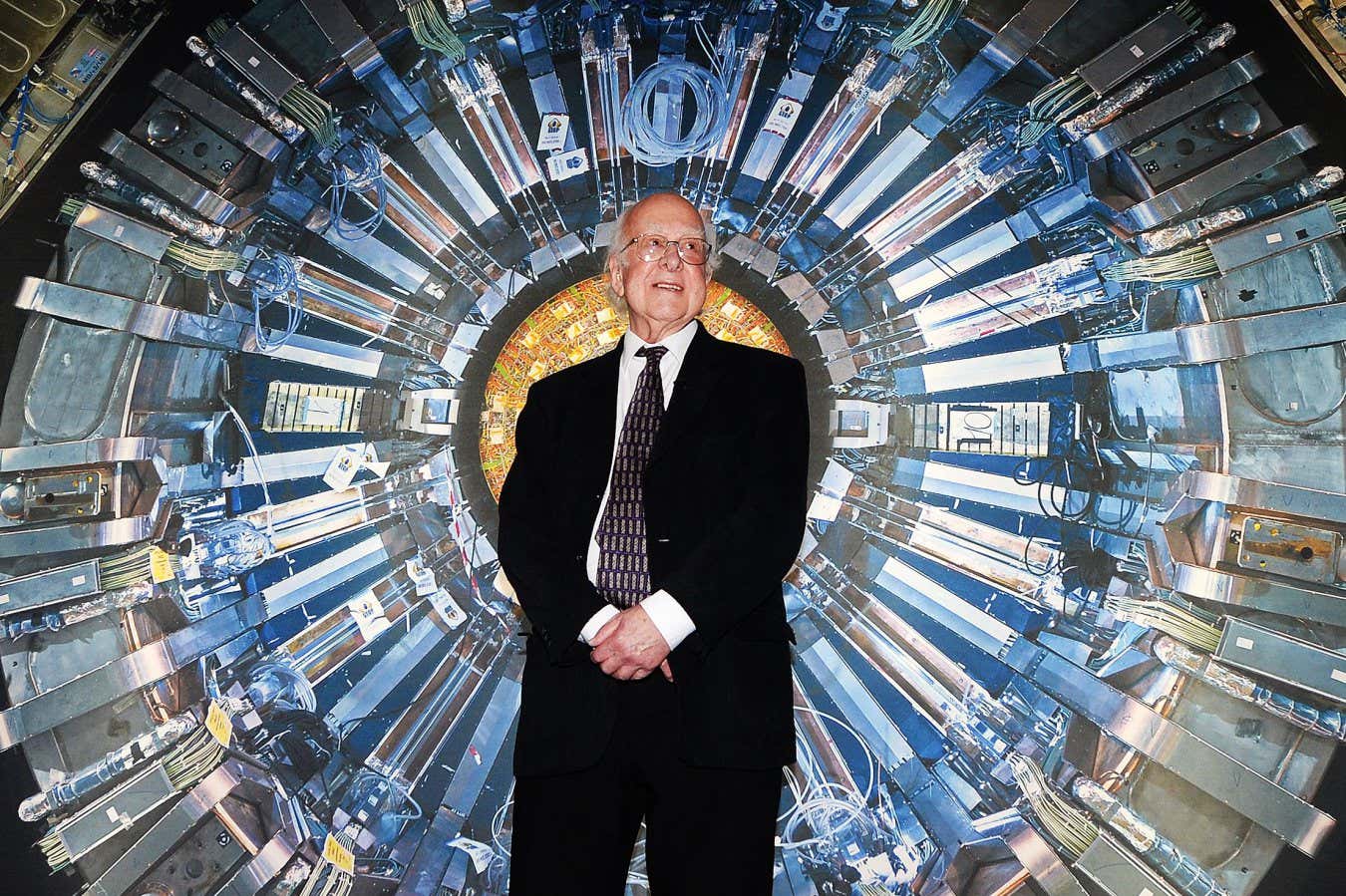

Science & Environment5 days ago

Science & Environment5 days agoHow Peter Higgs revealed the forces that hold the universe together

-

News6 days ago

News6 days ago▶️ Media Bias: How They Spin Attack on Hezbollah and Ignore the Reality

-

News6 days ago

News6 days agoRoad rage suspects in custody after gunshots, drivers ramming vehicles near Boise

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoCrypto scammers orchestrate massive hack on X but barely made $8K

-

Science & Environment5 days ago

Science & Environment5 days agoUK spurns European invitation to join ITER nuclear fusion project

-

Science & Environment5 days ago

Science & Environment5 days agoWhy we need to invoke philosophy to judge bizarre concepts in science

-

Science & Environment5 days ago

Science & Environment5 days agoHow do you recycle a nuclear fusion reactor? We’re about to find out

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoElon Musk is worth 100K followers: Yat Siu, X Hall of Flame

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoBitcoin price hits $62.6K as Fed 'crisis' move sparks US stocks warning

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoCZ and Binance face new lawsuit, RFK Jr suspends campaign, and more: Hodler’s Digest Aug. 18 – 24

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoBeat crypto airdrop bots, Illuvium’s new features coming, PGA Tour Rise: Web3 Gamer

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoTelegram bot Banana Gun’s users drained of over $1.9M

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoEthereum falls to new 42-month low vs. Bitcoin — Bottom or more pain ahead?

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoETH falls 6% amid Trump assassination attempt, looming rate cuts, ‘FUD’ wave

-

Politics5 days ago

The Guardian view on 10 Downing Street: Labour risks losing the plot | Editorial

-

Politics5 days ago

Politics5 days agoI’m in control, says Keir Starmer after Sue Gray pay leaks

-

Politics5 days ago

‘Appalling’ rows over Sue Gray must stop, senior ministers say | Sue Gray

-

Business5 days ago

UK hospitals with potentially dangerous concrete to be redeveloped

-

Business5 days ago

Axel Springer top team close to making eight times their money in KKR deal

-

News5 days ago

News5 days ago“Beast Games” contestants sue MrBeast’s production company over “chronic mistreatment”

-

News5 days ago

News5 days agoSean “Diddy” Combs denied bail again in federal sex trafficking case

-

CryptoCurrency5 days ago

CryptoCurrency5 days agoBitcoin options markets reduce risk hedges — Are new range highs in sight?

-

Money5 days ago

Money5 days agoBritain’s ultra-wealthy exit ahead of proposed non-dom tax changes

-

Womens Workouts4 days ago

Womens Workouts4 days agoHow Heat Affects Your Body During Exercise

-

Womens Workouts4 days ago

Womens Workouts4 days agoKeep Your Goals on Track This Season

-

Womens Workouts4 days ago

Womens Workouts4 days agoWhich Squat Load Position is Right For You?

-

News2 days ago

News2 days agoWhy Is Everyone Excited About These Smart Insoles?

-

Womens Workouts1 day ago

Womens Workouts1 day ago3 Day Full Body Toning Workout for Women

-

News5 days ago

News5 days agoPolice chief says Daniel Greenwood 'used rank to pursue junior officer'

-

Science & Environment6 days ago

Science & Environment6 days agoElon Musk’s SpaceX contracted to destroy retired space station

-

Politics1 week ago

Starmer ally Hollie Ridley appointed as Labour general secretary | Labour

-

Technology1 week ago

Technology1 week ago‘The dark web in your pocket’

-

Business1 week ago

Business1 week agoGuardian in talks to sell world’s oldest Sunday paper

-

News5 days ago

Freed Between the Lines: Banned Books Week

You must be logged in to post a comment Login