
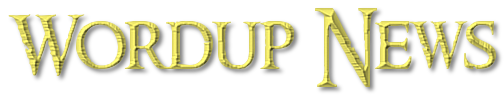

Nvidia announced that its Nvidia AI Blueprint will make it easy for developers in any industry to build AI agents to analyze video and image content.

With this technology, Nvidia said any industry can now search and summarize vast volumes of visual data.

Accenture, Dell and Lenovo are among the companies tapping a new Nvidia AI Blueprint to develop visual AI agents that can boost productivity, optimize processes and create safer spaces.

Enterprises and public sector organizations around the world are developing AI agents to boost the capabilities of workforces that rely on visual information from a growing number of devices — including cameras, IoT sensors and vehicles.

To support their work, a new Nvidia AI Blueprint for video search and summarization will enable developers in virtually any industry to build visual AI agents that analyze video and image content. These agents can answer user questions, generate summaries and enable alerts for specific scenarios.

Part of Nvidia Metropolis, a set of developer tools for building vision AI applications, the blueprint is a customizable workflow that combines Nvidia computer vision and generative AI technologies.

Global systems integrators and technology solutions providers including Accenture, Dell and Lenovo are bringing the Nvidia AI Blueprint for video search and summarization to businesses and cities worldwide, jump-starting the next wave of AI applications that can be deployed to boost productivity and safety in factories, warehouses, shops, airports, traffic intersections and more.

Announced ahead of the Smart City Expo World Congress, the Nvidia AI Blueprint gives visual computing developers a full suite of optimized software for building and deploying generative AI-powered agents that can ingest and understand massive volumes of live video streams or data archives.

Users can customize these visual AI agents with natural language prompts instead of rigid software code, lowering the barrier to deploying virtual assistants across industries and smart city applications.

Nvidia AI Blueprint harnesses vision language models

Visual AI agents are powered by vision language models (VLMs), a class of generative AI models that combine computer vision and language understanding to interpret the physical world and perform reasoning tasks.

The Nvidia AI Blueprint for video search and summarization can be configured with Nvidia NIM microservices for VLMs like Nvidia VILA, LLMs like Meta’s Llama 3.1 405B and AI models for GPU-accelerated question answering and context-aware retrieval-augmented generation.

Developers can easily swap in other VLMs, LLMs and graph databases and fine-tune them using the Nvidia NeMo platform for their unique environments and use cases.

Adopting the Nvidia AI Blueprint could save developers months of effort on investigating and optimizing generative AI models for smart city applications.

Deployed on Nvidia GPUs at the edge, on premises or in the cloud, it can vastly accelerate the process of combing through video archives to identify key moments.

In a warehouse environment, an AI agent built with this workflow could alert workers if safety protocols are breached. At busy intersections, an AI agent could identify traffic collisions and generate reports to aid emergency response efforts. And in the field of public infrastructure, maintenance workers could ask AI agents to review aerial footage and identify degrading roads, train tracks or bridges to support proactive maintenance.

Beyond smart spaces, visual AI agents could also be used to summarize videos for people with impaired vision, automatically generate recaps of sporting events and help label massive visual datasets to train other AI models.

The video search and summarization workflow joins a collection of Nvidia AI Blueprints that make it easy to create AI-powered digital avatars, build virtual assistants for personalized customer service and extract enterprise insights from PDF data.

Nvidia AI Blueprints are free for developers to try and download, and can be deployed in production across accelerated data centers and clouds with Nvidia AI Enterprise, an end-to-end software platform that accelerates data science pipelines and streamlines generative AI development and deployment.

AI agents to deliver insights from warehouses to world capitals

Enterprise and public sector customers can also harness the full collection of Nvidia AI Blueprints with the help of Nvidia’s partner ecosystem.

Global professional services company Accenture has integrated Nvidia AI Blueprints into its Accenture AI Refinery, which is built on Nvidia AI Foundry and enables customers to develop custom AI models trained on enterprise data.

Global systems integrators in Southeast Asia — including ITMAX in Malaysia and FPT in Vietnam — are building AI agents based on the video search and summarization Nvidia AI Blueprint for smart city and intelligent transportation applications.

Developers can also build and deploy Nvidia AI Blueprints on Nvidia AI platforms with compute, networking and software provided by global server manufacturers. Nvidia AI Blueprints are incorporated in the Dell AI Factory with Nvidia and Lenovo Hybrid AI solutions.

Companies like K2K, a smart city application provider in the Nvidia Metropolis ecosystem, will use the new Nvidia AI Blueprint to build AI agents that analyze live traffic cameras in real time. This will enable city officials to ask questions about street activity and receive recommendations on ways to improve operations. The company is also working with city traffic managers in Palermo, Italy, to deploy visual AI agents using NIM microservices and Nvidia AI Blueprints.

Nvidia will talk more about the blueprint at the Smart City Expo World Congress, taking place in Barcelona through Nov. 7.
