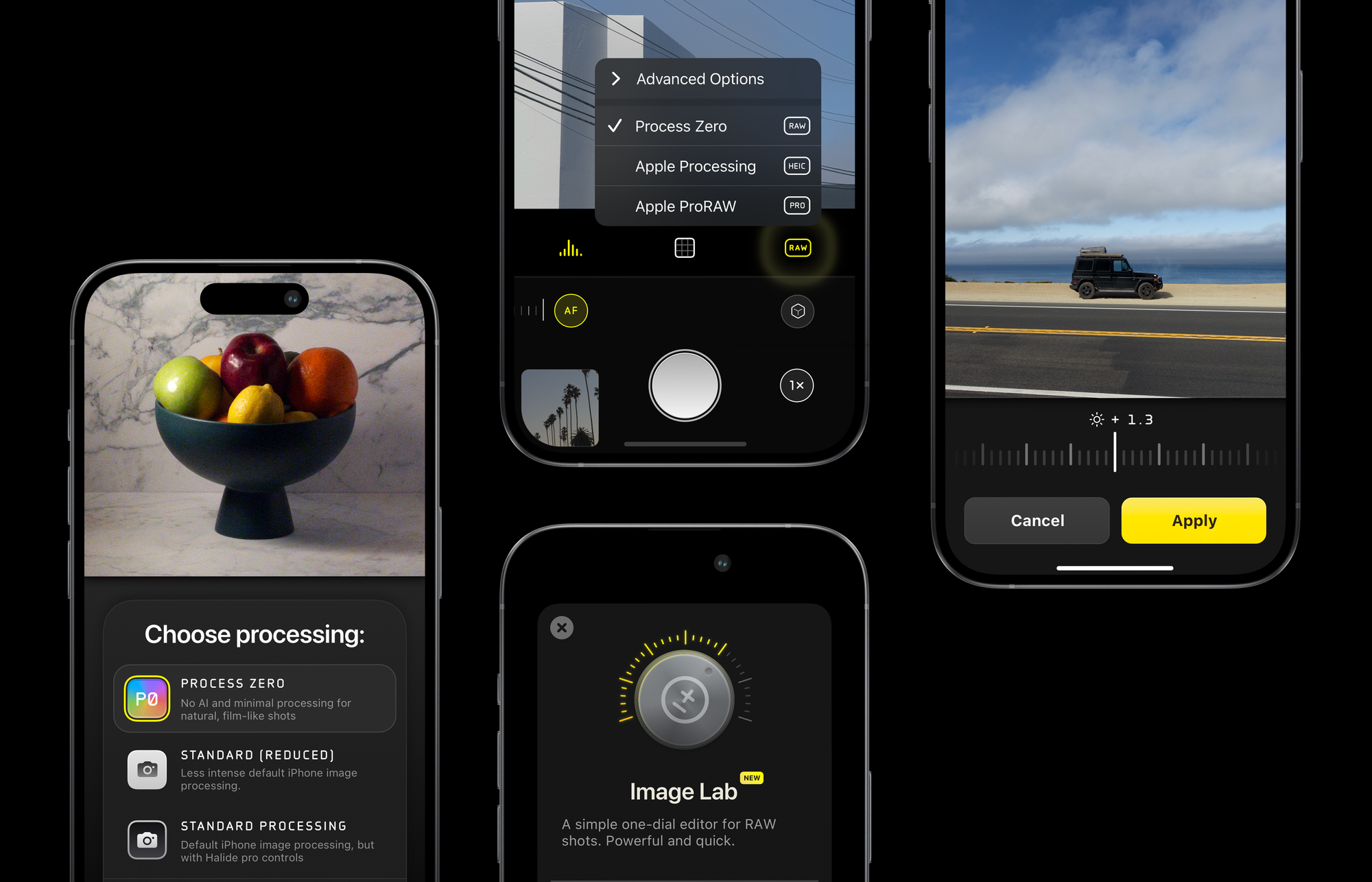

Today, we are launching something unlike any tech product in 2024: a product that uses zero AI and zero computational photography to produce natural, film-like photos. We call it Process Zero. It lives in Halide, and it turns your iPhone into a classic camera.

Process Zero is a new mode in Halide that skips over the standard iPhone image processing system. It produces photos with more detail and gives the photographer greater control over lighting and exposure. This is not a photo filter; it really develops photos at the raw, sensor-data level.

Just like film, Process Zero photos come with (digital) negatives, affording incredible control to change exposure after the fact. Much like film, it has grain. It works best in daytime or mixed lighting, rather than nighttime shots. Thankfully, unlike film, you don’t need any chemicals to develop these negatives. We give you one dial.

Best of all, Process Zero is available on every iPhone that runs Halide and iOS 17, not just the latest iPhones Pro.

Because Process Zero eschews magical algorithms, it has tradeoffs. This is why it’s a new choice in addition to the standard iPhone photo processing system in Halide. Read on to learn why we built this, what the tradeoffs are, and where we are going.

In Search of a Classic Camera

Today’s smartphones let anyone just press a button and get a nice picture. At the extreme, you have cameras that swap faces, insert stock photos of the moon, or use AI to generate completely new elements.

Thanks, I hate it.

By comparison, an iPhone is downright conservative, mostly a magic helping hand in difficult lighting situations. Consider the classic problem of capturing a window on a sunny day. If you’ve only captured photos on an iPhone, you might not even know this is a classic photography problem:

A classic camera has to choose: over-expose the outside or under-expose the room. Algorithms can instead combine several photos taken at different exposures and, voilà, both the room and the view come out properly exposed.
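For the curious, the basic idea behind that merge can be sketched in a few lines. This is a minimal, generic two-frame exposure merge, not Apple’s algorithm: it assumes the two frames are already perfectly aligned and stored as linear pixel values, and the function name `merge_brackets` and the pixel values are hypothetical, chosen for illustration.

```python
def merge_brackets(short_frame, long_frame, ratio, clip=1.0):
    """Merge a short and a long exposure of the same scene (hypothetical helper).

    short_frame, long_frame: linear pixel values in [0, clip] from two aligned frames.
    ratio: how many times longer the long exposure was (4 means +2 EV).
    Returns scene brightness expressed in units of the short exposure.
    """
    merged = []
    for s, l in zip(short_frame, long_frame):
        if l < clip:
            # The long frame recorded this pixel without clipping and is less noisy,
            # so scale it back down into the short frame's units.
            merged.append(l / ratio)
        else:
            # The long frame blew out here (the window), so fall back to the short frame.
            merged.append(s)
    return merged


# One dark room pixel and one bright window pixel (hypothetical values).
short_frame = [0.0625, 0.5]
long_frame = [0.25, 1.0]
print(merge_brackets(short_frame, long_frame, ratio=4))  # [0.0625, 0.5]
```

The room pixel comes from the long exposure and the window pixel from the short one, which is why the merged result keeps both.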

This is great for aspiring photographers, who can now focus on learning high-level concepts instead of getting bogged down fiddling with knobs. Even experts can appreciate the convenience of just pressing a button and getting useful results.

In the years following Halide’s launch in 2017, iPhone cameras have gotten much smarter, adding sophisticated algorithms like Smart HDR and Deep Fusion. We worried about what this could mean for our app. What’s the point in manual control when a phone can do better if you stay out of the way?

It turns out we were wrong to worry. As cameras have gotten smarter, Halide has only thrived. Users want more than standard manual controls: they want control over algorithms. Tons of people tell us they love Halide for this one toggle:

To be clear, these algorithms are amazing, but leaving all decisions to a machine means sacrificing some choices as an artist. A machine can only make objective decisions, but many technical decisions are inherently subjective.

For example, a photographer manually editing their photos might ask themselves, “Do I want noise in my photo, or to eliminate it at the cost of detail?” The iPhone’s image processing pipeline doesn’t like noise at all, and that’s fine. We wouldn’t be surprised if most iPhone users prefer their photos that way.

But Sebastiaan and I like a bit of noise in our shots, and that toggle in Halide that reduces processing didn’t help. Noise reduction is just one of those things that gives iPhone photos their look. Because Halide was built on top of the system processing, we had to come along for the ride.

So our love of noise sent us down the path of building our own process.

Left: a standard iPhone photo. Right: Process Zero

What’s going on with the noise removal? We can’t say for sure, because we didn’t build the iPhone’s algorithm. We do know that when you combine multiple photos (as in the window example earlier), you are no longer capturing a single moment in time. And when you average multiple photos together, noise averages out.
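Why averaging removes noise can be shown with a small simulation. This sketch assumes each frame adds independent Gaussian noise to a flat gray patch, which is a simplification (real sensor noise also depends on brightness), and the function name and parameter values are hypothetical.

```python
import random
import statistics


def noise_after_averaging(frames, sigma=0.1, true_value=0.5, pixels=20_000, seed=0):
    """Measure noise on a simulated flat gray patch, before and after averaging.

    Every frame adds independent Gaussian noise with standard deviation `sigma`.
    Returns (noise of a single frame, noise of the average of `frames` frames).
    """
    rng = random.Random(seed)
    single = [true_value + rng.gauss(0, sigma) for _ in range(pixels)]
    averaged = [
        statistics.fmean(true_value + rng.gauss(0, sigma) for _ in range(frames))
        for _ in range(pixels)
    ]
    return statistics.stdev(single), statistics.stdev(averaged)


one, nine = noise_after_averaging(frames=9)
print(f"1 frame: {one:.3f}, 9 frames averaged: {nine:.3f}")
```

The averaged figure should land near a third of the single-frame figure, because averaging N independent frames divides the noise by the square root of N.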

Unfortunately, photo merging algorithms have to guess how each photo lines up. This is especially tricky with moving objects, and if the algorithm guesses wrong you see ghosts. In the end, all of this intricate guesswork removes more than noise; it can cost you fine detail, too.
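A toy example shows where ghosts come from. This sketch deliberately skips alignment entirely, which no real pipeline does (they align frames and reject mismatched regions); it only illustrates what a wrong guess looks like. The helper name and the one-row “frames” are hypothetical.

```python
def average_frames(frames):
    """Average several one-row frames pixel by pixel, with no alignment at all."""
    return [sum(column) / len(frames) for column in zip(*frames)]


# A bright object (1.0) moves one pixel to the right between two frames.
frame_a = [0.0, 0.0, 1.0, 0.0, 0.0]
frame_b = [0.0, 0.0, 0.0, 1.0, 0.0]
print(average_frames([frame_a, frame_b]))  # [0.0, 0.0, 0.5, 0.5, 0.0]
```

Instead of one bright object, the result holds two half-bright copies: a ghost.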

In contrast, Process Zero is a single-shot process. We take one, and only one, photo. If parts of your image are not properly exposed, we don’t have any algorithms to fix that. Sometimes this is a good thing! Consider this photo of Ethan from the day he came home from the hospital.

If an algorithm sees this image, it may think it needs to bring out details in the shadows and smooth away the noise. This can frustrate an experienced photographer who knows what they’re doing.

Left: Captured with ProRAW. Right: Process Zero.

Process Zero also lets you retain full control over your camera settings. For example, algorithm-based exposure logic can’t let you pick a specific shutter speed, because the whole job of the algorithm is to take nine photos at different shutter speeds and pick the best of the bunch.

As we’ve followed the iPhone’s algorithms getting more sophisticated over the years, we’ve found that our single-shot approach is the only reliable way to give users total control over shutter speed and other exposure settings.

Consider these shots I took last year from a boat in the Galapagos. By shooting a single photo with a fast shutter speed, I outperformed the algorithm.

Left: Captured with automatic settings and ProRAW. Right: Native RAW with Manual Settings.

Just be careful what you wish for. Turning off the algorithms has tradeoffs.

I mentioned grain earlier, and just like film, Process Zero has an ideal ‘ISO’ range. In the dark, it gets noisy. Fortunately, newer iPhones with quad-Bayer sensors have far better low-light performance than older models. Don’t go in expecting night mode, but I’ve been surprised by how useful the results can be.

Because Process Zero does not fuse multiple shots, you are limited by the dynamic range of the sensor. That means that if you’re shooting something like that window from earlier, you need to choose which bits you want exposed.

Finally, some flagship features of the iPhone are deeply integrated with algorithms. If you want full 48-megapixel resolution rather than a binned image, that isn’t possible. That also rules out the virtual 2× camera at 12-megapixel resolution. However, the situation might improve if enough people file feedback with Apple!
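Binning is what turns a 48-megapixel sensor into a 12-megapixel photo. Here is a simplified sketch that averages each 2×2 block in software; real sensors may bin differently (for example, in hardware), and Apple’s pipeline is not public. The function name and readout values are hypothetical.

```python
def bin_2x2(mosaic):
    """Average each 2x2 block of a sensor readout into a single pixel.

    On a quad-Bayer sensor every 2x2 block sits under one color filter, so
    averaging within a block keeps the colors separate and yields an ordinary
    Bayer mosaic with a quarter of the pixels (48 MP becomes 12 MP).
    """
    height, width = len(mosaic), len(mosaic[0])
    if height % 2 or width % 2:
        raise ValueError("mosaic dimensions must be even")
    return [
        [
            (mosaic[y][x] + mosaic[y][x + 1] + mosaic[y + 1][x] + mosaic[y + 1][x + 1]) / 4
            for x in range(0, width, 2)
        ]
        for y in range(0, height, 2)
    ]


readout = [
    [1, 3, 0, 0],
    [5, 7, 0, 0],
    [2, 2, 4, 4],
    [2, 2, 4, 4],
]
print(bin_2x2(readout))  # [[4.0, 0.0], [2.0, 4.0]]
```

Sixteen readout values become four pixels, the same 4:1 ratio as 48 to 12 megapixels.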

Then there’s the issue of HDR output. If you’ve ever scrolled through photos and video in your camera roll, and your phone suddenly got bright, that’s what we’re talking about. Some people hate the look, but I think the results can be stunning when done with thought and care.

We’d love to show you examples, but browsers don’t really support it, and that cuts to the heart of the problem. If you shoot in HDR today, your photos can look pretty different from screen to screen. Later this year, new standards will land that help with HDR compatibility, and we’ll revisit it then.

To summarize: Process Zero gives you a single 12-megapixel shot. It will be less saturated, softer, grainier, and quite different from what you see from most phones. Each shot includes a true Bayer RAW file, if you want to use it in a full-fledged RAW editor, but we designed Halide so you don’t need one.

If any of Process Zero’s tradeoffs are dealbreakers, that’s fine! If Process Zero just shows you how valuable smart processing can be in difficult situations, that’s cool. We find ourselves toggling between Process Zero and the system processing depending on the content of a scene and what we’re hoping to accomplish. That’s why we made it easy to switch modes with a tap.

As cameras make more and more creative choices on your behalf, we think the photographer should retain the agency to cast aside algorithms and do their own thing. Just as a photographer expresses themselves in their choice of lens, exposure settings, and film stock, Halide now lets you choose the process that works for you.

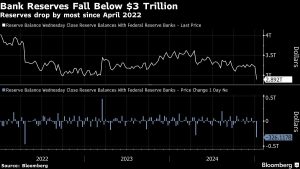

Image Lab

Back in the days of film photography, half of the art was in taking the photo, and the other half was developing your negative. Sometimes it was to correct mistakes, and sometimes it was for creative effect. When going analog, we love pushing and pulling film.

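However, adjusting the exposure on a processed JPEG/HEIC is never as good as ‘re-developing’ a digital negative, because processing has already clipped highlights and shadows and thrown away tonal information the sensor recorded.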
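A tiny example makes the difference concrete. This sketch assumes linear values, no tone curve or gamma encoding, and plain 8-bit output, which is far simpler than a real JPEG/HEIC pipeline; the function names and highlight values are hypothetical.

```python
def develop(linear, ev):
    """Push (ev > 0) or pull (ev < 0) linear data by `ev` stops, then clip to 8 bits."""
    return [round(min(max(v * 2 ** ev, 0.0), 1.0) * 255) for v in linear]


def adjust_processed(pixels_8bit, ev):
    """Apply the same exposure change to an already-processed 8-bit image."""
    return develop([p / 255 for p in pixels_8bit], ev)


# Two highlight values brighter than display white (1.0), hypothetical.
negative = [1.25, 1.75]

processed = develop(negative, 0)        # [255, 255]: both clipped to white
print(adjust_processed(processed, -1))  # [128, 128]: still identical, just gray
print(develop(negative, -1))            # [159, 223]: the detail comes back
```

Pulling exposure on the processed image only turns clipped white into flat gray, while re-developing the negative recovers two distinct tones.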

This is why Process Zero includes a digital negative. Rather than leave you to find an editor that supports them, we decided to go the extra mile and include Image Lab, a one-dial solution to developing your negative.

We think Image Lab gives Halide a great “batteries included” experience. Take a shot, tweak it if you have to, and share it. No other steps are required. We also built Image Lab because even if you’re comfortable working with RAW editors, they can yield different results from Process Zero.

Image Lab is not a full-fledged editor. It does not contain any color or contrast adjustment knobs. You can’t even crop! Think of Process Zero + Image Lab as “step zero” of your workflow.

But if you do take those DNGs we include with Process Zero into another app, they edit pretty well:

Native DNGs developed outside of Halide.

Process Zero is Step One

Today is also step zero in our journey to the next generation of Halide. We plan to make it bigger than our award-winning Mark II. We’re going to call it… wait for it… Mark III.

Our Plan for Mark III

We released Halide Mark II after a long silent period, so we could release one big, splashy update. While that’s fun, it’s both risky and ultimately less useful to our users. We’ve decided to try to roll out some Mark III features a bit early, rather than saving them up for one big launch at the end. As much as we love surprises, we think getting these features into your hands and gathering feedback will make Mark III much better than developing it in a vacuum.

Process Zero is step one: the first part of giving you even more control, and beautiful output out of the box. We’re going to go beyond that.

Halide members will get early access to even more Mark III features, along with exclusive icons and other goodies. You can get the app here and try it free for a week by starting a membership.

If subscriptions aren’t your thing, that’s totally fine. We’re continuing to offer a one-time-purchase option too. While it’s technically a one-time purchase for Mark II, we’ve decided to include Mark III, too.

It has been a busy year. A few months ago, we shipped our new video app Kino. Today, we launched Process Zero, the first step on the road to Mark III. In a few weeks we’ll enter the busiest time of year. We feel incredibly privileged to be able to work on what we love, and it wouldn’t be possible without your support. Thank you.

We’d love to hear your feedback on Process Zero, and we can’t wait to see what you shoot with it. Make sure to tag your photos #ProcessZero so we can see and share your shots!
