Crypto World

SEC crypto-law interpretation marks a start, not an end

Regulators are signaling a shift in digital-asset oversight as the SEC outlines an interpretive framework for applying securities laws to crypto. SEC Chair Paul Atkins, in prepared remarks at the Practising Law Institute, said the agency intends to move away from a broad enforcement-first stance toward a more principled, interpretive approach. The remarks follow the agency’s interpretive notice on crypto regulation and a memorandum of understanding with the CFTC signed last week.

“While the interpretation provides long-needed clarity, I should like to assure this audience that it amounts to a beginning, not an end,” Atkins told attendees, underscoring that the framework is intended to evolve alongside market developments.

The interpretive notice, released earlier in the week, frames how federal securities laws may apply to crypto assets. It suggests that most cryptocurrencies are unlikely to be securities under federal law, with a narrow exception: traditional securities that are tokenized. Atkins later clarified that digital commodities, digital tools, digital collectibles including non-fungible tokens (NFTs), and stablecoins are typically not within the SEC’s purview.

Key takeaways

- The SEC signals a shift from enforcement-by-press-release toward an interpretive, rules-based approach to crypto regulation, following a new interpretive notice and a memorandum of understanding with the CFTC.

- Under the framework, most crypto assets are unlikely to be securities; only tokenized traditional securities would fall under federal securities laws.

- Assets like digital commodities, digital tools, NFTs, and stablecoins are generally not considered securities under the agency’s current interpretation.

- Regulatory progress intersects with Congress and the White House, as lawmakers push a market-structure bill (the CLARITY Act) and seek consensus on stablecoin regulation and crypto-asset provisions.

- Watch for how the evolving framework interacts with legislative efforts, potential CFTC authority expansion, and ongoing industry pilots and experiments.

Regulatory posture shifts amid a mixed legislative backdrop

The SEC’s interpretive stance arrives as part of a broader recalibration of how crypto regulation will be enforced and applied. The agency had long faced criticism for a perceived “enforcement-by-crisis” approach, especially for startups and projects navigating an evolving market. By contrast, the latest framework emphasizes clarity and consistency, aiming to reduce guesswork for issuers, exchanges, and investors while preserving robust investor protections.

The interpretive notice explicitly clarifies that, for many digital assets, existing securities laws may not apply in the same way as for traditional stocks or bonds. The acknowledgment that most crypto assets are not securities could lower regulatory friction for many projects, though it also draws a clear boundary around assets that would still be subject to securities regulation.

Atkins connected the interpretation to ongoing SEC coordination with the CFTC, noting the memorandum signed last week. The agreement signals an intent to harmonize approaches where possible, a relevant development given the overlapping jurisdictions in crypto markets, market infrastructure, and derivatives. The result could be a more predictable regulatory environment for token issuers and market participants, even as questions about enforcement and future rulemaking linger.

Contextual backdrop: market structure, stablecoins, and the legislative path

Beyond the SEC’s interpretive framework, lawmakers are actively shaping the arc of crypto regulation through legislation and hearings. A market-structure bill, known in industry circles as the CLARITY Act, advanced in the House in mid-2025 but has faced a slower path in the Senate. As of the latest briefing, it had not yet been scheduled for a markup in the Senate Banking Committee, leaving a critical regulatory hinge unresolved.

In parallel, the White House has engaged with lawmakers behind closed doors to advance the same package. A spokesperson for Wyoming Senator Cynthia Lummis confirmed that Republican senators met with White House crypto adviser Patrick Witt to discuss advancing the market-structure bill. Lummis’ team described the session as very productive and positive, with negotiators “99% of the way there on stablecoin yield” and ongoing, productive talks on the digital-asset provisions of the bill.

Stablecoins remain a focal point of regulatory and policy debate, particularly around yield, banking implications, and consumer protections. The sense among some policymakers is that achieving a workable framework for stablecoin issuance and redemption is a prerequisite for broader bipartisan consensus on crypto regulation.

The regulatory dialogue is further colored by ongoing market experiments and pilot programs. For example, the market has seen pilots exploring tokenized trading and other asset-tokenization concepts under the watchful eye of multiple agencies. While these pilots illustrate a regulatory appetite for innovation, they also underscore that real-world testing will continue to inform how rules evolve in practice.

As the SEC’s interpretive framework takes root, traders, issuers, and developers should prepare for a regulatory environment that favors clarity and predictability but remains nuanced. The boundary between what constitutes a security in crypto, and what does not, will likely continue to shift as new asset classes and products emerge. The interplay between the SEC, the CFTC, and Congress will shape the pace and direction of this evolution in the months ahead.

Readers should watch for updates on the CLARITY Act’s progression in the Senate, any further formal guidance from the SEC, and on-the-ground outcomes from ongoing tokenization trials and stablecoin regulatory debates. The convergence of executive and legislative activity suggests that substantial clarity—across asset classes and market infrastructure—may still be months away, even as the groundwork for a more predictable regulatory framework takes shape.

The Viral AI Agent Redefining Autonomous Automation

Artificial intelligence is undergoing a structural transformation. What began as conversational interfaces powered by large language models is rapidly evolving into autonomous systems capable of executing real-world digital tasks. In this emerging landscape of AI agents, one name has attracted significant attention: OpenClaw.

OpenClaw is not merely another chatbot. It represents a broader shift in how artificial intelligence systems operate, moving from reactive text generation to proactive digital execution. Its rapid rise in popularity has positioned it at the centre of discussions surrounding autonomous AI, intelligent automation and the future of digital work.

This article explores what OpenClaw is, why it gained viral traction, how it works conceptually and what it signals for the next phase of AI agent development.

What Is OpenClaw?

OpenClaw is an AI agent designed to perform tasks in digital environments autonomously. Unlike traditional AI chat interfaces that generate responses based on prompts, OpenClaw aims to interpret objectives, plan actions and execute them across systems.

At its core, OpenClaw transforms a large language model from a conversational engine into an operational agent.

Rather than simply answering questions, an AI agent such as OpenClaw can interpret user goals rather than isolated prompts, break complex objectives into structured steps, interact with software interfaces and APIs, execute commands within digital environments, and adapt its actions based on contextual feedback.

This distinction is fundamental. The shift from responding to acting marks a qualitative evolution in artificial intelligence.

Why Did OpenClaw Go Viral?

Several factors contributed to OpenClaw’s rapid visibility within the AI and developer communities.

Compelling Demonstrations of Autonomous Behaviour

Public demonstrations showed the agent carrying out multi-step digital tasks with minimal supervision. Observers witnessed an AI system planning, executing and iterating, not merely producing text. This display created a strong perception of progress towards genuinely autonomous AI systems.

Alignment with the AI Agent Trend

The rise of autonomous AI agents has been one of the most discussed developments in the post-LLM era. As businesses search for scalable automation and developers explore agent-based frameworks, OpenClaw appeared at precisely the right moment in the innovation cycle.

Accessibility and Developer Interest

Projects that emphasise openness, experimentation and adaptability often gain rapid traction. The idea of an AI agent that developers could explore, extend or integrate resonated strongly with the technical community.

A Clear Narrative, From AI Assistant to Digital Worker

OpenClaw’s positioning as an autonomous agent rather than a chatbot reframed expectations. It was presented not as a conversational novelty, but as a prototype of the future digital workforce.

How Does OpenClaw Work?

While implementations evolve, AI agents like OpenClaw typically rely on a layered architecture that combines reasoning, planning and execution capabilities.

Large Language Model Core

At the cognitive centre of the system lies a large language model. This model interprets instructions, analyses context, reasons through objectives and generates structured action plans.

In this context, the language model is not the final output layer. It functions as the decision-making engine that informs action.

Task Planning Mechanism

A planning module translates high-level goals into manageable subtasks. If instructed to compile a report, the agent may identify required data sources, access relevant tools, extract information, structure the findings and format the output.

This decomposition capability is central to autonomous behaviour.

Execution Layer

The execution layer enables interaction with external systems. This function may involve calling APIs, navigating software interfaces, running scripts, interacting with operating systems or managing workflows across platforms.

This layer converts cognitive reasoning into operational activity.

Memory and Context Management

Persistent memory allows the agent to maintain coherence across extended tasks. Rather than treating each interaction in isolation, the system retains relevant context, previous steps, and intermediate outcomes.

This continuity is critical for complex, multi-stage processes.
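The four layers described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not OpenClaw's actual implementation: the `Agent` class, the hard-coded `plan` method (standing in for a language model call) and the three toy tools are all hypothetical names introduced here.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical tool signature: each tool takes text in and returns text out.
Tool = Callable[[str], str]


@dataclass
class Agent:
    """Toy agent loop: plan, execute, remember. Illustrative only."""
    tools: dict[str, Tool]
    memory: list[str] = field(default_factory=list)

    def plan(self, goal: str) -> list[str]:
        # Stand-in for the language model core. A real agent would ask a
        # model to decompose the goal; here the plan is fixed for clarity.
        return ["fetch", "summarize", "format"]

    def run(self, goal: str) -> str:
        result = goal
        for step in self.plan(goal):
            # Execution layer: call the tool named by the current step.
            result = self.tools[step](result)
            # Memory: keep each intermediate outcome for later steps.
            self.memory.append(f"{step}: {result}")
        return result


# Toy tools (assumptions; real tools would call APIs or run scripts).
def fetch(topic: str) -> str:
    return f"raw data about {topic}"


def summarize(text: str) -> str:
    return f"summary of {text}"


def format_report(text: str) -> str:
    return text.upper()


agent = Agent(tools={"fetch": fetch, "summarize": summarize, "format": format_report})
print(agent.run("sales"))
print(agent.memory)
```

Each step feeds its output into the next, and the memory list records every intermediate result, which is the simplest form of the continuity the article describes.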

OpenClaw Compared with Traditional Chatbots

Traditional chatbots primarily generate textual responses based on user prompts. OpenClaw, by contrast, is designed to execute digital actions in line with user objectives.

A chatbot focuses on conversational interaction. OpenClaw focuses on operational interaction with systems and tools.

Traditional chat interfaces typically lack persistent, task-oriented memory. OpenClaw integrates contextual memory to manage longer workflows.

Chatbots do not directly manipulate external systems. OpenClaw is designed to integrate with tools, APIs and digital infrastructures.

In practical terms, a chatbot communicates information. An AI agent such as OpenClaw carries out tasks.

Potential Use Cases of OpenClaw

The strategic relevance of OpenClaw lies in its practical applications. AI agents capable of autonomous execution could reshape multiple sectors.

Enterprise Automation

Businesses increasingly rely on fragmented SaaS ecosystems. An AI agent can bridge tools and automate cross-platform workflows, including reporting pipelines, CRM updates, marketing automation tasks, and structured data processing.

This automated workflow reduces manual intervention and improves operational efficiency.

Software Development and Testing

Developers could leverage AI agents for automated code testing, environment configuration, continuous integration tasks, debugging assistance and deployment management.

An AI agent that understands project context could streamline development cycles and reduce repetitive workload.

Advanced Personal Productivity

Beyond enterprise environments, autonomous agents may assist individuals in managing complex digital workflows, including intelligent calendar coordination, automated document handling, research aggregation and workflow orchestration across multiple tools.

OpenClaw extends productivity beyond reminders and into active task completion.

Strategic Implications for the Future of AI Agents

OpenClaw represents more than a single project. It signals structural shifts in the development of artificial intelligence.

From Conversational AI to Autonomous Systems

The first generation of large language models focused primarily on dialogue. The next phase centres on execution. Competitive advantage will increasingly depend on agents that can act reliably in digital environments.

Emergence of Digital Labour

As AI agents become more capable, they may assume roles previously requiring human digital interaction. AI agents do not necessarily eliminate human oversight, but they do change the distribution of digital labour.

Routine operational tasks could become progressively automated.

Integration as Competitive Advantage

Future AI value may depend less on model size alone and more on integration capacity, specifically on how effectively agents interact with real-world software ecosystems.

OpenClaw reflects this integration-focused paradigm.

Risks and Challenges

Despite their promise, autonomous AI agents introduce substantial risks.

Granting an AI system access to digital tools requires strict governance structures. A human administrator should manage security and permissions carefully.

Reliability remains critical. If an agent makes incorrect decisions during early stages of a workflow, those errors may propagate throughout the process.

Governance and accountability frameworks are still developing. Questions remain regarding responsibility when autonomous systems perform unintended actions.

There is also the risk of over-automation. Excessive reliance on autonomous systems could reduce human situational awareness in critical operations.

Balancing autonomy with oversight will be essential for responsible adoption.

Is OpenClaw the Beginning of a New AI Era?

The key question is not whether OpenClaw is technically flawless today. The more important consideration is what it represents.

It symbolises the evolution of artificial intelligence from passive assistant to active operator.

If the conversational AI wave defined the early 2020s, the coming phase may be characterised by autonomous AI agents capable of interacting independently with digital systems.

OpenClaw illustrates how large language models can transition from generating insight to delivering execution.

Whether it becomes a dominant platform or remains an early milestone, it clearly reflects a broader trajectory. Artificial intelligence is moving from conversation towards action.

Listings And On-Ramps Are Ending, As Intent Protocols Make Access Native

Opinion by: Jason Dominique, co-founder and CEO of ONCHAIN® Labs

For years, whenever we explain what we’re building, the reaction is familiar. There’s curiosity, some skepticism, and then the question that almost always follows:

“If this is such a big problem, why hasn’t it been fixed already?”

The answer is not that the industry failed to notice it, nor that the technology was too immature to address it. Access remained broken because fixing it correctly required rearchitecting how coordination, execution and settlement work together, while leaving it broken was both easier and profitable.

By “access” we mean the path between intent and ownership: the rules, intermediaries and detours that determine whether someone can reach an onchain asset directly or only through a platform that controls the route.

For most of the industry’s history, access has been treated as something users must earn or purchase before participating. Assets must be listed. Wallets must support them.

What began as a pragmatic workaround hardened into a durable economic structure.

If an asset is listed, access is monetized directly. If it isn’t, the native asset required to reach it is still monetized. Either way, the detour pays, regardless of user intent.

In practice, this has created a vast, largely invisible rerouting of value. Today, significant onchain volume is not executed directly against the assets users intend to reach, but is first detoured through intermediary-controlled native assets required to transact on each network.

Access scarcity became an economic artifact

As onchain asset creation accelerated, platforms encountered a real constraint. No exchange, wallet or custodial ramp could realistically surface everything. Scarcity did not appear in liquidity or settlement. It appeared in distribution.

Listings became gates. Routing decisions determined reachability. Once these detours proved profitable, they stopped being temporary.

This was not a moral failure. It was an incentive-driven outcome. Monetizing access required far less coordination, capital and risk than redesigning how users reach onchain assets directly. Once intermediaries realized the detour itself could be priced, there was little reason to remove it, especially when removal required deep architectural changes few teams could afford.

Over time, users were trained to accept the detour as normal. Acquiring intermediary-controlled native assets unrelated to intent. Bridging value across chains. Approving opaque transactions. These steps stopped feeling like friction and started feeling inevitable.

What emerged was an unspoken economic tax on participation, charged not in explicit fees, but in prerequisite assets, extra steps, delayed execution and abandoned intent.

Execution matured but access did not

While access remained economically gated, the execution layer matured rapidly. Automated market makers, permissionless liquidity and composable smart contracts turned execution into a largely solved problem.

These systems were never meant to be destinations. They were plumbing. Early on, interfaces were necessary, so decentralized exchanges became places users “went,” and on-ramps became gateways. Over time, the industry confused those interfaces with the infrastructure itself.

That confusion is now unraveling. People are no longer consciously navigating execution venues. Trading increasingly happens inside wallets and applications, with execution abstracted away.

The data reflects this shift. In 2025, the DEX-to-CEX spot volume ratio crossed 21% and peaked above 37% earlier in the year. Centralized platforms still matter, but decentralized execution is becoming the default regardless of where users interact.

As execution fades into the background, the remaining bottleneck becomes impossible to ignore.

Builders are running into a ceiling

For builders, access has quietly become the limiting factor. Reaching users often requires relationships, listing approvals, or forcing users through native assets unrelated to the product’s core value.

This distorts incentives. Innovation slows not because ideas dry up, but because permission becomes the bottleneck. Teams optimize for gatekeepers rather than users. Distribution depends on capital and relationships instead of relevance.

Scale amplifies the problem. Even after issuance slowed in 2025, tens of thousands of tokens continued launching each day. Listing-based access cannot keep up with permissionless creation.

Permissionless issuance paired with permissioned access does not produce open markets. It produces fragmentation.

Access is moving to the transaction layer

The alternative is not another marketplace or aggregator. It is a redefinition of where access lives.

In intent-based and abstracted systems, users express outcomes rather than routes. Transactions dynamically source liquidity, assets and execution at the protocol level. Access stops being something granted by platforms and becomes something enforced by the network itself.

This shift is structural. Solving access at the transaction layer requires deep changes to coordination, execution and settlement, changes that were expensive, risky and slow to implement. That is precisely why monetized detours persisted for so long.
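The idea of expressing an outcome rather than a route can be illustrated with a small Python sketch. It is a simplified model under stated assumptions, not any specific intent protocol: the `Intent` and `Quote` types, the `settle` function and the venue names are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Intent:
    """What the user wants, with no route specified (hypothetical schema)."""
    pay_asset: str
    pay_amount: float
    want_asset: str
    min_receive: float  # the floor the user will accept


@dataclass(frozen=True)
class Quote:
    """A candidate execution offered by some venue or solver (hypothetical)."""
    venue: str
    receive_amount: float


def settle(intent: Intent, quotes: list[Quote]) -> Optional[Quote]:
    """Return the quote delivering the most, or None if none meets the floor."""
    valid = [q for q in quotes if q.receive_amount >= intent.min_receive]
    return max(valid, key=lambda q: q.receive_amount, default=None)


intent = Intent(pay_asset="USDC", pay_amount=100.0, want_asset="TOKEN", min_receive=95.0)
quotes = [Quote("amm_a", 96.5), Quote("amm_b", 97.2), Quote("otc_desk", 90.0)]
best = settle(intent, quotes)
print(best)
```

The user never names a venue. Candidate routes compete on how much they deliver, which mirrors the claim that liquidity competes on execution quality rather than placement.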

Once access becomes native to the network, the economics of the stack change. Listings lose leverage. Discovery becomes emergent rather than negotiated. Liquidity competes on execution quality rather than placement.

Execution works. Settlement scales. Value moves instantly and globally. The remaining question is whether access continues to be routed through detours users did not choose.

A quiet but irreversible transition

This transition will not arrive with a single protocol launch or headline-grabbing announcement. Systems built on structural friction rarely unwind overnight.

Access is moving closer to execution. When it does, the center of gravity in crypto shifts away from intermediaries and back toward networks.

The change will not be loud. It will be structural. By the time access feels “solved,” the old gates will already be impossible to justify.

This opinion article presents the author’s expert view, and it may not reflect the views of Cointelegraph.com. This content has undergone editorial review to ensure clarity and relevance. Cointelegraph remains committed to transparent reporting and upholding the highest standards of journalism. Readers are encouraged to conduct their own research before taking any actions related to the company.

Bitcoin Dips to $69,500 But Avoids Six-Week Lows Seen on Gold

Bitcoin (BTC) rebounded from weekly lows into Thursday’s Wall Street open as inflation concerns tested BTC price strength.

Key points:

- Bitcoin price action preserves its new local trading range between 2021 highs and 2025 lows.

- Gold leads a macro asset sell-off after the Federal Reserve continued a hawkish stance on interest-rate policy.

- Fed Chair Jerome Powell says that the next rate cut depends on inflation “progress.”

Bitcoin struggles after hawkish Fed meeting

Data from TradingView showed a drop to $69,500 on the day, with BTC/USD reaching the area of its old all-time high from 2021.

The pair then returned above the $70,000 mark before circling the 2021 level, helping preserve a narrative of comparative strength despite various macro pressures.

On Wednesday, the focus switched from the Middle East and oil to US inflation as the Federal Reserve chose to hold interest rates at previous levels.

“Uncertainty about the economic outlook remains elevated. The implications of developments in the Middle East for the U.S. economy are uncertain,” Chair Jerome Powell said in an official statement.

Powell’s subsequent press conference reiterated that “progress” on inflation was required for rates to come down, which would be a key tailwind for crypto markets.

“The rate forecast is conditional on the performance of the economy, so if we don’t see that progress, you won’t see the rate cut,” he told reporters.

SUMMARY OF FED DECISION (3/18/2026):

1. Fed halts rate cuts for the second straight meeting

2. Fed projects one rate cut in 2026, one in 2027

3. Fed 2026 PCE inflation forecast revised higher to 2.7%

4. Fed says implications of Middle East developments are “uncertain”

5. Fed…

— The Kobeissi Letter (@KobeissiLetter) March 18, 2026

With just a single cut in 2026 now expected, risk assets felt pressure from the Fed, with US stocks ending the day down by around 1.5%.

Trader: BTC price needs weekly close near $75,000

On Thursday, however, it was gold leading the comedown, falling 2.3% to below $4,700 per ounce for the first time since Feb. 6.

“All assets, except Oil, continue to sell off,” crypto analyst Michaël van de Poppe responded in a post on X.

“Not a bad case here. The opposite: Bitcoin is also correcting, and it’s correcting less than I would assume.”

BTC price action thus returned to a range bordered by the 2021 all-time high and the lowest level of 2025 at around $74,500.

“$BTC is still rejecting 2025 Yearly Lows. Won’t be of significance during the week, need weekly close above there,” trader Castillo Trading told X followers on Wednesday.

Van de Poppe said that he would be a “big buyer” of Bitcoin if it were to drop back to the low $60,000 zone.

This article does not contain investment advice or recommendations. Every investment and trading move involves risk, and readers should conduct their own research when making a decision. While we strive to provide accurate and timely information, Cointelegraph does not guarantee the accuracy, completeness, or reliability of any information in this article. This article may contain forward-looking statements that are subject to risks and uncertainties. Cointelegraph will not be liable for any loss or damage arising from your reliance on this information.

Coinbase Tokenizes Bitcoin Yield Fund on Base

Coinbase Asset Management’s Anthony Bassili says the Bitcoin Yield Fund’s tokenized share class checks “identity and eligibility at the token level” for compliance.

Coinbase has brought its Bitcoin Yield Fund onto its Base blockchain, launching a tokenized share class for the fund in partnership with the financial services firm Apex Group.

Apex said in a statement on Thursday that the tokenized share class of Coinbase Asset Management’s fund “is set up to interact with compatible platforms, wallets, and infrastructure without compromising compliance.”

Coinbase Asset Management president Anthony Bassili said that the share class integrates “identity and eligibility at the token level” for regulatory compliance.

Financial institutions have been tokenizing stocks, bonds, funds, commodities and real estate on the blockchain in search of lower costs, faster settlement and round-the-clock trading.

Asset managers like BlackRock, Fidelity Investments and Franklin Templeton have already launched tokenized funds on-chain.

Apex enables institutions to access ERC‑3643 tokens

The tokenized share class of Coinbase’s fund, which offers exposure to Bitcoin (BTC) and yield, will be available on Base only to institutional and accredited investors outside of the US.

The share class uses the ERC‑3643 permissioned token standard to ensure only eligible investors have access to the Bitcoin yield product.

Coinbase plans to launch a tokenized share class of the Coinbase Bitcoin Yield Fund for US investors in the future.

Apex acts as the on-chain transfer agent for the tokenized Coinbase Bitcoin Yield Fund, and is tasked with handling token ownership, enforcing compliance and transfer rules and maintaining a record of transactions on the Base blockchain.
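To illustrate what checking eligibility “at the token level” can mean, here is a toy Python model of a permissioned token. It is a sketch under stated assumptions, not the ERC‑3643 contract interface and not Coinbase's or Apex's implementation: the `PermissionedToken` class, its fields and the investor names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class PermissionedToken:
    """Toy permissioned token: transfers succeed only to eligible holders."""
    eligible: set[str] = field(default_factory=set)  # verified investors
    balances: dict[str, int] = field(default_factory=dict)

    def _require_eligible(self, holder: str) -> None:
        if holder not in self.eligible:
            raise PermissionError(f"{holder} is not an eligible investor")

    def mint(self, to: str, amount: int) -> None:
        # The eligibility check runs inside the token, not in an external app.
        self._require_eligible(to)
        self.balances[to] = self.balances.get(to, 0) + amount

    def transfer(self, sender: str, to: str, amount: int) -> None:
        self._require_eligible(to)
        if self.balances.get(sender, 0) < amount:
            raise ValueError("insufficient balance")
        self.balances[sender] -= amount
        self.balances[to] = self.balances.get(to, 0) + amount


token = PermissionedToken(eligible={"alice", "bob"})
token.mint("alice", 10)
token.transfer("alice", "bob", 4)
print(token.balances)
```

Because the check lives in the transfer path itself, a transfer to an address outside the eligible set fails regardless of which wallet or platform initiates it.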

Coinbase launched a non-US version of the Coinbase Bitcoin Yield Fund in April and a US version in October.

The non-US version targets a 4% to 8% annual return in Bitcoin. Coinbase said at the time that it launched the product to address Bitcoin’s inability to generate native yield, unlike proof-of-stake assets such as Ether (ETH) and Solana (SOL).

Bitcoin Slips Below $70,000 as Fed Rate Pause and Oil Surge Pressure Markets

Key Takeaways

- Bitcoin fell to $70,000 as the Federal Reserve held interest rates steady and geopolitical tensions drove energy prices higher.

- Nearly $600 million in leveraged crypto futures liquidations occurred in 24 hours, mostly wiping out long positions.

- Altcoins struggled on thin liquidity, though NEO and ETHFI recorded gains.

- Fear metrics spiked with bitcoin volatility jumping over 5%.

Bitcoin fell below $70,000 on Thursday as soaring energy prices and the Federal Reserve’s decision to hold interest rates steady weighed on risk assets globally.

BTC traded near $70,000, down 1.6% since midnight UTC, while Ether declined 1.7% to $2,160. The moves tracked a broader market selloff after the Fed maintained rates in the 3.50-3.75% range on Wednesday, bolstering the U.S. dollar and triggering risk-off sentiment across equities and crypto.

Energy markets amplified the pressure. Brent crude oil surged to $114, and Oman crude jumped to $150 after Iran attacked key Gulf energy infrastructure following an Israeli strike on its South Pars gas field. European natural gas futures spiked approximately 25% to above $78 per MWh. Nasdaq 100 futures fell around 0.3%, underscoring the broader market selloff.

Liquidations Hit $600 Million

The overnight decline sparked significant derivative liquidations, with nearly $600 million in leveraged crypto futures bets wiped out over 24 hours. Long positions accounted for most of the losses, indicating bullish traders were caught off guard.

Futures open interest fell 5.6% to $106.90 billion. Ether futures open interest dropped 9% alongside a 6% decline in ETH’s spot price, signaling capital outflows. Negative funding rates across BTC, ETH, BNB, SOL, and other tokens indicate bearish short positions are gaining favor again.

Fear metrics also deteriorated. Volmex’s BVIV, which measures 30-day implied bitcoin volatility, jumped over 5% to 58.36%, ending a week-long decline.

Altcoins Struggle on Thin Liquidity

The altcoin market faced headwinds from limited liquidity in a fractured ecosystem still recovering from October’s $19 billion leverage wipeout. Bittensor fell 8.8%, and Hyperliquid declined 6.5% since midnight.

A few tokens bucked the trend. NEO gained 4.2% while restaking token ETHFI added 1.5% to reach $0.55, continuing its strong start to 2026. The CoinDesk 20 fell around 1%, while the DeFi Select Index and CoinDesk Memecoin Index declined 1.4% and 2%, respectively.

What’s Next

The market remains caught between macro headwinds from geopolitical tensions and monetary policy uncertainty. Bitcoin’s struggles below $70,000 suggest further volatility could test support levels as investors reassess risk exposure amid elevated energy costs and the Fed’s extended pause on rate cuts.

Can XRP price recover above $1.60 as a bullish reversal pattern forms?

After rallying to a multi-week high of $1.60, XRP price crashed amid a market-wide downtrend triggered by escalating geopolitical and macroeconomic tensions.

Summary

- XRP fell over 8% from its weekly high to $1.46 amid a broader crypto market downturn driven by geopolitical tensions and hawkish Fed signals.

- Network fundamentals strengthened, with XRP wallet addresses hitting a record 7.7 million and daily active users rising to a five-week high.

- Technical indicators point to a potential bullish reversal, with an Adam and Eve pattern forming, though a break below $1.44 could invalidate the setup.

According to data from crypto.news, XRP (XRP) price fell 4.4% over the past 24 hours to $1.46 at the time of writing, extending its losses to over 8% from its weekly high of $1.60.

XRP price dropped amid deteriorating market sentiment for risk assets as Bitcoin fell below the $70,000 support, sparking market concerns of a potential drop to $60,000 next. This occurred as investors turned cautious amid rising oil prices that followed Israel’s drone strike against one of Iran’s largest gas facilities at South Pars.

The altcoin’s drop also follows bearish macroeconomic signals after Federal Reserve Chair Jerome Powell’s latest remarks cast doubt on further interest rate cuts this year, as the central bank intends to maintain a data-driven approach amid stubbornly sticky inflation.

While the market has not yet recovered from the shock, with the crypto market cap still struggling at the time of writing, a few metrics that have strengthened seem to point to a long-term silver lining for XRP.

Notably, on-chain tracker Santiment recently shared that XRP holders have climbed to a new all-time high of 7.7 million wallets, a sign of growing adoption despite the price volatility.

At the same time, daily active addresses on the network rose to a five-week high of 46,767 this week.

Together, these metrics suggest that underlying utility and network participation remain robust, which could sustain demand once the broader market stabilizes.

As reported by crypto.news earlier, whales have also entered an accumulation phase after months of distribution. Such shifts often precede broader market recoveries as retail investors follow smart money flows.

On the daily chart, XRP price has formed an Adam and Eve pattern, a highly reliable bullish reversal pattern in technical analysis. XRP price touched the neckline of the pattern at $1.60 earlier this week but has since pulled back. A confirmed breakout could spark a massive rally, at least in the short term.

The 20-day SMA appears to be closing in on a bullish crossover with the 50-day SMA. At the same time, the MACD lines have pointed upwards, suggesting that bullish momentum is quietly building beneath the surface.
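For readers who want to reproduce these signals, the sketch below shows one common way to compute a simple moving average crossover and the MACD lines from a list of daily closing prices. The function names are illustrative, the 12/26/9 MACD spans are the conventional defaults rather than settings confirmed by the article, and seeding the EMA with the first price is only one of several conventions.

```python
from typing import List, Optional, Tuple


def sma(prices: List[float], window: int) -> List[Optional[float]]:
    """Simple moving average; None until `window` prices are available."""
    out: List[Optional[float]] = []
    running = 0.0
    for i, price in enumerate(prices):
        running += price
        if i >= window:
            running -= prices[i - window]  # drop the price that left the window
        out.append(running / window if i >= window - 1 else None)
    return out


def ema(prices: List[float], span: int) -> List[float]:
    """Exponential moving average, seeded with the first price (an assumption)."""
    alpha = 2.0 / (span + 1)
    out = [prices[0]]
    for price in prices[1:]:
        out.append(alpha * price + (1 - alpha) * out[-1])
    return out


def macd(prices: List[float], fast: int = 12, slow: int = 26,
         signal: int = 9) -> Tuple[List[float], List[float]]:
    """Return (macd_line, signal_line) using the conventional 12/26/9 spans."""
    macd_line = [f - s for f, s in zip(ema(prices, fast), ema(prices, slow))]
    return macd_line, ema(macd_line, signal)


def bullish_crossover(short_ma: List[Optional[float]],
                      long_ma: List[Optional[float]]) -> bool:
    """True if the short average crossed above the long one on the latest bar."""
    if len(short_ma) < 2 or len(long_ma) < 2:
        return False
    last_four = (short_ma[-2], short_ma[-1], long_ma[-2], long_ma[-1])
    if any(value is None for value in last_four):
        return False
    return short_ma[-2] <= long_ma[-2] and short_ma[-1] > long_ma[-1]
```

With daily closes in `closes`, `bullish_crossover(sma(closes, 20), sma(closes, 50))` would flag the 20-day and 50-day crossover the chart appears to be approaching.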

For now, traders will be keeping an eye on the $1.50 psychological resistance, a break above which could embolden bulls to target a breach of $1.60, which would also confirm the Adam and Eve pattern. The next potential target would be the 100-day SMA at $1.70.

On a bearish note, a drop below $1.44, the 50-day SMA, could invalidate the bullish prediction.

Disclosure: This article does not represent investment advice. The content and materials featured on this page are for educational purposes only.

Crypto World

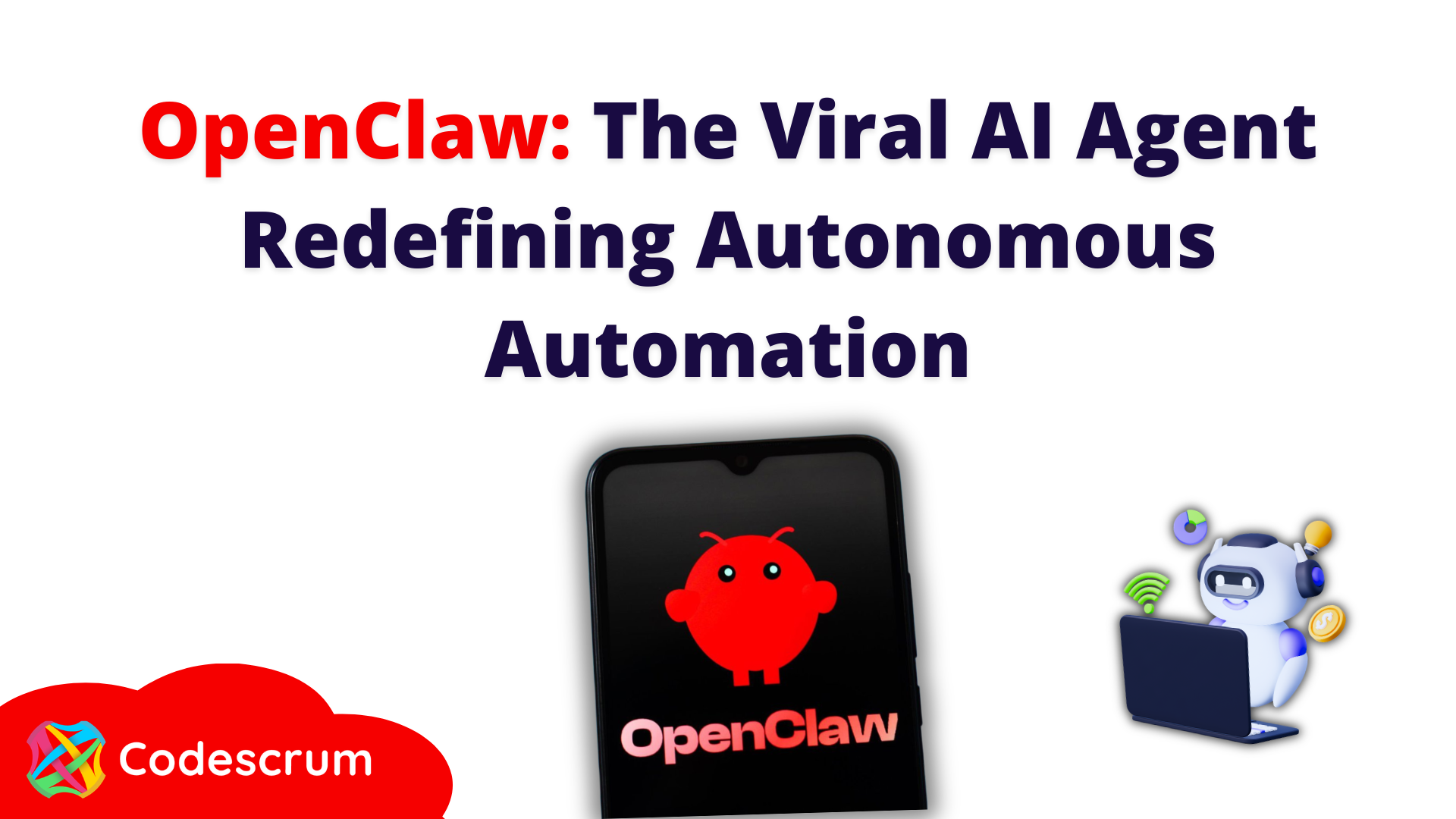

Crypto.com to Cut 12% of Workforce due to Enterprise AI Integration

Singapore-headquartered cryptocurrency exchange Crypto.com is set to cut up to 12% of its workforce due to company-wide artificial intelligence (AI) integrations, joining a growing list of companies announcing AI-linked mass layoffs, according to the exchange’s founder and CEO, Kris Marszalek.

Crypto.com recently expanded its AI offering and launched the AI agent platform ai.com on Feb. 9, which it positioned as a core business. The company also said it was the first crypto platform to receive the ISO/IEC 42001:2023 certification for AI system management in February.

“We are joining the list of companies integrating enterprise-wide AI,” Marszalek said in a Thursday X post, warning that companies that don’t pivot will fail.

Crypto.com lists around 1,500 employees, meaning that the 12% layoff would affect about 180 staff members. It marks the latest AI-linked large-scale layoff in the crypto and tech space, underscoring concerns over AI replacing more of the human workforce.

“We are joining the list of companies integrating enterprise-wide AI,” a spokesperson for Crypto.com told Cointelegraph, adding that the layoffs are part of the platform’s plans to “prioritize resources around key growth areas.” The spokesperson declined to comment on the roles that were affected by the layoffs.

Crypto and tech companies stage AI-linked mass layoffs

Other large crypto and tech companies have also announced AI-linked mass layoffs in recent months.

On Monday, blockchain analytics platform Messari announced more staff cuts as part of its pivot to an AI-first company. The company previously laid off roughly 15% of its full-time employees in January 2025 and made a similar workforce reduction in February 2023.

On Wednesday, the Algorand Foundation, the organization behind Layer-1 blockchain Algorand, also announced a 25% staff reduction, citing macroeconomic uncertainty and the current crypto market slump.

On Feb. 26, Jack Dorsey’s payment company Block announced cutting about 40% of its staff, citing the rapid acceleration of AI. However, some of the 4,000 fired workers have already returned to the company, according to multiple employees who were part of the initial layoffs.

Large tech companies have also announced AI-linked mass layoffs. On Jan. 27, visual discovery engine Pinterest announced it was cutting up to 15% of its staff to pivot to an AI-centric approach.

On March 11, software company Atlassian announced it was cutting 10% of its staff, or about 1,600 employees, as part of a restructuring to self-fund further AI investments.

Meta, Facebook’s parent company, is also reportedly planning a workforce cut of up to 20%, seeking to enable AI efficiencies and offset the costs of AI infrastructure, insiders familiar with the matter told news outlet Reuters on Saturday.

Crypto World

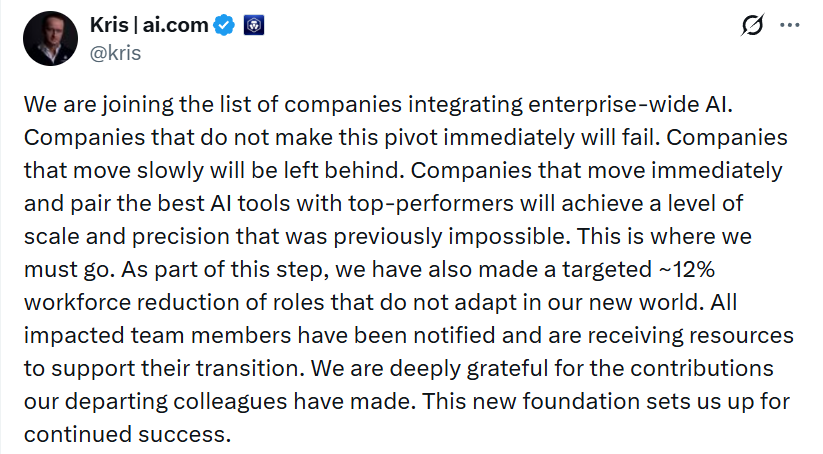

Crypto Hack Losses Driven by a Handful of Major Exploits: Immunefi

A new security report from Immunefi finds that crypto hacks continue at a steady pace while losses are becoming more concentrated in a small number of massive exploits.

Analyzing 425 publicly known incidents between 2021 and 2025, the report estimates that the average hack now results in about $25 million in stolen funds. In 2024 and 2025 alone, 191 hacks led to $4.67 billion in losses, with just five incidents accounting for 62% of the total.

Despite representing fewer incidents, centralized exchange breaches drove the majority of losses. Twenty exchange hacks accounted for roughly $2.55 billion, or about 55% of the total, reflecting how large pools of user funds are concentrated behind fewer points of failure.
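The concentration figures can be sanity-checked with a few lines of arithmetic. This is a rough sketch using only the rounded numbers quoted above for 2024 and 2025, so the results are approximate; the variable names are illustrative.

```python
# Rounded figures quoted from the report for 2024 and 2025.
total_losses = 4.67e9      # USD lost across all hacks
hack_count = 191           # number of incidents
top5_share = 0.62          # share of losses from the five largest incidents
exchange_losses = 2.55e9   # USD lost in the twenty exchange hacks

avg_loss = total_losses / hack_count            # about 24.45 million USD per hack
top5_losses = top5_share * total_losses         # about 2.9 billion USD
exchange_share = exchange_losses / total_losses  # about 0.55

print(f"average loss per hack: ${avg_loss / 1e6:.2f}M")
print(f"five largest incidents: ${top5_losses / 1e9:.2f}B")
print(f"exchange share of losses: {exchange_share:.1%}")
```

The per-hack average for 2024 and 2025 lands close to the roughly $25 million the report cites, although that headline figure is stated for the wider 2021 to 2025 sample.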

Token markets also appear to be reacting more harshly to breaches. Across 82 hacked tokens tracked in the study, prices fell a median 61% within six months, with 83.9% remaining below their hack-day price over that period.

“The market has become less forgiving because expectations have changed,” Immunefi CEO Mitchell Amador told Cointelegraph, adding that breaches are now seen as signals of deeper issues in engineering, governance and operational resilience.

Amador said the long-term impact of exploits often extends well beyond the initial loss:

“The stolen funds are only the first layer of damage. What follows is often more destructive: sustained token price suppression, reduced treasury capacity, leadership disruption, lost development time, and erosion of user trust.”

The report also highlighted how interconnected DeFi systems can amplify the fallout from a single incident, with failures cascading across lending, collateral and liquidity networks.

One example involved the collapse of Elixir’s deUSD stablecoin in November 2025. Elixir had parked roughly 65% of deUSD’s collateral with Stream Finance, which disclosed a $93 million loss from an external fund manager. As Stream’s stablecoin xUSD fell 77%, deUSD’s backing deteriorated, redemptions halted and panic selling hit Curve pools, ultimately pushing deUSD down more than 97%.

Recent exploits highlight ongoing security risks in crypto

While crypto-related hack losses fell to $26.5 million in February, the lowest monthly total in nearly a year, according to PeckShield, several security incidents have already surfaced in March.

Researchers at Google reported a new exploit kit targeting Apple iPhone users that is designed to steal cryptocurrency wallet seed phrases. The toolkit, known as Coruna, contains multiple exploit chains capable of targeting devices running various versions of Apple’s iOS and has been linked to phishing websites posing as crypto platforms.

The Bitcoin-based DeFi platform Solv Protocol also reported that one of its token vaults was exploited for roughly $2.7 million, affecting fewer than 10 users. The project said it would cover the losses and offered the attacker a 10% bounty in exchange for returning the funds while security firms investigate the breach.

Separately, the domain of Bonk.fun was hijacked after attackers gained access to a team account and deployed a wallet-draining scheme through the site. The project warned users not to interact with the platform while the team worked to regain control of the domain.

Meanwhile, NFT lending platform Gondi disabled a faulty smart contract after an exploit allowed an attacker to steal roughly $230,000 worth of NFTs. The project said it is compensating affected users while investigating the vulnerability, which involved a contract used to sell escrowed NFTs and repay loans.

Crypto World

OG Bitcoin whale offloads 1,000 BTC as selling pressure intensifies

A long-dormant Bitcoin whale wallet offloaded 1,000 BTC on Wednesday.

Summary

- Long-dormant Bitcoin whale offloads 1,000 BTC, extending total transfers to 3,500 BTC since November 2024 with roughly $330 million in realised profit.

- Additional selling from early investor Owen Gunden and Bhutan-linked wallets points to a pattern of distribution from large holders into the market.

On-chain data tracked by analytics provider EmberCN showed that the wallet “bc1q…6ym” has transferred a total of 3,500 BTC since November 2024.

The whale began accumulating around 13 years ago and reportedly bought Bitcoin at an average price of $332 per BTC and has sold at an average price of around $94,786, generating approximately $330 million in profits. At its peak, the wallet held 5,000 BTC.

After the latest sales, the wallet still holds around 1,500 BTC valued at $106.8 million at current prices.
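The reported figures are internally consistent, as a quick check shows. The sketch below uses only the rounded numbers quoted above and assumes the 5,000 BTC peak balance was reduced solely by the 3,500 BTC in transfers; the variable names are illustrative.

```python
# Rounded figures quoted in the article.
btc_sold = 3_500               # BTC transferred since November 2024
avg_buy_price = 332            # USD per BTC
avg_sell_price = 94_786        # USD per BTC
peak_balance = 5_000           # BTC held at the wallet's peak
remaining_value = 106_800_000  # USD value of the remaining balance

realised_profit = btc_sold * (avg_sell_price - avg_buy_price)
remaining_btc = peak_balance - btc_sold
implied_price = remaining_value / remaining_btc

print(realised_profit)  # 330589000, in line with the ~$330 million reported
print(remaining_btc)    # 1500
print(implied_price)    # 71200.0 USD per BTC implied by the valuation
```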

Such activity is not limited to this wallet. Separate on-chain data attributed to early Bitcoin investor Owen Gunden shows another 650 BTC, worth about $46.3 million, sold on Wednesday, bringing his total disposals to roughly 11,000 BTC, or more than $1 billion.

The investor has yet to confirm ownership of the wallet, and such on-chain attributions remain unverified.

Meanwhile, crypto.news reported earlier that Bhutan has transferred roughly $72.3 million in Bitcoin. Wallets connected to Druk Holding and Investments have been offloading portions of their holdings, and the country’s reserves have shrunk significantly since their peak levels.

Recent whale activity may have contributed to the selling pressure. According to CryptoQuant data, the Bitcoin exchange whale ratio, which tracks the share of the top 10 deposits relative to total exchange inflows, hit 0.83 on March 14.
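As described, the ratio is simply the ten largest deposits divided by all inflows over the same period. The sketch below illustrates that definition with made-up deposit sizes; the function name is hypothetical, and it is not CryptoQuant’s API or necessarily its exact methodology.

```python
from typing import Sequence


def exchange_whale_ratio(inflows: Sequence[float], top_n: int = 10) -> float:
    """Share of total exchange inflows contributed by the `top_n` largest deposits."""
    total = sum(inflows)
    if total <= 0:
        raise ValueError("total inflows must be positive")
    largest = sorted(inflows, reverse=True)[:top_n]
    return sum(largest) / total


# Made-up example: ten deposits of 83 BTC and 170 deposits of 1 BTC.
deposits = [83] * 10 + [1] * 170
ratio = exchange_whale_ratio(deposits)
print(round(ratio, 2))  # 0.83, since 830 of the 1,000 BTC came from the top 10
```

A reading near 0.83 therefore means a handful of large deposits made up most of the coins arriving at exchanges.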

Whales have also been observed shorting. Notably, a pseudonymous whale called Jason has repeatedly taken large short positions on Bitcoin, including a recent 2,281 BTC short on Binance opened at around $74,238.

Bitcoin (BTC) price in the meantime has fallen over 4.5% and is down nearly 43% from its all-time high.

Crypto World

Apex and Polygon Launch ERC-3643 Chain for Tokenized Assets

Apex Group’s Tokeny has tapped Polygon Labs to launch T-REX Ledger, a compliance-focused blockchain designed to help regulated tokenized assets move across networks without repeating investor checks and transfer restrictions.

In a Thursday release shared with Cointelegraph, the project said it targets a key friction point in tokenized markets. ERC-3643 is an Ethereum-based token standard for permissioned tokens representing real-world assets that can support compliant issuance of RWAs, but identity checks, eligibility rules and transfer restrictions often remain fragmented when the same asset is distributed across multiple blockchains.

T-REX Ledger is being pitched as a shared compliance layer that other chains can query, while settlement continues to take place on external networks. Built with Polygon’s Chain Development Kit and connected to Agglayer, the system is intended to act as a common registry for investor eligibility and transfer rules across tokenized securities.

The launch comes as financial and crypto groups race to build infrastructure for tokenized markets. The New York Stock Exchange parent company, Intercontinental Exchange, has outlined plans for a new platform for tokenized stocks and exchange-traded funds (ETFs), while the Depository Trust and Clearing Corporation (DTCC) joined the ERC-3643 Association in 2025 as institutions push deeper into tokenized collateral and securities infrastructure.

Fixing fragmented compliance

In the release, the network was described as a “shared source of truth” for investor eligibility and transfer rules.

The core problem T-REX aims to solve is that ERC-3643 enables compliant issuance but does not maintain a shared compliance state across chains. The same security issued on Ethereum and Polygon, for example, still runs separate eligibility checks, identity attestations and transfer restrictions on each chain.

Joachim Lebrun, co-founder of T-REX Network and chief blockchain officer of Tokeny, told Cointelegraph that T-REX Ledger would support the issuance and lifecycle management of regulated digital securities, including bonds, funds, equities and structured products, with identity, eligibility and transfer rules embedded directly into ERC-3643 tokens.

Apex Group will act as the first onchain transfer agent and plans to adopt T-REX Ledger as its default multi-chain orchestration layer with an initial target of $100 billion in tokenized assets by June 2027.

T-REX Ledger centralizes compliance logic in a dedicated chain that other networks can query, while settlement remains on external chains.
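The idea of a single compliance state that several chains consult can be illustrated with a small model. This is a conceptual sketch only: the class, its methods and the chain names are hypothetical and do not represent the ERC-3643 interfaces or T-REX Ledger’s actual design.

```python
from dataclasses import dataclass, field
from typing import Dict, Set, Tuple


@dataclass
class ComplianceRegistry:
    """Hypothetical shared registry: one eligibility state, queried by many chains."""
    verified: Set[str] = field(default_factory=set)  # investor IDs that passed checks
    frozen: Set[str] = field(default_factory=set)    # investor IDs barred from transfers
    wallets: Dict[Tuple[str, str], str] = field(default_factory=dict)  # (chain, address) -> investor ID

    def register_wallet(self, investor_id: str, chain: str, address: str) -> None:
        self.wallets[(chain, address)] = investor_id

    def verify(self, investor_id: str) -> None:
        self.verified.add(investor_id)

    def freeze(self, investor_id: str) -> None:
        self.frozen.add(investor_id)

    def can_transfer(self, chain: str, sender: str, receiver: str) -> bool:
        """A settlement chain asks the registry before moving tokens."""
        for address in (sender, receiver):
            investor_id = self.wallets.get((chain, address))
            if investor_id is None:
                return False  # unknown wallet on this chain
            if investor_id not in self.verified or investor_id in self.frozen:
                return False
        return True


registry = ComplianceRegistry()
for chain in ("ethereum", "polygon"):
    registry.register_wallet("alice", chain, "0xA")
    registry.register_wallet("bob", chain, "0xB")
registry.verify("alice")  # checked once, recognised on every chain
registry.verify("bob")
print(registry.can_transfer("ethereum", "0xA", "0xB"))  # True
print(registry.can_transfer("polygon", "0xA", "0xB"))   # True
```

Because eligibility is keyed to the investor rather than to each chain, a single `verify` or `freeze` call changes the answer everywhere at once, which is the fragmentation problem the project says it is addressing.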

Lebrun said that “the market has grown into a multi-chain world for tokenization” and argued that T-REX Ledger turns other blockchains into “distribution channels,” enabling regulated assets to move to “wherever liquidity exists with speed, compliance, and control.”

Slotting into the tokenization race

T-REX is pitching itself as a neutral registry layer that can sit alongside players in the tokenization race. Lebrun said that a security issued via T-REX Ledger “could ultimately settle at DTCC” because “the compliance validation doesn’t need to live on the same network as the settlement.”

The chain itself will run as a sovereign Polygon CDK network governed by a dedicated steering committee, while ERC-3643 and its compliance framework remain open source under the ERC-3643 Association, not Polygon.
