Entertainment

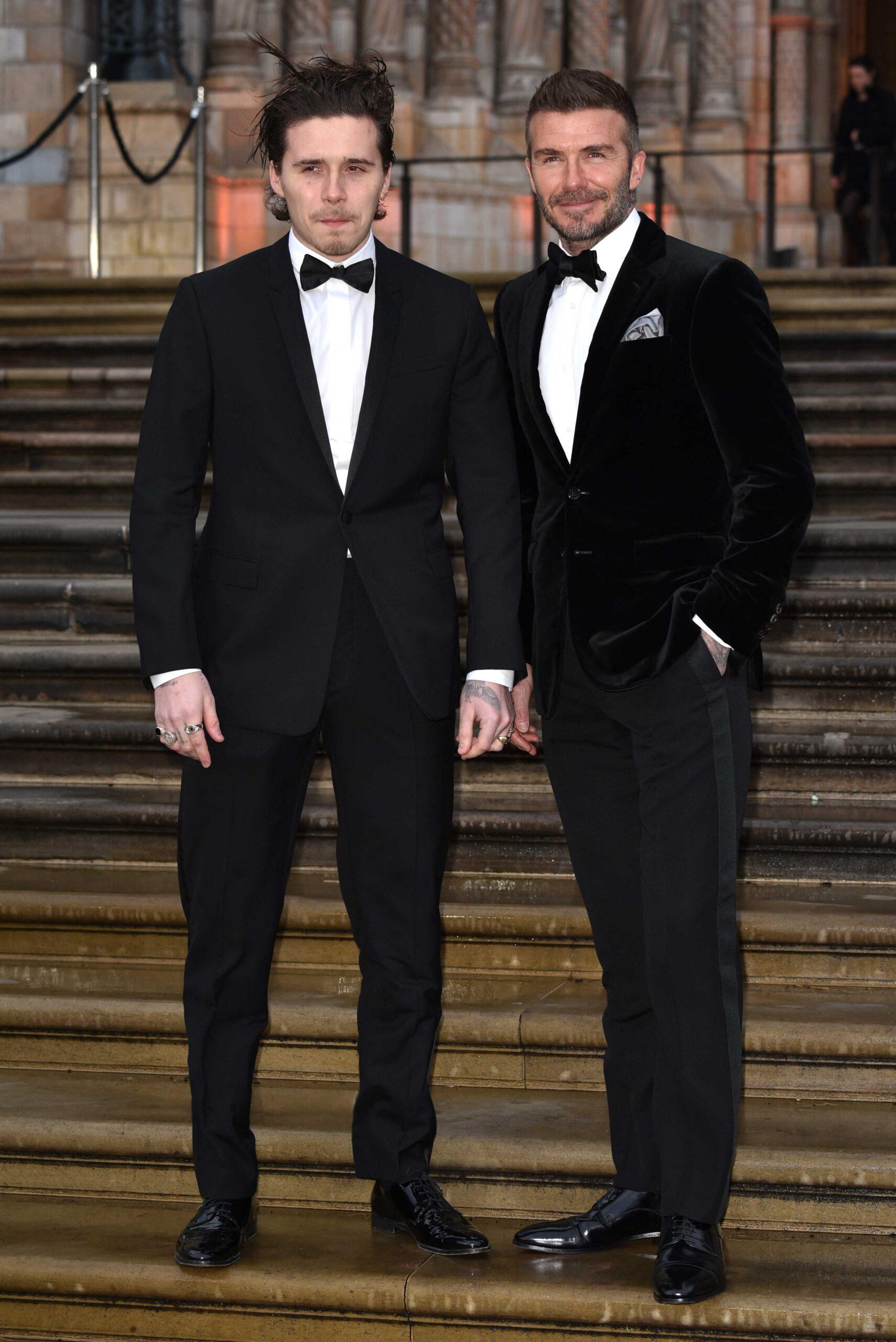

Nelson Peltz Speaks Out Following Ongoing Beckham Family Feud

Weeks after Brooklyn Beckham dropped the shocking allegations against his parents last month, his father-in-law has decided to chime in with his thoughts about the feud.


Nelson Peltz Offers First Public Comments About Beckham Family Feud

While speaking at “WSJ Live Event” on Tuesday, February 3, Nelson Peltz formally addressed the messy breakdown of the family relationship between Brooklyn Beckham and his parents, David and Victoria Beckham.

When asked about the public attention surrounding his daughter and her marriage, Peltz jokingly responded, “Has my family been in the press lately? I haven’t noticed that at all,” according to PEOPLE.

Peltz was then asked if he had given his family any advice amid the ongoing feud. “My advice is to stay the hell out of the press. How much good did that do?” he replied.

He continued, addressing his co-in-laws by name while praising Nicola and Brooklyn:


“My daughter and the Beckhams are a whole other story. That’s not for coverage here today, but I’ll tell you my daughter’s great, my son-in-law, Brooklyn, is great, and I look forward to them having a long, happy marriage together.”


Brooklyn Beckham Slammed His Parents In A Shocking Social Media Post Last Month

Following months of reported tensions between the Beckham family and Brooklyn, things officially came to a head after the couple’s eldest child slammed his parents on social media.

Claims that mom Victoria “hijacked” the first dance at his wedding by dancing “inappropriately” and making him feel “uncomfortable” and “humiliated” led his accusations. Brooklyn further provided a detailed account of the alleged behind-the-scenes drama of his parents repeatedly attempting to sabotage his marriage and tarnish him and Nicola in the media.


“I have been silent for years and have made every attempt to keep these matters private,” Brooklyn wrote in the lengthy post. “Unfortunately, my parents and their team have continued to go to the press, leaving me with no choice but to speak for myself and tell the truth about only some of the lies that have been printed.”

Brooklyn also made it very clear that he is not interested in mending fences anytime soon. “I do not want to reconcile with my family. I’m not being controlled, I’m standing up for myself for the first time in my life.”


David Beckham Addressed His Children’s Use Of Social Media Following Brooklyn’s Posts

Days after Brooklyn’s posts about his parents on social media, dad David Beckham appeared on CNBC’s Squawk Box on January 20, speaking about social media and his advice to his children about their online usage.

“I have always spoken about social media and the power of social media. For the good and for the bad,” Beckham said while on the show. “The bad we’ve talked about with what kids can access these days, it can be dangerous. But what I have found personally, especially with my kids as well, use it for the right reasons.”

David then noted the “mistakes” that his children make online.

“I’ve tried to do the same with my children, to educate them,” he shared. “They make mistakes, but children are allowed to make mistakes. That is how they learn. That is what I try to teach my kids. You sometimes have to let them make those mistakes as well.”


Amid The Beckham Family Drama, Victoria Reunited With The Spice Girls

Per PEOPLE, to celebrate the 50th birthday of Emma Bunton (Baby Spice), group members Geri Halliwell-Horner (Ginger Spice), Melanie Chisholm (Mel C), and Victoria were all on hand on Saturday, January 24, for Bunton’s birthday bash in the English countryside.

The day after the milestone birthday celebration, fans were thrilled to see the ladies together (minus Mel B, who sent a birthday video) when Victoria posted a photo on Instagram of them.

“Happy birthday to the most beautiful soul @emmaleebunton I love you girls so much @gerihalliwellhorner @melaniecmusic xxxxxxx,” Victoria’s caption read.

David also shared his thoughts on the meet-up via his wife’s comment section. “This made me happy. I can only imagine how the Spice Girls fans feel @spicegirls @victoriabeckham special night celebrating Emma @emmaleebunton x,” he wrote.


The Beckham Family Is A United Front In Brooklyn’s Absence

Victoria was also in the city to accept a special award, and during her speech, she thanked her family, who were in attendance to support her.



6 Great HBO Shows With Glaring Plot Holes

Plot holes are kind of impossible to avoid in storytelling, and honestly, at times, the audience is willing to play along. If the story is good enough, a few logical leaps here and there don’t really matter. However, things get messy when writers push that limit a little too far. HBO, for all its prestige and reputation, is no exception. In fact, some of the network’s most iconic series are also the biggest offenders when it comes to obvious inconsistencies and unresolved arcs.

That doesn’t necessarily make them bad, though. If anything, it makes their success all the more fascinating. After all, it takes a truly great story to keep the viewers hooked even when the cracks in the plot are right there in plain sight. Here is a list of successful HBO shows that serve as perfect examples of that.

6. ‘Westworld’ (2016–2022)

Westworld started as one of HBO’s most ambitious shows with a premise that instantly hooked the audience. The sci-fi series, created by Jonathan Nolan and Lisa Joy, is about a hyperrealistic Wild West theme park populated by android hosts, where wealthy guests can indulge in their darkest fantasies without consequence. The show was meant to be a philosophical exploration of free will, consciousness, and what it truly means to be human. Now, Westworld Season 1 perfectly set up this concept with a gripping and layered central mystery. However, as the show expands its world, it begins to lose the plot big time. The storytelling becomes increasingly convoluted, and timelines start blurring into each other to the point where the rules of the show’s own universe start feeling inconsistent.

Characters are repeatedly killed and revived without clear stakes, major arcs are unresolved, and key plots like the fate of the Outliers never receive a satisfying payoff. The thing about Westworld is that it isn’t necessarily a bad show, but its tendency to constantly reinvent itself comes at the expense of the story and characters. Each season turns the status quo upside down and makes the audience feel that the show is more interested in escalating its complexity than in actually following through on already-established ideas.

5. ‘Euphoria’ (2019–2026)

Euphoria has been one of the most talked-about shows of the last decade. The controversial teen drama, created by Sam Levinson, stars Zendaya as Rue Bennett, a high-schooler battling addiction along with a group of her friends and classmates, all navigating their own struggles with identity and trauma. The series takes place in a hyper-stylized world that instantly became its signature and mirrored the chaos that its characters live in. The show has been widely praised for its performances, cinematography, and raw depiction of teenage life. However, for all its strengths, the drama has also faced consistent criticism for gaps in its storytelling. Euphoria Season 2 in particular feels messy, inconsistent, and clearly prioritizes shock value over any semblance of character arcs.

Some of these issues include Nate’s (Jacob Elordi) sudden fixation on Cassie despite hating her throughout Euphoria Season 1. Entire character arcs are dropped, like Kat’s (Barbie Ferreira) being reduced to a side character or McKay (Algee Smith) being written out with no explanation. However, Rue’s storyline with Laurie (Martha Kelly) and the fact that she escapes that dangerous situation without any consequence is easily one of the biggest plot holes in the series. Some might argue that all of these loose ends will be solved by Season 3. That doesn’t take away from the fact that the show struggles with its own stakes. Euphoria thrives on how it makes the audience feel in the moment, but once they step back, the cracks in the narrative are impossible to ignore.

4. ‘Game of Thrones’ (2011–2019)

Game of Thrones has practically defined prestige television for the better part of the last two decades. The series, based on George R. R. Martin’s A Song of Ice and Fire novel series, takes place in an expansive, fantastical world and centers on the intense power struggle between the seven kingdoms for the Iron Throne. There’s no denying that the show has already gone down in pop culture history for its meticulous storytelling and unmatched worldbuilding. However, as the series moved beyond its source material, logic took a backseat to the spectacle, and that proved to be a major mistake. The later seasons destroyed the legacy that the show had spent around eight years constructing.

Entire storylines felt rushed or abandoned, including Cersei’s (Lena Headey) pregnancy and the prophecy of “The Prince That Was Promised.” Even major narrative arcs like Jon Snow’s (Kit Harington) true lineage and Daenerys’ (Emilia Clarke) descent into madness ultimately had little impact on the outcome of the story. By the end, it felt like the show was just leaning on convenience to wrap things up, and in doing so, it set up many ideas that were never fully explored. The notorious ending of Game of Thrones raised more questions than answers and is widely considered one of the worst series finales of all time. Despite a rushed finish, though, Game of Thrones is still an undeniable landmark in TV history, with two spinoffs already airing and several others in the works.

3. ‘The Leftovers’ (2014–2017)

The Leftovers is a show that fully embraces ambiguity, but that is no excuse for all the plot holes it has left behind. The series, created by Damon Lindelof and Tom Perrotta, begins with two percent of the world’s population suddenly vanishing without explanation. However, the show isn’t about where they went. Instead, it focuses on the people left behind as they grapple with grief and faith in the wake of a phenomenon that just can’t be explained. The show’s deliberate refusal to provide easy answers gives it its emotional weight, but also tends to get frustrating at times. The Leftovers often sets up certain storylines only to abandon them in the middle. For example, Kevin Garvey’s (Justin Theroux) repeated resurrections are never grounded in any clear logic.

Not to mention the entire situation with the Guilty Remnant feels like one of the show’s most interesting elements until the viewer realizes that their larger purpose is never actually explored. Even broader world-building elements like the earthquakes or the surreal hotel world are introduced with weight but never really explained in relation to the overall narrative. Sure, some of this is intentional, but there is a fine line between leaving things to the audience’s interpretation and careless storytelling. Despite all that, though, there’s no denying that The Leftovers is one of HBO’s most emotionally complex stories.

2. ‘True Detective’ (2014–Present)

True Detective Season 1 is peak TV, but unfortunately, it goes downhill from there. The HBO crime anthology begins with Matthew McConaughey and Woody Harrelson as two detectives trying to solve a disturbing ritualistic murder case. Each subsequent season has introduced a new cast and case, with the most recent one being True Detective: Night Country starring Jodie Foster and Kali Reis. For all its ambition, though, the one thing the show has struggled with is consistency.

True Detective Season 2, in particular, is often criticized for being overly convoluted and weighed down by too many characters and subplots that ultimately go nowhere. It often feels like the show is focusing more on mood and character than on telling a believable story. Its best moments have always come from the dynamic between its detectives and philosophical dialogue, but even then, the show’s glaring plot holes are hard to miss. Even at its messiest, though, True Detective is undeniably a great watch.

1. ‘The Last of Us’ (2023–Present)

The Last of Us quickly established itself as one of HBO’s biggest hits and set the gold standard for video game adaptations. The post-apocalyptic drama follows Joel (Pedro Pascal), who is tasked with escorting Ellie (Bella Ramsey) across a post-apocalyptic United States to a revolutionary group known as the Fireflies. Now, what raises the stakes is that this teenage girl may hold the key to curing a fungal infection that is slowly taking over humanity. The drama series is far from a typical zombie show, though, because it leans heavily into character-driven storytelling.

However, for a show that attempts to remain grounded in realism, there are plenty of moments where its internal logic starts to crack. Plenty of Joel’s decisions make little sense from a practical point of view. Even the broader stakes, such as the Fireflies’ plan to immediately operate on Ellie without exploring any other alternatives, feel rushed and oddly underdeveloped. A lot of moments in the show rely entirely on convenience or shock value. One can overlook these gaps thanks to the cast’s brilliant performances and the emotional payoff of it all, but they do make it harder to fully buy into the world at times.

The Last of Us

- Release Date: January 15, 2023
- Network: HBO
- Showrunner: Craig Mazin
- Directors: Craig Mazin, Peter Hoar, Jeremy Webb, Ali Abbasi, Mark Mylod, Stephen Williams, Jasmila Žbanić, Liza Johnson, Nina Lopez-Corrado


5 Years Later, Rebecca Ferguson’s Sci-Fi Movie Is One of the Best on Streaming

Some sci-fi movies are too strange, too sincere, or just too out-of-step with the moment they arrive in. Reminiscence was probably all three. Lisa Joy’s feature directorial debut had a very specific kind of dreamy, flooded-neon melancholy that never really clicked commercially, but it has started finding new attention on streaming. Earlier this year, coverage noted that the film was drawing fresh viewers on HBO Max, which makes sense for something this mood-driven and weirdly romantic.

The cast was never the problem. Reminiscence stars Hugh Jackman, Rebecca Ferguson, Thandiwe Newton, Daniel Wu, Cliff Curtis, Angela Sarafyan, Natalie Martinez, Brett Cullen, and Marina de Tavira. The story follows a private investigator who uses memory-exploration technology to help clients revisit their past, only to become obsessed with finding a vanished woman. It’s pure tech-noir pulp, just draped in a more mournful and romantic register than audiences maybe expected.

Is ‘Reminiscence’ Worth Watching?

Collider’s review of the movie stated that Reminiscence is an ambitious but ultimately disappointing attempt to fuse classic noir with futuristic sci-fi, undone by shallow thematic execution. Lisa Joy’s heavy-handed narration and underdeveloped class commentary talk down to the audience rather than trusting the visuals or story to do the work. Despite its intriguing premise and atmospheric setting, Reminiscence ends up feeling like stylish texture without substance, culminating in a forgettable and emotionally hollow conclusion.

“What’s more frustrating is that the class commentary is merely window dressing. It kind of positions Mae’s story as a consequence of class conflict, but it doesn’t have much to do with Nick. It’s simply the world he inhabits, and while he doesn’t need to be a class warrior or anything like that, his perceptions of the world exist separate from his personal journey to find Mae. He doesn’t see the world one way and have that perception changed through his relationship with Mae, so it’s just Joy embracing her own cleverness by showing a sci-fi world that emphasizes class conflict. However, she doesn’t do the work to connect that world to her protagonist’s story, so it all feels hollow. Reminiscence is texture without purpose.”

Reminiscence is streaming now.

- Release Date: August 20, 2021
- Runtime: 116 minutes
- Director: Lisa Joy


Gayle King Addresses Savannah Guthrie’s Today Show Return

Gayle King is throwing her support behind Savannah Guthrie and her Today show comeback as the investigation surrounding her mother Nancy’s disappearance continues.

Speaking to Us Weekly at the Breakthrough Prize event in Los Angeles on Saturday, April 18, King, 71, said she was happy to see Savannah back on air despite the difficult circumstances she’s facing.

“Listen, we’re just glad Savannah’s back, but of course, our hearts are still aching and still breaking,” King told Us. She added, “There are no words to describe what she’s going through.”

The CBS Mornings presenter also urged anyone with information about what happened to Nancy to come forward.

“I’m still hoping that somebody will do the right thing,” King continued. “Somebody, somebody out there knows something, and it’s shocking to me after seeing Savannah open up her heart, after looking at the video that we all saw, and after the million dollars reward that there has not been some resolution in this case.”

She added, “So I am just here wishing her well and cheering. I’m glad that she’s back.”

Savannah, 54, returned to Today on April 6 after two months away dealing with the disappearance of her mother Nancy, who was reported missing in Arizona on February 1.

“Good morning, welcome to Today on this Monday morning. We are so glad you started your week with us, and it is good to be home,” she told viewers during her first episode back.

Savannah and Nancy Guthrie. (Photo by: Nathan Congleton/NBC)

Savannah took a step back from the show at the time, traveling from New York to Arizona amid the police investigation into her mother’s disappearance. During Savannah’s absence from Today, Hoda Kotb filled in for her.

Savannah and her siblings Annie Guthrie and Camron Guthrie have pleaded for the public’s help in finding their mother since she disappeared, offering a $1 million reward for information leading to her recovery.

In one video released by Savannah, Annie and Camron via social media, they begged for Nancy’s safe return.

“We received your message and we understand,” Savannah said in a video shared on February 7, while flanked by and holding the hands of her siblings. “We beg you now to return our mother to us so that we can celebrate with her. This is the only way we will have peace. This is very valuable to us and we will pay.”

On February 10, the FBI released photos and video footage of a masked individual at Nancy’s home. However, no suspects have been officially identified since her disappearance.


Rebecca Ferguson’s Forgotten 115-Minute Sci-Fi Sequel Is Quietly Climbing Global Streaming Charts

Men in Black: International is one of those franchise reboots people more or less decided on immediately, which meant it never got much room to become anything else. But streaming is often kinder to movies that arrive with baggage, and that seems to be happening here. Earlier this year, the film started drawing renewed attention on Starz in the U.S., while overseas streaming charts have also shown it popping up in places like France. That doesn’t make it a full-scale global juggernaut, but it does mean the movie is finding a broader second life than its original reputation might suggest.

Men in Black: International stars Chris Hemsworth as Agent H, Tessa Thompson as Agent M, Liam Neeson as High T, Emma Thompson as Agent O, Rebecca Ferguson as Riza Stavros, Kumail Nanjiani as Pawny, and Rafe Spall as Agent C. On paper, that’s a really appealing sci-fi comedy ensemble, especially with Hemsworth and Thompson reuniting after the Thor movies.

Is ‘Men in Black: International’ Worth Watching?

Collider’s review stated that Men in Black: International really came down to the sheer appeal of its two stars. The dynamic helps carry the movie through action scenes and story beats that might otherwise feel pretty flat. The review also pointed out that touches like the broader world-building, some fun support from Pawny, and the natural pull of the central duo gave the film a sense of missed opportunity. It may not fully come together, but there’s still enough there to make it an entertaining watch.

“As Agent H, Hemsworth is basically ramping up the most dick-ish of Thor Odinson’s personality quirks, but weaponizing well-timed smirks or winks—or, let’s be honest, an unbuttoned button—to make us still like him. Thompson has the harder role; Agent M is extremely competent and a bit of a fangirl for the Men in Black at the same time. Thompson combines those two qualities into pure, crackling energy. That’s the funny part, really. Thanks to the combination of Hemsworth + Thompson + the world-building, I’d watch the hell out of a sequel to this movie despite feeling cold about it overall.”

Men in Black: International is currently streaming.

- Release Date: June 12, 2019
- Runtime: 115 minutes
- Director: F. Gary Gray


‘Olympus Has Fallen’ Star’s ‘Landman’ Replacement Is Taking Over U.S. Streaming

Aaron Eckhart fans are currently gearing up for a turbulent flight, as The Dark Knight star’s next project opens in theaters on May 1. An action-packed survival thriller from Deep Blue Sea director Renny Harlin, Deep Water follows a flight from Los Angeles to Shanghai that, while coasting over the middle of the Pacific, enters a terrifying storm that sends everyone on board into the cold ocean below. Just when things couldn’t get worse, along come the sharks. Alongside Eckhart, the movie also stars the likes of Ben Kingsley (Iron Man 3), Angus Sampson (Insidious), Lucy Barrett (Charmed), Kelly Gale (Plane), Richard Crouchley (Evil Dead Rise), and more.

In anticipation of Eckhart’s latest release, fans have been flocking to one of his lesser-spotted recent projects. Thieves Highway, a 2025 neo-Western that made very little impact upon arrival, is perhaps one of the more underrated entries in Eckhart’s impressive catalog, thanks simply to it falling so far under most radars. Directed by Jesse V. Johnson, who also worked with Eckhart on the 2024 conspiracy thriller Chief of Station, Thieves Highway also featured performances from the likes of Devon Sawa, Brooke Langton, and Lochlyn Munro.

At the time of writing, and seemingly against the odds, Thieves Highway has risen to the very top of the Hulu movie streaming charts in the U.S., outperforming the likes of Gaten Matarazzo’s new comedy Pizza Movie, the original The Devil Wears Prada, and Mike & Nick & Nick & Alice. A synopsis for Thieves Highway reads:

“Lawman Frank Bennett uncovers a massive smuggling operation after a deadly confrontation. Cut off from cell service and without his truck, he’s forced to take on a dangerous gang led by a deranged ex-military commander.”

What Did Critics Say About ‘Thieves Highway’?

Thieves Highway is so under-seen that it doesn’t even have a rating on review aggregator Rotten Tomatoes, and those who did catch it in 2025 responded with mixed reviews. Whilst some praised the movie’s gripping lead performance, saying, “Eckhart anchors the film with a world-weary, classic sense of morality,” others were not so impressed with the project as a whole, saying, “Johnson and Mills do some fun maneuvering with their characters and Eckhart is a sturdy enough lead. But the storytelling takes too many shortcuts and the overall lack of suspense keeps us one step ahead.”

Thieves Highway is streaming on Hulu. Stay tuned to Collider for more updates, and check out Eckhart’s next movie, Deep Water, in theaters on May 1.

- Release Date: May 1, 2026
- Runtime: 106 minutes
- Director: Renny Harlin


Steve Kerr Mulls Over Career After Warriors Playoff Loss

The Golden State Warriors’ future is looking murky after the team’s loss in the NBA play-in tournament, and head coach Steve Kerr seems to know it.

“I don’t know what’s gonna happen next,” said Kerr to players Stephen Curry and Draymond Green in a huddle on the sideline. “But I love you guys to death. Thank you.”

The heartfelt moment — picked up by a TV microphone — came in the waning seconds of a 111-96 loss to the Phoenix Suns on Friday, April 17, ending the Warriors’ season.

The final buzzer marked the end of Kerr’s contract with the Warriors, and the 60-year-old said after the game that he’s going to take a few weeks to mull over his future.

“I don’t know what’s going to happen,” Kerr said to reporters. “I still love coaching, but I get it. These jobs all have an expiration date. There is a run that happens, and when the run ends, sometimes it’s time for new blood and new ideas.”

He continued, “I don’t want to walk away from Steph. I’m definitely not going and coaching somewhere else next year in the NBA. I would never walk away from Steph. But all this stuff has to be aligned and right. Those are all discussions that will be had.”

Head coach Steve Kerr, Stephen Curry and Draymond Green hug during the final moments of an NBA play-in tournament game. (Photo by Christian Petersen/Getty Images)

Curry, 38, joined the Warriors in 2009. Green, 36, was drafted by the team in 2012. Kerr took over as head coach in 2014, and together the three helped build one of the most dominant dynasties in NBA history.

In the 12 seasons since Kerr joined Curry and Green in Golden State, the Warriors have won four NBA titles (2015, 2017, 2018, 2022). They’ve reached the playoffs eight times and played in six NBA Finals.

“If [my time is done], then I will be nothing but grateful for the most amazing opportunity any person could have to coach this franchise in front of our fans and to coach Steph Curry, [Draymond Green], the whole group,” Kerr said. “It may still go on. It may not. I don’t know at this point. But we all need to step away a little bit and then reconvene.”

Both Curry and Green have expressed interest in continuing to play for the Warriors. While Curry still has one more season on his contract, Green holds a player option for next season and could opt out.

Despite the option, Green reiterated after Friday’s game that he wants to remain in Golden State.

“Hopefully I’ve done enough to still be here,” Green said.


Summer House’s Kyle Reacts to Ex Amanda and West’s PDA

Summer House star Kyle Cooke has seen his estranged wife, Amanda Batula, kissing West Wilson — which might have been a bridge too far.

“Was not prepared to see that,” Cooke, 43, wrote via Threads on Saturday, April 18, responding to the Summer House chain. “And that. And that 🤢.”

Cooke’s costar Mia Calabrese replied, “Kyle, I left you for 1 hour….”

The Bravo show’s thread had been abuzz since Batula, 34, and Wilson, 31, were spotted at the New York Yankees vs. Kansas City Royals baseball game on Friday, April 17. The duo even packed on the PDA when the stadium’s Kiss Cam panned to their seats.

Batula and Cooke were married for four years, announcing in January that they had separated. She confirmed her romance with Wilson just three months later.

“We’ve seen the growing online speculation, so while this is still very new, we wanted to provide some clarity. It was never our intention to purposely hide anything,” Wilson and Batula wrote in a joint statement last month. “Given the complicated relationship dynamics involved and the scrutiny that comes with being on a reality show, we needed a little space to process things privately before speaking on it.”

They continued at the time, “We’ve shown up for each other as friends over the years, through all the highs and lows, and what’s developed recently was the last thing either of us expected. Our connection grew out of a genuine, longstanding friendship, which made it especially important for us to approach this with care.”

Cooke and his Summer House costars, including Ciara Miller, were shocked by the reveal. The former nurse, 30, dated Wilson in 2023 and was close friends with Batula. She told Glamour in a Friday profile published before the MLB game that she found out about their decision to go public less than one hour before the statement was shared online.

“It’s one thing to experience hurt behind closed doors,” Miller told Glamour. “To experience it so publicly is like another layer, and then to have to see what you thought was your life still play out in season 10. It’s a major mindf***.”

As for Cooke, he was recently seen kissing The Real Housewives of Orange County alum Meghan King on Thursday, April 16.

“Kyle didn’t know Meghan prior to being at the same event last night. She had pursued him the second she saw him,” a source exclusively told Us. “It’s nothing serious, but they did hang out all night even after the event was over, and made out several times in public.”


Nicole Kidman Recalls the Moment She Found Out Her Mom Died

Nicole Kidman is reflecting on the moment she was told her mother Janelle Kidman had died right before she was about to go on stage to accept an award.

Speaking to Variety on Saturday, April 18, Nicole, 58, detailed how she found out about the loss at the Venice International Film Festival as she was preparing to accept a best actress award for her role in Babygirl.

“I was about to go out on stage, and I found out that my mother had passed,” Nicole told the outlet. “I went right back to my room in Venice, was getting into bed, and I was completely devastated.”

Nicole added that as she digested the sad news at the time, she thought to herself, “‘I’m not sure how I’m going to move forward or function now.’ She was so much a part of my existence.”

In September 2024, the Big Little Lies actress left Venice early to make her way home to Australia after learning of Janelle’s death.

Speaking to Variety on Saturday, Nicole also described her “harrowing” attempt to leave Venice in the middle of the night trying to return to her home country.

“I remember getting into a boat in the canal, literally at night, trying to find my way to the airport, and then turning around going, ‘I can’t even do this,’” she said. “Then I went back to bed. And I was alone. My husband wasn’t there, my children weren’t there. I was there to win an award, which should’ve been a beautiful thing. That there is the contrast of life.” (Nicole was married to Keith Urban at the time, with whom she shares daughters Sunday Rose, 17, and Faith Margaret, 14. Nicole and Urban finalized their divorce in January.)

Janelle and Nicole Kidman. (Photo by James D. Morgan/Getty Images)

At the time, Babygirl director Halina Reijn confirmed Janelle’s death as she read a statement on behalf of Nicole during a Venice International Film Festival panel.

“Today I arrived in Venice to find out shortly after, that my beautiful, brave mother Janelle Ann Kidman has just passed,” Reijn, 50, read on Nicole’s behalf. “I am in shock and I have to go to my family, but this award is for her, she shaped me, she guided me and she made me.”

The statement continued, “I am beyond grateful that I get to say her name to all of you through Halina, the collision of life and art is heart-breaking, and my heart is broken.”

Less than a week after their mother’s death, Nicole and her sister Antonia, 55, took to Instagram to share a joint post thanking friends and fans for their condolences and well wishes.

“My sister and I along with our family want to thank you for the outpouring of love and kindness we have felt this week,” Nicole and Antonia wrote. “Every message we have received from those who loved and admired our Mother has meant more to us than we will ever be able to express. Thank you from our whole family for respecting our privacy as we take care of each other ❤️”

Prime Video’s Near-Perfect Action Hit Is Exactly What John Wick’s ‘Ballerina’ Should Have Been

Having clearly appealed to fans across demographics, a new Prime Video movie is proving to be a major hit for the streamer amid much bigger titles. It was released in the wake of star-driven tentpoles such as The Wrecking Crew, led by Jason Momoa and Dave Bautista, and Mercy, the sci-fi mystery starring Chris Pratt and Rebecca Ferguson. Both films dominated the Prime Video streaming charts for several weeks before the all-female action movie came out of nowhere to take the number one spot. It remains one of the top 10 movies on the global Prime Video leaderboard, and recently passed a major milestone.

The movie, directed by Vicky Jewson, features a quintet of young women as ballerinas who must defend themselves against a sinister adversary played by Uma Thurman. The five protagonists are played by Lana Condor, the star of Netflix’s To All the Boys trilogy; Iris Apatow, who played a supporting role in the Netflix series Love; Millicent Simmonds, who starred in A Quiet Place and its sequel; Maddie Ziegler, who rose to fame after appearing in a couple of Sia music videos; and Avantika, who played a supporting role in the Mean Girls remake.

Here’s the Action Movie That’s Ruling Prime Video’s Streaming Charts

The movie in question is Pretty Lethal. It premiered on Prime Video on March 25 and, according to FlixPatrol, has spent more than 20 days on the streamer’s top 10 charts. Pretty Lethal received mixed reviews and is now sitting at a 56% score on Rotten Tomatoes. The aggregator website’s consensus reads, “Starting off with a fun hook and diluting it with a plethora of clichés, Pretty Lethal doesn’t reach its full operatic potential but doles out enough balletic action to remain reasonably en pointe.” In his review, Collider’s Ross Bonaime praised the film’s action sequences and noted its similarity to other movies produced by David Leitch, co-director of the original John Wick. He wrote, “In one particularly inspired choice, these ballerinas decide to stick a razor blade between their toes and utilize their dance moves to fight off their attackers. Many of these fights are blunt and full of big, wild moments that mostly carry the film, despite its fairly weak narrative.” Stay tuned to Collider for more updates.

- Release Date: March 25, 2026
- Runtime: 88 minutes
- Director: Vicky Jewson
- Writers: Kate Freund
- Producers: Kelly McCormick, Mike Karz, Piers Tempest, Bill Bindley

10 Best Albums of the 1980s, Ranked

A real variety of music came out in the 1980s, which makes it difficult to assess what the best of that decade even was, but there’s no harm in trying. Actually, there’s a little harm in trying. People might be a bit unhappy, and there’s some personal bias here. If you want to have your own semi-biased and semi-objective stab at naming the 10 best albums of the 1980s, go for it.

A few of the albums below are among the most popular of all time, and deservedly so, while others are a little more underrated, or perhaps classifiable as cult classics (if that term applies to the world of music). Also, yes, like, three of these albums had songs that were prominently used in Stranger Things. Stranger Things is not the reason those albums are here. But it’s being acknowledged right out of the gate, and no more, once the intro’s over. Which it is… right about… now.

10

’16 Lovers Lane’ (1988)

The Go-Betweens

Things were not so good for The Go-Betweens in 1988, thanks to the tensions that often seem to come about from just being in a band for more than a few years, and also some romance-related drama, particularly because members of the band were – or had been – romantically linked. It wasn’t quite as infamously messy as Fleetwood Mac around the time of Rumours, but like that album, heavy feelings may have been put into music… specifically, the music heard throughout 16 Lovers Lane.

It was the final Go-Betweens album done as a full band, and is easily the best of the bunch. 16 Lovers Lane is incredible throughout, as far as the lyrics are concerned, and then musically, everything is catchy and immediate without being overly simplistic. It feels ahead of its time, as far as alternative (or almost even indie) rock goes, and maybe that’s why it wasn’t hugely successful upon release, and needed some time before people really started to recognize how borderline-perfect it was.

9

‘Master of Puppets’ (1986)

Metallica

Resist them if you want, because Metallica are kind of to metal what U2 are to rock… well, maybe. Both bands were at their peak in the 1980s, and both became so popular that being a detractor of either is now kind of cool, especially because members of both bands are sometimes outspoken and a bit much. But… the music. It comes back to the music. And also, sorry to U2. The Joshua Tree was right on the cusp of being here, like at #11 or #12. That’s the only reason there’s been a big old U2 tangent.

As for Master of Puppets, it’s the best Metallica album, and there’s even an argument to be made that it’s the best metal album of all time. It certainly feels as though it could be the most approachable, because you mightn’t even usually like this kind of metal or hard rock that much, but still find plenty to appreciate here. It rocks. It’s an album that rocks. What more do you want?

8

‘Soul Mining’ (1983)

The The

There is an almost uncomfortable amount of introspection, self-doubt, and anxiety throughout Soul Mining, which was the debut album of a band somewhat frustratingly called The The. The The is sort of just Matt Johnson, though, and Soul Mining remains the greatest collection of songs he put out. But the struggles explored do have to be emphasized, since even the album’s sunniest song, “This Is the Day,” is one of those songs that’s got an energetic and possibly hopeful sound, but the lyrics get more cynical – maybe even more sarcastic – the more you think about them.

“Uncertain Smile” is also a highlight, as the centerpiece of the album (quite literally, being the fourth of seven tracks), with the piano outro being especially memorable. Elsewhere, Johnson pulls from the Bruce Springsteen circa-Born to Run playbook of having a perfect opening track and then an ideal – and epic-length – closing track, to really make a strong first and final impression (with Soul Mining, it kicks off with “I’ve Been Waitin’ for Tomorrow (All of My Life),” and ends with the appropriately named “Giant,” which runs for almost 10 minutes).

7

‘The Queen Is Dead’ (1986)

The Smiths

There were only four proper studio albums released by The Smiths during their rather short time together as a band, and of those, the third, The Queen Is Dead, is the greatest. It’s boring to say that, but the consistency here is undeniable, as is the fact that it contains so many of the band’s greatest songs (including the title track, “I Know It’s Over,” “Bigmouth Strikes Again,” and “There Is a Light That Never Goes Out”).

You also know The Queen Is Dead is good because it endures, even though Morrissey (the vocalist and lyricist of The Smiths) seems increasingly keen to be as polarizing as possible in his post-Smiths endeavors. It helps that there’s more than just Morrissey to appreciate on an album by The Smiths, and his lyrics and voice (as they were, back in the 1980s) were undeniably compelling and unique.

6

‘Hats’ (1989)

The Blue Nile

One more potentially niche album to put here alongside 16 Lovers Lane and Soul Mining, but Hats really is something special, and the way it also sounds so distinctly 1980s makes it easy to put here. It’s synth-heavy, but also a good deal more mellow than much of the full-on synth-pop that was popular throughout the 1980s, using that sort of instrumentation in a low-key and atmospheric fashion.

The Blue Nile did this, to some extent, on several other albums, but never quite as memorably as was done on Hats. Without visuals, you do feel like you’ve sat through some kind of movie through the music alone, and an album being able to create that sensation is remarkably impressive. It’s an undeniably beautiful album, and further, one that’s beautiful in a singular way, so it’s certainly worth celebrating.

5

‘Thriller’ (1982)

Michael Jackson

There is a song on Thriller called “The Girl Is Mine,” a duet with Paul McCartney, that might well be the worst song to appear on an otherwise fantastic album. It is agonizingly corny. And, sure, there are other songs on Thriller that get a bit hammy and more than a little over-the-top, but not to such an eye-rolling extent. If it wasn’t on the album, then this album would be placed even higher.

Maybe it speaks to the quality of everything else that Thriller is still right up there, and very much a classic of its decade (and of all time, really) regardless. Of its nine songs, seven were released as singles, and many of those singles are among the most recognizable songs of the 1980s, with the music videos for a bunch of them certainly helping. One of the non-single songs, though, shouldn’t be overlooked: “Baby Be Mine,” the second track on the album, which is honestly kind of a banger.

4

‘Remain in Light’ (1980)

Talking Heads

Talking Heads released their first albums in the 1970s, and they were pretty great, but the band’s best single album, Remain in Light, came out right at the start of the 1980s. For what it’s worth, the band’s most popular album, Speaking in Tongues, came out a few years later (and it does have “This Must Be the Place (Naive Melody)” on it, which could be the band’s very best song), but Remain in Light is still the strongest.

It’s one of those middle-ground albums you can look back on and appreciate in hindsight, being a marriage of the slightly weirder stuff Talking Heads were doing in the late 1970s with the (slightly) increased emphasis on pop/rock later in the 1980s. You’ve got a balance here, yet even then, Remain in Light doesn’t sound quite like any other Talking Heads album, which might make it more worthy of being described as a “lightning in a bottle” kind of album.

3

‘Disintegration’ (1989)

The Cure

There are earlier albums by The Cure that could be called “rock,” but Disintegration feels like the band drifting away from that genre to a greater extent than they had previously, and for the better. Not that there aren’t some more energetic songs on Disintegration, but many of them are more patiently paced and drawn-out, which can be seen when you look at the album’s length of 72 minutes, and the fact that it houses 12 tracks… so, the average track length is about six minutes.

You really don’t mind, though, because of what is done across the length of some of these longer songs. “Pictures of You,” the second song on the album, is particularly impressive, and probably demonstrates, strongest of all, what the band’s going for with many of the songs here. It’s also an atmospherically unique and distinctly moving album, the latter so in ways that are admittedly a little difficult to put into words.

2

‘Hounds of Love’ (1985)

Kate Bush

Yes, it’s the album with “Running Up That Hill” on it, and sure, it’s probably the best song on the album, and it comes first, so you might be worried about the rest of Hounds of Love. Well, the pace and quality are maintained. The remaining 11 tracks on Kate Bush’s greatest overall album are also phenomenal, with special mention to “Cloudbusting,” since it truly deserves to be regarded and praised alongside “Running Up That Hill” and “Wuthering Heights.”

There is much more to Kate Bush than Hounds of Love, and if you like her music being a little quirkier or experimental, maybe you’d prefer something that sounds a bit less immediate and punchy. Then again, Hounds of Love has the art pop dominate the first half of the album, and then the second half does go into more experimental and out-there territory, making Hounds of Love feel a bit like listening to two amazing (albeit quite short) albums back to back.

1

‘Purple Rain’ (1984)

Prince and the Revolution

The placement of Prince over Michael Jackson on a ranking like this might lose you, but if it has, then it’s better you’ve been lost right near the end of the ranking than closer to the start. Silver lining to everything. But also, come on. It’s Purple Rain. It’s nine absolutely perfect songs that could’ve all been singles on their own (hell, a pretty impressive five of them were), and there are no weak tracks here; no corny duet with a former Beatle or anything of the sort.

And yes, Purple Rain is technically a soundtrack album, but in that case, it’s probably the best soundtrack ever. Purple Rain the movie is fine, and made a little finer because you hear the songs from Purple Rain (the album) throughout it, but the album is absolutely where it’s at. The album is Purple Rain. And Purple Rain is untouchable. It does also have to be noted that Prince was on fire throughout the whole of the 1980s, and albums like 1999 (1982) and Sign o’ the Times (1987) also deserve to be considered among the greatest of the decade. Still, nothing is as perfect as Purple Rain. In just under three-quarters of an hour, it lays out everything great about Prince, thoroughly laying bare why he was considered such a legend.

Purple Rain

- Release Date: July 27, 1984
- Runtime: 111 minutes
- Director: Albert Magnoli
- Writers: Albert Magnoli, William Blinn
- Apollonia Kotero as Apollonia
