Tech

BYD might have just solved the worst part of owning an EV

Electric vehicles are now common on the road, but charging remains one of the biggest friction points. Even when you find a fast charger, stopping can easily add 30 minutes or more to a trip, which makes long-distance travel feel less convenient than refueling a gas car.

At BYD’s charging facility in Beijing, the company is already demonstrating a system that aims to remove that delay. Vehicles are pulling in, plugging in, and charging using BYD’s second-generation Blade Battery and flash charging setup, giving a clearer picture of how the technology works outside a controlled prototype environment.

Charging speeds are being pushed far beyond current standards

BYD’s pitch is centered on how quickly usable range can be added rather than how fast a battery can reach full charge. The company describes the experience in terms of a short stop, suggesting that a vehicle could gain a significant amount of range in the time it takes to grab a coffee.

The charging setup reflects that approach. The cable is suspended from an overhead rail instead of resting on the ground, which makes it easier to handle and allows it to move freely based on the position of the vehicle. It also supports connections from either side, which reduces the need to reposition the car in a busy charging area.

The battery is where most of the change is happening

While the charger itself draws attention, BYD is positioning the second generation Blade Battery as the core of the system. The company says the battery has been redesigned to handle higher charging speeds while addressing common bottlenecks such as heat buildup and performance in low temperatures.

According to BYD, the system can charge from 10 percent to 97 percent in around 12 minutes even at temperatures as low as minus 30 degrees Celsius. The company also states that the battery passes simultaneous nail penetration and charging tests, which are intended to simulate severe failure conditions.

How it compares to current fast charging

Most widely available fast chargers today operate at around 350 kilowatts, while some newer vehicles can reach closer to 500 kilowatts under peak conditions. Even in those cases, charging from 10 percent to 80 percent typically takes between 20 and 30 minutes.

BYD says its flash charging system can deliver up to 1,500 kilowatts through a single connector, which would place it well beyond current charging infrastructure. Under those conditions, the company claims the system can move from 10 percent to 70 percent in about five minutes and up to 97 percent in roughly nine minutes.
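As a rough sanity check on these figures, the average power implied by a charging claim is the energy added divided by the time taken. The sketch below assumes a hypothetical 80 kWh pack, since BYD's actual pack capacity is not given here, and the function name is illustrative only.

```python
def average_charging_power_kw(capacity_kwh, start_pct, end_pct, minutes):
    """Average power (kW) needed to move a pack between two charge levels."""
    # Energy added is the share of the pack that gets filled.
    energy_kwh = capacity_kwh * (end_pct - start_pct) / 100
    # Convert minutes to hours to get kWh per hour, i.e. kW.
    return energy_kwh * 60 / minutes


# Hypothetical capacity for illustration; not a figure from BYD.
ASSUMED_CAPACITY_KWH = 80

five_minute_stop = average_charging_power_kw(ASSUMED_CAPACITY_KWH, 10, 70, 5)
nine_minute_stop = average_charging_power_kw(ASSUMED_CAPACITY_KWH, 10, 97, 9)

print(f"10% to 70% in 5 min: {five_minute_stop:.0f} kW average")
print(f"10% to 97% in 9 min: {nine_minute_stop:.0f} kW average")
```

Under that assumed capacity, the five-minute claim works out to about 576 kW on average and the nine-minute claim to about 464 kW. Both sit well below the 1,500 kW peak, which would be consistent with charging power tapering as the battery fills.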

This is already in use, with plans to scale quickly

BYD is not presenting the system at its Beijing site as a prototype: vehicles are already using the charging stations there, which gives a more practical indication of how the technology performs outside a controlled demonstration.

BYD positions this as an early stage of deployment and says it plans to build up to 20,000 of these charging stations by the end of 2026, with the network expected to expand beyond China as part of a broader global rollout. That scale will ultimately determine whether the system remains limited to specific locations or becomes part of everyday charging infrastructure.

Tech

This S’porean hand-sculpts ‘bootleg’ toys costing up to S$255

After two decades in tech, he now makes tiny collectible figures of pop culture characters

After spending two decades climbing the corporate ladder, including a stint at a FAANG company, 40-year-old Singaporean GT has found himself on a very different path.

The former tech worker has turned a hobby into a small business, making tiny, handmade action figures inspired by pop culture characters, from the Glico man to even local TV icon Phua Chu Kang.

Today, GT runs Bird Hand Toys from his home in Australia, where he sculpts and assembles 3.75-inch “bootleg” figurines based on movies, TV shows, and nostalgic cultural references.

A hobby that found him

GT’s path into toy-making was anything but deliberate.

Born and raised in Singapore and educated in Australia, he spent 15 to 17 years moving through corporate roles before eventually landing at a major global tech firm. By then, he and his wife had already built an ideal Singaporean life: stable jobs, a home, a dog, and a strong network of family and friends.

But GT also held Australian permanent residency, a document he had kept for years. Eventually, the chance to relocate and build a life in Australia became too compelling to ignore.

So in 2021, at the height of the COVID-19 pandemic, GT and his wife left their jobs, sold the car, and packed up for the move. The airports were ghostly quiet, with fewer than 10 people on their flight. Not exactly a glamorous beginning, but still, it was the start of a new chapter in their lives.

Once settled in Australia, GT returned to corporate work and began looking for a creative outlet to unwind from the pressures of office life.

One day, while scrolling through Instagram, he came across a community of toy makers creating miniature action figures. Intrigued, he decided to try making some himself sometime in 2022.

GT taught himself through trial and error and picked up the hobby quickly.

“There’s very little information out there, so a lot of the early process involved a lot of problem solving to figure out every step of the process, from the size of the card backing, types of glue and paper to use, plastic blister packaging supplier and how to make a bootleg toy.”

In those early days, GT’s work drew heavily from the films, TV shows, and pop culture he grew up with in the 20th century. The first few figures he made were enough to suggest he might actually be onto something.

The project took a more concrete turn when he won an eBay bid for more than 100 vintage Star Wars figures for US$180 (S$230), which he used as base models for his creations.

The making of a bootleg action figurine

Bird Hand Toys sits within a niche creative space in what GT calls the “bootleg toy space”—unofficial action figures of movie and TV characters that never got to the commercial production stage.

One of the earliest pieces he made was a miniature version of American artist Jackson Pollock, complete with a tiny paintbrush, paint splatters, a checked scarf and a cigarette. It took about a month to complete the action figure.

In the early days, GT’s process was relatively simple: he would source an existing action figure and then sculpt over it to transform it into an entirely new character.

However, he quickly realised this approach came with limitations.

First, there was a limited number of existing action figures he could get hold of. Second, the approach was not economical, as each base figure cost anywhere from S$30 to S$50. As a result, it became difficult to scale or fulfil bulk orders.

In one instance, a chef in the US commissioned 15 of a specific figure. GT recalled having to search across multiple stores in New South Wales just to secure enough base figures to complete the order.

While he still occasionally uses his original method, GT has moved into designing and 3D printing his base figures.

He said this change removed many of the earlier constraints and significantly lowered costs, allowing him to produce multiple figures more efficiently. It also gave him greater creative freedom, enabling more detailed sculpting and refinement around each base model, which in turn improved the overall quality of his work.

Each action figure can take anywhere from a day to two weeks to complete, depending on its complexity.

While the materials to create them may appear simple—basic tools such as a base figure, a Dremel, sculpting putty and paint—the process is far from mechanical.

GT treats each piece as a design exercise, requiring not just handcraft but an eye for composition. Each design has to reflect the character’s cultural context while still working as a cohesive visual piece that can sit on a wall or shelf.

All of his figurines are 3.75 inches tall. While it might seem like a random choice, the size was popularised by vintage Star Wars action figures, and for GT, it also came down to practical reasons.

“I guess they are this size because of the eBay lot, which started me in this scale, and everything else, like the blister packaging and card backing, has been configured for this scale, so I’ve kept to it,” said GT.

“Also, I find this size to be the most appealing as it has that nostalgic G.I. Joe and Star Wars feel to it.”

Gaining traction & recognition

GT’s figurines start from S$70, and the most expensive piece he has sold went for US$200 (S$255). A Singaporean buyer also recently spent nearly S$700 on his figurines.

At the start, GT focused on international characters. It was only this year that he launched a Singapore/Asia-focused page because he thought it would be “interesting to reimagine classic 90s Singapore TV characters and moments into action figures.”

Through this process, he found that a lot of the old logos and pictures of Singaporean pop culture figures were low resolution since they were from “way back.”

“I felt it would be meaningful to give them the love they deserve to have a fresh, sharpened logo and images so they don’t fade with time,” he said.

“Also, people do find these very nostalgic, and the format of it as a semi-art piece that they can own is something that really works.”

According to the 40-year-old, the Asia page took off very quickly in its first month.

He shared that he has been receiving a lot of positive feedback and encouragement for what he is doing, including from singer-songwriter Inch Chua, whom he made a figurine of.

Apart from Chua, his celebrity-inspired figures have also found their way to British DJ Fatboy Slim and Singapore actor Gurmit Singh. GT has also taken on commissioned work, including a custom piece for DJ Tim Oh, complete with miniature accessories based on his interests.

Part of this traction has also come from retail exposure. Since March, his designs have been stocked at Singapore fashion store The Corner Shop (杂货店), a collaboration that went viral online.

Beyond retail, most of GT’s sales still come from online channels. Through direct commissions and orders, his handmade figurines have made their way to homes across the US, Japan, and Thailand.

Focusing on Bird Hand Toys full time

All this while, Bird Hand Toys had remained a side hustle for GT. But after being “recently laid off” from the FAANG company he worked at in Australia, he decided to make it his full-time focus.

“This is the first time I’ve had the opportunity to fully pursue a more creative path, and it is an opportunity to use my time towards a craft I’m passionate about,” he explained.

The challenge, of course, as it has been from the start, is running the business as a one-man show, from responding to customer messages on Instagram to creating new content and fulfilling commission orders.

Looking ahead, GT wants to continue honing his craft and get even better at it. He does have one goal that’s equal parts humble and quietly ambitious.

“It would be nice to be on holiday and find my stuff on a shelf in a store now and then.”

Featured Image Credit: Bird Hand Toys

Tech

New Linux ‘Copy Fail’ Vulnerability Enables Root Access On Major Distros

A newly disclosed Linux kernel flaw dubbed “Copy Fail” can let a local, unprivileged attacker gain root access on major Linux distributions, with researchers claiming the bug affects kernels shipped since 2017. “The POC exploit works out of the box today, but a future version that can escape from containers like Docker is promised soon,” writes Slashdot reader tylerni7. “Technical details are available here.”

Slashdot reader BrianFagioli shares a report from NERDS.xyz:

A newly disclosed Linux kernel vulnerability called Copy Fail (CVE-2026-31431) allows an unprivileged user to gain root access using a tiny 732-byte script, and it works with unsettling consistency across major distributions. Unlike older exploits that relied on race conditions or fragile timing, this one is a straight-line logic flaw in the kernel’s crypto subsystem. It abuses AF_ALG sockets and splice to overwrite a few bytes in the page cache of a target file, such as /usr/bin/su. Because the kernel executes from the page cache, not directly from disk, the attacker can inject code into a setuid binary in memory and immediately escalate privileges.

What makes this especially concerning is how quiet it is. The file on disk remains unchanged, so standard integrity checks see nothing wrong, while the in-memory version has already been tampered with. The same primitive can also cross container boundaries since the page cache is shared, raising the stakes for multi-tenant environments and Kubernetes nodes. The underlying issue traces back to an in-place optimization added years ago, now being rolled back as part of the fix. Until patched kernels are widely deployed, this is one of those bugs that feels less like a theoretical risk and more like a practical, reliable path to full system compromise.

Tech

US Senators Ban Themselves From Prediction Markets Trading

The U.S. Senate unanimously passed a rule banning senators from trading on prediction markets effective immediately. CNBC reports: The move came amid rising concern about insider trading on prediction market platforms such as Kalshi and Polymarket, and about event contracts that can involve death or violence. On April 22, Kalshi said it had suspended and fined one U.S. Senate candidate and two candidates for the House of Representatives for political insider trading on their own campaigns.

Earlier on Thursday, a group of Democratic members of Congress called on the Commodity Futures Trading Commission to issue a rule “that prevents insider trading and corruption in the market and prohibits event contracts on the outcome of elections, war and military actions in the U.S. or abroad, sports, and government actions without a valid economic hedging interest.” Kalshi and Polymarket both praised the Senate’s action. “I applaud the Senate for passing this resolution to ban Senators and their offices from trading on prediction markets,” Kalshi CEO Tarek Mansour wrote in a post on X. “Kalshi already proactively blocks members of congress and enforces against insider trading. This is a great step to increase trust in our markets by making it an industry standard,” Mansour said. “Now, let’s pass this in the House!”

Polymarket, in its own post on X, said, “We’re in full support of this. Our Rulebook & Terms of Service already prohibit such conduct, but codifying this into law is a step forward for the industry. Happy to help move this forward however we can.”

Tech

AI SEO for the Next Generation of Search: Strategies & Benefits

Search is evolving quickly, and traditional strategies alone are no longer enough to stay competitive. Today, users are increasingly turning to AI-powered platforms to find answers, discover brands, and make decisions. AI SEO services are designed to help businesses adapt to this shift and remain visible in this new search landscape.

Blueprint Digital focuses on helping brands appear not only in traditional search engine results but also in AI-generated responses across platforms like ChatGPT, Google Gemini, and Perplexity.

What Are AI SEO Services?

AI SEO services go beyond standard keyword optimization. They focus on making your content accessible, relevant, and authoritative enough to be included in AI-generated answers.

This approach combines several elements, including:

- Technical optimization to ensure content is easy for AI systems to interpret

- Structured content that clearly answers user intent

- Authority building through high-quality content and backlinks

- Audience research to align with how people ask questions in AI tools

The goal is to position your brand as a trusted source that AI platforms can reference and recommend.

Why AI Search Matters

The way people search online is changing. Instead of scrolling through pages of links, users are increasingly relying on direct answers generated by AI tools.

Recent data shows that AI is already influencing a significant portion of search behavior. For example, a large percentage of users have interacted with AI tools recently, and many search queries now trigger AI-generated summaries.

This shift means businesses need to optimize not just for rankings, but for visibility within these AI responses.

A Strategic Approach to AI-Driven SEO

Effective AI SEO services require a combination of strategy, content, and technical expertise. Blueprint Digital takes a comprehensive approach that aligns with how modern search works.

This typically includes:

- Identifying topics and questions your audience is asking

- Creating content that directly addresses those queries

- Structuring content for clarity and easy extraction by AI systems

- Strengthening domain authority to increase trust signals

By focusing on these areas, businesses can improve their chances of being featured in AI-driven search results.
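One common way to structure question-and-answer content for machine extraction is schema.org markup. The sketch below builds a schema.org `FAQPage` document as JSON-LD. It is an illustration only: the article does not name a specific markup format, the function name and the sample question are hypothetical, and whether a given AI platform reads this markup is an assumption.

```python
import json


def build_faq_jsonld(qa_pairs):
    """Return a schema.org FAQPage document as a JSON-LD string."""
    document = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(document, indent=2)


# Hypothetical question and answer, for illustration only.
faq_jsonld = build_faq_jsonld([
    (
        "What are AI SEO services?",
        "Services that help content appear in AI-generated answers.",
    ),
])
print(faq_jsonld)
```

The resulting string would typically be embedded in a page inside a `script` element of type `application/ld+json`.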

From Visibility to Conversions

Being mentioned in AI results is valuable, but it is only part of the equation. The ultimate goal is to turn that visibility into measurable outcomes.

AI SEO is designed to:

- Increase qualified traffic

- Improve engagement with your content

- Generate leads and conversions

- Strengthen overall brand authority

By aligning SEO efforts with business goals, companies can ensure their strategy delivers real results, not just impressions.

Who Can Benefit from AI SEO?

AI-focused optimization is especially useful for businesses that rely on online visibility to generate leads or sales. This includes:

- Service-based businesses

- eCommerce brands

- B2B companies

- Local and national organizations

As AI continues to influence how people search, adopting this approach early can provide a competitive advantage.

Preparing for the Future of Search

Search is no longer limited to traditional engines. AI platforms are becoming a primary way people discover information, and businesses need to adapt accordingly.

By investing in AI SEO, companies can position themselves for long-term success in a rapidly changing digital environment. With the right strategy, it becomes possible to stay visible, relevant, and competitive as search continues to evolve.

Tech

Quality Concerns Remain as States Invest More Than Ever in Preschool Programs

More four-year-olds are enrolled in state-funded preschools than ever before, but the quality and availability of preschool programs have experts concerned about creating a system of haves and have-nots.

“If providing high-quality preschool education to all 3- and 4-year-olds were a race, some states are nearing the finish line, others have stumbled and fallen behind, and a few have yet to leave the starting line,” an annual report from the National Institute for Early Education Research states.

Because funding and quality vary by state, access to preschool still varies widely for families, depending on whether their state is investing.

The report, titled “State of Preschool: 2025 Yearbook,” breaks down the annual spending, quality and enrollment numbers across early childhood education programs in the U.S. The latest edition found that states hit an all-time high for both spending and enrollment, but the quality of the programs remains a concern.

“We’re trying to make sure states are also thinking about quality,” Allison Friedman-Krauss, an associate research professor at NIEER, says. “Right now, it’s more about access. And we don’t want them to forget about quality.”

More Funding – But Not Always More Quality

The report found funding peaked at nearly $14.4 billion, though that was largely driven by a handful of states: $4.1 billion in California alone, along with $1.2 billion in New Jersey and $1 billion in New York. Those three states accounted for nearly half (45 percent) of all state pre-K spending.
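The three-state share can be checked from the figures quoted above. This is a minimal sketch using the rounded dollar amounts in this article, not NIEER's underlying data, and the variable and function names are illustrative.

```python
# State pre-K spending in billions of dollars, as rounded in the article.
TOTAL_SPENDING_BILLIONS = 14.4
TOP_STATES_BILLIONS = {
    "California": 4.1,
    "New Jersey": 1.2,
    "New York": 1.0,
}


def share_of_total_pct(amounts, total):
    """Percentage of the total accounted for by the given amounts."""
    return sum(amounts) / total * 100


top_three_share = share_of_total_pct(
    TOP_STATES_BILLIONS.values(), TOTAL_SPENDING_BILLIONS
)
print(f"Top three states: {top_three_share:.1f}% of state pre-K spending")
```

With these rounded inputs the share comes out at roughly 44 percent, close to the 45 percent the report cites. The small gap is presumably down to rounding in the quoted dollar figures.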

More than two dozen states also increased their preschool spending, which can go toward things like improving teacher-to-student ratios and improving teacher compensation, the latter of which has long been a concern.

While states still increased their spending on pre-K this year, the rate at which they are investing is slowing down. Adjusted for inflation, states spent an average of $45 more per child than in the 2023-2024 year. However, last year’s increase in spending was 16 times as large.

New Jersey, Oregon and the District of Columbia gave more than $15,000 in state funding per child enrolled in preschool. Six other states (California, Connecticut, Delaware, Michigan, New Mexico, and Washington) spent more than $10,000 per child enrolled in pre-K. Twenty-eight states overall spent more per child, adjusted for inflation, than in past years.

Seventeen states spent less on preschool in 2024-2025 than they did in 2023-2024, when adjusted for inflation. The researchers attributed the spending decline in part to overall state deficits and falling enrollment across many states.

However, that’s not always the case. New Jersey had a budget deficit but invested an additional $100 million into expanding preschool programs for all.

Pointing toward this, Steve Barnett, director of NIEER, argues that it’s all about state priorities: “That’s a conscious decision to say we’re going to spend less,” he says. “And you have to ask if the declining enrollment – even if not intentional – is a way to reduce spending [in the sector]. As opposed to, ‘Maybe we should work on getting parents to enroll their kid.’”

The boost in funding did not always correlate with better early childhood education programs. Only six states met all 10 of NIEER’s quality standards benchmarks, which include a maximum class size of 20 students, a requirement that teachers have bachelor’s degrees and a classroom ratio of at least one staff member for every 10 students.

Source: NIEER

States looking to enhance preschool quality should focus on class size and teacher pay, Barnett argues.

Teacher pay and class sizes account for most of the money, and once states have improved those, other metrics, like curriculum supports and health screenings, are easier to pay for later, he adds.

But changes won’t happen overnight.

“It does take time. You can’t just wave a magic wand and have classroom size and teachers’ pay magically fixed,” Barnett says.

NIEER’s Friedman-Krauss pointed to Alabama and Georgia as examples of states slowly but surely increasing preschool quality. Georgia hit all 10 quality benchmarks this year. Friedman-Krauss credits the improvement to a $97.6 million investment by the state, which helped lower class size from 22 to 20 and increased teacher pay.

“We make a big deal of it because it’s serving most of the 4-year-old [children] and hitting all the benchmarks,” Barnett says. “It’s a state that lost them and came back even stronger; that’s a good sign.”

Lion’s Share of Enrollment Only in a Few States

Enrollment, like funding, reached an all-time high nationally last year, with 1.8 million children enrolled during the 2024-2025 school year. But roughly half of that comes from four states: California, Texas, New York and Florida.

Notably, a dozen states had more than half of their four-year-olds in state-funded preschool programs, with the District of Columbia topping the list: 94 percent of four-year-olds there were enrolled in its program. California’s enrollment gains were buoyed in part by the state’s universal pre-K promise.

Source: NIEER

However, twenty states enrolled fewer preschoolers in 2024-2025 than the prior year. Some could blame the dip on declining birth rates. But when adjusted by population percentage, 21 states still saw a dip.

For some states, the enrollment decline was steep. Indeed, six states (Arizona, Florida, New York, Ohio, Oklahoma, and Wisconsin) decreased enrollment by more than 1,000 children.

Three-year-old students made up only 9 percent of enrollment across the nation, up from 5 percent a decade earlier. Some states are acting to counter this. For example, Illinois and New Jersey are both focusing on expanding preschool programs for three-year-olds, Friedman-Krauss says. However, she and Barnett expect three-year-olds to be brought into state-funded programs only slowly.

“I think there will be more attention paid to that group – how much more, that’s the hard part,” Barnett says. “Nine percent is better than when we started, but it’s very lumpy. It’s still 0 percent in lots of places.”

Tech

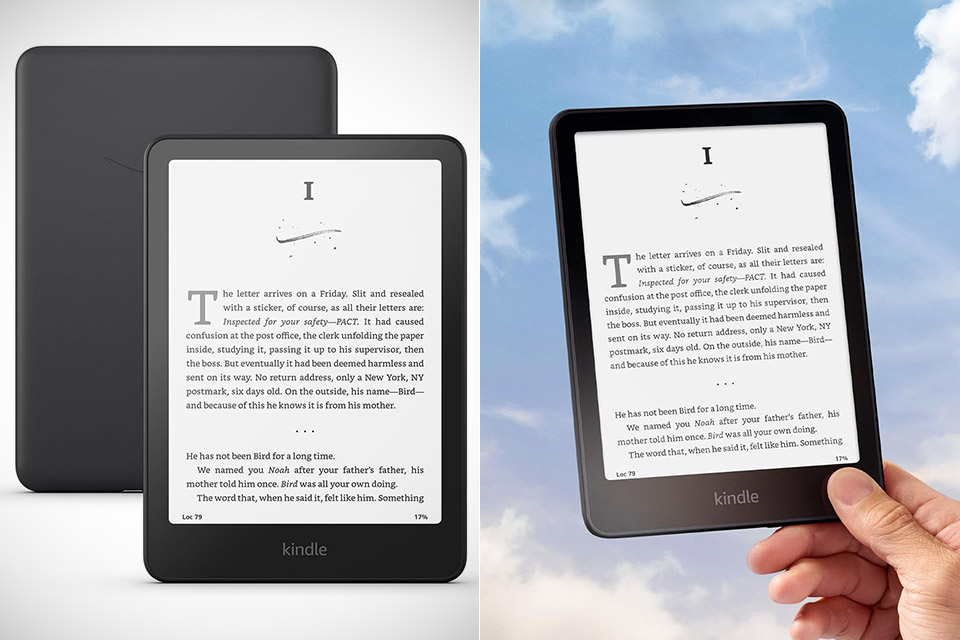

How the Kindle Paperwhite Makes Every Page Turn Feel Natural

Carrying a Kindle Paperwhite, priced at $134.99 (was $159.99), every day is a constant reminder of what makes this device a must-have for bookworms. The e-reader weighs only 7 oz, so it slips into a pocket or bag almost unnoticed.

To start, it tries to recreate the experience of turning real pages, and the difference from a regular book is like night and day. The new model has a 300 pixels-per-inch display, which outperforms its predecessor and keeps text crystal clear in direct sunlight, making it a lifesaver for readers like myself who love to read outside. The device’s front light also works well: it adjusts automatically to the illumination in the room you’re reading in, and a warmth setting shifts the light to a warmer color for late-night reading.

Pages turn 25% faster than on the previous version, and all menus appear instantly. The device is 20% faster, which is fairly impressive, especially for avid readers, who will not be bored or frustrated by the time it takes to turn a page. Touch response is also exceptionally fast, so it does not break the reader’s concentration. The battery provides 12 weeks of reading on a charge at 30 minutes per day with Wi-Fi and lighting off. A full recharge takes just 2.5 hours.
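The 12-week battery figure can be turned into total reading hours with simple arithmetic. The short sketch below does that conversion using only the numbers quoted above; the function name is illustrative.

```python
def total_reading_hours(weeks, minutes_per_day):
    """Total hours of reading implied by a weeks-long battery claim."""
    days = weeks * 7
    return days * minutes_per_day / 60


# Figures from the battery claim: 12 weeks at 30 minutes per day.
hours_per_charge = total_reading_hours(12, 30)
print(f"Reading time per charge: {hours_per_charge:.0f} hours")
```

In other words, the claim amounts to about 42 hours of reading per charge under the stated conditions.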

Among other advantages, IPX8 waterproofing means you can use it in the rain or in the bath without damage. It can withstand immersion in 2 meters of fresh water for an hour, so you can take it to the pool, the beach, or the bathtub. The Paperwhite also has plenty of storage: 16 GB of built-in memory holds thousands of books. A Wi-Fi connection lets you reach your library in a minute, and if you want to listen to an audiobook, simply turn on Bluetooth.

Tech

MAGA Is Confused About ‘Animal Farm’

If you read George Orwell’s classic political satire Animal Farm in seventh grade, you probably remember the basic contours of the plot: fed up with human rule, a group of well-intentioned barnyard animals set up their own egalitarian society, with disastrous results. Published in 1945, Animal Farm has a timeless (and, certainly, contemporarily relevant) message: It’s about how the impulse to retain power will always come at the expense of our basic morality.

That message, however, seems to have been lost on most MAGA influencers assigned the book in middle school (if they even read it at all). After their failure to cancel Barbie or the Wicked movies, conservatives have moved on to a new film adaptation of Animal Farm. (The animated film, which is directed by Lord of the Rings star Andy Serkis, opens May 1.)

The problem, however, is that they’ve failed to reach a consensus on what the actual message of Animal Farm is.

The right-wing outrage cycle over a movie featuring Seth Rogen making fart jokes appears to have been sparked by influencers like Emily Saves America and Riley Gaines, who recently posted the trailer for the film. In an April 28 X post, Gaines tweeted that the film was “incredibly well done. They do a perfect job of reminding viewers that Marxism always has and always will fail.” She hashtagged her tweet #AnimalFarmPartner, leading people to assume the post had been the result of a paid partnership between herself and Angel Studios, the Utah-based entertainment company distributing the film, which was also behind the faith-based blockbusters Sound of Freedom and The King of Kings.

Many on both the left and the right found Gaines’ tweet bizarre, in part because while Animal Farm is certainly a critique of Stalinism, it’s also very clearly not a full-throated endorsement of capitalist ideals. The human owner of the farm is a capitalist, and after he is overthrown, the power-hungry pigs mimic his behaviors, adopting human clothes and profiting off the labor of the other farm animals. The book is ultimately less a condemnation of specific systems of governance than a critique of mankind’s lust for power and blind adherence to ideology.

In the latest adaptation, Serkis also tweaked the plot by adding a greedy human character (voiced by Glenn Close) who wants to buy the farm, characterizing the film in USA Today as “about authoritarianism and power corrupting and our response to that”—a message that, in theory at least, would certainly resonate with 2026 audiences.

It clearly did not, however, resonate with many of Gaines’ ideological bedfellows, who pounced on her for being a Marxist shill. “Promoting communism is the new gay for pay,” right wing podcaster Tim Pool tweeted. Earlier this month, he posted that he had turned down an offer from Angel Studios to promote the film due to it being “pro communism and anti-capitalism.” The influencer Peachy Keenan also excoriated the film, calling it “retarded socialist propaganda.”

The inability to reach a consensus on the actual message of the new Animal Farm movie may very well be a reflection of its artistic merits, or lack thereof. (Indeed, the film currently has a 23 percent rating on Rotten Tomatoes.) But it’s also just generally a reflection of how little media literacy exists in our current information landscape—an issue that, in fairness, is far from specific to the right. Unless the moral messaging of a work of fiction is clearly and consistently telegraphed throughout, there seems to be a complete inability to accept ambiguity or contradiction, or to acknowledge that multiple ideas can be good or bad at the same time.

Though middle schoolers might be able to immediately grasp the takeaways from Animal Farm, it says something that high-profile political commentators can’t. In fairness, Orwell himself, who has been claimed by both the right and the left during his lifetime and beyond, probably would have appreciated the confusion his novel has wrought—even if he may not have appreciated Seth Rogen’s fart jokes.

Tech

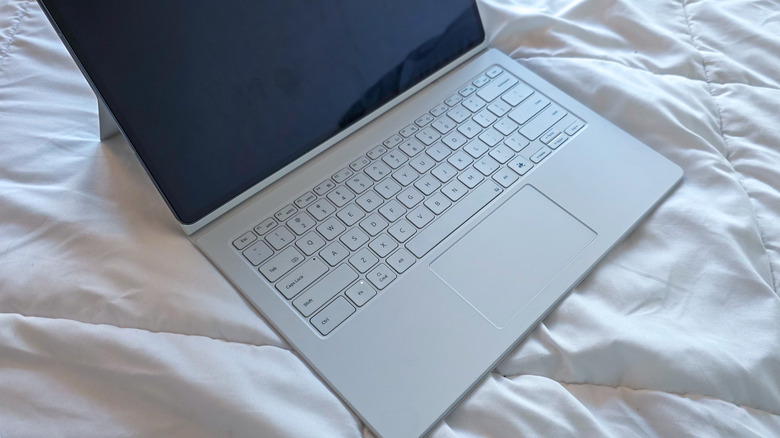

Can A Samsung Tablet Replace A Laptop?

The gap between a laptop and a tablet has never been smaller. In 2026, Apple is out here selling a laptop with a smartphone processor in it — the MacBook Neo — while also selling the iPad Pro, a tablet with a PC chip at its heart. The A18 Pro chip at the core of the Neo, the same chip which powered the iPhone 16 Pro, is more powerful than an Intel Core i7 processor from a decade ago. But while the iPad Pro may run laps around many laptops in sheer performance, what if you don’t want to be locked to the Apple ecosystem? Can a Samsung Galaxy Tab tablet replace your laptop?

No beating around the bush: a high-end Samsung tablet can easily replace a laptop for a large number of people. For the past two years, I’ve replaced my Windows laptop with a Samsung tablet. When I first embarked on what was, at the time, a bold experiment in my personal computing habits, I wrote that I was shocked at how capable Samsung tablets were but that there were still a few quirks. In 2026, I wouldn’t say all the kinks have been ironed out, but there are fewer of them with easier workarounds.

Crucially, I’m using a Galaxy Tab S10 Ultra, a beast of a tablet with a 14.5″ display and a MediaTek Dimensity 9300+ processor. I would strongly caution against trying to replace your laptop with one of Samsung’s budget tablets, as it simply won’t have the necessary power or display real estate. So, if you’re wondering whether your next computer should be a Samsung tablet, here are the pros and cons I’ve found after making the switch.

Samsung’s tablets are more laptop than ever

With the right accessories, Samsung’s most powerful tablets are easy laptop replacements. My current setup uses a Galaxy Tab S10 Ultra — a 14.5″ AMOLED display makes it bigger than some laptops, and much nicer to look at, too — paired with the official Book Cover Keyboard. Its trackpad and backlit keyboard snap magnetically into place and fold up like a laptop when not in use. Other times, I’ll opt for a mouse and a low-profile mechanical keyboard, bringing it closer to a desktop experience.

DeX, the built-in desktop mode, is Samsung’s secret sauce on the software side. Sure, you can use the Tab S10 Ultra in normal tablet mode, but with multi-monitor support and virtual desktops added in Samsung’s One UI 8.0 Android skin, DeX is now closer than ever to mimicking the functionality of a laptop without detracting from the Galaxy Tab’s strengths as a tablet.

Moreover, Samsung’s tablets are in a Goldilocks zone. No other device I own is so well-suited to both productivity and leisure. I can write articles like these with Google Docs, a web browser, and Slack open onscreen at once, and I can easily move from the desk to my bed if I want to kick back and enjoy an episode of “The Boys” using the tablet’s lavish display and shockingly good quad-firing speakers with Dolby Atmos support.

I’ve even had a blast gaming on my Galaxy Tab. Local titles like “Destiny: Rising” are a treat on the large screen, and I played through most of “Cyberpunk 2077” in the cloud through GeForce Now. It’s not the same as playing on my Windows gaming rig, but it’s remarkable that a device as thin as its own USB-C port can deliver these laptop-grade experiences.

Galaxy tablets still have some pain points compared to PCs

Not everyone can replace their laptop with a Samsung Galaxy Tab. If you’re a visual creative who often edits video, you’ll need to make do with mobile editors like LumaFusion or the endlessly buggy CapCut. That’s a non-starter for most who need pro-grade creative tools like Adobe Premiere Pro. Avid gamers are in a rough spot, too. Running mobile games on a 14.5″ tablet is ludicrous fun, but for AAA titles, you’ll have to rely on cloud streaming through a service like GeForce Now, which requires a constant Internet connection.

Even run-of-the-mill office work can be a slog if you’re not willing to adjust to Android’s limitations when figuring out how to turn your Android tablet into a laptop. If you use Microsoft Office 365 software like Word or Excel, you’ll find the Android apps are extremely limited. You’ll either need to run the web app versions in a browser or opt for something like Google Workspace which works natively. As a freelance writer, I switched from Word to Google Docs, and sync everything through Google Drive. That works perfectly for now, but if I want to switch to open-source solutions like LibreOffice, I’ll be back in a tough spot.

Still, these are all software limitations. The hardware itself is remarkably capable. In fact, I recently converted a decade-old laptop running an Intel Core i7 Kaby Lake processor to Fedora Linux and have been using it alongside my Galaxy Tab S10 Ultra. The laptop is no slouch, but the tablet still puts it to shame. As time goes on, you can only expect tablets to become even more performant. In the very near future, it may finally be time to redefine the boundaries between mobile and desktop hardware.

Tech

Good Luck Getting a Mac Mini for the Next ‘Several Months’

Apple CEO Tim Cook said on the company’s earnings call on Thursday that it could take “several months” to meet skyrocketing demand for the Mac Mini, the company’s compact but mighty, screen-free desktop computer. Cook’s remarks come after coders determined in recent months that the Mac Mini was the perfect machine for agentic AI tasks.

“On the Mac Mini and Mac Studio, both of these are amazing platforms for AI and agentic tools,” Cook said on the earnings call, in response to analyst questions. “And customer adoption of that is happening faster than we expected.”

The news comes amid another record-setting quarter for the company. iPhone sales came up shorter than expected, though demand for the iPhone 17 has been super high, and Apple’s subscription services business has continued to grow.

Apple faced supply constraints on both the iPhone and the Mac product line this quarter. iPhone shortages are being driven mostly by a limited supply of the advanced chips that power the phones. But as Cook made clear, at least two different factors are driving shortages in Apple’s Mac business: The rapid adoption of generative AI and unexpected demand for the company’s new, colorful, and more affordable MacBook Neo laptop.

Mac sales are typically a fraction of what iPhone sales are—$8.4 billion this quarter, compared to nearly $57 billion in sales of the iPhone—and the Mac Mini, specifically, is a fraction of that. But with the launch of OpenClaw earlier this year, an open-source AI tool, Mac Minis began flying off the shelves because they offer both enough power and a dedicated computing environment for agentic AI tasks.

Some eager customers have already been waiting months for their Mac Minis. MacRumors reported in early March that Apple had stopped selling a configuration of the computer that included 512 GB of memory. As of last week, the base model of Mac Mini was entirely sold out.

Cook, and his soon-to-be-successor John Ternus, also addressed Cook’s transition out of the CEO role later this year. Cook said on the earnings call that it’s the “right moment” to step into the executive chairman role for a “number of reasons,” including that Apple is well-positioned financially and that its upcoming product road map is “incredible.” He called Ternus a “person of remarkable character and a born leader.”

Ternus then joined the call for a minute to vouch for Cook as a business leader and to assure investors he’d take a similarly deliberate and thoughtful approach in leading the company. He, too, mentioned the company’s road map.

Both men were scant on details around this supposedly very exciting product road map, but hopefully, it includes more … road Macs.

Tech

DAIMON Robotics Wants to Give Robot Hands a Sense of Touch

This article is brought to you by DAIMON Robotics.

This April, Hong Kong-based DAIMON Robotics released Daimon-Infinity, which it describes as the largest omni-modal robotic dataset for physical AI, featuring high-resolution tactile sensing and spanning a wide range of tasks from folding laundry at home to manufacturing on factory assembly lines. The project is supported by the collaborative efforts of partners across China and the globe, including Google DeepMind, Northwestern University, and the National University of Singapore.

The move signals a key strategic initiative for DAIMON, a two-and-a-half-year-old company known for its advanced tactile sensor hardware, most notably a monochromatic, vision-based tactile sensor that packs over 110,000 effective sensing units into a fingertip-sized module. Drawing on its high-resolution tactile sensing technology and a distributed out-of-lab collection network capable of generating millions of hours of data annually, DAIMON is building large-scale robot manipulation datasets that include vast amounts of tactile sensing data. To accelerate the real-world deployment of embodied AI, the company has also open-sourced 10,000 hours of its data.

Prof. Michael Yu Wang, co-founder and chief scientist at DAIMON Robotics, has pioneered Vision-Tactile-Language-Action (VTLA) architecture, elevating the tactile to a modality on par with vision. DAIMON Robotics

Behind the strategy is Prof. Michael Yu Wang, DAIMON’s co-founder and chief scientist. Prof. Wang earned his PhD at Carnegie Mellon — studying manipulation under Matt Mason — and went on to found the Robotics Institute at the Hong Kong University of Science and Technology. An IEEE Fellow and former Editor-in-Chief of IEEE Transactions on Automation Science and Engineering, he has spent roughly four decades in the field. His objective is to address the “insensitivity” of robot manipulation, which in practice relies on the dominant Vision-Language-Action (VLA) model and so lacks a sense of touch. He and his team have pioneered Vision-Tactile-Language-Action (VTLA) architecture, elevating the tactile to a modality on par with vision.

We spoke with Prof. Wang about how tactile feedback aims to change dexterous manipulation, how the dataset initiative is expected to improve our understanding of robotic hands in natural environments, and where — from hotels to convenience stores in China — he sees touch-enabled robots making their first real-world inroads.

Daimon-Infinity is the world’s largest omni-modal dataset for Physical AI, featuring million-hour scale multimodal data, ultra-high-res tactile feedback, data from 80+ real scenarios and 2,000+ human skills, and more. DAIMON Robotics

The Dataset Initiative

This month, DAIMON Robotics released the largest and most comprehensive robotic manipulation dataset with multiple leading academic institutions and enterprises. Why release the dataset now, rather than continuing to focus on product development? What impact will this have on the embodied intelligence industry?

DAIMON Robotics has been around for almost two and a half years. We have been committed to developing high-resolution, multimodal tactile sensing devices to perceive the interaction between a robot’s hand (particularly its fingertips) and objects. Our devices have become quite robust. They are now accepted and used by a large segment of users, including academic and research institutes as well as leading humanoid robotics companies.

As embodied AI continues to advance, the critical role of data has become clearer. Data scarcity remains a primary bottleneck in robot learning, particularly the lack of physical interaction data, which is essential for robots to operate effectively in the real world. Consequently, data quality, reliability, and cost have become major concerns in both research and commercial development.

This is exactly where DAIMON excels. Our vision-based tactile technology captures high-quality, multimodal tactile data. Beyond basic contact forces, it records deformation, slip and friction, material properties and surface textures — enabling a comprehensive reconstruction of physical interactions. Building on our expertise in multimodal fusion, we have developed a robust data processing pipeline that seamlessly integrates tactile feedback with vision, motion trajectories, and natural language, transforming raw inputs into training-ready datasets for machine learning models.
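To make the idea of fusing several sensor streams concrete, here is a minimal sketch of one common step in such pipelines: aligning streams recorded at different rates by nearest timestamp. It is an illustration only, not DAIMON's pipeline; the `Sample` fields, the file names, and the millisecond timestamps are all hypothetical.

```python
from bisect import bisect_left
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Sample:
    """One training-ready record (hypothetical layout)."""
    t_ms: int            # camera timestamp in milliseconds
    camera_frame: str    # reference to a camera image
    tactile_frame: str   # reference to the nearest tactile image
    instruction: str     # natural-language task description


def nearest(timestamps: Sequence[int], t: int) -> int:
    """Index of the timestamp closest to t (timestamps sorted ascending)."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Pick whichever neighbour is closer; ties go to the earlier reading.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1


def align(cam_t: Sequence[int], tac_t: Sequence[int], instruction: str) -> List[Sample]:
    """Pair every camera frame with the tactile frame nearest in time."""
    return [
        Sample(t, f"cam_{k}.png", f"tac_{nearest(tac_t, t)}.png", instruction)
        for k, t in enumerate(cam_t)
    ]


# Made-up example: camera at 10 Hz, tactile sensor sampled roughly every 30 ms.
cam_t = [0, 100, 200]
tac_t = [0, 30, 60, 90, 120, 150, 180, 210]
samples = align(cam_t, tac_t, "fold the towel")
matched = [s.tactile_frame for s in samples]
```

A real pipeline would also have to handle clock drift, dropped frames, and the motion-trajectory stream, all of which this sketch leaves out.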

Recognizing the industry-wide data gap, we view large-scale data collection not only as our unique competitive advantage, but as a responsibility to the broader community.

By building and open-sourcing the dataset, we aim to provide the high-quality “fuel” needed to power embodied AI, ultimately accelerating the real-world deployment of general-purpose robotic foundation models.

The robotics industry is highly competitive, and many teams have chosen to focus on data. DAIMON is releasing a large and highly comprehensive cross-embodiment, vision-based tactile multimodal robotic manipulation dataset. How were you able to achieve this?

We have a dedicated in-house team focused on expanding our capabilities, including building hardware devices and developing our own large-scale model. Although we are a relatively small company, our core tactile sensing technology and innovative data collection paradigm enable us to build large-scale datasets.

Our approach is to broaden our offering. We have built the world’s largest distributed out-of-lab data collection network. Rather than relying on centralized data factories, this lightweight and scalable system allows data to be gathered across diverse real-world environments, enabling us to generate millions of hours of data per year.

“To drive the advancement of the entire embodied AI field, we have open-sourced 10,000 hours of the dataset for the broader community.” —Prof. Michael Yu Wang, DAIMON Robotics

This dataset is being jointly developed with several institutions worldwide. What roles did they play in its development, and how will the dataset benefit their research and products?

Besides China-based teams, our partners include leading research groups from universities, such as Northwestern University and the National University of Singapore, as well as top global enterprises like Google DeepMind and China Mobile. Their decision to partner with DAIMON is a strong testament to the value of our tactile-rich dataset.

Among the companies involved there are some that have already built their own models but are now incorporating tactile information. By deploying our data collection devices across research, manufacturing and other real-world scenarios, they help us to gather highly practical, application-driven data. In turn, our partners leverage the data to train models tailored to their specific use cases. Furthermore, to drive the advancement of the entire embodied AI field, we have open-sourced 10,000 hours of the dataset for the broader community.

Equipped with Daimon’s visuotactile sensor, the gripper delicately senses contact and precisely controls force to pick up a fragile eggshell. Daimon Robotics

From VLA to VTLA: Why Tactile Sensing Changes the Equation

The mainstream paradigm in robotics is currently the Vision-Language-Action (VLA) model, but your team has proposed a Vision-Tactile-Language-Action (VTLA) model. Why is it necessary to incorporate tactile sensing? What does it enable robots to achieve, and which tasks are likely to fail without tactile feedback?

Over years of working to make generalist robots capable of manipulation tasks, especially dexterous manipulation (not just power grasping or holding an object, but manipulating objects and using tools to impart forces and motion onto parts), we have seen these robots being used in household as well as industrial assembly settings.

It is well established that tactile information is essential for providing feedback about contact states so that robots can guide their hands and fingers to perform reliable manipulation. Without tactile sensing, robots are severely limited. They struggle to locate objects in dark environments, and without slip detection, they can easily drop fragile items like glass. Furthermore, the inability to precisely control force often leads to failed manipulation tasks or, in severe cases, physical damage. Naturally, the VLA approach needs to be enhanced to incorporate tactile information. We expanded the VLA framework to incorporate tactile data, creating the VTLA model.

An additional benefit of our tactile sensor is that it is vision-based: We capture visual images of the deformation on the fingertip surface. We capture multiple images in a time sequence that encodes contact information, from which we can infer forces and other contact states. This aligns well with the visual framework that VLA is based upon. Having tactile information in a visual image format makes it naturally suitable for integration into the VLA framework, transforming it into a VTLA system. That is the key advantage: Vision-based tactile sensors provide very high resolution at the pixel level, and this data can be incorporated into the framework, whether it is an end-to-end model or another type of architecture.
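As a rough illustration of how a time sequence of fingertip images can encode contact, the sketch below compares each frame against a no-contact reference image and counts the pixels that changed. This is a toy proxy for contact area, not DAIMON's algorithm and not a force estimate; the function names, the threshold, and the tiny 2×3 "images" are assumptions made up for the example.

```python
from typing import List

Frame = List[List[int]]  # grayscale image as rows of 0-255 pixel intensities


def deformation_map(reference: Frame, frame: Frame) -> Frame:
    """Per-pixel absolute intensity change relative to the no-contact reference."""
    return [
        [abs(p - r) for p, r in zip(row, ref_row)]
        for row, ref_row in zip(frame, reference)
    ]


def contact_signal(reference: Frame, frames: List[Frame], threshold: int = 10) -> List[int]:
    """For each frame, count pixels whose change exceeds the threshold."""
    return [
        sum(1 for row in deformation_map(reference, f) for d in row if d > threshold)
        for f in frames
    ]


# Made-up data: a flat reference image followed by three frames over time.
reference = [[100, 100, 100], [100, 100, 100]]
frames = [
    [[100, 100, 100], [100, 100, 100]],  # no contact
    [[100, 130, 100], [100, 125, 100]],  # light contact in the centre column
    [[140, 150, 135], [100, 145, 100]],  # contact spreads across the top row
]
signal = contact_signal(reference, frames)
print(signal)  # [0, 2, 4]
```

Because the output of each step is itself an image-like array, it is easy to see why such data can be fed to models that already consume camera frames.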

DAIMON has been known for its vision-based tactile sensors that can pack over 110,000 effective sensing units. DAIMON Robotics

The Technology: Monochromatic Vision-based Tactile Sensing

You and your team have spent many years deeply engaged in vision-based tactile sensing and have developed the world’s first monochromatic vision-based tactile sensing technology. Why did you choose this technical path?

Once we started investigating tactile sensors, we understood our needs. We wanted sensors that closely mimic what we have under our fingertip skin. Physiological studies have well documented the capabilities humans have at their fingertips — knowing what we touch, what kind of material it is, how forces are distributed, and whether it is moving into the right position as our brain controls our hands. We knew that replicating these capabilities on a robot hand’s fingertips would help considerably.

When we surveyed existing technologies, we found many types, including vision-based tactile sensors with tri-color optics and other simpler designs. We decided to integrate the best of these into an engineering-robust solution that works well without being overly complicated, keeping cost, reliability, and sensitivity within a satisfactory range, thus ultimately developing a monochromatic vision-based tactile sensing technique. This is fundamentally an engineering approach rather than a purely scientific one, since a great deal of foundational research already existed. With the growing realization of the necessity of tactile data, all of this will advance hand in hand.

DAIMON vision-based tactile sensor captures high-quality, multimodal tactile data. DAIMON Robotics

Last year, DAIMON launched a multi-dimensional, high-resolution, high-frequency vision-based tactile sensor. Compared with traditional tactile sensors, where does its core advantage lie? Which industries could it potentially transform?

The key features of our sensors are the density of distributed force measurement and the deformation we can capture over the area of a fingertip. I believe we have the highest density in terms of sensing units. That is one very important metric. The other is dynamics: the frequency and bandwidth — how quickly we can detect force changes, transmit signals, and process them in real time. Other important aspects are largely engineering-related, such as reliability, drift, durability of the soft surface, and resistance to interference from magnetic, optical, or environmental factors.

A growing number of researchers and companies are recognizing the importance of tactile sensing and adopting our technology. I believe the advances in tactile sensing will elevate the entire community and industry to a higher level. One of our potential customers is deploying humanoid robots in a small convenience store, with densely packed shelves where shelf space is at a premium. The robot needs to reach into very tight spaces — tighter than books on a shelf — to pick out an object. Current two-jaw parallel grippers cannot fit into most of these spaces. Observing how humans pick up objects, you clearly need at least three slim fingers to touch and roll the object toward you and secure it. Thus, we are starting to see very specific needs where tactile sensing capabilities are essential.

From Academia to Startup

After 40 years in academia — founding the HKUST Robotics Institute, earning prestigious honors including IEEE Fellow, and serving as Editor-in-Chief of IEEE TASE — what motivated you to found DAIMON Robotics?

I have come a long way. I started learning robotics during my PhD at Carnegie Mellon, where there were truly remarkable groups working on locomotion under Marc Raibert, who founded Boston Dynamics, and on manipulation under my advisor, Matt Mason, a leader in the field. We have been working on dexterous manipulation, not only at Carnegie Mellon, but globally for many years.

However, progress has been limited for a long time, especially in building dexterous hands and making them work. Only recently have locomotion robots truly taken off, and only in the last few years have we begun to see major advancements in robot hands. There is clearly room for advancing manipulation capabilities, which would enable robots to do work like humans. While at Hong Kong University of Science and Technology, I saw more and more people entering this area as students and postdoctoral researchers. We wanted to jumpstart our effort by leveraging the available capital and talent resources.

Fortunately, one of my postdocs, Dr. Duan Jianghua, has a strong sense for commercial opportunities. Recognizing the rapid growth of the robotics market and the unique value that our vision-based tactile sensing technology could bring, together we started DAIMON Robotics, and it has progressed well. The community has grown tremendously in China, Japan, Korea, the U.S., and Europe.

Robots equipped with DAIMON technology have been deployed in factory settings. The company aims to enable robots to achieve “embodied intelligence” and close the gap between what they can see and what they can feel. DAIMON Robotics

Business Model and Commercial Strategy

What is DAIMON’s current business model and strategic focus? What role does the dataset release play in your commercial strategy?

We started as a device company focused on making highly capable tactile sensors, especially for robot hands. But as the technology and the business developed, everyone realized it is not just about one component, but rather the entire technology chain: devices, data of adequate quality and quantity, and finally the right framework to build, train, and deploy models on robots in real application environments.

Our business strategy is best described as “3D”: Devices, Data, and Deployment. We build devices for data collection, our own ecosystem, and for deploying them in our partners’ potential application domains. This enables the collection of real-world tactile-rich data and complete closed-loop validation. This will become an integral part of the 3D business model. Most startups in this space are following a similar path until eventually some may become more specialized or more tightly integrated with other companies. For now, it is mostly vertical integration.

Embodied Skills and the Convergence Moment

You’ve introduced the concept of “embodied skills” as essential for humanoid robots to move beyond having just an advanced AI “brain.” What prompted this insight? What new capabilities could embodied skills enable? After the rapid evolution of models and hardware over the past two years, has your definition or roadmap for embodied skills evolved?

We have come a long way and now see a convergence point where electrical, electronic, and mechatronic hardware technologies have advanced tremendously in the last two decades. Robots are now fully electric and do not require hydraulics, because hardware has evolved rapidly. Modern electronics provide tremendous bandwidth with high torques. If we can build intelligence into these systems, we can create truly humanoid robots with the ability to operate in unstructured environments, make decisions, and take actions autonomously.

“Our vision is for robots to achieve robust manipulation capabilities and evolve into reliable partners for humans.” —Prof. Michael Yu Wang, DAIMON Robotics

AI has arrived at exactly the right time. Enormous resources have been invested in AI development, especially large language models, which are now being generalized into world models that enable physical AI capabilities. We would like to see these manifested in real-world systems.

While both AI and core hardware technologies continue to evolve, the focus is much clearer now. For example, human-sized robots are preferred in a home environment. This is an exciting domain with a promise of great societal benefit if we can eventually achieve safe, reliable, and cost-effective robots.

The Road to Real-World Deployment

Today, many robots can deliver impressive demos, yet there remains a gap before they truly enter real-world applications. What could be a potential trigger for real-world deployment? Which scenarios are most likely to achieve large-scale deployment first?

I think the road toward large-scale deployment of generalist robots is still long, but we are starting to see signs of feasibility within specific domains. It is very similar to autonomous vehicles, where we are yet to see full deployment of robo-taxis, while we have already started to find mobile robots and smaller vehicles widely deployed in the hospitality industry. Virtually every major hotel in China now has a delivery robot — no arms, just a vehicle that picks up items from the hotel lobby (e.g., food deliveries). The delivery person just loads the food and selects the room number. It is up to the robot thereafter to navigate and reach the guest’s room, which includes using the elevator, to deliver the food. This is already nearly 100 percent deployed in major Chinese hotels.

Hotel and restaurant robots are viewed as a model for deploying humanoid robots in specific domains like overnight drugstores and convenience stores. I expect complete deployment in such settings within a short timeframe, followed by other applications. Overall, we can expect autonomous robots, including humanoids, to progressively penetrate specific sectors, delivering value in each and expanding into others.

Ultimately, our vision is for robots to achieve robust manipulation capabilities and evolve into reliable partners for humans. By seamlessly integrating into our homes and daily lives, they will genuinely benefit and serve humanity.

This interview has been edited for length and clarity.

-

Tech3 days ago

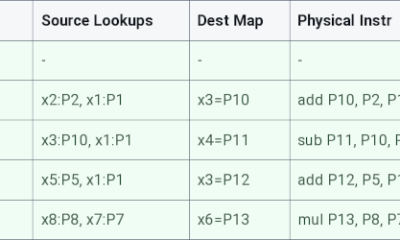

Tech3 days agoRegister Renaming | Hackaday

-

Fashion6 days ago

Fashion6 days agoWeekend Open Thread – Corporette.com

-

Crypto World5 days ago

Crypto World5 days agoHyperliquid $HYPE Rally Builds Momentum as AI Sector Enters Prove-It Phase

-

Politics3 days ago

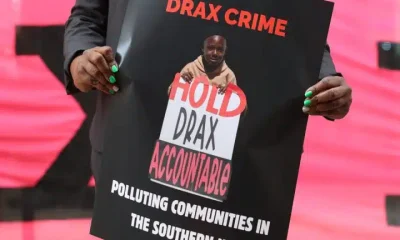

Politics3 days agoDrax board avoid their own AGM, accused of greenwashing & environmental racism

-

Sports5 days ago

Sports5 days agoIPL 2026: Ruturaj Gaikwad registers slowest fifty of the season, enters all-time unwanted list | Cricket News

-

Tech3 days ago

Tech3 days agoImages of Samsung’s rumored smart glasses have leaked

-

NewsBeat5 days ago

NewsBeat5 days agoLK Bennett closes all stores after entering administration

-

Tech4 days ago

Tech4 days agoWhy Blue Badges Disappeared From Toyota Hybrids

-

Crypto World7 days ago

Crypto World7 days agoMichael Saylor says BTC winter is over. Market analyst disagrees, says bitcoin was in a pullback

-

Fashion2 days ago

Fashion2 days agoKylie Jenner’s KHY Enters a New Era with ‘Born in LA’

-

Entertainment5 days ago

Entertainment5 days agoMariah Carey Slams Deposition Claims In Brother’s Lawsuit

-

Business2 days ago

Business2 days agoMost Commercial Energy Audits Miss the Real Losses

-

NewsBeat7 days ago

NewsBeat7 days agoTrump threatens to review UK’s claim to Falkland Islands and punish Nato allies over Iran war disagreement

-

Business6 days ago

Business6 days agoJeanine Pirro announces closure of Federal Reserve building cost probe

-

Business4 days ago

Business4 days ago(VIDEO) Charlize Theron Climbs Times Square Billboard to Promote New Netflix Thriller ‘Apex’

-

Tech5 days ago

Tech5 days agoOpenAI’s Sam Altman apologizes for not reporting ChatGPT account of Tumbler Ridge suspect to police

-

Tech5 days ago

Tech5 days agoMicrosoft to roll out Entra passkeys on Windows in late April

-

Crypto World3 days ago

Crypto World3 days agoCFTC’s AI will review U.S. crypto registration applications, chairman tells CoinDesk

-

Crypto World6 days ago

Nvidia (NVDA) Stock Jumps 5% as Intel Earnings Ignite Semiconductor Rally

-

Entertainment7 days ago

Snooki says “Jersey Shore” cast plans to film show 'until we're in a nursing home': 'It's not over for us'

You must be logged in to post a comment Login