In a lab room, a toddler, deaf from birth, sits while a tone plays. There’s no reaction. His face does not change.

Tech

Pentagon inks deals with Nvidia, Microsoft, and AWS to deploy AI on classified networks

After landing agreements with Google, SpaceX, and OpenAI, the U.S. Defense Department said on Friday that it has signed deals with Nvidia, Microsoft, Amazon Web Services, and Reflection AI that allow it to deploy their AI tech and models on its classified networks for “lawful operational use.”

“These agreements accelerate the transformation toward establishing the United States military as an AI-first fighting force and will strengthen our warfighters’ ability to maintain decision superiority across all domains of warfare,” the statement reads.

The deals come as the U.S. Department of Defense has accelerated its diversification of AI vendors in the wake of its controversial dispute with Anthropic over usage terms of its AI models. The Pentagon wanted unrestricted use of Anthropic’s AI tools, but the AI lab insisted on guardrails to prevent Anthropic’s tech from being used for domestic mass surveillance and autonomous weapons.

The two are fighting it out in court at the moment, though Anthropic in March won an injunction against the Pentagon’s move to brand the company a “supply-chain risk.”

“The Department will continue to build an architecture that prevents AI vendor lock-in and ensures long-term flexibility for the Joint Force,” the statement reads. “Access to a diverse suite of AI capabilities from across the resilient American technology stack will give warfighters the tools they need to act with confidence and safeguard the nation against any threat.”

The DOD said the companies’ AI hardware and models will be deployed on Impact Level 6 (IL6) and Impact Level 7 (IL7) environments to “streamline data synthesis, elevate situational understanding, and augment warfighter decision-making.” IL6 and IL7 are high-level security classifications for data and information systems that are deemed critical to national security; they require that these systems be protected physically as well as through strict access controls and audits.

The Pentagon said more than 1.3 million DOD personnel have so far used its secure enterprise platform for generative AI, GenAI.mil, which provides access to large language models (LLMs) and other AI tools within government-approved cloud environments. It is designed to help primarily with non-classified tasks like research, document drafting, and data analysis.


When you purchase through links in our articles, we may earn a small commission. This doesn’t affect our editorial independence.

Tech

FDA’s approval of Otarmeni, the first gene therapy for hereditary deafness

Six weeks later, after a single injection of an experimental gene therapy, the same toddler is back in the same room. The tone plays. The toddler’s head turns toward the sound. And somewhere just off screen, the child’s grandfather says his name. The boy turns and looks. He can hear.

“When the parents realized their child had a response to sound they cried,” says Dr. Yilai Shu of the Eye & ENT Hospital of Fudan University, who co-led the trial, in a video that showed the results. “The whole family cried.” The video cuts to another child, thirteen weeks post-treatment, dancing to music.

This is what gene therapy can do in 2026. The clip comes from the international clinical trial of an OTOF gene therapy run by Mass Eye and Ear and China’s Fudan University that provided the underlying science behind a drug the Food and Drug Administration (FDA) approved last week.

On April 23, the FDA granted accelerated approval to Otarmeni, a gene therapy from the pharma company Regeneron for severe-to-profound hearing loss caused by mutations in a gene called OTOF. In a pivotal trial, 80 percent of treated patients gained measurable hearing, and 42 percent reached the level needed to pick up whispers. Two and a half years after treatment, 90 percent of patients in the underlying multi-center trial were still hearing.

It’s a drug that certainly feels like a miracle to those in the trials, taking patients from silence to sound. But what can feel almost as miraculous is how far the broader field of gene therapies like Otarmeni, which deliver a working copy of a broken gene directly into a patient’s cells, has come.

In 1999, the nascent field of gene therapy all but collapsed when a teenager named Jesse Gelsinger died four days after being injected with an experimental gene therapy at the University of Pennsylvania, the first publicly identified death in a gene therapy clinical trial. In the years that followed, funding evaporated, careers ended, and “gene therapy” became a cautionary tale.

It took years and major changes in how gene therapies are delivered for the field to recover. And now, 27 years after Gelsinger’s tragic death, we have a gene therapy that can effectively reverse some kinds of congenital hearing loss. The next decade is no longer about whether gene therapy can deliver clinical results. It’s about whether it can deliver results to enough patients, at prices people can actually pay, for diseases that affect more than a few hundred kids a year.

Get those answers right, and what feels like a miracle to some in 2026 could become ordinary medicine.

After Gelsinger died, the FDA halted gene therapy trials in the US, the National Institutes of Health tightened oversight, and the principal investigator of the Penn study — James Wilson — was barred from clinical trials for five years and stripped of his administrative titles. In the lean years that followed, two things happened.

The first was a change in delivery. Gene therapies use engineered viruses to deliver restorative genes to a patient’s cells. The therapy used on Gelsinger was carried by an adenovirus, a type of virus that is highly immunogenic, meaning the human immune system recognizes it and reacts violently. It was that immune reaction that killed Gelsinger.

In the aftermath, the field increasingly turned to adeno-associated viruses (AAV), which are smaller, more tolerable, and capable of slipping a payload into the right cells without setting off a five-alarm immune reaction. AAV vectors are now the workhorse of in vivo gene therapy, including in Otarmeni.

The second thing that happened was CRISPR. Adapted in 2012 by Jennifer Doudna and Emmanuelle Charpentier into a precision gene-editing tool, CRISPR could do something AAV could not: find a specific spot in the patient’s own DNA and rewrite the letters there, correcting the broken gene in place. CRISPR also earned gene therapy a cultural moment it hadn’t had since before Gelsinger. Money and talent flooded back into the field — including into the AAV programs that produced Otarmeni.

The clearest sign something has shifted in the field is the lengthening list of therapy approvals. In December 2017, the FDA cleared Luxturna for hereditary blindness from RPE65 mutations, the first gene therapy in the US for an inherited disease. Two years later, Zolgensma was approved for spinal muscular atrophy, a wasting disease that kills children before age two in its severe form. In 2022, Hemgenix made hemophilia B the first bleeding disorder with a one-shot fix. In 2023, Casgevy and Lyfgenia did the same for sickle cell, with Casgevy becoming the first FDA-approved CRISPR therapy.

The sickle cell approvals matter most because they are the first for a large patient population: 100,000 Americans, most of them Black and historically underserved, have the disease. The gene therapies are also proof of concept that the underlying CRISPR mechanism can be redirected at multiple different targets. Verve Therapeutics is using base editing to permanently disable PCSK9, a gene that controls how much LDL cholesterol stays in the bloodstream, with the promise of one-time treatment instead of daily statins for patients at high cardiovascular risk. Early trial data showed a 53 percent average drop in LDL cholesterol. Trials are open for additional hereditary-blindness genes, Pompe disease, and a long list of single-gene conditions.

The science is working, but paying for it is another matter.

These are the list prices for the recent approvals: Luxturna at $850,000 per patient, Zolgensma at $2.13 million, Casgevy at $2.2 million, Lyfgenia at $3.1 million, Hemgenix at $3.5 million. Two-thirds of US sickle cell patients are on Medicaid, and only 16,000 are eligible for Casgevy under the current label. Regeneron has pledged to provide Otarmeni for free in the US, but that works only because the OTOF patient pool is small — an estimated 50 babies a year. That math won’t work for more common disorders.
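A rough back-of-the-envelope calculation, using only the figures quoted above, shows why the math gets harder as patient pools grow. The assumption that every eligible patient is treated at full list price is purely illustrative; real prices are negotiated and uptake is partial.

```python
# List price and eligible population as quoted in the article.
CASGEVY_LIST_PRICE_USD = 2_200_000
CASGEVY_ELIGIBLE_PATIENTS = 16_000


def total_list_cost(price_usd: int, patients: int) -> int:
    """Cost of treating every patient at full list price (an illustrative upper bound)."""
    return price_usd * patients


casgevy_total = total_list_cost(CASGEVY_LIST_PRICE_USD, CASGEVY_ELIGIBLE_PATIENTS)
print(f"Casgevy, all eligible patients: ${casgevy_total / 1e9:.1f} billion")
```

Under that simplifying assumption, a single therapy for a single condition would run to tens of billions of dollars at list price.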

While cost may not be a problem for the families that could qualify for Otarmeni, it’s not the only concern. Cochlear implants, the standard treatment for OTOF patients for decades, have been contested within Deaf culture since the 1980s, with many arguing that deafness should be seen as identity rather than deficit. Gene therapy applied to infants makes that question all the more fraught, since the children treated with gene therapy cannot consent to the change. And not everyone would make that choice.

Beyond economic and cultural questions, we lack gene therapies for Alzheimer’s, schizophrenia, or any of the polygenic conditions (those caused by multiple genes) that cause massive amounts of suffering. The cochlea is a good gene-therapy target because it is small and accessible, and OTOF is a single-gene disorder. The brain and Alzheimer’s are neither of those things. The platform that is working in one child’s inner ear in 2026 is not about to deliver universal cures by 2030, or well beyond.

What gene therapies will do, however, is keep filling in the list. The next time a parent gets a rare-disease diagnosis for their child, the question will increasingly be not whether someone is working on a gene therapy, but how soon it will be ready.

A version of this story originally appeared in the Good News newsletter.

Tech

Cork HQ for new onshore renewables company Perigus


Perigus Energy, formerly part of Ørsted, has been established following Copenhagen Infrastructure Partners’ acquisition of Ørsted’s European onshore business.

A new onshore renewable energy company has launched in Europe following the completion of Copenhagen Infrastructure Partners (CIP)’s acquisition of Ørsted’s European onshore business, with Cork chosen as its European headquarters.

Perigus Energy already operates across Ireland, Germany, the UK and Spain, with a combined operational and under-construction capacity of 826MW and a multi-gigawatt development pipeline.

The company said Ireland is central to its new operations. Perigus has 373MW of operational onshore wind farms across the island, with a further 179MW currently under construction. Its people, assets and development pipeline here are unaffected by the acquisition.

Two Irish projects are set to reach key milestones in the near term, according to Perigus. The Garreenleen solar project in Carlow, the company’s first solar project in Ireland, is due to be energised this month and will generate 81MW of clean electricity, enough to power around 29,000 homes.

In Tipperary, the Farranrory wind farm is expected to be fully operational later this year, adding nine turbines and 43.2MW of capacity.

Perigus Energy has also secured planning permission for the Brittas wind farm in Tipperary, consent for the 170MW Cappakeel solar farm in Laois, and “provisional success” for the Lodgewood battery energy storage project in Wexford following the latest EirGrid and SONI capacity market auction.

TJ Hunter, Perigus managing director for Ireland and the UK, said the Cork headquarters decision reflects both the company’s heritage and long-term ambitions on the island.

“While our name is new, we are an experienced team with a proven track record of delivery in Ireland since the opening of Owenreagh wind farm in Co Tyrone in 1997,” he said.

CEO Kieran White described the launch as “a very exciting next chapter”, adding that CIP’s backing would enhance the company’s ability to deliver across its investment-ready pipeline spanning wind, solar and battery storage.

Perigus Energy employs more than 200 people across offices in Ireland, Germany, the UK and Spain.

Don’t miss out on the knowledge you need to succeed. Sign up for the Daily Brief, Silicon Republic’s digest of need-to-know sci-tech news.

Tech

Elon takes the stand, Big Tech drops big numbers, and a Seattle VC gets in on a billion-dollar deal

This week on the GeekWire Podcast: What it was like inside the Oakland federal courthouse where Elon Musk is suing OpenAI, Sam Altman, and Microsoft, with jury selection revealing just how hard it is to find anyone neutral about Musk these days.

Meanwhile, Microsoft and OpenAI restructured their partnership the same morning the trial began — and less than 24 hours later, OpenAI’s models landed on Amazon’s cloud.

Then, Microsoft and Amazon both dropped blockbuster earnings, with Azure up 40%, AWS posting its fastest growth in 15 quarters, and the two companies combining for nearly $400 billion in capital spending this year alone.

We also discuss a wild Semafor story about a serial entrepreneur who handed his entire life over to an AI agent that now emails people as him, sets up meetings without his knowledge, and even ordered him a computer.

Plus, the story of how Seattle’s Flying Fish Partners — a VC firm with less than $250 million under management — hustled its way into a $1.1 billion seed round alongside Sequoia, Google, and Nvidia. Then we tackle the quickly debunked rumor that Mark Zuckerberg and Tim Cook might buy the Seahawks. And finally, the return of the GeekWire Trivia Challenge.


Audio editing by Curt Milton.

Tech

Cork’s Nexalus teams with TuffTek for next-gen cooling systems


Nexalus was founded by Dr Tony Robinson, Kenneth O’Mahony and Dr Cathal Wilson.

Trinity College Dublin spin-out Nexalus is collaborating with Canadian defence infrastructure manufacturer TuffTek to develop “next-generation” liquid-cooling platforms.

Nexalus’s cooling systems help control temperature in massive thermal energy-generating infrastructure, including data centres, high-performance computing (HPC) facilities and Formula 1 racing.

The Cork-based start-up was founded by Dr Tony Robinson, Kenneth O’Mahony and Dr Cathal Wilson in 2018.

The partnership will develop cooling platforms for HPC and AI workloads in modern defence and security operations, reflecting a growing need for infrastructure that can perform in high-intensity environments, according to a joint press release from the companies.

Under the collaboration, Nexalus will lead the design and integration of advanced liquid cooling architectures, enabling TuffTek’s platforms to support higher compute densities.

“This collaboration with TuffTek is about applying Nexalus’s engineering solutions in some of the most demanding use cases globally,” said O’Mahony, the company’s CEO. He is also a board member with Irish Manufacturing Research, having previously represented I2E2 as its chairperson.

“By integrating advanced liquid cooling into TuffTek’s ruggedised, deployable platforms, we are enabling a new standard for performance and efficiency at the edge for a rapidly growing market.”

The company’s technology was recognised as part of Fast Company’s 2025 World Changing Ideas list and Time Magazine’s list of best inventions of 2025.

TuffTek founder and CEO John Kadianos said that the collaboration is focused on solving “real operational challenges” in defence environments.

“As compute requirements increase at the edge, thermal management becomes a limiting factor. Nexalus brings a highly innovative approach that allows us to deliver more capable, reliable systems for our customers.”

TuffTek designs its products for critical operations in harsh environments, such as mining, oil and gas.

Nexalus has been previously featured on SiliconRepublic.com’s list of pioneering Irish start-ups.


Tech

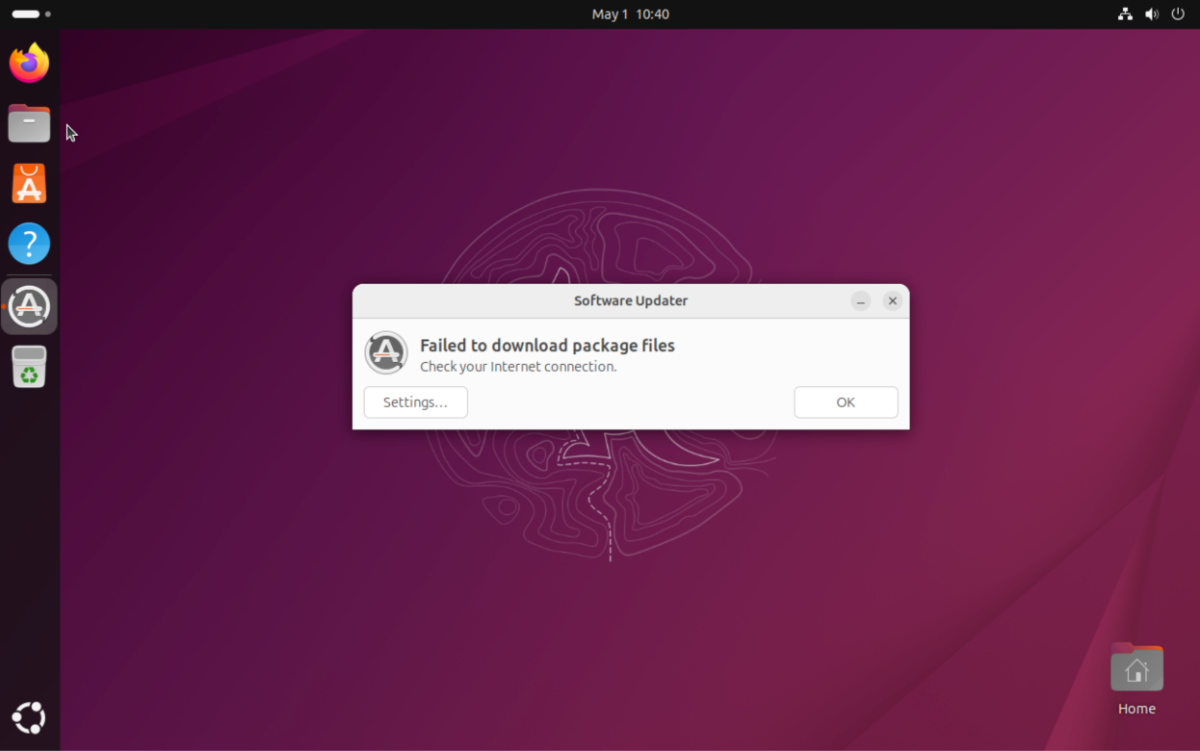

Ubuntu services hit by outages after DDoS attack

Hacktivists have claimed responsibility for taking down the public-facing infrastructure of popular Linux operating system distribution Ubuntu, as well as Canonical, the company that develops and maintains the software. The attack began on Thursday, and affected services that Ubuntu users rely on.

“Canonical’s web infrastructure is under a sustained, cross-border attack and we are working to address it. We will provide more information in our official channels as soon as we are able to,” the company said on its website.

The hacktivists are believed to have launched a distributed denial-of-service (DDoS) attack, a crude but often effective technique that consists of flooding a target with junk traffic until it overloads or crashes.

Ubuntu developers have been discussing the attack on an unofficial Ubuntu community forum, claiming that the attack affects Ubuntu’s security API, and several Ubuntu and Canonical websites. According to a post on a threat intelligence forum, the DDoS attack has also made it impossible for users to update and install Ubuntu. TechCrunch verified that updates failed to install on a test device running Ubuntu.

As of this writing, the outage has been ongoing for around 20 hours.

When contacted, Canonical spokesperson Lelanie de Roubaix reiterated what the company said on its website.

Hacktivists calling themselves The Islamic Cyber Resistance in Iraq 313 Team claimed on their Telegram channel that they were behind the DDoS attack.


The hackers claimed to be using Beamed, a DDoS-for-hire service. These types of services, also called booters or stressers, allow anyone to pay to launch DDoS attacks, even if they have neither the technical skills nor the infrastructure needed to flood targets with bogus traffic. The DDoS-for-hire service in this case claims to power attacks in excess of 3.5 Tbps, which is about half of the bandwidth of a cyberattack that Cloudflare last year called the “largest DDoS attack ever recorded.”

For years, authorities such as the FBI and Europol have played a game of whack-a-mole against these services, taking down and seizing domains, and sometimes arresting the people behind them.

This story was updated to include Canonical’s response.


Tech

Linux Percentage of Steam Users Doubled in One Year

Steam on Linux use in March “had skyrocketed to 5.33%…” reports Phoronix, “easily the highest level we’ve seen Steam on Linux at since its inception more than a decade ago.”

So what happened in April?

[April’s results] point to Linux having a 4.52% marketshare on Steam, a drop of 0.81% compared to March. Year-over-year it’s roughly double with Steam on Linux in April 2025 being at 2.27%. Or two years ago for April 2024, Steam on Linux was at 1.9%.
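The figures quoted above mix two kinds of comparison: the month-over-month drop is an absolute difference in percentage points, while "roughly double" is a ratio. A short sketch makes the distinction explicit; it uses only the quoted shares, and the 2026 keys are inferred from the year-over-year comparison rather than stated outright.

```python
# Steam-on-Linux share of surveyed users, in percent, as quoted above.
SHARE = {"2024-04": 1.90, "2025-04": 2.27, "2026-03": 5.33, "2026-04": 4.52}


def point_change(new: float, old: float) -> float:
    """Absolute change, in percentage points."""
    return round(new - old, 2)


def relative_change(new: float, old: float) -> float:
    """Change relative to the old value, in percent."""
    return round((new - old) / old * 100, 1)


def growth_ratio(new: float, old: float) -> float:
    """How many times larger the new share is than the old one."""
    return round(new / old, 2)


april = SHARE["2026-04"]
print("vs. March:", point_change(april, SHARE["2026-03"]), "points,",
      relative_change(april, SHARE["2026-03"]), "% relative")
print("vs. April 2025:", growth_ratio(april, SHARE["2025-04"]), "x")
print("vs. April 2024:", growth_ratio(april, SHARE["2024-04"]), "x")
```

Read this way, the 0.81-point dip from March amounts to a relative decline of about 15 percent, while the share is still about twice what it was a year earlier.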

Tech

Why New Cars Don’t Offer Engine Options Like Old American Cars Did

“They don’t make them like they used to” is a phrase that can apply to just about anything. For gear-heads feeling nostalgic about older cars, it’s a phrase that never seems to go away. This is especially true when comparing today’s cookie-cutter engines with the selection that drivers had in ridiculously overpowered vintage cars. Part of the reason engines have changed and choices have narrowed is U.S. EPA standards.

Those standards are set by the Clean Air Act, which gives the U.S. Environmental Protection Agency authority to regulate vehicle emissions. This is done through strict federal requirements that directly influence vehicle design and engine development. As a result, car manufacturers are pushed to produce a more limited range of engine types. Corporate Average Fuel Economy (CAFE) standards are also in play. CAFE requires automakers to meet fleet-wide efficiency targets, which leads to shared engine designs being used across an entire lineup of vehicles.
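To see why a fleet-wide target pushes automakers toward shared, efficient engines, it helps to know that a CAFE-style fleet figure is a production-weighted harmonic mean of fuel economy rather than a simple average. The sketch below illustrates that calculation only; the model names, production volumes, and mpg figures are hypothetical, and the real program's footprint-based targets and credits are not modeled.

```python
from dataclasses import dataclass


@dataclass
class Model:
    name: str
    units_produced: int
    mpg: float


def fleet_average_mpg(fleet: list[Model]) -> float:
    """Production-weighted harmonic mean of fuel economy across a fleet."""
    total_units = sum(m.units_produced for m in fleet)
    # Each model contributes units / mpg, i.e. fuel burned per mile driven.
    weighted_fuel_use = sum(m.units_produced / m.mpg for m in fleet)
    return total_units / weighted_fuel_use


# Hypothetical two-model lineup.
fleet = [
    Model("compact turbo four", 300_000, 40.0),
    Model("big-block V8 coupe", 100_000, 15.0),
]
print(f"Fleet average: {fleet_average_mpg(fleet):.1f} mpg")
```

Because the harmonic mean weights fuel consumed rather than miles per gallon, a thirsty model drags the fleet figure down more than its sales share alone would suggest, which is one reason a wide menu of large engines becomes costly to keep.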

As automakers worked to satisfy these standards, modern advances like turbocharging and fuel system improvements allowed for engine downsizing. This means smaller engines can produce performance similar to that of larger engines. In fact, there are even small engines with more power than muscle car V8s. So thanks to today’s technology, car manufacturers do not necessarily need to design multiple engine types when fewer can cover the same performance requirements.

Engine size alone doesn’t determine fuel efficiency

Multiple large-displacement engine types were once the norm in the automobile industry. In fact, these engines were in demand for a variety of different vehicles, like old school muscle cars. This includes the big block V8 engine, which was once a major focus for automakers. It was a standard approach taken by many manufacturers, who were unrestricted by emissions and fuel economy regulations.

There is a common belief that smaller engines get better fuel efficiency than larger engines. After all, those older V8s could get very thirsty, which means you’d be filling up quite often. But fuel economy involves a lot more than just engine size. It’s influenced by several factors, like vehicle weight, transmission, technology differences, and even driving habits. So even if you have a car with a larger engine, it doesn’t mean you’re not getting good fuel efficiency.

There are still some U.S. automakers that give you options, depending on the vehicle. But those options are often restricted to the same model, and not widespread across the board. For example, Ford offers multiple engine choices within the F-150 lineup for 2026, ranging from a 2.7L EcoBoost V6 with 325 horsepower, up to a 3.5L High Output EcoBoost V6 with 450 horsepower. So if you’re interested in finding a car or truck with a bigger engine, it’s a good idea to check the manufacturer’s website first and then go from there.

Tech

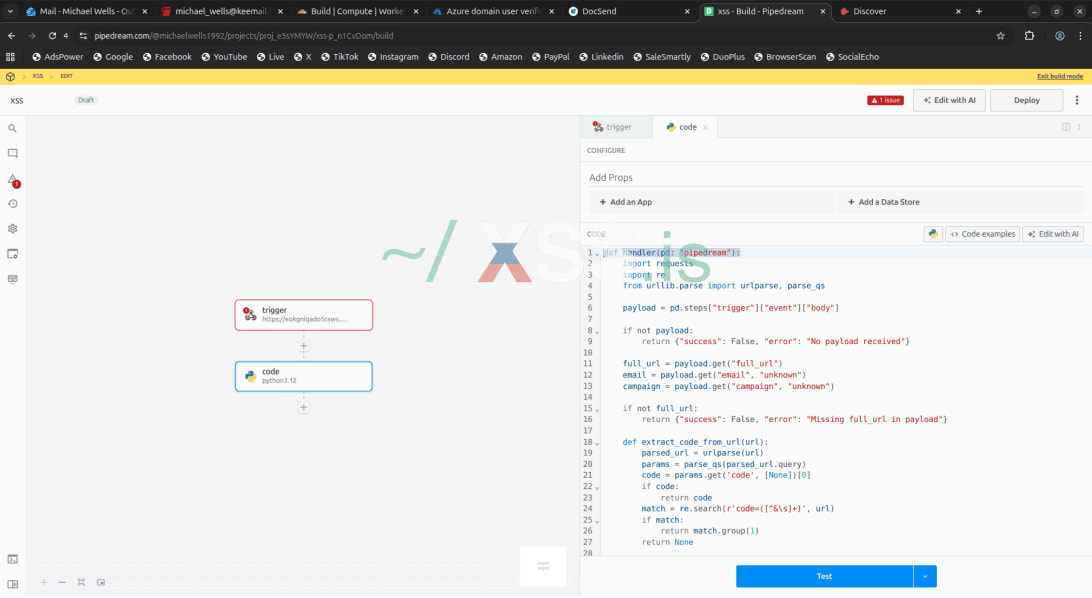

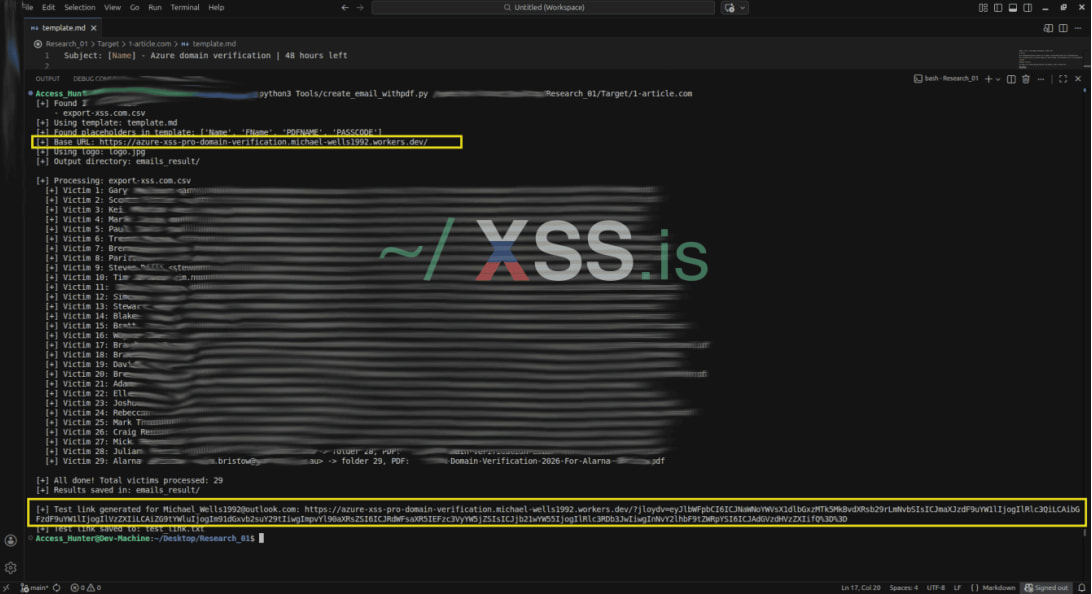

ConsentFix v3 attacks target Azure with automated OAuth abuse

A new attack type, dubbed ConsentFix v3, has been circulating on hacker forums as an improved technique that automates attacks against Microsoft Azure.

The first version of ConsentFix was presented by Push Security last December as a variation of ClickFix for OAuth phishing attacks, which tricks victims into completing a legitimate Microsoft login flow via the Azure CLI.

Using social engineering, the attacker fooled victims into pasting a localhost URL containing an OAuth authorization code that can be used to obtain tokens and hijack the account without passwords, despite multi-factor authentication (MFA).

ConsentFix v2 was developed by researcher John Hammond as a refined version of Push’s original, replacing manual copy/paste with drag-and-drop of the localhost URL, making the phishing flow smoother and more convincing.

ConsentFix v3 preserves the core idea of abusing the OAuth2 authorization code flow and targeting first-party Microsoft apps that are pre-trusted and pre-consented.

However, it brings an improvement by incorporating automation and scalability.

ConsentFix v3 attack flow

According to information retrieved from hacker forums where the new technique is promoted, the attack begins by verifying the presence of Azure in the target environment by checking for valid tenant IDs.

This is followed by gathering employee details such as names, roles, and email addresses to support impersonation.

Next, the attackers create multiple accounts across services such as Outlook, Tutanota, Cloudflare, DocSend, Hunter.io, and Pipedream to support phishing, hosting, data gathering, and exfiltration operations.

Push Security researchers explain that Pipedream, a free-to-use serverless integration platform, plays a central part in automating the attack, serving three critical roles:

- It is the webhook endpoint that receives the victim’s authorization code

- It is the automation engine that immediately exchanges that code for a refresh token via Microsoft’s API

- It is the central collector that makes captured tokens available to us in real time.

Source: Push Security

In the next phase, the attacker deploys a phishing page hosted on Cloudflare Pages that mimics a legitimate Microsoft/Azure interface and initiates a real OAuth flow through Microsoft’s login endpoint.

When the victim interacts with the page, they are redirected to a localhost URL containing an OAuth authorization code, which they are tricked into pasting or dragging back into the phishing page.

This enables the data exfiltration pipeline, in which the page sends the captured URL to a Pipedream webhook, and the backend automation immediately exchanges the authorization code for tokens.
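For defenders, the telltale artifact in this step is the pasted string itself: a loopback URL whose query string carries a `code` parameter. As a minimal sketch, assuming a proxy, browser extension, or awareness tool that can inspect such strings, a check could look like the following. The function name and the example URL are hypothetical; only Python's standard `urllib.parse` is used.

```python
from urllib.parse import parse_qs, urlparse

# Hosts that refer to the user's own machine.
LOOPBACK_HOSTS = {"localhost", "127.0.0.1", "::1"}


def looks_like_oauth_loopback_redirect(text: str) -> bool:
    """Return True if text is a loopback URL carrying an OAuth authorization code."""
    try:
        parsed = urlparse(text.strip())
    except ValueError:
        # Malformed input (for example a broken IPv6 literal) is not a match.
        return False
    if parsed.scheme not in ("http", "https"):
        return False
    if parsed.hostname not in LOOPBACK_HOSTS:
        return False
    # The authorization code arrives as the "code" query parameter.
    return "code" in parse_qs(parsed.query)


# Hypothetical example of the kind of URL a victim is asked to paste.
example = "http://localhost:8400/?code=EXAMPLE_CODE&state=xyz"
print(looks_like_oauth_loopback_redirect(example))
```

A match is a signal, not proof of an attack, since legitimate command-line sign-ins produce the same redirect; what is unusual is a user pasting it into a web page.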

The phishing emails can be highly personalized, generated from harvested data, and feature malicious links embedded inside a PDF hosted on DocSend to improve credibility and bypass spam filtering.


In the post-exploitation stage, the obtained tokens are imported into Specter Portal, allowing the attacker to interact with compromised Microsoft environments and access resources permitted by the token, such as email, files, and other services tied to the account.

Push Security noted that its testing of ConsentFix v3 relied on its personal Microsoft accounts; as a result, it is difficult to fully appreciate the impact, which depends on permissions, services, and tenant settings, among other factors.

On mitigating ConsentFix risks, Push notes that the task is complicated because trust in first-party apps is architectural, and because the Family of Client IDs (FOCI), a group of Microsoft applications that share permissions and refresh tokens, is otherwise useful.

However, there are still steps administrators can take, such as applying token binding to trusted devices, setting up behavioral detection rules, and applying app authentication restrictions.
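A behavioral detection rule of the kind mentioned above might, for example, flag sign-ins to a command-line first-party app by users who are not expected to use it. The sketch below is a hypothetical illustration only: the record fields (`user`, `app_id`), the placeholder app ID, and the allow-list are assumptions, not Microsoft's log schema or Push Security's rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SignIn:
    user: str    # hypothetical field: account that signed in
    app_id: str  # hypothetical field: client ID of the app that was used


def suspicious_sign_ins(events, watched_app_ids, expected_users):
    """Return sign-ins to watched apps made by users outside the allow-list."""
    return [
        e for e in events
        if e.app_id in watched_app_ids and e.user not in expected_users
    ]


# Placeholder values; substitute real client IDs and admin accounts.
events = [
    SignIn("alice@example.com", "cli-app-id-placeholder"),
    SignIn("bob@example.com", "cli-app-id-placeholder"),
    SignIn("bob@example.com", "mail-app-id-placeholder"),
]
flagged = suspicious_sign_ins(
    events,
    watched_app_ids={"cli-app-id-placeholder"},
    expected_users={"alice@example.com"},
)
for event in flagged:
    print("review:", event.user, event.app_id)
```

The design choice here is an allow-list rather than a block-list: most employees never need a developer command-line tool, so any such sign-in outside a small known group is worth a look.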

While ConsentFix attacks are used in actual campaigns, it is unclear if the v3 variant has gained any traction among cybercriminals yet.


Tech

Tovala Family Meals Review: Good Food, Lots of Salt

A garlic-herb salmon with risotto was probably the best among the family meals I tried. The chopped asparagus was less than visually appealing when drizzled in garlic butter, but still tasty and a bit crisp. The salmon was tender and flaky. And the sweet pea risotto had no choice but to be delicious. There was so much cheese, butter, and lemon it was pretty much a concert of fats and acid.

That chicken parm was likewise a mountain of cheese and salt. It reminded me, pleasantly, of countless family meals I had as a child in the 1980s: cheese-topped chicken, garlic bread, shells stuffed with ricotta and topped with even more cheese. The big difference is that there is simply no way my mother would have cooked this meal without a vegetable.

Tovala app via Matthew Korfhage

And nutrition is where Tovala runs aground a little. The nutritional notes on that chicken parm meal betray 2,300 milligrams of sodium per serving, pretty much the entire daily allowance for an adult human. This is also on par with comparable servings of Stouffer’s meat lasagna. The Tovala meal also carried about 10 times the cholesterol of Stouffer’s.

Many other meals followed a similar pattern, loading up on fats and salt in order to make meals tasty. The net effect is that it’s a lot more like rich restaurant food than what most people prepare at home. Whether this is a good or a bad quality is up to you.

Only one meal of the seven I tried failed utterly: I flagged a teriyaki chicken dinner to my editor as a possible cultural crime against Japan. The meal was sweet soy drenching pale and steaming chicken, with an implausible side of thick egg rolls and some loose, unseasoned broccoli. It felt like the “Japanese” food you’d get at a mall food court in the ’90s. But again, this was a rare major misstep.

A more pernicious issue, in meals designed for the whole family, is the near-universal high-fat, cholesterol, and sodium content. Many with the income and inclination to eat hearty, low-effort meals like the ones from Tovala are either parents with children, or people in the retirement bracket. Each has their own reason to desire a little more nutrition, and less fat and salt.

By the end of a couple of weeks of testing recipes, I’ll admit I felt a little relieved. I was grateful to feel my arteries slowly reopen. Tovala’s culinary model makes a lot of sense to me, as a smart way of splitting the difference between prepared meals and fresh food. And the company has proven it can cook well. It might be nice if they’d also cook a diet that felt more sustainable.


Tech

We built AI to save us from email, and it somehow made email even more soul-sucking

Writing an email is already one of the more lifeless parts of modern work, so of course the tech industry decided to automate it. AI was meant to ease workloads by managing “grunt” work: dealing with repetitive junk, trimming down inbox overload, and giving people their time back. It really sounded like the right idea. But in reality, we are nowhere close to removing the misery of email.

The kind of email you’re already sick of seeing

AI lowers the effort required to produce corporate-sounding language. That means every “just following up,” every “circling back,” every “gentle reminder,” and every “happy to connect” becomes even easier to generate and even harder to escape.

A person who might have skipped sending a pointless email before can now ask AI to draft one in seconds. And the person replying might once have wrapped things up in two short sentences. Now there is always a cleaner, longer, more “professional” version waiting from a chatbot. The Guardian recently reported on worker frustration around AI-generated workplace output, including what some employees now call “workslop.”

AI just gave bad email habits some steroids

Email was never only about communication. It also became a way to signal responsiveness, usefulness, and motion. A fast reply, a full calendar, and a long thread make things look more productive, even when nobody actually needed any of it. AI slides neatly into this culture. It can answer faster, summarize faster, schedule faster, and keep the illusion of progress running all day.

Office email already rewards performance as much as usefulness. Now every half-formed thought can become a polished paragraph. Sentences can be improved, and low-value updates can be padded into something more formal, diplomatic, corporate, and even lifeless. Using AI does not make your communication any better. What you’re getting instead is just more of it. Your inbox has more messages, more filler, and more language designed to sound productive without necessarily being useful.

Things get worse when everyone starts doing it, compounding the issue. One person sends a slick AI-polished email. The reply comes back with its own AI-assisted phrasing. Someone added to the thread later uses AI to summarize the whole exchange before sending another response. And now you have a conversation that technically keeps moving, but feels less and less human with every pass.

So who’s talking to whom?

At that point, bots emailing bots does not sound like a joke anymore. Dedicated tools like AI email assistants and scheduling bots may be useful in isolation, but they are still part of the same problem. Tools like Read AI’s Ada can handle meeting logistics and participate in email threads, which makes the whole “AI talking to AI” scenario feel a lot less ridiculous now.

It started with people leaning on AI for one harmless email, which quickly steamrolled into the whole culture of email becoming even more bloated and more performative. We were supposed to get relief from one of the most draining parts of digital work. And now it feels like new technology is just keeping that machine running rather than getting rid of it.

-

Tech5 days ago

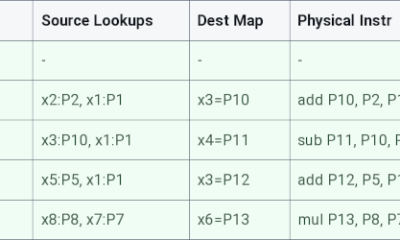

Tech5 days agoRegister Renaming | Hackaday

-

Crypto World7 days ago

Crypto World7 days agoHyperliquid $HYPE Rally Builds Momentum as AI Sector Enters Prove-It Phase

-

Politics5 days ago

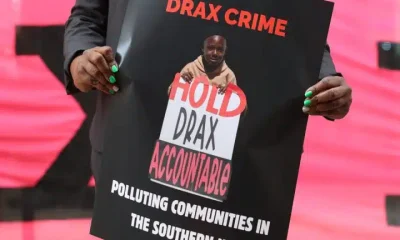

Politics5 days agoDrax board avoid their own AGM, accused of greenwashing & environmental racism

-

Tech5 days ago

Tech5 days agoWhy Blue Badges Disappeared From Toyota Hybrids

-

Tech5 days ago

Tech5 days agoImages of Samsung’s rumored smart glasses have leaked

-

Sports6 days ago

Sports6 days agoIPL 2026: Ruturaj Gaikwad registers slowest fifty of the season, enters all-time unwanted list | Cricket News

-

Tech22 hours ago

Tech22 hours agoTrump’s 25% EU auto tariff breaches Turnberry Agreement that also covers semiconductors and digital trade

-

NewsBeat6 days ago

NewsBeat6 days agoLK Bennett closes all stores after entering administration

-

Fashion4 days ago

Fashion4 days agoKylie Jenner’s KHY Enters a New Era with ‘Born in LA’

-

Entertainment7 days ago

Entertainment7 days agoMariah Carey Slams Deposition Claims In Brother’s Lawsuit

-

Business4 days ago

Business4 days agoMost Commercial Energy Audits Miss the Real Losses

-

Crypto World4 days ago

Crypto World4 days agoCFTC’s AI will review U.S. crypto registration applications, chairman tells CoinDesk

-

Business5 days ago

Business5 days ago(VIDEO) Charlize Theron Climbs Times Square Billboard to Promote New Netflix Thriller ‘Apex’

-

Business3 days ago

Business3 days agoBarclay Brothers Avoid Bankruptcy: HSBC Drops High Court Petitions After IVA Deal

-

Sports22 hours ago

Sports22 hours agoPaul Scholes issues Marcus Rashford reality check as agreement emerges over Man United star

-

Tech7 days ago

Tech7 days agoMicrosoft to roll out Entra passkeys on Windows in late April

-

Tech6 days ago

Tech6 days agoOpenAI’s Sam Altman apologizes for not reporting ChatGPT account of Tumbler Ridge suspect to police

-

Business3 days ago

Business3 days agoTesla Officially Registers Elon Musk’s Stock: What Investors Need to Know

-

Tech7 days ago

Tech7 days agoOpenAI CEO apologizes to Tumbler Ridge community

-

Tech4 days ago

Tech4 days agoGet Ready for More Brain-Scanning Consumer Gadgets

.PNG)

You must be logged in to post a comment Login