Picture a highway with networked autonomous cars driving along it. On a serene, cloudless day, these cars need only exchange thimblefuls of data with one another. Now picture the same stretch in a sudden snow squall: The cars rapidly need to share vast amounts of essential new data about slippery roads, emergency braking, and changing conditions.

These two very different scenarios involve vehicle networks with very different computational loads. Eavesdropping on network traffic using a ham radio, you wouldn’t hear much static on the line on a clear, calm day. On the other hand, sudden whiteout conditions on a wintry day would sound like a cacophony of sensor readings and network chatter.

Normally this cacophony would mean two simultaneous problems: congested communications and a rising demand for computing power to handle all the data. But what if the network itself could expand its processing capabilities with every rising decibel of chatter and with every sensor’s chirp?

Traditional wireless networks treat communication as separate from computation. First you move data, then you process it. However, an emerging paradigm called over-the-air computation (OAC) could fundamentally change the game. First proposed in 2005 and recently developed and prototyped by a number of teams around the world, including ours, OAC combines communication and computation into a single framework. This means that an OAC sensor network, whether shared among autonomous vehicles, Internet-of-Things sensors, smart-home devices, or smart-city infrastructure, can carry some of the network’s computing burden as conditions demand.

The idea takes advantage of a basic physical fact of electromagnetic radiation: When multiple devices transmit simultaneously, their wireless signals naturally combine in the air. Normally, such cross talk is seen as interference, which radios are designed to suppress—especially digital radios with their error-correcting schemes and inherent resistance to low-level noise.

But if we carefully design the transmissions, cross talk can enable a wireless network to directly perform some calculations, such as a sum or an average. Some prototypes today do this with analog-style signaling on otherwise digital radios—so that the superimposed waveforms represent numbers that have been added before digital signal processing takes place.
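To make the idea concrete, here is a minimal simulation sketch of an over-the-air sum, not a description of any particular prototype. It assumes ideal conditions (perfect synchronization, equal channel gains, no noise), and the function names, carrier frequency, and sample rate are hypothetical choices made for illustration.

```python
import math

def superimpose(values, carrier_hz=1000.0, sample_rate=48000, n_samples=480):
    """Add up the waveforms of devices that all transmit at the same time.

    Each device scales a shared carrier by the number it wants to report.
    Assumption: ideal channel (perfect sync, equal gains, no noise).
    """
    received = [0.0] * n_samples
    for v in values:
        for n in range(n_samples):
            received[n] += v * math.cos(2 * math.pi * carrier_hz * n / sample_rate)
    return received

def read_sum(received, carrier_hz=1000.0, sample_rate=48000):
    """Correlate the received waveform with the carrier to recover the summed amplitude."""
    n_samples = len(received)
    acc = sum(
        r * math.cos(2 * math.pi * carrier_hz * n / sample_rate)
        for n, r in enumerate(received)
    )
    # The window holds a whole number of carrier cycles, so cos^2 averages to 1/2.
    return 2.0 * acc / n_samples

readings = [21.5, 22.0, 23.5]             # hypothetical sensor values
total = read_sum(superimpose(readings))   # the "channel" has already added them
average = total / len(readings)
```

The receiver never sees the three individual readings here, only the combined waveform, yet it can still read off their sum and, knowing the device count, their average.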

Researchers are also beginning to explore digital over-the-air computation schemes, which embed the same ideas into digital formats, ultimately allowing the prototype schemes to coexist with today’s digital radio protocols. These various over-the-air computation techniques can help networks scale gracefully, enabling new classes of real-time, data-intensive services while making more efficient use of wireless spectrum.

OAC, in other words, turns signal interference from a problem into a feature, one that can help wireless systems support massive growth.

For decades, engineers designed radio communications protocols with one overriding goal: to isolate each signal and recover each message cleanly. Today’s networks face a different set of pressures. They must coordinate large groups of devices on shared tasks—such as AI model training or combining disparate sensor readings, also known as sensor fusion—while exchanging as little raw data as possible, to improve both efficiency and privacy. For these reasons, a new approach to transmitting and receiving data may be worth considering, one that doesn’t rely on collecting and storing every individual device’s contributions.

By turning interference into computation, OAC transforms the wireless medium from a contested battlefield into a collaborative workspace. This paradigm shift has far-reaching consequences: Signals no longer compete for isolation; they cooperate to achieve shared outcomes. OAC cuts through layers of digital processing, reduces latency, and lowers energy consumption.

Even very simple operations, such as addition, can be the building blocks of surprisingly powerful computations. Many complex processes can be broken down into combinations of simpler pieces, much like how a rich sound can be re-created by combining a few basic tones. By carefully shaping what devices transmit and how the result is interpreted at the receiver, the wireless channel running OAC can carry out other calculations beyond addition. In practice, this means that with the right design, wireless signals can compute a number of key functions that modern algorithms rely on.
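One common way to get beyond plain addition is to have each device transform its value before transmitting and have the receiver transform the sum afterward. The sketch below illustrates that pattern with two examples, a geometric mean and a Euclidean norm. It is an illustration under stated assumptions: the channel is modeled as an ideal, noise-free adder, and all function names are hypothetical.

```python
import math

def over_the_air_sum(transmitted):
    """Stand-in for the wireless channel: ideal, noise-free superposition."""
    return sum(transmitted)

def geometric_mean_via_sum(readings):
    """Geometric mean computed with only an addition in the 'channel'.

    Readings must be positive because each device transmits a logarithm.
    """
    pre = [math.log(x) for x in readings]     # pre-processing at each device
    total = over_the_air_sum(pre)             # the channel adds
    return math.exp(total / len(readings))    # post-processing at the receiver

def euclidean_norm_via_sum(readings):
    """Euclidean norm: devices send squares, the receiver takes a square root."""
    pre = [x * x for x in readings]
    total = over_the_air_sum(pre)
    return math.sqrt(total)

gm = geometric_mean_via_sum([2.0, 8.0])
norm = euclidean_norm_via_sum([3.0, 4.0])
```

In both cases the only operation performed between devices is a sum. Everything else happens locally, either before transmission or after reception.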

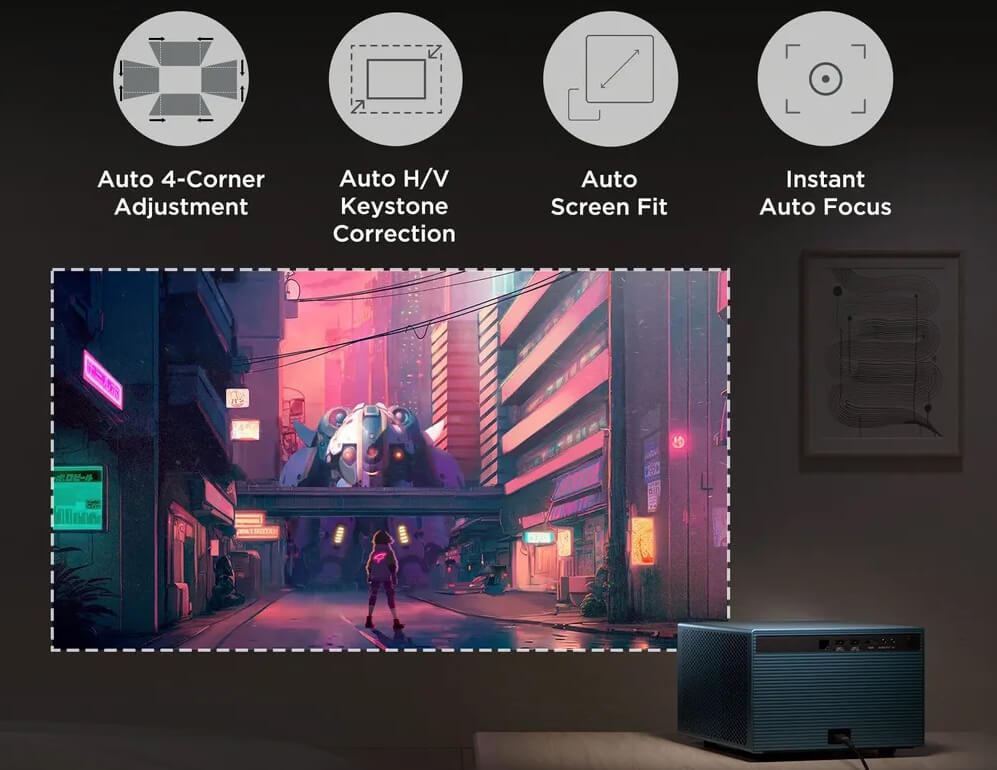

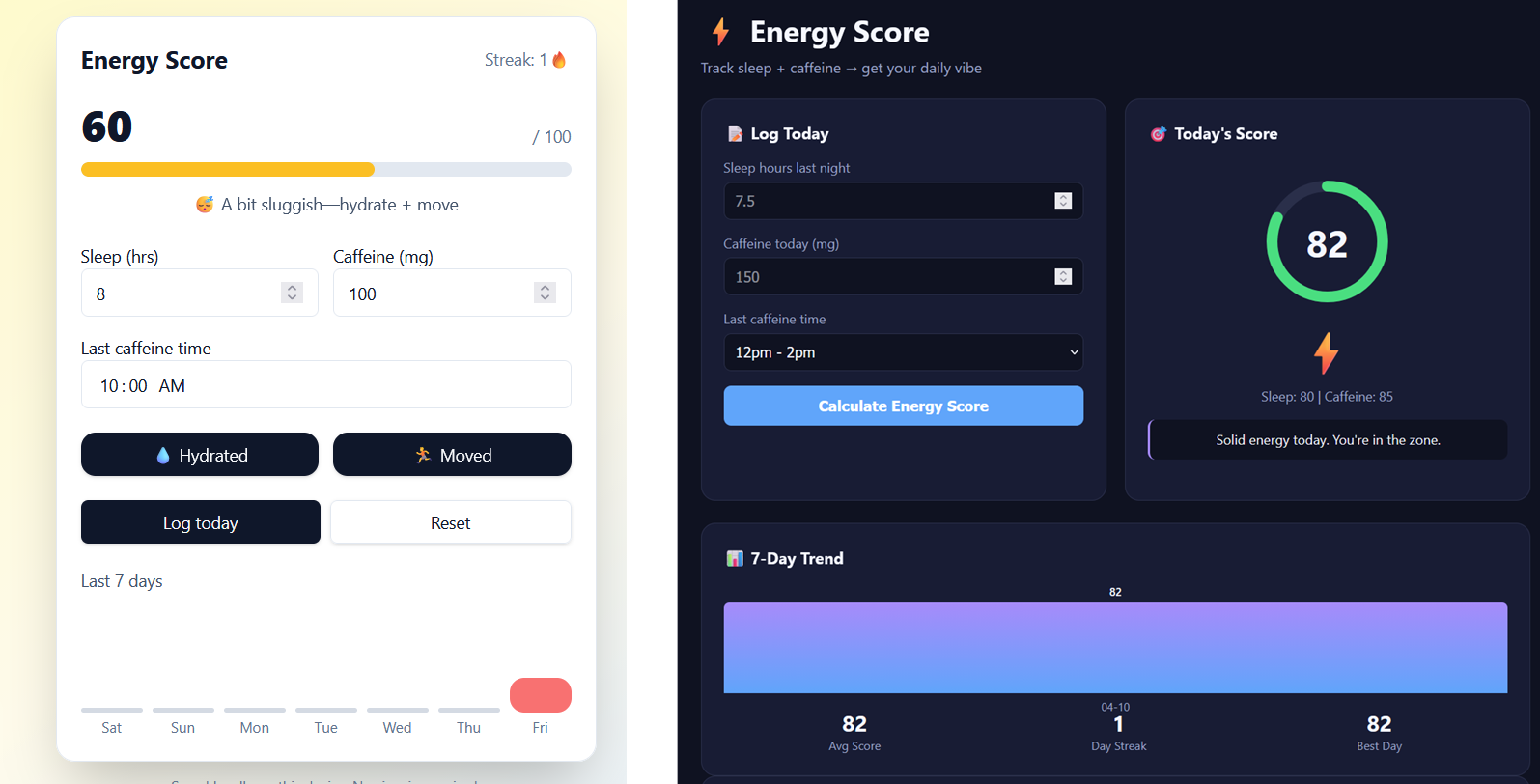

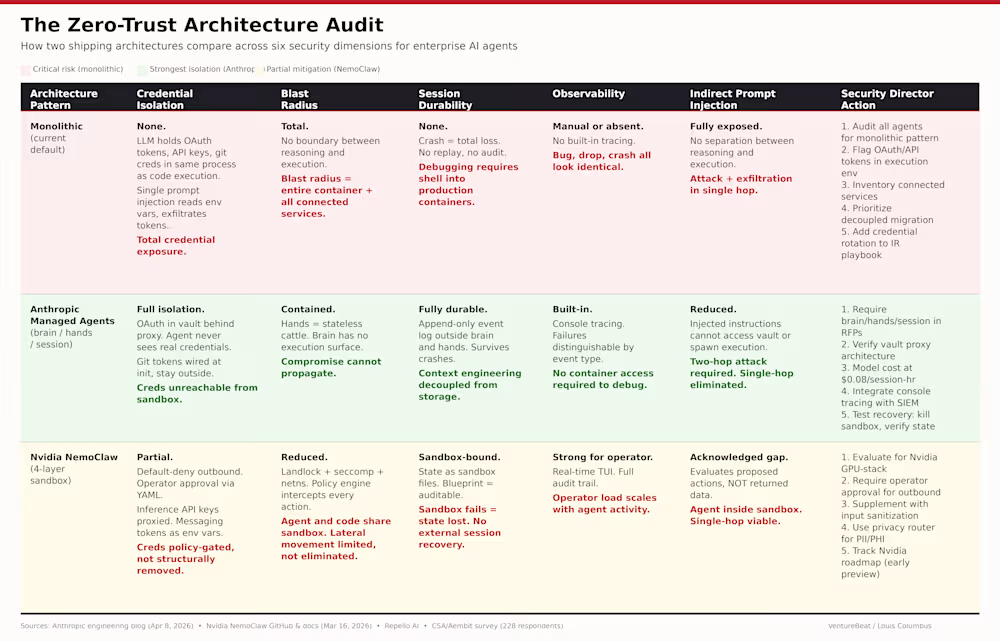

THE PROBLEM (TRADITIONAL APPROACH)

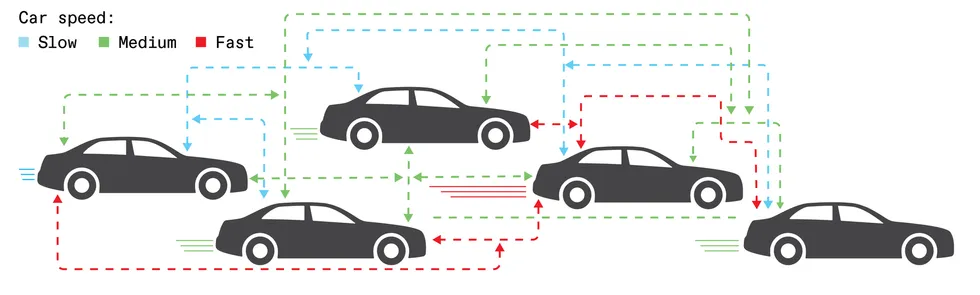

Consider five connected vehicles traveling within sight of one another. Each car reports its speed to the network. In this example, the speeds are slow, medium, and fast. Using existing standards, all five connected cars must independently track and count all incoming signals. Even in this very simplified case, the network is already congested.

Mark Montgomery

For instance, many key tasks in modern networks don’t require the logging and storage of every individual network transmission. Rather, the goal is to infer properties of the aggregate traffic, such as reaching agreement or identifying what matters most. Consensus algorithms rely on majority voting to ensure reliable decisions, even when some devices fail. Artificial intelligence systems depend on matrix reduction and simplification operations such as “max pooling” (keeping only peak values) to extract the most useful signals from noisy data.

In smart cities and smart grids, what matters most is often not individual readings but distribution. How many devices report each traffic condition? What is the range of demand across neighborhoods? These are histogram questions—summaries of the device counts per category.

With type-based multiple access (TBMA), an over-the-air computation method we use, devices reporting a given condition transmit together over a shared channel. Their signals add up, and the receiver sees only the total signal strength per category. In a single transmission, the entire histogram emerges without ever identifying individual devices. And the more devices there are, the better the estimate. The result is greater spectrum efficiency, with lower latency and scalable, privacy-friendly operations—all from letting the wireless medium do the aggregating and counting.

It’s easy to imagine how analog values transmitted over the air could be summed via superposition. The amplitudes from different signals add together, so the values those amplitudes represent also simply add together. The more challenging question concerns preserving that additive magic, but with digital signals.

Here’s how OAC does it. Consider, for instance, one TBMA approach for a network of sensors that gives each possible sensor reading its own dedicated frequency channel. Every sensor on the network that reads “4” transmits on frequency four; every sensor that reads “7” transmits on frequency seven. When multiple devices share the same reading, their amplitudes combine. The stronger the combined signal at a given frequency, the more devices there are reporting that particular value.

A receiver equipped with a bank of filters tuned to each frequency reads out a count of votes for every possible sensor value. In a single, simultaneous transmission, the whole network has reported its state.
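The frequency-per-reading scheme can be sketched in a few lines of simulation, using the five-car example of one slow, three medium, and one fast vehicle. This is a simplified model rather than our prototype code: it assumes every tone arrives with equal amplitude and aligned phase, with no noise, and the bin assignments, window length, and function names are hypothetical.

```python
import cmath

CATEGORIES = ["slow", "medium", "fast"]
N = 64                                        # samples per window (hypothetical)
BIN = {"slow": 4, "medium": 8, "fast": 12}    # hypothetical frequency bin per reading

def tone(bin_index):
    """Complex tone occupying one frequency bin for the whole window."""
    return [cmath.exp(2j * cmath.pi * bin_index * n / N) for n in range(N)]

def channel(readings):
    """All devices transmit at once; the medium simply adds their tones."""
    received = [0j] * N
    for reading in readings:
        for n, sample in enumerate(tone(BIN[reading])):
            received[n] += sample
    return received

def filter_bank(received):
    """Correlate against each bin's tone; the magnitude is the device count."""
    counts = {}
    for category in CATEGORIES:
        reference = tone(BIN[category])
        corr = sum(x * r.conjugate() for x, r in zip(received, reference)) / N
        counts[category] = round(abs(corr))
    return counts

cars = ["slow", "medium", "medium", "medium", "fast"]
histogram = filter_bank(channel(cars))
majority = max(histogram, key=histogram.get)
```

The receiver ends up with a count per category and can pick the majority condition, yet nothing in the received waveform says which car sent which tone.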

It might seem paradoxical—digital computation riding atop what appears to be an analog physical effect. But this is also true of all “digital” radio. A Wi-Fi transmitter does not launch ones and zeroes into the air; it modulates electromagnetic waves whose amplitudes and phases encode digital data. The “digital” label ultimately refers to the information layer, not the physics. What makes OAC digital, in the same sense, is that the values being computed—each sensor reading, each frequency-bin count—are discrete and quantized from the start. And because they are discrete, the same error-correction machinery that has made digital communications robust for decades can be applied here too.

Synchronization is where OAC’s demands diverge most sharply from digital wireless conventions. Many OAC variants today require something akin to a shared clock at nanosecond precision: Every signal’s phase must be synchronized, or the superposition runs the risk of collapsing into destructive interference. While TBMA relaxes this burden a bit—devices need only share a time window—real engineering challenges lie ahead regardless, before over-the-air computation is ready for the mobile world.
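A rough calculation shows why the timing requirement is so tight for phase-sensitive schemes. The sketch below is a deliberately simplified model in which each device sends a unit-amplitude carrier and a timing offset translates directly into a carrier phase shift. The 2.4-gigahertz carrier is an assumed value chosen for illustration, and the function name is hypothetical.

```python
import cmath

def combined_amplitude(timing_offsets_ns, carrier_ghz=2.4):
    """Magnitude of the sum of unit-amplitude carriers with the given delays.

    Simplified model: a delay of dt nanoseconds shifts the carrier phase by
    2*pi*f*dt, where f is in gigahertz (GHz times ns gives cycles).
    """
    total = 0j
    for dt in timing_offsets_ns:
        phase = 2 * cmath.pi * carrier_ghz * dt
        total += cmath.exp(-1j * phase)
    return abs(total)

aligned = combined_amplitude([0.0, 0.0])            # two devices, perfectly in step
half_cycle = combined_amplitude([0.0, 0.5 / 2.4])   # second device about 0.21 ns late
```

In this model, two perfectly aligned devices produce a combined amplitude of 2, the correct count. A delay of half a carrier period, roughly 0.21 nanoseconds at the assumed frequency, makes the two signals cancel almost completely, so the receiver would count zero devices instead of two.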

How will over-the-air computation work in the field?

Over-the-air computation has in recent years moved from theory to initial proofs-of-concept and network test runs. Our research teams in South Carolina and Spain have built working prototypes that deliver repeatable results—with no cables and no external timing sources such as GPS-locked references. All synchronization is handled within the radios themselves.

Our team at the University of South Carolina (led by Sahin) started with off-the-shelf software-defined radios, specifically Analog Devices’ Adalm-Pluto. We modified the field-programmable gate array inside each radio so that it can respond to a trigger signal transmitted from another radio. This simple hack enabled simultaneous transmission, a core requirement for OAC. Our setup used five radios acting as edge devices and one acting as a base station. The task involved training a neural network to perform image recognition over the air. Our system, whose results we first reported in 2022, achieved 95 percent accuracy in image recognition without ever moving raw data across the network.

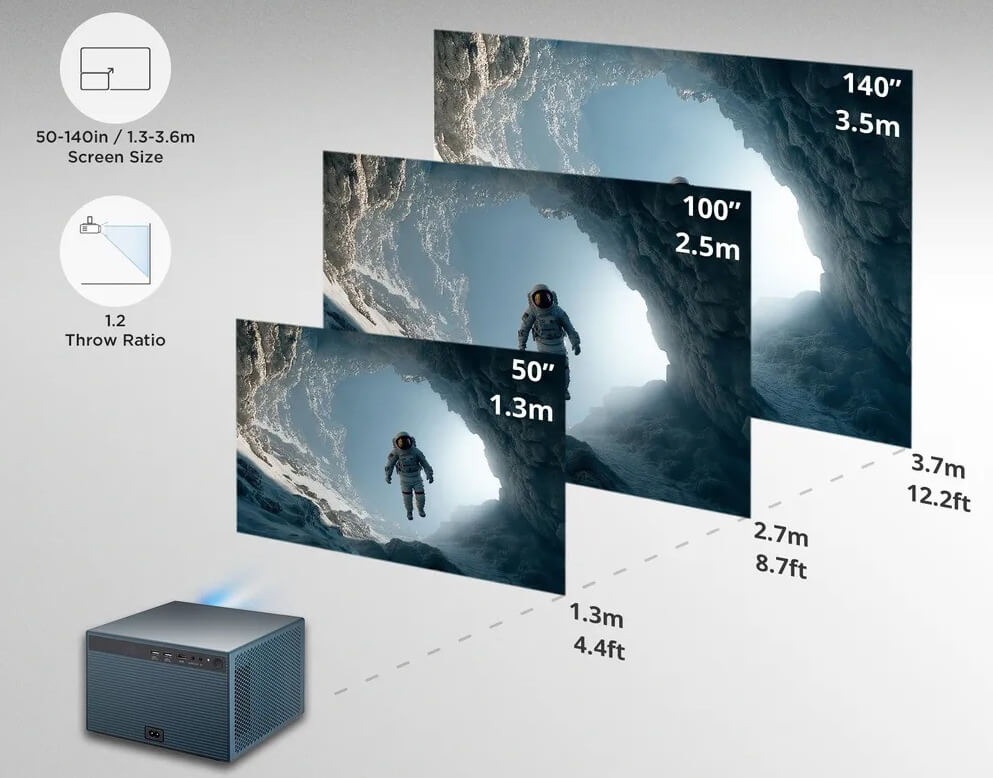

THE OVER-THE-AIR COMPUTATION (OAC) APPROACH

Using over-the-air computation, all five cars transmit their speeds simultaneously. Vehicles reporting the same speed share the same channel; their signals merely combine over the air.

We also demonstrated our initial OAC setup at a March 2025 IEEE 802.11 working group meeting, where an IEEE committee was studying AI and machine learning capabilities for future Wi-Fi standards. As we showed, OAC’s road ahead doesn’t necessarily require reinventing wireless technology. Rather, it can build on and repurpose protocols that already exist in Wi-Fi and 5G.

However, before OAC can become a routine feature of commercial wireless systems, networks must provide finer-tuned coordination of timing and signal power levels. Mobility is a difficult problem, too. When mobile devices move around, phase synchronization degrades quickly, and computational accuracy can suffer. Present-day OAC tests work in controlled lab environments. But making them robust in dynamic, real-world settings—vehicles on highways, sensors scattered across cities—remains a new frontier for this emerging technology.

Both of our teams are now scaling up our prototypes and demonstrations. We are together aiming to understand how over-the-air computation performs as the number of devices increases beyond lab-bench scales. Turning prototypes and test-beds into production systems for autonomous vehicles and smart cities will require anticipating tomorrow’s mobility and synchronization problems—and no doubt a range of other challenges down the road.

Where OAC goes from here

To realize the technological ambitions of over-the-air computation, nanosecond timing and exquisite RF signal design will be crucial. Fortunately, engineers have recently made substantial progress on both fronts.

Because OAC demands waveform superposition, it benefits from tight coordination in time, frequency, phase, and amplitude among RF transmitters. Such requirements build naturally on decades of work in wireless communication systems designed for shared access. Modern networks already synchronize large numbers of devices using high-precision timing and uplink coordination.

OAC uses the same synchronization techniques already used in cellular and Wi-Fi systems. But actually running over-the-air computations will require still greater precision. Power control, gain adjustment, and timing calibration are standard tools today. We expect that engineers will further refine these existing methods to begin to meet OAC’s more stringent accuracy demands.

THE OAC RESULT

One transmission yields the full picture: One car is going slow; three are traveling at medium speed; and one vehicle is moving fast. The majority condition is immediately identified—with no individual vehicle data shared or processed.

In some cases, in fact, imperfect timing may be good enough. Designs and emerging standards in 5G and 6G wireless systems today use clever encoding that tolerates imperfect synchronization. We anticipate that an OAC protocol can in some cases still work capably despite minor timing errors, frequency drift, and signal overlap. Instead of fighting messiness, over-the-air computation may sometimes simply be able to roll with it.

Another challenge ahead concerns shifting processing to the transmitter. Instead of the receiver trying to clean up overlapping signals, a better and more efficient approach would involve each transmitter fixing its own signal before sending. Such “pre-compensation” techniques are already used in MIMO technology (multi-antenna systems in modern Wi-Fi and cellular networks). OAC would just be repurposing techniques that have already been developed for 5G and 6G technologies.
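A toy model shows what pre-compensation buys. In the sketch below, each device's signal is multiplied by a complex channel gain on its way to the receiver. If each transmitter divides its value by that gain first (a technique often called channel inversion), the gains cancel and the values add cleanly. The sketch assumes that every device knows its own channel gain exactly and that there is no noise. The gains are made-up numbers and the function name is hypothetical.

```python
def receive(values, channels, precompensate):
    """Sum of the signals arriving at the receiver.

    values:   the numbers each device wants to contribute
    channels: complex gain between each device and the receiver (assumed known)
    """
    total = 0j
    for x, h in zip(values, channels):
        transmitted = x / h if precompensate else x   # transmitter-side fix
        total += h * transmitted                      # the channel scales and rotates
    return total

values = [1.0, 2.0, 3.0]
channels = [0.8 + 0.1j, -0.5 + 0.6j, 0.3 - 0.9j]     # hypothetical channel gains

raw = receive(values, channels, precompensate=False)
fixed = receive(values, channels, precompensate=True)
```

Without pre-compensation the receiver sees a distorted combination that bears little resemblance to the true sum of 6. With it, the sum comes through intact. A practical system would also have to cap transmit power, because dividing by a very weak channel gain demands a very strong signal, which this sketch ignores.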

Materials science can also help OAC efforts ahead. New generations of reconfigurable intelligent surfaces shape signals via tiny adjustable elements in the antenna. The surfaces catch radio signals and reshape them as they bounce around. Reconfigurable surfaces can strengthen useful signals, eliminate interference, and synchronize wavefront arrivals that would otherwise be out of sync. OAC stands to benefit from these and other emerging capabilities that intelligent surfaces will provide.

At the system level, OAC will represent a fundamental shift in wireless network system design. Wireless engineers have traditionally tried to avoid designing devices that transmit at the same time. But over-the-air systems will flip the old, familiar design standards on their head.

One might object that OAC stands to upend decades of existing wireless signal standards that have always presumed data pipes to be data pipes only—not microcomputers as well. Yet we do not anticipate much difficulty merging OAC with existing wireless standards. In a sense, in fact, the IEEE 802.11 and 3GPP (3rd Generation Partnership Project) standards bodies have already shown the way.

A network can set aside certain brief time windows or narrow slices of bandwidth for over‑the‑air computation, and use the rest for ordinary data. From the radio’s point of view, OAC just becomes another operating mode that is turned on when needed and left off the rest of the time.

Over the past decade, both the IEEE and 3GPP have integrated once-experimental technologies into their wireless standards—for example, millimeter-wave mobile communications, multiuser MIMO, beamforming, and network slicing—by defining each new technological advance as an optional feature. OAC, we suggest, can also operate alongside conventional wireless data traffic as an optional service. Because OAC places high demands on timing and accuracy, networks will need the ability to enable or disable over‑the‑air computation on a per‑application basis.

With continued progress, OAC will evolve from lab prototype to standardized wireless capability through the 2020s and into the decade ahead. In the process, the wireless medium will transform from a passive data carrier into an active computational partner—providing essential infrastructure for the real-time intelligent systems that future wireless technologies will demand.

So on that snowy highway sometime in the 2030s, vehicles and sensors won’t wait for permission to think together. Using the emerging over-the-air computation protocols that we’re helping to pioneer, simultaneous computation will be the new default. The networks will work as one.
