Crypto World

Sam Altman’s OpenAI Moves Ahead With Pentagon AI Deal After Anthropic Says No

TLDR:

- OpenAI signed a deal to deploy AI models on U.S. Department of War classified networks on Feb. 28, 2026.

- The agreement bans domestic mass surveillance and requires human control over lethal force decisions.

- Anthropic reportedly refused a similar Pentagon deal, citing autonomous weapons and surveillance risks.

- Backlash on X was swift, with thousands of users announcing plans to cancel ChatGPT subscriptions.

OpenAI has agreed to deploy its AI models on classified U.S. Department of War networks, CEO Sam Altman announced. The deal follows reports that Anthropic publicly declined similar Pentagon demands over autonomous weapons and surveillance concerns.

Altman posted the announcement to X, where it quickly drew millions of views and thousands of replies. The reaction online was largely critical, with many users threatening to cancel their ChatGPT subscriptions.

OpenAI and the Department of War Reach Classified AI Agreement

The agreement allows OpenAI models to operate within DoW classified systems under specific conditions.

Altman stated the deal includes explicit prohibitions on domestic mass surveillance. It also requires human oversight for any use of force, including autonomous weapons systems. Deployment will occur on cloud networks only, with OpenAI personnel embedded to monitor model behavior.

Altman noted on X that the DoW agreed with these core safety principles.

According to the post, those principles are also reflected in existing law and policy. OpenAI said it will build technical safeguards to keep models aligned with the agreement’s terms. The company also called on the DoW to extend the same terms to all AI companies.

Altman framed the deal as part of a broader effort to reduce friction between AI companies and the government. He wrote that OpenAI wants to move away from legal and governmental conflicts.

The announcement signals a shift toward negotiated frameworks rather than standoffs. OpenAI described its mission as serving all of humanity amid a “complicated, messy, and sometimes dangerous world.”

The post received over 11,000 likes within hours of going live. Reposts and quote replies numbered in the thousands. Despite the scale of engagement, most visible reactions skewed negative.

Anthropic’s Refusal Puts Spotlight on OpenAI’s Pentagon Move

Reports from the previous day indicated Anthropic CEO Dario Amodei refused similar Pentagon demands. The refusal reportedly centered on concerns about enabling mass surveillance and autonomous weapons.

Amodei allegedly offered to help the DoW transition to another provider rather than comply. That stance drew widespread praise from AI safety advocates and researchers.

OpenAI’s subsequent agreement was widely read as stepping into the gap Anthropic left. Critics on X accused the company of opportunism. Several users announced they were switching from ChatGPT to Claude. Some described the move as contradicting OpenAI’s own stated safety values.

Morgan Stanley Applies for National Trust Charter to Hold Clients’ Crypto

Morgan Stanley has taken another step deeper into digital assets, filing for a new national trust bank charter that would allow the firm to custody cryptocurrencies and carry out related services for clients in the United States.

Key Takeaways:

- Morgan Stanley applied for a national trust charter to custody crypto and provide trading and staking services.

- The move is part of a broader institutional push for regulated digital asset infrastructure.

- Approval would let the bank hold client crypto directly as it expands ETFs and wealth management offerings.

A public filing with the Office of the Comptroller of the Currency shows the application, submitted Feb. 18, is under the name Morgan Stanley Digital Trust, National Association.

The move would establish a newly created banking entity rather than an acquired institution.

Morgan Stanley Subsidiary to Offer Crypto Custody, Trading and Staking Services

According to reports from Bloomberg and Forbes, the subsidiary would provide custody for selected digital assets and support investment activity through purchases, sales, swaps and transfers.

The filing also outlines plans to offer staking services, an increasingly common feature among institutional crypto platforms.

A national trust charter permits fiduciary operations such as asset safekeeping, custody and trust services. “De novo” status indicates the bank is being formed from scratch.

If approved, it would mark Morgan Stanley’s first trust charter dedicated specifically to crypto.

The application comes amid a broader push by financial institutions to secure federal oversight for digital asset operations.

More recently, payments firms and trading platforms, among them Stripe-owned Bridge and Crypto.com, have also pursued similar approvals.

The race reflects growing demand from institutional clients seeking regulated custody and trading infrastructure following years of market volatility and high-profile exchange failures.

Morgan Stanley has been steadily expanding its presence in the sector. In January, the bank appointed equity markets executive Amy Oldenburg to lead a newly formed digital asset division.

Job postings indicate the firm is hiring additional specialists across strategy and product roles tied to crypto services.

The investment bank has also filed to launch spot Bitcoin and Solana exchange-traded funds, followed by a proposed staked Ether ETF.

Together, the filings suggest a wider strategy aimed at integrating digital assets into traditional wealth management offerings.

If regulators approve the charter, Morgan Stanley would be able to directly safeguard client holdings instead of relying on third-party custodians, potentially positioning the firm as a full-service provider for institutional crypto investors.

OCC Grants Trust Bank Charters to Major Crypto Firms

The OCC approved national trust bank charters in December for a slate of crypto and digital asset firms, including BitGo, Fidelity Digital Assets, Circle, Ripple and Paxos, widening the on-ramp for tokenized finance.

Trust banks sit in a narrower lane than full-service banks, since they generally cannot take deposits or make loans.

Even so, the model can still open doors for stablecoin issuers that want to custody assets and run conversion and settlement services without relying entirely on third-party providers.

Earlier this year, World Liberty Financial also filed for a US national banking charter as stablecoins shift from a trading tool into payment infrastructure.

The post Morgan Stanley Applies for National Trust Charter to Hold Clients’ Crypto appeared first on Cryptonews.

OpenAI Wins Defense Contract Hours After Govt Ditches Anthropic

OpenAI has secured a deal to run its AI models on the Pentagon’s classified network, a move announced by OpenAI CEO Sam Altman in a late Friday post on X. The arrangement signals a formal step toward embedding next-generation AI within sensitive military infrastructure, framed by assurances of safety and governance that align with the company’s operating limits. Altman’s message described the department’s approach as one that respects safety guardrails and is willing to work within the company’s boundaries, underscoring a methodical path from civilian deployment to classified environments. The timing places OpenAI at the center of a broader debate about how public institutions should harness artificial intelligence without compromising civil liberties or operational safety, particularly in defense contexts.

The news comes as the White House directs federal agencies to halt use of Anthropic’s technology, initiating a six-month transition for agencies already relying on its systems. The policy demonstrates the administration’s intent to tighten oversight over AI tools used across government while still leaving room for carefully orchestrated, safety-conscious deployments. The juxtaposition between a Pentagon-backed integration and a nationwide pause on a rival platform highlights a government-wide reckoning about how, where, and under what safeguards AI technologies should operate in sensitive domains.

Altman’s remarks emphasized a cautious but constructive stance toward national-security applications. He framed the OpenAI arrangement as one that prioritizes safety while allowing access to powerful capabilities, an argument that aligns with ongoing discussions about responsible AI use in government networks. The Defense Department’s approach—favoring controlled access and rigorous governance—reflects a broader policy impulse to build operational safety into deployments that could otherwise accelerate where and how AI informs critical decisions. The public signaling from both sides suggests a model in which collaboration with defense entities proceeds under strict compliance frameworks rather than broad, unfiltered usage.

Within this regulatory and political backdrop, Anthropic’s situation remains a focal point. The company had been the first AI lab to deploy models across the Pentagon’s classified environment under a $200 million contract signed in July. Negotiations reportedly collapsed after Anthropic sought assurances that its software would not enable autonomous weapons or domestic mass surveillance. The Defense Department, by contrast, insisted that the technology remain available for all lawful military purposes, a stance designed to preserve flexibility for defense needs while maintaining safeguards. The divergence illustrates the delicate balance between enabling cutting-edge capabilities and enforcing guardrails that align with national security and civil-liberties considerations.

Anthropic later stated it was “deeply saddened” by its designation as a supply-chain risk to national security and signaled its intention to challenge the decision in court. The move, if upheld, could set a significant precedent affecting how American technology firms negotiate with government agencies as political scrutiny of AI partnerships intensifies. OpenAI, for its part, has indicated it maintains similar restrictions and has written them into its own agreement framework. Altman noted that OpenAI prohibits domestic mass surveillance and requires human accountability in decisions involving the use of force, including automated weapons systems. These provisions are meant to align with the government’s expectations for responsible AI use in sensitive operations, even as the military explores deeper integration of AI tools into its workflows.

Public reaction to the developments has been mixed. Some observers on social platforms questioned the trajectory of AI governance and the implications for innovation. The discussion touches on broader concerns about how security and civil liberties can be reconciled with the speed and scale of AI deployment in governmental and defense contexts. Nonetheless, the core takeaway is clear: the government is actively experimenting with AI in national-security spaces while simultaneously imposing guardrails to prevent misuse, with the outcomes likely to shape future procurement and collaboration across the tech sector.

Altman’s comments reiterated that OpenAI’s restrictions include a prohibition on domestic mass surveillance and a requirement for human oversight in decisions involving force, including automated weapons systems. Those commitments are framed as prerequisites for access to classified environments, signaling a governance model that seeks to harmonize the power of large-scale AI models with the safeguards demanded by sensitive operations. The broader trajectory suggests a sustained interest among policymakers and defense stakeholders in harnessing AI’s benefits while maintaining tight oversight to prevent overreach or misuse. As this enters a phase of practical implementation, both government agencies and tech providers will be measured against their ability to maintain safety, transparency, and accountability in high-stakes settings.

The unfolding narrative also underscores how procurement and policy decisions around AI will influence the technology’s broader ecosystem. If the Pentagon’s experiments with OpenAI’s models within classified networks prove scalable and secure, they could set a template for future collaborations that blend cutting-edge AI with rigorous governance, a model likely to ripple into adjacent industries, including those exploring AI-assisted analytics and blockchain-based governance mechanisms. At the same time, the Anthropic episode demonstrates how such procurement negotiations can hinge on explicit guarantees regarding weaponization and surveillance, an issue that could shape the terms under which startups and incumbents pursue federal contracts.

In parallel, the public discourse around AI policy continues to evolve, with lawmakers and regulators watching closely how private firms respond to national-security demands. The outcome of Anthropic’s intended legal challenge could influence the negotiating playbook for future government partnerships, potentially affecting how terms are drafted, how risk is allocated, and how compliance is verified across different agencies. The OpenAI-aided deployment inside the Pentagon’s classified network remains a test case for balancing the speed and utility of AI with the accountability and safety constraints that define its most sensitive applications.

As the regulatory landscape continues to shift, many in the tech community will be watching for how these developments crystallize into concrete practice—how assessments of risk, security protocols, and governance standards evolve in next-generation AI deployments. The interplay between aggressive capability development and deliberate risk containment is now a central feature of strategic technology planning, with implications that extend beyond defense to other sectors that rely on AI for decision-making, data analysis, and critical operations. The coming months will reveal whether the OpenAI-DoD collaboration can serve as a durable model for secure, responsible AI integration within the state’s most sensitive enclaves.

OpenAI’s late-Friday X post framing the Pentagon deployment, and the Defense Department’s safety-oriented stance toward Anthropic, anchor the narrative in primary statements. The Truth Social post attributed to President Trump further contextualizes the political climate surrounding federal AI policy. On Anthropic’s side, the company’s official statement provides the formal counterpoint to the designation and its legal trajectory. Together, these sources outline a multi-faceted landscape where national security, civil liberties, and commercial interests intersect in real time.

U.S. and Israel Strike Iran, Crypto Market Loses $100M in Minutes

TLDR:

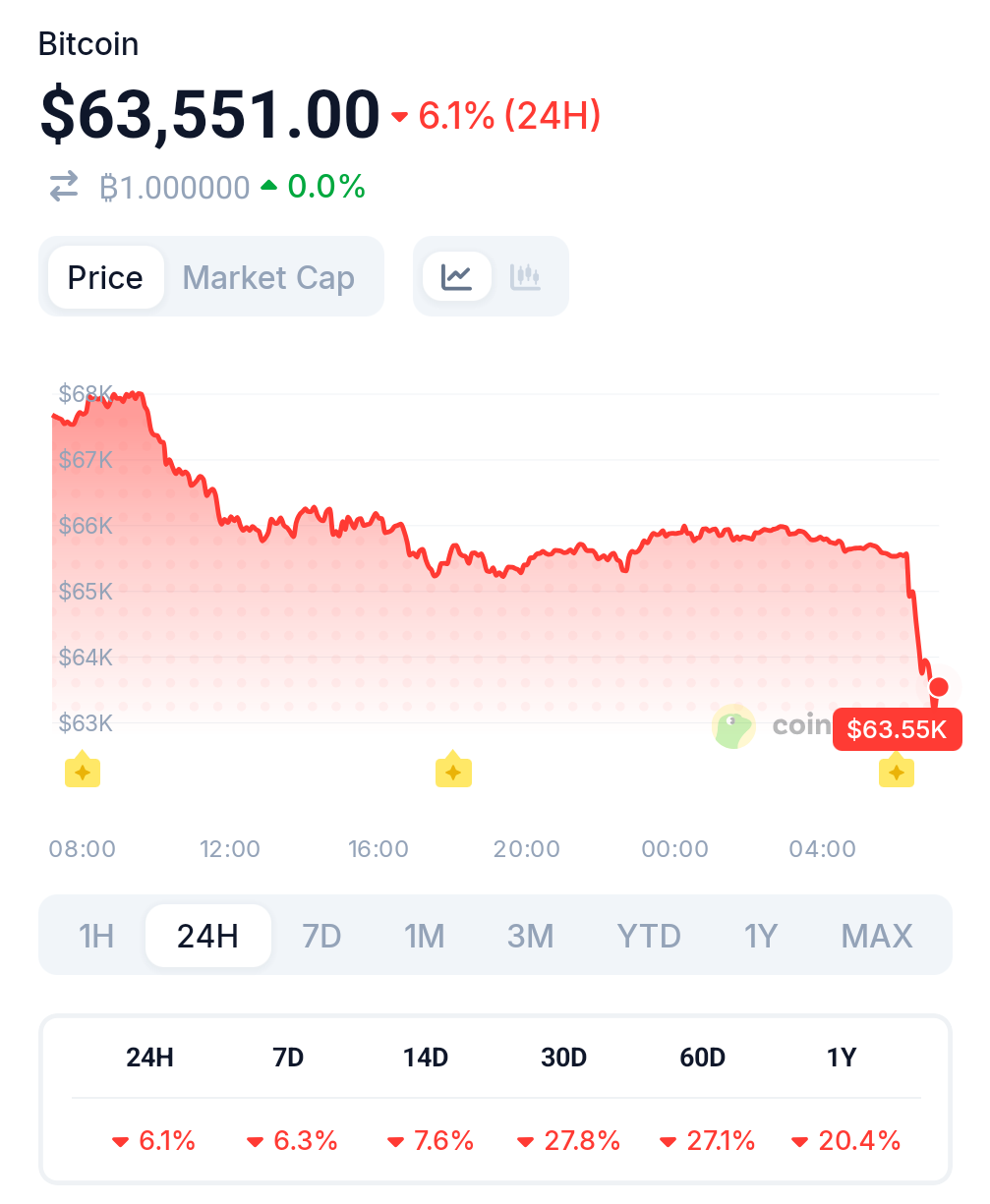

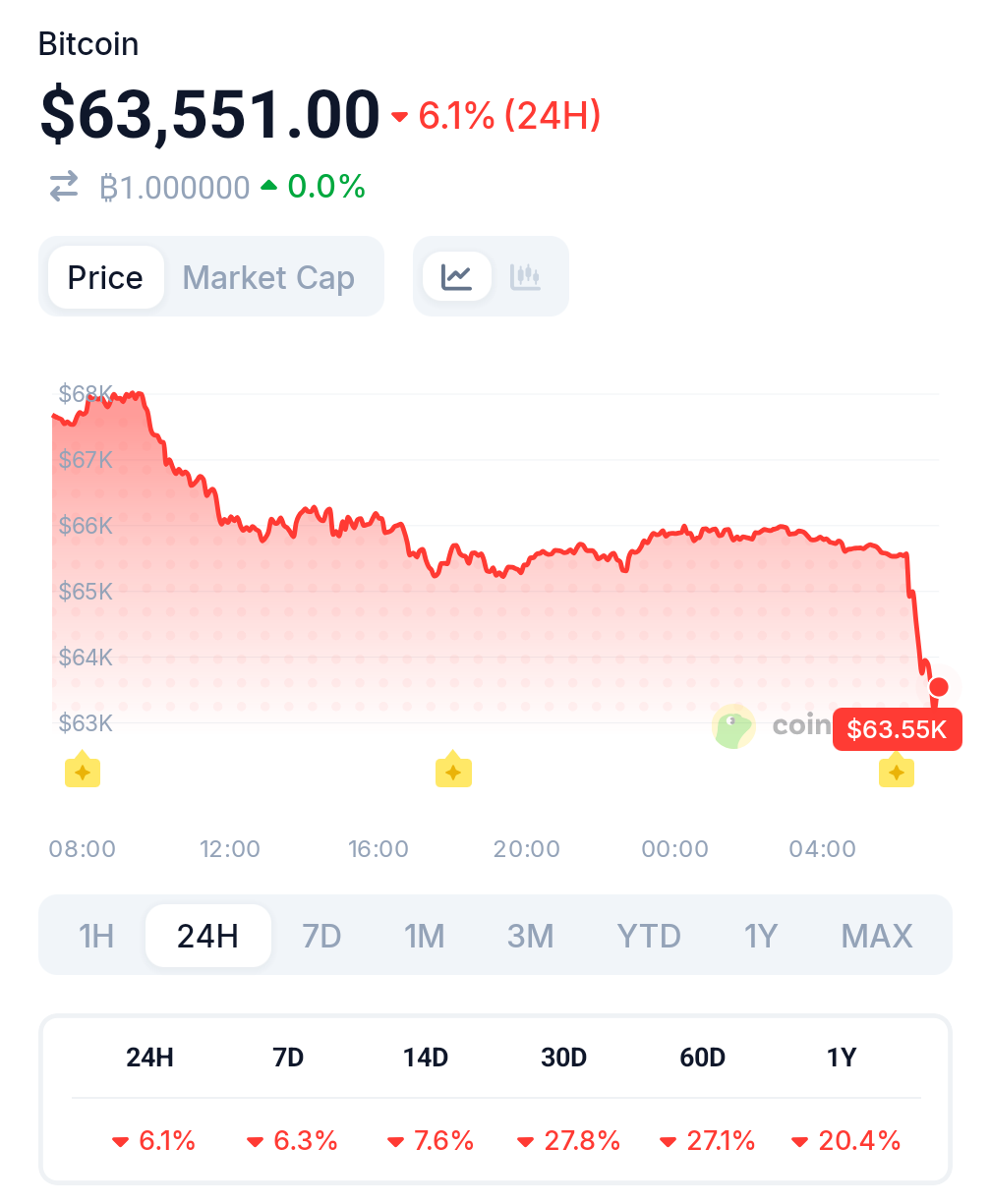

- BTC dropped below $64,000 within hours of Israel’s confirmed strike on Iran’s presidential HQ.

- Ethereum fell over 5% to under $1,900 as traders liquidated risk positions across altcoins.

- Over $100M in long positions were wiped out within 15 minutes of the strike news hitting markets.

- Polymarket trader Vivaldi007 turned a $385K profit betting on a U.S.-Israel Iran strike since Feb 8.

Explosions rocked Tehran after Israel launched strikes on Iran’s presidential headquarters and Ministry of Intelligence. Sirens blared across Israel as the IDF sent emergency alerts to citizens’ phones.

Crypto markets responded immediately, shedding over $100 million in long positions within 15 minutes. The joint operation, reportedly involving the United States, sent shockwaves far beyond the Middle East.

Israel-Iran Strike Sends Crypto Prices Into Freefall

Bitcoin dropped roughly 3% within hours of the news breaking. It fell below $64,000 as traders rushed to cut exposure.

Ethereum took a harder hit, sliding over 5% to under $1,900. The broader crypto market cap lost around 6% in early trading, according to market data. A snapshot from Cryptobubbles shows the market largely in the red, with most assets recording substantial drops.

The IDF confirmed sirens sounded throughout Israel shortly before the strikes became public. Citizens received direct cellular alerts to stay near protected spaces. The military framed the alert as a proactive measure. It signaled the scale of what was unfolding.

On-chain tracking platform Lookonchain reported one high-profile casualty of the volatility. Trader Machi, who had deposited $245,000 just four days prior, was liquidated again. His account dropped to only $13,580. The timing proved catastrophic for leveraged long positions across the board.

Not everyone lost. Lookonchain also flagged Polymarket trader Vivaldi007, who had been betting on a U.S.-Israel strike against Iran since February 8. He placed wagers on nearly every available date and kept losing until now. The strikes pushed his total profit to $385,000.

Geopolitical Risk Reignites Crypto Market Volatility

This pattern is not new. When the U.S. struck Iranian nuclear sites in June 2025, BTC plunged below $100,000 during a 7% market-wide selloff.

Oil supply fears and global economic uncertainty drove the move. Crypto behaved like a risk asset, not a safe haven.

The April 2024 Israel-Iran exchange produced a similar response. BTC briefly dipped under $60,000 as capital rotated toward gold and the dollar. Markets recovered once tensions cooled. Whether that playbook repeats depends on what comes next.

Iran’s potential response remains the key variable. A closure of the Strait of Hormuz, which handles roughly 20% of global oil, could spike energy prices and reignite inflation fears.

Central bank tightening in that scenario would add further pressure on risk assets. Past modeling suggests a full escalation could cut crypto valuations by 10 to 20% in the short term.

The IDF has not issued further operational updates. Markets remain on edge.

Bitcoin Crashes as US and Israel Strike Iran, War Begins

Israel and the United States carried out a joint strike on Iran early Saturday, marking a major escalation in regional tensions. Bitcoin reacted sharply to the news, dropping to $63,000 and extending daily losses to nearly 7%.

Israeli Defense Minister Israel Katz described the operation as a “preemptive strike.” The Israeli government declared a nationwide state of emergency, warning of possible Iranian retaliation using drones and ballistic missiles.

US Iran War Officially Starts

According to CNN, the strike was coordinated between Washington and Jerusalem. Officials said the action aimed to counter what they described as an immediate threat.

Details on the specific targets have not yet been fully disclosed. The move follows weeks of rising tensions between the U.S. and Iran. Washington yesterday designated Iran a State Sponsor of Wrongful Detention, accusing Tehran of holding American citizens for political leverage.

At the same time, the U.S. increased its military presence in Israel, deploying advanced fighter jets and additional assets across the region.

Bitcoin Crashes and Erases Weekly Gains

Bitcoin fell sharply following news of the strike. The cryptocurrency dropped more than 6% in 24 hours, sliding to around $63,300.

The decline erased recent recovery attempts and extended broader weakness over the past month. Traders appear to be cutting risk exposure amid fears of a wider regional conflict.

If Iran retaliates directly against Israeli or U.S. assets, the situation could escalate quickly. Energy markets are also on alert, given Iran’s strategic position in global oil routes.

OpenAI Wins Defense Contract After US Halts Anthropic Use

OpenAI has reached an agreement with the United States Department of Defense to deploy its artificial intelligence models on classified military networks, just hours after the White House ordered federal agencies to stop using technology from rival firm Anthropic.

In a late Friday post on X, OpenAI CEO Sam Altman announced the deal, saying the company would provide its models inside the Pentagon’s “classified network.” He wrote that the department showed “deep respect for safety” and a willingness to work within the company’s operating limits.

The announcement came amid a turbulent week for the AI sector. Earlier the same day, Defense Secretary Pete Hegseth labeled Anthropic a “Supply-Chain Risk to National Security,” a designation typically applied to foreign adversaries. The ruling requires defense contractors to certify they are not using the company’s models.

President Donald Trump simultaneously directed every US federal agency to immediately halt use of Anthropic technology, with a six-month transition period for agencies already relying on its systems.

Anthropic Pentagon talks collapse over AI use limits

Anthropic was the first AI lab to deploy models across the Pentagon’s classified environment under a $200 million contract signed in July. Negotiations collapsed after the company sought guarantees that its software would not be used for autonomous weapons or domestic mass surveillance. The Defense Department insisted the technology be available for all lawful military purposes.

In a statement, Anthropic said it was “deeply saddened” by the designation and intends to challenge the decision in court. The company warned the move could set a precedent affecting how American technology firms negotiate with government agencies, as political scrutiny of AI partnerships continues to intensify.

Altman said OpenAI maintains similar restrictions and that they were written into the new agreement. According to him, the company prohibits domestic mass surveillance and requires human responsibility in decisions involving the use of force, including automated weapons systems.

OpenAI faces backlash after deal

Meanwhile, some users on X voiced skepticism. “I just canceled ChatGPT and bought Claude Pro Max,” Christopher Hale, an American Democratic politician, wrote. “One stands up for the God-given rights of the American people. The other folds to tyrants,” he added.

“2019 OpenAI: we will never help build weapons or surveillance tools. 2026 OpenAI: department of War, hold my classified cloud instance. Integrity arc go brrrrrrr,” one crypto user wrote.

Bitcoin’s Price Plunges Below $64K as Israel Attacks Iran

Israel also announced a state of emergency as it expects a quick retaliation by Iran.

This week’s heightened price volatility continues, as bitcoin has started to lose value rapidly once again, dropping to a multi-day low of well under $63,600.

The latest leg down was likely prompted by the quickly escalating global tension, especially between the two old enemies – Iran and Israel.

The breaking story started to develop less than half an hour ago on Saturday morning when multiple news outlets reported that Israel had launched a “preemptive attack” against Iran. The former’s Defense Minister, Israel Katz, announced a state of emergency within the country because it expects retaliation from Iran by drones and other strikes.

Similar instances in the past have impacted bitcoin’s price, and this time is no different. Given the fact that the cryptocurrency space is the only financial market open during the weekend, the effects were immediate.

In the span of just minutes, bitcoin went from $66,000 to $63,600 before recovering some ground to $64,000. However, the asset is down by over four grand since yesterday when it was rejected at $68,000.

Before that, it peaked at $70,000 on Wednesday after it bounced from a multi-week low of $62,500 marked a day earlier. The altcoins have experienced similar volatility, with many dropping by 2% or more in the past hour alone.

Consequently, liquidations are on the rise again, hitting $450 million over the past 24 hours. Of that total, $185 million came in the last hour alone.
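To put the quoted price levels and liquidation figures in proportion, here is a minimal Python sketch of the arithmetic. It uses only the numbers cited in this article; the labels and the helper name `pct_change` are illustrative choices, not part of any exchange or data-provider API.

```python
def pct_change(start: float, end: float) -> float:
    """Percentage change going from `start` to `end`."""
    return (end - start) / start * 100.0


# Price levels (USD) as quoted in the article.
moves = [
    ("Wednesday peak -> Saturday low", 70_000, 63_600),
    ("Previous-day rejection -> Saturday low", 68_000, 63_600),
    ("Pre-strike level -> Saturday low", 66_000, 63_600),
    ("Saturday low -> partial rebound", 63_600, 64_000),
]
for label, start, end in moves:
    print(f"{label}: {pct_change(start, end):+.2f}%")

# Share of the 24-hour liquidations that landed in the last hour.
last_hour_usd_m, total_24h_usd_m = 185, 450
print(f"Last-hour share: {last_hour_usd_m / total_24h_usd_m:.1%}")
```

Read this way, the drop within minutes of the strike accounts for only part of the slide from Wednesday's peak, while the last hour alone accounts for a large share of the day's liquidations.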

Bitcoin drops to $63,000 as U.S. and Israel launch strikes on Iran

Bitcoin neared $63,000 in Saturday trading after the U.S. and Israel launched military strikes on Iran, pushing the largest cryptocurrency down roughly 3% in a matter of hours and extending what had already been a difficult weekend for risk assets.

The move brings bitcoin to its lowest level since the Feb. 5 crash, when the token briefly dipped below $60,000.

Israeli Defense Minister Israel Katz declared an immediate state of emergency across all areas of Israel. A U.S. official confirmed American participation in the strikes, The Wall Street Journal reported.

The sell-off follows a well-established pattern. Bitcoin trades 24 hours a day, 7 days a week, while equity and bond markets are closed on weekends.

That makes it one of the only large, liquid assets available for traders to sell when geopolitical risk spikes outside of traditional market hours.

The result is that bitcoin often acts as a pressure valve for broader risk-off sentiment during weekend events, absorbing selling that would otherwise spread across equities, commodities, and currencies if those markets were open.

The attack risks a wider regional conflict in one of the most economically sensitive parts of the world, following a month-long U.S. military buildup and failed negotiations over Iran’s nuclear program.

Anthropic Supply Chain Risk Designation Triggers Lawsuit Against Trump Administration

TLDR:

- Anthropic plans court action after rejecting Pentagon requests tied to surveillance and autonomous weapons permissions.

- Contract proposals included access to geolocation, browsing data, and financial records from commercial brokers.

- Defense contractors now face compliance risks when using Claude across enterprise and cloud operations.

- The designation places Anthropic’s $380 billion IPO strategy under legal and regulatory uncertainty.

Anthropic is heading to federal court. The AI company confirmed it will challenge the Department of War’s move to designate it a supply chain risk.

Secretary Pete Hegseth announced the designation on X after months of failed contract negotiations. The move threatens to ripple far beyond a single Pentagon deal.

Anthropic’s Pentagon Deal Collapsed Over Surveillance Demands

The breakdown started with two narrow exceptions Anthropic refused to drop.

The company would not allow Claude to be used for mass domestic surveillance of Americans. It also rejected fully autonomous weapons applications. Those were the only two lines Anthropic would not cross.

But details that surfaced through Axios changed the story significantly, according to an X post by market observer Shanaka Anslem Perera.

The Pentagon’s proposed compromise would have required access to Americans’ geolocation data. It also included web browsing history and personal financial records sourced from data brokers.

Under Secretary Emil Michael was reportedly offering this deal by phone at the exact moment Hegseth posted the designation publicly.

Anthropic pushed back directly. In a published statement, the company called the designation legally unsound and historically unprecedented. No American company has ever been publicly hit with this classification before. It has typically been reserved for foreign adversaries.

The company also clarified what the designation actually covers under 10 USC 3252.

A supply chain risk designation can only restrict Claude’s use on Department of War contract work. It cannot reach commercial API access, claude.ai subscriptions, or enterprise licenses. Anthropic’s legal team is betting the court agrees.

Pentagon Accepted OpenAI’s Identical Safety Terms Hours Later

The business stakes are enormous. Eight of the ten largest US companies currently use Claude. That includes defense contractors, cloud providers, banks, and consulting firms. The $200 million Pentagon contract is not the core concern. The $14 billion enterprise ecosystem is.

Every general counsel at every Fortune 500 firm with Pentagon exposure now faces the same question. Is using Claude worth the legal uncertainty? That question alone slows procurement cycles and complicates renewals.

Anthropic’s expected IPO, reportedly targeting a $380 billion valuation with $30 billion in new capital, now sits on hold. No underwriter will price an offering while the company carries a designation alongside Huawei.

Hours after blacklisting Anthropic, the Pentagon accepted OpenAI’s proposed safety framework. That framework contained the same two red lines: no mass surveillance, no autonomous lethal weapons. Anthropic said no amount of pressure will shift its position on either point.

Tether Freezes $4.2B in USDT Linked to Global Crypto Crime Crackdown

TLDR:

- Tether has frozen $4.2B in USDT since 2021, with most enforcement actions taking place after 2023.

- U.S. authorities linked nearly $61M in frozen USDT to pig-butchering scams and online fraud networks.

- USDT supply now exceeds $180B, making enforcement actions more impactful across global crypto markets.

- Wallet freezing tools now play a central role in tracking and blocking cross-border illicit crypto flows.

Tether has frozen billions of dollars in USDT connected to criminal activity as regulators escalate global crypto enforcement. The action reflects growing cooperation between stablecoin issuers and law enforcement agencies.

Authorities now treat stablecoins as critical targets in fraud and sanctions investigations. The move places token controls at the center of crypto crime prevention.

Tether freezes USDT amid rising global enforcement actions

The stablecoin issuer said it has frozen about $4.2 billion in USDT tied to illicit activity. Most of that amount was frozen after 2023 as investigations intensified.

Data published by Reuters shows that more than $3.5 billion was restricted during the past three years. USDT supply has expanded rapidly during the same period.

The company confirmed it recently helped the U.S. Department of Justice freeze nearly $61 million linked to pig-butchering fraud schemes. These scams rely on long-term social manipulation to steal funds.

Tether also blocked wallets connected to human trafficking and conflict-related activity in Israel and Ukraine. Sanctioned Russian exchange Garantex reported that its USDT balances were frozen last year.

Figures shared by Wu Blockchain show USDT circulation now exceeds $180 billion. That level stands far above the $70 billion recorded three years ago.

The company can remotely freeze tokens held in user wallets upon receipt of formal requests from authorities. This mechanism allows direct intervention without blockchain reorganization.
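The general idea of an issuer-side freeze can be illustrated with a toy ledger. The Python sketch below is a simplified assumption about how such a control could work, not Tether's actual contract code: the class `FreezableLedger`, its method names, and the rule that only the sender is checked are all hypothetical.

```python
class FreezableLedger:
    """Toy in-memory token ledger with an issuer-controlled freeze list."""

    def __init__(self, issuer: str) -> None:
        self.issuer = issuer
        self.balances: dict[str, int] = {}
        self.frozen: set[str] = set()

    def _require_issuer(self, caller: str) -> None:
        if caller != self.issuer:
            raise PermissionError("only the issuer may perform this action")

    def mint(self, caller: str, to: str, amount: int) -> None:
        self._require_issuer(caller)
        self.balances[to] = self.balances.get(to, 0) + amount

    def freeze(self, caller: str, address: str) -> None:
        # Hypothetical trigger: a formal request from authorities.
        self._require_issuer(caller)
        self.frozen.add(address)

    def transfer(self, sender: str, recipient: str, amount: int) -> None:
        # The freeze is enforced at transfer time; past history is untouched.
        if sender in self.frozen:
            raise PermissionError(f"{sender} is frozen")
        if amount <= 0 or self.balances.get(sender, 0) < amount:
            raise ValueError("invalid amount or insufficient balance")
        self.balances[sender] -= amount
        self.balances[recipient] = self.balances.get(recipient, 0) + amount


ledger = FreezableLedger(issuer="issuer")
ledger.mint("issuer", "alice", 100)
ledger.transfer("alice", "bob", 40)   # allowed: alice is not frozen
ledger.freeze("issuer", "alice")
try:
    ledger.transfer("alice", "bob", 10)
except PermissionError as err:
    print("blocked:", err)
print(ledger.balances)
```

In this sketch the frozen balance stays on the ledger but can no longer move, which is why no rewriting of earlier transactions is needed.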

Tether freezes USDT as supply tops $180 billion worldwide

USDT remains the world’s largest dollar-backed stablecoin by market value. Market data confirms the token’s dominance in daily trading volume.

Law enforcement agencies increasingly view stablecoins as key channels for moving illicit funds. Officials now track wallet activity across borders with greater coordination.

Tether said its compliance tools support global investigations into fraud, trafficking, and sanctions violations. The company has expanded wallet monitoring and blacklist functions over time.

Authorities credit the freezing capability with preventing rapid movement of stolen crypto. Funds can be locked before they reach exchanges or conversion services.

The scale of frozen assets shows how deeply stablecoins intersect with financial crime probes. It also signals tighter oversight of centralized issuers within the crypto market.

USDT’s growth continues alongside rising scrutiny from regulators and prosecutors. The stablecoin now operates under closer observation than at any point in its history.

Crypto World

Morgan Stanley Files for Crypto Trust Charter to Custody Bitcoin and Crypto Directly

TLDR:

- Morgan Stanley manages ~$9.3T in assets and filed for a national trust bank charter to custody crypto.

- The charter could allow staking services alongside direct custody for its 18 million clients.

- Morgan Stanley previously described Ripple as a leading SWIFT alternative for international payments.

- Citi is also building crypto infrastructure as institutional adoption accelerates across Wall Street.

Morgan Stanley is making a direct push into digital asset infrastructure. The firm, which manages roughly $9.3 trillion in client assets, has reportedly filed for a national trust bank charter.

The move would allow it to custody Bitcoin and other cryptocurrencies at a bank-grade level. It could also open the door for client staking services.

Morgan Stanley Moves Toward Direct Crypto Custody With Trust Bank Filing

The filing marks a clear step beyond simple crypto access. Most Wall Street firms have previously relied on third-party custodians. This charter would let Morgan Stanley hold digital assets directly on behalf of clients.

That distinction matters. Custody is the foundation of institutional crypto infrastructure. Control over custody means control over client assets and the yield those assets can generate.

The firm serves approximately 18 million clients. Even a modest allocation shift across that base could move significant capital into crypto markets, according to commentary shared by crypto analyst account CryptosRus on X.

Morgan Stanley has followed a visible pattern. Access came first, then custody infrastructure, and now potentially staking yield. The progression mirrors how traditional financial services firms have historically absorbed new asset classes.

XRP and Bitcoin Both Surface as Morgan Stanley Builds Crypto Rails

Morgan Stanley’s prior statements have drawn attention alongside the charter news. The firm previously described Ripple as a leading alternative to SWIFT for international payments, according to @markchadwickx on X.

Internal documentation, as cited in the same post, reportedly noted XRP’s efficiency compared to Bitcoin and its closer alignment with how traditional banks currently operate. Morgan Stanley has not publicly confirmed those specific internal assessments.

Bitcoin remains central to the custody application. The charter, if approved, would position the firm to facilitate client purchases and swaps across multiple digital assets.

The filing comes as Washington edges closer to potential regulatory clarity. The Clarity Act has been referenced in financial circles as a framework that could formalize how institutions handle digital assets.

Other major players are also moving. Citi has been building out its own crypto infrastructure in parallel, adding further weight to the broader institutional trend.
