Crypto World

Oil rally crushes $37 million in crypto shorts as bitcoin drops

Crude oil just had its biggest day in history, and traders who took bearish bets on it over the weekend paid the price.

Tokenized oil perpetual contracts on Hyperliquid recorded nearly $40 million in liquidations over the past 24 hours, per CoinGlass, with $36.9 million of that coming from short positions that got obliterated as crude surged roughly 30% on a dramatic escalation of the Iran conflict.

The CL-USDC contract on Hyperliquid jumped to $114.77, up nearly 20% in 24 hours. The USOIL-USDH pair hit $135, up 9% on the day after already surging earlier in the week.

The oil move dwarfed everything else in commodities. Brent and WTI are trading at levels not seen since Russia’s invasion of Ukraine in 2022, and the single-day percentage gain is on track to be the largest in the history of the oil market.

The catalyst was a weekend that went from bad to catastrophic. Iran appointed Mojtaba Khamenei as new supreme leader, replacing his father who was killed in the opening wave of strikes. Israel launched a fresh round of attacks on Iranian and Hezbollah infrastructure.

Iranian missiles and drones expanded beyond Israel to hit Saudi Arabia and Bahrain, killing two people near Riyadh and targeting energy infrastructure. Iraq’s oil output dropped roughly 60%. Kuwait and the UAE trimmed production as tanker traffic through the Strait of Hormuz collapsed.

Anyone shorting oil into that backdrop got carried out. The $36.9 million in short liquidations on the CL contract alone made oil one of the largest single-asset liquidation events on Hyperliquid outside of bitcoin and ether on Sunday.
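
As a rough illustration of why a move of that size clears out leveraged shorts, the sketch below estimates the price at which an isolated-margin short would be liquidated. It assumes no fees or funding payments, and the function names, the 5x leverage and the maintenance-margin parameter are hypothetical. It is not Hyperliquid's actual liquidation formula.

```python
def short_liquidation_price(entry: float, leverage: float,
                            maintenance_margin: float = 0.0) -> float:
    """Estimate where an isolated-margin short gets liquidated.

    Per unit shorted, the trader posts entry / leverage as margin and loses
    (price - entry) as the market rises. Liquidation is assumed to happen
    when the remaining equity equals maintenance_margin * price.
    """
    if leverage <= 0:
        raise ValueError("leverage must be positive")
    return entry * (1 + 1 / leverage) / (1 + maintenance_margin)


def adverse_move_to_liquidation(leverage: float,
                                maintenance_margin: float = 0.0) -> float:
    """Fractional price rise that liquidates the short (0.20 means +20%)."""
    return short_liquidation_price(1.0, leverage, maintenance_margin) - 1.0
```

Under these assumptions, a 5x short with no maintenance buffer is liquidated by a 20% rise, about the size of the CL-USDC move, and higher leverage fails sooner.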

Across the broader crypto market, CoinGlass data shows 94,058 traders were liquidated in the past 24 hours with total losses hitting $364.4 million. Bitcoin accounted for $156.67 million of that, ether contributed $70.88 million, and solana added $19.8 million.

Long liquidations outpaced shorts at $215 million versus $149 million, reflecting the broader sell-off in crypto as risk assets dropped on the escalation. The largest single liquidation was a $6.88 million BTC-USD position on Hyperliquid.

Traders are increasingly using crypto perpetual markets to express macro views on oil, metals, and currencies, drawn by 24/7 access, lower margin requirements, and the ability to trade during weekends when traditional commodity markets are closed.

When missiles start flying on a Saturday, Hyperliquid’s oil contract is one of the only places in the world where you can get leveraged crude exposure.

Open interest on the CL-USDC contract sat at $195 million with $570 million in 24-hour volume, numbers that would have been unthinkable for a tokenized commodity product a year ago. The USOIL pair carried $4.1 million in open interest with $16.2 million in volume, smaller but growing.


XRP slips behind BNB as seven-month slide deepens

XRP fell to fifth in crypto market cap rankings as BNB moved ahead, with weekly ETF outflows and a seven-month slide weighing on the price.


Bitcoin bottom or bull trap? Whales and bears disagree

Bitcoin is showing mixed signals as on-chain data points in two different directions.

Summary

- CryptoQuant said record Bitcoin inflows to accumulation addresses showed whales were quietly building positions daily.

- XWIN Research Japan said STH-SOPR at or below 1 showed short-term holders were selling at a loss.

- Negative Coinbase Premium showed weak US demand, keeping confirmation of a Bitcoin bottom out of reach.

CryptoQuant analyst CW8900 said Bitcoin inflows to accumulation addresses are setting new records each day. The analyst said a large amount of BTC is moving into these wallets even as the market trades in a sideways range.

According to CW8900, whale activity appears to be keeping price action stable while accumulation continues. The analyst said large holders are maintaining prices and adding Bitcoin instead of selling into the market and creating panic among smaller investors.

CW8900 also said retail participation has thinned out. The analyst wrote that most retail investors have already left the market, leaving only a small group of participants while whales continue to build positions.

That reading supports the view that large players are buying quietly during a period of weak volatility. CW8900 said the trend increases the chance of an upward move if buying pressure continues without major disruption to price.

XWIN Research Japan presented a different view and said the market bottom is “not confirmed.” The firm pointed to the Short-Term Holder SOPR, or STH-SOPR, which tracks whether short-term holders are selling Bitcoin at a profit or a loss.

The report said the indicator is hovering around or below 1. A reading below 1 means short-term holders are, on average, selling at a loss, a pattern often seen when weaker hands exit during a correction.
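
For readers unfamiliar with the metric, SOPR is generally defined as the value of coins when they are spent divided by their value when they were acquired. The sketch below is a minimal illustration with made-up transactions. The class and field names are hypothetical, and the 155-day cutoff for "short-term" is a commonly used convention treated here as an assumption, not something stated in the report.

```python
from dataclasses import dataclass


@dataclass
class SpentOutput:
    amount_btc: float         # coins moved
    price_at_creation: float  # USD price when the coins were acquired
    price_at_spend: float     # USD price when the coins were spent
    age_days: int             # holding period before the spend


def sopr(outputs: list[SpentOutput]) -> float:
    """Realized value divided by cost basis; below 1 means selling at a loss."""
    realized = sum(o.amount_btc * o.price_at_spend for o in outputs)
    cost = sum(o.amount_btc * o.price_at_creation for o in outputs)
    if cost == 0:
        raise ValueError("no cost basis to compare against")
    return realized / cost


def sth_sopr(outputs: list[SpentOutput], max_age_days: int = 155) -> float:
    """SOPR restricted to coins held for fewer than max_age_days (assumed cutoff)."""
    return sopr([o for o in outputs if o.age_days < max_age_days])


# Hypothetical spends, not real on-chain data.
sample = [
    SpentOutput(1.0, 70_000.0, 67_000.0, age_days=40),   # sold at a loss
    SpentOutput(2.0, 66_000.0, 67_000.0, age_days=20),   # sold at a small profit
    SpentOutput(5.0, 30_000.0, 67_000.0, age_days=900),  # long-term holder, excluded
]
sample_sth_sopr = sth_sopr(sample)
```

In this made-up sample the short-term reading comes out just under 1, the "hovering around or below 1" zone the report describes.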

XWIN Research Japan said this can appear in the early stage of bottom formation. Still, the firm said that selling pressure alone does not confirm a reversal unless buyers step in with clear demand.

That is why the firm also focused on the Coinbase Premium Gap. The metric tracks the price difference between Coinbase and other exchanges and is often used as a signal for US spot demand.
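
The calculation behind the metric is a simple subtraction. The sketch below is illustrative only: the function names and sample prices are hypothetical, and the choice of reference exchange is an assumption, since the report refers only to "other exchanges."

```python
def premium_gap(coinbase_price: float, reference_price: float) -> float:
    """Dollar gap between Coinbase and a reference exchange.

    A negative value means Bitcoin trades cheaper on Coinbase, which is
    commonly read as weaker US spot demand.
    """
    return coinbase_price - reference_price


def premium_percent(coinbase_price: float, reference_price: float) -> float:
    """The same gap expressed as a percentage of the reference price."""
    if reference_price <= 0:
        raise ValueError("reference price must be positive")
    return premium_gap(coinbase_price, reference_price) / reference_price * 100
```

With hypothetical quotes of $66,780 on Coinbase and $66,800 elsewhere, the gap is negative, matching the kind of reading XWIN Research Japan describes.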

Weak Coinbase premium keeps bottom debate open

XWIN Research Japan said the Coinbase Premium remains in negative territory. The firm said that reading shows US investors are not buying Bitcoin aggressively at current levels.

The report added that earlier bull phases often featured a steady positive premium, which helped support stronger upside momentum. That condition has not returned in the current market, according to the firm.

Taken together, the two views show a divided setup for Bitcoin. Whale wallets appear to be absorbing supply, but weak US demand is keeping the “bottom not confirmed” argument in place for now.

Disclosure: This article does not represent investment advice. The content and materials featured on this page are for educational purposes only.


Bitcoin Holds Near $67K This Easter as Market Enters Post-Peak Cooling Phase

TLDR:

- Bitcoin’s Easter prices rose from $5 in 2012 to $84.5K in 2025, with setbacks along the way, before easing in 2026.

- Historical data reveals repeated cycles of growth followed by corrections across different market phases.

- The 2026 Easter price near $67K reflects a cooldown after the late 2025 peak above $120K.

- Current price action remains range-bound, signaling consolidation as the market searches for direction.

Bitcoin traded near $67,000 during Easter 2026, marking a pause after a sharp correction from late 2025 highs. Historical Easter data shows how the asset has evolved through cycles, reflecting both rapid growth phases and periods of consolidation.

Bitcoin’s Easter Prices Trace Its Evolution Across Market Cycles

Data shared by Watcher.Guru outlines Bitcoin’s Easter price history from 2012 to 2026. The asset traded at $5 in 2012 and climbed steadily over the years. By 2017, it had reached $1,195, reflecting early adoption and rising demand.

The trend continued into later cycles, with Easter 2021 recording $58,000 during a strong rally. However, prices have not moved in a straight line.

For instance, Easter 2018 saw $6,850, followed by a drop to $5,325 in 2019. This pattern shows alternating growth and correction phases.

Moving forward, Bitcoin reached $70,000 in Easter 2024 and $84,500 in 2025. These levels followed a strong upward phase that pushed the asset above $120,000 later in 2025. Yet, Easter 2026 shows a pullback to $67,000, reflecting a cooling period after the peak.

This historical data presents a clear pattern of expansion followed by retracement. Each cycle builds on the previous one, although corrections remain part of the trend. As a result, Easter prices provide a snapshot of Bitcoin’s position within broader market cycles.

2026 Easter Reflects Consolidation After Sharp Decline

Price action places Bitcoin near $66,795 as of writing, holding within a narrow range. The market has formed lower highs and lower lows since the late 2025 peak. This structure confirms a bearish trend despite the recent stabilization.

At the same time, the price is moving sideways between $65,000 and $75,000. This range indicates a balance between buyers and sellers after the earlier sell-off. Such periods often appear after strong declines, as the market searches for direction.

Technical indicators support this view of stabilization. The Relative Strength Index remains below 50, showing limited bullish strength. However, it has recovered from oversold levels, suggesting that selling pressure has slowed.
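
For context on the reading, the Relative Strength Index compares average gains with average losses over a lookback window. The sketch below uses Wilder's smoothing and a 14-period window, both assumed here because the article does not say which settings or timeframe were used.

```python
def rsi(closes: list[float], period: int = 14) -> float:
    """Relative Strength Index of the latest close, using Wilder's smoothing."""
    if len(closes) < period + 1:
        raise ValueError("need at least period + 1 closes")
    deltas = [b - a for a, b in zip(closes, closes[1:])]
    gains = [max(d, 0.0) for d in deltas]
    losses = [max(-d, 0.0) for d in deltas]

    # Seed with simple averages over the first window.
    avg_gain = sum(gains[:period]) / period
    avg_loss = sum(losses[:period]) / period

    # Wilder's smoothing for every later bar.
    for g, l in zip(gains[period:], losses[period:]):
        avg_gain = (avg_gain * (period - 1) + g) / period
        avg_loss = (avg_loss * (period - 1) + l) / period

    if avg_gain == 0 and avg_loss == 0:
        return 50.0   # flat prices: treated as neutral by convention here
    if avg_loss == 0:
        return 100.0  # only gains in the window
    rs = avg_gain / avg_loss
    return 100 - 100 / (1 + rs)
```

A value below 50 means average losses still outweigh average gains, which is why the article treats it as limited bullish strength. Readings under 30 are conventionally labeled oversold.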

Similarly, the MACD shows weak momentum, with a slight negative reading and limited follow-through. Although a crossover attempt occurred, it has not developed into a clear trend. This aligns with the ongoing consolidation phase.
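
The MACD is the gap between a fast and a slow exponential moving average, compared against its own smoothed signal line. The sketch below assumes the conventional 12/26/9 settings and seeds each average with the first value. The article does not specify either choice.

```python
def ema(values: list[float], span: int) -> list[float]:
    """Exponential moving average, seeded with the first value."""
    if not values:
        raise ValueError("values must not be empty")
    alpha = 2 / (span + 1)
    out = [values[0]]
    for v in values[1:]:
        out.append(alpha * v + (1 - alpha) * out[-1])
    return out


def macd(closes: list[float], fast: int = 12, slow: int = 26, signal: int = 9):
    """Return (macd_line, signal_line, histogram) as equal-length lists."""
    fast_ema = ema(closes, fast)
    slow_ema = ema(closes, slow)
    macd_line = [f - s for f, s in zip(fast_ema, slow_ema)]
    signal_line = ema(macd_line, signal)
    histogram = [m - s for m, s in zip(macd_line, signal_line)]
    return macd_line, signal_line, histogram
```

A "slight negative reading" corresponds to the last value of the MACD line sitting just below zero, and a crossover attempt is the histogram changing sign without a lasting move afterwards.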

Key levels remain unchanged during this period. Support holds near $65,000, while $60,000 stands as a deeper floor. On the upside, resistance near $75,000 continues to limit upward movement, with $90,000 acting as a higher barrier.

Looking at the broader cycle, Bitcoin has moved through accumulation, markup, distribution, and markdown phases. The current period appears to be a pause following the markdown phase of early 2026. Price behavior within this range will determine the next stage.

Bitcoin’s Easter history continues to reflect its cyclical nature. While long-term growth remains visible, short-term movements show that corrections remain part of the market structure.


Ethereum Tests Key Range Support as Monthly Structure Signals Critical Turning Point

TLDR:

- Ethereum is nearing a multi-year support zone, where demand has historically driven strong price reversals.

- Monthly chart structure shows ETH moving within a defined range between $1,500 and $5,000 levels.

- Tightening volatility suggests a breakout may occur soon as price compresses near key support.

- Traders monitor for bullish confirmation signals before positioning within the current range setup.

Ethereum is approaching a critical support range on higher timeframes, as recent market structure points to a prolonged consolidation phase.

Analysts are closely watching price behavior near key levels, where risk-to-reward setups tend to favor strategic positioning within established boundaries.

Ethereum Tests Range Extremes on Higher Timeframes

Recent analysis shared by market participant Lennaert Snyder points to Ethereum revisiting a key monthly support zone.

His observations focus on a “sell-to-buy” candle that initiated the move toward the all-time high. That area now acts as a technical reference for long-term traders.

According to Snyder’s post, price is testing the lower boundary of a multi-year range. This zone aligns with previous demand and remains a focal point for potential accumulation.

The presence of a long wick in that candle suggests liquidity remains in that region. Markets often revisit such wicks before establishing a directional move.

The broader monthly structure presents a clear cycle. Ethereum surged during 2020 and 2021, followed by a sharp decline in 2022.

Since then, price has moved sideways, forming a wide horizontal range. This structure indicates a market without a strong directional trend.

The range is defined by resistance near $4,800 to $5,000 and support between $1,500 and $1,700. These levels have repeatedly acted as turning points. Buyers tend to step in near the lower boundary, while sellers dominate near the upper limit.

Snyder’s commentary suggests that testing this lower range extreme could offer favorable setups. Traders often seek entries in such zones due to tighter risk control. However, confirmation through price action remains essential before any directional bias is established.

Consolidation Phase Signals Potential Expansion

On lower timeframes, Ethereum reflects a similar pattern of compression. After a sharp decline earlier this year, price stabilized and moved within a narrower range. This aligns with the broader monthly structure, reinforcing the idea of consolidation.

Technical indicators show reduced volatility, as Bollinger Bands have tightened. This typically precedes larger price movements, although direction remains uncertain. At the same time, momentum indicators point to weakening bullish pressure in the short term.
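
Tightening can be measured as the width of the bands relative to their midline. The sketch below assumes the conventional 20-period window and two standard deviations, since the analysis does not state its settings, and the function names are illustrative.

```python
from statistics import mean, pstdev


def bollinger_bands(closes: list[float], window: int = 20, k: float = 2.0):
    """Return (lower, middle, upper) bands for the most recent window."""
    if len(closes) < window:
        raise ValueError("not enough closes for the window")
    recent = closes[-window:]
    middle = mean(recent)
    spread = k * pstdev(recent)
    return middle - spread, middle, middle + spread


def bandwidth(closes: list[float], window: int = 20, k: float = 2.0) -> float:
    """Band width as a fraction of the midline; smaller means tighter bands."""
    lower, middle, upper = bollinger_bands(closes, window, k)
    return (upper - lower) / middle
```

A falling bandwidth over successive bars is the compression described here. It says nothing about which way price eventually breaks.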

Price currently trades near the middle to lower portion of its recent range. Resistance remains firm around $2,200 to $2,300, while support sits near $1,900. These levels act as immediate barriers within the broader structure.

The projected path shared in the analysis suggests a possible dip into deeper support. This move could sweep liquidity before a potential reversal. Such behavior is common in range-bound markets, where stop levels attract price action.

Two scenarios remain in focus. If Ethereum holds the lower support zone, a gradual move toward mid-range levels near $3,000 could follow. Continued strength may then push price toward the upper boundary of the range.

On the other hand, a breakdown below $1,500 on a monthly close would shift the structure. This would indicate a loss of support and open the door for further downside. Market participants continue to monitor these levels closely as price approaches a decision point.

As Ethereum trades near range extremes, attention remains on confirmation signals. The coming months are expected to provide clearer direction within this established structure.


AI is breaking crypto security by making hacks cheaper and easier, Ledger CTO warns

Crypto platforms — and investors — have long suffered from hacker attacks and exploits. Now, artificial intelligence (AI) is making that threat even worse.

That’s the view of Charles Guillemet, chief technology officer at crypto wallet provider Ledger, who said the economics of cybersecurity are breaking down as AI tools make it faster and cheaper to attack systems.

“Finding vulnerabilities and exploiting them becomes really, really easy,” Guillemet told CoinDesk in an interview. “The cost is going down to zero.”

His remarks come as crypto heists are in the headlines again. Just this week, Solana-based decentralized finance protocol Drift was exploited, with attackers draining $285 million worth of digital assets. It is one of the most severe exploits of the year so far. A week before that, an attack on yield protocol Resolv led to $25 million in losses.

Altogether, over $1.4 billion in assets were stolen or lost in crypto attacks over the past year, according to DefiLlama data.

From asymmetry to arms race

Security has long relied on an imbalance: the cost of hacking a system should exceed the potential reward.

But AI is eroding that advantage. Tasks that once took skilled researchers months, like reverse engineering software or chaining exploits, can now be done in seconds with the right prompts.

For crypto, where code often controls large pools of funds, that shift raises the stakes.

“You need to be perfect,” Guillemet warned teams developing blockchain protocols.

The problem is compounded by AI-generated code. As more developers rely on AI tools, vulnerabilities could spread faster.

“There is no ‘make it secure’ button,” he said. “We are going to produce a lot of code that will be insecure by design.”

Raising the security bar

For crypto protocols, that means rethinking security from the ground up.

Guillemet pointed to formal verification — using mathematical proofs to validate code — as a stronger approach than traditional audits, which may miss bugs.

Hardware-based security is another layer, he said. Devices like hardware wallets isolate private keys from internet-connected systems, reducing exposure.

“When you have a dedicated device not exposed to the internet, it is more secure by design,” he said.

That approach is becoming more relevant as malware grows more advanced. Guillemet described attacks that scan compromised phones for wallet seed phrases, allowing hackers to drain funds without user interaction.

For average crypto users, Guillemet’s message is blunt: assume systems can and will fail.

“You can’t trust most of the systems that you use,” Guillemet said.

That could push more users toward cold storage, stronger operational security and keeping sensitive data offline. Even then, risks extend beyond software, including physical attacks targeting crypto holders.

Guillemet expects a divide ahead. Critical systems like wallets and protocols will invest heavily in security and adapt. But much of the broader software ecosystem may struggle to keep up.

“It’s really easier to hack everything,” he said.


Cayman Islands Tops U.S. Treasury Holdings as Fed Exposes $1.4 Trillion Data Gap

TLDR:

- The Cayman Islands officially holds $427 billion in U.S. Treasuries, but Fed research puts the true figure far higher.

- Fed researchers identified a $1.4 trillion undercount, making the Cayman Islands the largest foreign Treasury holder.

- Hedge funds domiciled in the Cayman Islands absorbed 37% of all net Treasury issuance between 2022 and 2024.

- Unlike central banks, hedge funds can exit Treasury positions rapidly, posing a risk to U.S. debt market stability.

The Cayman Islands, a Caribbean territory with just 90,000 residents, holds more U.S. Treasuries than Japan or China.

Federal Reserve researchers have found that official data undercounts the territory’s actual holdings by $1.4 trillion. This discovery reshapes long-held assumptions about who finances American debt.

For decades, analysts pointed to Asian economic giants as the backbone of Treasury demand. The real picture, however, tells a different story entirely.

Hedge Funds Drive Cayman Islands’ Treasury Holdings Beyond Official Figures

Official records place Cayman Islands holdings at $427 billion, ranking it sixth among foreign holders. Japan leads on paper at $1.22 trillion, followed closely by China.

However, Fed researchers determined the official count misses over $1.4 trillion in actual Cayman-linked purchases.

The reason behind this gap is structural. The Cayman Islands serves as the legal domicile for roughly three-quarters of the world’s offshore hedge funds.

When those funds buy Treasuries, the purchases register under the Cayman Islands, regardless of where the fund managers actually operate.

Between 2022 and 2024, hedge funds domiciled there purchased $1.2 trillion in Treasury securities. Those purchases absorbed 37% of all net issuance during that period. As @BullTheoryio noted, that is nearly equal to what all other foreign investors combined purchased.

After the Fed’s adjustment, the Cayman Islands surpasses Japan, China, and the United Kingdom combined. This makes the small Caribbean territory the single largest foreign financier of U.S. government debt today.

Treasury Market Stability Faces Questions as Hedge Fund Exposure Grows

Central banks and sovereign wealth funds tend to hold Treasuries as long-term reserve assets. They rarely exit positions abruptly, even during periods of market stress. Hedge funds operate under an entirely different framework.

These funds carry leveraged positions and answer to performance mandates, not policy goals. They have no obligation to remain invested when market conditions shift against them. That difference matters greatly when the largest buyer controls such a large share of demand.

In April 2025, a sudden tariff announcement triggered simultaneous unwinding across multiple funds. That coordinated exit added pressure across the entire Treasury market at once. The event exposed just how quickly this pool of demand can reverse.

The Fed’s own paper concluded with a direct warning to analysts and policymakers. Researchers wrote that “data users should be aware that this major gap exists.” That single line carries weight given the scale of the miscounting involved.

The Cayman Islands’ GDP stands at $7 billion, yet funds registered there finance positions worth many times that figure overnight.

The concentration of leveraged, short-term capital in one jurisdiction now sits at the center of U.S. debt market dynamics.


Bitcoin Whales Are Losing $200 Million Daily As Market Fear Rises

Large Bitcoin investors are absorbing significant realized losses as the flagship cryptocurrency remains trapped in a prolonged sideways slump below $70,000.

According to on-chain data from Glassnode, wallets holding between 100 and 10,000 BTC are currently realizing daily losses of over $200 million based on a 7-day moving average. These large investors are often referred to as “whales” and “sharks.”

Bitcoin Slump Forces Major Holders Into Deep Losses

Notably, this pain is particularly acute among “Long-Term Holders,” investors who acquired their coins more than six months ago, near the peak of the previous rally.

The 30-day simple moving average of Long-Term Holder Realized Losses has climbed steadily since November 2025. This upward trend confirms that veteran investors are increasingly capitulating and selling at a loss.

While this flush-out of underwater buyers is a standard feature of bear-market resolutions, Glassnode analysts note it is not yet sufficient to call a bottom.

To signal the structural exhaustion that typically precedes a new bull cycle, selling pressure will likely need to decelerate to below $25 million in daily realized losses.

However, the chances of reaching that exhaustion point quickly seem slim, as the market is currently gripped by its most bearish sentiment in months.

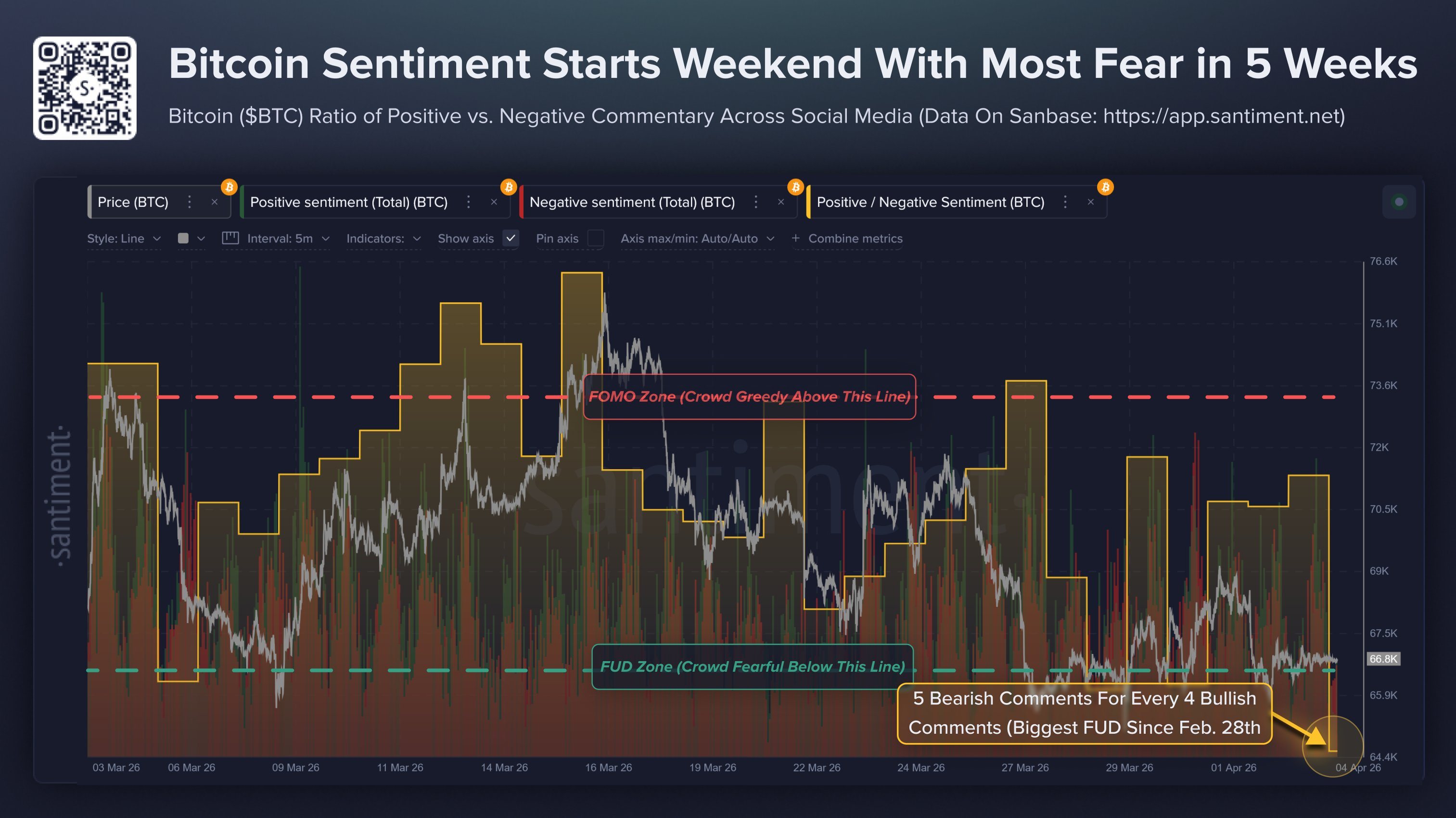

Blockchain analytics firm Santiment reports that fear, uncertainty, and doubt (FUD) have crept back into the community.

Citing data across social media platforms, including X, Reddit, and Telegram, Santiment noted that Bitcoin is seeing its highest bearish discussion ratio since late February.

The firm noted that BTC is showing a ratio of just 0.81 bullish comments per bearish one amid this extended period of stagnation.
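
To put that ratio in more familiar terms, it can be converted into the share of directional comments that are bullish. This is simple arithmetic on the figure Santiment reported, and the function name is illustrative.

```python
def bullish_share(bull_to_bear_ratio: float) -> float:
    """Fraction of bullish comments among all bullish and bearish comments."""
    if bull_to_bear_ratio < 0:
        raise ValueError("ratio cannot be negative")
    return bull_to_bear_ratio / (1 + bull_to_bear_ratio)


share_at_reported_ratio = bullish_share(0.81)
```

A ratio of 0.81 works out to a bullish share a little under 45%, so bearish comments hold a modest majority.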

With Bitcoin’s price hovering around $66,800, ongoing geopolitical tensions and domestic regulatory debates are fueling widespread pessimism.

Yet, Santiment pointed out that there is a silver lining for contrarian traders as markets typically move in the opposite direction of the crowd’s expectations.

Historically, this heightened fear has fueled price rebounds. This suggests the current market conditions could turn positive sooner than the broader community anticipates.

The post Bitcoin Whales Are Losing $200 Million Daily As Market Fear Rises appeared first on BeInCrypto.


Robert Kiyosaki issues new warning on Bitcoin and retirement

Robert Kiyosaki said current economic pressure reflects changes that began in the 1970s.

Summary

- Kiyosaki said 1974 policy shifts still shape debt, inflation, retirement pressure, and demand for Bitcoin.

- He warned baby boomers may face retirement income gaps as pensions gave way to market-based accounts.

- Santiment data showed Bitcoin bearish sentiment rose, while contrarian traders watched fear levels for reversal signs.

Robert Kiyosaki said 1974 marked a major shift in how money and retirement worked in the United States. In a post on X, he wrote that “the future created in 1974 has arrived” and tied today’s financial stress to policy changes from that period.

He connected that year to the petrodollar system and to changes in retirement planning. Kiyosaki said those changes helped shape the debt and inflation concerns now facing households and investors.

Kiyosaki also referred to the Employee Retirement Income Security Act and the wider move away from pension structures that paid workers for life. He said many workers now depend on market-based retirement accounts instead of guaranteed income after leaving work.

He warned that this shift placed more responsibility on individuals. In the same post, he wrote that “millions of baby-boomers will soon find out they have no income once they stop working,” linking that concern to long-term pressure on retirement security.

In addition, Kiyosaki repeated his long-running support for gold, silver, and Bitcoin. He described those assets as “real money” and said people should focus on financial education while looking at alternative stores of value.

His latest remarks follow similar warnings from recent months. Last month, he said a major financial “bubble burst” could send capital into scarce assets and push Bitcoin much higher. He also said Bitcoin could reach $750,000 within a year after such a crash.

Bitcoin sentiment turns more negative

At press time, Bitcoin traded near $66,826. Kiyosaki’s latest comments arrived as market sentiment around the asset weakened. Data from Santiment showed bearish discussion on social platforms rose to its highest level since late February.

The platform said the bullish-to-bearish comment ratio fell to 0.81, showing weaker confidence among traders. Santiment also said that extreme fear can sometimes act as a contrarian signal, with markets often moving against the crowd when negative sentiment grows too strong.


How Japan’s Surging Government Bond Yields Are Triggering a Global Liquidity Drain on Bitcoin

TLDR:

- Japanese institutions hold ¥390 trillion in JGBs, and a 1-percentage-point yield rise could trigger tens of trillions of yen in unrealized losses.

- Japanese institutions are liquidating foreign risk assets, pulling global liquidity as capital returns home.

- Early 2026 saw $9.6 billion exit Bitcoin, with capital rotating into stablecoins amid rising rate pressure.

- Stablecoin supply near all-time highs signals sidelined capital that has yet to re-enter risk markets.

Rising Japanese government bond yields are quietly reshaping the global liquidity landscape in 2026. As yields climb, Japan’s largest domestic institutions face mounting pressure on their balance sheets.

This pressure triggers a chain of asset liquidations and capital repatriation that extends far beyond Japan’s borders.

Bitcoin, as a globally sensitive risk asset, is absorbing the consequences of this contraction. Understanding this dynamic is now essential for anyone tracking crypto market behavior.

How Rising JGB Yields Are Draining Global Liquidity

Japanese government bond yields have been rising steadily due to several converging macro forces. Policy normalization expectations from the Bank of Japan are a primary factor.

Persistent inflation and mounting fiscal expansion concerns are adding further upward pressure. Together, these forces are pulling bond prices lower across the curve.

Japan’s domestic institutions hold approximately ¥390 trillion in government bonds. Even a 1-percentage-point rise in yields can produce tens of trillions of yen in unrealized losses.
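
The scale of that estimate can be checked with the standard first-order duration approximation, in which the price change is roughly the holding size times modified duration times the change in yield. The sketch below uses the ¥390 trillion figure from the article. The durations of 5 and 8 years are hypothetical inputs chosen for illustration, because the article does not give the portfolio's duration, and the approximation ignores convexity.

```python
def unrealized_loss(holdings: float, modified_duration: float,
                    yield_rise: float) -> float:
    """First-order mark-to-market loss, in the same units as holdings.

    yield_rise is a decimal, so 0.01 means one percentage point.
    """
    return holdings * modified_duration * yield_rise


HOLDINGS_TRILLION_YEN = 390.0  # figure cited in the article

# Hypothetical portfolio durations, in years.
loss_short_duration = unrealized_loss(HOLDINGS_TRILLION_YEN, 5.0, 0.01)
loss_long_duration = unrealized_loss(HOLDINGS_TRILLION_YEN, 8.0, 0.01)
```

Under those assumed durations the loss lands at roughly ¥20 trillion to ¥31 trillion, consistent with the "tens of trillions" description.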

Banks, insurers, and pension funds carry the heaviest exposure among domestic holders. These institutions are now being forced into difficult balance sheet decisions.

To manage growing losses, many institutions are liquidating risk assets abroad. Capital is being repatriated back to Japan at an accelerating pace.

Japan ranks among the world’s largest external investors, so these moves carry global weight. Each wave of repatriation effectively removes liquidity from international financial markets.

Data is already confirming this trend. Yen-denominated external credit has declined noticeably in recent months, reflecting the active withdrawal of Japanese capital from global markets. These numbers show liquidity contracting, and Bitcoin is not immune to it.

Bitcoin Absorbs the Pressure as Deployed Liquidity Shrinks

Bitcoin’s sensitivity to global liquidity conditions makes it particularly vulnerable during this period. Historically, low-rate environments provided the fuel for Bitcoin’s price expansion cycles.

Rising rates reduce leverage across markets and suppress new demand from institutional participants. Japan’s climbing yields are directly contributing to this tightening dynamic.

Early 2026 data recorded approximately $9.6 billion flowing out of Bitcoin. Much of this capital rotated into stablecoins rather than leaving crypto markets entirely.

This rotation points to investors reducing risk exposure while staying positioned for re-entry. Higher rates appear to be the primary force behind this cautious capital movement.

Stablecoin supply data adds another layer to this picture. The “All Stablecoins (ERC20): Total Supply” chart has returned to near all-time highs.

This level shows that substantial capital remains parked and waiting on the sidelines. Yet this liquidity is not actively entering risk markets, reflecting a “liquidity exists but is not deployed” condition.

Analysts now argue that Bitcoin can no longer be tracked through on-chain metrics alone. Rates, foreign exchange movements, and global credit flows must be part of the analysis framework.

Japan’s rising JGB yields have become a central variable in understanding Bitcoin’s macro environment. Liquidity contraction originating in Tokyo is now a force felt across global crypto markets.


Crypto Faces Existential Token Glut as Supply Outpaces Value Growth

The crypto industry is confronting a paradox: an explosion in the number of tokens, paired with stagnating overall value. Industry observers say the surge in supply is outpacing the demand and usefulness of the assets, raising what one founder calls an existential challenge for the sector.

In a stream of posts on X, Michael Ippolito, co-founder of Blockworks, highlighted a stark divergence between the proliferation of tokens and the value they generate. “The average coin is only slightly higher than where it was in 2020 and down about 50% since 2021,” he wrote, underscoring how a larger token universe has not translated into commensurate gains for holders. He also noted that median token returns have fallen sharply, with most assets down roughly 80% from their peaks, suggesting gains have become concentrated in a narrow group of large-cap tokens while the broader market lags.

Ippolito argues the root cause is supply: a rapid expansion in token issuance has minted a vast number of assets even as total market capitalization remains mostly flat. “We created a ton of new assets and still total market cap is flat,” he said, warning that value dilution across a growing token pool undermines the industry’s fundamentals.

Key takeaways

- Token inflation is outpacing value generation, diluting investor returns as the number of assets multiplies against a relatively flat market cap.

- Prices and on-chain fundamentals have diverged since 2021, with on-chain revenue lifting only modestly while token prices fail to follow.

- Public commentary from prominent investors echoes concern that token issuance dynamics threaten broader ecosystem credibility and long-term relevance.

- Capital allocation appears to be shifting away from newly issued tokens toward publicly listed crypto firms, with the majority of token launches trading below their generation event prices.

Token prices break from fundamentals

Beyond the expansion of assets, observers note a weakening link between on-chain activity and market prices. In 2021, token valuations tended to track protocol revenues and usage. More recently, even as some networks have reported renewed revenue generation, prices have not mirrored that momentum. This decoupling, according to Ippolito, signals waning investor confidence in tokens as reliable vehicles for capturing value.

Arthur Cheong, founder and CEO of DeFiance Capital, echoed the sentiment, urging the industry to address the token conundrum. In a post on X, Cheong argued that if the market remains concentrated around a small handful of assets like Bitcoin and Ether, the broader ecosystem risks losing relevance. The sense of urgency around realigning token economics with price remains a recurring theme among influential investors.

Capital shifts from tokens to stocks

New research adds a practical dimension to the conversation: capital is rotating away from fresh token launches and toward publicly listed crypto companies. A February report from DWF Labs found that over 80% of token projects traded below their token generation event (TGE) price, with typical losses ranging from 50% to 70% within roughly three months. The study details a pattern where peaks occur within the first month after launch, followed by sustained selling pressure and overhang from airdrops and early investor unlocks that depress subsequent price action.

Andrei Grachev of DWF Labs framed the finding as structural rather than cyclical, suggesting that the dynamics of token issuance, especially post-launch unlocks, continue to weigh on prices even for projects with active products or protocols.

Broader implications for the market

Taken together, the observations point to a market that must reconcile a rapidly expanding asset universe with a comparatively stable or shrinking value base. If the industry cannot restore alignment between token fundamentals and price, the appeal of tokens as value-bearing instruments could wane, risking broader adoption and investment interest. The conversation is reframing token issuance practices, with voices in the ecosystem calling for tighter economics, improved utility, and more disciplined distribution models to prevent perpetual dilution.

As the debate unfolds, market participants will be watching several key developments: whether new tokens adopt more conservative supply schedules or unique value accrual mechanisms, how regulators and auditors respond to proliferation and complex unlock patterns, and whether investors increasingly favor tokenized representations tied to real-world use cases or established crypto firms over speculative launches.

For readers seeking direction, the coming quarters will reveal whether the industry can re-anchor token prices to tangible fundamentals or whether concentration in a few dominant assets will persist, leaving many projects competing for marginal gains in a crowded field.

Watch next for how token issuers adapt to this critique, whether capital rotates further toward publicly listed crypto equities or continues to seek merit across the broader asset class, and what, if any, policy or market-driven reforms emerge to restore alignment between innovation and value.
