Crypto World

US military used Anthropic for Iran strike despite Trump’s ban: WSJ

The US military reportedly relied on Anthropic’s Claude AI during a major air strike in Iran, a development that surfaced just hours after President Donald Trump ordered federal agencies to halt use of the model. Commands in the region, including CENTCOM, reportedly used Claude to support intelligence analysis, target vetting, and battlefield simulations. The episode shows how deeply AI tooling has been woven into defense operations even as policymakers push to cut ties with certain vendors, and it underscores a tension between executive directives and on-the-ground automation that could influence procurement and risk management across defense programs.

Key takeaways

- Anthropic’s Pentagon arrangement included partnerships with Palantir and Amazon Web Services to enable classified workflows for Claude.

- Trump administration instructed agencies to stop working with Anthropic and directed the Defense Department to treat the company as a potential security risk after contract talks broke down over unrestricted military use.

Sentiment: Neutral

Market context: The episode sits at the intersection of defense procurement, AI ethics, and national-security risk management as agencies reassess vendor dependencies and the classification of AI tools for sensitive operations.

Why it matters

The incident offers a rare glimpse into how commercial AI models are integrated into high-stakes military workflows. Claude, originally designed for broad cognitive tasks, reportedly supported intelligence analysis and the modeling of battlefield scenarios, suggesting a level of operational trust that extends beyond lab environments into real-world missions. This raises important questions about the reliability, auditing, and controllability of AI in combat planning, especially when government policy signals shift rapidly around vendor usage.

At the policy level, the friction between a contracting relationship and a presidential directive highlights a broader debate about how AI vendors should be treated in secure environments. Anthropic’s refusal to grant unrestricted military use aligns with its stated ethical boundaries, signaling that private-sector providers may increasingly push back against configurations they deem ethically problematic. The Pentagon’s response—turning to alternative suppliers for classified workloads—illustrates how defense departments may diversify AI ecosystems to reduce risk exposure, while maintaining capability in sensitive operations.

The tension also touches on the competitive dynamics of the AI-as-a-service market. With OpenAI reportedly stepping in to provide models for classified networks, the sector is likely to witness continued experimentation and renegotiation of terms around security classifications, data governance, and supply-chain risk. The situation underscores the need for rigorous governance frameworks that can adapt to rapid technological change without compromising operational security or ethical standards.

What to watch next

- Regulatory and policy updates from the Defense Department and the White House regarding AI vendor usage and security classifications.

- Any new procurement or partnerships that extend AI capabilities for classified missions, including potential agreements with alternative providers to replace or supplement Anthropic’s offerings.

- Public statements from Anthropic and OpenAI about the nature of deployments on secured networks and any new restrictions or guardrails.

- Further details on the outcome of the earlier unrestricted-use negotiations and how that will shape future defense contracting with AI vendors.

Sources & verification

- Reports about Claude’s use in a Middle East operation and the administration’s halt order, including evidence discussed with sources familiar with the matter.

- Background on Anthropic’s Pentagon contract, including the multiyear arrangement worth up to $200 million and partnerships with Palantir and AWS for classified workflows.

- Statements from Anthropic’s leadership and public comments on military use and ethical boundaries, including interviews and official responses to regulatory actions.

- OpenAI’s deployment on classified networks and related discussions, including public discourse around a deal with the U.S. military and associated coverage.

- Public discussions and social-media references connected to the OpenAI arrangement with the military, such as posts documenting industry reactions.

Anthropic’s Claude in the crosshairs: AI, ethics and policy collide in defense operations

Officials described Claude as playing a role in intelligence analysis and operational planning during a major air strike in Iran, a claim that illustrates how close AI tools have moved to battlefield decision-making. While the Trump administration moved to sever ties with Anthropic, the operational use of Claude reportedly persisted in certain commands, underscoring a disconnect between policy statements and day-to-day defense workflows. The practical reality is that AI-driven analyses, simulations, and risk assessments can slip into mission planning even as agencies reassess vendor risk and compliance requirements across departments.

The Pentagon’s prior engagement with Anthropic was substantial: a multiyear contract valued at up to $200 million and a network of partnerships, including Palantir and Amazon Web Services, that enabled Claude’s use in classified information handling and intelligence processing. The arrangement highlighted a broader strategy: diversify AI capabilities across a trusted ecosystem to ensure resilience in sensitive settings. Yet when policy directions shifted, the administration moved to reframe the vendor relationship, signaling a risk-based recalibration rather than a wholesale retreat from AI-enabled defense operations.

Behind the scenes, tensions between public policy and private sector ethics came to the fore. Defense Secretary Pete Hegseth reportedly pressed Anthropic to permit unrestricted military use of its models, a request that Anthropic’s leadership rejected as crossing its ethical lines. The firm’s stance centers on the belief that certain uses, namely mass domestic surveillance and fully autonomous weapons, raise profound ethical and legal concerns, and that meaningful human oversight should survive the transition from concept to execution. This position aligns with ongoing debates about how to balance rapid AI adoption with safeguards against abuse and unintended consequences.

For its part, the Pentagon did not stand still. Facing a potential supplier gap, it began lining up replacements and reportedly reached an agreement with OpenAI to deploy models on classified networks. The shift underscores a broader strategic move to ensure continuity of capability, even as vendors re-evaluate their terms for sensitive deployments. The contrast between Anthropic’s ethical boundaries and the department’s operational needs reveals a broader policy tension: how to harness transformative technology responsibly while preserving national security imperatives.

Industry observers also noted the ecosystem effects of such transitions. The AI market is evolving toward more modular, security-cleared configurations that can be swapped or upgraded as policy and risk assessments shift. The OpenAI arrangement, in particular, signals continued appetite for integrating leading models into defense networks, albeit under stringent governance and oversight. While this trajectory promises enhanced capability for military analysts and planners, it also elevates scrutiny around data handling, model interpretability, and the risk of over-reliance on automated systems for critical decisions.

Anthropic’s CEO, Dario Amodei, has argued that while AI can augment human judgment, it cannot replace it in core defense decisions. In public remarks, he reaffirmed the company’s commitment to ethical boundaries and to maintaining human control in pivotal moments. The tension between maintaining access to cutting-edge tools and upholding ethical standards is likely to shape future negotiations with federal agencies, particularly as lawmakers and regulators scrutinize AI’s role in civilian and national-security contexts.

As the landscape evolves, the broader crypto and tech communities will be watching how these policy and procurement dynamics influence the development and deployment of advanced AI systems in high-stakes environments. The episode serves as a case study in balancing rapid technological advancement with governance, oversight, and the enduring question of where human responsibility ends and automated decision-making begins.

Strategy Raises STRC Yield by 25 Basis Points to 11.50%

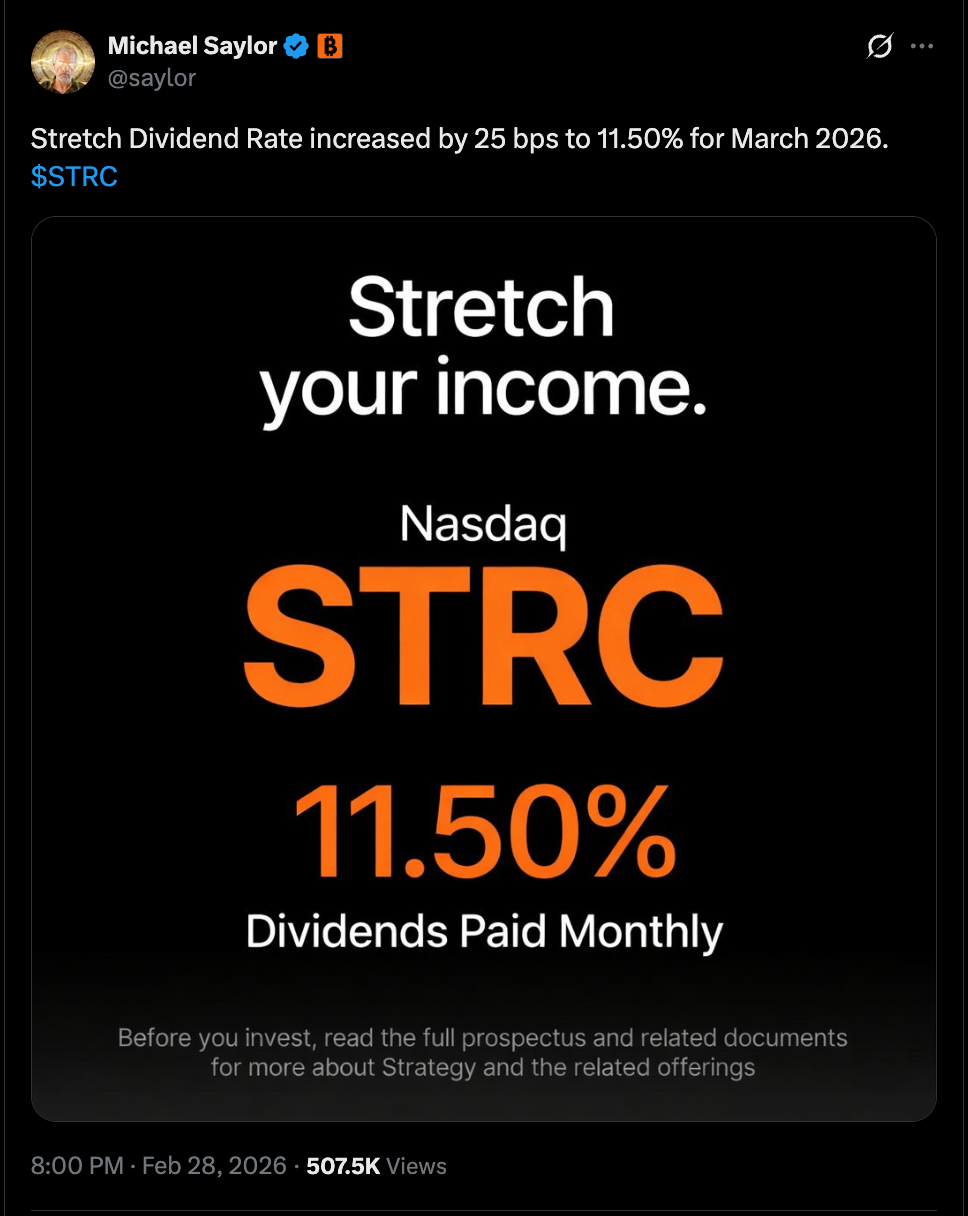

Strategy chairman Michael Saylor said in a social media post on Sunday that the largest Bitcoin (BTC) treasury company is raising the dividend on its STRC preferred stock, also known as “Stretch,” to 11.50% for March 2026, from the previous 11.25%.

STRC is perpetual, meaning the company is not obligated to buy back the stock at any specified date, and features a variable yield that changes monthly.

A Friday update on the company’s website confirmed Saylor’s post. “STRC’s dividend rate is adjusted monthly to encourage trading around STRC’s $100 par value and to help strip away price volatility,” according to the website. The dividend is also paid monthly, with the next payout on March 31 to shareholders of record.
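As a rough illustration of what the rate change means per share, the sketch below converts the annual dividend rate into a monthly payment on the $100 par value. It assumes the annual rate is simply split into twelve equal instalments, which the article does not confirm; the function name is illustrative.

```python
PAR_VALUE = 100.0  # STRC par value quoted in the article


def monthly_dividend_per_share(annual_rate_pct: float, par: float = PAR_VALUE) -> float:
    """Approximate monthly dividend, assuming equal monthly instalments (an assumption)."""
    return par * (annual_rate_pct / 100.0) / 12


old_rate, new_rate = 11.25, 11.50  # annual rates from the article

old_payment = monthly_dividend_per_share(old_rate)
new_payment = monthly_dividend_per_share(new_rate)
increase_bps = round((new_rate - old_rate) * 100)  # 1 percentage point = 100 basis points

print(f"Previous monthly dividend: ${old_payment:.4f} per share")
print(f"New monthly dividend:      ${new_payment:.4f} per share")
print(f"Rate increase:             {increase_bps} basis points")
```

Under that assumption, the 25-basis-point increase adds about two cents per share to each monthly payment.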

In February, Strategy CEO Phong Le said the company is pivoting away from issuing common stock to fund its BTC purchases and toward issuing more preferred shares.

“Last year, Stretch and our perpetual preferreds raised $7 billion. That’s 33% of the entire preferred market,” Le said.

“As we go throughout the course of this year, we expect Stretch to be a big product for us,” he said, adding, “We will start to transition from equity capital to preferred capital.”

To be sure, the company continues to accumulate Bitcoin amid a market drawdown that has nearly halved the price of Bitcoin since October and driven down the share prices of digital asset treasury companies.

In the year to date, BTC has lost 23.2% of its value, while the share price of Bitwise Bitcoin Standard Corporations ETF (OWNB) is down 16.1%. That exchange-traded fund provides exposure to public companies holding significant amounts of Bitcoin on their balance sheets.

Related: Strategy yield wrapper lands in Europe as 21Shares lists STRC ETP

Strategy records $12.4 billion loss in Q4 2025

In early February, Strategy reported a net loss of $12.4 billion for the fourth quarter of 2025, after which investors pushed the company’s share price down by 13% to about $107 per share.

Despite revenue for the quarter increasing 1.9% year-over-year to about $123 million, the company’s stock has been in freefall.

Strategy’s (MSTR) common stock price briefly hit a high of $543 per share during intraday trading in November 2024, before falling back down below $300 in February 2025.

The company’s stock has fallen by about 75% since the November 2024 peak, closing on Friday at $129.50 a share.

BTC is trading well below Strategy’s average purchase cost of $76,020 per Bitcoin, according to data from the company.

Strategy last bought BTC during the week of Feb. 16, when the company purchased 592 BTC, valued at over $39.8 million, bringing its total holdings to 717,722 BTC and marking its 100th BTC acquisition.
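The purchase and holdings figures above can be turned into an implied purchase price and an approximate total cost basis. The sketch below uses only numbers quoted in the article; the `unrealized_pnl` helper and the spot price passed to it are hypothetical, since the article does not give a current BTC price.

```python
btc_bought = 592             # BTC purchased in the week of Feb. 16
usd_spent = 39.8e6           # "over $39.8 million", so treat this as a lower bound
total_btc = 717_722          # total holdings after the purchase
avg_cost_per_btc = 76_020    # company-reported average purchase cost (USD)

# Implied average price paid in the latest purchase
implied_price = usd_spent / btc_bought

# Approximate total cost basis of the whole position
cost_basis = total_btc * avg_cost_per_btc


def unrealized_pnl(spot_price: float) -> float:
    """Paper gain or loss on the full position at a given spot price (hypothetical input)."""
    return total_btc * (spot_price - avg_cost_per_btc)


print(f"Implied price of latest purchase: ${implied_price:,.0f} per BTC")
print(f"Approximate total cost basis:     ${cost_basis / 1e9:.2f} billion")
print(f"Paper P&L at the latest purchase price: ${unrealized_pnl(implied_price) / 1e9:.2f} billion")
```

The implied purchase price of roughly $67,000 sits below the $76,020 average cost, which is consistent with the statement that BTC trades below Strategy's cost basis.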

Magazine: Bitcoin’s ‘biggest bull catalyst’ would be Saylor’s liquidation: Santiment founder

Institutional crypto interest rebounds even as Bitcoin (BTC) falls 25%

The mood around digital assets has shifted again among the world’s largest allocators, according to Ron Biscardi, CEO of iConnections, which runs one of the largest capital introduction conferences globally.

Biscardi, who has spent more than 25 years in the alternative investment industry and runs a platform that represents over $55 trillion in assets, has a front-row seat. His firm tracks thousands of meetings between fund managers and institutional investors each year. That data shows how quickly sentiment can turn.

After a couple of “rough” years in the wake of the crypto market crash that followed the FTX collapse in 2022, interest began to stabilize at last year’s conference, he recalls. “[In 2025] we started to see funds wanting to come back, wanting to spend some money,” he said. Optimism around a more crypto-friendly regulatory stance in Washington helped, even if progress has been slow.

“I feel like what we’re seeing now at the event [this year] is a more normal experience,” Biscardi said. “It’s not extremely crazy, but it’s also not [like] ‘I don’t want to go anywhere near it.’”

A change of tone

More than 75 digital asset funds participated in this year’s event, generating roughly 750 meetings between managers and allocators, a level comparable to 2022 when crypto interest soared before the FTX collapse. Nearly one quarter of limited partners on the iConnections platform now indicate interest in digital asset strategies, reinforcing that crypto has become an established sleeve within alternatives rather than a fringe allocation.

Family offices represent the largest LP cohort expressing interest, consistent with their track record of backing emerging and innovation-driven asset classes.

And this trend has been growing in recent years. While some family offices remain cautious about the asset, many traditional wealth managers are under mounting pressure to deliver digital assets to wealthy clients, particularly in crypto hotspots like Dubai, Switzerland and Singapore.

This interest is very much alive despite the crypto winter, with the price of bitcoin down nearly 25% since the beginning of the year and its market cap losing more than $1 trillion in value since October’s all-time high. Stocks of popular crypto companies, like Coinbase (COIN) or Strategy (MSTR), are also trading significantly lower this year, underperforming most other tech stocks.

Biscardi, however, believes digital asset managers are “very, very close to achieving institutional legitimacy.” Bitcoin, he said, has already crossed that line, but altcoins are close. “The last piece is really the regulatory framework that lets them do it safely.”

For chief investment officers, that issue dominates. “The regulatory hurdles are number one,” Biscardi said. “It just always goes back to that.”

Large allocators, he noted, are fiduciaries. “It’s not their money, they’re fiduciaries for other people’s money, and it might be a super interesting category, but they’re just not going to allocate there until they can tell their board that they’re doing it in a responsible, safe way.”

The tone of the debate has also changed. In 2022, some investors still questioned whether crypto was real or a Ponzi scheme. “I don’t hear any of that anymore,” Biscardi said.

In fact, some traditionally conservative pools of capital have stepped in. Endowments, for example, tend to focus on long-term stability and avoid sharp swings in new asset classes, yet they have begun allocating to bitcoin and ether exchange-traded funds. The idea is not to overhaul portfolios but to add measured exposure that could lift returns in years when crypto markets perform well, especially as many investors expect equities to deliver more muted gains than in the past decade.

Still a risk asset

Nevertheless, allocators treat bitcoin “much more as a risk asset” than a store of value. “Bitcoin just hasn’t behaved that way,” he said, pointing to its correlation with equities rather than gold during market stress.

Similarly, direct token buying remains rare among institutions. Instead, he hears more about ETFs and fund structures. Limited partners rely on general partners to choose specific coins. “The LPs who get bought into the space are really looking to the GPs to make those decisions.”

What’s not rare is crypto companies investing in spreading awareness of their products and services. According to Biscardi, sponsorship numbers saw a substantial uptick at this year’s event, with companies like BitGo (BTGO), Galaxy Digital (GLXY), Ripple and Blockstream all holding top-tier sponsor status.

Read more: Bitcoin is stuck in a rut but JPMorgan says new legislation could be the ultimate spark

QUBT Earnings Preview: What Investors Should Know Before March 2

Key Takeaways

- Q4 FY25 earnings report scheduled for March 2, 2026

- Analysts project a $0.02 per share loss, significantly improved from last year’s $0.47 deficit

- Projected revenue of $390K represents substantial growth from prior year’s $62K

- Shares declined 8.4% on February 27, now trading beneath key technical indicators

- Implied volatility suggests potential 14.05% price swing following results

Quantum Computing Inc. approaches its fourth quarter fiscal 2025 earnings announcement scheduled for Monday, March 2, 2026, with recent price weakness creating uncertainty among shareholders. The stock experienced an 8.4% decline on February 27, closing at $8.278.

Daily trading activity registered approximately 3.37 million shares — representing a dramatic 78% reduction compared to the typical 15 million share average. This significantly lighter volume during the selloff may indicate limited selling pressure, though the implications remain subject to interpretation.

Technically, shares are positioned beneath both the 50-day moving average at $10.35 and the 200-day moving average at $13.70. Despite recent weakness, QUBT maintains gains exceeding 39% over the trailing twelve months, propelled primarily by enthusiasm surrounding its photonic computing platform.

The Street’s consensus estimate calls for a quarterly loss of $0.02 per share in Q4 2025. This figure represents substantial improvement compared to the $0.47 per share deficit recorded in the year-ago period.

On the top line, analysts anticipate revenues reaching $390K, a meaningful increase from the $62K generated in Q4 2024. Though absolute dollar amounts remain modest, the growth trajectory is capturing attention from market observers.

Luminar Deal Takes Center Stage

A significant narrative entering the earnings discussion involves the company’s $110 million all-cash purchase of Luminar Semiconductor Inc., formerly held by Luminar Technologies. This strategic transaction aims to provide QUBT with greater vertical integration and enhanced capability to generate consistent revenue streams.

Market participants will be focused on management commentary regarding semiconductor manufacturing schedules, fulfillment of existing orders, and any preliminary indicators of revenue acceleration stemming from the acquisition.

Wall Street Revises Expectations Lower

The analyst community presents a varied outlook. Lake Street analyst Max Michaelis maintained his Buy recommendation while adjusting his price objective from $24 down to $16 — nonetheless suggesting approximately 77% appreciation potential from current trading levels.

Ascendiant Capital Markets similarly reduced its target from $40 to $25 while preserving a Buy stance. Taking a more reserved approach, Wedbush established coverage with a Neutral rating and $12 price target, while Cantor Fitzgerald reaffirmed its Neutral position with a $15 target.

Rosenblatt Securities launched coverage in January with a Buy rating and $22 price objective. The aggregate consensus stands at Moderate Buy, comprising one Strong Buy, two Buys, two Holds, and one Sell among analysts providing coverage.

The mean price target across all tracked analysts reaches $18.00, implying approximately 99% upside from the February 27 trading price.
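The upside figures quoted for the price targets follow from a simple ratio. The sketch below applies it to the $8.278 close reported for February 27; on that basis the percentages come out higher than those quoted, which suggests the quoted figures may have been calculated from a higher reference price of about $9.04. That reading is an inference from the arithmetic, not something the article states.

```python
def implied_upside(target: float, price: float) -> float:
    """Fractional upside from the current price to a price target."""
    return target / price - 1


def reference_price(target: float, quoted_upside: float) -> float:
    """Price that would make a quoted upside figure consistent with a target."""
    return target / (1 + quoted_upside)


close = 8.278  # February 27 close from the article

lake_street_upside = implied_upside(16.0, close)   # Lake Street target of $16
mean_target_upside = implied_upside(18.0, close)   # mean analyst target of $18

print(f"Upside to $16 from ${close}: {lake_street_upside:.1%}")
print(f"Upside to $18 from ${close}: {mean_target_upside:.1%}")

# Back out the price implied by the quoted 77% and 99% upside figures
print(f"Price implied by 77% upside to $16: ${reference_price(16.0, 0.77):.2f}")
print(f"Price implied by 99% upside to $18: ${reference_price(18.0, 0.99):.2f}")
```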

QUBT has a beta of 3.44, indicating heightened volatility relative to the broader market. The company has a market capitalization near $1.83 billion, and its P/E ratio of -13.40 reflects its current unprofitable state.

Company insiders control 19.3% of outstanding shares. COO Milan Begliarbekov divested 2,860 shares on January 7 at $11.85 per share, trimming his holdings by approximately 10.55%. Institutional ownership remains minimal at 4.26%.

Options market activity suggests traders are anticipating a potential price movement of roughly 14.05% in either direction once earnings results are released.
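To put the implied move in price terms, the sketch below applies the 14.05% figure to the February 27 close of $8.278. Using that close as the reference price is an assumption, since options pricing would reflect whatever the stock trades at immediately before the report.

```python
close_price = 8.278    # February 27 close from the article
implied_move = 0.1405  # options-implied move in either direction

lower_bound = close_price * (1 - implied_move)
upper_bound = close_price * (1 + implied_move)

print(f"Implied post-earnings range: ${lower_bound:.2f} to ${upper_bound:.2f}")
```

On these inputs even the top of the implied range would leave the shares below the 50-day moving average of $10.35.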

The company will publish Q4 FY25 financial results prior to the market opening on March 2, 2026.

US Senators Warn Binance Could be a National Security Threat

US lawmakers want regulators to investigate Binance over an alleged $1.7 billion in transfers to Iran-linked entities. The concerns come amid rising geopolitical tensions in the Middle East. Binance, however, has denied the allegations.

In a letter, 11 lawmakers led by Sens. Chris Van Hollen and Elizabeth Warren urged Treasury Secretary Scott Bessent and Attorney General Pam Bondi to launch a formal probe.

US Lawmakers Warn Binance Oversight May be at Risk

The senators raised serious concerns about the strength of Binance’s anti-illicit finance guardrails and its adherence to sanctions and anti-money laundering laws.

“These allegations raise grave concerns that poor illicit finance controls at Binance remain a significant threat to national security. Our illicit finance controls are dangerously compromised if enormous sums can flow through Binance to terrorist groups or sanctions evaders. The firm controls the world’s largest digital asset exchange; it is essential that bad actors cannot benefit from its platform,” the lawmakers stated.

According to reports cited by the lawmakers, investigators uncovered at least two Binance accounts. These accounts were used to channel assets to entities linked to the Iran-backed Houthis and the Islamic Revolutionary Guard Corps.

Furthermore, the reports alleged that Iranian nationals successfully accessed more than 1,500 Binance accounts.

The senators described the incident as indicative of a “broader deterioration” in Binance’s compliance functions. They warned that the fund movements directly threaten the exchange’s historic 2023 settlement with US authorities.

Under that plea agreement, Binance paid a $4.3 billion fine and its founder, Changpeng Zhao, stepped down as CEO. The company also agreed to stringent oversight by a DOJ-mandated independent compliance monitor.

The lawmakers argued the alleged illicit transfers align with a wider pattern of risky behavior.

They highlighted Binance’s launch of payment cards in parts of the former Soviet Union, which they claim provides a backdoor for Russian entities to evade international sanctions.

“In light of these issues, we are deeply troubled by the prospect that Binance may once again be prioritizing profits over its compliance obligations,” the lawmakers argued.

Labeling the situation a severe national security threat, the senators gave the Treasury Department and the DOJ until March 13, 2026, to detail the results of their investigations.

If authorities determine Binance breached its 2023 monitorship terms, the exchange could face catastrophic legal and financial repercussions.

Binance Touts Compliance Efforts

In a fierce rebuttal to the allegations, Binance defended its internal controls and noted a sharp decline in illicit activity on its platform.

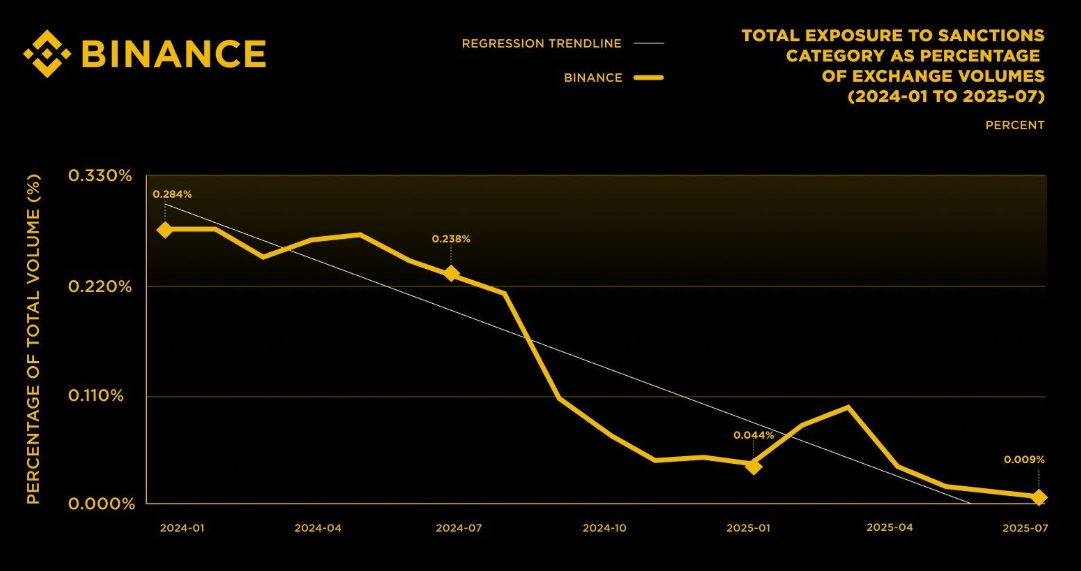

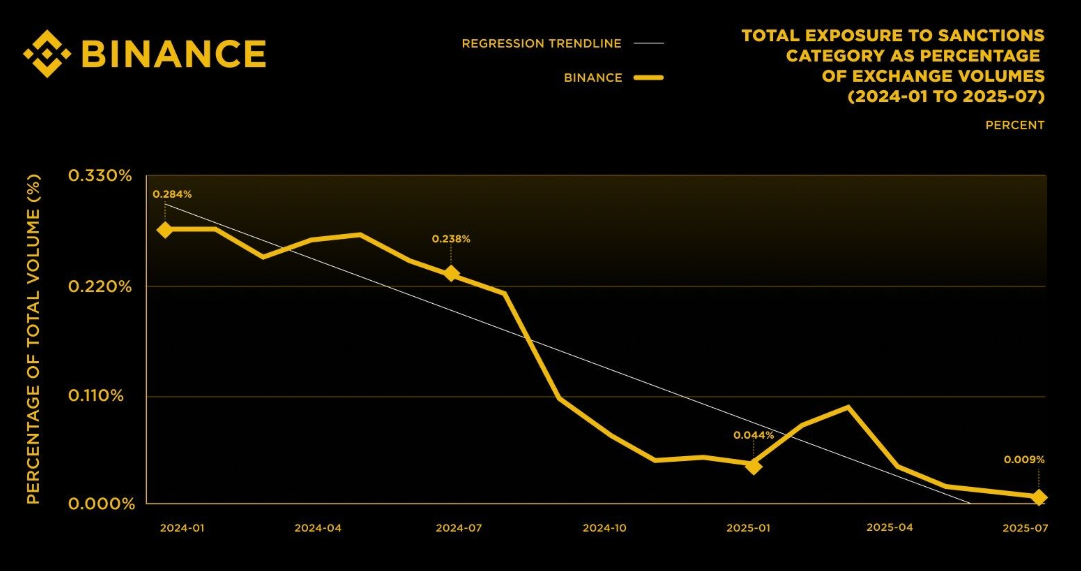

According to the firm, its sanctions-related exposure dropped 96.8% over an 18-month period, falling from 0.284% in January 2024 to just 0.009% in July 2025.
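The 96.8% figure is a relative decline between the two exposure levels, not a difference in percentage points. A minimal check using the two values Binance reported:

```python
def relative_change(start: float, end: float) -> float:
    """Fractional change from start to end (negative means a decline)."""
    return (end - start) / start


exposure_jan_2024 = 0.284  # sanctions-related exposure, in percent
exposure_jul_2025 = 0.009  # sanctions-related exposure, in percent

decline = relative_change(exposure_jan_2024, exposure_jul_2025)
point_drop = exposure_jan_2024 - exposure_jul_2025

print(f"Relative change: {decline:.1%}")
print(f"Absolute drop:   {point_drop:.3f} percentage points")
```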

The firm linked this progress to its “best-in-class” compliance program. It argued that the recent reports present a distorted viewpoint that fundamentally misunderstands standard control processes for digital asset platforms.

Binance stated that in the specific incidents cited by the media, it acted proactively, mitigated risks, offboarded the offending accounts, and coordinated with law enforcement.

“The facts are these: Binance’s compliance program is effective and it worked here. Any statement to the contrary is simply wrong,” the exchange concluded.

Greg Abel Reveals Berkshire’s 4 Untouchable Holdings in Debut Letter

TLDR

- In his inaugural shareholder communication, Greg Abel designated Apple (AAPL), American Express (AXP), Coca-Cola, and Moody’s (MCO) as permanent portfolio positions

- The new CEO’s debut letter commits to continuing Buffett’s philosophy of value investing and maintaining a robust financial position

- Fourth-quarter operating profits declined 29% compared to the previous year, reaching $10.2 billion, influenced by insurance segment challenges

- Bank of America and Chevron didn’t make Abel’s list of untouchable holdings

- Buffett transitions to chairman role while maintaining a full-time office presence for advisory purposes

In his inaugural communication to shareholders, Greg Abel has outlined Berkshire Hathaway’s strategic direction as CEO, highlighting four equity positions the conglomerate intends to maintain indefinitely while disclosing a significant decline in quarterly profits.

Abel took over as chief executive from Warren Buffett at the start of 2026, following Buffett’s retirement announcement in May 2025. The legendary investor continues to serve as chairman and plans to work full-time from the office.

The letter pinpointed four primary equity investments that Berkshire intends to preserve with “limited activity.” The quartet consists of Apple, American Express, Coca-Cola, and Moody’s.

Abel characterized these as companies Berkshire “understands well,” featuring solid management teams and promising long-term expansion prospects. He indicated the firm would only “significantly adjust” any position if fundamental changes occurred in its future outlook.

These four investments, combined with ownership stakes in five Japanese trading corporations, represent approximately two-thirds of Berkshire’s stock portfolio. The aggregate market value of these nine positions exceeds $200 billion.

What’s Not on the Forever List

Notably absent from the core holdings list were two top-five positions: Bank of America and Chevron. The Bank of America stake has been reduced by approximately half during the previous 18 months, declining to roughly 517 million shares with a market value near $28 billion.

The Chevron holding, valued at approximately $20 billion, similarly failed to earn “forever” designation from Abel. This exclusion has sparked discussion among longtime Berkshire observers.

Berkshire’s Apple investment has appreciated substantially beyond its initial purchase price. The conglomerate’s average cost basis stands around $27 per share, while the stock currently trades near $264. Although Buffett previously trimmed the Apple position by roughly 80% from peak levels, Abel’s correspondence indicates no additional reductions are anticipated.

Q4 Earnings Take a Hit

Berkshire disclosed fourth-quarter operating profits of $10.2 billion, representing a decline exceeding 29% from the year-earlier figure of $14.56 billion. The downturn stemmed partially from diminished results in the insurance operations.

For calendar year 2025, Berkshire generated operating earnings totaling $44.5 billion, falling short of 2024’s $47.4 billion but exceeding the five-year average of $37.5 billion.
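The earnings comparisons above reduce to percentage changes against three different baselines. The sketch below reproduces them from the figures in the article, all in billions of dollars; the helper name is illustrative.

```python
def pct_change(previous: float, current: float) -> float:
    """Percentage change from a previous value to a current one."""
    return (current - previous) / previous * 100


# Operating earnings in billions of USD, as reported in the article
q4_2025, q4_2024 = 10.2, 14.56
fy_2025, fy_2024, five_year_avg = 44.5, 47.4, 37.5

q4_change = pct_change(q4_2024, q4_2025)
fy_change = pct_change(fy_2024, fy_2025)
vs_average = pct_change(five_year_avg, fy_2025)

print(f"Q4 2025 vs Q4 2024:           {q4_change:+.1f}%")
print(f"Full-year 2025 vs 2024:       {fy_change:+.1f}%")
print(f"Full-year 2025 vs 5-year avg: {vs_average:+.1f}%")
```

The quarterly drop of just under 30% is far steeper than the roughly 6% full-year decline, and 2025 still came in well above the five-year average.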

Berkshire’s combined cash and Treasury bill reserves totaled $373.3 billion at quarter-end, representing a modest decline from the previous quarter’s $382 billion. Abel referenced this substantial liquidity as “dry powder” positioned for deployment when attractive investment opportunities emerge.

Uncertainty surrounds the matter of portfolio management responsibilities going forward. Abel lacks experience as an investment professional. Investment manager Ted Weschler will oversee approximately 6% of the portfolio, consistent with his allocation during Buffett’s tenure.

Abel stated that “responsibility ultimately rests with me as CEO” regarding capital allocation choices, with Buffett remaining available for consultation.

Strategic Investment Plays Amid Rising US-Iran Tensions

Key Takeaways

- Escalating US-Iran tensions following the reported death of Supreme Leader Khamenei in coordinated strikes trigger significant market repositioning.

- Oil markets respond with prices reaching seven-month peaks, with forecasts suggesting potential increases exceeding $10 per barrel.

- Traditional energy players like BP and Chord Energy provide direct commodity exposure while maintaining attractive dividend yields.

- Major defense contractors including Lockheed Martin and Northrop Grumman benefit from accelerating demand for advanced missile systems and stealth technology.

- Eos Energy represents a speculative opportunity tied to energy independence initiatives and infrastructure hardening driven by geopolitical uncertainty.

Following reports of Iranian Supreme Leader Ayatollah Ali Khamenei’s death in coordinated US-Israeli military operations, global financial markets have entered a period of tactical reallocation. Portfolio managers are rapidly shifting capital toward historically resilient wartime sectors.

Crude oil benchmarks have climbed to levels not seen in seven months. Defense spending projections continue climbing, while energy independence has reemerged as a central government priority.

We examine five equities currently drawing significant analyst attention amid this evolving landscape.

Energy Sector: Capitalizing on Crude Price Momentum

BP (BP)

BP operates as an integrated energy major headquartered in the United Kingdom, maintaining diversified operations across upstream production, downstream refining, and renewable energy development. Its global footprint provides natural hedging during commodity price volatility.

With Brent benchmarks approaching seven-month peaks, BP’s trading operations and refining spreads stand to benefit substantially. The equity currently offers a dividend yield exceeding 5% while trading at a forward price-to-earnings multiple below 9x.

The company executed $2.5 billion in share repurchases during the fourth quarter and maintains a progressive dividend framework targeting 4% annual increases. Fidelity analysts emphasize its income characteristics during periods of elevated risk premiums.

Chord Energy (CHRD)

Chord Energy maintains concentrated operations within North Dakota’s Williston Basin, targeting the prolific Middle Bakken and Three Forks shale formations. Current production averages approximately 232,737 barrels of oil equivalent daily.

The producer markets crude oil, natural gas liquids, and gas through pipeline networks and rail infrastructure, providing direct sensitivity to West Texas Intermediate price movements. Shareholder distributions totaled $1.2 billion throughout 2025, with shares trading at roughly 6x forward earnings.

Chord’s dividend yield is roughly 4.9% to 5%, with annual payout growth exceeding 20%. Koyfin and Simply Wall St. analysts maintain strong buy recommendations, citing exceptional cyclical leverage.

Eos Energy Enterprises (EOSE)

Eos Energy manufactures utility-scale battery storage systems domestically. Despite delivering 700% year-over-year revenue expansion and record quarterly performance, shares declined following fourth-quarter disclosures.

The manufacturer concluded 2025 with approximately 2 GWh of annualized manufacturing capacity alongside $240 million in contracted orders. Balance sheet liquidity exceeds $600 million.

Eos does not qualify as a traditional defensive holding. Instead, it represents a volatile, longer-horizon wager on accelerated energy security legislation should policymakers prioritize infrastructure resilience amid ongoing conflicts.

Defense Industry: Advanced Weapons Systems and Growing Backlogs

Lockheed Martin (LMT)

Lockheed Martin maintains its position as the globe’s largest dedicated defense manufacturer. The company recently finalized a $9.8 billion agreement delivering 1,970 Patriot PAC-3 Missile Segment Enhancement interceptors, marking the largest single contract in its Missiles and Fire Control division’s history.

Iran’s expanding ballistic missile capabilities have intensified demand for integrated air defense platforms including Patriot and THAAD systems, directly benefiting Lockheed’s order pipeline. J.P. Morgan sustains an overweight stance with price objectives spanning $200 to $500.

The equity provides a dividend yield of approximately 1.5%. Its $194 billion backlog encompasses F-35 lifecycle support and Patriot production now experiencing heightened demand.

Northrop Grumman (NOC)

Northrop Grumman serves as prime contractor for the B-21 Raider next-generation stealth bomber and the Sentinel ground-based strategic deterrent program. Both initiatives align with evolving Pentagon priorities as Iran-related threats intensify.

Morgan Stanley maintains an overweight rating with a $408 target, while shares recently changed hands near $347. The stock has appreciated over 33% during the trailing twelve months while distributing a 1.5% yield.

Significant contract awards anticipated throughout 2026 span B-21 production, F/A-XX development, and Golden Dome systems. Northrop has substantially outpaced S&P 500 returns over the past year.

BYD Faces Steepest Sales Decline in Six Years During February Slump

Key Takeaways

- February 2026 saw BYD’s NEV sales plunge 41.1% compared to the previous year, representing the most severe contraction since February 2020.

- The automaker has now experienced six months in a row of declining sales figures.

- NEV manufacturing output and sales volumes both contracted approximately 38% versus February 2025.

- The passenger vehicle segment experienced the most significant impact.

- International shipments reached 100,600 NEVs, while battery manufacturing capacity maintained strength.

The Chinese electric vehicle manufacturer BYD has reported its steepest monthly sales contraction in six years, with February figures showing a 41.1% year-over-year decrease. The drop extends the company’s sales downturn to six consecutive months.

The magnitude of this decline hasn’t been witnessed since February 2020, during the initial economic disruption caused by the coronavirus pandemic.

According to a regulatory disclosure published over the weekend, both manufacturing and sales of new energy vehicles contracted by roughly 38% when compared to the same period in 2025.

The passenger vehicle category bore the brunt of the downturn, although BYD opted not to provide granular segment-level data in its official filing.

These disappointing results emerge despite the company’s commanding presence in the worldwide electric vehicle sector and its aggressive expansion into foreign territories.

International Sales Provide Limited Relief

In terms of overseas distribution, BYD delivered 100,600 NEVs internationally during February, a metric the manufacturer identified as a relative positive amid otherwise challenging circumstances.

Battery manufacturing operations remained resilient. BYD emphasized its installed capacity for NEV power systems and energy storage batteries as indicators of sustained operational scale, despite the contraction in vehicle unit sales.

The organization seems to be relying increasingly on its battery division and international operations to counterbalance weaker performance in its home market.

It’s important to recognize that February traditionally represents a slower sales period for China’s automotive industry, primarily due to Lunar New Year celebrations that reduce operational days and showroom activity.

While this seasonal pattern occurs annually, the magnitude of the current downturn remains striking even when factoring in these calendar-related considerations.

Financial Performance Indicators

BYD’s year-to-date stock performance reflects a modest decline of 0.42% at the time of the regulatory submission, with the company maintaining a market capitalization of HK$890 billion.

Daily trading activity averages approximately 21.5 million shares.

Technical analysis indicators currently suggest a Buy rating for the equity.

The latest analyst coverage for HK:1211 also recommends a Buy position, establishing a target price of HK$130.00.

The extended six-month pattern of declining monthly sales volumes prompts concerns regarding short-term market demand, especially within China’s domestic market where rivalry among electric vehicle manufacturers has grown increasingly fierce.

BYD’s February 2026 regulatory submission verified that aggregate NEV production and sales both decreased by approximately 38% on an annual basis, with international shipments totaling 100,600 vehicles and battery division capacity characterized as robust.

Company’s Stretch preferred stock now paying 11.5% dividend

Leading bitcoin treasury company Strategy has again raised the dividend on its STRC (“Stretch”) preferred series.

Led by Executive Chairman Michael Saylor, the firm lifted the annualized payout by 25 basis points to 11.5%.

While STRC to this point has performed as hoped by the company — continuing to trade in a tight range close to $100 — Strategy’s common stock, MSTR, has floundered alongside the price of bitcoin.

MSTR closed February with its eighth consecutive monthly decline, falling 14% as bitcoin tumbled nearly 20%.

Stretch is meant for steady income

Strategy describes STRC as a short-duration, high-yield savings account. This latest dividend increase marks the seventh since STRC began trading in July 2025.

STRC is a perpetual preferred stock that pays monthly cash distributions. Its dividend rate is set each month to help the shares trade close to their $100 par value and to limit price volatility. STRC closed at $100 on Friday but had traded somewhat below that level during part of February’s brutal month for crypto, necessitating the payout boost.
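As a rough illustration of what the rate change means per share, the sketch below converts the annualized rate into a monthly cash amount on the $100 par value. It assumes the monthly payout is simply the annual rate divided by twelve, which is a simplification and not necessarily how Strategy computes the distribution; the function name `monthly_payout` is hypothetical.

```python
from decimal import Decimal

PAR_VALUE = Decimal("100")          # STRC par value cited in the article
new_rate = Decimal("0.115")         # 11.5% annualized, after the increase
increase_bps = Decimal("25")        # size of the latest increase

# One basis point is 0.01%, so 25 bps = 0.0025.
old_rate = new_rate - increase_bps / Decimal("10000")


def monthly_payout(annual_rate, par=PAR_VALUE):
    """Approximate monthly cash per share (assumption: annual rate / 12)."""
    return (par * annual_rate / Decimal("12")).quantize(Decimal("0.0001"))


old_monthly = monthly_payout(old_rate)
new_monthly = monthly_payout(new_rate)
print(f"Previous rate: {old_rate:.2%}, about ${old_monthly} per share per month")
print(f"New rate:      {new_rate:.2%}, about ${new_monthly} per share per month")
```

Under that assumption, the 25-basis-point increase adds roughly two cents per share per month.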

Bitcoin Eyes Iran Reactions as Oil Triggers 5% US Inflation Forecast

Bitcoin held a steady line through a weekend marked by geopolitical flare-ups in the Middle East, easing some of the stress that had rippled through risk assets. The benchmark cryptocurrency kept its bearings around the mid-to-high $60,000s as traders weighed potential supply disruptions, oil price volatility, and the staying power of traditional markets. While tensions around the Strait of Hormuz added a geopolitical layer to the story, Bitcoin and broader crypto markets avoided a sudden breakout, instead trading in a relatively tight corridor as weekend liquidity faded and futures markets prepared for the Monday open.

Key takeaways

- Bitcoin started the week near $67,000 after a volatile weekend, with traders watching how U.S. markets would react to ongoing regional tensions.

- Trading data pointed to a lingering focus on a notable CME futures gap at $65,880, a potential “fill” area that could influence short-term moves.

- Oil-price risk rose as Tehran signaled actions around the Strait of Hormuz, raising concerns about inflationary pressures and their potential impact on risk sentiment.

- Analysts offered mixed views: some described the initial response as positive, while others warned that the market could drift until macro catalysts clear, including the U.S. opening and inflation data.

- The crowd of strategists and traders continues to eye a possible relief rally if Bitcoin can reclaim momentum above critical moving-average levels and push toward the high-$70,000s range.

Tickers mentioned: $BTC

Sentiment: Neutral

Price impact: Neutral. Price action remained range-bound despite regional tensions and a looming data calendar.

Trading idea (Not Financial Advice): Hold. Monitor the Monday open and the CME gap as liquidity returns to the market.

Market context: The weekend period saw traditional markets digesting geopolitical headlines as traders awaited U.S. opening dynamics and inflation-related data. Early signs showed U.S. stock futures down roughly 0.65% as traders braced for potential volatility once liquidity returned to normal levels, underscoring a cautious environment for crypto assets as well.

Why it matters

Bitcoin’s behavior in the wake of regional turmoil underscores how the asset class often behaves as a macro sponge—quick to absorb risk-off impulses and slower to trend during periods of mixed signals. The tension around the Strait of Hormuz and the broader Middle East flare-up adds a persistent inflationary lens to the discussion. Oil markets, which frequently respond to geopolitical headlines, can—by extension—spark concerns about energy costs feeding into consumer prices. A notable moment referenced by market observers is the potential for inflation to surprise to the upside, a scenario some analysts say could lift traditional hedges or drive risk assets into a different regime.

On the technical front, traders highlighted Bitcoin’s proximity to a key moving-average level as a potential fulcrum. The 21-day simple moving average, an often-watched gauge for short- to mid-term momentum, sat near a critical threshold that, if breached, could accelerate a relief rally. Observers like Michaël van de Poppe framed the setup in a nuanced way, noting that while the initial reaction to weekend events looked “positive,” markets needed to clear the CME gap and establish a higher low before committing to a sustained move higher. This view aligns with a broader narrative that price action over the next few sessions could depend as much on opening prints in the United States as on any headline flow from abroad.

“On the other hand, the 21-Day MA needs to break in order to have a relief rally. I think we’ll see it in March/April, question of how we’re opening the markets tomorrow and whether it finds a higher low.”
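For readers unfamiliar with the indicator, a simple moving average is just the mean of the most recent closing prices over a fixed window. The sketch below shows one way to compute it and to check whether the latest close sits above it. The function names and the sample prices are hypothetical and are not drawn from the article's data.

```python
from collections import deque


def simple_moving_average(closes, window=21):
    """Return one SMA value per day, starting once `window` closes exist."""
    if window <= 0:
        raise ValueError("window must be positive")
    averages = []
    recent = deque()
    total = 0.0
    for price in closes:
        recent.append(price)
        total += price
        if len(recent) > window:
            total -= recent.popleft()   # drop the oldest close from the sum
        if len(recent) == window:
            averages.append(total / window)
    return averages


def is_above_sma(closes, window=21):
    """True if the latest close is above the latest SMA value."""
    sma = simple_moving_average(closes, window)
    return bool(sma) and closes[-1] > sma[-1]


# Hypothetical daily closes, for illustration only.
sample_closes = [66_000 + 50 * day for day in range(30)]
print(is_above_sma(sample_closes, window=21))
```

A "break" of the 21-day average in the sense quoted above would correspond to `is_above_sma` flipping from false to true on daily closes.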

Data from TradingView tracked BTC/USD action as traders focused on the $67,000 region after the weekend’s headlines, painting a picture of a market waiting for a catalyst to push beyond a short-term ceiling. The absence of a decisive breakout did not surprise all participants, given the complexity of the macro backdrop and the potential for a “gap fill” scenario as futures markets settle into Monday’s session. A number of technicians agreed that a break above the immediate resistance zone could set the stage for a move toward the $73,000–$74,000 zone, underscoring how volatile macro drivers can unfold into a structured technical chase for price targets in the near term.

Beyond the chart, the weekend narrative included other voices pointing to why a breakout could be delayed. Some market participants argued that geopolitical risk had already been priced in to an extent, with the market absorbing headlines and awaiting a clearer signal from U.S. policy and data releases. Crypto traders—who often weigh cross-asset correlations—emphasized that the next few sessions would likely hinge on how traditional markets respond when liquidity returns and whether risk appetite recovers or remains cautious. “We will probably move sideways in the next days,” reasoned another active trader, highlighting the ongoing balance between geopolitical risk and macro resilience.

The macro overlay extended to inflation concerns. The Kobeissi Letter’s thread, drawing on JPMorgan research, suggested the possibility of a fresh inflation spike that could push the U.S. Consumer Price Index higher—potentially around 5%—a development that would feed into both equity and crypto dynamics. This thread arrived in the context of recent U.S. inflation prints that had already surprised to the upside, notably with the latest Producer Price Index data underscoring that the floor for inflation might be sticky rather than easily transitory. In parallel, market observers referenced Bitcoin’s historical dynamics—such as metrics that point to elevated longer-horizon returns in certain cycles—to anchor expectations for how BTC might respond as macro conditions evolve. A related discussion on a widely cited price metric is available in a Cointelegraph piece that linked to a longer-term pattern, illustrating how historically prolonged uptrends have unfolded in response to regime changes in inflation and liquidity.

As the weekend wound down, a chorus of voices underscored the nuances of the setup. Crypto influencers and traders reminded audiences that headlines alone rarely deliver a sustained move; instead, the probability of a meaningful rebound depends on the confluence of technical breakouts, macro data, and the opening tone of U.S. markets. The crosswinds—from geopolitical tensions to inflation risk—mean Bitcoin’s path may be less about a single trigger and more about a sequence of catalysts aligning in the weeks ahead.

What to watch next

- Monday open: observe whether U.S. equities’ early direction validates or contradicts the weekend narrative, particularly as the CME gap at $65,880 remains a potential target for a fill.

- BTC price action around $67,000: monitor if the asset can hold this level or accelerate toward the upper target near $73,000–$74,000 based on momentum signals and moving-average dynamics.

- Oil and inflation linkage: track oil price movements and any fresh inflation data releases that could reframe risk sentiment and liquidity expectations.

- Futures and liquidity cycles: pay attention to how liquidity returns in the coming days and whether any new macro surprises push risk assets into a fresh regime.

- Geopolitical headlines: continue to monitor developments around the Strait of Hormuz and broader regional tensions, as these could reintroduce volatility into risk assets and affect hedges like BTC.

Sources & verification

- TradingView data showing BTC price activity around $67,000 after the latest Middle East events (TradingView).

- Discussion and charts cited by Michaël van de Poppe on X about the 21-day moving average and potential resistance turned support levels.

- Market commentary on the CME futures gap at $65,880 and its potential relevance to near-term price action.

- References to inflation risk and CPI considerations from JPMorgan-linked discussions in the Kobeissi Letter thread (KobeissiLetter).

- Cointelegraph coverage linking to inflation data and the broader macro narrative surrounding Bitcoin’s historical performance in higher-inflation regimes (Cointelegraph).

- Bitcoin historical price metric references and longer-term return discussions (Bitcoin historical price metric …).

- Direct posts from market participants on X offering perspectives on near-term price trajectories (Michaël van de Poppe, BitBull, Crypto Caesar).

Bitcoin steadies as geopolitical tensions test risk appetite

Bitcoin (CRYPTO: BTC) threshold dynamics dominated the narrative as regional headlines intersected with macro data expectations. The asset’s late-week price action found support near the $67,000 level, consistent with a broad risk-off-to-risk-on tug-of-war that markets have navigated throughout the weekend. While some participants argued that a relief rally could unfold if momentum gathers and key moving-average levels break, others emphasized the need for a clear bullish trigger—one that could come from a favorable Monday open or a cooling of inflation concerns. The combination of a cautious open from U.S. equities and a disciplined approach to risk deployment shaped the tone for the early week, with traders eyeing a potential test of the CME gap and a move toward higher targets if liquidity and sentiment cooperate.

Trading data pointed to ongoing technical work in BTC’s near-term chart. The 21-day moving average, a key reference for many short-term traders, sits at a level that many watch as a potential springboard for momentum. As one veteran analyst noted, decisive action above that threshold could catalyze a more pronounced move, while a failure to gain traction could prolong a consolidative phase. In parallel, market observers highlighted the role of the CME’s futures market in shaping intraday risk, with the gap below the current price acting as a potential magnet for price action if markets shift into risk-on mode.

The macro backdrop—particularly inflation dynamics and energy-price volatility—adds a layer of complexity to Bitcoin’s trajectory. The Strait of Hormuz could become a focal point for oil markets, and any supply concerns tend to reverberate through inflation expectations and risk sentiment. Analysts who have studied post-crisis price cycles note that inflation shocks can align with crypto cycles in nuanced ways: liquidity remains a critical piece, but the direction of flow—whether into crypto as a hedge or as an alt-risk asset—depends on how investors digest the evolving macro picture. In this context, Bitcoin’s price range-bound behavior over the weekend can be seen as a reflection of a market seeking a credible catalyst rather than chasing headlines.

As market participants refine their models for the week ahead, the broader takeaway is that Bitcoin’s near-term path will hinge on a confluence of factors: a measured Monday opening, the pace at which the CME gap closes, and any renewed guidance from inflation and energy data. The dynamics suggest a market that might remain cautious until a clearer signal coalesces, even as some voices project a path toward the $73,000–$74,000 zone should momentum swing in BTC’s favor. The coming days will reveal whether the technical setup can convert into a sustained trend or whether traders revert to a wait-and-see posture in response to macro uncertainty.

Is the Ripple ETF Hype Over? Inflows Disappoint as XRP Fights for $1.40

XRP went through intense volatility on Saturday, but it had nothing to do with the ETFs.

Although they have ended the underwhelming zero-inflow-day streak, the spot XRP ETFs are still far away from their initial glory in terms of net inflows.

At the same time, the underlying asset continues to fight with BNB for the fourth spot in the cryptocurrency market cap ranking, but it sits inches below a crucial resistance.

Ripple ETF Inflows Still Missing

CryptoPotato has reported on several occasions on the diminishing activity on the XRP ETF front. The financial vehicles saw under $8 million in net inflows during the trading week that ended on February 13, and less than $2 million in the following one. Moreover, the funds recorded three days with zero inflows during this time, a streak that extended to February 23.

However, investors finally picked up the pace in the next four trading days, albeit in a very modest manner. The net inflows stood at $3.04 million on Tuesday, $3.09 million on Wednesday, $1.22 million on Thursday, and $2.21 million on Friday. Overall, the week ended in the green, with $9.55 million entering the funds.

This modest amount is in stark contrast to the initial boom. After the first XRP-focused ETF went live for trading in mid-November, investors rushed to pour funds into it and the four similar products that followed. Consequently, the cumulative net inflows skyrocketed to the $1 billion mark within a month of the launch of Canary Capital’s XRPC.

Since then, though, the trend has seemingly changed. The total net inflows stand at $1.24 billion now, which means that only $240 million has entered the funds in over two months.
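The inflow arithmetic quoted above can be checked with a short sketch. The figures are the ones reported in the article, expressed in millions of dollars; the variable names are hypothetical. Note that the four daily figures as printed sum to $9.56 million, slightly above the reported weekly total of $9.55 million, presumably because the daily numbers are rounded.

```python
from decimal import Decimal

# Daily net inflows for the week, in millions of USD, as quoted in the article.
daily_inflows_musd = {
    "Tuesday": Decimal("3.04"),
    "Wednesday": Decimal("3.09"),
    "Thursday": Decimal("1.22"),
    "Friday": Decimal("2.21"),
}

weekly_total_musd = sum(daily_inflows_musd.values(), Decimal("0"))

# Cumulative net inflows, in millions of USD.
cumulative_after_first_month_musd = Decimal("1000")   # roughly $1 billion
cumulative_now_musd = Decimal("1240")                 # $1.24 billion

added_since_musd = cumulative_now_musd - cumulative_after_first_month_musd

print(f"Sum of daily figures: ${weekly_total_musd}M")
print(f"Added since the first month: ${added_since_musd}M")
```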

XRP Fights BNB

Saturday was an eventful day in the crypto markets due to the strikes against Iran and the subsequent retaliation. XRP was not immune as it dumped from $1.43 to $1.27 before it rebounded to its starting point after reports that Iran’s Supreme Leader was killed during the attacks.

Popular crypto analyst CryptoWZRD noted that the asset had closed with a “dragonfly doji candle and respected the $1.30 daily support.” They believe XRP could continue higher only if it manages to close weekly above $1.3820. As of press time, the asset trades inches below that line. However, it has retaken its fourth place in terms of market cap from BNB after a quick flip on Saturday.

XRP Daily Technical Outlook: $XRP closed with a dragonfly doji candle and respected the $1.3000 Daily support. However, anything is possible due to geopolitics. Tomorrow is the Weekly transition. Above the $1.3820 resistance it can push higher if the breakout remains stable 😈

— CRYPTOWZRD (@cryptoWZRD_) March 1, 2026
