The NSPCC said the Police Service of Northern Ireland were included in the data

The number of child sex abuse image crimes logged by police forces across the UK has risen by 9%, prompting renewed calls for tech companies to block nude images from being taken and shared on children’s devices.

Young people continue to face exposure to the risk of grooming, extortion, online abuse and having intimate images shared, the NSPCC said.

The charity said its research had shown that between April 1 2024 and March 31 2025 there were 36,829 recorded offences of indecent and prohibited images of children across the UK.

A total of 42 of 45 UK police forces responded to its Freedom of Information request, and a year-on-year comparison suggested there had been a 9% increase in recorded offences from 33,886 the previous year.

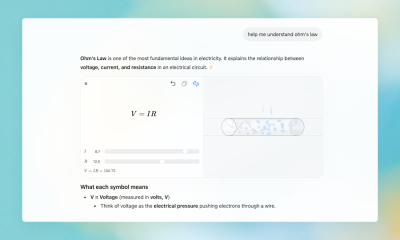

The Government’s strategy to tackle Violence Against Women and Girls (VAWG), published in December, stated an aim to “make it impossible for children in the UK to take, share or view a nude image” and said it was “working constructively with companies to make this a reality”.

But the NSPCC said this must be made mandatory, with the Government urged to take action against tech companies if they fail to embed existing technology on children’s phones that blocks nude images from being created, shared or viewed.

The charity said these “device‑level protections” should be embedded by default, meaning children are automatically protected and adult users could go through a process to opt out.

Such technology can block a nude image from being taken, sent or received on a device. The NSPCC said that because the image is never created or sent in the first place, there is nothing to encrypt, and that this method can stop abuse at source.

The NSPCC said that of the 10,811 crimes where police forces recorded the platform used by perpetrators, 43%, or a total of 4,615, took place on Snapchat.

Overall, Meta platforms still accounted for almost a quarter of all offences (24%), with 8% on Instagram, 7% on WhatsApp, 5% on Facebook and 4% on Messenger, the charity said.

But the NSPCC said that, because of end-to-end encryption, the true scale of abuse children are experiencing online remains “hidden”.

NSPCC chief executive Chris Sherwood said: “Children across the UK are being completely failed by tech companies that should be protecting them online. We cannot keep letting them off the hook when they can do more to prevent this from happening in the first place.”

He added: “Technology already exists that could be deployed today to stop children from taking, sharing or receiving nude images. So, the real question is: what’s stopping them? If they continue to drag their feet, Government must show their might by stepping in and compelling them to act.”

Kerry Smith, chief executive of the Internet Watch Foundation, said the data “should be yet another wake-up call”, adding: “Mandatory introduction of on-device protections will protect children from unsolicited nude imagery, and from being coerced into sending sexually explicit material.

“We must see these measures applied across the board.”

Safeguarding minister Jess Phillips said the data uncovered by the NSPCC was “nothing short of deeply shocking”.

She added: “Predators cannot continue like this – unstopped and unchecked. We plan to stop them.

“We have committed to making it impossible for children in the UK to take, share or view nude images, and have already announced a ban on so‑called ‘nudification’ apps to stop abusive images being created and spread in the first place.

“We will not hesitate to go further until our children are safe from sexual abuse online.”

A spokesperson for Snapchat said: “We work closely with NSPCC and police to help keep our platform safe and combat child sexual exploitation.

“This report does not accurately reflect our efforts to tackle these horrific crimes and fails to recognise that information sent to police (through what are known as CyberTips) helps support their investigations to bring criminals to justice.

“We will continue to do our part because we know that seriously addressing these issues requires collaboration from stakeholders across many segments of our society, including law enforcement, experts, parents, educators, advocates and tech companies.”

Earlier this year it was announced that nudification apps would be criminalised as part of the Crime and Policing Bill, which is currently going through Parliament.

The latest data comes after two watchdogs last week warned big tech it must do more to protect young people online.

Communications regulator Ofcom wrote to Facebook, Instagram, Snapchat and others, giving them until the end of April to explain what actions they are taking on age checks and grooming protections.

Alongside Ofcom’s demands, the Information Commissioner’s Office (ICO) also wrote to Snapchat, Facebook, Instagram, and others asking them to set out how their age assurance policies keep children safe.

– The NSPCC said the Police Service of Northern Ireland and Police Scotland were included in the data, but the forces missing were Gloucestershire, Hampshire and Thames Valley.

