Tech

Aiper Scuba V3 Review: Finally, a pool robot with an actual brain

Instant Insight

I have always maintained that owning a pool is like owning a boat – you will spend 90% of your time maintaining and cleaning it and 10% of your time enjoying it, especially if you live in Oregon as I do. Over the years, we have seen robot cleaners evolve from erratic, cord-tangled wall-bumpers to reliable vacuums, and technology keeps getting better, especially in the age of AI. Priced at $1,199 MSRP (with a street price currently around $970 USD), the Aiper Scuba V3 is not trying to be the cheapest impulse buy at the pool store but instead positions itself as a premium AI-driven assistant that brings the sophisticated navigation of high-end robot vacuums to the bottom of your backyard oasis.

New for 2026, the Aiper Scuba V3 robotic pool cleaner adds new AI features, although it sacrifices some tech to hit its price. The Scuba V3 is currently priced between the top-of-the-line Scuba X1 Pro Max ($1,699) and the Scuba X1 ($899.99), offering newer AI-focused features for just $70 more than the X1. The Scuba V3 is equipped with AI Vision and dToF (Direct Time of Flight) sensors, which let it follow an organized plan rather than bounce recklessly around the pool.

During my tests, the Scuba V3 proved to be a reliable, hardy worker with long battery life. If you already have a pool cleaner that is a few years old and working great, it’s not worth spending money on the Scuba V3, but if you are in the market, then I would recommend jumping into the pool cleaner ecosystem. Paired with the Aiper EcoSurfer S2 skimmer, both of these devices should do the job in keeping your pool spotlessly clean.

Aiper V3 Specifications:

Here is how the Aiper Scuba V3 measures up:

| Specification | Details |
| --- | --- |
| Dimensions (L x W x H) | 17.48 x 14.96 x 8.58 inches |
| Weight (Dry) | 18.1 pounds |
| Suction Power | 4,800 Gallons Per Hour (GPH) |
| Filtration Level | 3-micron MicroMesh™ Multi-layer Filtration |
| Debris Basket Capacity | 3.5 Liters |
| Battery Energy Content | 149.76 Watt Hours (Lithium-ion) |
| Run Time | Up to 150 minutes per charge |
| Charging Time | 5 Hours via Wireless Charging Dock |
| Navigation Technology | AI Patrol, dToF, VisionPath™ Adaptive Planning |
| Drive System | Tank treads with dual scrubbing brushes |
| Cleaning Zones | Floor, Walls, Waterline (JetAssist™) |

Design and Weight: Like a paper tank

Like most pool cleaners on the market, the Scuba V3 uses a tank tread design to move the unit around. And like the rest of the products in the Aiper robot pool cleaner line-up, the casing has a piano black finish that looks high-quality. Rather than the gold or carbon fiber surround found on the more expensive Aiper units, the V3 has some light blue trim, which signals its place as the value option. Dimension-wise, the Scuba V3 is considerably smaller than the Scuba X1 or Scuba X1 Pro Max, which are not only taller, longer, and wider, but also considerably heavier.

The comparison further down shows the weight of the Scuba V3 against others in its price range – it comes in on the lighter side (when not wet), which is nice for those who really have trouble pulling these cleaners out of their pool. Aiper sells a caddie to help you transport its pool cleaners to and from the pool, but I would surmise that most people can skip the caddie, as the V3 is pretty light.

I found that the tank treads did a great job moving the V3 around my pool, and they stuck to the side of the pool without issue, despite suction lower than that of higher-end models. Underneath, you have four scrubbing brushes – two in the front and two in the back – that do a good job agitating algae, mineral deposits, and debris before the suction kicks in. The debris is funneled into a newly designed 3.5-liter collection basket wrapped in a MicroMesh filter.

The overall build quality feels premium; the plastics are thick, the moving parts feel solid, and there are no flimsy latches that feel destined to snap off after a single summer in the sun. I noted in my Beatbot Aquasense 2 Ultra review that they put extra screws and parts in the box, which is a clear sign to me that something is going to wear out.

The Aiper Scuba V3 is a thoughtful and rugged piece of engineering.

When you are dealing with robotic pool cleaners, dry weight directly correlates to user experience, specifically, how miserable it is to pull the machine out of the water once it has finished its cleaning cycle. Here is how the competitive landscape breaks down:

- The Featherweights (Under 20 lbs): The Aiper Scuba V3 (18.1 lbs) and the Dolphin Liberty 400 (17.9 lbs) are the clear winners here. Aiper managed to pack the V3 with a complex AI vision system and heavy-duty tank treads without inflating its mass. It is incredibly easy to retrieve one-handed using the included hook. We also included the corded Dolphin Nautilus CC Plus (20.8 lbs) as a baseline to show that premium cordless tech doesn’t necessarily mean a heavier machine.

- The Middleweights (23 to 25 lbs): The highly anticipated Beatbot Sora 70 (23.0 lbs) sits right in the middle of the pack. While it is about five pounds heavier than the Scuba V3, that extra weight is justified by its internal buoyancy chambers, which allow it to float up and clean the surface of the water (a feature the V3 lacks). The older Aiper Scuba S1 Pro (25.0 lbs) and Beatbot Aquasense Pro (24.3 lbs) also live in this tier, representing the maximum weight most users can comfortably lift without straining their backs.

- The Heavyweight (30+ lbs): Aiper’s flagship model, the Scuba X1 Pro Max (33.1 lbs), is an absolute behemoth. While it offers a staggering 5-hour battery life and 8,500 GPH of suction, pulling 33 pounds of dead weight (plus trapped water) out of the deep end is a genuine physical workout.

Ultimately, the Scuba V3 strikes a near-perfect balance, offering premium AI navigation in a chassis light enough that anyone in the family can confidently deploy and retrieve it.

Navigation: The most important part of any robot cleaner

I get asked a lot about what makes one pool cleaner better than another, and the answer comes down to two questions: Does it clean the pool to your satisfaction, and is it low maintenance? Sounds simple, but as you know, it’s not that easy. Pools come in a lot of shapes and depths, so to get a pool clean, you need a good brain to tell the cleaner how to navigate (and you need long battery life, too).

Powered by what Aiper calls its “Cognitive AI Navium Mode” and “VisionPath Adaptive Path Planning,” this robot uses an integrated underwater camera combined with dToF (Direct Time of Flight) optical sensors. Think of dToF as a form of laser radar; it sends out light pulses and measures how long they take to bounce back, creating a highly accurate 3D map of your pool’s interior. When you drop the Scuba V3 into the water, it doesn’t just wander. It assesses the shape of the pool, detects obstacles with its optical sensors, and plans a precise, overlapping, lawnmower-style route.
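To make the time-of-flight idea concrete, here is a minimal sketch of the arithmetic behind any dToF sensor: distance is the speed of light in the medium multiplied by half the round-trip time. This is not Aiper's firmware. The function names are made up for illustration, and the refractive index of 1.33 for water is an assumed typical value (the exact figure depends on the wavelength the sensor uses).

```python
# Illustrative only: the basic time-of-flight distance calculation,
# not Aiper's actual implementation.

C_VACUUM_M_PER_S = 299_792_458.0   # speed of light in vacuum
WATER_REFRACTIVE_INDEX = 1.33      # assumption: typical value for fresh water


def tof_distance_m(round_trip_s: float,
                   refractive_index: float = WATER_REFRACTIVE_INDEX) -> float:
    """Distance to a surface, given how long a light pulse took to go out and back."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    speed_in_medium = C_VACUUM_M_PER_S / refractive_index
    # Divide by two because the pulse covers the distance twice.
    return speed_in_medium * round_trip_s / 2.0


def round_trip_time_s(distance_m: float,
                      refractive_index: float = WATER_REFRACTIVE_INDEX) -> float:
    """Inverse of tof_distance_m: how long the pulse takes for a given distance."""
    return 2.0 * distance_m * refractive_index / C_VACUUM_M_PER_S


if __name__ == "__main__":
    t = round_trip_time_s(2.0)  # a wall 2 meters away
    print(f"round trip: {t * 1e9:.2f} ns")
    print(f"recovered distance: {tof_distance_m(t):.3f} m")
```

The takeaway is the time scale: for a wall two meters away, the pulse returns in under 20 nanoseconds, which is why these sensors need very fast timing electronics.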

But the really cool trick is the “AI Patrol” mode. I actively tested this by tossing a handful of fine potting soil and a few sunken leaves into the deep end. The Scuba V3’s camera has a 2-meter detection range and is trained to recognize over 20 different types of debris. As it cruised nearby, I literally watched the robot alter its path, turn directly toward the dirt pile, and suck it up before resuming its standard grid.

It was like watching a predator spot its prey.

There is a very visible difference in how the Scuba V3 navigates compared to the Scuba X1, for example. The V3 looks very “aware,” almost like a living being; it’s creepy at first. Furthermore, Aiper equipped the front of the unit with dual LED headlights. This allows the AI vision system to function perfectly during night cleanings, illuminating the murky depths so it never loses its way. And for the privacy-conscious, Aiper guarantees zero image storage and zero image upload – what happens in your pool, stays in your pool.

Performance: Suck it up, kid

In all my robot pool cleaner testing, I am still wondering what the point of diminishing returns is when it comes to gallons per hour (GPH) of suction. Spend more on a pool cleaner and get a higher suction rate, but what is the minimum you need for good performance in the category? I have yet to find that out. The Scuba V3 measures in at 4,800 GPH, which isn’t nearly at the top of its class, but not weak either. The higher-end and slightly more expensive Scuba X1 comes in at 6,600 GPH, which feels like A LOT more compared to the V3, but in my tests, the Scuba V3 did just fine.

During my two-week testing period, my pool was subjected to a barrage of spring pollen, wind-blown sand, and the inevitable pile-up of leaves. The V3 offers multiple cleaning modes, but “Auto” (which hits the floor, walls, and waterline) and “AI Patrol” were my easy-option choices. Let’s talk about the filtration first. The basket utilizes a 3-micron MicroMesh filter. For context, a single strand of human hair is about 70 microns thick.

This mesh is so fine that it doesn’t just trap leaves and twigs; it captures that incredibly annoying, cloudy silt and fine sand that usually blows right through the exhaust of cheaper robotic vacuums. You can pull the micro-mesh filter out and use the standard filter if you want. I’m located here in Western Oregon, where I do not need to deal with sand or fine debris, as you might get in Arizona or Nevada, so I typically stick with the standard filter.

Wall climbing is where the Scuba V3 truly shows off. It scales the vertical walls of my pool effortlessly. But the standout feature is the JetAssist™ horizontal waterline cleaning. Many robots will climb a wall, poke their nose out of the water, and fall back down. The V3 climbs up to the waterline and then uses a directed jet of water to push itself horizontally along the pool tile, vigorously scrubbing the scum line with its dual brushes.

It looks like it is defying gravity.

It did a solid job of cleaning the waterline, cleaning about 1 inch higher up on the side; it literally hit the brick surround that hangs over the pool. One area that the V3 needs help with (and most pool cleaners do) would be the stairs. The Scuba V3 would make it up the first step no problem, then struggle with the second on occasion. I still had to manually clean the stairs every couple of weeks to finish the job thoroughly, though.

It is important to understand where the Scuba V3 sits in terms of raw power. Here is a quick visual breakdown of how it compares to its direct competitors:

- Aiper Scuba X1 Pro Max: 8,500 GPH

- Aiper Scuba S1 Pro: 6,000 GPH

- Beatbot Aquasense Pro: 5,500 GPH

- Aiper Scuba V3: 4,800 GPH

- Dolphin Nautilus CC Plus: 4,500 GPH

The Aiper App and connectivity: Now with a weather forecast

I’ve always liked the Aiper app and find that it makes the company’s products easy to operate. It’s also easy to set up a new Aiper device with the app. Like their previous products, you need to install the app, put the Scuba V3 into Bluetooth mode, connect the device to the app, and then set up Wi-Fi. 9/10 on the ease of use scale.

I find the interface to be clean and functional. It’s easy to find instructions and support for your product through the app in the event that you throw out the setup guide. Aiper calls their app AI Navium because it’s an “advanced, cognitive AI mode designed for intelligent pool and yard management”. The key selling points by Aiper include:

- Cognitive Cleaning plans: It will generate weekly cleaning plans based on AI analysis

- Weather/History Sync: Analyzes local weather and past cleaning logs to determine optimal cleaning times

- Vision Path Integration: Combines AI vision and dToF (direct time of flight) sensors for precise navigation

- Smart Yard management: You can store different yards and products so that the system can schedule devices based on yards.

AI Navium is an attempt by Aiper to get you to buy into their entire ecosystem of products, from sprinkler systems to pool cleaners and pool skimmers, so they can fully automate your yard. I can’t really give you a detailed review of the AI Navium ecosystem based on a couple of products.

I love the idea of scheduling based on the weather, but it feels more gimmicky than anything. For me, it’s as simple as dumping the cleaner into the pool and coming back a few hours later and expecting the pool to be clean. How the cleaner does that isn’t really important to me.

I want to point out, like I do for all of my pool cleaner reviews, that once the cleaner is submerged, you will lose the Wi-Fi connection to it. Wi-Fi signals will not travel through water unless you have a special Wi-Fi communication device like the Aiper HydroComm, which will set you back $300-$400.

The Aiper HydroComm product not only extends Wi-Fi to your submerged cleaner, but also gives you pool chemical readings so you know if you need to add more chemicals to your pool. You decide if you need something like that. For me, personally, I am not changing the cleaning settings mid-cycle, so I am perfectly fine without a Wi-Fi connection while it’s underwater.

Skimming off the top

The Aiper Scuba V3 does have one feature missing that might be important to a lot of people – the ability to skim the top of the water to get floating debris. Here is why I don’t think this is that important: I would prefer to have a dedicated skimmer like the Aiper EcoSurfer S2 than to have it built into the pool cleaner itself. Once my pool bottom and walls are clean, I will pull the cleaner out, but I like to keep the EcoSurfer S2 running all day and sometimes all night.

Since it’s powered by solar, the battery literally never runs out, so you have a product that will likely suck up the debris before it hits the bottom of the pool. It’s like preventative maintenance, and I think the Aiper EcoSurfer S2 is the best skimmer on the market. Aiper sells both the Scuba V3 and the EcoSurfer S2 together in a package that saves you around a hundred bucks; that’s what I would personally recommend.

If you want a pool cleaner that also skims on the top, there are plenty to choose from, but I highly recommend you get one with long battery life so it has plenty of time to clean the surface. Larger pools might give your pool cleaner an impossible challenge in this department if you do not size up the cleaner’s battery with your pool. You can read my Aiper Surfer S2 review if you want to know more about it.

Battery life

Battery life is what will really matter to you, especially if you have a larger pool. In my real-world testing, a full charge reliably delivered around 140 to 150 minutes of continuous cleaning. For my standard 15,000-gallon pool, this was more than enough time for the V3 to meticulously scrub the entire floor, climb every wall, and trace the entire waterline. Once the V3 was finished with the floor and walls, I had about 30 minutes of battery life left over. That is not enough for another cleaning before a recharge, but it is enough for me to run some errands knowing the robot is still floating at the surface (the Scuba V3 finds an edge of the pool and floats there, thanks to its fans, until you pick it up).

Aiper packs a wireless charging dock with the Scuba V3, which lets you just set the robot on the dock without plugging anything into it. Typically, only more expensive robot cleaners come with a dock like this. The Beatbot Sora 70, for example, doesn’t come with a wireless dock and has a price tag of over $300 more. Using the charging dock, the Aiper Scuba V3 took a few hours to reach a full charge – pretty standard.

The comparison that follows looks at how the Aiper Scuba V3’s battery life stacks up against leading cordless robotic pool cleaners in the $900 to $1,900 price bracket.

As the data shows, the Aiper Scuba V3 ($949) sits squarely in the middle of the pack with its 150-minute run time. Here is a breakdown of what that means for your purchasing decision:

- The Direct Competitors: The Scuba V3 goes toe-to-toe with the Polaris Freedom, which typically retails for around $1,300 and offers an identical 150-minute battery life. However, the V3 heavily outperforms the similarly priced Dolphin Liberty 400 (~$1,200), which taps out after just 90 minutes of cleaning.

- The Budget Alternative: Interestingly, Aiper’s own older model, the Scuba S1 Pro ($549), actually delivers 30 more minutes of runtime (180 minutes total) for less money. While you sacrifice the V3’s advanced AI Vision navigation and wireless charging dock by dropping down to the S1 Pro, it remains a fantastic option if sheer battery longevity on a budget is your top priority.

- The Premium Upgrades: If you have an exceptionally large pool that demands marathon cleaning sessions, you will have to pay for it. The Beatbot Aquasense Pro ($1,861 and one of my favorites) pushes past the 3-hour mark with 205 minutes of bottom-cleaning endurance, while Aiper’s flagship Scuba X1 Pro Max ($1,830 and another favorite of mine) dwarfs the competition with an astonishing 300 minutes (5 hours) of battery life on a single charge.

Ultimately, while the Scuba V3 doesn’t claim the crown for the longest-lasting battery on the market, 150 minutes is more than sufficient for the average 15,000-to-20,000-gallon residential pool.

Durability and Warranty

When you drop $1,199 on a piece of technology that lives underwater, you want absolute confidence that it isn’t going to short out or fall apart after a few months. The Aiper Scuba V3 feels incredibly robust. The outer shell is made of a high-impact, UV-resistant plastic that showed absolutely no signs of fading or chalking despite sitting out in the sun for hours on end. The tank treads are thick rubber, showing minimal wear even after aggressively scrubbing abrasive pool plaster for two weeks.

Internally, the brushless motors are sealed tightly, and the elimination of the physical charging port via the new wireless dock removes the most common point of failure for underwater electronics (water leaking into the battery compartment).

Aiper backs the Scuba V3 with a comprehensive 2-year warranty. In the world of pool robotics, 2 years is the standard benchmark, though some higher-priced competitors (like Beatbot) stretch to 3 years. Aiper’s customer service has built a solid reputation over the last few years, offering 24/7 support and a 30-day free return window if the robot simply doesn’t gel with your pool’s specific layout. Furthermore, Aiper regularly pushes over-the-air firmware updates via the AI Navium app, ensuring the robot’s navigation algorithms continue to improve over time.

Full disclosure on my part: I only had the Aiper Scuba V3 for about a month, and while I had no issues with reliability, one month isn’t nearly long enough to test a pool cleaner in my opinion. So I’ll come back to the review and update it after I have the Scuba V3 for a while longer. I would recommend checking out the customer reviews on their website and any user reviews that might show up on Amazon, Google, and Reddit.

Should you buy the Aiper Scuba V3?

If you are in the market for a new pool cleaner, I would highly recommend the Scuba V3 and the EcoSurfer S2. With both products, you will have a spotless pool in no time. I think the Scuba V3 is a great value for the price; you get an effective cleaner built by a supportive company, a wireless charging dock, and a very intuitive app.

How I Tested The Aiper Scuba V3

To evaluate the Aiper Scuba V3, I used it as my exclusive pool cleaning solution for 14 consecutive days in a 15,000-gallon, rectangle-shaped, in-ground plaster pool located in a high-wind environment prone to heavy debris. Testing involved subjecting the robot to both high-load days (deliberately dumping measured amounts of fine potting soil, sand, and larger cherry tree leaves into the deep end) and low-load days featuring standard ambient dust and bugs.

I tested the robot in all available app modes, closely monitoring the AI Patrol’s ability to recognize and divert toward specific debris clusters. Battery runtimes were measured from the moment the robot submerged to the exact moment it engaged its Smart Waterline Parking feature. Navigational efficiency was visually tracked to ensure overlapping floor coverage without repeated blind spots, and the wireless charging dock was evaluated for ease of use and consistent charging times in an uncovered outdoor environment.


Amazon sued by YouTubers for allegedly scraping their content to train AI video tool

A trio of YouTube producers filed a class action lawsuit against Amazon alleging the tech giant illegally used content from the video platform to train and improve its Nova Reel generative AI model.

The suit, filed Friday in U.S. District Court for the Western District of Washington in Seattle, describes how Amazon allegedly used datasets earmarked only for academic use, circumvented YouTube’s copyright protection measures, and scraped video content. KING5 first reported on the suit.

“In a world where Defendant and others can circumvent technological protections to exploit copyrighted works without authorization with impunity, creators will be less likely to make their creations available on YouTube and other similar platforms, for fear of losing all control of them,” the plaintiffs state in their suit. “The world will be poorer for it.”

Plaintiffs are seeking damages, restitution and injunctive relief, claiming Amazon violated the Digital Millennium Copyright Act.

An Amazon spokesperson declined to comment on the matter, citing ongoing litigation.

Amazon released its Nova foundation models in 2024 via AWS Bedrock. The Nova Reel model can take text prompts and images and turn them into short videos, with features including watermarking.

According to the suit, Amazon deployed automated download tools paired with virtual machines that cycled through IP addresses to avoid being blocked, enabling the unauthorized extraction of data from millions of videos.

The named plaintiffs include:

- Ted Entertainment, Inc. (TEI), a California-based media company owned by Ethan and Hila Klein with more than 5,800 videos on YouTube with a combined total of more than 4 billion views. TEI channels include h3h3 Productions and H3 Podcast Highlights.

- Matt Fisher, a California-based YouTuber who runs the MrShortGame Golf channel that provides instructional videos and has more than 500,000 subscribers.

- Golfholics, a golf-focused YouTube channel with more than 130,000 subscribers and millions of views.

The suit argues the plaintiffs have no way to recover intellectual property already used to train Amazon’s models. “Once AI ingests content, that content is stored in its neural network and not capable of deletion or retraction,” it states.

Dozens of similar cases are working their way through courts nationwide. Among them: the New York Times’ lawsuit against OpenAI and Microsoft, a class action by authors against Microsoft, and a suit from musicians with YouTube content against Google.

Separate lawsuits against Anthropic and music-generation startup Suno over the alleged unauthorized use of books and music in AI training have since settled. A case brought by authors against Meta was dismissed.


How Quiet Failures Are Redefining AI Reliability

In late-stage testing of a distributed AI platform, engineers sometimes encounter a perplexing situation: every monitoring dashboard reads “healthy,” yet users report that the system’s decisions are slowly becoming wrong.

Engineers are trained to recognize failure in familiar ways: a service crashes, a sensor stops responding, a constraint violation triggers a shutdown. Something breaks, and the system tells you. But a growing class of software failures looks very different. The system keeps running, logs appear normal, and monitoring dashboards stay green. Yet the system’s behavior quietly drifts away from what it was designed to do.

This pattern is becoming more common as autonomy spreads across software systems. Quiet failure is emerging as one of the defining engineering challenges of autonomous systems because correctness now depends on coordination, timing, and feedback across entire systems.

When Systems Fail Without Breaking

Consider a hypothetical enterprise AI assistant designed to summarize regulatory updates for financial analysts. The system retrieves documents from internal repositories, synthesizes them using a language model, and distributes summaries across internal channels.

Technically, everything works. The system retrieves valid documents, generates coherent summaries, and delivers them without issue.

But over time, something slips. Maybe an updated document repository isn’t added to the retrieval pipeline. The assistant keeps producing summaries that are coherent and internally consistent, but they’re increasingly based on obsolete information. Nothing crashes, no alerts fire, every component behaves as designed. The problem is that the overall result is wrong.

From the outside, the system looks operational. From the perspective of the organization relying on it, the system is quietly failing.

The Limits of Traditional Observability

One reason quiet failures are difficult to detect is that traditional systems measure the wrong signals. Operational dashboards track uptime, latency, and error rates, the core elements of modern observability. These metrics are well-suited for transactional applications where requests are processed independently, and correctness can often be verified immediately.

Autonomous systems behave differently. Many AI-driven systems operate through continuous reasoning loops, where each decision influences subsequent actions. Correctness emerges not from a single computation but from sequences of interactions across components and over time. A retrieval system may return information that is technically valid but contextually inappropriate. A planning agent may generate steps that are locally reasonable but globally unsafe. A distributed decision system may execute correct actions in the wrong order.

None of these conditions necessarily produces errors. From the perspective of conventional observability, the system appears healthy. From the perspective of its intended purpose, it may already be failing.

Why Autonomy Changes Failure

The deeper issue is architectural. Traditional software systems were built around discrete operations: a request arrives, the system processes it, and the result is returned. Control is episodic and externally initiated by a user, scheduler, or external trigger.

Autonomous systems change that structure. Instead of responding to individual requests, they observe, reason, and act continuously. AI agents maintain context across interactions. Infrastructure systems adjust resources in real time. Automated workflows trigger additional actions without human input.

In these systems, correctness depends less on whether any single component works, and more on coordination across time.

Distributed-systems engineers have long wrestled with issues of coordination. But this is coordination of a new kind. It’s no longer about things like keeping data consistent across services. It’s about ensuring that a stream of decisions—made by models, reasoning engines, planning algorithms, and tools, all operating with partial context—adds up to the right outcome.

A modern AI system may evaluate thousands of signals, generate candidate actions, and execute them across a distributed infrastructure. Each action changes the environment in which the next decision is made. Under these conditions, small mistakes can compound. A step that is locally reasonable can still push the system further off course.

Engineers are beginning to confront what might be called behavioral reliability: whether an autonomous system’s actions remain aligned with its intended purpose over time.

The Missing Layer: Behavioral Control

When organizations encounter quiet failures, the initial instinct is to improve monitoring: deeper logs, better tracing, more analytics. Observability is essential, but it only shows that the behavior has already diverged—it doesn’t correct it.

Quiet failures require something different: the ability to shape system behavior while it is still unfolding. In other words, autonomous systems increasingly need control architectures, not just monitoring.

Engineers in industrial domains have long relied on supervisory control systems. These are software layers that continuously evaluate a system’s status and intervene when behavior drifts outside safe bounds. Aircraft flight-control systems, power-grid operations, and large manufacturing plants all rely on such supervisory loops. Software systems historically avoided them because most applications didn’t need them. Autonomous systems increasingly do.

Behavioral monitoring in AI systems focuses on whether actions remain aligned with intended purpose, not just whether components are functioning. Instead of relying only on metrics such as latency or error rates, engineers look for signs of behavior drift: shifts in outputs, inconsistent handling of similar inputs, or changes in how multi-step tasks are carried out. An AI assistant that begins citing outdated sources, or an automated system that takes corrective actions more often than expected, may signal that the system is no longer using the right information to make decisions. In practice, this means tracking outcomes and patterns of behavior over time.
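As a rough illustration of tracking one such behavioral signal, the sketch below watches how often an assistant's recent outputs cite sources older than a freshness limit. Everything here is hypothetical: the class name `StaleSourceMonitor`, the 90-day limit, the window size, and the alert threshold are assumptions chosen for the example, not values from any real system.

```python
from collections import deque
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class StaleSourceMonitor:
    """Rolling check on how many recent outputs cite outdated sources."""

    max_source_age: timedelta = timedelta(days=90)  # assumed freshness limit
    window: int = 50                                # recent outputs to keep
    alert_ratio: float = 0.2                        # assumed drift threshold
    _recent: deque = field(init=False, repr=False)

    def __post_init__(self) -> None:
        # Bounded deque: old observations fall off automatically.
        self._recent = deque(maxlen=self.window)

    def record(self, cited_dates: list[date], today: date) -> None:
        """Record one output; it counts as stale if any cited source is too old."""
        stale = any(today - d > self.max_source_age for d in cited_dates)
        self._recent.append(stale)

    def stale_ratio(self) -> float:
        """Fraction of outputs in the window that cited a stale source."""
        if not self._recent:
            return 0.0
        return sum(self._recent) / len(self._recent)

    def drifting(self) -> bool:
        """True when the stale fraction reaches the alert threshold."""
        return self.stale_ratio() >= self.alert_ratio


if __name__ == "__main__":
    monitor = StaleSourceMonitor(window=4, alert_ratio=0.5)
    today = date(2026, 3, 1)
    monitor.record([date(2026, 2, 1)], today)   # fresh source
    monitor.record([date(2025, 1, 1)], today)   # stale source
    print(monitor.stale_ratio(), monitor.drifting())
```

Note that no individual output here raises an error. The signal only appears when outcomes are aggregated over time, which is exactly what uptime and latency dashboards miss.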

Supervisory control builds on these signals by intervening while the system is running. A supervisory layer checks whether ongoing actions remain within acceptable bounds and can respond by delaying or blocking actions, limiting the system to safer operating modes, or routing decisions for review. In more advanced setups, it can adjust behavior in real time—for example, by restricting data access, tightening constraints on outputs, or requiring extra confirmation for high-impact actions.
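A supervisory layer of this kind can be sketched as a small gate that every proposed action passes through before it runs. The names (`Supervisor`, `Action`, `Verdict`), the `impact` and `confidence` scores, and the thresholds are all assumptions made for illustration. A real system would define its own bounds and its own notion of a high-impact action.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    REVIEW = "review"   # route the decision to a human
    BLOCK = "block"


@dataclass(frozen=True)
class Action:
    name: str
    impact: float       # hypothetical 0..1 score: how consequential the action is
    confidence: float   # hypothetical 0..1 score reported by the agent


@dataclass
class Supervisor:
    safe_mode: bool = False          # tightened operating mode
    min_confidence: float = 0.5      # below this, the action is blocked
    review_impact: float = 0.7       # normal threshold for human review
    safe_review_impact: float = 0.3  # stricter threshold while in safe mode

    def check(self, action: Action) -> Verdict:
        """Decide whether a proposed action may run, needs review, or is blocked."""
        if action.confidence < self.min_confidence:
            return Verdict.BLOCK
        threshold = self.safe_review_impact if self.safe_mode else self.review_impact
        if action.impact >= threshold:
            return Verdict.REVIEW
        return Verdict.ALLOW


if __name__ == "__main__":
    supervisor = Supervisor()
    print(supervisor.check(Action("send_summary", impact=0.2, confidence=0.9)))
    print(supervisor.check(Action("purge_archive", impact=0.9, confidence=0.9)))
    supervisor.safe_mode = True  # e.g. switched on after a drift signal
    print(supervisor.check(Action("update_index", impact=0.4, confidence=0.9)))
```

The design choice worth noting is that the gate sits outside the agent: the agent proposes, the supervisor disposes, and switching to safe mode changes behavior without retraining or redeploying anything.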

Together, these approaches turn reliability into an active process. Systems don’t just run, they are continuously checked and steered. Quiet failures may still occur, but they can be detected earlier and corrected while the system is operating.

A Shift in Engineering Thinking

Preventing quiet failures requires a shift in how engineers think about reliability: from ensuring components work correctly to ensuring system behavior stays aligned over time. Rather than assuming that correct behavior will emerge automatically from component design, engineers must increasingly treat behavior as something that needs active supervision.

As AI systems become more autonomous, this shift will likely spread across many domains of computing, including cloud infrastructure, robotics, and large-scale decision systems. The hardest engineering challenge may no longer be building systems that work, but ensuring that they continue to do the right thing over time.



AI is making us faster, more productive, and worse at thinking

AI is everywhere, the pressure to adopt it is relentless, and the evidence that it’s making us smarter is getting thinner by the quarter.

On New Year’s Day 2026, a programmer named Steve Yegge launched an open-source platform called Gas Town. It lets users orchestrate swarms of AI coding agents simultaneously, assembling software at speeds no single human could match.

One of the first people to try it described the experience in terms that had nothing to do with productivity. “There’s really too much going on for you to comprehend reasonably,” he wrote. “I had a palpable sense of stress watching it.”

That sentence should be pinned to the wall of every executive suite, every venture capital boardroom, and every CES keynote stage where the word “intelligence” is thrown around like confetti. Because something strange is happening in the relationship between humans and the technology we keep calling intelligent.

The machines are getting faster. The humans interacting with them are getting more exhausted, more anxious, and, by several measures, less capable of the one thing intelligence was supposed to enhance: thinking clearly.

The pressure to adopt AI is now so pervasive that it has developed its own vocabulary of coercion.

You need to have AI.

You need to use AI.

You need to buy AI.

Your competitors are already using it.

Your children will fall behind without it.

The language does not come from engineers quietly solving problems. It comes from earnings calls, product launches, and LinkedIn posts written with the manic energy of people who have confused selling a product with describing reality.

In January 2026, at the World Economic Forum in Davos, Microsoft CEO Satya Nadella offered a phrase so revealing it deserves to be studied as a cultural artefact. He warned that AI risked losing its “social permission” to consume vast quantities of energy unless it started delivering tangible benefits to people’s lives.

The framing was striking: not a question of whether the technology works, but of whether the public can be kept on board while the industry figures out if it does. Nadella called AI a “cognitive amplifier,” offering “access to infinite minds.”

A month later, a Circana survey of US consumers found that 35 per cent of them did not want AI on their devices at all. The top reason was not confusion or technophobia. It was simpler than that. They said they did not need it.

The gap between the rhetoric and the evidence has become difficult to ignore. In March 2026, Goldman Sachs published an analysis of fourth-quarter earnings data and found, in the words of senior economist Ronnie Walker, “no meaningful relationship between productivity and AI adoption at the economy-wide level.”

The bank noted that a record 70 per cent of S&P 500 management teams had discussed AI on their earnings calls. Only 10 per cent had quantified its impact on specific use cases. One per cent had quantified its impact on earnings. Meanwhile, the five largest US technology companies were collectively expected to spend $667 billion on AI infrastructure in 2026, a 62 per cent increase over the previous year.

The National Bureau of Economic Research described the situation as a “productivity paradox”: perceived gains larger than measured ones.

There are real productivity improvements, but they are strikingly narrow. Goldman found a median gain of around 30 per cent in two specific areas: customer support and software development. Outside those domains, the evidence for broad improvement was, in the bank’s assessment, essentially absent. The promised revolution, for now, is happening in two rooms of a very large house.

What is happening in those rooms, though, is worth examining closely, because even where AI delivers, something else appears to be breaking.

In February 2026, researchers at UC Berkeley’s Haas School of Business published findings from an eight-month study embedded at a 200-person US technology firm. They found that AI did not reduce workloads. It intensified them. Tasks got faster, so expectations rose. Expectations rose, so the scope expanded. Scope expanded, so workers took on responsibilities that had previously belonged to other roles. Product managers began writing code. Researchers took on engineering work. Role boundaries dissolved because the tools made it feel possible, and then the exhaustion arrived.

I got tired just writing it.

The researchers identified a cycle they called “workload creep”: a gradual accumulation of tasks that goes unnoticed until cognitive fatigue degrades the quality of every decision.

Harvard Business Review gave the phenomenon a blunter name: “AI brain fry.” A Boston Consulting Group study of nearly 1,500 US workers found that 14 per cent of those using AI tools requiring significant oversight reported experiencing it, a distinct form of mental fog characterised by difficulty focusing, slower decision-making, and headaches after extended AI interaction.

The workers most affected were not the sceptics or the laggards. They were the enthusiastic adopters, the ones who had done exactly what every keynote told them to do.

The distribution of this exhaustion is not random. Sixty-two per cent of associates and 61 per cent of entry-level workers reported AI-related burnout, according to the Harvard Business Review study.

Among C-suite executives, the figure dropped to 38 per cent. The pattern is consistent with what anyone who has spent time in an organisation could have predicted: the people who make the strategic decisions about AI adoption are not the people who manage its outputs, clean up its errors, and switch between its tools eight hours a day.

All of this raises a question that the industry would prefer to skip over: what, exactly, do we mean when we use the word “intelligence”?

The term “artificial intelligence” was coined in 1956 at a workshop at Dartmouth College, and it has been doing a particular kind of ideological work ever since. By naming the field after a human quality, its founders made a move that was as much marketing as science. It invited us to see computation as cognition, pattern-matching as understanding, speed as wisdom.

Every time a product is described as “intelligent,” it borrows from the emotional weight of a word that, for most of human history, meant something like the capacity for judgement, reflection, and the ability to sit with uncertainty long enough to think clearly about it.

That is not what these systems do. What they do, often brilliantly, is statistical prediction at an extraordinary scale. They recognise patterns in data, generate plausible continuations of sequences, and optimise for objectives defined by their designers.

This is genuinely useful. It is not intelligence in the sense that any philosopher, psychologist, or, for that matter, any thoughtful person on the street would recognise. The slippage between the two meanings is not accidental. It is the engine of the entire commercial project.

Here is the deepest irony: in the rush to surround ourselves with artificial intelligence, we appear to be eroding the conditions under which actual human intelligence operates. Intelligence, the real kind, requires things that the AI economy is systematically destroying: uninterrupted attention, tolerance for ambiguity, the willingness to sit with a problem before reaching for a solution, and the cognitive space to doubt, reconsider, and change one’s mind.

Researchers at the London School of Economics argued in a February 2026 paper that the manufactured urgency around AI narrows the space for democratic deliberation itself, collapsing the future into a single inevitability and leaving no room for the slow, uncertain, distinctly human process of deciding together what we actually want.

There is something almost comic about the situation.

We have built machines that can process language, generate images, and write code at superhuman speed, and the people using them are reporting mental fog, difficulty concentrating, and a growing inability to think.

A senior engineering manager cited in the BCG study described juggling multiple AI tools to weigh technical decisions, generate drafts, and summarise information. The constant switching and verification created what he called “mental clutter.” His effort had shifted from solving the core problem to managing the tools.

Not everyone is compliant. A third of consumers have looked at the AI being pushed into their phones and laptops and said, plainly, no. Workers whose organisations value work-life balance report 28 per cent lower AI fatigue, according to BCG’s research, which suggests the problem is less about the technology itself than about the culture of compulsive adoption wrapped around it.

The question is not whether AI is useful. In certain applications, it clearly is. The question is whether the frenzy surrounding it, the relentless pressure to adopt, integrate, and accelerate, is making us smarter or just making us more compliant.

Sixty-seven billion dollars in quarterly investment. Record mentions on earnings calls. Entire conferences dedicated to the word “intelligence.”

And in a January survey, the most common reason a human being gave for not wanting any of it was four words long: I don’t need it. That sentence, quiet and unimpressed, may be the most intelligent thing anyone has said about AI in years. The question now is whether we still have the attention span to hear it.

Tech

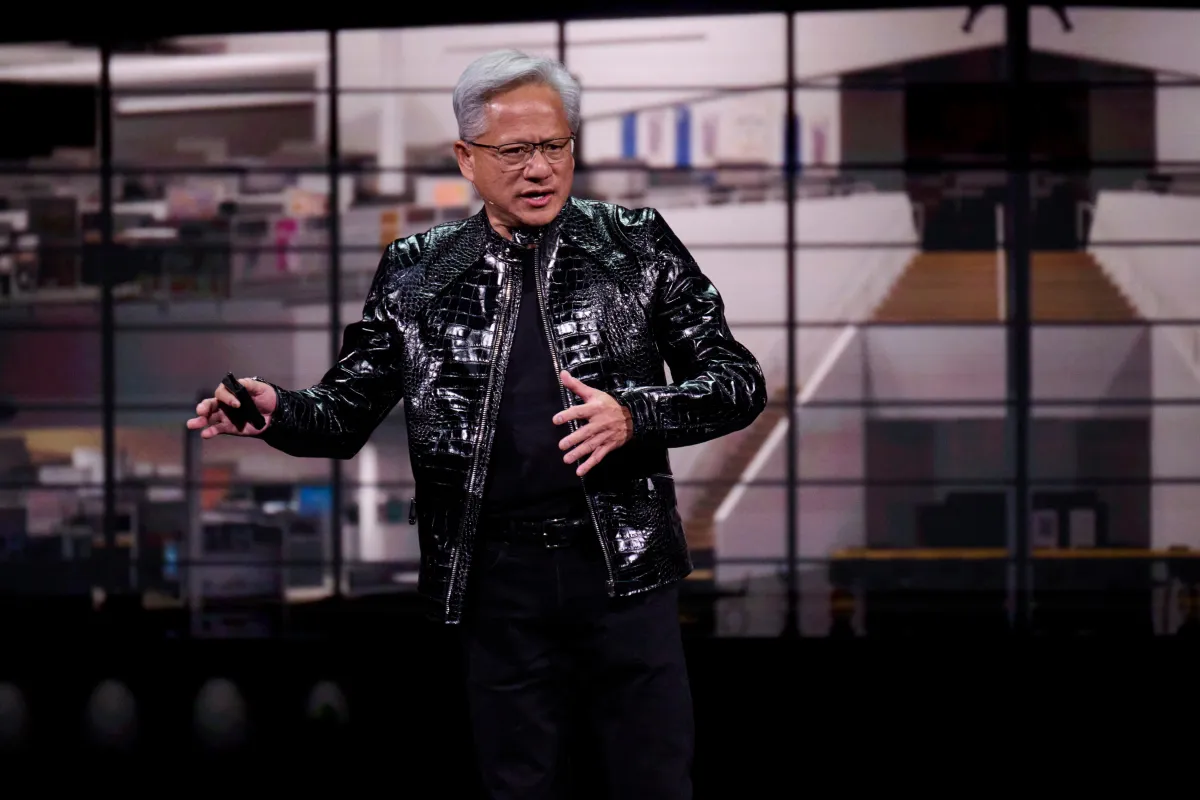

Nvidia-backed SiFive hits $3.65 billion valuation for open AI chips

SiFive, a company founded in 2015 by the UC Berkeley engineers who created an open source chip design, has landed a $400 million oversubscribed round that values the company at $3.65 billion.

This deal is interesting for a bunch of reasons. For one, SiFive’s open chip designs are based on the RISC-V architecture, not Intel’s x86 or Arm, the two major types of CPUs that currently feed Nvidia’s GPU-based AI empire.

Also, Nvidia was an investor in this round, alongside a long list of VCs, private equity, and hedge funds. The round was led by Atreides Management, founded by former Fidelity investor bigwig Gavin Baker. (Atreides was also an investor in Cerebras Systems’ $1 billion round.) Other investors in the round include Apollo Global Management, D1 Capital Partners, Point72 Turion, T. Rowe Price, Sutter Hill Ventures, and others.

SiFive’s business model is like Arm’s was in years gone by: it licenses its chip designs to those who modify them for their own needs and does not sell the chips themselves. (In March, Arm changed its model when it launched the first-ever chip it manufactured, an AI chip developed with Meta, with customers including OpenAI, Cerebras, and Cloudflare.)

SiFive stands in rarefied air with chip designs that are open, not proprietary, as well as neutral, not reliant on specific customers. In fact, SiFive hasn’t raised since March 2022, Pitchbook estimates, when it brought in $175 million led by Coatue Management at a pre-money valuation of $2.33 billion. Intel Capital, Qualcomm Ventures, and Aramco Ventures were part of that round.

RISC-V has been, until recently, better known as a chip for smaller uses, like embedded systems. But with this cash and Nvidia’s attention, SiFive is moving into CPUs for AI data centers. SiFive’s designs will work with Nvidia’s CUDA software and its NVLink Fusion, a rack server system that lets different CPUs plug into Nvidia’s “AI factory.”

In other words, as rivals Intel and AMD seek to compete with Nvidia’s GPUs, Nvidia is backing an 11-year-old startup that can design CPUs on an open and completely alternative technology.


Tech

Artemis II Astronauts Splash Down Off California’s Coast

NASA’s Artemis II crew safely splashed down off the California coast after completing a 10-day trip around the moon and back. “This is not just an accomplishment for NASA,” said NASA Administrator Jared Isaacman. “This is an accomplishment for humanity, again, a historic mission to the moon and back.” From a report: Isaacman is aboard the USS John P. Murtha Navy recovery vessel, where the astronauts will be brought once they’ve been retrieved from the Orion capsule, and he shared “there is a lot to celebrate right now on a mission well accomplished for Artemis II.”

Isaacman also complimented the crew as “absolutely professional astronauts, wonderful communicators and almost poets” as well as “ambassadors from humanity to the stars.” “I can’t imagine a better crew than the Artemis II crew that just completed a perfect mission right now. We are back in the business of sending astronauts to the moon and bringing them back safely.

“This is just the beginning. We are going to get back into doing this with frequency, sending missions to the moon until we land on it in 2028 and start building our base.” Isaacman also said it’s time to start preparing for Artemis III, expected to launch in 2027. You can watch the moment of the splashdown here.

Tech

After testing both, is the choice easy?

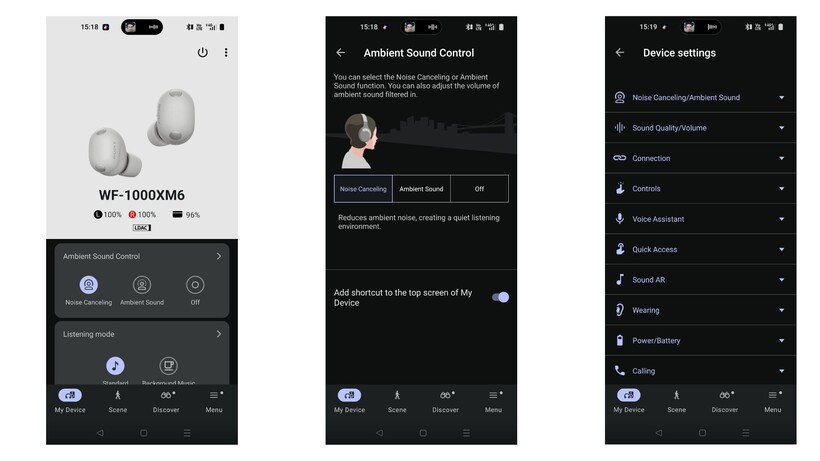

Looking for a new pair of Sony earbuds but aren’t sure whether to splurge on the latest model, or save on the older WF-1000XM5? We’re here to help.

We’ve reviewed both the Sony WF-1000XM6 and the WF-1000XM5 to help you decide which earbuds are a better fit for you.

If you’re not convinced by either Sony pair, then visit our best headphones and best wireless earbuds guides instead.

Price and Availability

The Sony WF-1000XM6 earbuds are the newer of the two and, unsurprisingly, have a higher price tag at £249/$249.

Although the WF-1000XM5s are the older pair, they’re still readily available to buy. Not only that but, although the earbuds’ official RRP is £199/$199, it’s not impossible to find a hefty price cut for them. For example, at the time of writing, the XM5 buds were just £169 on Sony’s official site.

Design

- Sony WF-1000XM6s are chunkier though slimmer in profile

- Both are comfortable to wear, although the XM6 buds can be more fiddly to wear

- Both are IPX4

Although both the WF-1000XM6 and the XM5 are relatively slim and definitely pocketable, there are a few notable differences between the two.

Firstly, due to the additional microphone, the XM6 model is slightly chunkier than its predecessor, which can make the earbuds fiddly to wear and fit correctly. While we never noted an issue with comfort, we did struggle to get a perfectly airtight seal for ANC. Using the Sony Sound Connect app, we found the earbuds struggled to pass Sony’s strict test for a suitable seal. It’s frustrating, but fortunately doesn’t seem to impede the ANC too much – but more on that later.

Otherwise, both earbuds are fitted with responsive touch controls that cover playback, switching between ANC modes, volume control and more, all of which can be customised via the companion app.

In addition, both earbuds are fitted with the same stiffer ear-tips that aim to plug your ears more effectively than silicone alternatives, and both have an IPX4 rating too. This means both buds can withstand sweat and rain drops.

Winner: Sony WF-1000XM5


Features

- Both earbuds are packed with features, including Speak to Chat, Adaptive Sound Control and voice assistants

- Both also support 360 Reality Audio and can be connected to two devices at once

With both, you’ll benefit from the likes of Quick Attention Mode, Speak to Chat and Adaptive Sound Control. There’s also head gesture control, your choice of voice assistant and a clever Find Your Equalizer that allows you to adjust the sound more intuitively than playing around with bands and frequencies.

Controlling both Sony earbuds is done via the Sound Connect smartphone app, and allows you to customise touch controls, noise-cancellation modes and the Bluetooth connection too. While we wish the app was a bit more streamlined, overall it’s a solid companion piece to the buds.

One especially interesting feature on the app is the Discover section, which shows your listening history across all music services, logs how long you use the headphones and includes badges to help gamify the experience too. How useful this is will depend on your personal preference, but it shows just how feature-packed the buds are.

Winner: Tie

Sound Quality

- WF-1000XM6 has a larger 8.4mm driver

- Both offer a clear, balanced approach across the frequency range, however the XM6 have improved highs

- Overall, the XM6 is more vibrant and energetic compared to the XM5

Although there are differences between the two, it’s worth noting that both the XM6 and XM5 are brilliant sounding earbuds. However, thanks to the larger 8.4mm driver at play here, the XM6 offers a wider soundstage compared to the XM5. In fact, we found that not only were highs improved, with more clarity and detail, but bass felt weightier too. This is especially noteworthy, as we concluded that bass lovers might be a bit disappointed by the XM5’s more balanced approach.

In addition, we noted that at its default volume, the XM6 picks up more vibrancy, dynamism and energy than the XM5.

All of this, however, is not to say the XM5s don’t sound good – quite the opposite – but it’s just the XM6 has tweaked the overall quality.

Winner: Sony WF-1000XM6

Noise Cancellation

- WF-1000XM6 has one additional microphone for noise cancelling

- Although the XM5s are easier to wear, the XM6s offer overall stronger noise-cancellation

- Call quality is also stronger with the XM6s

Sony claims the WF-1000XM6 offers the “best true wireless for noise-cancellation” and we’re confident in saying that they are, in fact, among the quietest pairs of earbuds we’ve reviewed. While getting the right fit can be fiddly, as we’ve mentioned earlier, over the weeks we’ve found the earbuds manage to curb outside noises like traffic, voices and even planes brilliantly.

Overall, although the XM6 is a solid improvement over the XM5 pair, we should note that the XM5s are easier to wear than the XM6.

Call quality also sees an improvement, as we found the XM5 had a tendency to let in noise when we spoke. Fortunately, the XM6 sounds completely silent during phone calls.

Winner: Sony WF-1000XM6

Battery Life

- No improvements with the XM6

- Both offer eight hours per charge with an additional 16 hours in the case

Sony hasn’t made any improvements with the battery life of the XM6 buds, and promises the same 24 hours total (eight plus sixteen in the case) as the XM5. Having said that, we actually found the XM6 seemed likely to offer even more hours than Sony claims, with an hour of listening still resulting in 100% charge.

The XM5 actually benefits from a slightly faster charging speed, with a three-minute charge resulting in an extra hour of playback, whereas the XM6 needs five minutes. The difference is negligible, but if you find yourself in a pinch then you’ll definitely be thankful.

Winner: Tie

Verdict

Although they’re slightly chunkier and can be quite fiddly to wear initially, the Sony WF-1000XM6 buds are a brilliant upgrade from the WF-1000XM5 pair. Not only is the ANC among the best we’ve ever tested, but the sound is more vibrant and dynamic than its predecessor.

Having said that, the XM6 buds do come with a hefty price tag. So, if you’re on a tighter budget, the XM5 is a brilliant compromise.

Tech

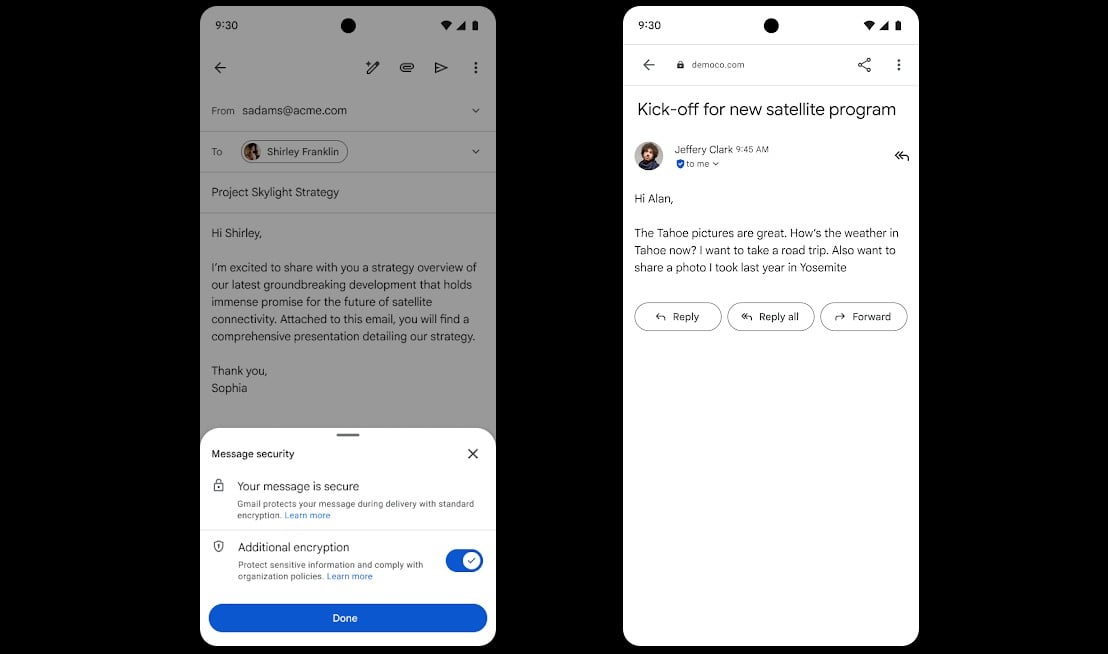

Google rolls out Gmail end-to-end encryption on mobile devices

Google says Gmail end-to-end encryption (E2EE) is now available on all Android and iOS devices, allowing enterprise users to read and compose emails without additional tools.

Starting this week, encrypted messages will be delivered as regular emails to Gmail recipients’ inboxes if they use the Gmail app.

Recipients who don’t have the Gmail mobile app and use other email services can read them in a web browser, regardless of the device and service they’re using.

“For the first time, users can compose and read these E2EE messages natively within the Gmail app on Android and iOS. No need to download extra apps or use mail portals. Users with a Gmail E2EE license can send an encrypted message to any recipient, regardless of what email address the recipient has,” Google announced on Thursday.

“This launch combines the highest level of privacy and data encryption with a user-friendly experience for all users, enabling simple encrypted email for all customers from small businesses to enterprises and public sector.”

This feature is now available for all client-side encryption (CSE) users with Enterprise Plus licenses and the Assured Controls or Assured Controls Plus add-on after admins enable the Android and iOS clients in the CSE admin interface via the Admin Console.

To send an end-to-end encrypted message, Gmail users have to turn on the “Additional encryption” option by clicking the Lock icon when writing the message.

In October, Google also announced that Gmail enterprise users can now send end-to-end encrypted emails to recipients on any email service or platform.

Gmail’s end-to-end encryption (E2EE) feature is powered by the client-side encryption (CSE) technical control, which allows Google Workspace organizations to protect sensitive documents and emails with encryption keys that they control and that are stored outside Google’s servers.

This way, the messages and attachments are encrypted on the client before being sent to Google’s servers, which helps meet regulatory requirements such as data sovereignty, HIPAA, and export controls by ensuring that Google and third parties can’t read any of the data.
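The flow described above, encrypting on the client, keeping the key elsewhere, and storing only ciphertext on the server, can be illustrated with a toy sketch. This is not how Gmail implements CSE. The `MailServer` and `KeyService` classes are hypothetical stand-ins, and a one-time pad built from Python’s `secrets` module is used only to keep the example self-contained. A real deployment would rely on a vetted authenticated cipher and a proper key management service.

```python
import secrets


def encrypt_on_client(plaintext: bytes) -> tuple[bytes, bytes]:
    """Return (key, ciphertext) using a one-time pad as a stand-in cipher."""
    key = secrets.token_bytes(len(plaintext))  # fresh random key, same length as the message
    ciphertext = bytes(p ^ k for p, k in zip(plaintext, key))
    return key, ciphertext


def decrypt_on_client(key: bytes, ciphertext: bytes) -> bytes:
    """Reverse the XOR with the same key to recover the plaintext."""
    return bytes(c ^ k for c, k in zip(ciphertext, key))


class MailServer:
    """Hypothetical provider-side store: it only ever receives ciphertext."""

    def __init__(self):
        self.stored = {}

    def upload(self, message_id: str, ciphertext: bytes) -> None:
        self.stored[message_id] = ciphertext


class KeyService:
    """Hypothetical key store run by the organization, outside the provider."""

    def __init__(self):
        self._keys = {}

    def put(self, message_id: str, key: bytes) -> None:
        self._keys[message_id] = key

    def get(self, message_id: str) -> bytes:
        return self._keys[message_id]


server, keys = MailServer(), KeyService()
body = b"Quarterly figures attached"

# Sender side: encrypt locally, then send the two pieces to different places.
key, ciphertext = encrypt_on_client(body)
server.upload("msg-1", ciphertext)
keys.put("msg-1", key)

# Recipient side: fetch ciphertext from the server and the key from the key service.
recovered = decrypt_on_client(keys.get("msg-1"), server.stored["msg-1"])
print(recovered == body)
```

Because the server object never sees `body` or `key`, anyone with access only to `server.stored` holds unreadable bytes, which is the property the regulatory argument depends on.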

Gmail CSE was introduced in Gmail on the web in December 2022 as a beta test, following an initial beta rollout to Google Drive, Google Docs, Sheets, Slides, Google Meet, and Google Calendar, and it reached general availability for Google Workspace Enterprise Plus, Education Plus, and Education Standard customers in February 2023.

The company began rolling out its new end-to-end encryption (E2EE) model in beta for Gmail enterprise users in April 2025.

Tech

This Animation Startup Wants to Make It Easier to Tell Open-Ended Stories

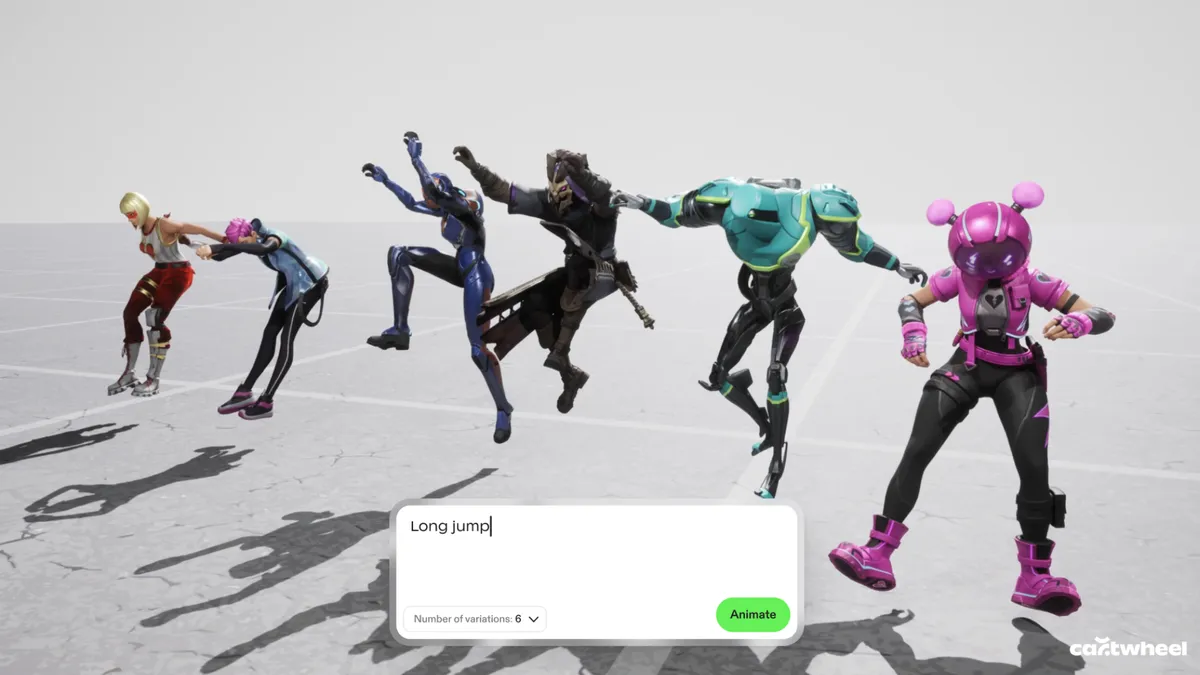

The current wave of generative AI animation often feels like a magic trick that only works once. You type in a prompt, a video appears, and if you don’t like the result — maybe the feet are all wonky, which is a regular issue with AI generations — your only real option is to try a different prompt. This “black box” approach is exactly what Cartwheel, a new 3D animation startup, is trying to dismantle.

Andrew Carr and Jonathan Jarvis, two veterans with roots at OpenAI and Google, respectively, founded the company, which is working to build a future where AI handles the technical drudgery of animation while leaving the creative soul to the artist.

I spoke with Carr and Jarvis about launching their company, defining “taste” with AI, and the technical and creative difficulties of animation in 2026.

What sets Cartwheel apart

According to the founders, one of the biggest hurdles in this space is that 3D motion data is remarkably scarce compared to the endless oceans of text and images available online that AI models are trained on.

“If you look at all the big tech companies, they’ve built their models on written language, audio, image, [and] video because there’s just so much of it, so finding those patterns is much easier,” Jarvis said. “We knew it was going to be hard, but it turns out to be harder than we thought by probably a factor of 10 or 100 to get that data.”


While other tech giants focus on generating final pixels, Cartwheel has spent years mapping how humans actually move. Their models are built to understand the nuances of a performance so that a simple 2D video of someone dancing in their backyard can be translated into a precise, realistic 3D skeleton.

This shift from flat images to 3D assets is what gives animators the control they have been missing in the AI era.

Cartwheel has spent years tackling the difficult task of mapping how humans actually move.

Preventing AI “sameness”

Cartwheel’s executives said they view AI’s “sameness” as a byproduct of a lack of control. If everyone uses the same generator to produce a video, the results may eventually start to look all too similar.

“The output of our system is designed for people to edit. It’s designed for people to touch and manipulate, and we don’t want someone to type something in and then have it shuffle through to a finished animation. That’s not the point of it. That’s boring, who’s going to watch that?” Carr said.

“The fact that it’s very easy for people to get into it and edit it actually totally removes the sameness problem,” he said. “You put it on different characters, you put it in different environments, you change how it looks, you push the performance, you pull the performance, and in that sense [sameness] turns into a nonissue.”

Carr and Jarvis said the solution is to provide a “control layer” where the AI output is just the starting point. By generating 3D data instead of flat video, the creator can change the lighting, move the camera or adjust a character’s pose after the AI has done its initial work — making the technology a sophisticated power tool rather than a replacement for the artist.

Founder Andrew Carr said one of his core scientific hypotheses is that movement and motion is a fundamental data type.

The future of animation with AI

Beyond just making animation faster and lowering the barrier to entry, the company is looking toward a concept they call “open-ended storytelling” or “open-ended world-building.” In modern gaming and social media, the demand for content has reached a scale that manual animation cannot possibly match.

Cartwheel envisions characters that aren’t just programmed with a few set moves but are powered by motion models that allow them to react and perform in real time. It’s less about choreographing every single frame and more about “rehearsing” with a digital actor that understands the intent of the scene.

Ultimately, the goal is to bridge the gap between 2D vision and 3D execution, said the founders.

“One of the core hypotheses that we hope is true in the next three years for Cartwheel is everyone will work in 3D even if it’s authored in 2D, even if the final output is just 2D video,” Carr said.

By focusing on the “layer below the pixels,” Carr and Jarvis said they hope that as animation becomes more automated, it also becomes more personal. The machine handles the biomechanics and the file exports, but the human keeps the final say on the taste, the timing and the heart of the story.

Tech

SaaS on the Beach returns to Barcelona

As the tech conference circuit grows more crowded, one SaaS event is making the opposite pitch: fewer people, fewer sales decks, and a lot less noise.

SaaS on the Beach, a curated event for SaaS founders, will return to Barcelona on May 20 and 21 for its second edition, positioning itself as an alternative to the large-scale trade shows that have long dominated the tech events circuit.

The event is built on selectivity. Attendance is limited to 60 handpicked founders, with participants required to meet specific criteria before they can buy a ticket. That makes SaaS on the Beach feel less like an open industry conference and more like a tightly edited peer group.

It is also stripping out many of the rituals that now define mainstream tech events. There is no exhibition hall, no sponsored speaker circuit, and no sales-pitch-heavy programming. In their place are seated dinners, roundtable discussions, and social activities meant to create more direct, less performative exchanges between attendees.

That matters because many founders no longer need more stage content. They need rooms where people speak plainly, compare notes honestly, and talk about the less polished parts of building software companies: hiring, churn, growth, product decisions, and what is actually working.

SaaS on the Beach is also leaning into a no-solicitation format, an explicit break from the conference model where networking often blurs into prospecting. The promise here is that people come to learn from peers, not to be cornered into a demo.

Barcelona is part of that pitch too. The event is presenting its Mediterranean setting as an alternative to the usual northern European conference loop, betting that a more relaxed environment can lead to better conversations.

The bigger idea behind SaaS on the Beach is that senior operators may be growing less interested in scale for its own sake. The trade show still has its place, especially for visibility and lead generation, but smaller, curated gatherings are increasingly selling something else: relevance.

That does not make them more democratic. In some ways, it makes them more exclusive. But it does make the value proposition clearer. If the standard conference model is built on volume, events like SaaS on the Beach are built on density: fewer people, more overlap, and a better chance that the conversation is worth having.

That is the model returning to Barcelona this May.

Tech

Google’s new Android backup idea is so practical that I’m annoyed it took this long

Running out of storage is one of those problems that almost everybody understands, and almost nobody handles properly. There never seems to be enough storage, so some people keep paying for cloud space. Meanwhile, others keep promising that they will “sort it out later”. And a lot of people just end up deleting things when the warning gets too annoying.

But Google’s upcoming Android feature could finally offer a better answer, with an automatic local backup to a PC. This functions wirelessly like a cloud storage service, but it is also free of charge since you’re using your own device.

Android Authority’s recent teardown of Google Play Services beta v26.15.31 revealed that Google is working on an Automatic backup feature inside Quick Share that can copy selected files from your phone to your PC without using the cloud.

Why this might be the storage fix normal people actually use

Cloud backup is useful, but a lot of people still do not want to pay for it. Given how little free storage you get, WhatsApp backups and a year’s worth of photos and videos can quickly eat through it.

But Google’s in-development feature appears to let users automatically back up camera photos, camera videos, and audio files directly to a household PC, thanks to a new auto sync option and a Back up now button for manual transfers.

The report also revealed that deleting a file from your phone will not remove its copy from the PC backup. So the feature isn’t just about syncing—it is about finally permitting people to clear space without feeling like they are throwing memories away.
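The behavior the teardown describes, copying new media to the PC and never propagating deletions, is a one-way backup, not a two-way sync. The sketch below illustrates that rule with ordinary folders standing in for the phone and the PC. It is not Google’s implementation: the `back_up` function and the file extension list are assumptions made for illustration, and the real feature transfers files over Quick Share, not a shared file system.

```python
import shutil
from pathlib import Path

# Hypothetical set of media types to back up (photos, videos, audio).
BACKED_UP_SUFFIXES = {".jpg", ".png", ".mp4", ".m4a", ".mp3"}


def back_up(phone_dir: Path, pc_dir: Path) -> list[str]:
    """Copy media files that are not yet on the PC; never delete anything there."""
    pc_dir.mkdir(parents=True, exist_ok=True)
    copied = []
    for src in sorted(phone_dir.iterdir()):
        # Skip folders and file types outside the backup selection.
        if not src.is_file() or src.suffix.lower() not in BACKED_UP_SUFFIXES:
            continue
        dest = pc_dir / src.name
        if not dest.exists():
            shutil.copy2(src, dest)  # copy2 also preserves timestamps
            copied.append(src.name)
    # Files missing from phone_dir are deliberately left untouched in pc_dir.
    return copied
```

Running `back_up` again after deleting a photo from the phone folder copies nothing and removes nothing, so the PC copy survives. That is the property that lets people free up phone space safely.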

Your Android, your computer, your storage

The part that really matters is the “free” tagline. Most homes already have a laptop, desktop, or even both. And oftentimes, hundreds of gigabytes of storage sit there mostly unused. Unless somebody in the house is gaming, editing high-resolution video, or hoarding massive files, there is usually plenty of room for old phone footage, family photos, and voice notes.

So Google’s feature appears to take advantage of that reality instead of pushing people into buying more cloud space. Because it lives in Quick Share, it will likely use the same local transfer system, which also suggests that you don’t need an internet connection for backup. You just need to be in close proximity. From the start to the finish, your data stays with you.

This is the boring feature Android needed

There is still one catch though. The details come from an APK teardown, so Google has not formally launched the feature yet, and it could change before release. But if it does arrive, it’s the kind of quality-of-life upgrade that could matter more than a lot of flashy AI nonsense. It is practical, wireless, and free.
