Artificial intelligence is no longer a back-office enabler or a set of isolated automation tools. It is becoming a core component of how organizations operate, compete, and deliver value.

As businesses accelerate their adoption of increasingly autonomous systems, often referred to as agentic AI, a significant leadership dilemma is emerging. The workforce is no longer exclusively human.


This shift represents far more than a technological upgrade. It is a structural transformation that puts business leaders in uncharted territory.

The World Economic Forum’s Four Futures framework warns of rising technological fragmentation, declining trust, and widening governance gaps.

In this context, the question for leaders is no longer whether to deploy autonomous AI, but how to govern a hybrid workforce of humans and digital agents without introducing systemic risk.

For many organizations, this is becoming one of the defining leadership challenges of the decade.

The Rise of the Non-Human Workforce

Agentic AI systems differ from traditional automation in one critical way: they do not merely execute predefined tasks but interpret data, make decisions, and adapt their behavior to context. In many organizations, these systems are already performing functions once reserved for skilled employees: triaging customer requests, optimizing supply chains, generating code, and even making financial recommendations.

The productivity gains are undeniable, but so is the complexity. When digital agents act with autonomy, they also introduce new forms of organizational risk. Decisions may be opaque, accountability may be unclear, and the potential for unintended consequences increases dramatically.

Leaders must now grapple with a workforce that does not think, behave, or act like humans, and that cannot be governed through traditional management structures. This is where structured identity, access, and behavioral governance become essential.

The Governance Gap: A Growing Leadership Risk

The most significant challenge is not the technology itself, but the governance vacuum surrounding it. Many organizations deploy autonomous systems faster than they establish the controls and guardrails required to manage them. This creates a widening gap between capability and oversight.

Several risks are already becoming visible:

1. Accountability gaps: When an AI agent makes a decision that leads to financial loss, regulatory exposure, or reputational harm, who is responsible? Without clear lines of accountability, organizations face legal and ethical uncertainty.

2. Insider-threat-like behavior: Autonomous systems often operate with high levels of privilege and can access sensitive data, trigger workflows, or interact with customers. If misconfigured or compromised, they can behave like highly privileged insider threats, an issue we frequently encounter when assessing digital identity posture.

3. Fragmentation and drift: As organizations deploy multiple AI agents across different functions, the risk of inconsistent behavior, configuration drift, and misaligned objectives increases. Without centralized governance, autonomous systems can evolve in ways that diverge from organizational intent.

4. Erosion of trust: Employees, customers, and regulators are increasingly concerned about how AI systems make decisions. A lack of transparency and explainability can undermine confidence and impede adoption.

AI adoption alone is no longer sufficient. Governance has become the true leadership mandate.

A Governance-First Mindset: The New Leadership Imperative

To navigate this new landscape, business leaders must adopt a governance-first mindset that aligns with the World Economic Forum’s call for Digital Trust and systemic resilience. This requires treating agentic AI not as a standalone technology, but as a governed member of the workforce.

Several principles should guide this shift:

Establish Clear Accountability Structures

Every AI agent must have an identified human owner responsible for its actions, performance, and outcomes. This includes defining escalation paths, decision boundaries, and audit requirements. Without explicit accountability, organizations risk regulatory exposure and operational ambiguity.

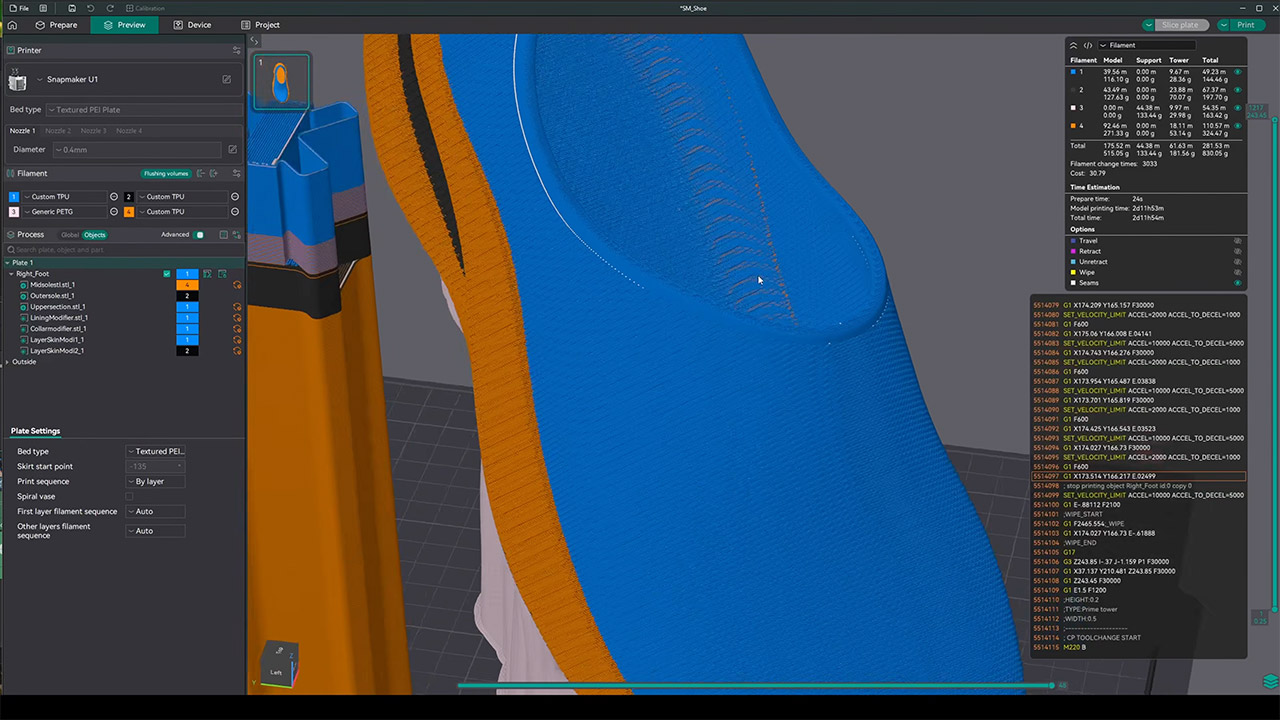

Apply Identity and Access Controls to Digital Agents

Just as employees have identities, permissions, and access levels, so too must AI agents. Leaders should ensure that digital agents are integrated into identity management frameworks with least-privilege access, continuous monitoring, and lifecycle management. This reduces the risk of insider-threat-like behavior and prevents privilege creep. These principles are central to our approach to digital workforce governance.
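To make the idea concrete, here is a minimal sketch of what a least-privilege check for a digital agent might look like. Everything in it is an illustrative assumption rather than a reference to any particular identity product: the `AgentIdentity` record, its fields, the action names, and the example owner address are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical identity record for a digital agent."""
    agent_id: str
    human_owner: str             # the accountable person for this agent
    allowed_actions: frozenset   # explicit allow-list (least privilege)
    expires_on: date             # forces periodic review (lifecycle management)


def is_permitted(agent: AgentIdentity, action: str, today: date) -> bool:
    """Deny by default: permit only unexpired agents performing allow-listed actions."""
    if today > agent.expires_on:
        return False
    return action in agent.allowed_actions


# Illustrative agent: it may read and assign tickets, and nothing else.
triage_bot = AgentIdentity(
    agent_id="support-triage-01",
    human_owner="head.of.support@example.com",
    allowed_actions=frozenset({"read_ticket", "assign_ticket"}),
    expires_on=date(2026, 12, 31),
)

print(is_permitted(triage_bot, "assign_ticket", date(2026, 6, 1)))  # True
print(is_permitted(triage_bot, "issue_refund", date(2026, 6, 1)))   # False
print(is_permitted(triage_bot, "read_ticket", date(2027, 1, 1)))    # False
```

The design choice worth noting is the default: anything not explicitly granted is refused, and access lapses on a fixed date unless someone renews it. That is what keeps privileges from accumulating quietly over time.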

Implement Behavioral Guardrails

Autonomous systems require constraints that define acceptable behavior. These guardrails may include ethical guidelines, operational limits, safety checks, and real-time monitoring. Guardrails ensure that AI agents act within organizational intent and do not drift into unsafe or unintended territory.
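One way to picture an operational limit is as a simple decision rule that sits between an agent and the action it proposes. The sketch below assumes a hypothetical agent that proposes payments; the `Guardrail` class, its thresholds, and the three outcomes are invented for illustration and would differ in any real deployment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrail:
    """Hypothetical operational limit for an agent that proposes payments."""
    max_auto_amount: float  # above this, a human must approve
    hard_limit: float       # above this, the action is blocked outright


def evaluate(amount: float, guardrail: Guardrail) -> str:
    """Return 'allow', 'escalate', or 'block' for a proposed payment amount."""
    if amount <= 0 or amount > guardrail.hard_limit:
        return "block"
    if amount > guardrail.max_auto_amount:
        return "escalate"
    return "allow"


# Illustrative thresholds only.
limits = Guardrail(max_auto_amount=500.0, hard_limit=5000.0)

print(evaluate(120.0, limits))   # allow
print(evaluate(1200.0, limits))  # escalate
print(evaluate(9000.0, limits))  # block
```

The middle outcome matters most. Rather than choosing between full autonomy and a hard stop, the agent hands borderline decisions to a person, which keeps a human in the loop exactly where the stakes rise.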

Build Oversight and Auditability into the System

Transparency is essential for trust. AI agents must be auditable, explainable, and observable. This includes maintaining logs of decisions, enabling post-incident analysis, and ensuring that humans can intervene when necessary. Oversight is foundational to responsible autonomy.
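As a rough illustration of what an auditable decision log could involve, the sketch below chains each entry to the previous one with a SHA-256 hash, so that later edits to the record become detectable. This is one possible technique, not a prescribed one; the `DecisionLog` class, its field names, and the sample entries are assumptions made for the example.

```python
import hashlib
import json


def _digest(body: dict) -> str:
    """Hash an entry body in a stable, key-sorted JSON form."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class DecisionLog:
    """Hypothetical append-only log of agent decisions with a hash chain."""

    def __init__(self):
        self._entries = []

    def record(self, agent_id: str, action: str, rationale: str, timestamp: str) -> dict:
        """Append a decision, linking it to the hash of the previous entry."""
        prev_hash = self._entries[-1]["hash"] if self._entries else ""
        body = {
            "agent_id": agent_id,
            "action": action,
            "rationale": rationale,
            "timestamp": timestamp,
            "prev_hash": prev_hash,
        }
        entry = {**body, "hash": _digest(body)}
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check each link in the chain."""
        prev_hash = ""
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = DecisionLog()
log.record("support-triage-01", "assign_ticket", "matched billing keywords", "2026-06-01T09:00:00Z")
log.record("support-triage-01", "escalate", "customer requested a human", "2026-06-01T09:05:00Z")
print(log.verify())  # True
```

Recording the rationale alongside the action is what makes post-incident analysis possible: reviewers can see not only what an agent did, but the reason it gave at the time.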

Foster a Culture of Digital Trust

Governance is more than a technical challenge; it is a cultural one. Leaders must champion a culture that values transparency, accountability, and responsible innovation. This includes educating employees about how AI agents operate, how decisions are made, and how risks are managed. Organizations that succeed here tend to be those that treat governance as a strategic capability, not a compliance burden.

From Liability to Advantage: Building the Hybrid Workforce of the Future

When governed effectively, agentic AI can become a powerful force multiplier. It can enhance productivity, accelerate innovation, and enable organizations to operate with greater agility and precision. But without governance, the same systems can introduce systemic vulnerabilities that undermine resilience.

The role of business leaders is to ensure that autonomy does not outpace oversight. By reframing agentic AI as part of the workforce, subject to the same expectations, controls, and accountability as human employees, leaders can transform a potential liability into a strategic advantage.

The future of work will be hybrid. The organizations that continue to evolve in 2026 will be those that recognize that governing AI is not a technical task delegated to IT, but a core leadership responsibility.

Leaders who embrace this governance-first approach will not only mitigate risk but also build resilient, high-performing organizations that define the future of the workplace and how businesses function.
