Ultion Nuki 2025: one-minute review

The Ultion Nuki 2025 is what happens when a smart lock starts behaving like a complete security product.

At a glance, it’s doing the same job as 2023’s Ultion Nuki Plus: pairing Brisant Secure’s Ultion 3 Star PLUS cylinder and UK-specific door furniture with Nuki’s Smart Lock Pro and platform. In practice, though, this version looks more cohesive, feels quicker to respond and is better aligned with how people actually use a front door every day.

Just as importantly, there are sensible fallbacks everywhere. You can still use a physical key, operate it manually from inside, and include a biometric keypad or keyfob if you want different ways in.

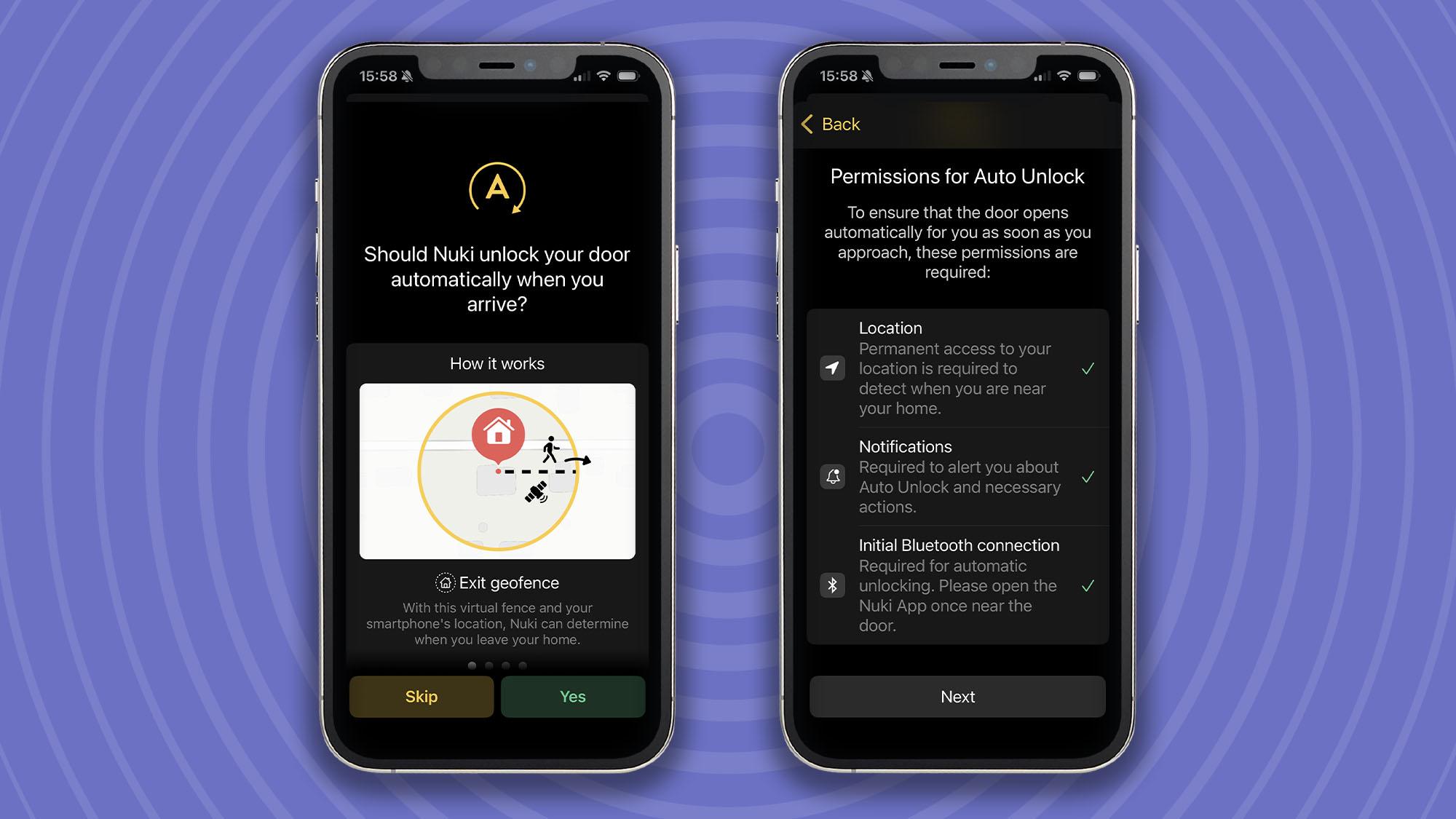

Factor in the comprehensive Nuki app, featuring geofenced Auto Unlock and remote time-limited access for guests, and it all adds up to a smart lock that feels less like a gamble and more like a must-have front-door upgrade.

Ultion Nuki 2025: price and availability

- List price £299

- Optional extra upgrades

- Available from Ultion and Amazon

The Ultion Nuki 2025 is priced at £299 and available in white/steel, black/steel, or chrome/steel finishes. At the time of writing, this particular smart lock is only sold in the UK.

It’s available from Ultion direct or Amazon, along with a suite of optional extras, including a fob for £49, fingerprint keypad for £145, and auto-lock sensor for £55.
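If you’re budgeting for the extras, the sums are easy to sketch. The snippet below is only an illustration using the list prices quoted above; the names `BASE_PRICE_GBP`, `EXTRAS_GBP` and `bundle_total` are made up for this example, and it assumes no bundle discounts or delivery charges.

```python
# List prices quoted in this review, in pounds sterling.
BASE_PRICE_GBP = 299
EXTRAS_GBP = {
    "keyfob": 49,
    "fingerprint_keypad": 145,
    "auto_lock_sensor": 55,
}


def bundle_total(extras=()):
    """Return the lock's list price plus the chosen optional extras."""
    return BASE_PRICE_GBP + sum(EXTRAS_GBP[name] for name in extras)


print(bundle_total())                        # lock only: 299
print(bundle_total(["fingerprint_keypad"]))  # lock plus keypad: 444
print(bundle_total(EXTRAS_GBP))              # lock plus every extra: 548
```

In other words, a fully kitted-out setup comes to £548 at list price, with the Fingerprint Keypad accounting for more than half of the add-on cost.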

Ultion Nuki 2025: specifications

| Specification | Details |
|---|---|
| Colours | White/steel, black/steel, chrome/steel |
| Connectivity | Wi-Fi, Bluetooth LE, Thread |
| Compatibility | Matter, Apple Home, Amazon Alexa, Google Home, Wear OS, Samsung SmartThings |
| Dimensions | 2.2 x 2.2 x 2.8 inches / 57 x 57 x 70mm |
| Encryption | End-to-end with challenge-response (equal to online banking) |
| Weight | 10.2oz / 290g (Nuki Smart Lock Pro unit only) |

Ultion Nuki 2025: design

- Slimmer, cleaner Nuki Smart Lock Pro form factor

- Seven external colourways with a 20-year anti-corrosion guarantee

- Installs in minutes, no drilling required

The Ultion Nuki 2025 redesign goes further than a cosmetic refresh. The physical foundation is Brisant Secure’s Ultion 3 Star Plus cylinder — a precision-engineered unit built around a molybdenum core that’s 25 per cent denser than iron.

It carries anti-pick, anti-bump and anti-drill credentials, meets Police Preferred specification, holds Master Locksmiths Association approval, and is backed by a £5,000 burglary guarantee, which Brisant says it has never had to pay out on.

External handles are available in seven colourways with a 20-year anti-corrosion guarantee. Adjustable brackets mean installation requires no new holes. The internal handle comes in black, white, or chrome.

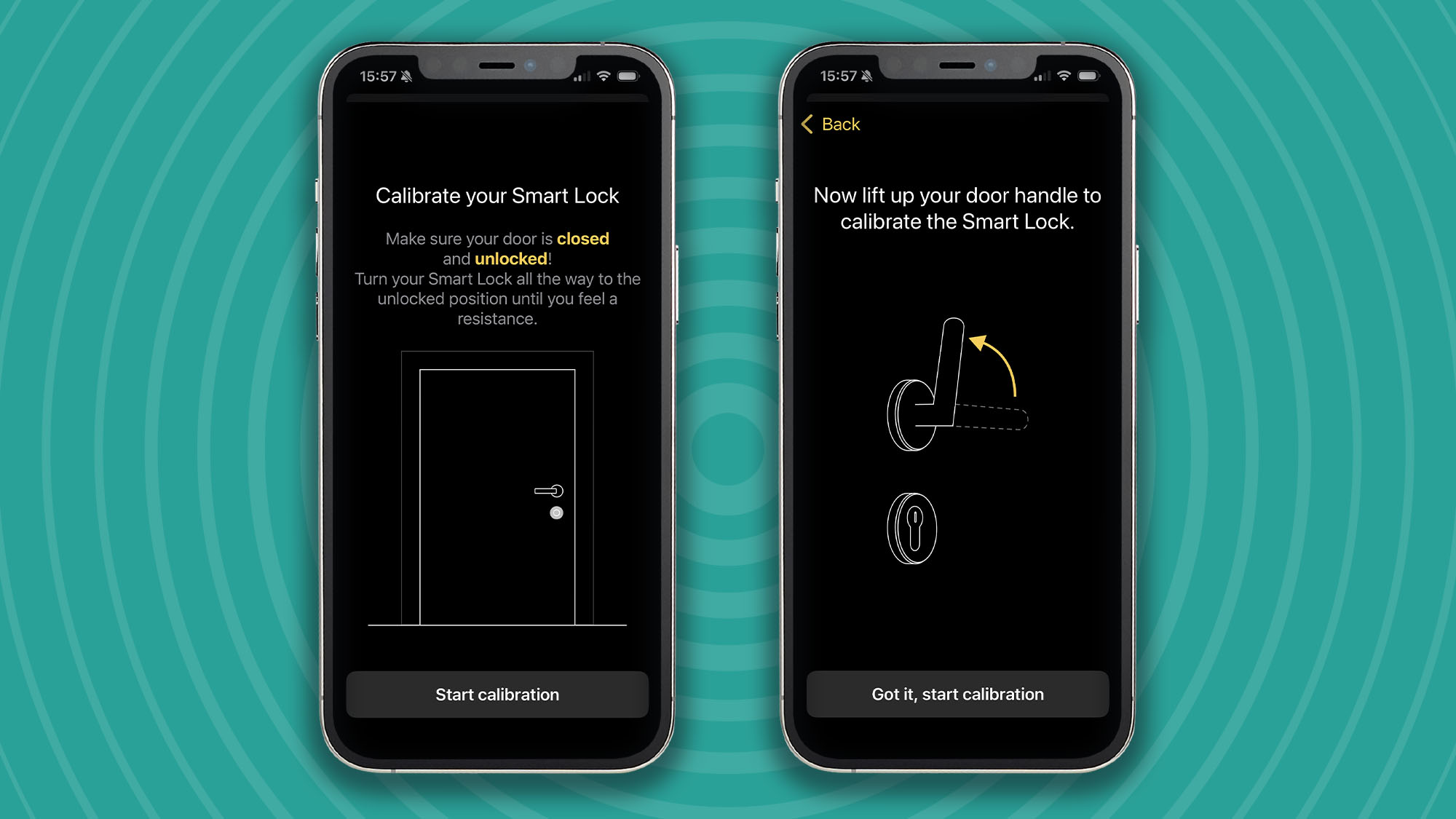

Brisant quotes a four-minute full installation, which is accurate in practice if you have a standard British cylinder lock on your door. If so, it’s a simple case of following the step-by-step guide to remove your old cylinder (held in, scarily, by a single screw) and replace it with the new one.

From there, you simply screw in and attach the Ultion smart lock furniture around it, including the new interior handle and Nuki Smart Lock Pro unit.

The complete unit is a genuine departure from its predecessor. Where older versions were boxy and deep, this latest generation’s cleaner and slimmer form looks like it belongs on a door rather than clamped to one.

The integrated rechargeable battery charges via a magnetic USB-C cable that attaches cleanly, though a fully universal USB-C port would have been the neater solution.

Ultion Nuki 2025: performance

- Built-in Wi-Fi, no hub or bridge required

- Matter over Thread for wide smart home compatibility

- Noticeably faster motor than previous model

What immediately distinguishes the Ultion Nuki 2025 from its predecessor is responsiveness. Commands that previously involved a perceptible pause now execute almost instantly. This applies both via the native Nuki app and through third-party systems. It’s one of those improvements you don’t fully appreciate until you’ve lived with the old version.

Built-in Wi-Fi means no bridge and no additional hardware. The Nuki app handles everything from access logs and configurable lock speeds to geofenced Auto Unlock and time-limited guest codes. It’s a well-developed platform backed by state-of-the-art encryption, comparable to online banking.

It would be silly for a smart lock not to take its own security seriously, but just in case, the Ultion Nuki 2025 is advertised as holding the highest AV-TEST certification available (level 3), and it also carries a BSI Kitemark for good measure.

Matter support operates over Thread rather than Wi-Fi once enabled, which theoretically improves both battery efficiency and response speed.

The optional Fingerprint Keypad (£145) adds biometric and PIN access from outside. It’s a luxury addition, but a tempting one if you want to unlock your door the way you unlock a smartphone. Time-limited guest codes work well, as my cleaner will attest.

The Keyfob (£49) is the simpler option: one button, either direction. Physical key override from the outside remains available throughout, which should be considered non-negotiable on any smart lock that replaces your primary cylinder — something many brands overlook.

An LED ring around the central button communicates status at a glance: off when locked, the top segment flashing every 1.5 seconds when unlocked, and red when there is an error or the battery is low. It’s a small but useful touch that lets you avoid reaching for your phone.

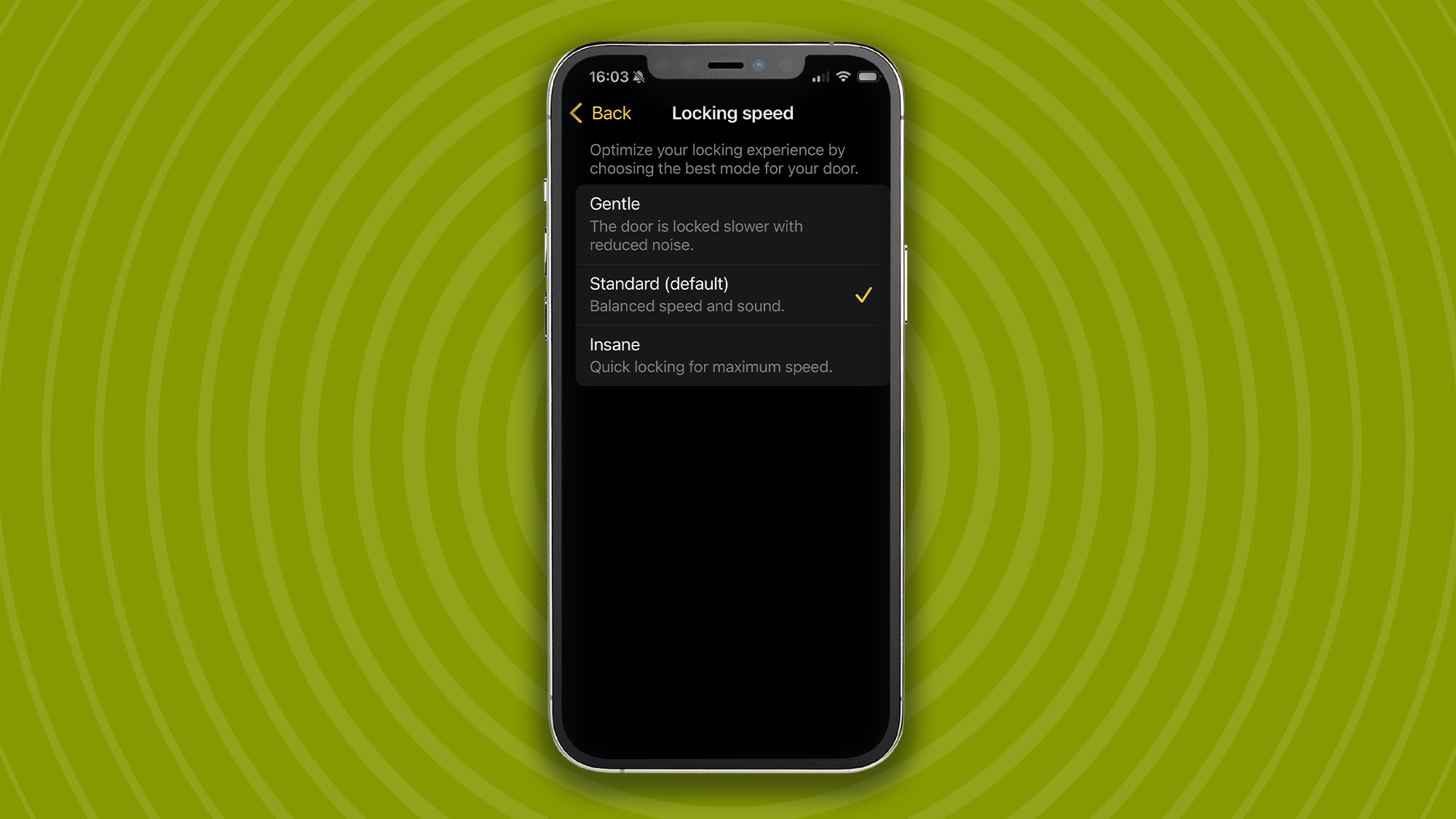

The motor offers three speed settings — Gentle for quiet, unhurried operation; Standard for a balance of speed and noise; and Insane for maximum locking speed when you need it. Most users will never leave Standard, but the option is there.

Auto-lock is available via Lock ‘n’ Go, which latches the door after a set interval following unlock. The app sensibly flags if your door requires the handle to be raised before latching, so you know what to expect before you commit to the feature.

Should you buy the Ultion Nuki 2025?

| Attributes | Notes | Score |
|---|---|---|
| Value | Premium but justified: you’re buying a top-tier cylinder and a mature smart platform in one package. Cheaper alternatives cut corners somewhere. | 4/5 |
| Design | Slimmer and more refined than its predecessor, with seven external colourways and a four-minute install. The non-universal charging lead stops it from being perfect. | 4.5/5 |
| Performance | Near-instant response, Matter compatibility, a well-developed app and multiple access methods. Sets the standard for UK smart locks. | 5/5 |
| App | Clean, comprehensive and backed by years of real-world refinement. Geofencing, guest codes, motor speed control, and auto-lock all work as advertised. | 5/5 |

Buy it if

Don’t buy it if

Ultion Nuki 2025: also consider

If the Ultion Nuki 2025 isn’t right for you, here are two alternatives worth considering.

How I tested the Ultion Nuki 2025

- Installed the Ultion Nuki 2025 as my primary door lock

- Tested via the Nuki app over Wi-Fi and Bluetooth

- Connected to Apple Home and tested all smart home integrations

- Used the Fingerprint Keypad and Keyfob across daily entry and exit scenarios

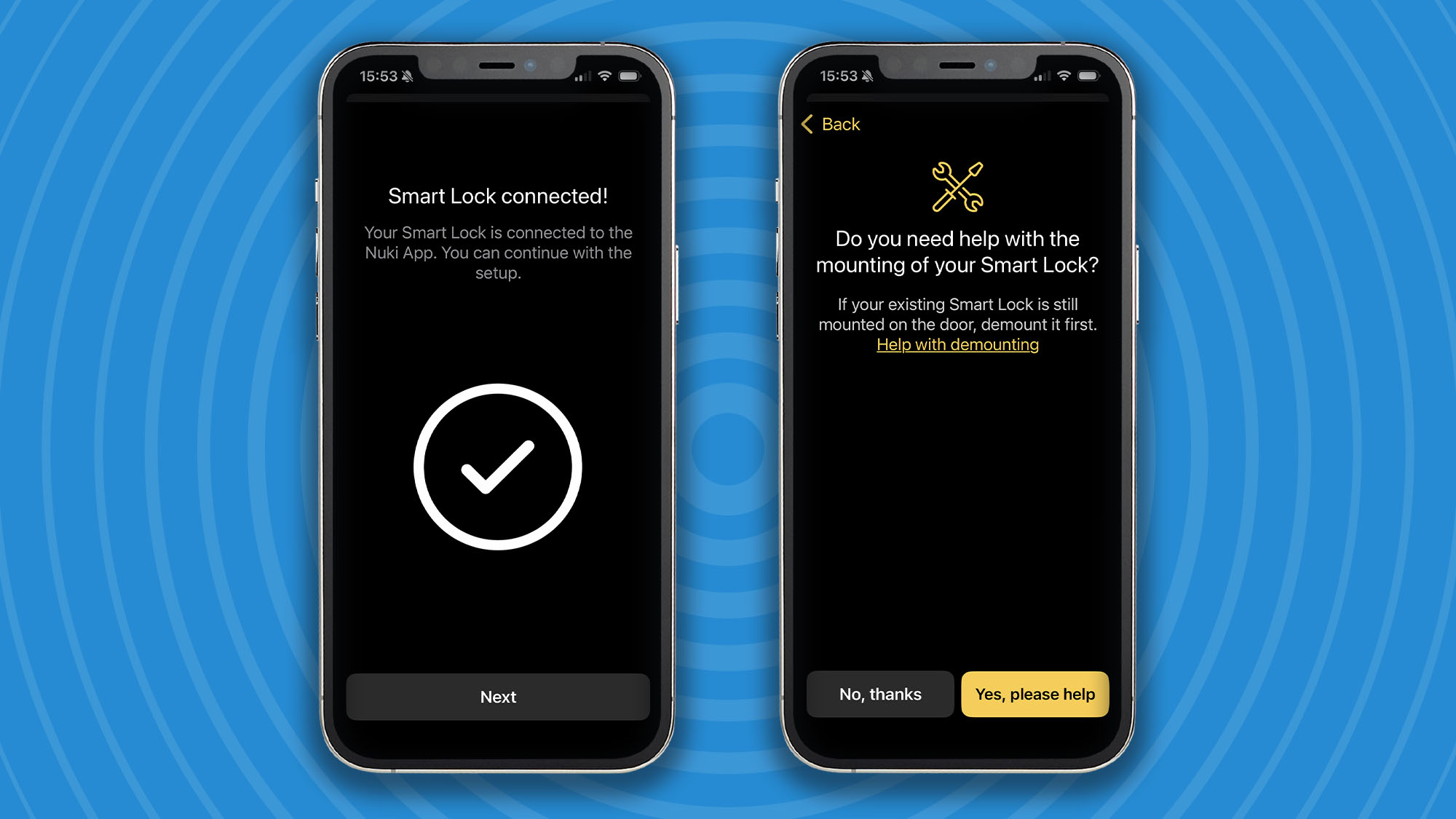

I installed the Ultion Nuki 2025 on my front door and used it as my primary lock for the duration of testing, replacing both the existing cylinder and handle hardware. Setup was completed using the Nuki app, before adding the lock to Apple Home.

All advertised features were tested in daily use, including Auto Unlock geofencing, Lock ‘n’ Go, the programmable inside button, and variable motor speeds. The Fingerprint Keypad was installed externally and put through its paces across repeated entry and exit scenarios, including time-limited guest codes. The Keyfob was tested as a standalone exit method.

I paid particular attention to responsiveness compared to the previous Ultion Nuki Plus — specifically, command lag via the app, status update speed in Apple Home, and keypad reaction time. Physical key override was verified throughout.

The lock was also stress-tested, including door-handle raise requirements and low-battery notification behaviour via the app.

For more details, see how we test, review, and rate products at TechRadar.

First reviewed April 2026
