Big changes are happening at OpenAI. On Wednesday, the company announced that it would be shutting down its AI video creation app, Sora, only a couple of months after its launch. In October, OpenAI completed a massive restructuring of its organization that shakes the very foundations it was built on.

Tech

Ctrl-Alt-Speech: C’est La Vile Content

from the ctrl-alt-speech dept

Ctrl-Alt-Speech is a weekly podcast about the latest news in online speech, from Mike Masnick and Everything in Moderation’s Ben Whitelaw.

Subscribe now on Apple Podcasts, Overcast, Spotify, Pocket Casts, YouTube, or your podcast app of choice — or go straight to the RSS feed.

In this week’s round-up of the latest news in online speech, content moderation and internet regulation, Mike and Ben cover:

Play along with Ctrl-Alt-Speech’s 2026 Bingo Card!

Filed Under: content moderation, eu, france, gavin newsom, jim jordan, social media, spain

Companies: tiktok, twitter, x

Two tech workers took it Offline, and opened a Seattle coffee shop that AI can’t replicate

The meeting was running long, so someone said what they always say: “let’s take this offline.”

For Krystal Graylin, that phrase — hollow corporate shorthand for a problem deferred, not solved — became something else entirely.

She actually did it.

Graylin, a former Microsoft product manager, and her friend from college, Lucy Kong, an auditor at EY, both watched as their industries raced to automate and cut headcount. They responded by betting on the one thing they figured AI couldn’t replicate — handing someone a drink and watching that person’s face light up.

The result is Offline Coffee Co., a new cafe in Seattle’s Capitol Hill neighborhood that opened last month, drawing on Chinese cafe culture for its menu and aesthetic, leaning into the “third place,” and serving as a deliberate departure from the corporate world both founders left behind.

In a city full of tech and coffee, Graylin and Kong are an unlikely pair to be running a cafe. Neither had worked professionally as a barista, aside from operating their home machines and hosting apartment cafe parties with friends. One was monitoring the health of Microsoft’s Azure cloud platform. The other was auditing Amazon’s books at EY.

“Some people we talked to were like, ‘You guys have no business opening a coffee shop. You haven’t been a barista or owned a food business before. What makes you think like you can just quit your job and open a cafe,’” Graylin said, adding that it’s a “fair concern.”

Graylin said she and Kong, friends since their days at the University of Washington, joked for years about the cafe idea but only started taking it seriously last April. They considered what it would mean to give up a steady income and sign a lease for a retail space.

“Going into this, it was not like, ‘we hate our jobs so much that we want to escape and do something completely different,’” Graylin said. “We knew it would be risky, but we knew that going through this experience, it would make us change in a way that we couldn’t pay someone to teach us.”

They got the keys to 711 Bellevue Ave E. last July and quit their jobs in August. For several months they worked on building out the space, adding their design touches with light wood finishes, a tiled main bar, and thrifted furniture, before opening in February. The menu is built around floral syrups and flavor combinations that Graylin and Kong would bring back from trips to China, cafe ingredients that Graylin said are harder to find in Seattle.

“It feels crazy,” Graylin said. “I cried five or more times the first week we were open, because I was so stressed but also I was so happy to see all the people in here.”

Friends have been visiting, others work on laptops in the cafe, and neighbors are making Offline a regular stop on their dog walks. Graylin said it’s cool to become part of someone’s routine.

But the leap from tech to coffee wasn’t just about escaping the corporate grind. It turns out, Graylin said, that being a product manager prepared her for more than she expected.

Negotiating contracts, managing people, de-escalating difficult customers, knowing how to prioritize — all of it transferred. So did a comfort with AI tools, which she and Kong have leaned on to close knowledge gaps, whether researching equipment, navigating legal questions, or estimating costs before bringing in an expert.

But it was also AI, and what she saw it do to the people around her at Microsoft, that helped push her out the door.

“So much of the focus was on, how can we use AI to 10x, and cushion the impact of layoffs to avoid losing revenue,” Graylin said. “Rather than, how is everyone on the team doing with all these layoffs? How can we [use AI] to improve the work-life balance on the team?”

The cafe, she said, felt like an answer to that question — a deliberate bet on what she believes advancing technology can’t touch.

“AI is good for automating things that are really tedious and unpleasant,” she said. “But social interactions — those are things that don’t need to be sped up.”

Teams Get the Tech. The Mindset Shift Is What’s Missing.

By Yair Kuznitsov, Co-Founder & CEO, Anecdotes

Every week I talk to enterprise GRC teams who understand exactly what agentic AI can do for their profession. They’ve read the articles, seen the demos, and can articulate the difference between AI that makes a workflow go a little (or even a lot) faster and an agent that replaces it entirely.

Yet still, some remain reluctant to make the shift to agentic GRC.

When I ask why, the conversation moves away from technology pretty quickly. Most of them have the “AI budget” available, but something is holding them back from making the move, and they can’t always name what it is.

The conversations all eventually lead to the same place, even if they can’t say it in so many words: they’re not sure who they are when the operations aren’t theirs anymore. It’s a question of identity, and even of value, above all else.

Most GRC practitioners carry an implicit belief about where their value comes from. That belief isn’t wrong, but it’s describing a role that’s being restructured, and those who make the transition the fastest will be the ones leading the industry in the coming years.

The Competence That Got Us Here

GRC professionals built their expertise around operational competence. Knowing how to gather the right evidence, managing audit cycles under pressure and keeping a complex compliance program running when it’s understaffed and under-resourced have been signs of a valuable GRC team member for years.

That competence took years to develop, and the people who have it are genuinely good at what they do and are rightfully valued by their business.

The problem with agentic GRC is that it doesn’t reward that competence the same way. Agents can gather evidence, open remediation tasks, and manage most of the audit cycle alone. Given that agents can handle those operations, the actual question is what a GRC professional is supposed to be doing instead, and most organizations haven’t asked it yet.

The Shift They’ve Been Waiting For

GRC wasn’t designed to be an operational function. It was designed to help organizations understand and manage risk. The evidence collection, the audit cycles, the status updates were always implementations of that purpose, not the purpose itself. The practitioners who got into this field weren’t drawn to it because of the “fun” of evidence collection.

They cared about whether the organization was actually protected, or just appearing to be, and wanted to provide that insight to the business.

What happened over time is that the tooling didn’t scale with the programs, and the operational burden consumed everything. The people who were supposed to be thinking about risk spent most of their time keeping the machine running, not because it was ever the point of the role, but because someone had to do it and there wasn’t another way.

What Agents Do, and What They Can’t

Agentic GRC doesn’t speed up workflows, it replaces them. Evidence no longer flows through a person; it’s pulled continuously from integrated systems. Controls aren’t checked periodically; they’re monitored in real time. Remediation isn’t tracked in spreadsheets; tickets are opened, assigned, followed up on, and closed automatically.

But agents don’t design themselves. The logic that drives them (what to collect, what constitutes a pass or fail, what triggers an escalation, what the auditor will accept as evidence) comes from a key combination: data context and human insight.

Someone has to define the risk appetite, decide what “remediated” actually means, know when the output looks right and when something is missing that the system can’t see.

Agentic GRC in Anecdotes is built around exactly this model. The agents handle the operations end to end, based on the robust data foundation we have spent years building, and the logic the GRC team defines.
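
Anecdotes does not publish its control format here, so the following is only a hypothetical Python sketch of the kind of logic the paragraphs above say people must supply: which evidence a check reads, what counts as a pass or fail, and when a finding goes to a human. The `Control` fields, the `evaluate` function, and the MFA example are invented for illustration and are not Anecdotes’ schema or API.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Control:
    """A control whose logic a GRC team writes down (hypothetical schema)."""
    name: str
    metric: str            # evidence field the check reads from an integrated system
    max_allowed: float     # pass/fail threshold, chosen from the team's risk appetite
    escalate_above: float  # beyond this value, the finding goes to a person


def evaluate(control: Control, evidence: Dict[str, float],
             open_tickets: Dict[str, str]) -> str:
    """Check one control against fresh evidence and keep the ticket list in sync."""
    value = evidence[control.metric]
    if value <= control.max_allowed:
        # The team has defined "remediated" as passing: close any open ticket.
        open_tickets.pop(control.name, None)
        return "pass"
    # Failing: open a ticket, or refresh the existing one with the latest value.
    open_tickets[control.name] = f"{control.metric}={value}"
    return "escalate" if value > control.escalate_above else "fail"


# Example: a team decides zero accounts without MFA is acceptable,
# and more than five is serious enough for a human to look at.
mfa = Control("mfa-coverage", "users_without_mfa", max_allowed=0, escalate_above=5)
tickets: Dict[str, str] = {}
print(evaluate(mfa, {"users_without_mfa": 3}, tickets))  # fail
print(evaluate(mfa, {"users_without_mfa": 9}, tickets))  # escalate
print(evaluate(mfa, {"users_without_mfa": 0}, tickets))  # pass
print(tickets)                                           # {}
```

The point of the sketch is where the numbers come from: the agent only runs `evaluate`, while the thresholds and the definition of “remediated” are judgment calls written into `Control` by the team.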

When agents can handle the evidence chains, control testing, and audit prep, the question of what GRC should actually be doing shifts. And for practitioners with real depth, that answer is what they’ve always known how to do. But that doesn’t make the shift easy.

Redefining a role is hard and comes with real fears. Many people are worried about their jobs because of AI, some more rightfully than others.

For GRC professionals specifically, this is less a threat than it is the opportunity they’ve been waiting for.

The practitioners who’ve made this shift describe it less like learning something new and more like getting permission to do what they were trained to do.

Their job became telling the agents what matters: setting the right risk appetite, deciding which controls are genuinely protecting something and which ones exist because they always have, knowing when an automated finding is a real problem and when it’s noise, and translating business context into compliance logic in ways no agent can replicate, because that translation requires judgment built from years of experience.

That judgment has been sitting in GRC teams all along, waiting for the operational load to lift.

The organizations that move first on this won’t win because their teams are better at AI. They’ll win because their GRC teams finally have the time and the mandate to do what compliance was supposed to do: think clearly about risk, act on what actually matters, and stop managing a program and start leading one.

Why Letting Go Feels Like Losing

The reluctance that comes up in these conversations makes more sense when you frame it this way.

Practitioners aren’t afraid of losing their value; they’re afraid of losing the operations that became their identity, even though those operations were never what they wanted. Letting that go feels like losing something, which makes it hard to see what’s waiting on the other side. And what is waiting is far more aligned with why they got into this work in the first place.

The shift, when it happens, is less a transformation than a return to what the role was always supposed to be.

Learn more about agentic GRC with Anecdotes at anecdotes.ai

Sponsored and written by Anecdotes.

Gen Z is using AI in job interviews as graduate unemployment climbs

The class of 2025 graduated into the worst entry-level job market in five years. Now a growing number of them are using AI tools during live job interviews, and a cottage industry of startups is rushing to sell them the means to do it. Whether that constitutes cheating or common sense depends on which side of the hiring table you sit on, but the numbers behind the trend are not in dispute.

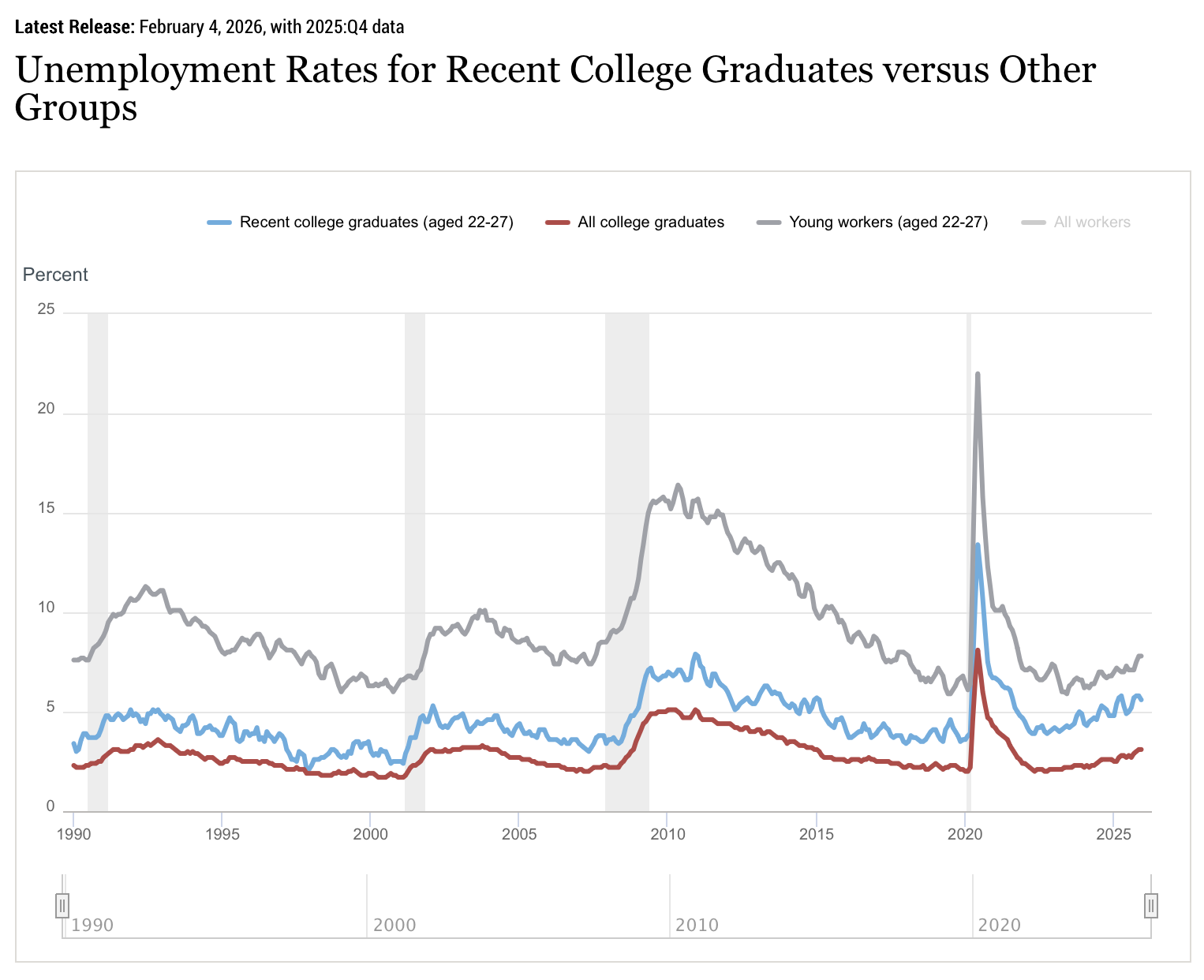

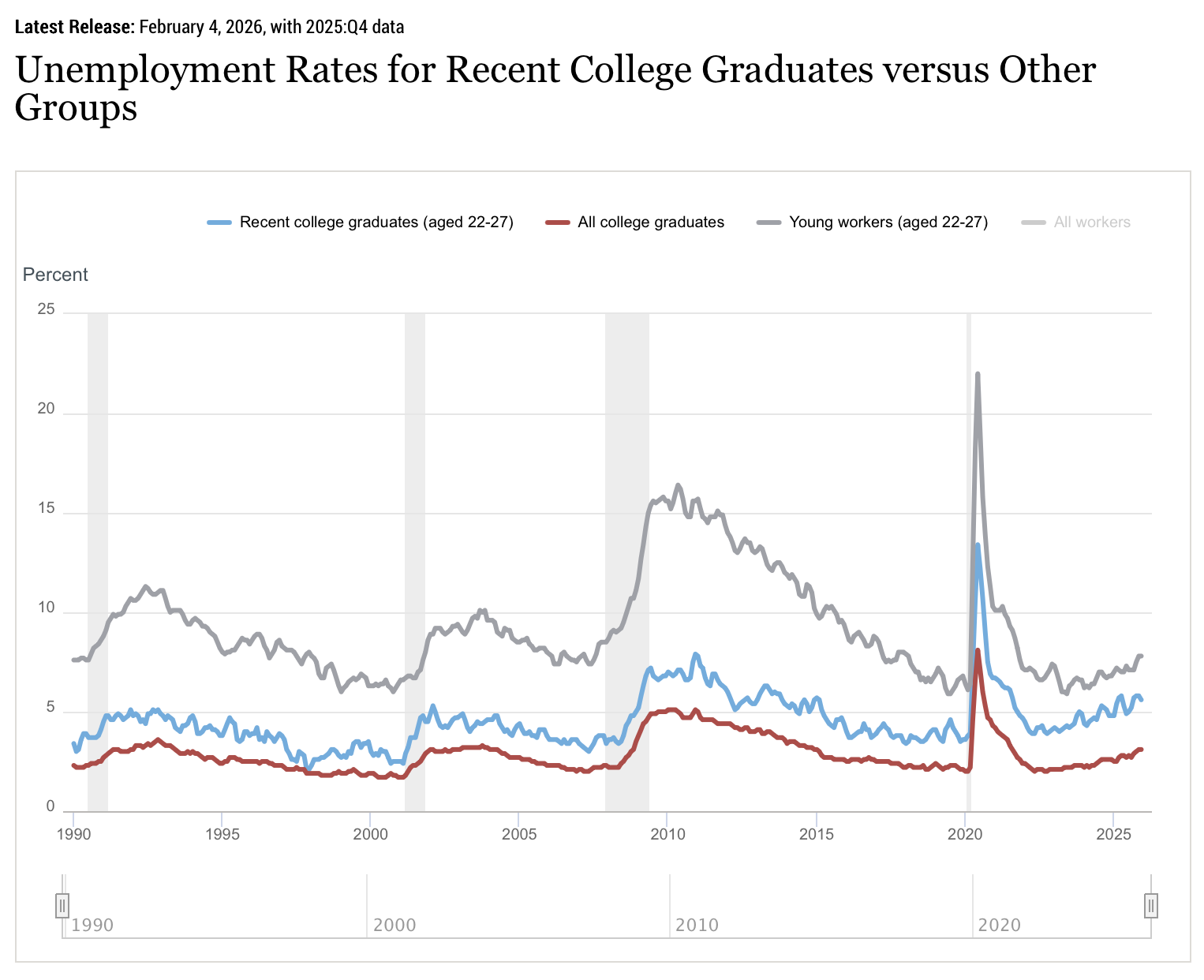

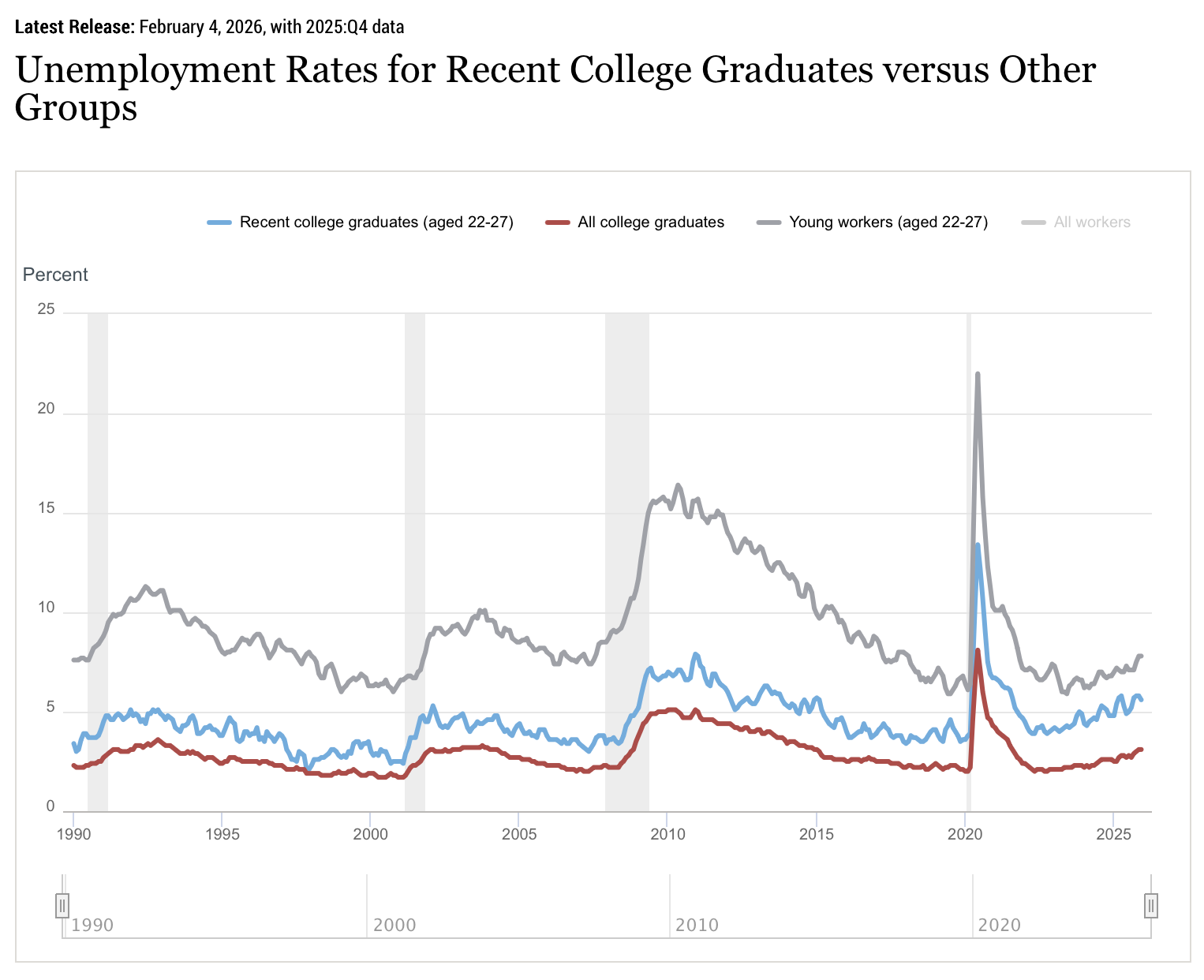

Unemployment among recent college graduates aged 22 to 27 climbed to 5.7 per cent by the end of 2025, according to the Federal Reserve Bank of New York, well above the 4.2 per cent national rate. Underemployment, which measures graduates working in jobs that do not require a degree, hit 42.5 per cent, its highest level since 2020. The tech sector, once the default destination for ambitious graduates, shed roughly 245,000 jobs in 2025, according to tracking data from Layoffs.fyi and TrueUp. Another 59,000 have gone in the first three months of 2026.

The graduates who entered this market did so having watched an older cohort get hired, promoted, and then laid off at companies like Meta, Amazon, and Google in the space of 18 months. The lesson they drew was not subtle: competence and loyalty are insufficient protection. And so they arrived armed with a technology that their universities had spent four years telling them to learn.

The tools and the companies selling them

The phenomenon surfaced this week in a press release from LockedIn AI, a startup that sells a product called DUO: a service that combines real-time AI transcription of interview questions with a live human coach who can see the candidate’s screen and provide strategic guidance during the conversation. The press release, distributed via GlobeNewswire, was framed as a trend piece about generational resilience. It was, more precisely, a product advertisement.

LockedIn AI is not alone. Its founder, Kagehiro Mitsuyami, also co-founded Final Round AI, a similar product. Both companies have faced questions about the authenticity of their marketing: reviews on Trustpilot appear to be AI-generated, and independent reviewers have noted that the software can be visible to interviewers when candidates switch between windows. A Gartner survey of 3,000 job seekers found that six per cent admitted to interview fraud, including having someone else impersonate them. Fifty-nine per cent of hiring managers suspect candidates of using AI to misrepresent themselves.

The market for these tools is growing precisely because the conditions that created them are getting worse, not better. The National Association of Colleges and Employers found that 45 per cent of employers characterised the job market for the class of 2026 as “fair,” down from “good” the previous year. Hiring projections for new graduates are essentially flat, at 1.6 per cent growth. For candidates submitting dozens of applications and receiving interview invitations at rates below two per cent, the temptation to use every available advantage is considerable.

The hypocrisy argument

The most effective argument in favour of AI-assisted interviewing is not about fairness in the abstract. It is about a specific inconsistency in how technology companies treat AI.

Google’s chief executive, Sundar Pichai, disclosed during an April 2025 earnings call that more than 30 per cent of the company’s new code is now generated with AI assistance, up from 25 per cent six months earlier. Amazon, Microsoft, and Meta all encourage their engineers to use AI coding tools daily. Applicant tracking systems powered by AI screen and reject resumes before a human ever reads them. The hiring pipeline is automated from end to end, except on the candidate’s side.

For graduates who spent their university years being told that AI fluency would define their careers, being asked to pretend the technology does not exist during a 45-minute interview feels less like a test of competence and more like a test of compliance. The companies asking them to do so are, in many cases, the same ones that will expect them to use AI tools from their first day on the job.

This argument has real force, but it also has limits. There is a difference between using AI to write code more efficiently and using AI to answer questions about your own experience, judgment, and problem-solving ability. An interview is, at least in theory, a conversation designed to evaluate what a candidate knows and how they think. Outsourcing those answers to a language model, or to a human coach whispering through an earpiece, undermines the purpose of the exercise regardless of how unfair the exercise may be.

The employer response

Companies are already adapting. In-person interview rounds rose from 24 per cent in 2022 to 38 per cent in 2025, according to hiring industry data. Seventy-two per cent of recruiting leaders now conduct at least one in-person stage specifically to combat AI-assisted fraud. Some firms have moved to whiteboard exercises, pair programming sessions, and unstructured conversations that are harder to augment with real-time tools.

The deeper question is whether the interview itself is the right mechanism for evaluating candidates in an AI-saturated labour market. If the goal is to assess what a candidate can produce with the tools they will actually use on the job, then banning those tools during the evaluation makes little sense. If the goal is to assess raw cognitive ability and domain knowledge, then AI assistance defeats the purpose entirely. Most interviews attempt to do both, which is why the current system satisfies no one.

What is clear is that the class of 2025 did not create this problem. They inherited a job market reshaped by pandemic-era overhiring, aggressive cost-cutting, and an AI revolution that is simultaneously creating and destroying opportunity at a pace that neither employers nor candidates have fully absorbed. Their decision to use AI in interviews is not rebellion. It is the predictable behaviour of rational actors in a system that has told them, repeatedly and in every other context, that AI is not optional. The fact that the system now objects to them taking that message seriously is, at minimum, worth examining.

PS5 price increases go global, rising up to $150 depending on the model

In the US, the standard PS5 will increase from $549 to $649, while the Digital Edition rises from $499 to $599. The PS5 Pro sees the largest jump, climbing from $749 to $899, and the PlayStation Portal moves from $199 to $249.

What Is Goodyear’s Longest-Lasting Tire?

Goodyear started out back in 1898, when F.A. “Frank” Seiberling bought two factories located in Akron, Ohio. Today, Goodyear has grown to become an international producer of tires, as well as non-tire products made of rubber and many other materials. In fact, Goodyear currently owns 12 different tire brands sold around the world, including Kelly, Dunlop, and Cooper, and the company has a reputation for quality tires that offer good longevity.

Out of its entire lineup, the company’s longest-lasting tire is the Goodyear Assurance MaxLife 2. Goodyear makes the Assurance MaxLife 2 in 58 different sizes, designed to fit just about all of the most popular cars on the road today. The tire manufacturer calls it a “next generation premium all-season tire designed to deliver more miles, more confidence and more comfort for drivers.” You would be forgiven for thinking this sounds like marketing copy, but Goodyear is putting its money where its mouth is, backing up its longest-lasting claim with an 85,000-mile limited treadlife warranty on every Assurance MaxLife 2 tire.

The Goodyear Assurance MaxLife 2 represents the second generation of this tire. In our initial review of which car tires lasted the longest, the original Goodyear Assurance MaxLife ranked well, as we found that the company pulled out all the stops when it came to durability. The Assurance MaxLife 2 continues this tradition, and Goodyear claims it is the longest-lasting tire the company makes. The features that justify this claim start with Goodyear’s proprietary TredLife technology, which delivers all-weather grip and long-term performance through a combination of the tire’s unique tread pattern and the specific makeup of its rubber compound.

What else you should know about the Goodyear Assurance MaxLife 2

The Goodyear Assurance MaxLife 2 is available in sizes to fit wheels with rim diameters of 15 inches through 21 inches, with prices ranging from $155.00 to $418.00, according to the Goodyear website. Sizes run from the smallest, 195/65R15, up to the largest, 275/45R21.

The Goodyear Assurance name has been applied to a wide variety of different tires, such as the Assurance All-Season, the Assurance ComfortDrive, the Assurance Fuel Max, and the Assurance WeatherReady. However, only the Assurance MaxLife 2 carries the top 85,000-mile limited treadlife warranty; all the other Goodyear Assurance tires have warranties of between 60,000 and 65,000 miles. If you want the longest-lasting Goodyear tire, your best bet is the Assurance MaxLife 2, which also made SlashGear’s list of the all-season tires with the best treadwear ratings.

That 85,000-mile limited treadlife warranty on the Goodyear Assurance MaxLife 2 does have some limitations. The warranty lasts only until the tires have either worn down to 2/32 inch of tread depth or reached 6 years of age from the purchase date, at which point Goodyear considers them to have provided their “full original tread life.” Within those limits, covered tires that fail will be replaced free of charge within the first 12 months or the first 2/32 inch of tread wear. Tires that fail after that point are replaced on a prorated basis, according to the percentage of the tire’s original tread that has been worn down.
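
The proration rule is easier to follow with numbers. This is a minimal Python sketch, not Goodyear’s published formula. It assumes that the worn percentage is measured over usable tread (from the new depth down to the 2/32-inch limit), that the free-replacement window closes once either 12 months or 2/32 inch of wear is reached, and that the buyer pays the worn share of the price. The function name, the $200 price, and the 11/32-inch starting depth are all hypothetical.

```python
WEAR_LIMIT_32NDS = 2   # warranty ends at 2/32 inch of remaining tread (from the article)
FREE_WEAR_32NDS = 2    # first 2/32 inch of wear: free replacement (from the article)
FREE_MONTHS = 12       # first 12 months: free replacement (from the article)
MAX_MONTHS = 72        # warranty ends 6 years after purchase (from the article)


def replacement_charge(price: float, original_32nds: int, remaining_32nds: int,
                       months_since_purchase: int) -> float:
    """Hypothetical: what a buyer might pay toward replacing a failed tire.

    Assumptions (not Goodyear's terms): the worn share is measured over usable
    tread, the free window closes when either limit is reached, and the buyer
    pays the worn share of the price after that.
    """
    if remaining_32nds <= WEAR_LIMIT_32NDS or months_since_purchase >= MAX_MONTHS:
        raise ValueError("warranty has ended (2/32-inch tread or 6 years)")
    worn = original_32nds - remaining_32nds
    if months_since_purchase <= FREE_MONTHS and worn <= FREE_WEAR_32NDS:
        return 0.0  # still inside the free-replacement window
    usable = original_32nds - WEAR_LIMIT_32NDS
    return round(price * worn / usable, 2)


# Illustrative numbers only: a $200 tire that started at 11/32 inch
# and failed at 6/32 inch, 30 months after purchase.
print(replacement_charge(200, 11, 6, 30))  # 111.11
```

Under these assumptions, 5/32 inch worn out of 9/32 inch usable means the buyer pays about 56 percent of the price.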

Anthropic’s Claude Can Now Control Your Computer

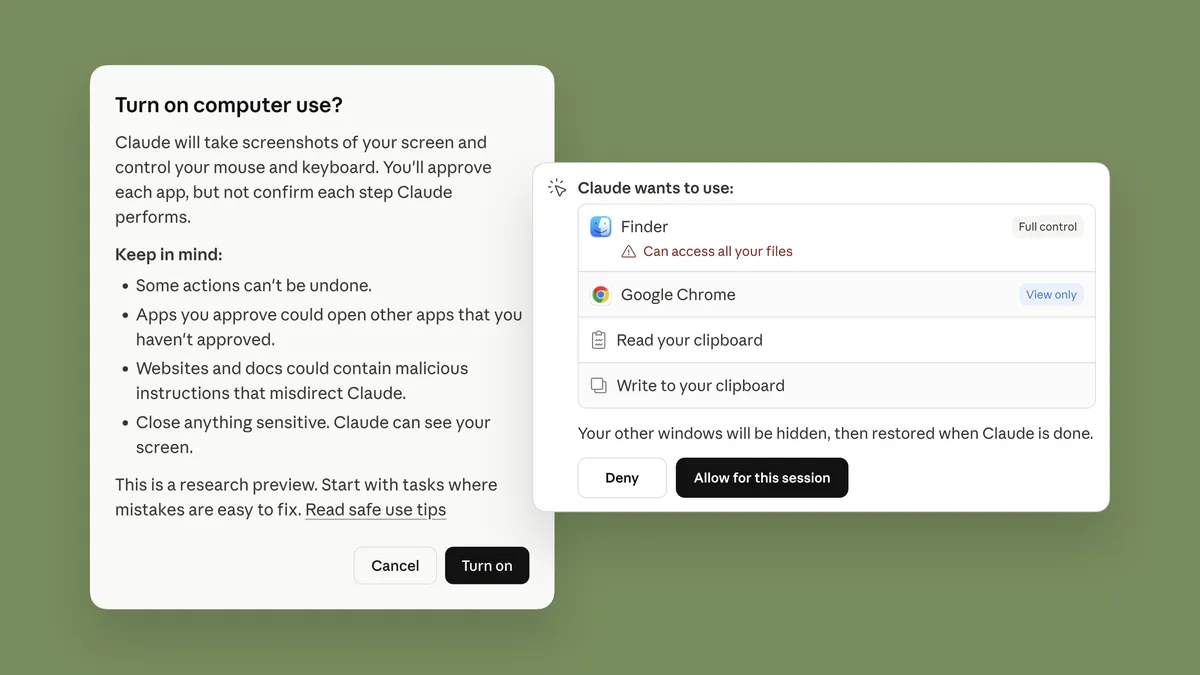

You can now let Claude take control of your computer to perform tasks like sending you a file you left on your hard drive, the AI’s developer Anthropic announced Monday. For the feature to work, you just need to be on a qualifying subscription plan.

In the wake of the viral explosion of the open-source OpenClaw framework earlier this year, Anthropic is the latest developer to deliver a tool that enables an AI model to act more independently.

OpenClaw has spawned an entire ecosystem of “claws,” or AI tools that can take simple commands and perform them somewhat autonomously on your computer or with your tools or systems. Nvidia last week debuted NemoClaw, its framework for easily setting up and installing OpenClaw, with some security settings.

Anthropic says that Claude will look for the right tools to complete the task at hand via connectors with apps like Google Calendar or Slack. If the tool or connector isn’t available, Claude can manually perform the task by typing or moving the cursor, as if it were using the keyboard and mouse. It can use programs like your web browser, dev tools and open files.

When it’s performing these tasks, it can use a computer as you normally would — by scrolling and clicking around. The only difference is that Claude will always ask for permission beforehand. You can stop Claude from performing a task at any time.
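
Anthropic has not published how Claude makes this choice, so the following is only a toy Python model of the order of operations described above: use a connector when one exists, otherwise fall back to keyboard-and-mouse control, ask for permission before acting, and honor a stop from the user. `ToyAgent`, `connectors`, and `ask_permission` are hypothetical names, not part of any Anthropic API.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ToyAgent:
    """Toy model of the decision order described above (hypothetical)."""
    connectors: Dict[str, Callable[[str], str]]  # app name -> connector call
    ask_permission: Callable[[str], bool]         # returns True if the user approves
    stopped: bool = False                         # the user can flip this at any time
    log: List[str] = field(default_factory=list)

    def run(self, app: str, task: str) -> str:
        if self.stopped:
            return "stopped by user"
        # Prefer a connector; otherwise fall back to driving the app's UI.
        route = "connector" if app in self.connectors else "screen control"
        if not self.ask_permission(f"Use {route} in {app} to {task}?"):
            return "declined"
        if route == "connector":
            result = self.connectors[app](task)
        else:
            # Placeholder for typing, clicking, and scrolling in the app itself.
            result = f"used {app} directly to {task}"
        self.log.append(result)
        return result


agent = ToyAgent(connectors={"Slack": lambda t: f"Slack connector: {t}"},
                 ask_permission=lambda prompt: True)
print(agent.run("Slack", "post the weekly summary"))
print(agent.run("Finder", "send the report file"))
```

Putting the permission check before both routes mirrors the article’s point that Claude asks first whether it uses a connector or the screen.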

Giving your chatbot the keys to your computer can be convenient for certain tasks, but it can leave your computer vulnerable to attacks. Experts told us one major worry with agentic AI is that it can take major, sometimes dramatic actions quickly and with little warning. Claws can also be hijacked by malicious actors, who can use your personal data and systems in ways you don’t want.

Anthropic says it implemented safeguards to minimize risks like prompt injections, and that the system automatically scans for these and other vulnerabilities.

Despite its efforts to keep Claude’s computer use safe, Anthropic also warns users that the feature is new and may contain errors. The company advises against using it with apps that handle sensitive data, and some of those apps are disabled by default.

The research preview is available now for Claude Pro and Claude Max subscribers and is limited to computers running macOS.

Anthropic says the new computer-use feature works well with Dispatch, which allows you to assign tasks to Claude using your phone. Such tasks include checking your email every morning or opening up a Claude Cowork or Claude Code session.

Combining computer use with Dispatch lets you get even more done while you’re away from your computer. Anthropic says the combination can create a morning briefing or run tests, for example.

Given that both features are new, some complex tasks might not work the first time. Anthropic said it’s releasing this research preview to gain early insight on where it needs the most attention to become an even more powerful tool.

The contradiction at the heart of OpenAI’s restructuring

OpenAI, which powers ChatGPT, among other AI products, was originally founded purely as a nonprofit. Now it has a for-profit arm. According to OpenAI CEO Sam Altman, the nonprofit will still guide the work of the for-profit side to ensure that artificial intelligence works for the “benefit of all humanity.” On top of that, the nonprofit, now called the OpenAI Foundation, would be in charge of (theoretically) $180 billion, making it one of the largest charitable organizations in the world.

Catherine Bracy, founder of the nonprofit Tech Equity, thinks this restructuring is a blatant attempt to free up the for-profit wing to act like any other AI company. She argues that OpenAI’s for-profit wing will only ever act for the benefit of its investors. Bracy believes the OpenAI Foundation is merely a glorified and toothless corporate social responsibility arm. We reached out to OpenAI for comment and did not receive a response.

Bracy spoke with Today, Explained host Sean Rameswaram about the legality of OpenAI’s new structure and her concerns about how this all might shake out. An excerpt of their conversation, edited for length and clarity, is below.

There’s much more in the full podcast, so listen to Today, Explained wherever you get your podcasts, including Apple Podcasts, Pandora, and Spotify.

(Disclosure: Vox Media is one of several publishers that have signed partnership agreements with OpenAI. Our reporting remains editorially independent.)

You used to chat with Sam Altman?

We worked together back in the day and then kind of fell out of touch with each other for a few years. Then, when I was writing a book about venture capital, I was really interested in OpenAI’s nonprofit model. Sam had been very explicit that the reason they founded OpenAI as a nonprofit was to put the technology at arm’s length from investors, because they knew investors would exploit it in a way that would make this technology, which they thought was very dangerous, actually live up to that potential danger.

So I wanted to talk to him about the decision-making process behind that. And he was very forthcoming about that being the explicit reason why OpenAI was founded as a nonprofit. They put a lot of thought and capacity and energy into creating this [nonprofit] governance structure that would protect the technology from the whims of investors, the [profit-generating] imperatives that investors put on technology companies.

And a few months later, I saw that all come crashing down.

And when you found out that OpenAI was restructuring and going to try to have it both ways (a mission-driven nonprofit, but also a money-driven for-profit), what was your reaction?

Disappointment. I would say that was my initial reaction. And then the secondary response was, Well, what can we do about this? And many of us came together into this coalition that really started asking questions about the responsibility of the nonprofit and the responsibility of the attorney general of California to enforce nonprofit law. And things kind of went from there.

Tell me more about that. What’s nonprofit law look like as it pertains to, say, OpenAI?

I run a nonprofit. In the tax code, that means that my organization does not need to pay taxes, but in return for that tax exemption, we are required to operate in service of a public service mission. Our mission is to ensure that the tech industry is creating opportunity for everybody. OpenAI’s nonprofit mission is to ensure that AI develops for the benefit of all of humanity. And legally, Sam Altman is required to prioritize OpenAI’s mission above all else.

So when they decided they were going to split the nonprofit from the for-profit, they found that actually legally they could not do that without divesting the intellectual property that the nonprofit owned, including all of the intellectual property that was created that underlies the ChatGPT model, and the equity stake that the nonprofit owned in the for-profit company.

I think they looked at that price tag and they said, That’s not a price we’re willing to pay. And so instead of splitting the nonprofit from the for-profit, they decided to continue down this path of nonprofit ownership, which in my mind is completely untenable, unsustainable, and irreconcilable.

Basically, every day that OpenAI exists, they are violating the law.

And actually what they’re doing is just daring the attorney general to hold them accountable for it. I think they think they’re too big to be held accountable and they need the AG [of California] to assume that he will not win a case. And that’s what they’ve done. They’ve loaded up on lawyers and they are making a bet that the AG will not pursue this in any way that’s actually meaningful.

Okay. So if I’m following you, despite the fact that OpenAI has split itself into a for-profit arm and a not-for-profit arm, their not-for-profit mission still overrides everything they do. And because of that, they are violating California law — because there’s no way that the nonprofit interests are ever going to be primary in their business.

Right. I think, as the kids would say, they’re playing in our faces. They expect us to take their word that as they operate, as they make deals with the Defense Department to develop autonomous weapons and surveillance systems on American citizens, as they battle parents in court whose children have committed suicide due to conversations that these kids were having with their chatbots, they expect us to believe that the nonprofit mission is being prioritized over the profit motivation of the company.

We all know that OpenAI’s overriding priority is to “win” the AI race. It’s to beat out the competition in the marketplace, and it’s to establish the biggest AI company they can create. To the extent that the nonprofit mission ever comes into tension with that, the company will always prioritize profits over the mission.

A law is only as good as its enforcement. And I think if there’s one rule of Silicon Valley, it is to ask forgiveness and not permission. I think they said, You know, this is worth it. There’s enough money on the line for us to just break the law and do the PR work and the lobbying work and the other work that we need to do to ensure that these laws will never be enforced against us.

And when you talk about PR work, lobbying work, are you talking about, like, saying we’re going to give away this $180 billion eventually?

Well, here’s the thing. They announced this week a list of priorities that the foundation would be investing in. They listed as one of their priorities, Alzheimer’s research. My mother is currently dying of Alzheimer’s. I have one copy of the gene that puts me at extreme risk of developing Alzheimer’s when I’m older. So I pray every day that AI helps us find a solution to Alzheimer’s fast enough that I can benefit from it, that my family can benefit from it.

But let me ask you a question. What happens, do you think, if the research that’s funded by OpenAI’s Foundation finds that actually Anthropic’s models are better at drug discovery or scientific breakthroughs than ChatGPT or any of OpenAI’s other models? What does it mean for the independence of scientific research, if all of this research is funded by an entity that has an irreconcilable conflict of interest?

We would not accept the science around nicotine that tobacco companies were funding. We do not accept the science around alcohol addiction that the alcohol companies fund. We do not accept the science around sugared beverages from the soda industry. And we should not accept that this scientific research is funded by an entity that has a vested financial interest in the outcome.

And that is why it is so critically important that the OpenAI Foundation actually be independent, that it have an independent board, that it can deploy its resources independently, that the research that it is funding is independent.

Do you still think we’re better off with OpenAI saying it wants to give billions away to better society than with, say, Anthropic or Google, which may have pledged to give some money away, but not nearly as much?

Well, Google has a corporate foundation. It’s called Google.org. And I expect that, in this structure, with the tension and the conflict of interest that the OpenAI Foundation has, it will operate much more like Google.org, which is essentially an arm of the marketing department: a corporate social responsibility program that gives money to innocuous groups but will never do anything that undercuts Google’s priorities.

I think if you read between the lines of OpenAI’s press release, the work they say they want to continue doing with community funding is all about convincing people of the importance, value, and benefit of using AI. I mean, that’s a market-building opportunity for them. That’s not actually anything that’s going to ensure that AI is developed for the benefit of humanity. And so, no, I don’t think they’re going to operate any differently than any of the other companies’ corporate social responsibility arms. That’s essentially what they have built here.

This is the fight of our time. AI is not inevitable. The way it develops is not inevitable. And we do not have to take these companies at their word that they know best how to govern this technology. We should have bigger imaginations about what’s possible. And if anything, this should give us more energy and motivation to fix what’s broken about our democracy, rather than just sit back and let billionaires control our future.

Do you ever talk to Sam Altman anymore?

He doesn’t return my calls.

Well, thanks for talking to us.

Tech

Magic-less 8 Ball Finds New Life With Pi Pico Inside

There’s an old saying that goes: when life gives you lemons, make lemonade. [lds133] must have heard that saying, because when life took the magic liquid out of his Magic 8 Ball, [lds133] made not eight-ball-aide, but an electronic replacement with a Raspberry Pi Pico and a round TFT display.

In case the Magic 8 Ball is unknown in some corners of the globe, it is a toy that consists of a twenty-sided die with a set of oracular messages engraved on it, enclosed in a magical blue liquid — and by magical, we mean isopropyl alcohol and dye. The traditional use is to ask a question, shake the eight-ball, and then ignore its advice and do whatever you wanted to do anyway.

[lds133]’s version replicates the original behavior exactly, using an accelerometer to detect the shaking, the round display to show an icon of the die, and a Raspberry Pi Pico to do the hard work. There’s also the obligatory lithium pouch cell for power, managed by one of the usual TP4056 breakout boards. One very nice detail: instead of a distracting battery indicator, the virtual die changes color as the battery runs down.

We’ve seen digital 8 Balls before, like this one that used an STM32, or another that used a Raspberry Pi to display reaction GIFs. Some projects are just perennial.

Tech

Samsung Frame Pro Review: A Good TV for a Pretty Living Room

I also tested movies using the Mubi app because I’m a fuddy-duddy film fanatic, as well as a screener for Marty Supreme. Here’s where the upscaling, matte display, and AI truly shine: Marty Supreme is like an old ’70s flick, and The Frame Pro made me think I was in a movie theater. The matte display gave the Mubi films a more cinematic look.

On an Xbox Series X, I watched the entire Predator: Badlands film using the 4K Blu-ray version. It’s worth noting that though the colors look great, Samsung models don’t support Dolby Vision HDR, instead going for standard HDR10+.

For a more pure movie experience that disables any optimizations or AI enhancements, I tested the Filmmaker picture setting. In one scene, you can see the individual scales of the main character, which is awesome.

The Frame Pro has plenty of built-in cloud gaming features beyond any consoles you connect locally. I tested Cyberpunk 2077 using the Steam app and also fired up Senua’s Saga: Hellblade II on the Xbox app with no issues. One slight glitch: when I connected my Xbox controller to the TV, it worked fine, but I later had to pair it again with the Xbox Series X. It gave me a reason to buy an extra controller. When I tried a Sony DualSense Wireless Controller with Cyberpunk 2077, it worked flawlessly. Forza Horizon 5 on my Xbox looked ultra-smooth, showing a Ford Bronco sliding around in the mud in a realistic way.

The Frame Pro supports up to a 144-Hz refresh rate from either the One Connect box or a Micro HDMI port, which makes it awesome for really smooth gaming. I tested Crimson Desert on an Acer Nitro 60 gaming desktop, and the colors, scenery, and overall clarity at the higher refresh rate looked stunning. If you have a stylish living room where you also want to game, this isn’t a bad choice.

During a chaotic battle, with snowy mountains off in the distance, I felt like I had jumped into one of the paintings. In the end, The Frame Pro is a fantastic TV for art, and for just about everything else, as long as you don’t want the best backlighting around.

Tech

Apple's "Hide My Email" isn't as anonymous as it sounds

According to the affidavit, the FBI sought information during a probe into a threatening message received by Alexis Wilkins, the girlfriend of FBI Director Kash Patel. The email, sent from the address peaty_terms_1o@icloud.com, reads, “Do you know how happy I’ll be when your c**t ass face is canoed by an…

-

NewsBeat3 days ago

NewsBeat3 days agoManchester United reach agreement with Casemiro over contract clause amid transfer speculation

-

News Videos2 days ago

News Videos2 days agoParliament publishes latest register of MPs’ financial interests

-

Crypto World7 days ago

Crypto World7 days agoBest Crypto to Buy Now: Strategy Just Spent $1.57 Billion on Bitcoin During Fear While Early Investors Quietly Enter Pepeto for 150x Potential

-

Crypto World7 days ago

Crypto World7 days agoBitcoin Price News: Bhutan Sells $72 Million in BTC Under Fiscal Pressure, but the Smart Money Entering Pepeto Sees What the Market Does Not

-

Sports5 days ago

Sports5 days agoRemo Stars and Kano Pillars Strengthen Survival Hopes in NPFL

-

Sports5 days ago

Sports5 days agoGary Kirsten Accuses Pakistan Cricket Board Of ‘Interference’, Mohsin Naqvi Responds

-

Business6 days ago

Business6 days agoNo Winner in March 21 Drawing as Prize Rolls to $133 Million for Next

-

Tech6 days ago

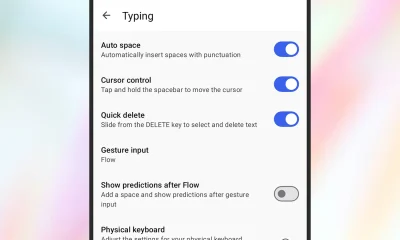

Tech6 days agoGive Your Phone a Huge (and Free) Upgrade by Switching to Another Keyboard

-

Tech6 days ago

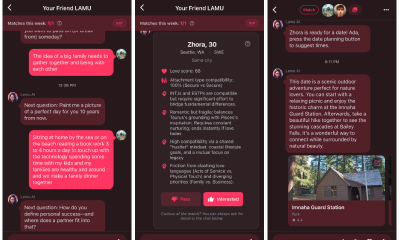

Tech6 days agoAI enters the chat: New Seattle dating app relies on tech to facilitate meaningful human connections

-

News Videos5 days ago

News Videos5 days agoCh 9 Financial Management Part 1 | Detailed One Shot | Class 12 Business Studies Boards 2026

-

Tech7 days ago

Tech7 days agoToday’s NYT Connections Hints, Answers for March 22 #1015

-

Business2 days ago

Business2 days agoInstagram, YouTube Found Responsible for Teen’s Mental Health Struggle in Historic Ruling

-

Business6 days ago

Business6 days agoWill Duke Basketball Win It All? Duke Basketball Enters Second Round as Third Favorite to Claim NCAA Title

-

Sports5 days ago

Sports5 days ago2026 Kentucky Derby horses, odds, futures, preview, date: Expert who hit 12 Derby-Oaks Doubles enters picks

-

NewsBeat15 hours ago

NewsBeat15 hours agoThe Story hosts event on Durham’s historic registers

-

NewsBeat6 days ago

NewsBeat6 days agoUpdate on Wisbech river crash as search for teenage boy enters fifth day

-

Entertainment5 days ago

Entertainment5 days agoCynthia Bailey Dishes on ‘RHOA’ Season 17, Discusses Kandi

-

Tech5 days ago

Tech5 days agoSamsung will soon let you control smart home devices from your car’s dashboard

-

NewsBeat3 days ago

NewsBeat3 days agoTesco is selling new Cadbury Dairy Milk bar and people can’t wait to try it

-

Tech6 days ago

Tech6 days agoSteamOS update adds support for Steam Machine and other non-Valve hardware

You must be logged in to post a comment Login