from the censorial-dipshit dept

For years, certain folks on the left kept insisting they wanted to bring back the Fairness Doctrine — the old FCC policy that required broadcasters to present “both sides” of controversial issues. Many of us in the tech policy world kept explaining why that was a terrible idea, one ripe for abuse and fundamentally at odds with the First Amendment. The FCC itself repealed the Doctrine back in 1987, partly because it found that compelling broadcasters to present multiple views actually reduced the quality and volume of coverage on important issues — the exact opposite of what it was supposed to do. The requirement to air “both sides” of a controversial story was the kind of burden that just made the broadcast media less willing… to cover controversial stories at all.

Well, congratulations to everyone who wanted to reanimate that corpse. FCC Chairman Brendan Carr is doing something remarkably similar, except he’s using it in only one direction: to punish outlets that report things the Trump administration doesn’t like, while conveniently leaving alone outlets that parrot the administration’s preferred narratives. That’s the other problem with the Fairness Doctrine: it depends entirely on who is doing the enforcing.

We’ve been covering Carr’s censorial ambitions for a while now. When Trump picked Carr to chair the FCC, we noted that despite all the “free speech warrior” branding from the administration and the credulous political press that repeated it, Carr had made it abundantly clear he wanted to be America’s top censor. And he’s delivered on that promise with remarkable enthusiasm — going after CBS over “60 Minutes”, threatening ABC over Jimmy Kimmel’s jokes, and most recently threatening to revoke broadcast licenses of outlets that accurately report on the disastrous war in Iran.

Now, a broad coalition of more than 80 legal scholars, former FCC officials, and civil society organizations — organized by TechFreedom and signed by groups ranging from the ACLU to EFF to the Knight First Amendment Institute to the Institute for Free Speech — has sent a formal letter to Carr laying out, in meticulous legal detail, exactly how his threats violate the First Amendment. I’m proud to note that our think tank, the Copia Institute, is among the signatories, and this was a very easy decision.

The letter is direct about what Carr is doing:

We write concerning your abuse of the “public interest” standard as a weapon against viewpoints you and President Donald Trump do not like. You assert that “[b]roadcasters … are running hoaxes and news distortions – also known as the fake news” in a retweet of a President Donald Trump’s complaint that The Wall Street Journal and The New York Times were the “Fake News Media” because of headlines he alleged were misleading. You threatened that broadcasters who engaged in similar reporting would “lose their licenses” if they do not “correct course before their license renewals come up.” The next day, the President threatened broadcasters and programmers with “Charges for TREASON for the dissemination of false information!”

It’s kind of incredible how much of this is absolutely batshit crazy and simply could never have been imagined under any other presidential administration. The President of the United States threatened news outlets with treason charges — which carry the death penalty — for reporting things he didn’t like. And the FCC Chairman who spent years claiming to be a “free speech” absolutist, rather than defending the press from this kind of authoritarian nonsense, was the one who teed it up.

The letter does an excellent job of explaining why Carr’s reliance on the vague and essentially dormant “news distortion” policy is legally bankrupt. There’s an important distinction here that Carr is deliberately blurring: the FCC has an actual, codified Broadcast Hoax Rule that is extremely narrow and specific — it applies only when a broadcaster knowingly broadcasts false information about a crime or catastrophe, where it’s foreseeable that it will cause substantial public harm, and it actually does cause such harm. The FCC has applied it rarely, and typically only in cases involving the outright fabrication of news events like staged kidnappings.

That’s a world apart from what Carr is doing, which is invoking the far vaguer “news distortion” policy to go after headlines the president finds insufficiently flattering. As the letter notes:

[Y]our unsupported claim that unnamed broadcasters are engaged in unspecified “hoaxes,” combined with your invocation of the news distortion policy is plainly unconstitutional: it aims to do something the Supreme Court has forbidden—correcting bias or balancing speech—while its vagueness makes good-faith compliance impossible and invites arbitrary enforcement.

On that Supreme Court point, the letter cites Moody v. NetChoice (you remember: the Supreme Court case that ended Florida’s social media content moderation law). Recall, this is the very same Court that many expected would be friendly to conservative arguments about tech platforms supposedly “censoring” conservatives, but instead it made it crystal clear that the government has no business trying to reshape private editorial decisions:

In Moody v. Netchoice (2024), the Supreme Court rejected government efforts “to decide what counts as the right balance of private expression — to ‘un-bias’ what it thinks is biased.” “On the spectrum of dangers to free expression,” Moody said, “there are few greater than allowing the government to change the speech of private actors in order to achieve its own conception of speech nirvana.”

The letter also draws on NRA v. Vullo, another unanimous Supreme Court decision that we cite often, which held that “a government official cannot do indirectly what she is barred from doing directly: A government official cannot coerce a private party to punish or suppress disfavored speech on her behalf.” That’s a pretty precise description of what Carr is doing when he posts threats on social media about license renewals while his boss muses about treason prosecutions.

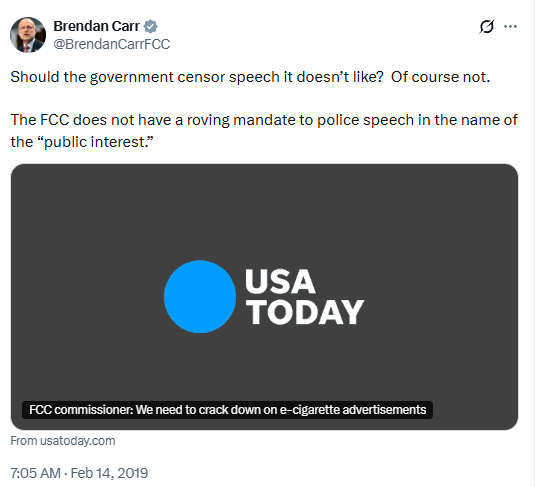

The most damning part of the letter is the receipts on Carr’s own hypocrisy. Back in 2019, Carr himself tweeted: “The FCC does not have a roving mandate to police speech in the name of the ‘public interest.’”

As the letter dryly observes, if the law were as “clear” as Carr now claims, why did he insist the FCC needed to “start a rulemaking” on it?

If, as you now claim, the “law is clear,” you would not have needed to suggest in 2024, that “we should start a rulemaking to take a look at what [the public interest standard] means.” In fact, the “public interest” standard becomes less clear each time you invoke it.

The letter also points out that Carr’s former colleague and mentor Ajit Pai knows how messed up all this is:

Chairman Ajit Pai, your Republican predecessor, could “hardly think of an action more chilling of free speech than the federal government investigating a broadcast station because of disagreement with its news coverage or promotion of that coverage.” You have launched a flurry of such investigations.

And the letter documents that the chilling effect is already working:

Commissioner Anna Gomez has “heard from broadcasters who are telling their reporters to be careful about the way they cover this administration.”

Even Trump-supporting Republican officials like Ted Cruz have had enough of Brendan Carr’s censorial bullshit:

Sen. Ted Cruz (R-TX) understood that this [is] a “mafioso” tactic “right out of ‘Goodfellas,’” essentially: “‘nice bar you have here, it’d be a shame if something happened to it.’”

The fact that Ted Cruz of all people can see this for what it is should tell you something.

The signatories on this letter are worth noting. Beyond the civil society organizations, you’ve got former FCC officials from both parties, more than fifty First Amendment and communications law scholars from institutions ranging from Harvard to Stanford to Emory, and journalism scholars from across the country. There are people signed onto this letter who don’t agree with each other on much at all.

But on Brendan Carr’s censorship campaign, they all agree — because this really has nothing to do with partisan politics. This is about whether you believe the Constitution means what it says — or whether the First Amendment is just a talking point to wave around when it’s politically convenient and discard when it gets in the way. The same people who spent years fundraising off claims that Biden officials sending cranky emails about COVID misinformation represented an existential threat to free speech are now openly wielding license revocation and treason charges to dictate editorial content.

Look, we know Carr won’t do a damn thing in response to this letter. If anything, he’ll just screenshot parts and post them on X as proof that he’s upsetting the right people. That’s his whole game: the trolling, the culture war posturing, the audition tape for whatever higher office he’s eyeing. He doesn’t actually have to revoke any licenses (and likely couldn’t survive the legal challenge if he tried). The mere threat is the point, because, as the letter explains, the FCC can exercise “regulation by the lifted eyebrow” and hang a “Sword of Damocles” over each broadcaster’s head.

But highlighting the record still matters. When future scholars look back at this period and try to understand how a sitting FCC Chairman openly abandoned the First Amendment in service of a President who thinks “treason” is a synonym for “journalism I don’t like,” the documentation will be there.

And the breadth of the coalition sending this message matters too. This many scholars, former officials, and organizations — many of whom disagree vehemently on plenty of other issues — all looked at what Carr is doing and arrived at the same conclusion: this is unconstitutional, it’s dangerous, and someone needs to say so clearly and publicly, even if the person doing it couldn’t care less.

The letter closes with a quote from the Supreme Court that fits this moment uncomfortably well, drawn from West Virginia Board of Education v. Barnette, decided in 1943 when the country faced actual existential threats:

“[T]here is ‘one fixed star in our constitutional constellation: that no official, high or petty, can prescribe what shall be orthodox in politics, nationalism, religion, or other matters of opinion or force citizens to confess by word or act their faith therein.’”

Brendan Carr has decided he can ignore all that and censor at will. He’ll likely ignore this letter too. But unlike Carr, the record doesn’t forget.

Filed Under: 1st amendment, brendan carr, fairness doctrine, fcc, free speech, jawboning, news distortion, public interest
