Tech

Flush with cash: Washington startup lands up to $500M to deploy facilities treating sewage, dairy waste

Wastewater treatment startup Sedron Technologies, a Washington company that once served Bill Gates a glass of water purified from sewage, announced it's being acquired by Ara Partners. The global private equity firm is investing up to $500 million in Sedron to support the deployment of its sewage and manure treatment technologies, an investment that gives Ara a controlling stake in the business.

“The Ara investment is largely designed to provide us with the equity on our own balance sheet to scale up production of additional projects and plants across the country,” said Geoff Trukenbrod, interim CEO of Sedron.

The startup is deploying facilities that efficiently and sustainably treat sewage biosolids and dairy waste. Sedron’s business model is to finance, design, build, own, operate and maintain the sites, which cost about $100 million to $200 million to build.

The company generates revenue from the municipalities and farms that use its services, as well as from the sale of organic fertilizer and clean energy produced at the sites.

“Imagine having a bakery, and you get paid to get flour, and you get paid for your cookies,” said Stanley Janicki, Sedron’s chief commercial officer. “It’s a phenomenal business model, not that biosolids are cookies.”

Sedron launched in 2014 as a spinoff from Janicki Industries, a longtime aerospace engineering and manufacturing company. Both are based in Sedro-Woolley, a city north of Seattle in a largely agricultural stretch of Western Washington.

In 2011, Janicki received funding from what is now the Gates Foundation to develop a wastewater purification system, leading to Sedron’s launch and a video that went viral showing Bill Gates drinking a glass of water produced from sewage. The foundation supported the technology as a means for treating waste in developing countries where untreated sewage could otherwise spread pathogens.

The company is breaking ground this month on a regional waste treatment facility in South Florida that will serve multiple municipalities with a combined population of 2 million people. Operations are expected to begin in 2028.

Sedron's system takes municipal biosolids, the residual product from a wastewater treatment plant, and dries the material in an energy-efficient thermal dryer. Biosolids are about 85% water, most of which is evaporated and removed, and the remaining material is fed into a biomass boiler to produce clean electricity. The energy generated helps run the dryer, and the excess electricity is sold. Another benefit is that the process destroys the PFAS "forever chemicals" that contaminate wastewater.

The startup’s second line of business is managing manure from livestock operations — which is one of the biggest costs for a dairy farmer. Sedron takes the waste, removes the water for use in irrigation, and produces two high-value organic fertilizers: a solid material and a concentrated liquid nitrogen fertilizer. The fertilizers are sold nationwide for use on crops such as apples, berries and spinach.

Sedron's treatment process is more affordable and replaces the manure lagoons that farms use to store waste until it can be applied to fields as a liquid. The lagoons produce planet-warming methane and pose environmental threats if they leak nutrients that can stoke algal blooms in nearby waterways or contaminate drinking water.

The company has deployed its manure technology at two dairy farms in Indiana, including a 20,000-cow dairy, and expects to start operations at a Wisconsin farm this summer.

“Our focus is on positioning Sedron as the leader in circular waste management — converting waste into carbon negative commodities faster, more cost effectively, and with greater energy efficiency than any other solution available,” said Cory Steffek, a partner at Ara Partners, in a statement.

Sedron previously raised approximately $100 million in corporate debt and equity and about $200 million in project financing, some of which was institutional. All of the legacy shareholders rolled their equity forward, Janicki said.

The 275-employee company has offices in Washington state and Chicago, and operational facilities in Indiana, Wisconsin and Florida.

The startup is focused on U.S. deployments of its facilities, aiming to launch at least two new sites each year for the next five years, then potentially scaling up from there. Janicki said they’d still like to operate in developing countries to address that initial use case.

Sedron’s leadership emphasized the importance of delivering a service that resonates with investors and business partners, doesn’t require government support to succeed and also benefits the planet.

“As the world today is retreating somewhat from climate efforts,” Janicki said, “it’s exciting to be in a business that is positioned for exceptional growth and solving environmental problems while creating valuable products.”

Tech

OpenAI pauses Stargate UK as energy costs and copyright rules block the path

In short: OpenAI has paused its Stargate UK data centre project, citing the high cost of industrial electricity in Britain and an unfavourable regulatory environment around AI copyright. The project, announced in September 2025 alongside Nvidia and Nscale, had planned to deploy 8,000 GPUs at sites in north-east England, scalable to 31,000 over time. OpenAI says it will move forward “when the right conditions” allow, though it has given no timeline. The pause is a significant setback for the UK government’s AI Growth Zones initiative and arrives as OpenAI prepares for a public listing.

What Stargate UK was supposed to be

Stargate UK was announced in September 2025 as a sovereign AI infrastructure project: a partnership between OpenAI, Nvidia, and British cloud provider Nscale to build data centre capacity in north-east England that would allow OpenAI's models to run on local computing power. The sites earmarked were Cobalt Park near Newcastle and Blyth, both within the UK government's designated AI Growth Zones, a framework the government had positioned as a centrepiece of its industrial strategy for artificial intelligence. The project was unveiled during US President Donald Trump's state visit to Britain, giving it diplomatic as well as commercial significance. The initial phase involved offtake of approximately 8,000 Nvidia AI processors, with an ambition to scale that to 31,000 GPUs over time. That capacity would have enabled OpenAI to serve critical public services, regulated industries such as finance, and national security partnerships without routing data through US-based infrastructure. OpenAI never disclosed the total investment figure associated with the UK project. The broader US Stargate project remains on track, with data centre construction under way across the United States, backed by a $40 billion bridge loan SoftBank secured to finance its participation. That makes the UK pause a geographic exception rather than a signal of retreat from AI infrastructure spending overall.

The energy cost problem

The most concrete obstacle OpenAI identified is the cost of electricity in Britain. UK industrial electricity prices are among the highest of any IEA member state, more than four times those in the United States, Finland, Norway, and Sweden. For a data centre drawing 100 megawatts, that differential is not a line-item concern but a structural one. Running large-scale AI inference workloads at a site where power costs four times as much as it does in Virginia or Texas is fundamentally different economically, and that gap compounds as capacity scales. The problem is not simply a matter of electricity tariffs. Grid connection requests in the UK surged from 41 gigawatts in November 2024 to 125 gigawatts by June 2025, with an estimated 75 gigawatts of that queue attributable to data centre projects. Buildings can be constructed in 18 to 24 months; grid connections take three to eight years. That mismatch means that even if a project clears the financial hurdle, it faces an infrastructure queue that the current regulatory and planning framework was not designed to process at AI-infrastructure speeds. The UK government's AI Growth Zones policy, published in November 2025, was intended in part to address exactly this bottleneck. However, the zone designations do not resolve the underlying grid constraints, and OpenAI's decision to pause suggests that the policy framework has not yet translated into the conditions that would make the investment viable.

The copyright sticking point

The regulatory concern OpenAI cited alongside energy costs points to a separate and more politically charged problem: the UK's unresolved approach to AI copyright. UK lawmakers have been working to update the rules governing how AI models are trained on copyrighted material. The government's preferred approach was a broad text and data mining exception with an opt-out mechanism for rights holders. A majority of respondents to the government's own consultation rejected it, with creative industries, publishers, and news organisations arguing that a broad exception would allow generative AI companies to train on their works without compensation or meaningful consent. The consultation produced no consensus, and the government has since delayed any legislative change. OpenAI trains large language models on text scraped from the internet, so uncertainty about whether that training will be lawful, and on what terms, is a material business risk. A UK data centre is not simply a power facility; it also places operations under UK legal jurisdiction. If the UK eventually adopts a copyright framework that restricts training data use more tightly than the US does, operating infrastructure in Britain could expose OpenAI to liability or compliance costs that do not apply to its US operations. The pause allows OpenAI to wait for that regulatory picture to clarify before committing capital.

A pause, not a cancellation, and the IPO context

OpenAI's statement was calibrated to leave the door open. "We continue to explore Stargate U.K. and will move forward when the right conditions such as regulation and the cost of energy enable long-term infrastructure investment," the company said, framing the decision as contingent rather than final. The timing, however, is notable. OpenAI closed a $122 billion funding round at an $852 billion valuation in late March 2026. The round extended participation to retail investors for the first time, a move widely interpreted as groundwork for a public offering that analysts expect as early as the fourth quarter of 2026. Companies approaching an IPO typically tighten their capital allocation discipline, avoid open-ended international commitments that could weigh on reported cash burn, and reduce exposure to projects with uncertain timelines. Pausing a data centre project that faces both energy cost headwinds and an unresolved copyright regime fits that pattern. The UK government had promoted Stargate UK as a signal of international investor confidence in Britain's AI ambitions. It described the decision as disappointing and said it remained in dialogue with OpenAI. OpenAI's international Stargate expansion has not been without complications elsewhere, either. Its Abu Dhabi data centre plans drew an explicit threat from Iranian authorities amid escalating regional tensions. That suggests sovereign AI infrastructure projects carry geopolitical risk profiles that are becoming a distinct factor in OpenAI's site selection calculus. Meanwhile, Oracle appointed a new CFO this week to manage its $50 billion data centre construction programme as the central operating partner in the US Stargate project. The contrast illustrates where AI infrastructure spending remains active and where it is being reconsidered.

The year 2025 established infrastructure access and energy as the primary competitive variables in AI. OpenAI's pause signals that the UK has not yet solved either.

Tech

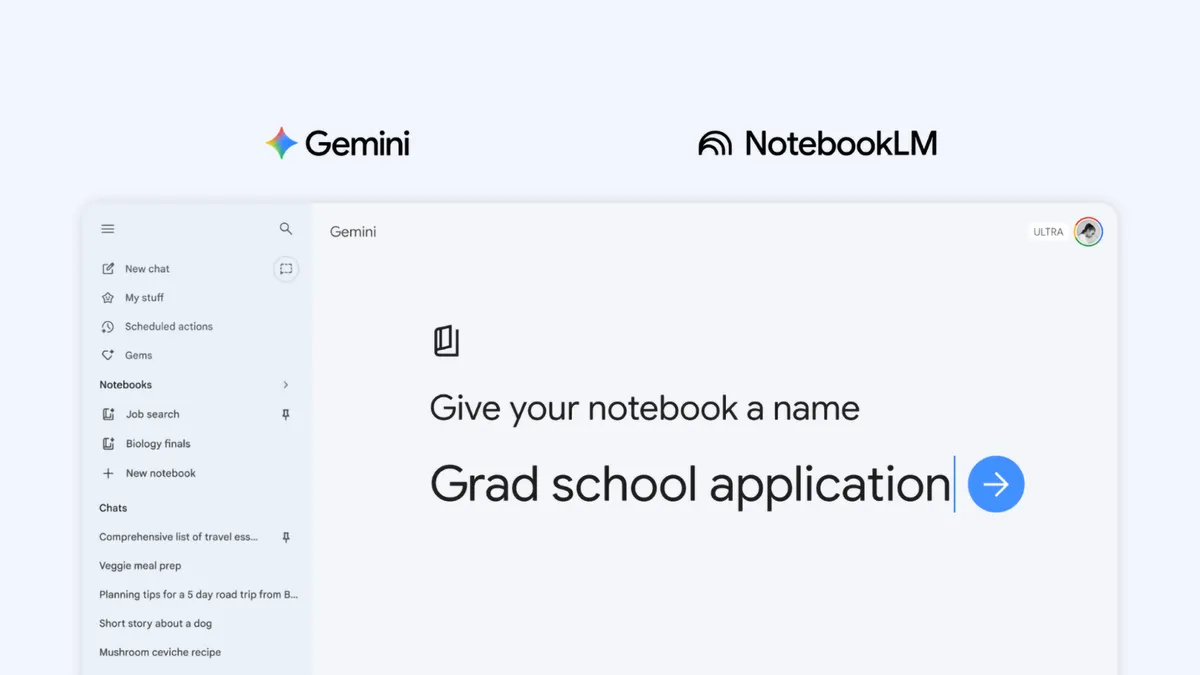

Gemini Gets New Notebooks Feature That Syncs With NotebookLM

Gemini is getting a new notebooks feature that further integrates it with NotebookLM, Google announced on Wednesday.

NotebookLM is easily one of the best AI tools Google offers. It lets you add sources to a notebook, then ask questions or generate a range of outputs based only on the material you've provided, which keeps it self-contained.

It differs from the standalone Gemini chatbot, which can search the web for answers; NotebookLM's answers are grounded only in your sources. It's great for studying for school, preparing for work and even creative inspiration. There's no wrong way to use it, and its flexibility invites exploration, so it's no surprise that it's being integrated further into Google's own chatbot.

Late last year, Google began letting you add a NotebookLM notebook to Gemini so the chatbot would have all the context you had added. Google describes notebooks as personal knowledge bases. You can add files, past chats and documents on a particular subject, and you can always jump back into that conversation with Gemini and pick up where you left off. A dedicated space that keeps everything together is useful in Gemini on its own, but you can use those notebooks in NotebookLM, too.

Notebooks make it easier to keep all the information on a particular subject in one place and give Gemini everything it needs without having to manually add details all over again. Now, that integration goes even further, and you can create notebooks directly in Gemini.

Notebooks can be synchronized between Gemini and NotebookLM, making the data in one tool instantly available in the other. This means you can add artifacts to the notebook in Gemini and immediately have the option to create a Video Overview, Infographic or other outputs within NotebookLM. Having the same synced notebook in both tools allows you to use each tool in its own way with the same database.

When the feature is available, you’ll see a new notebooks section in the side menu bar of Gemini, allowing you to quickly create one or access others you’ve made in the past.

Google is rolling out Notebooks in Gemini to AI Ultra, Pro and Plus plans on the web, and will expand access to mobile, more countries across Europe and free users in the coming weeks.

Tech

‘Not on a hunch’: Andy Jassy defends Amazon’s $200B spending spree

Andy Jassy’s new letter to Amazon shareholders is a data-heavy defense of the tech giant’s biggest bets — from AI and custom chips to satellite internet and 20-minute delivery.

In the process, the Amazon CEO discloses that AI revenue for AWS has hit a $15 billion annual run rate, that Amazon’s internal chips business is generating over $20 billion a year, and that two large customers asked to buy all of Amazon’s available Graviton chip capacity for 2026.

Amazon said no, but Jassy says the request gives a sense of the demand.

“We’re not investing approximately $200 billion in capex in 2026 on a hunch,” he writes.

That is basically the thesis statement for this year’s letter, released Thursday morning. It continues a tradition that stretches back nearly three decades, to Amazon founder Jeff Bezos’s first shareholder letter in 1997, which introduced the world to the “Day 1” mindset and is appended to the latest letter every year in an attempt to show its enduring relevance.

The evolution: This is Jassy’s fifth installment since succeeding Bezos as CEO in 2021. His letters have gone from establishing his management philosophy and navigating a post-pandemic cost hangover to laying out the frameworks Amazon uses to invent and build.

This year he touches on progress in businesses including grocery (where Amazon says it's now the second-largest U.S. grocer), satellite broadband (Amazon Leo is set to launch commercially in mid-2026), Amazon Now delivery (expanding from India to the U.S. and Europe), Alexa+, and Zoox, its autonomous ride-hailing business, which is now starting commercial operations.

His overarching message: progress won’t be a straight line (here, Jassy’s letter riffs on the title of an album by the New Zealand indie rock band The Beths) but Amazon is placing big bets on many fronts simultaneously, as it has throughout its history, and the results will come.

The unstated plea: have patience, folks, we’ve been here before, and look how it turned out.

The AI bet: Nowhere is this appeal more important than in AI, given investor concerns about the massive investments being made across the industry. Throughout the letter, Jassy makes the case that the company’s huge capital outlays are backed by real demand and realistic economics.

Jassy readily acknowledges that Amazon's free cash flow (FCF) dropped from $38 billion to $11 billion last year, driven by a $50.7 billion increase in capital spending, primarily on AI infrastructure. That was despite overall revenue growing 12%, from $638 billion to $717 billion.
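As a rough check on these figures, the arithmetic below works in billions of dollars and confirms the 12% growth rate. The last line is an inference and is not stated in the letter. It assumes the conventional definition of free cash flow as operating cash flow (OCF) minus capital expenditures. On that assumption, the $27 billion FCF decline alongside a $50.7 billion capex increase implies that operating cash flow rose by roughly $24 billion.

```latex
\begin{aligned}
\text{Revenue growth} &= \frac{717 - 638}{638} = \frac{79}{638} \approx 0.124 \quad (\approx 12\%) \\
\Delta \mathrm{FCF} &= 11 - 38 = -27 \\
\Delta \mathrm{OCF} &= \Delta \mathrm{FCF} + \Delta \mathrm{CapEx} \approx -27 + 50.7 = 23.7 \quad \text{(assumes } \mathrm{FCF} = \mathrm{OCF} - \mathrm{CapEx}\text{)}
\end{aligned}
```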

“AI is a once-in-a-lifetime opportunity where the current growth is unprecedented and the future growth even bigger,” he writes, adding later, “We’re not going to be conservative in how we play this—we’re investing to be the meaningful leader, and our future business, operating income, and FCF will be much larger because of it.”

AWS AI revenue: The disclosure about the annual revenue run rate for AI in AWS ($15 billion as of Q1 2026) is a preview of sorts of Amazon’s upcoming quarterly earnings, which won’t be reported for a few weeks. Amazon hasn’t previously reported this type of AI revenue figure.

To put the figure in context, AWS overall had a $142 billion annual revenue run rate as of Q4 2025.

“We have never seen a technology more quickly adopted than AI,” he writes, adding later, “Amazon is smack in the middle of this land rush, and companies are choosing AWS for AI.”

Jassy compares the current AI wave to the early days of AWS, noting that three years after AWS launched commercially, the cloud division had a $58 million revenue run rate. Three years after generative AI took off, the company's AI business is nearly 260 times that size.
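A quick check of that comparison follows. Writing $15 billion as 15,000 million gives the ratio in the first line, which matches "nearly 260 times." The second line sets the AI figure against AWS's overall run rate. It is only approximate, because the two figures come from different quarters (Q1 2026 for AI, Q4 2025 for AWS overall).

```latex
\begin{aligned}
\frac{\$15{,}000\ \text{million}}{\$58\ \text{million}} &\approx 258.6 \\
\frac{\$15\ \text{billion}}{\$142\ \text{billion}} &\approx 0.106 \quad (\text{roughly a tenth of AWS's run rate})
\end{aligned}
```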

Future external businesses: Jassy points to two areas where Amazon may open up internal capabilities to outside customers.

- It’s “quite possible” Amazon will sell racks of its internally developed chips to third parties in the future, he writes.

- In addition, he says, Amazon will explore building and selling its robotics solutions to other industrial and consumer customers.

Both follow the Amazon playbook of building something internally, then offering it as an external service — the same pattern behind businesses such as AWS and Fulfillment by Amazon, the company’s logistics services for third-party sellers.

Chips economics: Jassy says Amazon currently monetizes its custom chips only through its own EC2 cloud service. He says that if the chips business were a standalone company selling externally, as Nvidia does, its annual run rate would be roughly $50 billion.

He projects that at scale, Trainium will save “tens of billions of capex dollars per year” and provide “several hundred basis points of operating margin advantage” versus relying on third-party chips for inference.

Trainium2 has largely sold out. Trainium3, which started shipping in early 2026, is nearly fully subscribed. Trainium4, still about 18 months from broad availability, has already been significantly reserved.

“Our chips business is on fire,” Jassy writes, noting that the business “changes the economics for AWS, and will be much larger than most think.”

Across Amazon’s business, he writes, “It’s hard to overstate my optimism for what’s ahead.”


Tech

Raffle: Win a T10 BESPOKE, eCoustics Special Edition ($4,000 value)

We've teamed up with T10 BESPOKE to raffle off a one-of-a-kind T10 BESPOKE, eCoustics Special Edition, True Luxury Wireless Hi-fidelity In-ear Computers ($4,000 USD value), custom-made by HiFi Bear himself!

How to Enter

Buy raffle tickets online for $50 each. Every ticket is entered into a random drawing held after all tickets are sold.

Odds of Winning

We're only selling 80 tickets for this raffle, so each ticket has a 1-in-80 chance of winning.

See Them at AXPONA

They'll be on display at AXPONA at the Renaissance Convention Hotel in Schaumburg, IL, from April 10-12, 2026. Look in the Left Hand Living Room of the 2nd-level lobby, just past the AXPONA help desk and directly adjacent to "the Gather Bar."

The Prize

One T10 BESPOKE, eCoustics Special Edition, True Luxury Wireless Hi-fidelity In-ear Computers includes one pair of wireless earbuds and one pendant charging case in an exclusive, eCoustics Special Edition design ($4,000 USD value) as shown in the photos.

Product Details

Solid mirror-polished brass and snow-white ceramic zirconium, highlighted with blood-red natural reticulated python skin and custom eCoustics logo livery. The units feature a high-res DAC, twin Cadence Tensilica Hi-Fi DSPs, selectable Class D and Class AB amplification, quad Knowles Quartz series analog microphones, and custom Sonion balanced armatures (absolutely stunning hi-fi sound simply beyond anything you've ever experienced). Dual ARM M4 CPU and M0 co-processors enable on-board voice recognition and control, while 6-axis accelerometer/gyroscopes convert subtle head motions into gestures to control music, telephony, and more. All this comes in what is at the same time the world's smallest and lightest wireless in-ear monitor: less than half the size and weight of an AirPod. Every T10 Bespoke is built on a novel chassis, mechanically assembled with fine-pitch custom stainless steel watch screws. This construction lets the units be easily serviced, repaired, and upgraded with new hardware technology forever, preventing technological obsolescence and enabling the lifetime warranty offered by EAR Micro.


Tech

Florida AG to probe OpenAI, alleging possible connection to FSU shooting

Florida Attorney General James Uthmeier announced on Thursday that his office will investigate OpenAI over its alleged harm to minors, its potential to threaten national security, and its possible link to a shooting that took place at Florida State University last year.

“ChatGPT may likely have been used to assist the murderer in the recent mass school shooting at Florida State University that tragically took two lives,” Attorney General Uthmeier said in a video posted to social media.

On the day of the FSU shooting last April, the suspect allegedly asked ChatGPT how the country would react to a shooting at FSU and when the FSU student union would be busiest. These messages could be used as evidence against the suspect at a trial scheduled for October.

The attorney general cited further concerns about ChatGPT’s encouragement of suicide in certain instances, which have been documented in multiple lawsuits brought by families against OpenAI. He also mentioned his concern that the Chinese Communist Party could use OpenAI’s technology against the United States.

“As big tech rolls out these technologies, they should not — they cannot — put our safety and security at risk,” he said. “We support innovation. But that doesn’t give any company the right to endanger our children, facilitate criminal activity, empower America’s enemies, or threaten our national security.”

He also called on the Florida legislature to “work quickly” to protect children from the negative impacts of AI.

“Each week, more than 900 million people use ChatGPT to improve their daily lives through uses such as learning new skills or navigating complex healthcare systems,” an OpenAI spokesperson said in a statement to TechCrunch. “Our ongoing safety work continues to play an important role in delivering these benefits to everyday people, as well as supporting scientific research and discovery.”


OpenAI added that it builds and continues to improve ChatGPT to understand user intent and respond in appropriate, safe ways. The company said it will cooperate with the Florida attorney general’s investigation.

On Wednesday, OpenAI unveiled its Child Safety Blueprint, which includes policy recommendations designed to improve children’s safety as it relates to AI.

This action comes as chatbot makers face pressure to confront their potential role in creating child sexual abuse material (CSAM). According to a recent report from the Internet Watch Foundation, there were over 8,000 reports of AI-generated CSAM in the first half of 2025, which represents a 14% increase year over year.

OpenAI’s blueprint recommends updating legislation to protect against AI-generated abuse material, refining the reporting process to law enforcement, and instituting better preventative safeguards against abusive uses of AI tools.

Tech

OpenAI Backs Bill That Would Limit Liability for AI-Enabled Mass Deaths or Financial Disasters

OpenAI is throwing its support behind an Illinois state bill that would shield AI labs from liability in cases where AI models are used to cause serious societal harms, such as death or serious injury of 100 or more people or at least $1 billion in property damage.

The effort seems to mark a shift in OpenAI’s legislative strategy. Until now, OpenAI has largely played defense, opposing bills that could have made AI labs liable for their technology’s harms. Several AI policy experts tell WIRED that SB 3444—which could set a new standard for the industry—is a more extreme measure than bills OpenAI has supported in the past.

The bill would shield frontier AI developers from liability for "critical harms" caused by their frontier models, as long as they did not intentionally or recklessly cause such an incident and have published safety, security, and transparency reports on their websites. It defines a frontier model as any AI model trained using more than $100 million in computational costs. That threshold would likely cover America's largest AI labs, such as OpenAI, Google, xAI, Anthropic, and Meta.

“We support approaches like this because they focus on what matters most: Reducing the risk of serious harm from the most advanced AI systems while still allowing this technology to get into the hands of the people and businesses—small and big—of Illinois,” said OpenAI spokesperson Jamie Radice in an emailed statement. “They also help avoid a patchwork of state-by-state rules and move toward clearer, more consistent national standards.”

Under its definition of critical harms, the bill lists a few common areas of concern for the AI industry, such as a bad actor using AI to create a chemical, biological, radiological, or nuclear weapon. An AI model might also engage, on its own, in conduct that would constitute a criminal offense if committed by a human. If that conduct leads to those extreme outcomes, it would also be a critical harm. Under SB 3444, if an AI model were involved in any of these incidents, the lab behind it may not be held liable. That holds so long as the lab did not cause the harm intentionally or recklessly and had published its reports.
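The sketch below encodes the shield as the article describes it. It shows that the shield applies only when every condition holds at once. It is a simplified, hypothetical reading based on the reported thresholds, not the bill's actual text, and it is not a legal analysis. The class names, field names and pathway labels are all invented for illustration.

```python
from dataclasses import dataclass

# Thresholds as reported in the article, not quoted from the bill's text.
FRONTIER_COMPUTE_COST_USD = 100_000_000        # "more than $100 million in computational costs"
CASUALTY_THRESHOLD = 100                       # death or serious injury of 100 or more people
PROPERTY_DAMAGE_THRESHOLD_USD = 1_000_000_000  # at least $1 billion in property damage

# Hypothetical labels for the two harm pathways the article mentions.
CRITICAL_PATHWAYS = {"cbrn_weapon", "autonomous_criminal_conduct"}


@dataclass
class Developer:
    training_compute_cost_usd: int
    published_reports: bool  # safety, security, and transparency reports posted online


@dataclass
class Incident:
    pathway: str  # hypothetical label for how the harm arose
    people_killed_or_injured: int
    property_damage_usd: int
    developer_intentional_or_reckless: bool


def is_frontier_developer(dev: Developer) -> bool:
    return dev.training_compute_cost_usd > FRONTIER_COMPUTE_COST_USD


def is_critical_harm(incident: Incident) -> bool:
    # A critical harm needs both a listed pathway and a severity threshold.
    severe = (incident.people_killed_or_injured >= CASUALTY_THRESHOLD
              or incident.property_damage_usd >= PROPERTY_DAMAGE_THRESHOLD_USD)
    return incident.pathway in CRITICAL_PATHWAYS and severe


def safe_harbor_applies(dev: Developer, incident: Incident) -> bool:
    """True if, under this simplified reading, the liability shield would apply."""
    return (is_frontier_developer(dev)
            and is_critical_harm(incident)
            and not incident.developer_intentional_or_reckless
            and dev.published_reports)
```

Because the test is a conjunction, a lab that skips publishing its reports, or that acted recklessly, falls outside the shield. In this sketch, a `False` result means only that the bill's shield does not apply. It does not mean the lab is liable; ordinary liability rules would decide that.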

Federal and state legislatures in the US have yet to pass any laws specifically determining whether AI model developers, like OpenAI, could be liable for these types of harm caused by their technology. But as AI labs continue to release more powerful AI models that raise novel safety and cybersecurity challenges, such as Anthropic's Claude Mythos, these questions feel increasingly pressing.

In her testimony supporting SB 3444, Caitlin Niedermeyer, a member of OpenAI's Global Affairs team, also argued in favor of a federal framework for AI regulation. Her message was consistent with the Trump administration's crackdown on state AI safety laws: she said it's important to avoid "a patchwork of inconsistent state requirements that could create friction without meaningfully improving safety." This is also consistent with the broader view in Silicon Valley in recent years, which has generally held that AI legislation must not hamper America's position in the global AI race. While SB 3444 is itself a state-level safety law, Niedermeyer argued that such laws can be effective if they "reinforce a path toward harmonization with federal systems."

“At OpenAI, we believe the North Star for frontier regulation should be the safe deployment of the most advanced models in a way that also preserves US leadership in innovation,” Niedermeyer said.

Scott Wisor, policy director for the Secure AI project, tells WIRED he believes this bill has a slim chance of passing, given Illinois’ reputation for aggressively regulating technology. “We polled people in Illinois, asking whether they think AI companies should be exempt from liability, and 90 percent of people oppose it. There’s no reason existing AI companies should be facing reduced liability,” Wisor says.

Tech

‘Negative’ Views of Broadcom Driving Thousands of VMware Migrations, Rival Says

"One of VMware's biggest competitors, Nutanix, claims to have swiped tens of thousands of VMware customers," reports Ars Technica. Nutanix says higher prices, forced bundling, licensing changes, and more strained partner relationships have frustrated customers and driven them away from the leading virtualization firm. From the report:

Speaking at a press briefing at Nutanix's .NEXT conference in Chicago this week, Nutanix CEO Rajiv Ramaswami said that "about 30,000 customers" have migrated from VMware to the rival platform, pointing to customer disapproval of Broadcom's VMware strategy, SDxCentral, a London-based IT publication, reported today. "I think there's no doubt that the customer sentiment continues to be negative about Broadcom," Ramaswami said, per SDxCentral.

Nutanix hasn't specified how many of the customers it got from VMware are SMBs and how many are enterprises. Adoption is said to be strongest among mid-market customers, while Nutanix also tries to woo larger customers, often by starting with partial deployments. During this week's press briefing, Ramaswami reportedly said that customers who moved from VMware to Nutanix during Nutanix's most recent fiscal quarter contributed to its "strongest quarterly new logo additions in eight years." "Most of the logos came from our typical VMware migrations on to the [hyperconverged infrastructure] platform," he said.

During the Nutanix conference, Brandon Shaw, Nutanix VP and head of technology services, said that Western Union has been migrating from VMware to Nutanix for six months, The Register reported. The financial services company is moving 900 to 1,200 applications across 3,900 cores. Shaw said that Western Union has been exploring new IT suppliers to help it become more customer-focused. Despite Broadcom’s history of “decent lines of communication” with Western Union, Shaw said that Western Union had “challenges partnering with them.”

Shaw also pointed to Broadcom's efforts to push customers to buy VMware Cloud Foundation (VCF), even though the product often has more features than companies need and comes at a high price. Since moving to Nutanix, the Denver-headquartered financial firm has also benefited from more flexibility over workload locations. The Register said that matters because Western Union operates in more than 200 countries.

Tech

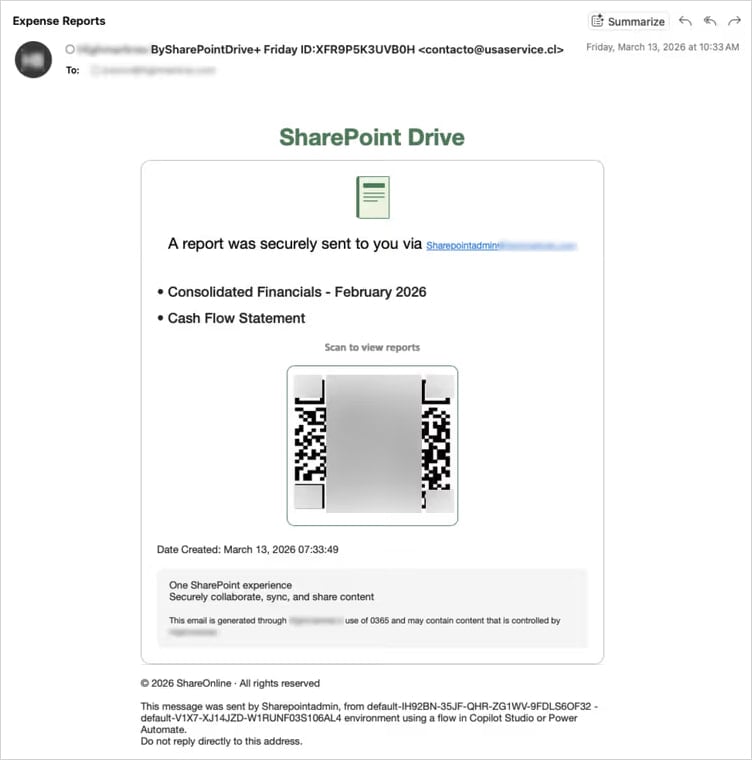

New VENOM phishing attacks steal senior executives’ Microsoft logins

Threat actors using a previously undocumented phishing-as-a-service (PhaaS) platform called “VENOM” are targeting credentials of C-suite executives across multiple industries.

The operation has been active since at least last November and appears to target specific individuals who serve as CEOs, CFOs, or VPs at their companies.

VENOM also appears to be closed-access. It has not been promoted on public channels or underground forums, which reduces its exposure to researchers.

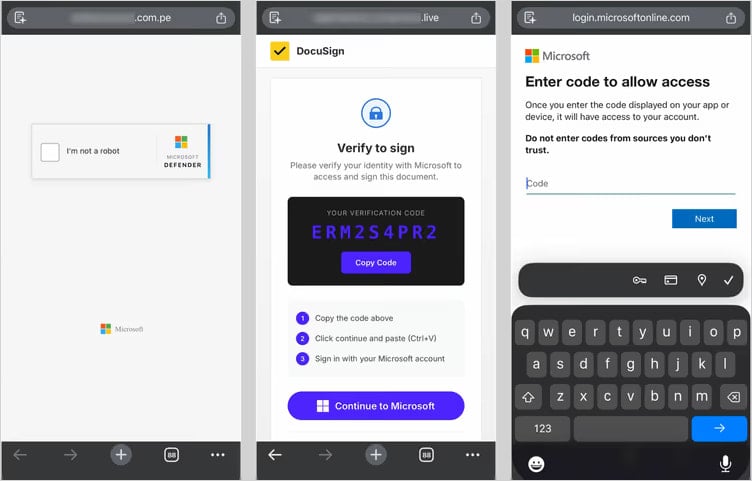

The VENOM attack chain

The phishing emails, observed by researchers at cybersecurity company Abnormal, impersonated internal Microsoft SharePoint document-sharing notifications.

The messages are highly personalized and include random HTML noise such as fake CSS classes and comments. The attacker also injects fake email threads tailored to the target, increasing credibility.

The email includes a QR code rendered with Unicode characters rather than as an image, which the victim is asked to scan to access the document. The trick is designed to bypass scanning tools and shift the attack to mobile devices.

Source: Abnormal
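Abnormal does not publish its detection logic, but the idea of a text-rendered QR code can be illustrated with a minimal Python sketch, assuming the email body has already been extracted as plain text. It flags text containing several lines made up mostly of characters from the Unicode Block Elements range (U+2580 to U+259F), such as the full block and the half blocks commonly used to draw QR modules as text. The function names and the thresholds (`min_visible`, `min_ratio`, `min_lines`) are hypothetical, chosen for illustration rather than tuned against real mail.

```python
# Hypothetical heuristic, not Abnormal's detection logic. A QR code drawn as
# text typically uses characters from the Unicode "Block Elements" range
# (U+2580..U+259F): full blocks plus upper/lower half blocks.
BLOCK_START, BLOCK_END = 0x2580, 0x259F


def is_block_element(ch: str) -> bool:
    """True if the character falls in the Block Elements range."""
    return BLOCK_START <= ord(ch) <= BLOCK_END


def block_heavy_line(line: str, min_visible: int = 10, min_ratio: float = 0.8) -> bool:
    """True if most visible (non-whitespace) characters on the line are blocks."""
    visible = [ch for ch in line if not ch.isspace()]
    if len(visible) < min_visible:
        return False
    blocks = sum(1 for ch in visible if is_block_element(ch))
    return blocks / len(visible) >= min_ratio


def looks_like_text_qr(text: str, min_lines: int = 8) -> bool:
    """Flag plain text that contains several block-heavy lines.

    Thresholds are illustrative assumptions, not tuned values.
    """
    return sum(1 for line in text.splitlines() if block_heavy_line(line)) >= min_lines


# Stand-in pattern built from block characters; it is not a decodable QR code.
fake_qr = "█▀▀▀▀▀█ ▄▀▄ █▀▀▀▀▀█\n" * 10
print(looks_like_text_qr(fake_qr))                               # True
print(looks_like_text_qr("Please review the shared document."))  # False
```

The check works line by line rather than on a whole-message ratio, because the surrounding email text would otherwise dilute the count.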

“The target’s email address is double Base64-encoded in the URL fragment—the portion after the # character,” Abnormal researchers explain.

“Fragments are never transmitted in HTTP requests, making the target’s email invisible to server-side logs and URL reputation feeds.”
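Because the fragment stays in the browser, an analyst who recovers a lure URL may still want to see which address it targeted. The Python sketch that follows assumes the fragment holds nothing but the double-encoded address and that either the standard or the URL-safe Base64 alphabet may be used, with or without padding; the researchers' quoted description does not settle those details. `request_target` shows the part of the URL an HTTP client actually sends, and the example URL and address are made up.

```python
import base64
from urllib.parse import unquote, urlsplit


def _b64decode_lenient(data: str) -> bytes:
    """Decode Base64, tolerating the URL-safe alphabet and stripped padding.

    Which alphabet and padding the kit actually uses is an assumption here.
    """
    data = data.strip().replace("-", "+").replace("_", "/")
    data += "=" * (-len(data) % 4)
    return base64.b64decode(data, validate=True)


def request_target(url: str) -> str:
    """Path and query that an HTTP client sends; the fragment is never included."""
    parts = urlsplit(url)
    return (parts.path or "/") + (f"?{parts.query}" if parts.query else "")


def decode_fragment_email(url: str) -> str:
    """Recover an email address that was Base64-encoded twice in the URL fragment."""
    fragment = unquote(urlsplit(url).fragment)
    once = _b64decode_lenient(fragment)  # first layer -> Base64 text
    return _b64decode_lenient(once.decode("ascii")).decode("utf-8")  # second layer -> address


# Usage with a made-up URL built the way the researchers describe.
encoded = base64.b64encode(base64.b64encode(b"ceo@example.com")).decode("ascii")
url = f"https://landing.example/doc?id=7#{encoded}"
print(request_target(url))         # /doc?id=7
print(decode_fragment_email(url))  # ceo@example.com
```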

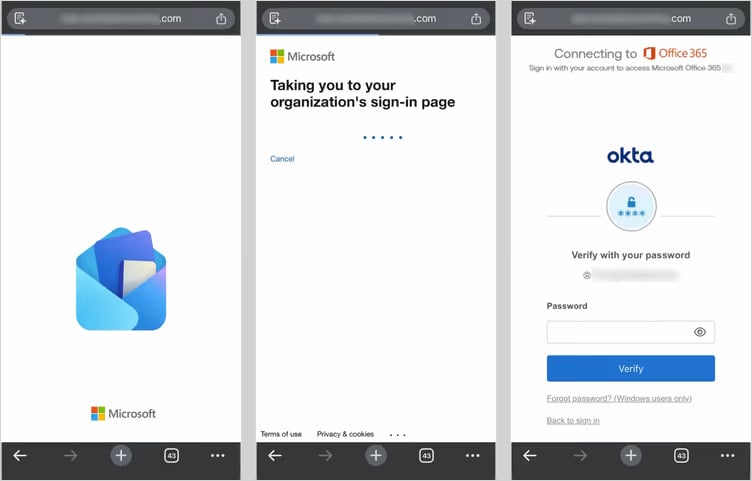

When the victim scans the QR code, they are taken to a landing page that serves as a filter for security researchers and sandboxed environments, ensuring that only real targets are redirected to the phishing platform. Users outside the threat actor’s interest are redirected to legitimate websites to reduce suspicion.

Those who pass the tests are taken to a credential-harvesting page that proxies a Microsoft login flow in real time, relaying credentials and multi-factor authentication (MFA) codes to Microsoft APIs and capturing the session token.

Source: Abnormal

Apart from the adversary-in-the-middle (AiTM) method, Abnormal has also observed a device-code phishing tactic in which the victim is tricked into approving access to their Microsoft account for a rogue device.

Source: Abnormal

This method has become very popular over the past year due to its effectiveness and resistance to password resets, with at least 11 phishing kits currently offering it as an option.

In both methods, VENOM quickly establishes persistent access during the authentication process. In the AiTM flow, it registers a new device on the victim’s account. In the device code flow, it obtains a token that also provides access to the account.

The researchers note that MFA is no longer sufficient as a defense. C-suite executives should use FIDO2 authentication, disable the device code flow when not needed, and block token abuse by implementing stricter conditional access policies.
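Alongside the hardening steps the researchers recommend, defenders could also watch identity logs for the two persistence steps Abnormal describes. The following Python sketch uses a simplified, hypothetical event record (`AuthEvent`, with made-up `kind` and `protocol` values) rather than any real Microsoft log schema, and the 10-minute window is an arbitrary assumption. It flags any device code sign-in by a watched account and any new device registration that closely follows a sign-in; it only detects, and does not replace FIDO2 or conditional access.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class AuthEvent:
    """Simplified identity-log record; field names are hypothetical, not a Microsoft schema."""
    user: str
    kind: str           # "sign_in" or "device_registered" (hypothetical values)
    time: datetime
    protocol: str = ""  # for sign-ins, e.g. "deviceCode" or "password" (hypothetical)


def flag_suspicious(events: list[AuthEvent], watched: set[str],
                    window: timedelta = timedelta(minutes=10)) -> list[tuple[str, datetime, str]]:
    """Return (user, time, reason) alerts for watched accounts, in time order."""
    alerts = []
    last_sign_in: dict[str, datetime] = {}
    for ev in sorted(events, key=lambda e: e.time):
        if ev.user not in watched:
            continue
        if ev.kind == "sign_in":
            last_sign_in[ev.user] = ev.time
            # Signal 1: any device code sign-in on a watched account.
            if ev.protocol == "deviceCode":
                alerts.append((ev.user, ev.time, "device code sign-in"))
        elif ev.kind == "device_registered":
            # Signal 2: a new device registered shortly after a sign-in.
            prev = last_sign_in.get(ev.user)
            if prev is not None and ev.time - prev <= window:
                alerts.append((ev.user, ev.time, "device registered right after sign-in"))
    return alerts


t0 = datetime(2026, 1, 5, 9, 0)
events = [
    AuthEvent("ceo@corp.example", "sign_in", t0, "password"),
    AuthEvent("ceo@corp.example", "device_registered", t0 + timedelta(minutes=2)),
    AuthEvent("cfo@corp.example", "sign_in", t0, "deviceCode"),
    AuthEvent("intern@corp.example", "sign_in", t0, "deviceCode"),
]
for user, _, reason in flag_suspicious(events, {"ceo@corp.example", "cfo@corp.example"}):
    print(user, "-", reason)
# cfo@corp.example - device code sign-in
# ceo@corp.example - device registered right after sign-in
```

Restricting the check to a watched set mirrors VENOM's focus on named executives and keeps alert volume low.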

Tech

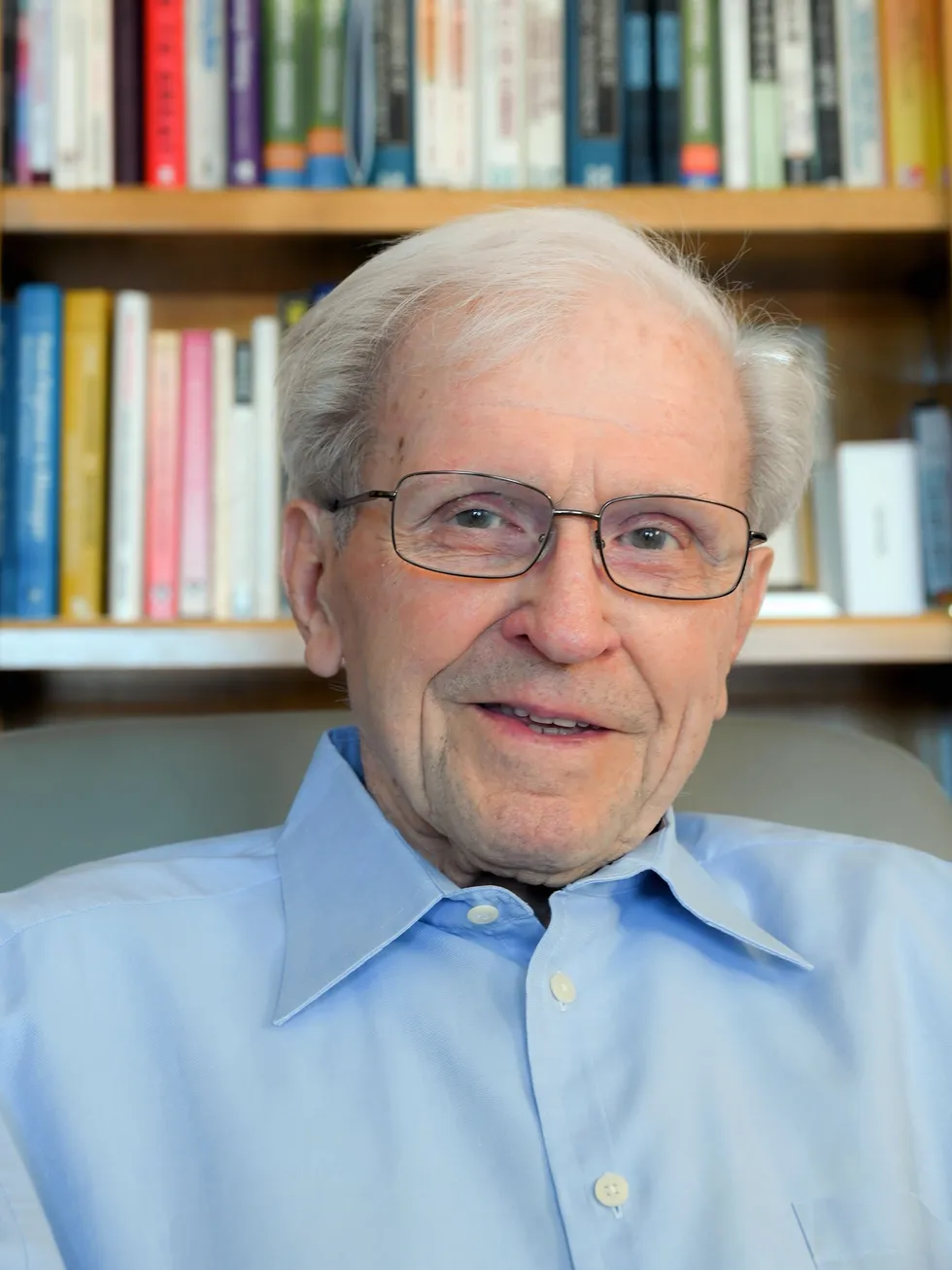

Remembering Devoted IEEE Volunteer Gus Gaynor

Gerard “Gus” Gaynor, a long-serving IEEE volunteer and former engineering director at 3M, died on 9 March. The IEEE Life Fellow was 104.

Readers of The Institute might remember Gus from his 2022 profile: “From Fixing Farm Equipment to Becoming a Director at 3M.” Just last year, he and I coauthored two articles. One discusses how to leverage relationships to boost your career growth. The other weighs the pros and cons of pursuing a technical or managerial career path. He was 103 years old then. How many IEEE members can claim a centenarian coauthor?

I first met Gus in 2009 at the IEEE Technical Activities Board (TAB) meeting in San Juan, Puerto Rico. We sat together on the airplane on our way back to Minneapolis, our hometown. Back home, I told many of my friends about the remarkable person, 87 years young at the time, with whom I had chatted during our six-hour flight.

A decade later, he and I met for lunch in Minneapolis. He drove himself to the restaurant, just asking for a hand to navigate the snowy sidewalk.

A dedicated IEEE volunteer

Gus’s involvement with IEEE predates the organization. He joined the Institute of Radio Engineers, a predecessor society, as a student member in 1942. Twenty years later he became an active IEEE volunteer.

He served on the TAB’s finance committee and the Publications Services and Products Board. He was president of the IEEE Engineering Management Society (now the Technology and Engineering Management Society), and he was the Technology Management Council’s first president. He was the founding editor of IEEE-USA’s online magazine Today’s Engineer, which reported on government legislation and issues affecting U.S. members’ careers. The magazine is now available as the e-newsletter IEEE-USA InSight.

He authored several books on technology management, published by IEEE-USA.

IEEE Life Fellow Gerard “Gus” Gaynor died on 9 March. The Gaynor Family

Most recently, after the formation of TEMS in 2015, he became an active member of its executive committee. He served two terms as vice president of publications.

At 100 years old, he led the launch of a new publication, TEMS Leadership Briefs, a novel short-format open-access publication aimed at technology leaders.

Gus, a former member of The Institute’s editorial advisory board, also worked with Kathy Pretz, The Institute’s editor in chief, to start an ongoing series of TEMS-sponsored career-interest articles. He coauthored several of them.

Throughout his 64 years as an IEEE volunteer, he received several honors. They include IEEE EMS’s Engineering Manager of the Year Award, the IEEE TEMS Career Achievement Award, and the IEEE-USA McClure Citation of Honor. In 2014 he was inducted into the IEEE Technical Activities Board Hall of Honor.

A 25-year career at 3M

Gus received a degree in electrical engineering in 1950 from the University of Michigan in Ann Arbor. He worked for several companies, including Automatic Electric (now part of Nokia) and Johnson Farebox (now part of Genfare), before joining 3M in 1962.

During his successful 25-year career at 3M, he served as chief engineer for a division in Italy, established the innovation department, and led the design and installation of the company’s first computerized manufacturing facilities. He retired as director of engineering in 1987.

Last year, IEEE Life Fellow Michael Condry, a former TEMS president, organized a Zoom call with Gus and other leaders of the society to celebrate Gus’s 104th birthday. Gus looked well and was his usual upbeat self, telling everyone: “I’m good. Everything’s well. I can’t complain.”

Gus was married to Shirley Margaret Karrels Gaynor, who passed away in 2018. He lives on in the hearts and minds of his seven children, seven grandchildren, two great-grandchildren, and innumerable friends and IEEE colleagues.

Tech

Iran-linked hackers are now targeting industrial controllers in US infrastructure

Federal agencies, including the FBI, CISA, NSA, the Department of Energy, US Cyber Command, and the Environmental Protection Agency, issued an urgent joint advisory Tuesday, warning that an advanced persistent threat group linked to Iran has been exploiting vulnerabilities in programmable logic controllers since at least March 2026.

Read Entire Article

Source link

-

Fashion6 days ago

Fashion6 days agoWeekend Open Thread: Spanx – Corporette.com

-

Business4 days ago

Business4 days agoThree Gulf funds agree to back Paramount’s $81 billion takeover of Warner, WSJ reports

-

Sports5 days ago

Sports5 days agoIndia men’s 4x400m and mixed 4x100m relay teams register big progress | Other Sports News

-

Business6 days ago

Business6 days agoExpert Picks for Every Need

-

Tech2 days ago

Tech2 days agoHow Long Can You Drive With Expired Registration? What Florida Law Says

-

Business5 days ago

Business5 days agoNo Jackpot Winner, Prize to Climb to $231 Million

-

Fashion4 days ago

Fashion4 days agoMassimo Dutti Offers Inspiration for Your Summer Mood Board

-

Fashion2 days ago

Fashion2 days agoLet’s Discuss: DEI in 2026

-

Politics7 days ago

Wings Over Scotland | The quality of mercy

-

Crypto World1 day ago

Crypto World1 day agoBitcoin recovers as US and Iran Agree a Ceasefire Deal

-

Business5 days ago

Business5 days agoAkebia Therapeutics, Inc. (AKBA) Discusses Pipeline Progress and Strategic Focus on Kidney Disease Treatments at R&D Day – Slideshow

-

Politics7 days ago

Politics7 days agoWhy so many children are now classified as ‘disabled’

-

Crypto World14 hours ago

Crypto World14 hours agoCanary Capital Files SEC Registration for PEPE ETF

-

Politics6 days ago

Politics6 days agoThe UK should not pay a penny in slavery reparations

-

Politics7 days ago

Politics7 days agoNuclear rockets, moon bases and NASA’s Mars plan

-

Tech7 days ago

Tech7 days agoThe Threadless Ball Screw Never Took Off, But Don’t Write It Off

-

Business7 days ago

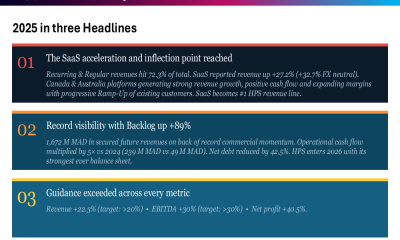

Business7 days agoHPS FY 2025 slides: SaaS inflection drives 22% revenue growth

-

Fashion7 days ago

Fashion7 days agoTory Burch’s Spring 2026 Campaign Goes on a Getaway

-

Fashion7 days ago

Fashion7 days agoFrugal Friday’s Workwear Report: Hammered Metallic Button Sweater Vest

-

Fashion6 days ago

Fashion6 days agoWeekly News Update, 4.3.26 – Corporette.com

You must be logged in to post a comment Login