For decades, the data center industry has operated on a relatively predictable model of thermodynamics. Operators built a hall, filled it with servers, and circulated cold air through the floor or aisles.

The heat load remained predominantly stable, electrical loads increased gradually, and cooling systems could be sized with conservative, static margins.

Product Manager, Global Chilled Water Systems, Vertiv.

The rapid adoption of generative AI and large language models (LLMs) has introduced a new thermal reality. Unlike steadier conventional workloads, training an AI model involves large fluctuations in computational load rather than steady output.

Power draw can increase dynamically in seconds, often across mixed hardware estates with very different thermal characteristics.

This creates localized hot spots that traditional air cooling struggles to mitigate fast enough.

Without a coordinated cooling response, this thermal variability may degrade the performance and lifespan of the very hardware the cooling system is meant to protect.

The shift from static to dynamic

The challenge lies in how thermal behavior has become dynamic, uneven, and tightly coupled to workload patterns. In the past, cooling was a fixed layer beneath the IT stack. Today, it must be an adaptive capability that evolves alongside the workload.

Decisions about power delivery, rack density, and workload placement now have immediate thermal consequences. This demands a closer alignment between the IT equipment and the cooling infrastructure.

Heat can no longer be treated as a downstream problem to be managed at the exhaust vent; it should be managed as part of a connected system that spans the entire facility.

Capturing heat at the source

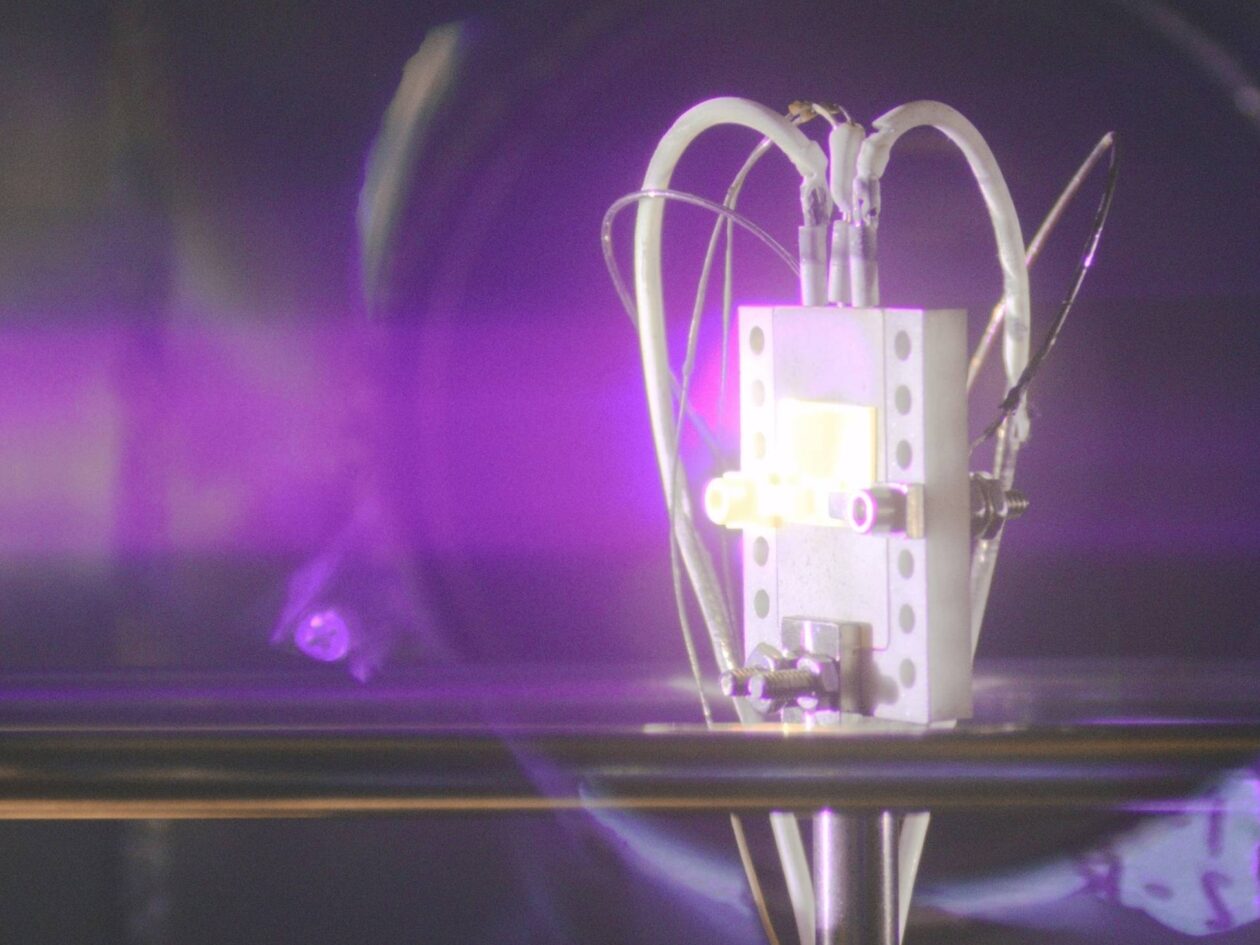

To handle the extreme density of modern graphics processing units (GPUs), operators are capturing thermal energy closer to the source.

Direct-to-chip liquid cooling captures heat at the silicon level, reducing the need for high-velocity fans and the energy they consume. Rear-door heat exchangers intercept heat before it floods the aisle.

This method offers precision because liquid absorbs and carries heat far more effectively than air, allowing operators to manage the peaks of AI workloads without over-provisioning the entire facility.
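A rough way to see the gap between liquid and air is to compare the volumetric flow each fluid needs to carry the same heat load at the same temperature rise, using Q = ρ · V̇ · c_p · ΔT. The sketch below is illustrative only: the fluid properties are approximate textbook values near room temperature, and the 100 kW rack, the 10 K temperature rise, and the function name are assumptions, not figures from any specific deployment.

```python
# Approximate fluid properties near room temperature (textbook values, assumed here).
WATER = {"density_kg_m3": 997.0, "cp_j_kg_k": 4180.0}
AIR = {"density_kg_m3": 1.2, "cp_j_kg_k": 1005.0}


def volumetric_flow_m3_s(heat_w: float, delta_t_k: float, fluid: dict) -> float:
    """Volumetric flow needed to carry heat_w with a delta_t_k temperature rise.

    Rearranged from Q = rho * V_dot * cp * delta_T.
    """
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_w / (fluid["density_kg_m3"] * fluid["cp_j_kg_k"] * delta_t_k)


if __name__ == "__main__":
    rack_heat_w = 100_000.0  # hypothetical 100 kW rack
    delta_t_k = 10.0         # assumed coolant temperature rise in kelvin
    water = volumetric_flow_m3_s(rack_heat_w, delta_t_k, WATER)
    air = volumetric_flow_m3_s(rack_heat_w, delta_t_k, AIR)
    print(f"water: {water * 1000:.2f} L/s")
    print(f"air:   {air:.2f} m^3/s")
    print(f"air needs about {air / water:.0f}x the volume flow of water")
```

Under these assumptions, air needs a few thousand times the volumetric flow of water to move the same heat, which is why capturing heat in liquid close to the chip reduces the burden on fans and room-level airflow.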

To enable this liquid-cooled architecture, Coolant Distribution Units (CDUs) play an important role. CDU offerings span a wide range of capacities and include both in-rack and in-row configurations that can be situated next to each other.

These systems support liquid-to-air and liquid-to-liquid heat exchange designs, making them versatile and suitable for diverse data center layouts, including both existing facilities and new builds.

Designing for mixed density

Air cooling is unlikely to disappear any time soon. The immediate future will probably be made up of hybrid solutions where standard racks sit alongside high-density AI clusters.

This requires a layered architectural approach. Perimeter-based air handling units and thermal wall technologies are used to define airflow paths at the boundaries of the data hall.

In data centers, room-level thermal management depends on established mechanical air cooling solutions, including Computer Room Air Conditioning (CRAC) units that use direct-expansion refrigeration and Computer Room Air Handler (CRAH) units that use chilled water from central plants.

These conventional systems remain well-suited to enabling a progressive evolution toward hybrid cooling designs, where air circulation works in tandem with targeted liquid cooling to address varying heat loads effectively.

Advancements in server and GPU architectures, combined with evolving industry standards, have steadily expanded the acceptable inlet air temperatures for IT equipment, often allowing operation at the upper end of the allowable ranges (for example, up to 40–45°C for certain equipment classes).

This development permits liquid-cooled systems to function efficiently with elevated facility supply water temperatures, commonly 40°C or more.

As a result, air-based cooling gains renewed practicality and cost-effectiveness in hybrid configurations, while simultaneously driving a thorough review of legacy chilled-water and direct-expansion setups to pinpoint the most appropriate and optimized air-cooling approach.

These systems enable controlled heat collection across both raised and non-raised floor facilities. In mixed-density AI halls, these architectural elements help maintain predictable conditions even as localized liquid-based cooling strategies are layered in.

The result is greater stability and optionality. Operators can increase density selectively rather than committing to a wholesale redesign of the facility.

Rethinking waste heat rejection

Once the heat is captured, the strategies for rejecting or reusing it are also changing. Because the precise thermal requirements of emerging AI hardware are still evolving and rack-level heat loads continue to rise, rigidly specifying a single chilled-water setpoint may introduce constraints.

It could lead to suboptimal efficiency, inadequate heat rejection capacity during peak loads, or unnecessary energy waste if future systems tolerate warmer conditions. Instead, flexible architectures that accommodate a broader spectrum of supply temperatures and hybrid cooling strategies are increasingly favored to mitigate these variables while supporting scalable, high-density operations.

Trim cooling is emerging as a key response to these dynamic conditions in data centers designed to operate at elevated water temperatures. This approach extends the use of free cooling and reduces dependence on mechanical compressors, aligning the cooling system with real-world operating conditions rather than fixed design points.
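The link between warmer water and longer free cooling can be sketched with a simple rule of thumb. The example below assumes, as a deliberate simplification, that free cooling alone can meet the load whenever ambient temperature plus a fixed heat-exchanger approach is at or below the supply water setpoint. The 5 K approach, the function name, and the ambient samples are all hypothetical and are not measured site data; real plants also run in partial free cooling modes that this sketch ignores.

```python
def free_cooling_fraction(ambient_c, supply_setpoint_c, approach_k=5.0):
    """Fraction of samples where free cooling alone could meet the setpoint.

    Simplifying assumption: full free cooling is possible whenever
    ambient + approach <= supply setpoint.
    """
    if not ambient_c:
        raise ValueError("ambient_c must not be empty")
    eligible = sum(1 for t in ambient_c if t + approach_k <= supply_setpoint_c)
    return eligible / len(ambient_c)


# Hypothetical ambient dry-bulb samples in degrees C (illustrative only).
SAMPLE_AMBIENT_C = [-2, 3, 8, 12, 15, 18, 22, 26, 30, 34]

for setpoint_c in (18.0, 30.0, 40.0):
    share = free_cooling_fraction(SAMPLE_AMBIENT_C, setpoint_c)
    print(f"supply {setpoint_c:.0f} C -> free cooling in {share:.0%} of samples")
```

With real hourly weather data in place of the sample list, the same function gives a first-pass estimate of how many hours per year compressors would only need to trim the load rather than carry it.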

Free cooling screw chillers represent a strategic choice for data centers seeking to reduce energy consumption without compromising performance. They deliver high efficiency at elevated ambient temperatures, allowing operators to extend free cooling hours throughout the year.

By combining robust mechanical cooling with optimized free cooling design, these units help lower operating costs, stabilize system performance, and support more environmentally responsible thermal strategies.

At the same time, centrifugal chiller technologies continue to provide the essential backbone capacity. Where reliable cooling performance is required or where lower supply temperatures remain necessary, centrifugal systems offer the stability and scalability needed to handle variable loads.

Rather than representing competing philosophies, these approaches reflect different stages of a facility’s thermal journey and different operational priorities.

Control as the unifying layer

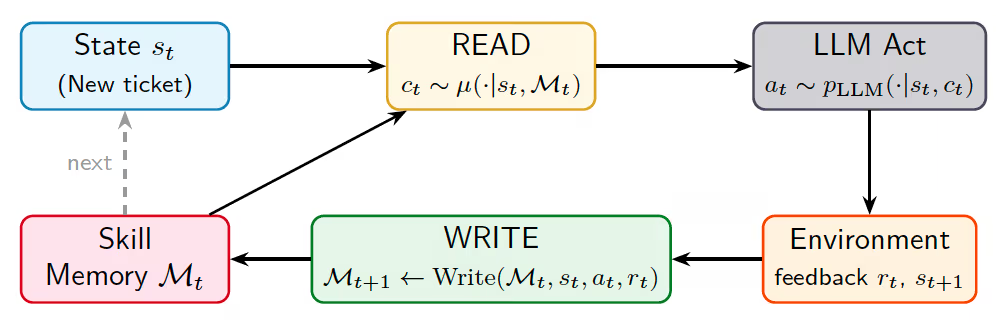

The challenge lies in managing air, liquid, and hybrid systems simultaneously. What enables this diversity of cooling strategies to function as a coherent system is control.

Modern sensors and analytics now link the IT load directly to the cooling plant and airflow management systems. When the AI workload ramps up, the control platform anticipates the heat variation and adjusts setpoints, flow rates, and cooling capacity in real time.
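One way to picture this anticipation is a feedforward calculation that turns measured IT power into a coolant flow setpoint before temperatures start to drift. The sketch below is a minimal illustration, not a vendor control algorithm: it assumes a water-like coolant, a fixed target temperature rise across the loop, and hypothetical pump limits, and every name in it is invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LoopLimits:
    """Hypothetical pump limits for one liquid cooling loop."""
    min_flow_l_s: float
    max_flow_l_s: float


def feedforward_flow_l_s(it_power_w, target_delta_t_k, limits,
                         density_kg_m3=997.0, cp_j_kg_k=4180.0):
    """Flow setpoint (L/s) that would hold target_delta_t_k at it_power_w.

    Uses Q = rho * V_dot * cp * delta_T, then clamps to the pump limits.
    Coolant properties default to approximate values for water.
    """
    if target_delta_t_k <= 0:
        raise ValueError("target_delta_t_k must be positive")
    flow_m3_s = it_power_w / (density_kg_m3 * cp_j_kg_k * target_delta_t_k)
    flow_l_s = flow_m3_s * 1000.0
    return min(max(flow_l_s, limits.min_flow_l_s), limits.max_flow_l_s)


limits = LoopLimits(min_flow_l_s=0.5, max_flow_l_s=5.0)

# Hypothetical power telemetry as a training job ramps up (watts).
for power_w in (10_000.0, 40_000.0, 120_000.0, 300_000.0):
    flow = feedforward_flow_l_s(power_w, target_delta_t_k=10.0, limits=limits)
    print(f"{power_w / 1000:.0f} kW -> flow setpoint {flow:.2f} L/s")
```

In practice a feedforward term like this would usually be paired with feedback on measured return temperature, since the load estimate and the coolant properties are never exact.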

This reduces unnecessary energy use, improves resilience, and provides the operational insight needed to plan future expansion.

For operators, this visibility supports better decision-making. Understanding how heat actually moves through the facility makes it easier to evaluate new technologies, validate design assumptions, and manage risk as AI deployments scale.

Engaging end-to-end support services across the entire thermal chain – from initial design and commissioning through to ongoing optimization – helps sustain reliability through expert deployment and predictive maintenance.
