Denon Home series speakers review: These new smart speakers support Siri & Apple Home with premium audio

Denon’s new line of Siri-enabled Apple Home smart speakers may be what users are looking for in the absence of updated HomePod and HomePod mini. Let’s take a listen.

Japanese audio brand Denon is out with its latest range of speakers: the Denon Home 200, Denon Home 400, and Denon Home 600. While they differ in size and price, the entire line caters to Apple users with support for Siri and AirPlay.

The new devices launch in what has been a prolonged pause in Apple’s HomePod product cycle. The second-generation full-sized HomePod launched in 2023, and HomePod mini has gone even longer without an update, hitting shelves in 2020.

This makes Denon’s new lineup even more enticing with few alternatives available. I’ve been testing both the Denon Home 200 and Denon Home 400 for the last couple of months.

Let’s see how they perform and compare to HomePod.

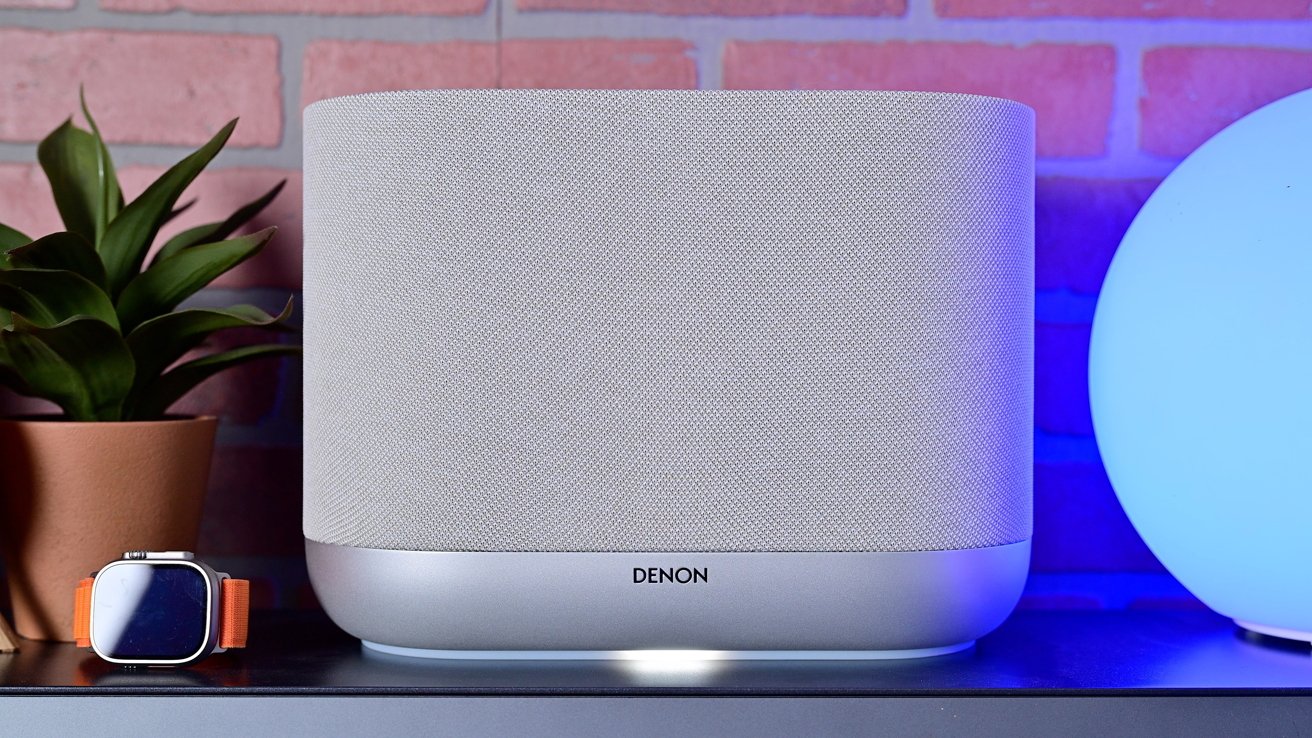

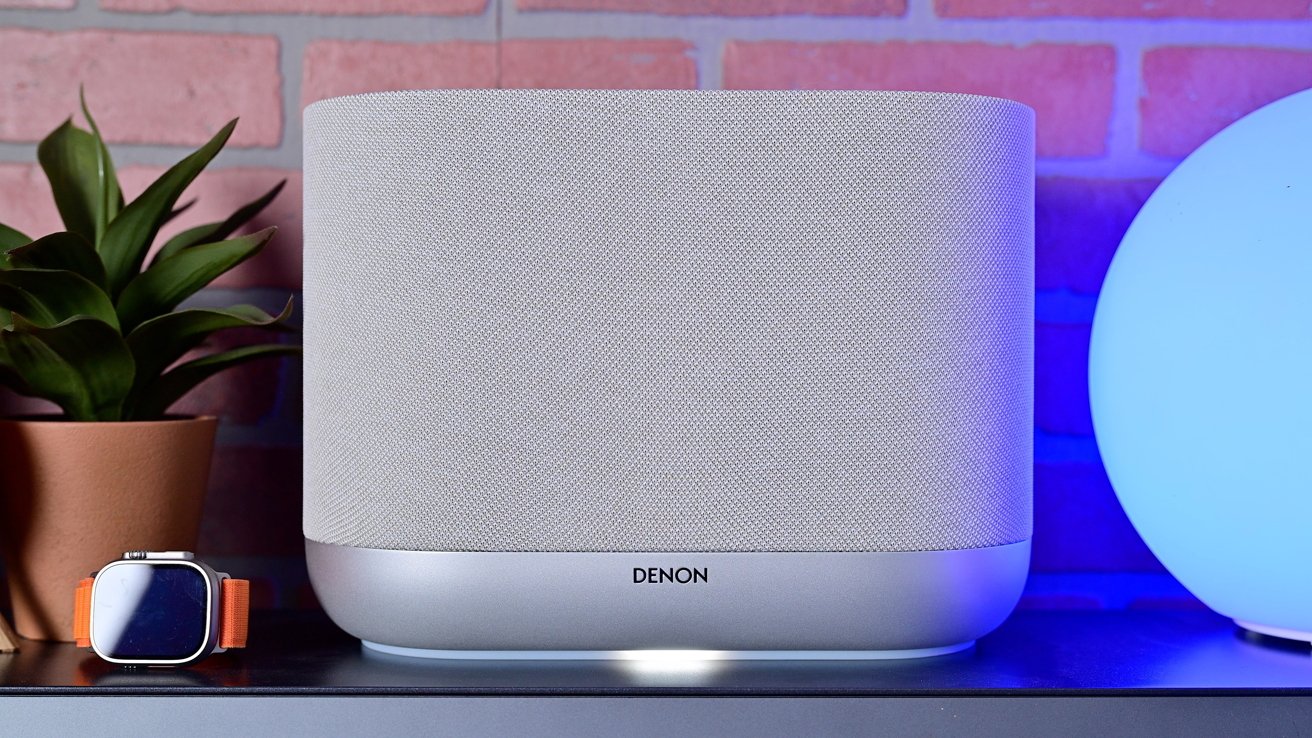

Denon Home speakers review: Design

All three speakers in the range share a clear identity. They’re wrapped in mesh fabric, with obvious buttons and metal accents.

Denon Home series speakers review: The smaller Denon Home 200 looks sleek and elegant

The Denon Home 200 and Denon Home 400 are most similar, with a curved anodized aluminum base and the mesh-covered top. The tops are flat, with buttons on the top or side and extra IO on the back.

The Denon Home 600 is the biggest departure as the contoured speaker body appears to sit angled on top of the base. This provides better sound direction for spatial support, sending audio up, to the sides, and forward.

Denon Home series speakers review: Status light on the bottom of the Denon Home 400

I love the metal accents in particular, as they create an elegant upscale look beyond the HomePod. They’re available in both light grey and black, with the former being shown here.

Denon Home series speakers review: Controls on the top of the Denon Home 200

Unlike HomePod, which has a touch-sensitive surface, the Denon speakers have physical buttons with a subtle *click* when pressed. There’s a combo play/pause button, volume controls, three user-designated shortcuts, and a multi-function button that can invoke your virtual assistant of choice.

Denon Home series speakers review: Differences in design between the Denon Home 200 and Denon Home 400

The Denon Home 400 is just over twice as wide and, instead of buttons on the top, has a metal grille that helps with Spatial Audio. The buttons are relocated to the right side, where they are easy to reach but hidden from the front.

Denon Home series speakers review: Rear ports shared across the Denon Home speaker line

For the bonus IO, there are both USB-C and auxiliary audio inputs, a Bluetooth toggle, and a physical toggle that will disable the mic if you don’t want a smart speaker listening in.

Finally, the speakers have a soft light that glows out of the bottom. It acts as a bit of a status light and can change color.

Denon Home speakers review: Easy setup for Apple users

There are multiple methods of setup for the new Denon speakers. I think for Apple users, though, it’s easiest when using Apple Home.

The speakers can be set up just like any other Apple Home accessory. You open the Home app, tap the + button, and scan the pairing code on the speaker.

Denon Home series speakers review: Scan the pairing code to add to the Home app

This opens a popup modal at the bottom of the screen to walk you through the onboarding process, like giving the speaker a name and assigning it a room in your home. Behind the scenes, it also adds your Wi-Fi credentials.

I’d say this is basically an ideal setup process. You don’t need to do some convoluted pairing process where you connect to a temporary network, download any third-party apps, or even manually enter any credentials.

The only way Denon could have made this any easier would be if they used NFC for commissioning rather than scanning the QR code. That means the whole setup process could be started with a tap versus opening the Home app first.

That’s something still seldom seen, even on dedicated smart home products. Companies probably skip it due to the added cost of the NFC chip that’s used merely once during that initial setup process.

Denon Home series speakers review: The status light can change colors

While we’re talking about the setup and wireless, so far in my testing, I’ve not encountered any instances of the speakers going offline. Both speakers have remained online, available, and responsive when I cast audio to them.

The speakers support Wi-Fi 6, including not only 2.4GHz and 5GHz, but 6GHz, too. With strong Wi-Fi in my home, I was able to enable the high-fidelity mode for uncompressed, high-bitrate audio that is used during multi-room playback.

Denon Home speakers review: Smart home powers

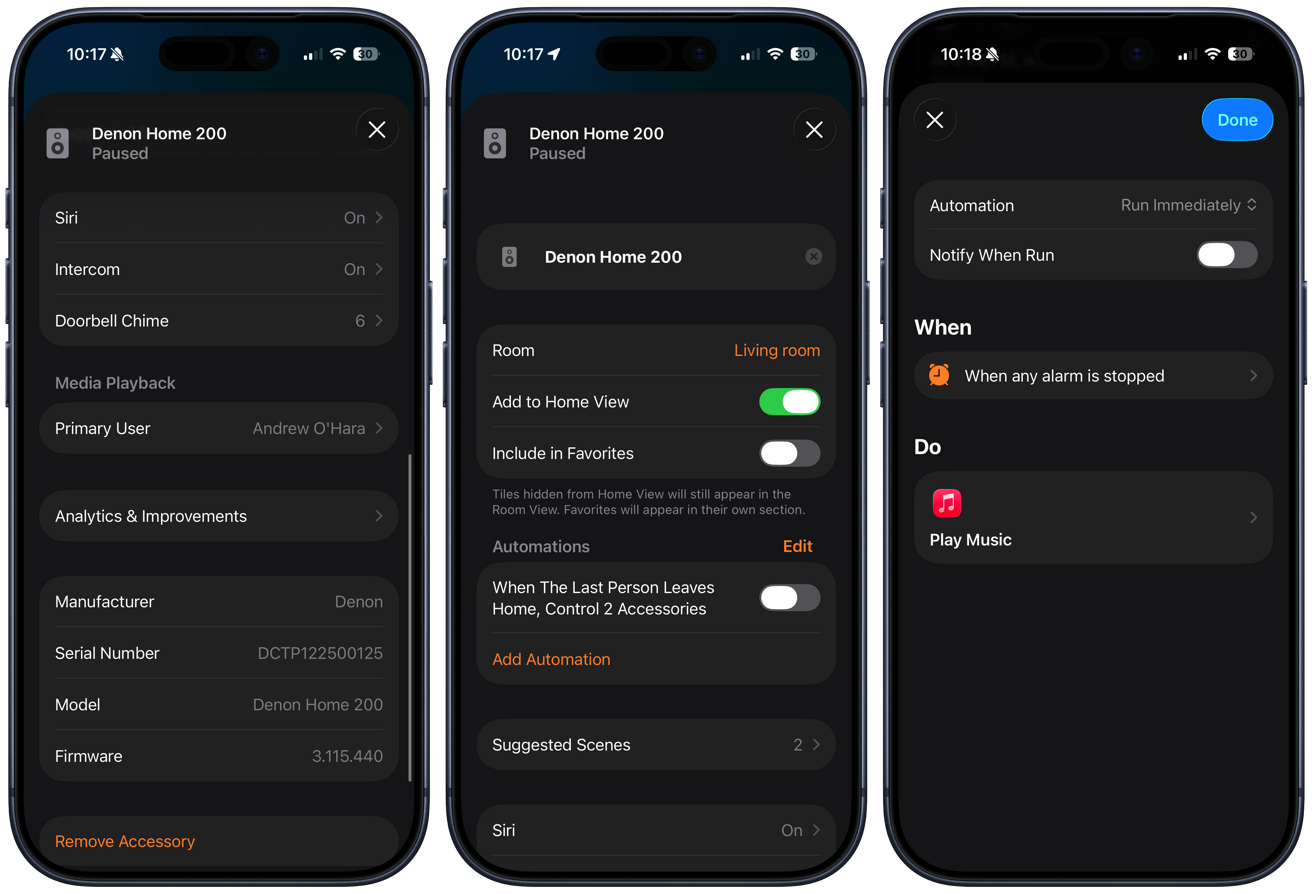

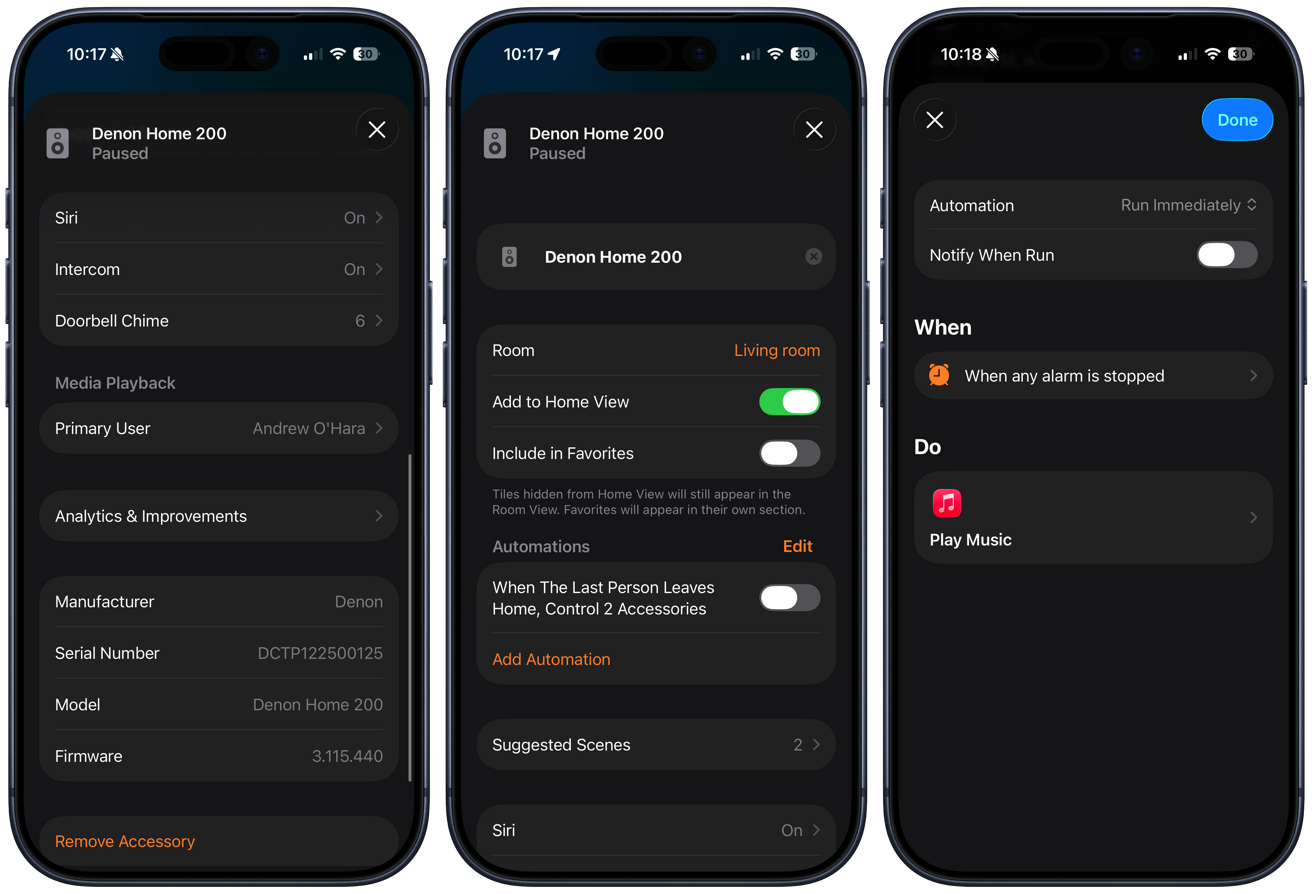

What makes these speakers so appealing to me compared to others in their weight class is that they support Apple Home. This doesn’t just make the setup process easier, but also allows them to act almost identically to a HomePod.

Since each speaker appears in the Home app as a Home accessory, you can include it in your home automations. Simple ones, like automatically pausing audio playback when you or the last person leaves the home, are quite useful.

These speakers can be used in more complex scenes and automations, too. You could have the speakers play your “get ready” playlist in the morning when your alarm goes off, you could have a “pump up” playlist when you set a workout scene, or play white noise with a sleep timer when setting your “Goodnight” scene.

Denon Home series speakers review: The Denon Home 200 showing in the Home app

Another benefit is that it can be used as an intercom with other Apple Home speakers, including HomePods. If I’m in my studio, my partner can call me over the intercom from the kitchen HomePod to my studio Denon Home 400, and I can talk back to them.

If you have an Apple Home doorbell, the Denon Home speakers can act as wireless chimes. That way, if someone presses the doorbell on the front door, the Denon speaker down in the studio can chime to let me know someone is there.

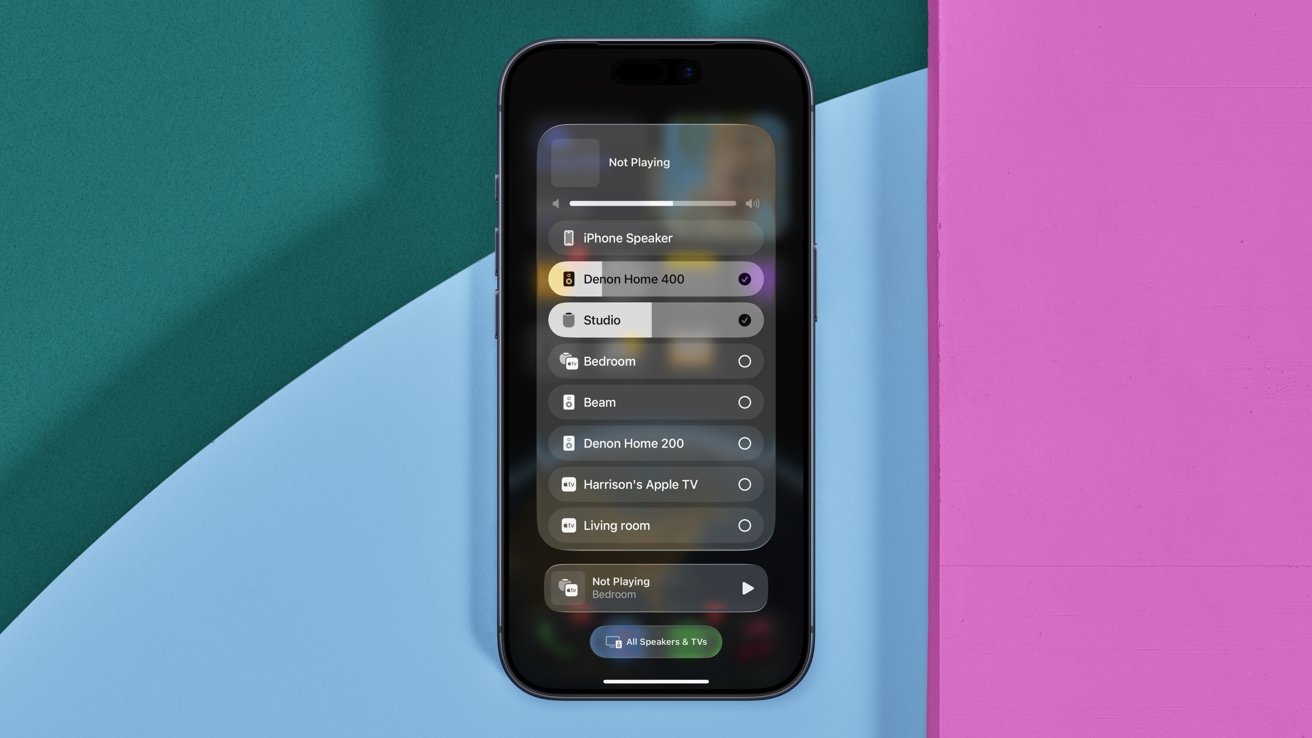

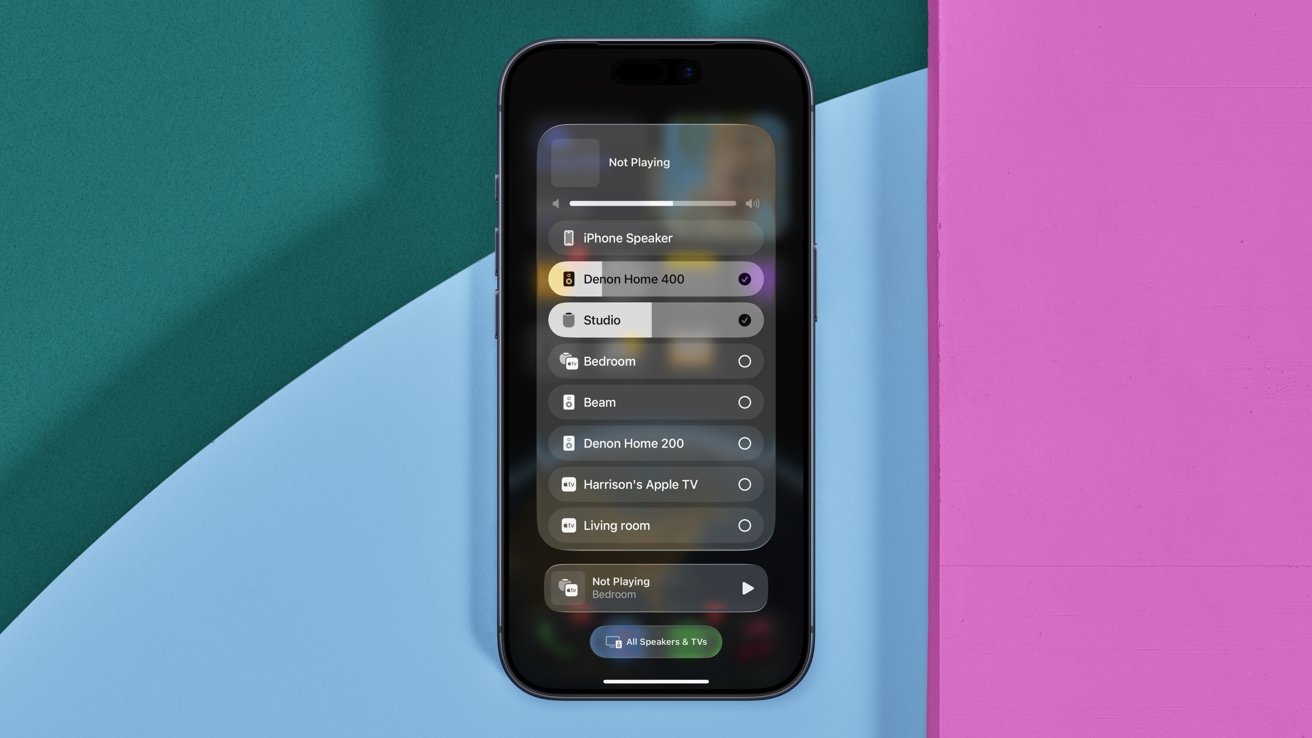

Denon Home series speakers review: Use AirPlay to cast audio to the Denon speakers, including multiple at once

This brings support for AirPlay, too. You can cast audio from nearly any Apple device to the Denon Home speakers.

That’s what allows Apple-native multi-room support. You can play to multiple AirPlay speakers at once, in any combination of HomePods and third-party speakers.

Denon Home series speakers review: During setup, you can turn on Siri on the speakers

My favorite is just using Siri for this. I can ask Siri on my iPhone to play my Jams playlist on the Denon Home 400, or if I say to play in a certain room, it will go to all speakers in that location.

Biggest of all is full support for Siri, though the implementation is a little confusing. Apple does allow third-party speakers to build in Siri, but so far, Denon and Ecobee are the only major players to do so.

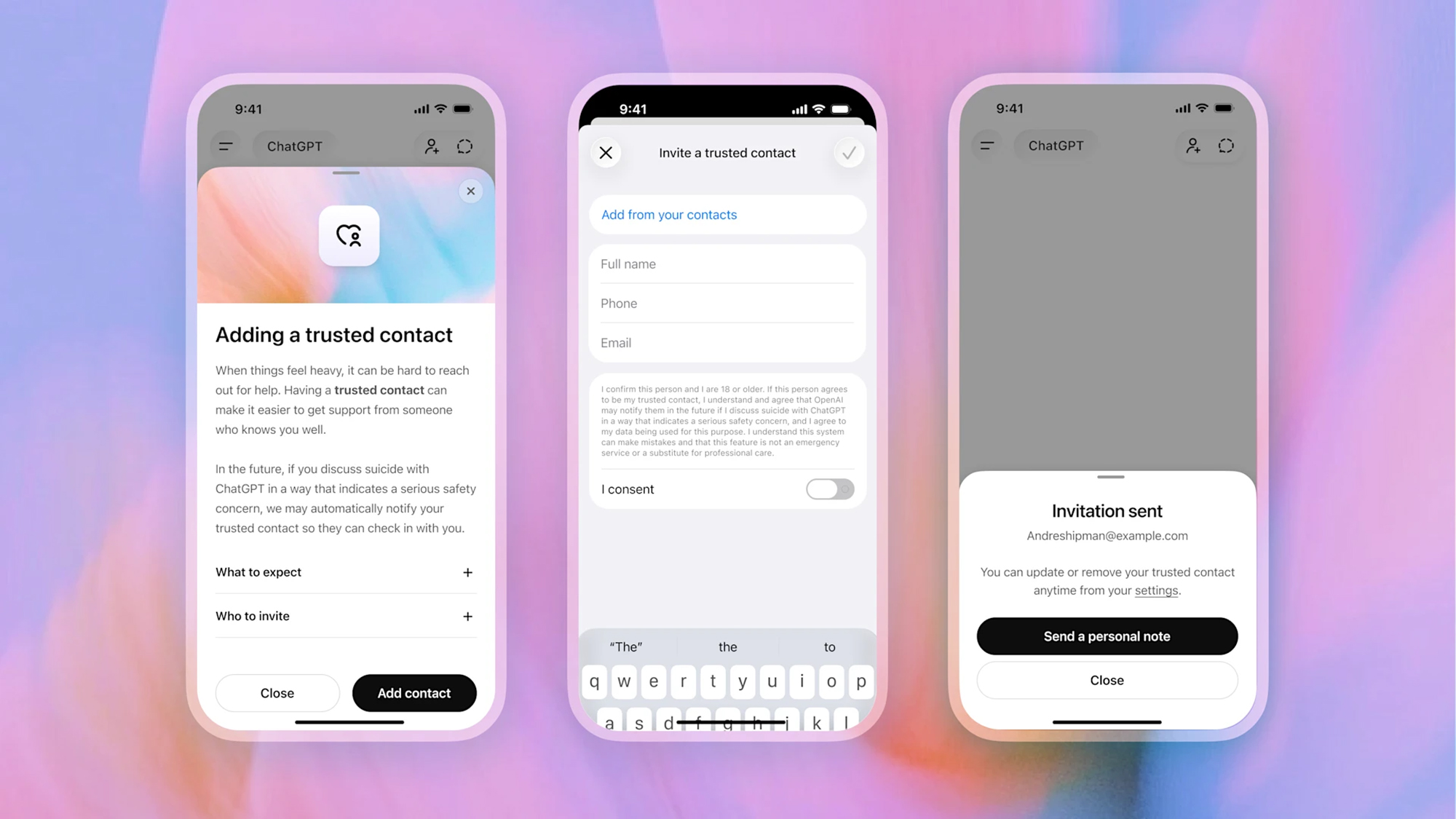

Denon Home speakers review: Siri, but not on HomePod

The catch with Siri support is that the queries aren’t processed directly on the third-party speaker, but instead require a HomePod or HomePod mini. What happens is that when you ask Siri a question, it listens on that third-party speaker, routes the question to a nearby HomePod, then gives you the answer back on the original speaker.

This major caveat is likely why some of the big players, like Sonos, prefer to cozy up to other virtual assistants like Amazon Alexa, Google Assistant, or their own assistants instead. They don’t want you to have to buy a HomePod; they would rather you buy more of their speakers.

Denon Home series speakers review: The status light can change to Siri colors when you invoke Apple’s assistant

Many Apple users likely already have a HomePod or two in the home, so I don’t consider this a huge downside. It is something to be aware of, though, before purchasing the speaker with the anticipation of using Siri.

As far as utility, Siri is basically in feature parity with HomePod. Anything you can ask a HomePod, you can ask your Denon speaker.

You can ask it to control your smart home accessories, to text someone, to check the weather, convert units of measurement, and more. That said, there are some ways that they differ.

Denon Home series speakers review: Denon Home 200 is still larger than the base HomePod

HomePod, for example, can act as a full Home Hub. A Home Hub helps run scenes and automations when you aren’t at home and is a Thread Border Router.

Apple’s HomePod has handoff using ultra-wideband to automatically transfer audio as your phone approaches. The Denon still gets suggested in the Dynamic Island when you open the Music app nearby, though.

A Home Hub is also what processes the AI video for HomeKit Secure Video, such as people, car, or package detection. Plus, HomePod and HomePod mini have built-in environmental sensors for temperature and humidity.

This is a bit of reading the tea leaves, but because of how Siri works on third-party speakers, I expect Apple Intelligence to arrive sooner rather than later.

Apple has seemingly been working on next-generation HomePod and HomePod mini models for quite some time. If they do launch in the fall of 2026 as expected, Apple Intelligence will certainly be supported.

Again, another leap here, but that would mean if you purchased a new HomePod or HomePod mini with Apple Intelligence, Siri on your Denon speaker would be upgraded. Hopefully, that isn’t wishful thinking, but it’s not a big jump to make.

While I do strongly believe that’s how it will play out, I also strongly caution against buying a product today with the promise of an update in the future. If you buy these speakers now, be comfortable with how they work now, and count future upgrades as a bonus.

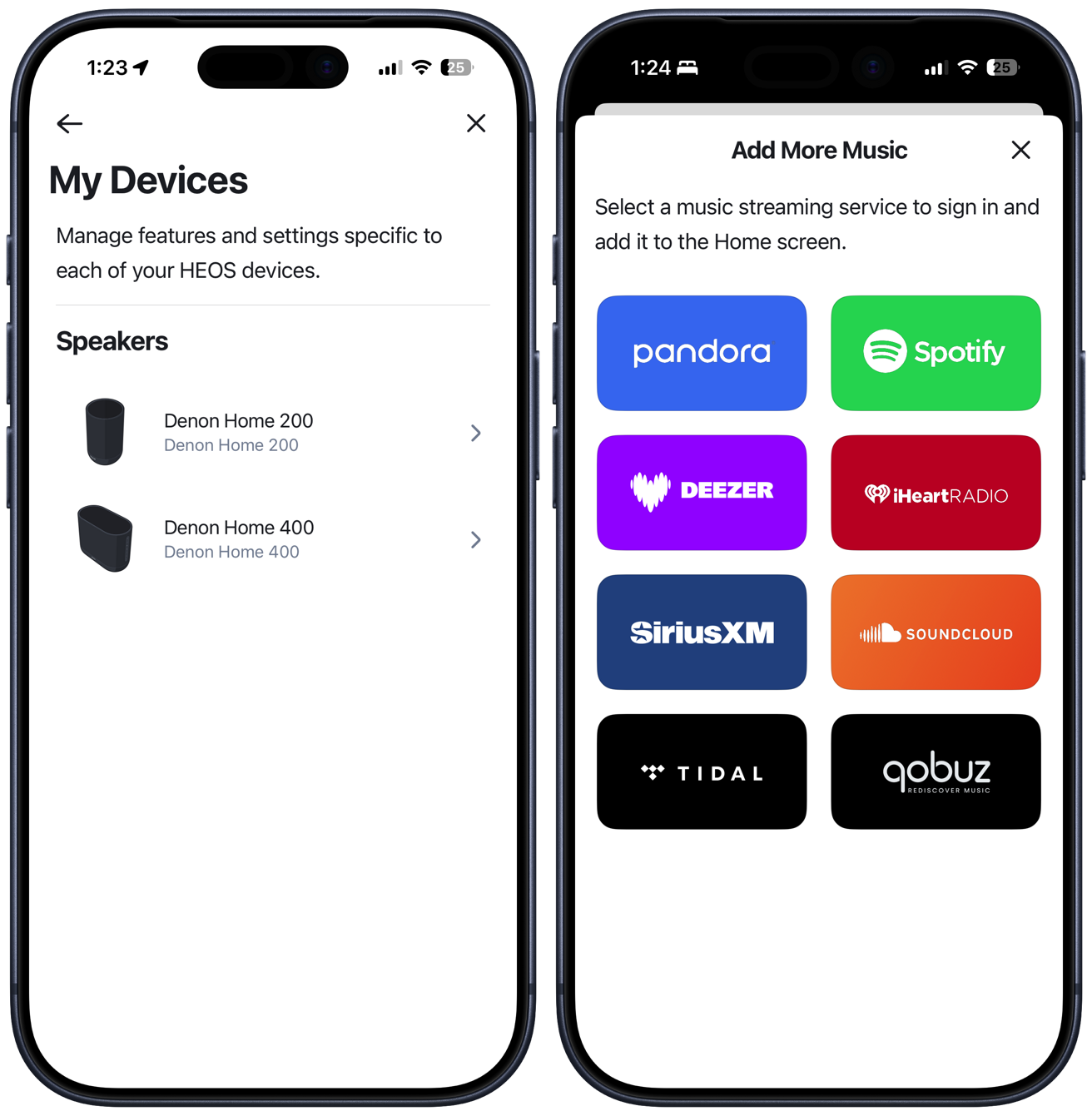

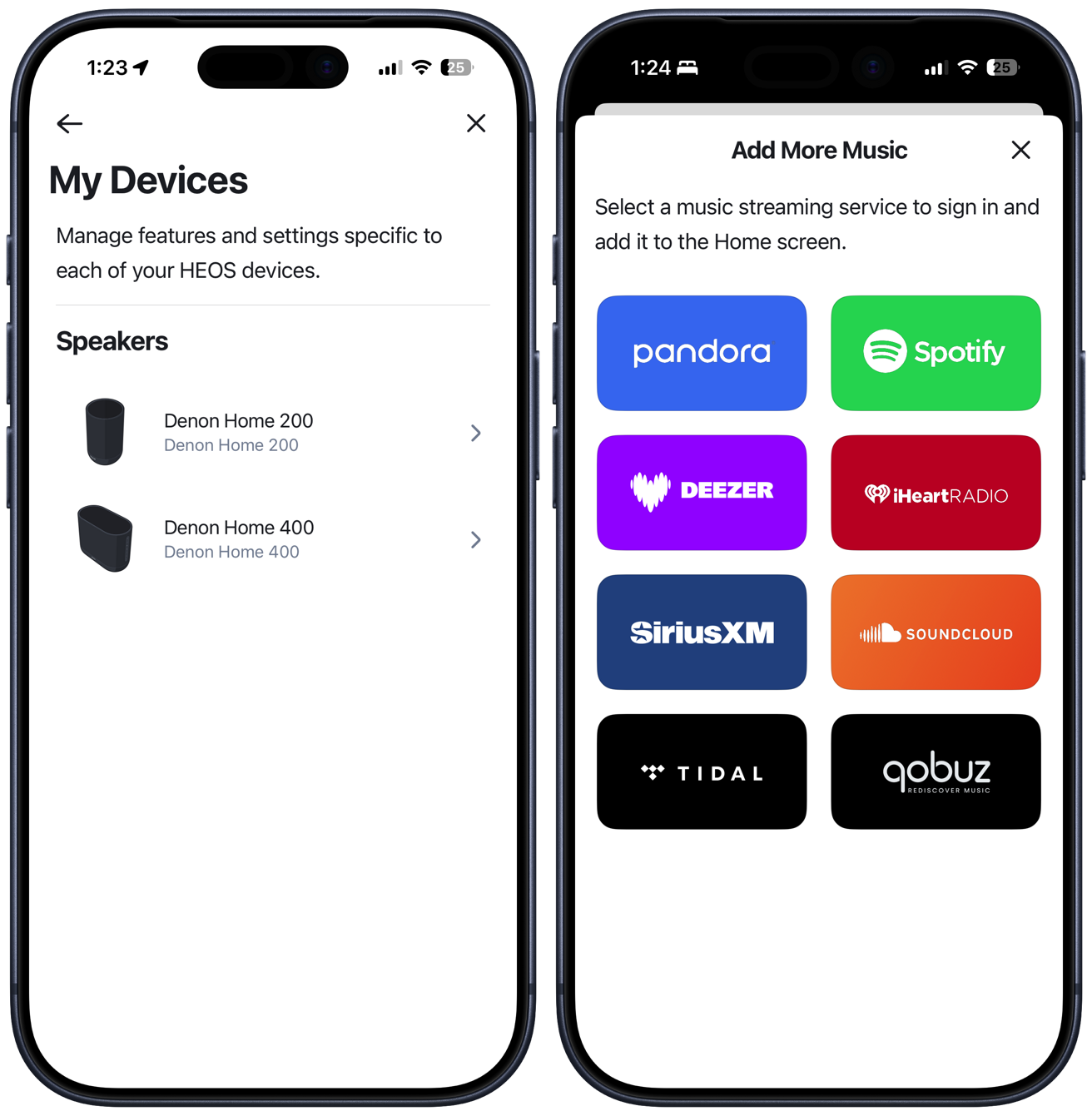

Denon Home speakers review: HEOS app

To be crystal clear, users can absolutely set up and use these speakers without any extra apps. But the Denon HEOS app has some added benefits for users that want to use it.

Denon Home series speakers review: The Denon HEOS app has more controls and direct streaming options

This app can guide non-Apple users through a slightly more convoluted setup process, and it offers direct streaming from a number of different services, including Tidal, Spotify, Deezer, iHeartRadio, and more.

You can stream from these services, adjust volume, perform updates, and adjust the track queue. It’s similar to the Sonos experience, though maybe a bit more limiting.

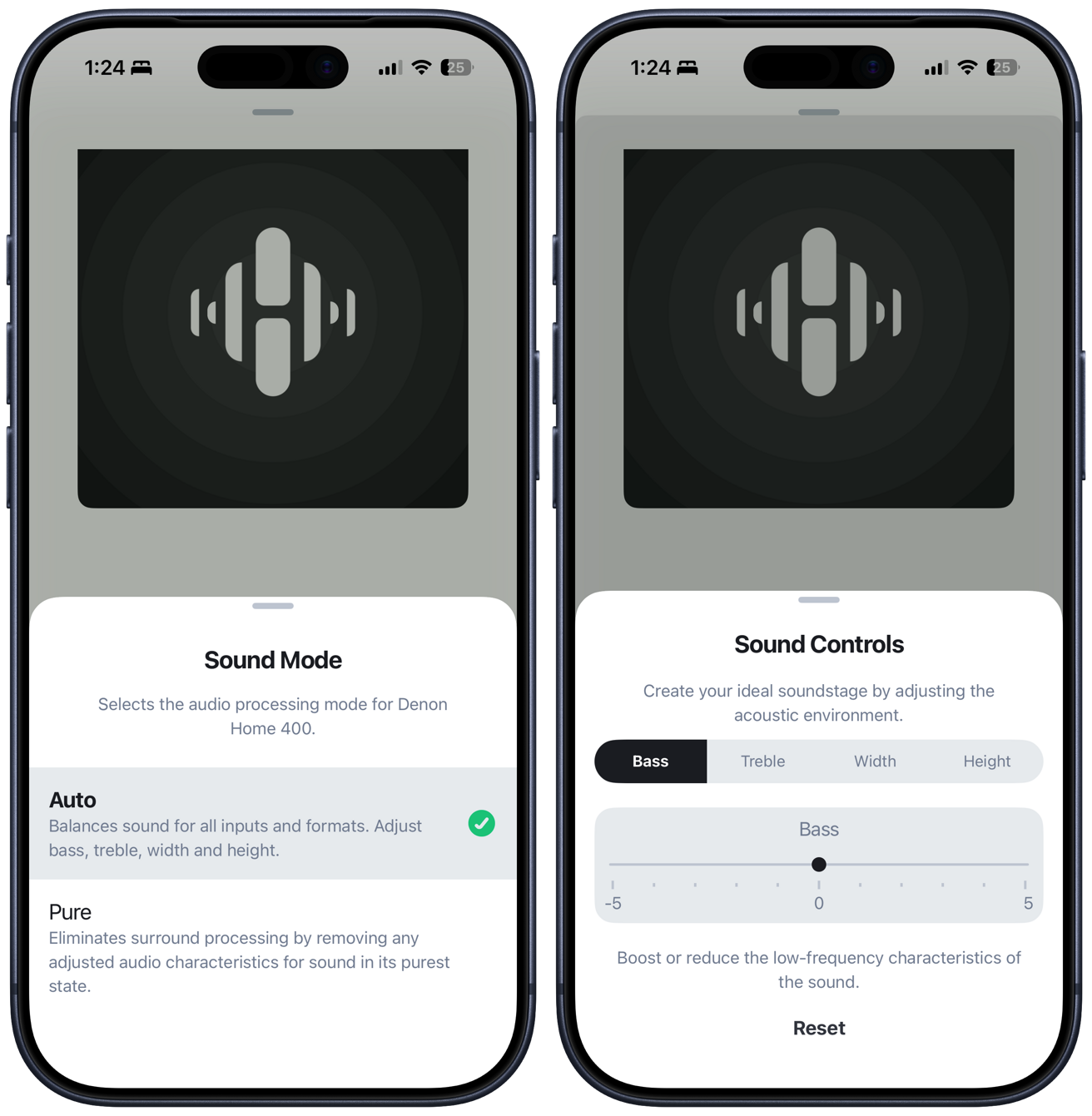

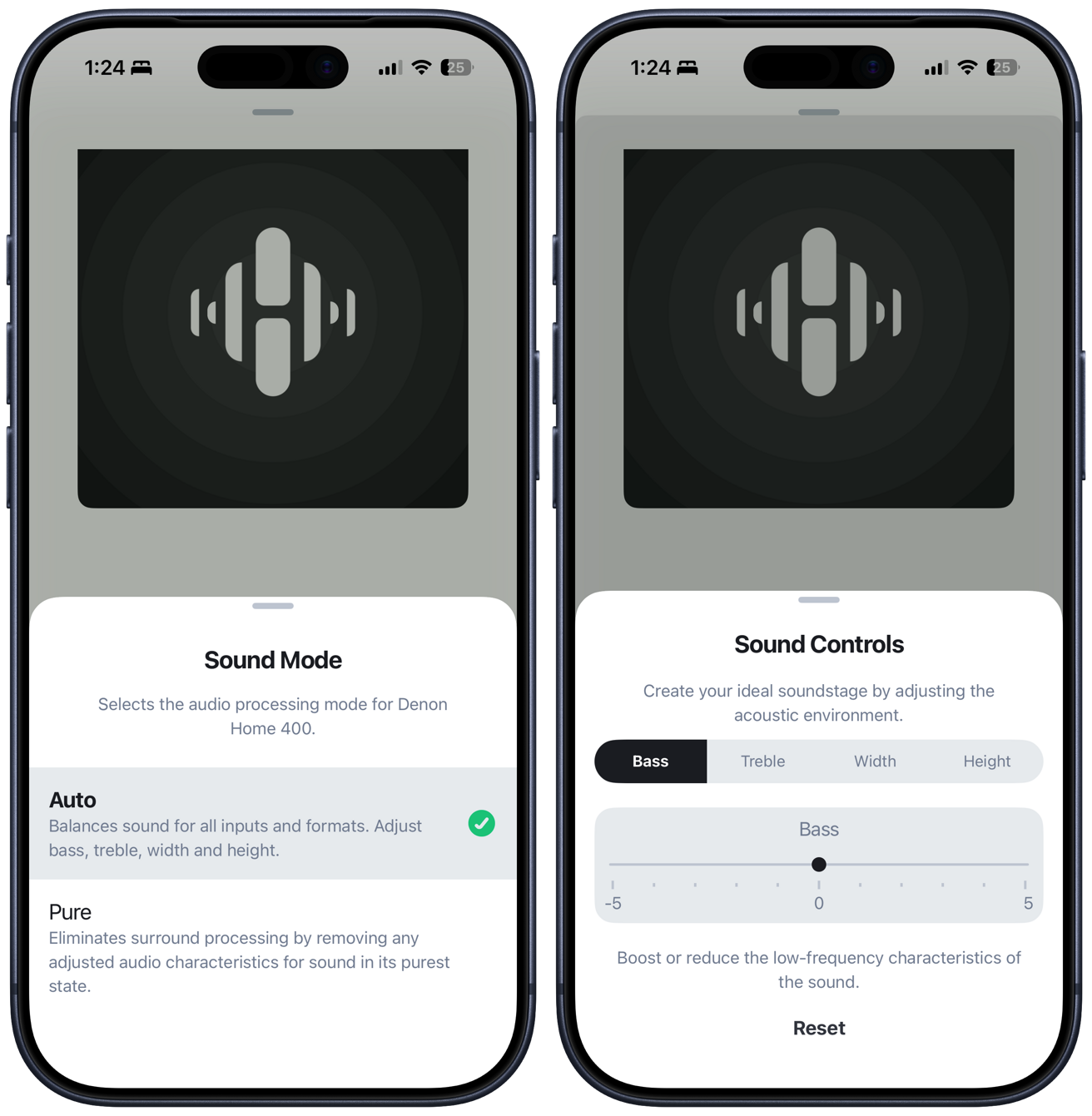

Denon Home series speakers review: You can adjust audio quality and balance from the HEOS app

Within HEOS, there are sound controls for the speakers. You can turn on “pure” mode to remove any processing or get into the weeds and manually adjust the bass, treble, or width (physical spaciousness of the soundstage).

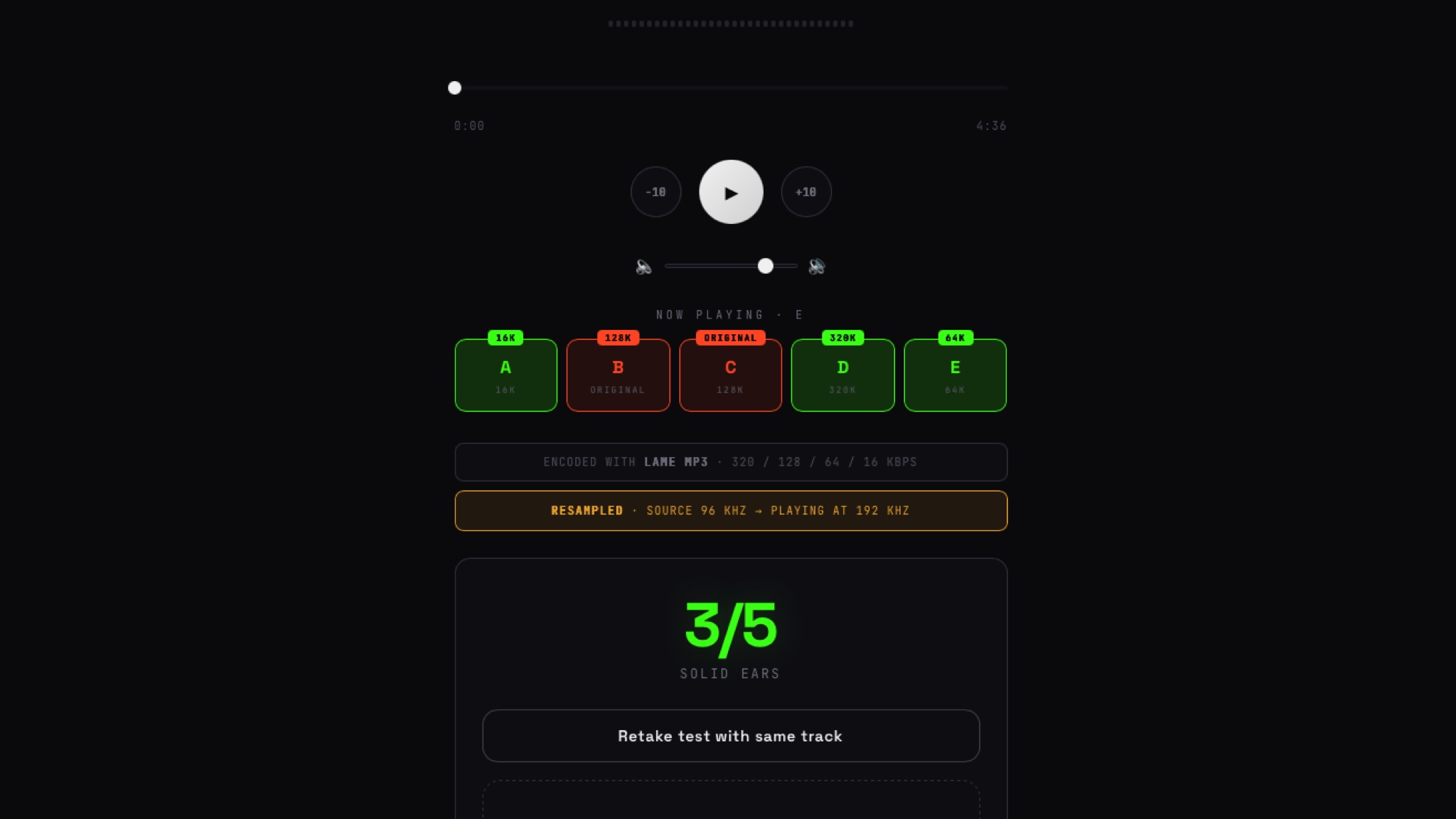

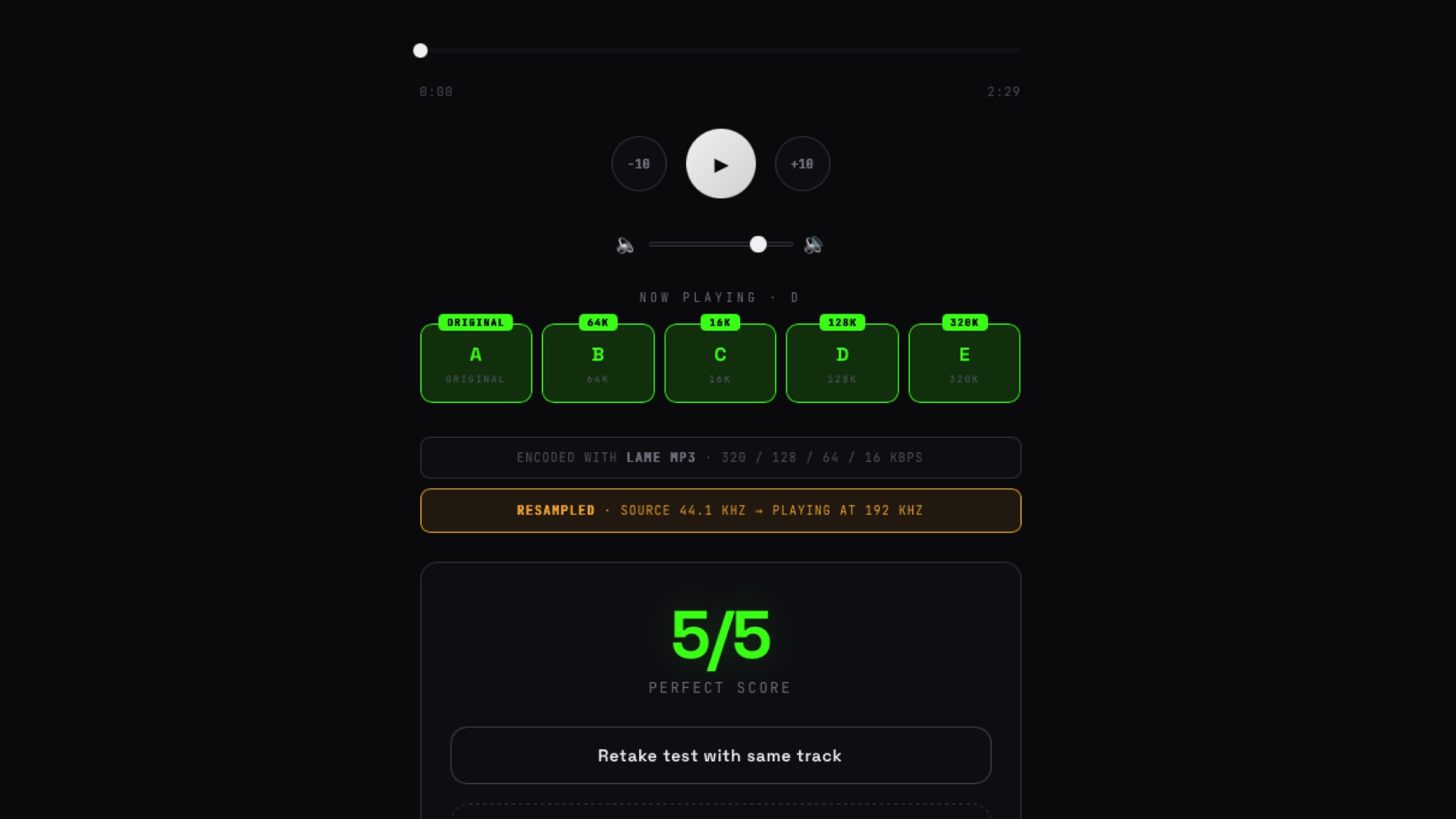

Denon Home speakers review: Audio quality

As we turn to audio quality, I want to make sure to split it between the two that I have on hand to test. I also want to compare them to the competition, such as Apple and Sonos.

Starting with the smaller of the two, the Denon Home 200 has three drivers. There are two smaller drivers positioned towards the top that angle slightly outwards and a 4-inch front-facing woofer.

Compared directly to HomePod, which is available for $100 less, the Denon Home 200 absolutely sounds better. It’s fuller, with a larger emphasis on the midrange.

Denon Home series speakers review: Controlling audio playback direct from Apple Music

Personally, at times, I find the bass on HomePod to be a bit overpowering or even sloppy, and I think Denon did an excellent job at filling out the midrange.

That isn’t to say the bass is lacking in any way on the 200. Both the Denon and Apple speakers have 4-inch woofers, and the 200 definitely puts out some oomph. It also gets much louder than the HomePod, to the point that it’s arguably too loud in my home to ever go past 75%.

The best way I can describe the sound is very warm, which is something I like. It also maintains this consistency, even at high volumes.

Denon Home series speakers review: Comparing the Denon Home 200 against the Sonos Era 100 and Sonos Era 300

I’d also say that the Denon Home 200 sounds better than the Sonos Era 100, though there isn’t a perfect comparison to Sonos. This performance should be expected, given the significantly higher price tag of the Denon.

Personally, I even preferred the Denon Home 200 to the Sonos Era 300, to a degree. The Era 300 is larger and more expensive, but I think the Denon Home 200 has a warmer profile that I liked and has a smaller footprint.

Again, the comparison is tough. The Denon Home 200 lacks the upward-firing driver of the Sonos Era 300, but if you move to the Denon Home 400, it’s far more expensive, while being even bigger still.

Listening to “The Mountain Song” by Tophouse, I can very much feel the music build and swell with that full, wide sound. Similarly, “World’s Smallest Violin” by AJR has a ton of detail as the music morphs between musical instruments that make the song very cool to listen to.

Denon Home series speakers review: Denon Home 400 on a shelf in my studio

Moving to the Denon Home 400, it has six total drivers. There are two outward-firing tweeters, dual 4.5-inch woofers, and two more upward-firing drivers.

This one gets even louder and is overkill for any small to medium room. It has better stereo separation as well and a broader soundstage.

I can’t emphasize enough how much this can really fill out a room. Thinking about the Denon Home 600, that must be wild.

When I first started listening to the Denon Home 400, the most noticeable change was the bass. It was far more powerful, but still tightly controlled.

You can feel this bass in your chest before even having to turn up the volume. It was amazing.

Theoretically, the Denon Home 400 will provide more accurate Dolby Atmos Spatial Audio than the 200. I say theoretically because I wasn’t able to test it.

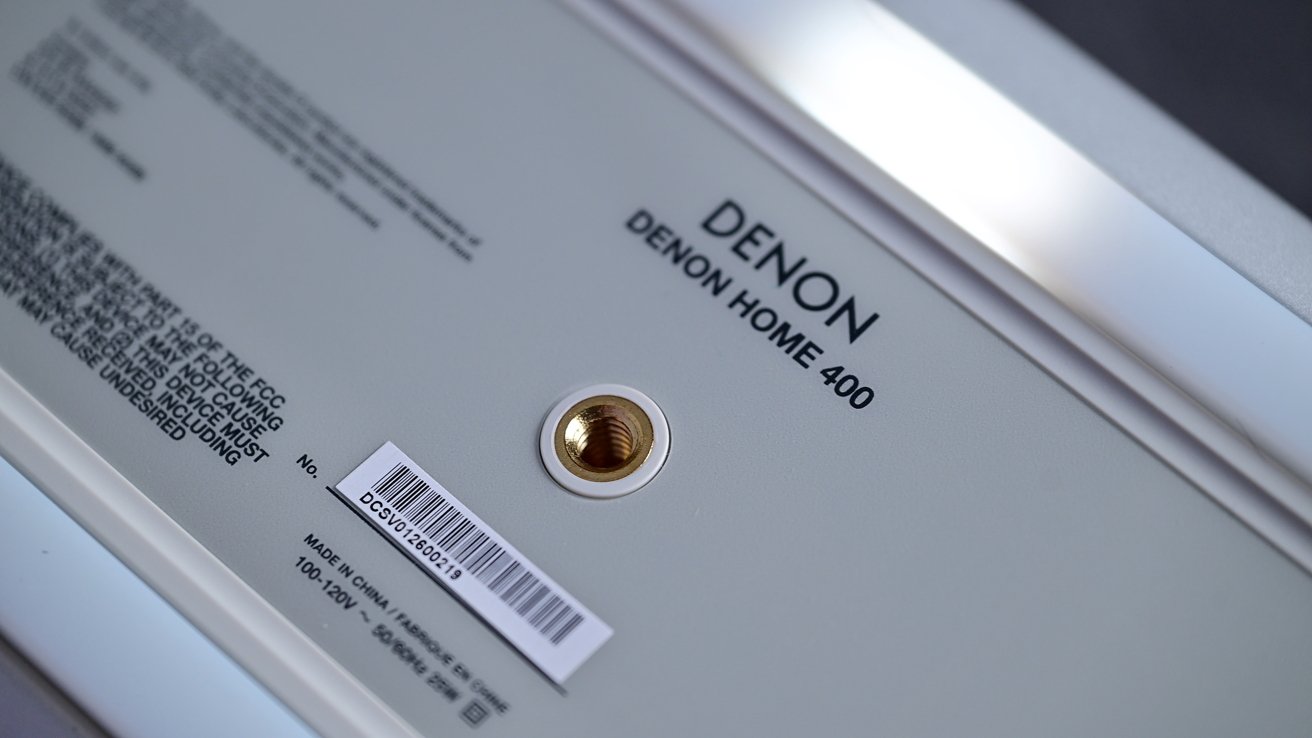

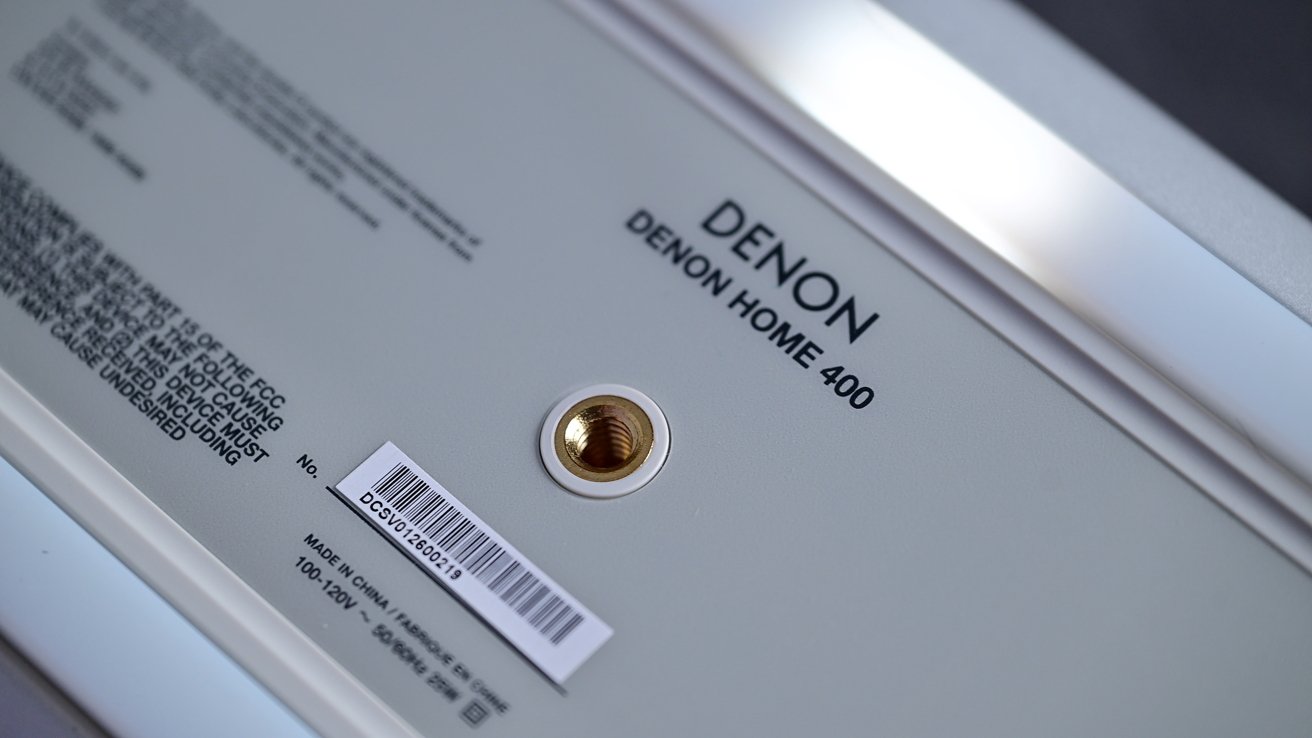

Denon Home series speakers review: The bottom of the speaker has a silicone foot and a thread for mounting on a traditional speaker stand or bracket

Currently, Dolby Atmos content is only supported when streaming directly from Tidal or Amazon Music Ultra HD. I don’t subscribe to either of these as an Apple Music listener.

Denon says it is working on Apple Music Dolby Atmos support, but there’s no promise on when that feature will be delivered.

Denon Home speakers review: Siri-ous audio quality for Apple users

In an increasingly competitive space, Denon has excelled here. I’m very pleased with the entire ecosystem.

The base model, while more expensive than a HomePod, has notably better audio quality. It also offers better on-device controls, multiple wired inputs, and still retains Siri support.

Denon Home series speakers review: Denon Home 400 is an amazing-sounding premium speaker with Siri support

Moving up the lineup, users can choose the speaker that suits their environment, upgrading to the larger, more powerful, and louder models. If you ever found that HomePod wasn’t loud enough or the audio wasn’t good enough, there were zero alternatives that let you keep Siri.

While I’m a massive Sonos fan, the Denon Home 200, 400, and 600 offer more than competitive audio quality with native Apple features. For Apple users, Denon is offering a better experience.

Small points are subtracted for having a HomePod as a requirement for a full experience, but that onus lies on Apple, not Denon. With so few alternatives here, Denon did the absolute best it was able to, all around.

Right now, I think Denon put out the best all-around smart speaker, if you’re willing to pony up for superior sound. For Apple users, it’s the premium option to choose, at least while we wait for the possibility of a refreshed HomePod.

Denon Home speakers review: Pros

- Sleek, premium, modern designs

- Built-in Siri and smart home features like doorbell chime and intercom

- Fantastic audio quality

- Dolby Atmos support

- Easy setup through Apple Home

Denon Home speakers review: Cons

- Requires HomePod or HomePod mini for Siri

- Somewhat expensive

- No Dolby Atmos via Apple Music yet

Denon Home 200 & Denon Home 400 Rating: 4 out of 5 stars

Where to buy Denon Home 200 & Denon Home 400

The Denon Home 200 sells for $399 and can be ordered from Amazon and B&H Photo, while the Denon Home 400 retails for $599.

That model, which comes in your choice of Charcoal or Stone, can also be purchased at Amazon and B&H Photo.

The robust Denon Home 600, meanwhile, will run you $799 at Amazon and B&H.
