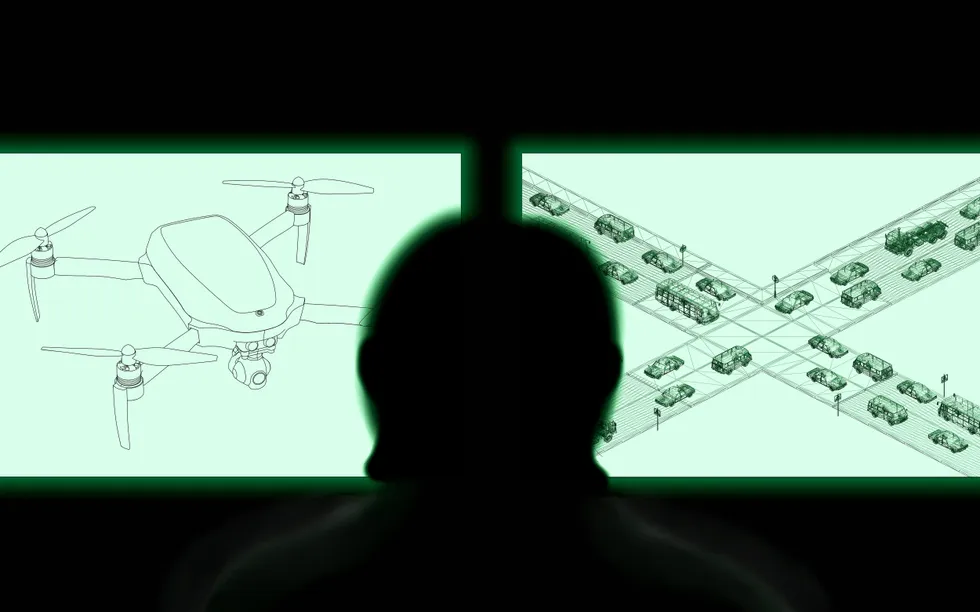

Self-driving cars often struggle with situations that are commonplace for human drivers. When confronted with construction zones, school buses, power outages, or misbehaving pedestrians, these vehicles often behave unpredictably, leading to crashes or freezing events that significantly disrupt local traffic and can block first responders from doing their jobs. Because self-driving cars cannot successfully handle such routine problems, self-driving companies use human babysitters to remotely supervise them and intervene when necessary.

This idea—humans supervising autonomous vehicles from a distance—is not new. The U.S. military has been doing it since the 1980s with unmanned aerial vehicles (UAVs). In those early years, the military experienced numerous accidents due to poorly designed control stations, lack of training, and communication delays.

As a Navy fighter pilot in the 1990s, I was one of the first researchers to examine how to improve the UAV remote supervision interfaces. The thousands of hours I and others have spent working on and observing these systems generated a deep body of knowledge about how to safely manage remote operations. With recent revelations that U.S. commercial self-driving car remote operations are handled by operators in the Philippines, it is clear that self-driving companies have not learned the hard-earned military lessons that would promote safer use of self-driving cars today.

While stationed in the Western Pacific during the Gulf War, I spent a significant amount of time in air operations centers, learning how military strikes were planned, implemented and then replanned when the original plan inevitably fell apart. After obtaining my PhD, I leveraged this experience to begin research on the remote control of UAVs for all three branches of the U.S. military. Sitting shoulder-to-shoulder in tiny trailers with operators flying UAVs in local exercises or from 4000 miles away, my job was to learn about the pain points for the remote operators as well as identify possible improvements as they executed supervisory control over UAVs that might be flying halfway around the world.

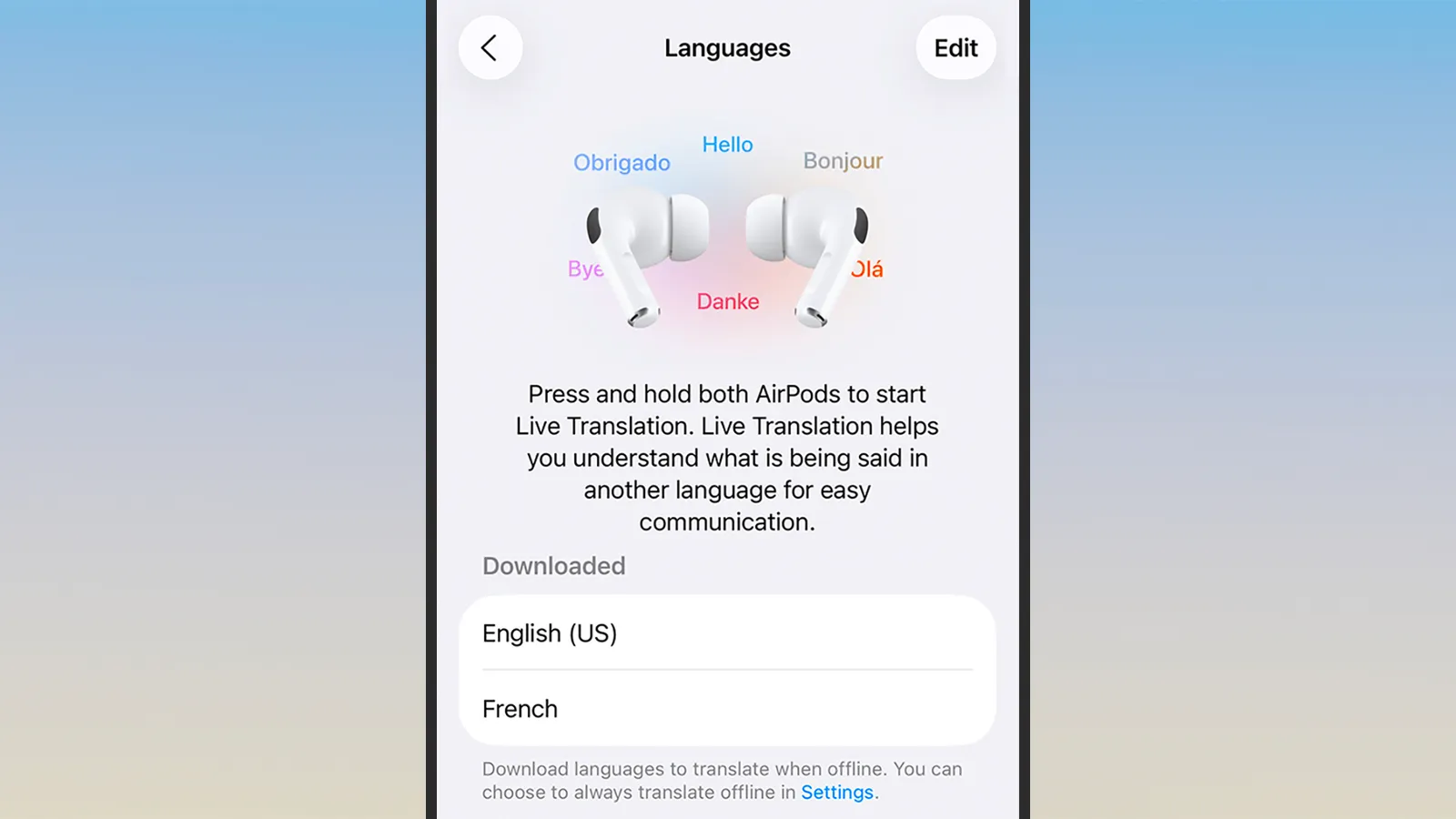

Supervisory control refers to situations where humans monitor and support autonomous systems, stepping in when needed. For self-driving cars, this oversight can take several forms. The first is teleoperation, where a human remotely controls the car’s speed and steering from afar. Operators sit at a console with a steering wheel and pedals, similar to a racing simulator. Because this method relies on real-time control, it is extremely sensitive to communication delays.

The second form of supervisory control is remote assistance. Instead of driving the car in real time, a human gives higher-level guidance. For example, an operator might click a path on a map (called laying “breadcrumbs”) to show the car where to go, or interpret information the AI cannot understand, such as hand signals from a construction worker. This method tolerates more delay than teleoperation but is still time-sensitive.

Five Lessons From Military Drone Operations

Over 35 years of UAV operations, the military consistently encountered five major challenges that provide valuable lessons for self-driving cars.

Latency

Latency—delays in sending and receiving information due to distance or poor network quality—is the single most important challenge for remote vehicle control. Humans also have their own built-in delay: neuromuscular lag. Even under perfect conditions, people cannot reliably respond to new information in less than 200–500 milliseconds. In remote operations, where communication lag already exists, this makes real-time control even more difficult.

In early drone operations, U.S. Air Force pilots in Las Vegas (the primary U.S. UAV operations center) attempted to take off and land drones in the Middle East using teleoperation. With at least a two-second delay between command and response, the accident rate was 16 times that of fighter jets conducting the same missions. The military switched to local line-of-sight operators and eventually to fully automated takeoffs and landings. When I interviewed the pilots of these UAVs, they all stressed how difficult it was to control the aircraft with significant time lag.

Self-driving car companies typically rely on cellphone networks to deliver commands. These networks are unreliable in cities and prone to delays. This is one reason many companies prefer remote assistance instead of full teleoperation. But even remote assistance can go wrong. In one incident, a Waymo operator instructed a car to turn left when a traffic light appeared yellow in the remote video feed, but the network latency meant that the light had already turned red in the real world. After moving its remote operations center from the U.S. to the Philippines, Waymo’s latency increased even further. It is imperative that control not be so remote, both to resolve the latency issue and to increase oversight for security vulnerabilities.
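To make the combined effect of network delay and human reaction time concrete, here is a minimal back-of-the-envelope sketch. It assumes a car holding a constant speed and simply adds the two delays together. The 200–500 millisecond reaction range and the two-second delay come from the discussion above. The vehicle speed and the shorter network delays are illustrative assumptions, not measurements from any company.

```python
def distance_during_delay(speed_mps: float, network_delay_s: float, reaction_s: float) -> float:
    """Meters a vehicle travels at constant speed before a remote input can take effect.

    Simplifying assumption: total delay is network delay plus human reaction time.
    """
    return speed_mps * (network_delay_s + reaction_s)


# Assumed city driving speed: 11 m/s, roughly 25 mph (hypothetical value).
SPEED_MPS = 11.0

# Illustrative network delays in seconds; 2.0 s matches the early drone teleoperation delay.
for network_delay_s in (0.1, 0.5, 2.0):
    # Human reaction-time bounds quoted in the text.
    for reaction_s in (0.2, 0.5):
        meters = distance_during_delay(SPEED_MPS, network_delay_s, reaction_s)
        print(f"network {network_delay_s:.1f} s + reaction {reaction_s:.1f} s -> {meters:.1f} m traveled")
```

Even under these simplified assumptions, a car moving at city speed covers several car lengths before a remote command can take effect once the delay approaches two seconds.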

Workstation Design

Poor interface design has caused many drone accidents. The military learned the hard way that confusing controls, difficult-to-read displays, and unclear autonomy modes can have disastrous consequences. Depending on the specific UAV platform, the FAA attributed between 20% and 100% of Army and Air Force UAV crashes caused by human error through 2004 to poor interface design.

UAV crashes (1986-2004) caused by human factors problems, including poor interface and procedure design. These two categories do not sum to 100% because both factors could be present in an accident.

| UAV platform | Human Factors | Interface Design | Procedure Design |
| --- | --- | --- | --- |
| Army Hunter | 47% | 20% | 20% |
| Army Shadow | 21% | 80% | 40% |
| Air Force Predator | 67% | 38% | 75% |
| Air Force Global Hawk | 33% | 100% | 0% |

Many UAV crashes have been caused by poorly designed human control systems. In one case, the buttons on the controller were placed such that it was relatively easy to shut off the engine by accident instead of firing a missile, and remote operators inadvertently did exactly that.

The self-driving industry reveals hints of comparable issues. Some autonomous shuttles use off-the-shelf gaming controllers, which—while inexpensive—were never designed for vehicle control. The off-label use of such controllers can lead to mode confusion, which was a factor in a recent shuttle crash. Significant human-in-the-loop testing is needed to avoid such problems, not only prior to system deployment, but also after major software upgrades.

Operator Workload

Drone missions typically include long periods of surveillance and information gathering, occasionally ending with a missile strike. These missions can sometimes last for days; for example, while the military waits for the person of interest to emerge from a building. As a result, the remote operators experience extreme swings in workload: sometimes overwhelming intensity, sometimes crushing boredom. Both conditions can lead to errors.

When operators teleoperate drones, workload is high and fatigue can quickly set in. But when onboard autonomy handles most of the work, operators can become bored, complacent, and less alert. This pattern is well documented in UAV research.

Self-driving car operators are likely experiencing similar issues for tasks ranging from interpreting confusing signs to helping cars escape dead ends. In simple scenarios, operators may be bored; in emergencies—like driving into a flood zone or responding during a citywide power outage—they can become quickly overwhelmed.

The military has tried for years to have one person supervise many drones at once, because it is far more cost-effective. However, cognitive switching costs (regaining awareness of a situation after switching control between drones) result in workload spikes and high stress. That, coupled with increasingly complex interfaces and communication delays, has made this extremely difficult.

Self-driving car companies likely face the same roadblocks. They will need to model operator workloads and be able to reliably predict what staffing should be and how many vehicles a single person can effectively supervise, especially during emergency operations. If every self-driving car turns out to need a dedicated human to pay close attention, such operations would no longer be cost-effective.
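A minimal sketch of what such a workload model could look like follows. It assumes each assistance request occupies one operator for a handling time plus a cognitive switching cost, and that operators should stay below a target utilization. Every parameter name and number here is hypothetical and chosen only for illustration. A real model would need measured request rates and handling times, and would have to account for queueing and fatigue.

```python
import math


def operators_needed(
    vehicles: int,
    requests_per_vehicle_hour: float,
    handle_s: float,
    switch_s: float,
    max_utilization: float,
) -> int:
    """Estimate how many remote operators a fleet needs (simple utilization model).

    All inputs are hypothetical: handle_s is the time to resolve one request,
    switch_s is the time to regain awareness after switching vehicles, and
    max_utilization is the fraction of each hour an operator should be busy.
    """
    busy_seconds_per_hour = vehicles * requests_per_vehicle_hour * (handle_s + switch_s)
    return math.ceil(busy_seconds_per_hour / (3600 * max_utilization))


# Routine operations: 100 vehicles, one request per vehicle every two hours (assumed).
routine = operators_needed(100, 0.5, handle_s=60, switch_s=30, max_utilization=0.5)

# Emergency such as a citywide outage: six requests per vehicle per hour (assumed).
emergency = operators_needed(100, 6.0, handle_s=60, switch_s=30, max_utilization=0.5)

print(f"routine: {routine} operators, emergency: {emergency} operators")
```

With these assumed numbers, the same fleet needs ten times as many operators in the emergency case as in the routine case, which illustrates why staffing plans built around routine conditions can collapse during emergencies.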

Training

Early drone programs lacked formal training requirements, with training programs designed by pilots, for pilots. Unfortunately, supervising a drone is more akin to air traffic control than actually flying an aircraft, so the military often placed drone operators in critical roles with inadequate preparation. This caused many accidents. Only years later did the military conduct a proper analysis of the knowledge, skills, and abilities needed to conduct safe remote operations and change its training program accordingly.

Self-driving companies do not publicly share their training standards, and no regulations currently govern the qualifications for remote operators. On-road safety depends heavily on these operators, yet very little is known about how they are selected or taught. If commercial aviation dispatchers, whose role is very similar to that of self-driving remote operators, are required to have formal training overseen by the FAA, we should hold commercial self-driving companies to similar standards.

Contingency Planning

Aviation has strong protocols for emergencies, including predefined procedures for lost communication, backup ground control stations, and highly reliable onboard behaviors when autonomy fails. In the military, drones may fly themselves to safe areas or land autonomously if contact is lost. Systems are designed with cybersecurity threats, such as GPS spoofing, in mind.

Self-driving cars appear far less prepared. The 2025 San Francisco power outage left Waymo vehicles frozen in traffic lanes, blocking first responders and creating hazards. These vehicles are supposed to perform “minimum-risk maneuvers” such as pulling to the side—but many of them didn’t. This suggests gaps in contingency planning and basic fail-safe design.

The history of military drone operations offers crucial lessons for the self-driving car industry. Decades of experience show that remote supervision demands extremely low latency, carefully designed control stations, manageable operator workload, rigorous, well-designed training programs, and strong contingency planning.

Self-driving companies appear to be repeating many of the early mistakes made in drone programs. Remote operations are treated as a support feature rather than a mission-critical safety system. But as long as AI struggles with uncertainty, which will be the case for the foreseeable future, remote human supervision will remain essential. The military learned these lessons through painful trial and error, yet the self-driving community appears to be ignoring them. The self-driving industry has the chance—and the responsibility—to learn from our mistakes in combat settings before it harms road users everywhere.
