From ancient lunar lava to personal tributes, the new images released from the Artemis II space mission capture fresh perspectives of our celestial neighbour.

Yesterday (7 April), NASA released the first images of the moon captured by the Artemis II astronauts during their historic test flight.

The Artemis II mission took off last week (1 April) from the Kennedy Space Center in Florida, beginning an approximately 10-day mission for NASA astronauts Reid Wiseman, Victor Glover, Christina Koch and Canadian Space Agency astronaut Jeremy Hansen.

Yesterday’s images were taken on 6 April during the crew’s seven-hour pass over the lunar far side – the first crewed lunar flyby in more than 50 years – and provide a fresh look at Earth’s closest celestial neighbour.

From an eclipse to ancient lava, here are some of the most interesting images captured by the Artemis II crew so far.

Near and far

A picture capturing two-thirds of the moon. Towards the bottom of the image, the Orientale basin can be seen. North-east of the Orientale, seen as a dark spot, is the Grimaldi crater. Image: NASA

One of the crew’s most striking images captures two-thirds of the moon, showcasing the “intricate features of the near side”, according to NASA. The 600-mile-wide impact crater, the Orientale basin, lies along the transition between the near and far sides and can be seen at the bottom of the image.

The round black spot north-east of Orientale is the Grimaldi crater, known for its exceptionally “dark mare lava floor and heavily degraded rim”.

In-space eclipse

The moon fully eclipsing the sun, as taken by the Artemis II crew. Image: NASA

One of the most unusual images taken by the Artemis II crew captures the moon fully eclipsing the sun. The corona of the sun forms a glowing halo around the moon, while light reflected off Earth forms a faint, glowing outline of the near side of the moon.

Nearly 54 minutes of totality – when the moon completely blocks the bright face of the sun – was observed by the crew.

Stars that are typically too faint to see when imaging the moon are also readily visible around the spectacle, with the moon in darkness.

“This unique vantage point provides both a striking visual and a valuable opportunity for astronauts to document and describe the corona during humanity’s return to deep space,” according to NASA.

A different perspective

Earth in a crescent phase showing the cutoff between day and night on the planet, as seen from the Artemis II spacecraft as it conducted the lunar flyby. Image: NASA

Another image captured during the lunar flyby shows Earth split between daytime and nighttime.

Earth can be seen in a crescent phase, with sunlight coming from the right of the image. On the day side, swirling clouds are visible over the Australia and Oceania region.

Meanwhile, the lines of small indentations seen on the moon’s surface to the left of the image are secondary crater chains. These structures are formed by material ejected during a violent primary impact.

Ancient lava

A close-up snapshot of the moon as the crew approached for the flyby. The Aristarchus crater is the bright white dot in the middle of a dark grey lava flow at the top of the image. Image: NASA

In one close-up shot of the moon’s surface, taken as the NASA Orion spacecraft approached for the lunar flyby, an interesting ancient remnant can be observed.

According to NASA, dark patches visible on the top third of the lunar disc represent ancient lava.

Meanwhile, the bright white dot in the middle of a dark grey lava flow at the top of the image is the Aristarchus crater, which is 2.7km deep – making it deeper than the Grand Canyon.

A personal tribute

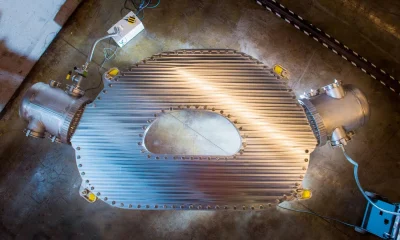

A picture of the Orientale basin, seen in the middle right of the image. The first crater named by the crew, called Integrity, lies just above the centre of the image. North of the Orientale at the top right corner of the image is the Glushko crater. To the north-west of that is the second crew-named crater, seen as a bright white spot, which the crew has called Carroll. Image: NASA

During the mission’s lunar flyby observation period, the Artemis II crew snapped an image showing the rings of the Orientale basin, one of the moon’s youngest and best-preserved large impact craters.

According to NASA, these concentric rings offer scientists a rare window into how massive impacts shape planetary surfaces, “helping refine models of crater formation and the moon’s geologic history”.

At the 10 o’clock position of the Orientale basin, two smaller craters are visible. The Artemis II astronauts submitted names for these two craters for approval by the International Astronomical Union: the first being Integrity, named after the crew’s spacecraft; and the second being Carroll, named after mission commander Reid Wiseman’s late wife.

“A number of years ago, we started this journey in our close-knit astronaut family and we lost a loved one,” said mission specialist Hansen to mission control at the time of the proposal. “And there is a feature in a really neat place on the moon, and it is on the near side/far side boundary. In fact, it’s just on the near side of that boundary, and so at certain times of the moon’s transit around Earth, we will be able to see this from Earth.

“And so we lost a loved one. Her name was Carroll, the spouse of Reid, the mother of Katie and Ellie. And if you want to find this one, you look at Glushko, and it’s just to the northwest of that, at the same latitude as Ohm, and it’s a bright spot on the moon. And we would like to call it Carroll.”

‘A human story’

Eight days into the Artemis II mission, a number of remarkable moments have been observed in humanity’s latest major space voyage, including the crew surpassing the record for human spaceflight’s farthest distance at 248,655 miles from Earth.

But for many, the human side of the voyage – such as the crew’s sentimental proposal to name a crater – has proved just as important as the mission’s technical feats.

This rings true with award-winning Irish scientist Dr Niamh Shaw, who was present on the Kennedy Space Center’s media lawn for the historic launch.

“Space has always been a kind of compass in my life,” she told SiliconRepublic.com. “It has a way of stripping everything back, reminding me of what matters, of how small we are and how extraordinary it is that we are here at all.

“It keeps me grounded in my questions. In curiosity. In wonder. And also in responsibility. Because one of the things space teaches us, very clearly, is that there is no rescue mission coming for Earth. No one arriving to solve our problems.”

Shaw told us that what struck her just as much as the launch itself was “what happened afterwards”.

“The level of interest, the appetite for connection … People want to understand, to feel part of it, to ask questions,” she explained.

“I haven’t stopped: media calls, messages, Zooms with my Town Scientist families.

“And I found myself trying to share it in a way that made it personal for them – sending photos, describing moments, answering questions,” she added.

“Because I genuinely believe that’s where the real impact lies. Not just in the engineering achievement, extraordinary as it is. But in how it reaches people.

“In how it shifts perspective, even slightly. In how it reminds us that we are all part of something much bigger and that the story of space exploration is, ultimately, a human story.”
