Oakcastle MP300: Two-minute review

Okay, I’ll fess up: this Oakcastle MP300 review wasn’t meant to take a month. I thought this super-cheap MP3 player would be a quick in-and-out style of review where I’d listen to a few tunes and take it on a trip, but it ended up being a really useful addition to my audio set-up. Good for it, not so good for my deadlines.

This is the kind of budget music player that a serious music fan would probably ignore. Does anyone other than wallet-friendly Chinese brands make this kind of tech? Apparently they do, and I’d humbly ask that we stop that train of thought right now: this isn’t just any bargain-bin buy.

Oakcastle is a sub-brand of Majority, a brand audio fans emphatically know about. So before we get into any of its pros and cons, you’ve got an immediate benefit over most budget MP3 players online: you know this one is from a known and trusted audio specialist, with a reputation to uphold — and uphold that standing, the MP300 does.

I’m not going to pretend that this is the best MP3 player for everyone: it has limited file support, feels quite cheap in the hand, and only offers a fraction of the features that a pricier option would have.

But its simplicity is the point: this is a lovely little digital detox machine that doesn’t make you pay for fancy extras you might not need. It’s no distraction machine that’ll suck you into a WhatsApp wormhole when you’re just trying to put on a song, and its playlists aren’t so algorithmic that you’re only hearing the same eight songs on repeat.

The MP300 became my month-long go-to because I plugged it into my bookshelf speakers via aux, and just left it there. Any time I wanted music, I could easily open up my library and start playing my songs. And I do mean my songs — no music streaming here, this is a device for people who own their own music, but don’t need to hear it played in lossless.

There are other benefits that dedicated MP3 players bring over using smartphones for music, and the Oakcastle being so cheap means you can enjoy them without breaking the bank. Seniors will love it for its simplicity, and it’s something children can use to enjoy tunes without priming them for future technology addiction.

You can use a device like this at night, to put on some soothing Elgar, without a notification or two waylaying your relaxing doze. MP3 players are also nice little running devices, freeing you from your smartphone when you strap on your trainers, though something like the super-small Majority MP3 with its nifty clip might be better for that.

As I say, it’s not for everyone: if you don’t need a standalone music playing device, or conversely you want one that’s advanced and full of features, this isn’t for you. In the latter case, you’ll need to look to pricier options from Astell & Kern or FiiO. But there must be listeners in the sweet spot between those camps who’ll appreciate it. I certainly like the MP300 a lot.

Oakcastle MP300 review: Price and release date

- Released in February 2026

- Listed for £31.95 (about $40, AU$60)

- On sale in UK, maybe Aus, probably not US

The Oakcastle MP300 was released on February 18, 2026, alongside the same-priced MP250 model, which has a sports clip and simplified controls.

Online, the MP300 is officially listed at £31.95 (about $40, AU$60), though during testing I generally saw it for a few pounds less. Not by a huge amount — you wouldn’t buy a pint with the difference, even outside London — but enough to suggest that this thing is rarely at RRP.

Oakcastle, like Majority, generally doesn’t sell its products in the US, so don’t expect availability there. Some of the brand’s gadgets sell in Australia, but at the time of writing, the MP300 wasn’t available.

Oakcastle MP300 review: Specs

| Spec | Oakcastle MP300 |
|---|---|
| Battery life | 50 hours |
| Weight | 53g |
| Connectivity | Bluetooth 5.0, 3.5mm, USB-C |
| Waterproofing | NA |
| Storage | 64GB |
| Expandable? | Up to 128GB |

Oakcastle MP300 review: Features

- 50-hour battery life

- Custom, simple user interface

- 64GB storage, up to 128GB more via microSD

The Oakcastle MP300 runs on a simple operating system, which feels like a glorified media library. You can see what’s currently playing, browse your general music library, make playlists and set up Bluetooth connections.

It does offer a stopwatch and calendar (with no way of inputting dates), but the point of this thing is clearly music. Navigating with the buttons isn’t especially fast, and unfortunately there’s no music search option (that I could find).

You get a battery life that, according to Majority, hits 50 hours on a single charge. That seems to match up with what I found — it’s definitely long enough that you don’t need to worry about charging frequently.

The MP300 has 64GB of built-in storage, which is plenty if you use MP3. My library was in WAV and didn’t fit onto the thing to begin with, so I had to convert the files to MP3 to make them fit. The expandable storage gets you up to 128GB extra, and you can be sure I made the most of it.

Moving my library over to the Oakcastle was easy: I plugged it into a computer and dragged and dropped the files into its folder. It wasn’t the perfect conversion: lots of album artwork didn’t get pulled through, and on the device, songs are all labelled as .mp3. But overall, it was pretty simple and worked fine.

Oakcastle MP300 review: Design

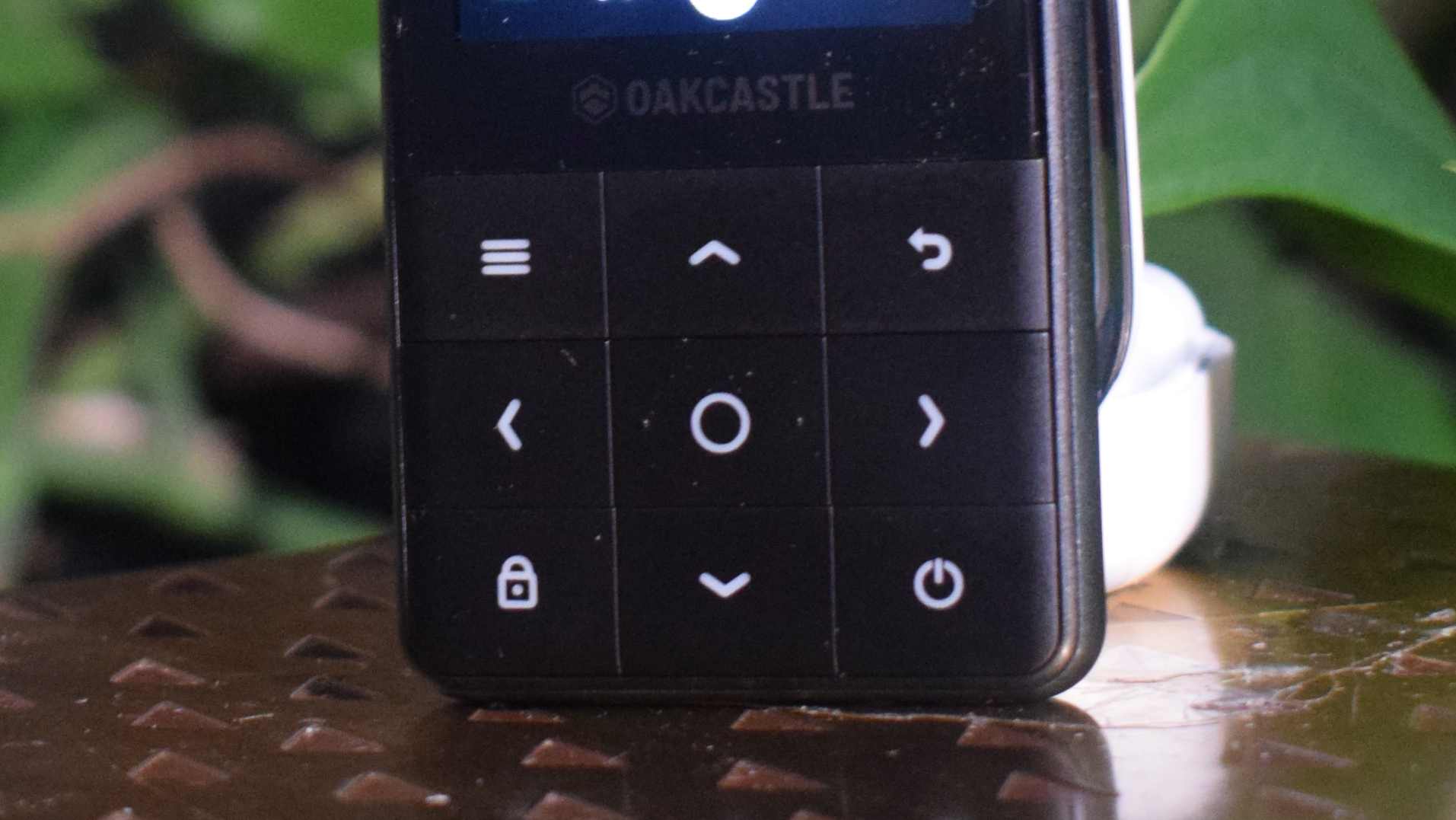

- Nine buttons plus screen

- Lightweight, but somewhat cheap feeling

- No IP rating

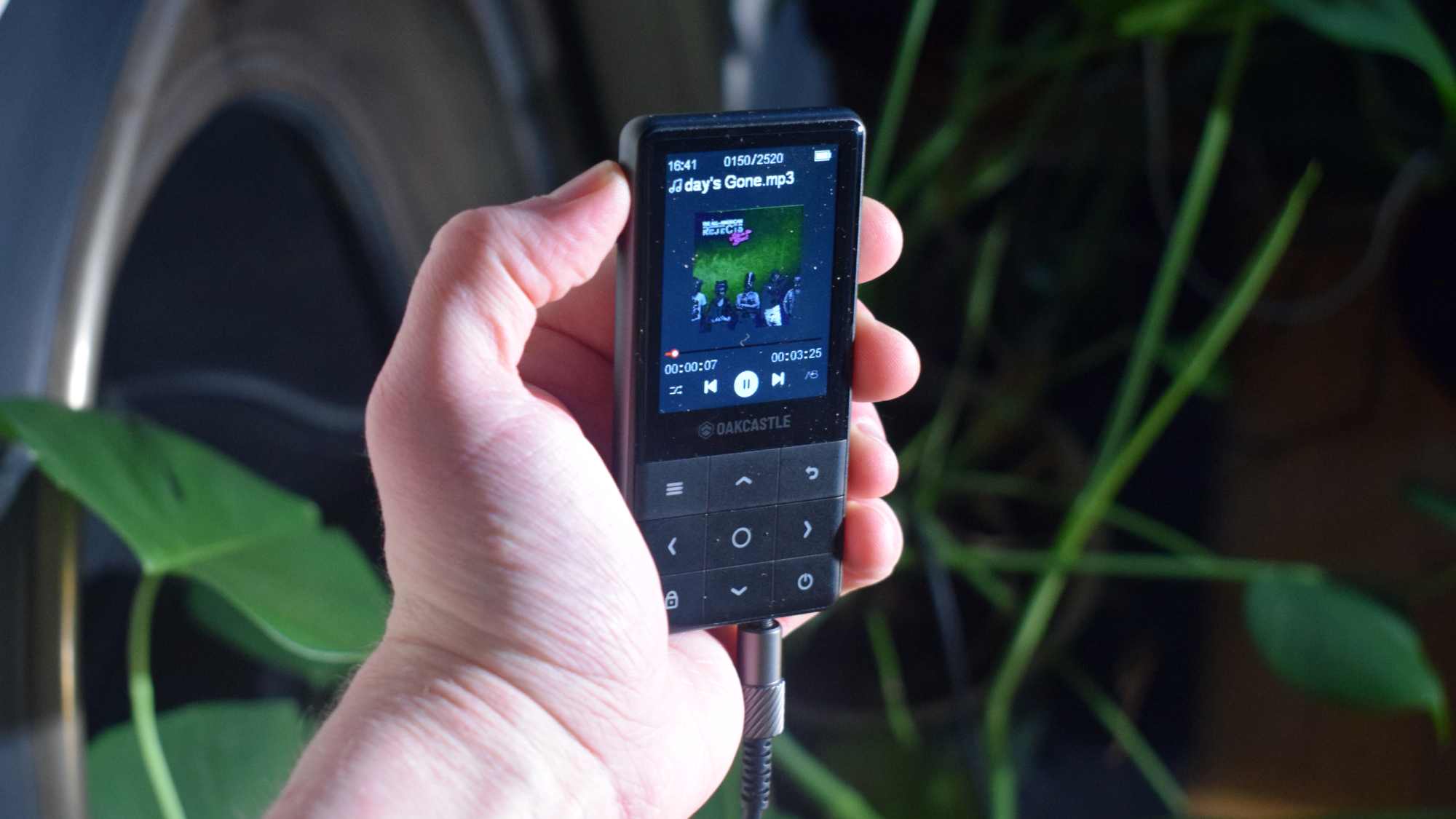

I almost thought the Oakcastle MP300 was a feature phone when I first saw it: it has a similar design, with buttons below a screen and ports on the bottom. But it’s much lighter than a phone, at 53g — there’s a good chance your earbuds case tips the scales more than that.

The MP300 is also smaller, at 50 x 101 x 9.5mm, so I could put it in my pocket without it feeling like a second smartphone. On the bottom there’s a USB-C port for charging and data connection, as well as a 3.5mm jack. The right side has a microSD card slot, which is a nice addition.

Despite being compact, the Oakcastle still feels somewhat hollow: it gives slightly when you squeeze it, which makes it seem fragile and cheap. That said, the textured plastic back makes it feel a little more premium than some of the bargain-basement MP3 players you might find online.

Accusations of ‘cheap’ become more well-founded when you move over to the buttons though. They’re not as sensitive or responsive as you’d hope, which slowed down my navigation of the player and constantly reminded me of the lack of money spent.

If you’re a pool-music-listener, I should warn you that there’s no official IP rating — try to avoid getting it wet or dusty.

Oakcastle MP300 review: Sound quality

- Supports MP3, FLAC, WAV

- No on-board equalizer

- No higher-res Bluetooth codec support

The MP300 supports three file types: MP3, FLAC and WAV. Naturally your sound quality will vary depending on which you pick; I tested all three for scientific purposes, but for general use, I had to use MP3 simply to fit all my music on the player.

There’s no equalizer on-board, so you’d better like your music how it comes or, more accurately, your headphones how they come. At least you can listen over a wired connection rather than relying on Bluetooth 5.0 alone (only your basic ‘vanilla’ SBC streaming is supported, as far as I can tell), although I appreciated having the option to go wireless.

Let’s be honest: this isn’t an MP3 player you’re buying if sound quality is your biggest concern. There are options that cost a lot more which offer extra onboard DACs to level up the audio, streaming over wi-fi and hi-res Bluetooth codec support. So the fact that sound from this thing is pretty basic is part and parcel of the MP300.

There is a built-in speaker here, but it’s quiet and doesn’t exactly sound amazing. For me, it was sometimes a simple reminder that I’d forgotten to connect to a speaker, rather than a serious way of enjoying tunes.

Oakcastle MP300 review: Value

- It’s really cheap

- Worth buying over dubious alternatives

Of course the Oakcastle MP300 is good value; you’re paying pennies to get an on-the-go music device.

Sure, it doesn’t have many of the features of flashier alternatives, but it has storage capabilities and a headphone jack: what more do you need? Thanks to the latter, it’s probably better than your smartphone for music playing, let’s be honest.

Given that Oakcastle is a trusted brand, I’d feel safer giving this company my money, rather than any of the even-cheaper alternatives you’ll find online.

Should I buy the Oakcastle MP300?

| Attributes | Notes | Rating |
|---|---|---|
| Features | It’s light in terms of features, but the battery life is solid. | 3.5/5 |
| Design | While it feels a little cheap, it’s light and slender enough to make up for it. | 3.5/5 |
| Sound quality | You’re getting the basic sound with little ability to change it. Even hi-res streaming is out of bounds. | 3/5 |
| Value | It’s arguably the cheapest MP3 player you should consider buying. | 5/5 |

Buy it if…

Don’t buy it if…

Oakcastle MP300 review: Also consider

| Spec | Oakcastle MP300 | Majority MP3 | FiiO JM21 |
|---|---|---|---|
| Battery life | 50 hours | 34 hours | 12 hours |
| Weight | 53g | 33g | 156g |
| Connectivity | Bluetooth 5.0, 3.5mm, USB-C | Bluetooth 5.0, 3.5mm, USB-C | Bluetooth 5.0, 3.5mm, 4.4mm, USB-C |
| Waterproofing | NA | NA | IPX5 |
| Storage | 64GB | 16GB | 32GB |
| Expandable | 128GB | 128GB | 2TB |

How I tested the Oakcastle MP300

- Tested for a month

- Tested on various speakers and headphones via aux and Bluetooth

I used the Oakcastle MP300 for a full month before writing this review. I started the testing by loading it up with my music library, first in WAV and then in MP3. This collection includes a large range of genres, with roughly 3,000 tracks in all.

Through the month, I used the MP300 alongside a range of devices. I connected it to the Samsung Galaxy Buds 4 Pro and Edifier M90 via Bluetooth, and via aux I used the Edifiers, my Sony headphones and my Line 6 guitar amplifier.

I’ve been reviewing audio tech for TechRadar for years, including several other budget MP3 players and plenty of other Majority gadgets.

- First reviewed in March 2026
