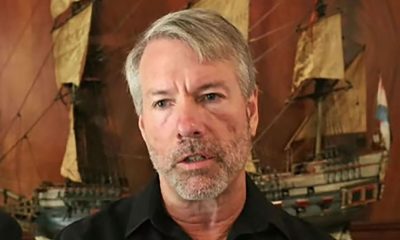

Graham Bartley discusses his role in the software engineering landscape and the evolution of his job over recent years.

“No two days are exactly the same, which is part of what keeps the job engaging,” Yahoo senior software engineer Graham Bartley told SiliconRepublic.com.

“On days when I go into the Dublin office, I typically spend the first hour of the morning enjoying tea and catching up with my colleagues face-to-face,” he said.

“I always enjoy hearing their stories, learning a little about what they’re working on and spotting opportunities for collaboration.

“On remote days, I usually start by checking in on our squad’s Jira board and any overnight Slack discussions. I lead a squad called Optimus, which is Yahoo Demand Side Platform (DSP)’s core generative AI (GenAI) backend team, so there’s often a thread to catch up on from my squad members or a design question from one of the other squads we support.”

From there, Bartley’s day is a mix of writing code, reviewing pull requests, design work, managing Jira tickets and taking calls with squad members. On a coding day, he might find himself building out a new API endpoint for Yahoo’s troubleshooting agent, writing integration tests or debugging a production issue end-to-end across multiple services.

“There are usually quite a few meetings, including our daily squad standup, individual calls with each squad member, cross-team syncs with other squads, architecture meetings and office-hour sessions where engineers present designs for feedback.

“I try to protect blocks of focused time for deep work, but being a squad lead means always being available when someone needs support.”

As a senior software engineer, how does your role fit into the wider software industry?

I work on Yahoo’s DSP, which is the technology behind how advertisers plan, target and optimise digital advertising campaigns across channels like mobile, connected TV, desktop and audio. It’s a big part of what’s called programmatic advertising and it’s a space that keeps growing.

Within that, my current focus is on applying GenAI to make the platform smarter and more intuitive. I lead a squad that built Yahoo DSP’s first GenAI-powered feature, an AI troubleshooting agent that helps advertisers understand why their campaigns might be underdelivering and what they can do about it.

What skills do you use on a daily basis and how have they evolved over time?

Day to day, I write Python and Java, design APIs and distributed systems, review code and work across the full stack, from database schemas through backend services to UI components. Recently, though, my focus has been entirely on the backend, as that is my squad’s responsibility. I also design technical architectures, write design documents and present to both technical and non-technical audiences.

The breadth of what I do has grown a lot over time. Earlier in my career, I was heads-down writing code and delivering features within a couple of codebases based on the Jira tickets I was assigned. Now I spend as much time on system design, cross-team coordination and setting technical direction as I do writing code.

The biggest recent shift has been AI, both in what I build and how I build it. On the product side, I’ve gone deep on large language models, agent frameworks, the Model Context Protocol (MCP), vector databases, knowledge bases and evaluation pipelines for non-deterministic AI outputs. Two years ago, none of that was part of my daily vocabulary.

What are the hardest parts of your working day and how do you navigate them?

Context-switching is perhaps the biggest challenge. As a squad lead and code owner across many repositories, I might go from a deep debugging session to a design review to a cross-team Slack discussion about authentication architecture, all within a couple of hours. Each context requires full attention and the transitions are costly.

I navigate that by being disciplined about time-blocking. I protect mornings for deep technical work wherever possible and cluster meetings in the afternoons. I also use structured notes and documentation heavily. If I’m interrupted mid-task, I want to be able to pick up exactly where I left off.

AI tools actually help here, too. Being able to drop back into a Claude Code session and say ‘here’s where I was, here’s what I was trying to do’ and have it reconstruct the context is genuinely useful.

Working remotely from Ireland with teams distributed globally can present some challenges, such as working after hours to interface with colleagues abroad. Managing this is definitely a skill in itself and can be difficult because it can feel very productive to work late when US colleagues are available, for example.

I try to be aware of making up for late hours worked to keep a good work-life balance, but it’s definitely challenging to balance the feeling of productivity with the reality of burnout.

Has your role changed as the sector has grown and evolved?

Significantly. When I started, engineering work in the DSP was largely about building features in reliable CRUD applications and optimising serving workflows. The biggest evolution since then has been the arrival of GenAI. In late 2024, I was part of a small working group exploring how GenAI could be applied to our product. Within months, that exploratory work became a full strategic initiative. I went from leading a commerce media team to founding and leading Yahoo DSP’s first GenAI squad.

The way we build software is changing too. A year ago, our team wrote code the traditional way. Now we’re using AI coding assistants daily. It’s still early days and there are real learning curves. We’ve found that the AI works brilliantly for well-defined, bounded tasks but struggles with cross-cutting concerns that span multiple services and large contexts. Knowing when to lean on it and when to step back and think for yourself is a skill in itself.

The organisational model has changed too. We’ve moved from traditional team structures to a ‘squads, tribes, chapters, and guilds’ model, which puts more emphasis on cross-functional collaboration and autonomous delivery. My role has shifted from primarily writing code to being a technical leader who sets direction, defines standards and enables other teams to build on the foundations we create together.

Is there anything you know now about working in software that you wish you knew starting out?

I wish I’d known more about work-life balance and recognising the signs of burnout. Early in my career, I had a tendency to just push through and it took me a while to realise that’s not sustainable. Now I’m much more intentional about managing my time, protecting my energy and knowing when to step away from a problem. You actually come back sharper when you do.

I also wish I’d challenged assumptions more. When you’re starting out, it’s natural to take requirements and existing designs at face value. But as I’ve become more senior and taken on more design work, I’ve found that some of the biggest improvements come from questioning why something is the way it is. The best solutions often come from pushing back on the premise, not just optimising within the constraints you’re given.

What advice do you have for others looking to follow in your professional footsteps?

Don’t be afraid to say ‘I don’t know’ and then go learn. This is crucial. The best engineers I’ve worked with aren’t the ones who know everything. They’re the ones who learn quickly and aren’t embarrassed to be a beginner at something new. Being a mentor also means being a mentee and I’ve learned a lot from the engineers I’ve mentored. A year ago, I had never built an AI agent. Now I lead a team that builds them.

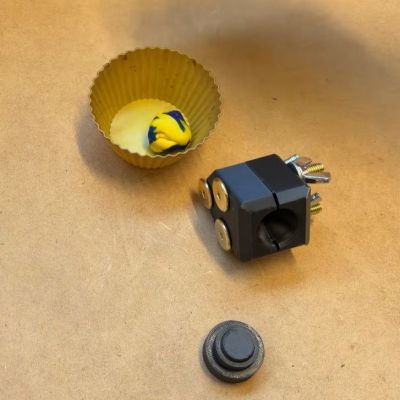

After previously trying out low-tech compression molding with a toaster oven and 3D printed molds, [future things] is back with a video that explores some of the questions raised by the first video: how well this method works with HDPE and PLA thermoplastics, whether the mold itself could cut off the flashing, and what temperatures and heating times are needed before a charge is ready to insert into the mold.

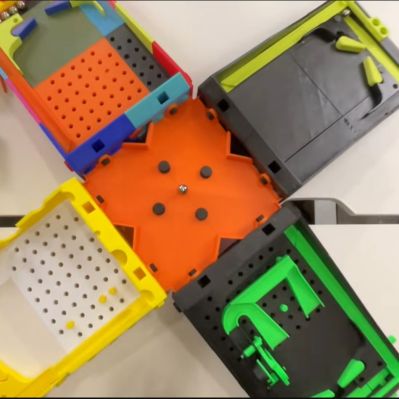

It seems fair to say that pinball machines are among the most universally loved gaming systems known today, yet the full-sized ones are both very expensive and very large, while even good-quality table-sized ones tend to be on the expensive side. That raises the question of whether a fully 3D printed pinball machine could be fun at all, rather than feeling like a cheap toy. A recent video by [Steven] from [3D Printer Academy] on YouTube