This year marks the 80th anniversary of ENIAC, the first general-purpose digital computer. The computer was built during World War II to speed up ballistics calculations, but its contributions to computing extend well beyond military applications.

Two of ENIAC’s key architects (John W. Mauchly, its co-inventor, and Kathleen “Kay” McNulty, one of the six original programmers) married a few years after its completion and raised seven children together. Mauchly and McNulty’s grandchild Naomi Most delivered a talk as part of a celebration in honor of ENIAC’s anniversary on 15 February, which was held online and in person at the American Helicopter Museum in West Chester, Pa. The following is adapted from that presentation.

There was a library at my grandparents’ farmhouse that felt like it went on forever. September light through the windows, beech leaves rustling outside on the stone porch, the sounds of cousins and aunts and uncles somewhere in the house. And in the corner of that library, an IBM personal computer.

When I spent summers there as a child, I didn’t yet know that the computer was closely tied to my family’s story.

My grandparents are known for their contributions to creating the Electronic Numerical Integrator and Computer, or ENIAC. But both were interested in more than just crunching numbers: My grandfather wanted to predict the weather. My grandmother wanted to be a good storyteller.

In Irish, the first language my grandmother Kathleen “Kay” McNulty ever spoke, a word existed to describe both of these impulses: ríomh.

I began to learn the Irish language myself five years ago, and I was struck by how certain words and phrases had multiple meanings. According to renowned Irish cultural historian Manchán Magan, from whom I took lessons, the word ríomh has at different times been used to mean to compute, but also to weave, to narrate, or to compose a poem. That one word can tell the story of ENIAC, a machine with wires woven like thread that was built to compute, make predictions, and search for a signal in the noise.

John Mauchly’s Weather-Prediction Ambitions

Before working on ENIAC, John Mauchly spent years collecting rainfall data across the United States. His favorite pastime was meteorology, and he wanted to find patterns in storm systems to predict the weather.

The Army, however, funded ENIAC to make simpler predictions: calculating ballistic trajectory tables. Start there, co-inventors J. Presper Eckert and Mauchly realized, and perhaps the weather would soon be computable.

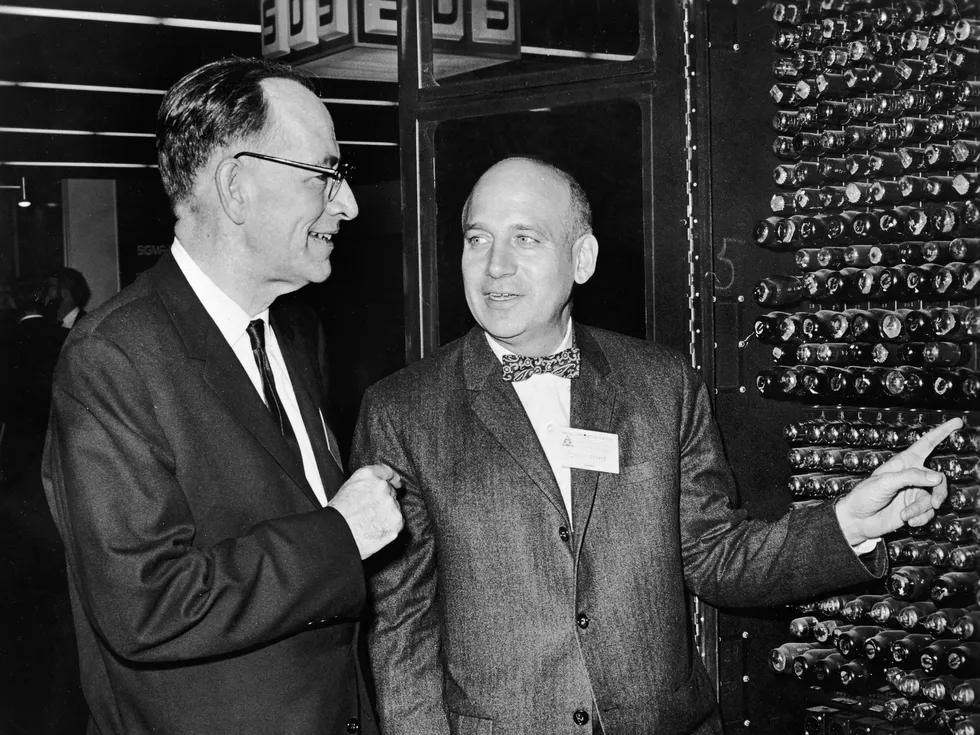

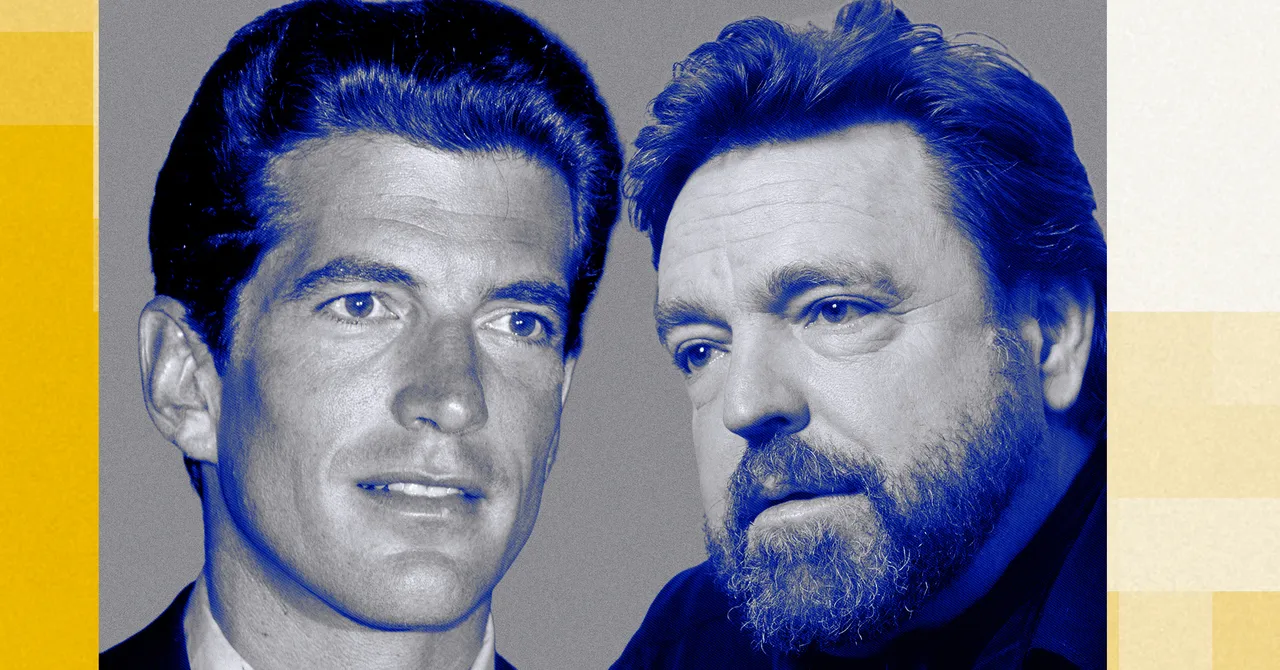

Co-inventors John Mauchly [left] and J. Presper Eckert look at a portion of ENIAC on 25 November 1966. Hulton Archive/Getty Images

Weather is a system unfolding through time, and a model of a storm is a story about how that system might unfold. There’s an old Irish saying related to this idea: Is maith an scéalaí an aimsir. Literally, “weather is a good storyteller.” But aimsir also means time. So the usual translation of this phrase into English becomes “time will tell.”

Mauchly wanted to ríomh an aimsire—to weave the weather into pattern, to compute the storm, to narrate the chaos. He realized that complex systems don’t reveal their full purpose at conception. They reveal it through aimsir—through weather, through time, through use.

ENIAC’s First Programmers Were Weavers

Kathleen “Kay” McNulty was born on 12 February 1921, in Creeslough, Ireland, on the night her father—an IRA training officer—was arrested and imprisoned in Derry Gaol.

Family oral history holds that her people were weavers. She spoke only Irish until her family reached Philadelphia when she was 4 years old, entering American school the following year knowing virtually no English. She graduated in 1942 from Chestnut Hill College with a mathematics degree, was recruited to compute artillery firing tables by hand for the U.S. Army, and was then selected—along with five other women—to program ENIAC.

They had no manual. They had only blueprints.

McNulty and her colleagues learned ENIAC and its quirks the way you learn a loom: by touch, by memory, by routing threads of electricity into patterns. They developed embodied knowledge the designers could only approximate. They could narrow a malfunction to a specific failed vacuum tube before any technician could locate it.

McNulty and Mauchly are also credited with conceiving the subroutine, a sequence of instructions that can be called repeatedly to perform a task, now essential to all programming. The subroutine was not in ENIAC’s blueprints, nor in the funding proposal. The concept emerged as highly determined people extended their imagination into the machine’s affordances.

The engineers designed the loom. Weavers discovered its true capabilities.

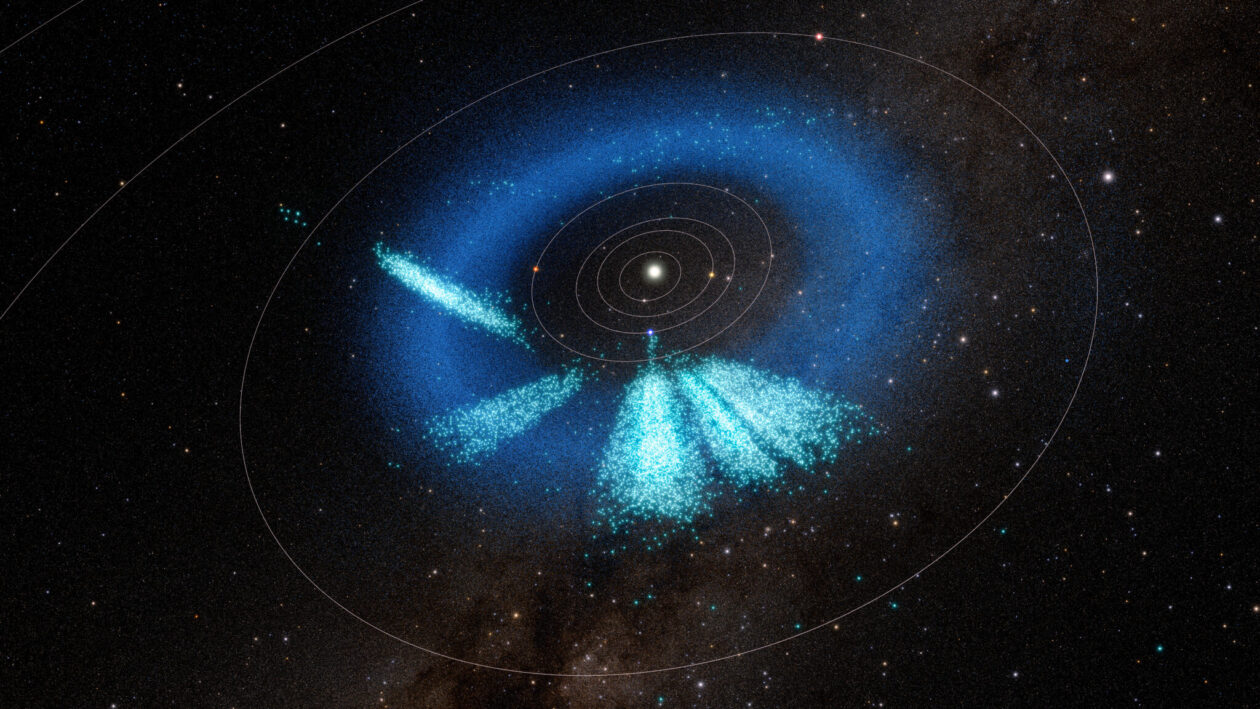

In 1950, four years after ENIAC was switched on, Mauchly’s dream was realized when the machine was used to produce the world’s first computer-assisted weather forecast. That was made possible after Klara von Neumann and Nick Metropolis reassembled and upgraded the ENIAC with a small amount of digital program memory. The programmers who transformed the math into operational code for the ENIAC were Norma Gilbarg, Ellen-Kristine Eliassen, and Margaret Smagorinsky. Their names are not as well known as they should be.

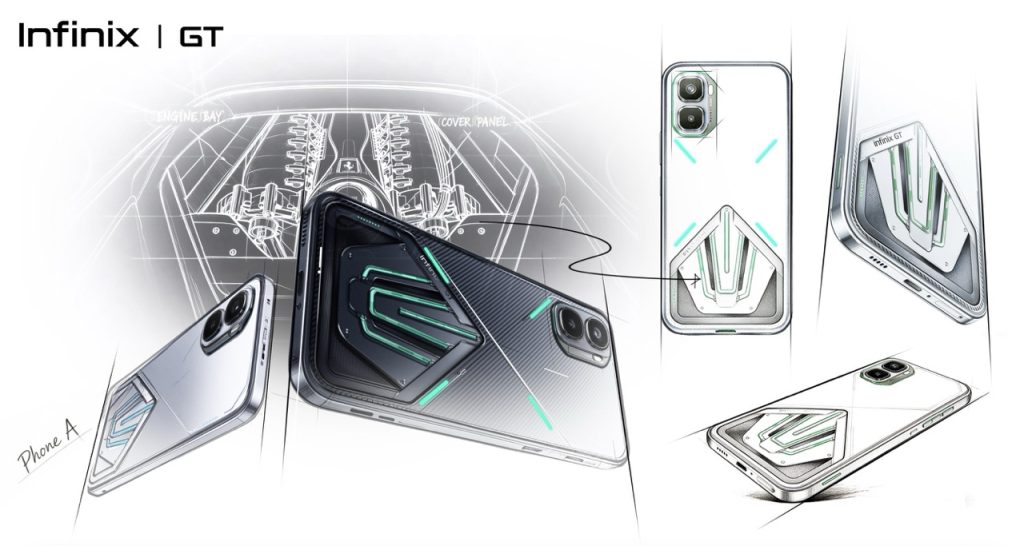

Before programming ENIAC, Kay McNulty [left] was recruited by the U.S. Army to compute artillery firing tables. Here, she and two other women, Alyse Snyder [center] and Sis Stump, operate a mechanical analog computer designed to solve differential equations in the basement of the University of Pennsylvania’s Moore School of Electrical Engineering. University of Pennsylvania

Kay McNulty, Family Storyteller

Kay married John Mauchly in 1948, describing him as “the greatest delight of my life. He was so intelligent and had so many ideas…. He was not only lovable, he was loving.” She spent the rest of her life ensuring he, Eckert, and the ENIAC programmers would be recognized.

When she died in 2006, I came to her funeral in shock, not fully knowing what I’d lost. As she drifted away, it was said, she had been reciting her prayers in Irish. Word of this quickly made its way to Creeslough, in County Donegal, and was waiting for me when I visited to honor her memory with the dedication of a plaque right there in the center of town.

In her own memoir, she wrote: “If I am remembered at all, I would like to be remembered as my family storyteller.”

In Irish, the word for computer is ríomhaire. One who ríomhs. One who weaves, computes, and tells. My grandfather wanted to tell the story of the weather through computing. My grandmother wanted to be remembered as a storyteller. The language of her childhood already had a word that contained both of those ambitions.

Computers as Narrative Engines

When it was built, ENIAC looked like the back room of a textile production house. Panels. Switchboards. A room full of wires. Thread.

Thread does not tell you what it will become. We tend to think of computing as calculation—discrete and deterministic. But a model is a structured story about how something behaves.

Weather models, ballistic tables, economic forecasts, neural networks: These are all narrative engines, systems that take raw inputs and produce accounts of how the world might unfold. In complex systems, when parts are woven together through use, new structures arise that no one specified in advance.

Like ENIAC, the machines we are building now—the large models, the autonomous systems—are not merely calculators. They are looms.

Their most important properties will not be specified in advance. They will emerge through use, through the people who learn how to weave with them.

Through imagination.

Through aimsir.
