The acquisition of Promptfoo, which counts more than 125,000 developers and 30-plus Fortune 500 companies among its users, is OpenAI’s most direct move yet into AI application security. Its technology will go into Frontier, the company’s enterprise agent platform launched just a month ago.

When Ian Webster was leading the LLM engineering team at Discord, shipping AI products to 200 million users, he noticed something the security industry had not yet caught up with: the tools his team relied on to keep those products safe were built for a different era. Traditional vulnerability scanners could not reason about prompt injection. Static analysis had nothing to say about a model that promised a user something it had no authority to deliver. The testing infrastructure for AI applications, he concluded, simply did not exist.

So he built it himself, nights and weekends, as an open-source project. That project became Promptfoo. On Monday, OpenAI announced it is acquiring the company.

The deal, terms of which were not disclosed, will see Promptfoo’s technology integrated into OpenAI Frontier, the enterprise agent management platform that OpenAI launched in early February. In a post on X, OpenAI said the acquisition would “strengthen agentic security testing and evaluation capabilities” within Frontier, and pledged that Promptfoo would remain open source under its current licence, with continued support for existing customers.

Promptfoo, which Webster co-founded with Michael D’Angelo – a former VP of engineering and head of AI at identity verification firm Smile Identity – launched commercially in 2024 with $5 million in seed funding from Andreessen Horowitz. The seed round attracted backing from a notable roster of angels, including Shopify CEO Tobi Lütke, Discord CTO Stanislav Vishnevskiy, and Okta co-founder Frederic Kerrest. By July 2025, the company had raised an $18.4 million Series A led by Insight Partners, with a16z again participating. Total funding ahead of the acquisition was approximately $23.4 million.

At the time of the Series A, Promptfoo said it had more than 125,000 developers using its open-source framework and over 30 Fortune 500 companies running its enterprise platform in production. Customers span retail, telecoms, financial services, and media, sectors with acute exposure to the regulatory and reputational risks of AI failures.

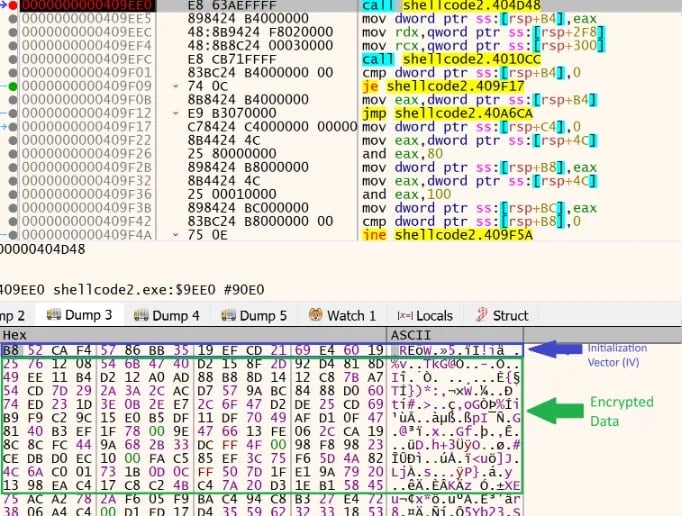

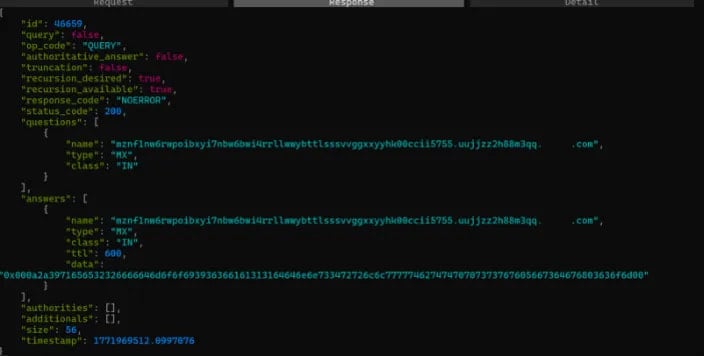

The product works by acting as an automated adversary. Rather than relying on manual penetration testing, Promptfoo’s platform talks directly to a customer’s AI application, through its chat interface or APIs, using specialised models and agents that behave like users, or specifically like attackers. When an attack succeeds, the platform records it, analyses why it worked, and iterates through an agentic reasoning loop to refine the test and expose deeper vulnerabilities. Risks the platform targets include prompt injection, data leakage, jailbreaks, and what Webster has called “application-level” failures: AI systems that promise users things they cannot deliver, or that reveal database contents to a customer service query, or that stray into political opinion in a homework tutor.

It is precisely those application-level risks that make Promptfoo’s acquisition a strategic fit for OpenAI’s current direction. Frontier, which OpenAI has described as an attempt to create “AI coworkers” for the enterprise, is designed to give AI agents access to production systems, CRM platforms, data warehouses, internal ticketing tools, and to execute workflows with real-world consequences. Agents operating at that level of access create a correspondingly enlarged attack surface. Early customers named by OpenAI for Frontier include Uber, State Farm, Intuit, and Thermo Fisher Scientific: organisations for whom a misbehaving agent is not an inconvenience but a liability.

OpenAI has been building out Frontier at speed. Since launching the platform on 5 February, the company has announced Frontier Alliances with Accenture, Boston Consulting Group, Capgemini, and McKinsey, enlisting the consulting firms to drive enterprise deployment. Separately, the company has been rolling out Codex Security, an AI-powered application security agent for software repositories, formerly known internally as Aardvark, which entered wider availability on the same day as the Promptfoo acquisition announcement.

Promptfoo is not the only AI security product entering broader availability this month. Anthropic launched Claude Code Security in February, targeting similar vulnerability scanning use cases. The convergence suggests that as AI agents move into production at scale, the question of who secures them, and how, is fast becoming one of the defining commercial battlegrounds in enterprise AI.

For Promptfoo’s open-source community, OpenAI’s commitment to keeping the project open source under its current licence will be the line to watch. The project has over 248 contributors, and its adoption by developers at companies across the AI industry – including, according to Promptfoo’s own website, teams at Anthropic and Google – was built on the premise that the tool belonged to the developer community rather than to any one vendor. That promise now sits alongside a commercial integration into one of the most powerful enterprise AI platforms in the market.